
Wide-Area Service Composition: Availability, Performance, and Scalability

Bhaskaran Raman, SAHARA, EECS, U. C. Berkeley

SAHARA Retreat, Jan 2002

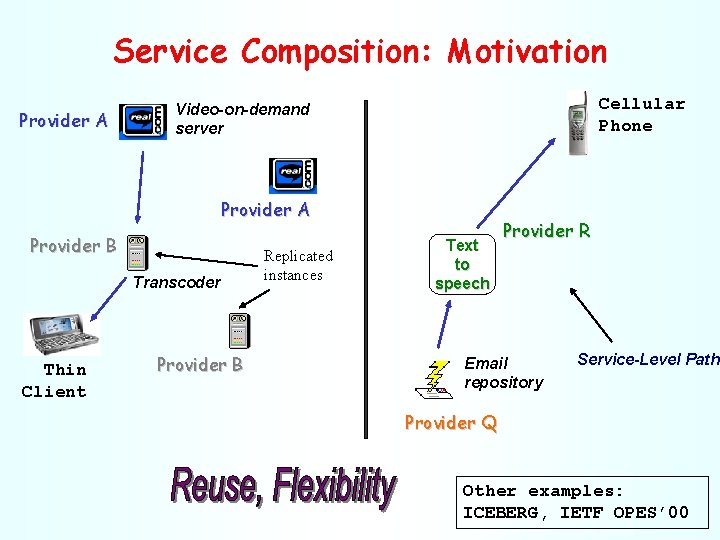

Service Composition: Motivation

[Figure: two example service-level paths spanning Providers A, B, Q, and R. Labels: video-on-demand server, transcoder, thin client; email repository, text-to-speech, cellular phone; replicated service instances.]

- Other examples: ICEBERG, IETF OPES’00

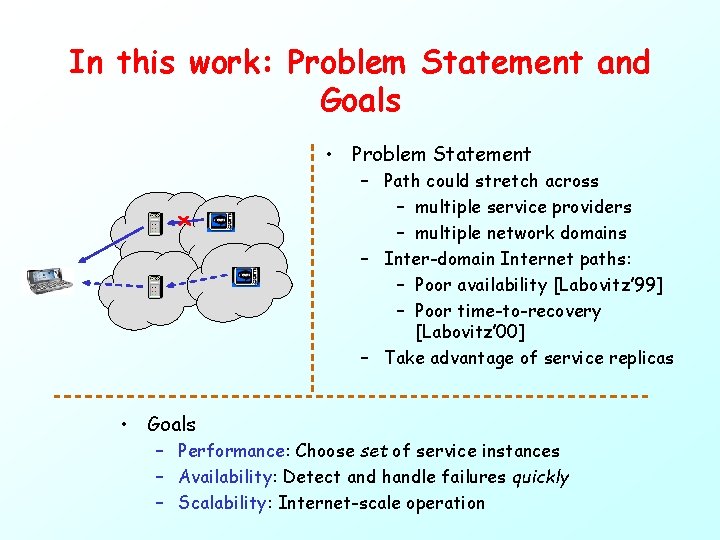

In this work: Problem Statement and Goals

- Problem Statement
  - Path could stretch across
    - multiple service providers
    - multiple network domains
  - Inter-domain Internet paths:
    - Poor availability [Labovitz’99]
    - Poor time-to-recovery [Labovitz’00]
  - Take advantage of service replicas
- Goals
  - Performance: choose set of service instances
  - Availability: detect and handle failures quickly
  - Scalability: Internet-scale operation

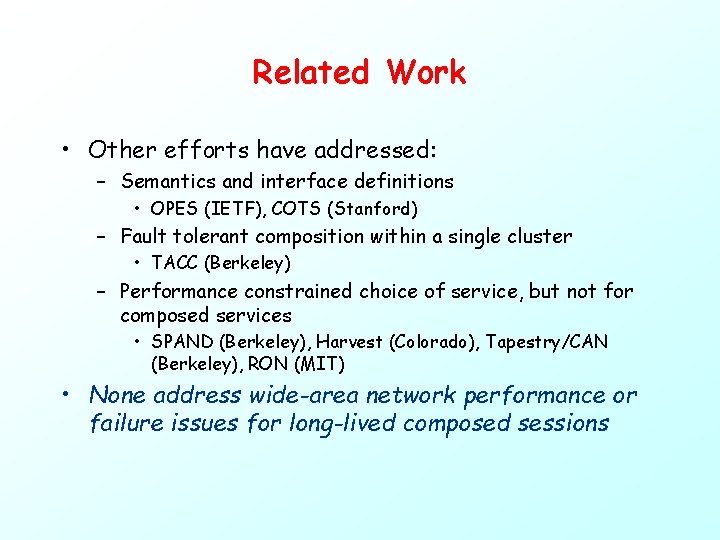

Related Work

- Other efforts have addressed:
  - Semantics and interface definitions
    - OPES (IETF), COTS (Stanford)
  - Fault-tolerant composition within a single cluster
    - TACC (Berkeley)
  - Performance-constrained choice of service, but not for composed services
    - SPAND (Berkeley), Harvest (Colorado), Tapestry/CAN (Berkeley), RON (MIT)
- None address wide-area network performance or failure issues for long-lived composed sessions

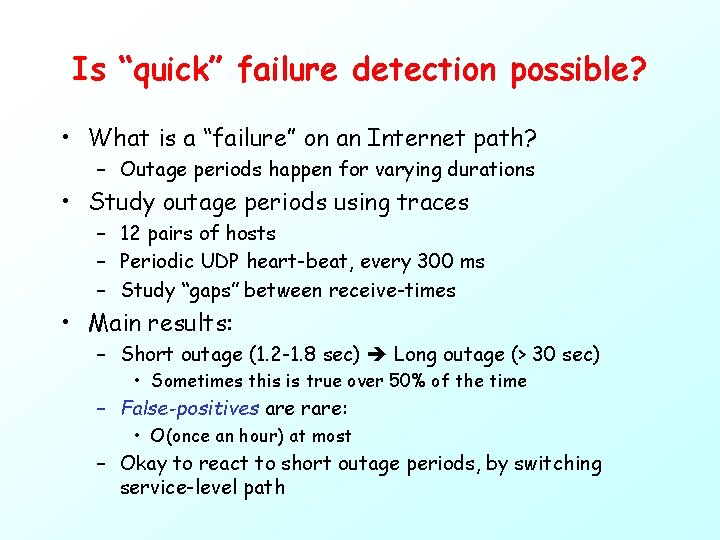

Design challenges

- Scalability and global information
  - Information about all service instances, and the network paths in between, should be known
- Quick failure detection and recovery
  - Internet dynamics (e.g., intermittent congestion)

Is “quick” failure detection possible?

- What is a “failure” on an Internet path?
  - Outage periods happen for varying durations
- Study outage periods using traces
  - 12 pairs of hosts
  - Periodic UDP heart-beat, every 300 ms
  - Study “gaps” between receive-times
- Main results:
  - Short outage (1.2-1.8 sec) implies long outage (> 30 sec)
    - Sometimes this is true over 50% of the time
  - False-positives are rare:
    - O(once an hour) at most
  - Okay to react to short outage periods, by switching service-level path
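The gap analysis in the trace study can be illustrated with a minimal sketch. It is not the author's measurement code: the function names and the list-of-timestamps input are assumptions made for illustration, and only the 300 ms period and the 1.8 sec / 30 sec thresholds come from the slide.

```python
from typing import List, Tuple

HEARTBEAT_PERIOD_S = 0.3   # from the slide: one UDP heartbeat every 300 ms
SHORT_OUTAGE_S = 1.8       # from the slide: upper end of the 1.2-1.8 sec range
LONG_OUTAGE_S = 30.0       # from the slide: "long outage" threshold


def find_outages(receive_times: List[float],
                 threshold_s: float = SHORT_OUTAGE_S) -> List[Tuple[float, float]]:
    """Return (start_time, gap_length) for every inter-arrival gap >= threshold_s.

    `receive_times` is a hypothetical input: sorted heartbeat receive
    timestamps in seconds for one pair of hosts.
    """
    outages = []
    for prev, curr in zip(receive_times, receive_times[1:]):
        gap = curr - prev
        if gap >= threshold_s:
            outages.append((prev, gap))
    return outages


def fraction_becoming_long(outages: List[Tuple[float, float]],
                           long_s: float = LONG_OUTAGE_S) -> float:
    """Of the gaps that crossed the short threshold, the fraction lasting >= long_s."""
    if not outages:
        return 0.0
    return sum(1 for _, gap in outages if gap >= long_s) / len(outages)


# Hypothetical trace: one 2-second gap and one 37-second gap.
trace = [0.0, 0.3, 0.6, 2.6, 2.9, 40.0]
outages = find_outages(trace)
long_fraction = fraction_becoming_long(outages)
```

A detector that times out at 1.8 sec fires on both gaps in this made-up trace. The fraction returned by `fraction_becoming_long` is the kind of statistic behind the "over 50% of the time" observation.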

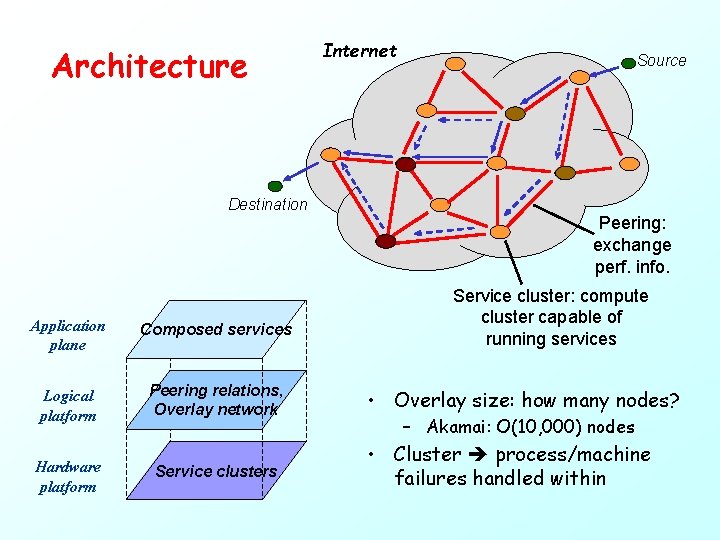

Architecture

[Figure: three-layer architecture between source and destination. Application plane: composed services. Logical platform: peering relations, overlay network. Hardware platform: service clusters, Internet.]

- Peering: exchange perf. info.
- Service cluster: compute cluster capable of running services
- Overlay size: how many nodes?
  - Akamai: O(10,000) nodes
- Cluster process/machine failures handled within

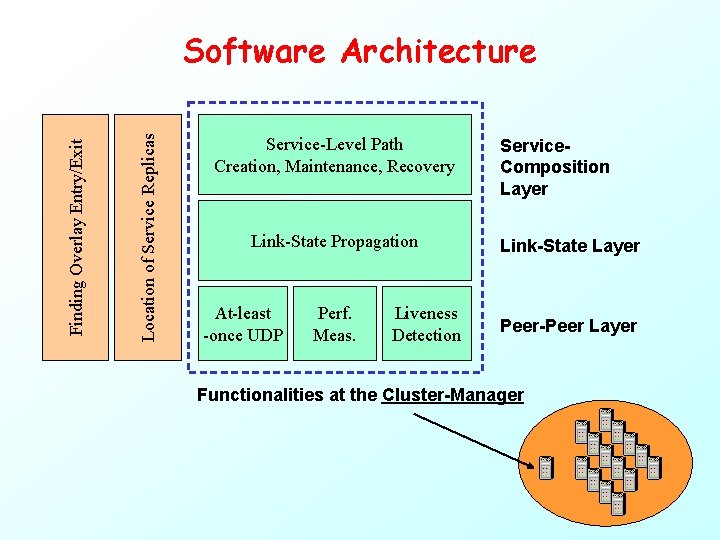

Software Architecture: Functionalities at the Cluster-Manager

[Figure: layered software stack at the cluster-manager.]

- Service-Composition Layer: service-level path creation, maintenance, recovery
- Link-State Layer: link-state propagation
- Peer-Peer Layer: at-least-once UDP, perf. meas., liveness detection
- Also shown: location of service replicas; finding overlay entry/exit

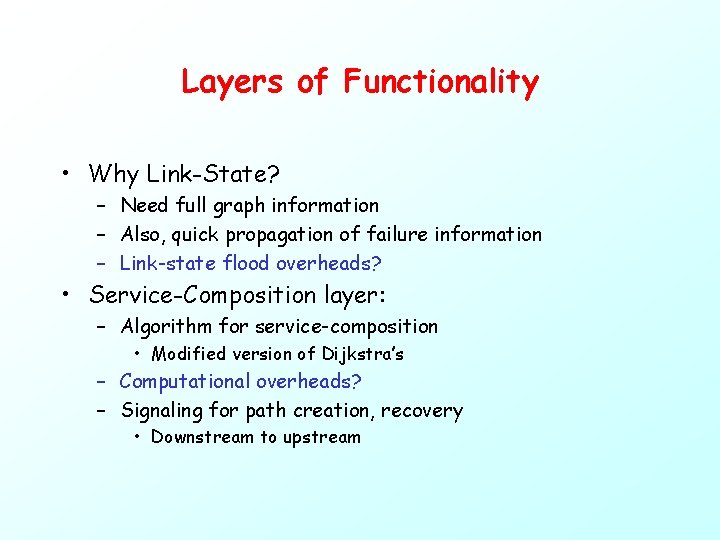

Layers of Functionality

- Why Link-State?
  - Need full graph information
  - Also, quick propagation of failure information
  - Link-state flood overheads?
- Service-Composition layer:
  - Algorithm for service-composition
    - Modified version of Dijkstra’s
  - Computational overheads?
  - Signaling for path creation, recovery
    - Downstream to upstream
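The slides do not spell out how Dijkstra's algorithm is modified. One standard way to find a cheapest path that applies k services in order is to run Dijkstra over (node, stage) states, where the stage counts the services already applied. The sketch below shows that technique only; the graph representation, the `hosts` map, the function name, and the zero processing cost are all assumptions.

```python
import heapq
from typing import Dict, List, Optional, Set, Tuple

Graph = Dict[str, Dict[str, float]]  # node -> {neighbor: link latency}
State = Tuple[str, int]              # (overlay node, number of services applied)


def compose_path(graph: Graph, hosts: Dict[str, Set[str]], services: List[str],
                 src: str, dst: str) -> Optional[Tuple[float, List[State]]]:
    """Cheapest path from src to dst that applies `services` in order.

    Returns (total cost, list of visited states), or None if no path exists.
    """
    k = len(services)
    start, goal = (src, 0), (dst, k)
    dist: Dict[State, float] = {start: 0.0}
    prev: Dict[State, State] = {}
    heap: List[Tuple[float, State]] = [(0.0, start)]
    while heap:
        d, state = heapq.heappop(heap)
        if d > dist.get(state, float("inf")):
            continue  # stale heap entry
        if state == goal:
            break
        node, i = state
        # Option 1: forward the data over an overlay link (stage unchanged).
        moves = [((nbr, i), w) for nbr, w in graph.get(node, {}).items()]
        # Option 2: apply the next service here, if this node hosts it.
        if i < k and services[i] in hosts.get(node, set()):
            moves.append(((node, i + 1), 0.0))  # assumption: zero processing cost
        for nxt, w in moves:
            nd = d + w
            if nd < dist.get(nxt, float("inf")):
                dist[nxt] = nd
                prev[nxt] = state
                heapq.heappush(heap, (nd, nxt))
    if goal not in dist:
        return None
    path = [goal]
    while path[-1] != start:
        path.append(prev[path[-1]])
    path.reverse()
    return dist[goal], path


# Hypothetical 4-node overlay with two replicas of a text-to-speech service.
overlay = {"src": {"A": 1.0, "B": 5.0, "dst": 1.0},
           "A": {"dst": 1.0}, "B": {"dst": 5.0}, "dst": {}}
replicas = {"A": {"t2s"}, "B": {"t2s"}}
result = compose_path(overlay, replicas, ["t2s"], "src", "dst")
```

The layered state space has about k+1 copies of each node and edge, so the cost of this search grows roughly as k times an ordinary Dijkstra run.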

Evaluation

- What is the effect of recovery mechanism on application?
- What is the scaling bottleneck?
  - Overheads:
    - Signaling messages during path recovery
    - Link-state floods
    - Graph computations
- Testbed:
  - Emulation platform
  - On Millennium cluster of workstations

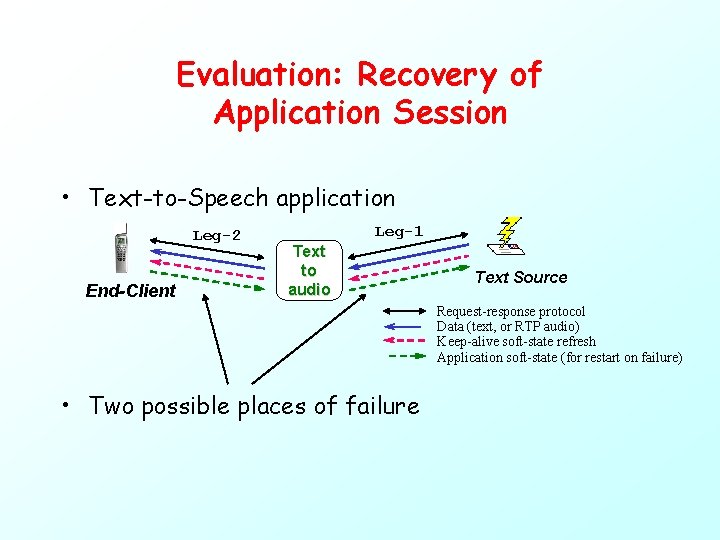

Evaluation: Recovery of Application Session

- Text-to-Speech application

[Figure: text source, leg-1, text-to-audio service, leg-2, end-client. Legend: request-response protocol; data (text, or RTP audio); keep-alive soft-state refresh; application soft-state (for restart on failure).]

- Two possible places of failure

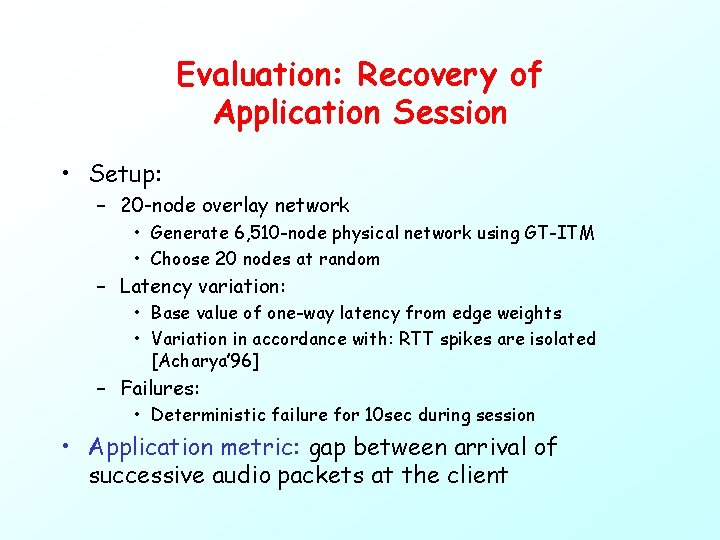

Evaluation: Recovery of Application Session

- Setup:
  - 20-node overlay network
    - Generate 6,510-node physical network using GT-ITM
    - Choose 20 nodes at random
  - Latency variation:
    - Base value of one-way latency from edge weights
    - Variation in accordance with: RTT spikes are isolated [Acharya’96]
  - Failures:
    - Deterministic failure for 10 sec during session
- Application metric: gap between arrival of successive audio packets at the client

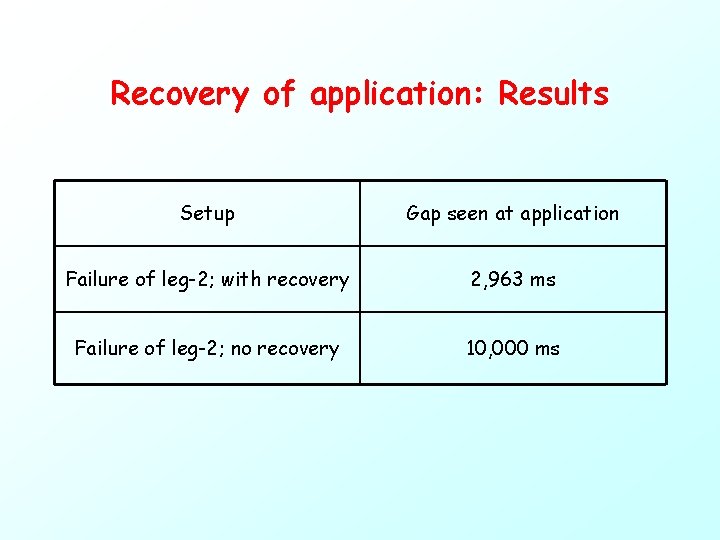

Recovery of application: Results

| Setup | Gap seen at application |
| --- | --- |
| Failure of leg-2; with recovery | 2,963 ms |
| Failure of leg-2; no recovery | 10,000 ms |

Discussion

- Recovery after failure of leg-2
  - Breakdown: 2,963 = 1,800 + O(700) + O(450)
    - 1,800 ms: timeout to conclude failure
    - 700 ms: signaling to set up alternate path
    - 450 ms: recovery of application soft-state
      - Re-processing current sentence
  - Without recovery algo.: takes as long as failure duration
- O(3 sec) recovery
  - Can be completely masked with buffering
  - Interactive apps: still much better than without recovery
- Why is quick recovery possible?
  - Failure information does not have to propagate across network
  - Overlay network is a virtual-circuit based network
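The recovery-time breakdown can be checked with a few lines of arithmetic. The component values and the measured gaps are taken from the slides; the 700 ms and 450 ms terms are order-of-magnitude figures there. Reusing the 300 ms heartbeat period from the trace study for the emulation is an assumption.

```python
HEARTBEAT_PERIOD_MS = 300      # assumption: same period as in the trace study
DETECTION_TIMEOUT_MS = 1800    # timeout to conclude failure
SIGNALING_MS = 700             # approximate: signaling to set up alternate path
SOFT_STATE_MS = 450            # approximate: recovery of application soft-state
MEASURED_GAP_MS = 2963         # gap seen at the application, with recovery
FAILURE_DURATION_MS = 10_000   # gap seen at the application, no recovery

estimated_gap_ms = DETECTION_TIMEOUT_MS + SIGNALING_MS + SOFT_STATE_MS
missed_heartbeats = DETECTION_TIMEOUT_MS // HEARTBEAT_PERIOD_MS
detection_share = DETECTION_TIMEOUT_MS / MEASURED_GAP_MS
improvement = FAILURE_DURATION_MS / MEASURED_GAP_MS

print(f"estimated gap: {estimated_gap_ms} ms (measured: {MEASURED_GAP_MS} ms)")
print(f"timeout spans {missed_heartbeats} heartbeat periods")
print(f"detection is {detection_share:.0%} of the measured gap")
print(f"gap is {improvement:.1f}x shorter than without recovery")
```

Under these numbers the failure-detection timeout accounts for most of the gap, so the timeout value matters more to the result than signaling or soft-state recovery.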

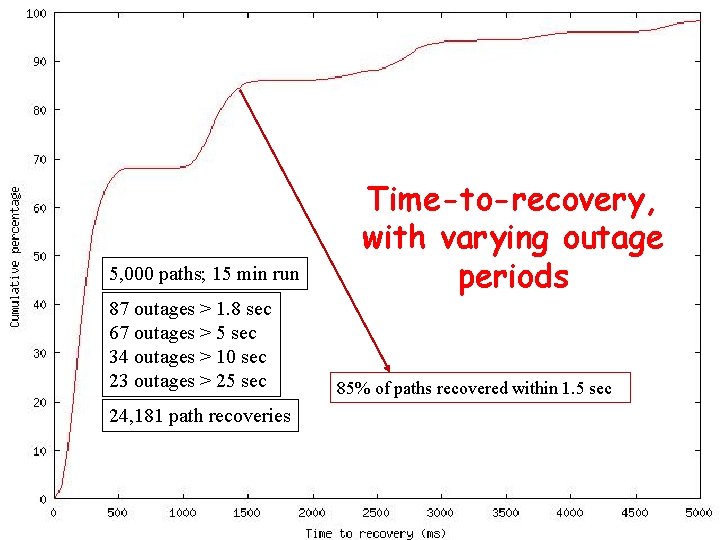

Evaluation: Scaling

- Scaling bottleneck:
  - Simultaneous recovery of all client sessions on a failed overlay link
- Setup:
  - 20-node overlay network
  - 5,000 service-level paths
  - Latency variation: same as earlier
  - Deterministic failure of 12 different links
    - 12 data points on the graph
  - Metric: average time-to-recovery, of all paths failed

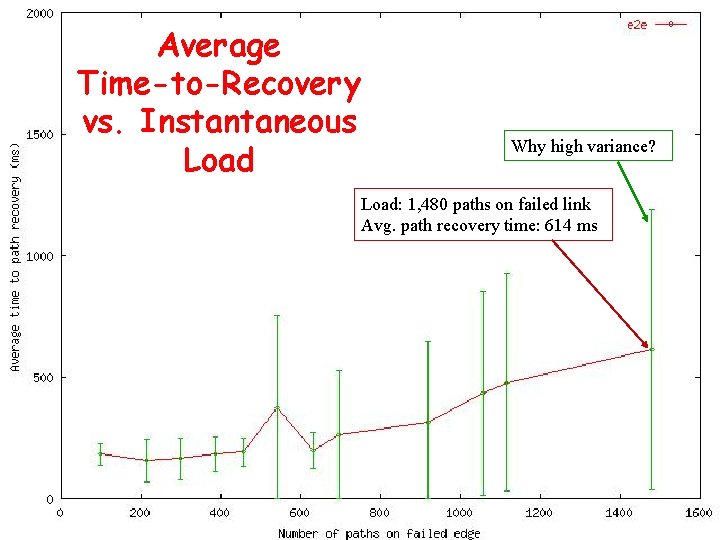

[Figure: Average Time-to-Recovery vs. Instantaneous Load. Annotations: "Why high variance?"; load: 1,480 paths on failed link; avg. path recovery time: 614 ms.]

Scaling: Discussion

- Can recover at least 1,500 paths without hitting bottlenecks
  - How many client sessions per cluster-manager?
    - Compute using #nodes, #edges in graph
    - Translates to about 700 simultaneous client sessions per cluster-manager
  - In comparison, our text-to-speech implementation can support O(15) clients per machine
  - Minimal additional provisioning for cluster-manager

Time-to-recovery, with varying outage periods

[Figure: distribution of path time-to-recovery. Annotations below.]

- 5,000 paths; 15 min run
- 87 outages > 1.8 sec
- 67 outages > 5 sec
- 34 outages > 10 sec
- 23 outages > 25 sec
- 24,181 path recoveries
- 85% of paths recovered within 1.5 sec

Other Scaling Bottlenecks?

- Link-state floods:
  - Twice for each failure
  - For a 1,000-node graph
    - Estimate #edges = 10,000
  - Failures (> 1.8 sec outage): O(once an hour) in the worst case
  - Only about 6 floods/second in the entire network!
- Graph computation:
  - O(k*E*log(N)) computation time; k = #services composed
  - For 6,510-node network, this takes 50 ms
  - Huge overhead, but: path caching helps
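The "about 6 floods/second" figure follows from the numbers on the slide. The short calculation below assumes the worst-case once-an-hour failure rate applies independently to each of the 10,000 edges, which is one reading of the slide.

```python
EDGES = 10_000                  # slide's estimate for a 1,000-node graph
FLOODS_PER_FAILURE = 2          # slide: "twice for each failure"
FAILURES_PER_EDGE_PER_HOUR = 1  # slide: O(once an hour), worst case (assumed per edge)
SECONDS_PER_HOUR = 3600

floods_per_hour = EDGES * FLOODS_PER_FAILURE * FAILURES_PER_EDGE_PER_HOUR
floods_per_second = floods_per_hour / SECONDS_PER_HOUR

print(f"{floods_per_hour} floods/hour = {floods_per_second:.2f} floods/second")
```

This gives 20,000 floods per hour, or roughly 5.6 per second across the whole overlay, which rounds to the slide's estimate of 6.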

Summary

- Service Composition: flexible service creation
- We address performance, availability, scalability
- Initial analysis: failure detection, meaningful to timeout in O(1.2-1.8 sec)
- Design: overlay network of service clusters
- Evaluation: results so far
  - Good recovery time for real-time applications: O(3 sec)
  - Good scalability: minimal additional provisioning for cluster managers
- Ongoing work:
  - Overlay topology issues: how many nodes, peering
  - Stability issues

Feedback, Questions?

Presentation made using VMWare

![References](http://slidetodoc.com/presentation_image_h2/8101c289a1e7588fd1b233b00f139f6c/image-21.jpg)

References

- [OPES’00] A. Beck et al., “Example Services for Network Edge Proxies”, Internet Draft, draft-beck-opes-esfnep-01.txt, Nov 2000
- [Labovitz’99] C. Labovitz, A. Ahuja, and F. Jahanian, “Experimental Study of Internet Stability and Wide-Area Network Failures”, Proc. of FTCS’99
- [Labovitz’00] C. Labovitz, A. Ahuja, A. Bose, and F. Jahanian, “Delayed Internet Routing Convergence”, Proc. SIGCOMM’00
- [Acharya’96] A. Acharya and J. Saltz, “A Study of Internet Round-Trip Delay”, Technical Report CS-TR-3736, U. of Maryland
- [Yajnik’99] M. Yajnik, S. Moon, J. Kurose, and D. Towsley, “Measurement and Modeling of the Temporal Dependence in Packet Loss”, Proc. INFOCOM’99
- [Balakrishnan’97] H. Balakrishnan, S. Seshan, M. Stemm, and R. H. Katz, “Analyzing Stability in Wide-Area Network Performance”, Proc. SIGMETRICS’97
