
Tight Bounds for Approximate Carathéodory and Beyond

Vahab Mirrokni (Google), Renato Paes Leme (Google), Adrian Vladu (MIT), Sam Chiu-wai Wong (UC Berkeley)

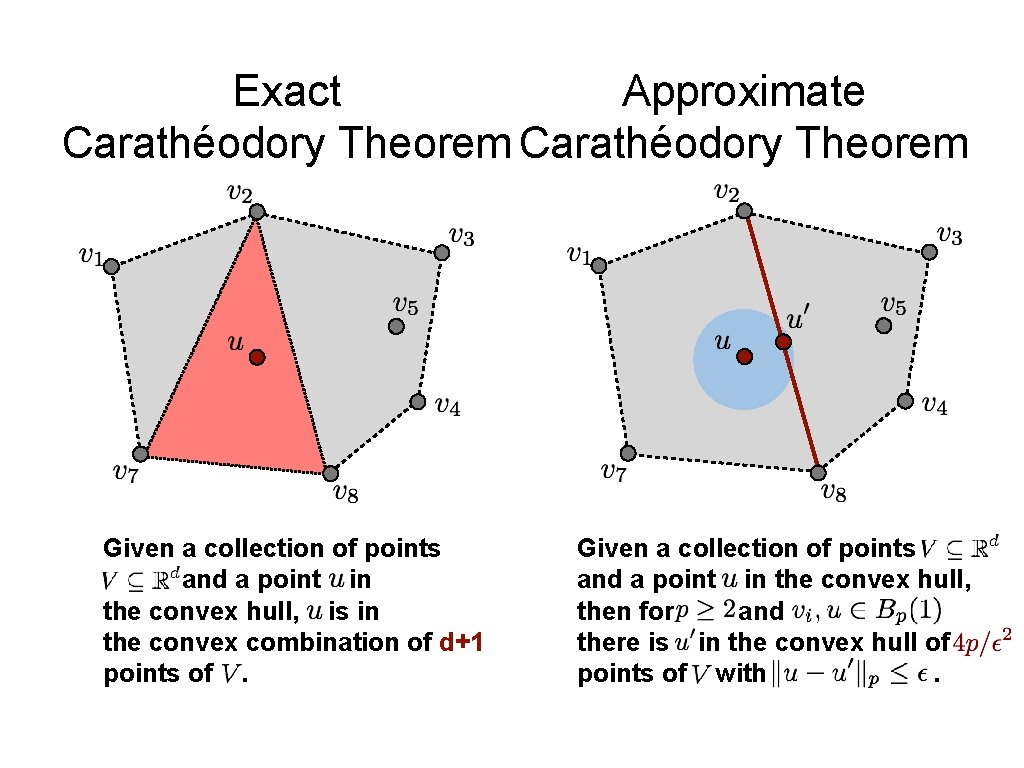

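Exact and Approximate Carathéodory Theorems

- Exact: given a collection of points X in d-dimensional space and a point u in the convex hull of X, u is a convex combination of d+1 points of X.
- Approximate: given a collection of points X in the unit ℓp ball and a point u in the convex hull of X, then for p ≥ 2 and ε > 0 there is a point u′ in the convex hull of O(p/ε²) points of X with ‖u − u′‖p ≤ ε.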

![Brief History of Approximate Carathéodory](http://slidetodoc.com/presentation_image/3533021b002ae54a6521cb7f4f6f7a6b/image-3.jpg)

Brief History of Approximate Carathéodory

- [Barman, STOC '15]: there exist O(p/ε²) points of X such that u is within ε, in ℓp norm, of their convex hull.
- Application: ε-nets for […]. There is a set of […] points that approximates […] w.r.t. the […]-norm.
- Bilinear programs: programs of the type […] can be solved by enumerating over possible values of […].
- PTAS for Nash equilibrium in s-sparse bi-matrix games in […].
- Additive PTAS for the k-densest subgraph problem for bounded degree.
- Applications in combinatorics.
- Lower bound of […], so tight for […].

![Brief History of Approximate Carathéodory](http://slidetodoc.com/presentation_image/3533021b002ae54a6521cb7f4f6f7a6b/image-4.jpg)

Brief History of Approximate Carathéodory

- [Barman, STOC '15]: there exist O(p/ε²) points of X such that u is within ε, in ℓp norm, of their convex hull.
- [Maurey, 1980]: functional analysis.
- [Shalev-Shwartz, Srebro and Zhang, 2010]: sparsity/accuracy tradeoffs in linear regression.
- [Novikoff, 1962]: analysis of the perceptron algorithm implies an […].
- This paper:
  - A deterministic […]-time algorithm.
  - A lower bound showing that Ω(p/ε²) sparsity is necessary for the ℓp version.

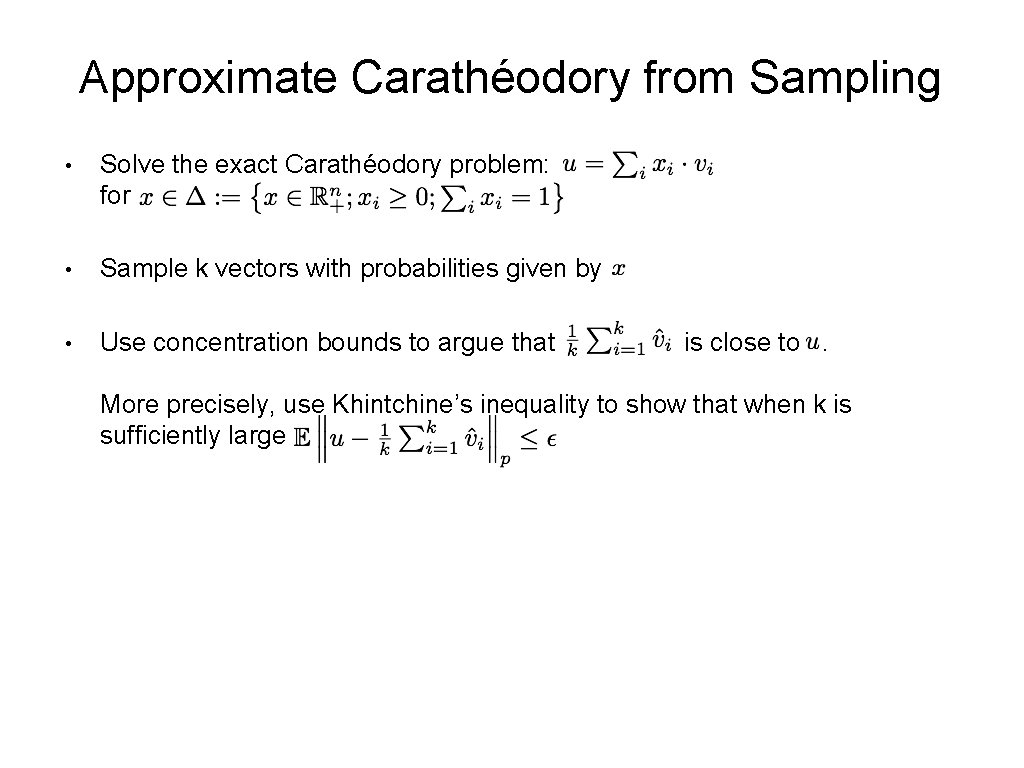

Sparsification via Sampling

[Figure labels: exact solution; approximate optimal solution]

- Plan #1: (1) solve the exact problem; (2) sample from it.

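Approximate Carathéodory from Sampling

- Solve the exact Carathéodory problem: write u = Σᵢ λᵢ xᵢ for weights λ in the simplex (λᵢ ≥ 0, Σᵢ λᵢ = 1).
- Sample k vectors from X with probabilities given by λ.
- Use concentration bounds to argue that the average of the sampled vectors is close to u. More precisely, use Khintchine's inequality to show that the ℓp distance between the sample average and u is at most ε when k is sufficiently large.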
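The sampling plan can be illustrated with a minimal Python sketch. It assumes the exact convex combination (the weights) is already known, and the toy instance (standard basis vectors with uniform weights) is made up for illustration; the names `sample_sparse_combination` and `lp_norm` are hypothetical helpers, not taken from the slides.

```python
import random


def sample_sparse_combination(points, weights, k, seed=0):
    """Average k points drawn i.i.d. with probabilities `weights`.

    Returns the averaged point and the sorted list of distinct indices used.
    """
    rng = random.Random(seed)
    dim = len(points[0])
    chosen = rng.choices(range(len(points)), weights=weights, k=k)
    avg = [0.0] * dim
    for i in chosen:
        for j in range(dim):
            avg[j] += points[i][j] / k
    return avg, sorted(set(chosen))


def lp_norm(v, p):
    """Plain l_p norm of a vector given as a list of floats."""
    return sum(abs(x) ** p for x in v) ** (1.0 / p)


# Toy instance (an assumption for illustration): the n standard basis
# vectors, with u equal to their uniform average.
n = 50
points = [[1.0 if i == j else 0.0 for j in range(n)] for i in range(n)]
weights = [1.0 / n] * n
u = [1.0 / n] * n

approx, support = sample_sparse_combination(points, weights, k=20)
err = lp_norm([a - b for a, b in zip(u, approx)], 2)
print(f"support size = {len(support)}, l2 error = {err:.3f}")
```

The returned point is a convex combination of at most k input points by construction; how small the error gets for a given k is exactly what the concentration argument has to establish.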

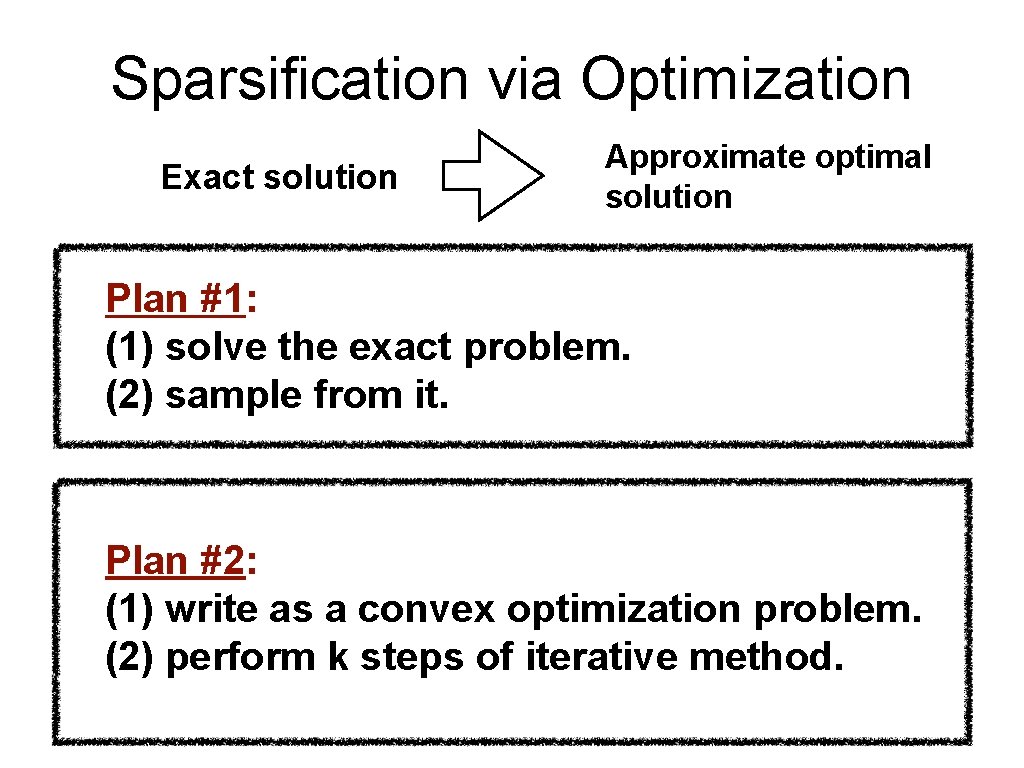

Sparsification via Optimization

[Figure labels: exact solution; approximate optimal solution]

- Plan #1: (1) solve the exact problem; (2) sample from it.
- Plan #2: (1) write as a convex optimization problem; (2) perform k steps of an iterative method.

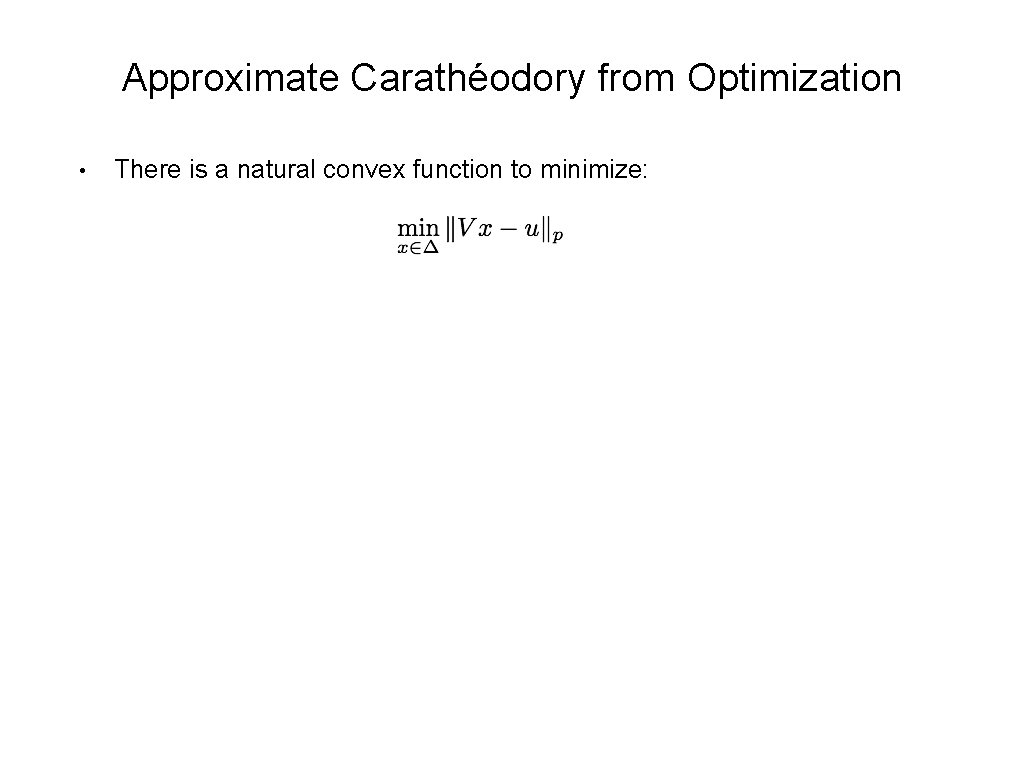

Approximate Carathéodory from Optimization

- There is a natural convex function to minimize:

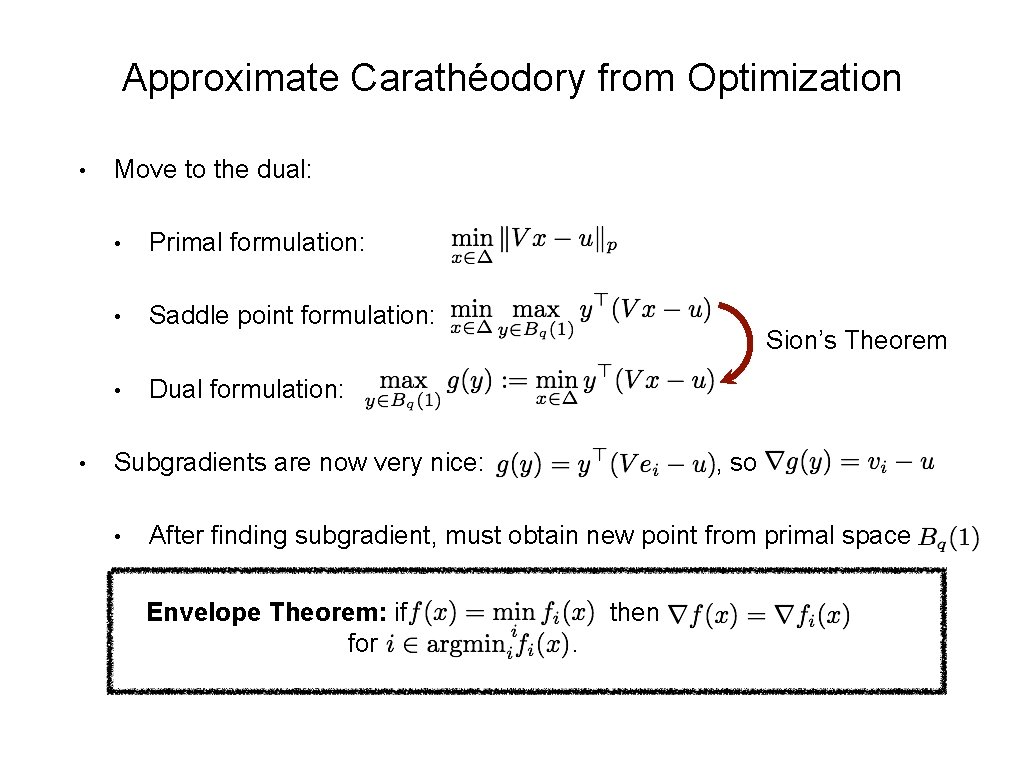

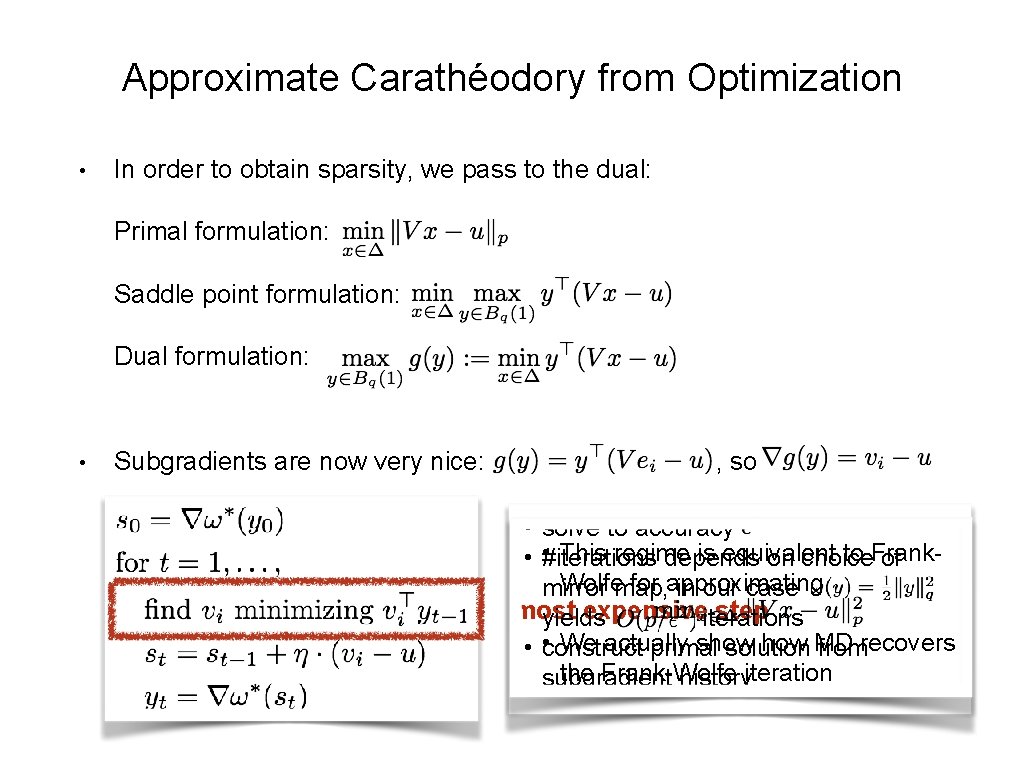

Approximate Carathéodory from Optimization

- Move to the dual:
  - Primal formulation: […]
  - Saddle point formulation: […]
  - Dual formulation: […] (by Sion's Theorem)
- Subgradients are now very nice: […], so […].
- After finding a subgradient, we must obtain a new point from the primal space.
- Envelope Theorem: if […] for […], then […].
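A hedged sketch of how the three formulations are commonly written. It assumes the input points are the columns A_i of a matrix A, that Δ is the probability simplex, and that q is the dual exponent with 1/p + 1/q = 1. The symbols A, x, y, q, and g are introduced here for illustration; the slides' own notation and sign conventions may differ.

```latex
\begin{align*}
\text{Primal:} \quad
  & \min_{x \in \Delta} \; \lVert Ax - u \rVert_p \\
\text{Saddle point:} \quad
  & \min_{x \in \Delta} \; \max_{\lVert y \rVert_q \le 1} \; y^\top (Ax - u) \\
\text{Dual:} \quad
  & \max_{\lVert y \rVert_q \le 1} \; g(y),
  \qquad g(y) = \min_{x \in \Delta} y^\top (Ax - u)
             = \min_{i} \; y^\top (A_i - u)
\end{align*}
```

Under these assumptions, Sion's theorem justifies exchanging the min and the max, since both feasible sets are convex and compact and the objective is bilinear. By the envelope theorem, if the index i* attains the minimum in g(y), then A_{i*} − u is a supergradient of the concave function g at y. Each such supergradient involves a single input point, which is where the sparsity comes from: k iterations touch at most k points.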

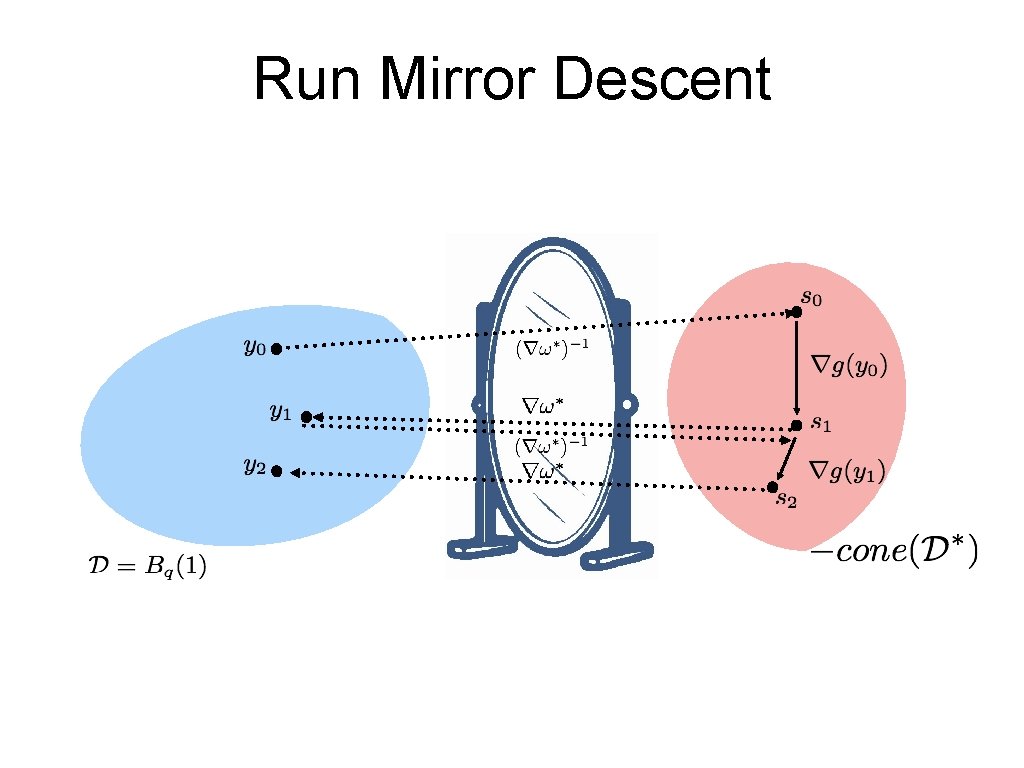

Run Mirror Descent

Approximate Carathéodory from Optimization

- In order to obtain sparsity, we pass to the dual:
  - Primal formulation: […]
  - Saddle point formulation: […]
  - Dual formulation: […]
- Subgradients are now very nice: […], so […].
- Solve the dual to accuracy […].
- The number of iterations depends on the choice of mirror map; in our case […] yields […] iterations.
- Construct the primal solution from the subgradient history.
- This regime is equivalent to Frank-Wolfe for approximating […] (most expensive step).
- We actually show that mirror descent (MD) recovers the Frank-Wolfe iteration.
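Since the slide relates the method to Frank-Wolfe, here is a minimal Python sketch of textbook Frank-Wolfe on the swapped-in objective ½‖v − u‖₂² over the convex hull of the points. This is not the authors' mirror-descent algorithm for general ℓp: the ℓ2 objective, the step size 2/(t+2), the toy instance, and the name `frank_wolfe_caratheodory` are all assumptions made for illustration.

```python
import math


def frank_wolfe_caratheodory(points, u, k):
    """Run k Frank-Wolfe steps on f(v) = 0.5 * ||v - u||_2^2 over conv(points).

    Returns the final point v and a dict {index: weight} that expresses v
    as a convex combination of at most k input points.
    """
    weights = {0: 1.0}          # start at an arbitrary vertex
    v = list(points[0])
    for t in range(k):
        grad = [vj - uj for vj, uj in zip(v, u)]
        # Linear minimization oracle: the vertex minimizing <grad, x>.
        best = min(
            range(len(points)),
            key=lambda idx: sum(g * x for g, x in zip(grad, points[idx])),
        )
        gamma = 2.0 / (t + 2)   # standard step size; gamma = 1 at t = 0
        scaled = {j: (1 - gamma) * w for j, w in weights.items()}
        weights = {j: w for j, w in scaled.items() if w > 0}
        weights[best] = weights.get(best, 0.0) + gamma
        v = [(1 - gamma) * vj + gamma * xj for vj, xj in zip(v, points[best])]
    return v, weights


# Toy instance (an assumption): standard basis vectors, u = uniform average.
n = 50
points = [[1.0 if i == j else 0.0 for j in range(n)] for i in range(n)]
u = [1.0 / n] * n

v, weights = frank_wolfe_caratheodory(points, u, k=30)
err = math.sqrt(sum((a - b) ** 2 for a, b in zip(v, u)))
print(f"support size = {len(weights)}, l2 error = {err:.3f}")
```

Each iteration adds at most one new point to the combination, so the sparsity is bounded by the iteration count, and the standard Frank-Wolfe analysis for smooth objectives gives an ℓ2 error that decays like O(1/√k).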

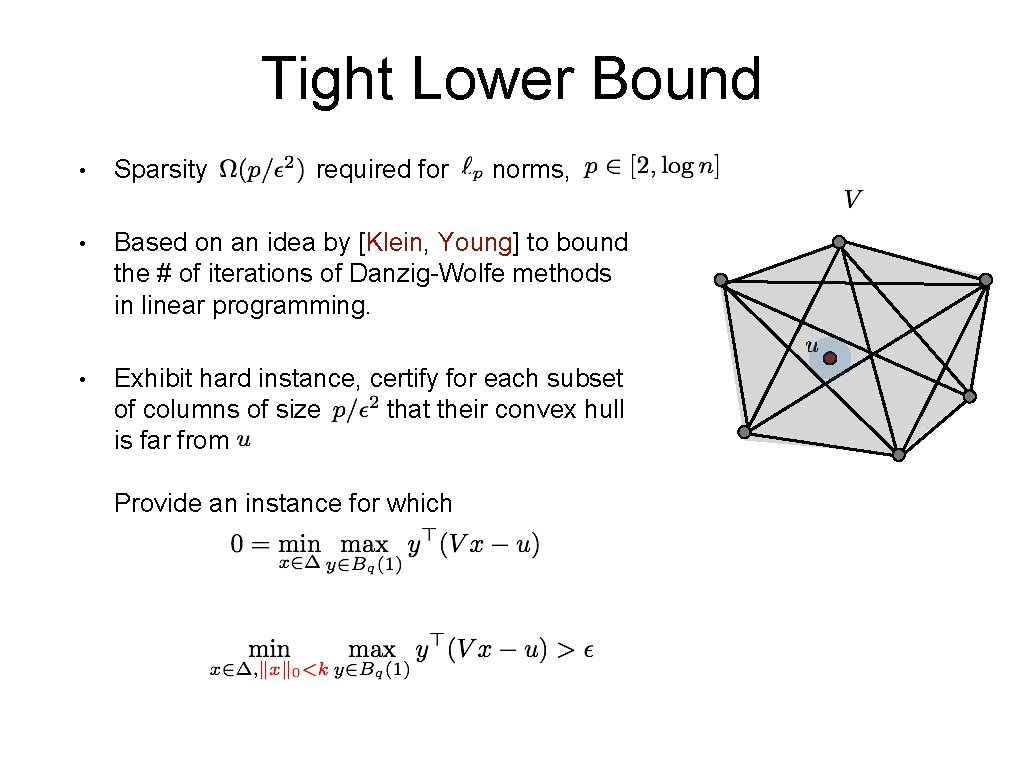

Tight Lower Bound

- Sparsity Ω(p/ε²) is required for ℓp norms, […].
- Based on an idea by [Klein, Young] to bound the number of iterations of Dantzig-Wolfe methods in linear programming.
- Exhibit a hard instance: provide an instance for which […], and certify for each subset of columns of size […] that their convex hull is far from […].

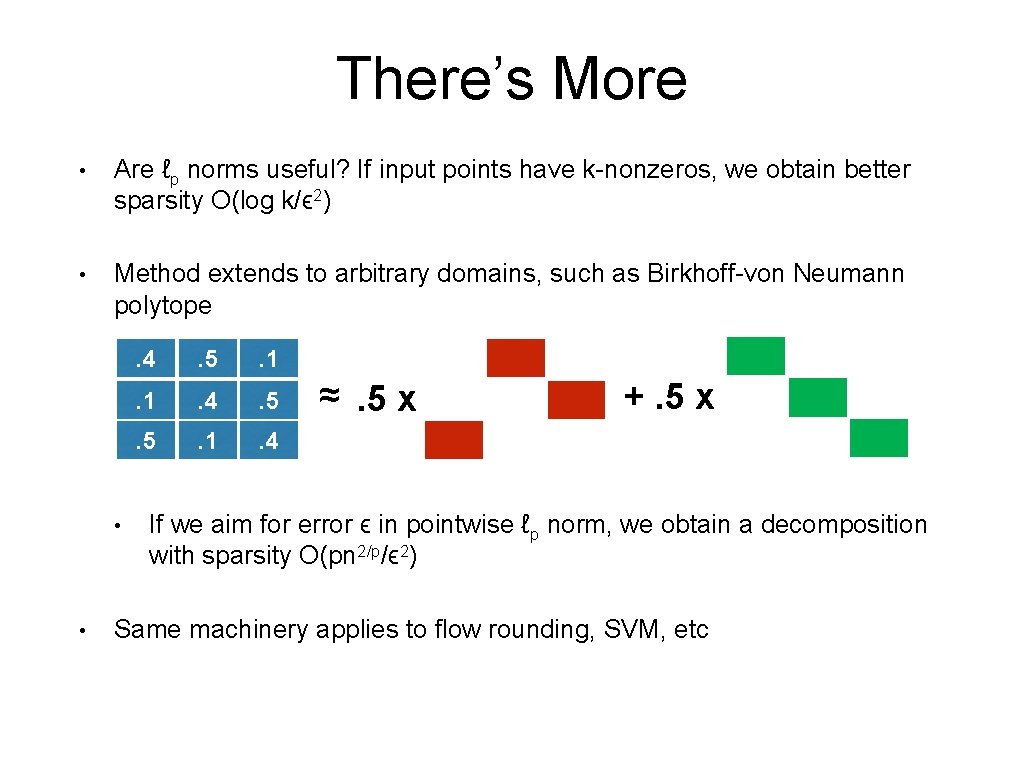

There's More

- Are ℓp norms useful? If the input points have k nonzeros, we obtain better sparsity O(log k/ε²).
- The method extends to arbitrary domains, such as the Birkhoff-von Neumann polytope. If we aim for error ε in pointwise ℓp norm, we obtain a decomposition with sparsity O(p·n^(2/p)/ε²).
- [Figure: a matrix with entries such as .4, .5, .1 approximated as .5 × […] + .5 × […]]
- The same machinery applies to flow rounding, SVM, etc.

Thank You!
