Sieve of Eratosthenes by Fola Olagbemi Outline What

Sieve of Eratosthenes by Fola Olagbemi

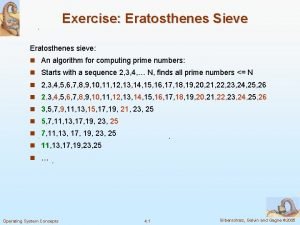

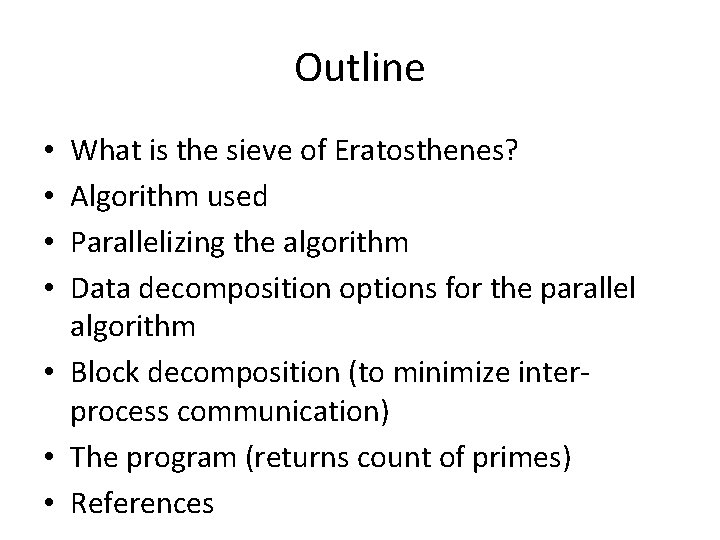

Outline What is the sieve of Eratosthenes? Algorithm used Parallelizing the algorithm Data decomposition options for the parallel algorithm • Block decomposition (to minimize interprocess communication) • The program (returns count of primes) • References • •

What is the sieve of Eratosthenes? •

Algorithm •

Parallelizing the algorithm • Step 3 a – key parallel computation step • Reduction needed in each iteration to determine new value of k (process 0 – controller - may not have the next k) • Broadcast needed after reduction to inform all processes of new k. • The goal in parallelizing is to reduce interprocess communication as much as possible.

Data decomposition options • Goal of data decomposition approach chosen – each process should have roughly the same number of values to work on (optimal load balancing) • Decomposition options: – Interleaved data decomposition – Block data decomposition: • Grouped • Distributed

Data decomposition options (contd. ) • Interleaved data decomposition (e. g. ): – Process 0 processes numbers 2, 2+p, 2+2 p, … – Process 1 processes numbers 3, 3+p, 3+2 p, … • Advantage – easy to know which process is working on the value in any array index i • Disadvantages – It can lead to significant load imbalance among processes (depending on how array elements are divided up among processes) – May require a significant amount of reduction/broadcast (communication overheads)

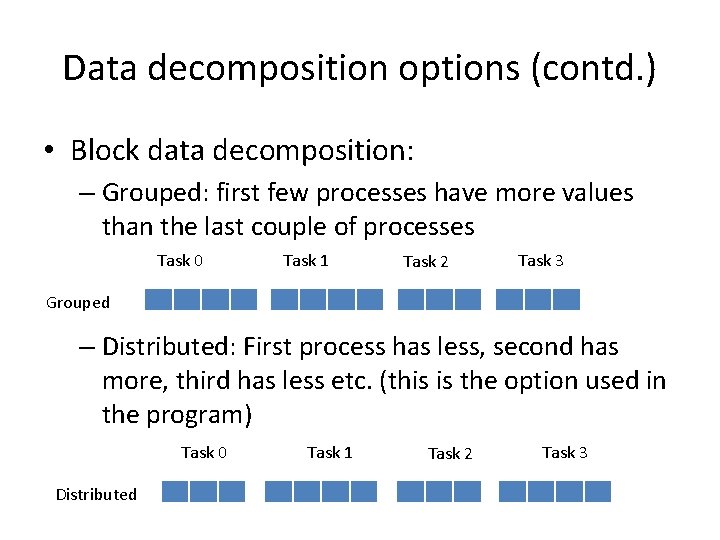

Data decomposition options (contd. ) • Block data decomposition: – Grouped: first few processes have more values than the last couple of processes Task 0 Task 1 Task 2 Task 3 Grouped – Distributed: First process has less, second has more, third has less etc. (this is the option used in the program) Task 0 Distributed Task 1 Task 2 Task 3

Data decomposition (contd. ) • Grouped data decomposition: – Let r = n mod p (n – number of values in the list, p – number of processes) – If r = 0, all processes get block of values of size n/p – Else, first r processes get blocks of size ceil(n/p), remaining processes blocks of size (floor(n/p) • Distributed data decomposition: – First element controlled by process i is floor(i*n/p) – Last element controlled by process I is floor((i+1)n/p) - 1

Data decomposition (Contd. ) • To reduce communication (i. e. reduce number of MPI_Reduce calls to 1), all the primes used for sieving must be held by process 0. • This requirement places a limitation on the number of processes that can be used in this approach.

References • Michael J. Quinn, Parallel Programming in C with MPI and Open. MP; Mc. Graw Hill; 2004

Questions?

- Slides: 12