Presented by Jingxuan Zhang, Dalian University of Technology, China, Mar. 2016

He Jiang, Jingxuan Zhang, Xiaochen Li, Zhilei Ren, and David Lo. "A More Accurate Model for Finding Tutorial Segments Explaining APIs," in Proc. of the 23rd IEEE International Conference on Software Analysis, Evolution, and Reengineering.

1 2 Tutorial Datasets 3 4

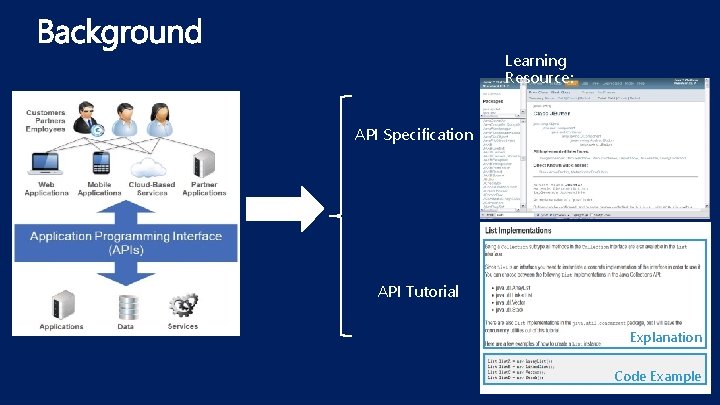

Learning resources: API specification; API tutorial (explanation, code example)

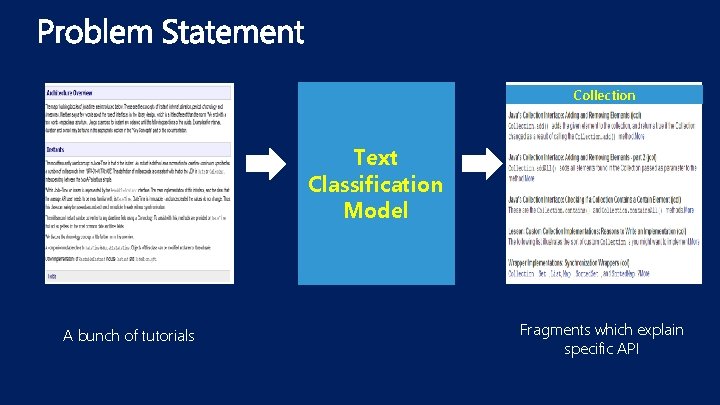

A collection of tutorials → Text classification model → Fragments that explain a specific API

Challenges:

1. APIs are scattered in different parts of the tutorial.
2. APIs are often mentioned in a fragment without being the topic of it.

Literature shortcomings: The existing models do not consider the domain-specific knowledge of APIs effectively:

1. Co-occurring APIs are important indicators for discovering fragments that explain an API.
2. The API usage experience shared in technical forums can be leveraged.
3. The specifications written by experienced developers can be exploited.


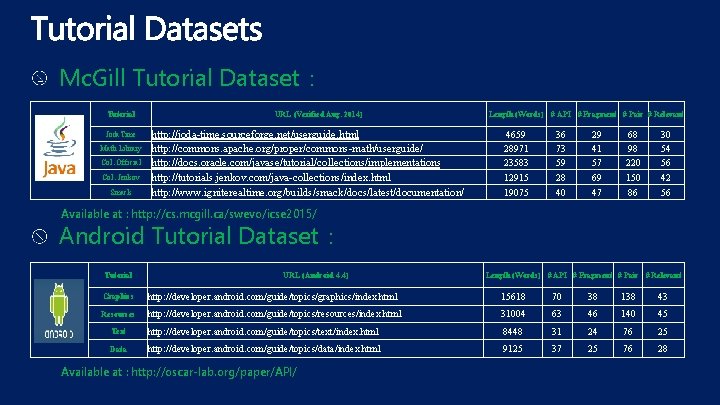

McGill Tutorial Dataset:

| Tutorial | URL (verified Aug. 2014) | Length (words) | # API | # Fragment | # Pair | # Relevant |
|---|---|---|---|---|---|---|
| JodaTime | http://joda-time.sourceforge.net/userguide.html | 4659 | 36 | 29 | 68 | 30 |
| Math Library | http://commons.apache.org/proper/commons-math/userguide/ | 28971 | 73 | 41 | 98 | 54 |
| Col. Official | http://docs.oracle.com/javase/tutorial/collections/implementations | 23583 | 59 | 57 | 220 | 56 |
| Col. Jenkov | http://tutorials.jenkov.com/java-collections/index.html | 12915 | 28 | 69 | 150 | 42 |
| Smack | http://www.igniterealtime.org/builds/smack/docs/latest/documentation/ | 19075 | 40 | 47 | 86 | 56 |

Available at: http://cs.mcgill.ca/swevo/icse2015/

Android Tutorial Dataset:

| Tutorial | URL (Android 4.4) | Length (words) | # API | # Fragment | # Pair | # Relevant |
|---|---|---|---|---|---|---|
| Graphics | http://developer.android.com/guide/topics/graphics/index.html | 15618 | 70 | 38 | 138 | 43 |
| Resources | http://developer.android.com/guide/topics/resources/index.html | 31004 | 63 | 46 | 140 | 45 |
| Text | http://developer.android.com/guide/topics/text/index.html | 8448 | 31 | 24 | 76 | 25 |
| Data | http://developer.android.com/guide/topics/data/index.html | 9125 | 37 | 25 | 76 | 28 |

Available at: http://oscar-lab.org/paper/API/

1. They explain basic Android development topics that are relevant to many developers.
2. They are easy to understand, so they can be annotated quickly and accurately.
3. They have different lengths and formats, which simulate different situations.


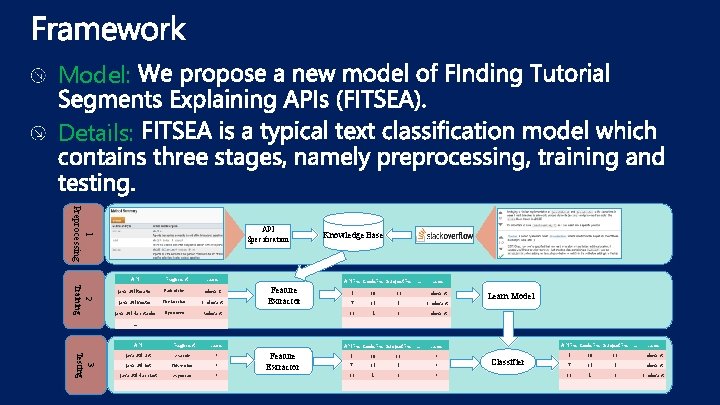

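Model details:

1. Preprocessing (API Specification, Knowledge Base)

2. Training: labeled API-fragment pairs pass through the Feature Extractor, and a model is learned from the resulting feature vectors.

| API | Fragment | Label |
|---|---|---|
| java.util.Iterator | Each of the … | relevant |
| java.util.Vector | The fact that … | irrelevant |
| java.util.Hashtable | If you need… | relevant |
| … | … | … |

| APIFre | CodeFre | SubjectFre | … | Label |
|---|---|---|---|---|
| 5 | 19 | 11 | … | relevant |
| 7 | 35 | 5 | … | irrelevant |
| 16 | 2 | 1 | … | relevant |
| … | … | … | … | … |

3. Testing: unlabeled API-fragment pairs pass through the same Feature Extractor, and the Classifier assigns a label to each feature vector.

| API | Fragment | Label |
|---|---|---|
| java.util.List | As a rule… | ? |
| java.util.Set | This section … | ? |
| java.util.HashSet | As you can … | ? |
| … | … | … |

| APIFre | CodeFre | SubjectFre | … | Label (before) | Label (after classification) |
|---|---|---|---|---|---|
| 5 | 19 | 11 | … | ? | relevant |
| 7 | 35 | 5 | … | ? | relevant |
| 16 | 2 | 1 | … | ? | irrelevant |
| … | … | … | … | … | … |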

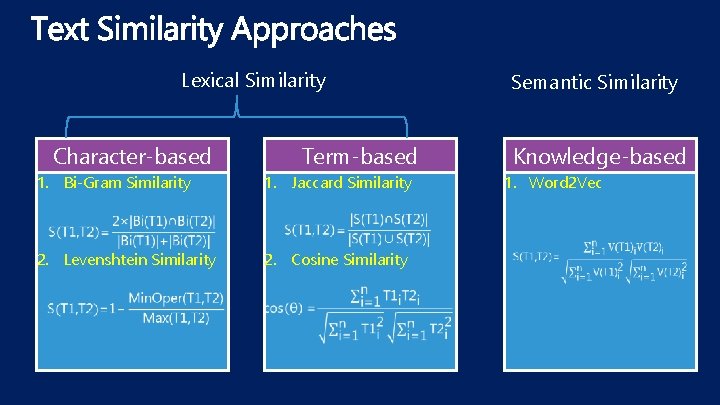

Lexical similarity:

- Character-based: 1. Bi-Gram similarity, 2. Levenshtein similarity
- Term-based: 1. Jaccard similarity, 2. Cosine similarity

Semantic similarity:

- Knowledge-based: 1. Word2Vec
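The four lexical measures named above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the slides do not define the measures, so the Bi-Gram similarity is assumed to be the Dice coefficient over character bigrams, the Levenshtein similarity is assumed to be one minus the edit distance normalized by the longer string, and tokens are obtained by simple whitespace splitting. All function names are hypothetical. Word2Vec is omitted because it needs a trained embedding model.

```python
import math
from collections import Counter


def char_bigrams(text):
    """Return the set of character bigrams of a lowercased string."""
    s = text.lower()
    return {s[i:i + 2] for i in range(len(s) - 1)}


def bigram_similarity(a, b):
    """Dice coefficient over character-bigram sets (assumed definition)."""
    x, y = char_bigrams(a), char_bigrams(b)
    if not x and not y:
        return 1.0
    return 2 * len(x & y) / (len(x) + len(y))


def levenshtein_distance(a, b):
    """Classic dynamic-programming edit distance, one row at a time."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, 1):
        curr = [i]
        for j, cb in enumerate(b, 1):
            cost = 0 if ca == cb else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # substitution
        prev = curr
    return prev[-1]


def levenshtein_similarity(a, b):
    """1 - distance / length of the longer string (assumed normalization)."""
    longest = max(len(a), len(b))
    return 1.0 if longest == 0 else 1 - levenshtein_distance(a, b) / longest


def jaccard_similarity(a, b):
    """Shared tokens divided by all distinct tokens."""
    x, y = set(a.lower().split()), set(b.lower().split())
    if not x and not y:
        return 1.0
    return len(x & y) / len(x | y)


def cosine_similarity(a, b):
    """Cosine of the angle between term-frequency vectors."""
    x, y = Counter(a.lower().split()), Counter(b.lower().split())
    dot = sum(x[t] * y[t] for t in x)
    norm = (math.sqrt(sum(v * v for v in x.values()))
            * math.sqrt(sum(v * v for v in y.values())))
    return dot / norm if norm else 0.0
```

The character-based measures compare spellings, so they tolerate small variations in an identifier, while the term-based measures compare bags of words and ignore word order.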

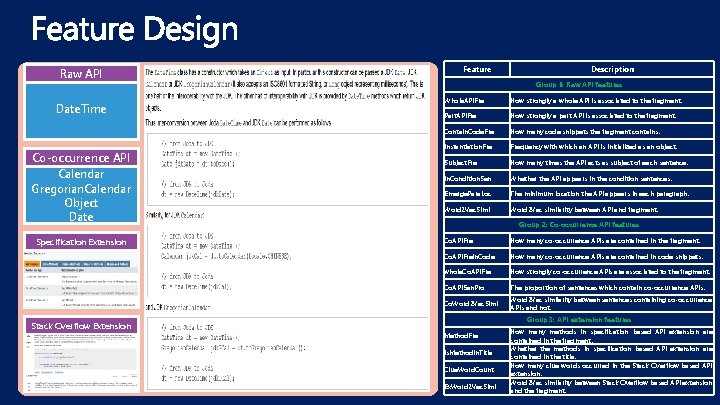

Example: raw API: DateTime; co-occurrence APIs: Calendar, GregorianCalendar, Object, Date; API extensions: specification extension, Stack Overflow extension.

| Feature | Description |
|---|---|
| **Group 1: Raw API features** | |
| WholeAPIFre | How strongly a whole API is associated with the fragment. |
| PartAPIFre | How strongly a part API is associated with the fragment. |
| ContainCodeFre | How many code snippets the fragment contains. |
| Instantiation | Frequency with which an API is initialized as an object. |
| SubjectFre | How many times the API acts as the subject of a sentence. |
| InConditionSen | Whether the API appears in conditional sentences. |
| EmergeParaLoc | The minimum location at which the API appears in each paragraph. |
| Word2VecSimi | Word2Vec similarity between the API and the fragment. |
| **Group 2: Co-occurrence API features** | |
| CoAPIFre | How many co-occurrence APIs are contained in the fragment. |
| CoAPIFreInCode | How many co-occurrence APIs are contained in code snippets. |
| wholeCoAPIFre | How strongly co-occurrence APIs are associated with the fragment. |
| CoAPISenPro | The proportion of sentences that contain co-occurrence APIs. |
| CoWord2VecSimi | Word2Vec similarity between sentences that contain co-occurrence APIs and those that do not. |
| **Group 3: API extension features** | |
| MethodFre | How many methods in the specification-based API extension are contained in the fragment. |
| IsMethodInTitle | Whether the methods in the specification-based API extension are contained in the title. |
| ClueWordCount | How many clue words occur in the Stack Overflow-based API extension. |
| ExWord2VecSimi | Word2Vec similarity between the Stack Overflow-based API extension and the fragment. |
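To make a few of these definitions concrete, the sketch below computes four of the counting features for one fragment. It is a rough illustration, not the extractor used in FITSEA: the fragment representation (a list of sentences plus a list of code snippets), the whole-word matching rule, the choice to count distinct co-occurrence APIs, and the example sentences are all assumptions. Only the API names come from the DateTime example above.

```python
import re


def mentions(api, text):
    """True if `api` occurs in `text` as a whole word (case-sensitive)."""
    return re.search(r"\b" + re.escape(api) + r"\b", text) is not None


def co_occurrence_features(sentences, code_snippets, co_apis):
    """Compute a few counting features for one fragment (hypothetical helper)."""
    text = " ".join(sentences)
    code = "\n".join(code_snippets)
    with_co_api = [s for s in sentences
                   if any(mentions(a, s) for a in co_apis)]
    return {
        # Number of code snippets in the fragment.
        "ContainCodeFre": len(code_snippets),
        # Distinct co-occurrence APIs mentioned in the prose (assumed counting).
        "CoAPIFre": sum(mentions(a, text) for a in co_apis),
        # Distinct co-occurrence APIs mentioned inside code snippets.
        "CoAPIFreInCode": sum(mentions(a, code) for a in co_apis),
        # Share of sentences that mention at least one co-occurrence API.
        "CoAPISenPro": len(with_co_api) / len(sentences) if sentences else 0.0,
    }


# Co-occurrence APIs of DateTime, taken from the example above.
co_apis = ["Calendar", "GregorianCalendar", "Object", "Date"]

# Made-up fragment text, for illustration only.
sentences = [
    "A DateTime can be converted to a Calendar.",
    "Use GregorianCalendar for the default calendar system.",
    "The result is immutable.",
    "Call toDate() to obtain a Date.",
]
code_snippets = ["Calendar cal = dt.toCalendar(Locale.getDefault());"]

features = co_occurrence_features(sentences, code_snippets, co_apis)
print(features)
```

Whole-word matching keeps `Date` from matching inside `DateTime` or `toDate`, which matters because the raw API and its co-occurrence APIs often share name fragments.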

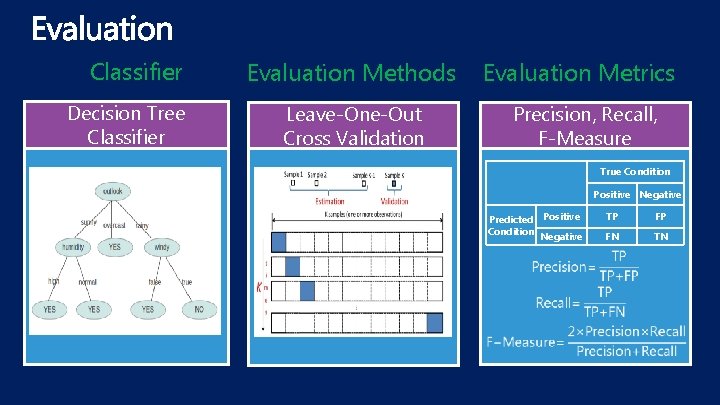

Classifier: Decision Tree Classifier

Evaluation method: Leave-One-Out Cross Validation

Evaluation metrics: Precision, Recall, F-Measure

| | True condition: Positive | True condition: Negative |
|---|---|---|
| Predicted condition: Positive | TP | FP |
| Predicted condition: Negative | FN | TN |
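The three metrics follow from the confusion-matrix cells in the standard way: precision is TP / (TP + FP), recall is TP / (TP + FN), and the F-measure is their harmonic mean. A minimal sketch is given below. The function name is hypothetical, and the example counts (TP = 24, FP = 4, FN = 6) are not stated in the slides; they are an assumption chosen for illustration because they sum to the 30 relevant pairs listed for JodaTime.

```python
def precision_recall_f(tp, fp, fn):
    """Return (precision, recall, F-measure) from confusion-matrix counts."""
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    if precision + recall == 0:
        return precision, recall, 0.0
    f_measure = 2 * precision * recall / (precision + recall)
    return precision, recall, f_measure


# Assumed counts for illustration: 24 relevant pairs found,
# 4 irrelevant pairs wrongly reported, 6 relevant pairs missed.
p, r, f = precision_recall_f(tp=24, fp=4, fn=6)
print(f"P = {p:.2%}, R = {r:.2%}, F-M = {f:.2%}")
```

True negatives do not enter any of the three metrics, so a model cannot score well simply by rejecting the many irrelevant API-fragment pairs.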


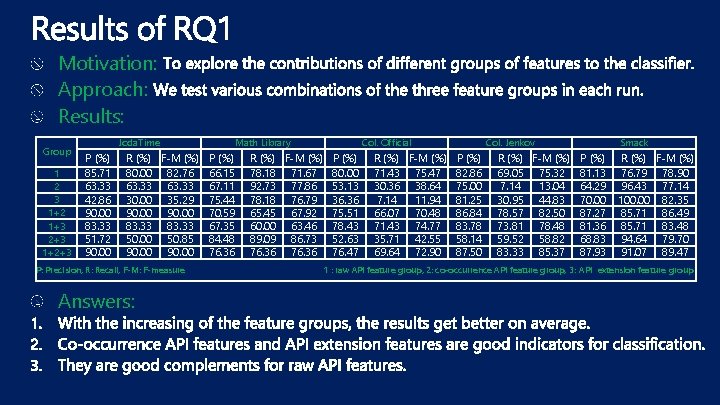

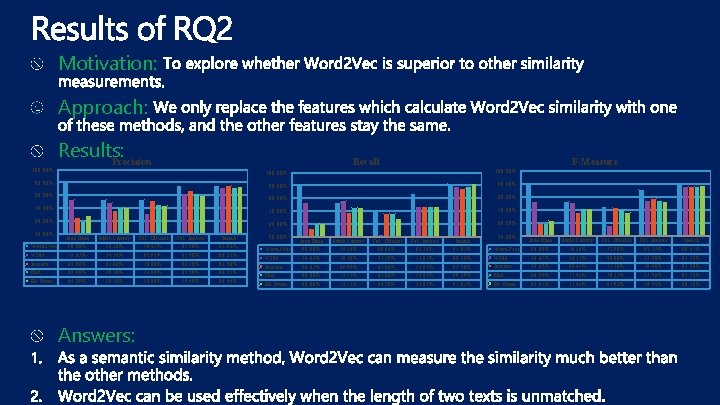

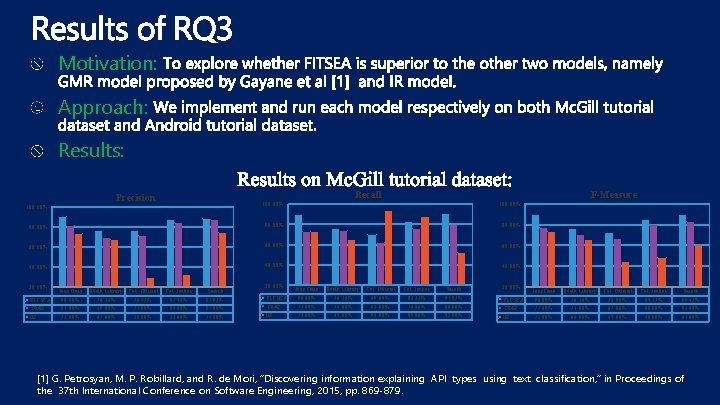

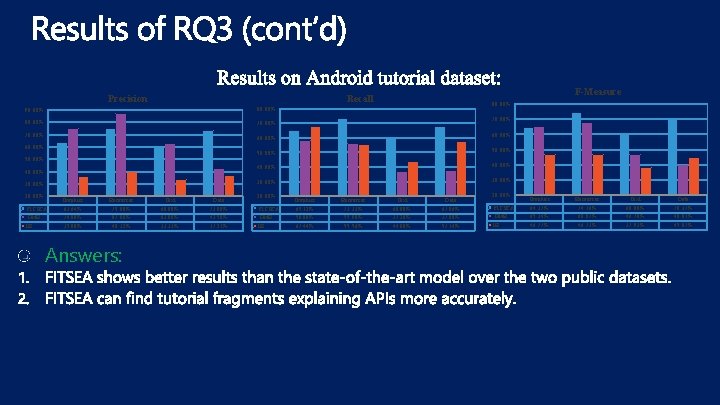

RQ 1: How does FITSEA perform when using different groups of features? The features are divided into three groups. In this RQ, we explore the performance of FITSEA when applying different groups of features.

RQ 2: Does Word2Vec semantic similarity achieve better results than the other similarity calculation methods? In this RQ, we compare Word2Vec against four other methods, namely Bi-Gram, Levenshtein, Jaccard, and Cosine similarity.

RQ 3: Can FITSEA perform better than the other models over the two tutorial datasets? In this RQ, we explore whether FITSEA can discover more explaining fragments for APIs than the other models, namely the GMR model proposed by Petrosyan et al. [1] and the IR model.

[1] G. Petrosyan, M. P. Robillard, and R. de Mori, "Discovering information explaining API types using text classification," in Proc. of the 37th International Conference on Software Engineering, 2015, pp. 869-879.

Results: performance of FITSEA with different feature groups (all values in %).

| Tutorial | Metric | 1 | 2 | 3 | 1+2 | 1+3 | 2+3 | 1+2+3 |
|---|---|---|---|---|---|---|---|---|
| JodaTime | P | 85.71 | 63.33 | 42.86 | 90.00 | 83.33 | 51.72 | 90.00 |
| JodaTime | R | 80.00 | 63.33 | 30.00 | 90.00 | 83.33 | 50.00 | 90.00 |
| JodaTime | F-M | 82.76 | 63.33 | 35.29 | 90.00 | 83.33 | 50.85 | 90.00 |
| Math Library | P | 66.15 | 67.11 | 75.44 | 70.59 | 67.35 | 84.48 | 76.36 |
| Math Library | R | 78.18 | 92.73 | 78.18 | 65.45 | 60.00 | 89.09 | 76.36 |
| Math Library | F-M | 71.67 | 77.86 | 76.79 | 67.92 | 63.46 | 86.73 | 76.36 |
| Col. Official | P | 80.00 | 53.13 | 36.36 | 75.51 | 78.43 | 52.63 | 76.47 |
| Col. Official | R | 71.43 | 30.36 | 7.14 | 66.07 | 71.43 | 35.71 | 69.64 |
| Col. Official | F-M | 75.47 | 38.64 | 11.94 | 70.48 | 74.77 | 42.55 | 72.90 |
| Col. Jenkov | P | 82.86 | 75.00 | 81.25 | 86.84 | 83.78 | 58.14 | 87.50 |
| Col. Jenkov | R | 69.05 | 7.14 | 30.95 | 78.57 | 73.81 | 59.52 | 83.33 |
| Col. Jenkov | F-M | 75.32 | 13.04 | 44.83 | 82.50 | 78.48 | 58.82 | 85.37 |
| Smack | P | 81.13 | 64.29 | 70.00 | 87.27 | 81.36 | 68.83 | 87.93 |
| Smack | R | 76.79 | 96.43 | 100.00 | 85.71 | 85.71 | 94.64 | 91.07 |
| Smack | F-M | 78.90 | 77.14 | 82.35 | 86.49 | 83.48 | 79.70 | 89.47 |

P: Precision, R: Recall, F-M: F-measure. Groups: 1: raw API feature group, 2: co-occurrence API feature group, 3: API extension feature group.

Results: precision, recall, and F-measure of FITSEA with each similarity method (chart axis range: 50.00% to 100.00%).

| Tutorial | Metric | Word2Vec | VSM | Jaccard | Edit | Bi-Gram |
|---|---|---|---|---|---|---|
| JodaTime | Precision | 90.00% | 76.67% | 62.96% | 60.00% | 64.29% |
| JodaTime | Recall | 90.00% | 76.67% | 56.67% | 60.00% | 60.00% |
| JodaTime | F-Measure | 90.00% | 76.67% | 59.65% | 60.00% | 62.07% |
| Col. Official | Precision | 76.47% | 65.91% | 76.00% | 73.08% | 72.00% |
| Col. Official | Recall | 69.64% | 51.79% | 67.86% | 67.86% | 64.29% |
| Col. Official | F-Measure | 72.90% | 58.00% | 71.70% | 70.37% | 67.92% |
| Col. Jenkov | Precision | 87.50% | 81.58% | 83.78% | 81.58% | 79.49% |
| Col. Jenkov | Recall | 83.33% | 73.81% | 73.81% | 73.81% | 73.81% |
| Col. Jenkov | F-Measure | 85.37% | 77.50% | 78.48% | 77.50% | 76.54% |
| Smack | Precision | 87.93% | 86.21% | 87.50% | 86.21% | 86.44% |
| Smack | Recall | 91.07% | 89.29% | 87.50% | 89.29% | 91.07% |
| Smack | F-Measure | 89.47% | 87.72% | 87.50% | 87.72% | 88.70% |

Math Library: Word2Vec reaches 76.36% on all three metrics. Only four of the five values per metric are legible for this tutorial (precision: 76.36%, 74.14%, 67.86%, 70.18%; recall: 76.36%, 78.18%, 69.09%, 72.73%; F-measure: 76.36%, 76.11%, 68.47%, 71.43%), so the remaining values cannot be assigned to individual methods.

Results: FITSEA compared with the GMR and IR models on the McGill tutorial dataset (chart axis range: 20.00% to 100.00%).

| Tutorial | Metric | FITSEA | GMR | IR |
|---|---|---|---|---|
| JodaTime | Precision | 90.00% | 81.00% | 73.00% |
| JodaTime | Recall | 90.00% | 73.00% | 73.00% |
| JodaTime | F-Measure | 90.00% | 77.00% | 73.00% |
| Math Library | Precision | 76.36% | 69.00% | 67.00% |
| Math Library | Recall | 76.36% | 74.00% | 65.00% |
| Math Library | F-Measure | 76.36% | 71.00% | 66.00% |
| Col. Official | Precision | 76.47% | 71.00% | 30.00% |
| Col. Official | Recall | 69.64% | 62.00% | 94.00% |
| Col. Official | F-Measure | 72.90% | 67.00% | 45.00% |
| Col. Jenkov | Precision | 87.50% | 84.00% | 33.00% |
| Col. Jenkov | Recall | 83.33% | 76.00% | 88.00% |
| Col. Jenkov | F-Measure | 85.37% | 80.00% | 48.00% |
| Smack | Precision | 87.93% | 87.00% | 74.00% |
| Smack | Recall | 91.07% | 80.00% | 52.00% |
| Smack | F-Measure | 89.47% | 83.00% | 61.00% |

Results: FITSEA compared with the GMR and IR models on the Android tutorial dataset (chart axis range: 20.00% to 90.00%).

| Tutorial | Metric | FITSEA | GMR | IR |
|---|---|---|---|---|
| Graphics | Precision | 63.64% | 74.80% | 35.80% |
| Graphics | Recall | 65.12% | 58.00% | 67.44% |
| Graphics | F-Measure | 64.37% | 65.34% | 46.77% |
| Resources | Precision | 75.00% | 87.00% | 40.32% |
| Resources | Recall | 73.33% | 55.90% | 55.56% |
| Resources | F-Measure | 74.16% | 68.07% | 46.73% |
| Text | Precision | 60.00% | 63.00% | 33.33% |
| Text | Recall | 60.00% | 37.20% | 44.00% |
| Text | F-Measure | 60.00% | 46.78% | 37.93% |
| Data | Precision | 73.08% | 42.50% | 37.21% |
| Data | Recall | 67.86% | 37.80% | 57.14% |
| Data | F-Measure | 70.37% | 40.01% | 45.07% |

Problem: Model: Advantage 1: Advantage 2: Results:

Thank you! Contact: jingxuanzhang@mail.dlut.edu.cn
