Naïve Bayes Geoff Hulten

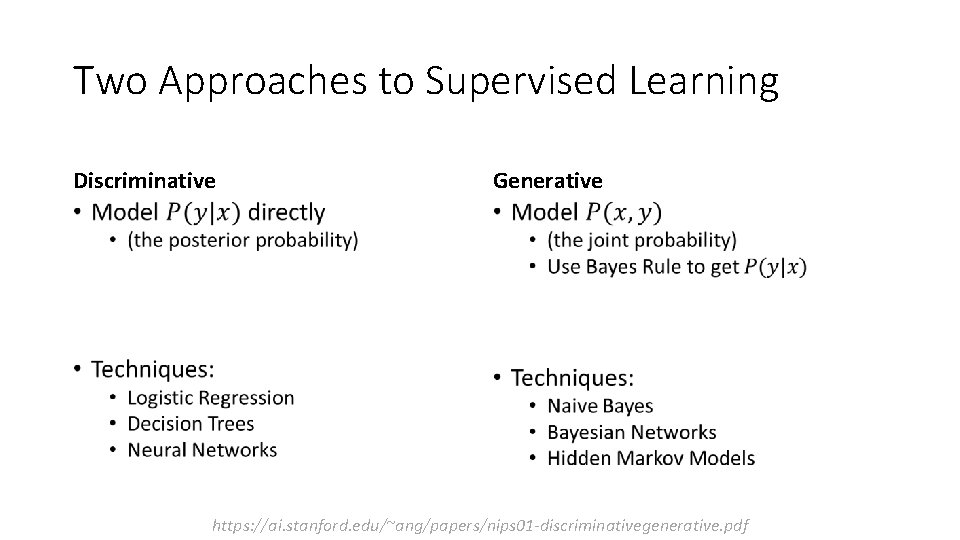

Two Approaches to Supervised Learning • Discriminative • Generative • https://ai.stanford.edu/~ang/papers/nips01-discriminativegenerative.pdf

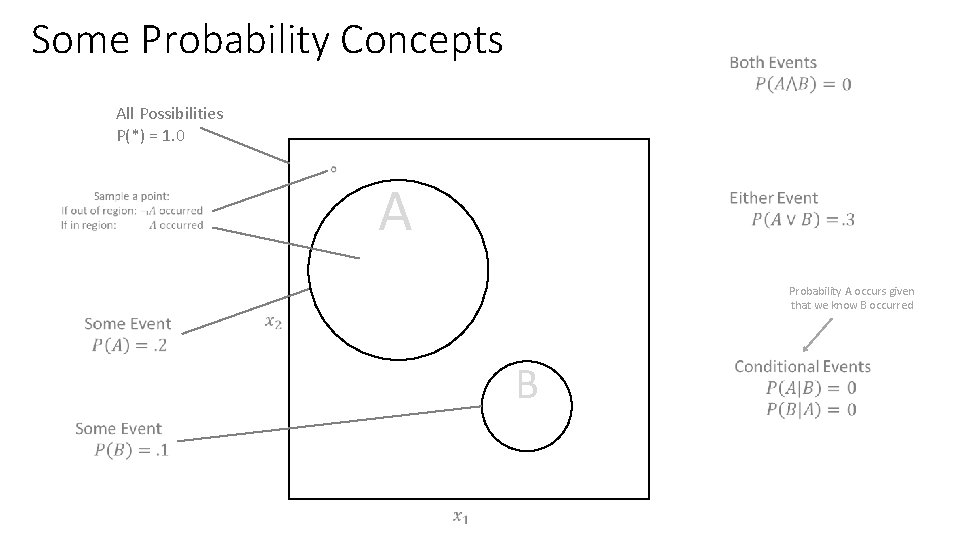

Some Probability Concepts • All possibilities: P(*) = 1.0 • P(A | B): probability that A occurs given that we know B occurred

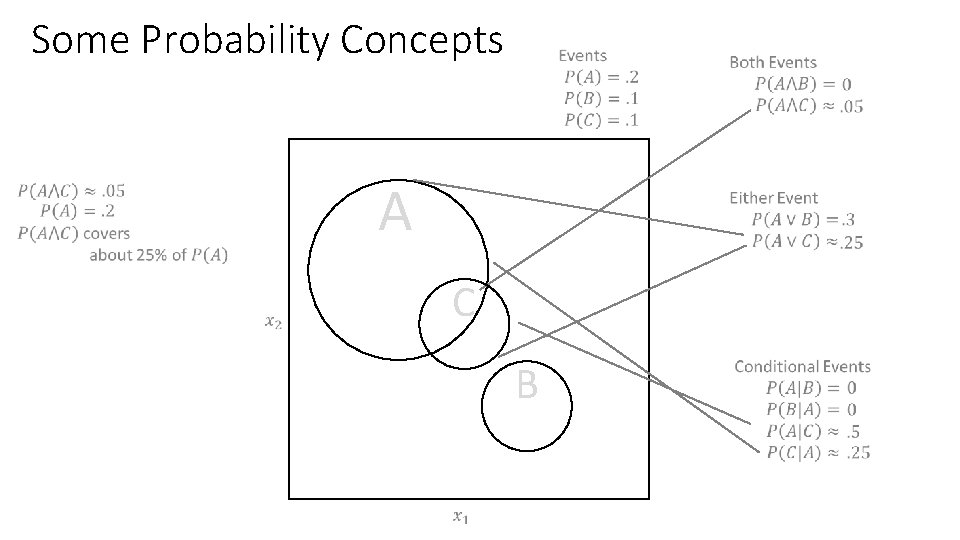

Some Probability Concepts (diagram of events A, B, and C)

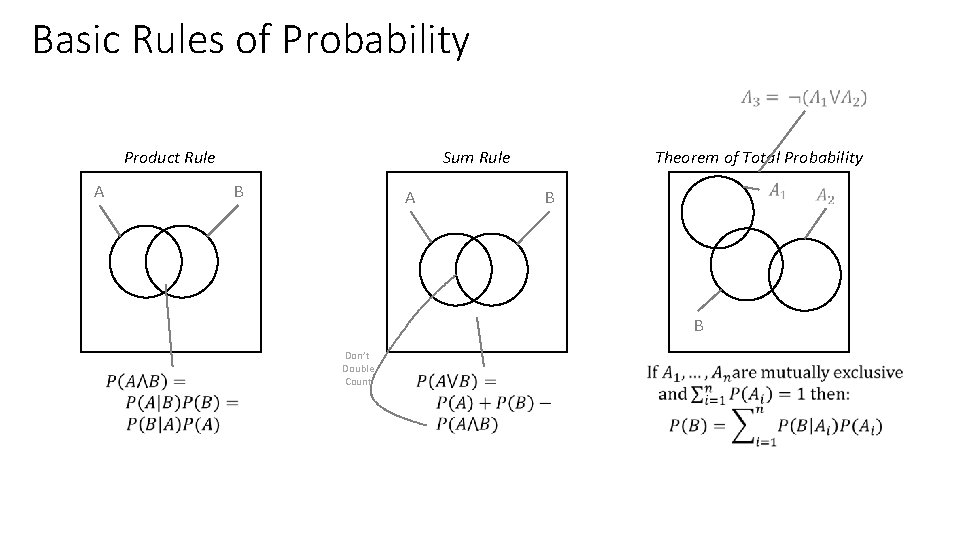

Basic Rules of Probability • Product Rule • Sum Rule (Don’t Double Count) • Theorem of Total Probability
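
For reference, the three rules named on this slide can be written as follows. The notation is the conventional textbook one and is an assumption here; the events A_1, ..., A_n in the last line are introduced only for illustration.

```latex
\begin{align*}
\text{Product rule:}\quad
  & P(A \wedge B) = P(A \mid B)\,P(B) = P(B \mid A)\,P(A) \\
\text{Sum rule:}\quad
  & P(A \vee B) = P(A) + P(B) - P(A \wedge B) \\
\text{Total probability:}\quad
  & P(B) = \sum_{i=1}^{n} P(B \mid A_i)\,P(A_i)
  \quad \text{if } A_1,\dots,A_n \text{ are mutually exclusive and } \sum_{i=1}^{n} P(A_i) = 1
\end{align*}
```

The subtracted term in the sum rule is the "don't double count" correction: outcomes where both A and B occur would otherwise be counted once in P(A) and again in P(B).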

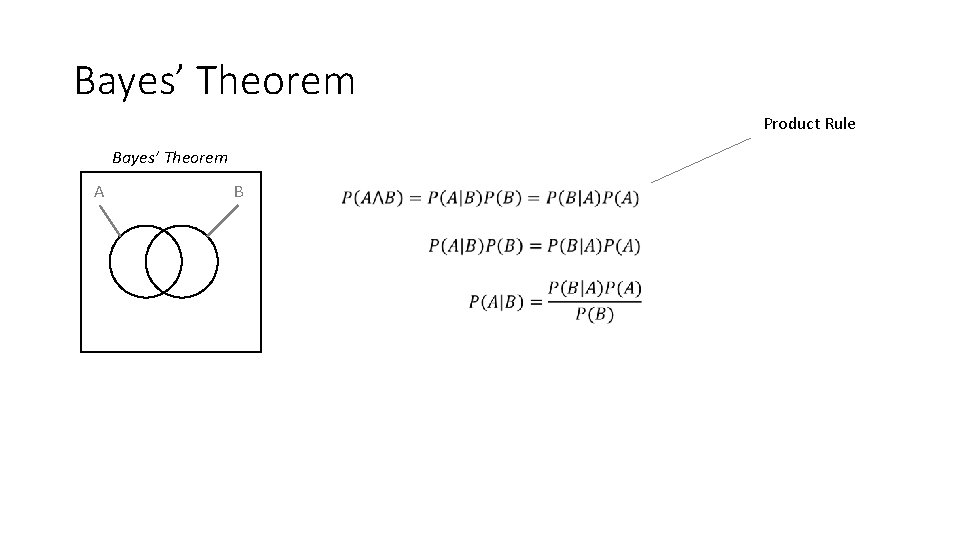

Bayes’ Theorem • From the Product Rule to Bayes’ Theorem
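
A short derivation sketch, in conventional notation, showing how Bayes' theorem follows from writing the product rule in both directions and dividing by P(B) (assumed nonzero):

```latex
\begin{align*}
P(A \wedge B) &= P(A \mid B)\,P(B) = P(B \mid A)\,P(A) \\
\Rightarrow\quad P(A \mid B) &= \frac{P(B \mid A)\,P(A)}{P(B)}
\end{align*}
```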

Example of using Bayes’ Theorem 0.008 0.992 0.98 0.02 0.03 0.97 ? ? Theorem of total probability
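
The sketch below works one possible reading of these numbers. It assumes, hypothetically, that 0.008 is the prior of some condition, 0.98 is the probability of a positive test given the condition, and 0.03 is the probability of a positive test without it; the slide text itself does not label them. The function and variable names are illustrative only.

```python
def posterior(prior, p_pos_given_cond, p_pos_given_no_cond):
    """Bayes' theorem for a binary hypothesis and one positive observation."""
    # Theorem of total probability: P(+) summed over both hypotheses.
    evidence = (p_pos_given_cond * prior
                + p_pos_given_no_cond * (1.0 - prior))
    # Bayes' theorem: P(cond | +) = P(+ | cond) P(cond) / P(+).
    return p_pos_given_cond * prior / evidence


# Assumed mapping of the slide's numbers (hypothetical labels).
p_cond_given_pos = posterior(prior=0.008,
                             p_pos_given_cond=0.98,
                             p_pos_given_no_cond=0.03)
print(f"P(condition | positive) = {p_cond_given_pos:.4f}")
```

Under this reading the posterior stays well below one half even after a positive result, because the small prior dominates the calculation.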

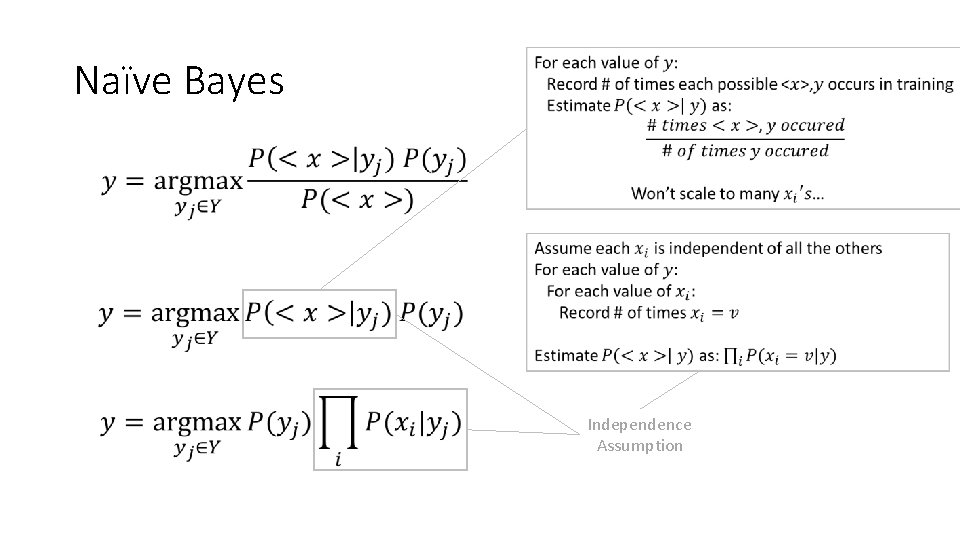

Naïve Bayes • Independence Assumption
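
In conventional notation (the symbols y for the label and x_1, ..., x_n for the features are assumptions, not taken from the slide), the independence assumption lets the class-conditional likelihood factor into per-feature terms:

```latex
\begin{align*}
P(y \mid x_1,\dots,x_n) &= \frac{P(x_1,\dots,x_n \mid y)\,P(y)}{P(x_1,\dots,x_n)} \\
P(x_1,\dots,x_n \mid y) &\approx \prod_{i=1}^{n} P(x_i \mid y)
  \quad \text{(features assumed conditionally independent given } y\text{)} \\
\hat{y} &= \arg\max_{y}\; P(y) \prod_{i=1}^{n} P(x_i \mid y)
\end{align*}
```

The denominator is the same for every y, so it can be dropped when only the most probable label is needed.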

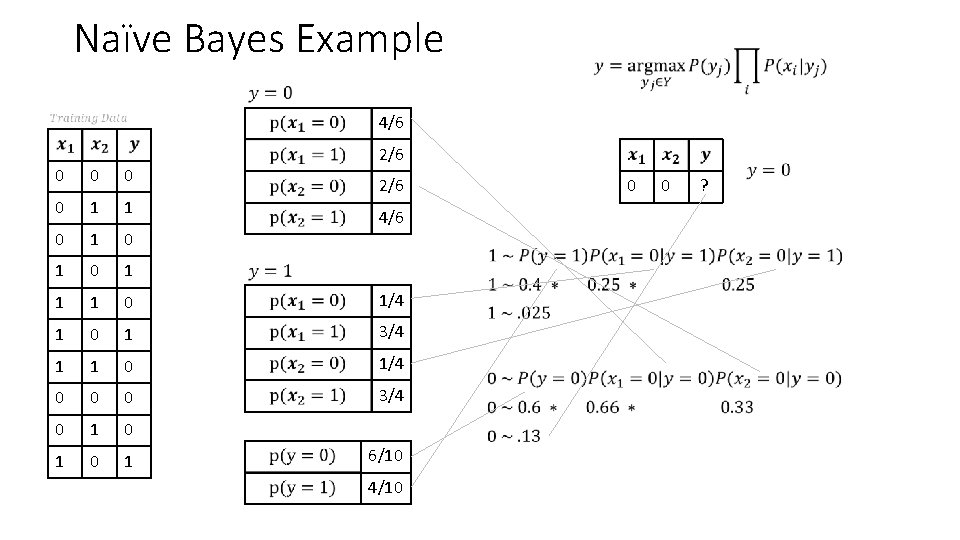

Naïve Bayes Example 4/6 2/6 0 0 1 1 0 1 0 1 1 1 0 1/4 1 0 1 3/4 1 1 0 1/4 0 0 0 3/4 0 1 0 1 2/6 0 4/6 6/10 4/10 0 ?
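
A minimal sketch of the same kind of calculation on a small made-up dataset. The data, function names, and the choice of two binary features are all hypothetical and do not reproduce the slide's table. Probabilities are estimated by simple counting (maximum likelihood).

```python
from collections import Counter


def train(rows, labels):
    """Estimate P(y) and P(x_i = 1 | y) by counting (binary features)."""
    n_features = len(rows[0])
    class_counts = Counter(labels)
    priors = {y: c / len(labels) for y, c in class_counts.items()}
    cond = {}
    for y, count in class_counts.items():
        members = [x for x, label in zip(rows, labels) if label == y]
        # Fraction of class-y rows in which feature i equals 1.
        cond[y] = [sum(x[i] for x in members) / count
                   for i in range(n_features)]
    return priors, cond


def scores(x, priors, cond):
    """Unnormalized P(y) * prod_i P(x_i | y) for each class y."""
    result = {}
    for y, prior in priors.items():
        s = prior
        for i, v in enumerate(x):
            s *= cond[y][i] if v == 1 else 1.0 - cond[y][i]
        result[y] = s
    return result


def predict(x, priors, cond):
    s = scores(x, priors, cond)
    return max(s, key=s.get)


# Hypothetical training data: two binary features, binary label.
X = [(1, 0), (1, 1), (0, 1), (0, 0), (0, 1), (1, 0)]
y = [1, 1, 0, 0, 1, 0]
priors, cond = train(X, y)
print(predict((1, 1), priors, cond), predict((0, 0), priors, cond))
```

With plain counting, any feature value never seen with a class gets probability zero and wipes out that class's whole product, which is one motivation for the smoothed estimates discussed on the MLE vs MAP slide.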

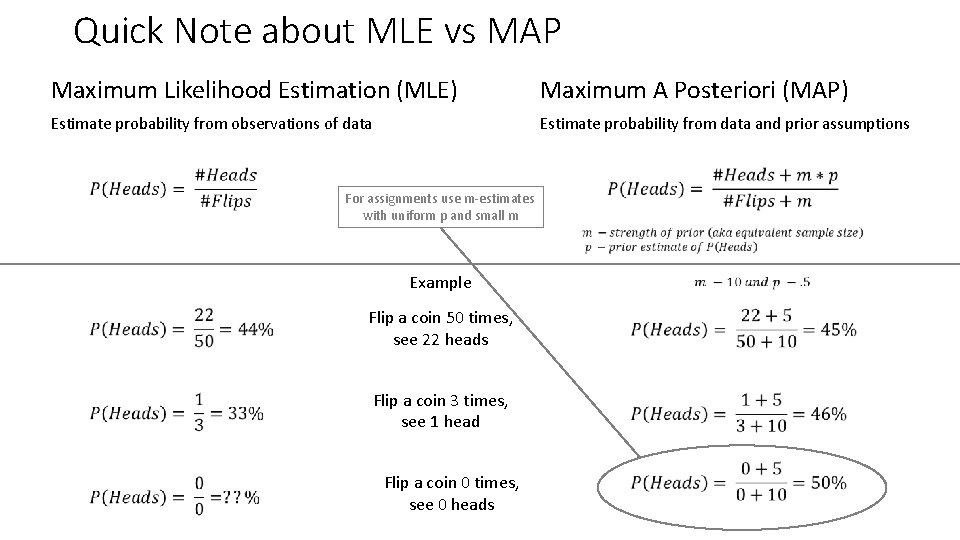

Quick Note about MLE vs MAP • Maximum Likelihood Estimation (MLE): estimate probability from observations of data • Maximum A Posteriori (MAP): estimate probability from data and prior assumptions • For assignments use m-estimates with uniform p and small m • Example: flip a coin 50 times, see 22 heads; flip a coin 3 times, see 1 head; flip a coin 0 times, see 0 heads
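
The coin-flip cases can be compared with a small sketch. It uses the common m-estimate form (successes + m·p) / (trials + m); the value m = 1 is an assumption standing in for "small m", and p = 0.5 is the uniform prior for a two-outcome coin. Function names are illustrative.

```python
def mle(successes, trials):
    """Maximum likelihood estimate; undefined (None) with no data."""
    if trials == 0:
        return None
    return successes / trials


def m_estimate(successes, trials, m=1.0, p=0.5):
    """MAP-style estimate: acts like m extra virtual trials with prior p."""
    return (successes + m * p) / (trials + m)


# The three coin-flip cases from the slide: (heads, flips).
for heads, flips in [(22, 50), (1, 3), (0, 0)]:
    print(flips, heads, mle(heads, flips), m_estimate(heads, flips))
```

With many observations the two estimates nearly agree; with few or none, the m-estimate is pulled toward the prior p, and it remains defined even when there is no data at all.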

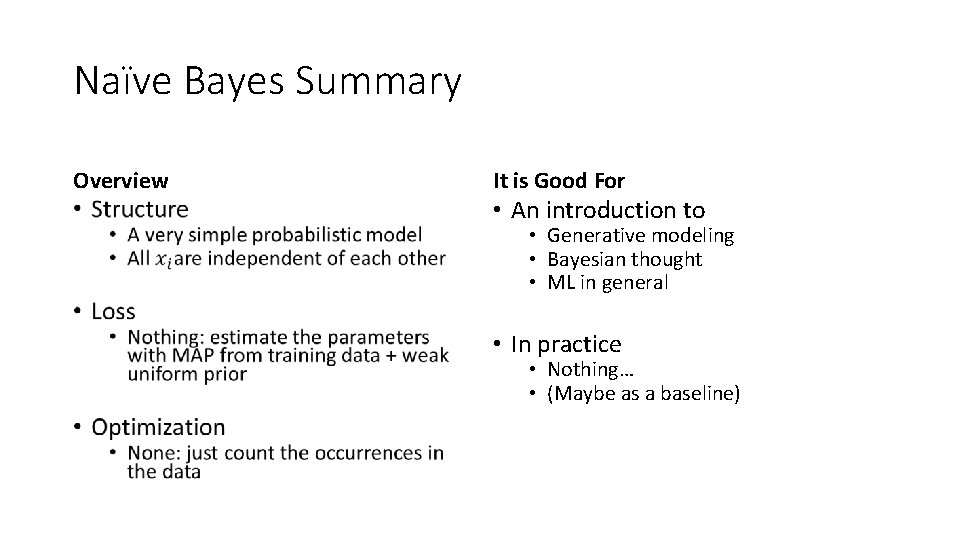

Naïve Bayes Summary Overview • It is Good For • An introduction to • Generative modeling • Bayesian thought • ML in general • In practice • Nothing… • (Maybe as a baseline)
