Multiple Random Variables

• An n-dimensional random vector is a function from a sample space $S$ into $\mathbb{R}^n$, n-dimensional Euclidean space.
• Let $(X, Y)$ be a discrete bivariate random vector. Then the function $f(x, y)$ from $\mathbb{R}^2$ into $\mathbb{R}$ defined by $f(x, y) = P(X = x, Y = y)$ is called the joint probability mass function, or joint pmf, of $(X, Y)$.

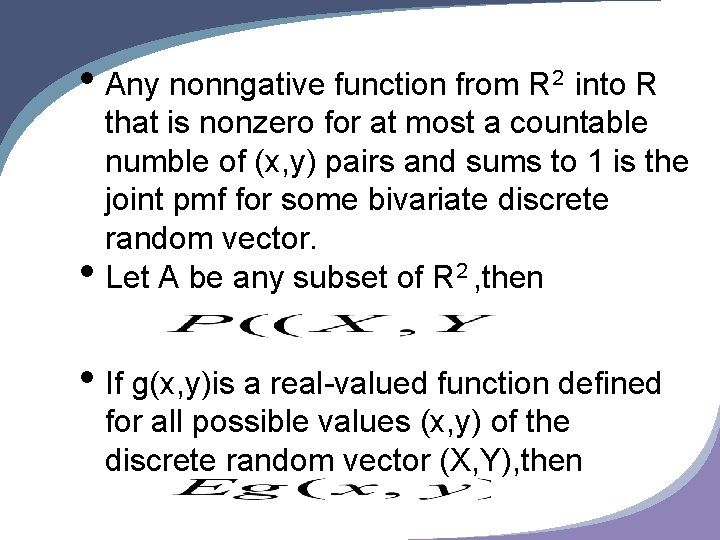

• Any nonnegative function from $\mathbb{R}^2$ into $\mathbb{R}$ that is nonzero for at most a countable number of $(x, y)$ pairs and sums to 1 is the joint pmf of some bivariate discrete random vector.
• Let $A$ be any subset of $\mathbb{R}^2$. Then $P((X, Y) \in A) = \sum_{(x, y) \in A} f(x, y)$.
• If $g(x, y)$ is a real-valued function defined for all possible values $(x, y)$ of the discrete random vector $(X, Y)$, then $Eg(X, Y) = \sum_{(x, y)} g(x, y) f(x, y)$.

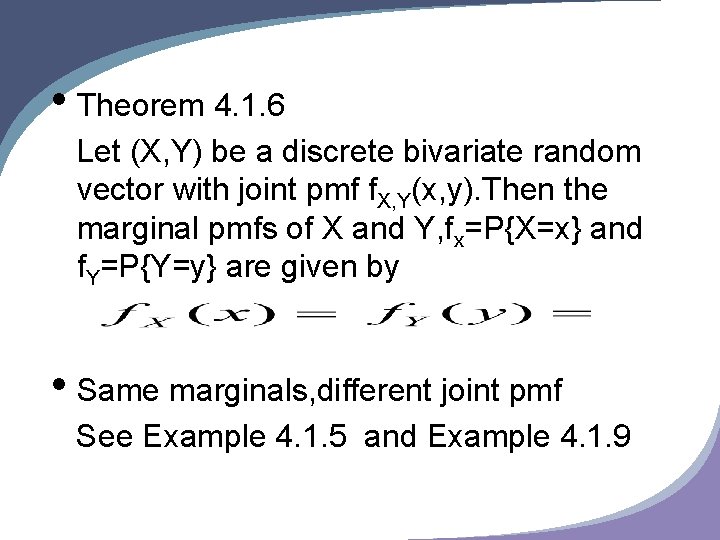
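The two sums for a discrete random vector, $P((X, Y) \in A) = \sum_{(x, y) \in A} f(x, y)$ and $Eg(X, Y) = \sum g(x, y) f(x, y)$, can be sketched in a few lines. The dice setup below (X is the sum of two fair dice, Y is the absolute difference) and the helper names `joint_pmf`, `prob` and `expect` are illustrative assumptions, not part of the slides.

```python
from fractions import Fraction
from itertools import product

# Illustrative assumption: roll two fair dice; X = sum, Y = |difference|.
def joint_pmf():
    pmf = {}
    for d1, d2 in product(range(1, 7), repeat=2):
        key = (d1 + d2, abs(d1 - d2))
        pmf[key] = pmf.get(key, Fraction(0)) + Fraction(1, 36)
    return pmf

def prob(pmf, event):
    """P((X, Y) in A), with the set A given as a predicate on (x, y)."""
    return sum(p for (x, y), p in pmf.items() if event(x, y))

def expect(pmf, g):
    """E g(X, Y): sum of g(x, y) * f(x, y) over the support."""
    return sum(g(x, y) * p for (x, y), p in pmf.items())

f = joint_pmf()
total = sum(f.values())                             # a joint pmf sums to 1
p_event = prob(f, lambda x, y: x == 7 and y <= 4)   # P(X = 7, Y <= 4)
mean_xy = expect(f, lambda x, y: x * y)             # E(XY)
```

Using `Fraction` keeps every probability exact, so the check that the pmf sums to 1 is not affected by rounding.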

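• Theorem 4.1.6: Let $(X, Y)$ be a discrete bivariate random vector with joint pmf $f_{X,Y}(x, y)$. Then the marginal pmfs of $X$ and $Y$, $f_X(x) = P(X = x)$ and $f_Y(y) = P(Y = y)$, are given by $f_X(x) = \sum_{y} f_{X,Y}(x, y)$ and $f_Y(y) = \sum_{x} f_{X,Y}(x, y)$.
• The same marginals can arise from different joint pmfs, so the marginals alone do not determine the joint pmf. See Example 4.1.5 and Example 4.1.9.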

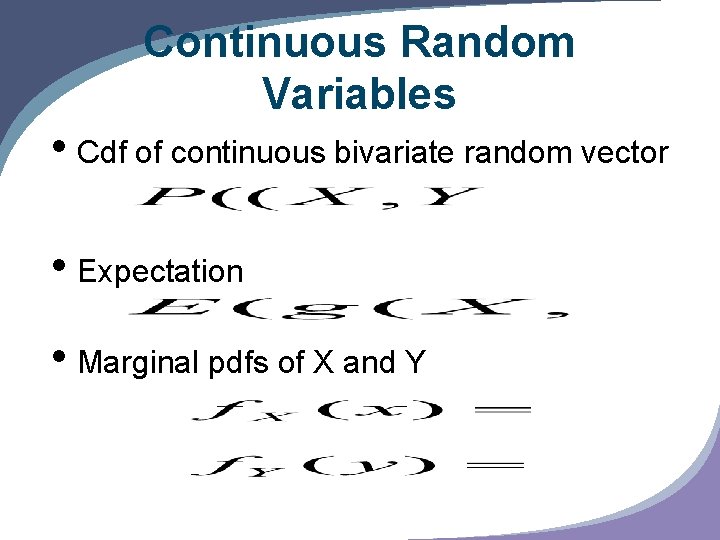
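A minimal sketch of computing marginal pmfs by summing the joint pmf over the other variable, and of two different joint pmfs that share the same marginals. The two tables `joint_a` and `joint_b` are hypothetical and are not the ones used in Examples 4.1.5 and 4.1.9.

```python
from fractions import Fraction

def marginals(joint):
    """Return (f_X, f_Y) by summing the joint pmf over the other variable."""
    fx, fy = {}, {}
    for (x, y), p in joint.items():
        fx[x] = fx.get(x, Fraction(0)) + p
        fy[y] = fy.get(y, Fraction(0)) + p
    return fx, fy

# Two hypothetical joint pmfs on {0, 1} x {0, 1}.
joint_a = {(0, 0): Fraction(1, 4), (0, 1): Fraction(1, 4),
           (1, 0): Fraction(1, 4), (1, 1): Fraction(1, 4)}
joint_b = {(0, 0): Fraction(1, 2), (0, 1): Fraction(0),
           (1, 0): Fraction(0), (1, 1): Fraction(1, 2)}

same_marginals = marginals(joint_a) == marginals(joint_b)  # marginals agree
same_joint = joint_a == joint_b                            # joint pmfs differ
```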

Continuous Random Variables

• Cdf of a continuous bivariate random vector
• Expectation
• Marginal pdfs of $X$ and $Y$

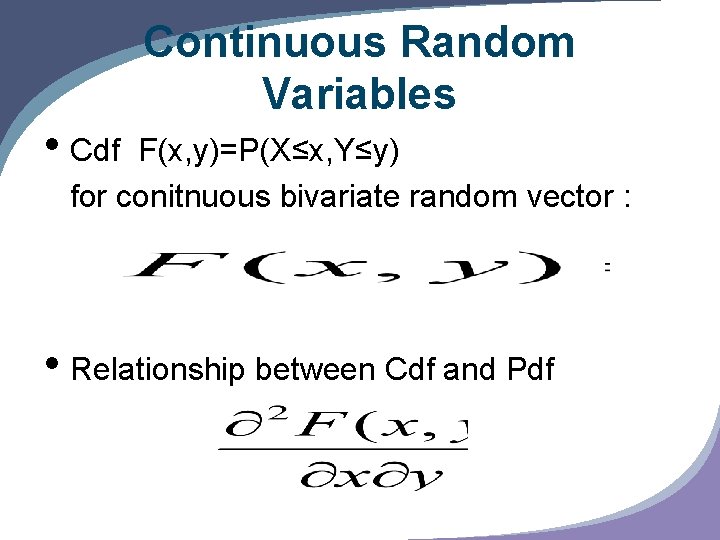

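Continuous Random Variables

• Cdf: $F(x, y) = P(X \le x, Y \le y)$ for a continuous bivariate random vector.
• Relationship between cdf and pdf: $F(x, y) = \int_{-\infty}^{x} \int_{-\infty}^{y} f(s, t)\, dt\, ds$, and $\partial^2 F(x, y) / \partial x\, \partial y = f(x, y)$ at continuity points of $f$.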

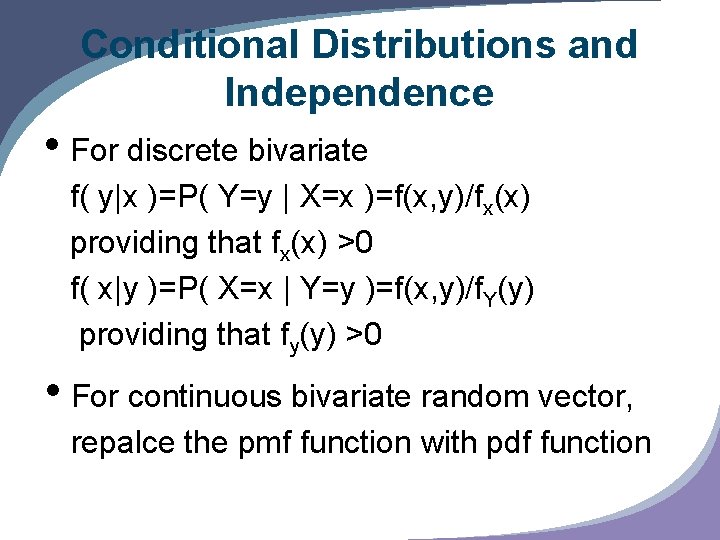

Conditional Distributions and Independence

• For a discrete bivariate random vector: $f(y \mid x) = P(Y = y \mid X = x) = f(x, y) / f_X(x)$, provided that $f_X(x) > 0$, and $f(x \mid y) = P(X = x \mid Y = y) = f(x, y) / f_Y(y)$, provided that $f_Y(y) > 0$.
• For a continuous bivariate random vector, replace the pmfs with pdfs.

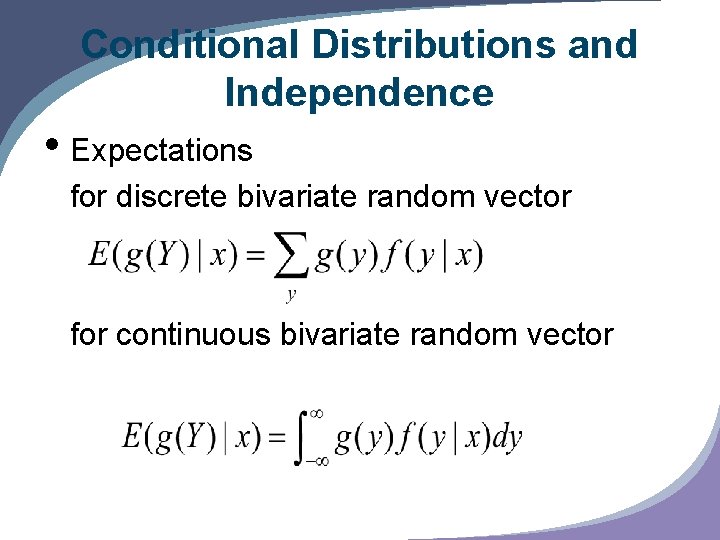
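The discrete conditional pmf $f(y \mid x) = f(x, y) / f_X(x)$ can be sketched as follows. The joint table and the function name `conditional_y_given_x` are hypothetical, chosen only to illustrate the definition.

```python
from fractions import Fraction

def conditional_y_given_x(joint, x):
    """Return f(y | x) as a dict over y; undefined when f_X(x) = 0."""
    fx = sum(p for (xi, _), p in joint.items() if xi == x)
    if fx == 0:
        raise ValueError("f(y | x) is undefined when f_X(x) = 0")
    return {y: p / fx for (xi, y), p in joint.items() if xi == x}

# Hypothetical joint pmf keyed by (x, y); the probabilities sum to 1.
joint = {(0, 10): Fraction(2, 18), (0, 20): Fraction(4, 18),
         (1, 10): Fraction(3, 18), (1, 20): Fraction(4, 18),
         (1, 30): Fraction(3, 18), (2, 30): Fraction(2, 18)}

cond = conditional_y_given_x(joint, 1)   # pmf of Y given X = 1
cond_total = sum(cond.values())          # a conditional pmf also sums to 1
```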

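Conditional Distributions and Independence

• Conditional expectations: $E(g(Y) \mid x) = \sum_{y} g(y) f(y \mid x)$ for a discrete bivariate random vector, and $E(g(Y) \mid x) = \int_{-\infty}^{\infty} g(y) f(y \mid x)\, dy$ for a continuous bivariate random vector.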

Conditional Distributions and Independence

• $X$ and $Y$ are called independent random variables if, for every $x$ and $y$, $f(x, y) = f_X(x) f_Y(y)$.
• If $X$ and $Y$ are independent, then $f(y \mid x) = f(x, y) / f_X(x) = f_X(x) f_Y(y) / f_X(x) = f_Y(y)$.
• The knowledge that $X = x$ doesn't give us any information about $Y$.
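A minimal sketch of checking the factorization $f(x, y) = f_X(x) f_Y(y)$ for every pair $(x, y)$, including pairs where the joint pmf is zero. The function name `is_independent` and both example tables are assumptions made for illustration.

```python
from fractions import Fraction
from itertools import product

def is_independent(joint):
    """True if f(x, y) = f_X(x) * f_Y(y) for every x and y in the marginal supports."""
    fx, fy = {}, {}
    for (x, y), p in joint.items():
        fx[x] = fx.get(x, 0) + p
        fy[y] = fy.get(y, 0) + p
    # Pairs missing from the table have joint probability 0.
    return all(joint.get((x, y), 0) == fx[x] * fy[y]
               for x, y in product(fx, fy))

# Hypothetical marginals, multiplied together to build an independent pair.
px = {0: Fraction(1, 3), 1: Fraction(2, 3)}
py = {0: Fraction(1, 4), 1: Fraction(3, 4)}
indep = {(x, y): px[x] * py[y] for x in px for y in py}

# Hypothetical dependent pair: X and Y are always equal.
dep = {(0, 0): Fraction(1, 2), (1, 1): Fraction(1, 2)}

indep_ok = is_independent(indep)
dep_ok = is_independent(dep)
```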

Conditional Distributions and Independence

• $X$ and $Y$ are independent random variables if and only if there exist functions $g(x)$ and $h(y)$ such that, for every $x$ and $y$, $f(x, y) = g(x) h(y)$.
• If $X$ and $Y$ are independent random variables, then $E(g(X) h(Y)) = (Eg(X))(Eh(Y))$.

Conditional Distributions and Independence

• Let $X$ and $Y$ be independent random variables with mgfs $M_X(t)$ and $M_Y(t)$. Then the mgf of $Z = X + Y$ is $M_Z(t) = M_X(t) M_Y(t)$.
• Theorem 4.2.14: Let $X \sim n(\mu_1, \sigma_1^2)$ and $Y \sim n(\mu_2, \sigma_2^2)$ be independent. Then $Z = X + Y$ has a $n(\mu_1 + \mu_2, \sigma_1^2 + \sigma_2^2)$ distribution.
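As a worked check of Theorem 4.2.14 through the mgf product rule, write $\mu_1, \mu_2$ for the two means and recall the standard fact that the mgf of $n(\mu, \sigma^2)$ is $\exp(\mu t + \sigma^2 t^2 / 2)$. For independent $X$ and $Y$:

$$
M_Z(t) = M_X(t) M_Y(t)
= \exp\!\left(\mu_1 t + \tfrac{1}{2}\sigma_1^2 t^2\right) \exp\!\left(\mu_2 t + \tfrac{1}{2}\sigma_2^2 t^2\right)
= \exp\!\left((\mu_1 + \mu_2) t + \tfrac{1}{2}(\sigma_1^2 + \sigma_2^2) t^2\right)
$$

This is the mgf of a normal distribution with mean $\mu_1 + \mu_2$ and variance $\sigma_1^2 + \sigma_2^2$, which identifies the distribution of $Z$.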

Conditional Distributions and Independence

• If two joint pdfs $f_1(x, y)$ and $f_2(x, y)$ differ only on a set $A$ with $\iint_A dx\, dy = 0$, then the two corresponding bivariate random vectors have the same cdf.

Bivariate Transformations

• If $(X, Y)$ is a discrete bivariate random vector, $U = g_1(X, Y)$ and $V = g_2(X, Y)$, then $f_{U,V}(u, v) = \sum_{(x, y) \in A_{uv}} f_{X,Y}(x, y)$, where $A_{uv} = \{(x, y) : g_1(x, y) = u,\ g_2(x, y) = v\}$.
• Theorem 4.3.2 (use mgfs to prove this): If $X \sim \text{Poisson}(\theta)$ and $Y \sim \text{Poisson}(\lambda)$, and $X$ and $Y$ are independent, then $X + Y \sim \text{Poisson}(\theta + \lambda)$.
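Theorem 4.3.2 can also be checked numerically by summing the joint pmf over all pairs $(x, y)$ with $x + y = z$, which is the discrete transformation sum applied to the single function $X + Y$. This is a sketch only: the rates `theta = 1.5` and `lam = 2.5` and the helper names are arbitrary assumptions.

```python
import math

def poisson_pmf(k, rate):
    """P(K = k) for K ~ Poisson(rate)."""
    return math.exp(-rate) * rate**k / math.factorial(k)

def pmf_of_sum(z, theta, lam):
    """P(X + Y = z) for independent X ~ Poisson(theta), Y ~ Poisson(lam).

    Sums f_X(x) * f_Y(y) over the set {(x, y): x + y = z}.
    """
    return sum(poisson_pmf(x, theta) * poisson_pmf(z - x, lam)
               for x in range(z + 1))

theta, lam = 1.5, 2.5   # arbitrary illustrative rates
# Largest gap between the summed pmf and the Poisson(theta + lam) pmf.
max_gap = max(abs(pmf_of_sum(z, theta, lam) - poisson_pmf(z, theta + lam))
              for z in range(20))
```

The gap should be at the level of floating-point rounding, which is consistent with the theorem but is not a proof of it.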

Bivariate Transformations

• Let $(X, Y)$ be a continuous random vector with joint pdf $f_{X,Y}(x, y)$, and let $U = g_1(X, Y)$ and $V = g_2(X, Y)$. Define $A = \{(x, y) : f_{X,Y}(x, y) > 0\}$ and $B = \{(u, v) : u = g_1(x, y),\ v = g_2(x, y) \text{ for some } (x, y) \in A\}$.
• Suppose the transformation from $A$ onto $B$ is one-to-one.

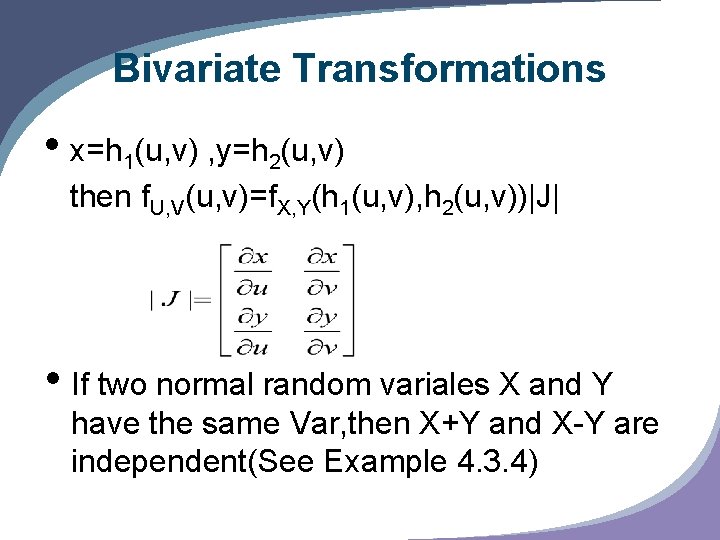

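Bivariate Transformations

• Write the inverse transformation as $x = h_1(u, v)$, $y = h_2(u, v)$. Then $f_{U,V}(u, v) = f_{X,Y}(h_1(u, v), h_2(u, v))\, |J|$ for $(u, v) \in B$, where $J$ is the Jacobian of the inverse transformation, that is, the determinant of the matrix of partial derivatives of $x$ and $y$ with respect to $u$ and $v$.
• If two independent normal random variables $X$ and $Y$ have the same variance, then $X + Y$ and $X - Y$ are independent (see Example 4.3.4).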
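As a worked sketch of the Jacobian formula, assume for illustration that $X$ and $Y$ are independent $n(0, 1)$ random variables, and set $U = X + Y$, $V = X - Y$. The inverse transformation is $x = (u + v)/2$, $y = (u - v)/2$, so

$$
J = \begin{vmatrix} \partial x / \partial u & \partial x / \partial v \\ \partial y / \partial u & \partial y / \partial v \end{vmatrix}
= \begin{vmatrix} 1/2 & 1/2 \\ 1/2 & -1/2 \end{vmatrix} = -\frac{1}{2}, \qquad |J| = \frac{1}{2}
$$

$$
f_{U,V}(u, v) = \frac{1}{2\pi} \exp\!\left(-\frac{1}{2}\left[\frac{(u + v)^2}{4} + \frac{(u - v)^2}{4}\right]\right) \cdot \frac{1}{2}
= \left(\frac{1}{\sqrt{4\pi}}\, e^{-u^2/4}\right) \left(\frac{1}{\sqrt{4\pi}}\, e^{-v^2/4}\right)
$$

The joint pdf factors into a function of $u$ alone times a function of $v$ alone, each a $n(0, 2)$ pdf, so in this case $U$ and $V$ are independent.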

Bivariate Transformations

• Theorem 4.3.5: Let $X$ and $Y$ be independent random variables. Let $g(x)$ be a function only of $x$ and $h(y)$ be a function only of $y$. Then the random variables $U = g(X)$ and $V = h(Y)$ are independent.
• Extension of the method to transformations that are not one-to-one (see p. 161 and Example 4.3.6): $f_{U,V}(u, v) = \sum_{i} f_{X,Y}(h_{1i}(u, v), h_{2i}(u, v))\, |J_i|$, where the sum runs over the pieces of $A$ on which the transformation is one-to-one.

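Hierarchical Models and Mixture Distributions

• Example 4.4.1: Binomial-Poisson hierarchy
• Theorem 4.4.3: If $X$ and $Y$ are any two random variables, then $EX = E(E(X \mid Y))$, provided that the expectations exist. (Each $E$ has a different meaning: $EX$ is taken with respect to the marginal distribution of $X$, the inner expectation with respect to the conditional distribution of $X$ given $Y$, and the outer expectation with respect to the marginal distribution of $Y$.)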
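A numerical sketch of the identity $EX = E(E(X \mid Y))$ for a Binomial-Poisson hierarchy, assuming $X \mid Y \sim \text{binomial}(Y, p)$ and $Y \sim \text{Poisson}(\lambda)$. The parameter values, the truncation point `y_max` and the helper names are assumptions made for illustration; the infinite sums are truncated where the remaining tail is negligible.

```python
import math

def poisson_pmf(k, rate):
    return math.exp(-rate) * rate**k / math.factorial(k)

def binomial_pmf(x, n, p):
    return math.comb(n, x) * p**x * (1 - p)**(n - x)

def marginal_x(x, lam, p, y_max=100):
    """P(X = x) = sum over y of P(X = x | Y = y) P(Y = y), truncated at y_max."""
    return sum(binomial_pmf(x, y, p) * poisson_pmf(y, lam)
               for y in range(x, y_max + 1))

lam, p = 4.0, 0.3   # arbitrary illustrative parameters

# Left side: E X computed from the (truncated) marginal pmf of X.
ex_direct = sum(x * marginal_x(x, lam, p) for x in range(60))

# Right side: E(E(X | Y)), where E(X | Y = y) = p * y for a binomial(y, p).
ex_iterated = sum(p * y * poisson_pmf(y, lam) for y in range(101))
```

Both quantities should agree with $p\lambda$ up to rounding, and `marginal_x` should match the Poisson($\lambda p$) pmf, which is the marginal distribution of $X$ in this hierarchy.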

Hierarchical Models and Mixture Distributions

• A random variable $X$ is said to have a mixture distribution if the distribution of $X$ depends on a quantity that also has a distribution.
• Theorem 4.4.7 (Conditional variance identity): For any two random variables $X$ and $Y$, $\text{Var}\, X = E(\text{Var}(X \mid Y)) + \text{Var}(E(X \mid Y))$, provided that the expectations exist.
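The conditional variance identity can be verified exactly on a small discrete example. The joint table below is hypothetical, and the helpers `mean` and `var` are illustrative names; exact fractions are used so the two sides can be compared with `==`.

```python
from fractions import Fraction

def mean(pmf):
    return sum(v * p for v, p in pmf.items())

def var(pmf):
    m = mean(pmf)
    return sum((v - m) ** 2 * p for v, p in pmf.items())

# Hypothetical joint pmf keyed by (x, y).
joint = {(0, 0): Fraction(1, 8), (1, 0): Fraction(3, 8),
         (0, 1): Fraction(1, 4), (2, 1): Fraction(1, 4)}

fx, fy = {}, {}
for (x, y), p in joint.items():
    fx[x] = fx.get(x, 0) + p
    fy[y] = fy.get(y, 0) + p

# Conditional mean and variance of X for each value of Y.
cond_mean, cond_var = {}, {}
for y0, py in fy.items():
    cond = {x: p / py for (x, y), p in joint.items() if y == y0}
    cond_mean[y0] = mean(cond)
    cond_var[y0] = var(cond)

e_of_var = sum(cond_var[y] * fy[y] for y in fy)              # E(Var(X | Y))
m = sum(cond_mean[y] * fy[y] for y in fy)                    # E(E(X | Y))
var_of_e = sum((cond_mean[y] - m) ** 2 * fy[y] for y in fy)  # Var(E(X | Y))
var_x = var(fx)                                              # Var X
```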

Covariance and Correlation

• The covariance of $X$ and $Y$ is the number defined by $\text{Cov}(X, Y) = E((X - \mu_X)(Y - \mu_Y))$.
• The correlation of $X$ and $Y$ is the number defined by $\rho_{XY} = \text{Cov}(X, Y) / (\sigma_X \sigma_Y)$.

Covariance and Correlation

• Theorem 4.5.3: For any random variables $X$ and $Y$, $\text{Cov}(X, Y) = EXY - \mu_X \mu_Y$.
• Theorem 4.5.5: If $X$ and $Y$ are independent random variables, then $\text{Cov}(X, Y) = 0$ and $\rho_{XY} = 0$.
• However, $\text{Cov}(X, Y) = 0$ and $\rho_{XY} = 0$ do not imply that $X$ and $Y$ are independent (p. 171).
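A small sketch of why zero covariance does not imply independence. The example is an assumption chosen for illustration (it is not claimed to be the one on p. 171): $X$ is uniform on $\{-1, 0, 1\}$ and $Y = X^2$, so $Y$ is completely determined by $X$.

```python
from fractions import Fraction

# Illustrative assumption: X uniform on {-1, 0, 1}, Y = X**2.
joint = {(x, x * x): Fraction(1, 3) for x in (-1, 0, 1)}

def expect(g):
    return sum(g(x, y) * p for (x, y), p in joint.items())

mu_x = expect(lambda x, y: x)
mu_y = expect(lambda x, y: y)
cov = expect(lambda x, y: x * y) - mu_x * mu_y   # Cov(X, Y) = EXY - mu_X * mu_Y

# Independence would require f(0, 0) = f_X(0) * f_Y(0).
f_x0 = sum(p for (x, _), p in joint.items() if x == 0)
f_y0 = sum(p for (_, y), p in joint.items() if y == 0)
factorizes_at_origin = joint[(0, 0)] == f_x0 * f_y0
```

Here the covariance is exactly zero, yet the joint pmf fails to factor at $(0, 0)$, so $X$ and $Y$ are not independent.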

Covariance and Correlation

• Theorem 4.5.6: If $X$ and $Y$ are any two random variables and $a$ and $b$ are any two constants, then $\text{Var}(aX + bY) = a^2\, \text{Var}\, X + b^2\, \text{Var}\, Y + 2ab\, \text{Cov}(X, Y)$.
• Theorem 4.5.7: For any random variables $X$ and $Y$, (a) $-1 \le \rho_{XY} \le 1$.

Covariance and Correlation

• Theorem 4.5.7 (continued): (b) $|\rho_{XY}| = 1$ if and only if there exist numbers $a \ne 0$ and $b$ such that $P(Y = aX + b) = 1$. If $\rho_{XY} = 1$, then $a > 0$, and if $\rho_{XY} = -1$, then $a < 0$.
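Theorems 4.5.6 and 4.5.7(b) can be checked on a small example. This is a sketch under stated assumptions: the joint table, the constants `a = 2`, `b = -3`, and the line $Y = -2X + 5$ are all hypothetical choices.

```python
import math
from fractions import Fraction

def expect(joint, g):
    return sum(g(x, y) * p for (x, y), p in joint.items())

def cov(joint, g, h):
    """Cov(g, h) = E(gh) - E(g) E(h); cov(joint, g, g) is the variance of g."""
    return (expect(joint, lambda x, y: g(x, y) * h(x, y))
            - expect(joint, g) * expect(joint, h))

X = lambda x, y: x
Y = lambda x, y: y

# Hypothetical joint pmf keyed by (x, y).
joint = {(0, 0): Fraction(1, 8), (1, 0): Fraction(3, 8),
         (0, 1): Fraction(1, 4), (2, 1): Fraction(1, 4)}

# Theorem 4.5.6: Var(aX + bY) = a^2 Var X + b^2 Var Y + 2ab Cov(X, Y).
a, b = 2, -3
lin = lambda x, y: a * x + b * y
lhs = cov(joint, lin, lin)
rhs = a * a * cov(joint, X, X) + b * b * cov(joint, Y, Y) + 2 * a * b * cov(joint, X, Y)

# Theorem 4.5.7(b): if Y = -2X + 5 with probability 1, the correlation is -1.
fx = {0: Fraction(3, 8), 1: Fraction(3, 8), 2: Fraction(1, 4)}
line = {(x, -2 * x + 5): p for x, p in fx.items()}
rho = float(cov(line, X, Y)) / math.sqrt(cov(line, X, X) * cov(line, Y, Y))
```

The negative slope of the line gives a correlation of $-1$, matching the sign statement in part (b).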
