Language modelling (word FST): operational model for categorizing mispronunciations

- Slides: 8

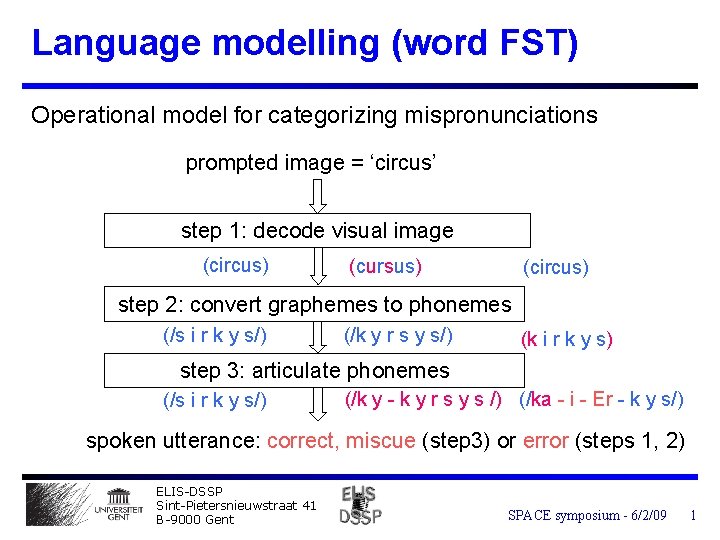

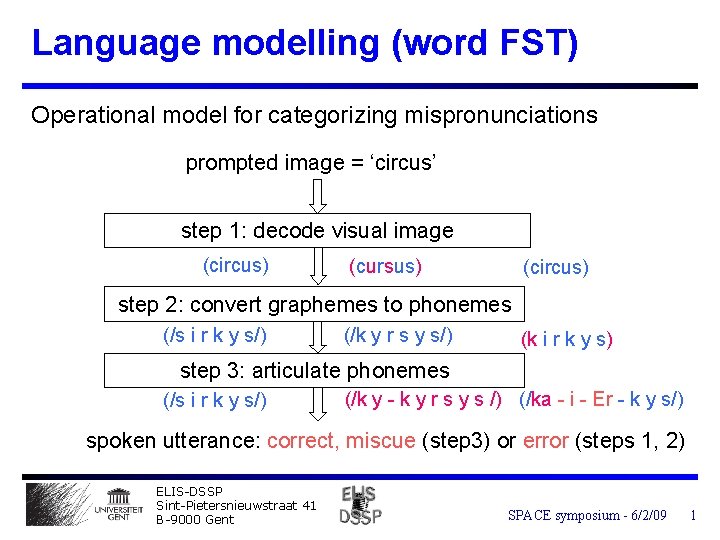

Language modelling (word FST): operational model for categorizing mispronunciations

Prompted image = ‘circus’

| Step | Example 1 | Example 2 | Example 3 |
| --- | --- | --- | --- |
| Step 1: decode visual image | circus | cursus | circus |
| Step 2: convert graphemes to phonemes | /s i r k y s/ | /k y r s y s/ | /k i r k y s/ |
| Step 3: articulate phonemes | /s i r k y s/ | /k y - k y r s y s/ | /ka - i - Er - k y s/ |

Spoken utterance: correct, miscue (step 3) or error (steps 1, 2)

ELIS-DSSP, Sint-Pietersnieuwstraat 41, B-9000 Gent. SPACE symposium, 6/2/09
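
The three-step model above can be read as a decision procedure. The sketch below is only an illustration: it assumes the intermediate outputs of steps 1 and 2 are known (in practice they are hidden and must be inferred), and the function name `categorize` and the rule that an earlier step takes precedence over a later one are assumptions, not something the slides state.

```python
def categorize(target_word, target_phonemes,
               decoded_word, converted_phonemes, spoken_phonemes):
    """Label an utterance by the first step at which it deviates.

    All phoneme arguments are space-separated strings such as "s i r k y s".
    Assumption: a deviation in an earlier step outranks a later one.
    """
    if decoded_word != target_word:
        return "error (step 1)"      # wrong visual image decoded
    if converted_phonemes != target_phonemes:
        return "error (step 2)"      # grapheme-to-phoneme conversion failed
    if spoken_phonemes != converted_phonemes:
        return "miscue (step 3)"     # articulation problem: restart, spelling
    return "correct"


# The three examples from the slide, target word 'circus'.
TARGET = ("circus", "s i r k y s")
print(categorize(*TARGET, "circus", "s i r k y s", "s i r k y s"))
print(categorize(*TARGET, "cursus", "k y r s y s", "k y - k y r s y s"))
print(categorize(*TARGET, "circus", "k i r k y s", "ka - i - Er - k y s"))
```

The restart used in the test below ("s i - s i r k y s") is a hypothetical utterance, added only to exercise the miscue branch.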

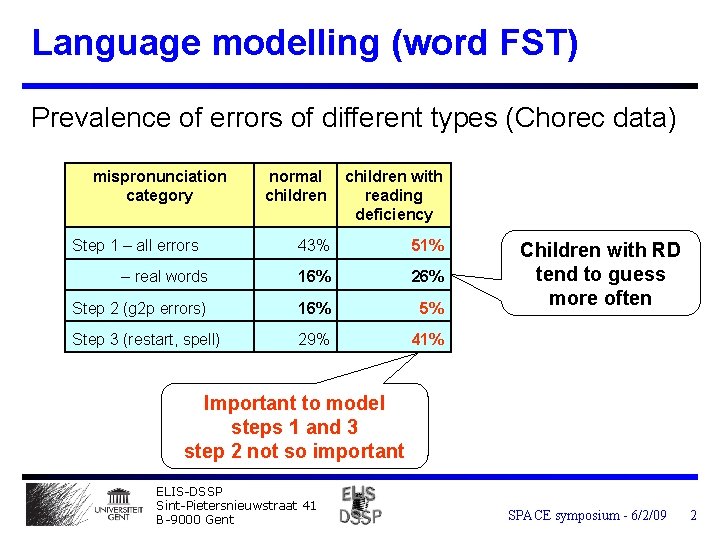

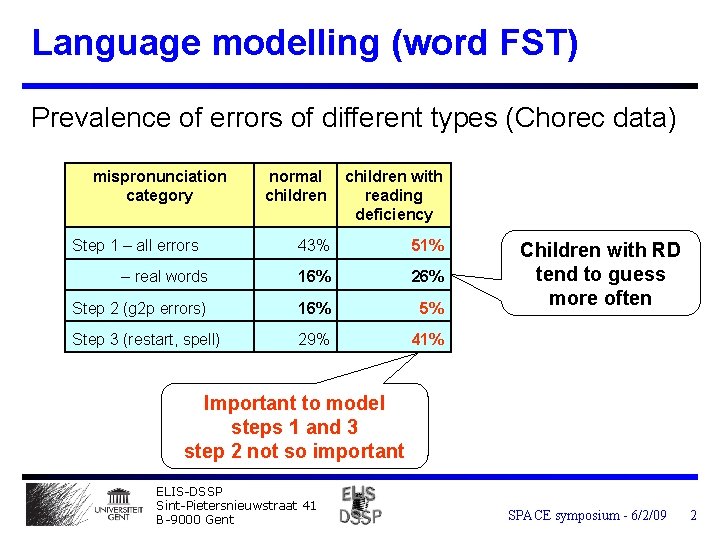

Language modelling (word FST): prevalence of errors of different types (Chorec data)

| Mispronunciation category | Normal | Children with reading deficiency |
| --- | --- | --- |
| Step 1, all errors | 43% | 51% |
| Step 1, real words only | 16% | 26% |
| Step 2 (g2p errors) | 16% | 5% |
| Step 3 (restart, spell) | 29% | 41% |

- Children with a reading deficiency (RD) tend to guess more often
- Important to model steps 1 and 3; step 2 is not so important

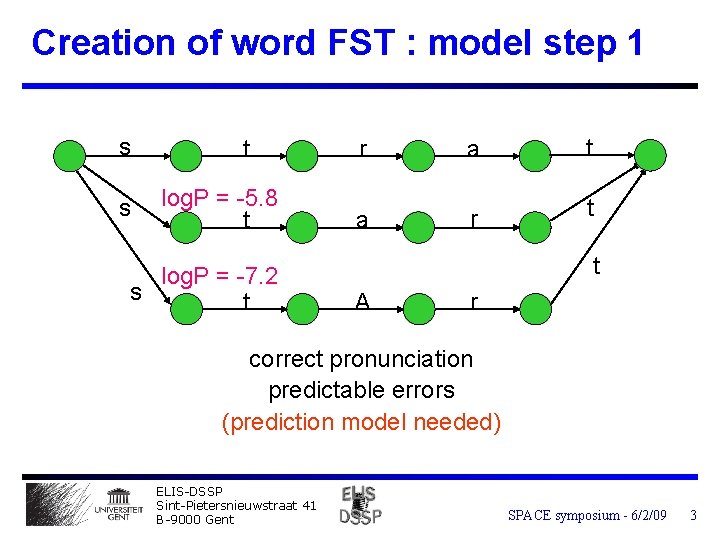

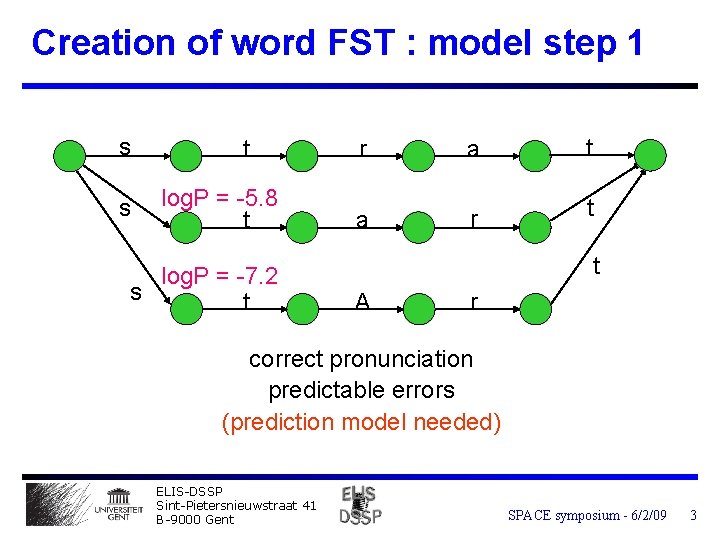

Creation of word FST: model step 1

[Figure: word FST with one branch for the correct pronunciation (s t r a t) and further branches for predictable errors (recoverable arc labels: a r t, t A r), weighted with log P = -5.8 and log P = -7.2.]

- correct pronunciation
- predictable errors (prediction model needed)
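
A word FST of this kind can be sketched as a weighted prefix tree, which is a simple acyclic weighted acceptor. The code below is an illustration under stated assumptions: the error branches `s t a r t` and `s t A r`, their pairing with the log probabilities -5.8 and -7.2, and the weight -0.01 for the correct branch are guesses made for the example, and the helper names are hypothetical.

```python
def build_word_fst(branches):
    """Build a prefix-tree acceptor from {phoneme tuple: log probability}.

    Returns (arcs, finals): arcs maps (state, phoneme) to the next state,
    finals maps a final state to the log probability of its branch.
    State 0 is the start state; branches share arcs for common prefixes.
    """
    arcs, finals = {}, {}
    next_state = 1
    for phonemes, log_p in branches.items():
        state = 0
        for phoneme in phonemes:
            key = (state, phoneme)
            if key not in arcs:          # create a new arc only when needed
                arcs[key] = next_state
                next_state += 1
            state = arcs[key]
        finals[state] = log_p
    return arcs, finals


def score(arcs, finals, phonemes):
    """Return the branch log probability, or None if the FST rejects the input."""
    state = 0
    for phoneme in phonemes:
        state = arcs.get((state, phoneme))
        if state is None:
            return None
    return finals.get(state)


# Illustrative branches; weights and error forms are assumptions (see lead-in).
BRANCHES = {
    ("s", "t", "r", "a", "t"): -0.01,   # correct pronunciation
    ("s", "t", "a", "r", "t"): -5.8,    # predicted decoding error
    ("s", "t", "A", "r"): -7.2,         # predicted decoding error
}
ARCS, FINALS = build_word_fst(BRANCHES)
print(score(ARCS, FINALS, ("s", "t", "a", "r", "t")))
```

Sharing the prefix arcs keeps the network small; a production system would more likely use an FST toolkit than hand-built dictionaries.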

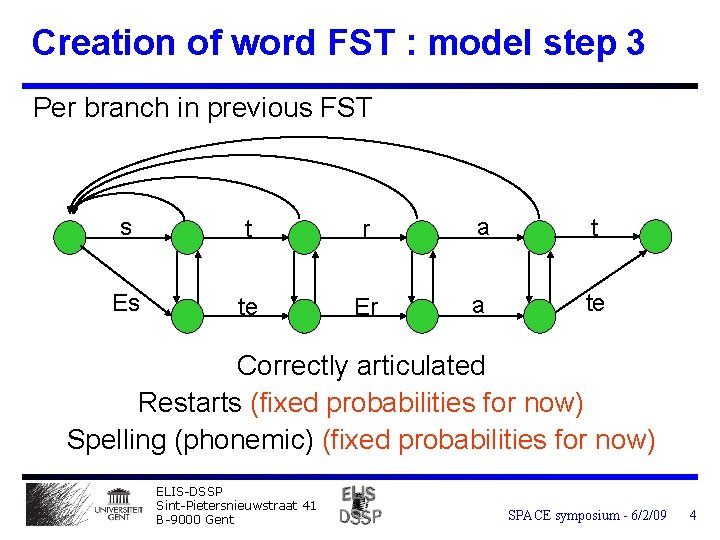

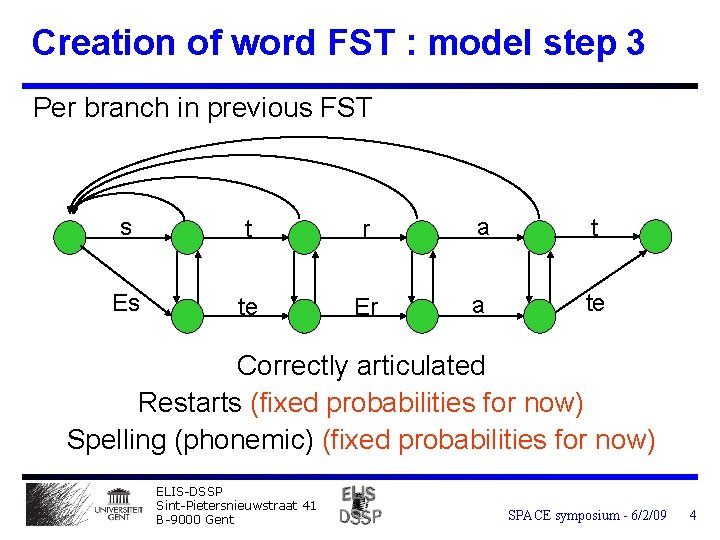

Creation of word FST: model step 3

Per branch in the previous FST (example: s t r a t):

- correctly articulated: s t r a t
- restarts (fixed probabilities for now)
- spelling (phonemic), e.g. Es te Er a te (fixed probabilities for now)
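
The step 3 expansion can be sketched as a function that turns one branch into its articulation variants. This is a minimal sketch, assuming that a restart is any proper prefix followed by the full word and that the restart probability is shared evenly among restarts; the probability values 0.10 and 0.05 and all function names are hypothetical placeholders for the "fixed probabilities" on the slide.

```python
# Letter names as they appear in the slides' examples; other phonemes
# would need entries that the slides do not give.
LETTER_NAMES = {"s": "Es", "t": "te", "r": "Er", "a": "a", "k": "ka", "i": "i"}


def restart_variants(phonemes):
    """Each proper, non-empty prefix, a break marker, then the full word."""
    return [phonemes[:cut] + ["-"] + phonemes for cut in range(1, len(phonemes))]


def spelling_variant(phonemes):
    """Spell the word out phoneme by phoneme using letter names."""
    return [LETTER_NAMES[p] for p in phonemes]


def expand_branch(phonemes, p_restart=0.10, p_spell=0.05):
    """Return (sequence, probability) pairs for one branch of the word FST."""
    restarts = restart_variants(phonemes)
    if not restarts:                     # single-phoneme word: no restart possible
        p_restart = 0.0
    variants = [(phonemes, 1.0 - p_restart - p_spell)]
    variants += [(seq, p_restart / len(restarts)) for seq in restarts]
    variants.append((spelling_variant(phonemes), p_spell))
    return variants


for sequence, prob in expand_branch(list("strat")):
    print(f"{prob:.3f}  {' '.join(sequence)}")
```

Partial spellings followed by the rest of the word, as in the /ka - i - Er - k y s/ example, are not covered by this sketch.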

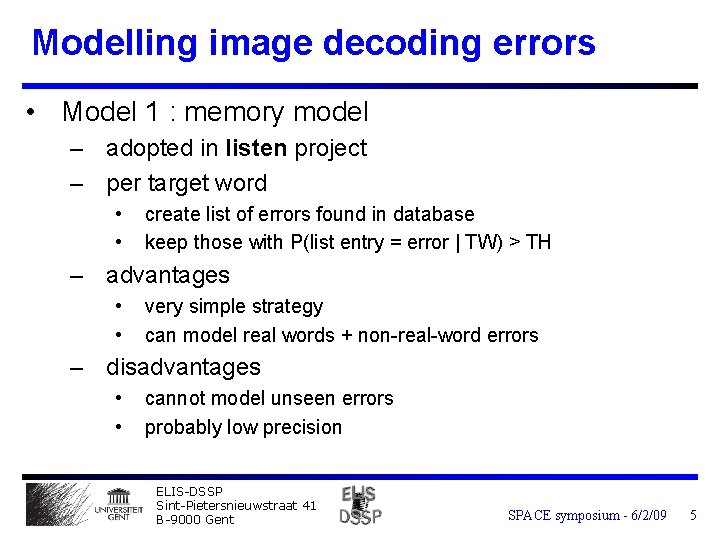

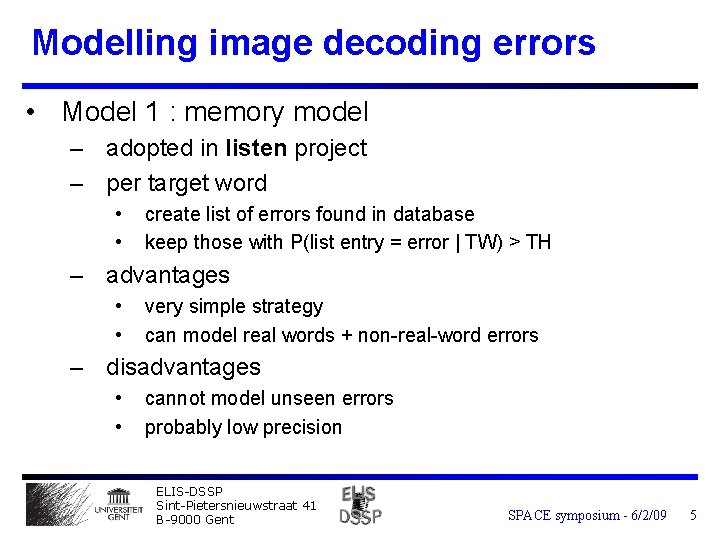

Modelling image decoding errors

- Model 1: memory model
  - adopted in the LISTEN project
  - per target word (TW)
    - create a list of the errors found in the database
    - keep those with P(list entry = error | TW) > TH (threshold)
  - advantages
    - very simple strategy
    - can model real-word + non-real-word errors
  - disadvantages
    - cannot model unseen errors
    - probably low precision
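
The memory model amounts to counting and thresholding. The sketch below assumes that P(list entry = error | TW) is estimated as a relative frequency over all readings of the target word; the slides do not say how it is estimated, and the observations and the threshold value are made up for the example.

```python
from collections import Counter, defaultdict


def build_memory_model(observations, threshold):
    """Keep, per target word, the observed errors whose relative frequency
    exceeds the threshold.

    observations: iterable of (target_word, word_as_read) pairs.
    Returns {target_word: {error_form: estimated probability}}.
    """
    totals = Counter()
    errors = defaultdict(Counter)
    for target, read in observations:
        totals[target] += 1
        if read != target:
            errors[target][read] += 1

    model = {}
    for target, counts in errors.items():
        kept = {form: n / totals[target]
                for form, n in counts.items()
                if n / totals[target] > threshold}
        if kept:
            model[target] = kept
    return model


# Hypothetical readings of the target word 'circus'.
OBSERVATIONS = ([("circus", "circus")] * 7
                + [("circus", "cursus")] * 2
                + [("circus", "cirkel")])
print(build_memory_model(OBSERVATIONS, threshold=0.15))
```

With these counts 'cursus' (2 of 10 readings) is kept and 'cirkel' (1 of 10) is dropped, which also shows why the model cannot predict an error it has never seen.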

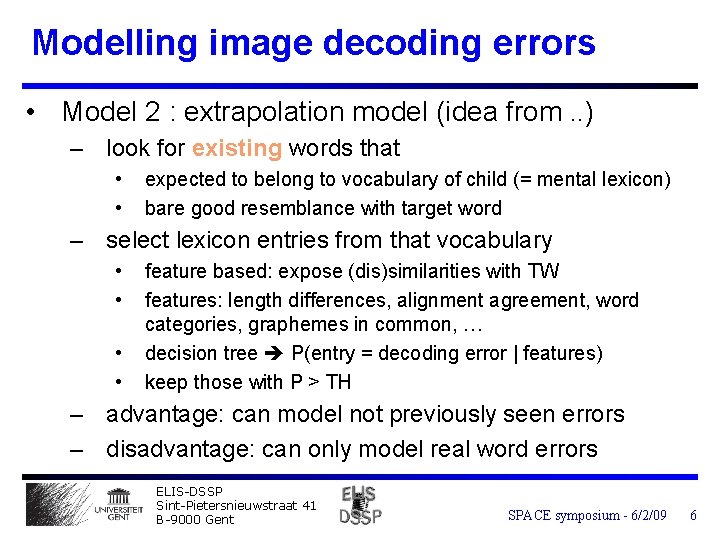

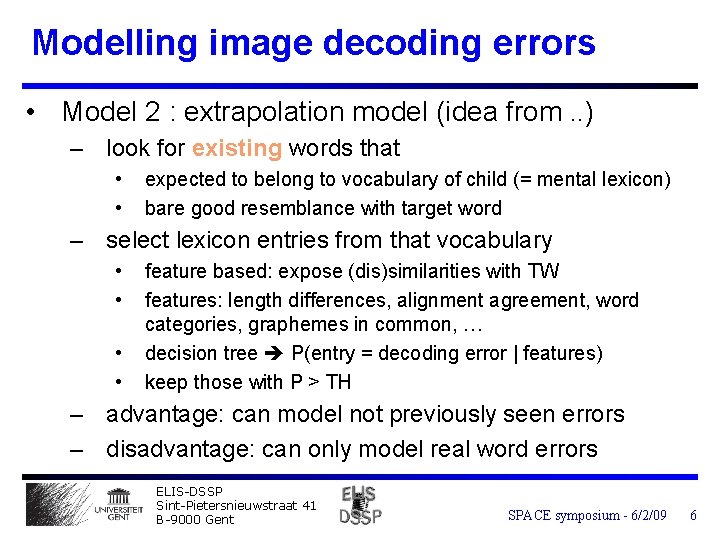

Modelling image decoding errors

- Model 2: extrapolation model (idea from . . )
  - look for existing words that
    - are expected to belong to the vocabulary of the child (= mental lexicon)
    - bear a good resemblance to the target word (TW)
  - select lexicon entries from that vocabulary
    - feature based: expose (dis)similarities with TW
    - features: length differences, alignment agreement, word categories, graphemes in common, …
    - decision tree gives P(entry = decoding error | features)
    - keep those with P > TH (threshold)
  - advantage: can model errors not previously seen
  - disadvantage: can only model real-word errors
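
The feature extraction and thresholding of the extrapolation model could look roughly as follows. This is a sketch under several assumptions: single letters stand in for graphemes, `difflib.SequenceMatcher` stands in for whatever alignment the authors used, the feature names are invented, and the decision tree is not implemented but passed in as a scoring function.

```python
from difflib import SequenceMatcher


def resemblance_features(target, candidate):
    """Compute a few (dis)similarity features between two non-empty words."""
    matcher = SequenceMatcher(None, target, candidate)
    aligned = sum(block.size for block in matcher.get_matching_blocks())
    return {
        "length_difference": len(candidate) - len(target),
        "aligned_fraction": aligned / max(len(target), len(candidate)),
        "graphemes_in_common": len(set(target) & set(candidate)),
        "same_first_grapheme": target[0] == candidate[0],
    }


def select_candidates(target, lexicon, score_fn, threshold):
    """Keep lexicon entries whose score exceeds the threshold.

    score_fn maps a feature dict to P(entry = decoding error | features);
    in the slides this role is played by a trained decision tree.
    """
    scored = {word: score_fn(resemblance_features(target, word))
              for word in lexicon if word != target}
    return {word: p for word, p in scored.items() if p > threshold}


def toy_score(features):
    """Hypothetical stand-in for the decision tree, for demonstration only."""
    return features["aligned_fraction"]


print(resemblance_features("circus", "cursus"))
print(select_candidates("circus", ["circus", "cursus", "boom"], toy_score, 0.3))
```

Because candidates come from a lexicon, the output can only contain real words, which matches the disadvantage listed on the slide.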

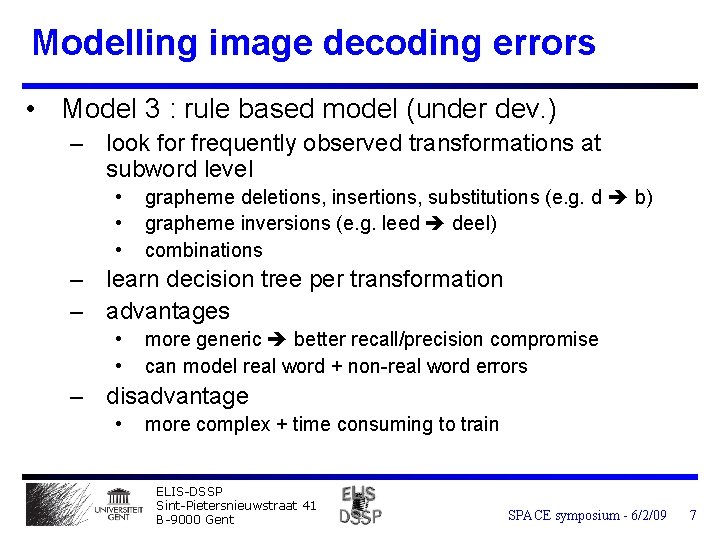

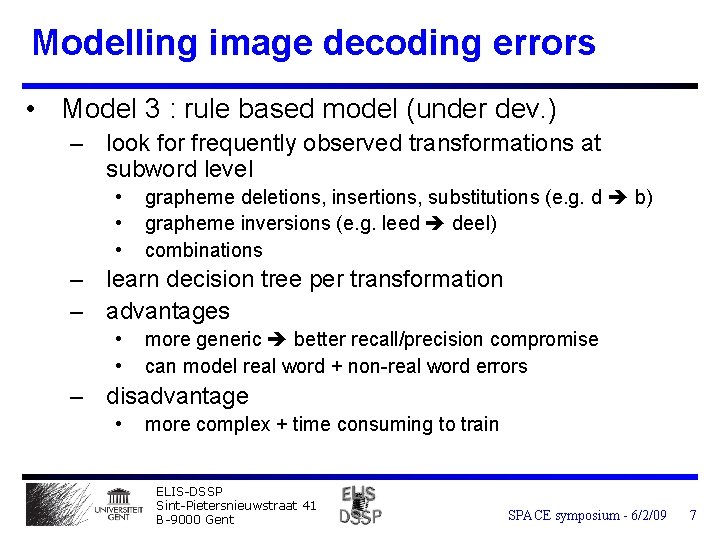

Modelling image decoding errors

- Model 3: rule-based model (under development)
  - look for frequently observed transformations at subword level
    - grapheme deletions, insertions, substitutions (e.g. d → b)
    - grapheme inversions (e.g. leed → deel)
    - combinations
  - learn a decision tree per transformation
  - advantages
    - more generic
    - better recall/precision compromise
    - can model real-word + non-real-word errors
  - disadvantage
    - more complex + time consuming to train
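
The subword transformations can be sketched as simple string operations that generate candidate errors. The code assumes that single letters stand in for graphemes and that an inversion reverses the whole word, which reproduces the leed → deel example but may be narrower or broader than what the authors intend. Insertions and the per-transformation decision trees are left out, and all function names are hypothetical.

```python
def substitutions(word, pairs):
    """Replace one occurrence at a time of each source grapheme, e.g. d -> b."""
    results = set()
    for index, grapheme in enumerate(word):
        for source, replacement in pairs:
            if grapheme == source:
                results.add(word[:index] + replacement + word[index + 1:])
    return results


def deletions(word):
    """Drop each grapheme in turn."""
    return {word[:index] + word[index + 1:] for index in range(len(word))}


def inversion(word):
    """Reverse the grapheme order (assumed reading of 'inversion')."""
    return word[::-1]


def candidate_errors(word, substitution_pairs):
    """Union of all single transformations, excluding the word itself."""
    candidates = (substitutions(word, substitution_pairs)
                  | deletions(word)
                  | {inversion(word)})
    candidates.discard(word)
    return candidates


print(sorted(candidate_errors("leed", [("d", "b")])))
```

The candidates include non-words such as 'leeb' next to the real word 'deel'; a learned model per transformation would then decide which of them are likely enough to keep.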

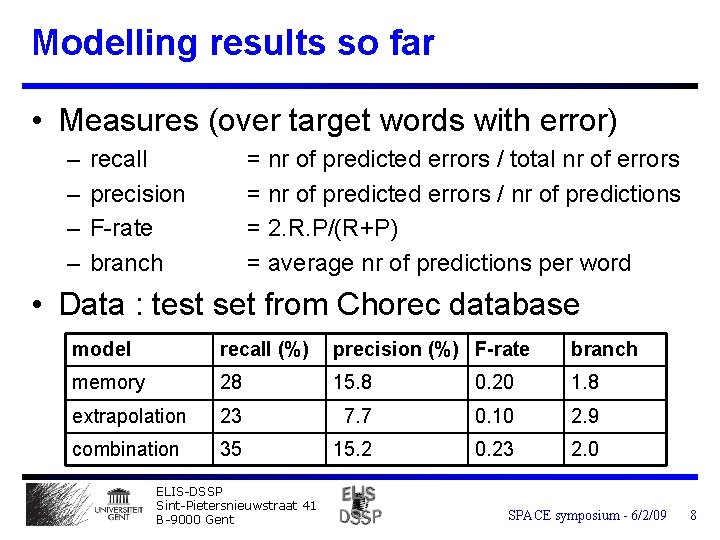

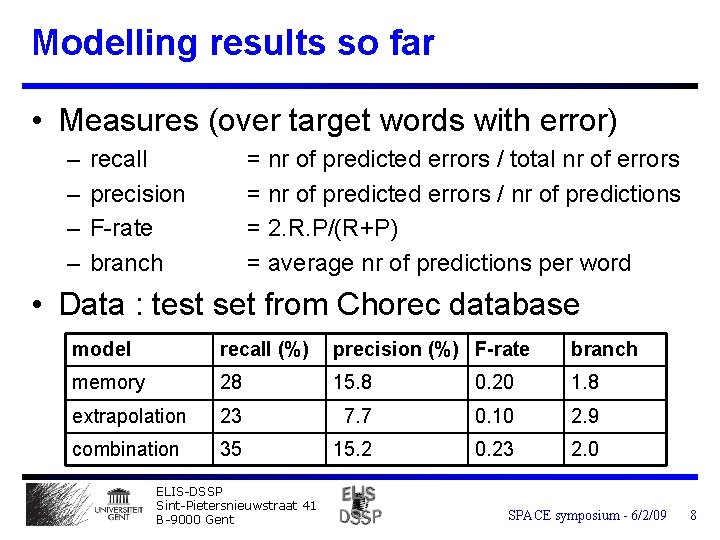

Modelling results so far

- Measures (over target words with error)
  - recall = nr of predicted errors / total nr of errors
  - precision = nr of predicted errors / nr of predictions
  - F-rate = 2·R·P / (R + P)
  - branch = average nr of predictions per word
- Data: test set from the Chorec database

| Model | Recall (%) | Precision (%) | F-rate | Branch |
| --- | --- | --- | --- | --- |
| memory | 28 | 15.8 | 0.20 | 1.8 |
| extrapolation | 23 | 7.7 | 0.10 | 2.9 |
| combination | 35 | 15.2 | 0.23 | 2.0 |
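
The four measures can be computed as in the sketch below. It assumes that observed errors are counted as distinct (target word, error form) pairs and that predictions are only counted for target words that have at least one observed error; the slides do not spell out these details, and the example data are invented.

```python
def f_rate(recall, precision):
    """Harmonic mean of recall and precision: 2*R*P / (R + P)."""
    if recall + precision == 0:
        return 0.0
    return 2 * recall * precision / (recall + precision)


def evaluate(predictions, observed_errors):
    """Compute recall, precision, F-rate and branch.

    predictions: {target_word: set of predicted error forms}
    observed_errors: set of (target_word, error_form) pairs from the test set
    """
    targets = {target for target, _ in observed_errors}
    n_predictions = sum(len(predictions.get(t, ())) for t in targets)
    hits = sum(1 for target, form in observed_errors
               if form in predictions.get(target, ()))
    recall = hits / len(observed_errors)
    precision = hits / n_predictions if n_predictions else 0.0
    return {
        "recall": recall,
        "precision": precision,
        "f_rate": f_rate(recall, precision),
        "branch": n_predictions / len(targets),
    }


# Hypothetical test data.
OBSERVED = {("circus", "cursus"), ("circus", "cirkel"), ("leed", "deel")}
PREDICTED = {"circus": {"cursus", "cactus"}, "leed": {"deel", "lied", "leeg"}}
print(evaluate(PREDICTED, OBSERVED))

# Memory-model row of the table: R = 0.28, P = 0.158.
print(round(f_rate(0.28, 0.158), 2))
```

The branch measure matters because every predicted error adds a path to the word FST, so higher recall bought with many predictions per word enlarges the recognition network.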