GuiltFree JSON Smarter Searches and Other Useful DB

Guilt-Free JSON, Smarter Searches, and Other Useful DB 2 App. Dev Patterns Fred Sobotka FRS Consulting Session Code: G 08 Tuesday, 24 May 2016| Platform: Cross-Platform Photo by Steve from Austin, TX, USA

DBAs can make their own jobs easier by proactively helping developers improve the way their apps use DB 2 • Getting more done with fewer statements and app round-trips • Publishing relational data as JSON without losing your soul • Determining whether a CGTT/DGTT is helping or hurting a query • Achieving static access plans for more flexible search routines • Detecting invalid UTF-8 while it’s still someone else’s problem 2

It’s time DBAs declared war on round trips 3

Hasn’t technology solved all of our problems by now? We are apparently living in the future • 144 Xeon cores (that’s 288 threads) in a single 8 U server • 12 terabytes of RAM • 12. 8 terabytes of ultra-low latency (3. 3µs) e. XFlash storage 4

Let’s not forget about advancements in software, either • Column-oriented tables make even big scans absurdly cheap • Data can stay compressed longer, requiring less I/O • The optimizer’s bag of tricks continues to grow • Zigzag joins, scan sharing, index jump scans, to name a few • When all else fails, specific queries can be adjusted by the DBA • MQTs, SELECTIVITY, STMT_CONC, statistical views, optimization profiles How can it be that application performance is still unsatisfactory in this age of massive hardware and near-magical software? 5

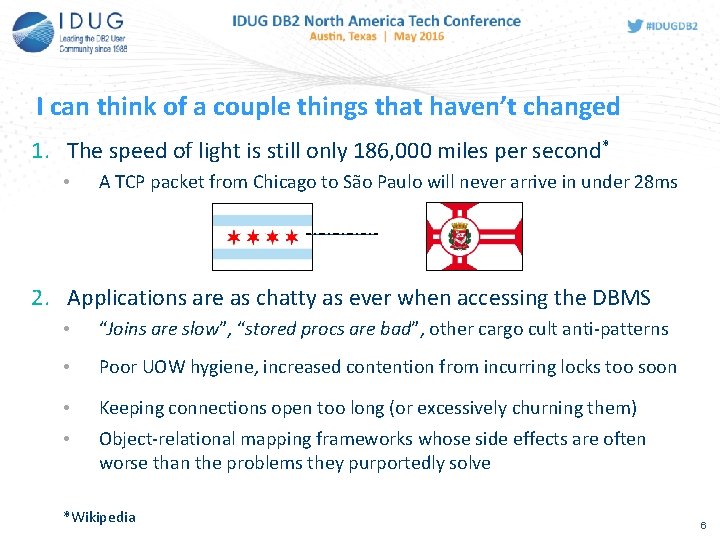

I can think of a couple things that haven’t changed 1. The speed of light is still only 186, 000 miles per second* • A TCP packet from Chicago to São Paulo will never arrive in under 28 ms 2. Applications are as chatty as ever when accessing the DBMS • “Joins are slow”, “stored procs are bad”, other cargo cult anti-patterns • Poor UOW hygiene, increased contention from incurring locks too soon • Keeping connections open too long (or excessively churning them) • Object-relational mapping frameworks whose side effects are often worse than the problems they purportedly solve *Wikipedia 6

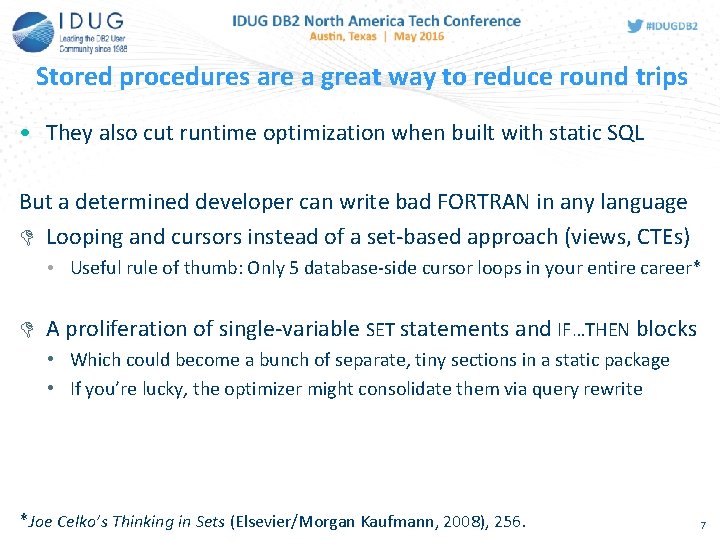

Stored procedures are a great way to reduce round trips • They also cut runtime optimization when built with static SQL But a determined developer can write bad FORTRAN in any language Looping and cursors instead of a set-based approach (views, CTEs) • Useful rule of thumb: Only 5 database-side cursor loops in your entire career* A proliferation of single-variable SET statements and IF…THEN blocks • Which could become a bunch of separate, tiny sections in a static package • If you’re lucky, the optimizer might consolidate them via query rewrite *Joe Celko’s Thinking in Sets (Elsevier/Morgan Kaufmann, 2008), 256. 7

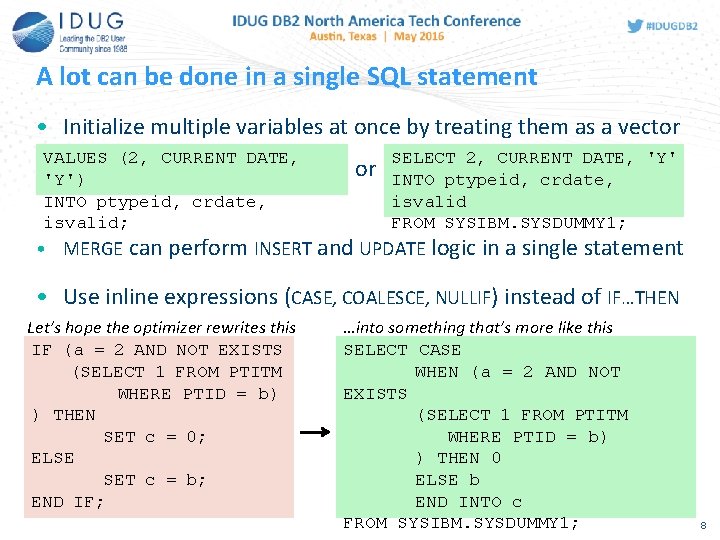

A lot can be done in a single SQL statement • Initialize multiple variables at once by treating them as a vector VALUES (2, CURRENT DATE, 'Y') INTO ptypeid, crdate, isvalid; or SELECT 2, CURRENT DATE, 'Y' INTO ptypeid, crdate, isvalid FROM SYSIBM. SYSDUMMY 1; • MERGE can perform INSERT and UPDATE logic in a single statement • Use inline expressions (CASE, COALESCE, NULLIF) instead of IF…THEN Let’s hope the optimizer rewrites this IF (a = 2 AND NOT EXISTS (SELECT 1 FROM PTITM WHERE PTID = b) ) THEN SET c = 0; ELSE SET c = b; END IF; …into something that’s more like this SELECT CASE WHEN (a = 2 AND NOT EXISTS (SELECT 1 FROM PTITM WHERE PTID = b) ) THEN 0 ELSE b END INTO c FROM SYSIBM. SYSDUMMY 1; 8

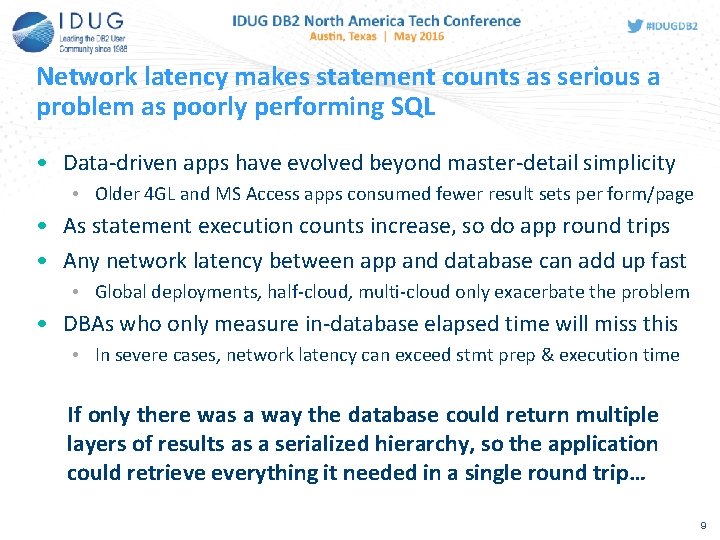

Network latency makes statement counts as serious a problem as poorly performing SQL • Data-driven apps have evolved beyond master-detail simplicity • Older 4 GL and MS Access apps consumed fewer result sets per form/page • As statement execution counts increase, so do app round trips • Any network latency between app and database can add up fast • Global deployments, half-cloud, multi-cloud only exacerbate the problem • DBAs who only measure in-database elapsed time will miss this • In severe cases, network latency can exceed stmt prep & execution time If only there was a way the database could return multiple layers of results as a serialized hierarchy, so the application could retrieve everything it needed in a single round trip… 9

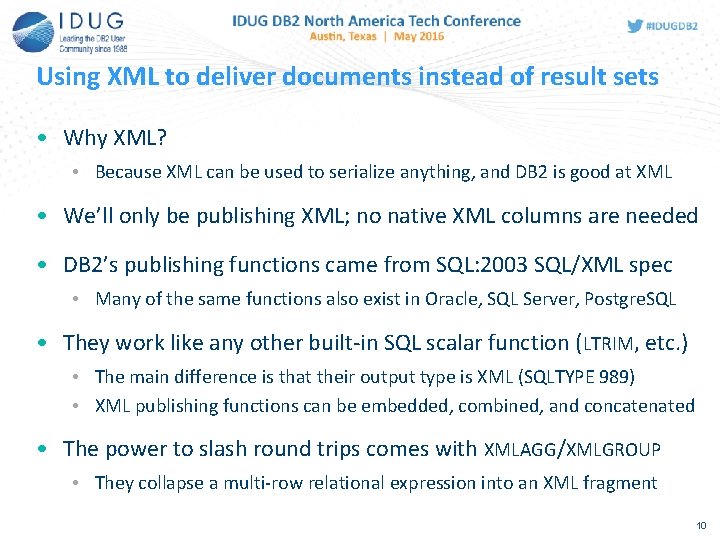

Using XML to deliver documents instead of result sets • Why XML? • Because XML can be used to serialize anything, and DB 2 is good at XML • We’ll only be publishing XML; no native XML columns are needed • DB 2’s publishing functions came from SQL: 2003 SQL/XML spec • Many of the same functions also exist in Oracle, SQL Server, Postgre. SQL • They work like any other built-in SQL scalar function (LTRIM, etc. ) • The main difference is that their output type is XML (SQLTYPE 989) • XML publishing functions can be embedded, combined, and concatenated • The power to slash round trips comes with XMLAGG/XMLGROUP • They collapse a multi-row relational expression into an XML fragment 10

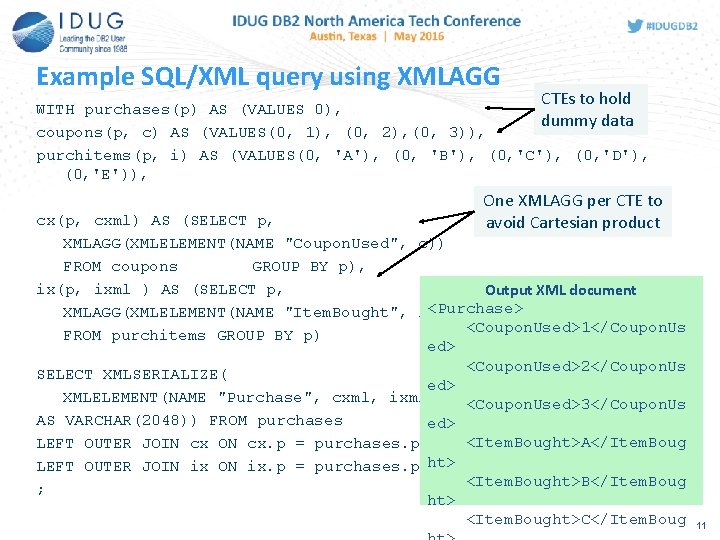

Example SQL/XML query using XMLAGG CTEs to hold WITH purchases(p) AS (VALUES 0), dummy data coupons(p, c) AS (VALUES(0, 1), (0, 2), (0, 3)), purchitems(p, i) AS (VALUES(0, 'A'), (0, 'B'), (0, 'C'), (0, 'D'), (0, 'E')), One XMLAGG per CTE to avoid Cartesian product cx(p, cxml) AS (SELECT p, XMLAGG(XMLELEMENT(NAME "Coupon. Used", c)) FROM coupons GROUP BY p), ix(p, ixml ) AS (SELECT p, Output XML document <Purchase> XMLAGG(XMLELEMENT(NAME "Item. Bought", i)) <Coupon. Used>1</Coupon. Us FROM purchitems GROUP BY p) ed> <Coupon. Used>2</Coupon. Us SELECT XMLSERIALIZE( ed> XMLELEMENT(NAME "Purchase", cxml, ixml) <Coupon. Used>3</Coupon. Us AS VARCHAR(2048)) FROM purchases ed> <Item. Bought>A</Item. Boug LEFT OUTER JOIN cx ON cx. p = purchases. p LEFT OUTER JOIN ix ON ix. p = purchases. p ht> <Item. Bought>B</Item. Boug ; ht> <Item. Bought>C</Item. Boug 11

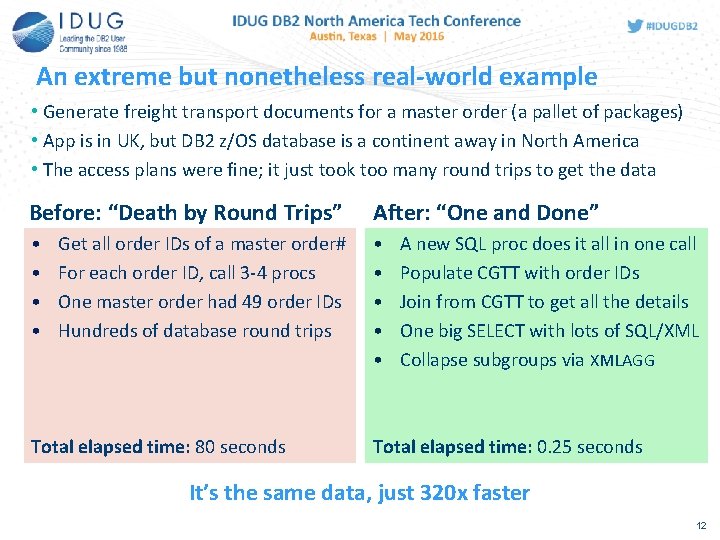

An extreme but nonetheless real-world example • Generate freight transport documents for a master order (a pallet of packages) • App is in UK, but DB 2 z/OS database is a continent away in North America • The access plans were fine; it just took too many round trips to get the data Before: “Death by Round Trips” After: “One and Done” • • • Get all order IDs of a master order# For each order ID, call 3 -4 procs One master order had 49 order IDs Hundreds of database round trips Total elapsed time: 80 seconds A new SQL proc does it all in one call Populate CGTT with order IDs Join from CGTT to get all the details One big SELECT with lots of SQL/XML Collapse subgroups via XMLAGG Total elapsed time: 0. 25 seconds It’s the same data, just 320 x faster 12

Haven’t you heard? We’re all into JSON now 13

How to tell if a JSON publishing approach is ugly Does delivering JSON to your applications involve any of these? q Java stored procedures/UDFs q JSON-specific denormalizations q Transformation by a dedicated hardware appliance q ETL to populate a remote JSON document store 14

What’s not to like about Java stored procs and UDFs? • DB 2 doesn’t allow much control over its embedded JVM • Hard to predict when garbage collection will occur • File handles opened by Java could take a while to close • JARs are unzipped into individual. class files during import • Total impact on CPU may make Java routines not worth it 15

Translating XML to JSON can be tricky • XML is a richer format than JSON, so converting can be lossy • It helps to be flexible on either the input XML or JSON output • Each JSON translation spec/convention has its tradeoffs • Options include Badgerfish, Rayfish, Rabbitfish, Json. ML, JSONx, others • Most stylesheet-based JSON converters require XSLT 2. 0 • DB 2’s XSLTRANSFORM only supports XSLT 1. 0 (and not all of it, either) • It does, however, allow some Xalan-specific extensions to be called • Thanks to Bram Stein for providing a solid XSLT 1. 0 option • With a minor change, xml-to-jsonml. xsl can work with XSLTRANSFORM • The stylesheet is part of a larger project https: //github. com/bramstein/xsltjson 16

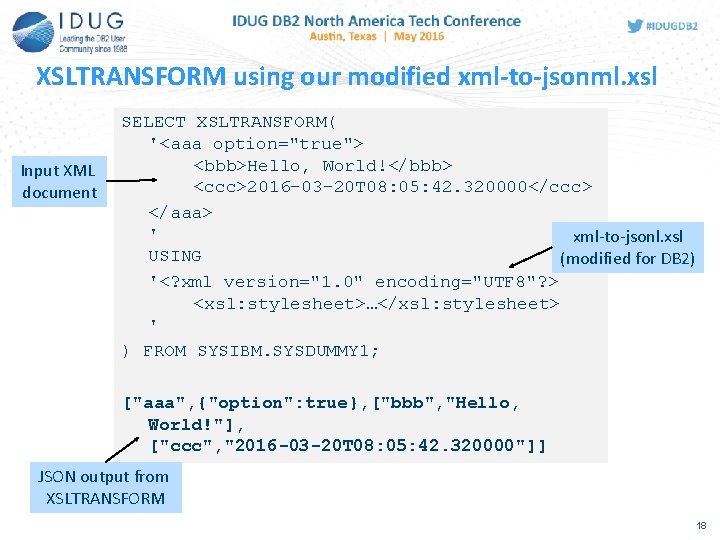

About that minor change • xml-to-jsonml. xsl makes use of xsl: document() which upsets DB 2 • Fortunately, xalan: nodeset() is a convenient workaround • Declare the xmlns: xalan namespace used by Xalan extensions <xsl: stylesheet version="1. 0" xmlns: xsl="http: //www. w 3. org/1999/XSL/Transform" xmlns: json="http: //json. org/" xmlns: xalan="http: //xml. apache. org/xalan"> • Turn the json: search fragment into an XSL variable $replacements <xsl: variable name="replacements"> <json: search name="string">…</json: search> </xsl: variable> the xsl: document('') stylesheet can be calls run by DB 2’s XSLTRANSFORM() • Now Replace with 17

XSLTRANSFORM using our modified xml-to-jsonml. xsl Input XML document SELECT XSLTRANSFORM( '<aaa option="true"> <bbb>Hello, World!</bbb> <ccc>2016 -03 -20 T 08: 05: 42. 320000</ccc> </aaa> ' xml-to-jsonl. xsl USING (modified for DB 2) '<? xml version="1. 0" encoding="UTF 8"? > <xsl: stylesheet>…</xsl: stylesheet> ' ) FROM SYSIBM. SYSDUMMY 1; ["aaa", {"option": true}, ["bbb", "Hello, World!"], ["ccc", "2016 -03 -20 T 08: 05: 42. 320000"]] JSON output from XSLTRANSFORM 18

Even temp tables are sensitive to inaccurate cardinality • A CGTT of keys can be a good inner table for big/complex joins • They allow more flexibility in how you gather the top-level PKs • The optimizer has been known to overestimate CGTT cardinality • Default cardinality of 1000+ rows could rule out cheap NLJOINs • Review the EXPLAIN plan to determine if bad CGTT cardinality is a factor • To avoid costly scans, lock in a low cardinality for your CGTT 19

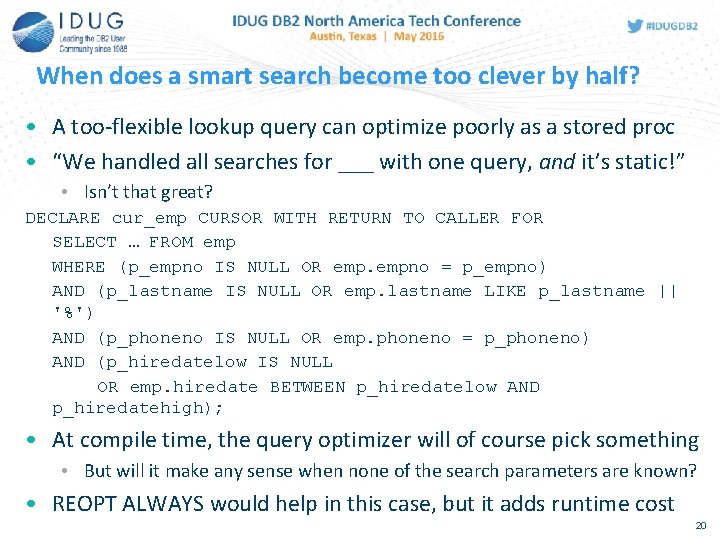

When does a smart search become too clever by half? • A too-flexible lookup query can optimize poorly as a stored proc • “We handled all searches for ___ with one query, and it’s static!” • Isn’t that great? DECLARE cur_emp CURSOR WITH RETURN TO CALLER FOR SELECT … FROM emp WHERE (p_empno IS NULL OR empno = p_empno) AND (p_lastname IS NULL OR emp. lastname LIKE p_lastname || '%') AND (p_phoneno IS NULL OR emp. phoneno = p_phoneno) AND (p_hiredatelow IS NULL OR emp. hiredate BETWEEN p_hiredatelow AND p_hiredatehigh); • At compile time, the query optimizer will of course pick something • But will it make any sense when none of the search parameters are known? • REOPT ALWAYS would help in this case, but it adds runtime cost 20

Perhaps it might help to be a little more specific • Flexible search/lookup procs are possible without REOPT ALWAYS • But you’ll need to cover your bases a bit • Instead of just one cursor for an “anything goes” query, define a few alternates that will actually optimize well at compile time • A version of the query where p_empno is always NOT NULL • A version of the query that always specifies p_phoneno • A version of the query where a range of hire dates is specified • Leave the other search predicates optional in those cursors • Use IF…THEN logic at the bottom of the procedure to evaluate the input parameters and determine which cursor to open 21

There is a “cone of shame” in Unicode ? And it can help you protect your database against bad UTF-8 22

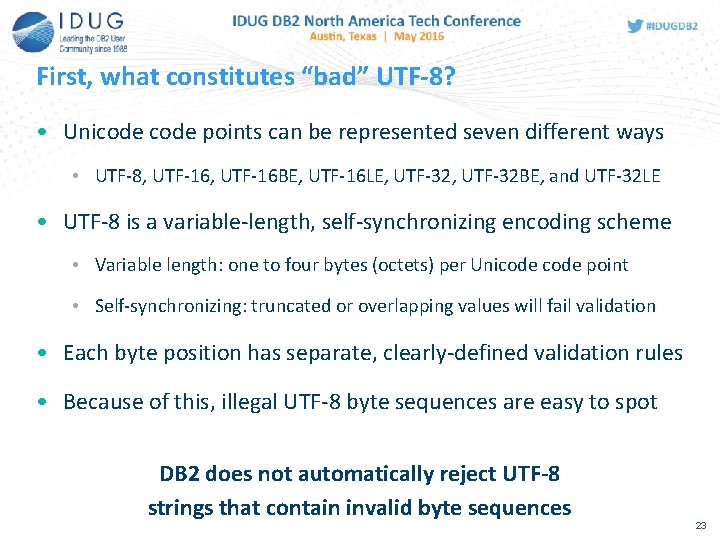

First, what constitutes “bad” UTF-8? • Unicode points can be represented seven different ways • UTF-8, UTF-16 BE, UTF-16 LE, UTF-32 BE, and UTF-32 LE • UTF-8 is a variable-length, self-synchronizing encoding scheme • Variable length: one to four bytes (octets) per Unicode point • Self-synchronizing: truncated or overlapping values will fail validation • Each byte position has separate, clearly-defined validation rules • Because of this, illegal UTF-8 byte sequences are easy to spot DB 2 does not automatically reject UTF-8 strings that contain invalid byte sequences 23

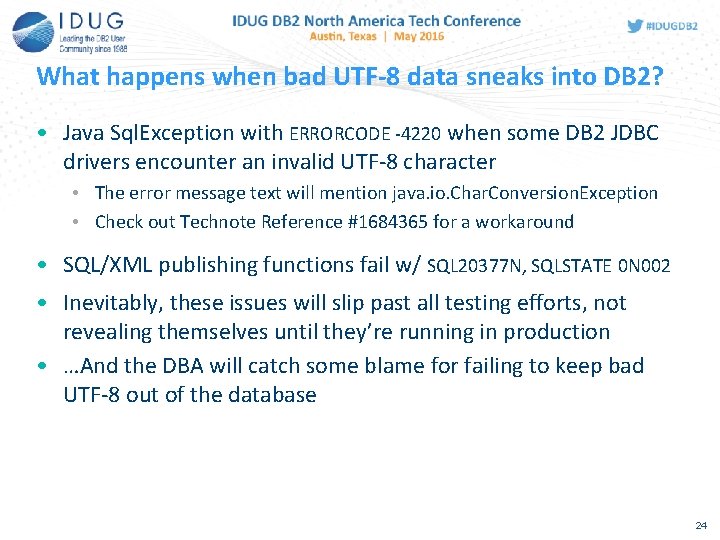

What happens when bad UTF-8 data sneaks into DB 2? • Java Sql. Exception with ERRORCODE -4220 when some DB 2 JDBC drivers encounter an invalid UTF-8 character • The error message text will mention java. io. Char. Conversion. Exception • Check out Technote Reference #1684365 for a workaround • SQL/XML publishing functions fail w/ SQL 20377 N, SQLSTATE 0 N 002 • Inevitably, these issues will slip past all testing efforts, not revealing themselves until they’re running in production • …And the DBA will catch some blame for failing to keep bad UTF-8 out of the database 24

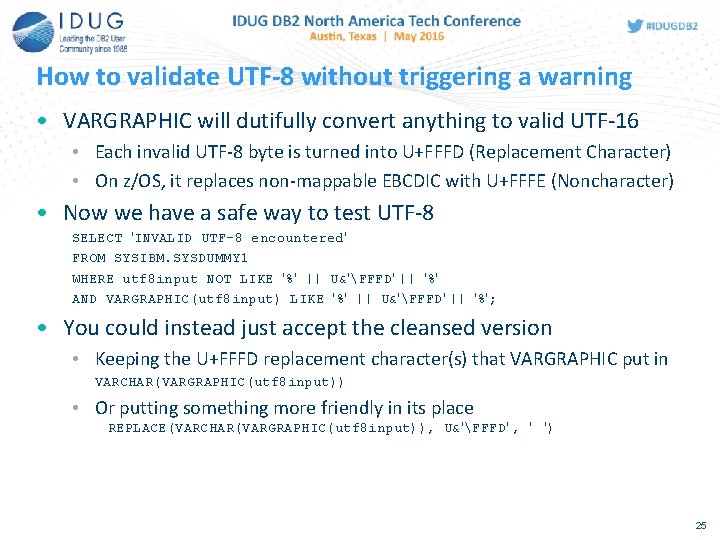

How to validate UTF-8 without triggering a warning • VARGRAPHIC will dutifully convert anything to valid UTF-16 • Each invalid UTF-8 byte is turned into U+FFFD (Replacement Character) • On z/OS, it replaces non-mappable EBCDIC with U+FFFE (Noncharacter) • Now we have a safe way to test UTF-8 SELECT 'INVALID UTF-8 encountered' FROM SYSIBM. SYSDUMMY 1 WHERE utf 8 input NOT LIKE '%' || U&'FFFD' || '%' AND VARGRAPHIC(utf 8 input) LIKE '%' || U&'FFFD' || '%'; • You could instead just accept the cleansed version • Keeping the U+FFFD replacement character(s) that VARGRAPHIC put in VARCHAR(VARGRAPHIC(utf 8 input)) • Or putting something more friendly in its place REPLACE(VARCHAR(VARGRAPHIC(utf 8 input)), U&'FFFD' , ' ') 25

Developers need DBA guidance more than ever • Network latency is a problem even if the connection is fast • Too many executions can be a problem even if the SQL is tuned • Apps are consuming a lot more data, but the database access patterns are often questionable • SQL is a declarative language with unique strengths that developers might overlook • DBAs must be approachable experts who are prepared to share examples and best practices on how to make smart use of DB 2 26

Fred Sobotka FRS Consulting fred@frsconsulting. com Please fill out your session evaluation before leaving! Session G 08 Guilt-Free JSON, Smarter Searches, and Other Useful DB 2 App. Dev Patterns Photo by Steve from Austin, TX, USA

- Slides: 27