Federated Data Infrastructure: Scalable Namespace and Metadata Management

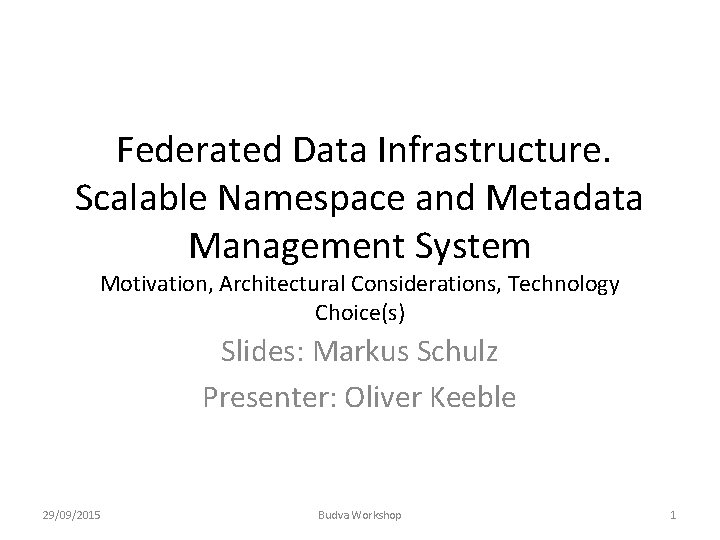

Federated Data Infrastructure: Scalable Namespace and Metadata Management System
Motivation, Architectural Considerations, Technology Choice(s)
Slides: Markus Schulz
Presenter: Oliver Keeble
29/09/2015, Budva Workshop

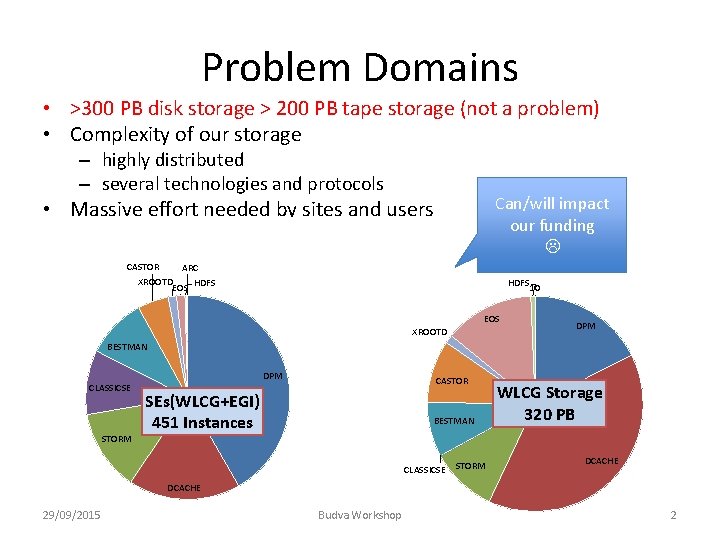

Problem Domains
• >300 PB disk storage
• >200 PB tape storage (not a problem)
• Complexity of our storage
  – highly distributed
  – several technologies and protocols
• Massive effort needed by sites and users
  – manageable for our community, at a cost
  – close to impossible for other communities
  – less efficient than desirable
Can/will impact our funding
[Charts: SEs (WLCG+EGI), 451 instances, and WLCG storage, 320 PB, each broken down by technology: CASTOR, ARC, XROOTD, HDFS, EOS, DPM, BESTMAN, CLASSICSE, STORM, DCACHE]

Problem Domains
• Many protocols
• Users maintain global namespaces
  – Namespaces are local
    • registration/deletion independent
    • consistency is an issue
    • cost of different implementations (scalability)
  – No direct connection to meta data services
  – Difficult to use for smaller user communities
    • often relying on obsolete technology
• Variety of data federations
  – read-only
• How will we integrate cloud-based storage?

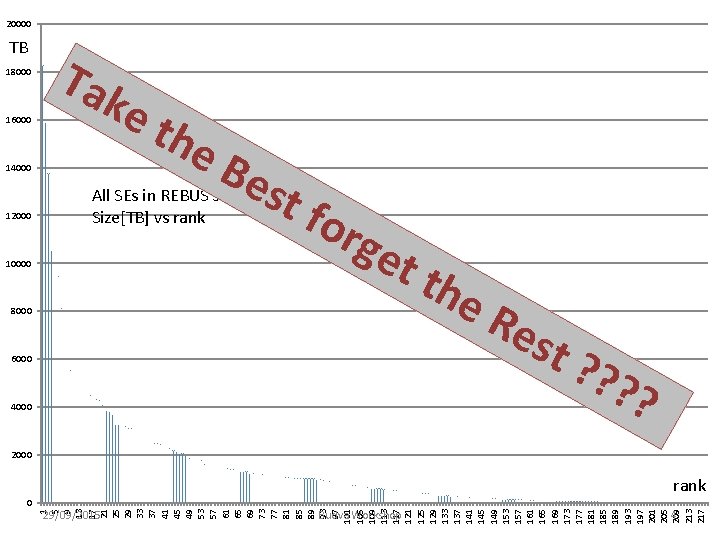

All SEs in REBUS sorted by size
[Chart: size [TB] (0 to 20000) vs rank (1 to 217); overlay text: “Take the Best, forget the Rest ????”]

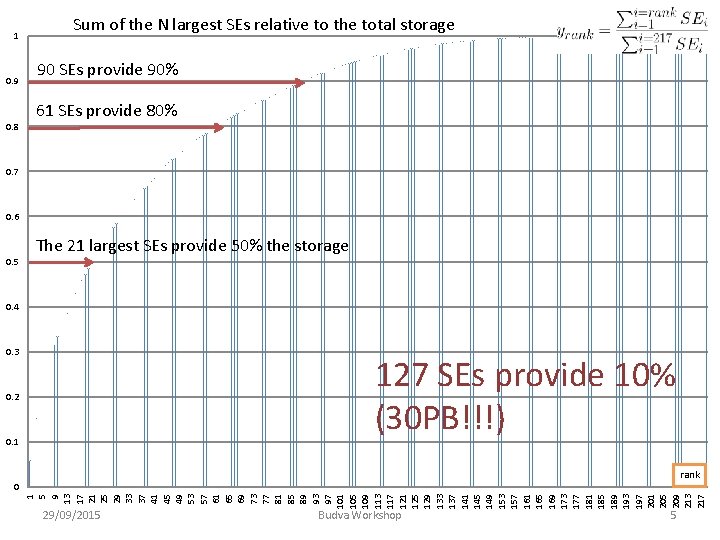

Sum of the N largest SEs relative to the total storage
• The 21 largest SEs provide 50% of the storage
• 61 SEs provide 80%
• 90 SEs provide 90%
• 127 SEs provide 10% (30 PB!!!)
[Chart: cumulative fraction of total storage (0 to 1) vs rank (1 to 217)]
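
The cumulative fractions on this slide can be recomputed from any list of SE sizes. The following is a minimal sketch in Python; the function names and the sample sizes are illustrative assumptions, not the REBUS data behind the chart.

```python
from itertools import accumulate


def cumulative_share(sizes_tb):
    """Fraction of the total capacity held by the N largest SEs, for N = 1..len."""
    ordered = sorted(sizes_tb, reverse=True)
    total = sum(ordered)
    return [running / total for running in accumulate(ordered)]


def n_for_share(sizes_tb, target):
    """Smallest N such that the N largest SEs hold at least `target` of the total."""
    for n, share in enumerate(cumulative_share(sizes_tb), start=1):
        if share >= target:
            return n
    return len(sizes_tb)


# Hypothetical sizes in TB, for illustration only.
example_sizes = [5, 50, 10, 30, 5]
print(cumulative_share(example_sizes))
print(n_for_share(example_sizes, 0.75))
```

Applied to the real size list, `n_for_share(sizes, 0.5)` would give the kind of figure quoted on the slide (the number of SEs needed to reach 50% of the storage).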

Long-term Goals
• Reducing the complexity
  – Improving the effective use
• Adding optional global namespace management
  – retaining local independence (hierarchical)
  – one for each user group (experiment)
  – with feeds for more complex meta data services
• Standardizing on federation and authentication mechanisms

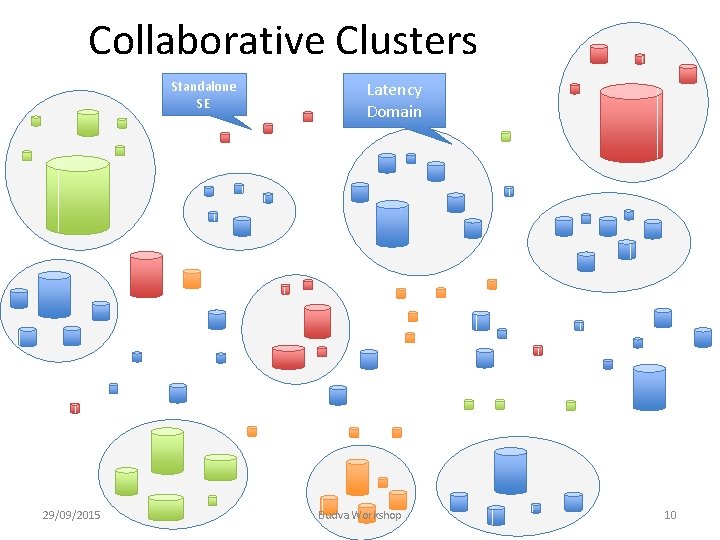

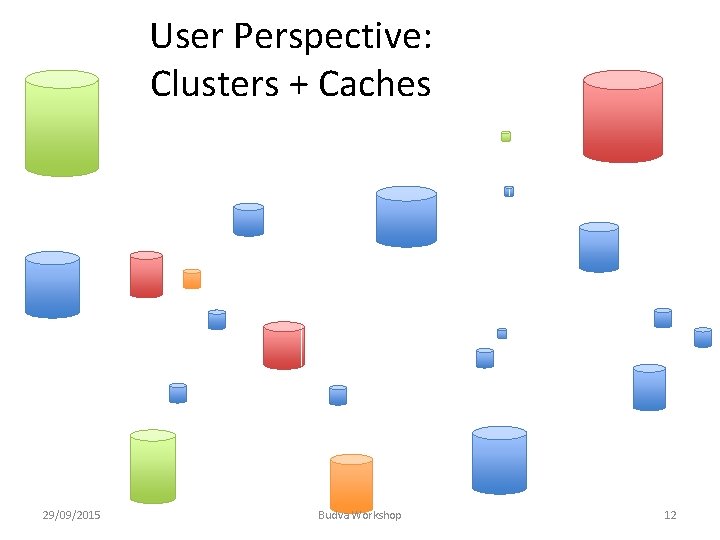

Reducing Complexity
• Introduce “Collaborative Clusters”
  – Currently:
    • dCache NDGF
    • EOS CERN/Wigner
  – One technology per cluster
  – One point of access for storage in a region/Latency Domain
    • keeping track of internal replication and availability
    • keeping track of the cluster’s namespace
    • focussing expertise
    • continuous reduction of complexity (NO REVOLUTION)
• Convert small SEs to “unmanaged” caches
  – less management effort (transient service)
  – improving performance
  – federated data access to populate and fallback
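
The idea of an "unmanaged" cache that is populated through federated access can be sketched as a read-through cache. This is only an illustration under stated assumptions: the class name, the `fetch_from_federation` callable and the in-memory store are hypothetical stand-ins, not part of any existing SE software.

```python
class UnmanagedCache:
    """Transient read-through cache in front of a data federation (hypothetical)."""

    def __init__(self, fetch_from_federation):
        # fetch_from_federation: callable(path) -> bytes. It stands in for a
        # federated XROOTD or HTTP read and is an assumption of this sketch.
        self._fetch = fetch_from_federation
        self._store = {}

    def read(self, path):
        """Serve from the cache if present, otherwise populate from the federation."""
        if path not in self._store:
            self._store[path] = self._fetch(path)
        return self._store[path]

    def wipe(self):
        """Drop all cached copies; the federation still holds the data."""
        self._store.clear()


# Usage with a fake federation that records which paths were fetched remotely.
remote_reads = []

def fake_federation(path):
    remote_reads.append(path)
    return b"contents of " + path.encode()

cache = UnmanagedCache(fake_federation)
cache.read("/exp/file1")
cache.read("/exp/file1")   # second read is served locally
print(remote_reads)
```

Because every miss falls back to the federation, the cache can be wiped or lost without any bookkeeping, which is what makes it a transient service.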

“Collaborative Clusters”
• Not a solution for each/every site or region
  – funding, policies, educational goals etc.
  – not needed for significant gain!
• Sites within a cluster share
  – operations and expertise
  – provide an integrated, better service at lower cost
• Distribution of SEs suggests:
  – <30 Clusters covering >90%
    • per experiment less than 30 will be used
  – 10 - 20 midsized SEs (x PB)
  – 100 caches

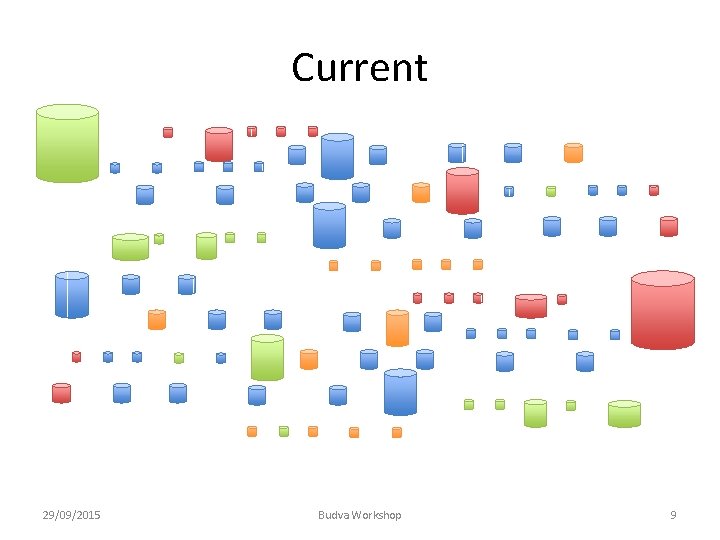

Current

Collaborative Clusters
[Diagram labels: Standalone SE, Latency Domain]

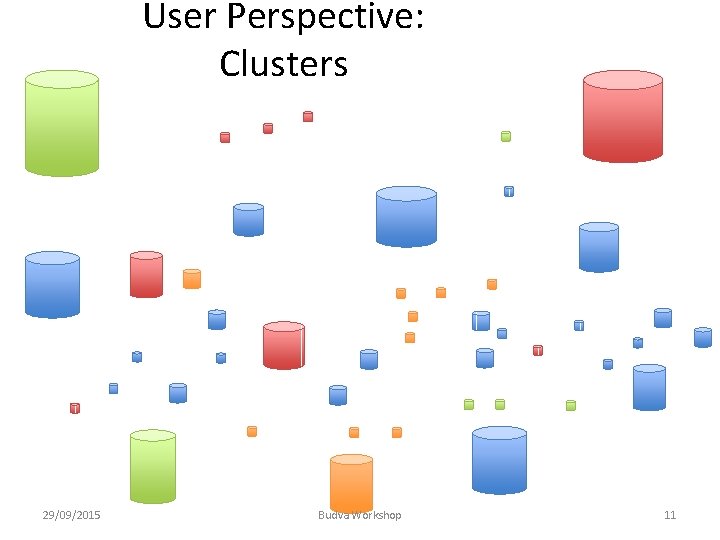

User Perspective: Clusters

User Perspective: Clusters + Caches

Adding Cloud Storage
• Storage within a (commercial) cloud can be managed as a Cluster
  – global providers will cover several latency domains
    • requires multiple Clusters

Simplified Access
• Focus on two protocols: XROOTD and HTTP(S)
  – both support mechanisms for data federation
  – both provide performance
  – users can optimise their data structures for these
  – combined they cover WLCG and other communities
  – native protocols can be offered in addition
• XROOTD and HTTP have to work well!!!
• Federated Identity based authentication
  – using local credentials for remote access
    • like eduroam/eduGAIN
  – users don’t handle certificates
    • critical for other communities
  – pilot implementation for a token translation service exists
    • used by WebFTS

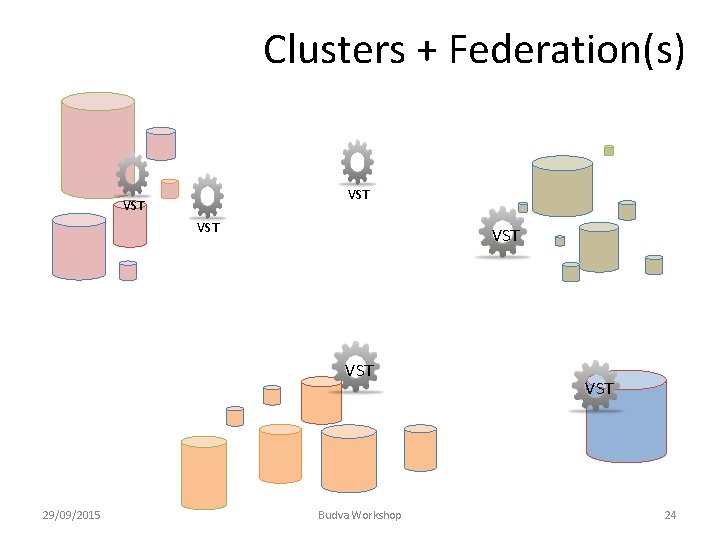

Federation
• Can be considered distinct from “clustering”
  – Loosely coupled, eventual consistency, weak administrative control
• Locating and reading data:
  – AAA, FAX, XROOTD, Dynafed
    • They can discover what is available to be read
• Missing:
  – Tracking data, reconciling:
    • what should exist where
    • what does exist where
  – Policies within a federation
    • needed for placing data
      – including follow up on replication
    • for read some preferences can be expressed
      – latency/bandwidth
    • nothing for writing data
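
The missing reconciliation step, comparing what should exist where with what does exist where, amounts to a set difference per file. A minimal sketch follows; the function name, the dictionary layout and the file and site names are assumptions made for illustration, not an existing federation API.

```python
def reconcile(should_exist, does_exist):
    """Compare intended and observed replica placement.

    Both arguments map a file name to the set of sites that hold (or are
    meant to hold) a replica. Returns (missing, unexpected) in the same shape.
    """
    missing, unexpected = {}, {}
    for name in should_exist.keys() | does_exist.keys():
        wanted = should_exist.get(name, set())
        found = does_exist.get(name, set())
        if wanted - found:
            missing[name] = wanted - found      # replicas still to be created
        if found - wanted:
            unexpected[name] = found - wanted   # replicas nobody asked for
    return missing, unexpected


# Hypothetical placement policy and site inventory.
policy = {"run1.root": {"site-a", "site-b"}, "run2.root": {"site-a"}}
inventory = {"run1.root": {"site-a"}, "old.root": {"site-c"}}
missing, unexpected = reconcile(policy, inventory)
print(missing)
print(unexpected)
```

The `missing` result is what a follow-up on replication would act on, while `unexpected` lists copies that no policy accounts for.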

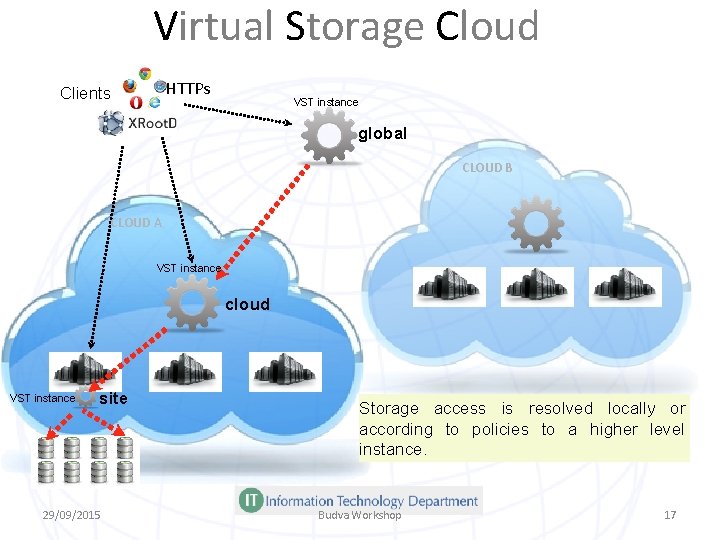

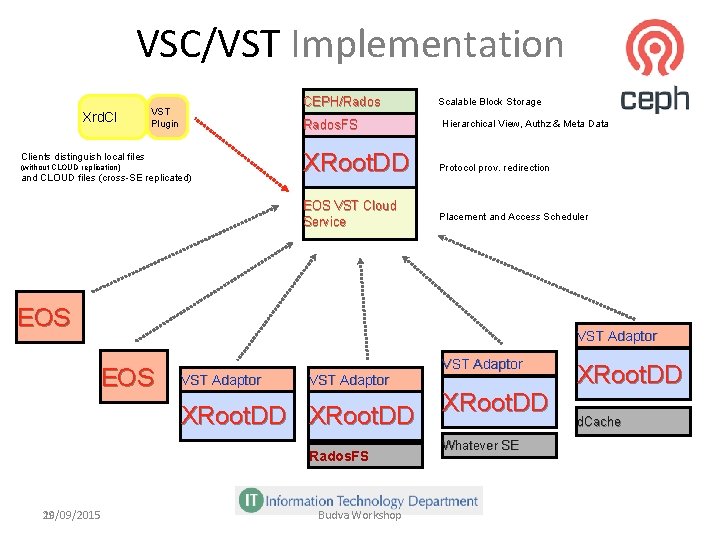

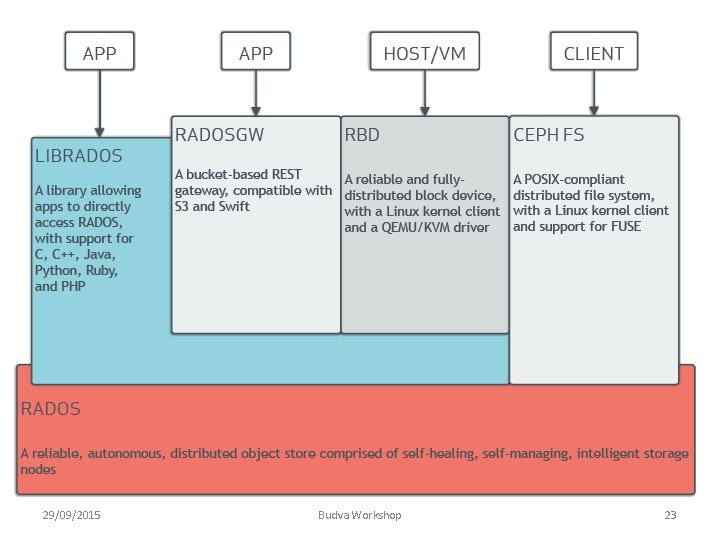

Federation Strategy
• Develop one solution to cover the missing pieces
  – base the solution on existing/emerging technologies
  – VSC (Virtual Storage Cloud) could be starting point
    • presented by Andreas Peters (CERN)
    • implements the proposed architecture
    • multi level system with hierarchical policies
      – ensures local autonomy
      – users can interact on local, regional, global level
    • based on XRootD + RadosFS + CEPH
    • tracks all files and builds a global namespace
      – state information flows up through a multi-level system
• Can be rolled out gradually
  – interfaced to different storage element technologies

Virtual Storage Cloud
[Diagram: HTTPS clients; VST instances at global, cloud (CLOUD A, CLOUD B) and site level]
Storage access is resolved locally or according to policies to a higher level instance.
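
The resolution rule on this slide (answer locally if possible, otherwise hand the request to a higher level instance if policy allows) can be sketched as follows. This is an illustration only: the class, its attributes and the single `allow_escalation` flag standing in for a policy are hypothetical and do not describe the actual VST code.

```python
class VstInstance:
    """One level (site, cloud or global) in a hypothetical VST hierarchy."""

    def __init__(self, name, parent=None, allow_escalation=True):
        self.name = name
        self.parent = parent
        self.allow_escalation = allow_escalation  # stand-in for a local policy
        self.local_files = set()  # files stored at this instance
        self.known = {}           # path -> name of a lower instance storing it

    def register(self, path):
        """Store a file here and let the state information flow upwards."""
        self.local_files.add(path)
        level = self.parent
        while level is not None:
            level.known[path] = self.name
            level = level.parent

    def resolve(self, path):
        """Return the name of an instance that can serve `path`, or None."""
        if path in self.local_files:
            return self.name
        if path in self.known:
            return self.known[path]
        if self.parent is not None and self.allow_escalation:
            return self.parent.resolve(path)
        return None


# A small hypothetical hierarchy: global -> two clouds -> sites.
top = VstInstance("global")
cloud_a = VstInstance("cloud-a", parent=top)
cloud_b = VstInstance("cloud-b", parent=top)
site_a1 = VstInstance("site-a1", parent=cloud_a)
site_a2 = VstInstance("site-a2", parent=cloud_a)
site_b1 = VstInstance("site-b1", parent=cloud_b)

site_a1.register("/exp/run1/file.root")
print(site_a2.resolve("/exp/run1/file.root"))  # answered by cloud-a
print(site_b1.resolve("/exp/run1/file.root"))  # answered by the global instance
```

The sketch keeps one location per path for brevity; a real namespace would track all replicas and apply richer placement and access policies at each level.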

VSC/VST Implementation
[Diagram components: XrdCl, XRootD, VST Plugin, RadosFS, CEPH/Rados, VST Cloud Service, VST Adaptor (in front of EOS, dCache and “Whatever SE”)]
[Diagram annotations: Scalable Block Storage; Hierarchical View, Authz & Meta Data; Protocol prov. redirection; Placement and Access Scheduler]
Clients distinguish local files (without CLOUD replication) and CLOUD files (cross-SE replicated).

User Perspective
• User can rely on two well working access protocols
  – XROOTD & HTTP
• User can interact with the system on any level
  – communities/clusters/regions can implement policies
  – small number of entities to interact with
  – federation for read and write
• Namespace is maintained by the VSC network
  – incl. mechanisms for managing consistency
• Collaborative Clusters reduce the number of entities to monitor

Link to Meta Data
• The global namespace relies on massive Technical Meta Data
  – all information related to the files
  – information related to policies...
• This system has to be extendable and massively scalable
• Some of the Technical Meta Data is needed by all user communities
• The domain specific meta data differs between communities
  – amount, complexity, structure, processing requirements
• Strategy:
  – Use the Global Namespace for Technical Meta Data for all users
    • for data similar to the Tech Meta Data these can be added
  – Provide subscription to the name space for communities that have to use different technologies
    • similar to the Lambda Architecture used in monitoring
[Overlay text, partly legible: “If it is good enough for the global name space, it is most likely good enough for you!” and “If not, we will give you the data and you can grow your own thing!”]
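
The subscription idea can be pictured as a change feed: the namespace keeps a log of technical meta data events, and a community that needs its own technology replays the history and then follows new events. The sketch below is an assumption-laden illustration; the class, the event fields and the catalogue are hypothetical and do not describe an existing service.

```python
class NamespaceFeed:
    """Hypothetical change feed published by the global namespace."""

    def __init__(self):
        self._log = []          # every event published so far (batch view)
        self._subscribers = []  # callbacks receiving new events (live view)

    def subscribe(self, callback, replay=True):
        """Register a callback; optionally replay the history first."""
        if replay:
            for event in self._log:
                callback(event)
        self._subscribers.append(callback)

    def publish(self, event):
        """Record an event and hand it to every subscriber."""
        self._log.append(event)
        for callback in self._subscribers:
            callback(event)


# A community builds its own catalogue from the technical meta data events.
feed = NamespaceFeed()
feed.publish({"path": "/exp/a.root", "size": 10})

community_catalogue = {}

def index_event(event):
    # Domain specific fields would be attached here by the community.
    community_catalogue[event["path"]] = {"size": event["size"]}

feed.subscribe(index_event)                         # replays the first event
feed.publish({"path": "/exp/b.root", "size": 20})   # delivered live
print(community_catalogue)
```

Combining a replayable log with live delivery is what makes this resemble the Lambda Architecture mentioned on the slide: late subscribers catch up from the batch view and then stay current through the live view.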

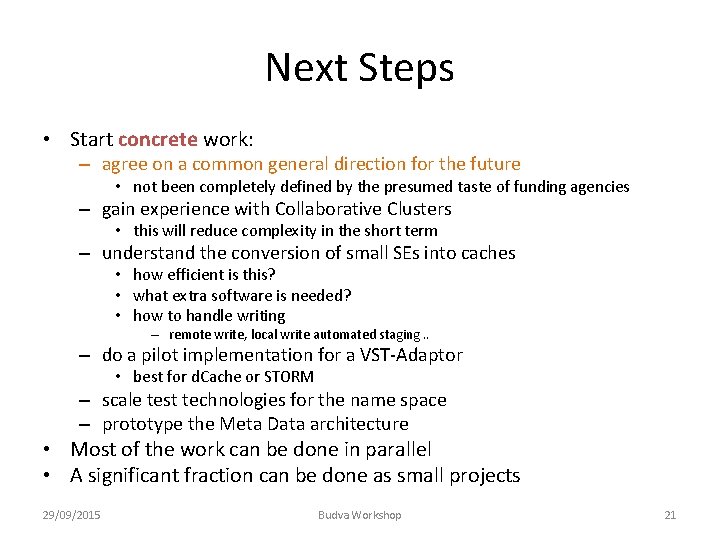

Next Steps
• Start concrete work:
  – agree on a common general direction for the future
    • one not completely defined by the presumed taste of funding agencies
  – gain experience with Collaborative Clusters
    • this will reduce complexity in the short term
  – understand the conversion of small SEs into caches
    • how efficient is this?
    • what extra software is needed?
    • how to handle writing
      – remote write, local write, automated staging...
  – do a pilot implementation for a VST-Adaptor
    • best for dCache or STORM
  – scale test technologies for the name space
  – prototype the Meta Data architecture
• Most of the work can be done in parallel
• A significant fraction can be done as small projects

Backups


Clusters + Federation(s)
[Diagram: four VST instances]

- Slides: 24