Boosting Rong Jin

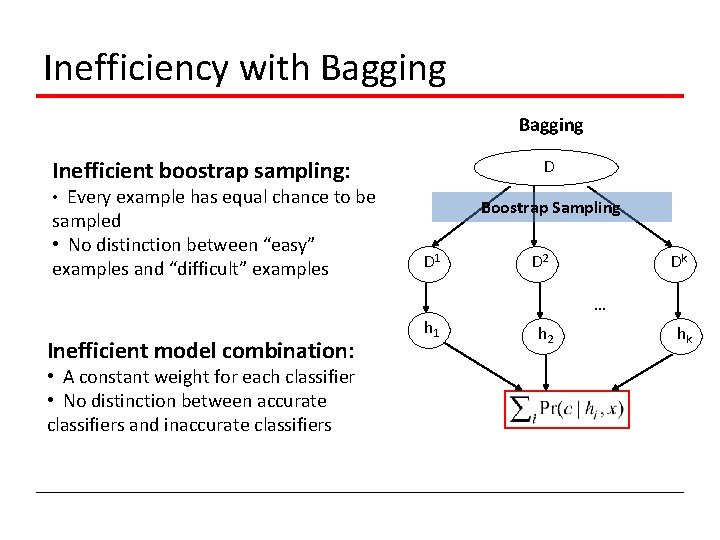

Inefficiency with Bagging

Inefficient bootstrap sampling:

• Every example has an equal chance of being sampled

• No distinction between “easy” examples and “difficult” examples

Inefficient model combination:

• A constant weight for each classifier

• No distinction between accurate classifiers and inaccurate classifiers

[Figure: bootstrap sampling draws D1, D2, …, Dk from D; classifiers h1, h2, …, hk are trained on the samples.]

Improve the Efficiency of Bagging

Better sampling strategy:

• Focus on the examples that are difficult to classify

Better combination strategy:

• Accurate models should be assigned larger weights

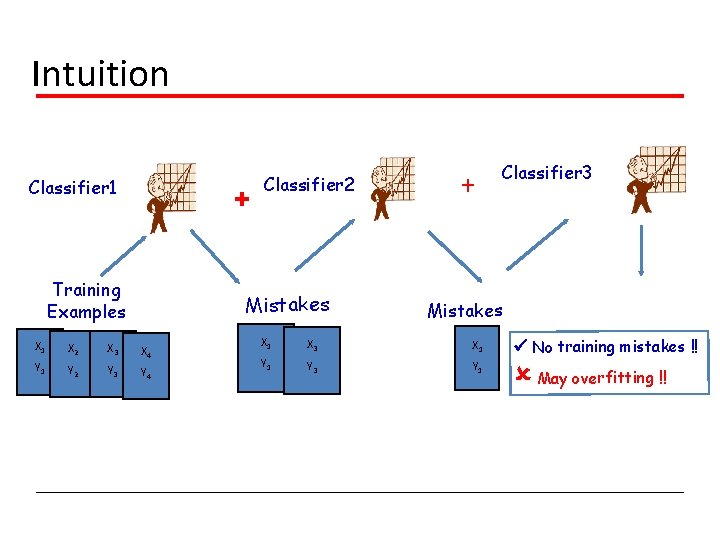

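Intuition

• Classifier 1 is trained on the training examples (X1, Y1), (X2, Y2), (X3, Y3), (X4, Y4)

• Classifier 2 is trained on the mistakes of Classifier 1: (X1, Y1), (X3, Y3)

• Classifier 3 is trained on the mistakes of Classifier 2: (X1, Y1)

• Classifier 1 + Classifier 2 + Classifier 3: no training mistakes, but the combination may overfit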

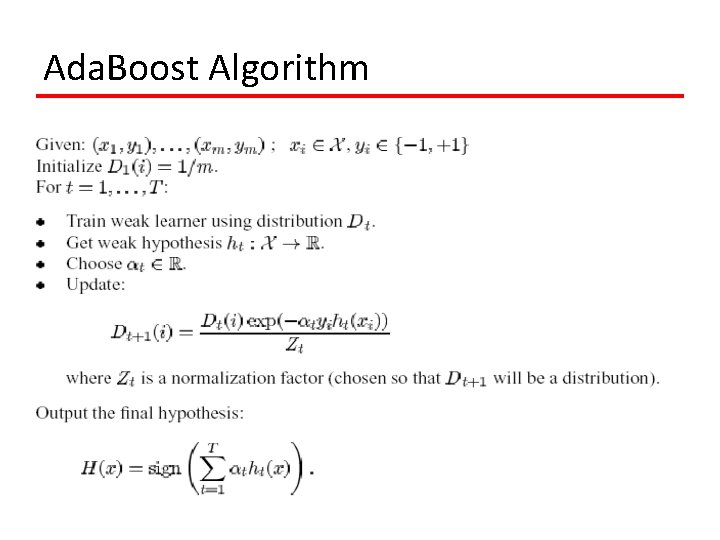

AdaBoost Algorithm

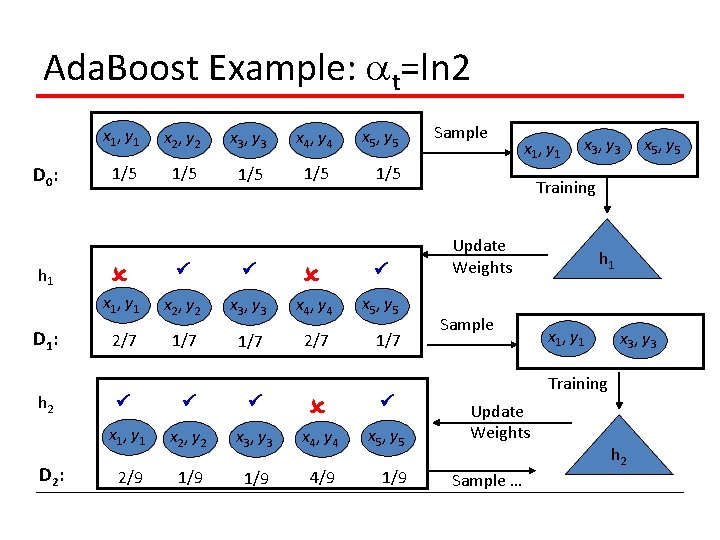
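The algorithm on this slide survives only as an image, so the following is a minimal sketch of one common AdaBoost formulation rather than a transcription of the figure. It assumes labels in {+1, -1}, one-dimensional inputs, decision stumps as the weak learner, the step size α_t = ½ ln((1 − ε_t)/ε_t), and deterministic reweighting instead of resampling. The function names (`stump_predict`, `train_stump`, `adaboost`, `predict`) and the toy data are hypothetical.

```python
import math

def stump_predict(x, threshold, polarity):
    """Decision stump: returns `polarity` if x > threshold, else -polarity."""
    return polarity if x > threshold else -polarity

def train_stump(xs, ys, weights):
    """Return (weighted_error, threshold, polarity) of the best stump under `weights`."""
    values = sorted(set(xs))
    # Candidate thresholds: one below the minimum, plus midpoints between neighbours.
    thresholds = [values[0] - 1.0] + [(a + b) / 2 for a, b in zip(values, values[1:])]
    best = None
    for threshold in thresholds:
        for polarity in (1, -1):
            error = sum(w for x, y, w in zip(xs, ys, weights)
                        if stump_predict(x, threshold, polarity) != y)
            if best is None or error < best[0]:
                best = (error, threshold, polarity)
    return best

def adaboost(xs, ys, rounds):
    """Return a list of (alpha, threshold, polarity) triples, one per round."""
    n = len(xs)
    weights = [1.0 / n] * n                      # D_0: uniform distribution
    ensemble = []
    for _ in range(rounds):
        error, threshold, polarity = train_stump(xs, ys, weights)
        error = min(max(error, 1e-10), 1 - 1e-10)    # guard against log(0)
        alpha = 0.5 * math.log((1 - error) / error)  # assumed step-size rule
        ensemble.append((alpha, threshold, polarity))
        # Raise the weight of mistakes, lower the weight of correct examples.
        weights = [w * math.exp(-alpha * y * stump_predict(x, threshold, polarity))
                   for x, y, w in zip(xs, ys, weights)]
        total = sum(weights)
        weights = [w / total for w in weights]   # renormalise to a distribution
    return ensemble

def predict(ensemble, x):
    """Sign of the alpha-weighted vote of all stumps."""
    score = sum(alpha * stump_predict(x, threshold, polarity)
                for alpha, threshold, polarity in ensemble)
    return 1 if score >= 0 else -1

# Toy data (hypothetical): no single stump separates these labels.
xs = [0, 1, 2, 3, 4, 5, 6, 7, 8, 9]
ys = [1, 1, 1, -1, -1, -1, 1, 1, 1, -1]
ensemble = adaboost(xs, ys, rounds=3)
training_errors = sum(predict(ensemble, x) != y for x, y in zip(xs, ys))
print("training errors after 3 rounds:", training_errors)
```

Both ideas from the earlier slides appear here: the weight update concentrates on difficult examples, and `alpha` gives more accurate stumps a larger vote.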

AdaBoost Example: α_t = ln 2

Weights over the five training examples (x1, y1), (x2, y2), (x3, y3), (x4, y4), (x5, y5):

• D0: 1/5, 1/5, 1/5, 1/5, 1/5

• D1: 2/7, 1/7, 2/7, 1/7, 1/7

• D2: 2/9, 1/9, 4/9, 1/9, 1/9

Procedure:

1. Sample (x1, y1), (x3, y3), (x5, y5) from D0, train h1, and update the weights to obtain D1.

2. Sample (x1, y1), (x3, y3) from D1, train h2, and update the weights to obtain D2.

3. Sample from D2, and so on.

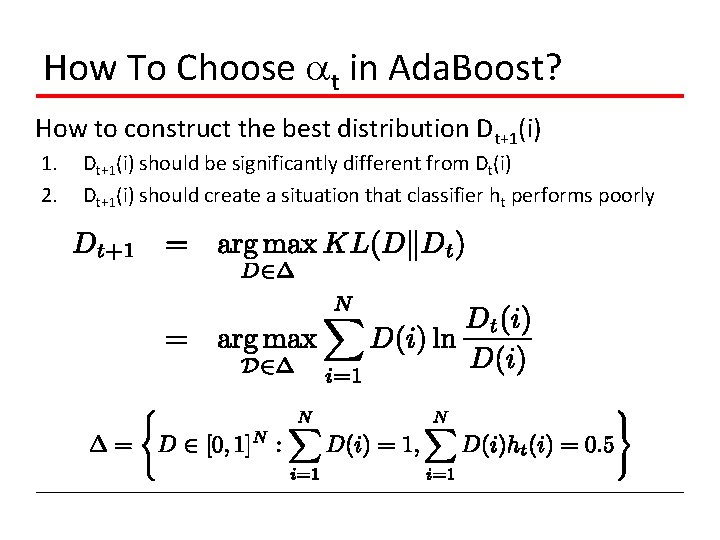
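The weights in this example can be reproduced with a short sketch. It assumes an update in which the weight of each misclassified example is multiplied by e^{α_t} = 2, correctly classified examples are left unchanged, and the result is renormalised. It also assumes that h1 misclassifies x1 and x3 and that h2 misclassifies x3, which is inferred from the weights rather than stated on the slide. The helper `reweight` is hypothetical.

```python
from fractions import Fraction

def reweight(weights, mistakes, factor=2):
    """Multiply the weights at the `mistakes` indices by `factor`, then renormalise."""
    scaled = [w * factor if i in mistakes else w for i, w in enumerate(weights)]
    total = sum(scaled)
    return [w / total for w in scaled]

# Indices 0..4 stand for the examples x1..x5.
d0 = [Fraction(1, 5)] * 5
d1 = reweight(d0, mistakes={0, 2})   # assumed: h1 is wrong on x1 and x3
d2 = reweight(d1, mistakes={2})      # assumed: h2 is wrong on x3

print([str(w) for w in d1])
print([str(w) for w in d2])
```

Exact fractions are used so the output can be compared directly with the values on the slide.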

How To Choose α_t in AdaBoost?

How to construct the best distribution D_{t+1}(i):

1. D_{t+1}(i) should be significantly different from D_t(i)

2. D_{t+1}(i) should create a situation in which classifier h_t performs poorly

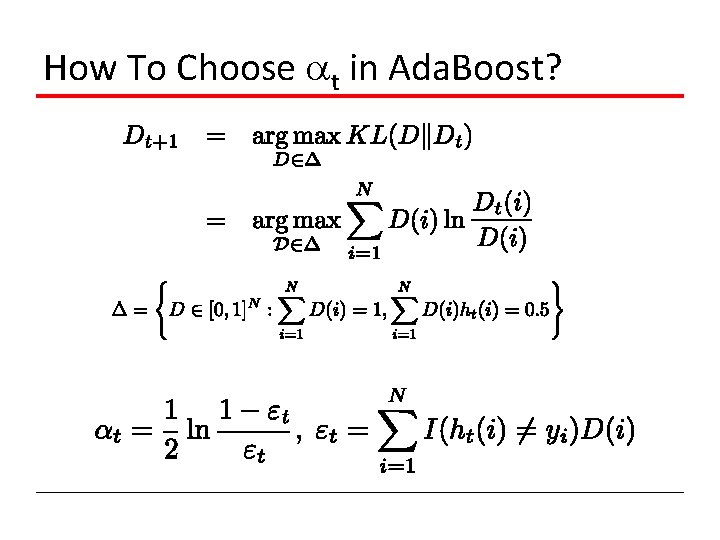
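A small numerical check of the second criterion, under assumptions not taken from the slide image: labels in {+1, -1}, the step size α_t = ½ ln((1 − ε_t)/ε_t), and the multiplicative update e^{−α_t} for correct examples and e^{+α_t} for mistakes. With these choices the weighted error of h_t under the new distribution is exactly 1/2, so h_t is no better than random guessing on D_{t+1}. The function `next_distribution` and the toy numbers are hypothetical.

```python
import math

def next_distribution(weights, correct):
    """Return (alpha, new_weights) for one reweighting step.

    `correct[i]` is True when the current classifier gets example i right.
    """
    eps = sum(w for w, ok in zip(weights, correct) if not ok)   # weighted error
    alpha = 0.5 * math.log((1 - eps) / eps)
    scaled = [w * math.exp(-alpha if ok else alpha)
              for w, ok in zip(weights, correct)]
    total = sum(scaled)
    return alpha, [w / total for w in scaled]

# Ten examples with uniform weights; the classifier errs on the last three.
d_t = [0.1] * 10
correct = [True] * 7 + [False] * 3

alpha, d_next = next_distribution(d_t, correct)
old_error = sum(w for w, ok in zip(d_t, correct) if not ok)
new_error = sum(w for w, ok in zip(d_next, correct) if not ok)
print(f"alpha={alpha:.4f}  error under D_t={old_error:.2f}  "
      f"error under D_t+1={new_error:.2f}")
```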

How To Choose α_t in AdaBoost?

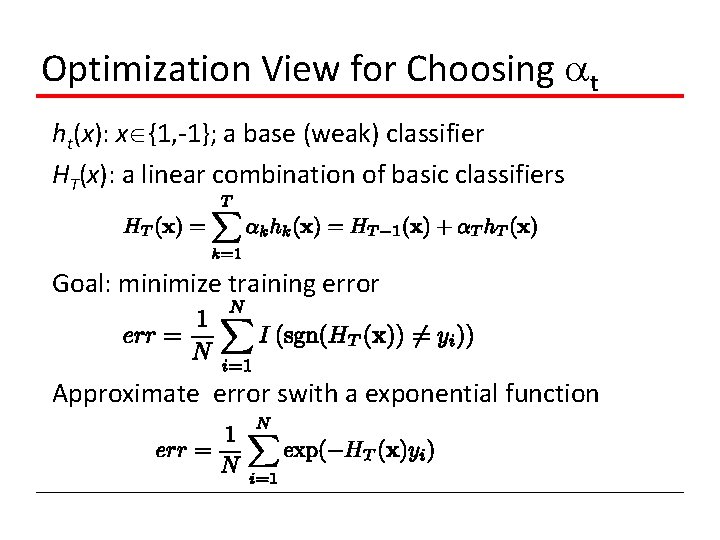

Optimization View for Choosing α_t

• h_t(x): x → {1, -1}; a base (weak) classifier

• H_T(x): a linear combination of base classifiers

• Goal: minimize training error

• Approximate the error with an exponential function

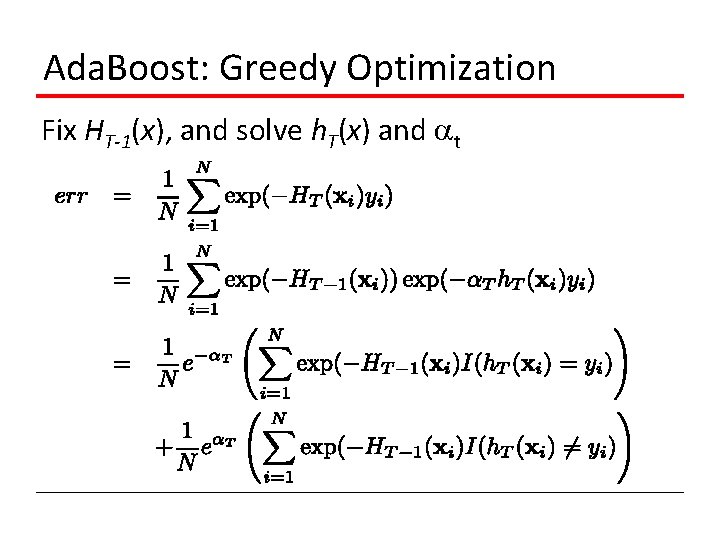
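One standard way to write the approximation described on this slide, assuming labels y_i ∈ {1, -1} and N training examples (the symbol N and the indicator notation are assumptions, not taken from the slide image):

```latex
\begin{aligned}
H_T(x) &= \sum_{t=1}^{T} \alpha_t h_t(x) \\
\mathrm{err}(H_T) &= \frac{1}{N} \sum_{i=1}^{N} \mathbf{1}\left[ y_i H_T(x_i) \le 0 \right]
 \;\le\; \frac{1}{N} \sum_{i=1}^{N} \exp\left( -y_i H_T(x_i) \right)
\end{aligned}
```

The inequality holds term by term because 1[z ≤ 0] ≤ e^{-z} for every real z, so driving the exponential sum down also drives the training error down.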

AdaBoost: Greedy Optimization

Fix H_{T-1}(x), and solve for h_T(x) and α_T

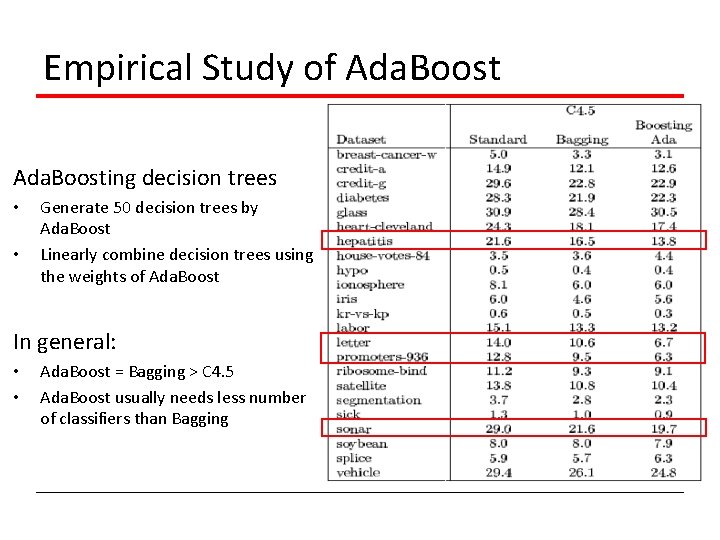
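A sketch of the greedy step under the exponential loss, assuming h_T(x) ∈ {1, -1} and writing w_i for the weight that the fixed part H_{T-1} places on example i (w_i and ε_T are notation introduced here; the scaling of α_T on the slide image may follow a different convention):

```latex
\begin{aligned}
L(\alpha_T, h_T) &= \sum_{i=1}^{N} \exp\left( -y_i \left[ H_{T-1}(x_i) + \alpha_T h_T(x_i) \right] \right)
 = \sum_{i=1}^{N} w_i \exp\left( -\alpha_T y_i h_T(x_i) \right),
 \qquad w_i = \exp\left( -y_i H_{T-1}(x_i) \right) \\
&= e^{-\alpha_T} \sum_{i:\, h_T(x_i) = y_i} w_i \;+\; e^{\alpha_T} \sum_{i:\, h_T(x_i) \ne y_i} w_i \\
&= \left( \sum_{i=1}^{N} w_i \right) \left[ (1 - \epsilon_T)\, e^{-\alpha_T} + \epsilon_T\, e^{\alpha_T} \right],
 \qquad \epsilon_T = \frac{\sum_{i:\, h_T(x_i) \ne y_i} w_i}{\sum_{i=1}^{N} w_i} \\
\frac{\partial L}{\partial \alpha_T} = 0
 \;&\Longrightarrow\; \alpha_T = \frac{1}{2} \ln \frac{1 - \epsilon_T}{\epsilon_T}
\end{aligned}
```

For any fixed α_T > 0 the loss increases with ε_T, so the best h_T is the weak classifier with the smallest weighted error under the normalised weights w_i.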

Empirical Study of AdaBoost

AdaBoosting decision trees:

• Generate 50 decision trees by AdaBoost

• Linearly combine decision trees using the weights of AdaBoost

In general:

• AdaBoost = Bagging > C4.5

• AdaBoost usually needs fewer classifiers than Bagging

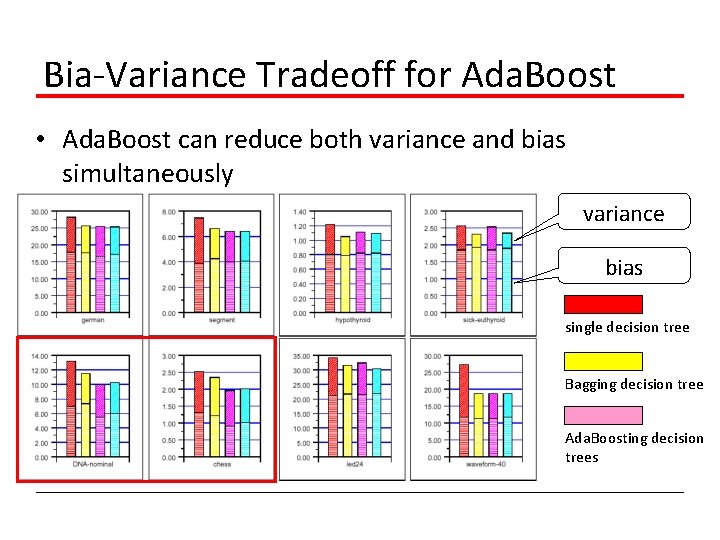

Bias-Variance Tradeoff for AdaBoost

• AdaBoost can reduce both variance and bias simultaneously

[Figure: bias and variance of a single decision tree, bagged decision trees, and AdaBoosted decision trees.]
