Self-Paced Learning for Latent Variable Models

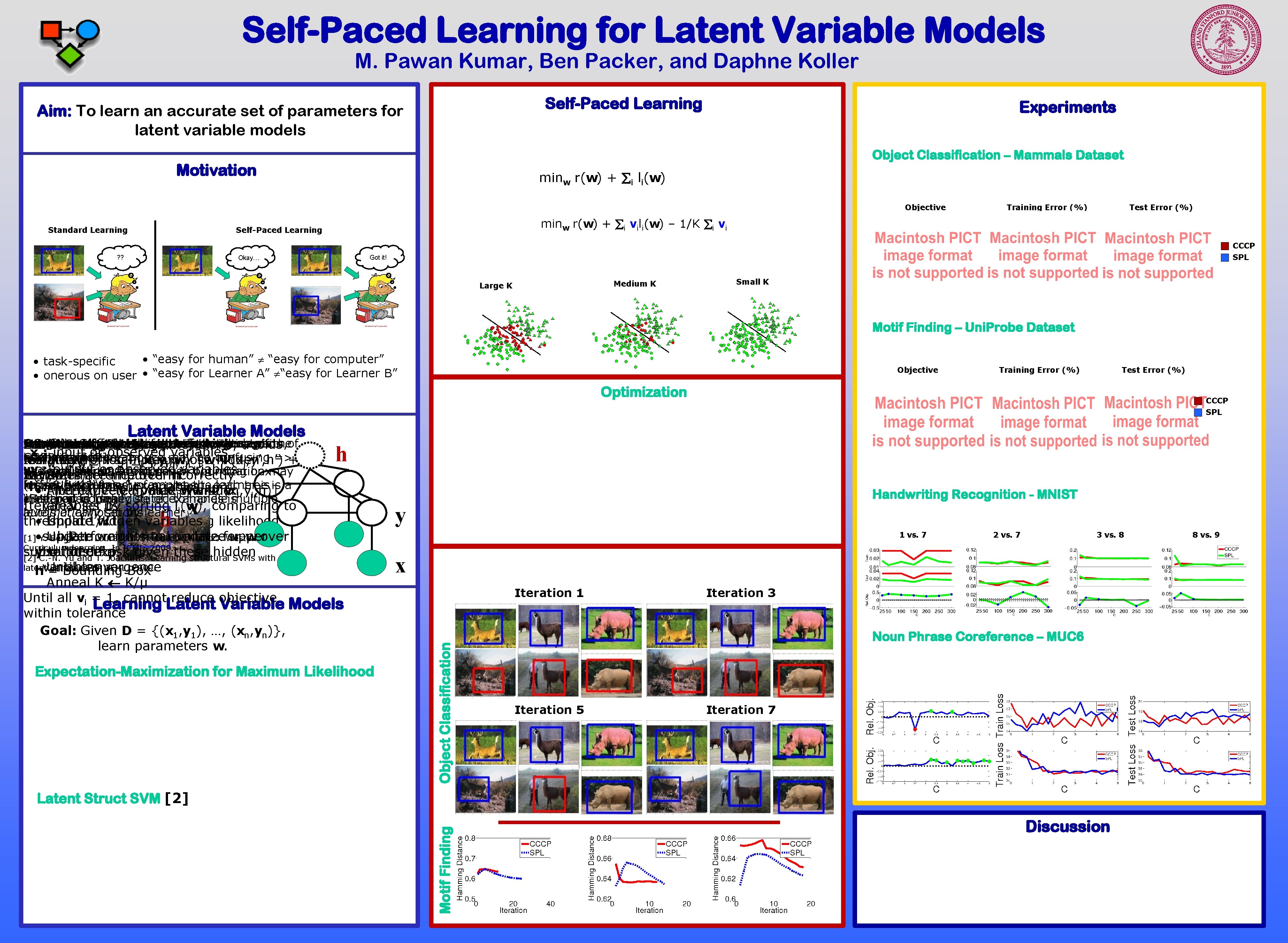

M. Pawan Kumar, Ben Packer, and Daphne Koller

Aim: To learn an accurate set of parameters for latent variable models

Latent Variable Models
• x: input or observed variables
• y: output or observed variables
• h: hidden/latent variables
• Example: x = image, y = "Deer", h = bounding box

Learning Latent Variable Models
Goal: Given D = {(x_1, y_1), …, (x_n, y_n)}, learn parameters w.

Expectation-Maximization for Maximum Likelihood
• Maximize log likelihood: $\max_w \sum_i \log P(x_i, y_i; w)$
• Iterate:
  • Find expected value of hidden variables using current w
  • Update w to maximize log likelihood subject to this expectation

Latent Struct SVM [2]
• Minimize upper bound on risk:
$$\min_w \|w\|^2 + C \sum_i \max_{y', h'} \left[ w \cdot \Psi(x_i, y', h') + \Delta(y_i, y', h') \right] - C \sum_i \max_h \left[ w \cdot \Psi(x_i, y_i, h) \right]$$
• Iterate (CCCP):
  • Impute hidden variables
  • Update weights to minimize upper bound on risk given these hidden variables

Motivation
• Learning from all samples at once may be confusing => bad local minima
• Hidden variables are imputed incorrectly

Intuitions
• Easier subsets in early iterations avoid learning from samples whose hidden variables are imputed incorrectly
• Compare Bengio et al. [1]: human, user-specified ordering of examples
  • "easy for human" ≠ "easy for computer"
  • task-specific
  • onerous on user
• Self-Paced Learning:
  • "easy for Learner A" ≠ "easy for Learner B"
  • "self-paced" schedule of examples set automatically by learner

Self-Paced Learning
• Restrict learning to a model-dependent "easy" set of samples
• Introduce indicator v_i of "easiness" for each sample i
• General form of objective:
  Standard learning: $\min_w r(w) + \sum_i l_i(w)$
  Self-paced learning: $\min_{w,\, v \in \{0,1\}^n} r(w) + \sum_i v_i l_i(w) - \frac{1}{K} \sum_i v_i$
• K determines the threshold (1/K) for a sample being easy, and is annealed over successive iterations until all samples are used
[Figure: easy sets for Small K, Medium K, Large K]

Optimization
• Initialize K to be large
• Iterate:
  • Run inference over h
  • Alternately update w and v until convergence:
    • v set by sorting l_i(w) and comparing to threshold 1/K
    • Perform normal update for w over the selected subset of data
  • Anneal K ← K/μ
• Until all v_i = 1 and the objective cannot be reduced within tolerance

Experiments

Object Classification – Mammals Dataset
• x is image, y is object class, h is bounding box
• Ψ(x, y, h): HOG features in bounding box (offset by class)
[Figure: Training Error (%) and Test Error (%), CCCP vs. SPL; Iterations 1, 3, 5, 7]

Motif Finding – UniPROBE Dataset
• x is DNA sequence, y is binding affinity, h is motif position
[Figure: Objective, Training Error (%) and Test Error (%), CCCP vs. SPL]

Handwriting Recognition – MNIST
• x is raw image, y is digit, h is image rotation; linear kernel
[Figure: 1 vs. 7, 2 vs. 7, 3 vs. 8, 8 vs. 9]

Noun Phrase Coreference – MUC 6
• x consists of pairwise features between pairs of nouns
• y is a clustering of nouns
• h specifies a forest over nouns such that each tree is a cluster of nouns

Discussion
• Self-paced strategy outperforms state of the art [2]
• Global solvers for biconvex optimization may improve accuracy
• Method is ideally suited to handle multiple levels of annotations

References
[1] Y. Bengio, J. Louradour, R. Collobert, and J. Weston. Curriculum learning. In ICML, 2009.
[2] C.-N. Yu and T. Joachims. Learning structural SVMs with latent variables. In ICML, 2009.
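The alternating optimization of the self-paced objective can be sketched in a few lines of Python. This is a minimal toy under stated assumptions, not the authors' code: the model (each sample offers several candidate inputs, the latent h_i picks one, and y_i is predicted as w times that candidate), the squared loss, the regularizer r(w) = λw², and the closed-form one-dimensional ridge step used as the "normal update for w" are all hypothetical stand-ins, whereas the poster builds on the latent structural SVM [2]. The v-step needs no solver: with w and h fixed, the objective splits into per-sample terms v_i(l_i(w) − 1/K), so v_i = 1 exactly when l_i(w) < 1/K, which matches sorting the losses and cutting at 1/K. The function names, the data, and the parameters `w0`, `K0`, `mu`, `lam`, `tol` and `max_inner` are illustrative choices.

```python
"""Toy sketch of self-paced learning (SPL) with imputed latent variables.

Hypothetical model (not from the poster): sample i has candidate inputs xs[i];
the latent h_i picks one candidate, and y_i is predicted as w * xs[i][h_i].
Loss l_i(w) is squared error; the regulariser is r(w) = lam * w**2.
"""


def select_easy(losses, K):
    # v-step: v_i = 1 exactly when l_i < 1/K (minimises sum_i v_i * (l_i - 1/K)).
    threshold = 1.0 / K
    return [1 if loss < threshold else 0 for loss in losses]


def impute(x, y, w):
    # Inference over h: choose the candidate with the smallest squared error.
    return min(range(len(x)), key=lambda j: (y - w * x[j]) ** 2)


def spl_objective(w, a, ys, v, K, lam):
    # r(w) + sum_i v_i * l_i(w) - (1/K) * sum_i v_i, with latent choices fixed in a.
    data = sum(vi * (y - w * ai) ** 2 for ai, y, vi in zip(a, ys, v))
    return lam * w * w + data - sum(v) / K


def spl_latent_regression(xs, ys, w0, K0, mu=2.0, lam=0.1, tol=1e-9, max_inner=100):
    w, K = w0, K0  # K0 plays the role of a "large" K (small threshold 1/K)
    rounds = []  # (K, indices judged easy) at the end of each annealing round
    while True:
        prev = float("inf")
        for _ in range(max_inner):
            # 1) Run inference over h with the current w.
            a = [x[impute(x, y, w)] for x, y in zip(xs, ys)]
            losses = [(y - w * ai) ** 2 for ai, y in zip(a, ys)]
            # 2) Update v by comparing each loss to the threshold 1/K.
            v = select_easy(losses, K)
            # 3) "Normal" update of w over the easy subset: closed-form 1-D ridge.
            num = sum(ai * y for ai, y, vi in zip(a, ys, v) if vi)
            den = lam + sum(ai * ai for ai, vi in zip(a, v) if vi)
            w = num / den
            obj = spl_objective(w, a, ys, v, K, lam)
            if prev - obj < tol:  # objective decreased by less than tol
                break
            prev = obj
        rounds.append((K, [i for i, vi in enumerate(v) if vi]))
        if all(v):  # every sample is now treated as easy
            return w, rounds
        K /= mu  # anneal K <- K / mu, raising the threshold 1/K


xs = [[1.0, 4.0], [2.0, -1.0], [3.0, 0.5], [1.0, 1.0]]
ys = [2.0, 4.0, 6.0, 9.0]  # sample 3 fits poorly for any candidate
w, rounds = spl_latent_regression(xs, ys, w0=1.5, K0=1.0)
for K, easy in rounds:
    print(f"1/K = {1 / K:g}: easy samples {easy}")
print(f"final w = {w:.4f}")
```

On this toy data the first six rounds (1/K from 1 up to 32) keep samples 0, 1 and 2, while sample 3, whose target 9.0 no candidate explains under the current w (loss about 49), stays out until the threshold reaches 64 in the seventh round. Because the easy set is recomputed after every inference step, a sample in a latent model can also drop out of it as w changes.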
