
Rhythmic Transcription of MIDI Signals

Carmine Casciato, MUMT 611, Thursday, February 10, 2005

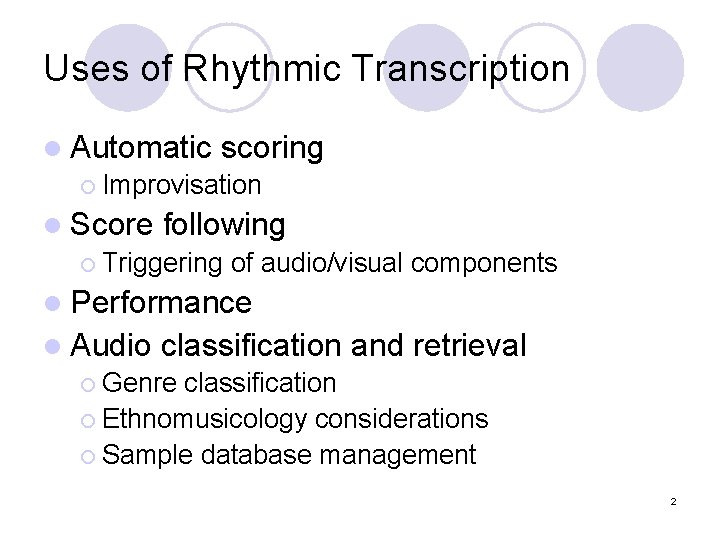

Uses of Rhythmic Transcription

- Automatic scoring
  - Improvisation
- Score following
  - Triggering of audio/visual components
- Performance
- Audio classification and retrieval
  - Genre classification
  - Ethnomusicology considerations
  - Sample database management

MIDI Signals

- Unidirectional message stream at 3.125 kHz
- System Real Time Messages provide Timing Tick message
- A simplification of acoustic signals
  - No noise, masking effects
- Easily retrieve note onsets, offsets, velocities, pitches
- However, no knowledge of acoustic properties of sound
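As a rough illustration of how onsets, offsets, velocities and pitches can be read off a MIDI stream, the sketch below pairs note-on and note-off events into notes. The event tuple layout `(time_seconds, kind, pitch, velocity)`, the `Note` type and the `extract_notes` helper are assumptions made for this example; they are not taken from the slides or from any particular MIDI library.

```python
from typing import NamedTuple


class Note(NamedTuple):
    pitch: int
    velocity: int
    onset: float   # seconds
    offset: float  # seconds


def extract_notes(events):
    """Pair note-on and note-off events into notes.

    `events` is a hypothetical, already-parsed list of
    (time_seconds, kind, pitch, velocity) tuples, sorted by time.
    """
    active = {}  # pitch -> (onset, velocity) for notes currently sounding
    notes = []
    for time, kind, pitch, velocity in events:
        # A note-on with velocity 0 is treated as a note-off, as MIDI allows.
        if kind == "note_on" and velocity > 0:
            active[pitch] = (time, velocity)
        elif pitch in active:
            onset, onset_velocity = active.pop(pitch)
            notes.append(Note(pitch, onset_velocity, onset, time))
    return sorted(notes, key=lambda note: note.onset)


events = [
    (0.00, "note_on", 60, 90),
    (0.48, "note_off", 60, 0),
    (0.50, "note_on", 64, 70),
    (1.02, "note_on", 64, 0),  # velocity-0 note-on acting as a note-off
]
notes = extract_notes(events)
for note in notes:
    print(note)
```

Only the onset times are needed for the beat-tracking approaches on the later slides; the other fields are kept because the slide lists them as equally easy to retrieve.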

Difficulties in Rhythmic Transcription

- Expressive performance vs mechanical performance
- Inexact performance of notes
  - Syncopations
  - Silences
  - Grace notes
- Robustness of beat tracker
  - Can the tracker recover from incorrect beat induction?
- Real time implementation
- (Dixon 2001)

Human Limits of Rhythmic Perception

- Two note onsets are deemed synchronous when played within 40 ms of each other, 70 ms for more than two notes
- Piano and orchestral performances exhibit note onset asynchronicity of 30-50 ms
- Note onset differences of 50 ms to 2 s give rhythmic information
- (Dixon 2001)
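One way to apply the synchrony threshold is to merge near-simultaneous onsets into single rhythmic events before computing any intervals. The sketch below is a simplification: it uses a single 40 ms window measured from the first onset of each group, and does not model the separate 70 ms limit for more than two notes. The function name `group_onsets` and the grouping rule are assumptions for illustration.

```python
def group_onsets(onsets, window=0.040):
    """Group onset times (seconds) into perceptually synchronous events.

    An onset joins the current group if it falls within `window` seconds
    of the first onset in that group; otherwise it starts a new group.
    """
    groups = []
    for time in sorted(onsets):
        if groups and time - groups[-1][0] <= window:
            groups[-1].append(time)
        else:
            groups.append([time])
    return groups


onsets = [0.000, 0.012, 0.031, 0.500, 0.520, 1.010]
groups = group_onsets(onsets)
for group in groups:
    print(group)
```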

Evaluation Criteria for Beat Trackers

- Informally: click track of reported beats added to signal
- Visually marking the reported beats
- Comparing reported vs known, correct beats
- (Dixon 2001)
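The third criterion, comparing reported beats against known correct beats, can be sketched as a tolerance-based matching. The 70 ms tolerance, the one-to-one matching rule and the name `match_beats` are assumptions chosen for this example, not a metric specified on the slide.

```python
def match_beats(reported, reference, tolerance=0.070):
    """Match reported beats to reference beats within `tolerance` seconds.

    Each reference beat can be matched at most once.
    Returns (hits, false_positives, misses).
    """
    unmatched = sorted(reference)
    hits = 0
    for beat in sorted(reported):
        if not unmatched:
            break
        # Nearest reference beat that has not been matched yet.
        nearest = min(unmatched, key=lambda ref: abs(ref - beat))
        if abs(nearest - beat) <= tolerance:
            hits += 1
            unmatched.remove(nearest)
    false_positives = len(reported) - hits
    misses = len(reference) - hits
    return hits, false_positives, misses


reference = [0.5, 1.0, 1.5, 2.0]
reported = [0.52, 0.98, 1.75, 2.03]
result = match_beats(reported, reference)
print(result)
```

In this toy input the beat reported at 1.75 s is too far from any reference beat, so it counts as one false positive and leaves one reference beat missed.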

Definitions

- Beat - “perceived pulses which are approximately equally spaced and define the rate at which notes in a piece are played”
  - Metrical, score, performance level
- Tempo - beats per minute
- Inter-onset intervals (IOI) - time intervals between note onsets
- (Dixon 2001)
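The two numeric definitions can be made concrete with a short sketch: IOIs are the differences between successive onset times, and tempo in beats per minute is 60 divided by the beat period in seconds. The function names are illustrative, and using the mean IOI between beats as the beat period is an assumption of this example.

```python
def inter_onset_intervals(onsets):
    """Return the time intervals (seconds) between successive onsets."""
    ordered = sorted(onsets)
    return [later - earlier for earlier, later in zip(ordered, ordered[1:])]


def tempo_bpm(beat_times):
    """Estimate tempo in beats per minute from the mean beat period."""
    periods = inter_onset_intervals(beat_times)
    if not periods:
        raise ValueError("need at least two beat times")
    mean_period = sum(periods) / len(periods)
    return 60.0 / mean_period


beats = [0.0, 0.5, 1.0, 1.5, 2.0]
iois = inter_onset_intervals(beats)
tempo = tempo_bpm(beats)
print(iois, tempo)
```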

Approaches - Probabilistic Frameworks

- Cemgil et al. (2000) - Bayesian framework, using a tempogram (wavelet) and a 10th-order Kalman filter to estimate tempo, which is a hidden state variable
- Takeda et al. (2002) - Hidden Markov models for fluctuating note lengths and note sequences, estimating both rhythms and tempo
- Raphael (2002) - tempo and rhythm

Approaches - Oscillators

- The oscillator's period and phase adjust themselves to synchronize with the IOI input
- Dannenberg and Allen (1990) - weighted IOIs and credibility evaluation based on past input
- Meudic (2002) - real time implementation of Dixon
  - Induce several beats and attempt to propagate them through the signal (agents), then choose the best
- Pardo (2004) - oscillator, compared to Cemgil using same corpus

Pardo 2004 - Oscillatory Design

- Is a Kalman filter (Cemgil) or oscillator better for online tempo tracking?
- Performance as time series of weights, W, over T time steps
- Weight of time step with no note onsets = 0, increased proportional to number of note onsets
- 100 ms is minimum IOI allowed, minimum beat period
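The weight series W can be sketched as a simple histogram of onsets per time step. The slide does not give the step size, so the 50 ms step here is an assumption, as are the function name and the use of integer millisecond timestamps (chosen to avoid floating-point binning issues). The 100 ms minimum IOI is not modelled in this sketch.

```python
def weight_series(onsets_ms, step_ms=50):
    """Build the weight series W: one weight per time step.

    A step with no onsets has weight 0; each onset falling in a step
    increases that step's weight by 1.
    """
    if not onsets_ms:
        return []
    n_steps = max(onsets_ms) // step_ms + 1
    weights = [0] * n_steps
    for time in onsets_ms:
        weights[time // step_ms] += 1
    return weights


onsets_ms = [0, 10, 500, 1000, 1020, 1030]
W = weight_series(onsets_ms)
print(len(W), W)
```

Here the two-note chord at the start and the three-note chord at 1 s produce steps with weights 2 and 3, so denser events count for more when the tracker looks for beats.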

Pardo 2004

- Uses weighted average of last 20 beat periods, with one parameter varying degrees of smoothing
- A correction parameter varies how far the period and phase of the next predicted beat is changed according to known information
- A window size parameter affects how many periods may affect the current prediction
- Chose 5000 random values of these three parameters, ran each triplet on 99 performances of Cemgil corpora
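To give a feel for how a smoothing parameter and a correction parameter interact, here is a minimal single-oscillator sketch. It is not Pardo's published algorithm: the update rules, the exponential weighting of past periods, the onset-matching `window`, and all parameter names and default values are assumptions made for illustration. Only the ideas of averaging up to the last 20 beat periods, correcting the prediction toward observed onsets, and a 100 ms minimum beat period come from the slides.

```python
def smoothed_period(periods, smoothing):
    """Weighted average of past periods, newest weighted most heavily."""
    newest_first = periods[::-1]
    weights = [smoothing ** age for age in range(len(newest_first))]
    total = sum(w * p for w, p in zip(weights, newest_first))
    return total / sum(weights)


def track_beats(onsets, first_beat, period, correction=0.5,
                smoothing=0.7, history=20, window=0.1, min_period=0.1):
    """Predict beat times (seconds) over a list of onset times.

    Assumes 0 <= correction < 1 and window <= min_period so that
    predicted beats always move forward in time.
    """
    if not 0 <= correction < 1 or window > min_period:
        raise ValueError("need 0 <= correction < 1 and window <= min_period")
    onsets = sorted(onsets)
    periods = [period]   # observed beat periods, oldest first
    beats = []
    previous = None      # previous beat position after correction
    beat = first_beat
    while beat <= onsets[-1] + window:
        beats.append(beat)
        # Pull the beat toward the nearest onset, if one is close enough.
        nearest = min(onsets, key=lambda t: abs(t - beat))
        error = nearest - beat if abs(nearest - beat) <= window else 0.0
        adjusted = beat + correction * error
        if previous is not None:
            periods = (periods + [adjusted - previous])[-history:]
        previous = adjusted
        period = max(min_period, smoothed_period(periods, smoothing))
        beat = adjusted + period
    return beats, period


# Steady onsets every 0.5 s; the oscillator starts with a period that is too long.
onsets = [0.5 * i for i in range(20)]
beats, final_period = track_beats(onsets, first_beat=0.0, period=0.54)
print(len(beats), round(final_period, 3))
```

With these settings the period estimate settles close to the true 0.5 s within a few beats. A random search like the one on the slide would repeatedly draw values for parameters such as `correction`, `smoothing` and `history` and score each combination on the corpus.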

Cemgil MIDI/Piano Corpora

- Four pro jazz, four pro classical, three amateur piano players
- Yesterday and Michelle, fast, slow and normal, captured on a Yamaha Disklavier
- Available at www.nici.kun.nl/mmm/

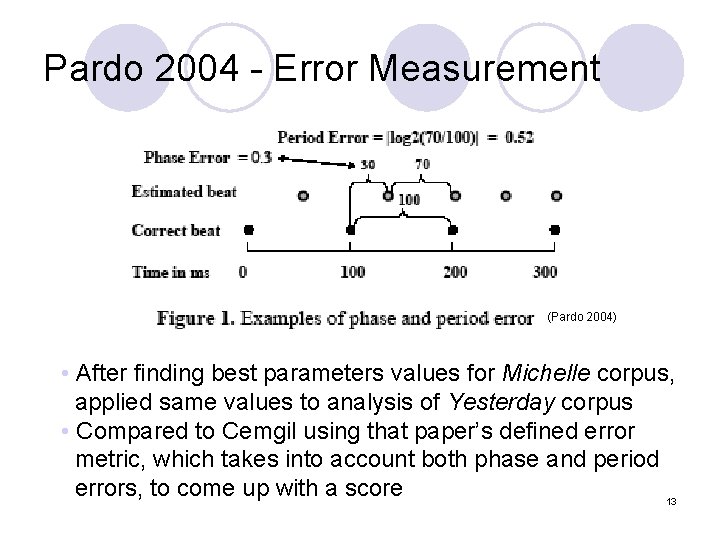

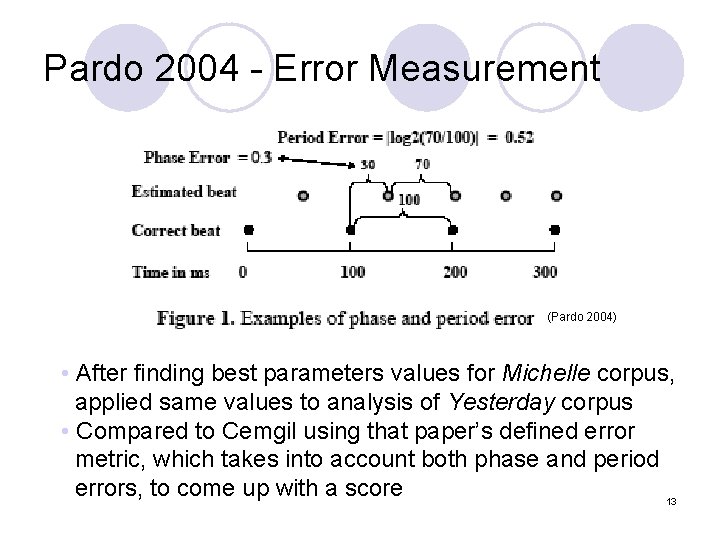

Pardo 2004 - Error Measurement

- After finding the best parameter values for the Michelle corpus, applied the same values to analysis of the Yesterday corpus
- Compared to Cemgil using that paper’s defined error metric, which takes into account both phase and period errors, to come up with a score
- (Pardo 2004)

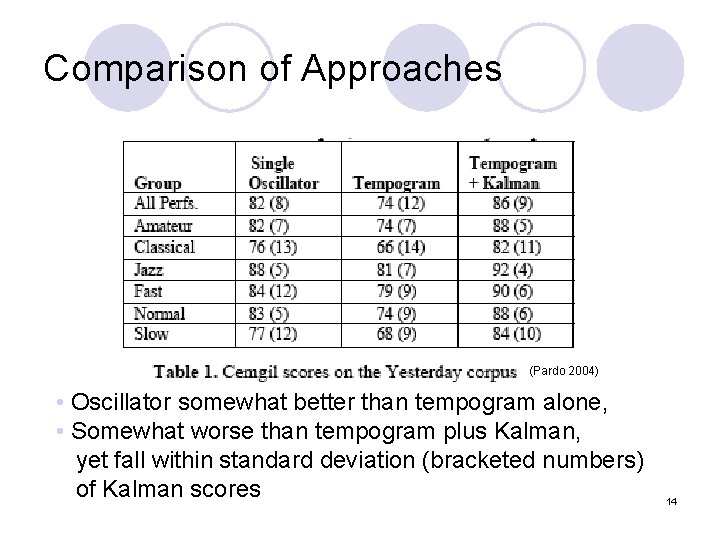

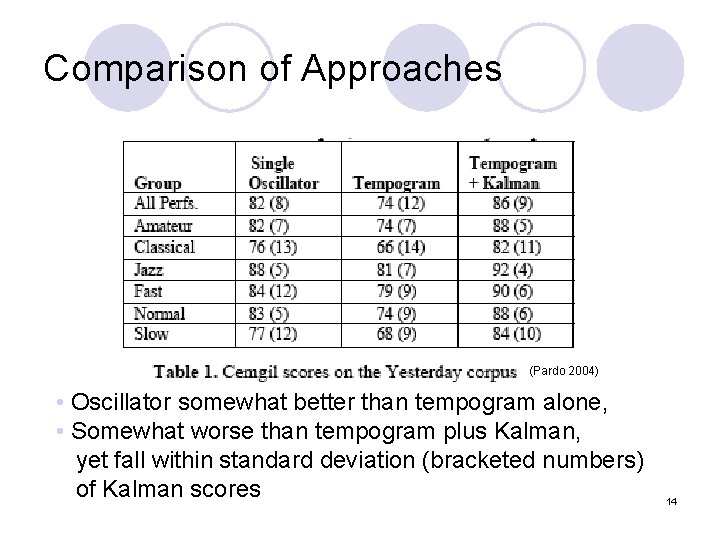

Comparison of Approaches

- Oscillator somewhat better than tempogram alone
- Somewhat worse than tempogram plus Kalman, yet its scores fall within the standard deviation (bracketed numbers) of the Kalman scores
- (Pardo 2004)

Other Considerations

- Stylistic information
  - Training of tracker
- Musical importance of note
  - Duration
  - Pitch
  - Velocity

Bibliography

- Allen, P., and R. Dannenberg. 1990. Tracking musical beats in real time. In Proceedings of the International Computer Music Conference 1990: 140–3.
- Dixon, S. 2001. Automatic extraction of tempo and beat from expressive performances. Journal of New Music Research 30 (1): 39–58.
- Meudic, B. 2002. A causal algorithm for beat-tracking. In Proceedings of Conference on Understanding and Creating Music.
- Pardo, B. 2004. Tempo tracking with a single oscillator. In Proceedings of the International Conference on Music Information Retrieval 2004.
- Raphael, C. 2002. A hybrid graphical model for rhythmic parsing. Artificial Intelligence 137: 217–38.
- Takeda, H., T. Nishimoto, and S. Sagayama. 2002. Automatic rhythm transcription from multiphonic MIDI signals. In Proceedings of the International Conference on Music Information Retrieval 2003.
