Privacy-Preserving Data Sharing Michael Siegenthaler Ken Birman Cornell University

Introduction
• Today, personal data is typically stored electronically
• But systems at distinct organizations have no way to communicate with each other, and each organization identifies people by its own ID

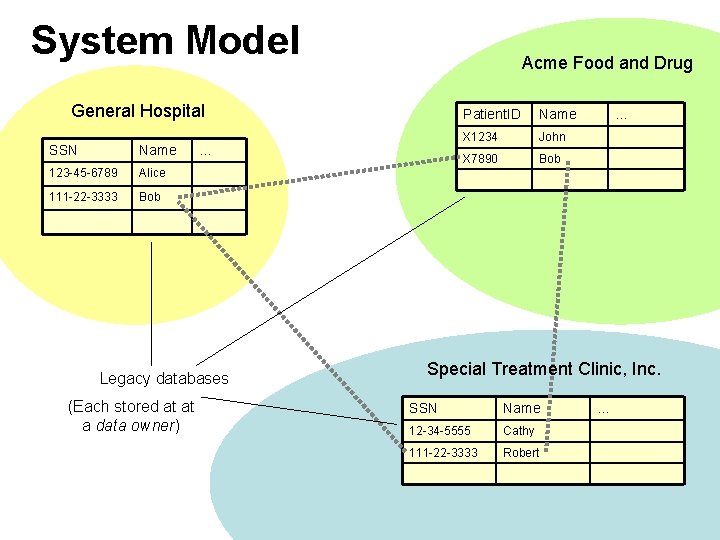

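System Model
Legacy databases (each stored at a data owner):

Acme Food and Drug

| SSN | Name |
|---|---|
| 123-45-6789 | Alice |
| 111-22-3333 | Bob |
| … | … |

General Hospital

| Patient ID | Name |
|---|---|
| X1234 | John |
| X7890 | Bob |
| … | … |

Special Treatment Clinic, Inc.

| SSN | Name |
|---|---|
| 12-34-5555 | Cathy |
| 111-22-3333 | Robert |
| … | … |

• The same person can appear under different names and identifiers: SSN 111-22-3333 is "Bob" in one database and "Robert" in another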

Example Query
• Drug interaction check at a pharmacy
  – A pharmacist is dispensing a drug but doesn't know what else the patient may be taking
  – The patient's medical record is stored at the primary care provider and at various specialists
• Is it safe for the patient to take this drug?

Guarantees
• Data privacy
  – e.g., the pharmacist receives a yes/no answer, not the underlying data
• Query privacy
  – e.g., the hospital does not learn which drug is currently being dispensed
• Anonymous communication
  – e.g., the hospital and the pharmacy do not learn each other's identities

Anonymous Communication
• Onion skin routing
  – Providers $P_i$
  – Encryption function $E$
  – Public keys $K_{P_i}$
• Example:
  – A reference to patient 34 at Provider 2, routed through Provider 1
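The layering can be sketched in Python as a minimal model under stated assumptions. `Sealed` is a hypothetical symbolic stand-in for $E_{K_{P_i}}(\cdot)$ that performs no cryptography (a real deployment would use actual public-key encryption), and `build_handle`, `peel`, and `deliver` are illustrative names, not the authors' API. In this sketch's own notation, the handle for the example above has the shape $E_{K_{P_1}}(P_2, E_{K_{P_2}}(34))$: Provider 1 can open only the outer layer, which tells it to forward an opaque inner layer to Provider 2.

```python
from dataclasses import dataclass
from typing import Any, List, Tuple


@dataclass(frozen=True)
class Sealed:
    """Symbolic stand-in for E_{K_P}(payload): only `recipient` may open it.
    Illustrative only; this performs no encryption."""
    recipient: str
    payload: Any


def build_handle(route: List[str], record_ref: Any) -> Sealed:
    """Wrap record_ref in onion layers for route [P1, ..., Pn]; Pn owns the record."""
    # Innermost layer: only the data owner can read the record reference.
    onion = Sealed(route[-1], record_ref)
    # Wrap outward so each hop learns only the next hop plus an opaque inner layer.
    for hop, next_hop in reversed(list(zip(route[:-1], route[1:]))):
        onion = Sealed(hop, (next_hop, onion))
    return onion


def peel(provider: str, onion: Sealed) -> Any:
    """Remove one layer; only the intended recipient may do so."""
    if onion.recipient != provider:
        raise PermissionError(f"{provider} cannot open a layer sealed for {onion.recipient}")
    return onion.payload


def deliver(onion: Sealed) -> Tuple[str, Any, List[str]]:
    """Forward hop by hop; return (owner, record_ref, hops visited)."""
    hops: List[str] = []
    while True:
        hops.append(onion.recipient)
        payload = peel(onion.recipient, onion)
        if isinstance(payload, tuple) and len(payload) == 2 and isinstance(payload[1], Sealed):
            _next_hop, onion = payload  # forward the still-sealed inner layer
        else:
            return onion.recipient, payload, hops


# Reference to patient 34 at Provider 2, routed through Provider 1.
handle = build_handle(["P1", "P2"], 34)
print(deliver(handle))  # ('P2', 34, ['P1', 'P2'])
```

Provider 1 sees only `("P2", <sealed layer>)`; the record reference itself stays sealed for Provider 2.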

Requirements
• “Locate” remote records
  – Translate a real-world identifier (name, SSN, DOB, …) into a data handle: an onion skin route that can be used to communicate with the providers that hold the data
• Execute the desired query
  – Use data handles to perform a privacy-preserving query

Global Search Mechanism
• Example: search for a user with SSN 343-56-7878
• Hierarchy of provider groups
  – Each group has a designated contact who tracks its membership
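A search over the group hierarchy might look like the following minimal sketch. It assumes each group's contact can summarize which identifiers its members hold (a plain set here; a Bloom filter is the compact alternative) and that a search descends only into groups whose summary matches. `Group`, `summary`, `search`, and the provider names are hypothetical; the SSNs are borrowed from the System Model slide purely as toy data.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Set


@dataclass
class Group:
    """A provider group whose designated contact tracks membership (hypothetical model)."""
    name: str
    providers: Dict[str, Set[str]] = field(default_factory=dict)  # provider -> SSNs it holds
    subgroups: List["Group"] = field(default_factory=list)

    def summary(self) -> Set[str]:
        """All identifiers held in this group; a real contact would cache or compress this."""
        ids: Set[str] = set()
        for held in self.providers.values():
            ids |= held
        for sub in self.subgroups:
            ids |= sub.summary()
        return ids


def search(group: Group, ssn: str) -> List[str]:
    """Descend only into groups whose summary contains ssn; return the matching providers."""
    if ssn not in group.summary():
        return []  # prune this entire subtree
    hits = [name for name, held in group.providers.items() if ssn in held]
    for sub in group.subgroups:
        hits.extend(search(sub, ssn))
    return hits


# Hypothetical two-group hierarchy.
root = Group("root", subgroups=[
    Group("group_a", {"Provider 1": {"123-45-6789", "111-22-3333"}}),
    Group("group_b", {"Provider 2": {"12-34-5555", "111-22-3333"}}),
])
print(search(root, "111-22-3333"))  # ['Provider 1', 'Provider 2']
print(search(root, "343-56-7878"))  # [] (no group in this toy hierarchy holds it)
```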

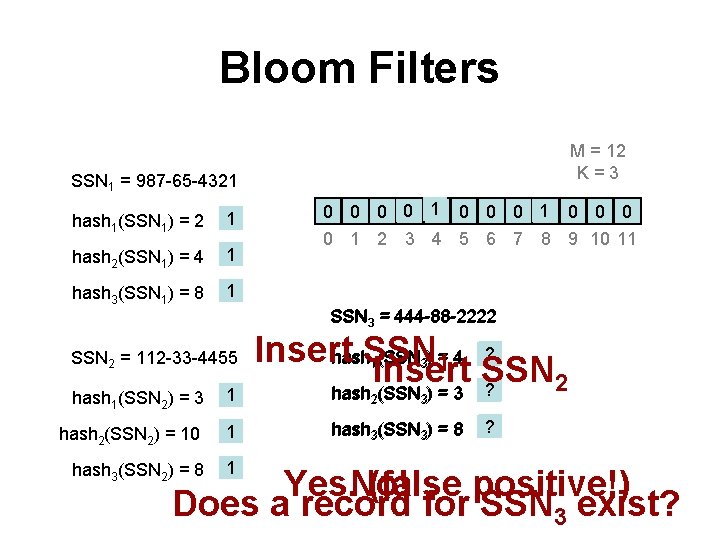

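Bloom Filters
• Parameters: $M = 12$ bits (positions 0 to 11), $K = 3$ hash functions
• Insert $\mathrm{SSN}_1$ = 987-65-4321: $\mathrm{hash}_1(\mathrm{SSN}_1) = 2$, $\mathrm{hash}_2(\mathrm{SSN}_1) = 4$, $\mathrm{hash}_3(\mathrm{SSN}_1) = 8$, so bits 2, 4 and 8 are set to 1
• Insert $\mathrm{SSN}_2$ = 112-33-4455: $\mathrm{hash}_1(\mathrm{SSN}_2) = 3$, $\mathrm{hash}_2(\mathrm{SSN}_2) = 10$, $\mathrm{hash}_3(\mathrm{SSN}_2) = 8$, so bits 3, 10 and 8 are set to 1
• Resulting filter (positions 0 to 11): 0 0 1 1 1 0 0 0 1 0 1 0
• Does a record for $\mathrm{SSN}_3$ = 444-88-2222 exist? $\mathrm{hash}_1(\mathrm{SSN}_3) = 4$, $\mathrm{hash}_2(\mathrm{SSN}_3) = 3$, $\mathrm{hash}_3(\mathrm{SSN}_3) = 8$; all three bits are set, so the filter answers "Yes." In fact, no! $\mathrm{SSN}_3$ was never inserted (false positive!)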
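The example above can be replayed with a small Bloom filter. This is a generic sketch, not the authors' implementation: the hash functions are pluggable, so the demo copies the slide's hash values from a lookup table (they are illustrative, not computed), while `salted_sha256` shows one assumed way to derive $K$ real hash functions from SHA-256.

```python
import hashlib
from typing import Callable, List

HashFn = Callable[[str], int]


class BloomFilter:
    """m-bit Bloom filter with k hash functions: answers "maybe present" or "definitely absent"."""

    def __init__(self, m: int, hashes: List[HashFn]) -> None:
        self.m = m
        self.hashes = hashes
        self.bits = [0] * m

    def add(self, key: str) -> None:
        for h in self.hashes:
            self.bits[h(key) % self.m] = 1

    def might_contain(self, key: str) -> bool:
        # True can be a false positive; False is always correct.
        return all(self.bits[h(key) % self.m] for h in self.hashes)


def salted_sha256(k: int) -> List[HashFn]:
    """k hash functions built by salting SHA-256 with the function's index (an assumption)."""
    def make(i: int) -> HashFn:
        def h(key: str) -> int:
            digest = hashlib.sha256(f"{i}:{key}".encode()).digest()
            return int.from_bytes(digest[:8], "big")
        return h
    return [make(i) for i in range(k)]


# Replay the slide: hash values are copied from the figure, not computed.
SLIDE_HASHES = {
    "987-65-4321": (2, 4, 8),   # SSN1
    "112-33-4455": (3, 10, 8),  # SSN2
    "444-88-2222": (4, 3, 8),   # SSN3 (never inserted)
}
slide = BloomFilter(12, [lambda key, j=j: SLIDE_HASHES[key][j] for j in range(3)])
slide.add("987-65-4321")
slide.add("112-33-4455")
print(slide.bits)                          # [0, 0, 1, 1, 1, 0, 0, 0, 1, 0, 1, 0]
print(slide.might_contain("444-88-2222"))  # True, a false positive
```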

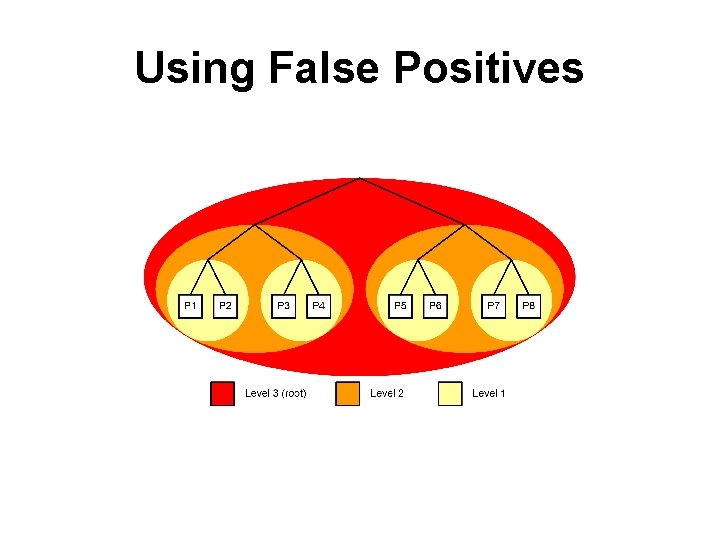

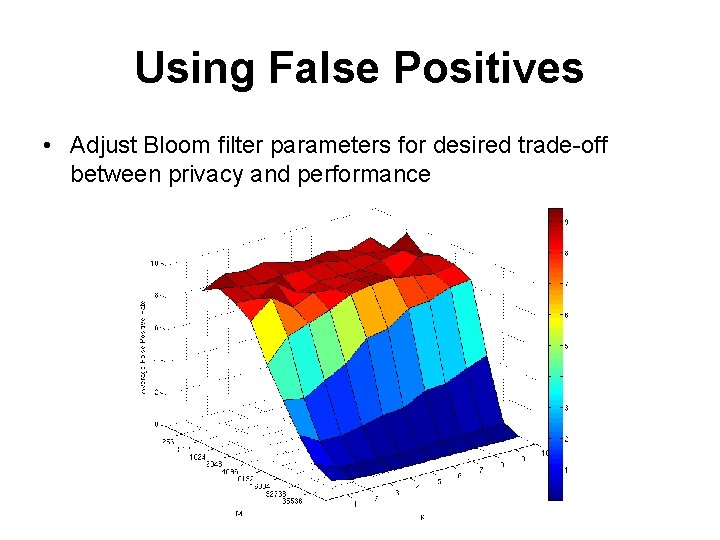

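Using False Positives
• Adjust the Bloom filter parameters to obtain the desired trade-off between privacy and performance
  – A higher false positive rate means a positive answer reveals less about whether a record really exists, but more searches reach providers that hold no matching record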
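To make the trade-off concrete, the standard textbook approximations for a Bloom filter with $m$ bits, $n$ keys and $k$ hash functions can be evaluated directly: $p \approx (1 - e^{-kn/m})^k$, the rate-minimizing $k = (m/n)\ln 2$, and $m = -n \ln p / (\ln 2)^2$ for a target rate $p$. This is a generic sizing sketch, not the parameter choice used in this work; the function names are hypothetical.

```python
import math


def false_positive_rate(m: int, n: int, k: int) -> float:
    """Textbook approximation for an m-bit filter holding n keys with k hash functions."""
    return (1.0 - math.exp(-k * n / m)) ** k


def optimal_k(m: int, n: int) -> int:
    """Hash-function count that minimizes the false positive rate, (m / n) ln 2."""
    return max(1, round(m / n * math.log(2)))


def bits_for_target(n: int, p: float) -> int:
    """Filter size for n keys at target false positive rate p, assuming optimal k."""
    return math.ceil(-n * math.log(p) / math.log(2) ** 2)


# The slide's filter after two insertions: m = 12, n = 2, k = 3.
print(round(false_positive_rate(12, 2, 3), 3))  # 0.061

# Raising the target rate (more deniability) shrinks the filter but sends more
# searches to providers that turn out to hold no matching record.
for p in (0.01, 0.1, 0.3):
    m = bits_for_target(1000, p)
    print(p, m, optimal_k(m, 1000))
```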

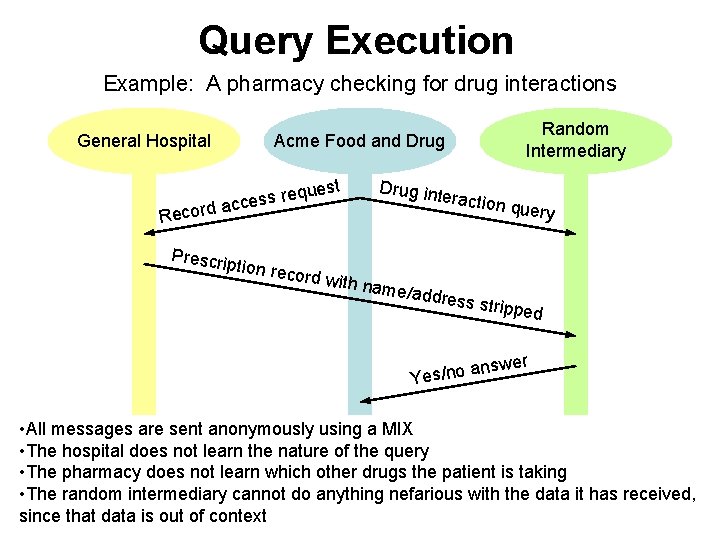

Query Execution Example: a pharmacy checking for drug interactions
Participants: Acme Food and Drug (the pharmacy), General Hospital (the data owner), and a random intermediary
• Messages:
  – Record access request: Acme Food and Drug → General Hospital
  – Prescription record, with name/address stripped: General Hospital → random intermediary
  – Drug interaction query: Acme Food and Drug → random intermediary
  – Yes/no answer: random intermediary → Acme Food and Drug
• All messages are sent anonymously using a MIX
• The hospital does not learn the nature of the query
• The pharmacy does not learn which other drugs the patient is taking
• The random intermediary cannot misuse the data it receives, since that data is out of context
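A single-process simulation can make the three parties' views explicit. This is a hedged sketch under assumptions: `send` merely records what each recipient receives (it stands in for anonymous delivery through a MIX), the record fields and the nonce `"n34"` are hypothetical, and `A____`/`B____` are the deck's redacted drug names. Only the `views` dictionary models what each party actually learns.

```python
from collections import defaultdict
from typing import Dict, List, Set, Tuple

# Hypothetical prescription records at General Hospital, identifying fields included.
HOSPITAL_DB = [
    {"name": "<name>", "address": "<address>", "nonce": "n34", "drug": "A____"},
]

# What each party receives; send() stands in for anonymous delivery through a MIX.
views: Dict[str, List[object]] = defaultdict(list)


def send(recipient: str, message: object) -> None:
    views[recipient].append(message)


def hospital(nonce: str) -> List[Tuple[str, str]]:
    """Release the requested prescription rows with name and address stripped."""
    return [(r["nonce"], r["drug"]) for r in HOSPITAL_DB if r["nonce"] == nonce]


def intermediary(rows: List[Tuple[str, str]], conflicts: Set[str]) -> bool:
    """Sees nonces and drugs out of context (no identities); returns only yes/no."""
    return any(drug in conflicts for _nonce, drug in rows)


def pharmacy_check(nonce: str, conflicts: Set[str]) -> bool:
    # Orchestration is simulated in one process; only `views` models what each party learns.
    send("hospital", ("record access request", nonce))
    rows = hospital(nonce)
    send("intermediary", ("prescription records", rows))
    send("intermediary", ("drug interaction query", sorted(conflicts)))
    answer = intermediary(rows, conflicts)
    send("pharmacy", ("yes/no answer", answer))
    return answer


print(pharmacy_check("n34", {"A____", "B____"}))  # True
print(dict(views))
```

The hospital's view contains only the nonce, the intermediary's view contains no names or addresses, and the pharmacy's view is a single boolean.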

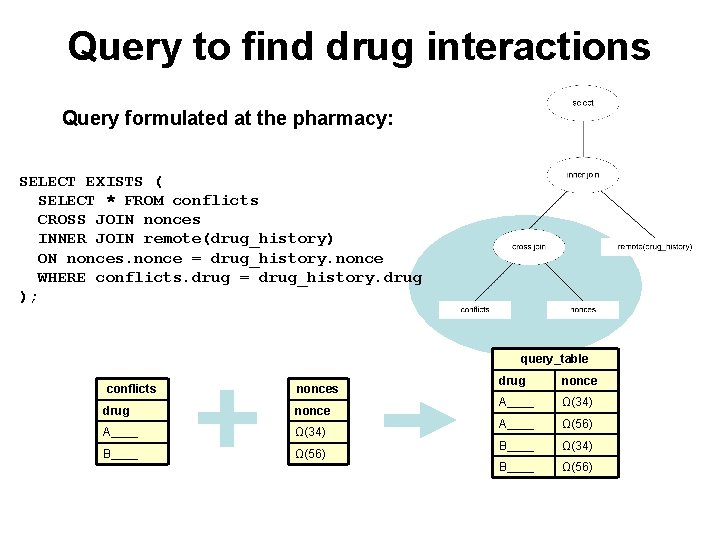

Query to find drug interactions
Query formulated at the pharmacy:

    SELECT EXISTS (
      SELECT * FROM conflicts
      CROSS JOIN nonces
      INNER JOIN remote(drug_history) ON nonces.nonce = drug_history.nonce
      WHERE conflicts.drug = drug_history.drug
    );

conflicts:

| drug |
|---|
| A____ |
| B____ |

nonces:

| nonce |
|---|
| Ω(34) |
| Ω(56) |

query_table (conflicts CROSS JOIN nonces):

| drug | nonce |
|---|---|
| A____ | Ω(34) |
| A____ | Ω(56) |
| B____ | Ω(34) |
| B____ | Ω(56) |
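Before the query is split, it can be checked for correctness by running it in one place with Python's built-in `sqlite3`. Assumptions, labeled: the strings `'n34'` and `'n56'` stand in for Ω(34) and Ω(56), `remote(drug_history)` is replaced by an ordinary local table, and that table holds only the one row the deck shows (nonce 34, drug A____).

```python
import sqlite3

# 'n34'/'n56' stand in for Ω(34)/Ω(56); remote(drug_history) becomes a local table.
conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE conflicts (drug TEXT);
    CREATE TABLE nonces (nonce TEXT);
    CREATE TABLE drug_history (nonce TEXT, drug TEXT);
    INSERT INTO conflicts VALUES ('A____'), ('B____');
    INSERT INTO nonces VALUES ('n34'), ('n56');
    INSERT INTO drug_history VALUES ('n34', 'A____');
""")

# conflicts CROSS JOIN nonces is the query_table shown on the slide.
query_table = conn.execute(
    "SELECT drug, nonce FROM conflicts CROSS JOIN nonces ORDER BY drug, nonce"
).fetchall()
print(query_table)  # [('A____', 'n34'), ('A____', 'n56'), ('B____', 'n34'), ('B____', 'n56')]

(exists,) = conn.execute("""
    SELECT EXISTS (
        SELECT * FROM conflicts
        CROSS JOIN nonces
        INNER JOIN drug_history ON nonces.nonce = drug_history.nonce
        WHERE conflicts.drug = drug_history.drug
    )
""").fetchone()
print(exists)  # 1
```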

Split query: data gathering
Query sent to the data owner(s):

    SEND (
      SELECT nonce, drug FROM drug_history
      WHERE drug_history.nonce = Ω(34)
    );

drug_history at the data owner (the selected rows are sent to mix_host):

| nonce | drug |
|---|---|
| 34 | A____ |
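The owner-side half of the split query might be sketched as follows. `gather` and `send` are hypothetical helpers: `send` models `SEND (...)` by inserting the rows into the MIX host's table, with the anonymity layer omitted. The `'n34'` row mirrors the slide; the `'n99'` row is a hypothetical other patient, included only to show that rows for other nonces never leave the owner.

```python
import sqlite3
from typing import List, Tuple

# Data owner's table: 'n34' mirrors the slide, 'n99' is a hypothetical other patient.
owner = sqlite3.connect(":memory:")
owner.executescript("""
    CREATE TABLE drug_history (nonce TEXT, drug TEXT);
    INSERT INTO drug_history VALUES ('n34', 'A____'), ('n99', 'C____');
""")

# The MIX host starts with an empty table to receive the SENT rows.
mix_host = sqlite3.connect(":memory:")
mix_host.execute("CREATE TABLE drug_history (nonce TEXT, drug TEXT)")


def gather(owner_db: sqlite3.Connection, nonce: str) -> List[Tuple[str, str]]:
    """Owner-side half of the split query: select only rows for the requested nonce."""
    return owner_db.execute(
        "SELECT nonce, drug FROM drug_history WHERE drug_history.nonce = ?", (nonce,)
    ).fetchall()


def send(rows: List[Tuple[str, str]], target: sqlite3.Connection) -> None:
    """Models SEND(...): deliver the rows to the MIX host (anonymity layer omitted)."""
    target.executemany("INSERT INTO drug_history VALUES (?, ?)", rows)


send(gather(owner, "n34"), mix_host)
print(mix_host.execute("SELECT nonce, drug FROM drug_history").fetchall())  # [('n34', 'A____')]
```

A parameterized `?` placeholder keeps the nonce out of the SQL text, which also guards against injection if nonces ever arrive from untrusted senders.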

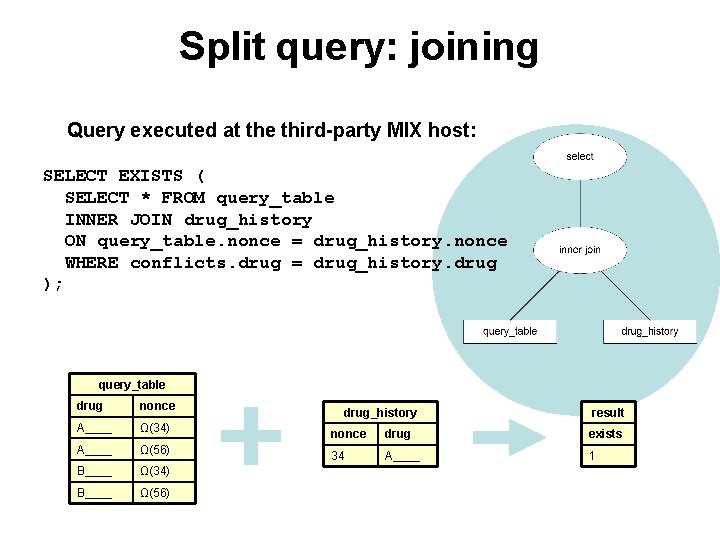

Split query: joining
Query executed at the third-party MIX host:

    SELECT EXISTS (
      SELECT * FROM query_table
      INNER JOIN drug_history ON query_table.nonce = drug_history.nonce
      WHERE query_table.drug = drug_history.drug
    );

query_table (from the pharmacy):

| drug | nonce |
|---|---|
| A____ | Ω(34) |
| A____ | Ω(56) |
| B____ | Ω(34) |
| B____ | Ω(56) |

drug_history (rows received from the data owner):

| nonce | drug |
|---|---|
| 34 | A____ |

Result:

| exists |
|---|
| 1 |
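The join at the MIX host can be run the same way. As before, `'n34'`/`'n56'` are assumed stand-ins for Ω(34)/Ω(56). Because only `query_table` and `drug_history` exist at the MIX host, the drug comparison is written against `query_table.drug`. The final lines of the test flip the received drug to a hypothetical non-conflicting value to show the answer becoming 0.

```python
import sqlite3

# State at the MIX host: query_table comes from the pharmacy, drug_history from the owner.
mix_host = sqlite3.connect(":memory:")
mix_host.executescript("""
    CREATE TABLE query_table (drug TEXT, nonce TEXT);
    CREATE TABLE drug_history (nonce TEXT, drug TEXT);
    INSERT INTO query_table VALUES
        ('A____', 'n34'), ('A____', 'n56'), ('B____', 'n34'), ('B____', 'n56');
    INSERT INTO drug_history VALUES ('n34', 'A____');
""")

JOIN_QUERY = """
    SELECT EXISTS (
        SELECT * FROM query_table
        INNER JOIN drug_history ON query_table.nonce = drug_history.nonce
        WHERE query_table.drug = drug_history.drug
    )
"""

(exists,) = mix_host.execute(JOIN_QUERY).fetchone()
print(exists)  # 1: nonce n34 is paired with A____, which is on the conflict list
```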

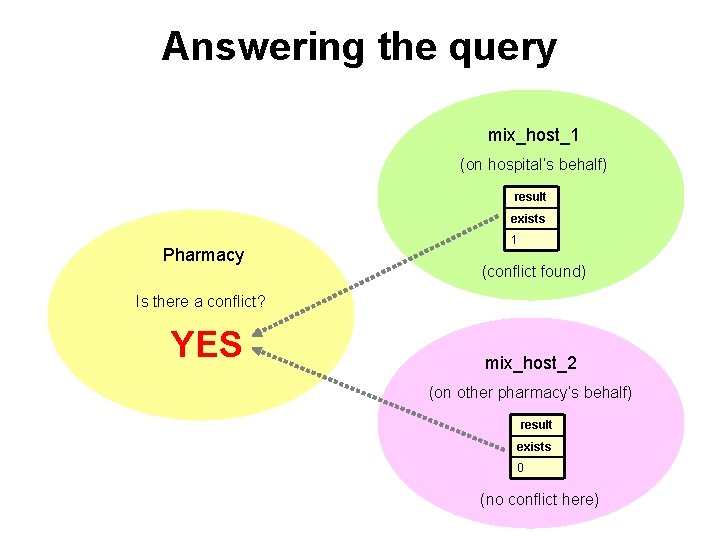

Answering the query
• The pharmacy asks: is there a conflict?
  – mix_host_1 (acting on the hospital's behalf) returns exists = 1 (conflict found)
  – mix_host_2 (acting on another pharmacy's behalf) returns exists = 0 (no conflict there)
• Answer at the pharmacy: YES
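The last step can be sketched as a logical OR over the per-host results. This assumes the pharmacy simply combines the `exists` flags it receives; `combine_answers` is a hypothetical helper, and the input values are the two results shown above.

```python
from typing import Iterable


def combine_answers(exists_flags: Iterable[int]) -> bool:
    """OR together the EXISTS results (0 or 1) returned by each MIX host."""
    return any(bool(flag) for flag in exists_flags)


# Results from the slide: mix_host_1 returned 1, mix_host_2 returned 0.
print("YES" if combine_answers([1, 0]) else "NO")  # YES
```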

Conclusion and Future Work
• Selective sharing of personal information across distributed databases
  – Data privacy
  – Query privacy
  – Anonymous communication
• Ongoing work: how to enforce a policy on which data may be revealed to whom
• Also: how to prevent data mining attacks?
