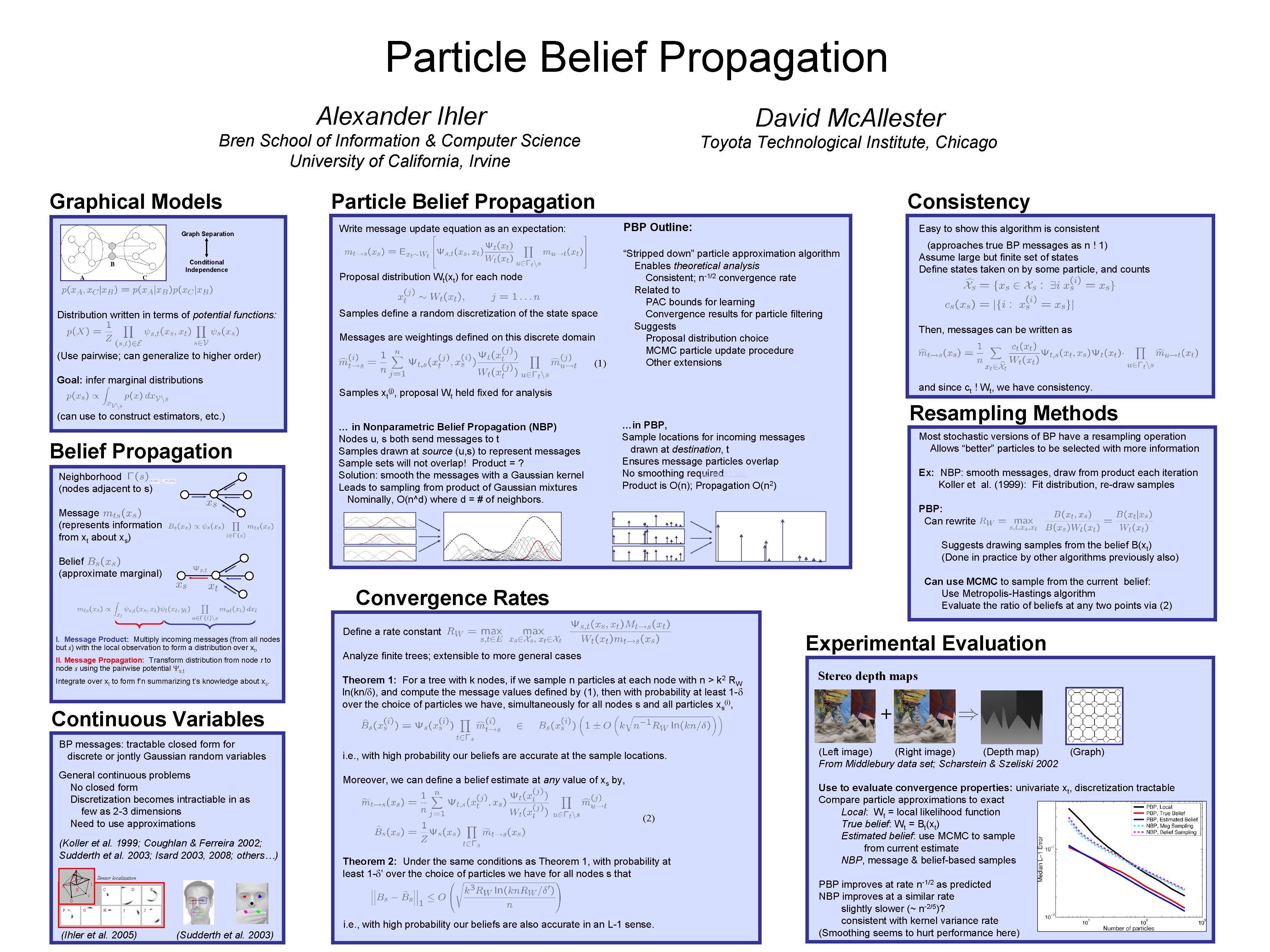

Particle Belief Propagation

Alexander Ihler, David McAllester

Alexander Ihler: Bren School of Information & Computer Science, University of California, Irvine. David McAllester: Toyota Technological Institute, Chicago.

Graphical Models:

- Graph separation corresponds to conditional independence.
- Distribution written in terms of potential functions (use pairwise; can generalize to higher order):

$$p(x) \propto \prod_{s} \psi_s(x_s) \prod_{(s,t) \in E} \psi_{s,t}(x_s, x_t)$$

- Goal: infer marginal distributions or samples (can use to construct estimators, etc.).

Belief Propagation:

- Neighborhood $\Gamma_s$: the nodes adjacent to $s$.

Particle Belief Propagation (PBP) outline:

- Write the message update equation as an expectation under a proposal distribution $W_t(x_t)$ for each node:

$$m_{t \to s}(x_s) = \mathbb{E}_{x_t \sim W_t}\left[ \psi_{s,t}(x_s, x_t)\, \frac{\psi_t(x_t)}{W_t(x_t)} \prod_{u \in \Gamma_t \setminus s} m_{u \to t}(x_t) \right]$$

- Samples define a random discretization of the state space.
- Messages are weightings defined on this discrete domain:

$$\hat{m}_{t \to s}^{(i)} = \frac{1}{n} \sum_{j=1}^{n} \psi_{s,t}\big(x_s^{(i)}, x_t^{(j)}\big)\, \frac{\psi_t\big(x_t^{(j)}\big)}{W_t\big(x_t^{(j)}\big)} \prod_{u \in \Gamma_t \setminus s} \hat{m}_{u \to t}^{(j)} \qquad (1)$$

Consistency:

- It is easy to show that this algorithm is consistent (it approaches the true BP messages as $n \to \infty$), with the proposal $W_t$ held fixed for analysis.
- Assume a large but finite set of states.
- Define the states taken on by some particle, and their counts $c_t$.
- Then the messages can be written as sums over states weighted by the counts, rather than sums over the particles $x_t^{(j)}$, and since $c_t \to W_t$, we have consistency.

PBP is a "stripped down" particle approximation algorithm:

- Enables theoretical analysis: consistent, with an $n^{-1/2}$ convergence rate.
- Related to PAC bounds for learning and to convergence results for particle filtering.
- Suggests: proposal distribution choice, an MCMC particle update procedure, and other extensions.

… in Nonparametric Belief Propagation (NBP):

- Nodes $u$, $s$ both send messages to $t$.
- Samples are drawn at the source ($u$, $s$) to represent messages.
- Sample sets will not overlap! Product = ?
- Solution: smooth the messages with a Gaussian kernel.
- Leads to sampling from a product of Gaussian mixtures. Nominally, $O(n^d)$, where $d$ = number of neighbors.
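As a rough illustration of the PBP outline above (a proposal $W_t$ at each node, samples acting as a random discretization, messages as weights on those samples), the following is a minimal sketch rather than the authors' implementation. The function names `normal_pdf` and `pbp_message`, the Gaussian potentials, and the choice of a leaf node `t` with no other neighbors are all assumptions made for the example. With these Gaussian choices the exact message is available in closed form, so the particle estimate can be compared against it.

```python
import math
import random


def normal_pdf(x, mean, std):
    """Density of a univariate Gaussian (helper assumed for this sketch)."""
    z = (x - mean) / std
    return math.exp(-0.5 * z * z) / (std * math.sqrt(2.0 * math.pi))


def pbp_message(xs_points, xt_particles, psi_st, psi_t, w_t, incoming):
    """Importance-weighted particle estimate of the message from t to s.

    xs_points    : locations x_s at which the message is evaluated
    xt_particles : particles x_t^(j) drawn from the proposal W_t
    incoming     : one list per other neighbor u of t, holding the values of
                   the message u -> t at each particle x_t^(j)
    """
    n = len(xt_particles)
    # Weight of each particle of t: local potential over proposal,
    # times the product of incoming messages at that particle.
    weights = []
    for j, xt in enumerate(xt_particles):
        w = psi_t(xt) / w_t(xt)
        for msg in incoming:
            w *= msg[j]
        weights.append(w)
    # Average the pairwise potential against those weights.
    return [
        sum(psi_st(xs, xt) * w for xt, w in zip(xt_particles, weights)) / n
        for xs in xs_points
    ]


# Toy setup (assumed): t is a leaf, psi_t = N(0, 1), psi_st(xs, xt) = N(xs; xt, 1),
# and the proposal W_t = N(0, 2) is broader than the local potential.
rng = random.Random(0)
xt_particles = [rng.gauss(0.0, 2.0) for _ in range(20000)]
xs_points = [-1.0, 0.0, 1.0]  # fixed evaluation points, for illustration only

estimates = pbp_message(
    xs_points,
    xt_particles,
    psi_st=lambda xs, xt: normal_pdf(xs, xt, 1.0),
    psi_t=lambda xt: normal_pdf(xt, 0.0, 1.0),
    w_t=lambda xt: normal_pdf(xt, 0.0, 2.0),
    incoming=[],  # a leaf has no other neighbors
)

# For these Gaussians the exact message is N(xs; 0, sqrt(2)).
exact = [normal_pdf(xs, 0.0, math.sqrt(2.0)) for xs in xs_points]
for xs, est, ref in zip(xs_points, estimates, exact):
    print(f"x_s = {xs:+.1f}  estimate = {est:.4f}  exact = {ref:.4f}")
```

In a full PBP pass the evaluation points would themselves be the particles drawn at node `s`, and `incoming` would hold the message estimates from the previous iteration; the fixed points here only keep the comparison with the closed form easy to read.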
… in PBP:

- Sample locations for incoming messages are drawn at the destination, $t$.
- Ensures message particles overlap; no smoothing required.
- Product is $O(n)$; propagation is $O(n^2)$.

Resampling Methods:

- Most stochastic versions of BP have a resampling operation, which allows "better" particles to be selected with more information.
- Ex: NBP: smooth messages, draw from the product each iteration. Koller et al. (1999): fit a distribution, re-draw samples.
- PBP: can rewrite the message update in terms of the belief, which suggests drawing samples from the belief $B(x_t)$ (done in practice by other algorithms previously also).
- Can use MCMC to sample from the current belief: use the Metropolis-Hastings algorithm, evaluating the ratio of beliefs at any two points via (2).

Belief propagation messages and beliefs, where $\Gamma_t$ denotes the nodes adjacent to $t$:

- Message (represents information from $x_t$ about $x_s$):

$$m_{t \to s}(x_s) = \int \psi_{s,t}(x_s, x_t)\, \psi_t(x_t) \prod_{u \in \Gamma_t \setminus s} m_{u \to t}(x_t)\, dx_t$$

- Belief (approximate marginal):

$$B_t(x_t) \propto \psi_t(x_t) \prod_{u \in \Gamma_t} m_{u \to t}(x_t)$$

- I. Message product: multiply the incoming messages (from all nodes but $s$) with the local observation to form a distribution over $x_t$.
- II. Message propagation: transform the distribution from node $t$ to node $s$ using the pairwise potential $\psi_{s,t}$; integrate over $x_t$ to form a function summarizing $t$'s knowledge about $x_s$.

Continuous Variables:

- BP messages have a tractable closed form for discrete or jointly Gaussian random variables.
- General continuous problems have no closed form, and discretization becomes intractable in as few as 2-3 dimensions.
- Need to use approximations (Koller et al. 1999; Coughlan & Ferreira 2002; Sudderth et al. 2003; Isard 2003, 2008; others…).
- [Figures: example graphs with nodes labeled A-J. Sensor localization (Ihler et al. 2005); a further example from Sudderth et al. (2003).]

Convergence Rates:

- Define a rate constant $R_W$.
- Analyze finite trees; extensible to more general cases.
- Theorem 1: For a tree with $k$ nodes, if we sample $n$ particles at each node with $n > k^2 R_W \ln(kn/\delta)$, and compute the message values defined by (1), then with probability at least $1 - \delta$ over the choice of particles the estimated beliefs are close to the true beliefs simultaneously for all nodes $s$ and all particles $x_s^{(i)}$; i.e., with high probability our beliefs are accurate at the sample locations.
- Moreover, we can define a belief estimate at any value of $x_s$ via (2).
- Theorem 2: Under the same conditions as Theorem 1, with probability at least $1 - \delta'$ over the choice of particles, the belief estimate is close to the true belief for all nodes $s$; i.e., with high probability our beliefs are also accurate in an $L_1$ sense.

Experimental Evaluation:

- Stereo depth maps. [Figure: left image, right image, depth map, and graph; from the Middlebury data set, Scharstein & Szeliski 2002.]
- Use to evaluate convergence properties: univariate $x_t$, so discretization is tractable and particle approximations can be compared to exact BP.
- Proposals compared: Local, $W_t$ = local likelihood function; True belief, $W_t = B_t(x_t)$; Estimated belief, use MCMC to sample from the current estimate; NBP, with message- and belief-based samples.
- PBP improves at rate $n^{-1/2}$, as predicted.
- NBP improves at a similar but slightly slower rate (roughly $n^{-2/5}$?), consistent with the kernel variance rate. (Smoothing seems to hurt performance here.)
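The $n^{-1/2}$ rate quoted above can be checked numerically on a toy problem. The sketch below is not the poster's stereo experiment: it assumes a single leaf-to-neighbor message with Gaussian potentials (so the exact message is known in closed form), and the names `message_estimate` and `rms_error` are hypothetical helpers. If the error scales as $n^{-1/2}$, quadrupling the number of particles should roughly halve the root-mean-square error.

```python
import math
import random


def normal_pdf(x, mean, std):
    """Density of a univariate Gaussian (helper assumed for this sketch)."""
    z = (x - mean) / std
    return math.exp(-0.5 * z * z) / (std * math.sqrt(2.0 * math.pi))


def message_estimate(n, rng, xs=0.0):
    """Particle estimate of a leaf message at x_s using n particles.

    Assumed toy model: psi_t = N(0, 1), psi_st(xs, xt) = N(xs; xt, 1),
    proposal W_t = N(0, 2).
    """
    total = 0.0
    for _ in range(n):
        xt = rng.gauss(0.0, 2.0)  # draw a particle from the proposal
        weight = normal_pdf(xt, 0.0, 1.0) / normal_pdf(xt, 0.0, 2.0)
        total += normal_pdf(xs, xt, 1.0) * weight
    return total / n


def rms_error(n, trials, rng):
    """Root-mean-square error of the estimate over independent particle sets."""
    exact = normal_pdf(0.0, 0.0, math.sqrt(2.0))  # closed-form message at x_s = 0
    squared = [(message_estimate(n, rng) - exact) ** 2 for _ in range(trials)]
    return math.sqrt(sum(squared) / trials)


rng = random.Random(0)
err_small = rms_error(100, 300, rng)   # n = 100 particles
err_large = rms_error(400, 300, rng)   # n = 400 particles
ratio = err_small / err_large          # an n^(-1/2) rate predicts a ratio near 2

print(f"RMS error, n=100: {err_small:.5f}")
print(f"RMS error, n=400: {err_large:.5f}")
print(f"ratio: {ratio:.2f}")
```

The ratio is itself a noisy estimate, so it will only be near 2 rather than exactly 2; increasing `trials` tightens it.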
