
Overview of the Global Arrays Parallel Software Development Toolkit

Jarek Nieplocha¹, Bruce Palmer¹, Manojkumar Krishnan¹, Vinod Tipparaju¹, P. Sadayappan²

¹ Pacific Northwest National Laboratory
² Ohio State University

Outline of the Tutorial
- Parallel programming models
- Global Arrays (GA) programming model
- GA Operations
  - Writing, compiling and running GA programs
  - Basic, intermediate, and advanced calls
    - With C and Fortran examples
- GA Hands-on session

6/18/2021 Global Arrays Tutorial

Parallel Programming Models
- Single Threaded
  - Data Parallel, e.g. HPF
- Multiple Processes
  - Partitioned-Local Data Access
    - MPI
  - Uniform-Global-Shared Data Access
    - OpenMP
  - Partitioned-Global-Shared Data Access
    - Co-Array Fortran
  - Uniform-Global-Shared + Partitioned Data Access
    - UPC, Global Arrays, X10

High Performance Fortran
- Single-threaded view of computation
- Data parallelism and parallel loops
- User-specified data distributions for arrays
- Compiler transforms HPF program to SPMD program
  - Communication optimization critical to performance
- Programmer may not be conscious of communication implications of parallel program

    DO I = 1, N
      DO J = 1, N
        A(I, J) = B(J, I)
        A(I, J) = B(I, J)
      END
    END
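To make the communication implication concrete, here is a minimal Python sketch (not HPF) that counts how many elements each of the two assignments, `A(I, J) = B(J, I)` and `A(I, J) = B(I, J)`, would read from another process. It assumes both arrays are distributed by blocks of rows and that the owner of `A(I, J)` performs the assignment. The names `owner` and `remote_reads` and the sizes used are illustrative and do not come from the tutorial.

```python
def owner(row, n, p):
    """Process that owns a given row under an assumed block-row distribution."""
    block = (n + p - 1) // p  # rows per process, rounded up
    return row // block


def remote_reads(n, p, transposed):
    """Count elements of B that live on a different process than A(i, j)."""
    count = 0
    for i in range(n):
        for j in range(n):
            a_owner = owner(i, n, p)
            # A(I, J) = B(J, I) reads row j of B; A(I, J) = B(I, J) reads row i.
            b_owner = owner(j if transposed else i, n, p)
            if a_owner != b_owner:
                count += 1
    return count


if __name__ == "__main__":
    n, p = 8, 4  # illustrative sizes
    print("A(I,J) = B(I,J) remote reads:", remote_reads(n, p, transposed=False))
    print("A(I,J) = B(J,I) remote reads:", remote_reads(n, p, transposed=True))
```

Under these assumptions the aligned assignment needs no remote data, while the transposed one reads most of B from other processes, even though the two statements look almost identical in the source.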

Message Passing Interface
- Most widely used parallel programming model today
- Bindings for Fortran, C, C++, MATLAB
- P parallel processes, each with local data
  - MPI-1: Send/receive messages for inter-process communication
  - MPI-2: One-sided get/put data access from/to local data at remote process
- Explicit control of all inter-processor communication
  - Advantage: Programmer is conscious of communication overheads and attempts to minimize them
  - Drawback: Program development/debugging is tedious due to the partitioned-local view of the data

OpenMP
- Uniform-Global view of shared data
- Available for Fortran, C, C++
- Work-sharing constructs (parallel loops and sections) and global-shared data view ease program development
- Disadvantage: Data locality issues obscured by programming model
- Only available for shared-memory systems

Co-Array Fortran
- Partitioned, but global-shared data view
- SPMD programming model with local and shared variables
- Shared variables have additional co-array dimension(s), mapped to process space; each process can directly access array elements in the space of other processes
  - A(I, J) = A(I, J)[me-1] + A(I, J)[me+1]
- Compiler optimization of communication critical to performance, but all non-local access is explicit
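The co-array statement `A(I, J) = A(I, J)[me-1] + A(I, J)[me+1]` can be mimicked in plain Python to show what the extra co-dimension means. This is only a sketch. It models each image's local copy of A as one entry in a list, uses 0-based image numbers for convenience (an assumption of the sketch), and is only valid for images that have both neighbors. The name `caf_update` is illustrative.

```python
def caf_update(images, me):
    """New local block for image `me`: A[me-1] + A[me+1], element by element."""
    # The index into `images` plays the role of the co-array dimension,
    # so every access to another image's data is visible in the code.
    left, right = images[me - 1], images[me + 1]
    return [[l + r for l, r in zip(lrow, rrow)]
            for lrow, rrow in zip(left, right)]


if __name__ == "__main__":
    # Three images, each holding a 2x2 local block of A (illustrative values).
    images = [
        [[1, 1], [1, 1]],
        [[5, 5], [5, 5]],
        [[10, 20], [30, 40]],
    ]
    images[1] = caf_update(images, me=1)
    print(images[1])
```

The point of the sketch is that the remote references appear explicitly in the expression, which matches the slide's remark that all non-local access is explicit.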

Unified Parallel C (UPC)
- SPMD programming model with global shared view for arrays as well as pointer-based data structures
- Compiler optimizations critical for controlling interprocessor communication overhead
  - Very challenging problem since local vs. remote access is not explicit in syntax (unlike Co-Array Fortran)
  - Linearization of multidimensional arrays makes compiler optimization of communication very difficult
- Performance study with NAS benchmarks (PPoPP 2005, Mellor-Crummey et al.) compared CAF and UPC
  - Co-Array Fortran had significantly better scalability
  - Linearization of multi-dimensional arrays in UPC was a significant source of overhead

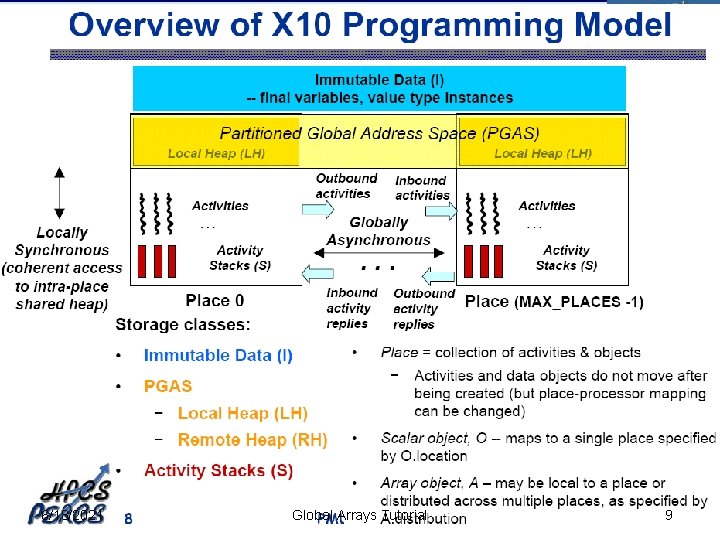


Global Arrays vs. Other Models

Advantages:
- Inter-operates with MPI
  - Use more convenient global-shared view for multi-dimensional arrays, but can use MPI model wherever needed
- Data-locality and granularity control is explicit with GA's get-compute-put model, unlike the non-transparent communication overheads with other models (except MPI)
- Library-based approach: does not rely upon smart compiler optimizations to achieve high performance

Disadvantage:
- Only usable for array data structures

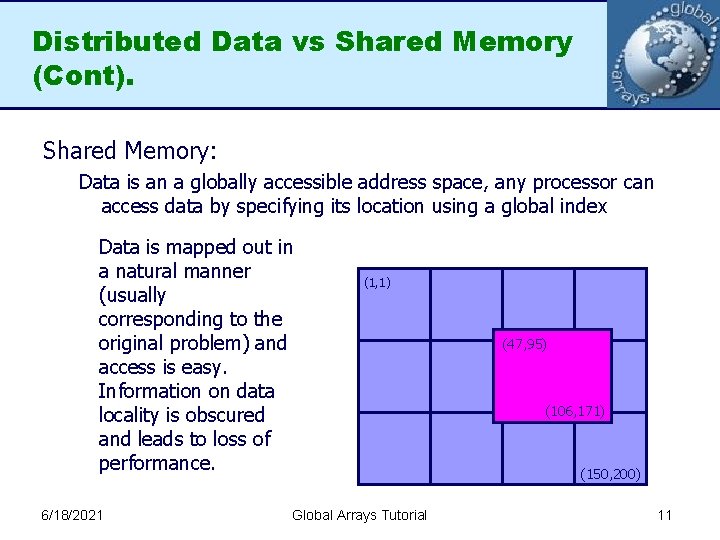

Distributed Data vs Shared Memory (Cont.)

Shared Memory: Data is in a globally accessible address space; any processor can access data by specifying its location using a global index.
- Data is mapped out in a natural manner (usually corresponding to the original problem) and access is easy.
- Information on data locality is obscured and leads to loss of performance.

Figure labels: (1, 1), (47, 95), (106, 171), (150, 200)

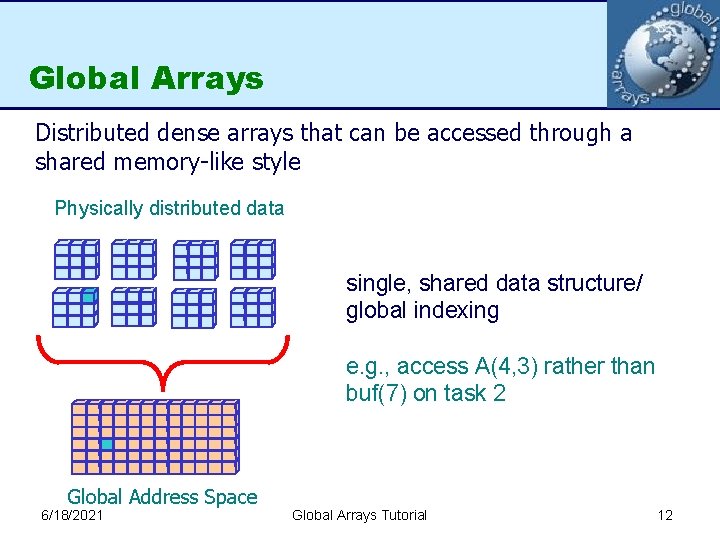

Global Arrays

Distributed dense arrays that can be accessed through a shared memory-like style.
- Physically distributed data
- Single, shared data structure / global indexing, e.g., access A(4, 3) rather than buf(7) on task 2
- Global Address Space
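The difference between a global index such as A(4, 3) and a local one such as buf(7) on a particular task can be illustrated with a small Python sketch. It assumes a regular block distribution over a 2-D process grid, with tasks numbered row by row and each local buffer stored in row-major order. These layout choices, the 0-based indices, and the name `locate` are assumptions of the sketch and do not describe how GA lays out data internally.

```python
def locate(i, j, n_rows, n_cols, p_rows, p_cols):
    """Map a 0-based global index (i, j) to (task, offset in the task's buffer)."""
    br = (n_rows + p_rows - 1) // p_rows  # block height, rounded up
    bc = (n_cols + p_cols - 1) // p_cols  # block width, rounded up
    task = (i // br) * p_cols + (j // bc)  # tasks numbered row by row
    # The last column block may be narrower than bc.
    col0 = (j // bc) * bc
    width = min(bc, n_cols - col0)
    offset = (i % br) * width + (j % bc)  # row-major position in local buffer
    return task, offset


if __name__ == "__main__":
    # A 6x6 array over a 2x2 process grid (illustrative sizes).
    print(locate(3, 2, 6, 6, 2, 2))
```

With message passing, the programmer has to carry out this bookkeeping by hand. With a global index space, the caller only names (i, j) and the library resolves which task holds the element.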

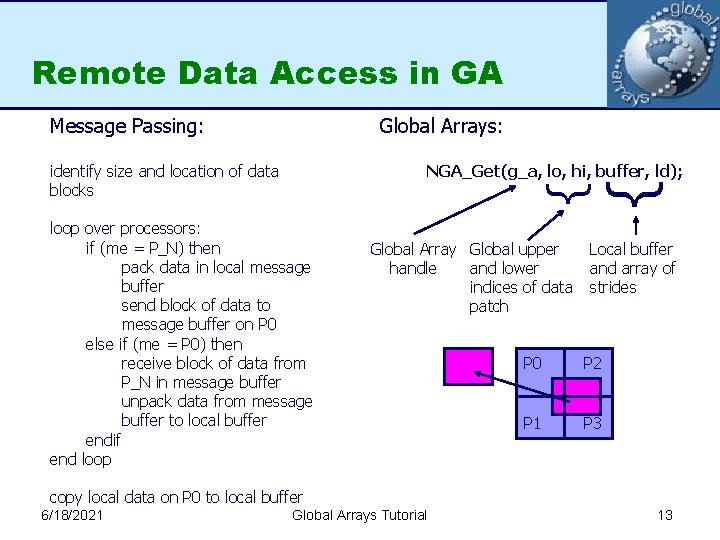

Remote Data Access in GA

Message Passing:

    identify size and location of data blocks
    loop over processors:
      if (me = P_N) then
        pack data in local message buffer
        send block of data to message buffer on P0
      else if (me = P0) then
        receive block of data from P_N in message buffer
        unpack data from message buffer to local buffer
      endif
    end loop
    copy local data on P0 to local buffer

Global Arrays:

    NGA_Get(g_a, lo, hi, buffer, ld);

- g_a: Global Array handle
- lo, hi: Global lower and upper indices of data patch
- buffer, ld: Local buffer and array of strides

Figure labels: P0, P1, P2, P3
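What a single get of a patch does on the caller's behalf can be sketched in plain Python. The code below is not the GA API. It is a toy model that assumes a block-row distribution held in a dictionary, 0-based inclusive `lo` and `hi` bounds, and a process count that divides the array size evenly. The names `make_blocks` and `get_patch` are illustrative.

```python
def make_blocks(n, p):
    """Split an n x n array with entries 10*i + j into p block-rows."""
    rows = n // p  # assumes p divides n evenly
    blocks = {
        r: [[10 * i + j for j in range(n)]
            for i in range(r * rows, (r + 1) * rows)]
        for r in range(p)
    }
    return blocks, rows


def get_patch(blocks, rows_per_proc, lo, hi):
    """Copy the inclusive patch lo..hi of the global array into a local buffer."""
    buf = []
    for i in range(lo[0], hi[0] + 1):
        # Work out which process holds global row i and where it sits locally.
        owner, local_i = divmod(i, rows_per_proc)
        buf.append(blocks[owner][local_i][lo[1]:hi[1] + 1])
    return buf


if __name__ == "__main__":
    blocks, rows = make_blocks(4, 2)
    # This patch spans data held by both processes.
    print(get_patch(blocks, rows, lo=(1, 1), hi=(2, 2)))
```

The caller names only the global bounds of the patch. Locating the owners and assembling the pieces, which the message-passing pseudocode spells out step by step, is hidden behind one call.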

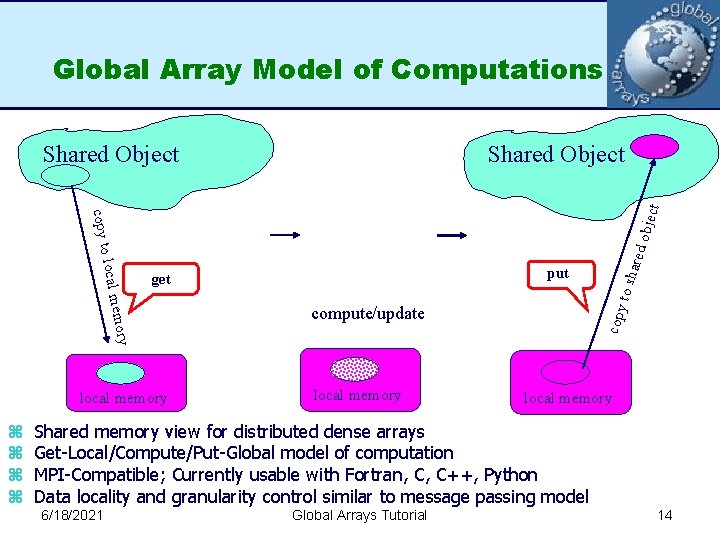

Global Array Model of Computations

Figure: get (copy to local memory from the Shared Object), compute/update in local memory, put (copy to the Shared Object from local memory).

- Shared memory view for distributed dense arrays
- Get-Local/Compute/Put-Global model of computation
- MPI-Compatible; currently usable with Fortran, C, C++, Python
- Data locality and granularity control similar to message passing model
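The get-local/compute/put-global cycle can be sketched with a toy shared object in Python. The class below is a stand-in invented for illustration. It keeps all data in one list, whereas a real global array is physically distributed, and its `get`, `put`, and `snapshot` methods are hypothetical and are not the GA interface.

```python
class ToyGlobalArray:
    """Illustrative stand-in for a shared object (not the GA library)."""

    def __init__(self, values):
        self._data = list(values)

    def get(self, lo, hi):
        """Return a local copy of elements lo..hi (inclusive)."""
        return self._data[lo:hi + 1]

    def put(self, lo, hi, buf):
        """Copy a local buffer back into elements lo..hi (inclusive)."""
        if len(buf) != hi - lo + 1:
            raise ValueError("buffer length does not match patch size")
        self._data[lo:hi + 1] = buf

    def snapshot(self):
        """Return a copy of the whole array for inspection."""
        return list(self._data)


def scale_patch(ga, lo, hi, factor):
    """One get-compute-put cycle on a patch of the shared object."""
    buf = ga.get(lo, hi)               # get: copy to local memory
    buf = [factor * x for x in buf]    # compute/update in local memory
    ga.put(lo, hi, buf)                # put: copy back to the shared object


if __name__ == "__main__":
    ga = ToyGlobalArray(range(8))
    chunk = 2
    for me in range(4):  # each simulated process handles its own patch
        scale_patch(ga, me * chunk, me * chunk + chunk - 1, 10)
    print(ga.snapshot())
```

Because the patch bounds are chosen by the caller, the amount of data moved per cycle is under the programmer's control, which is the granularity control the slide compares to message passing.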

- Slides: 14