Consensus Paxos and RAFT COS 418 Distributed Systems

Consensus: Paxos and RAFT COS 418: Distributed Systems Lecture 13 Wyatt Lloyd RAFT slides based on those from Diego Ongaro and John Ousterhout

Consensus Used in Systems
Group of servers want to:
• Make sure all servers in group receive the same updates in the same order as each other
• Maintain own lists (views) on who is a current member of the group, and update lists when somebody leaves/fails
• Elect a leader in group, and inform everybody
• Ensure mutually exclusive (one process at a time only) access to a critical resource like a file

Single-shot Consensus
• Figure out how to reach consensus for 1 decision

Paxos Guarantees
• Safety (bad things never happen)
  – Agreement: All processes that decide do so on the same value
  – Validity: Value decided must have been proposed by some process
• Liveness (good things eventually happen)
  – Termination: All non-faulty processes eventually decide on a value

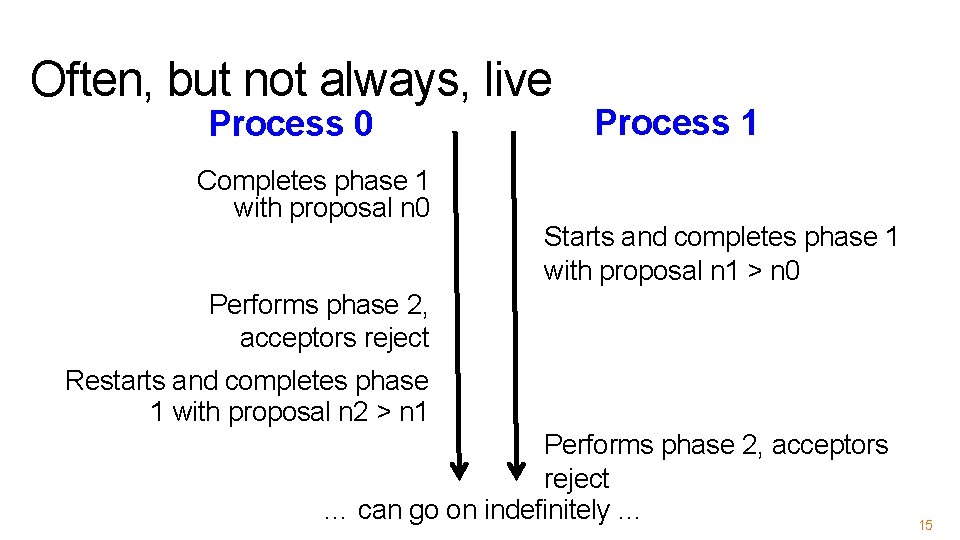

Paxos’s Safety and Liveness
• Paxos is always safe
• Paxos is very often live (but not always, more later)

Roles of a Process in Paxos
• Three conceptual roles
  – Proposers propose values
  – Acceptors accept values, where value is chosen if majority accept
  – Learners learn the outcome (chosen value)
• In reality, a process can play any/all roles

Strawmen
• 3 proposers, 1 acceptor
  – Acceptor accepts first value received
  – No liveness with single failure
• 3 proposers, 3 acceptors
  – Accept first value received, learners choose common value known by majority
  – But no such majority is guaranteed

Paxos
• Each acceptor accepts multiple proposals
  – Hopefully one of multiple accepted proposals will have a majority vote (and we determine that)
  – If not, rinse and repeat (more on this)
• How do we select among multiple proposals?
  – Ordering: proposal is tuple (proposal #, value) = (n, v)
  – Proposal # strictly increasing, globally unique
  – Globally unique?
    • Trick: set low-order bits to proposer’s ID
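
The low-order-bits trick can be sketched as follows. This is a minimal illustration, not code from the lecture: the field width `ID_BITS` and both function names are assumptions chosen for the example.

```python
ID_BITS = 8  # assumed width of the proposer-ID field (up to 256 proposers)


def make_proposal_number(round_no: int, proposer_id: int) -> int:
    """Pack a round counter (high bits) with the proposer ID (low bits)."""
    if not 0 <= proposer_id < (1 << ID_BITS):
        raise ValueError("proposer_id does not fit in ID_BITS")
    return (round_no << ID_BITS) | proposer_id


def next_proposal_number(highest_seen: int, proposer_id: int) -> int:
    """Return a number owned by proposer_id that is larger than highest_seen."""
    round_no = (highest_seen >> ID_BITS) + 1
    return make_proposal_number(round_no, proposer_id)
```

Two proposers can never generate the same number because their low-order bits differ, and bumping the round counter keeps each proposer's numbers strictly increasing.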

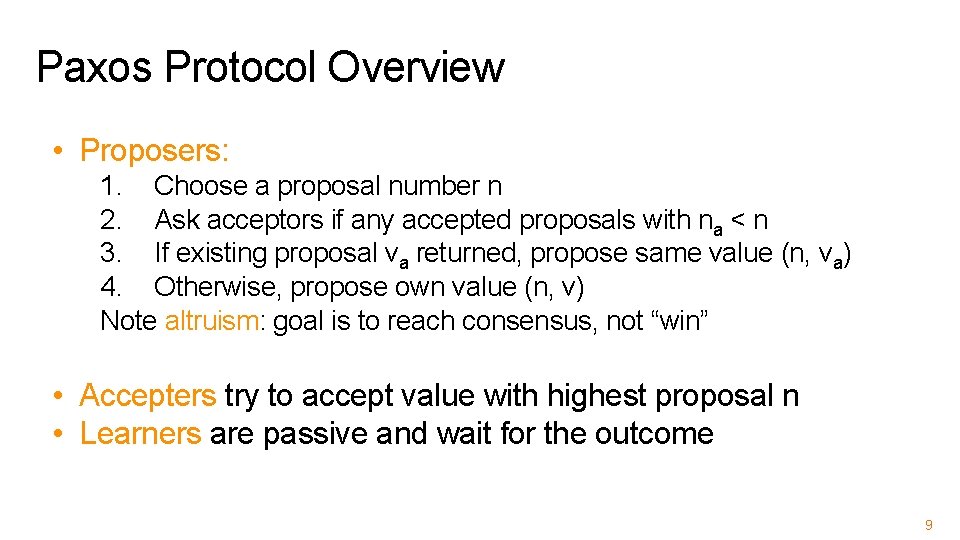

Paxos Protocol Overview
• Proposers:
  1. Choose a proposal number n
  2. Ask acceptors if any accepted proposals with n_a < n
  3. If existing proposal v_a returned, propose same value (n, v_a)
  4. Otherwise, propose own value (n, v)
  Note altruism: goal is to reach consensus, not “win”
• Acceptors try to accept value with highest proposal n
• Learners are passive and wait for the outcome

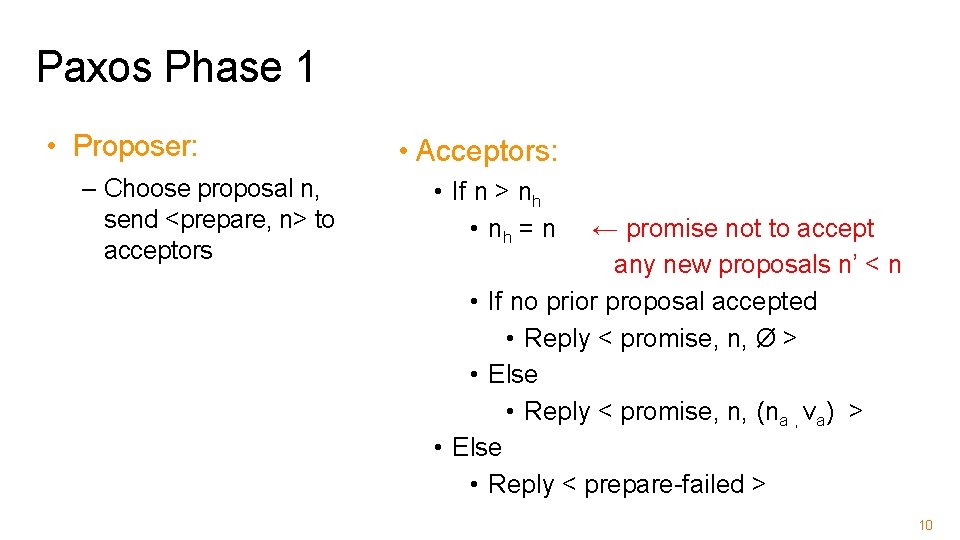

Paxos Phase 1
• Proposer:
  – Choose proposal n, send <prepare, n> to acceptors
• Acceptors:
  – If n > n_h
    • n_h = n ← promise not to accept any new proposals n’ < n
    • If no prior proposal accepted
      – Reply <promise, n, Ø>
    • Else
      – Reply <promise, n, (n_a, v_a)>
  – Else
    • Reply <prepare-failed>

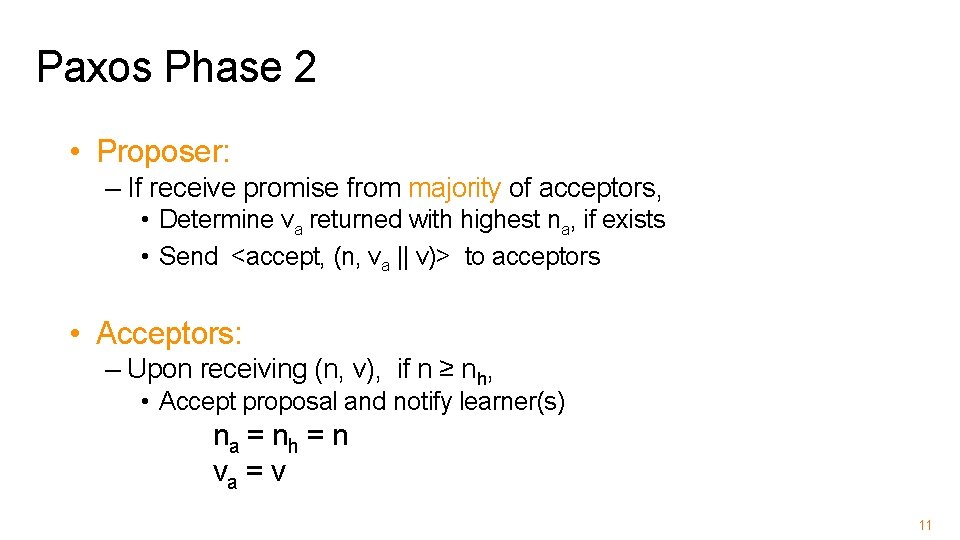

Paxos Phase 2
• Proposer:
  – If receive promise from majority of acceptors,
    • Determine v_a returned with highest n_a, if exists
    • Send <accept, (n, v_a || v)> to acceptors
• Acceptors:
  – Upon receiving (n, v), if n ≥ n_h,
    • Accept proposal and notify learner(s)
      n_a = n_h = n
      v_a = v
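
The acceptor rules of Phases 1 and 2, together with the proposer's value choice, can be sketched in a few lines. This is a single-process illustration with no networking or persistence. The class and function names, and the `accept-failed` reply, are assumptions made for the example; the slides only name `prepare-failed`.

```python
from dataclasses import dataclass
from typing import Any, Optional


@dataclass
class Acceptor:
    n_h: int = -1               # highest proposal number seen so far
    n_a: Optional[int] = None   # number of the accepted proposal, if any
    v_a: Any = None             # value of the accepted proposal, if any

    def on_prepare(self, n: int):
        """Phase 1: promise only if n is higher than anything seen."""
        if n > self.n_h:
            self.n_h = n
            accepted = None if self.n_a is None else (self.n_a, self.v_a)
            return ("promise", n, accepted)
        return ("prepare-failed",)

    def on_accept(self, n: int, v: Any):
        """Phase 2: accept unless a higher-numbered prepare was promised."""
        if n >= self.n_h:
            self.n_a = self.n_h = n
            self.v_a = v
            return ("accepted", n, v)
        return ("accept-failed",)  # hypothetical reply name


def choose_value(promises, own_value):
    """Proposer: adopt v_a with the highest n_a, else use own value."""
    accepted = [p[2] for p in promises if p[2] is not None]
    if accepted:
        return max(accepted, key=lambda nv: nv[0])[1]
    return own_value
```

A proposer would call `choose_value` only after collecting promises from a majority of acceptors.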

Paxos Phase 3
• Learners need to know which value chosen
• Each acceptor notifies all learners
  – Simplest approach, but many messages
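
A learner in this simplest scheme only has to count notifications. The sketch below is illustrative and assumes hashable values and a known number of acceptors; the `Learner` class and its method name are not from the slides.

```python
from collections import defaultdict


class Learner:
    def __init__(self, num_acceptors: int):
        self.majority = num_acceptors // 2 + 1
        self.votes = defaultdict(set)  # (n, v) -> ids of acceptors that accepted it
        self.chosen = None

    def on_accepted(self, acceptor_id, n, v):
        """Record one <accepted, (n, v)> notification; return the chosen value, if any."""
        self.votes[(n, v)].add(acceptor_id)  # a set ignores duplicate messages
        if self.chosen is None and len(self.votes[(n, v)]) >= self.majority:
            self.chosen = v
        return self.chosen
```

With a acceptors and l learners this costs a × l messages, which is the "many messages" drawback the slide mentions.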

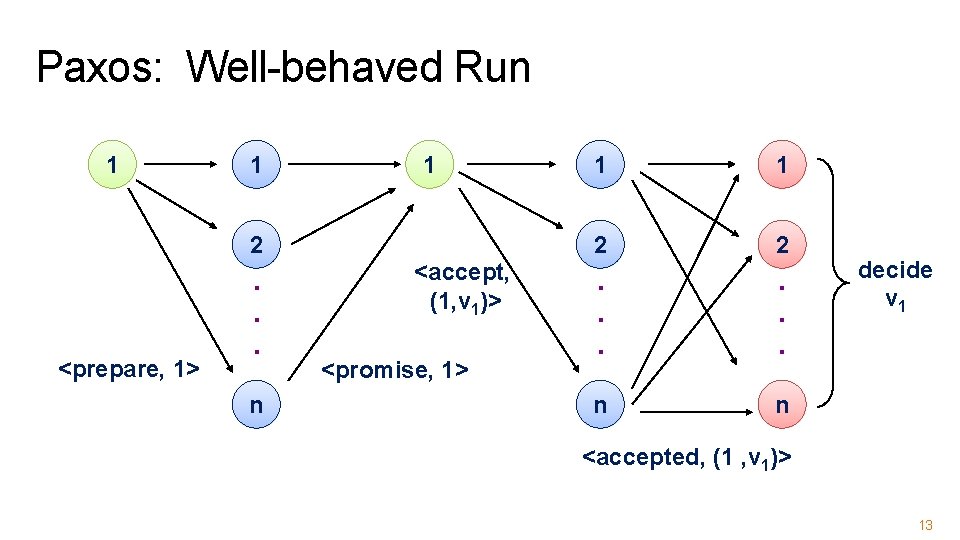

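Paxos: Well-behaved Run
[Figure: message flow with a single proposer. Proposer 1 sends <prepare, 1> to acceptors 1, 2, …, n; they reply <promise, 1>; proposer 1 sends <accept, (1, v1)>; the acceptors send <accepted, (1, v1)> to learners 1, 2, …, n, which decide v1]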

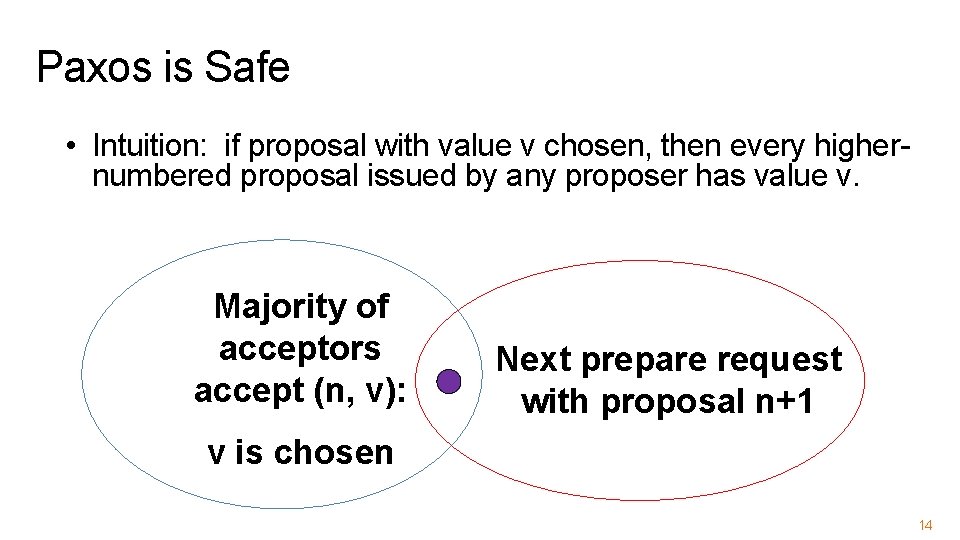

Paxos is Safe
• Intuition: if proposal with value v chosen, then every higher-numbered proposal issued by any proposer has value v.
[Figure: a majority of acceptors accept (n, v), so v is chosen; then the next prepare request arrives with proposal n+1]

Often, but not always, live
• Process 0: Completes phase 1 with proposal n0
• Process 1: Starts and completes phase 1 with proposal n1 > n0
• Process 0: Performs phase 2, acceptors reject; restarts and completes phase 1 with proposal n2 > n1
• Process 1: Performs phase 2, acceptors reject
• … can go on indefinitely …

Paxos Summary
• Described for a single round of consensus
• Proposer, Acceptors, Learners
  – Often implemented with nodes playing all roles
• Always safe: Quorum intersection
• Very often live
• Acceptors accept multiple values
  – But only one value is ultimately chosen
• Once a value is accepted by a majority it is chosen

Flavors of Paxos
• Terminology is a mess
• Paxos loosely and confusingly defined…
• We’ll stick with
  – Basic Paxos
  – Multi-Paxos

Flavors of Paxos: Basic Paxos
• Run the full protocol each time
  – e.g., for each slot in the command log
• Takes 2 rounds until a value is chosen

Flavors of Paxos: Multi-Paxos
• Elect a leader and have them run 2nd phase directly
  – e.g., for each slot in the command log
  – Leader election uses Basic Paxos
• Takes 1 round until a value is chosen
  – Faster than Basic Paxos
• Used extensively in practice!
  – RAFT is similar to Multi-Paxos


RAFT: A CONSENSUS ALGORITHM FOR REPLICATED LOGS
Diego Ongaro and John Ousterhout
Stanford University

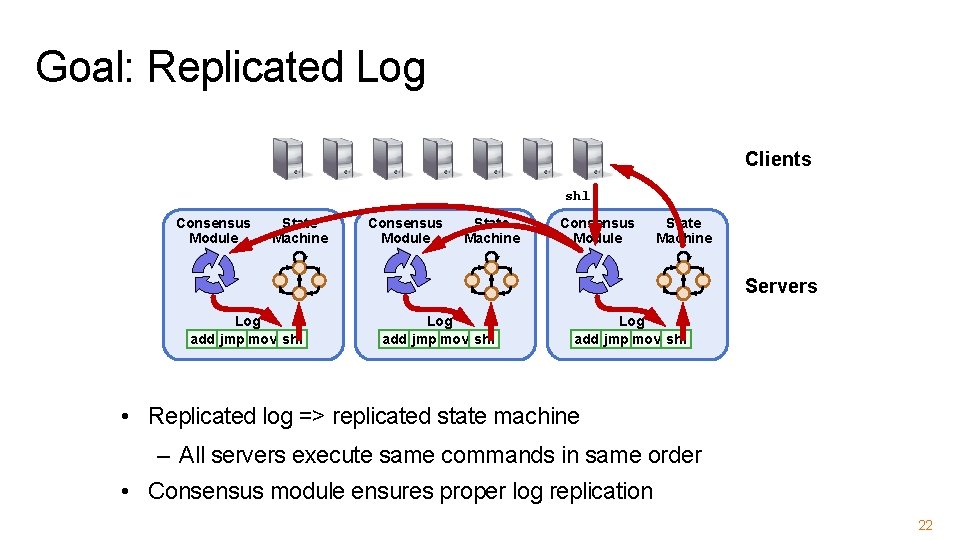

Goal: Replicated Log
[Figure: clients send commands (e.g., shl) to servers; each server has a consensus module, a log (add, jmp, mov, shl), and a state machine]
• Replicated log => replicated state machine
  – All servers execute same commands in same order
• Consensus module ensures proper log replication

Raft Overview
1. Leader election
2. Normal operation (basic log replication)
3. Safety and consistency after leader changes
4. Neutralizing old leaders
5. Client interactions
6. Reconfiguration

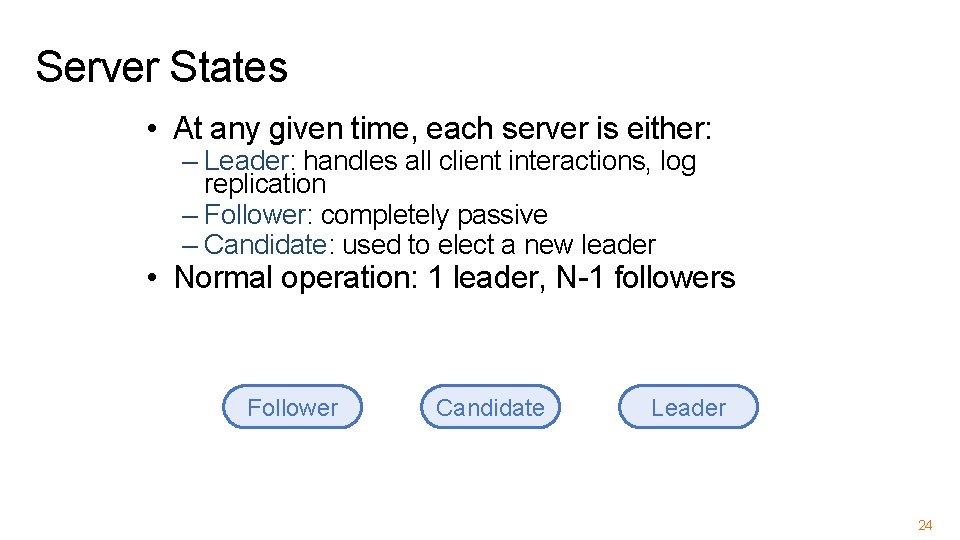

Server States
• At any given time, each server is either:
  – Leader: handles all client interactions, log replication
  – Follower: completely passive
  – Candidate: used to elect a new leader
• Normal operation: 1 leader, N-1 followers
[Figure: the three server states: Follower, Candidate, Leader]

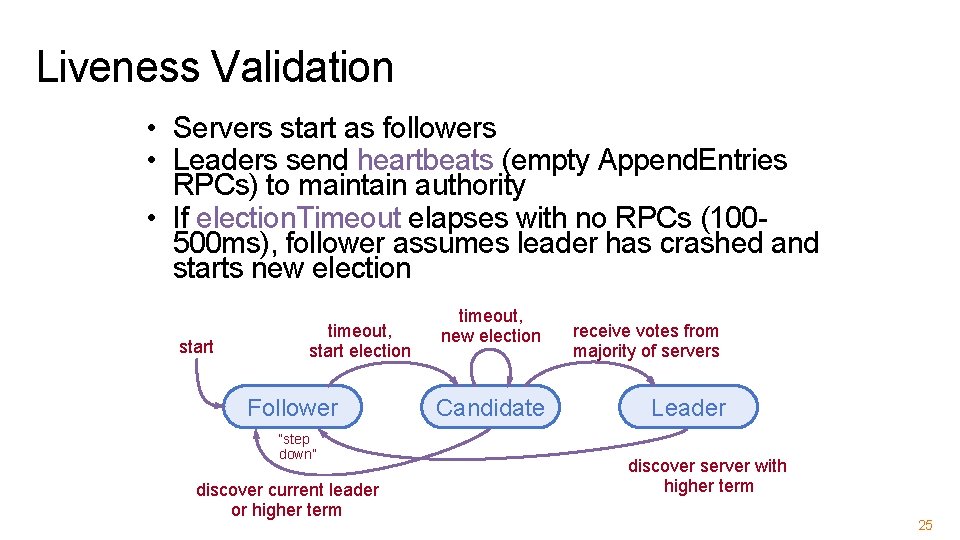

Liveness Validation
• Servers start as followers
• Leaders send heartbeats (empty AppendEntries RPCs) to maintain authority
• If electionTimeout elapses with no RPCs (100-500 ms), follower assumes leader has crashed and starts new election
[Figure: state transitions. Servers start as Follower. Follower → Candidate: timeout, start election. Candidate → Candidate: timeout, new election. Candidate → Leader: receive votes from majority of servers. Candidate → Follower: discover current leader or higher term. Leader → Follower: discover server with higher term. Returning to Follower is labeled “step down”]

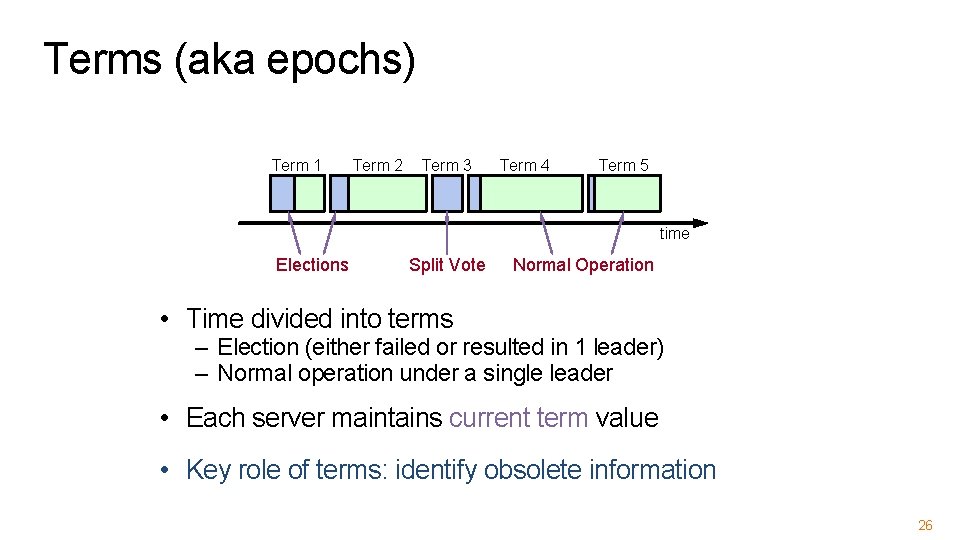

Terms (aka epochs)
[Figure: timeline divided into Term 1 through Term 5; each term starts with an election followed by normal operation, except one term that ends in a split vote]
• Time divided into terms
  – Election (either failed or resulted in 1 leader)
  – Normal operation under a single leader
• Each server maintains current term value
• Key role of terms: identify obsolete information

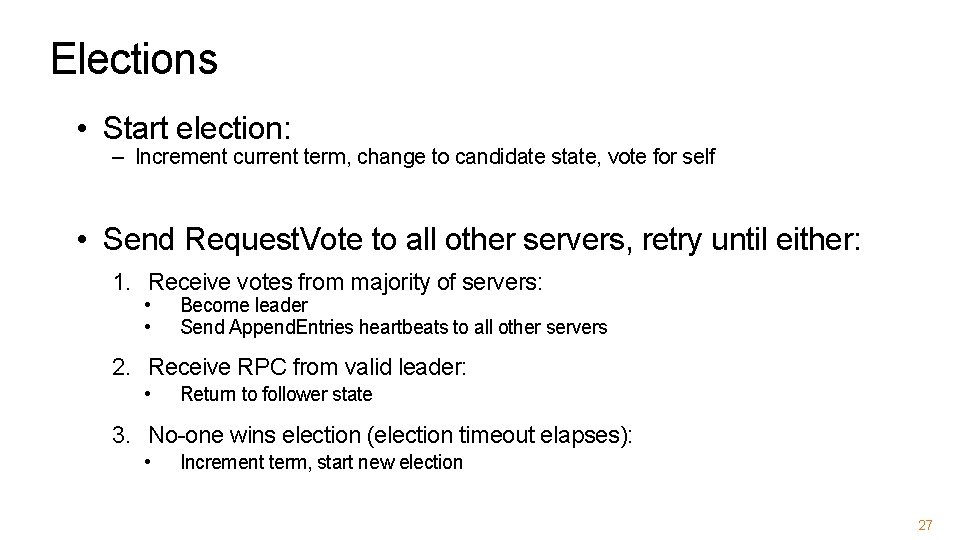

Elections
• Start election:
  – Increment current term, change to candidate state, vote for self
• Send RequestVote to all other servers, retry until either:
  1. Receive votes from majority of servers:
     • Become leader
     • Send AppendEntries heartbeats to all other servers
  2. Receive RPC from valid leader:
     • Return to follower state
  3. No-one wins election (election timeout elapses):
     • Increment term, start new election

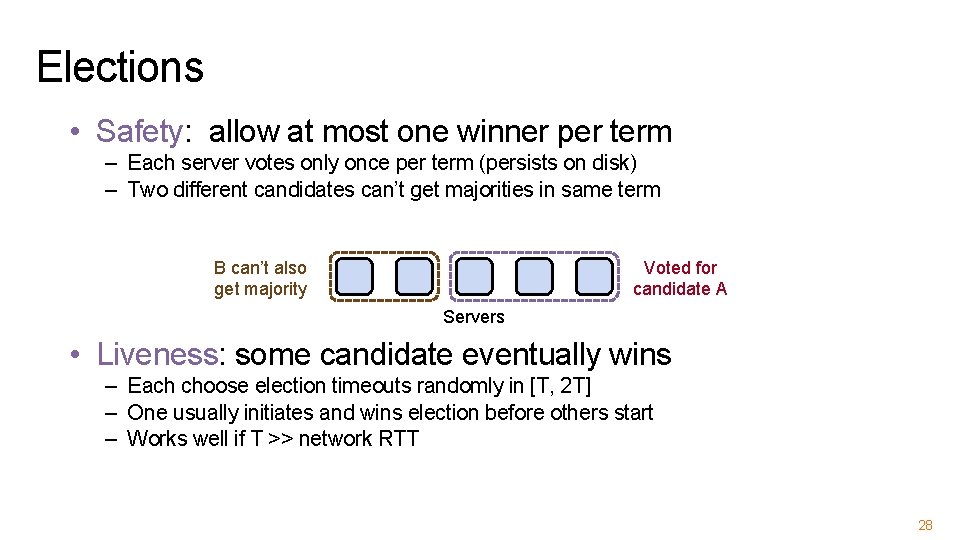

Elections
• Safety: allow at most one winner per term
  – Each server votes only once per term (persists on disk)
  – Two different candidates can’t get majorities in same term
  [Figure: a majority of servers voted for candidate A, so B can’t also get a majority]
• Liveness: some candidate eventually wins
  – Each server chooses its election timeout randomly in [T, 2T]
  – One usually initiates and wins election before others start
  – Works well if T >> network RTT
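
The randomized timeout can be illustrated with a short sketch. The function names and the optional `rng` parameter are assumptions for the example; a real server would also reset its timer on every valid RPC.

```python
import random


def election_timeout(base_ms, rng=None):
    """Draw a timeout uniformly from [base_ms, 2 * base_ms]."""
    rng = rng or random
    return rng.uniform(base_ms, 2 * base_ms)


def first_to_time_out(server_ids, base_ms, rng=None):
    """Return (server_id, timeout) for the server whose timer fires first."""
    timeouts = {s: election_timeout(base_ms, rng) for s in server_ids}
    winner = min(timeouts, key=timeouts.get)
    return winner, timeouts[winner]
```

When T is much larger than the network round-trip time, the server returned by `first_to_time_out` usually collects a majority of votes before any other timer fires, which is why split votes are rare.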

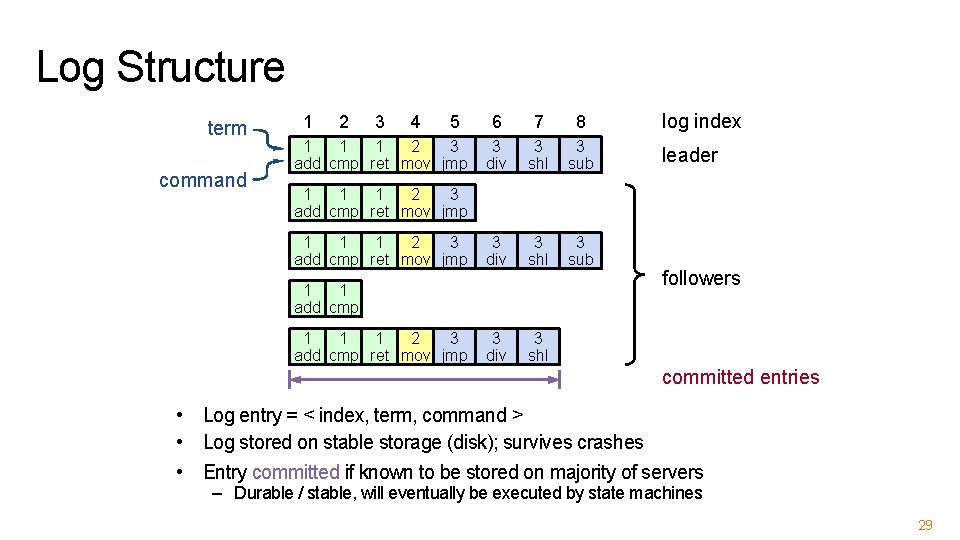

Log Structure
[Figure: each log entry shows its term above its command. The leader’s log holds indices 1–8: 1 add, 1 cmp, 1 ret, 2 mov, 3 jmp, 3 div, 3 shl, 3 sub. Follower logs have varying lengths. A bracket marks the committed entries]
• Log entry = < index, term, command >
• Log stored on stable storage (disk); survives crashes
• Entry committed if known to be stored on majority of servers
  – Durable / stable, will eventually be executed by state machines

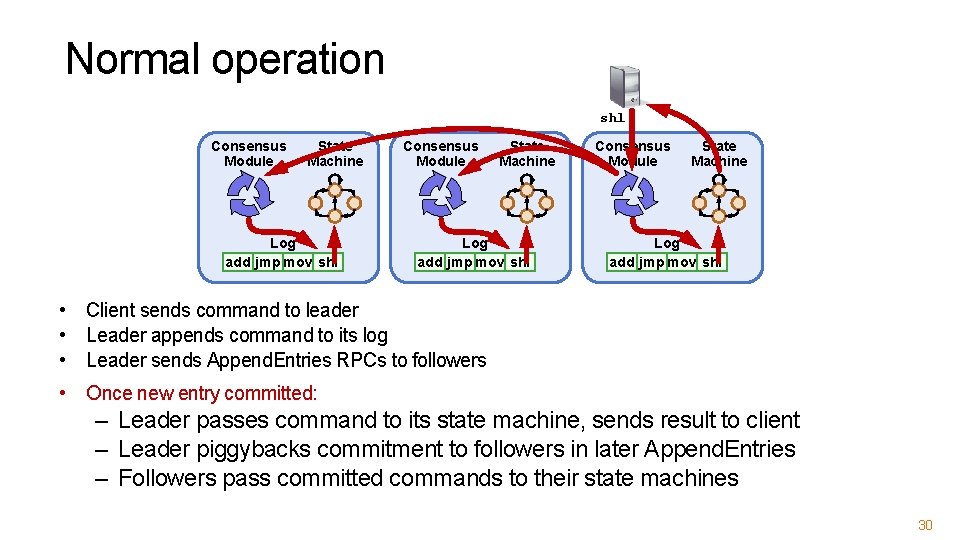

Normal operation
• Client sends command to leader
• Leader appends command to its log
• Leader sends AppendEntries RPCs to followers
• Once new entry committed:
  – Leader passes command to its state machine, sends result to client
  – Leader piggybacks commitment to followers in later AppendEntries
  – Followers pass committed commands to their state machines

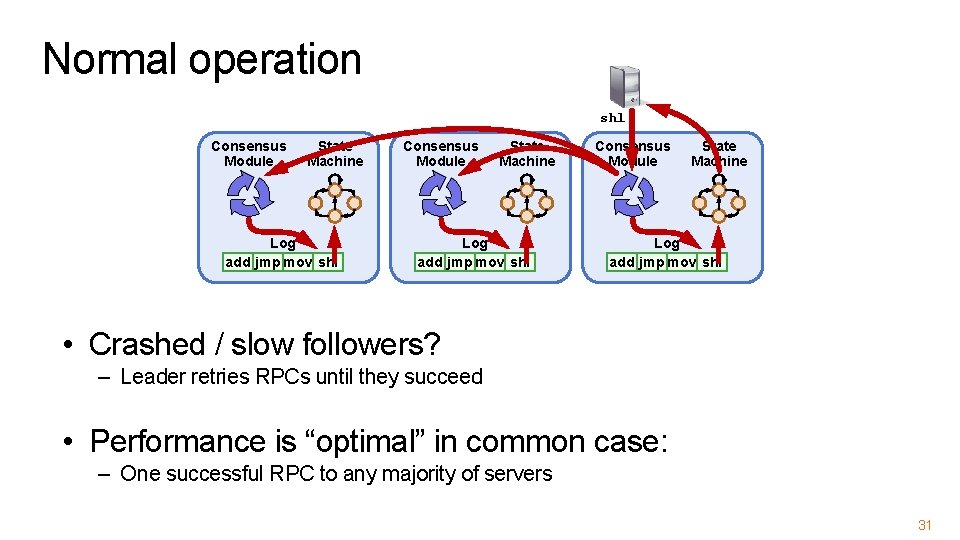

Normal operation
• Crashed / slow followers?
  – Leader retries RPCs until they succeed
• Performance is “optimal” in common case:
  – One successful RPC to any majority of servers

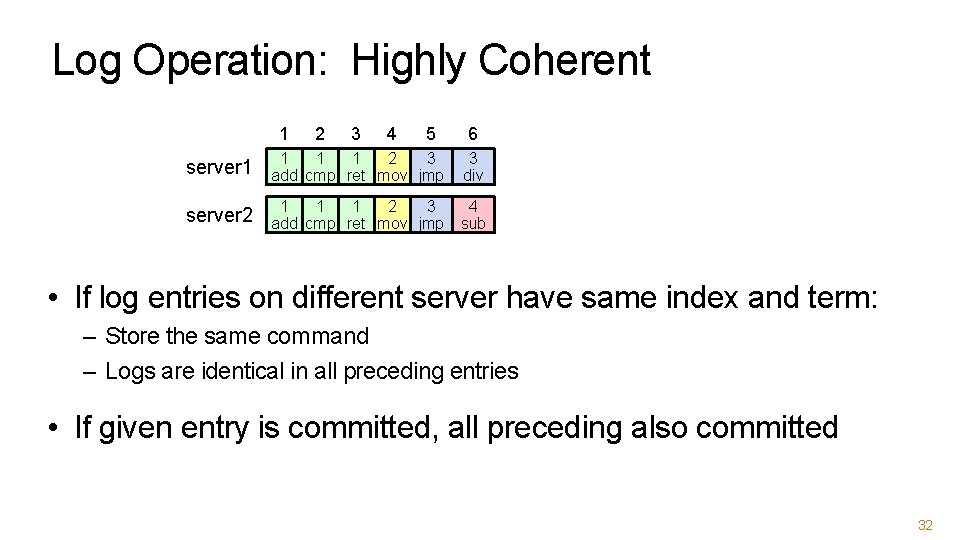

Log Operation: Highly Coherent
[Figure: the logs of server 1 and server 2 agree on indices 1–5 (1 add, 1 cmp, 1 ret, 2 mov, 3 jmp) and differ at index 6 (3 div vs. 4 sub)]
• If log entries on different servers have same index and term:
  – They store the same command
  – Logs are identical in all preceding entries
• If given entry is committed, all preceding also committed

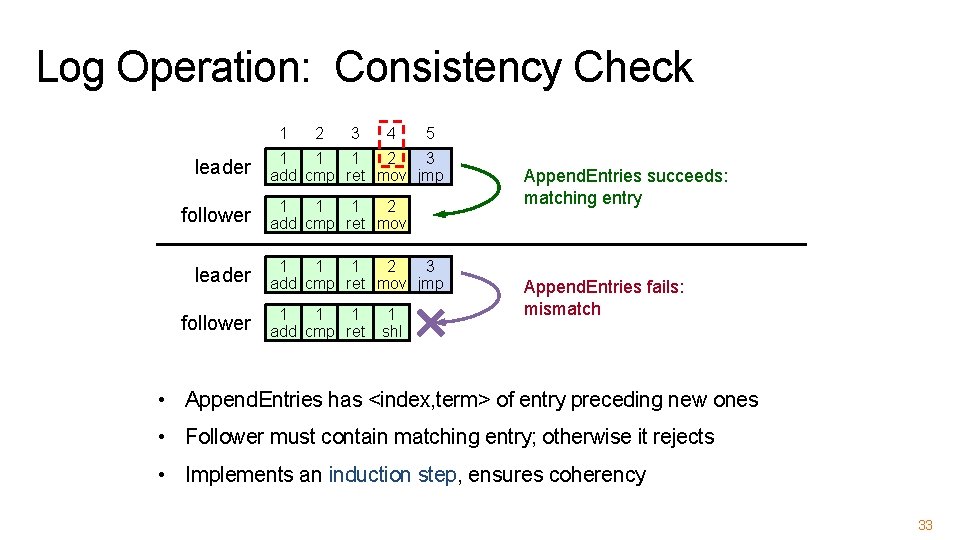

Log Operation: Consistency Check
• AppendEntries has <index, term> of entry preceding new ones
• Follower must contain matching entry; otherwise it rejects
• Implements an induction step, ensures coherency
[Figure: two leader/follower pairs; in both, the leader’s log is 1 add, 1 cmp, 1 ret, 2 mov, 3 jmp. AppendEntries succeeds when the follower holds 1 add, 1 cmp, 1 ret, 2 mov (matching entry at index 4). AppendEntries fails when the follower holds 1 add, 1 cmp, 1 ret, 1 shl (mismatch at index 4)]
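
The follower side of the consistency check might look like the sketch below. It models a log as a Python list of `(term, command)` pairs with 1-based indices, which is an assumption of the example. It also truncates a conflicting suffix before appending, which goes beyond what this slide states.

```python
def append_entries(log, prev_index, prev_term, entries):
    """Follower handler: return True if the entries were appended, False if rejected.

    log and entries are lists of (term, command); prev_index is 1-based and
    prev_index == 0 means the new entries start at the beginning of the log.
    """
    if prev_index > len(log):
        return False  # follower is missing the preceding entry
    if prev_index > 0 and log[prev_index - 1][0] != prev_term:
        return False  # preceding entry has a different term: mismatch
    for i, entry in enumerate(entries):
        pos = prev_index + i  # 0-based position of this entry
        if pos < len(log) and log[pos][0] != entry[0]:
            del log[pos:]     # conflicting suffix is discarded
        if pos >= len(log):
            log.append(entry)
    return True
```

For the two followers in the figure, the first call succeeds at index 4, while the second is rejected and succeeds only once the leader retries from index 3.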

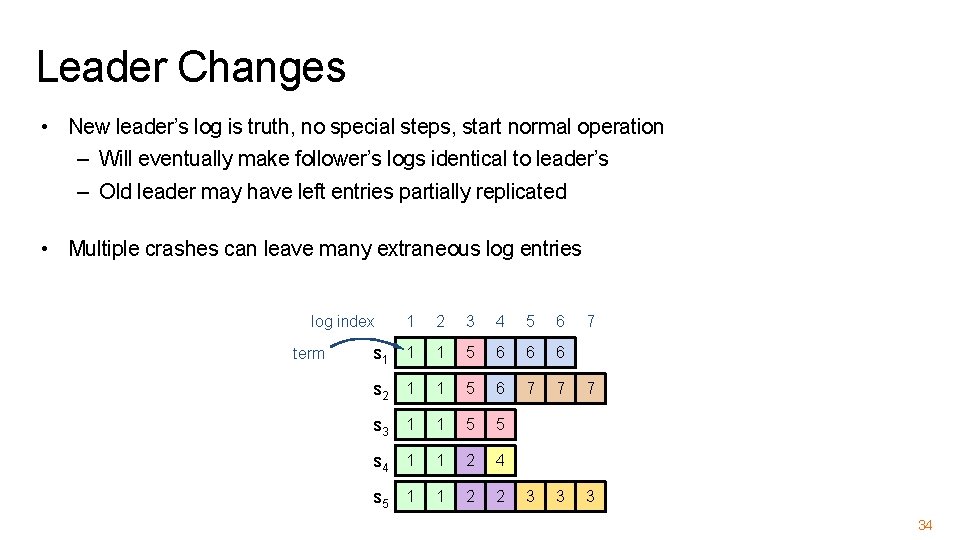

Leader Changes
• New leader’s log is truth, no special steps, start normal operation
  – Will eventually make follower’s logs identical to leader’s
  – Old leader may have left entries partially replicated
• Multiple crashes can leave many extraneous log entries
[Figure: term of each log entry, listed by log index]
s1: 1 1 5 6 6 6
s2: 1 1 5 6 7 7 7
s3: 1 1 5 5
s4: 1 1 2 4
s5: 1 1 2 2 3 3 3

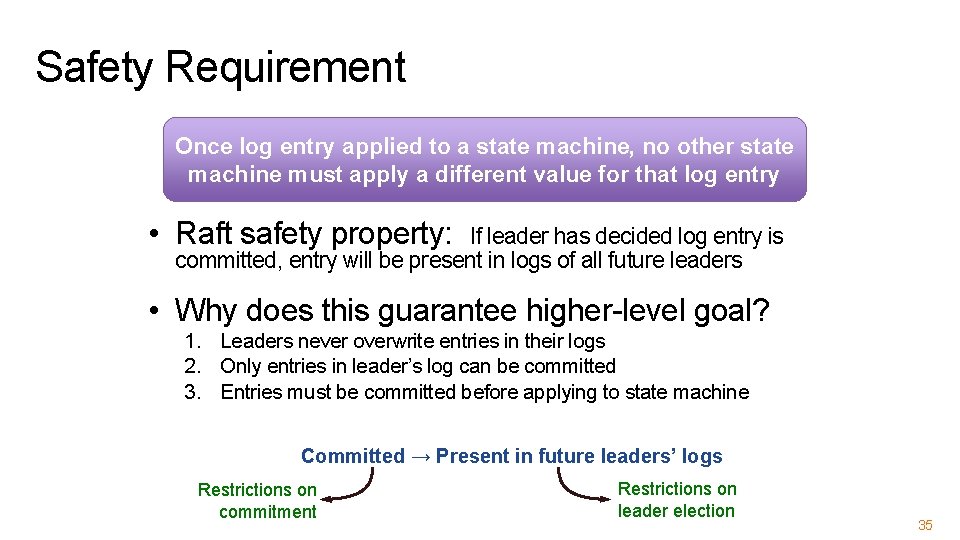

Safety Requirement
Once log entry applied to a state machine, no other state machine may apply a different value for that log entry
• Raft safety property: If leader has decided log entry is committed, entry will be present in logs of all future leaders
• Why does this guarantee higher-level goal?
  1. Leaders never overwrite entries in their logs
  2. Only entries in leader’s log can be committed
  3. Entries must be committed before applying to state machine
[Figure: “Committed → Present in future leaders’ logs” is enforced through restrictions on commitment and restrictions on leader election]

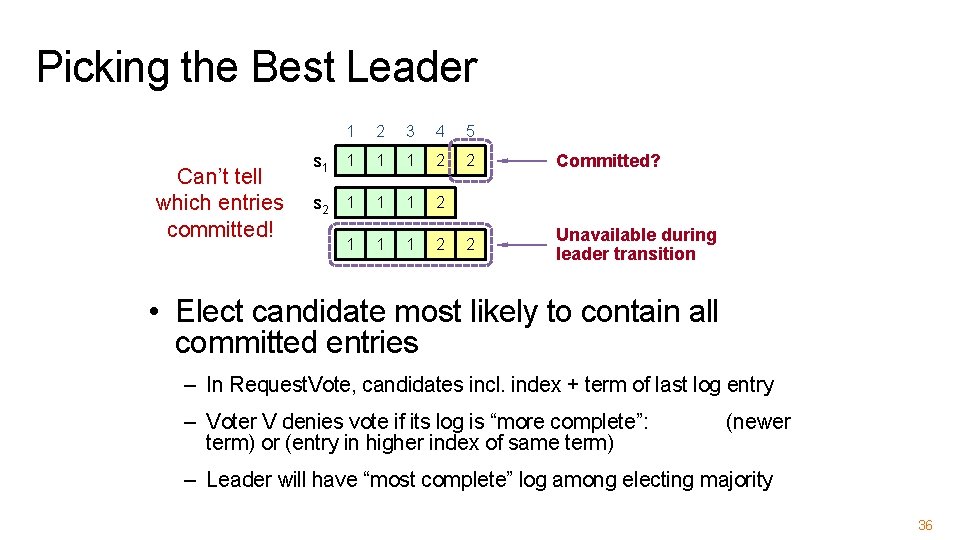

Picking the Best Leader
[Figure: logs of s1 and s2, with a server unavailable during the leader transition. Can’t tell which entries are committed!]
• Elect candidate most likely to contain all committed entries
  – In RequestVote, candidates include index + term of last log entry
  – Voter V denies vote if its log is “more complete”: (newer term) or (entry in higher index of same term)
  – Leader will have “most complete” log among electing majority
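
The “more complete” comparison, combined with the one-vote-per-term rule from the Elections slide, can be sketched as below. The `Voter` class, its field names, and the use of term 0 for an empty log are assumptions of this example; persistence to disk is omitted.

```python
def voter_log_more_complete(v_last_term, v_last_index, c_last_term, c_last_index):
    """True if the voter's log beats the candidate's: newer last term,
    or same last term with a higher last index."""
    return v_last_term > c_last_term or (
        v_last_term == c_last_term and v_last_index > c_last_index
    )


class Voter:
    def __init__(self, log_terms):
        self.log_terms = list(log_terms)  # term of each entry, in index order
        self.current_term = 0
        self.voted_for = None

    def on_request_vote(self, term, candidate_id, c_last_index, c_last_term):
        if term < self.current_term:
            return False  # stale candidate
        if term > self.current_term:
            self.current_term, self.voted_for = term, None
        if self.voted_for not in (None, candidate_id):
            return False  # already voted for someone else this term
        my_index = len(self.log_terms)
        my_term = self.log_terms[-1] if self.log_terms else 0
        if voter_log_more_complete(my_term, my_index, c_last_term, c_last_index):
            return False
        self.voted_for = candidate_id
        return True
```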

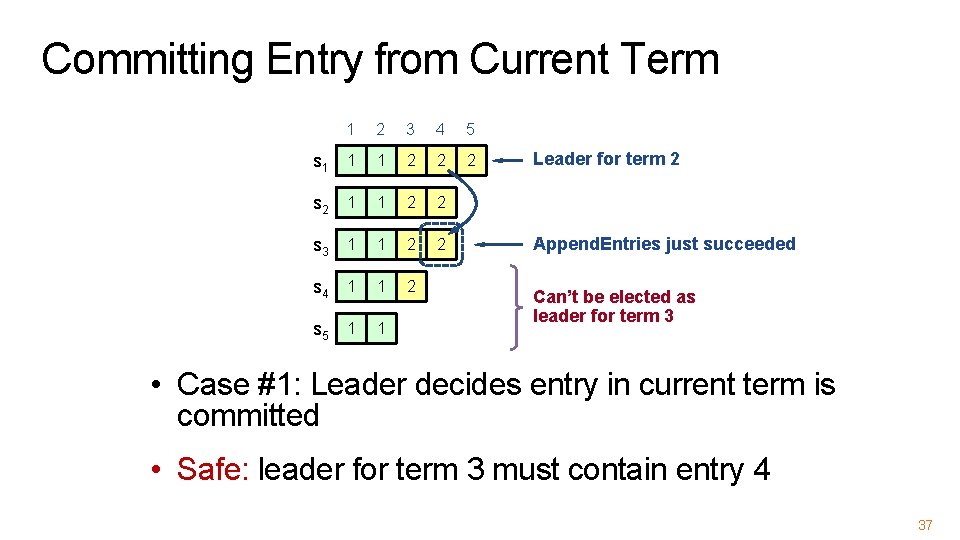

Committing Entry from Current Term
[Figure: term of each log entry, log indices 1–5. s1 is leader for term 2 and AppendEntries just succeeded for entry 4; s4 and s5 can’t be elected as leader for term 3]
s1: 1 1 2 2 2
s2: 1 1 2 2
s3: 1 1 2 2
s4: 1 1 2
s5: 1 1
• Case #1: Leader decides entry in current term is committed
• Safe: leader for term 3 must contain entry 4

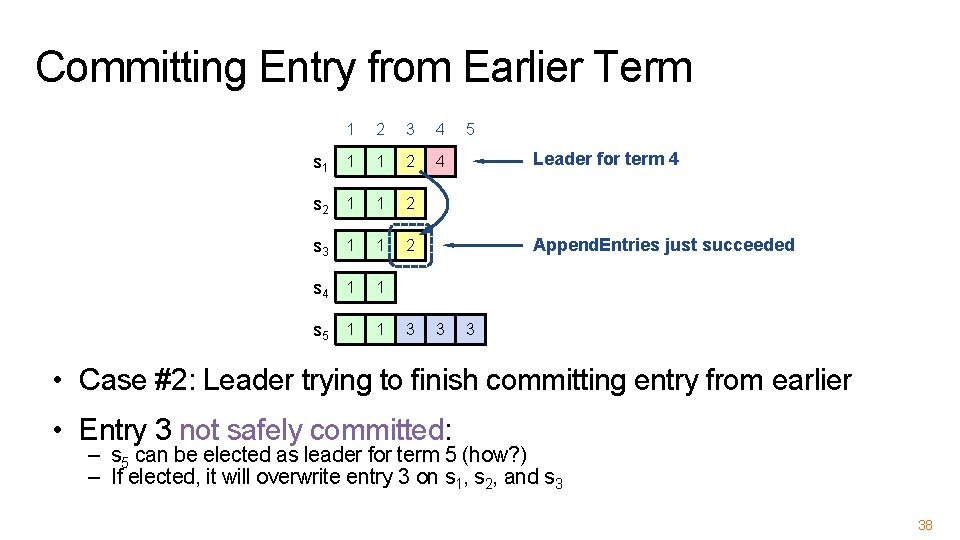

Committing Entry from Earlier Term
[Figure: term of each log entry, log indices 1–5. s1 is leader for term 4 and AppendEntries just succeeded for entry 3]
s1: 1 1 2 4
s2: 1 1 2
s3: 1 1 2
s4: 1 1
s5: 1 1 3 3 3
• Case #2: Leader trying to finish committing entry from earlier term
• Entry 3 not safely committed:
  – s5 can be elected as leader for term 5 (how?)
  – If elected, it will overwrite entry 3 on s1, s2, and s3

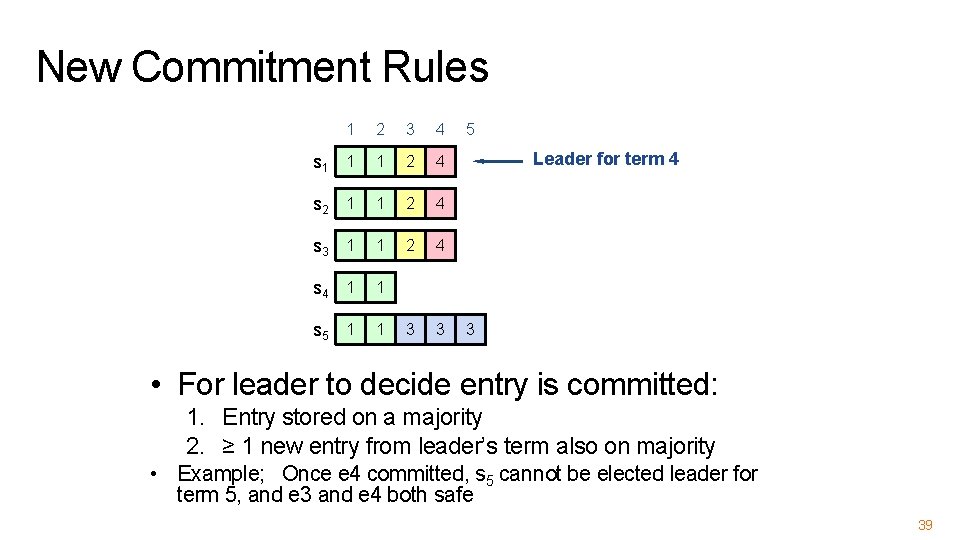

New Commitment Rules
[Figure: term of each log entry, log indices 1–5. s1 is leader for term 4]
s1: 1 1 2 4
s2: 1 1 2 4
s3: 1 1 2 4
s4: 1 1
s5: 1 1 3 3 3
• For leader to decide entry is committed:
  1. Entry stored on a majority
  2. ≥ 1 new entry from leader’s term also on majority
• Example: Once e4 committed, s5 cannot be elected leader for term 5, and e3 and e4 both safe
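
The two commitment conditions can be checked literally, as in this sketch. The function name and the `match_index` bookkeeping (for each follower, the highest index up to which its log is known to match the leader’s) are assumptions made for the example.

```python
def is_committed(index, leader_terms, match_index, current_term):
    """Apply both commitment rules from the leader's point of view.

    leader_terms: term of each entry in the leader's log (index 1 is first).
    match_index:  one value per follower; the leader itself is counted separately.
    """
    cluster_size = len(match_index) + 1
    majority = cluster_size // 2 + 1

    def on_majority(i):
        return 1 + sum(1 for m in match_index if m >= i) >= majority

    if not on_majority(index):
        return False  # rule 1 fails
    # Rule 2: some entry from the leader's own term is also on a majority.
    return any(
        leader_terms[i - 1] == current_term and on_majority(i)
        for i in range(1, len(leader_terms) + 1)
    )
```

With the leader log 1 1 2 4 in term 4, entry 3 is not committed while only entry 3 has reached s2 and s3 (`match_index = [3, 3, 2, 2]`), and becomes committed once entry 4 reaches them (`match_index = [4, 4, 2, 2]`).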

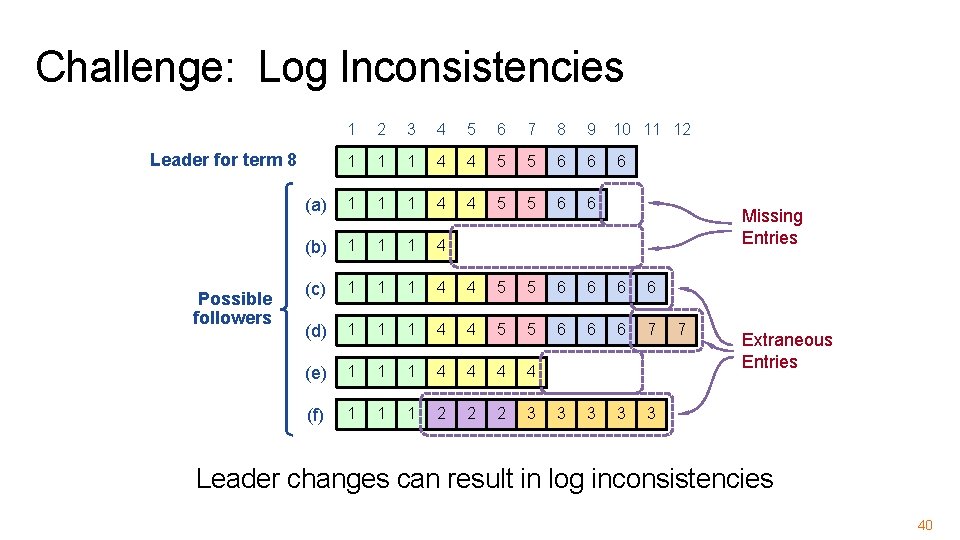

Challenge: Log Inconsistencies
[Figure: log of the leader for term 8 (entry terms 1 1 1 4 4 5 5 6 6 6) above six possible follower logs (a)–(f), over log indices 1–12; some followers have missing entries and some have extraneous entries]
Leader changes can result in log inconsistencies

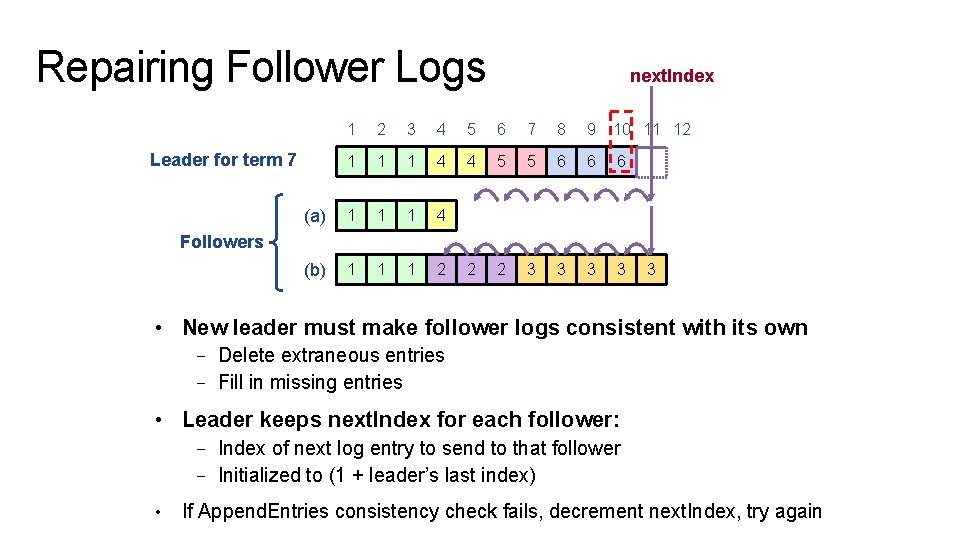

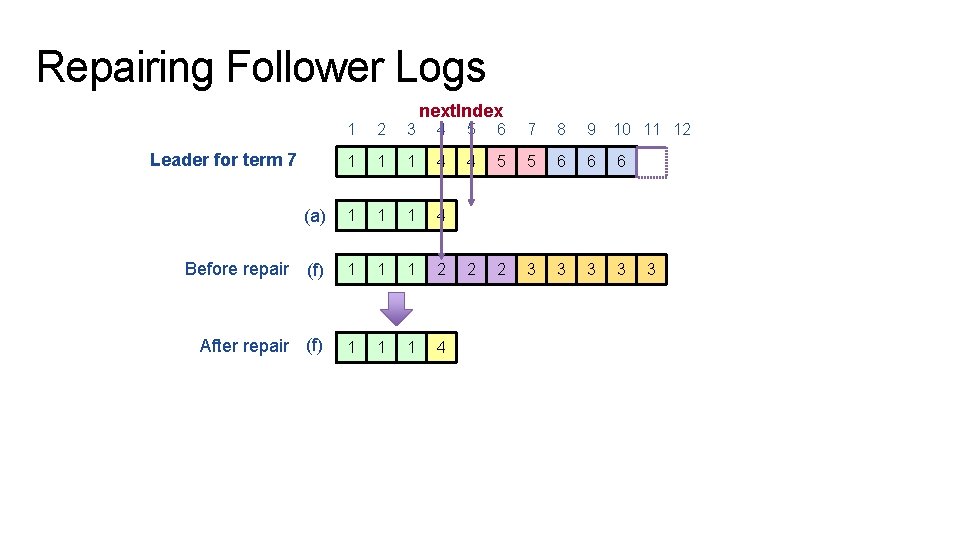

Repairing Follower Logs
• New leader must make follower logs consistent with its own
  – Delete extraneous entries
  – Fill in missing entries
• Leader keeps nextIndex for each follower:
  – Index of next log entry to send to that follower
  – Initialized to (1 + leader’s last index)
• If AppendEntries consistency check fails, decrement nextIndex, try again
[Figure: term of each log entry, log indices 1–12, with nextIndex marked for each follower]
Leader for term 7: 1 1 1 4 4 5 5 6 6 6
Follower (a): 1 1 1 4
Follower (b): 1 1 1 2 2 2 3 3 3

Repairing Follower Logs
[Figure: leader for term 7 (1 1 1 4 4 5 5 6 6 6) and follower (a) (1 1 1 4), with follower (f) shown before repair (1 1 1 2 2 2 3 3 3) and after repair (1 1 1 4); nextIndex is marked]
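
The nextIndex back-off loop can be simulated on lists of entry terms. This is a simplified sketch: `repair_follower` is a hypothetical name, logs hold terms only, and a successful AppendEntries is modeled as replacing the follower’s whole suffix with the leader’s.

```python
def repair_follower(leader_terms, follower_terms):
    """Return (repaired_follower_log, number_of_AppendEntries_attempts)."""
    follower = list(follower_terms)
    next_index = len(leader_terms) + 1  # initialized to 1 + leader's last index
    attempts = 0
    while True:
        attempts += 1
        prev = next_index - 1  # index of the entry preceding the new ones
        matches = prev == 0 or (
            prev <= len(follower) and follower[prev - 1] == leader_terms[prev - 1]
        )
        if matches:
            # Delete extraneous entries and fill in missing ones in one step.
            follower[prev:] = leader_terms[prev:]
            return follower, attempts
        next_index -= 1  # consistency check failed: back up and retry
```

Both follower logs from the slides end up identical to the leader’s; the follower with extraneous entries needs more attempts because the loop has to back up to index 3 before the terms match.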

Neutralizing Old Leaders
Leader temporarily disconnected → other servers elect new leader → old leader reconnected → old leader attempts to commit log entries
• Terms used to detect stale leaders (and candidates)
  – Every RPC contains term of sender
  – Sender’s term < receiver’s term:
    • Receiver rejects RPC (via ACK which sender processes…)
  – Receiver’s term < sender’s term:
    • Receiver reverts to follower, updates term, processes RPC
• Election updates terms of majority of servers
  – Deposed server cannot commit new log entries
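
The term comparison applied to every RPC can be sketched as follows. The `Server` class and its method names are illustrative assumptions; the RPC payload is reduced to the sender’s term.

```python
class Server:
    def __init__(self, current_term=0, role="follower"):
        self.current_term = current_term
        self.role = role

    def on_rpc(self, sender_term):
        """Receiver side: return (accepted, receiver_term) for the reply."""
        if sender_term < self.current_term:
            return False, self.current_term  # stale sender: reject
        if sender_term > self.current_term:
            self.current_term = sender_term  # receiver was behind
            self.role = "follower"
        return True, self.current_term

    def on_reply(self, receiver_term):
        """Sender side: step down if the reply reveals a newer term."""
        if receiver_term > self.current_term:
            self.current_term = receiver_term
            self.role = "follower"
```

An old leader that reconnects with term 2 has its RPC rejected by any server already in term 3, and it steps down when it processes the reply.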

Client Protocol
• Send commands to leader
  – If leader unknown, contact any server, which redirects client to leader
• Leader only responds after command logged, committed, and executed by leader
• If request times out (e.g., leader crashes):
  – Client reissues command to new leader (after possible redirect)
• Ensure exactly-once semantics even with leader failures
  – E.g., Leader can execute command then crash before responding
  – Client should embed unique request ID in each command
  – This unique request ID included in log entry
  – Before accepting request, leader checks log for entry with same id
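
Duplicate detection with request IDs can be sketched with a toy state machine. Everything here is an assumption for illustration: the `Leader` class, the running-sum state machine, and the `results` dictionary used as an index over the log instead of scanning the log itself. Replication and commitment are omitted.

```python
class Leader:
    def __init__(self):
        self.log = []      # entries: (request_id, amount)
        self.results = {}  # request_id -> result already returned for it
        self.total = 0     # toy state machine: a running sum

    def handle(self, request_id, amount):
        """Execute an 'add amount' command at most once per request_id."""
        if request_id in self.results:
            return self.results[request_id]  # retry: answer without re-executing
        self.log.append((request_id, amount))
        self.total += amount
        self.results[request_id] = self.total
        return self.total
```

A client that times out and resends the same request ID gets the original result back, and the log records the command only once.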

RECONFIGURATION

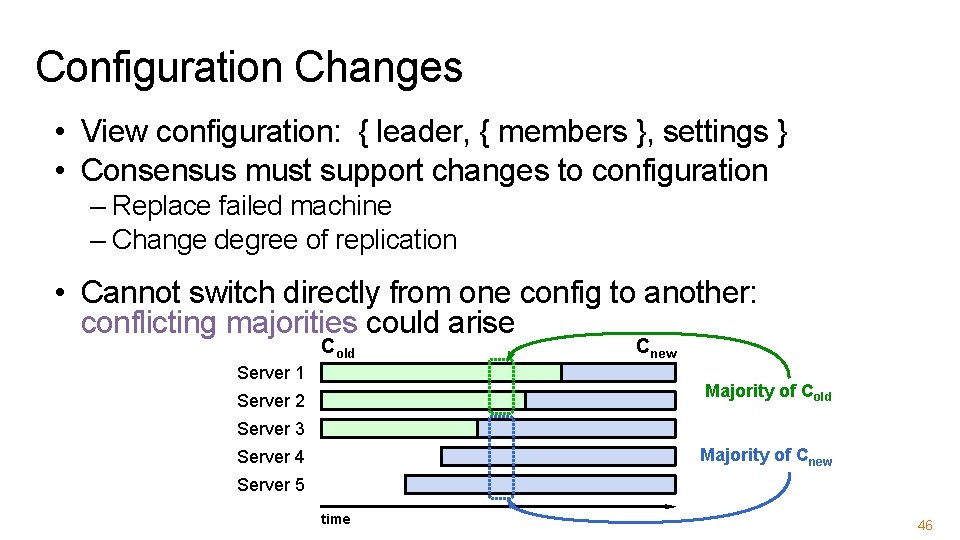

Configuration Changes
• View configuration: { leader, { members }, settings }
• Consensus must support changes to configuration
  – Replace failed machine
  – Change degree of replication
• Cannot switch directly from one config to another: conflicting majorities could arise
[Figure: timeline for Servers 1–5 switching from C_old to C_new at different times; at one moment a majority of C_old and a majority of C_new exist side by side]

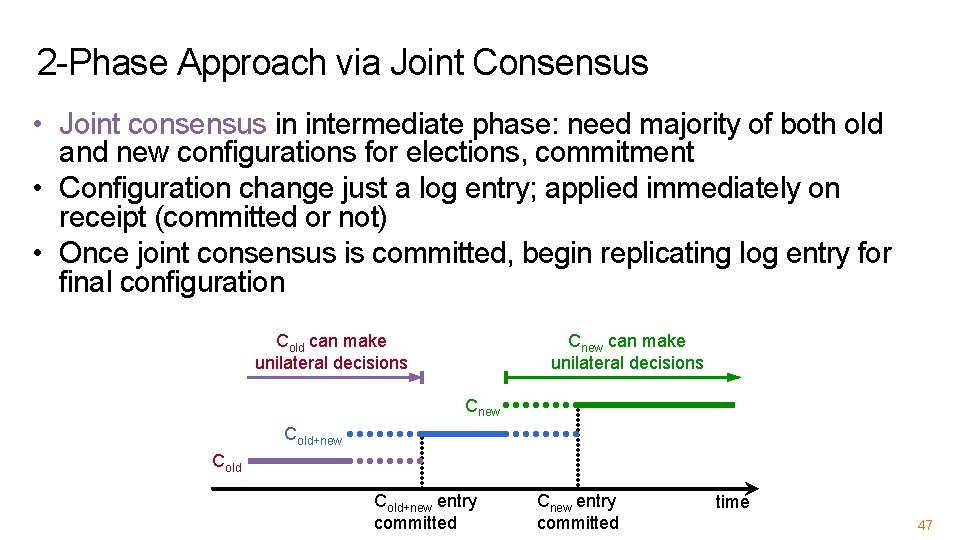

2-Phase Approach via Joint Consensus
• Joint consensus in intermediate phase: need majority of both old and new configurations for elections, commitment
• Configuration change just a log entry; applied immediately on receipt (committed or not)
• Once joint consensus is committed, begin replicating log entry for final configuration
[Figure: timeline marking when C_old can make unilateral decisions, when C_new can make unilateral decisions, and the points at which the C_old+new entry and the C_new entry are committed]
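
The quorum rule during the joint phase can be written down directly. The function names are assumptions of this sketch, and configurations are modeled as Python sets of server IDs.

```python
def has_majority(acks, members):
    """True if strictly more than half of members are in acks."""
    return 2 * len(acks & members) > len(members)


def joint_quorum(acks, c_old, c_new):
    """During joint consensus, agreement needs a majority of BOTH configurations."""
    return has_majority(acks, c_old) and has_majority(acks, c_new)
```

For example, with C_old = {1, 2, 3} and C_new = {3, 4, 5}, acknowledgements from {1, 2} or from {3, 4, 5} alone are not enough, while {1, 2, 4, 5} is.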

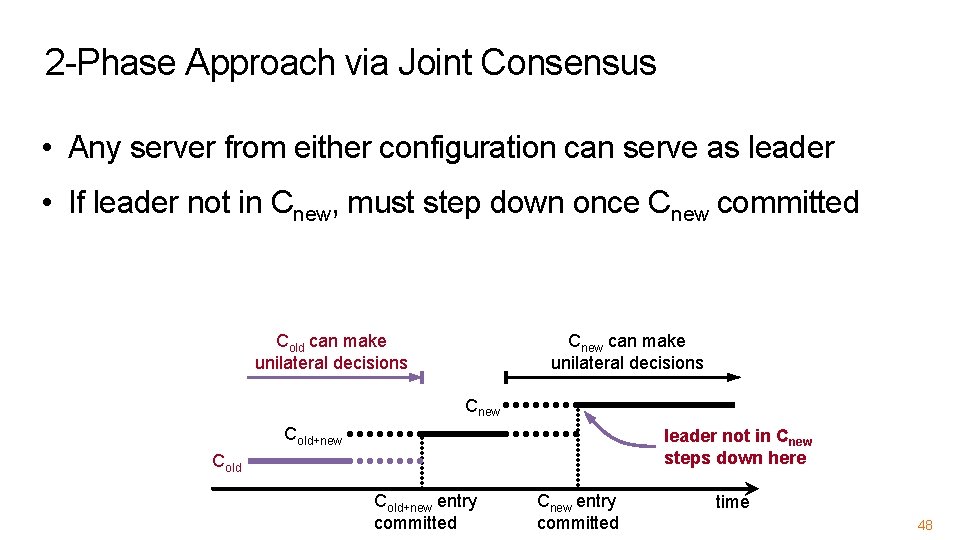

2-Phase Approach via Joint Consensus
• Any server from either configuration can serve as leader
• If leader not in C_new, must step down once C_new committed
[Figure: the same timeline as on the previous slide, with the point marked where a leader not in C_new steps down]
