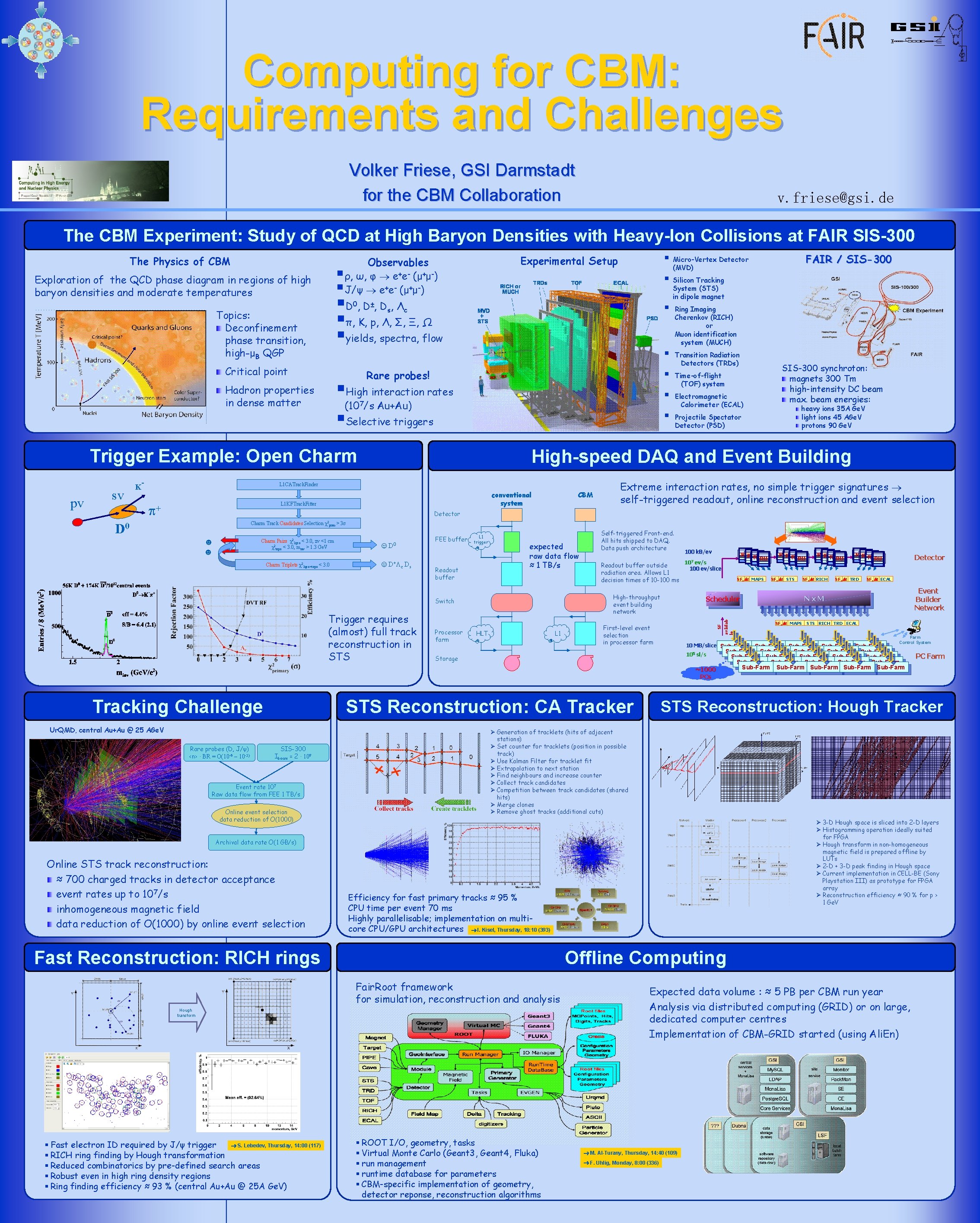

Computing for CBM: Requirements and Challenges

Volker Friese, GSI Darmstadt, for the CBM Collaboration (v.friese@gsi.de)

The CBM Experiment: Study of QCD at High Baryon Densities with Heavy-Ion Collisions at FAIR SIS-300

The Physics of CBM
- Exploration of the QCD phase diagram in regions of high baryon densities and moderate temperatures
- Topics: deconfinement phase transition, high-μB QGP, critical point, hadron properties in dense matter
- Observables: π, K, p, Λ, Σ, Ξ, Ω (yields, spectra, flow); ρ, ω, φ → e⁺e⁻ (μ⁺μ⁻); J/ψ → e⁺e⁻ (μ⁺μ⁻); open charm D⁰, D±, Ds, Λc
- Rare probes: for D mesons and J/ψ, ⟨n⟩·BR = O(10⁻⁶ – 10⁻⁵)
- Consequences: high interaction rates (10⁷/s Au+Au) and selective triggers

SIS-300 synchrotron
- Magnets: 300 Tm
- High-intensity DC beam
- Maximum beam energies: heavy ions 35 AGeV, light ions 45 AGeV, protons 90 GeV

Experimental setup
- Micro-Vertex Detector (MVD)
- Silicon Tracking System (STS) in a dipole magnet
- Ring Imaging Cherenkov detector (RICH) or muon identification system (MUCH)
- Transition Radiation Detectors (TRDs)
- Time-of-flight (TOF) system
- Electromagnetic Calorimeter (ECAL)
- Projectile Spectator Detector (PSD)

The computing challenge: CBM combines extreme interaction rates with the absence of simple trigger signatures. This requires self-triggered readout, online reconstruction and online event selection.
- Beam intensity I_beam = 2·10⁹, event rate 10⁷/s
- Raw data flow from the front-end electronics (FEE): ≈ 1 TB/s
- Online event selection: data reduction of O(1000)
- Archival data rate: O(1 GB/s)

High-speed DAQ and event building
- Self-triggered front end: all hits are shipped to the DAQ (data-push architecture)
- Data flow from the FEE buffers to readout buffers located outside the radiation area; this buffering allows L1 decision times of 10–100 ms
- A high-throughput N×M event-building network (switch) connects the readout units (RU) to the processor farm
- Events (100 kB each, 10⁷ ev/s) are grouped into slices of 100 events (10 MB per slice); a scheduler distributes 10⁵ slices/s to the sub-farms of a PC farm of ~1000 PCs, supervised by a farm control system
- First-level (L1) event selection runs in the processor farm, followed by the HLT and storage
- The trigger requires (almost) full track reconstruction in the STS

Trigger example: open charm
The L1 chain runs the L1 CATrackFinder and the L1 KFTrackFitter on the STS data, then selects charm candidates in stages:
- Track candidates: χ²_prim > 3
- Charm pairs (D⁰): χ²_geo < 3.0, z_v < 1 cm, χ²_topo < 3.0, m_inv > 1.3 GeV
- Charm triplets (D±, Ds, Λc): χ²_geo+topo < 3.0

Tracking challenge (online STS track reconstruction)
- ≈ 700 charged tracks in the detector acceptance (UrQMD, central Au+Au at 25 AGeV)
- Event rates up to 10⁷/s
- Inhomogeneous magnetic field
- Data reduction of O(1000) by online event selection

STS reconstruction: CA tracker
- Generation of tracklets (hits on adjacent stations)
- Set a counter for each tracklet (its position in a possible track)
- Fit the tracklets with a Kalman filter
- Extrapolate to the next station
- Find neighbours and increase the counter
- Collect track candidates
- Competition between track candidates (shared hits)
- Merge clones
- Remove ghost tracks (additional cuts)
- Efficiency for fast primary tracks ≈ 95%; CPU time per event 70 ms
- Highly parallelisable; implementation on multicore CPU/GPU architectures (I. Kisel, Thursday, 18:10 (393))

STS reconstruction: Hough tracker
- The 3-D Hough space is sliced into 2-D layers
- The histogramming operation is ideally suited for FPGAs
- The Hough transform in the non-homogeneous magnetic field is prepared offline as look-up tables (LUTs)
- 2-D and 3-D peak finding in Hough space
- Current implementation on the Cell Broadband Engine (Sony PlayStation 3) as a prototype for an FPGA array
- Reconstruction efficiency ≈ 90% for p > 1 GeV

Fast reconstruction: RICH rings
- Fast electron identification is required by the J/ψ trigger
- RICH ring finding by Hough transformation
- Reduced combinatorics through pre-defined search areas
- Robust even in regions of high ring density
- Ring-finding efficiency ≈ 93% (central Au+Au at 25 AGeV)
- S. Lebedev, Thursday, 14:00 (117)

Offline computing
- FairRoot framework for simulation, reconstruction and analysis: ROOT I/O, geometry, tasks; Virtual Monte Carlo (Geant3, Geant4, Fluka); run management; runtime database for parameters
- CBM-specific implementation of geometry, detector response and reconstruction algorithms
- Expected data volume: ≈ 5 PB per CBM run year
- Analysis via distributed computing (GRID) or at large, dedicated computer centres
- Implementation of the CBM-GRID has started (using AliEn)
- M. Al-Turany, Thursday, 14:40 (109); F. Uhlig, Monday, 8:00 (336)
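The DAQ numbers quoted on the poster (10⁷ events/s, 100 kB/event, 100 events/slice, 10 MB/slice, 10⁵ slices/s, O(1000) reduction, O(1 GB/s) archival) hang together, and a few lines of arithmetic make the chain explicit. This is a minimal back-of-envelope sketch using only those nominal values; the function name `data_flow` is illustrative, and every result is an order-of-magnitude estimate rather than a design figure.

```python
# Back-of-envelope check of the CBM online data flow.
# Inputs are the poster's nominal (order-of-magnitude) values.

EVENT_RATE = 1e7          # Au+Au interactions per second
EVENT_SIZE = 100e3        # bytes per event (100 kB)
EVENTS_PER_SLICE = 100    # events grouped into one slice for a sub-farm
REDUCTION = 1000          # O(1000) data reduction by online event selection


def data_flow(event_rate=EVENT_RATE, event_size=EVENT_SIZE,
              events_per_slice=EVENTS_PER_SLICE, reduction=REDUCTION):
    """Derive the rates along the DAQ chain from the nominal inputs."""
    raw_rate = event_rate * event_size           # bytes/s leaving the FEE
    slice_rate = event_rate / events_per_slice   # slices/s sent to sub-farms
    slice_size = events_per_slice * event_size   # bytes per slice
    archival_rate = raw_rate / reduction         # bytes/s written to storage
    return {
        "raw_rate_TB_s": raw_rate / 1e12,
        "slice_rate_per_s": slice_rate,
        "slice_size_MB": slice_size / 1e6,
        "archival_rate_GB_s": archival_rate / 1e9,
    }


if __name__ == "__main__":
    for key, value in data_flow().items():
        print(f"{key}: {value:g}")
```

With the default inputs this reproduces 1 TB/s raw, 10⁵ slices/s of 10 MB each, and about 1 GB/s to storage. Changing a single input, for example the event size, shows directly how much headroom the event-building network and the archival link would need.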

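The staged open-charm selection in the trigger example can be sketched as a chain of cuts. The cut values are those quoted on the poster; everything else is an assumption. The `Track` and `PairFit` records and the caller-supplied `fit_pair` / `fit_triplet` functions are hypothetical stand-ins for the KF-based track and vertex fitting that would produce the χ² values in a real L1 chain. This illustrates the selection logic only, not the CBM implementation.

```python
from dataclasses import dataclass
from itertools import combinations

# Cut values quoted in the open-charm trigger example.
CHI2_PRIM_MIN = 3.0       # track: chi2 to the primary vertex must exceed this
CHI2_GEO_MAX = 3.0        # pair: geometrical chi2 of the common vertex
ZV_MAX_CM = 1.0           # pair: z_v cut in cm, as quoted
CHI2_TOPO_MAX = 3.0       # pair: topological chi2 (pointing to primary vertex)
MINV_MIN_GEV = 1.3        # pair: invariant-mass cut in GeV
CHI2_GEO_TOPO_MAX = 3.0   # triplet: combined geometrical + topological chi2


@dataclass
class Track:
    """Hypothetical fitted-track record holding only the fields the cuts use."""
    track_id: int
    chi2_prim: float


@dataclass
class PairFit:
    """Hypothetical result of fitting two tracks to a common vertex."""
    chi2_geo: float
    zv_cm: float
    chi2_topo: float
    minv_gev: float


def select_tracks(tracks):
    """Stage 1: keep tracks that are not compatible with the primary vertex."""
    return [t for t in tracks if t.chi2_prim > CHI2_PRIM_MIN]


def pass_pair_cuts(fit):
    """Stage 2: cuts applied to one two-track vertex fit."""
    return (fit.chi2_geo < CHI2_GEO_MAX
            and fit.zv_cm < ZV_MAX_CM
            and fit.chi2_topo < CHI2_TOPO_MAX
            and fit.minv_gev > MINV_MIN_GEV)


def select_pairs(tracks, fit_pair):
    """Return id pairs of candidate tracks that pass the pair cuts."""
    cands = select_tracks(tracks)
    return [(a.track_id, b.track_id)
            for a, b in combinations(cands, 2)
            if pass_pair_cuts(fit_pair(a, b))]


def select_triplets(tracks, fit_triplet):
    """Return id triplets whose combined chi2 (from fit_triplet) passes the cut."""
    cands = select_tracks(tracks)
    return [tuple(t.track_id for t in trio)
            for trio in combinations(cands, 3)
            if fit_triplet(*trio) < CHI2_GEO_TOPO_MAX]
```

The track-level χ²_prim cut comes first because the pair and triplet loops grow as O(n²) and O(n³) in the number of candidates. Rejecting primary tracks early is what keeps the combinatorics affordable at L1 rates.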
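The RICH ring finder is described only as a Hough transform with pre-defined search areas. As an illustration, not the CBM implementation, the sketch below uses one common variant. Every triplet of hits inside a search area around a seed hit defines a circle, the circle centres are histogrammed, and the most populated bin marks a ring. The function names and the parameters `search_radius`, `bin_size`, `r_min` and `r_max` are hypothetical.

```python
import math
from collections import Counter
from itertools import combinations


def circle_from_3(p1, p2, p3):
    """Circumcircle (cx, cy, r) through three points, or None if collinear."""
    (x1, y1), (x2, y2), (x3, y3) = p1, p2, p3
    d = 2.0 * (x1 * (y2 - y3) + x2 * (y3 - y1) + x3 * (y1 - y2))
    if abs(d) < 1e-12:
        return None
    s1, s2, s3 = x1 * x1 + y1 * y1, x2 * x2 + y2 * y2, x3 * x3 + y3 * y3
    cx = (s1 * (y2 - y3) + s2 * (y3 - y1) + s3 * (y1 - y2)) / d
    cy = (s1 * (x3 - x2) + s2 * (x1 - x3) + s3 * (x2 - x1)) / d
    return cx, cy, math.hypot(x1 - cx, y1 - cy)


def find_ring(hits, seed, search_radius, bin_size, r_min, r_max):
    """Hough-style ring search around one seed hit.

    Only hits inside the search area around `seed` are combined, which keeps
    the number of triplets small. Each accepted triplet votes for the
    histogram bin of its circle centre. Returns (cx, cy, r, votes) for the
    most populated bin (centre of that bin, median radius), or None.
    """
    local = [h for h in hits if math.dist(h, seed) < search_radius]
    votes = Counter()
    radii = {}
    for trio in combinations(local, 3):
        circle = circle_from_3(*trio)
        if circle is None:
            continue
        cx, cy, r = circle
        if not r_min <= r <= r_max:   # reject implausible ring radii
            continue
        key = (round(cx / bin_size), round(cy / bin_size))
        votes[key] += 1
        radii.setdefault(key, []).append(r)
    if not votes:
        return None
    (bx, by), n = votes.most_common(1)[0]
    rs = sorted(radii[(bx, by)])
    return bx * bin_size, by * bin_size, rs[len(rs) // 2], n
```

For eight hits on a ring of radius 3 centred at (10, 5), plus one noise hit nearby and one far away, calling `find_ring(hits, seed=(13, 5), search_radius=7.0, bin_size=0.5, r_min=2.0, r_max=4.0)` should recover the centre (10.0, 5.0) with a radius close to 3. The far noise hit never enters the search area. Restricting the combinations to k local hits (C(k, 3) triplets instead of triplets over the whole photodetector plane) is the kind of combinatorial saving the poster attributes to pre-defined search areas.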