Combining Structured and Unstructured Data in Electronic Health Record for Readmission Prediction via Knowledge Linking Network

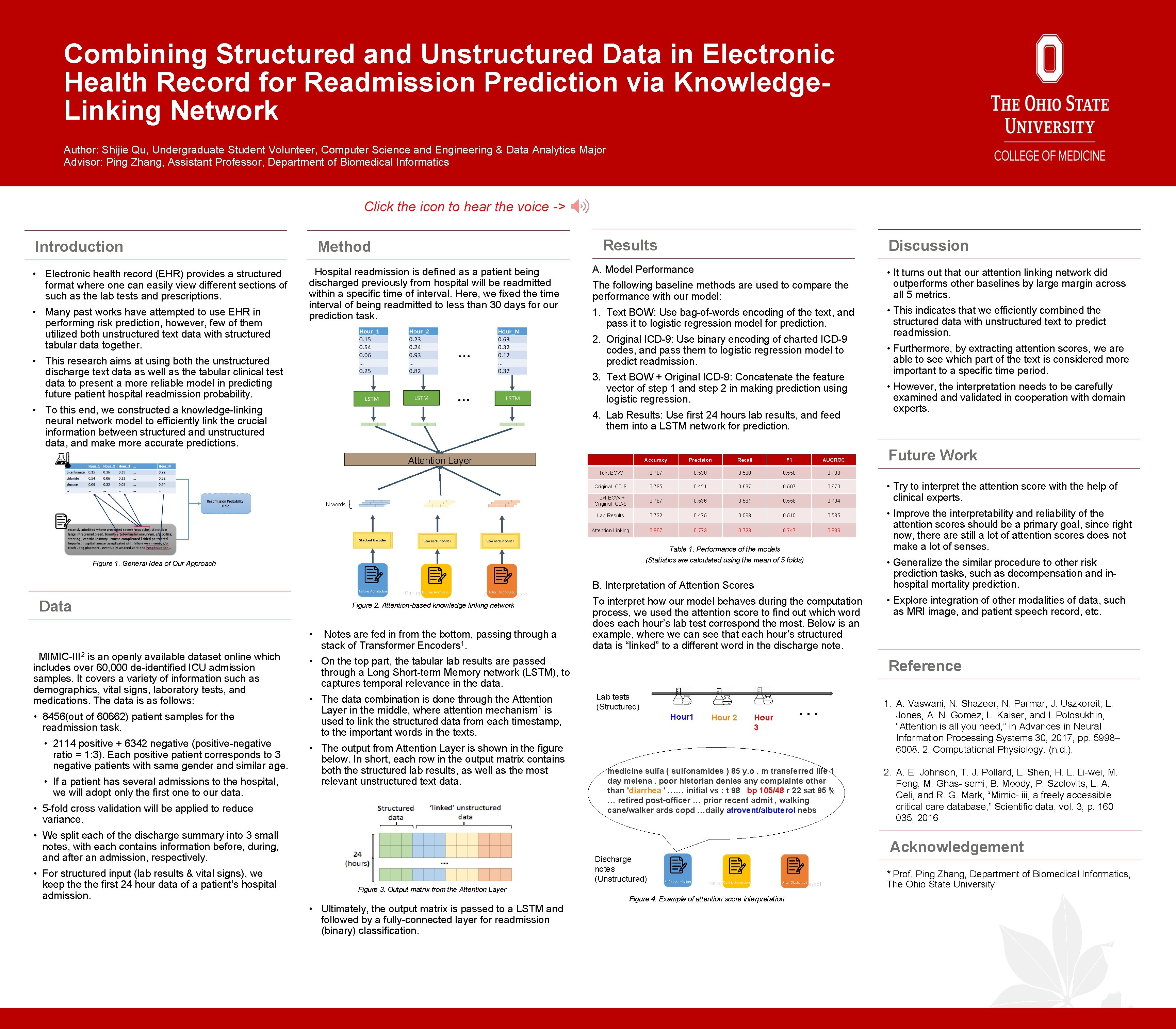

Author: Shijie Qu, Undergraduate Student Volunteer, Computer Science and Engineering & Data Analytics Major
Advisor: Ping Zhang, Assistant Professor, Department of Biomedical Informatics

Introduction
• Electronic health records (EHRs) provide a structured format in which one can easily view different sections, such as lab tests and prescriptions.
• Many past works have used EHRs for risk prediction; however, few of them have combined unstructured text data with structured tabular data.
• This research uses both unstructured discharge text and tabular clinical test data to build a more reliable model for predicting a patient's probability of future hospital readmission.
• To this end, we constructed a knowledge-linking neural network that links the crucial information in structured and unstructured data in order to make more accurate predictions.

Method
Hospital readmission means that a patient who was previously discharged from the hospital is readmitted within a specific time interval. For our prediction task, we fix this interval to less than 30 days.

The following baseline methods are compared with our model:
1. Text BOW: bag-of-words encoding of the text, passed to a logistic regression model for prediction.
2. Original ICD-9: binary encoding of the charted ICD-9 codes, passed to a logistic regression model to predict readmission.
3. Text BOW + Original ICD-9: the feature vectors from baselines 1 and 2 are concatenated and passed to logistic regression.
4. Lab Results: the first 24 hours of lab results are fed into an LSTM network for prediction.
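Baselines 1 to 3 share one pipeline: encode the inputs, optionally concatenate them, then fit logistic regression. The pure-Python sketch below illustrates that pipeline on made-up toy data. It is a minimal sketch under our own assumptions (whitespace tokenization, raw word counts, a sorted ICD-9 code index, and unregularized logistic regression fit by batch gradient descent). The poster does not specify these details, and every function name here is hypothetical.

```python
import math
from collections import Counter

def build_vocab(docs):
    """Map each distinct lower-cased whitespace token to a column index."""
    vocab = {}
    for doc in docs:
        for tok in doc.lower().split():
            vocab.setdefault(tok, len(vocab))
    return vocab

def bow_vector(doc, vocab):
    """Bag-of-words counts; tokens outside the vocabulary are ignored."""
    vec = [0.0] * len(vocab)
    for tok, n in Counter(doc.lower().split()).items():
        if tok in vocab:
            vec[vocab[tok]] = float(n)
    return vec

def icd9_binary(codes, code_index):
    """Binary indicator vector: 1.0 where an ICD-9 code was charted."""
    vec = [0.0] * len(code_index)
    for c in codes:
        if c in code_index:
            vec[code_index[c]] = 1.0
    return vec

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def train_logreg(X, y, lr=0.1, epochs=500):
    """Batch gradient descent on the mean log-loss (no regularization)."""
    w, b = [0.0] * len(X[0]), 0.0
    for _ in range(epochs):
        gw, gb = [0.0] * len(w), 0.0
        for xi, yi in zip(X, y):
            err = sigmoid(sum(wj * xj for wj, xj in zip(w, xi)) + b) - yi
            for j, xj in enumerate(xi):
                gw[j] += err * xj
            gb += err
        w = [wj - lr * g / len(X) for wj, g in zip(w, gw)]
        b -= lr * gb / len(X)
    return w, b

def predict_proba(w, b, x):
    return sigmoid(sum(wj * xj for wj, xj in zip(w, x)) + b)

# Toy, made-up examples (not MIMIC-III data): note text, charted ICD-9 codes,
# and 30-day readmission labels (1 = readmitted).
notes = ["melena diarrhea copd", "routine follow up stable",
         "copd ards nebs", "stable discharge home"]
codes = [["578.9", "496"], ["V67.9"], ["496", "518.82"], ["V67.9"]]
labels = [1, 0, 1, 0]

vocab = build_vocab(notes)
code_index = {c: i for i, c in enumerate(sorted({c for cs in codes for c in cs}))}
# Baseline 3: BOW vector (baseline 1) concatenated with the ICD-9 vector (baseline 2).
X = [bow_vector(n, vocab) + icd9_binary(cs, code_index)
     for n, cs in zip(notes, codes)]
w, b = train_logreg(X, labels)
probs = [predict_proba(w, b, x) for x in X]
```

Keeping only one half of each concatenated row in `X` gives baseline 1 or baseline 2 on its own.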
| Model | Accuracy | Precision | Recall | F1 | AUC-ROC |
|---|---|---|---|---|---|
| Text BOW | 0.787 | 0.538 | 0.580 | 0.558 | 0.703 |
| Original ICD-9 | 0.795 | 0.421 | 0.637 | 0.507 | 0.670 |
| Text BOW + Original ICD-9 | 0.787 | 0.536 | 0.581 | 0.558 | 0.704 |
| Lab Results | 0.732 | 0.475 | 0.563 | 0.515 | 0.535 |
| Attention Linking | 0.867 | 0.773 | 0.723 | 0.747 | 0.836 |

Table 1. Performance of the models (statistics are the means over 5 folds).

Data
• MIMIC-III [2] is an openly available online dataset that includes over 60,000 de-identified ICU admissions. It covers a variety of information, such as demographics, vital signs, laboratory tests, and medications.
• We use 8,456 (out of 60,662) patient samples for the readmission task: 2,114 positive and 6,342 negative (positive-to-negative ratio 1:3). Each positive patient is matched with 3 negative patients of the same gender and similar age.
• If a patient has several hospital admissions, we use only the first one.
• We split each discharge summary into 3 smaller notes, containing information from before, during, and after the admission, respectively.
• 5-fold cross-validation is applied to reduce variance.

Figure 1. General idea of our approach
Figure 2. Attention-based knowledge linking network

• Notes are fed in from the bottom and pass through a stack of Transformer encoders [1].
• On the top, the tabular lab results pass through a long short-term memory (LSTM) network to capture temporal relationships in the data.
• The two streams are combined in the Attention Layer in the middle, where an attention mechanism [1] links the structured data at each timestamp to the important words in the text.
• The output of the Attention Layer is shown in Figure 3. In short, each row of the output matrix contains both the structured lab results and the most relevant unstructured text data.
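The Attention Layer can be illustrated with a minimal pure-Python sketch. It assumes the scaled dot-product form of attention from [1], treats each hourly lab-test state (for example, an LSTM hidden state) as a query and the word encodings from the Transformer encoders as both keys and values, and assumes both have the same dimension with no learned projections. It also assumes each output-matrix row is the hourly state concatenated with its attended text context. The poster does not give the layer's exact parameterization; the function names are hypothetical and the vectors are toy values, not model outputs.

```python
import math

def softmax(xs):
    """Numerically stable softmax over a list of scores."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def link_hours_to_words(hour_states, word_states):
    """Scaled dot-product attention from each hourly lab-test state (query)
    to the word encodings (keys and values; assumed same dimension d).
    Returns one output-matrix row per hour, [hour_state ; text_context],
    plus the attention weights used to build it."""
    d = len(word_states[0])
    rows, weights = [], []
    for q in hour_states:
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d)
                  for k in word_states]
        a = softmax(scores)
        # Attention-weighted sum of word encodings = text context for this hour.
        context = [sum(a[t] * word_states[t][j] for t in range(len(word_states)))
                   for j in range(d)]
        rows.append(q + context)  # list concatenation (an assumption)
        weights.append(a)
    return rows, weights

def most_attended_words(weights, words):
    """The word with the highest attention weight for each hour."""
    return [words[max(range(len(a)), key=a.__getitem__)] for a in weights]

# Toy 2-D encodings (made up, not learned).
words = ["melena", "copd", "diarrhea"]
word_states = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0]]
hour_states = [[3.0, 0.0], [0.0, 3.0], [-3.0, 0.0]]  # Hour 1, Hour 2, Hour 3

rows, weights = link_hours_to_words(hour_states, word_states)
print(most_attended_words(weights, words))  # ['melena', 'copd', 'diarrhea']
```

Taking the arg-max of each hour's attention weights, as `most_attended_words` does, is one simple way to read off the word each hour's lab tests are most strongly "linked" to.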
• For the structured input (lab results and vital signs), we keep the first 24 hours of data from each hospital admission.
• Ultimately, the output matrix is passed to an LSTM followed by a fully connected layer for binary readmission classification.

Figure 3. Output matrix from the Attention Layer: each hour's lab tests (structured; Hour 1, Hour 2, Hour 3, …) are paired with discharge-note text (unstructured).

Results
A. Model Performance
• As Table 1 shows, our attention linking network outperforms the other baselines by a large margin across all five metrics.

B. Interpretation of Attention Scores
To interpret how our model behaves during computation, we used the attention scores to find the word to which each hour's lab test corresponds most strongly. In the example below, each hour's structured data is "linked" to a different word in the discharge note.

Figure 4. Example of attention score interpretation

Discussion
• This indicates that our model effectively combines structured data with unstructured text to predict readmission.
• Furthermore, by extracting attention scores, we can see which parts of the text the model considers most important for a specific time period.
• However, this interpretation needs to be carefully examined and validated in cooperation with domain experts.

Future Work
• Interpret the attention scores with the help of clinical experts.
• Improving the interpretability and reliability of the attention scores should be a primary goal, since many of the current attention scores do not make sense.
• Generalize the procedure to other risk prediction tasks, such as decompensation and in-hospital mortality prediction.
• Explore integrating other data modalities, such as MRI images and patient speech recordings.

References
1. A. Vaswani, N. Shazeer, N. Parmar, J. Uszkoreit, L. Jones, A. N. Gomez, L. Kaiser, and I. Polosukhin, "Attention is all you need," in Advances in Neural Information Processing Systems 30, 2017, pp. 5998–6008.
2. Computational Physiology. (n.d.); A. E. Johnson, T. J. Pollard, L. Shen, L.-W. H. Lehman, M. Feng, M. Ghassemi, B. Moody, P. Szolovits, L. A. Celi, and R. G. Mark, "MIMIC-III, a freely accessible critical care database," Scientific Data, vol. 3, p. 160035, 2016.

Acknowledgement
• Prof. Ping Zhang, Department of Biomedical Informatics, The Ohio State University
