Classification and Prediction: Ensemble Methods

Bamshad Mobasher, DePaul University

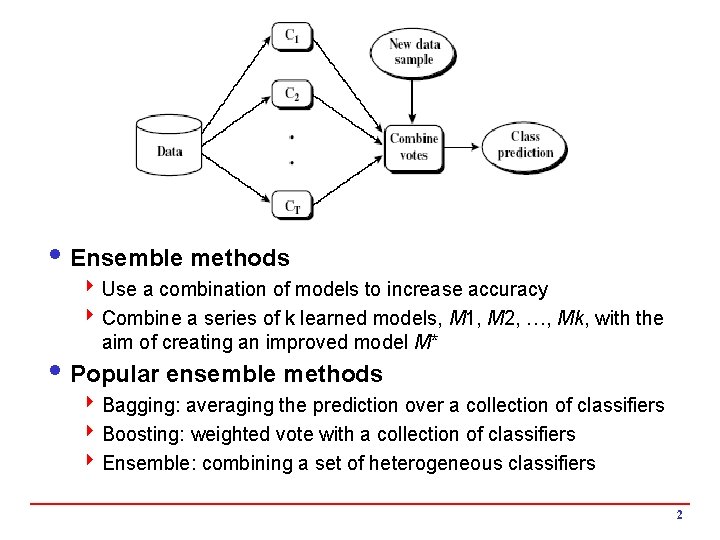

- Ensemble methods
  - Use a combination of models to increase accuracy
  - Combine a series of k learned models, M1, M2, …, Mk, with the aim of creating an improved model M*
- Popular ensemble methods
  - Bagging: averaging the prediction over a collection of classifiers
  - Boosting: weighted vote with a collection of classifiers
  - Ensemble: combining a set of heterogeneous classifiers
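As a rough illustration of how k learned models M1, …, Mk can be combined into M*, the sketch below treats each model as any Python callable that returns a class label and combines the models either by unweighted majority vote (the bagging style) or by a weighted vote (the boosting style). The three toy classifiers and all function names are hypothetical, chosen only to show heterogeneous models voting together.

```python
from collections import Counter
from typing import Callable, Hashable, Sequence

# Hypothetical model type: any callable mapping a feature vector to a class label.
Model = Callable[[Sequence[float]], Hashable]


def combine_by_vote(models: Sequence[Model], x: Sequence[float]) -> Hashable:
    """M*(x) by unweighted majority vote over M1..Mk."""
    votes = Counter(m(x) for m in models)
    return votes.most_common(1)[0][0]


def combine_by_weighted_vote(models: Sequence[Model], weights: Sequence[float],
                             x: Sequence[float]) -> Hashable:
    """M*(x) where each model's vote counts with its own weight."""
    scores: dict = {}
    for m, w in zip(models, weights):
        label = m(x)
        scores[label] = scores.get(label, 0.0) + w
    return max(scores, key=scores.get)


# Three deliberately different (heterogeneous) toy classifiers on 2-D points.
def by_first_feature(x):
    return "pos" if x[0] > 0.5 else "neg"


def by_second_feature(x):
    return "pos" if x[1] > 0.5 else "neg"


def by_sum(x):
    return "pos" if x[0] + x[1] > 1.0 else "neg"


if __name__ == "__main__":
    models = [by_first_feature, by_second_feature, by_sum]
    print(combine_by_vote(models, (0.9, 0.2)))                             # pos (2 of 3 votes)
    print(combine_by_weighted_vote(models, [0.2, 1.0, 0.3], (0.9, 0.2)))  # neg (1.0 > 0.2 + 0.3)
```

The same voters can give different answers under the two rules, which is the practical difference between an equal-vote ensemble and one that trusts some members more than others.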

Bagging: Bootstrap Aggregation

- Analogy: diagnosis based on the majority vote of multiple doctors
- Training
  - Given a set D of d instances, at each iteration i, a training set Di of d instances is sampled with replacement from D (i.e., bootstrap sampling)
  - A classifier model Mi is learned for each training set Di
- Classification: classify an unknown sample X
  - Each classifier Mi returns its class prediction
  - The bagged classifier M* counts the votes and assigns the class with the most votes to X
- Prediction
  - Can be applied to the prediction of continuous values by taking the average of the individual predictions for a given test tuple
- Accuracy
  - Often significantly better than a single classifier derived from D
  - For noisy data: not considerably worse, and more robust
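A minimal bagging sketch in pure Python, assuming numeric feature vectors and using a decision stump as the base learner (the slide does not fix a base learner; `fit_stump` and the other names are hypothetical). Each round draws d indices with replacement to form Di, learns one model Mi, and M* takes the majority vote; for numeric prediction the models' outputs are averaged instead.

```python
import random
from collections import Counter


def fit_stump(X, y):
    """Hypothetical base learner: a one-level decision tree (decision stump).
    Tries every (feature, threshold) pair and keeps the one with the fewest
    training errors; each side of the split predicts its majority class."""
    best = None
    for f in range(len(X[0])):
        for t in sorted({row[f] for row in X}):
            left = [lab for row, lab in zip(X, y) if row[f] <= t]
            right = [lab for row, lab in zip(X, y) if row[f] > t]
            l_lab = Counter(left).most_common(1)[0][0]   # left is never empty
            r_lab = Counter(right).most_common(1)[0][0] if right else l_lab
            errors = (sum(lab != l_lab for lab in left)
                      + sum(lab != r_lab for lab in right))
            if best is None or errors < best[0]:
                best = (errors, f, t, l_lab, r_lab)
    _, f, t, l_lab, r_lab = best
    return lambda x: l_lab if x[f] <= t else r_lab


def bagging_fit(X, y, k, base_learner=fit_stump, seed=0):
    """Learn k models, each on a bootstrap sample Di of size d drawn from D."""
    rng = random.Random(seed)
    d = len(X)
    models = []
    for _ in range(k):
        idx = [rng.randrange(d) for _ in range(d)]   # d draws with replacement
        models.append(base_learner([X[j] for j in idx], [y[j] for j in idx]))
    return models


def bagging_predict(models, x):
    """Classification: M* returns the class with the most votes."""
    return Counter(m(x) for m in models).most_common(1)[0][0]


def bagging_average(models, x):
    """Numeric prediction: M* returns the mean of the models' outputs
    (assumes numeric base models, unlike fit_stump, which returns labels)."""
    preds = [m(x) for m in models]
    return sum(preds) / len(preds)


if __name__ == "__main__":
    X = [[0.1], [0.2], [0.3], [0.7], [0.8], [0.9]]
    y = [0, 0, 0, 1, 1, 1]
    models = bagging_fit(X, y, k=25)
    print(bagging_predict(models, [0.05]), bagging_predict(models, [0.95]))
```

A bootstrap sample that happens to contain only one class yields a stump that always predicts that class; the vote across many samples is what smooths such unlucky models out.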

Random Forest (Breiman 2001)

- Random Forest:
  - Each classifier in the ensemble is a decision tree classifier, generated using a random selection of attributes at each node to determine the split
  - During classification, each tree votes and the most popular class is returned
- Two methods to construct a random forest:
  - Forest-RI (random input selection): at each node, randomly select F attributes as candidates for the split at that node. The CART methodology is used to grow the trees to maximum size
  - Forest-RC (random linear combinations): creates new attributes (or features) that are linear combinations of the existing attributes (reduces the correlation between individual classifiers)
- Comparable in accuracy to AdaBoost, but more robust to errors and outliers
- Insensitive to the number of attributes selected for consideration at each split, and faster than boosting
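A compact Forest-RI sketch in pure Python, assuming numeric attributes, integer or string class labels, and the Gini index as the split criterion (as CART uses for classification). Each tree is trained on a bootstrap sample, considers only F randomly chosen attributes at every node, and is grown without pruning; the forest returns the most popular class. Forest-RC is not shown, and all names are hypothetical.

```python
import random
from collections import Counter


def gini(labels):
    """Gini impurity of a list of class labels."""
    n = len(labels)
    return 1.0 - sum((c / n) ** 2 for c in Counter(labels).values())


def best_split(X, y, features):
    """Lowest weighted-Gini (feature, threshold) among the candidate features,
    or None if no candidate can split the node (all its values are equal)."""
    best = None
    for f in features:
        for t in sorted({row[f] for row in X}):
            left = [lab for row, lab in zip(X, y) if row[f] <= t]
            right = [lab for row, lab in zip(X, y) if row[f] > t]
            if not right:                        # threshold at the max value: no split
                continue
            score = (len(left) * gini(left) + len(right) * gini(right)) / len(y)
            if best is None or score < best[0]:
                best = (score, f, t)
    return best


def majority(labels):
    return Counter(labels).most_common(1)[0][0]


def grow_tree(X, y, F, rng):
    """Forest-RI tree: at every node only F randomly chosen attributes are split
    candidates; no pruning, so growth stops only at pure or unsplittable nodes."""
    if len(set(y)) == 1:
        return majority(y)                       # leaf: a class label
    features = rng.sample(range(len(X[0])), F)   # random input selection at this node
    split = best_split(X, y, features)
    if split is None:
        return majority(y)
    _, f, t = split
    left = [i for i, row in enumerate(X) if row[f] <= t]
    right = [i for i, row in enumerate(X) if row[f] > t]
    return (f, t,
            grow_tree([X[i] for i in left], [y[i] for i in left], F, rng),
            grow_tree([X[i] for i in right], [y[i] for i in right], F, rng))


def predict_tree(node, x):
    while isinstance(node, tuple):               # internal node: (f, t, left, right)
        f, t, left, right = node
        node = left if x[f] <= t else right
    return node


def random_forest(X, y, n_trees=25, F=1, seed=0):
    rng = random.Random(seed)
    d = len(X)
    forest = []
    for _ in range(n_trees):
        idx = [rng.randrange(d) for _ in range(d)]   # bootstrap sample, as in bagging
        forest.append(grow_tree([X[i] for i in idx], [y[i] for i in idx], F, rng))
    return forest


def forest_predict(forest, x):
    """Each tree votes; the most popular class is returned."""
    return Counter(predict_tree(tree, x) for tree in forest).most_common(1)[0][0]
```

F is the main tuning knob: F equal to the number of attributes turns this back into plain bagged trees, while a small F makes the trees less correlated with each other.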

Random Forest

- Features and advantages
  - One of the most accurate learning algorithms available for most data sets
  - Runs fairly efficiently on large data sets; can be parallelized
  - Can handle many variables without variable deletion
  - Gives estimates of which variables are important in the classification
  - Has methods for balancing error in class-imbalanced data sets
  - Generated forests can be saved for future use on other data
- Disadvantages
  - For data including categorical variables with different numbers of levels, random forests are biased in favor of attributes with more levels
    - Variable importance scores from a random forest are not reliable for this type of data
  - Random forests have been observed to overfit some data sets with noisy classification/regression tasks
  - A large number of trees may make the algorithm slow for real-time prediction

Class-Imbalanced Data Sets

- Class-imbalance problem: rare positive examples but numerous negative ones, e.g., medical diagnosis, fraud, oil spills, faults
- Traditional methods assume a balanced distribution of classes and equal error costs, so they are not suitable for class-imbalanced data
- Typical methods for imbalanced data in two-class classification:
  - Oversampling: re-sample data from the positive class
  - Under-sampling: randomly eliminate tuples from the negative class
  - Threshold-moving: move the decision threshold, t, so that rare-class tuples are easier to classify, and hence there is less chance of costly false-negative errors
  - Ensemble techniques: combine multiple classifiers
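Oversampling, under-sampling, and threshold-moving can each be sketched in a few lines of pure Python. This assumes a two-class problem in which the positive class is the minority, and it uses plain random duplication and random deletion; the function names and the 0.5 default threshold are illustrative assumptions, not part of the slide.

```python
import random


def split_by_class(y, positive):
    pos = [i for i, lab in enumerate(y) if lab == positive]
    neg = [i for i, lab in enumerate(y) if lab != positive]
    return pos, neg


def oversample(X, y, positive, seed=0):
    """Random oversampling: add randomly chosen copies of positive (rare) tuples,
    drawn with replacement, until both classes have the same count."""
    rng = random.Random(seed)
    pos, neg = split_by_class(y, positive)
    extra = [rng.choice(pos) for _ in range(len(neg) - len(pos))]
    idx = pos + neg + extra
    return [X[i] for i in idx], [y[i] for i in idx]


def undersample(X, y, positive, seed=0):
    """Random under-sampling: keep every positive tuple and an equally sized
    random subset of the negative tuples (assumes negatives outnumber positives)."""
    rng = random.Random(seed)
    pos, neg = split_by_class(y, positive)
    idx = pos + rng.sample(neg, len(pos))
    return [X[i] for i in idx], [y[i] for i in idx]


def classify_with_threshold(score, t=0.5):
    """Threshold-moving: predict the rare class (1) when the classifier's score
    for it is at least t; lowering t makes rare-class tuples easier to classify."""
    return 1 if score >= t else 0


if __name__ == "__main__":
    X, y = list(range(10)), [1, 1] + [0] * 8
    print(len(oversample(X, y, positive=1)[1]), len(undersample(X, y, positive=1)[1]))
```

Oversampling keeps all the data but repeats rare tuples, which can encourage overfitting to them; under-sampling discards negative tuples; threshold-moving leaves the training data untouched and changes only the decision rule.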

Boosting

- Analogy: consult several doctors and combine their weighted diagnoses, where each weight is based on the accuracy of that doctor's previous diagnoses
- How does boosting work?
  - Weights are assigned to each training tuple
  - A series of k classifiers is iteratively learned
  - After a classifier Mi is learned, the weights are updated so that the subsequent classifier, Mi+1, pays more attention to the training tuples that were misclassified by Mi
  - The final M* combines the votes of each individual classifier, where the weight of each classifier's vote is a function of its accuracy
- The boosting algorithm can be extended for numeric prediction
- Compared to bagging: boosting tends to achieve greater accuracy, but it also risks overfitting the model to misclassified data

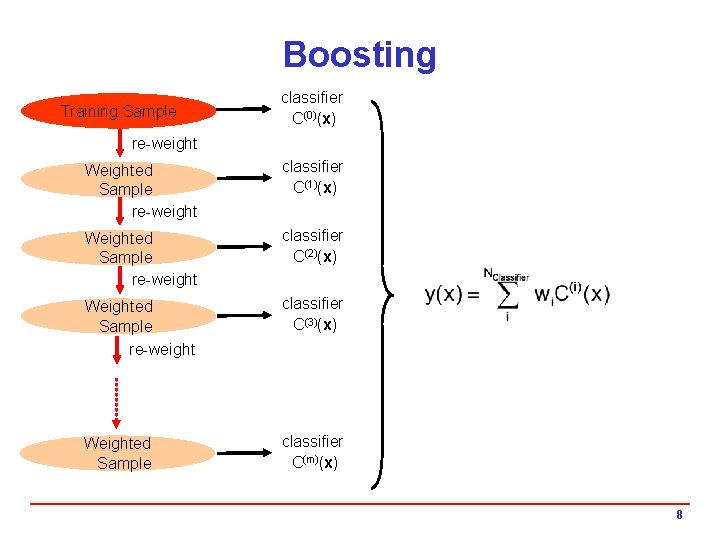

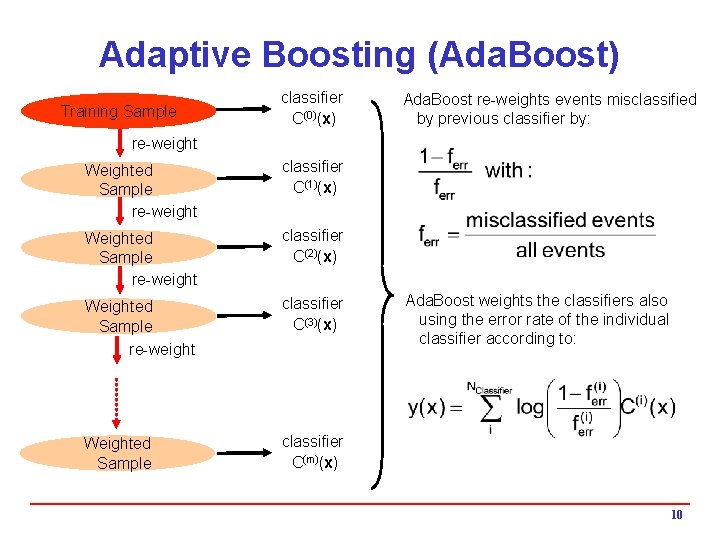

Boosting

[Figure: Training Sample → classifier C(0)(x); re-weight → Weighted Sample → classifier C(1)(x); re-weight → Weighted Sample → classifier C(2)(x); re-weight → Weighted Sample → classifier C(3)(x); … → Weighted Sample → classifier C(m)(x)]

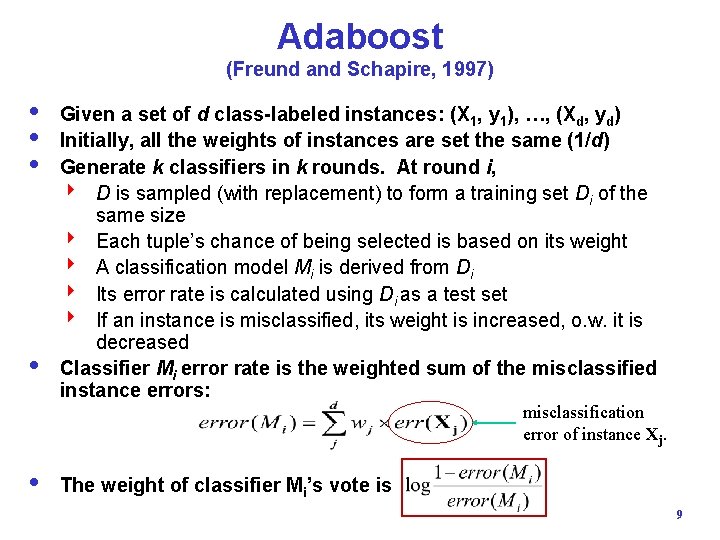

AdaBoost (Freund and Schapire, 1997)

- Given a set of d class-labeled instances: (X1, y1), …, (Xd, yd)
- Initially, all instance weights are set to the same value, 1/d
- Generate k classifiers in k rounds. At round i:
  - D is sampled (with replacement) to form a training set Di of the same size
  - Each tuple's chance of being selected is based on its weight
  - A classification model Mi is derived from Di
  - Its error rate is calculated using Di as a test set
  - If an instance is misclassified, its weight is increased; otherwise it is decreased
- Classifier Mi's error rate is the weighted sum of the misclassified instances: $error(M_i) = \sum_{j=1}^{d} w_j \times err(X_j)$, where $err(X_j)$ is the misclassification error of instance Xj (1 if Xj is misclassified, 0 otherwise)
- The weight of classifier Mi's vote is $\log \frac{1 - error(M_i)}{error(M_i)}$
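A minimal AdaBoost sketch in pure Python for two classes labeled −1 and +1, following the steps above: resample D by weight to get Di, learn Mi, compute error(Mi) as a weighted sum, multiply the weights of correctly classified tuples by error(Mi)/(1 − error(Mi)) and normalize (which raises the relative weight of misclassified ones), and vote with weight log((1 − error)/error). Assumptions beyond the slide: the decision-stump base learner and all names are hypothetical; error(Mi) is computed over all of D with the current weights rather than over Di; a round whose error exceeds 0.5 resets the weights to 1/d and is retried; and a zero error is clamped to a tiny value so the logarithm stays finite.

```python
import math
import random


def fit_stump(X, y, w):
    """Hypothetical weak learner for labels in {-1, +1}: the (feature,
    threshold, sign) stump with the smallest weighted training error."""
    best = None
    for f in range(len(X[0])):
        for t in sorted({row[f] for row in X}):
            for s in (1, -1):
                err = sum(wi for row, yi, wi in zip(X, y, w)
                          if (s if row[f] <= t else -s) != yi)
                if best is None or err < best[0]:
                    best = (err, f, t, s)
    _, f, t, s = best
    return lambda x: s if x[f] <= t else -s


def adaboost(X, y, k, seed=0):
    rng = random.Random(seed)
    d = len(X)
    w = [1.0 / d] * d                        # initially every weight is 1/d
    models, alphas = [], []
    for _ in range(10 * k):                  # bounded number of attempts
        if len(models) == k:
            break
        # Sample Di from D with replacement; selection chance follows the weights.
        idx = rng.choices(range(d), weights=w, k=d)
        m = fit_stump([X[j] for j in idx], [y[j] for j in idx], [1.0] * d)
        miss = [m(X[j]) != y[j] for j in range(d)]
        # error(Mi) = sum_j w_j * err(X_j), with err = 1 if misclassified else 0
        error = sum(wj for wj, bad in zip(w, miss) if bad)
        if error > 0.5:                      # worse than chance: reset and retry
            w = [1.0 / d] * d
            continue
        error = max(error, 1e-10)            # keep log((1 - e) / e) finite
        models.append(m)
        alphas.append(math.log((1 - error) / error))   # weight of Mi's vote
        # Shrink the weights of correctly classified tuples, then normalize;
        # this raises the relative weight of the misclassified tuples.
        w = [wj if bad else wj * error / (1 - error) for wj, bad in zip(w, miss)]
        total = sum(w)
        w = [wj / total for wj in w]
    return models, alphas


def adaboost_predict(models, alphas, x):
    """Weighted vote; for labels -1/+1 this is the sign of the weighted sum."""
    score = sum(a * m(x) for m, a in zip(models, alphas))
    return 1 if score >= 0 else -1
```

With two classes coded as ±1, "the class with the largest total vote weight" reduces to the sign of the weighted sum, which is why `adaboost_predict` needs no per-class bookkeeping.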

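Adaptive Boosting (AdaBoost)

[Figure: Training Sample → classifier C(0)(x); re-weight → Weighted Sample → classifier C(1)(x); re-weight → Weighted Sample → classifier C(2)(x); re-weight → Weighted Sample → classifier C(3)(x); … → Weighted Sample → classifier C(m)(x)]

- AdaBoost re-weights events misclassified by the previous classifier by the factor $\frac{1 - f_{err}}{f_{err}}$, where $f_{err}$ is that classifier's (weighted) error rate
- AdaBoost also weights the classifiers using the error rate of each individual classifier, according to: $y(x) = \sum_{i} \log\left(\frac{1 - f_{err}^{(i)}}{f_{err}^{(i)}}\right) C^{(i)}(x)$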

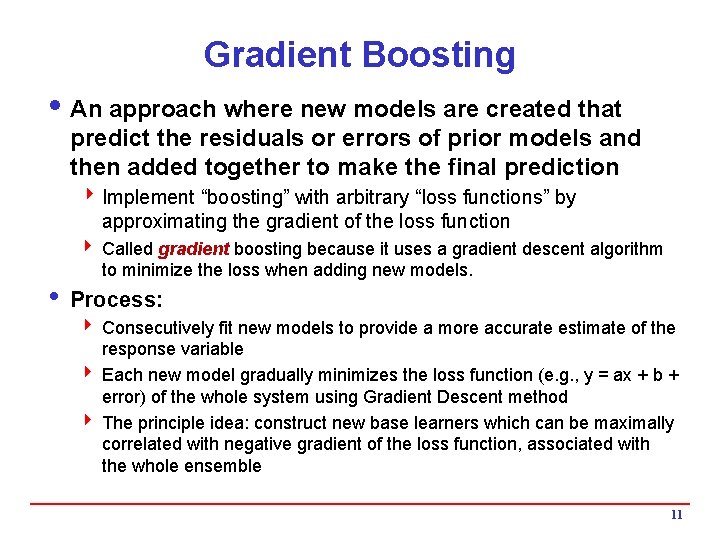

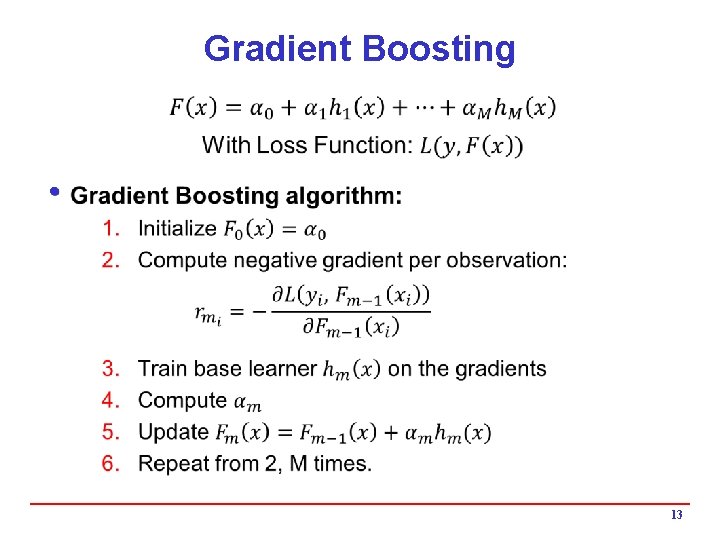
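A small worked example of the two AdaBoost quantities, the re-weighting factor (1 − f_err)/f_err for misclassified events and the classifier weight log((1 − f_err)/f_err), assuming the natural logarithm and a weighted sample of 10 events with equal weights 0.1, of which the previous classifier misclassifies 2 (numbers chosen for illustration only):

```latex
\begin{aligned}
f_{err} &= 2 \times 0.1 = 0.2 \\
\frac{1 - f_{err}}{f_{err}} &= \frac{0.8}{0.2} = 4 \\
w_{\text{miss}} &: 0.1 \to 0.4, \qquad w_{\text{correct}} : 0.1 \to 0.1 \\
\textstyle\sum w &= 2(0.4) + 8(0.1) = 1.6 \\
\text{normalized: } w_{\text{miss}} &= \frac{0.4}{1.6} = 0.25, \qquad w_{\text{correct}} = \frac{0.1}{1.6} = 0.0625 \\
\log\left(\frac{1 - f_{err}}{f_{err}}\right) &= \ln 4 \approx 1.386
\end{aligned}
```

After re-weighting, the two misclassified events carry half of the total weight (2 × 0.25 = 0.5), so the previous classifier would be no better than chance on the new weighted sample, which forces the next classifier to focus on those events.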

Gradient Boosting

- An approach in which new models are created to predict the residuals (errors) of prior models, and all models are then added together to make the final prediction
  - Implements “boosting” with arbitrary loss functions by approximating the gradient of the loss function
  - Called gradient boosting because it uses a gradient descent algorithm to minimize the loss when adding new models
- Process:
  - Consecutively fit new models to provide a more accurate estimate of the response variable
  - Each new model gradually reduces the loss function of the whole system (e.g., the error term in y = ax + b + error) using the gradient descent method
  - The principal idea: construct new base learners that are maximally correlated with the negative gradient of the loss function associated with the whole ensemble

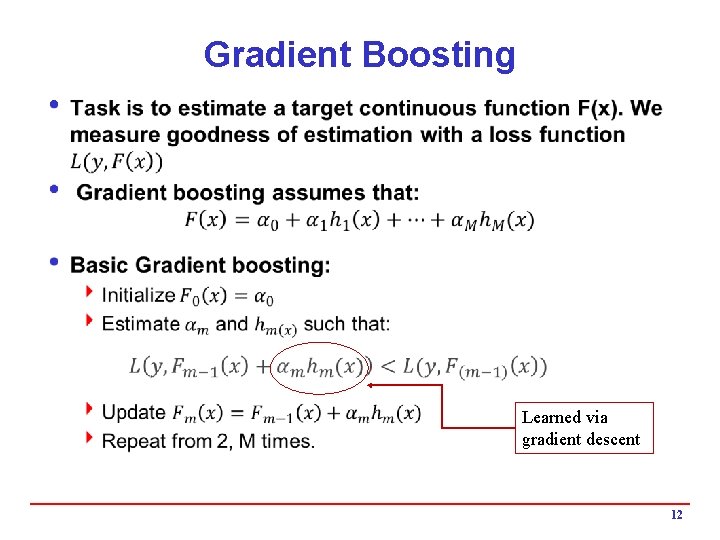
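A minimal gradient-boosting sketch for one numeric input, assuming squared-error loss L = (y − F)²/2, whose negative gradient with respect to the current prediction F is simply the residual y − F. Each round fits a regression stump to the current residuals and adds it with a fixed learning rate (shrinkage); the stump learner, the learning rate of 0.1, and all names are illustrative assumptions.

```python
def fit_regression_stump(x, r):
    """Hypothetical base learner for one numeric input: the threshold t with the
    smallest squared error, predicting the mean residual on each side.
    Needs at least two distinct x values."""
    best = None
    for t in sorted(set(x))[:-1]:            # keep both sides non-empty
        left = [ri for xi, ri in zip(x, r) if xi <= t]
        right = [ri for xi, ri in zip(x, r) if xi > t]
        lm, rm = sum(left) / len(left), sum(right) / len(right)
        sse = (sum((ri - lm) ** 2 for ri in left)
               + sum((ri - rm) ** 2 for ri in right))
        if best is None or sse < best[0]:
            best = (sse, t, lm, rm)
    _, t, lm, rm = best
    return lambda v: lm if v <= t else rm


def gradient_boost(x, y, n_rounds=50, learning_rate=0.1):
    """Squared-loss gradient boosting: each new stump is fit to the residuals
    y - F(x), i.e. the negative gradient of L = (y - F)^2 / 2, and added to F."""
    f0 = sum(y) / len(y)                      # F0: the constant minimizing squared loss
    stumps = []
    pred = [f0] * len(y)
    for _ in range(n_rounds):
        residuals = [yi - pi for yi, pi in zip(y, pred)]
        h = fit_regression_stump(x, residuals)
        stumps.append(h)
        pred = [pi + learning_rate * h(xi) for pi, xi in zip(pred, x)]

    def predict(v):
        return f0 + learning_rate * sum(h(v) for h in stumps)

    return predict
```

A smaller learning rate needs more rounds but lets each new model correct only part of the remaining error, which is the "gradual" minimization described above.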

Gradient Boosting

- Learned via gradient descent
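One common way to make "learned via gradient descent" precise treats the ensemble prediction F as the quantity being optimized: each round computes the negative gradient of the loss at the current predictions (the pseudo-residuals), fits a new base learner h_m to them, and takes a step of size ν. This is a sketch of the usual formulation, with a fixed step size ν assumed in place of a line search:

```latex
\begin{aligned}
F_0(x) &= \arg\min_{\gamma} \sum_{i=1}^{n} L(y_i, \gamma) \\
r_{im} &= -\left[\frac{\partial L\big(y_i, F(x_i)\big)}{\partial F(x_i)}\right]_{F = F_{m-1}}, \qquad i = 1, \dots, n \\
h_m &= \text{base learner fitted to } \{(x_i, r_{im})\}_{i=1}^{n} \\
F_m(x) &= F_{m-1}(x) + \nu \, h_m(x)
\end{aligned}
```

For squared loss $L(y, F) = \tfrac{1}{2}(y - F)^2$, the pseudo-residual is $r_{im} = y_i - F_{m-1}(x_i)$, the ordinary residual, and $F_0$ is the mean of the $y_i$; this is why gradient boosting is often described as fitting new models to the errors of prior models.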

Gradient Boosting

- Slides: 13