
Author: Andrew C. Smith

Abstract: LHCb's participation in LCG's Service Challenge 3 (SC3) involves testing the bulk data transfer infrastructure developed to allow high-bandwidth distribution of data across the grid in accordance with the computing model. To enable reliable bulk replication of data, LHCb's DIRAC system has been integrated with gLite's File Transfer Service (FTS) middleware component to make use of dedicated network links between LHCb computing centres. DIRAC's Data Management tools previously allowed the replication, registration and deletion of files on the grid. For SC3, supplementary functionality has been added to allow bulk replication of data (using FTS) and efficient mass registration to the LFC replica catalog. Provisional performance results have shown that the system developed can meet the expected data replication rate required by the computing model in 2007. This paper details the experience and results of integration and utilisation of DIRAC with the SC3 transfer machinery.

LHCb Transfer Aims During SC3

The extended Service Phase of SC3 was intended to allow the experiments to test their specific software and validate their computing models using the platform of machinery provided. LHCb's data replication goals during SC3 can be summarised as:
• Replication of ~1 TB of stripped DST data from CERN to all Tier-1s.
• Replication of 8 TB of digitised data from CERN/Tier-0 to the participating LHCb Tier-1 centres in parallel.
• Removal of 50k replicas (via LFN) from all Tier-1 centres.
• Moving 4 TB of data from Tier-1 centres to Tier-0 and to other participating Tier-1 centres.

Introduction to DIRAC Data Management Architecture

The DIRAC architecture is split into three main component types:
• Services - independent functionalities deployed and administered centrally on machines accessible by all other DIRAC components
• Resources - GRID compute and storage resources at remote sites
• Agents - lightweight software components that request jobs from the central Services for a specific purpose

The DIRAC Data Management System is made up of an assortment of these components.

[Figure: DIRAC Data Management Components. Labels: Data Management Clients, UserInterface, WMS, TransferAgent, ReplicaManager, FileCatalogA, FileCatalogB, FileCatalogC, StorageElement, SRMStorage, GridFTPStorage, HTTPStorage, SE Service, Physical storage.]

Integration of DIRAC with FTS

The SC3 replication machinery utilised gLite's File Transfer Service (FTS):
• lowest-level data movement service defined in the gLite architecture
• offers reliable point-to-point bulk transfers of physical files (SURLs) between SRM-managed SEs
• accepts source-destination SURL pairs
• assigns file transfers to a dedicated transfer channel
• takes advantage of networking between CERN and the Tier-1s
• routing of transfers is not provided

A higher-level service is required to resolve SURLs and hence decide on routing. The DIRAC Data Management System is employed to do these tasks.
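As an illustration of the SURL resolution that FTS leaves to a higher-level service, the following is a minimal sketch, not DIRAC's actual code. The endpoint table, the host names, the replica-lookup layout (LFN mapped to SE mapped to SURL) and the rule that a target SURL is the target endpoint prefix followed by the LFN are all assumptions made for the example.

```python
# Hypothetical endpoint prefixes per Storage Element (illustrative hosts only).
ENDPOINTS = {
    "CERN_Castor": "srm://srm.cern.example/castor",
    "PIC_Castor": "srm://srm.pic.example/castor",
}


def resolve_surl_pairs(lfns, replicas, source_se, target_se, endpoints):
    """Build source-destination SURL pairs for one source/target SE.

    replicas maps LFN -> {SE name: SURL}; this layout is an assumption.
    Returns (pairs, missing), where missing lists LFNs that have no
    replica at the source SE and therefore cannot be transferred from it.
    """
    pairs = []
    missing = []
    for lfn in lfns:
        source_surl = replicas.get(lfn, {}).get(source_se)
        if source_surl is None:
            missing.append(lfn)
            continue
        # Assumed convention: target SURL = target endpoint prefix + LFN.
        target_surl = endpoints[target_se] + lfn
        pairs.append((source_surl, target_surl))
    return pairs, missing


replicas = {
    "/lhcb/a.dst": {"CERN_Castor": "srm://srm.cern.example/castor/lhcb/a.dst"},
    "/lhcb/b.dst": {"PIC_Castor": "srm://srm.pic.example/castor/lhcb/b.dst"},
}
pairs, missing = resolve_surl_pairs(
    ["/lhcb/a.dst", "/lhcb/b.dst"], replicas, "CERN_Castor", "PIC_Castor", ENDPOINTS
)
```

In this sketch the list of pairs is what would be handed to the transfer service, while the missing LFNs would need to be reported back to the request.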
[Figure: LHCb DIRAC DMS and the LCG SC3 machinery. Labels: LHCb DIRAC DMS, Request DB, Transfer Agent, Replica Manager, File Catalog Interface, Transfer Manager Interface, LCG File Catalog, File Transfer Service, LCG SC3 Machinery, Transfer network, Tier-0 SE, Tier-1 SE A, Tier-1 SE B, Tier-1 SE C.]

Main components of the DIRAC Data Management System:
• Replica Manager
  • provides an API for the available data management operations
  • point of contact for users of data management systems
  • removes direct operation with Storage Element and File Catalogs
  • uploading/downloading files to/from GRID SE, replication of files, file registration, file removal
• File Catalog
  • standard API exposed for a variety of available catalogs
  • allows redundancy across several catalogs
• Storage Element
  • abstraction of GRID storage resources
  • actual access by specific plug-ins
  • srm, gridftp, bbftp, sftp, http supported
  • namespace management, file up/download, deletion etc.

Integration requirements:
• new methods developed in Replica Manager
• previous Data Management operations were single-file and blocking
• bulk operation functionality added to the Transfer Agent/Request
• monitoring of asynchronous FTS jobs required
• information for monitoring stored within Request DB entry
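The idea of a standard File Catalog API that allows redundancy across several catalogs can be sketched as a thin facade that sends each bulk registration to every configured catalog and records failures per catalog. This is only an illustration under assumed names: `InMemoryCatalog`, `FileCatalogFacade`, `add_replica` and `register_replicas` are hypothetical and are not taken from DIRAC or the LFC client.

```python
class InMemoryCatalog:
    """Hypothetical stand-in for one catalog back end."""

    def __init__(self, name, available=True):
        self.name = name
        self.available = available
        self.entries = {}  # LFN -> {SE name: SURL}

    def add_replica(self, lfn, se, surl):
        if not self.available:
            raise RuntimeError(f"catalog {self.name} unavailable")
        self.entries.setdefault(lfn, {})[se] = surl


class FileCatalogFacade:
    """Exposes one registration call over several catalogs (assumed design)."""

    def __init__(self, catalogs):
        self.catalogs = list(catalogs)

    def register_replicas(self, replicas):
        """Register (lfn, se, surl) tuples in every catalog.

        Returns {catalog name: [LFNs that failed]} so that a failure in one
        catalog does not prevent registration in the others.
        """
        failed = {catalog.name: [] for catalog in self.catalogs}
        for catalog in self.catalogs:
            for lfn, se, surl in replicas:
                try:
                    catalog.add_replica(lfn, se, surl)
                except RuntimeError:
                    failed[catalog.name].append(lfn)
        return failed


primary = InMemoryCatalog("CatalogA")
mirror = InMemoryCatalog("CatalogB", available=False)
facade = FileCatalogFacade([primary, mirror])
failed = facade.register_replicas(
    [("/lhcb/a.dst", "PIC_Castor", "srm://srm.pic.example/castor/lhcb/a.dst")]
)
```

Returning the failures per catalog, rather than raising on the first error, is one way to keep a mass registration going when a single catalog is down; the failed entries could then be retried later.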

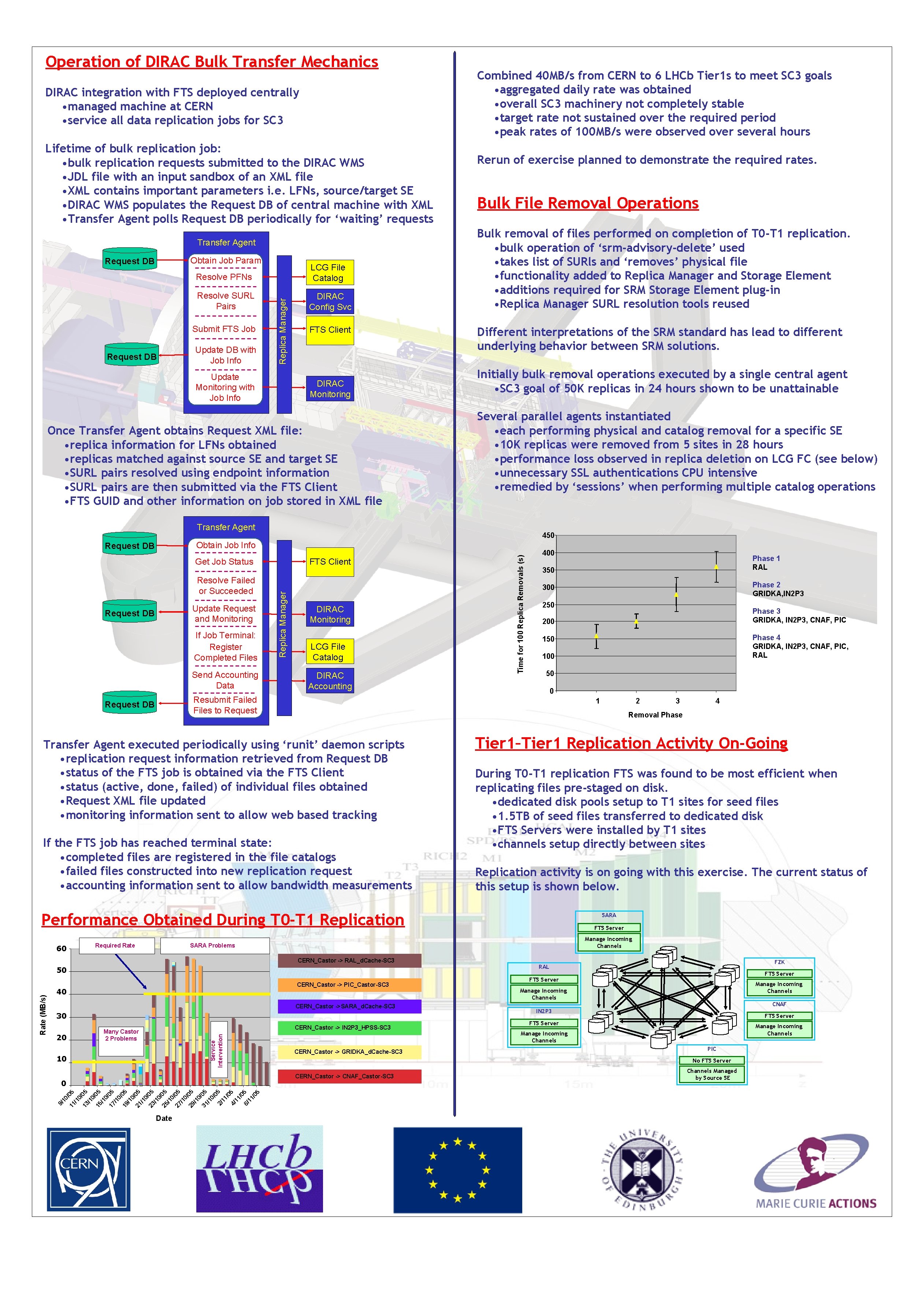

Operation of DIRAC Bulk Transfer Mechanics

The DIRAC integration with FTS was deployed centrally:
• managed machine at CERN
• services all data replication jobs for SC3

Lifetime of a bulk replication job:
• bulk replication requests are submitted to the DIRAC WMS
• JDL file with an input sandbox of an XML file
• the XML contains the important parameters, i.e. LFNs and source/target SE
• the DIRAC WMS populates the Request DB of the central machine with the XML
• the Transfer Agent polls the Request DB periodically for 'waiting' requests

Once the Transfer Agent obtains the Request XML file:
• replica information for the LFNs is obtained
• replicas are matched against the source SE and target SE
• SURL pairs are resolved using endpoint information
• SURL pairs are then submitted via the FTS Client
• the FTS GUID and other information on the job are stored in the XML file

[Figure: Transfer Agent job submission flow. Steps: Obtain Job Param, Resolve PFNs, Resolve SURL Pairs, Submit FTS Job, Update DB with Job Info, Update Monitoring with Job Info. Components: Transfer Agent, Request DB, Replica Manager, LCG File Catalog, DIRAC Config Svc, FTS Client, DIRAC Monitoring.]

The Transfer Agent is executed periodically using 'runit' daemon scripts:
• replication request information is retrieved from the Request DB
• the status of the FTS job is obtained via the FTS Client
• the status (active, done, failed) of individual files is obtained
• the Request XML file is updated
• monitoring information is sent to allow web-based tracking

If the FTS job has reached a terminal state:
• completed files are registered in the file catalogs
• failed files are constructed into a new replication request
• accounting information is sent to allow bandwidth measurements

[Figure: Transfer Agent job monitoring flow. Steps: Obtain Job Info, Get Job Status, Resolve Failed or Succeeded, Update Request and Monitoring, If Job Terminal: Register Completed Files, Resubmit Failed Files to Request DB, Send Accounting Data. Components: Transfer Agent, Request DB, FTS Client, Replica Manager, LCG File Catalog, DIRAC Monitoring, DIRAC Accounting.]

Performance Obtained During T0-T1 Replication

A combined rate of 40 MB/s from CERN to 6 LHCb Tier-1s was required to meet the SC3 goals:
• the aggregated daily rate was obtained
• the overall SC3 machinery was not completely stable
• the target rate was not sustained over the required period
• peak rates of 100 MB/s were observed over several hours

A rerun of the exercise is planned to demonstrate the required rates.

[Figure: Rate (MB/s) against Date for the channels CERN_Castor -> PIC_Castor-SC3, CERN_Castor -> SARA_dCache-SC3, CERN_Castor -> IN2P3_HPSS-SC3, CERN_Castor -> GRIDKA_dCache-SC3, CERN_Castor -> CNAF_Castor-SC3 and CERN_Castor -> RAL_dCache-SC3. Annotations: Required Rate, Service Intervention, SARA Problems, Many Castor 2 Problems.]

Tier-1 to Tier-1 Replication Activity On-Going

During T0-T1 replication FTS was found to be most efficient when replicating files pre-staged on disk.
• dedicated disk pools were set up at T1 sites for seed files
• 1.5 TB of seed files were transferred to dedicated disk
• FTS Servers were installed by T1 sites
• channels were set up directly between sites

Replication activity is ongoing with this exercise. The current status of this setup is shown below.

[Figure: Tier-1 to Tier-1 channel setup. Labels: SARA (FTS Server, Manage Incoming Channels), FZK, RAL (FTS Server, Manage Incoming Channels), CNAF, IN2P3 (FTS Server, Manage Incoming Channels), PIC (No FTS Server), Channels Managed by Source SE.]

Bulk File Removal Operations

Bulk removal of files was performed on completion of the T0-T1 replication.
• bulk operation of 'srm-advisory-delete' used
• takes a list of SURLs and 'removes' the physical files
• functionality added to Replica Manager and Storage Element
• additions required for the SRM Storage Element plug-in
• Replica Manager SURL resolution tools reused

Different interpretations of the SRM standard have led to different underlying behaviour between SRM solutions.

Initially, bulk removal operations were executed by a single central agent:
• the SC3 goal of 50k replicas in 24 hours was shown to be unattainable

Several parallel agents were then instantiated:
• each performing physical and catalog removal for a specific SE
• 10k replicas were removed from 5 sites in 28 hours
• performance loss observed in replica deletion on the LCG FC (see below)
• unnecessary SSL authentications are CPU intensive
• remedied by 'sessions' when performing multiple catalog operations

[Figure: Time for 100 Replica Removals (s) against Removal Phase. Phase 1: RAL. Phase 2: GRIDKA, IN2P3. Phase 3: GRIDKA, IN2P3, CNAF, PIC. Phase 4: GRIDKA, IN2P3, CNAF, PIC, RAL.]
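The two remedies described for bulk removal, one agent per SE running in parallel and a catalog 'session' that authenticates once for many operations, can be sketched as follows. This is a minimal illustration under stated assumptions: `CatalogSession`, `remove_for_se` and `run_parallel_removal` are hypothetical names, the physical removal is a placeholder rather than a real SRM call, and threads stand in for the separate agents.

```python
from collections import defaultdict
from concurrent.futures import ThreadPoolExecutor


class CatalogSession:
    """Hypothetical catalog client: one authentication, many operations."""

    def __init__(self):
        self.authentications = 0
        self.removed = []

    def __enter__(self):
        self.authentications += 1  # stands in for the costly SSL handshake
        return self

    def __exit__(self, exc_type, exc, tb):
        return False  # do not suppress exceptions

    def remove_replica(self, lfn, se):
        self.removed.append((lfn, se))


def group_by_se(replicas):
    """Split (lfn, se) tuples into one work list per Storage Element."""
    by_se = defaultdict(list)
    for lfn, se in replicas:
        by_se[se].append(lfn)
    return dict(by_se)


def remove_for_se(se, lfns):
    """One agent: physical removal, then catalog removal, for a single SE."""
    physically_removed = list(lfns)  # placeholder for a bulk storage removal
    with CatalogSession() as session:
        for lfn in physically_removed:
            session.remove_replica(lfn, se)
    return se, len(session.removed), session.authentications


def run_parallel_removal(replicas):
    """Run one removal agent per SE; returns {se: (removed, authentications)}."""
    by_se = group_by_se(replicas)
    with ThreadPoolExecutor(max_workers=max(1, len(by_se))) as pool:
        results = list(pool.map(lambda item: remove_for_se(*item), by_se.items()))
    return {se: (count, auths) for se, count, auths in results}


summary = run_parallel_removal(
    [("/lhcb/a.dst", "PIC"), ("/lhcb/b.dst", "PIC"), ("/lhcb/c.dst", "RAL")]
)
```

In this sketch each agent authenticates once regardless of how many replicas it removes, which is the effect attributed to sessions in the text; without a session the authentication count would grow with the number of catalog operations.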
