DPDK Multiple Sized Packet Buffer Pool Proposal

Gavin Hu, Principal Software Engineer, Arm

Agenda
Ø DPDK packet buffer overview
Ø Optimization Proposal
Ø Why the proposal makes sense
Ø Ethernet traffic profile model
Ø HW support
Ø Software changes required
Ø Where we are

Abstract

A high-speed network adapter transfers received packets by DMA into buffers in main memory, offloading the CPU. The DMA buffers are pre-allocated for the NIC hardware to reduce latency. When packets arrive, the NIC writes them into the pre-allocated buffers without CPU involvement, which saves CPU cycles and increases Ethernet throughput. However, the host software that pre-allocates the buffers does not know the size of arriving packets beforehand, so it has to allocate the largest buffer for every packet; in DPDK this is 2048 bytes. This is fine for big packets but wastes significant memory for smaller packets, especially on memory-constrained systems.

This proposal is to manage a pool with buffers of multiple sizes and hand it to the NIC hardware. When a packet arrives, the NIC hardware obtains its size, selects a buffer of the right size and transfers the packet. This can save a large amount of memory. Moreover, a single just-fit buffer lets the whole packet be transferred in one DMA operation. Chained buffers are then no longer needed, which saves the CPU cycles spent traversing buffer chains and reduces the number of DMA transactions.

Packet memory optimization - Issue
Ø Arm-based platforms are more heterogeneous
  Ø Some are cost constrained
  Ø Some have limited memory
  Ø Memory optimization is a necessity
  Ø Buffers/queues management hardware units available
  Ø Multiple buffer sizes are feasible
Ø DPDK supports single sized memory pool
  Ø With single buffer size
  Ø Typical buffer size is 2 KB, wasting a lot of memory
  Ø For packet sizes more than 2 KB, buffers are chained, requiring additional CPU cycles

Packet memory optimization - Solution and Further comments
Ø Each memory pool can contain several sub-pools, each with one packet size
Ø Application can create a single pool with buffers of different sizes to suit the traffic profile expected
Ø Helps achieve optimal usage of available memory as well as save CPU cycles for larger packets
Ø HW support required – need to work with partners
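The sub-pool idea above can be sketched in plain C as a lookup of the smallest buffer class that fits a packet. This is only an illustration: the four sizes in `subpool_sizes` and the name `select_subpool` are hypothetical, not part of DPDK or of the proposal, and in the proposed design this selection would be done by the NIC hardware rather than by the CPU.

```c
#include <stddef.h>
#include <stdint.h>

/* Hypothetical sub-pool buffer sizes in bytes, sorted ascending.
 * The real sizes would be chosen to suit the expected traffic profile. */
static const uint32_t subpool_sizes[] = { 128, 512, 2048, 9216 };
#define NUM_SUBPOOLS (sizeof(subpool_sizes) / sizeof(subpool_sizes[0]))

/* Return the index of the smallest sub-pool whose buffers can hold
 * pkt_len bytes, or -1 if the packet is larger than every sub-pool. */
static int select_subpool(uint32_t pkt_len)
{
    for (size_t i = 0; i < NUM_SUBPOOLS; i++) {
        if (pkt_len <= subpool_sizes[i])
            return (int)i;
    }
    return -1;
}
```

With these assumed sizes, a 64-byte packet lands in the 128-byte class and a 1500-byte packet in the 2048-byte class, so small packets no longer occupy a full 2 KB buffer.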

Benefits – to be continued
Ø Memory space saving
  Ø For 64-octet packets, the memory saving is (2048 - 64)/2048 = 97%; the real saving depends on the traffic profile, typically 50% considering the bimodal traffic model.
  Ø With 4096 Rx buffers for a single host/VM, the total memory saving is up to (2048 - 64) × 4096 ≈ 8 MB
  Ø With VMs/VNFs, the saving scales to 8 MB × number of VMs, amounting to hundreds of MBs or multiple GBs.
Ø CPU cycles saving
  Ø A single frame buffer saves CPU cycles from traversing chained buffers.
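The arithmetic behind these figures can be checked with a small C sketch. The helper names `bytes_saved` and `saving_percent` are hypothetical and used only for illustration; the sketch assumes every buffer is replaced by one just-fit buffer and ignores per-buffer metadata and headroom.

```c
#include <stdint.h>

/* Bytes saved when nb_bufs buffers of fixed_size bytes are replaced by
 * just-fit buffers of fit_size bytes. Returns 0 if nothing is saved. */
static uint64_t bytes_saved(uint32_t fixed_size, uint32_t fit_size,
                            uint32_t nb_bufs)
{
    if (fit_size >= fixed_size)
        return 0;
    return (uint64_t)(fixed_size - fit_size) * nb_bufs;
}

/* Per-buffer saving as a whole percentage, rounded to nearest. */
static unsigned int saving_percent(uint32_t fixed_size, uint32_t fit_size)
{
    if (fixed_size == 0 || fit_size >= fixed_size)
        return 0;
    return (unsigned int)(((uint64_t)(fixed_size - fit_size) * 100
                           + fixed_size / 2) / fixed_size);
}
```

For the slide's example, `saving_percent(2048, 64)` gives 97 and `bytes_saved(2048, 64, 4096)` gives 8,126,464 bytes, which is the "up to 8 MB" quoted for 4096 Rx buffers.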

Benefits - Continued
Ø DMA a whole packet at a time
  Ø A single contiguous buffer without chaining allows the whole packet to be DMA transferred at one time, which can save PCIe or other IO bandwidth.
  Ø For 9000 B jumbo frames, 4 DMA transactions are saved
Ø Virtio
  Ø Single frame buffer enables fixed mappings of Avail Ring and Descriptor ring
  Ø Avoid cache migrations, which are costly
  Ø Save vring allocation and release operations
  Ø Facilitate applying SIMD/SVE instructions
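The jumbo-frame figure follows from counting buffer segments. The sketch below assumes one DMA transaction per buffer segment and ignores mbuf headroom; the function names and the 9216-byte just-fit buffer size are hypothetical choices for illustration.

```c
#include <stdint.h>

/* Number of buffers of buf_size bytes needed to hold pkt_len bytes
 * (ceiling division). Returns 0 for an invalid buf_size of 0. */
static uint32_t segments_needed(uint32_t pkt_len, uint32_t buf_size)
{
    if (buf_size == 0)
        return 0;
    return (uint32_t)(((uint64_t)pkt_len + buf_size - 1) / buf_size);
}

/* DMA transactions saved by using buffers of fit_buf bytes instead of
 * chained buffers of chained_buf bytes, assuming one transaction per
 * segment. Returns 0 if the alternative needs as many or more segments. */
static uint32_t dma_transactions_saved(uint32_t pkt_len,
                                       uint32_t chained_buf,
                                       uint32_t fit_buf)
{
    uint32_t chained = segments_needed(pkt_len, chained_buf);
    uint32_t fitted = segments_needed(pkt_len, fit_buf);

    return chained > fitted ? chained - fitted : 0;
}
```

A 9000-byte frame needs 5 chained 2048-byte buffers but only 1 buffer of 9216 bytes, so `dma_transactions_saved(9000, 2048, 9216)` yields 4, matching the slide.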

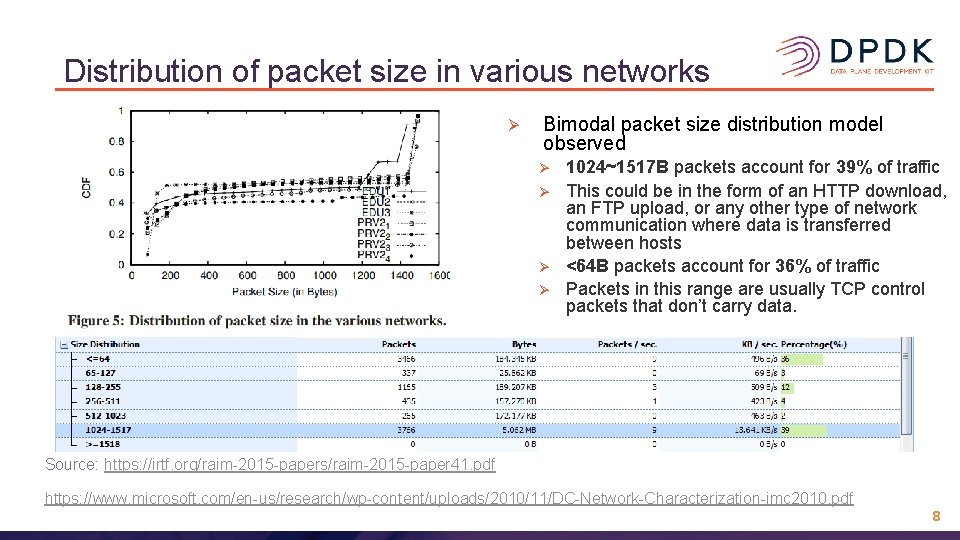

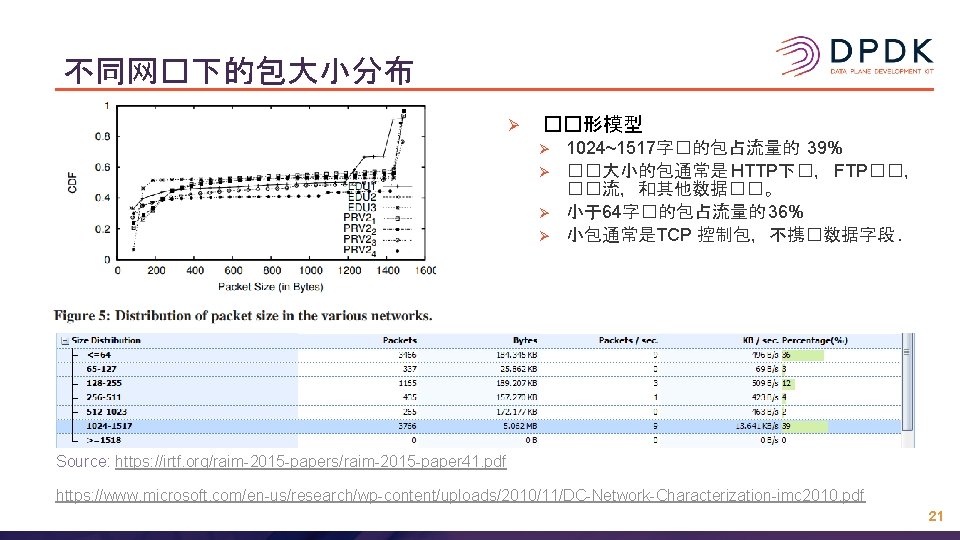

Distribution of packet size in various networks
Ø Bimodal packet size distribution model observed
  Ø 1024~1517 B packets account for 39% of traffic
    Ø This could be in the form of an HTTP download, an FTP upload, or any other type of network communication where data is transferred between hosts
  Ø <64 B packets account for 36% of traffic
    Ø Packets in this range are usually TCP control packets that don't carry data.

Source:
https://irtf.org/raim-2015-papers/raim-2015-paper41.pdf
https://www.microsoft.com/en-us/research/wp-content/uploads/2010/11/DC-Network-Characterization-imc2010.pdf

HW Support Required
Ø No special HW support required on the host processor side
  Ø x86
  Ø ARM64
Ø NIC requirements
  Ø Minimum capability to parse the packet headers and get the packet size
  Ø Hardware units/firmware managing the queues and buffers
  Ø Distribute packets to different buffer pools according to the size

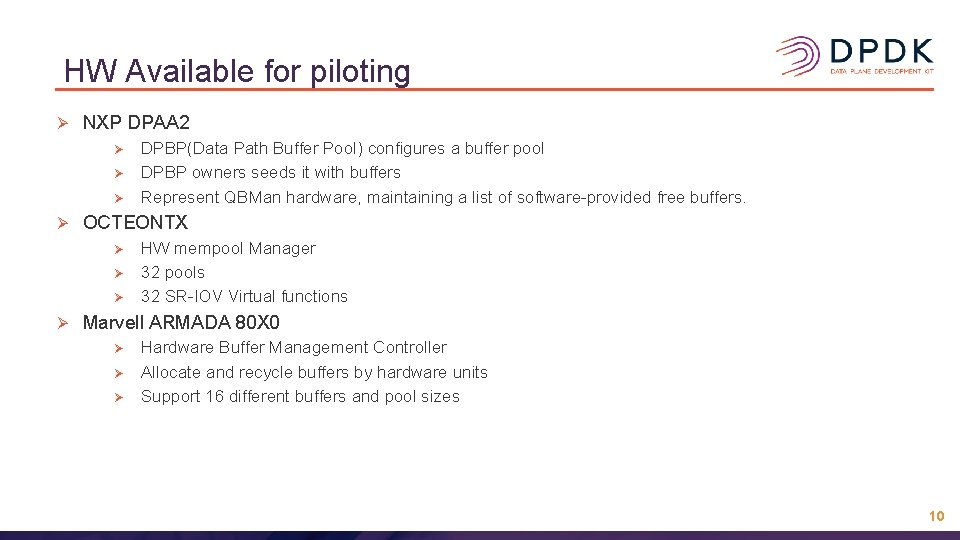

HW Available for piloting
Ø NXP DPAA2
  Ø DPBP (Data Path Buffer Pool) configures a buffer pool
  Ø The DPBP owner seeds it with buffers
  Ø Represents QBMan hardware, maintaining a list of software-provided free buffers
Ø Cavium OCTEONTX/THUNDERX2
  Ø HW mempool manager
  Ø 32 pools
  Ø 32 SR-IOV virtual functions
Ø Marvell ARMADA 80X0
  Ø Hardware Buffer Management Controller
  Ø Allocate and recycle buffers by hardware units
  Ø Support 16 different buffers and pool sizes

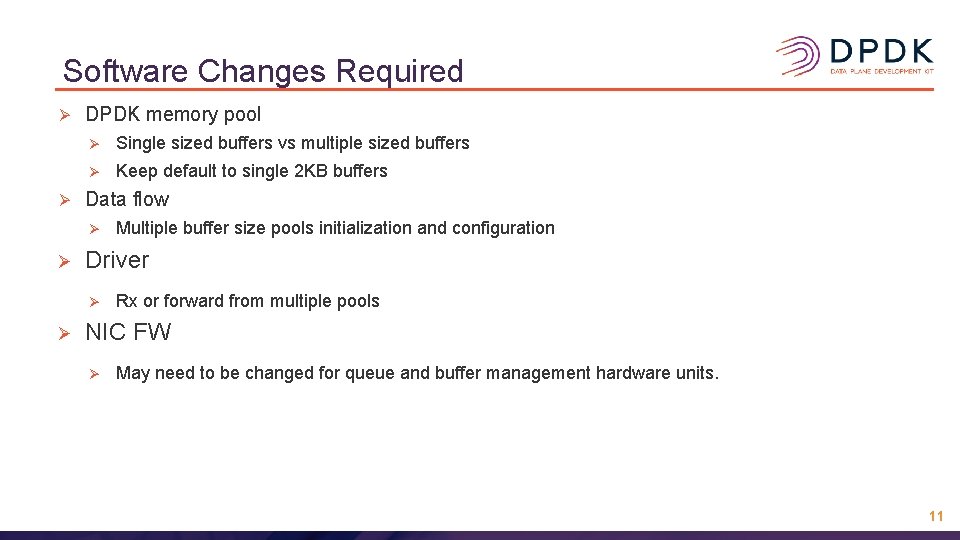

Software Changes Required
Ø DPDK memory pool
  Ø Single sized buffers vs multiple sized buffers
  Ø Keep default to single 2 KB buffers
Ø Data flow
  Ø Rx or forward from multiple pools
Ø Driver
  Ø Multiple buffer size pools initialization and configuration
Ø NIC FW
  Ø May need to be changed for queue and buffer management hardware units

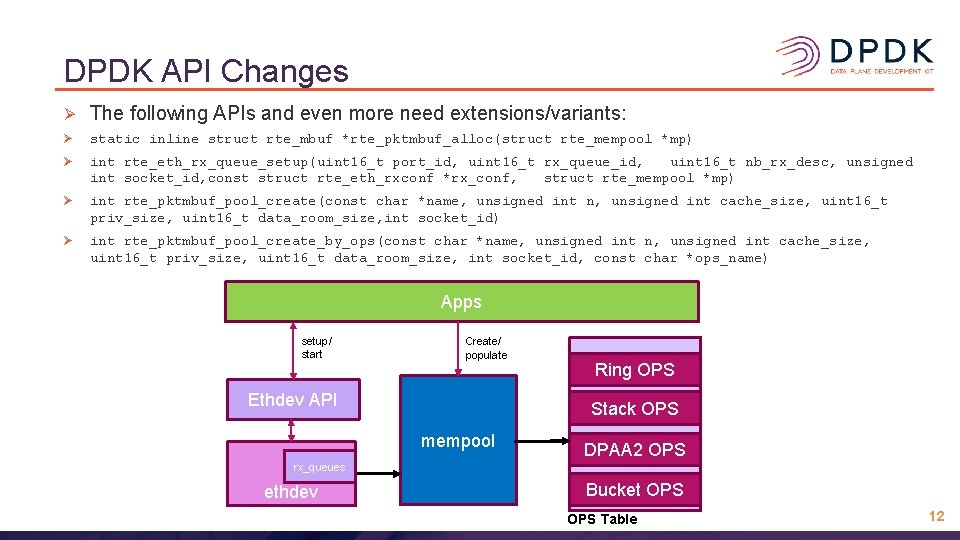

DPDK API Changes
Ø The following APIs, and more, need extensions/variants:
  Ø static inline struct rte_mbuf *rte_pktmbuf_alloc(struct rte_mempool *mp)
  Ø int rte_eth_rx_queue_setup(uint16_t port_id, uint16_t rx_queue_id, uint16_t nb_rx_desc, unsigned int socket_id, const struct rte_eth_rxconf *rx_conf, struct rte_mempool *mp)
  Ø struct rte_mempool *rte_pktmbuf_pool_create(const char *name, unsigned int n, unsigned int cache_size, uint16_t priv_size, uint16_t data_room_size, int socket_id)
  Ø struct rte_mempool *rte_pktmbuf_pool_create_by_ops(const char *name, unsigned int n, unsigned int cache_size, uint16_t priv_size, uint16_t data_room_size, int socket_id, const char *ops_name)

Diagram labels: Apps set up/start the ethdev rx_queues through the Ethdev API and create/populate the mempool; the mempool uses an OPS table (Ring OPS, Stack OPS, DPAA2 OPS, Bucket OPS).

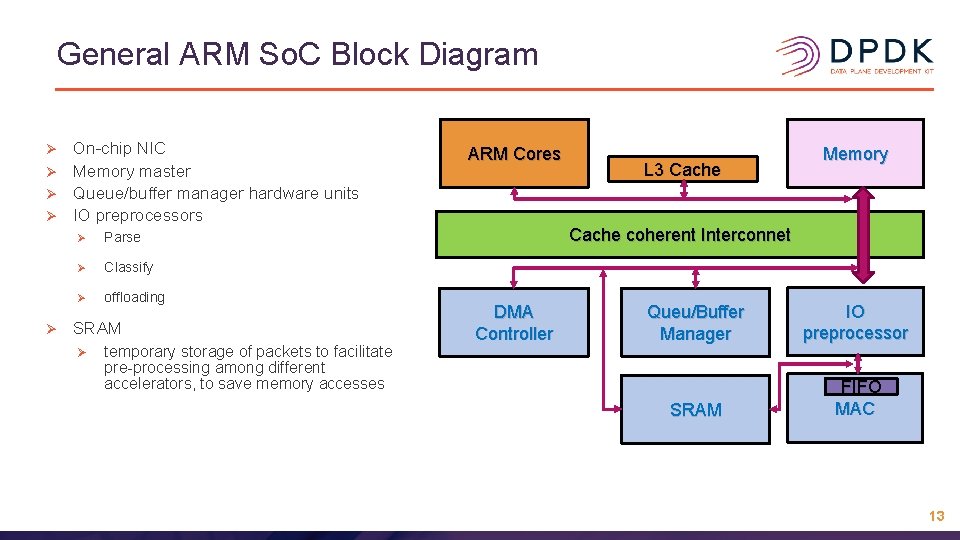

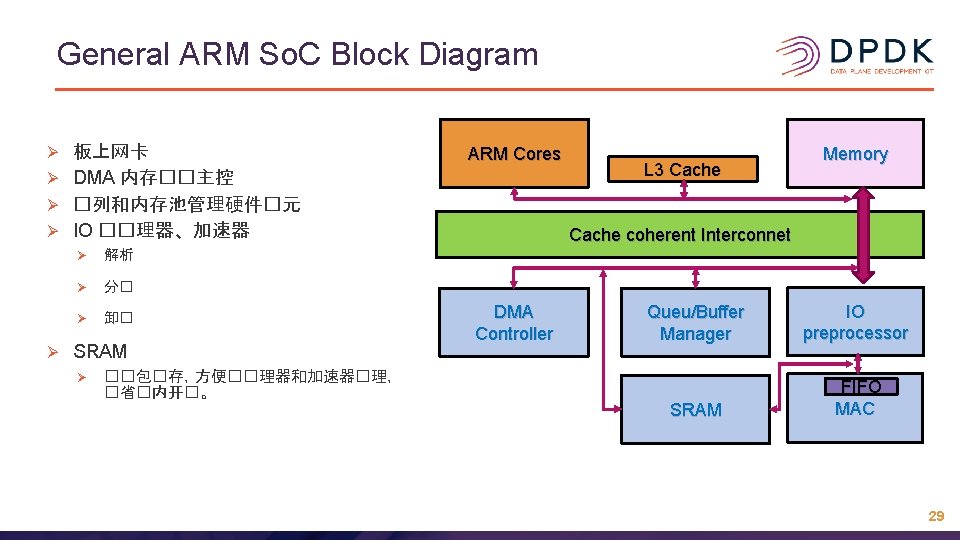

General Arm SoC Block Diagram
On-chip NIC
Ø Memory master
Ø Queue/buffer manager hardware units
Ø IO preprocessors
  Ø Parse
  Ø Classify
  Ø Offloading
Ø SRAM
  Ø Temporary storage of packets to facilitate pre-processing among different accelerators and to save memory accesses

Diagram blocks: Arm cores, L3 cache, memory, cache-coherent interconnect, DMA controller, queue/buffer manager, IO preprocessor, SRAM, FIFO, MAC.

Where we are and future work
Ø Software designs
Ø Seeking NIC support (hardware and firmware) and selecting piloting HW
  Ø Marvell ARMADA (supports long and short buffers per port)
  Ø NXP DPAA2 (supports assigning queue sets to a TC)
  Ø Reuse the multiple pools per queue to hold specific sized packets
Ø Identifying use cases for piloting the new APIs and benchmarking
  Ø L2 fwd
  Ø L3 fwd
  Ø …….
Ø RFC for API changes
  Ø rte_mempool
  Ø rte_ethdev
  Ø …….

Q & A

Thanks!





Q & A

Thanks!

Gavin Hu (胡兵全), ARM Corp.
Email: gavin.hu@arm.com
WeChat: 15202154518
