Writeback-Aware Bandwidth Partitioning for Multi-core Systems with PCM

Miao Zhou, Yu Du, Bruce Childers, Rami Melhem, Daniel Mossé
University of Pittsburgh
http://www.cs.pitt.edu/PCM

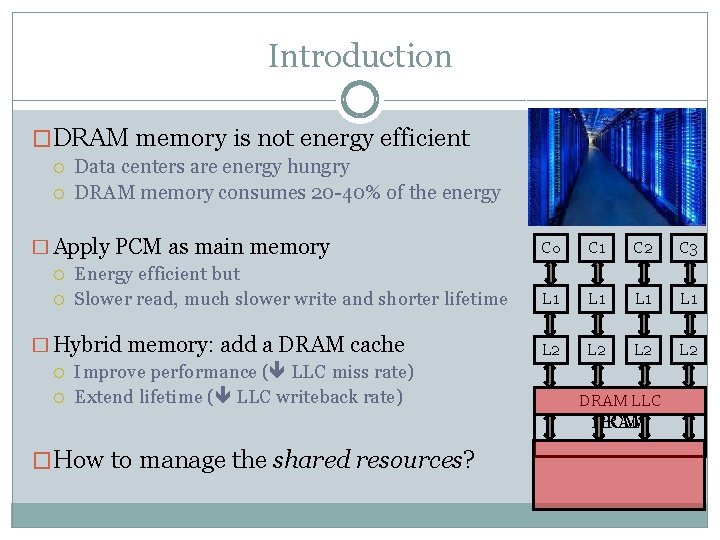

Introduction

- DRAM memory is not energy efficient
  - Data centers are energy hungry
  - DRAM memory consumes 20-40% of the energy
- Apply PCM as main memory
  - Energy efficient, but slower reads, much slower writes, and a shorter lifetime
- Hybrid memory: add a DRAM cache
  - Improve performance (lower LLC miss rate)
  - Extend lifetime (lower LLC writeback rate)
- How to manage the shared resources?

[Diagram: cores C0-C3, L1 and L2 caches, a DRAM LLC, and PCM main memory]

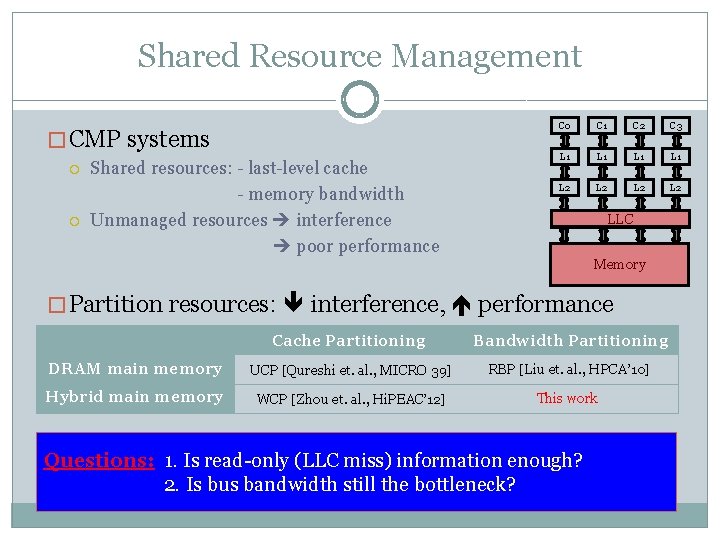

Shared Resource Management

- CMP systems
  - Shared resources: last-level cache, memory bandwidth
  - Unmanaged resources lead to interference and poor performance
- Partition resources: reduce interference, improve performance

| | Cache Partitioning | Bandwidth Partitioning |
|---|---|---|
| DRAM main memory | UCP [Qureshi et al., MICRO 39] | RBP [Liu et al., HPCA'10] |
| Hybrid main memory | WCP [Zhou et al., HiPEAC'12] | This work |

- Utility-based Cache Partitioning (UCP): tracks utility (LLC hit/miss) and minimizes overall LLC misses
- Writeback-aware Cache Partitioning (WCP): tracks and minimizes LLC misses and writebacks
- Read-only Bandwidth Partitioning (RBP): partitions the bus bandwidth based on LLC miss information

Questions:

1. Is read-only (LLC miss) information enough?
2. Is bus bandwidth the bottleneck?

[Diagram: cores C0-C3, L1 and L2 caches, a shared LLC, and memory]

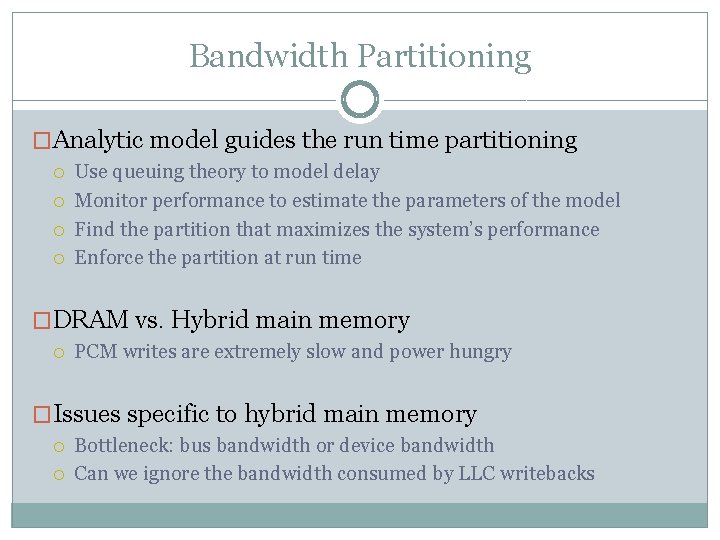

Bandwidth Partitioning

- Analytic model guides the run-time partitioning
  - Use queuing theory to model delay
  - Monitor performance to estimate the parameters of the model
  - Find the partition that maximizes the system's performance
  - Enforce the partition at run time
- DRAM vs. hybrid main memory
  - PCM writes are extremely slow and power hungry
- Issues specific to hybrid main memory
  - Bottleneck: bus bandwidth or device bandwidth?
  - Can we ignore the bandwidth consumed by LLC writebacks?

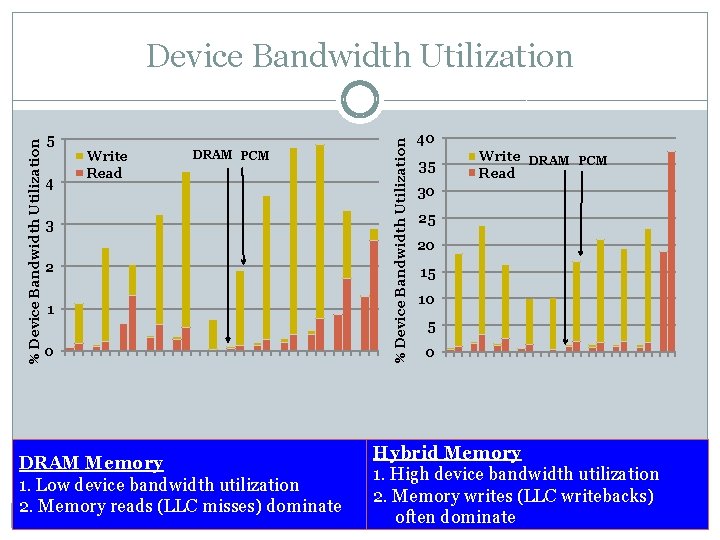

[Figure: % device bandwidth utilization, split into reads and writes, for DRAM memory and for hybrid (DRAM + PCM) memory]

DRAM memory:

1. Low device bandwidth utilization
2. Memory reads (LLC misses) dominate

Hybrid memory:

1. High device bandwidth utilization
2. Memory writes (LLC writebacks) often dominate

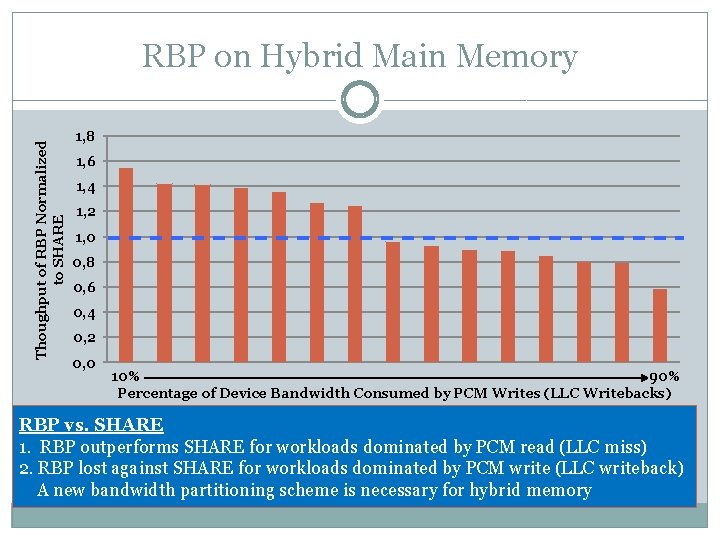

RBP on Hybrid Main Memory

[Figure: throughput of RBP normalized to SHARE, plotted against the percentage of device bandwidth consumed by PCM writes (LLC writebacks), between 10% and 90%]

RBP vs. SHARE:

1. RBP outperforms SHARE for workloads dominated by PCM reads (LLC misses)
2. RBP loses to SHARE for workloads dominated by PCM writes (LLC writebacks)

A new bandwidth partitioning scheme is necessary for hybrid memory.

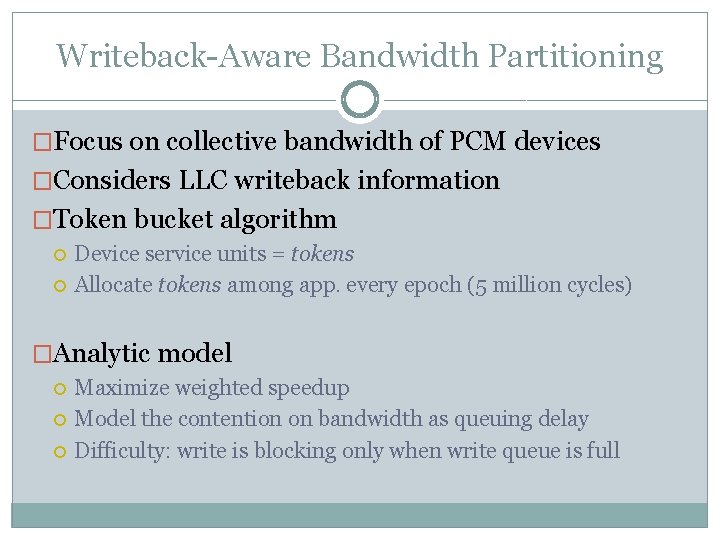

Writeback-Aware Bandwidth Partitioning

- Focuses on the collective bandwidth of PCM devices
- Considers LLC writeback information
- Token bucket algorithm
  - Device service units = tokens
  - Allocate tokens among applications every epoch (5 million cycles)
- Analytic model
  - Maximize weighted speedup
  - Model the contention on bandwidth as queuing delay
  - Difficulty: a write is blocking only when the write queue is full
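The token bucket idea above can be illustrated with a minimal sketch. The class name `TokenBucketRegulator`, the per-application `shares`, and the `cost` parameter are hypothetical and do not come from the slides; the sketch only shows how a per-epoch token allocation could gate requests to the PCM devices.

```python
from dataclasses import dataclass, field


@dataclass
class TokenBucketRegulator:
    """Hypothetical per-application token buckets, refilled once per epoch."""
    shares: dict            # app id -> fraction of device bandwidth
    tokens_per_epoch: int   # device service units available in one epoch
    tokens: dict = field(default_factory=dict)

    def start_epoch(self):
        # Refill every bucket according to the partition chosen for this epoch.
        self.tokens = {app: int(share * self.tokens_per_epoch)
                       for app, share in self.shares.items()}

    def try_issue(self, app, cost=1):
        # A request is issued only if its application still holds enough tokens.
        if self.tokens.get(app, 0) >= cost:
            self.tokens[app] -= cost
            return True
        return False


reg = TokenBucketRegulator(shares={"A": 0.75, "B": 0.25}, tokens_per_epoch=8)
reg.start_epoch()
issued_b = [reg.try_issue("B") for _ in range(3)]  # B holds only 2 tokens
```

In this toy run the third request from application B is held back until the next epoch. A slow PCM write could be charged a larger `cost` than a read, which is one possible way to reflect its longer device service time.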

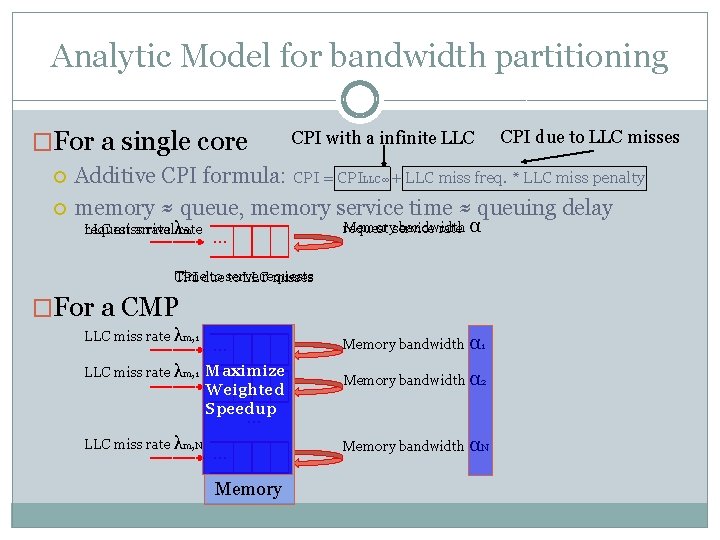

Analytic Model for Bandwidth Partitioning

- For a single core
  - Additive CPI formula: $\mathrm{CPI} = \mathrm{CPI}_{LLC\infty} + \text{LLC miss freq.} \times \text{LLC miss penalty}$
  - $\mathrm{CPI}_{LLC\infty}$ is the CPI with an infinite LLC; the second term is the CPI due to LLC misses
  - Memory ≈ queue, memory service time ≈ queuing delay
  - Request arrival rate = LLC miss rate $\lambda_m$; request service rate = memory bandwidth $\alpha$
  - Time to serve requests gives the CPI due to LLC misses
- For a CMP
  - Core $i$ has LLC miss rate $\lambda_{m,i}$ and memory bandwidth $\alpha_i$, for $i = 1, \dots, N$
  - Choose $\alpha_1, \dots, \alpha_N$ to maximize weighted speedup
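As a rough illustration of the additive CPI formula, the sketch below treats memory as a queue. The slides only say that queuing theory is used; the M/M/1 delay formula, the function names, and the numbers are assumptions made for this example.

```python
def mm1_delay(arrival_rate, service_rate):
    """Mean time in an M/M/1 system (waiting plus service), in cycles.

    M/M/1 is an assumption of this sketch, not a detail given in the slides.
    """
    if arrival_rate >= service_rate:
        raise ValueError("unstable queue: arrival rate must be below service rate")
    return 1.0 / (service_rate - arrival_rate)


def cpi_read_only(cpi_inf, misses_per_instr, miss_rate, bandwidth):
    # CPI = CPI_LLC_inf + (LLC miss freq.) * (LLC miss penalty),
    # with the miss penalty modeled as the queuing delay.
    return cpi_inf + misses_per_instr * mm1_delay(miss_rate, bandwidth)


# Made-up numbers: rates are in requests per cycle.
cpi = cpi_read_only(cpi_inf=1.0, misses_per_instr=0.01,
                    miss_rate=0.002, bandwidth=0.004)
```

Giving a core more bandwidth shortens the queuing delay and lowers its CPI, which is the trade-off the partitioning has to balance across cores.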

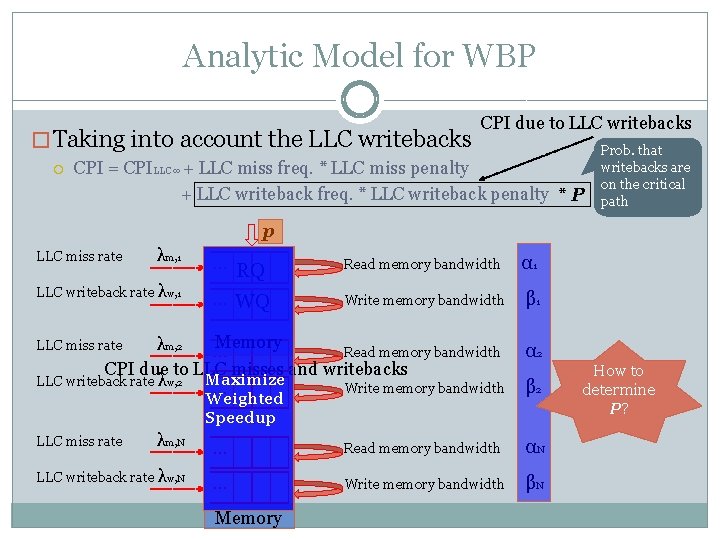

Analytic Model for WBP

- Taking into account the LLC writebacks:
  - $\mathrm{CPI} = \mathrm{CPI}_{LLC\infty} + \text{LLC miss freq.} \times \text{LLC miss penalty} + \text{LLC writeback freq.} \times \text{LLC writeback penalty} \times P$
  - The last two terms are the CPI due to LLC misses and the CPI due to LLC writebacks
  - $P$: probability that writebacks are on the critical path
- For each core $i = 1, \dots, N$
  - LLC miss rate $\lambda_{m,i}$ feeds the read queue (RQ), served with read memory bandwidth $\alpha_i$
  - LLC writeback rate $\lambda_{w,i}$ feeds the write queue (WQ), served with write memory bandwidth $\beta_i$
- Choose $\alpha_i$ and $\beta_i$ to maximize weighted speedup
- How to determine $P$?
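The writeback-aware CPI formula can be sketched in the same style. Everything below is illustrative: the M/M/1 delay, the dictionary keys, and the brute-force search over a single split `s` for two cores are assumptions of this example, standing in for whatever optimization the authors actually use.

```python
def delay(rate, bandwidth):
    # M/M/1 mean time in system (an assumption); infinite if the queue is unstable.
    return float("inf") if rate >= bandwidth else 1.0 / (bandwidth - rate)


def cpi_wbp(app, alpha, beta, p):
    # CPI = CPI_inf + miss_freq * miss_penalty + wb_freq * wb_penalty * P
    return (app["cpi_inf"]
            + app["miss_freq"] * delay(app["miss_rate"], alpha)
            + app["wb_freq"] * delay(app["wb_rate"], beta) * p)


def weighted_speedup(apps, alphas, betas, p):
    # Sum over cores of CPI_alone / CPI_shared.
    return sum(a["cpi_alone"] / cpi_wbp(a, al, be, p)
               for a, al, be in zip(apps, alphas, betas))


def best_split(apps, read_bw, write_bw, p, steps=99):
    # Two-core toy search: core 0 gets fraction s of both bandwidths.
    best = None
    for i in range(1, steps + 1):
        s = i / (steps + 1)
        ws = weighted_speedup(apps,
                              [s * read_bw, (1 - s) * read_bw],
                              [s * write_bw, (1 - s) * write_bw], p)
        if best is None or ws > best[1]:
            best = (s, ws)
    return best


app = {"cpi_inf": 1.0, "cpi_alone": 1.2, "miss_freq": 0.01, "miss_rate": 0.001,
       "wb_freq": 0.005, "wb_rate": 0.0005}
split, ws = best_split([app, dict(app)], read_bw=0.01, write_bw=0.004, p=0.5)
```

With two identical cores the search settles on an even split, as expected by symmetry. Setting `p=0` reduces the model to the read-only formula.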

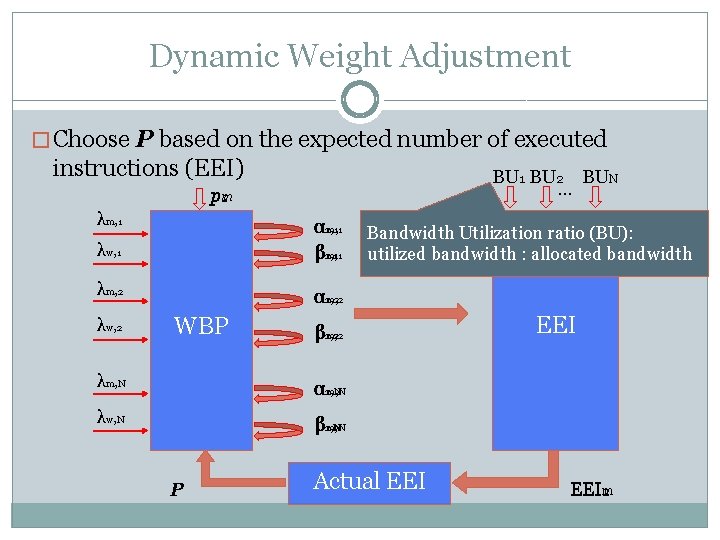

Dynamic Weight Adjustment

- Choose P based on the expected number of executed instructions (EEI)
- Bandwidth utilization ratio (BU): utilized bandwidth : allocated bandwidth

[Diagram: candidate weights $p_1, \dots, p_m$; for each candidate $j$, WBP maps the per-core rates $\lambda_{m,i}$ and $\lambda_{w,i}$ to allocations $\alpha_{j,i}$ and $\beta_{j,i}$; other labels: $BU_1, \dots, BU_N$, $EEI_1, \dots, EEI_m$, actual EEI, and the selected P]
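A minimal sketch of the two quantities named above follows. The selection rule (keep the candidate weight with the largest predicted EEI), the function names, and the toy predictions are assumptions for illustration; the slides do not spell out how EEI is estimated.

```python
def bandwidth_utilization(utilized, allocated):
    # BU = utilized bandwidth : allocated bandwidth, for one application.
    return utilized / allocated if allocated > 0 else 0.0


def pick_weight(candidates, estimate_eei):
    # Assumed rule: keep the candidate P whose predicted EEI is largest.
    return max(candidates, key=estimate_eei)


bu = bandwidth_utilization(utilized=30, allocated=40)

# Made-up EEI predictions for three candidate weights.
toy_eei = {0.0: 1.0e6, 0.5: 1.4e6, 1.0: 1.2e6}
chosen = pick_weight(toy_eei.keys(), toy_eei.get)
```

Here `estimate_eei` is a plain lookup; in a real controller it would be a model fed by the monitored BU values.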

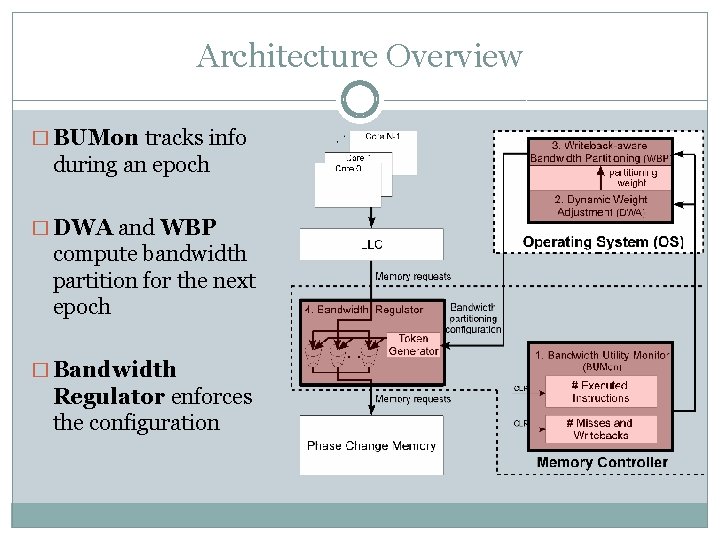

Architecture Overview

- BUMon tracks information during an epoch
- DWA and WBP compute the bandwidth partition for the next epoch
- Bandwidth Regulator enforces the configuration

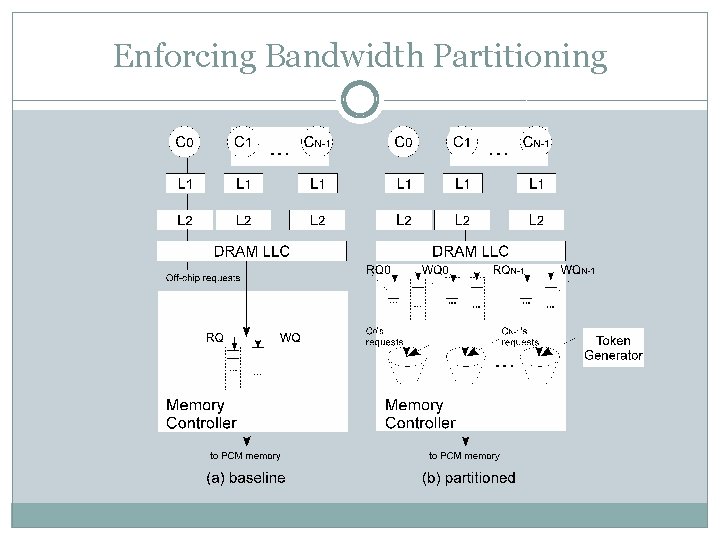

Enforcing Bandwidth Partitioning

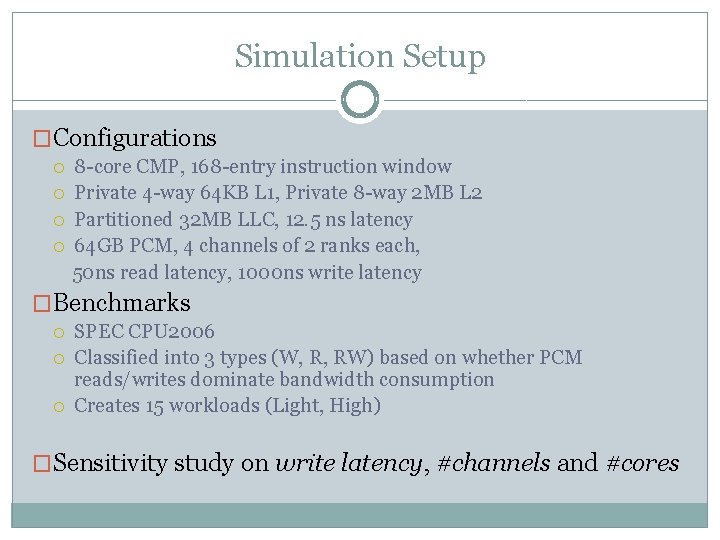

Simulation Setup

- Configurations
  - 8-core CMP, 168-entry instruction window
  - Private 4-way 64 KB L1, private 8-way 2 MB L2
  - Partitioned 32 MB LLC, 12.5 ns latency
  - 64 GB PCM, 4 channels of 2 ranks each, 50 ns read latency, 1000 ns write latency
- Benchmarks
  - SPEC CPU 2006
  - Classified into 3 types (W, R, RW) based on whether PCM reads/writes dominate bandwidth consumption
  - 15 workloads created (Light, High)
- Sensitivity study on write latency, number of channels, and number of cores

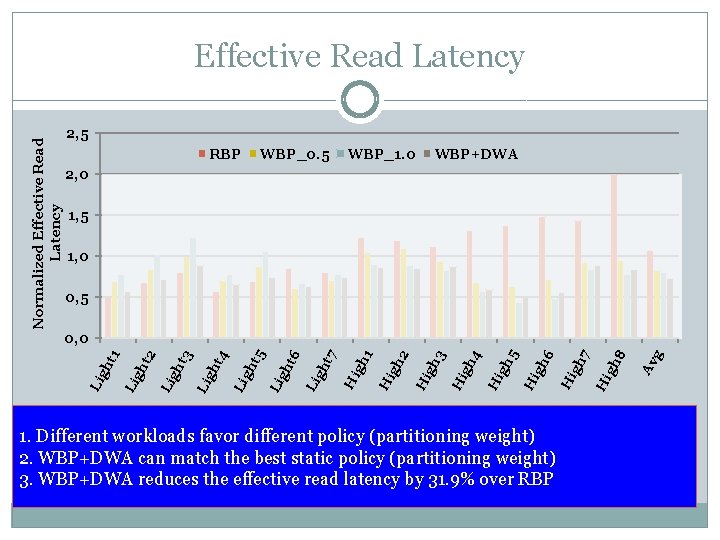

[Figure: normalized effective read latency of RBP, WBP_0.5, WBP_1.0, and WBP+DWA for workloads Light1-Light7, High1-High8, and the average]

1. Different workloads favor different policies (partitioning weights)
2. WBP+DWA can match the best static policy (partitioning weight)
3. WBP+DWA reduces the effective read latency by 31.9% over RBP

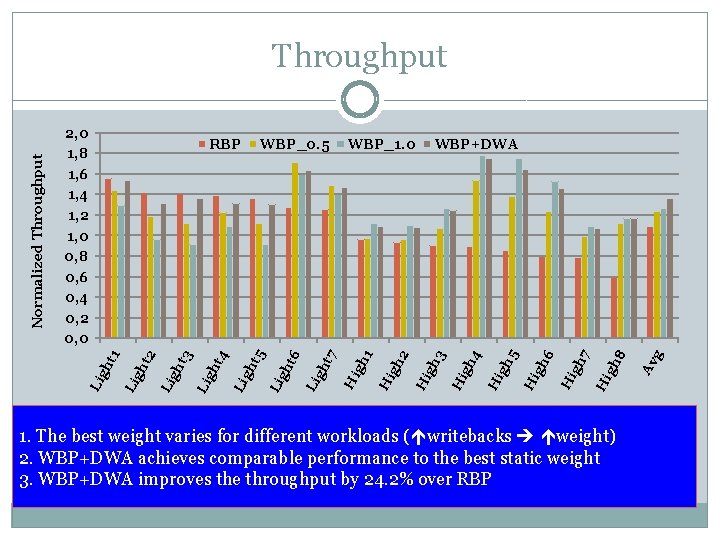

[Figure: normalized throughput of RBP, WBP_0.5, WBP_1.0, and WBP+DWA for workloads Light1-Light7, High1-High8, and the average]

1. The best weight varies for different workloads (more writebacks, higher weight)
2. WBP+DWA achieves comparable performance to the best static weight
3. WBP+DWA improves the throughput by 24.2% over RBP

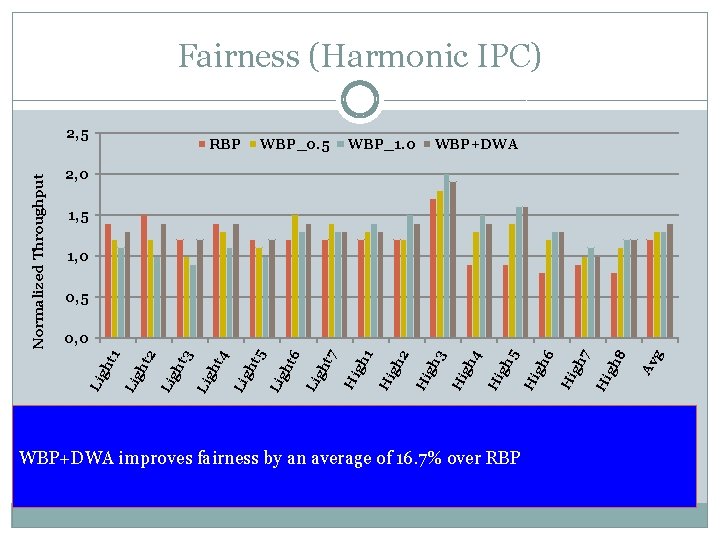

Fairness (Harmonic IPC)

[Figure: normalized fairness (harmonic IPC) of RBP, WBP_0.5, WBP_1.0, and WBP+DWA for workloads Light1-Light7, High1-High8, and the average]

WBP+DWA improves fairness by an average of 16.7% over RBP.
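For reference, the throughput and fairness metrics can be computed as in the sketch below. It assumes the commonly used definitions (weighted speedup as the sum of per-application IPC ratios, and fairness as the harmonic mean of those ratios); the slides do not state the exact formulas, and the IPC values are made up.

```python
def weighted_speedup(ipc_shared, ipc_alone):
    # Sum of each application's IPC when sharing, relative to running alone.
    return sum(s / a for s, a in zip(ipc_shared, ipc_alone))


def harmonic_speedup(ipc_shared, ipc_alone):
    # Harmonic mean of the per-application speedups; it penalizes
    # partitions that starve any single application.
    return len(ipc_shared) / sum(a / s for s, a in zip(ipc_shared, ipc_alone))


ws = weighted_speedup([1.0, 0.5], [2.0, 1.0])
hs = harmonic_speedup([1.0, 0.5], [2.0, 1.0])
```

Both applications in this toy case run at half their standalone IPC, so the weighted speedup is 1.0 and the harmonic speedup is 0.5.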

Conclusions

- PCM device bandwidth is the bottleneck in hybrid memory
- Writeback information is important (LLC writebacks consume a substantial portion of memory bandwidth)
- WBP can better partition the PCM bandwidth
- WBP outperforms RBP by an average of 24.9% in terms of weighted speedup

Thank you. Questions?
