Working Backwards Moves You Forward Informed Data Collection

Join our interactive poll! Online: pollev.com/katesenn800 or Text: KATESENN800 to 22333

Presenters • Kate Senn – West Kentucky Community and Technical College • Jonathon Berry – Kentucky Community and Technical College System Office • David C. Dixon – Southeast Kentucky Community and Technical College

Additional Author – Kenya Thomas

ASK THE AUDIENCE THE CORRECT FORMAT FOR SUBTITLES (IF USED)

Stakeholders • Internal • Faculty • Students • Administration • External • State Government • State Agencies • Federal Government • Federal Agencies • Grant funders • Citizenry (taxpayers)

It all depends upon the context • Pell/Financial Aid “year” • Fall – Spring – Summer • KCTCS • Summer – Fall – Spring • Special Programs and grants • Any three consecutive semesters • Other considerations: • Winter and Summer terms? • Preference or requirements of accreditation bodies • Government or other external budget cycles • Could reports and analysis of data be needed for different stakeholders based on different “years”?
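The effect of competing "year" definitions can be sketched in a few lines. This is a minimal illustration, not the presenters' method: the dictionary keys, the function name, and the "2015-2016" label format are all assumptions, and only the two term orderings come from the slide.

```python
# Term orderings taken from the slide; the keys and labels are hypothetical.
YEAR_DEFINITIONS = {
    "financial_aid": ["Fall", "Spring", "Summer"],  # Pell/Financial Aid "year"
    "kctcs": ["Summer", "Fall", "Spring"],          # KCTCS "year"
}

# Chronological position of each term within a single calendar year.
CALENDAR_ORDER = {"Spring": 0, "Summer": 1, "Fall": 2}


def reporting_year(term, calendar_year, definition):
    """Label the reporting year that a term falls into under one definition."""
    first_term = YEAR_DEFINITIONS[definition][0]
    # A term at or after the year's first term starts a new reporting year;
    # an earlier term belongs to the year that began the previous calendar year.
    if CALENDAR_ORDER[term] >= CALENDAR_ORDER[first_term]:
        start = calendar_year
    else:
        start = calendar_year - 1
    return f"{start}-{start + 1}"


print(reporting_year("Summer", 2016, "financial_aid"))  # 2015-2016
print(reporting_year("Summer", 2016, "kctcs"))          # 2016-2017
```

The same Summer 2016 enrollment lands in two different reporting years, which is why a report built for one stakeholder may not match a report built for another.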

Program?

All depends upon your “goal” • Embedded and stackable credentials • Multiple entry and exit points • General Studies • AS student could want exposure to computer hardware and networking • Overlapping programs • CIT and Criminal Justice have similar certificates so a student could earn credentials in both areas. • Performance-based Funding

Before we tell you what we did, let’s tell you who we are!

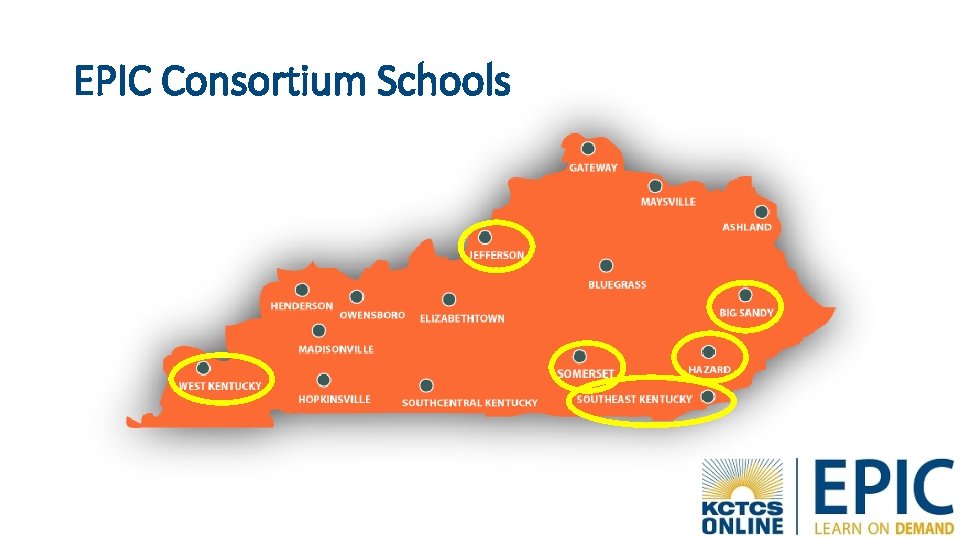

EPIC Consortium Schools

WHO WE ARE • Enhanced and expanded the Learn on Demand offerings in the areas of Computer and Information Technologies and Medical Information Technology. • Created or modified delivery options for courses supporting multiple degree programs and stackable credentials • Introduced adaptive learning technologies within new and revised modules and courses. • Increased student support and tracking • Partnered with regional and national employers to ensure the best learning environment and preparation for the workforce.

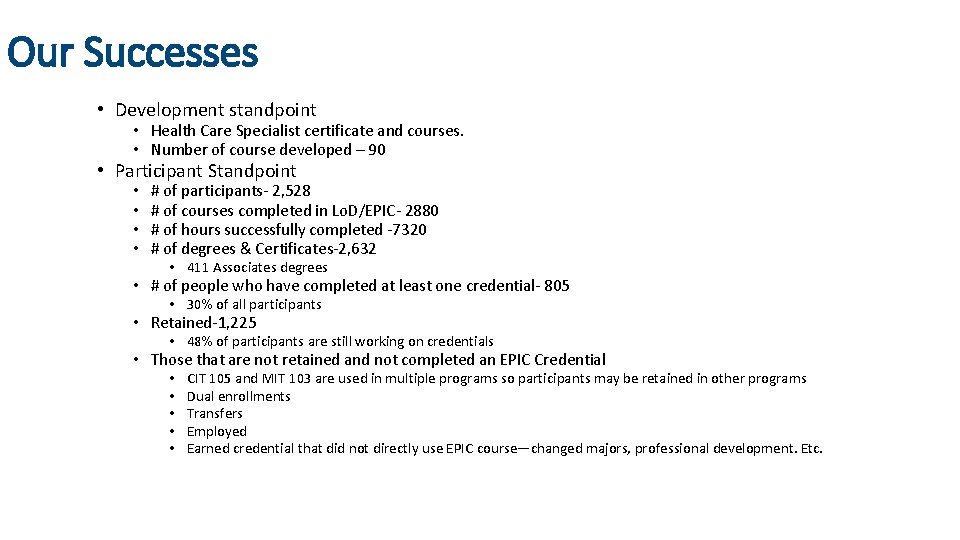

Our Successes • Development standpoint • Health Care Specialist certificate and courses • Number of courses developed: 90 • Participant standpoint • Number of participants: 2,528 • Number of courses completed in LoD/EPIC: 2,880 • Number of hours successfully completed: 7,320 • Number of degrees and certificates: 2,632 • 411 associate degrees • Number of people who have completed at least one credential: 805 • 30% of all participants • Retained: 1,225 • 48% of participants are still working on credentials • Those who were not retained and did not complete an EPIC credential • CIT 105 and MIT 103 are used in multiple programs, so participants may be retained in other programs • Dual enrollments • Transfers • Employed • Earned a credential that did not directly use an EPIC course (changed majors, professional development, etc.)

Working Backwards Moves You Forward: Informed Data Collection • Working backwards? • Project Data Domains: • Planning data collection • Entering the data • Data analysis and reporting • Goal of informed data collection: • Optimally quantify success

In the beginning… • We had to recruit students • We had to collect information about our students • Pressure to quickly enter/analyze data: • To show results to gain support • To highlight early successes • To develop strategies for continued success

PLANNING DATA ENTRY ANALYSIS What We Did, What We Could Have Done Better, and Lessons Learned

Planning – what we did • Clarified data terms as needed • Contracted a data system with an incomplete understanding of reporting requirements • (False) Assumptions: • Data terms are equivalent internally and externally • Single, comprehensive reporting requirements in a central document from granting body • Considerable past experience of contracted data system makes them the authority for reporting metrics

Planning – what we could have done better • Analyze all data items before collecting any data • Communicate and verify operational definitions with granting body • Map out each data element and how they are interrelated • Know how changing one item will impact others • Ensure the database management system works optimally with existing internal data sources • Make such integration a high priority • Form a data committee • Responsible for data mapping and clarification of data elements
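A data map of this kind can be sketched as a small dependency graph, which makes it possible to ask what a change to one element will affect. The element names and relationships below are hypothetical examples, not the project's actual data map.

```python
from collections import deque

# Hypothetical data map: each element lists the elements derived from it.
DATA_MAP = {
    "enrollment_date": ["participant_status"],
    "participant_status": ["retention_rate", "completion_rate"],
    "credential_awarded": ["completion_rate"],
    "retention_rate": [],
    "completion_rate": [],
}


def impacted_by(element, data_map):
    """Return every element downstream of `element`, sorted by name."""
    seen = set()
    queue = deque(data_map.get(element, []))
    while queue:
        current = queue.popleft()
        if current in seen:
            continue
        seen.add(current)
        # Follow the chain: anything derived from `current` is impacted too.
        queue.extend(data_map.get(current, []))
    return sorted(seen)


print(impacted_by("enrollment_date", DATA_MAP))
# ['completion_rate', 'participant_status', 'retention_rate']
```

Even a map this small shows that redefining one collected field can ripple into several reported metrics.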

Planning – Lessons Learned • Thoroughly audit existing data sources • What can be linked – what can be imported – what MUST be entered • Ask more questions – assume nothing! • What is a "participant"? • Don't assume your DB supplier knows everything. Don't let them sell you a canned DB.

Planning – Lessons Learned • Expect… the unexpected! • Changes will happen within the life of a grant • Changes at the local level (residency policy) • Granting body clarifying definitions • "Completer" • Transfer Date vs Employment • Thorough planning and data mapping will allow for quick adjustments

Entry – What we did • Group training for data collection and entry • Data entry began as soon as students enrolled • Strong desire to quantify early successes • Error checking began in the second half of the grant • Regular communication of data entry guidelines and error reports (second half of grant)

Entry – What we could have done better • Individualized training to meet the needs of staff with diverse data backgrounds • Data entry and related training should not begin until data planning is complete • Comprehensive data dictionary, data map, and data entry manual • Establish error checking as a routine process from the beginning • Communicate all guidelines and procedures up front • Send data communications and error reports regularly from the start
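Routine error checking against a data dictionary might look like the sketch below. The field names, allowed values, and message wording are assumptions made for illustration; the real dictionary would come from the grant's reporting requirements.

```python
# Hypothetical data dictionary: field -> (required?, allowed values or None).
DATA_DICTIONARY = {
    "student_id": (True, None),
    "college": (True, None),
    "status": (True, {"enrolled", "retained", "completed", "exited"}),
    "employment_status": (False, {"employed", "unemployed", "incumbent"}),
}


def check_record(record, dictionary=DATA_DICTIONARY):
    """Return a list of error messages for one data entry record."""
    errors = []
    for field, (required, allowed) in dictionary.items():
        value = record.get(field)
        if value in (None, ""):
            if required:
                errors.append(f"{field}: missing required value")
            continue
        if allowed is not None and value not in allowed:
            errors.append(f"{field}: unexpected value {value!r}")
    # Fields that the dictionary does not define are flagged as well.
    for field in record:
        if field not in dictionary:
            errors.append(f"{field}: not defined in the data dictionary")
    return errors


print(check_record({"student_id": "A2", "status": "graduated"}))
# ['college: missing required value', "status: unexpected value 'graduated'"]
```

Running a check like this on every record from the first day of entry turns the error report into a routine by-product rather than a second-half cleanup effort.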

Entry – Lessons Learned • Conceptualize data entry as a whole • Timeline of key data entry deadlines across the entire project • Reduce, or at least compensate for, human error • Communication is key! • Especially in multi-college projects

Analysis—What we did • Didn't manually calculate outcomes until early in Year 3 (4-year grant) • We trusted the contracted data system's automated reports • Didn't question their data definitions and metric logic • Anyone who could access the data system could query reports • Data findings were sometimes presented to stakeholders without fully understanding the contents of the automated reports

Analysis—What we could have done better • Work backwards: • No student outcomes? No problem! • A focus on student success throughout project will cultivate success: data can sharpen that focus • Don't be afraid to ask questions • Data analysis should be incorporated into training materials • Or if this isn’t right for your institution, querying privileges should be restricted • Analyst does not need to pull all reports, but should be listed as a reference on all externally distributed reports in case of additional questions

Analysis – Lessons Learned • Realistically, not everyone on the project can devote time to mastering data definitions and understanding reporting metric logic • The analyst should be the authority on data and their expertise should be utilized • Raw data can be interpreted many ways, and not always correctly • Operational definitions are key • It doesn’t have to be perfect, but it must be consistent!
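The point about operational definitions can be illustrated with a small sketch in which the same raw records yield different "completer" counts under two definitions. Both definitions and all record fields are hypothetical; they are not the granting body's definitions.

```python
# Hypothetical records: each credential notes whether a grant course was used.
records = [
    {"student_id": "A1",
     "credentials": [{"name": "Certificate", "used_grant_course": True}]},
    {"student_id": "A2",
     "credentials": [{"name": "Associate degree", "used_grant_course": False}]},
    {"student_id": "A3", "credentials": []},
]


def completers_any_credential(records):
    """Definition 1 (assumed): anyone who earned any credential."""
    return [r["student_id"] for r in records if r["credentials"]]


def completers_grant_credential(records):
    """Definition 2 (assumed): only credentials that used a grant course."""
    return [
        r["student_id"]
        for r in records
        if any(c["used_grant_course"] for c in r["credentials"])
    ]


print(completers_any_credential(records))    # ['A1', 'A2']
print(completers_grant_credential(records))  # ['A1']
```

Neither count is wrong on its own; the risk is reporting one count in one place and the other elsewhere.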

SUCCESS Planning, Entry, and Analysis

Successes: Planning • Worked with consultant (JFF) • Worked with members of the team from different backgrounds • Grant experience • Curriculum outcomes • Student services • Institutional Research • Ultimately, we overcame the challenges of incomplete planning • Regular meetings with all grant staff • Rapid communication of changes: “damage control” • Amendments to training materials and manuals

Successes: Data Entry • Data manual • Broken down by form/report • “Elephant recipes” • Automated error reports built into data system • Consistent, manually built ad hoc error reports • Deadlines staggered but firm • Compensation for limitations of contracted data system • Connecting existing internal and external data sources to grant data system • Identifying inconsistencies between data system's automated metric calculations and grant reporting requirements in time to make adjustments

Successes: Analysis • Grant-level surveys and reports • Evidence of some success • Internal benchmark for comparison: • Under-reported incumbents? • Under-counting employment? • Credentials missing from the grant data system? • Utilizing local data sources: • Ready to Work and Path to Promise • Program Reviews • Analyst housed in system Institutional Research office • Could provide local & system level reports • More direct data (less human error) • Benchmarks – TEDS, data from other KCTCS projects • Measure if reports are complete: "sniff tests"
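A "sniff test" of this kind can be sketched as a comparison between the grant data system and an internal benchmark source. The function name, the 5% tolerance, and the sample IDs are assumptions chosen for illustration only.

```python
def sniff_test(grant_system_ids, benchmark_ids, tolerance=0.05):
    """Compare credential holders in the grant system against a benchmark.

    `tolerance` is a hypothetical threshold for the share of benchmark
    records allowed to be absent from the grant data system.
    """
    grant = set(grant_system_ids)
    benchmark = set(benchmark_ids)
    missing = sorted(benchmark - grant)
    share_missing = len(missing) / len(benchmark) if benchmark else 0.0
    return {
        "missing_from_grant_system": missing,
        "share_missing": share_missing,
        "passes": share_missing <= tolerance,
    }


result = sniff_test(["A1", "A2"], ["A1", "A2", "A3", "A4"])
print(result["missing_from_grant_system"])  # ['A3', 'A4']
print(result["passes"])                     # False
```

A failed test does not say which source is right, only that the two disagree enough to warrant a closer look.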

Successes: Analysis • Identifying external data sources: • WIOA: data source for comparison • Clearinghouse (NSC) • Success and "exiting" stop-outs • Unemployment Insurance (UI) • Showed stronger success without individual data • Another sniff test: our collected data was not complete • Applying results of analyses: • Promoting services • Addressing needs of specific groups • Monitoring progression towards goals • Informing policy decisions

Wrapping up…

Data Informed Leadership • Big Picture • System Level Thinking • Evaluation

Conclusion • Working backwards from performance metrics ensures data consistency and integrity throughout the project • Informed Data Design: • Establishes basis for accurate project evaluation • Informs student services and other support • Empowers student success • More successes will be exposed and supported empirically

Thanks! Questions?

Working Backwards Moves You Forward: Informed Data Collection by Kate Senn and Jonathon Berry is licensed under a Creative Commons Attribution 4.0 International License.

This workforce product was funded by a grant awarded by the U.S. Department of Labor's Employment and Training Administration. The product was created by the grantee and does not necessarily reflect the official position of the U.S. Department of Labor. The U.S. Department of Labor makes no guarantees, warranties, or assurances of any kind, express or implied, with respect to such information, including any information on linked sites and including, but not limited to, accuracy of the information or its completeness, timeliness, usefulness, adequacy, continued availability, or ownership.
