WLCG Progress and Challenges Ian Bird CHEP 2010

WLCG Progress and Challenges. Ian Bird, CHEP 2010, 18th October 2010, Taipei. Ian.Bird@cern.ch

(W)LCG at previous CHEPs...
• 2000 (Padova):
  – Was the start of grids in HEP. US, EU, Nordic efforts. GriPhyN, DataGrid approved.
• 2001 (Beijing):
  – LCG Project approved. PPDG, iVDGL, DataTag.
• 2003 (San Diego):
  – Deployment had just started, had few sites, main LCG support structures under construction
  – Resource plan for 2008 was x2 (CPU), x5 (disk) below what we have in place today
• 2004 (Interlaken):
  – 78 sites, 9000 CPU, 6.5 PB disk
  – LCG-2; 1st data challenges, MC productions
• 2006 (Mumbai):
  – TDRs written; Baseline Services agreed
  – Agreed on SRM storage classes and started SRM requirements round...
• 2007 (Vancouver):
  – Worry: x5-6 away from scale of needed resources+jobs; x2-3 below data transfer rates needed
  – Thought we were only 1 year from data taking

At CHEP ’09...
• Neil Geddes (“Can WLCG Deliver?”):
  – 500k jobs/day; good data transfer rates
  – Relatively few real users trying analysis
  – Efforts to improve reliability
  – Effort required to manage the system was high
  – Reminded of warning from CHEP 2004:
    • “... software/computing to address the early phase of LHC operation, not to hinder the fast delivery of physics results...”
• Les Robertson (“What’s Next?”):
  – Grids are all about sharing... Grids are also flexible... HEP and others have shown that Grids [can do what we need]... stimulated high energy physics to organise its computing
  – This will be the workhorse for production data handling for many years and as such must be maintained and developed through the first waves of data taking
  – BUT: the landscape has changed dramatically over the past decade – could there be a revolution for physics analysis?
• Scale Testing exercise (STEP 09) was conceived

Outline
• Progress in computing for LHC
  – Where are we today & how we got here
  – What is today’s grid compared to early ideas?
• ... and the outlook?
  – What are the open questions?
  – Grids, clouds? Sustainability?
  – What next?

WLCG Collaboration Status
Tier 0; 11 Tier 1s; 64 Tier 2 federations
Sites labelled on the map: CERN, Ca-TRIUMF, US-BNL, US-FNAL, Amsterdam/NIKHEF-SARA, Bologna/CNAF, Taipei/ASGC, NDGF, De-FZK, Barcelona/PIC, Lyon/CCIN2P3, UK-RAL
Today we have 49 MoU signatories, representing 34 countries: Australia, Austria, Belgium, Brazil, Canada, China, Czech Rep, Denmark, Estonia, Finland, France, Germany, Hungary, Italy, India, Israel, Japan, Rep. Korea, Netherlands, Norway, Pakistan, Poland, Portugal, Romania, Russia, Slovenia, Spain, Sweden, Switzerland, Taipei, Turkey, UK, Ukraine, USA.

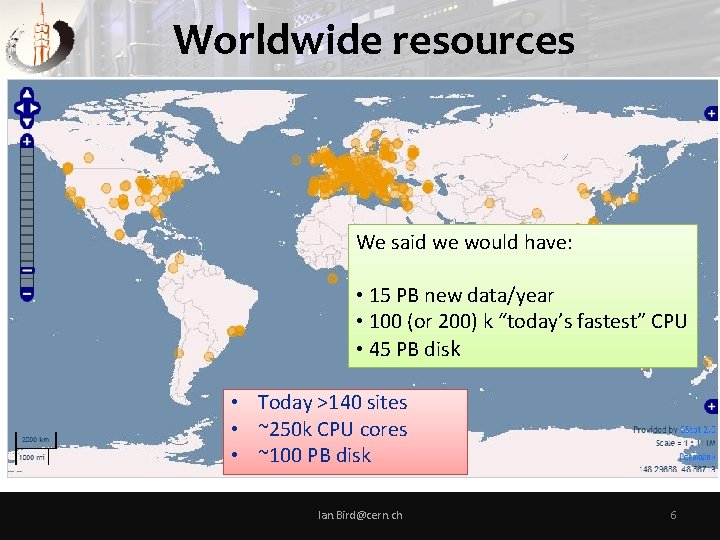

Worldwide resources
We said we would have:
• 15 PB new data/year
• 100 (or 200) k “today’s fastest” CPU
• 45 PB disk
Today:
• >140 sites
• ~250 k CPU cores
• ~100 PB disk

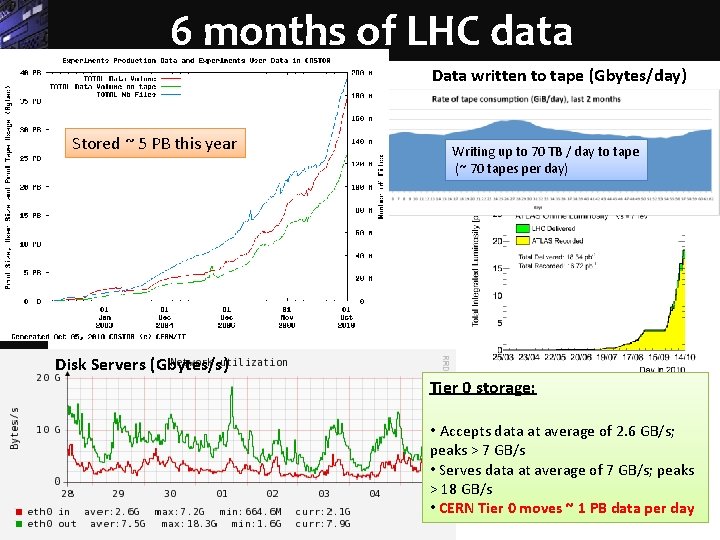

6 months of LHC data
• Stored ~5 PB this year
• Writing up to 70 TB/day to tape (~70 tapes per day)
Tier 0 storage:
• Accepts data at average of 2.6 GB/s; peaks > 7 GB/s
• Serves data at average of 7 GB/s; peaks > 18 GB/s
• CERN Tier 0 moves ~1 PB data per day
[Plots: data written to tape (Gbytes/day); disk servers (Gbytes/s)]
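The Tier 0 figures on this slide can be cross-checked with a little unit arithmetic. The sketch below is only a consistency check, assuming decimal units (1 TB = 1000 GB) and assuming that the "~1 PB per day" figure counts both accepted and served data, which the slide does not state explicitly. The function and variable names are illustrative.

```python
SECONDS_PER_DAY = 24 * 60 * 60  # 86400


def gb_per_s_to_tb_per_day(rate_gb_s: float) -> float:
    """Convert a sustained rate in GB/s to a daily volume in TB (decimal units)."""
    return rate_gb_s * SECONDS_PER_DAY / 1000.0


def tb_per_day_to_gb_per_s(volume_tb: float) -> float:
    """Convert a daily volume in TB to the equivalent sustained rate in GB/s."""
    return volume_tb * 1000.0 / SECONDS_PER_DAY


# Average rates quoted on the slide
accept_tb = gb_per_s_to_tb_per_day(2.6)   # data accepted by Tier 0, TB/day
serve_tb = gb_per_s_to_tb_per_day(7.0)    # data served by Tier 0, TB/day

# Assumption: "moves ~1 PB per day" is the sum of both directions
total_pb = (accept_tb + serve_tb) / 1000.0

# Peak tape writing of 70 TB/day expressed as a sustained rate
tape_gb_s = tb_per_day_to_gb_per_s(70.0)

print(f"accepted: {accept_tb:.1f} TB/day, served: {serve_tb:.1f} TB/day")
print(f"total moved: {total_pb:.2f} PB/day")
print(f"70 TB/day to tape = {tape_gb_s:.2f} GB/s sustained")
```

Under these assumptions the average rates add up to roughly 0.8 PB per day, which is in line with the "~1 PB" quoted once peaks are taken into account.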

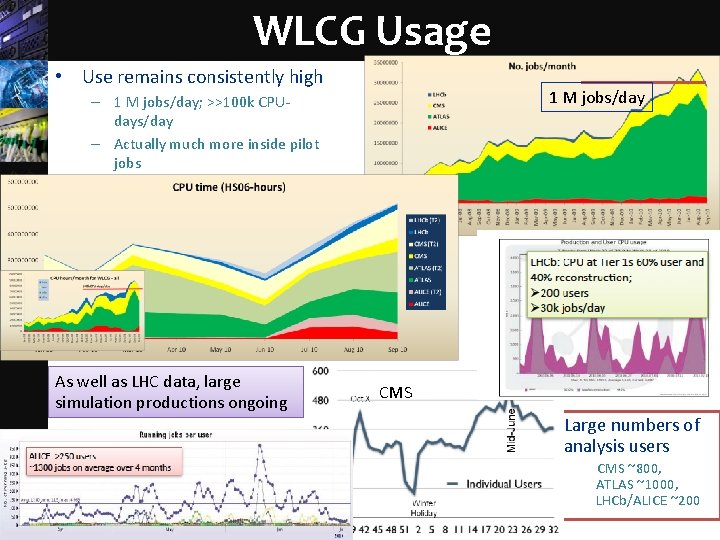

WLCG Usage
• Use remains consistently high
  – 1 M jobs/day; >>100 k CPU-days/day
  – Actually much more inside pilot jobs
• As well as LHC data, large simulation productions ongoing
• Large numbers of analysis users: CMS ~800, ATLAS ~1000, LHCb/ALICE ~200
• ALICE: ~200 users, 5-10% of Grid resources
[Plots: 1 M jobs/day; 100 k CPU-days/day; panels labelled LHCb and CMS]

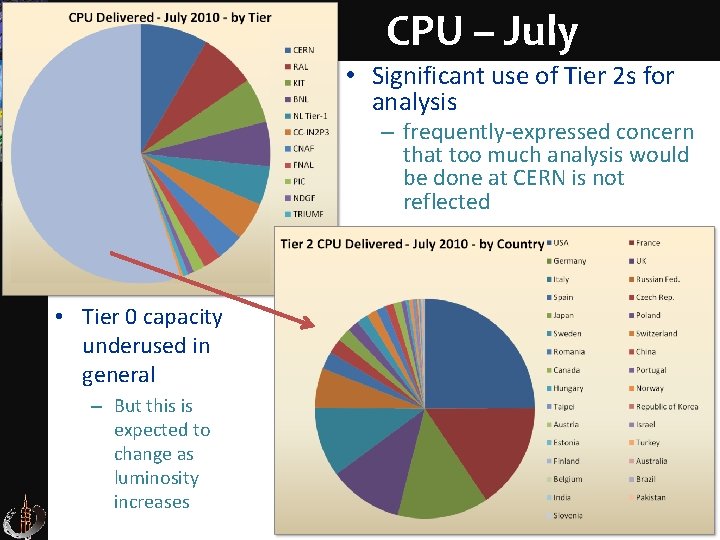

CPU – July
• Significant use of Tier 2s for analysis – frequently-expressed concern that too much analysis would be done at CERN is not reflected
• Tier 0 capacity underused in general
  – But this is expected to change as luminosity increases

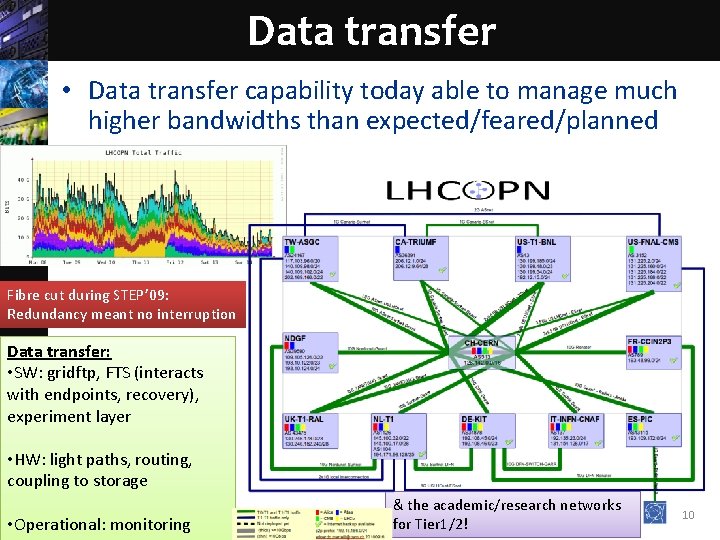

Data transfer
• Data transfer capability today able to manage much higher bandwidths than expected/feared/planned
• Fibre cut during STEP’09: redundancy meant no interruption
Data transfer:
• SW: gridftp, FTS (interacts with endpoints, recovery), experiment layer
• HW: light paths, routing, coupling to storage
• Operational: monitoring
& the academic/research networks for Tier 1/2!

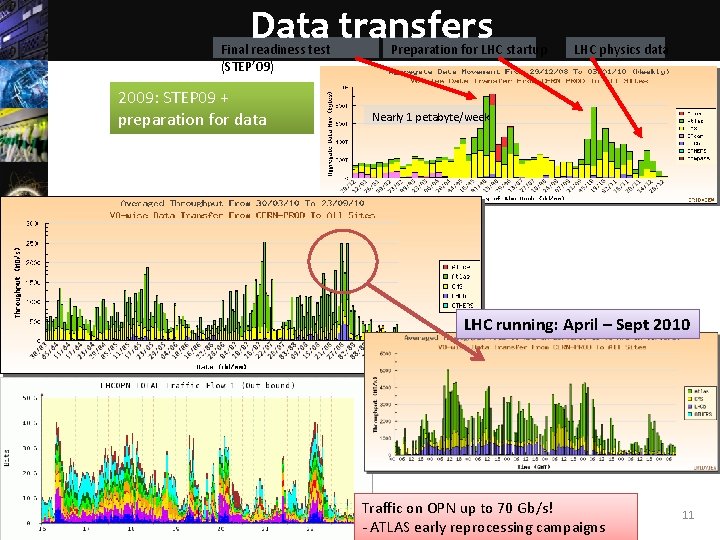

Data transfers
[Plot annotations: Final readiness test (STEP’09); 2009: STEP09 + preparation for data; Preparation for LHC startup; LHC physics data; Nearly 1 petabyte/week]
• LHC running: April – Sept 2010
• Traffic on OPN up to 70 Gb/s! – ATLAS early reprocessing campaigns

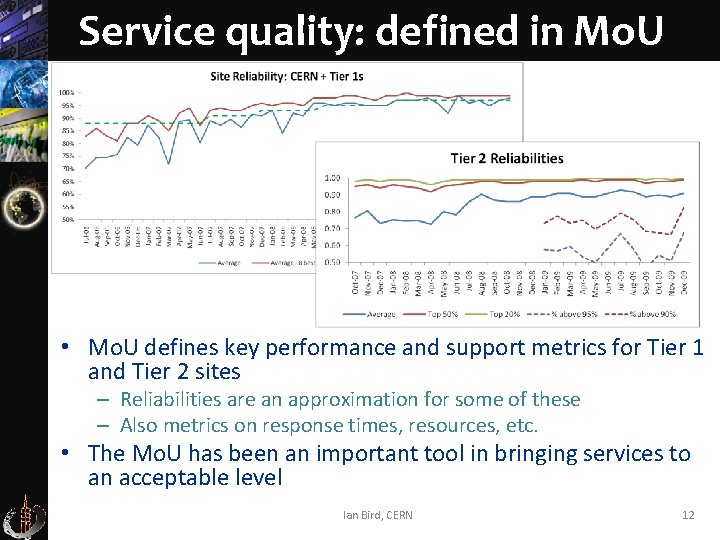

Service quality: defined in MoU
• MoU defines key performance and support metrics for Tier 1 and Tier 2 sites
  – Reliabilities are an approximation for some of these
  – Also metrics on response times, resources, etc.
• The MoU has been an important tool in bringing services to an acceptable level

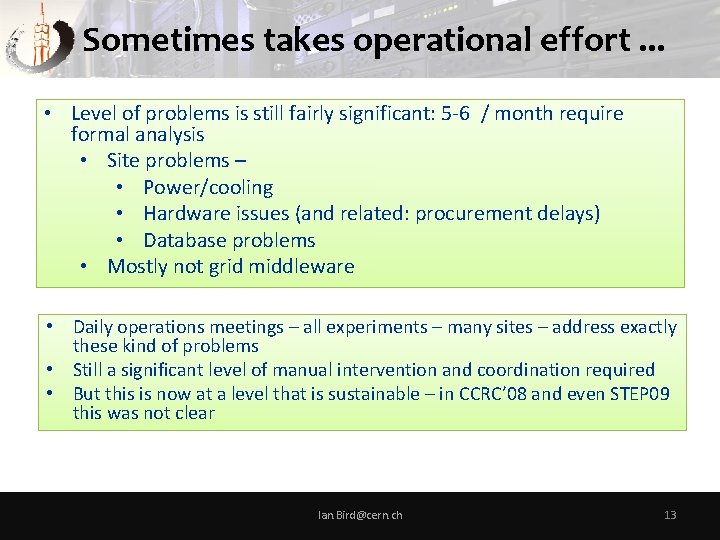

Sometimes takes operational effort...
• Level of problems is still fairly significant: 5-6 / month require formal analysis
• Site problems:
  – Power/cooling
  – Hardware issues (and related: procurement delays)
  – Database problems
  – Mostly not grid middleware
• Daily operations meetings – all experiments – many sites – address exactly these kinds of problems
• Still a significant level of manual intervention and coordination required
• But this is now at a level that is sustainable – in CCRC’08 and even STEP09 this was not clear

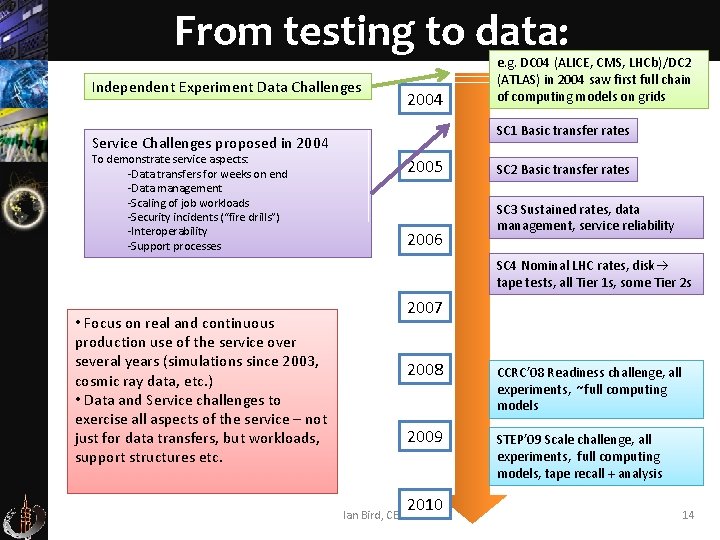

From testing to data:
• Independent Experiment Data Challenges
  – e.g. DC04 (ALICE, CMS, LHCb)/DC2 (ATLAS) in 2004 saw first full chain of computing models on grids
• Service Challenges proposed in 2004 to demonstrate service aspects:
  – Data transfers for weeks on end
  – Data management
  – Scaling of job workloads
  – Security incidents (“fire drills”)
  – Interoperability
  – Support processes
• Timeline:
  – 2004: SC1 – Basic transfer rates
  – 2005-2006: SC2 – Basic transfer rates; SC3 – Sustained rates, data management, service reliability; SC4 – Nominal LHC rates, disk-tape tests, all Tier 1s, some Tier 2s
  – 2008: CCRC’08 – Readiness challenge, all experiments, ~full computing models
  – 2009: STEP’09 – Scale challenge, all experiments, full computing models, tape recall + analysis
• Focus on real and continuous production use of the service over several years (simulations since 2003, cosmic ray data, etc.)
• Data and Service challenges to exercise all aspects of the service – not just for data transfers, but workloads, support structures etc.

Successes:
✓ We have a working grid infrastructure
✓ Experiments have truly distributed models
✓ Has enabled physics output in a very short time
✓ Network traffic close to that planned – and the network is extremely reliable
✓ Significant numbers of people doing analysis (at Tier 2s)
✓ Today resources are plentiful, and no contention seen... yet
✓ Support levels manageable... just

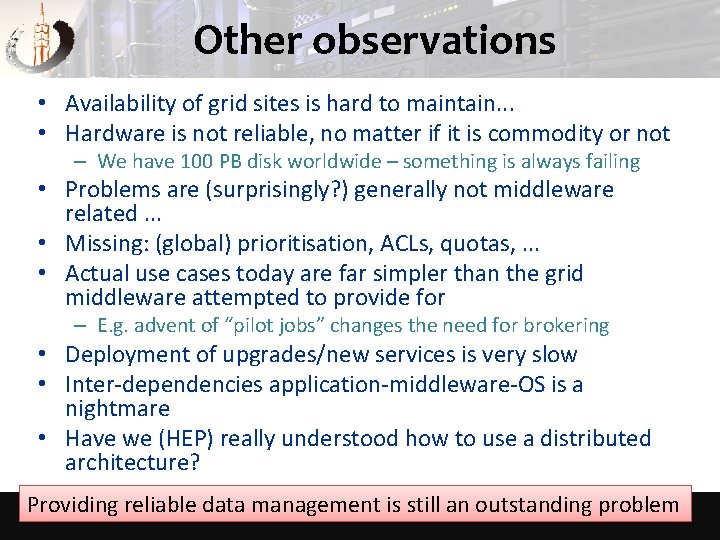

Other observations
• Availability of grid sites is hard to maintain...
• Hardware is not reliable, no matter if it is commodity or not
  – We have 100 PB disk worldwide – something is always failing
• Problems are (surprisingly?) generally not middleware related...
• Missing: (global) prioritisation, ACLs, quotas, ...
• Actual use cases today are far simpler than the grid middleware attempted to provide for
  – E.g. advent of “pilot jobs” changes the need for brokering
• Deployment of upgrades/new services is very slow
• Inter-dependencies (application-middleware-OS) are a nightmare
• Have we (HEP) really understood how to use a distributed architecture?
Providing reliable data management is still an outstanding problem

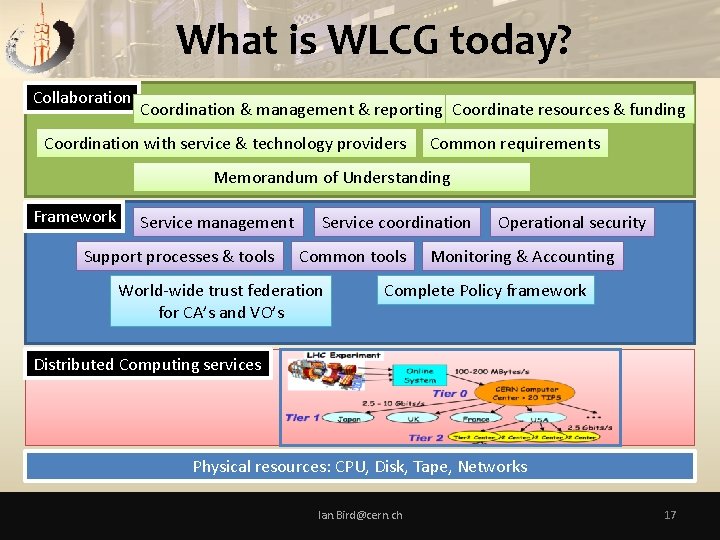

What is WLCG today?
• Collaboration
  – Coordination & management & reporting
  – Coordinate resources & funding
  – Coordination with service & technology providers
  – Common requirements
  – Memorandum of Understanding
• Framework
  – Service management
  – Support processes & tools
  – Service coordination
  – Common tools
  – World-wide trust federation for CA’s and VO’s
  – Operational security
  – Monitoring & Accounting
  – Complete Policy framework
• Distributed Computing services
• Physical resources: CPU, Disk, Tape, Networks

Evolution and sustainability
• Making what we have today more sustainable (software, operations, etc) is a challenge
• Data issues:
  – Data management and access
  – How to make reliable systems from commodity (or expensive!) hardware
  – Fault tolerance
  – Data preservation and open access
• Need to adapt to changing technologies:
  – Use of many-core CPUs (and other processor types?)
  – Global filesystems (soon...)
  – Virtualisation
• Network infrastructure
  – This is the most reliable service we have
  – Invest in networks and make full use of the distributed system

Areas for evolution – 2
• Grid Middleware
  – Complexity of today’s middleware compared to the actual use cases
  – Evolve by using more “standard” technologies: e.g. Message Brokers, Monitoring systems are first steps
• Global AAI:
  – SSO
  – Evolution/replacement/hiding of today’s X509
  – Use existing ID federations?
  – Integrate with commercial/opensource software?
• Fabric
  – Are we using our resources as effectively as possible? (power is an issue)
  – Use of remote data centres

Evolution of Data Management
• 1st workshop held in June
  – Recognition that the network, as a very reliable resource, can optimize the use of the storage and CPU resources
    • The strict hierarchical MONARC model is no longer necessary
  – Simplification of use of tape and the interfaces
  – Use disk resources more as a cache
  – Recognize that not all data has to be local at a site for a job to run – allow remote access (or fetch to a local cache)
    • Often faster to fetch a file from a remote site than from local tape
• Data management software will evolve
  – A number of short term prototypes have been proposed
  – Simplify the interfaces where possible; hide details from end-users
• Experiment models will evolve
  – To accept that information in a distributed system cannot be fully up-to-date; use remote access to data and caching mechanisms to improve overall robustness
• Timescale: 2013 LHC run
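The remark that a remote fetch is often faster than a local tape recall can be illustrated with a simple cost model: a fixed start-up delay plus size divided by bandwidth. This is a minimal sketch and not WLCG software; the `Source` class, the function names and all latency and bandwidth numbers are hypothetical values chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Source:
    name: str
    startup_s: float       # fixed delay before bytes flow (tape mount, connection setup)
    bandwidth_mb_s: float  # sustained transfer rate once data is flowing


def fetch_time_s(source: Source, file_size_mb: float) -> float:
    """Estimated time to obtain a file: start-up delay plus transfer time."""
    return source.startup_s + file_size_mb / source.bandwidth_mb_s


def pick_source(sources, file_size_mb: float) -> Source:
    """Choose the source with the smallest estimated fetch time."""
    return min(sources, key=lambda s: fetch_time_s(s, file_size_mb))


# Illustrative numbers only, not measurements from any site
local_tape = Source("local tape", startup_s=300.0, bandwidth_mb_s=100.0)
remote_disk = Source("remote disk", startup_s=2.0, bandwidth_mb_s=50.0)

best = pick_source([local_tape, remote_disk], file_size_mb=2000.0)
print(best.name, fetch_time_s(best, 2000.0))
```

With these assumed numbers the remote disk wins for a 2 GB file because the tape start-up delay dominates. For very large files the higher tape bandwidth would eventually win, which is why such a choice would need to be made per request rather than fixed by the tier hierarchy.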

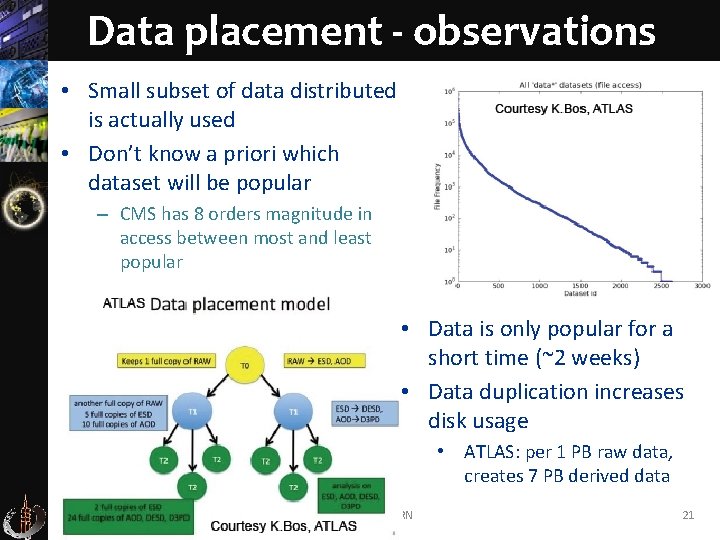

Data placement – observations
• Only a small subset of the distributed data is actually used
• Don’t know a priori which dataset will be popular
  – CMS has 8 orders of magnitude in access between most and least popular
• Data is only popular for a short time (~2 weeks)
• Data duplication increases disk usage
• ATLAS: per 1 PB raw data, creates 7 PB derived data

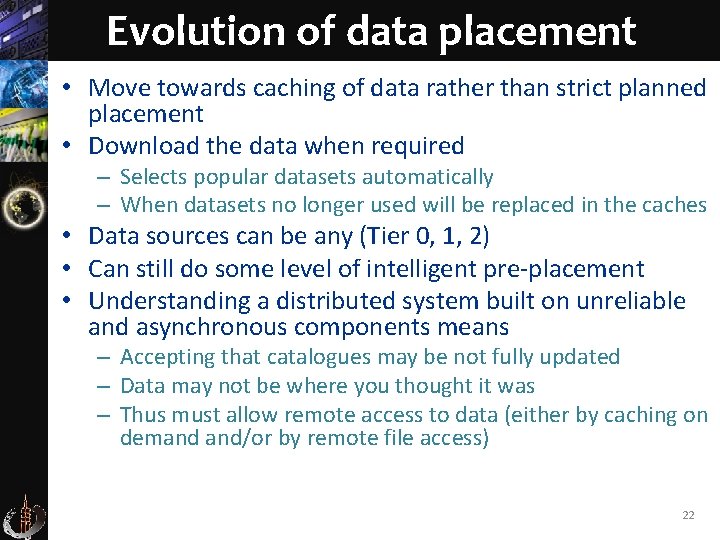

Evolution of data placement
• Move towards caching of data rather than strict planned placement
• Download the data when required
  – Selects popular datasets automatically
  – When datasets are no longer used they will be replaced in the caches
• Data sources can be any (Tier 0, 1, 2)
• Can still do some level of intelligent pre-placement
• Understanding a distributed system built on unreliable and asynchronous components means
  – Accepting that catalogues may be not fully updated
  – Data may not be where you thought it was
  – Thus must allow remote access to data (either by caching on demand and/or by remote file access)
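The caching behaviour described on this slide (download on demand, popular datasets stay, unused ones get replaced) can be sketched with a least-recently-used cache. This is a minimal illustration under stated assumptions, not the mechanism of any experiment's data management system: LRU is one possible replacement policy among several, and `DatasetCache` and `fake_remote_fetch` are hypothetical names.

```python
from collections import OrderedDict


class DatasetCache:
    """Site-local cache holding at most `capacity` datasets, with LRU eviction."""

    def __init__(self, capacity, fetch_remote):
        self.capacity = capacity
        self.fetch_remote = fetch_remote  # callable: dataset name -> data
        self._store = OrderedDict()       # oldest entry first, most recent last
        self.hits = 0
        self.misses = 0

    def get(self, name):
        if name in self._store:
            # Cache hit: mark the dataset as most recently used
            self._store.move_to_end(name)
            self.hits += 1
            return self._store[name]
        # Cache miss: fetch from any remote source (Tier 0, 1 or 2)
        self.misses += 1
        data = self.fetch_remote(name)
        self._store[name] = data
        if len(self._store) > self.capacity:
            # Evict the least recently used dataset
            self._store.popitem(last=False)
        return data

    def cached(self):
        """Names of cached datasets, least recently used first."""
        return list(self._store)


def fake_remote_fetch(name):
    """Stand-in for a real remote transfer (hypothetical)."""
    return f"contents of {name}"


cache = DatasetCache(capacity=2, fetch_remote=fake_remote_fetch)
for name in ["A", "B", "A", "C", "A"]:
    cache.get(name)

print(cache.cached(), cache.hits, cache.misses)
```

In this toy access pattern the repeatedly requested dataset "A" stays cached while "B" is evicted, with no catalogue of planned placements involved. A missing entry simply triggers a remote fetch, which matches the slide's point that data may not be where you thought it was.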

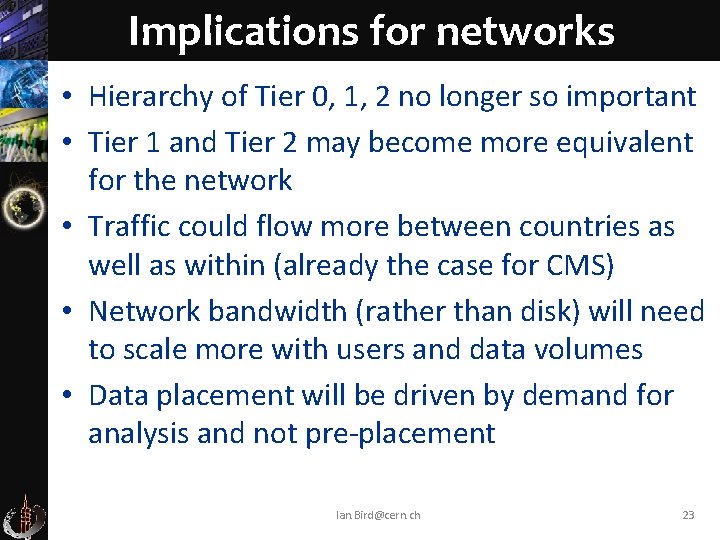

Implications for networks
• Hierarchy of Tier 0, 1, 2 no longer so important
• Tier 1 and Tier 2 may become more equivalent for the network
• Traffic could flow more between countries as well as within (already the case for CMS)
• Network bandwidth (rather than disk) will need to scale more with users and data volumes
• Data placement will be driven by demand for analysis and not pre-placement

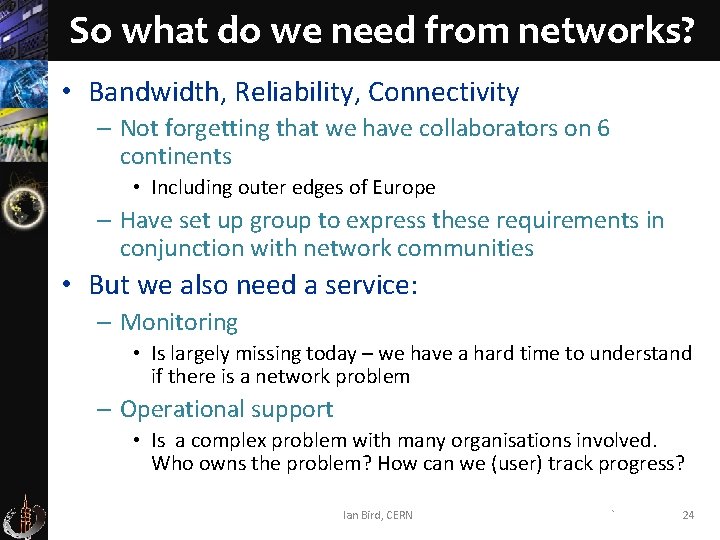

So what do we need from networks?
• Bandwidth, Reliability, Connectivity
  – Not forgetting that we have collaborators on 6 continents
    • Including outer edges of Europe
  – Have set up a group to express these requirements in conjunction with network communities
• But we also need a service:
  – Monitoring
    • Is largely missing today – we have a hard time understanding if there is a network problem
  – Operational support
    • Is a complex problem with many organisations involved. Who owns the problem? How can we (user) track progress?

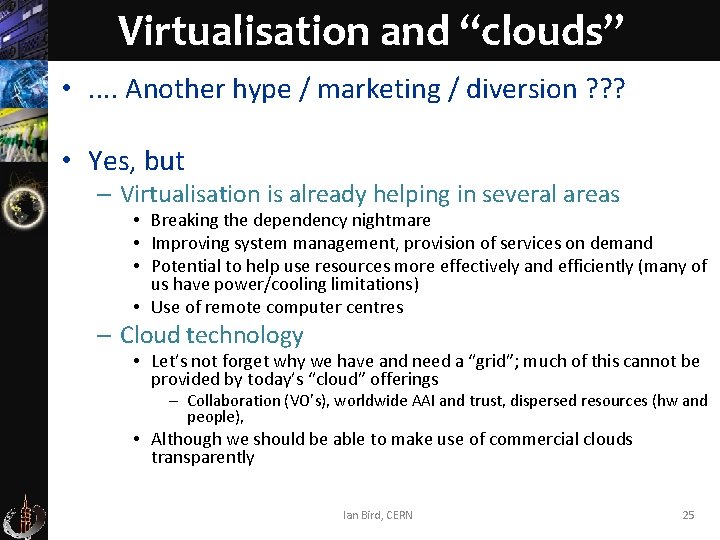

Virtualisation and “clouds”
• ... Another hype / marketing / diversion???
• Yes, but
  – Virtualisation is already helping in several areas
    • Breaking the dependency nightmare
    • Improving system management, provision of services on demand
    • Potential to help use resources more effectively and efficiently (many of us have power/cooling limitations)
    • Use of remote computer centres
  – Cloud technology
    • Let’s not forget why we have and need a “grid”; much of this cannot be provided by today’s “cloud” offerings
      – Collaboration (VO’s), worldwide AAI and trust, dispersed resources (hw and people)
    • Although we should be able to make use of commercial clouds transparently

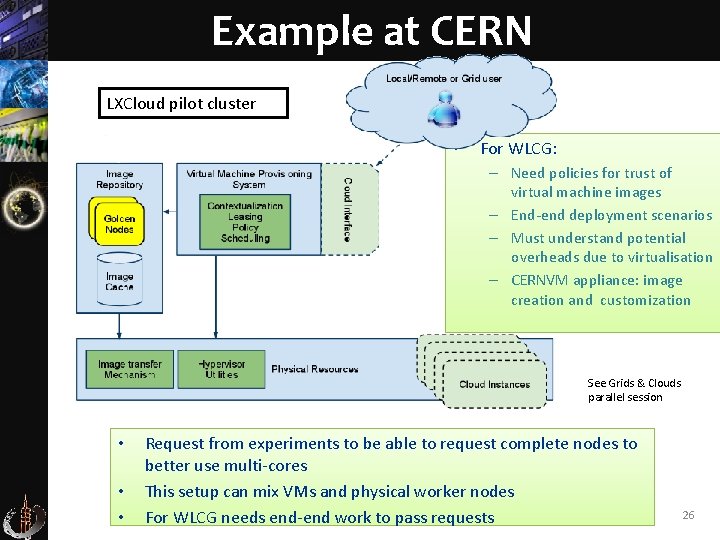

Example at CERN: LXCloud pilot cluster
• For WLCG:
  – Need policies for trust of virtual machine images
  – End-end deployment scenarios
  – Must understand potential overheads due to virtualisation
  – CERNVM appliance: image creation and customization
• See Grids & Clouds parallel session
• Request from experiments to be able to request complete nodes to better use multi-cores
• This setup can mix VMs and physical worker nodes
• For WLCG needs end-end work to pass requests

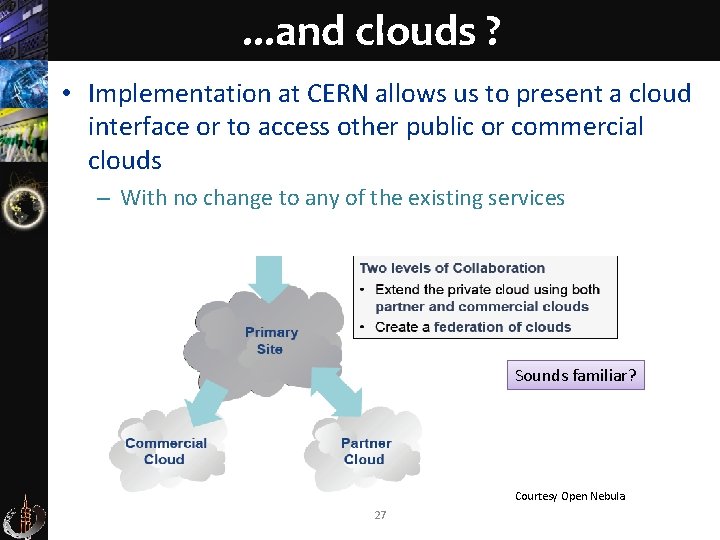

... and clouds?
• Implementation at CERN allows us to present a cloud interface or to access other public or commercial clouds
  – With no change to any of the existing services
Sounds familiar?
[Diagram courtesy OpenNebula]

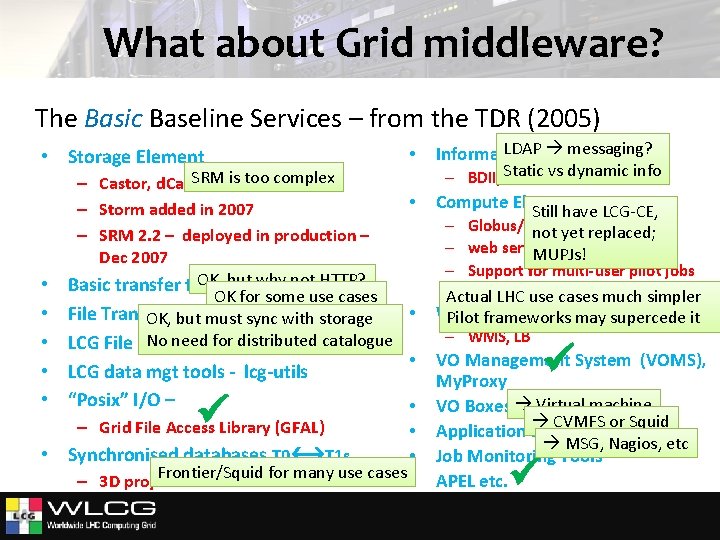

What about Grid middleware? The Basic Baseline Services – from the TDR (2005)
• Storage Element
  – Castor, dCache, DPM
  – StoRM added in 2007
  – SRM 2.2 – deployed in production – Dec 2007
• Basic transfer tools – Gridftp
• File Transfer Service (FTS)
• LCG File Catalog (LFC)
• LCG data mgt tools – lcg-utils
• “Posix” I/O – Grid File Access Library (GFAL)
• Synchronised databases T0 – T1s
  – 3D project
• Information System – BDII, GLUE
• Compute Elements
  – Globus/Condor-C
  – web services (CREAM)
  – Support for multi-user pilot jobs (glexec, SCAS)
• Workload Management – WMS, LB
• VO Management System (VOMS), MyProxy
• VO Boxes
• Application software installation
• Job Monitoring Tools
• APEL etc.
Comments overlaid on the slide (in the order extracted; the service each one refers to is not always recoverable):
• SRM is too complex
• OK, but why not.. HTTP?
• OK for some use cases
• OK if all VOs use it
• OK, but must sync with storage
• No need for distributed catalogue
• Frontier/Squid for many use cases
• LDAP, messaging?
• Static vs dynamic info
• Still have LCG-CE, not yet replaced
• MUPJs!
• Actual LHC use cases much simpler
• Pilot frameworks may supersede it
• Virtual machine
• CVMFS or Squid
• MSG, Nagios, etc

What about grid middleware?
• Clearly a thinner layer today than originally imagined
  – And the actual usage is far simpler
• Experiment layer is deeper... And different from one to the other
• Experiments had to work hard to (mostly) hide the grid details from users
• Pilot jobs are (almost) ubiquitous in all experiments
• Simplification of some services is possible and helps long term maintenance and support
• The current grid infrastructure can sit transparently over virtualised (cloud) services
  – And provide a potential path for evolutionary change

Conclusions
• Distributed computing for LHC is a reality and enables physics output in a very short time
• Experience with real data and real users suggests areas for improvement
  – The infrastructure of WLCG can support evolution of the underlying technology
