WLCG LHCC mini-review LHCb Summary

Outline

- Activities in 2008: summary
- Status of DIRAC
- Activities in 2009: outlook
- Resources in 2009-10

Ph. C

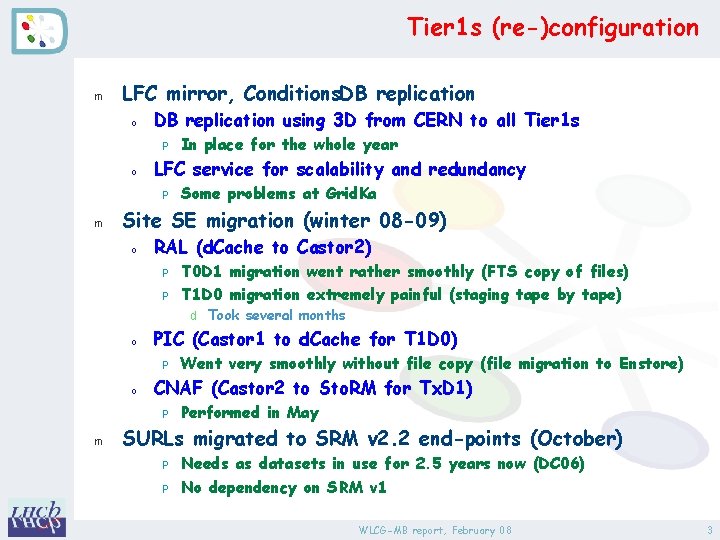

Tier1s (re-)configuration

- LFC mirror, ConditionsDB replication
  - DB replication using 3D from CERN to all Tier1s
    - In place for the whole year
  - LFC service for scalability and redundancy
    - Some problems at GridKa
- Site SE migration (winter 08-09)
  - RAL (dCache to Castor2)
    - T0D1 migration went rather smoothly (FTS copy of files)
    - T1D0 migration extremely painful (staging tape by tape)
      - Took several months
  - PIC (Castor1 to dCache for T1D0)
    - Went very smoothly without file copy (file migration to Enstore)
  - CNAF (Castor2 to StoRM for TxD1)
    - Performed in May
- SURLs migrated to SRM v2.2 end-points (October)
  - Needed as datasets have been in use for 2.5 years now (DC06)
  - No dependency on SRM v1

WLCG-MB report, February 08

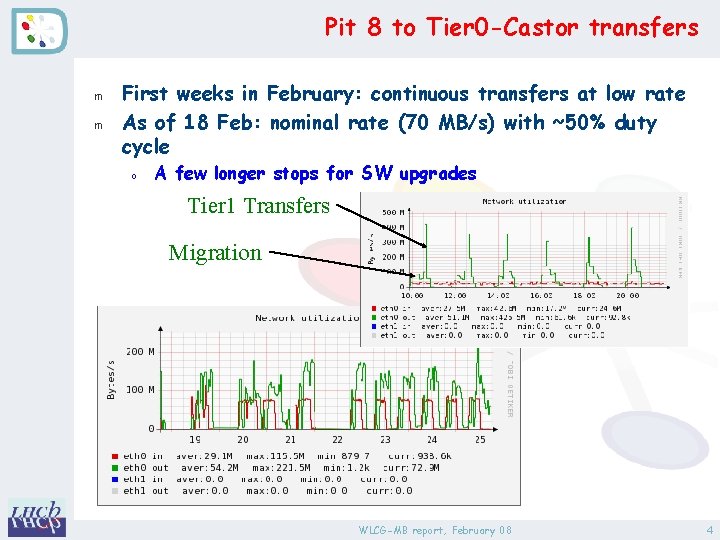

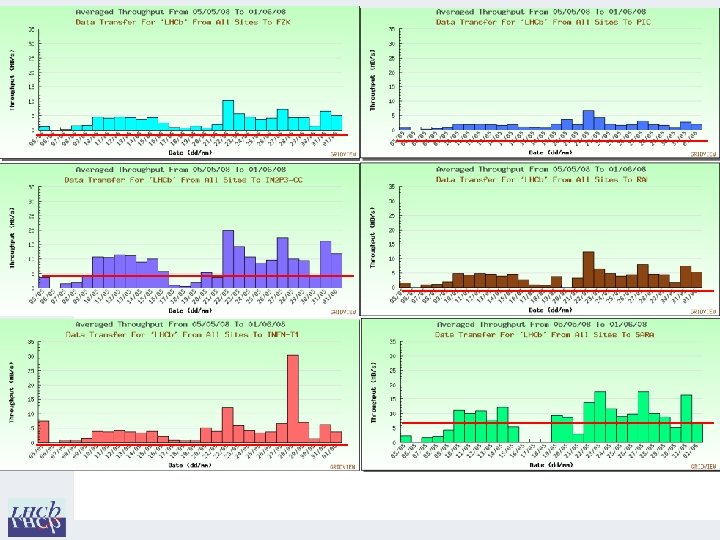

Pit 8 to Tier0-Castor transfers

- First weeks in February: continuous transfers at low rate
- As of 18 Feb: nominal rate (70 MB/s) with ~50% duty cycle
  - A few longer stops for SW upgrades

Plots: Tier1 transfers, migration
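As a rough cross-check of the figures on this slide, the sketch below converts the nominal rate and duty cycle into an average rate and a daily volume. It assumes the duty cycle simply scales the nominal rate and uses decimal units (1 TB = 10^6 MB). The function names are illustrative and do not come from any LHCb tool.

```python
# Figures quoted on the slide
NOMINAL_RATE_MB_S = 70.0   # nominal Pit 8 -> Tier0 transfer rate
DUTY_CYCLE = 0.5           # ~50% duty cycle

SECONDS_PER_DAY = 86_400


def average_rate_mb_s(nominal_mb_s: float, duty_cycle: float) -> float:
    """Average rate, assuming transfers run at the nominal rate for a
    fraction `duty_cycle` of the time and are idle otherwise."""
    return nominal_mb_s * duty_cycle


def daily_volume_tb(rate_mb_s: float) -> float:
    """Volume moved in one day, in decimal TB (assumption: 1 TB = 1e6 MB)."""
    return rate_mb_s * SECONDS_PER_DAY / 1e6


avg_rate = average_rate_mb_s(NOMINAL_RATE_MB_S, DUTY_CYCLE)
print(f"average rate: {avg_rate:.0f} MB/s")
print(f"daily volume: {daily_volume_tb(avg_rate):.2f} TB")
```

Under these assumptions the average comes out at about 35 MB/s, or roughly 3 TB per day.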

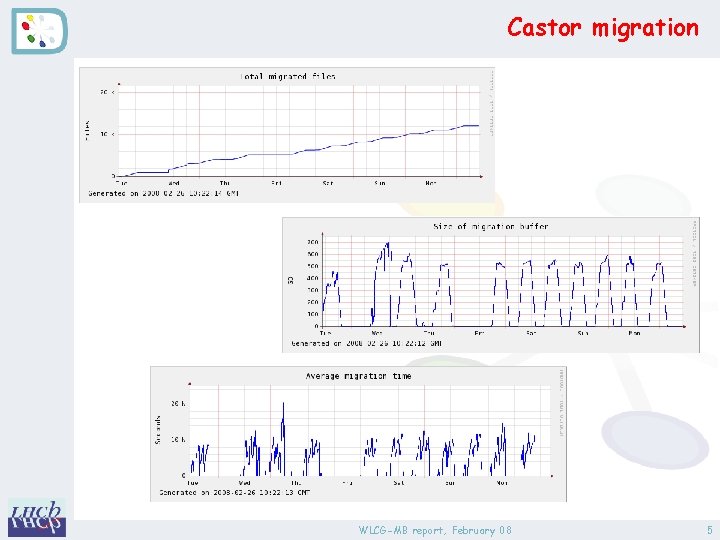

Castor migration

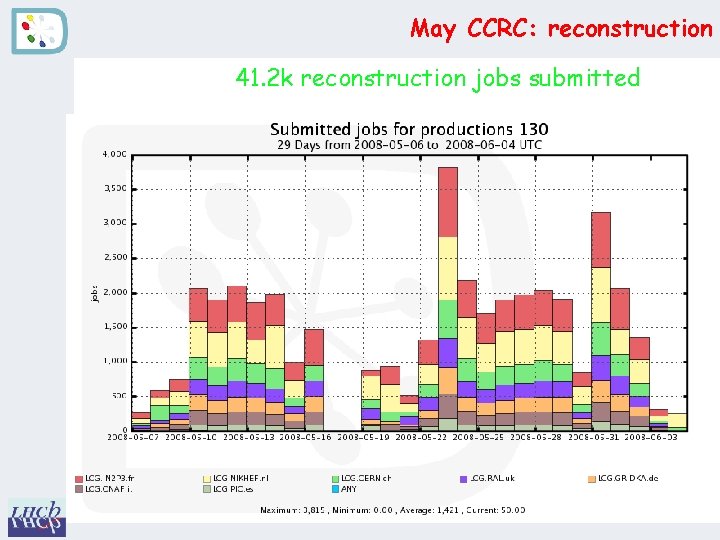

May CCRC: reconstruction

- 41.2k reconstruction jobs submitted
- 27.6k jobs proceeded to done state
- Done/created ~67%
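The done/created ratio follows directly from the two job counts on the slide. A minimal check, with an illustrative function name:

```python
# Job counts quoted on the slide
JOBS_SUBMITTED = 41_200
JOBS_DONE = 27_600


def done_fraction(done: int, created: int) -> float:
    """Fraction of created jobs that reached the done state."""
    if created <= 0:
        raise ValueError("created must be positive")
    return done / created


fraction = done_fraction(JOBS_DONE, JOBS_SUBMITTED)
print(f"done/created: {fraction:.0%}")
```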

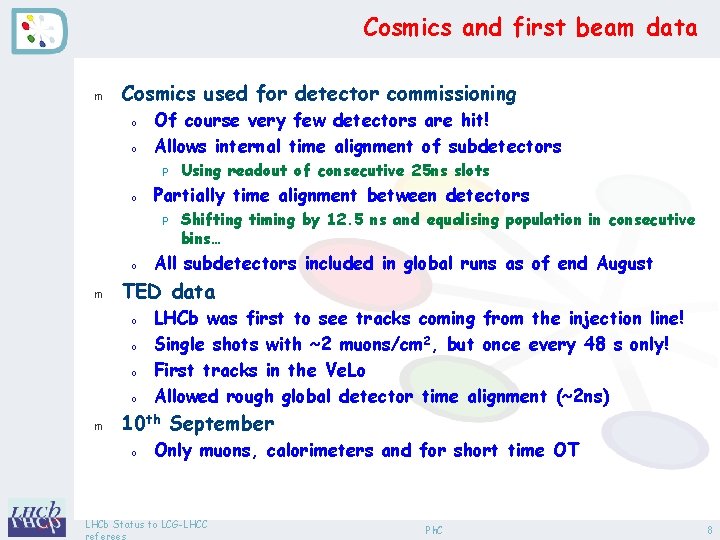

Cosmics and first beam data

- Cosmics used for detector commissioning
  - Of course very few detectors are hit!
  - Allows internal time alignment of subdetectors
    - Shifting timing by 12.5 ns and equalising population in consecutive bins…
  - Partial time alignment between detectors
    - Using readout of consecutive 25 ns slots
  - All subdetectors included in global runs as of end August
- TED data
  - LHCb was first to see tracks coming from the injection line!
  - Single shots with ~2 muons/cm², but once every 48 s only!
  - First tracks in the VeLo
  - Allowed rough global detector time alignment (~2 ns)
- 10th September
  - Only muons, calorimeters and for short time OT

LHCb Status to LCG-LHCC

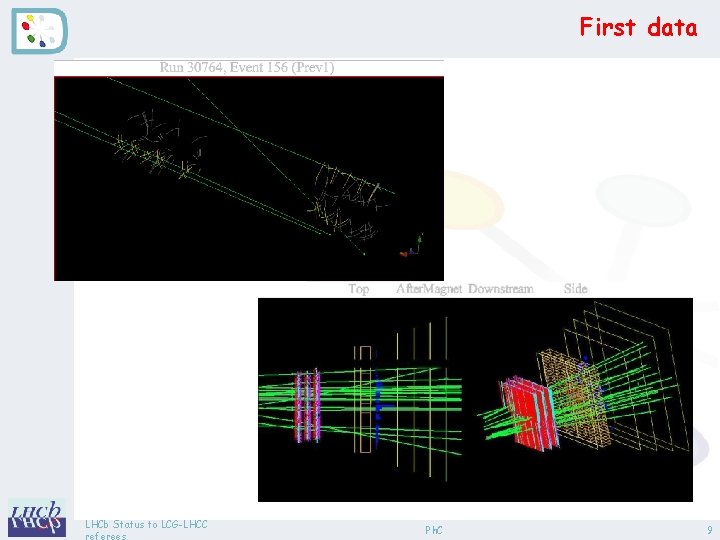

First data

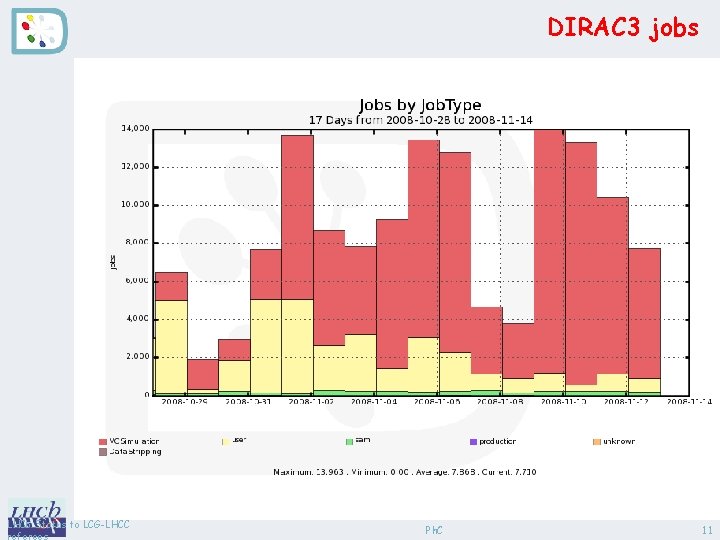

DIRAC3 put in production

- Production activities
  - Started in July
  - Simulation, reconstruction, stripping
    - Includes file distribution strategy, failover mechanism
    - File access using local access protocol (rootd, rfio, (gsi)dcap, xrootd)
    - Commissioned alternative method: copy to local disk
      - Drawback: non-guaranteed space, less CPU efficiency, additional network traffic (possibly copied from remote site)
  - Failover using VOBOXes
    - File transfers (delegated to FTS)
    - LFC registration
    - Internal DIRAC operations (bookkeeping, job monitoring…)
- Analysis
  - Started in September
  - Ganga available for DIRAC3 in November
  - DIRAC2 de-commissioned on January 12th
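The failover idea on this slide can be illustrated with a generic sketch: an operation is attempted once, and if it fails it is parked in a persistent queue and retried later. This is not DIRAC code. `FailoverQueue`, `make_flaky` and the operation names are hypothetical, and an in-memory queue stands in for whatever request store the VOBOXes actually host.

```python
from collections import deque
from typing import Callable, Deque, List, Tuple

Operation = Callable[[], None]


class FailoverQueue:
    """Hypothetical stand-in for a VOBOX-hosted store of failed requests."""

    def __init__(self) -> None:
        self._pending: Deque[Tuple[str, Operation]] = deque()

    def submit(self, name: str, operation: Operation) -> bool:
        """Try the operation once; queue it for later if it raises."""
        try:
            operation()
            return True
        except Exception:
            self._pending.append((name, operation))
            return False

    def retry_pending(self) -> List[str]:
        """Retry every queued operation once; return the names that succeeded."""
        completed: List[str] = []
        still_failing: Deque[Tuple[str, Operation]] = deque()
        while self._pending:
            name, operation = self._pending.popleft()
            try:
                operation()
                completed.append(name)
            except Exception:
                still_failing.append((name, operation))
        self._pending = still_failing
        return completed

    def pending_names(self) -> List[str]:
        return [name for name, _ in self._pending]


def make_flaky(failures: int) -> Operation:
    """Build a dummy operation that raises `failures` times, then succeeds."""
    state = {"left": failures}

    def operation() -> None:
        if state["left"] > 0:
            state["left"] -= 1
            raise RuntimeError("service unavailable")

    return operation


queue = FailoverQueue()
queue.submit("lfc-registration", make_flaky(1))  # fails once, gets queued
queue.submit("bookkeeping", make_flaky(0))       # succeeds immediately
print("pending:", queue.pending_names())
```

Calling `queue.retry_pending()` afterwards replays the queued request. In this toy setup the second attempt succeeds and the queue empties.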

DIRAC3 jobs

Issues in 2008

- Data Management
  - Site configuration (non-scaling)
  - SRM v2.2 still not fully mature (e.g. pinning)
  - Many issues with StorageWare (mainly dCache)
- Workload Management
  - Moved to gLite WMS, but still many issues with it (e.g. mixup of identities). Better scaling behavior though than LCG-RB
  - LHCb moved to using “generic pilot jobs” (i.e. can execute workload from any user or production)
    - Not switching identity yet (gLexec / SCAS not available)
    - Not a show-stopper as not required by LHCb but by sites
- Middleware deployment
  - LHCb distributes the client middleware
    - From distribution in the LCG-AA
    - Necessary to ensure bug fixes are available
    - Allows multiple platforms (OS, architecture, python version)

LHCb Computing Operations

- Production manager
  - Schedules production work, sets up and checks workflows, reports to operations
- Computing shifters
  - Computing Operations shifter (pool of ~12 shifters)
    - Covers 14 h/day, 7 days / week
    - Computing Control room (2-R-014)
  - Data Quality shifter
    - Covers 8 h/day, 7 days / week
  - Both are in the LHCb Computing Control room (2-R-014)
- Daily DQ and Operations meetings
  - Week days (twice a week during shutdowns)
- Grid Expert on-call
  - On duty for a week
  - Runs the operations meetings
- Grid Team (~6 FTEs needed, ~2 missing)
  - Shared responsibilities (WMS, DMS, SAM, Bookkeeping…)

Plans for 2009

- Commissioning for 2009-10 data taking (FEST’09)
  - See next slides
- Simulation
  - Replacing DC06 datasets
    - Signal and background samples (~300 Mevts)
    - Minimum bias for L0 and HLT commissioning (~100 Mevts)
    - Used for CP-violation performance studies
    - Nominal LHC settings (7 TeV, 25 ns, 2×10³² cm⁻² s⁻¹)
  - Tuning stripping and HLT for 2010
    - 4/5 TeV, 50 ns (no spillover), 10³² cm⁻² s⁻¹
    - Benchmark channels for first physics studies
      - B→µµ, Γs, B→Dh, Bs→J/ψφ, B→K*µµ …
    - Large minimum bias samples (~1 mn of LHC running)
    - Stripping performance required: ~50 Hz for benchmark channels
    - Tune HLT: efficiency vs retention, optimisation
  - Preparation for very first physics
    - 2 TeV, low luminosity
    - Large minimum bias sample (part used for FEST’09)

FEST’09

- Aim
  - Replace the non-existing 2008 beam data with MC
  - Points to be tested
    - L0 (hardware trigger) strategy
      - Emulated in software
    - HLT strategy
      - First data (loose trigger)
      - Higher lumi/energy data (b-physics trigger)
    - Online detector monitoring
      - Based on event selection from HLT, e.g. J/Psi events
      - Automatic detection of detector problems
    - Data streaming
      - Physics stream (all triggers) and calibration stream (subset of triggers, typically 5 Hz)
    - Alignment and calibration loop
      - Trigger re-alignment
      - Run alignment processes
      - Validate new alignment (based on calibration stream)

FEST’09 runs

- FEST activity
  - Define running conditions (rate, HLT version + config)
  - Start runs from the Control System
    - Events are injected and follow the normal path
  - Files export to Tier0 and distribution to Tier1s
  - Automatic reconstruction jobs at CERN and Tier1s
    - Commission Data Quality green-light
- Short test periods
  - Typically full week, ½ to 1 day every week for tests
  - Depending on results, take a few weeks interval for fixing problems
- Vary conditions
  - L0 parameters
  - Event rates
  - HLT parameters
  - Trigger calibration and alignment loop

Resources (very preliminary)

- Consider 2009-10 as a whole (new LHC schedule)
  - Real data
    - Split year in two parts:
      - 0.5×10⁶ s at low lumi (LHC phase 1)
      - 3×10⁶ s at higher lumi (1×10³²) (LHC phase 2)
    - Trigger rate independent of lumi and energy: 2 kHz
  - Simulation: 2×10⁹ events (nominal year) over 2 years
- New assumptions for (re-)processing and analysis
  - More re-processings during LHC phase 1
  - Add calibration checks (done at CERN)
  - Envision more analysis at CERN with first data
    - Increase from 25% (TDR) to 50% (phase 1) and 35% (phase 2)
    - Include SW development and testing (LXBATCH)
  - Adjust event sizes and CPU needs to current estimates
    - Important effort to reduce data size (packed format for rDST, µDST…)
    - Use new HEP-SPEC06 benchmarking
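The real-data event counts implied by these assumptions can be worked out in a few lines. The sketch assumes the trigger records at the full 2 kHz for the whole live time quoted for each phase. The slide itself does not state the resulting totals, so they are a derived estimate only, and the names used are illustrative.

```python
# Figures quoted on the slide
TRIGGER_RATE_HZ = 2_000
PHASE_LIVE_SECONDS = {
    "phase 1 (low lumi)": 500_000,       # 0.5e6 s
    "phase 2 (higher lumi)": 3_000_000,  # 3e6 s
}
SIMULATED_EVENTS = 2_000_000_000         # 2e9 simulated events over 2 years


def triggered_events(rate_hz: int, live_seconds: int) -> int:
    """Events recorded, assuming a constant trigger rate over the live time."""
    return rate_hz * live_seconds


events_per_phase = {
    name: triggered_events(TRIGGER_RATE_HZ, seconds)
    for name, seconds in PHASE_LIVE_SECONDS.items()
}
total_real_events = sum(events_per_phase.values())

for name, count in events_per_phase.items():
    print(f"{name}: {count:.1e} events")
print(f"total real data: {total_real_events:.1e} events")
print(f"simulated / real: {SIMULATED_EVENTS / total_real_events:.2f}")
```

Under this assumption phase 1 yields about 10⁹ events and phase 2 about 6×10⁹, for a total of about 7×10⁹ real events against the 2×10⁹ simulated ones.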

Resources (cont’d)

- CERN usage
  - Tier0:
    - Real data recording, export to Tier1s
    - First pass reconstruction of ~85% of raw data
    - Reprocessing (in future foresee to use also the Online HLT farm)
  - CAF (“Calibration and Alignment Facility”)
    - Dedicated LXBATCH resources
    - Detector studies, alignment and calibration
  - CAF (“CERN Analysis Facility”)
    - Part of Grid distributed analysis facilities (estimate 40% in 2009-10)
    - Histograms and interactive analysis (lxplus, desk/lap-tops)
- Tier1 usage
  - Reconstruction
    - First pass during data taking, reprocessing
  - Analysis facilities
    - Grid distributed analysis
    - Local storage for users’ data (LHCb_USER SRM space)

Conclusions

- 2008
  - CCRC very useful for LHCb (although irrelevant to be simultaneous due to low throughput)
  - DIRAC3 fully commissioned
    - Production in July
    - Analysis in November
    - As of now, called DIRAC
  - Last processing on DC06
    - Analysis will continue in 2009
  - Commission simulation and reconstruction for real data
- 2009-10
  - Large simulation requests for replacing DC06, preparing 2009-10
  - FEST’09: ~1 week a month and 1 day a week
  - Resource requirements being prepared for WLCG workshop in March and C-RRB in April

- Slides: 19