Wireless Communication
Digital Wireless Communication Basics: Modulation and Coding
Stan Baggen

Stan Baggen: Modulation Basics, Feb. 2003
Contents
• Digital Modulation
• Synchronization
• Nyquist Shaping
• Optimum Receivers
• Coding for Wireless Channels
  • Block Codes
  • Convolutional Codes
  • The Viterbi algorithm

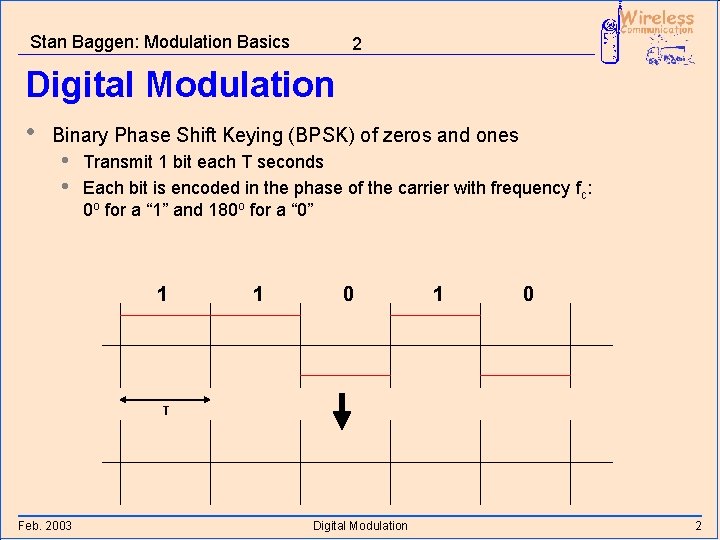

Digital Modulation
• Binary Phase Shift Keying (BPSK) of zeros and ones
  • Transmit 1 bit every T seconds
  • Each bit is encoded in the phase of the carrier with frequency f_c: 0° for a “1” and 180° for a “0”
[Figure: BPSK waveform for the bits 1 1 0, one bit per interval T]

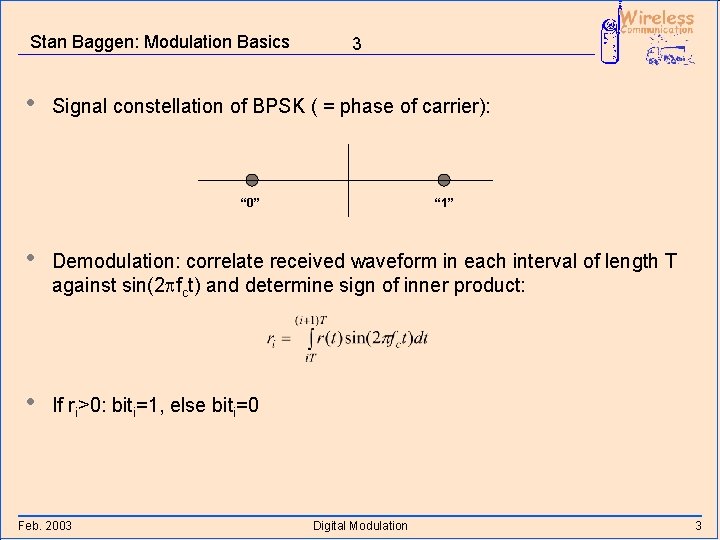

• Signal constellation of BPSK (angle = phase of carrier): points “0” and “1”
• Demodulation: correlate the received waveform in each interval of length T against sin(2π f_c t) and determine the sign of the inner product r_i
• If r_i > 0: bit_i = 1, else bit_i = 0
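
The correlation demodulator described above can be sketched in a few lines. This is a minimal, noise-free illustration; the carrier frequency, symbol time and sampling density (`fc`, `T`, `samples_per_bit`) are assumed values chosen so that T holds an integer number of carrier periods, not parameters from the slides.

```python
import math

def bpsk_waveform(bits, fc=4.0, T=1.0, samples_per_bit=200):
    """Sample a BPSK waveform: phase 0 for a 1, phase 180 degrees for a 0."""
    wave = []
    for k, b in enumerate(bits):
        sign = 1.0 if b == 1 else -1.0  # a 180 degree shift flips the sign
        for n in range(samples_per_bit):
            t = (k + n / samples_per_bit) * T
            wave.append(sign * math.sin(2 * math.pi * fc * t))
    return wave

def bpsk_demodulate(wave, fc=4.0, T=1.0, samples_per_bit=200):
    """Correlate each interval of length T against sin(2*pi*fc*t), take the sign."""
    bits = []
    for k in range(len(wave) // samples_per_bit):
        r = 0.0
        for n in range(samples_per_bit):
            t = (k + n / samples_per_bit) * T
            r += wave[k * samples_per_bit + n] * math.sin(2 * math.pi * fc * t)
        bits.append(1 if r > 0 else 0)
    return bits

sent = [1, 1, 0]
print(bpsk_demodulate(bpsk_waveform(sent)))
```

The sum over samples stands in for the integral over one symbol interval; with noise added to `wave`, the same sign decision applies.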

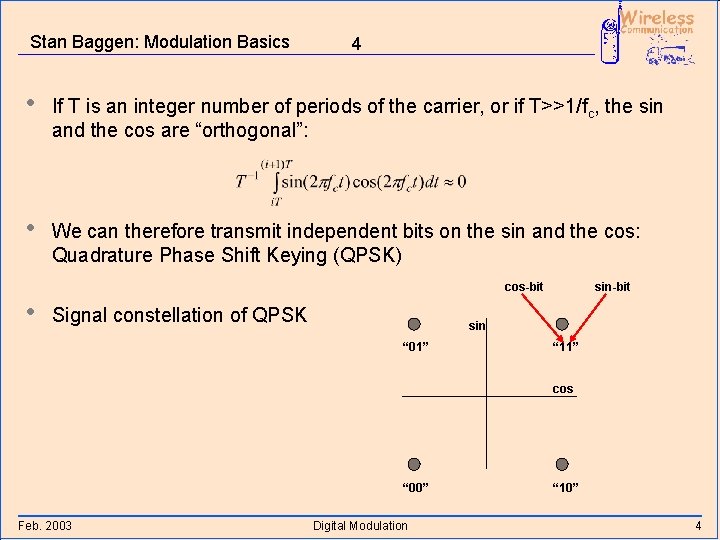

• If T is an integer number of periods of the carrier, or if T >> 1/f_c, the sin and the cos are “orthogonal”: their inner product over a symbol interval of length T is (approximately) zero
• We can therefore transmit independent bits on the sin and the cos: Quadrature Phase Shift Keying (QPSK)
• Signal constellation of QPSK
[Figure: QPSK constellation with a cos axis (cos-bit) and a sin axis (sin-bit); points “01”, “11”, “00”, “10”]

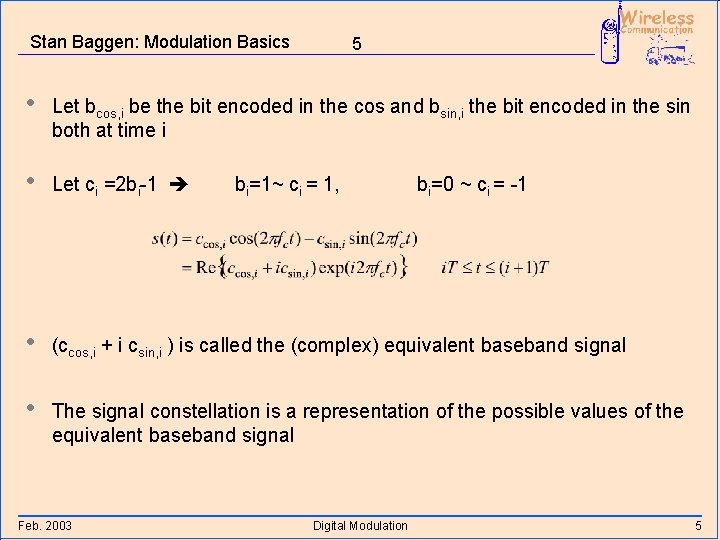

• Let b_cos,i be the bit encoded in the cos and b_sin,i the bit encoded in the sin, both at time i
• Let c_i = 2b_i − 1, so that b_i = 1 ~ c_i = 1 and b_i = 0 ~ c_i = −1
• (c_cos,i + i·c_sin,i), where the factor i is the imaginary unit, is called the (complex) equivalent baseband signal
• The signal constellation is a representation of the possible values of the equivalent baseband signal
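
As a small sketch of the mapping c = 2b − 1 and the resulting complex baseband points, assuming the cos-bit is written first in the two-bit labels (the function names are illustrative, not from the slides):

```python
def to_baseband(b_cos, b_sin):
    """Map the cos-bit and sin-bit to the complex equivalent baseband value."""
    c_cos = 2 * b_cos - 1  # bit 1 -> +1, bit 0 -> -1
    c_sin = 2 * b_sin - 1
    return complex(c_cos, c_sin)

def from_baseband(z):
    """Decide both bits from the signs of the real and imaginary parts."""
    return (1 if z.real > 0 else 0, 1 if z.imag > 0 else 0)

# The four QPSK constellation points, keyed by "cos-bit sin-bit".
constellation = {f"{bc}{bs}": to_baseband(bc, bs) for bc in (0, 1) for bs in (0, 1)}
for label, point in constellation.items():
    print(label, point)
```

A noisy estimate such as `complex(-0.8, 0.3)` is decided per axis, which is exactly two independent BPSK decisions.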

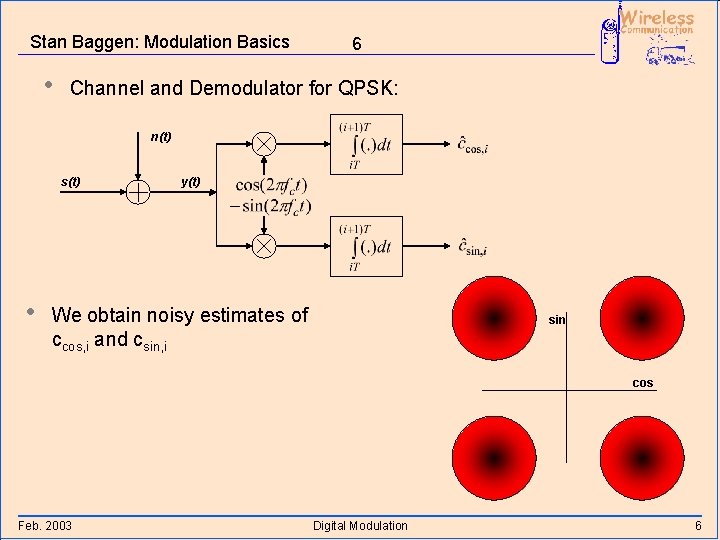

• Channel and demodulator for QPSK
[Figure: s(t) plus noise n(t) gives y(t), which is correlated against sin and cos]
• We obtain noisy estimates of c_cos,i and c_sin,i

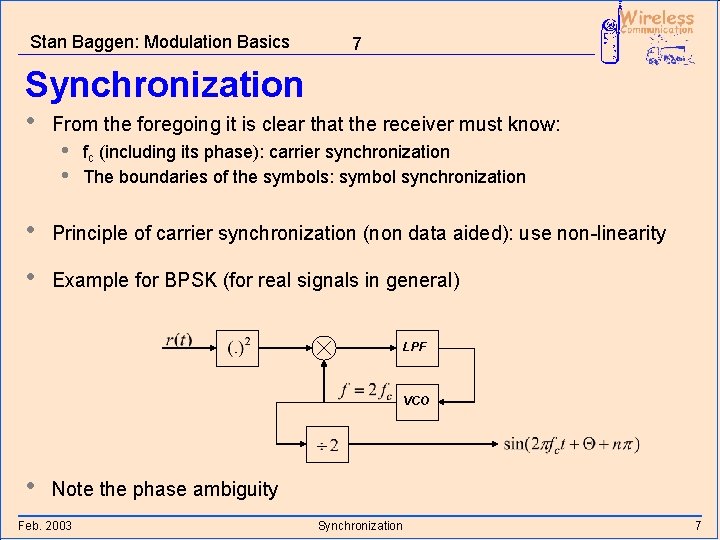

Synchronization
• From the foregoing it is clear that the receiver must know:
  • f_c (including its phase): carrier synchronization
  • The boundaries of the symbols: symbol synchronization
• Principle of carrier synchronization (non-data-aided): use a non-linearity
• Example for BPSK (for real signals in general)
[Figure: carrier recovery loop with LPF and VCO]
• Note the phase ambiguity
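
One way to see why a non-linearity helps, assuming the non-linearity is a squarer (the slide's figure is not recoverable, so this is an illustration rather than the author's exact scheme; the phase symbol is an assumed name):

```latex
\bigl(\pm\sin(2\pi f_c t + \varphi)\bigr)^2
  = \tfrac{1}{2} - \tfrac{1}{2}\cos(4\pi f_c t + 2\varphi)
```

The data-dependent sign disappears and a clean component at 2 f_c remains for the loop to lock on. Halving the recovered phase 2φ leaves φ or φ + π, which is the phase ambiguity noted above.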

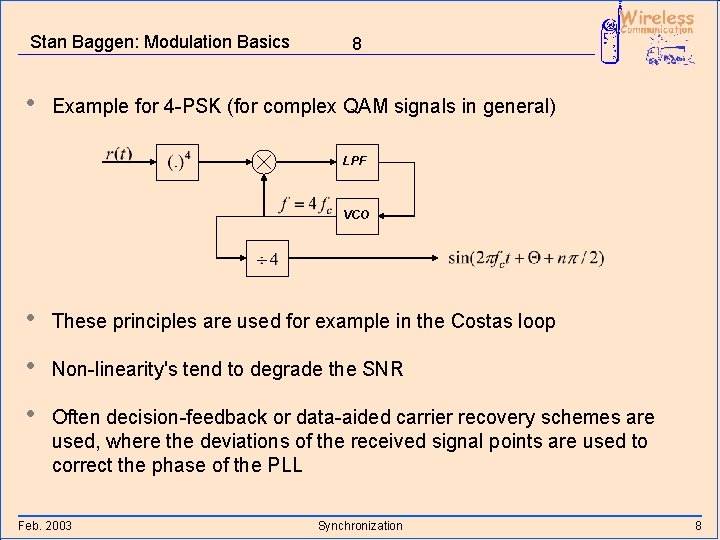

• Example for 4-PSK (for complex QAM signals in general)
[Figure: carrier recovery loop with LPF and VCO]
• These principles are used for example in the Costas loop
• Non-linearities tend to degrade the SNR
• Often decision-feedback or data-aided carrier recovery schemes are used, where the deviations of the received signal points are used to correct the phase of the PLL
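
For 4-PSK, a commonly used non-linearity is the fourth power, which removes the four-fold phase modulation. The sketch below assumes ideal constellation points (±1 ± j) and a hypothetical phase offset; it is an open-loop estimate, not the closed loop with LPF and VCO shown on the slide.

```python
import cmath

def estimate_phase_4th_power(symbols):
    """Non-data-aided phase estimate for 4-PSK, valid modulo 90 degrees."""
    acc = sum(z ** 4 for z in symbols)
    # Ideal points (+-1 +- j) satisfy z**4 = -4, so negate to remove that angle of pi.
    return cmath.phase(-acc) / 4

offset = 0.2  # hypothetical carrier phase error in radians
points = [complex(1, 1), complex(-1, 1), complex(-1, -1), complex(1, -1)]
rotated = [z * cmath.exp(1j * offset) for z in points]
print(round(estimate_phase_4th_power(rotated), 6))
```

An offset of 0.2 + π/2 produces the same estimate, which is the four-fold counterpart of the BPSK phase ambiguity.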

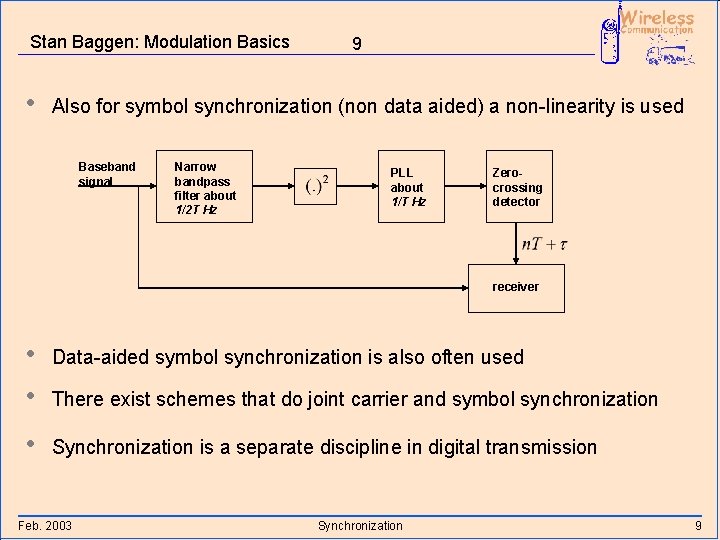

• Also for symbol synchronization (non-data-aided) a non-linearity is used
[Figure: block diagram with baseband signal, narrow bandpass filter about 1/(2T) Hz, PLL about 1/T Hz, zero-crossing detector, receiver]
• Data-aided symbol synchronization is also often used
• Synchronization is a separate discipline in digital transmission
• There exist schemes that do joint carrier and symbol synchronization

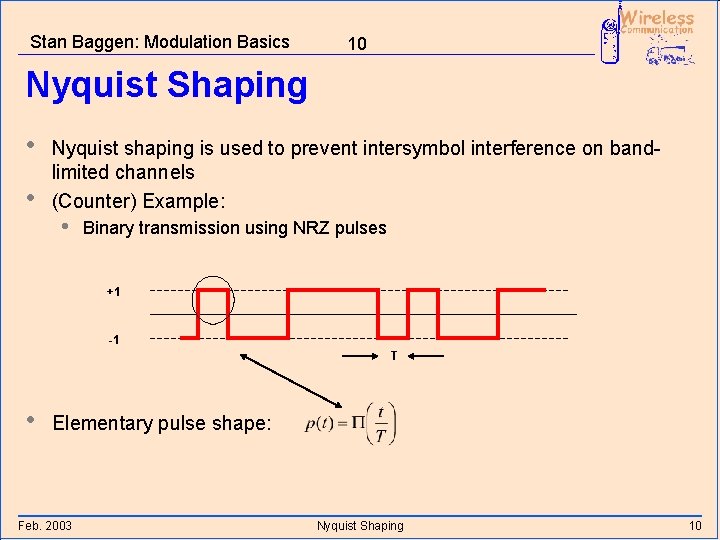

Nyquist Shaping
• Nyquist shaping is used to prevent intersymbol interference on bandlimited channels
• (Counter) Example:
  • Binary transmission using NRZ pulses
  • Elementary pulse shape: a rectangular pulse of duration T
[Figure: NRZ waveform with levels +1 and −1 and bit duration T]

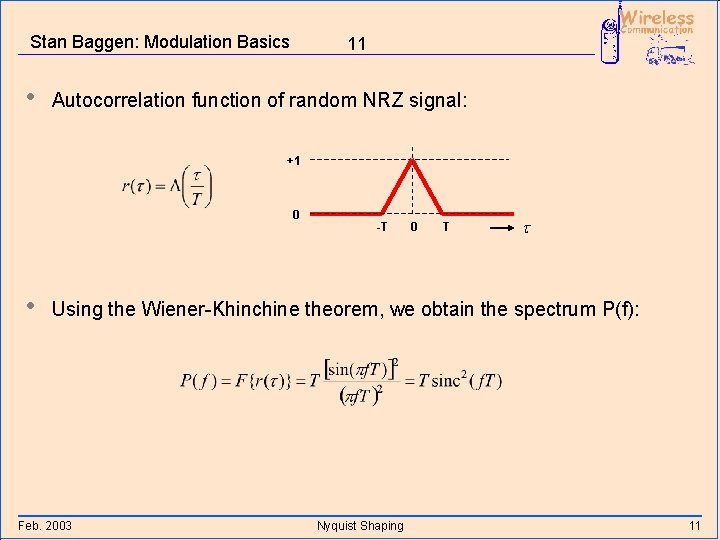

• Autocorrelation function of a random NRZ signal:
[Figure: triangle with peak +1 at t = 0, falling to 0 at t = −T and t = T]
• Using the Wiener-Khinchine theorem, we obtain the spectrum P(f) as the Fourier transform of this autocorrelation function
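
Written out for unit-amplitude NRZ pulses with equally likely, independent bits (assumptions consistent with the triangle above and with the PSD axis in units of T per Hz), the step reads:

```latex
R(\tau) =
\begin{cases}
  1 - |\tau|/T, & |\tau| \le T \\
  0,            & \text{otherwise}
\end{cases}
\qquad\Longrightarrow\qquad
P(f) = \int_{-\infty}^{\infty} R(\tau)\, e^{-j 2\pi f \tau}\, d\tau
     = T \left( \frac{\sin(\pi f T)}{\pi f T} \right)^{2}
```

The spectrum has nulls at the non-zero multiples of 1/T and side lobes that decay only as 1/f², so it is not strictly bandlimited.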

![Slide 12: PSD of the random NRZ signal, linear scale](http://slidetodoc.com/presentation_image_h/a682997d009f2b907e7f80125201d848/image-13.jpg)

[Figure: PSD (in units T per Hz) versus f (in units 1/T Hz)]

![Slide 13: PSD of the random NRZ signal in dB](http://slidetodoc.com/presentation_image_h/a682997d009f2b907e7f80125201d848/image-14.jpg)

[Figure: PSD (in dB) versus f (in units 1/T Hz)]

• Bandwidth of spectrum is unbounded
• By international agreement, services each get allocated their own slice of available spectrum: Frequency Division Multiplex
• Filtering of NRZ leads to distorted waveforms
  • Intersymbol Interference (ISI)
• Need technical solution that
  • Allows independent bits to be transmitted and recovered at the receiver
  • Leads to finite bandwidth waveforms

• Possible solution: signaling using sinc time-functions
  • Now the spectrum is a box function
  • However, the time function corresponding to a single bit has unlimited duration
• Need compromise

• General solution if one wants to invest some “excess” bandwidth: multiply the sinc by a second time function c(t), p(t) = sinc(t/T) · c(t)
• The sinc function fixes the zeros of p(t) at regular intervals: no ISI
• Any other requirement on p(t) can be obtained by choosing a proper c(t)
• Corresponding spectrum: the box spectrum of the sinc convolved with C(f)

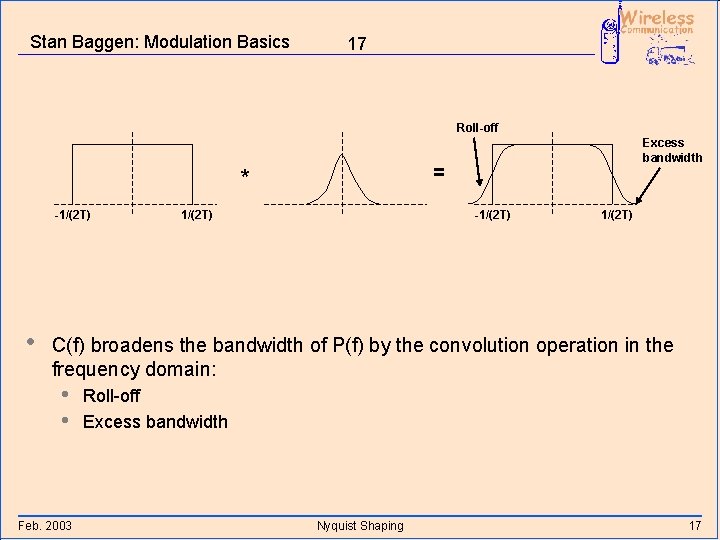

[Figure: P(f) equals the box spectrum between −1/(2T) and 1/(2T) convolved (*) with C(f); the roll-off beyond ±1/(2T) is the excess bandwidth]
• C(f) broadens the bandwidth of P(f) by the convolution operation in the frequency domain:
  • Roll-off
  • Excess bandwidth

Remarks:
• If c(t) is real and symmetric, C(f) is real and symmetric
• P(f) is prescribed at the moment of sampling
• If the channel distorts, we have to equalize back to P(f) in order to have ISI-free sampling
• Mostly, P(f) is chosen to be a “raised cosine spectrum”
  • p(t) “drops” as 1/t³, because the spectrum itself and its first derivative are continuous in that case
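
The zero-ISI property of a raised cosine pulse can be checked numerically. The sketch below uses the standard time-domain form p(t) = sinc(t/T) · cos(πβt/T) / (1 − (2βt/T)²); the roll-off factor `beta` and its default value are assumptions, since the slides do not give a number.

```python
import math

def sinc(x):
    """Normalized sinc: sin(pi x) / (pi x), with sinc(0) = 1."""
    return 1.0 if x == 0 else math.sin(math.pi * x) / (math.pi * x)

def raised_cosine(t, T=1.0, beta=0.35):
    """p(t) = sinc(t/T) * c(t) for a raised cosine spectrum with roll-off beta."""
    x = t / T
    denom = 1.0 - (2.0 * beta * x) ** 2
    if abs(denom) < 1e-12:
        # Limit of c(t) at t = +-T/(2*beta), where the expression is 0/0.
        return (math.pi / 4.0) * sinc(1.0 / (2.0 * beta))
    return sinc(x) * math.cos(math.pi * beta * x) / denom

# Sampling at multiples of T: 1 at the centre, (numerically) 0 elsewhere.
for n in range(-3, 4):
    print(n, round(raised_cosine(float(n)), 12))
```

Here the factor multiplying the sinc plays the role of c(t): it shapes the tails without moving the zeros at the sampling instants.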

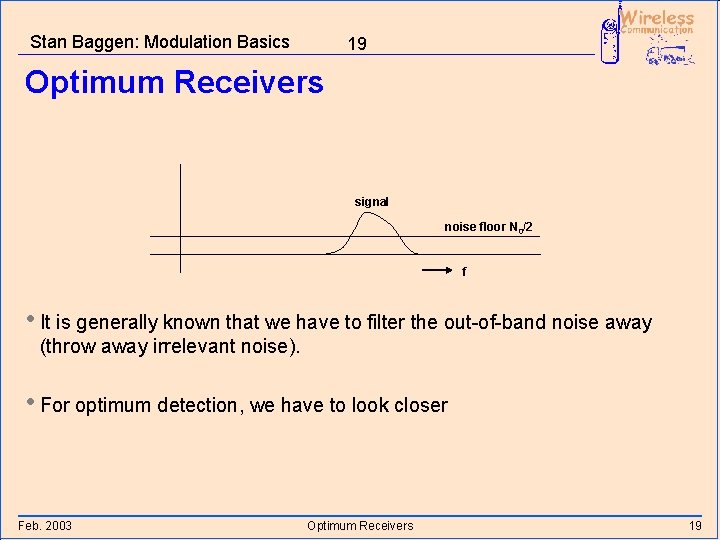

Optimum Receivers
[Figure: signal spectrum above a noise floor of N_0/2, versus f]
• It is generally known that we have to filter away the out-of-band noise (throw away irrelevant noise)
• For optimum detection, we have to look closer

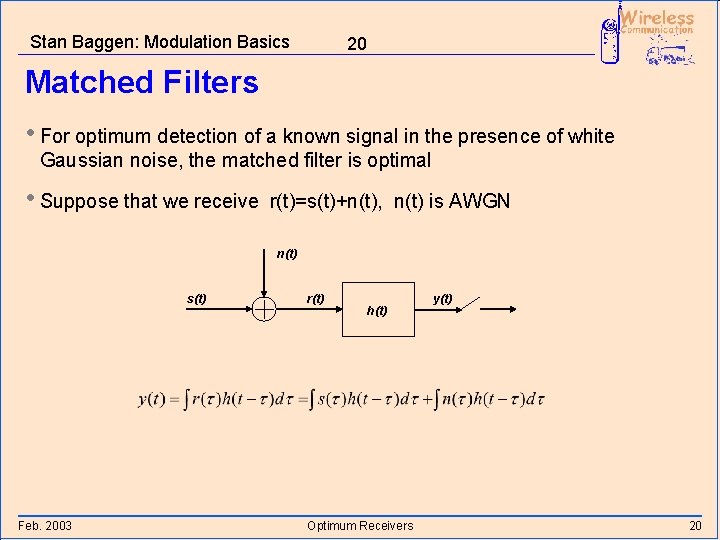

Matched Filters
• For optimum detection of a known signal in the presence of white Gaussian noise, the matched filter is optimal
• Suppose that we receive r(t) = s(t) + n(t), where n(t) is AWGN
[Figure: s(t) plus n(t) gives r(t), which is filtered by h(t) to give y(t)]

• The filter output y(t) is the sum of two convolution integrals: h with s, and h with n
• Note that y(t) is a Gaussian random variable !!
• The first integral is the signal part and the second integral corresponds to the noise at the output of the receive filter
• Suppose that we sample y(t) at t = 0, and that we want to optimize the SNR at the sampling instant (single pulse transmission)
• The noise term has average zero and a variance set by the noise density and the energy of h(t)
• For maximum SNR, we must maximize the ratio of the squared signal part to this noise variance

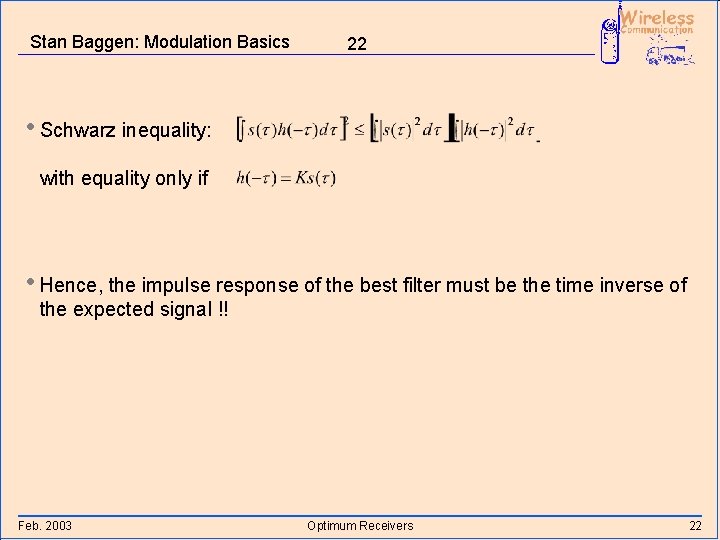

• Schwarz inequality: the squared inner product of two functions is at most the product of their energies, with equality only if the two functions are proportional
• Hence, the impulse response of the best filter must be the time inverse of the expected signal !!
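
The equations of this derivation did not survive extraction. A reconstruction under the stated setup (real signals, white noise with density N_0/2, sampling at t = 0) would read as follows; the symbols for the noise variance, the signal energy E_s and the constant k are assumed names.

```latex
\begin{aligned}
y(0) &= \int h(\tau)\, s(-\tau)\, d\tau + \int h(\tau)\, n(-\tau)\, d\tau,
\qquad
\sigma^2 = \frac{N_0}{2} \int h^2(\tau)\, d\tau \\[4pt]
\mathrm{SNR} &= \frac{\left( \int h(\tau)\, s(-\tau)\, d\tau \right)^{2}}
                     {\frac{N_0}{2} \int h^2(\tau)\, d\tau}
\;\le\;
\frac{\int h^2(\tau)\, d\tau \int s^2(-\tau)\, d\tau}
     {\frac{N_0}{2} \int h^2(\tau)\, d\tau}
= \frac{2 E_s}{N_0},
\qquad E_s = \int s^2(t)\, dt
\end{aligned}
```

Equality holds only for h(τ) = k · s(−τ), the time-reversed signal, and the maximum SNR depends on the signal only through its energy.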

• Proof of Schwarz inequality:
• Definition of inner product (for square integrable functions)
• For any scalar λ, the inner product of f − λg with itself is non-negative
• From which we find the inequality by a suitable choice of λ
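
The steps of the proof can be written out as follows for real functions f and g; this is the standard argument, given here because the slide's own equations are not recoverable.

```latex
\begin{aligned}
\langle f, g \rangle &= \int f(t)\, g(t)\, dt \\
0 \le \langle f - \lambda g,\; f - \lambda g \rangle
  &= \langle f, f \rangle - 2\lambda \langle f, g \rangle + \lambda^2 \langle g, g \rangle \\
\lambda = \frac{\langle f, g \rangle}{\langle g, g \rangle}
  \;\Longrightarrow\;
  \langle f, g \rangle^2 &\le \langle f, f \rangle\, \langle g, g \rangle
\end{aligned}
```

Equality requires f − λg = 0, that is, f proportional to g.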

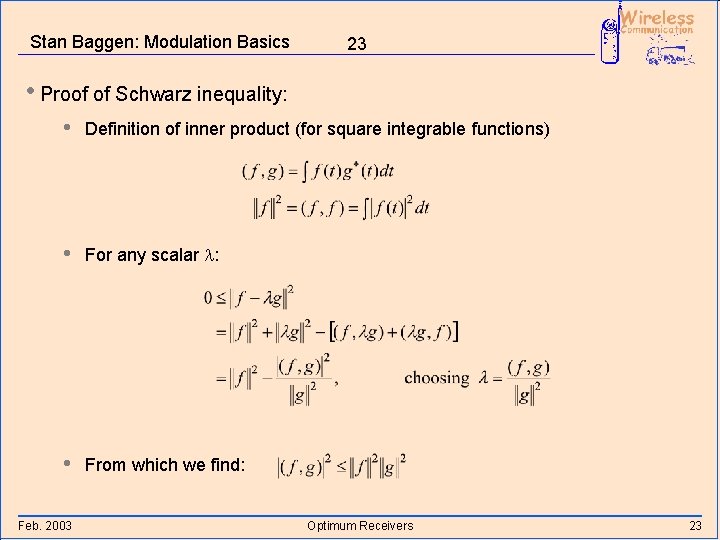

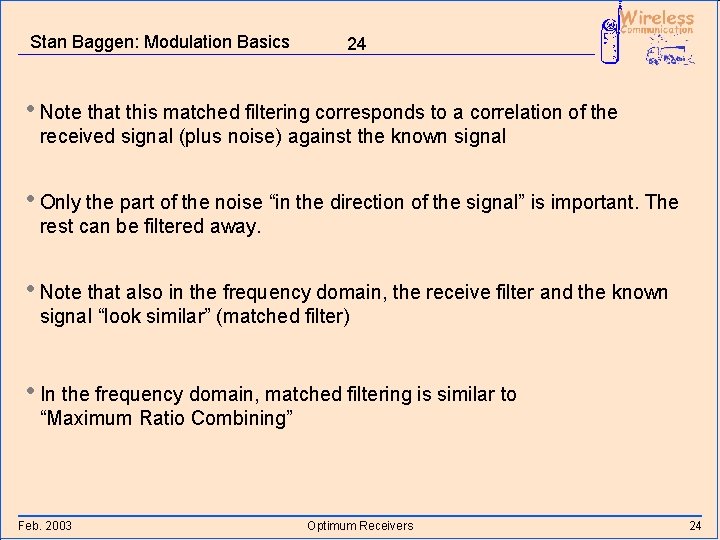

• Note that this matched filtering corresponds to a correlation of the received signal (plus noise) against the known signal
• Only the part of the noise “in the direction of the signal” is important. The rest can be filtered away.
• Note that also in the frequency domain, the receive filter and the known signal “look similar” (matched filter)
• In the frequency domain, matched filtering is similar to “Maximum Ratio Combining”

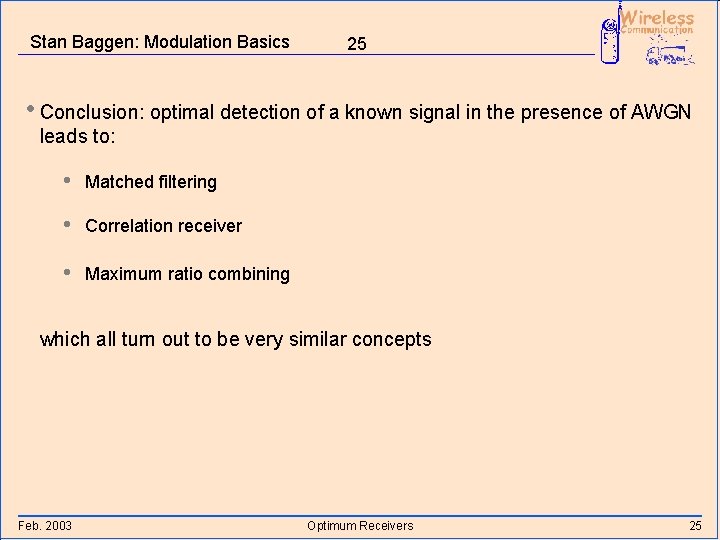

• Conclusion: optimal detection of a known signal in the presence of AWGN leads to:
  • Matched filtering
  • Correlation receiver
  • Maximum ratio combining
  which all turn out to be very similar concepts

Remarks:
• Equalization (feed forward) in case of channel distortions is “the opposite” of matched filtering: it leads to noise enhancement
• Solutions (partial):
  • Decision feedback equalization
  • Partial response detection
• Optimal solution in case of channel distortions:
  • Maximum Likelihood Sequence Estimation (MLSE)
  • OFDM-like techniques: splitting up the channel into many narrowband channels that each have no distortion, except for a fixed attenuation and phase rotation
• If the noise is non-white (but still Gaussian)
  • A “whitened matched filter” is the optimum solution

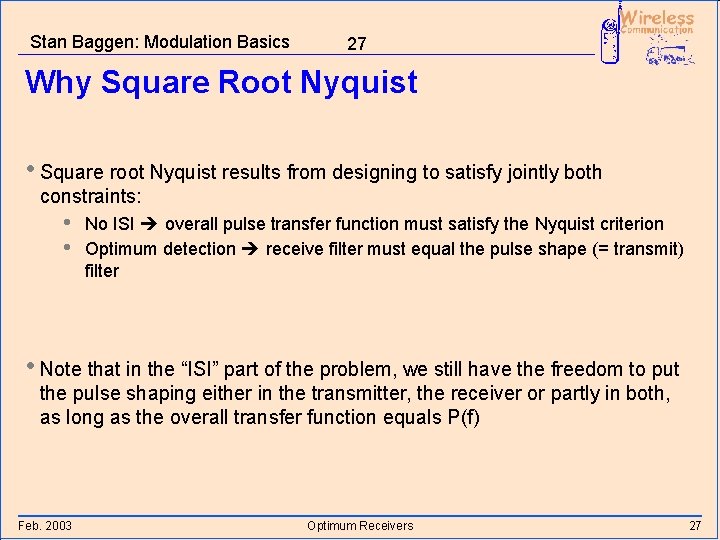

Why Square Root Nyquist
• Square root Nyquist results from designing to satisfy jointly both constraints:
  • No ISI: the overall pulse transfer function must satisfy the Nyquist criterion
  • Optimum detection: the receive filter must equal the pulse shape (= transmit) filter
• Note that in the “ISI” part of the problem, we still have the freedom to put the pulse shaping either in the transmitter, the receiver or partly in both, as long as the overall transfer function equals P(f)

• From the “optimum detection” part, it follows that the transmit filter and the receive filter should be “equal” (for real signals)
[Figure: transmit filter output s(t) plus n(t) gives r(t), which passes through the receive filter to give y(t)]

Maximum Likelihood (ML) Reception
Suppose that we have two possible messages m_0 and m_1. One of them is transmitted and at the receiver, upon receiving r, we want to minimize the error probability.
• Choose the m_i for which P(m_i|r) is maximum
• This is the Maximum A Posteriori (MAP) decision
• Using Bayes' rule, we find a likelihood ratio that has to be compared with a threshold

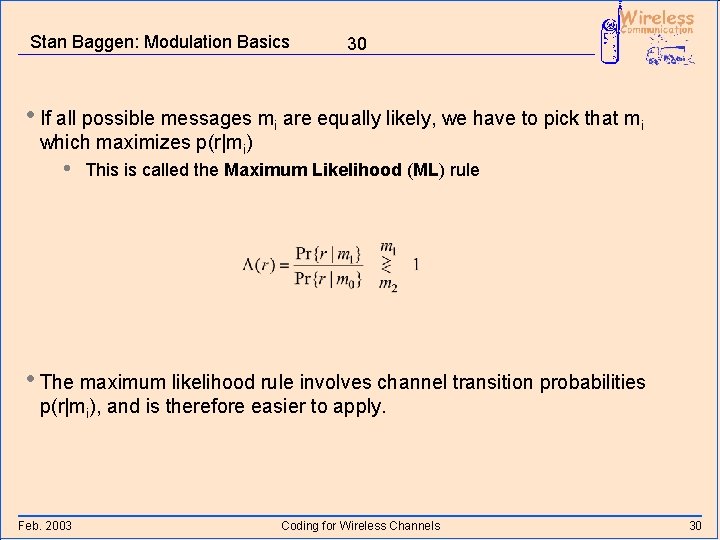

• If all possible messages m_i are equally likely, we have to pick the m_i which maximizes p(r|m_i)
• This is called the Maximum Likelihood (ML) rule
• The maximum likelihood rule involves only the channel transition probabilities p(r|m_i), and is therefore easier to apply

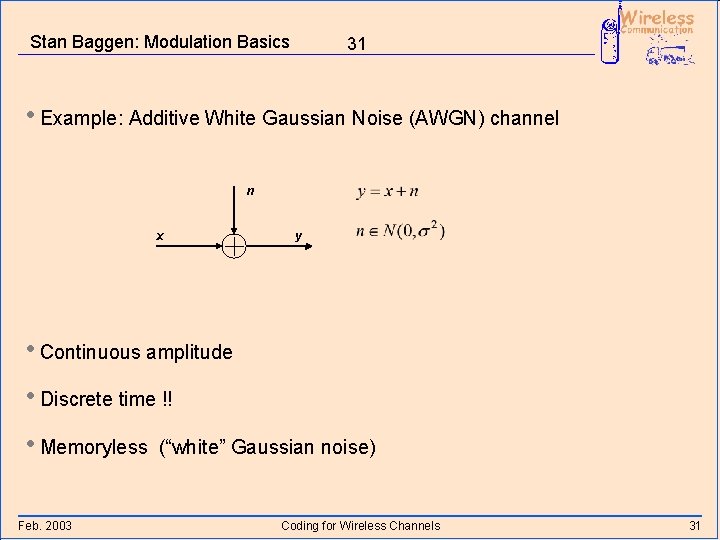

• Example: Additive White Gaussian Noise (AWGN) channel
[Figure: input x plus noise n gives output y]
• Continuous amplitude
• Discrete time !!
• Memoryless (“white” Gaussian noise)

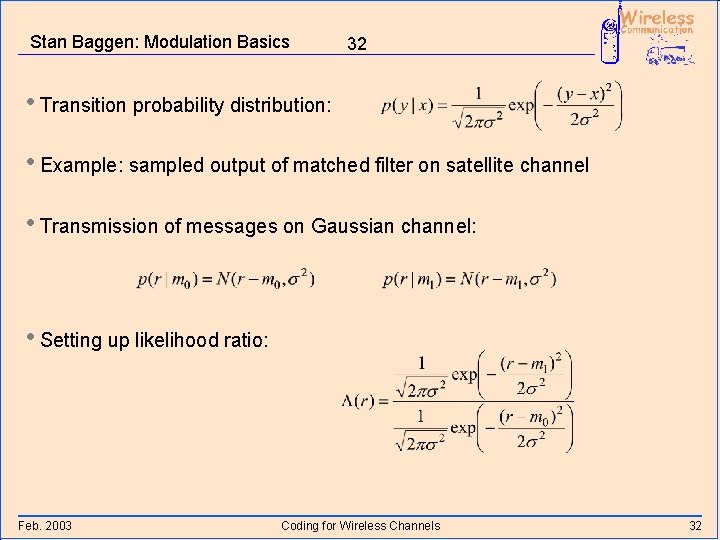

• Transition probability distribution: p(y|x) is a Gaussian density centered on x
• Example: sampled output of matched filter on satellite channel
• Transmission of messages m_i on a Gaussian channel
• Setting up the likelihood ratio of p(r|m_1) and p(r|m_0)
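
For a scalar observation r = m_i + n with Gaussian noise, the likelihood ratio can be written out as below. The noise variance σ² and the symbol Λ are assumed names; the slide's own formulas are not recoverable.

```latex
\begin{aligned}
p(r \mid m_i) &= \frac{1}{\sqrt{2\pi\sigma^2}}
                 \exp\!\left( -\frac{(r - m_i)^2}{2\sigma^2} \right) \\[4pt]
\Lambda(r) = \frac{p(r \mid m_1)}{p(r \mid m_0)}
  &= \exp\!\left( \frac{(r - m_0)^2 - (r - m_1)^2}{2\sigma^2} \right) \\[4pt]
\ln \Lambda(r) > 0 \;&\Longleftrightarrow\; (r - m_1)^2 < (r - m_0)^2
\end{aligned}
```

With equally likely messages the threshold on Λ is 1, so the decision reduces to comparing the two squared distances.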

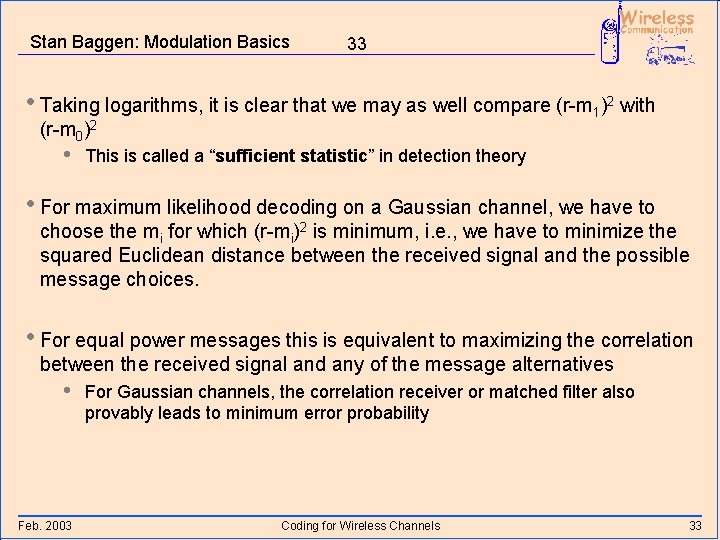

• Taking logarithms, it is clear that we may as well compare (r − m_1)² with (r − m_0)²
• This is called a “sufficient statistic” in detection theory
• For maximum likelihood decoding on a Gaussian channel, we have to choose the m_i for which (r − m_i)² is minimum, i.e., we have to minimize the squared Euclidean distance between the received signal and the possible message choices
• For equal power messages this is equivalent to maximizing the correlation between the received signal and any of the message alternatives
  • For Gaussian channels, the correlation receiver or matched filter also provably leads to minimum error probability
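
A minimal sketch of both decision rules for vector-valued messages; the message set and the received vector are hypothetical values chosen only for illustration.

```python
def ml_decide(r, messages):
    """Pick the message with minimum squared Euclidean distance to r."""
    def dist2(m):
        return sum((ri - mi) ** 2 for ri, mi in zip(r, m))
    return min(messages, key=dist2)

def correlation_decide(r, messages):
    """Pick the message with maximum correlation; same result for equal power."""
    return max(messages, key=lambda m: sum(ri * mi for ri, mi in zip(r, m)))

messages = [(1.0, 1.0), (-1.0, -1.0)]  # two equal-power alternatives
r = (0.3, -0.1)                        # hypothetical noisy observation
print(ml_decide(r, messages), correlation_decide(r, messages))
```

Expanding (r − m)² = r² − 2 r·m + m² shows why the two rules agree when all messages have the same power: only the correlation term differs between candidates.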

Coding for Wireless Channels
• Hamming Distance and Error Correction
• The [7, 4, 3] Hamming Code
• Convolutional Codes
• ML Decoding and the Viterbi Algorithm
• Coding Gain
• Coding on Fading Channels

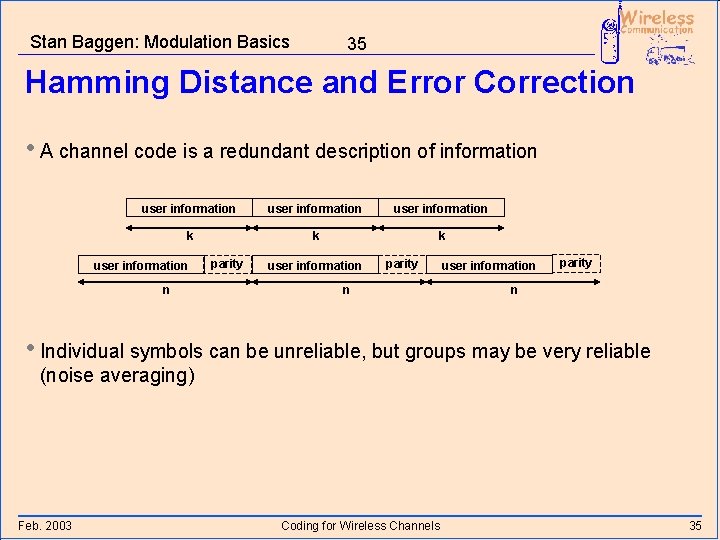

Hamming Distance and Error Correction
• A channel code is a redundant description of information
[Figure: blocks of k user information symbols, each extended with parity to n symbols]
• Individual symbols can be unreliable, but groups may be very reliable (noise averaging)

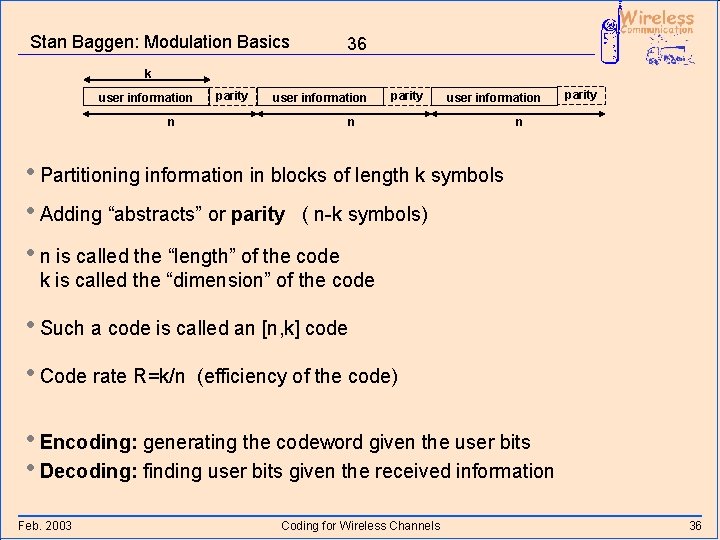

[Figure: k user information symbols followed by parity, n symbols in total]
• Partitioning information in blocks of length k symbols
• Adding “abstracts” or parity (n−k symbols)
• n is called the “length” of the code
• k is called the “dimension” of the code
• Such a code is called an [n, k] code
• Code rate R = k/n (efficiency of the code)
• Encoding: generating the codeword given the user bits
• Decoding: finding user bits given the received information

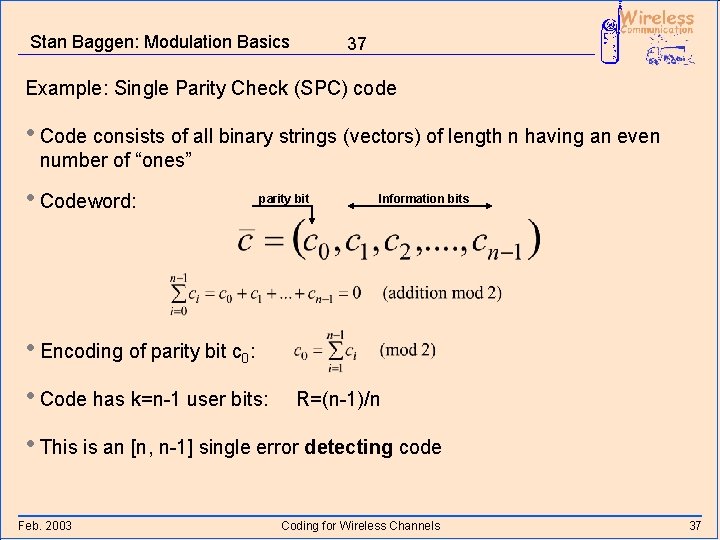

Example: Single Parity Check (SPC) code
• Code consists of all binary strings (vectors) of length n having an even number of “ones”
• Codeword: parity bit c_0 followed by the information bits
• Encoding of parity bit c_0: the modulo-2 sum of the information bits
• Code has k = n−1 user bits: R = (n−1)/n
• This is an [n, n−1] single error detecting code
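
A short sketch of SPC encoding and checking; placing the parity bit first follows the codeword layout on the slide, and the function names are illustrative.

```python
def spc_encode(info_bits):
    """Prepend the parity bit so that the codeword has an even number of ones."""
    parity = sum(info_bits) % 2
    return [parity] + list(info_bits)

def spc_check(word):
    """A word is accepted only if its number of ones is even."""
    return sum(word) % 2 == 0

codeword = spc_encode([1, 0, 1, 1])
print(codeword, spc_check(codeword))

corrupted = codeword[:]
corrupted[2] ^= 1  # a single bit error is detected
print(corrupted, spc_check(corrupted))
```

Two bit errors restore even parity and go unnoticed, which is why the code is only single error detecting.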

• Error detecting codes only try to detect errors; they do not correct any errors
• Only valid codewords are accepted as reliable

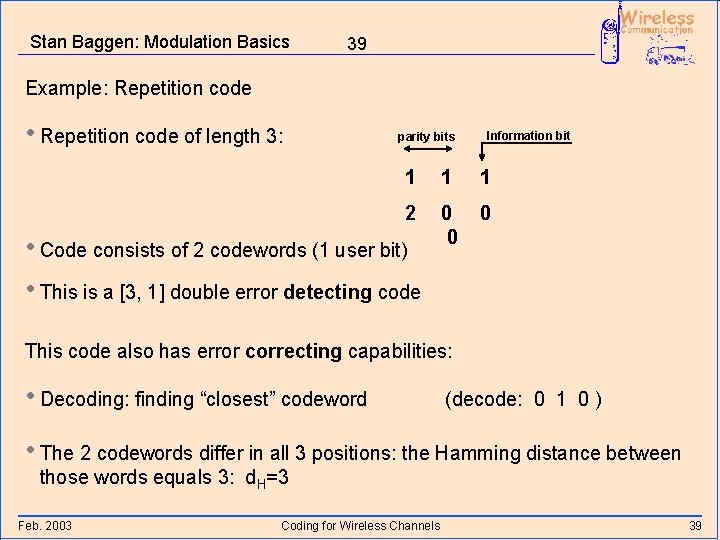

Example: Repetition code
• Repetition code of length 3 (1 information bit followed by 2 parity bits): codewords 1 1 1 and 0 0 0
• Code consists of 2 codewords (1 user bit)
• This is a [3, 1] double error detecting code
This code also has error correcting capabilities:
• Decoding: finding the “closest” codeword (e.g. decode 0 1 0 to 0 0 0)
• The 2 codewords differ in all 3 positions: the Hamming distance between those words equals 3: d_H = 3

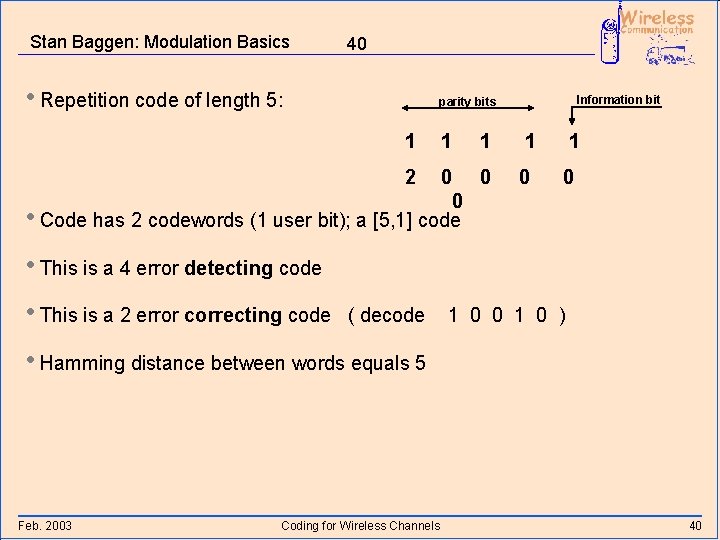

• Repetition code of length 5 (1 information bit followed by 4 parity bits): codewords 1 1 1 1 1 and 0 0 0 0 0
• Code has 2 codewords (1 user bit); a [5, 1] code
• This is a 4 error detecting code
• This is a 2 error correcting code (e.g. decode 1 0 0 1 0 to 0 0 0 0 0)
• Hamming distance between words equals 5

Basic intuition behind error correcting codes:
• Codewords should “differ” a lot
• If there are not too many errors, the original codeword is still “closer” than any other codeword

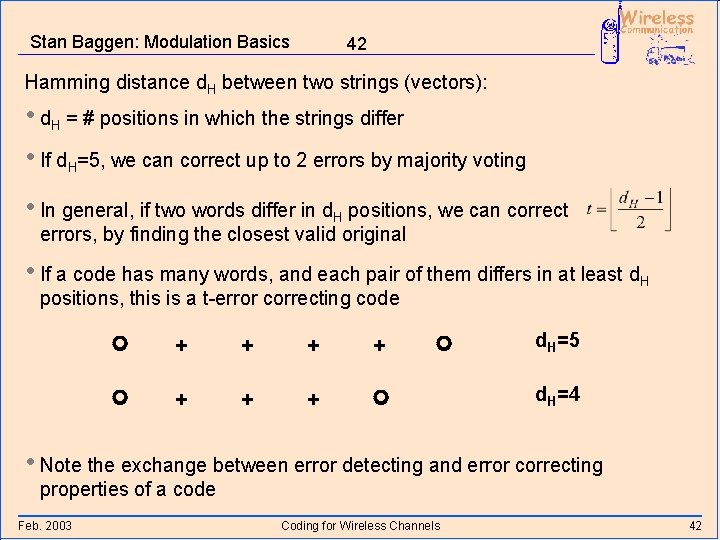

Hamming distance d_H between two strings (vectors):
• d_H = # positions in which the strings differ
• If d_H = 5, we can correct up to 2 errors by majority voting
• In general, if two words differ in d_H positions, we can correct t = ⌊(d_H − 1)/2⌋ errors by finding the closest valid original
• If a code has many words, and each pair of them differs in at least d_H positions, this is a t-error correcting code
[Figure: error patterns between two codewords for d_H = 5 and d_H = 4]
• Note the exchange between error detecting and error correcting properties of a code
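
Hamming distance and nearest-codeword decoding can be sketched as follows, using the length-5 repetition code from the earlier example; the helper names are illustrative.

```python
def hamming_distance(a, b):
    """Number of positions in which two equal-length strings differ."""
    return sum(x != y for x, y in zip(a, b))

def nearest_codeword(word, code):
    """Decode to the codeword at minimum Hamming distance."""
    return min(code, key=lambda c: hamming_distance(word, c))

rep5 = ["00000", "11111"]
d_h = hamming_distance(rep5[0], rep5[1])
t = (d_h - 1) // 2  # number of errors that can always be corrected
print(d_h, t, nearest_codeword("10010", rep5))
```

For a two-word code this nearest-codeword search is the same as majority voting; for larger codes the search runs over all codewords.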

![Slide 43: The [7, 4, 3] Hamming Code](http://slidetodoc.com/presentation_image_h/a682997d009f2b907e7f80125201d848/image-44.jpg)

The [7, 4, 3] Hamming Code
• A very famous code is the [7, 4, 3] Hamming code
  • 4 user information bits i_1, …, i_4
  • 3 parity bits p_1, p_2 and p_3
• Envisioning the [7, 4, 3] Hamming code:
[Figure: three overlapping circles, each holding one parity bit (p_1, p_2, p_3) together with information bits from i_1, …, i_4. Example from Bob McEliece]
• In each circle the parity should be even
• A single bit error correcting code

![Slide 44: List of codewords of the [7, 4, 3] Hamming code](http://slidetodoc.com/presentation_image_h/a682997d009f2b907e7f80125201d848/image-45.jpg)

• List of codewords of the [7, 4, 3] Hamming code, written as i_4 i_3 i_2 i_1 followed by p_3 p_2 p_1:
  0000 000, 1000 101, 0100 111, 0010 110, 0001 011, 1100 010, 0110 001, 0011 101,
  1010 011, 0101 100, 1001 110, 1110 100, 0111 010, 1011 000, 1101 001, 1111 111
• Each pair of codewords differs in at least 3 positions (for example 0100 111 and 0110 001): d_H ≥ 3 ===> 1 error correcting code
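
The codeword list can be regenerated and checked with a few lines. The three parity equations below are inferred from the four single-information-bit rows of the list (1000 101, 0100 111, 0010 110, 0001 011) and linearity of the code; they are an assumption, not taken from the circle diagram.

```python
from itertools import product

def hamming74_encode(i4, i3, i2, i1):
    """Return (i4, i3, i2, i1, p3, p2, p1) with parity inferred from the list."""
    p3 = i4 ^ i3 ^ i2
    p2 = i3 ^ i2 ^ i1
    p1 = i4 ^ i3 ^ i1
    return (i4, i3, i2, i1, p3, p2, p1)

def distance(a, b):
    return sum(x != y for x, y in zip(a, b))

codewords = [hamming74_encode(*bits) for bits in product((0, 1), repeat=4)]
min_distance = min(distance(a, b)
                   for idx, a in enumerate(codewords)
                   for b in codewords[idx + 1:])

def hamming74_decode(word):
    """Nearest-codeword decoding; returns the 4 information bits."""
    return min(codewords, key=lambda c: distance(word, c))[:4]

received = (0, 1, 0, 0, 1, 0, 1)  # codeword 0100 111 with p2 flipped
print(min_distance, hamming74_decode(received))
```

Exhaustive search over 16 codewords is fine at this size; practical decoders use the syndrome instead.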

Reed Solomon Codes
• Used for very strong error correction (e.g. t = 8) and burst error correction
• Code operates on groups of bits (e.g. bytes) and not on bits
• Requires algebraic decoding algorithms
• Used for getting extremely low error rates (< 10^-12)
• Algebraic block codes have two major drawbacks:
  • They cannot handle soft-decision information (except for an erasure)
  • Efficient algorithms exist only for bounded distance decoding (up to t errors)

Convolutional Codes
• Redundancy is generated in a different manner
• A convolutional code is not block-oriented. It generates semi-infinite strings
• A convolutional code is a linear code (over GF(2))
• Encoder = FIR filter over GF(2), hence the name convolutional code
• Information sequences and code sequences are written as polynomials in D, where the low order coefficients come first in time

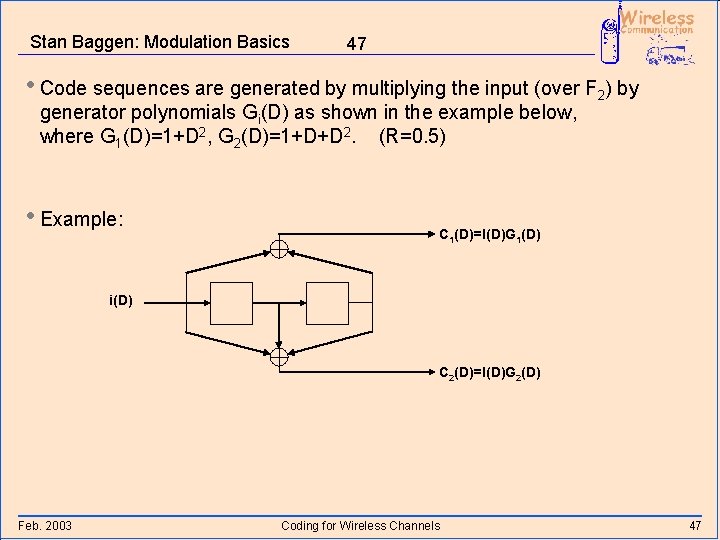

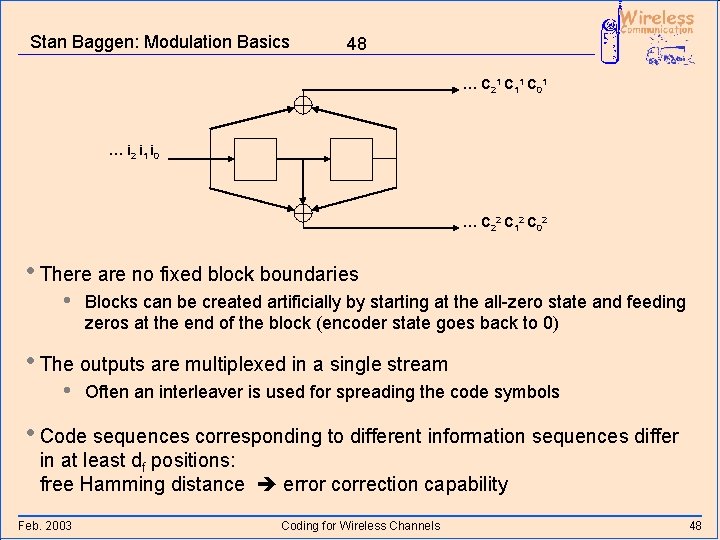

• Code sequences are generated by multiplying the input (over GF(2)) by generator polynomials G_i(D), as shown in the example below, where G_1(D) = 1 + D², G_2(D) = 1 + D + D² (R = 0.5)
• Example: C_1(D) = I(D) G_1(D), C_2(D) = I(D) G_2(D)
[Figure: shift-register encoder with input i(D) and outputs C_1(D) and C_2(D)]

[Figure: input stream … i_2 i_1 i_0 and the two output streams … C_21 C_11 C_01 and … C_22 C_12 C_02]
• There are no fixed block boundaries
• Blocks can be created artificially by starting at the all-zero state and feeding zeros at the end of the block (encoder state goes back to 0)
• The outputs are multiplexed in a single stream
• Often an interleaver is used for spreading the code symbols
• Code sequences corresponding to different information sequences differ in at least d_f positions: the free Hamming distance, which sets the error correction capability

Description of Convolutional Codes
• Most convolutional codes in practice are derived from R = 0.5 non-systematic codes
  • Leads to the simplest Viterbi decoder
• There is almost no algebraic theory related to convolutional codes
  • We want to have the maximum distance for the fewest delay elements in the encoder (simplest decoder)
  • “Good” generator polynomials are found by computer search
• The number n of delay elements in the encoder is an important design parameter. It is called the encoder constraint length
  • In many cases K = n+1 is defined as the encoder constraint length (K = span of taps)

• It turns out that we can obtain d_free proportional to n
• Complexity of Viterbi decoder is exponential in n
• The connection polynomials of the FIR filter are called generator polynomials. They describe the code.
• Example: for the n = 2 code: G_1(D) = 1 + D², G_2(D) = 1 + D + D²
  • In octal notation: a [5, 7] convolutional code, d_free = 5
• Mostly, non-systematic codes are used because they give a higher free distance, given the encoder constraint length
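
The n = 2, [5, 7] encoder can be sketched as a two-element shift register. The variable names and the `n_tail` parameter (zeros appended to return to the all-zero state) are illustrative choices.

```python
def conv_encode(info_bits, n_tail=2):
    """Rate 1/2 encoder with G1(D) = 1 + D^2 and G2(D) = 1 + D + D^2."""
    s1 = s2 = 0  # shift register: previous input bit and the one before it
    out = []
    for b in list(info_bits) + [0] * n_tail:
        c1 = b ^ s2       # taps of G1: current bit and D^2
        c2 = b ^ s1 ^ s2  # taps of G2: current bit, D and D^2
        out.append((c1, c2))
        s1, s2 = b, s1    # shift
    return out

print(conv_encode([1]))           # response to a single 1
print(conv_encode([1, 1, 1, 1]))
```

The response to a single 1 has five ones in total, in line with d_free = 5 for this code.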

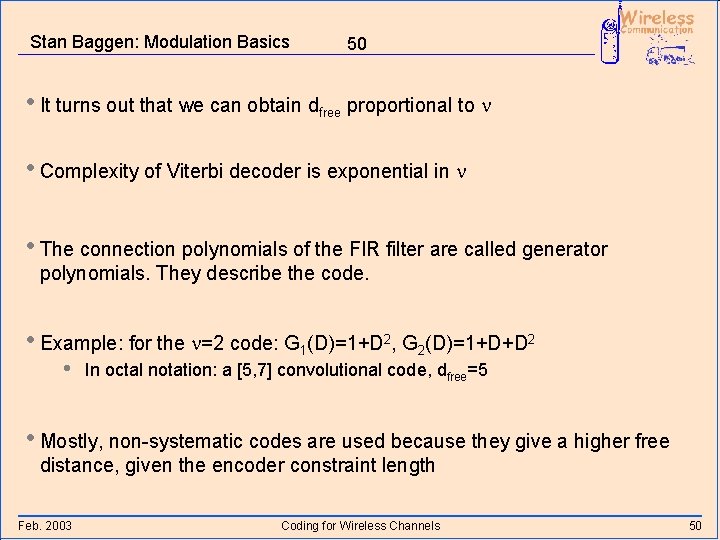

Maximum Likelihood Decoding
• Any received vector is decoded to the “nearest” codeword (complete decoding)
[Figure: codewords with their Voronoi regions]

The Viterbi Algorithm
• Convolutional codes are used on very “noisy” channels
• Convolutional codes are designed for complete maximum likelihood (ML) decoding with the lowest complexity decoders
• Complexity of ML decoders grows exponentially in the code parameters
• ML decoding of a convolutional code is done with a Viterbi decoder
• A Viterbi decoder conceptually generates all possible codewords in an efficient manner and compares them with the received waveform. It then chooses the closest (minimum Euclidean distance on a Gaussian channel)
  • Maximum Likelihood Sequence Estimation (MLSE)
  • Lowest error probability if all input sequences are equally likely

• For each possible codeword c_i that corresponds to some m_i, a Viterbi decoder keeps track of its “costs” (metric) given the received waveform r
• The metric is (r − c_i)² (for Gaussian channels)
• Note that we use real numbers for r: soft-decision information
• The Viterbi algorithm efficiently finds the codeword with minimum metric, given the received waveform

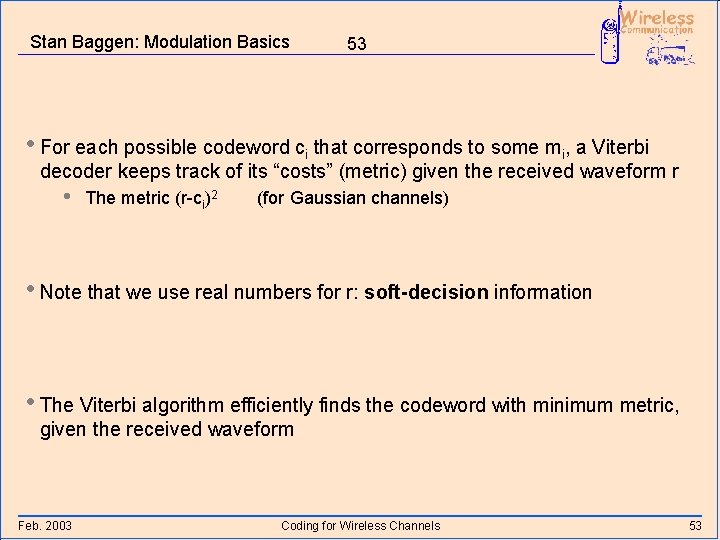

State Transition Diagram
• The operation of a convolutional encoder can be described by a state transition diagram
• Example: state transition diagram of the n = 2 code generated by G_1(D) = 1 + D², G_2(D) = 1 + D + D²
[Figure: state transition diagram with states 00, 01, 10, 11 and branch labels including 0/00, 1/11, 0/11, 1/00, 0/01, 0/10, 1/01]
• States correspond to the contents of the shift register
• Along the branches, we find
  • Input bit before the “/”
  • Output bits after the “/”

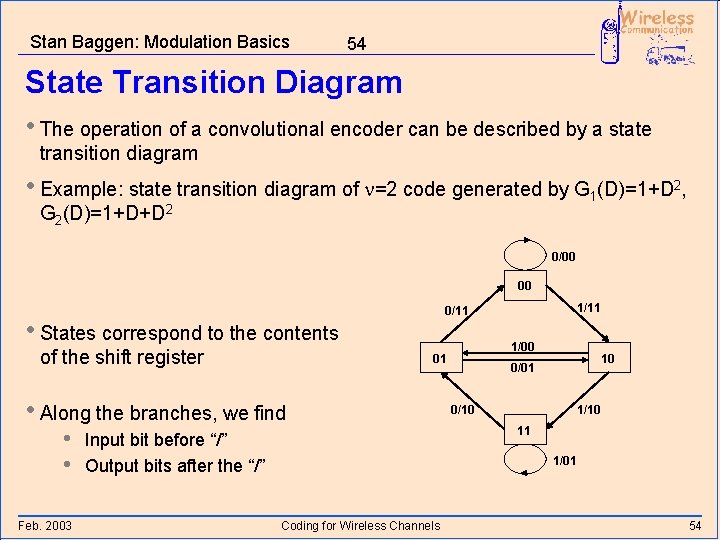

Trellis Decoding
• Each codeword corresponds to a path through the state transition diagram
• We can visualize such paths by drawing the possible state transitions as a function of time
• This is called a trellis
• Example: trellis section of the n = 2, [5, 7] code
[Figure: one trellis section between the states 00, 10, 01, 11 along the time axis, with nodes, branches and code bits on the branches]
• A trellis has nodes and branches
  • Dotted branch: input bit = 0
  • Solid branch: input bit = 1
  • Along the branches: generated code bits

![Slide 56: Example of trellis of n=2, [5, 7] code](http://slidetodoc.com/presentation_image_h/a682997d009f2b907e7f80125201d848/image-57.jpg)

• Example of trellis of the n = 2, [5, 7] code
[Figure: three trellis sections along the time axis, with code bits on the branches]
• 4 different codewords are shown in the trellis

![Slide 57: 6 trellis sections of n=2, [5, 7] code](http://slidetodoc.com/presentation_image_h/a682997d009f2b907e7f80125201d848/image-58.jpg)

• 6 trellis sections of the n = 2, [5, 7] code, corresponding to the transmission of 6 information bits i_0, i_1, i_2, i_3, i_4, i_5
[Figure: six trellis sections along the time axis, with code bits on the branches]

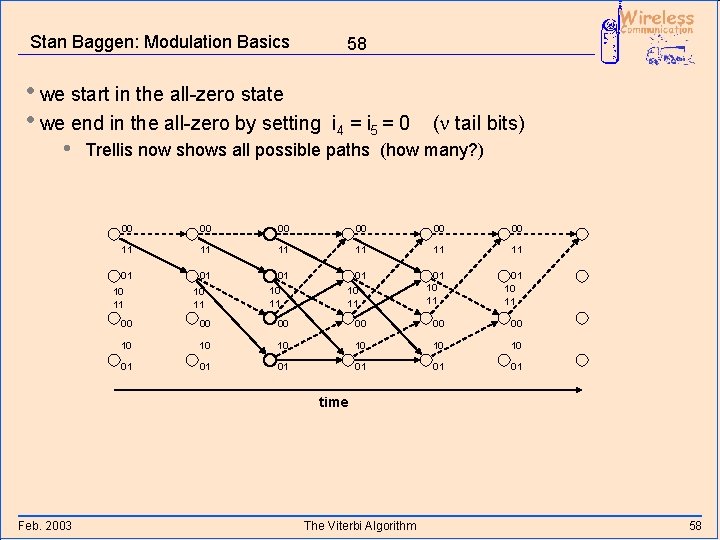

• We start in the all-zero state
• We end in the all-zero state by setting i_4 = i_5 = 0 (n tail bits)
• The trellis now shows all possible paths (how many?)
[Figure: terminated trellis of six sections along the time axis, with code bits on the branches]

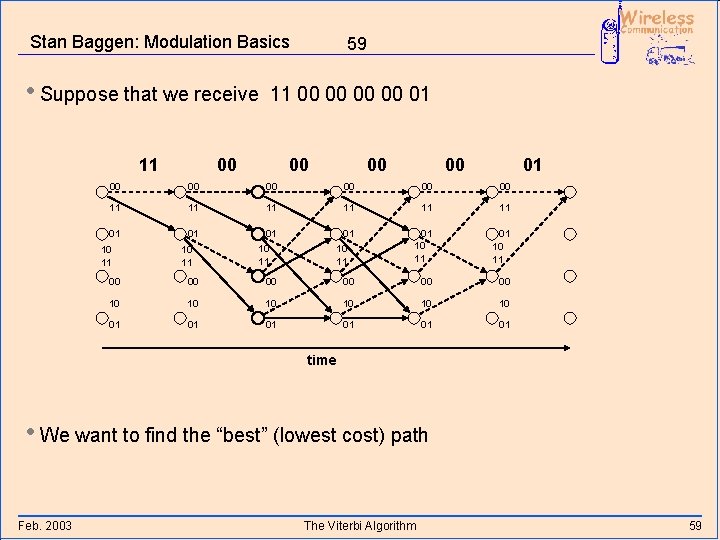

• Suppose that we receive the sequence of bit pairs shown above the trellis
[Figure: received sequence drawn above the terminated trellis of the n = 2, [5, 7] code]
• We want to find the “best” (lowest cost) path

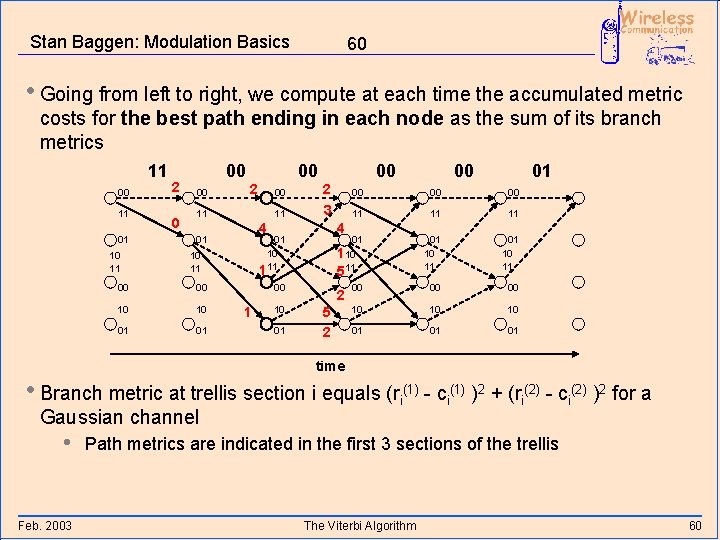

• Going from left to right, we compute at each time the accumulated metric costs for the best path ending in each node as the sum of its branch metrics
[Figure: trellis with the received sequence 11 00 11 01 10 11 above it and accumulated path metrics at the nodes]
• Branch metric at trellis section i equals (r_i^(1) − c_i^(1))² + (r_i^(2) − c_i^(2))² for a Gaussian channel
  • Path metrics are indicated in the first 3 sections of the trellis

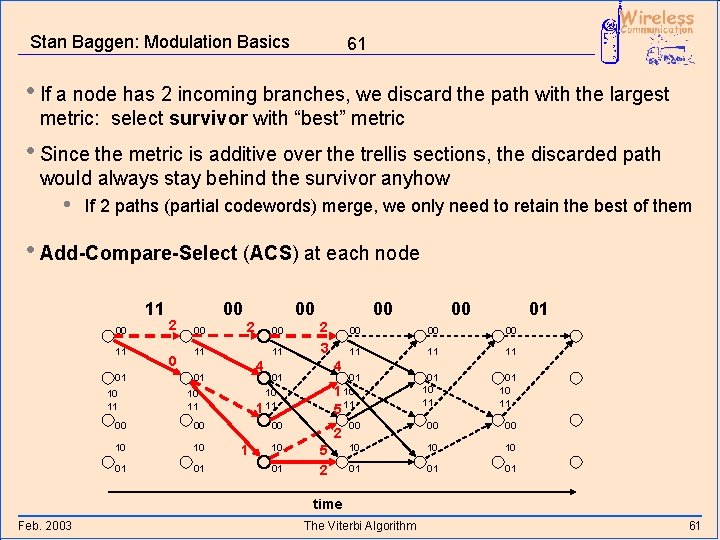

• If a node has 2 incoming branches, we discard the path with the largest metric: select the survivor with the “best” metric
• Since the metric is additive over the trellis sections, the discarded path would always stay behind the survivor anyhow
• If 2 paths (partial codewords) merge, we only need to retain the best of them
• Add-Compare-Select (ACS) at each node
[Figure: trellis with the received sequence 11 00 11 01 10 11 above it and path metrics at the nodes]

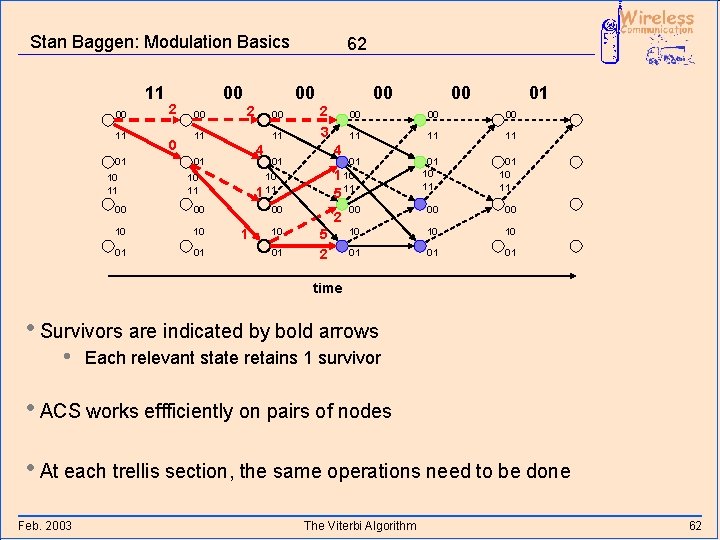

[Figure: trellis with the received sequence 11 00 11 01 10 11 above it, path metrics at the nodes and the survivors marked]
• Survivors are indicated by bold arrows
• Each relevant state retains 1 survivor
• ACS works efficiently on pairs of nodes
• At each trellis section, the same operations need to be done

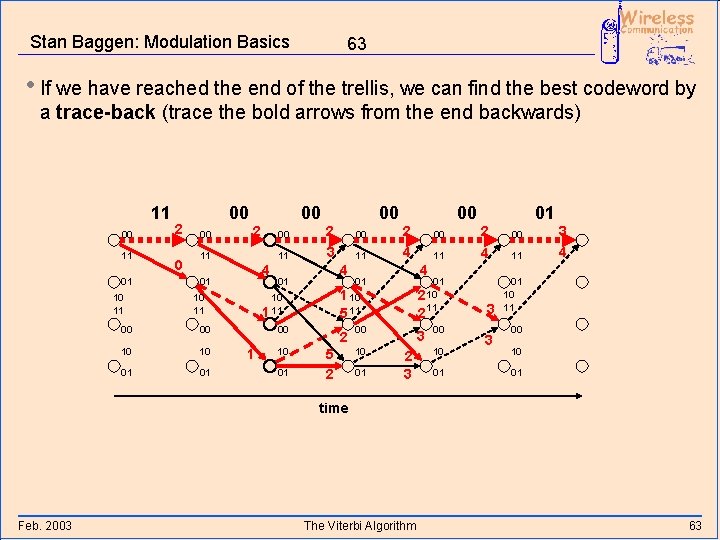

• If we have reached the end of the trellis, we can find the best codeword by a trace-back (trace the bold arrows from the end backwards)
[Figure: complete trellis with the received sequence 11 00 11 01 10 11, all path metrics and the survivors]
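
The add-compare-select recursion for the terminated n = 2, [5, 7] code can be sketched as below. Two things are assumptions: the received sequence 11 00 11 01 10 11 is read from the figure residue of these slides, and the sketch stores a full survivor path per state instead of back-pointers with a separate trace-back, which is simpler to read but uses more memory.

```python
def viterbi_decode(received, n_info):
    """Viterbi decoding of the terminated n=2, [5, 7] code.

    received: list of (r1, r2) pairs, hard (0/1) or soft real values.
    n_info:   number of information bits; later sections are tail zeros.
    """
    INF = float("inf")
    # State (s1, s2) is packed as 2*s1 + s2; we start in the all-zero state.
    metrics = [0.0, INF, INF, INF]
    paths = [[], [], [], []]
    for k, (r1, r2) in enumerate(received):
        new_metrics = [INF] * 4
        new_paths = [None] * 4
        inputs = (0, 1) if k < n_info else (0,)  # tail sections force input 0
        for state in range(4):
            if metrics[state] == INF:
                continue
            s1, s2 = state >> 1, state & 1
            for b in inputs:
                c1, c2 = b ^ s2, b ^ s1 ^ s2              # branch code bits
                branch = (r1 - c1) ** 2 + (r2 - c2) ** 2  # branch metric
                nxt = (b << 1) | s1
                cand = metrics[state] + branch            # add
                if cand < new_metrics[nxt]:               # compare
                    new_metrics[nxt] = cand               # select survivor
                    new_paths[nxt] = paths[state] + [b]
        metrics, paths = new_metrics, new_paths
    return paths[0][:n_info], metrics[0]

rx = [(1, 1), (0, 0), (1, 1), (0, 1), (1, 0), (1, 1)]
print(viterbi_decode(rx, n_info=4))
```

With hard 0/1 inputs the squared Euclidean branch metric equals the Hamming distance per branch; feeding real-valued samples instead turns the same code into a soft-decision decoder.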

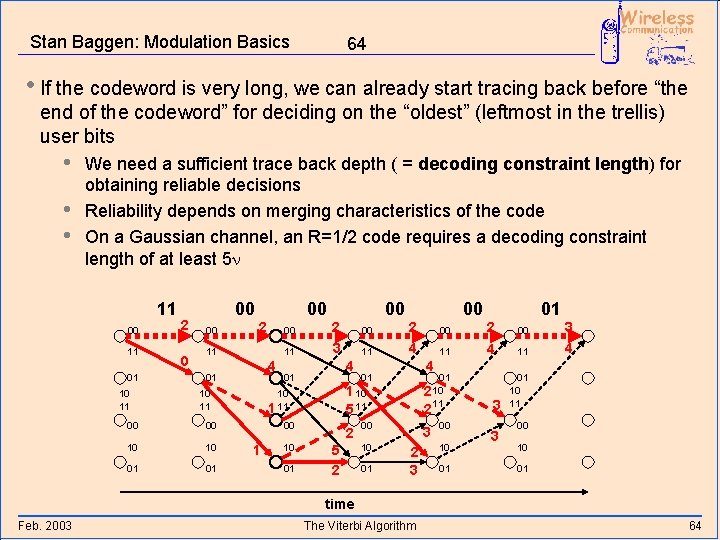

• If the codeword is very long, we can already start tracing back before “the end of the codeword” for deciding on the “oldest” (leftmost in the trellis) user bits
  • We need a sufficient trace back depth (= decoding constraint length) for obtaining reliable decisions
  • Reliability depends on merging characteristics of the code
  • On a Gaussian channel, an R = 1/2 code requires a decoding constraint length of at least 5n
[Figure: complete trellis with the received sequence 11 00 11 01 10 11, all path metrics and the survivors]

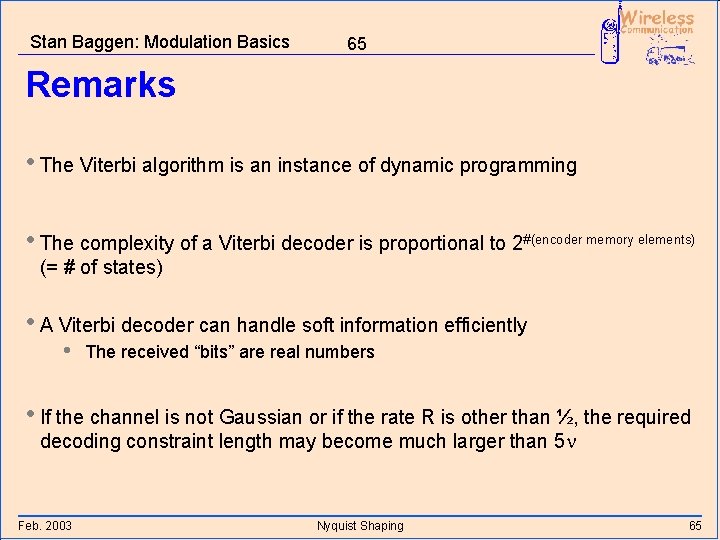

Remarks
• The Viterbi algorithm is an instance of dynamic programming
• The complexity of a Viterbi decoder is proportional to 2^(# encoder memory elements) (= # of states)
• A Viterbi decoder can handle soft information efficiently
  • The received “bits” are real numbers
• If the channel is not Gaussian or if the rate R is other than ½, the required decoding constraint length may become much larger than 5n

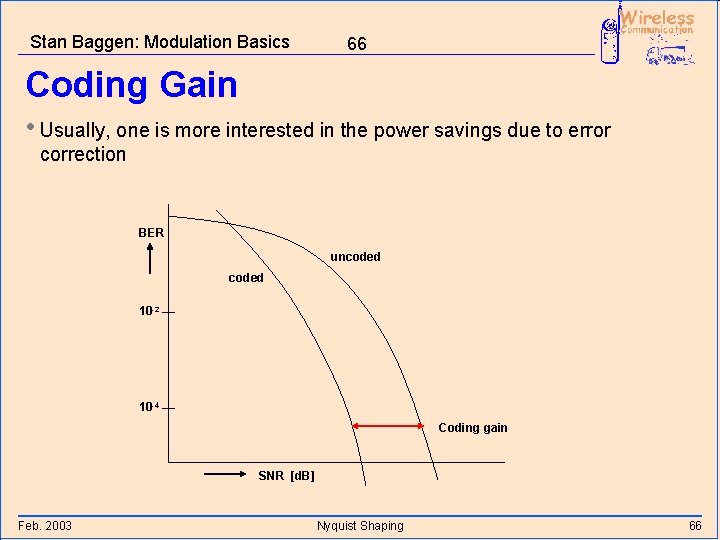

Coding Gain
• Usually, one is more interested in the power savings due to error correction
[Figure: BER (10^-2 to 10^-4) versus SNR in dB for the uncoded and coded cases; the horizontal gap between the curves is the coding gain]

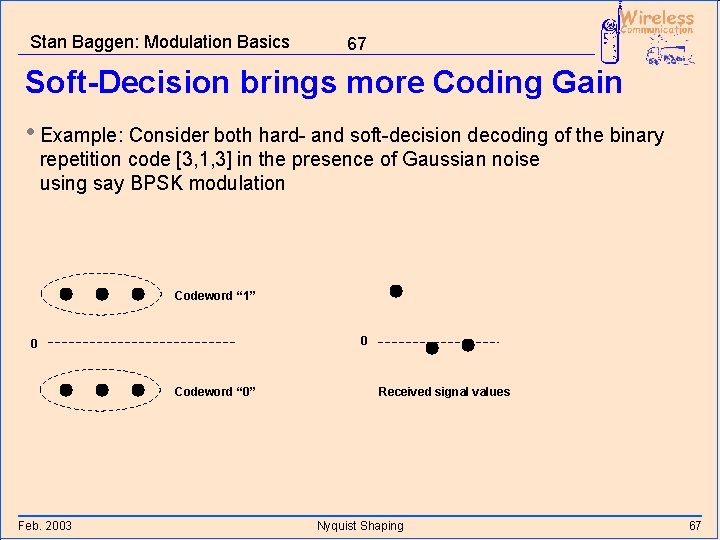

Soft-Decision brings more Coding Gain
• Example: Consider both hard- and soft-decision decoding of the binary repetition code [3, 1, 3] in the presence of Gaussian noise, using say BPSK modulation
[Figure: received signal values relative to codeword “1” and codeword “0”]
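
The difference between the two decoders shows up on a single received vector. The sketch assumes BPSK levels +1 for bit 1 and −1 for bit 0, and the received values are hypothetical.

```python
def hard_decode(r):
    """Slice each value to a bit first, then take a majority vote."""
    ones = sum(1 for x in r if x > 0)
    return 1 if ones >= 2 else 0

def soft_decode(r):
    """Correlate against codeword "1" = (+1, +1, +1); the sign decides."""
    return 1 if sum(r) > 0 else 0

r = (1.1, -0.2, -0.3)  # one confident sample, two weak samples
print(hard_decode(r), soft_decode(r))
```

The hard decoder throws away the reliability of each sample, so two weak negatives outvote one strong positive; the soft decoder keeps the amplitudes and decides for “1”.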

Coding gain

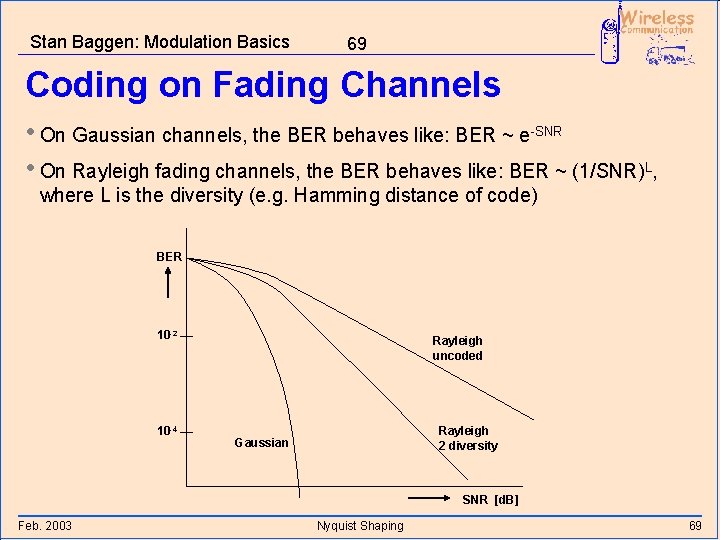

Coding on Fading Channels
• On Gaussian channels, the BER behaves like: BER ~ e^(−SNR)
• On Rayleigh fading channels, the BER behaves like: BER ~ (1/SNR)^L, where L is the diversity (e.g. Hamming distance of code)
[Figure: BER (10^-2 to 10^-4) versus SNR in dB for Rayleigh uncoded, Rayleigh with 2-fold diversity, and Gaussian]
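
To get a feeling for the two behaviours, one can treat the proportionalities as equalities (a simplifying assumption, for illustration only) and ask how much extra SNR is needed to go from BER = 10^-2 to BER = 10^-4:

```latex
\begin{aligned}
\text{Gaussian: } \mathrm{SNR} = \ln\frac{1}{\mathrm{BER}}
  &\;\Longrightarrow\; \frac{\ln 10^{4}}{\ln 10^{2}} = 2 \;\approx\; 3\ \text{dB} \\[4pt]
\text{Rayleigh: } \mathrm{SNR} = \mathrm{BER}^{-1/L}
  &\;\Longrightarrow\; \left( \frac{10^{-2}}{10^{-4}} \right)^{1/L} = 100^{1/L}
  \;=\; \frac{20}{L}\ \text{dB}
\end{aligned}
```

Under this simplification, the uncoded Rayleigh channel (L = 1) needs about 20 dB more, while 2-fold diversity (L = 2) halves that to about 10 dB. This is why diversity, for example through the Hamming distance of a code, matters so much on fading channels.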
