Window-based models for generic object detection Mei-Chen Yeh 04/24/2012

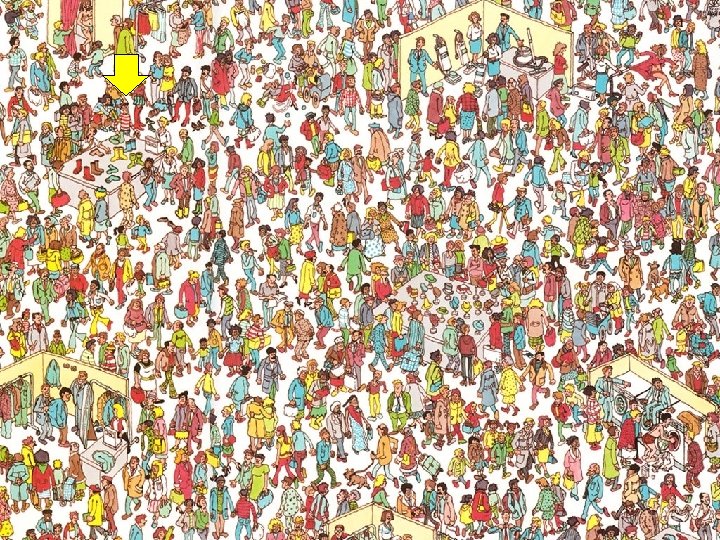

Object Detection
• Find the location of an object if it appears in an image
– Does the object appear?
– Where is it?

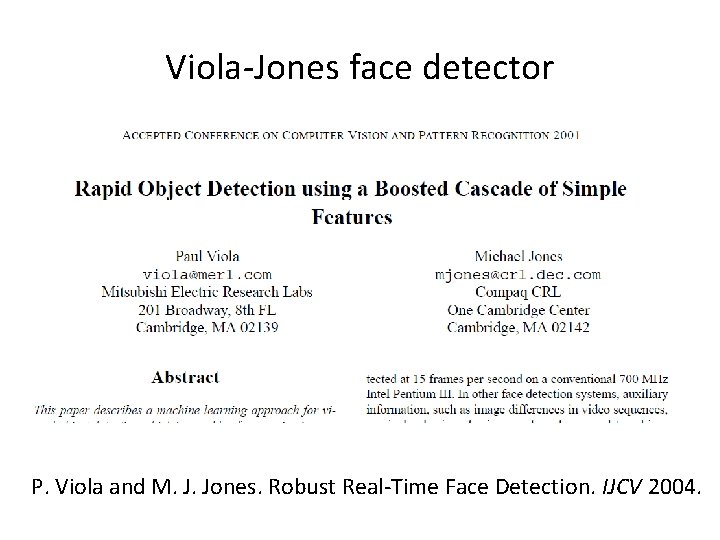

Viola-Jones face detector P. Viola and M. J. Jones. Robust Real-Time Face Detection. IJCV 2004.

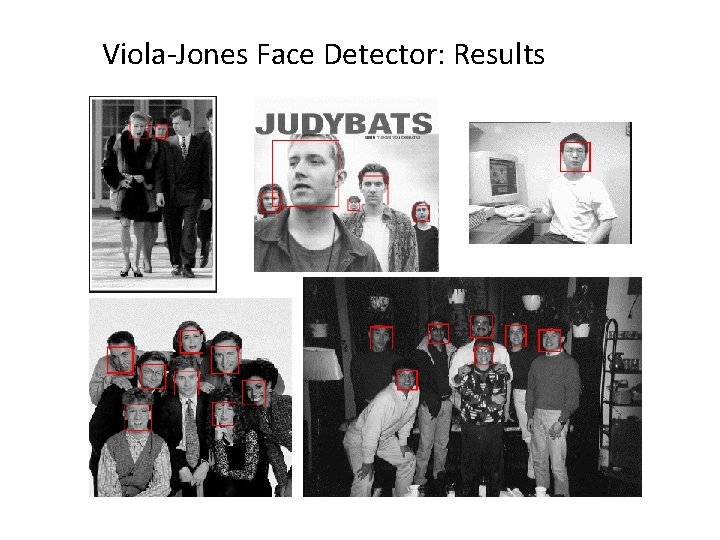

Viola-Jones Face Detector: Results

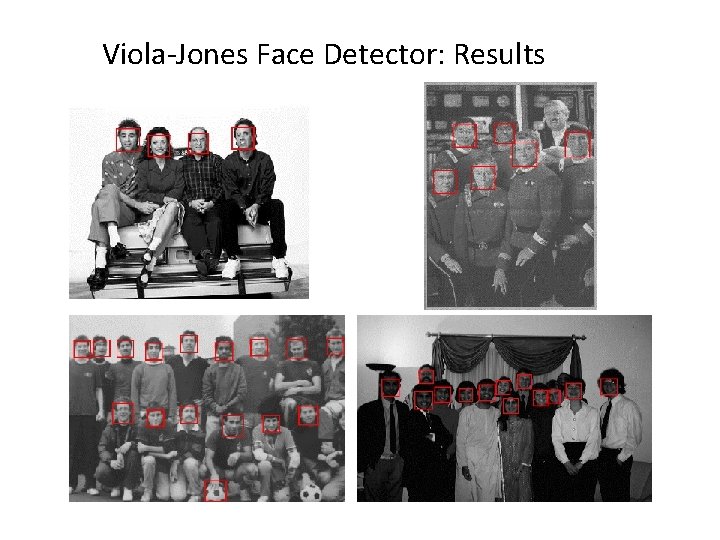

Viola-Jones Face Detector: Results

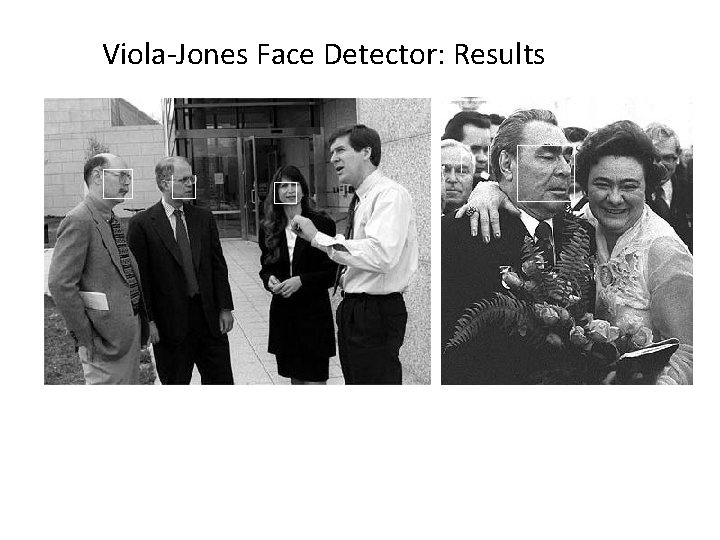

Viola-Jones Face Detector: Results

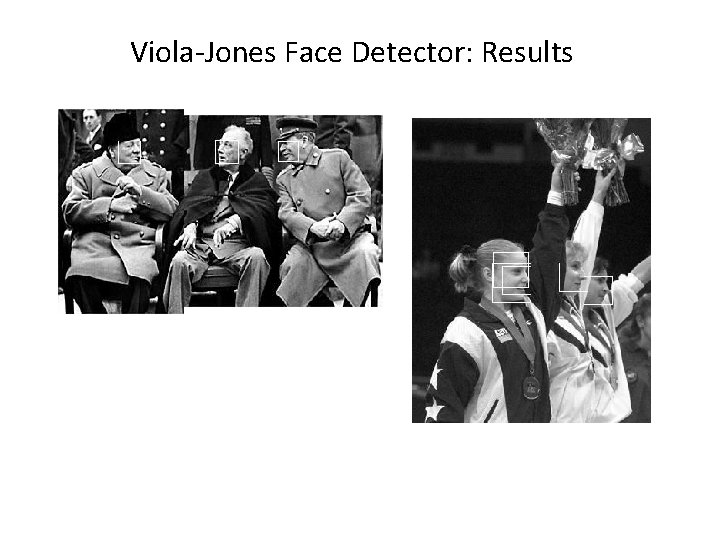

Viola-Jones Face Detector: Results Paul Viola, ICCV tutorial

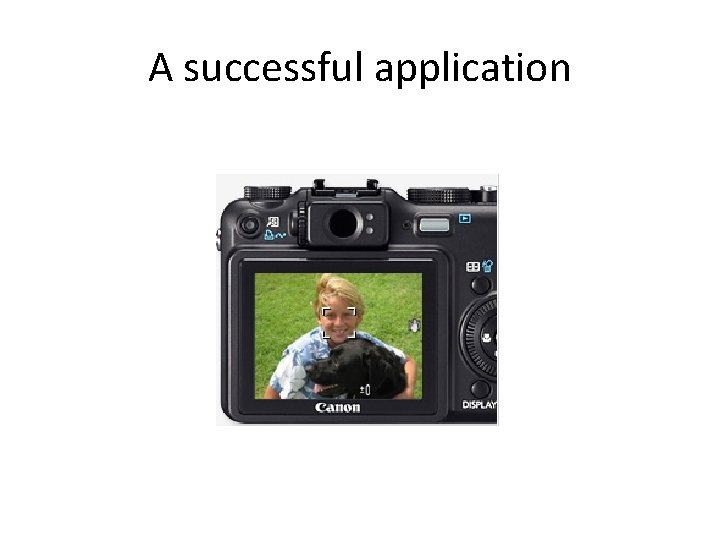

A successful application

Consumer application: iPhoto 2009
http://www.apple.com/ilife/iphoto/
Slide credit: Lana Lazebnik

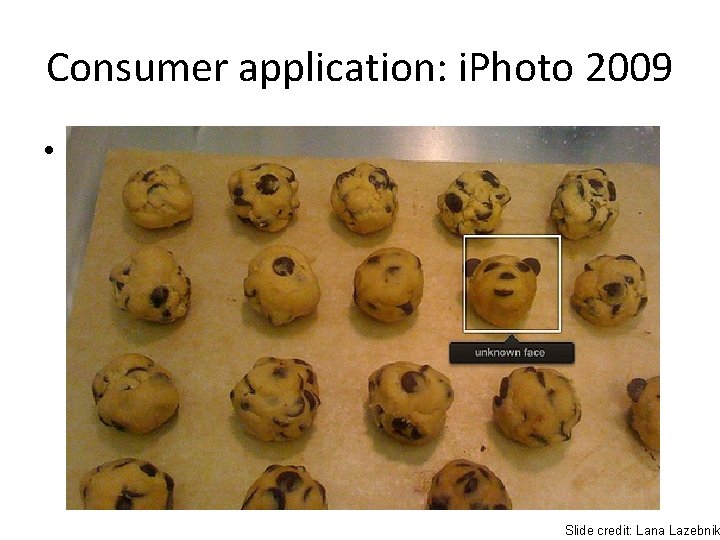

Consumer application: iPhoto 2009
• Things iPhoto thinks are faces
Slide credit: Lana Lazebnik

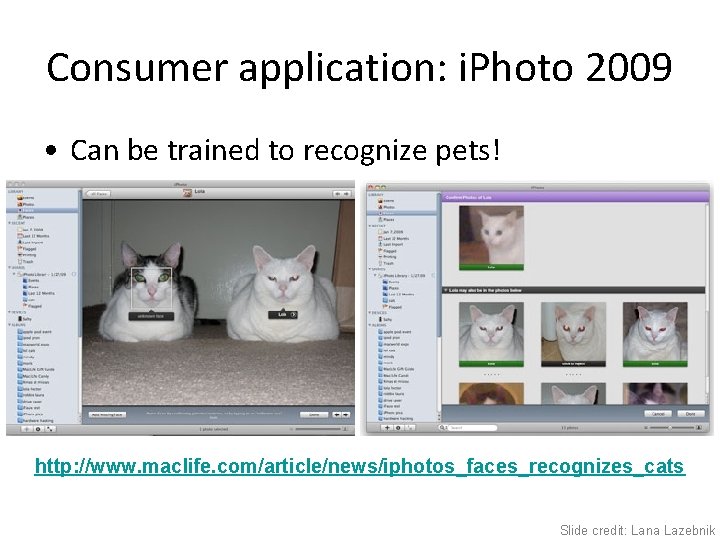

Consumer application: iPhoto 2009
• Can be trained to recognize pets!
http://www.maclife.com/article/news/iphotos_faces_recognizes_cats
Slide credit: Lana Lazebnik

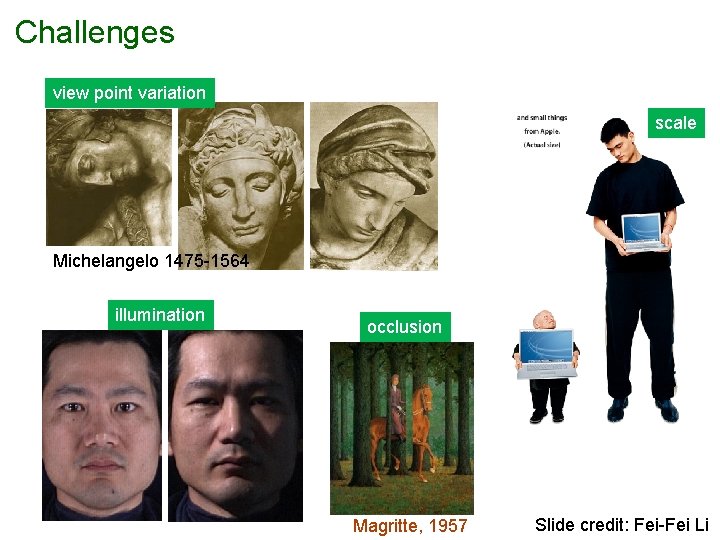

Challenges
• viewpoint variation
• scale
• illumination
• occlusion
Artwork shown: Michelangelo, 1475-1564; Magritte, 1957
Slide credit: Fei-Fei Li

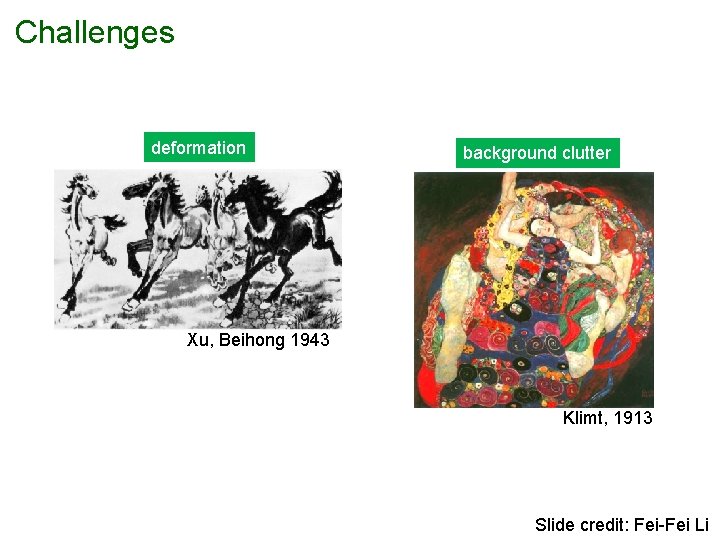

Challenges
• deformation
• background clutter
Artwork shown: Xu, Beihong, 1943; Klimt, 1913
Slide credit: Fei-Fei Li

Basic framework
• Build/train object model
– Choose a representation
– Learn or fit parameters of model / classifier
• Generate candidates in new image
• Score the candidates

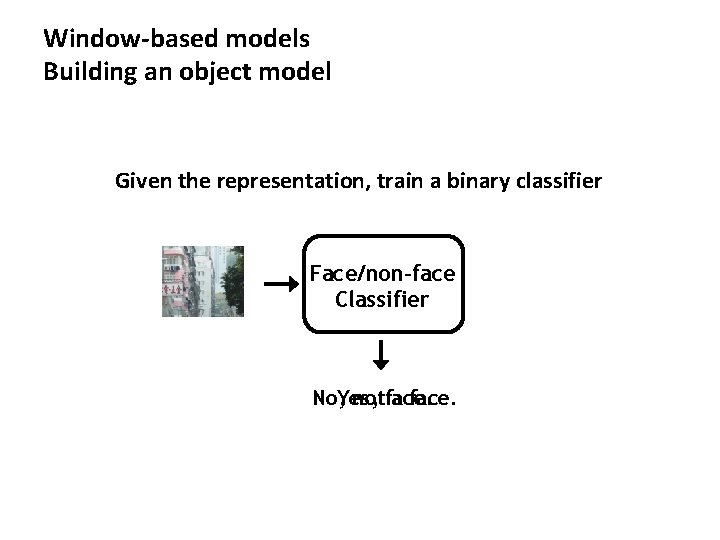

Window-based models: Building an object model
• Given the representation, train a binary classifier
• Face/non-face classifier outputs: "Yes, a face." or "No, not a face."

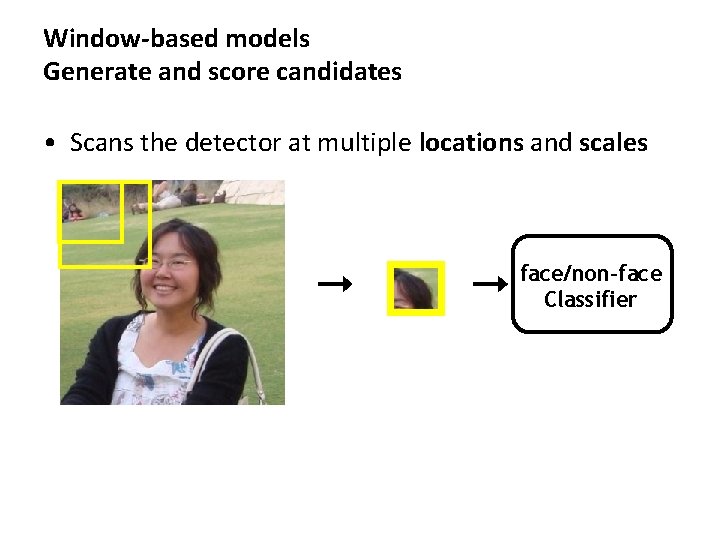

Window-based models: Generate and score candidates
• Scan the detector at multiple locations and scales
• Score each window with the face/non-face classifier

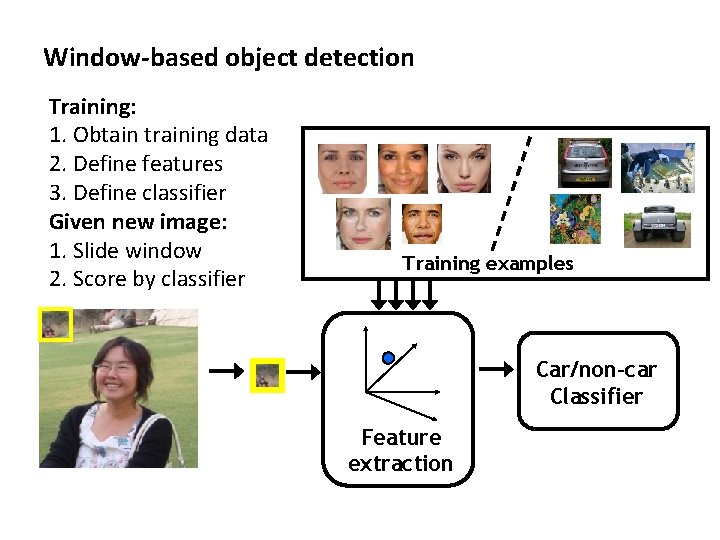

Window-based object detection
Training:
1. Obtain training data (training examples)
2. Define features (feature extraction)
3. Define classifier (car/non-car classifier)
Given new image:
1. Slide window
2. Score by classifier
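
The test-time half of this pipeline (slide a window, score it) can be sketched in a few lines of Python. This is only an illustration: the 24-pixel base window comes from the later slides, while the scale step of 1.25, the stride fraction, and the names `sliding_windows`, `detect`, and `score` are assumptions introduced here, not part of the original slides.

```python
from typing import Callable, Iterator, List, Tuple

Window = Tuple[int, int, int]  # (x, y, side length) of a square window


def sliding_windows(width: int, height: int, base: int = 24,
                    scale_step: float = 1.25,
                    stride_frac: float = 0.25) -> Iterator[Window]:
    """Yield square candidate windows at multiple locations and scales.

    scale_step and stride_frac are illustrative choices (assumptions).
    """
    side = float(base)
    while int(side) <= min(width, height):
        s = int(side)
        stride = max(1, int(s * stride_frac))
        for y in range(0, height - s + 1, stride):
            for x in range(0, width - s + 1, stride):
                yield (x, y, s)
        side *= scale_step  # move on to the next, larger scale


def detect(width: int, height: int,
           score: Callable[[Window], float],
           threshold: float) -> List[Window]:
    """Keep every window whose classifier score exceeds the threshold."""
    return [w for w in sliding_windows(width, height) if score(w) > threshold]
```

Here `score` stands in for the trained classifier applied to the features of one window; any function mapping a window to a number can be plugged in.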

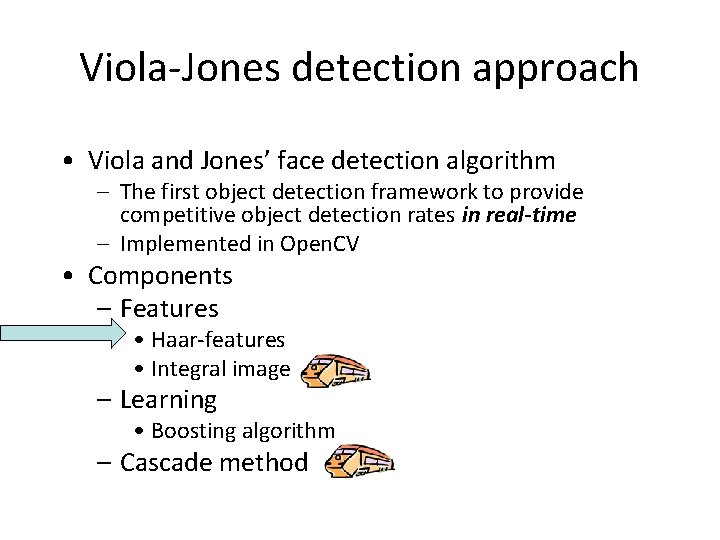

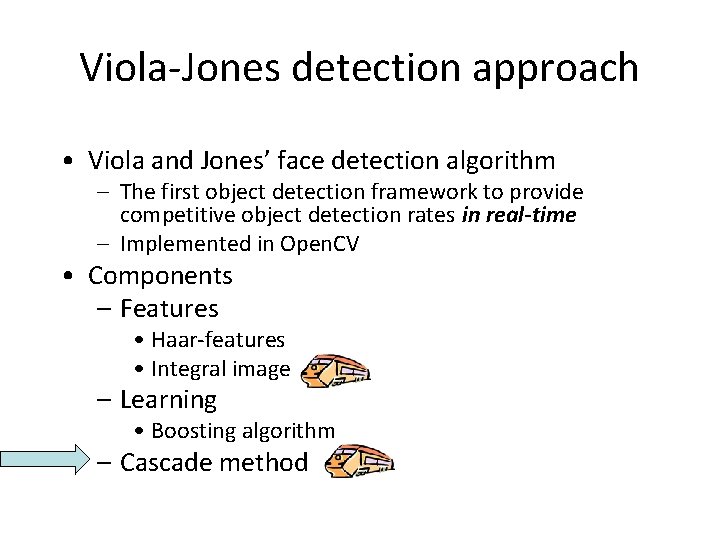

Viola-Jones detection approach
• Viola and Jones’ face detection algorithm
– The first object detection framework to provide competitive object detection rates in real time
– Implemented in OpenCV
• Components
– Features: Haar-features, integral image
– Learning: boosting algorithm
– Cascade method

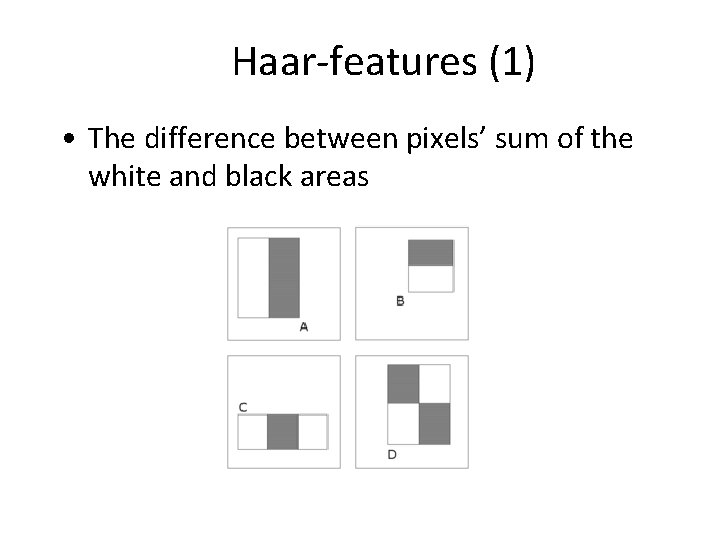

Haar-features (1)
• Feature value: the difference between the pixel sums of the white and black areas

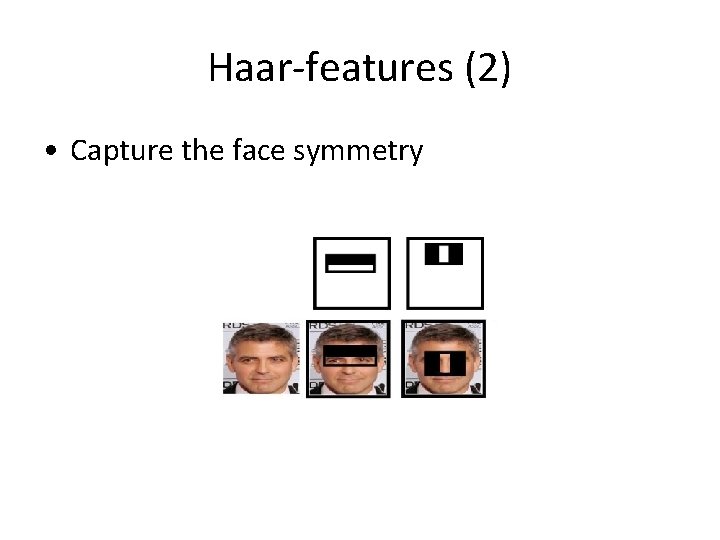

Haar-features (2) • Capture the face symmetry

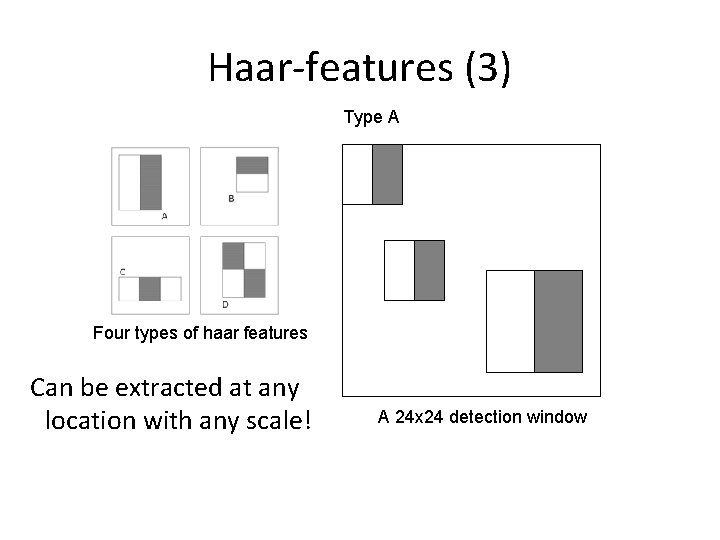

Haar-features (3)
• Four types of Haar features (Type A shown)
• Can be extracted at any location with any scale!
• A 24 x 24 detection window

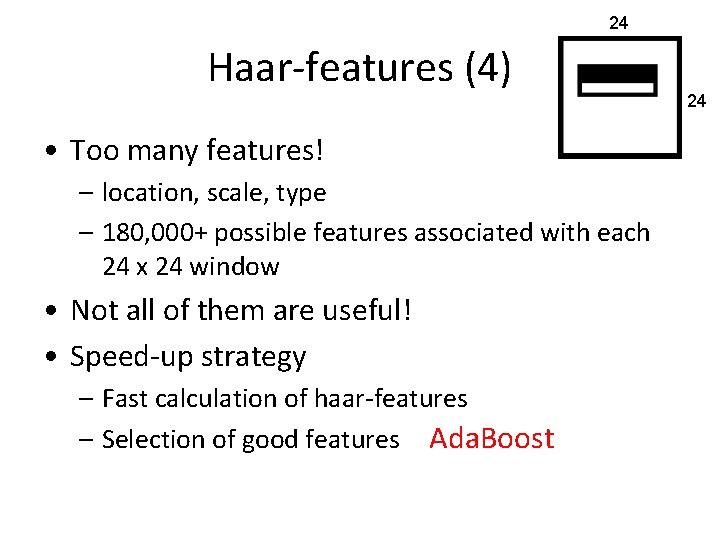

Haar-features (4)
• Too many features!
– location, scale, type
– 180,000+ possible features associated with each 24 x 24 window
• Not all of them are useful!
• Speed-up strategy
– Fast calculation of Haar-features
– Selection of good features (AdaBoost)

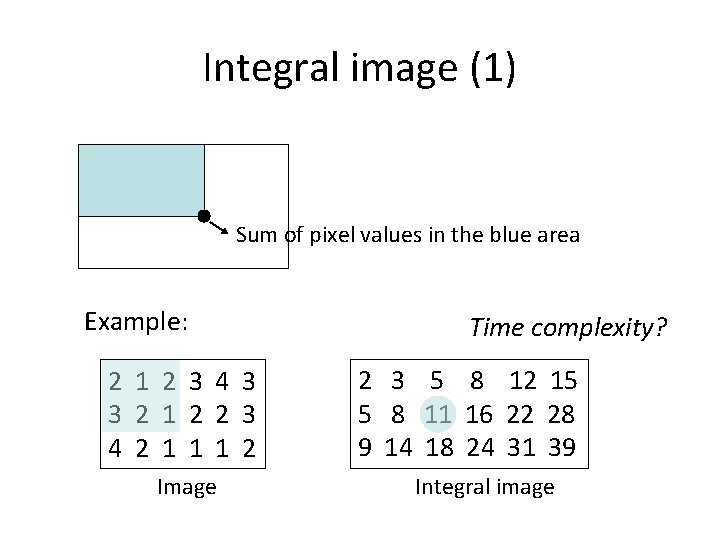

Integral image (1)
• Each entry is the sum of pixel values in the blue area, i.e. all pixels above and to the left of that position, inclusive
• Example:

Image
2 1 2 3 4 3
3 2 1 2 2 3
4 2 1 1 1 2

Integral image
 2  3  5  8 12 15
 5  8 11 16 22 28
 9 14 18 24 31 39

• Time complexity?
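
As a hedged illustration of the example above, the integral image can be built in a single pass by keeping a running row sum and adding the entry directly above. The function name `integral_image` and the list-of-lists representation are assumptions made for this sketch.

```python
def integral_image(img):
    """Return ii where ii[y][x] is the sum of img[0..y][0..x], inclusive."""
    rows, cols = len(img), len(img[0])
    ii = [[0] * cols for _ in range(rows)]
    for y in range(rows):
        row_sum = 0  # running sum of the current row up to column x
        for x in range(cols):
            row_sum += img[y][x]
            above = ii[y - 1][x] if y > 0 else 0
            ii[y][x] = row_sum + above
    return ii


# The 3 x 6 image from the slide.
image = [
    [2, 1, 2, 3, 4, 3],
    [3, 2, 1, 2, 2, 3],
    [4, 2, 1, 1, 1, 2],
]
ii = integral_image(image)
```

Each pixel is visited once, so the cost is linear in the number of pixels, which is one way to answer the time-complexity question on the slide.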

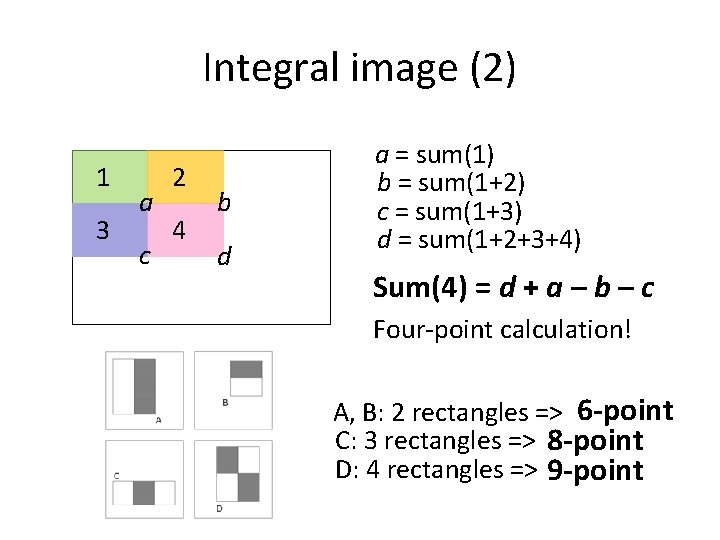

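Integral image (2)
• Regions 1, 2, 3, 4 with integral-image values a, b, c, d at the corners
– a = sum(1)
– b = sum(1+2)
– c = sum(1+3)
– d = sum(1+2+3+4)
• Sum(4) = ? d + a - b - c: four-point calculation!
• Feature types A, B: 2 rectangles => 6-point
• Feature type C: 3 rectangles => 8-point
• Feature type D: 4 rectangles => 9-point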
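
The four-point rule can be sketched as follows. To avoid special cases at the image border, this version pads the integral image with an extra row and column of zeros; that padding, the function names, and the choice of "left half minus right half" for the two-rectangle feature are assumptions of this sketch rather than details given on the slides.

```python
def padded_integral(img):
    """Integral image with a leading zero row/column: ii[y][x] sums img[:y][:x]."""
    rows, cols = len(img), len(img[0])
    ii = [[0] * (cols + 1) for _ in range(rows + 1)]
    for y in range(rows):
        for x in range(cols):
            ii[y + 1][x + 1] = img[y][x] + ii[y][x + 1] + ii[y + 1][x] - ii[y][x]
    return ii


def rect_sum(ii, x, y, w, h):
    """Sum of the w x h rectangle with top-left pixel (x, y): d + a - b - c."""
    a = ii[y][x]          # corner above and to the left of the rectangle
    b = ii[y][x + w]      # one off-diagonal corner
    c = ii[y + h][x]      # the other off-diagonal corner
    d = ii[y + h][x + w]  # bottom-right corner
    return d + a - b - c


def two_rect_feature(ii, x, y, w, h):
    """Two-rectangle Haar-like feature: left half minus right half (w even).

    For clarity this calls rect_sum twice (8 lookups); the two halves share
    two corners, so only 6 distinct points are actually needed.
    """
    half = w // 2
    return rect_sum(ii, x, y, half, h) - rect_sum(ii, x + half, y, half, h)


image = [
    [2, 1, 2, 3, 4, 3],
    [3, 2, 1, 2, 2, 3],
    [4, 2, 1, 1, 1, 2],
]
pii = padded_integral(image)
```

Whatever the rectangle size, `rect_sum` performs the same four lookups, which is what makes evaluating a feature at any location and scale a constant-time operation.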

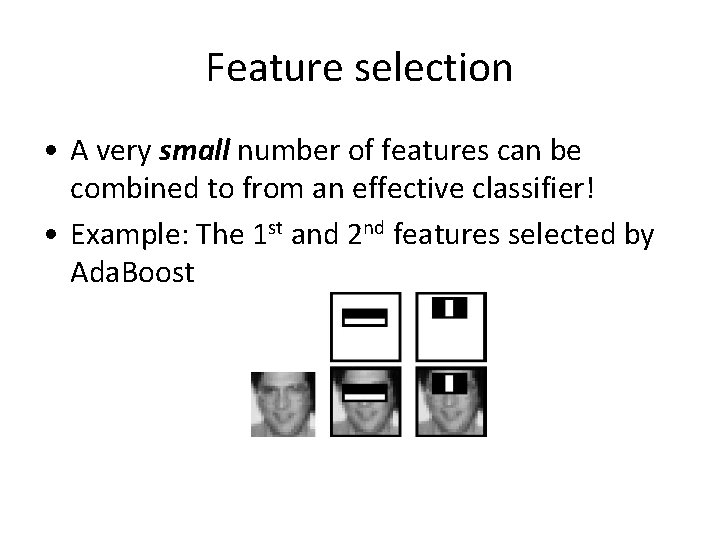

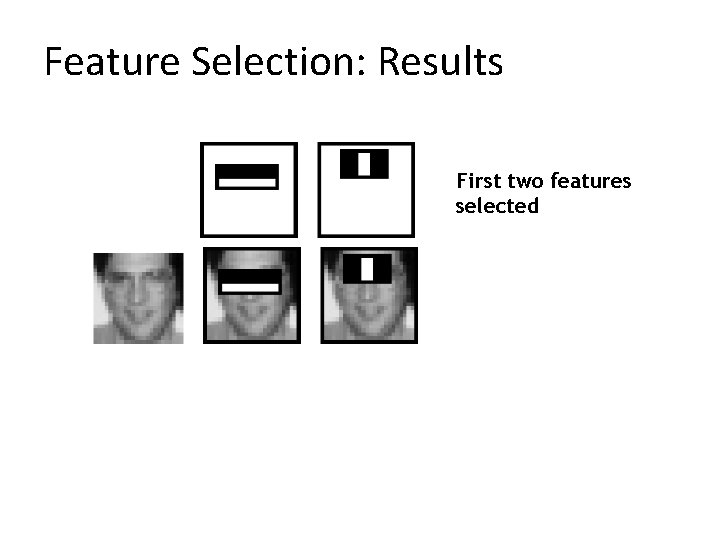

Feature selection
• A very small number of features can be combined to form an effective classifier!
• Example: The 1st and 2nd features selected by AdaBoost

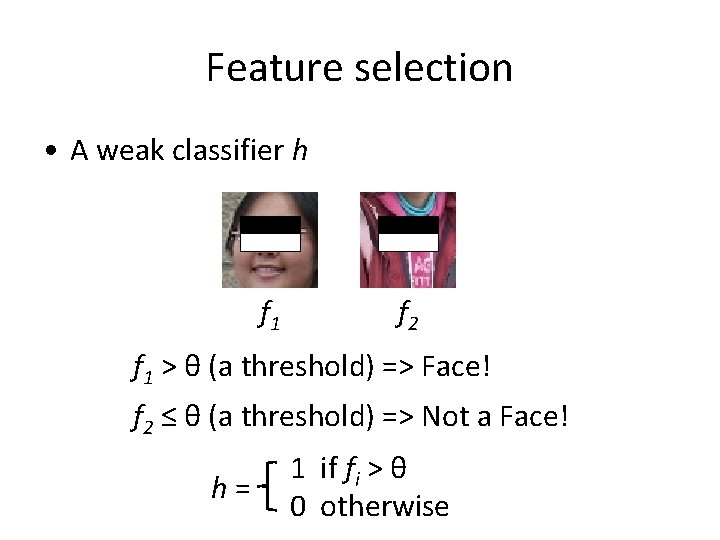

Feature selection
• A weak classifier h thresholds a single feature value
– f_1 > θ (a threshold) => Face!
– f_2 ≤ θ (a threshold) => Not a face!
• $h = \begin{cases} 1 & \text{if } f_i > \theta \\ 0 & \text{otherwise} \end{cases}$

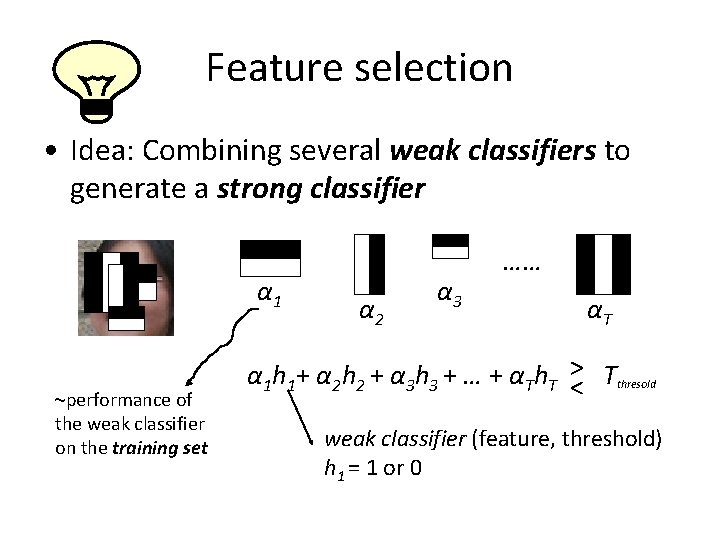

Feature selection
• Idea: Combine several weak classifiers to generate a strong classifier
• $\alpha_1 h_1 + \alpha_2 h_2 + \alpha_3 h_3 + \dots + \alpha_T h_T \gtrless T_{\text{threshold}}$
– Each weak classifier $h_t$ is a (feature, threshold) pair with output 1 or 0
– Each weight $\alpha_t$ reflects the performance of that weak classifier on the training set
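
The weighted vote can be written out directly. In this minimal sketch, each weak classifier is stored as a hypothetical `(feature_index, theta, alpha)` tuple and the decision threshold is passed in by the caller; the example uses half the sum of the weights, which is one common choice and should be read as an assumption here.

```python
def weak_classify(feature_value, theta):
    """Weak classifier h: 1 if the feature value exceeds the threshold, else 0."""
    return 1 if feature_value > theta else 0


def strong_classify(features, stumps, threshold):
    """Compare the weighted vote sum(alpha_t * h_t) against a threshold.

    features: list of feature values for one window
    stumps:   list of (feature_index, theta, alpha) tuples (illustrative layout)
    """
    score = sum(alpha * weak_classify(features[i], theta)
                for i, theta, alpha in stumps)
    return 1 if score > threshold else 0


# Made-up numbers purely for illustration.
stumps = [(0, 0.5, 1.2), (1, 0.5, 0.8), (2, 0.6, 0.5)]
half_total = 0.5 * sum(alpha for _, _, alpha in stumps)
```

With these made-up values, a window whose features are `[0.9, 0.2, 0.7]` collects votes from the first and third weak classifiers and is accepted, while `[0.1, 0.9, 0.1]` only collects the second vote and is rejected.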

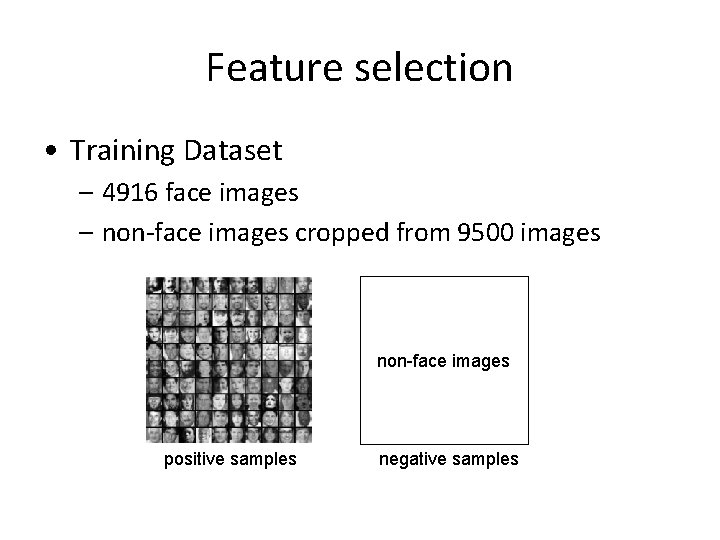

Feature selection
• Training dataset
– Positive samples: 4916 face images
– Negative samples: non-face images cropped from 9500 images

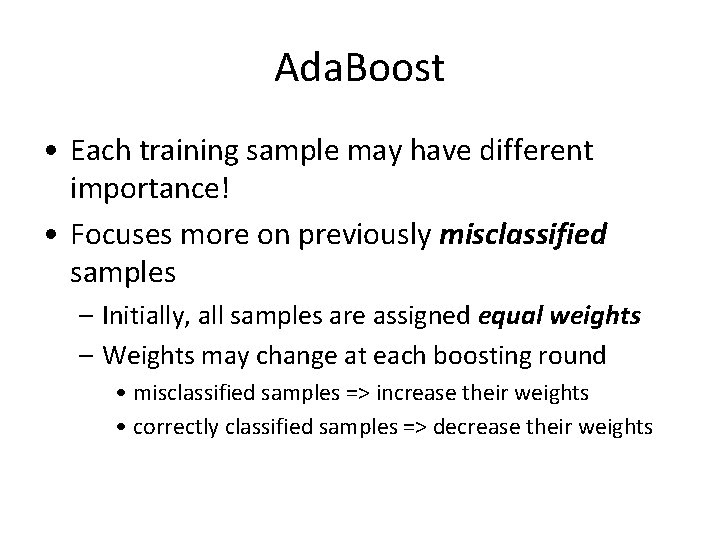

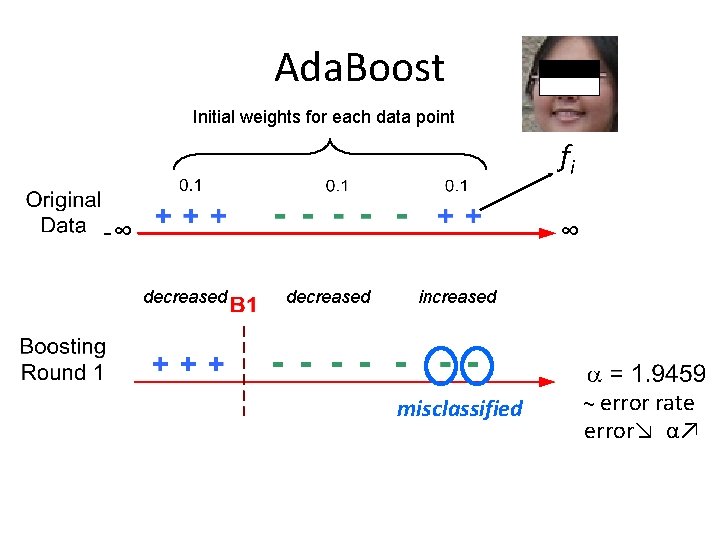

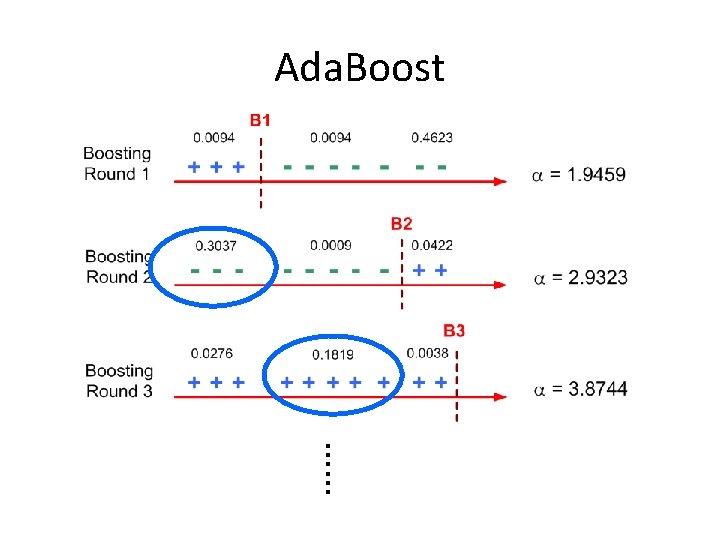

AdaBoost
• Each training sample may have different importance!
• Focuses more on previously misclassified samples
– Initially, all samples are assigned equal weights
– Weights may change at each boosting round
– misclassified samples => increase their weights
– correctly classified samples => decrease their weights

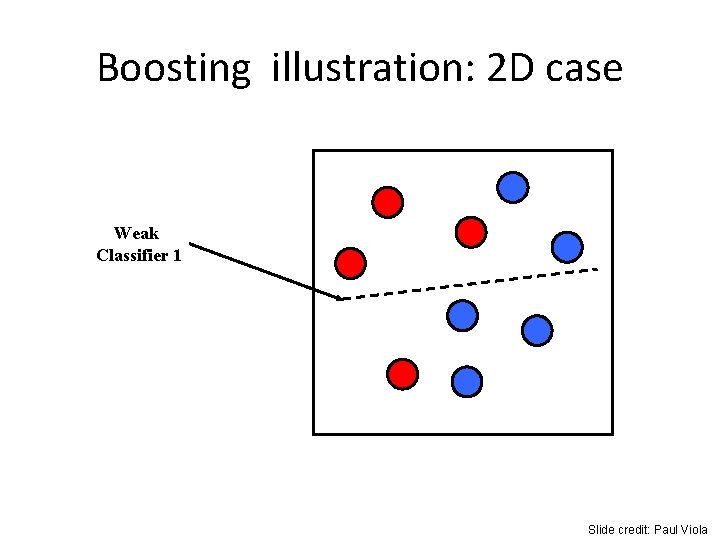

Boosting illustration: 2D case Weak Classifier 1 Slide credit: Paul Viola

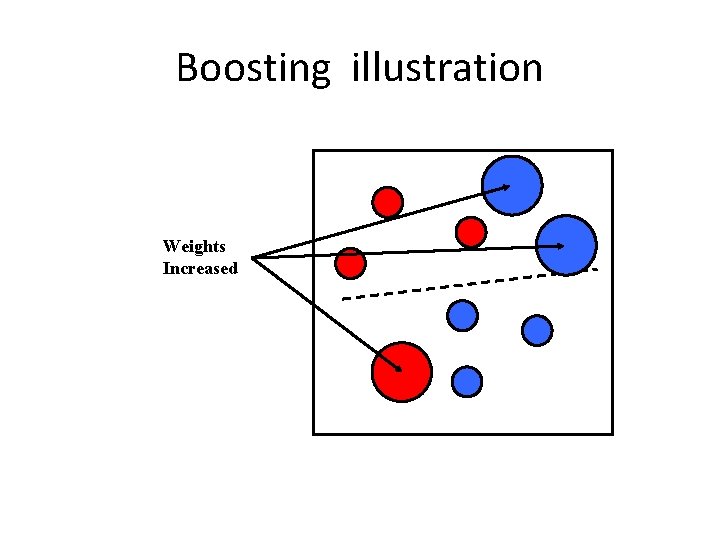

Boosting illustration Weights Increased

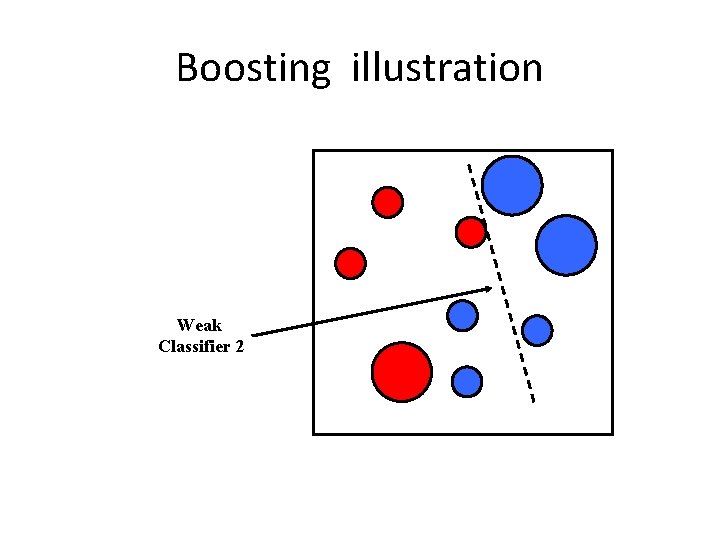

Boosting illustration Weak Classifier 2

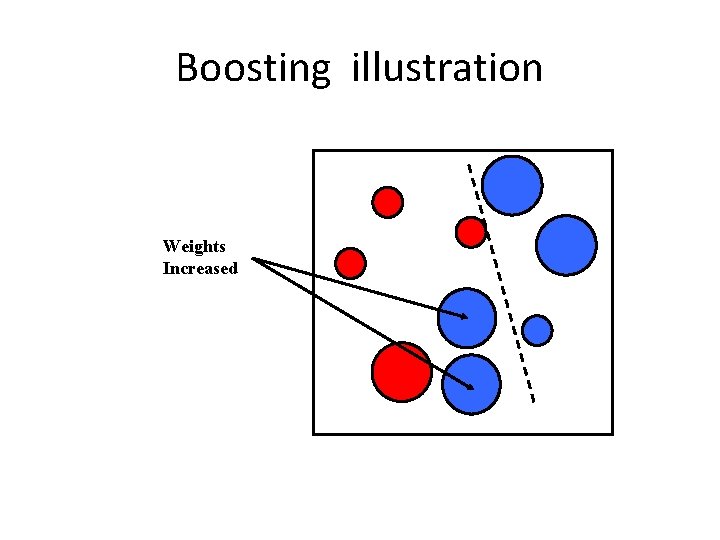

Boosting illustration Weights Increased

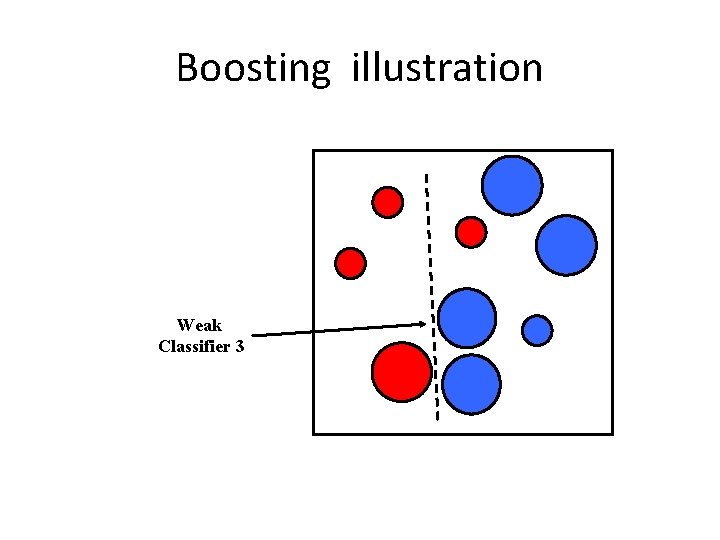

Boosting illustration Weak Classifier 3

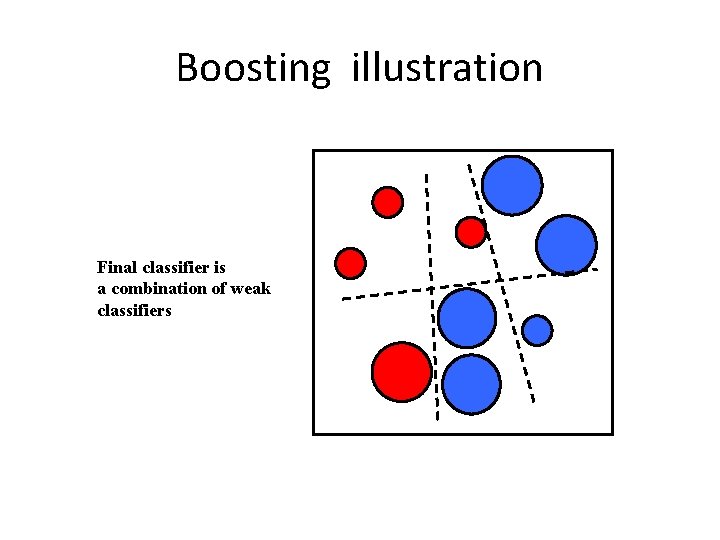

Boosting illustration Final classifier is a combination of weak classifiers

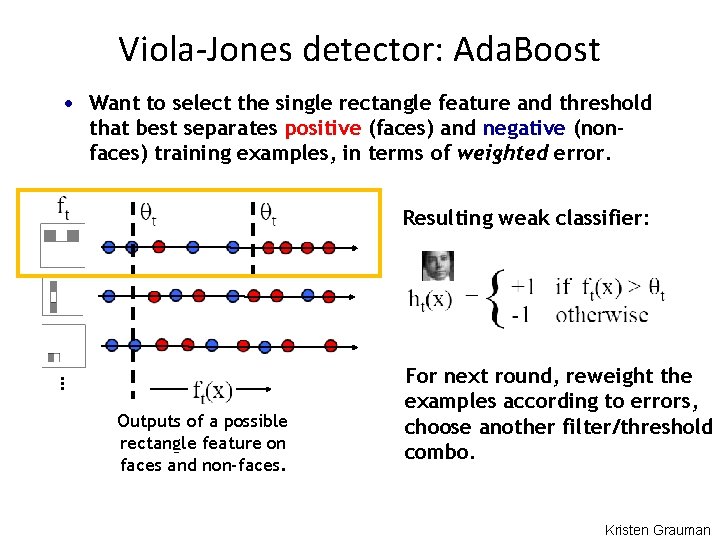

Viola-Jones detector: AdaBoost
• Want to select the single rectangle feature and threshold that best separates positive (faces) and negative (non-faces) training examples, in terms of weighted error.
• Resulting weak classifier: …
• Figure: outputs of a possible rectangle feature on faces and non-faces.
• For the next round, reweight the examples according to errors and choose another filter/threshold combo.
Slide credit: Kristen Grauman

AdaBoost
• Initial weights for each data point
• Samples along the feature axis f_i (-∞ to ∞): weights decreased or increased
• Misclassified samples ~ error rate
• error↘ α↗

AdaBoost ……

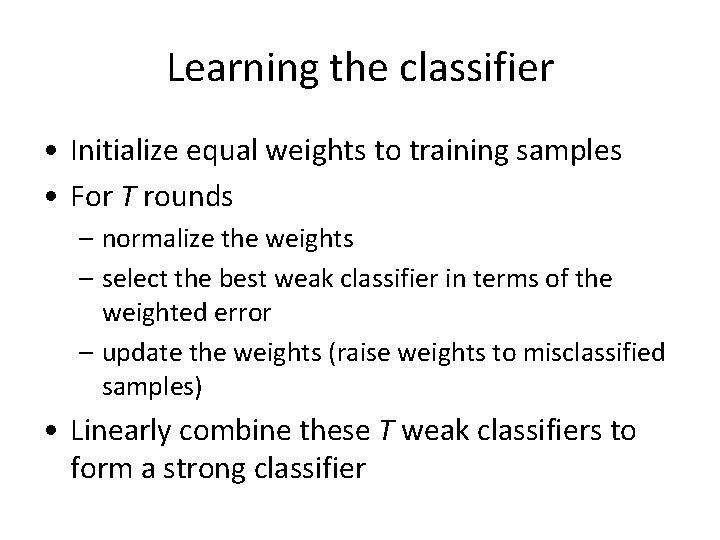

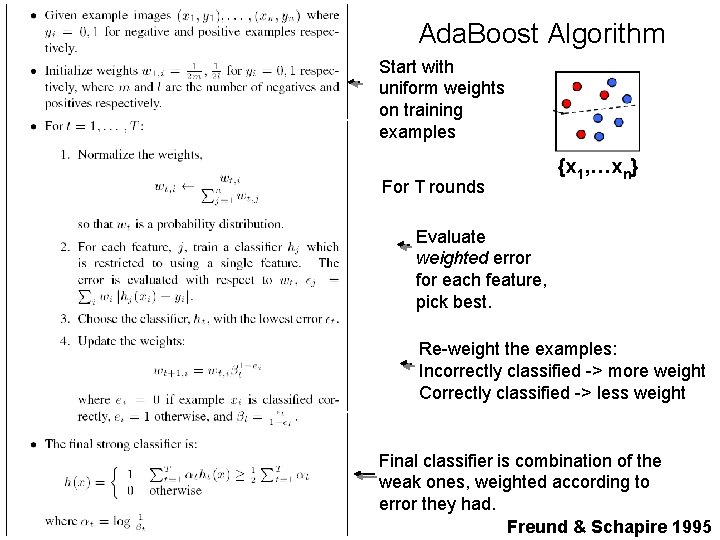

Learning the classifier
• Initialize equal weights for the training samples
• For T rounds
– normalize the weights
– select the best weak classifier in terms of the weighted error
– update the weights (raise the weights of misclassified samples)
• Linearly combine these T weak classifiers to form a strong classifier
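
The training loop above can be sketched in plain Python. The update rule used here (beta = err / (1 - err), correctly classified samples multiplied by beta, alpha = log(1 / beta)) follows the variant described in the Viola-Jones paper as far as I know; the exhaustive threshold search, the `polarity` flag, the clamping of the error, and all function names are assumptions added to keep the example small and runnable.

```python
import math


def stump_predict(x, feat, theta, polarity):
    """Polarity +1 predicts 1 when x[feat] > theta; polarity -1 flips it."""
    above = x[feat] > theta
    return int(above) if polarity == 1 else int(not above)


def best_stump(X, y, w):
    """Exhaustively pick the (feature, threshold, polarity) with least weighted error."""
    best = None
    for feat in range(len(X[0])):
        for theta in sorted({x[feat] for x in X}):
            for polarity in (1, -1):
                err = sum(wi for xi, yi, wi in zip(X, y, w)
                          if stump_predict(xi, feat, theta, polarity) != yi)
                if best is None or err < best[0]:
                    best = (err, feat, theta, polarity)
    return best


def adaboost(X, y, rounds):
    n = len(X)
    w = [1.0 / n] * n                      # equal initial weights
    model = []
    for _ in range(rounds):
        total = sum(w)
        w = [wi / total for wi in w]       # normalize the weights
        err, feat, theta, polarity = best_stump(X, y, w)
        err = min(max(err, 1e-10), 1 - 1e-10)  # avoid division by zero
        beta = err / (1.0 - err)
        alpha = math.log(1.0 / beta)       # low error -> large alpha
        # Shrink weights of correctly classified samples; after the next
        # normalization the misclassified ones carry relatively more weight.
        w = [wi * (beta if stump_predict(xi, feat, theta, polarity) == yi else 1.0)
             for xi, yi, wi in zip(X, y, w)]
        model.append((alpha, feat, theta, polarity))
    return model


def predict(model, x):
    """Strong classifier: weighted vote against half the total weight."""
    score = sum(a * stump_predict(x, f, t, p) for a, f, t, p in model)
    return 1 if score >= 0.5 * sum(a for a, _, _, _ in model) else 0
```

On a real detector the inner search runs over the Haar-feature responses of every training window, so the brute-force `best_stump` here only conveys the idea, not the efficiency.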

AdaBoost Algorithm
• Start with uniform weights on training examples {x_1, …, x_n}
• For T rounds
– Evaluate weighted error for each feature, pick best.
– Re-weight the examples: incorrectly classified -> more weight; correctly classified -> less weight
• Final classifier is a combination of the weak ones, weighted according to the error they had.
Freund & Schapire 1995

Feature Selection: Results First two features selected

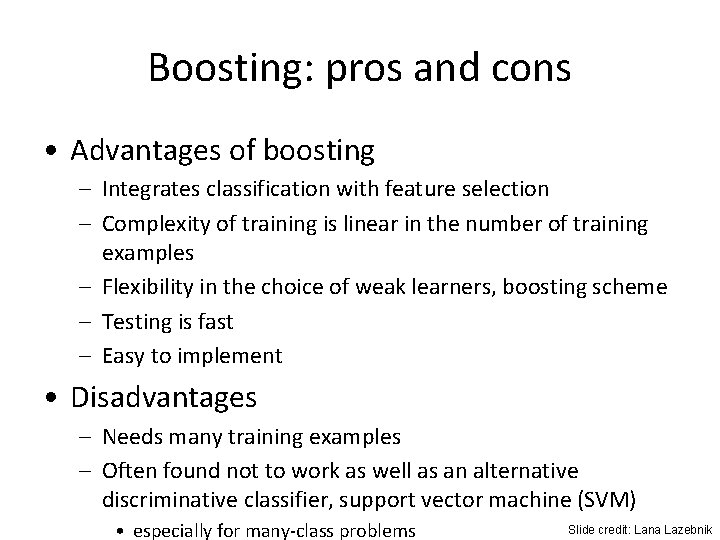

Boosting: pros and cons
• Advantages of boosting
– Integrates classification with feature selection
– Complexity of training is linear in the number of training examples
– Flexibility in the choice of weak learners, boosting scheme
– Testing is fast
– Easy to implement
• Disadvantages
– Needs many training examples
– Often found not to work as well as an alternative discriminative classifier, the support vector machine (SVM), especially for many-class problems
Slide credit: Lana Lazebnik

• Even if the filters are fast to compute, each new image has a lot of possible windows to search.
• How to make the detection more efficient?

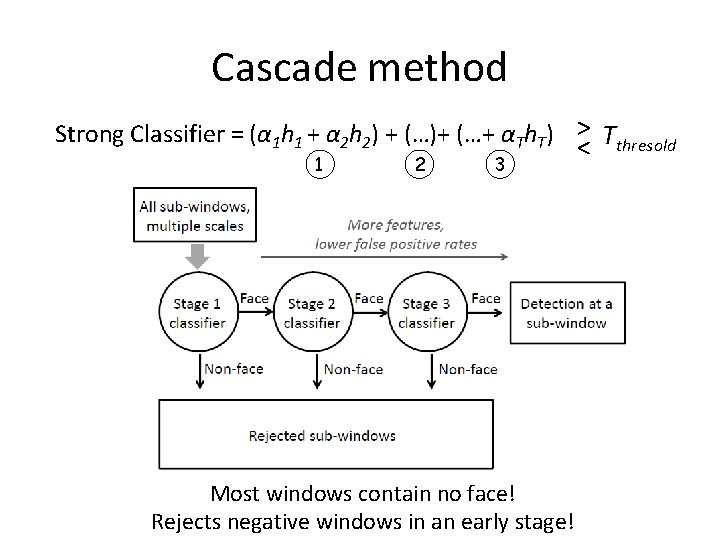

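Cascade method
• Strong classifier = $(\alpha_1 h_1 + \alpha_2 h_2) + (\dots) + (\dots + \alpha_T h_T) \gtrless T_{\text{threshold}}$, with the parenthesized groups evaluated as stages 1, 2, 3
• Most windows contain no face!
• Rejects negative windows at an early stage!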
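
The early-rejection idea can be sketched as a loop over stages that stops at the first failure. This sketch assumes each stage is its own small strong classifier with its own threshold, stored as a hypothetical `(stumps, stage_threshold)` pair; the slide's grouping of one long sum into stages may differ in detail, so treat the structure as an illustration only.

```python
def stage_score(features, stumps):
    """Weighted vote of one stage; stumps are (feature_index, theta, alpha)."""
    return sum(alpha * (1 if features[i] > theta else 0)
               for i, theta, alpha in stumps)


def cascade_classify(features, stages):
    """Run the stages in order and reject as soon as one stage fails.

    Returns (accepted, number_of_stages_evaluated).
    """
    for stage_index, (stumps, stage_threshold) in enumerate(stages, start=1):
        if stage_score(features, stumps) < stage_threshold:
            return False, stage_index  # rejected early, later stages skipped
    return True, len(stages)


# Two illustrative stages with made-up parameters: a cheap one-feature
# stage followed by a stricter two-feature stage.
stages = [
    ([(0, 0.5, 1.0)], 0.5),
    ([(1, 0.5, 1.0), (2, 0.5, 1.0)], 1.5),
]
```

Because most windows fail the first, cheapest stage, the average cost per window stays close to the cost of that stage alone, which is the efficiency argument made on the slide.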

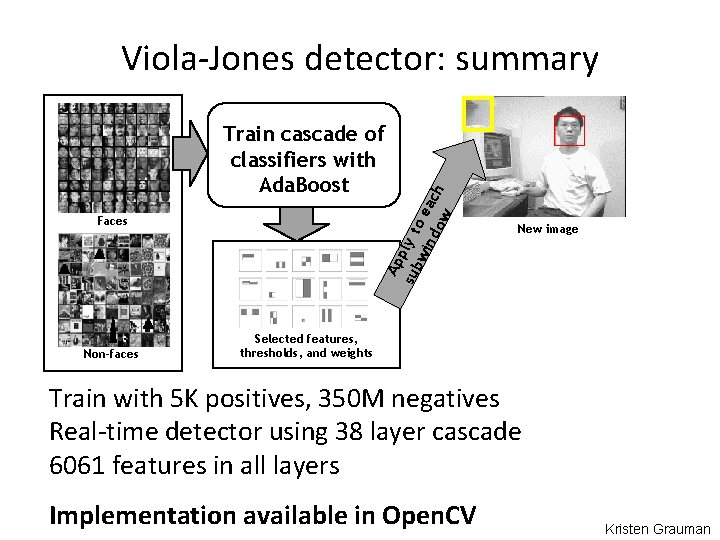

Viola-Jones detector: summary
• Training: faces and non-faces -> train cascade of classifiers with AdaBoost -> selected features, thresholds, and weights
• Detection: new image -> apply to each subwindow
• Train with 5K positives, 350M negatives
• Real-time detector using 38 layer cascade
• 6061 features in all layers
• Implementation available in OpenCV
Slide credit: Kristen Grauman

Questions
• What other categories are amenable to window-based representation?
• Can the integral image technique be applied to compute histograms?
• Alternatives to sliding-window-based approaches?
