WHY ARE PADIC NUMBERS USEFUL FOR FAST LINEAR

WHY ARE P-ADIC NUMBERS USEFUL FOR FAST LINEAR ALGEBRA ALGORITHMS? Harvard Undergraduate Math Table Sílvia Casacuberta Puig 2 December 2020

WHAT ARE P-ADIC NUMBERS? First think of power series: we can see them as infinite polynomials and operate with them without ever plugging anything in for the variable x: f(x) = 1 + x 2 + x 3 + … g(x) = 2 + 5 x 2 + 8 x 4 + … Add: f(x) + g(x) = 3 + x + 6 x 2 + x 3 + 8 x 4 + … Multiply: f(x) · g(x) = 2 + 2 x + 7 x 2 + 7 x 3 + 13 x 5 + 8 x 6 + 8 x 7 + … Writing integers in base 10 yields polynomials where the variable is x = 10: 4567 = 4 + 5· 10 + 6· 102 + 7· 103. This is a 10 -adic expression, hence an element of the set of 10 -adic integers.

GENERALIZING POWER SERIES More generally, we can talk about b-adic integers for any b: Note that, for composite b, we can have 0 divisors. For example, for b = 10, we have (. . . 9879186432) (. . . 8212890625) = 0. Thus we require that b is a prime: this gives us a definition of the p-adic integers (Kurt Hensel, 1897). Definition: A p-adic integer α is a formal infinite series α = a 0 + a 1 p + a 2 p 2 + … with 0 ≤ ai < p. The set of p-adic integers is denoted by If we truncate an element α ϵ Note that is included in . at its k-th term, we get a well-defined element of , and corresponds to those α that have finite expansion. .

AN EXAMPLE

FINDING ROOTS IN Z P Doing this manually is tedious. Recall that in the reals we can use Newton’s iteration to successively approximate a root of a polynomial with the recursion The p-adic analogue is Hensel’s lemma, which successively lifts a solution modulo p to higher powers of p: We use the following recursion:

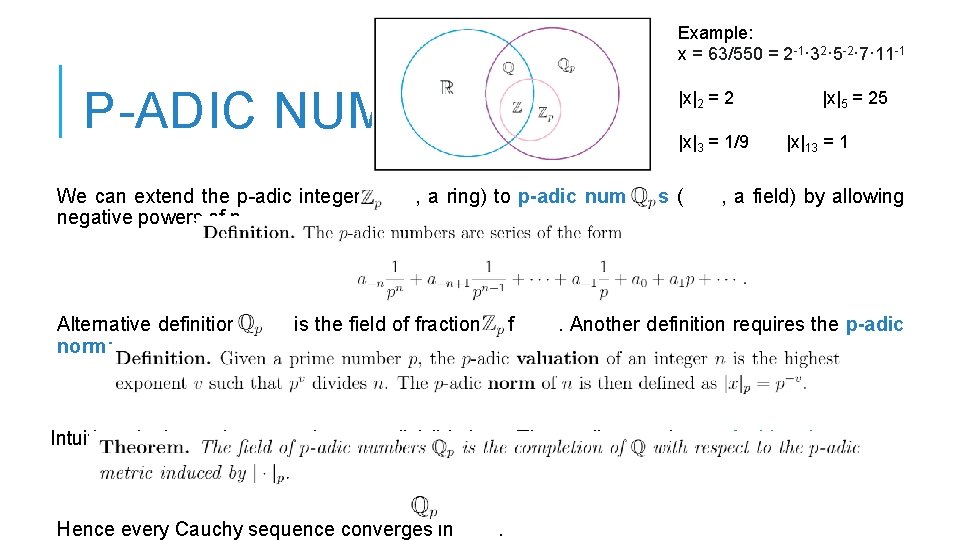

Example: x = 63/550 = 2 -1· 32· 5 -2· 7· 11 -1 P-ADIC NUMBERS We can extend the p-adic integers ( negative powers of p. Alternative definition: norm: |x|2 = 2 |x|3 = 1/9 , a ring) to p-adic numbers ( is the field of fractions of |x|5 = 25 |x|13 = 1 , a field) by allowing . Another definition requires the p-adic Intuition: the lower the norm, the more divisible by p. The p-adic norm is non-Archimedean. Hence every Cauchy sequence converges in .

P-ADICS ARE INCREDIBLY RICH! There is a lot of theory from here! 1. For algebraists: projective limits, algebraic extensions of , Hasse. Minkowski Theorem (local-global principle), quadratic forms, Galois extensions… 2. For analysts: p-adic norm, Ostrowski’s theorem, continuity, derivatives, integration, Riemann zeta function interpolation, Banach spaces… 3. For topologists: topologies of and , Cantor set models, disconnected, p-completion of spaces… More surprising: also for computer scientists! is totally

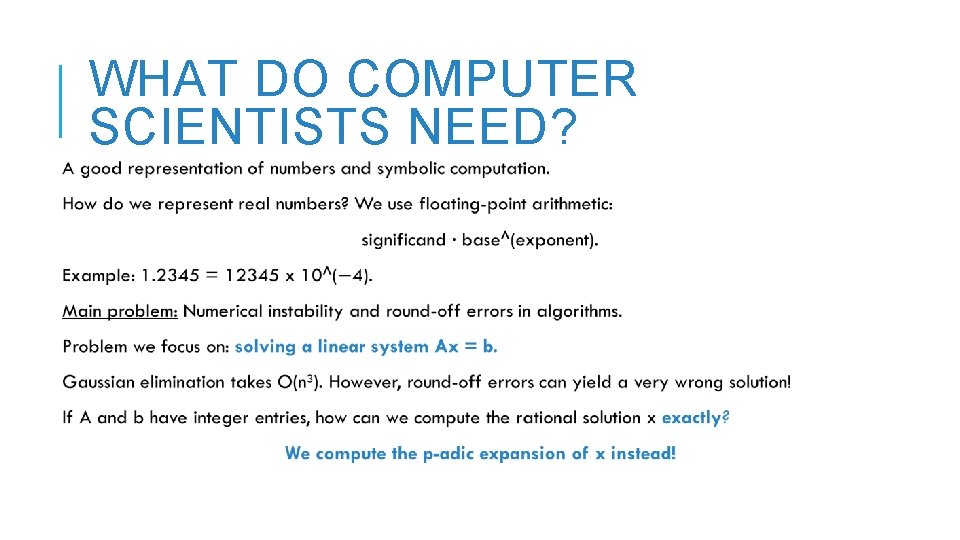

WHAT DO COMPUTER SCIENTISTS NEED?

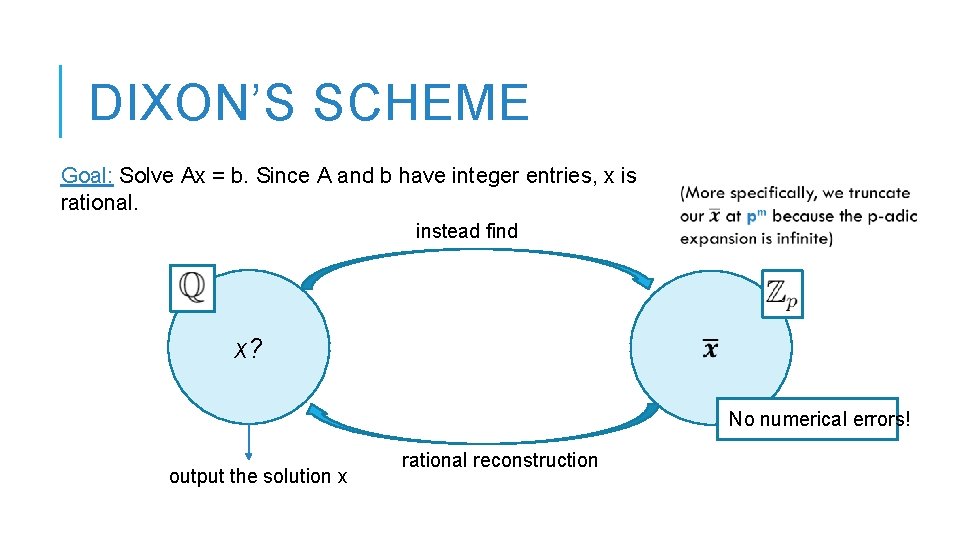

DIXON’S IDEA John Dixon was the first to introduce the use of p-adic numbers in linear algebra in 1982, when he proposed an algorithm for solving Ax = b exactly: Key idea: Instead of solving for x in to some power of p. Then how do we get back x in , we find the p-adic expansion of x iteratively up from x in ? We apply rational reconstruction.

DIXON’S SCHEME Goal: Solve Ax = b. Since A and b have integer entries, x is rational. instead find x? No numerical errors! output the solution x rational reconstruction

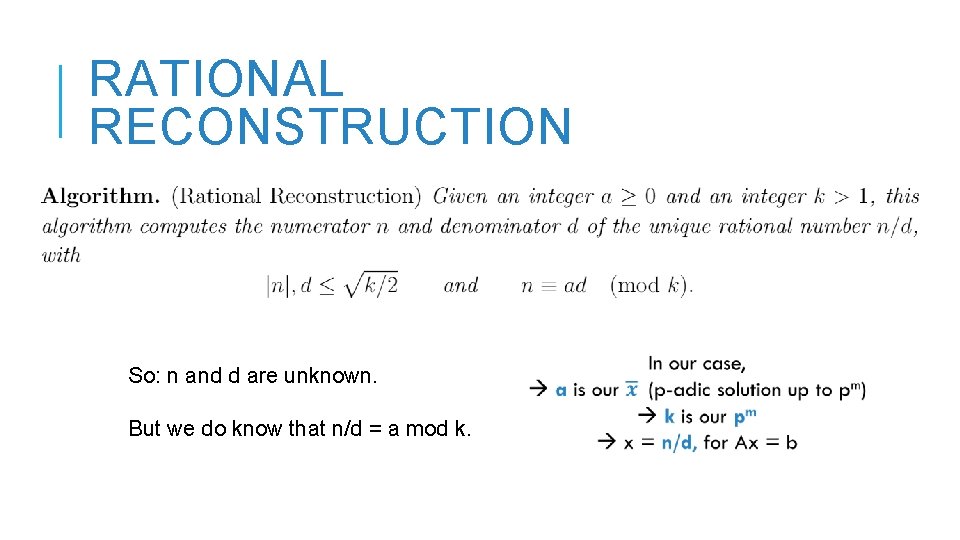

RATIONAL RECONSTRUCTION So: n and d are unknown. But we do know that n/d = a mod k.

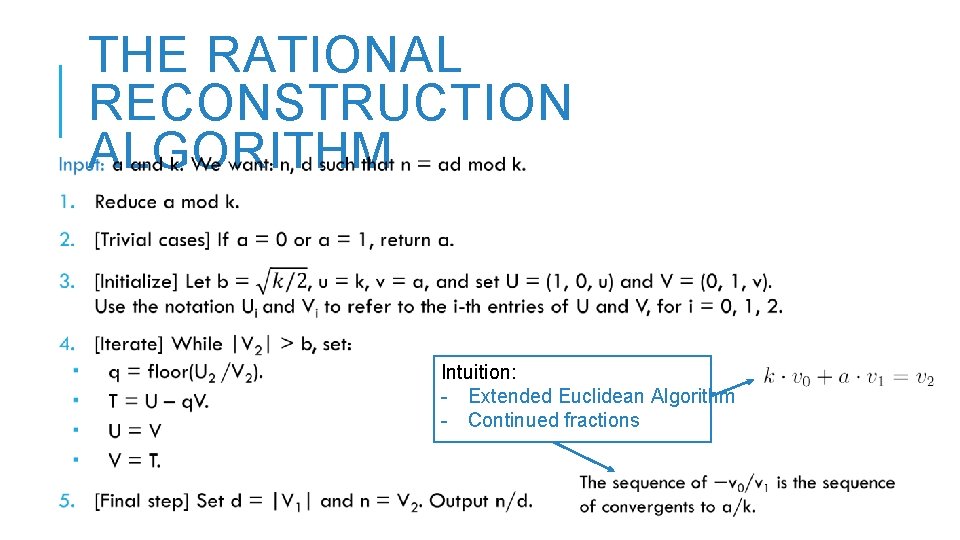

THE RATIONAL RECONSTRUCTION ALGORITHM Intuition: - Extended Euclidean Algorithm - Continued fractions

AN EXAMPLE

DIXON’S ITERATION

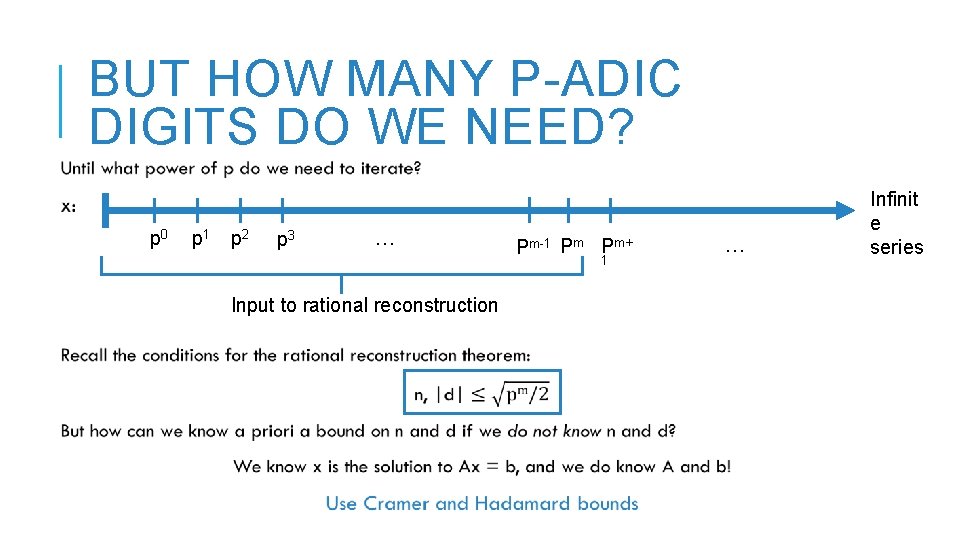

BUT HOW MANY P-ADIC DIGITS DO WE NEED? p 0 p 1 p 2 p 3 … Pm-1 Pm Pm+ 1 Input to rational reconstruction … Infinit e series

CRAMER AND HADAMARD

BACK TO DIXON

ADVANTAGES OF DIXON 1. No numerical errors. Solution x is exact. No need to think of the numerical analysis. 2. Modular approach (and faster than others, e. g. , Chinese Remainder Theorem). 3. We can now apply finite field methods for linear system solving for rational solutions, by lifting at each and then doing rational reconstruction. Example: Wiedemann’s minimal polynomial method. 4. Lifting + rational reconstruction extends to many other numerical algebra problems: integrality certification, computing determinants, Smith normal form computation, etc. Question: Can we do faster than O(n 3)?

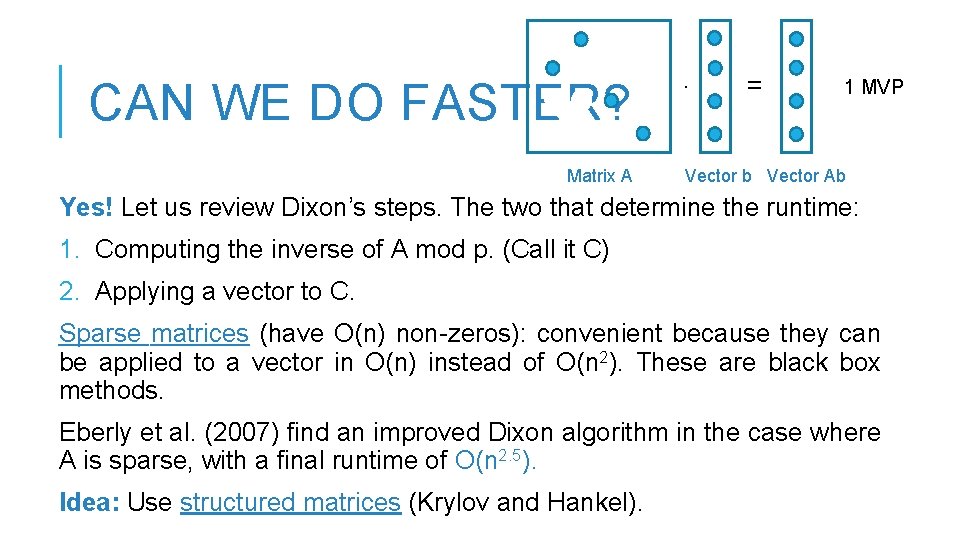

CAN WE DO FASTER? Matrix A · = 1 MVP Vector b Vector Ab Yes! Let us review Dixon’s steps. The two that determine the runtime: 1. Computing the inverse of A mod p. (Call it C) 2. Applying a vector to C. Sparse matrices (have O(n) non-zeros): convenient because they can be applied to a vector in O(n) instead of O(n 2). These are black box methods. Eberly et al. (2007) find an improved Dixon algorithm in the case where A is sparse, with a final runtime of O(n 2. 5). Idea: Use structured matrices (Krylov and Hankel).

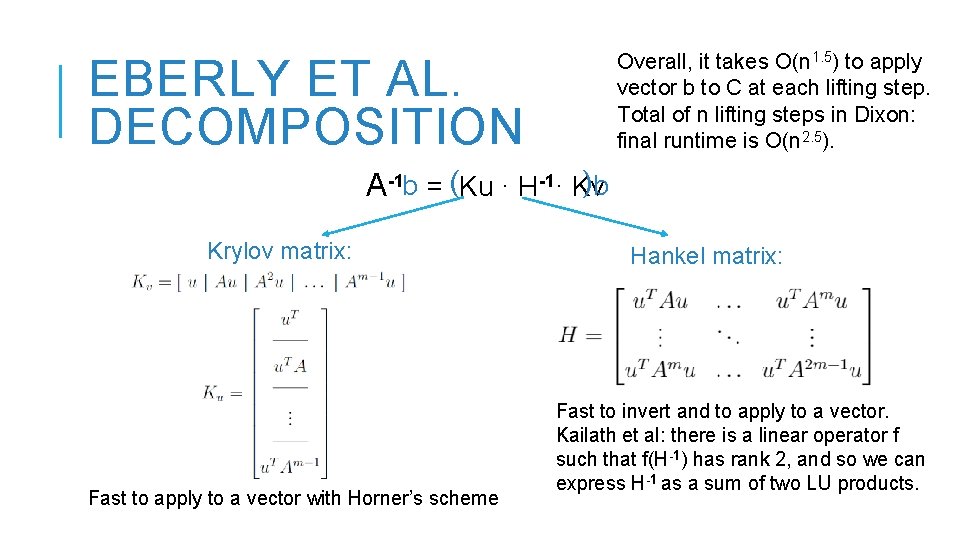

Overall, it takes O(n 1. 5) to apply vector b to C at each lifting step. Total of n lifting steps in Dixon: final runtime is O(n 2. 5). EBERLY ET AL. DECOMPOSITION )b A-1 b = (Ku · H-1· Kv Krylov matrix: Fast to apply to a vector with Horner’s scheme Hankel matrix: Fast to invert and to apply to a vector. Kailath et al: there is a linear operator f such that f(H-1) has rank 2, and so we can express H-1 as a sum of two LU products.

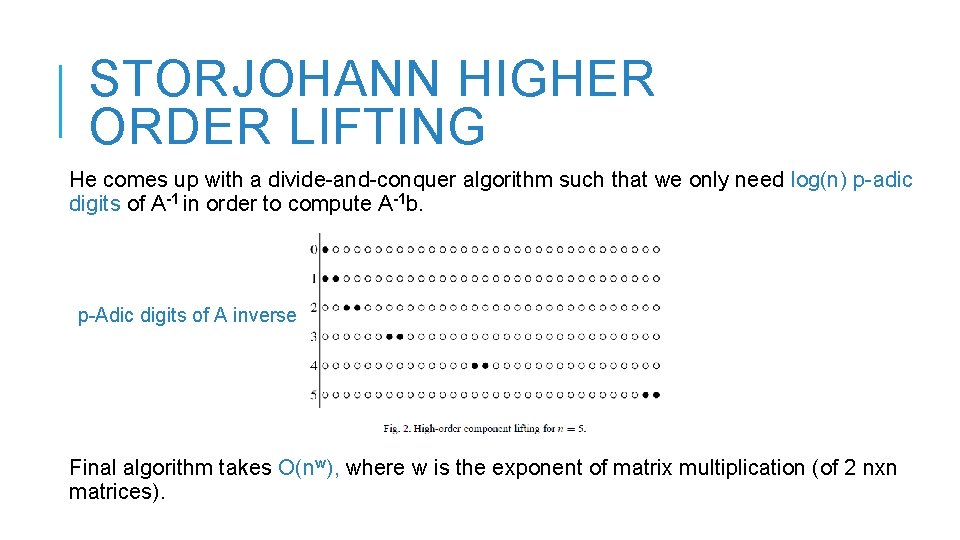

STORJOHANN HIGHER ORDER LIFTING He comes up with a divide-and-conquer algorithm such that we only need log(n) p-adic digits of A-1 in order to compute A-1 b. p-Adic digits of A inverse Final algorithm takes O(nw), where w is the exponent of matrix multiplication (of 2 nxn matrices).

CAN WE BEAT MATRIX MULTIPLICATION TIME? Main question: Can we solve a linear system Ax = b faster than matrix multiplication? Solved this July! Exactly the structure of Eberly et al. , but applied in the reals by controlling bit precision. Open question: We still do not know how to get an exact solution using Dixon’s scheme with an algorithm below O(nw).

THANK YOU! I am indebted to Prof. Rasmus Kyng (ETH Zurich) for supervising this research project. I also thank Dusty Grundmeier for feedback on this presentation.

- Slides: 23