What you've always wanted to know about logistic regression analysis

What you've always wanted to know about logistic regression analysis, but were afraid to ask...

February 1, 2010. Gerrit Rooks, Sociology of Innovation Sciences & Industrial Engineering. Phone: 5509. Email: g.rooks@tue.nl

This Lecture

- Why logistic regression analysis?
- The logistic regression model
- Estimation
- Goodness of fit
- An example

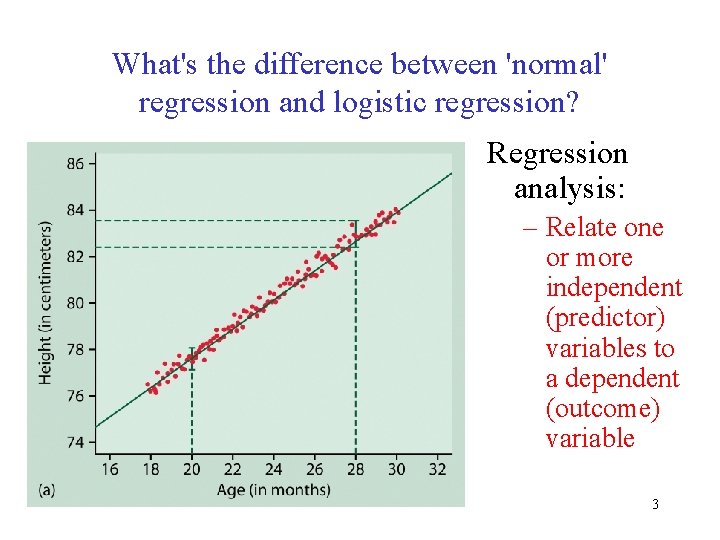

What's the difference between 'normal' regression and logistic regression?

Regression analysis:

- Relate one or more independent (predictor) variables to a dependent (outcome) variable

What's the difference between 'normal' regression and logistic regression?

- Often you will be confronted with outcome variables that are dichotomous:
  - success vs. failure
  - employed vs. unemployed
  - promoted or not
  - sick or healthy
  - pass or fail an exam

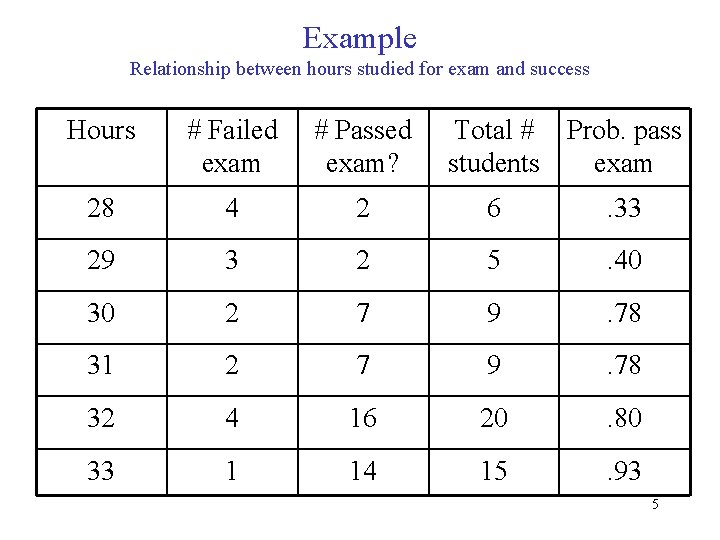

Example: relationship between hours studied for an exam and success

| Hours | # Failed exam | # Passed exam | Total # students | Prob. pass exam |
|---|---|---|---|---|
| 28 | 4 | 2 | 6 | .33 |
| 29 | 3 | 2 | 5 | .40 |
| 30 | 2 | 7 | 9 | .78 |
| 31 | 2 | 7 | 9 | .78 |
| 32 | 4 | 16 | 20 | .80 |
| 33 | 1 | 14 | 15 | .93 |

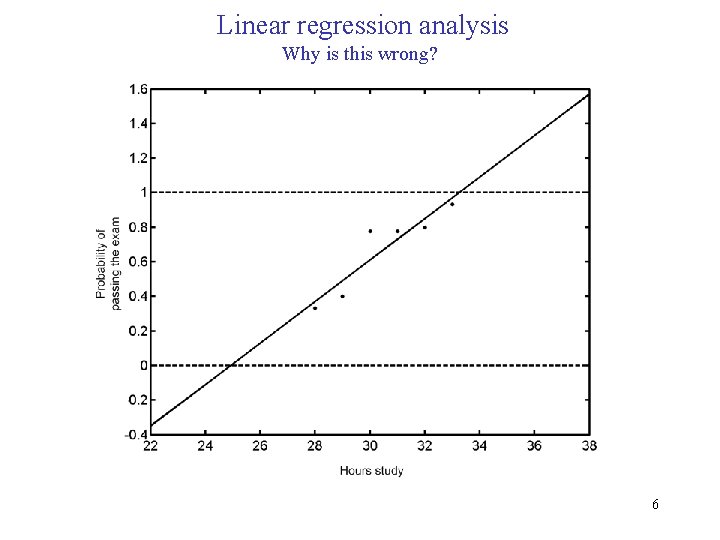

Linear regression analysis

Why is this wrong? A fitted straight line is not bounded, so it can predict "probabilities" below 0 or above 1.

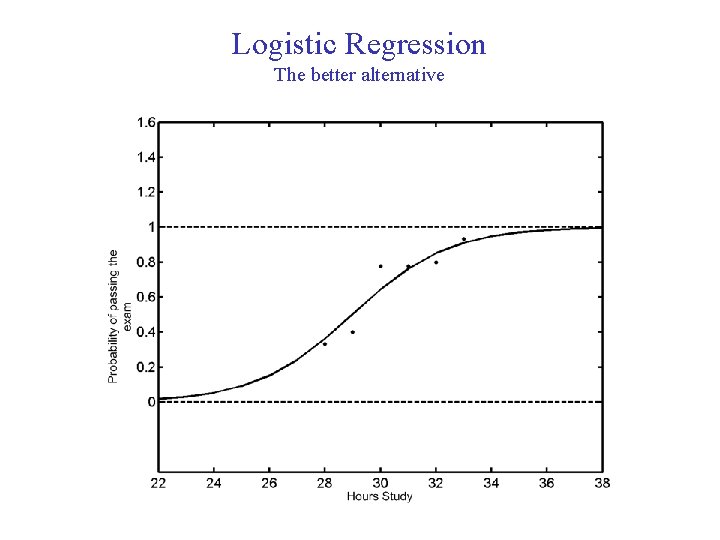
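To make the problem concrete, here is a minimal sketch that fits an ordinary least-squares line to the pass proportions from the example table and then extrapolates. The helper names (`fit_line`, `predict_linear`) and the extrapolation points (24 and 36 hours) are assumptions for illustration; the fit is unweighted, which ignores the different group sizes.

```python
# Pass proportions per hours-studied group, copied from the example table.
hours = [28, 29, 30, 31, 32, 33]
prob_pass = [0.33, 0.40, 0.78, 0.78, 0.80, 0.93]


def fit_line(x, y):
    """Unweighted ordinary least squares for y = intercept + slope * x."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return intercept, slope


intercept, slope = fit_line(hours, prob_pass)


def predict_linear(h):
    """Predicted 'probability' of passing from the straight line."""
    return intercept + slope * h


# Hypothetical students outside the observed range of study hours.
for h in (24, 30, 36):
    print(h, round(predict_linear(h), 2))
```

The line gives a value below 0 for 24 hours and above 1 for 36 hours, neither of which can be a probability.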

Logistic Regression

The better alternative

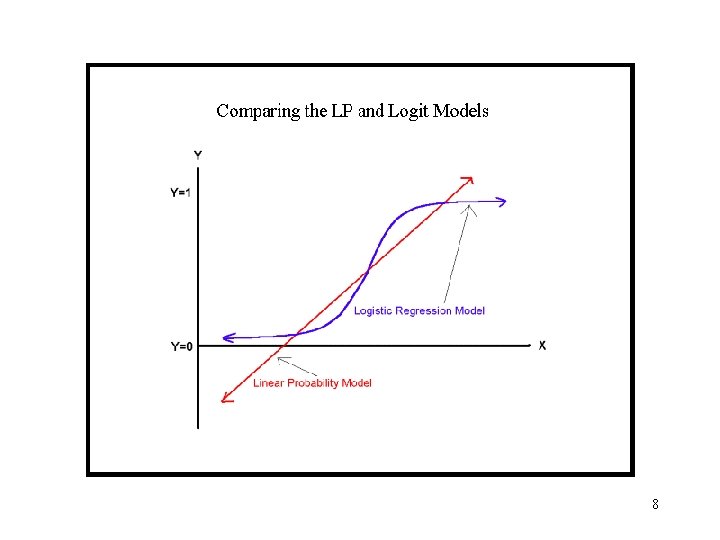

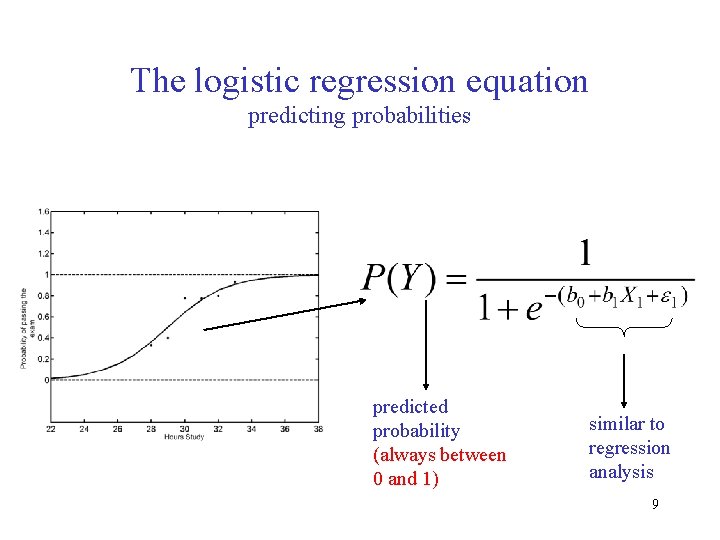

The logistic regression equation: predicting probabilities

- Left-hand side: the predicted probability (always between 0 and 1)
- The linear combination of predictors inside the function: similar to regression analysis

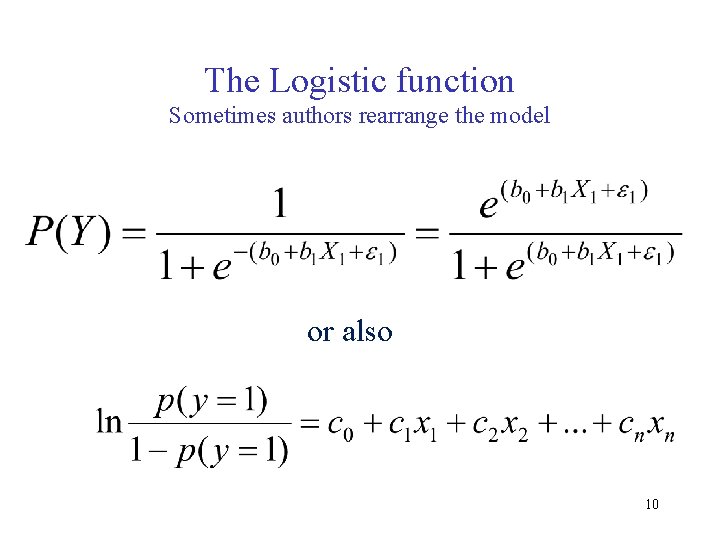
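As a small sketch of how the equation turns a regression-like linear score into a probability, the following uses one predictor. The coefficient values `b0 = -3.0` and `b1 = 0.2` are hypothetical and chosen only for illustration; the function names are assumptions as well.

```python
import math


def logistic(z):
    """Map a real-valued score z to a value between 0 and 1."""
    return 1.0 / (1.0 + math.exp(-z))


def predicted_probability(x, b0, b1):
    """P(Y = 1) for one predictor x; b0 + b1 * x is the regression-like part."""
    return logistic(b0 + b1 * x)


# Hypothetical coefficients, not taken from the lecture.
b0, b1 = -3.0, 0.2
for x in (0, 15, 30):
    print(x, round(predicted_probability(x, b0, b1), 3))
```

Whatever value the linear part takes, the result stays between 0 and 1, and a score of exactly 0 corresponds to a probability of .5.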

The logistic function

Sometimes authors rearrange the model into other, equivalent forms.

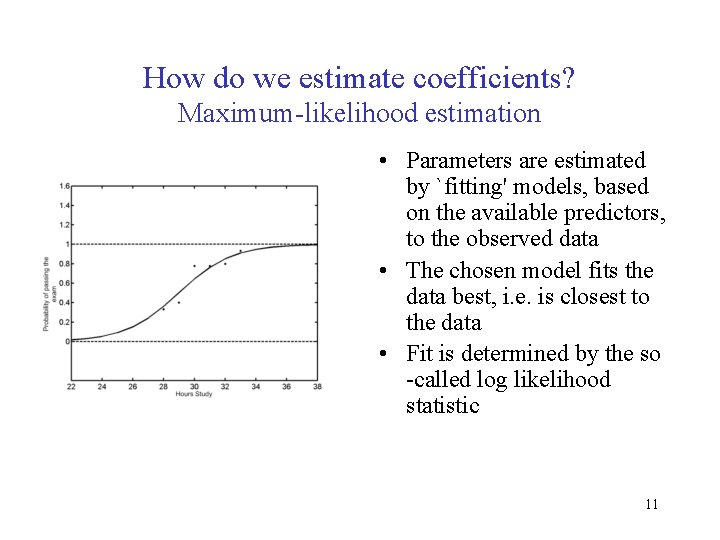
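For reference, these are three standard and algebraically equivalent ways of writing the model with a single predictor. The notation ($b_0$, $b_1$, $X_1$) is an assumption and may differ from the notation on the slide.

```latex
\begin{align*}
P(Y) &= \frac{1}{1 + e^{-(b_0 + b_1 X_1)}} \\[4pt]
P(Y) &= \frac{e^{\,b_0 + b_1 X_1}}{1 + e^{\,b_0 + b_1 X_1}} \\[4pt]
\ln\!\left(\frac{P(Y)}{1 - P(Y)}\right) &= b_0 + b_1 X_1
\end{align*}
```

The last form (the logit, or log-odds) shows that the model is linear in the log of the odds, which is why coefficients are later interpreted through odds ratios.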

How do we estimate coefficients?

Maximum-likelihood estimation

- Parameters are estimated by 'fitting' models, based on the available predictors, to the observed data
- The chosen model is the one that fits the data best, i.e. is closest to the data
- Fit is determined by the so-called log-likelihood statistic

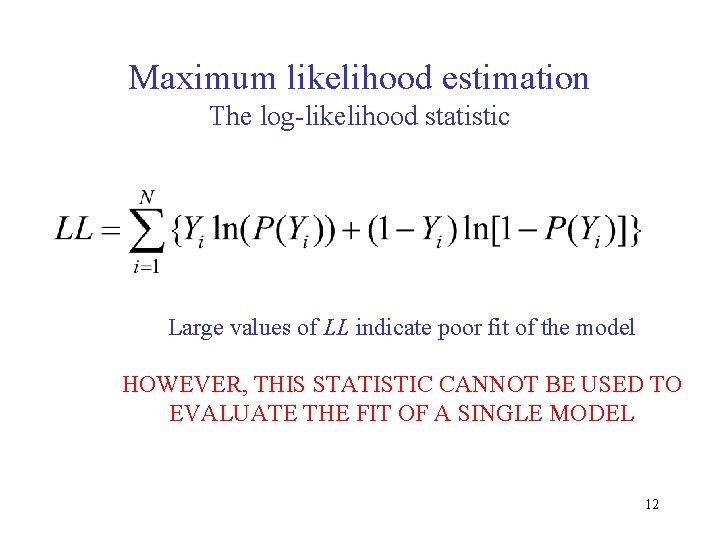

Maximum likelihood estimation

The log-likelihood statistic

- Large values of LL in absolute terms (i.e. far from 0) indicate poor fit of the model
- However, this statistic cannot be used to evaluate the fit of a single model; it only becomes informative when models are compared

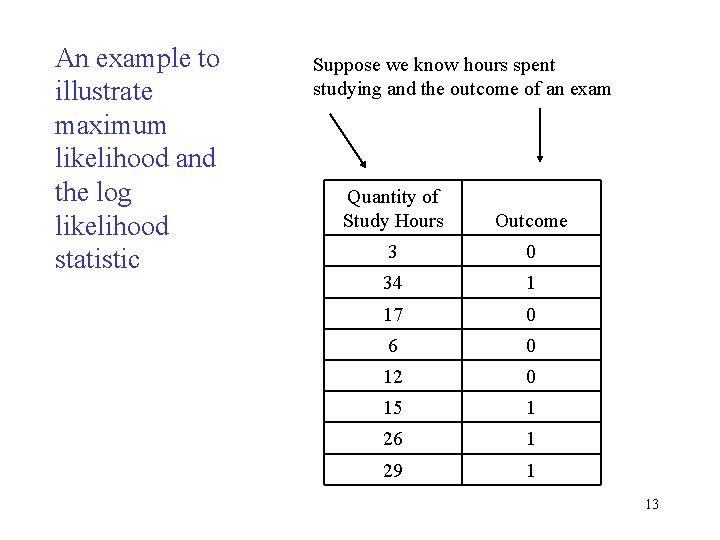
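The log-likelihood statistic is usually written as follows, where $Y_i$ is the observed outcome (0 or 1) for case $i$ and $P(Y_i)$ is the predicted probability that $Y_i = 1$. The symbols are an assumption and may differ from those on the slide.

```latex
LL = \sum_{i=1}^{N} \Big[\, Y_i \ln P(Y_i) + (1 - Y_i) \ln\big(1 - P(Y_i)\big) \Big]
```

Each term is the log of a probability, so it is at most 0; the sum therefore moves further below 0 as the predictions get worse.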

An example to illustrate maximum likelihood and the log-likelihood statistic

Suppose we know the hours spent studying and the outcome of an exam

| Quantity of study hours | Outcome |
|---|---|
| 3 | 0 |
| 34 | 1 |
| 17 | 0 |
| 6 | 0 |
| 12 | 0 |
| 15 | 1 |
| 26 | 1 |
| 29 | 1 |

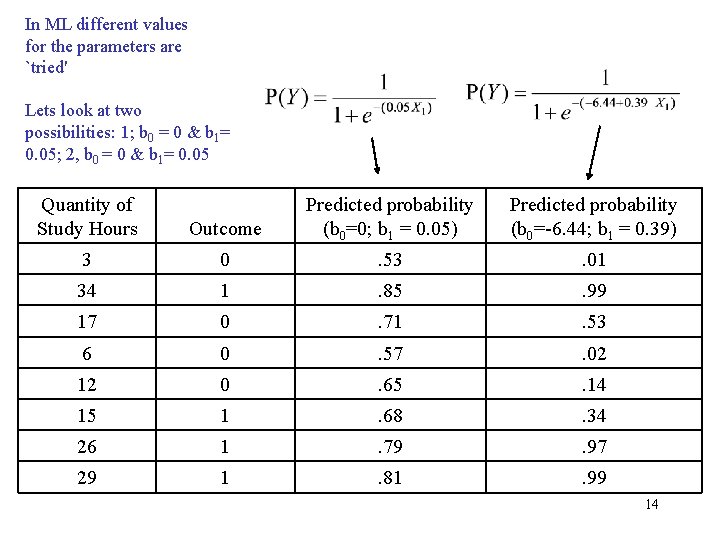

In ML different values for the parameters are 'tried'

Let's look at two possibilities: (1) b0 = 0 and b1 = 0.05; (2) b0 = -6.44 and b1 = 0.39

| Quantity of study hours | Outcome | Predicted probability (b0 = 0; b1 = 0.05) | Predicted probability (b0 = -6.44; b1 = 0.39) |
|---|---|---|---|
| 3 | 0 | .53 | .01 |
| 34 | 1 | .85 | .99 |
| 17 | 0 | .71 | .53 |
| 6 | 0 | .57 | .02 |
| 12 | 0 | .65 | .14 |
| 15 | 1 | .68 | .34 |
| 26 | 1 | .79 | .97 |
| 29 | 1 | .81 | .99 |

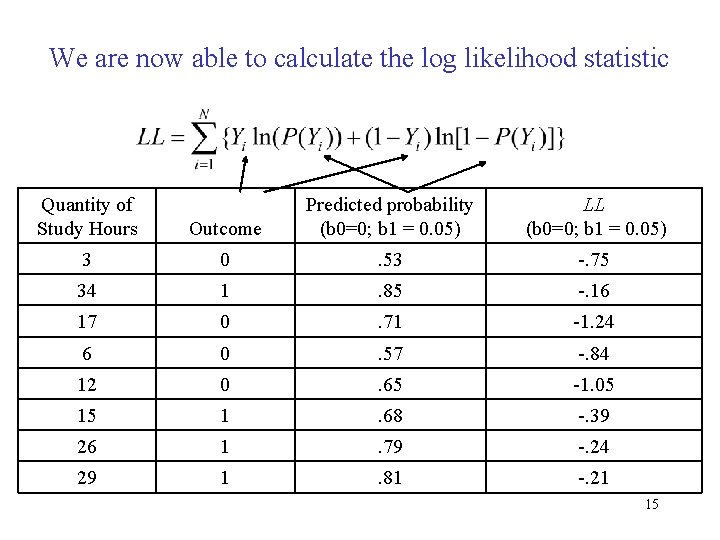
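A short sketch of how such predicted probabilities can be computed for the two candidate parameter sets. The function and variable names are assumptions. Because the coefficients shown on the slide are rounded, the computed values can differ from the table in the second decimal.

```python
import math

# Study hours and exam outcomes from the example (1 = passed, 0 = failed).
hours = [3, 34, 17, 6, 12, 15, 26, 29]
outcome = [0, 1, 0, 0, 0, 1, 1, 1]


def predicted_probability(x, b0, b1):
    """Logistic model with one predictor: P(pass) given study hours x."""
    return 1.0 / (1.0 + math.exp(-(b0 + b1 * x)))


# The two candidate parameter sets 'tried' on the slide.
model_a = [predicted_probability(x, 0.0, 0.05) for x in hours]
model_b = [predicted_probability(x, -6.44, 0.39) for x in hours]

for x, y, pa, pb in zip(hours, outcome, model_a, model_b):
    print(x, y, round(pa, 2), round(pb, 2))
```

The second candidate pushes the probabilities of most failed exams towards 0 and of most passed exams towards 1, which is what a better-fitting model should do.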

We are now able to calculate the log-likelihood statistic

| Quantity of study hours | Outcome | Predicted probability (b0 = 0; b1 = 0.05) | LL (b0 = 0; b1 = 0.05) |
|---|---|---|---|
| 3 | 0 | .53 | -.75 |
| 34 | 1 | .85 | -.16 |
| 17 | 0 | .71 | -1.24 |
| 6 | 0 | .57 | -.84 |
| 12 | 0 | .65 | -1.05 |
| 15 | 1 | .68 | -.39 |
| 26 | 1 | .79 | -.24 |
| 29 | 1 | .81 | -.21 |

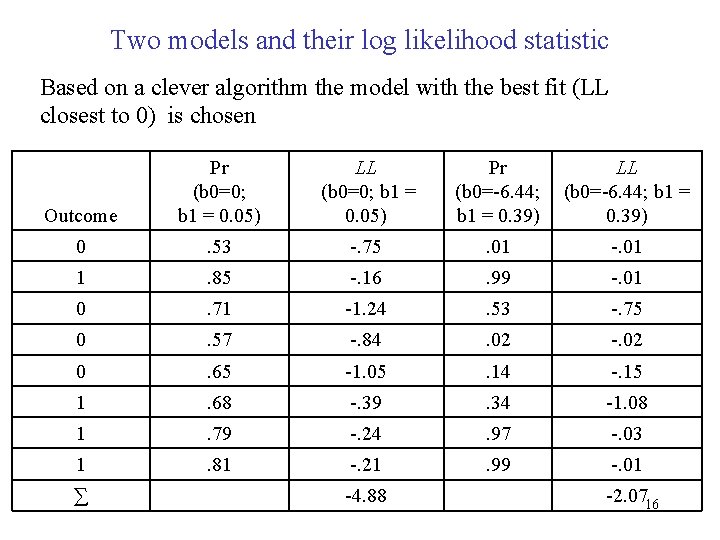
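The per-case contributions in the table can be reproduced, up to rounding, with a few lines of code. This sketch takes the predicted probabilities exactly as printed on the slide; the function name `ll_contribution` is an assumption.

```python
import math

# Outcomes and the slide's predicted probabilities for b0 = 0, b1 = 0.05.
outcome = [0, 1, 0, 0, 0, 1, 1, 1]
predicted = [0.53, 0.85, 0.71, 0.57, 0.65, 0.68, 0.79, 0.81]


def ll_contribution(y, p):
    """Log-likelihood contribution of one case: ln(p) if y = 1, ln(1 - p) if y = 0."""
    return y * math.log(p) + (1 - y) * math.log(1.0 - p)


contributions = [ll_contribution(y, p) for y, p in zip(outcome, predicted)]
total_ll = sum(contributions)

for y, p, c in zip(outcome, predicted, contributions):
    print(y, p, round(c, 2))
print("LL =", round(total_ll, 2))
```

For a passed exam the contribution is the log of the predicted probability of passing; for a failed exam it is the log of the predicted probability of failing. The total comes out at about -4.88.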

Two models and their log-likelihood statistic

Based on a clever algorithm the model with the best fit (LL closest to 0) is chosen

| Outcome | Pr (b0 = 0; b1 = 0.05) | LL (b0 = 0; b1 = 0.05) | Pr (b0 = -6.44; b1 = 0.39) | LL (b0 = -6.44; b1 = 0.39) |
|---|---|---|---|---|
| 0 | .53 | -.75 | .01 | -.01 |
| 1 | .85 | -.16 | .99 | -.01 |
| 0 | .71 | -1.24 | .53 | -.75 |
| 0 | .57 | -.84 | .02 | -.02 |
| 0 | .65 | -1.05 | .14 | -.15 |
| 1 | .68 | -.39 | .34 | -1.08 |
| 1 | .79 | -.24 | .97 | -.03 |
| 1 | .81 | -.21 | .99 | -.01 |
| ∑ | | -4.88 | | -2.07 |

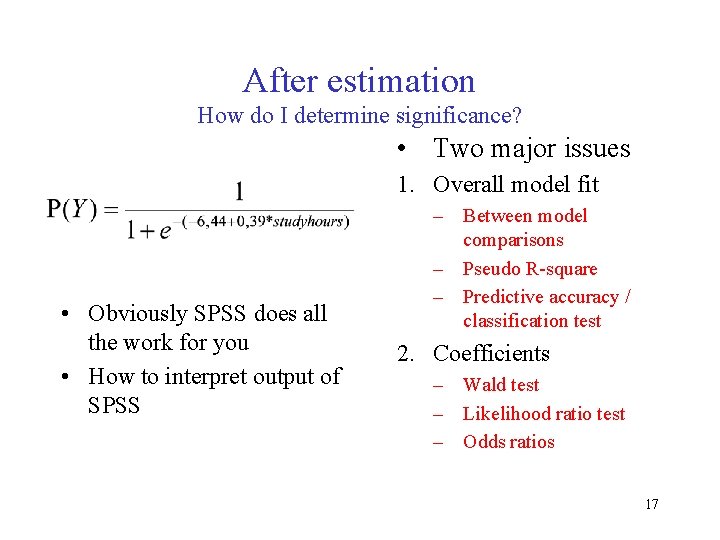
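The "clever algorithm" is not specified in the lecture. One common choice is Newton-Raphson, sketched below for the eight study-hours cases using only the standard library. The function names, the starting values, and the step-halving safeguard are all assumptions; statistical packages may use a different procedure.

```python
import math

hours = [3, 34, 17, 6, 12, 15, 26, 29]
outcome = [0, 1, 0, 0, 0, 1, 1, 1]


def log_likelihood(b0, b1):
    """LL of the one-predictor logistic model, in a numerically stable form."""
    total = 0.0
    for x, y in zip(hours, outcome):
        z = b0 + b1 * x
        # ln(p) = -ln(1 + e^-z) for y = 1; ln(1 - p) = -ln(1 + e^z) for y = 0.
        total += -math.log1p(math.exp(-z)) if y == 1 else -math.log1p(math.exp(z))
    return total


def fit_newton(max_iter=50, tol=1e-10):
    """Maximize LL with Newton-Raphson steps, halving a step if LL would drop."""
    b0, b1 = 0.0, 0.0
    ll = log_likelihood(b0, b1)
    for _ in range(max_iter):
        g0 = g1 = h00 = h01 = h11 = 0.0
        for x, y in zip(hours, outcome):
            p = 1.0 / (1.0 + math.exp(-(b0 + b1 * x)))
            w = p * (1.0 - p)
            g0 += y - p          # gradient with respect to b0
            g1 += (y - p) * x    # gradient with respect to b1
            h00 += w             # information matrix entries
            h01 += w * x
            h11 += w * x * x
        det = h00 * h11 - h01 * h01
        d0 = (h11 * g0 - h01 * g1) / det
        d1 = (h00 * g1 - h01 * g0) / det
        step = 1.0
        new_ll = log_likelihood(b0 + d0, b1 + d1)
        while new_ll < ll and step > 1e-8:
            step /= 2.0
            new_ll = log_likelihood(b0 + step * d0, b1 + step * d1)
        b0 += step * d0
        b1 += step * d1
        converged = abs(new_ll - ll) < tol
        ll = new_ll
        if converged:
            break
    return b0, b1, ll


b0_hat, b1_hat, ll_hat = fit_newton()
print(round(b0_hat, 2), round(b1_hat, 2), round(ll_hat, 2))
print(round(log_likelihood(0.0, 0.05), 2), round(log_likelihood(-6.44, 0.39), 2))
```

The fitted coefficients should land close to the slide's second candidate (b0 = -6.44, b1 = 0.39), and no other parameter pair can give an LL closer to 0 on these data.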

After estimation: how do I determine significance?

- Two major issues
  1. Overall model fit
     - Obviously SPSS does all the work for you
     - How to interpret the output of SPSS:
       - Between-model comparisons
       - Pseudo R-square
       - Predictive accuracy / classification test
  2. Coefficients
     - Wald test
     - Likelihood ratio test
     - Odds ratios

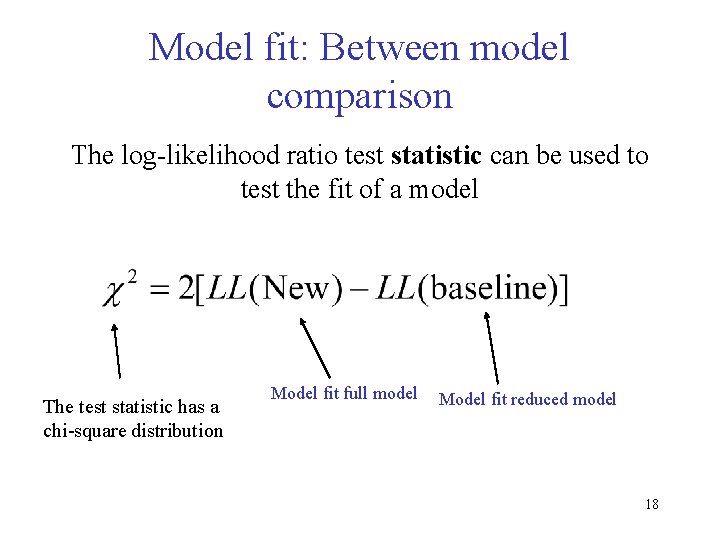

Model fit: between-model comparison

- The log-likelihood ratio test statistic can be used to test the fit of a model
- The test statistic has a chi-square distribution
- It compares the model fit of the full model with the model fit of the reduced model

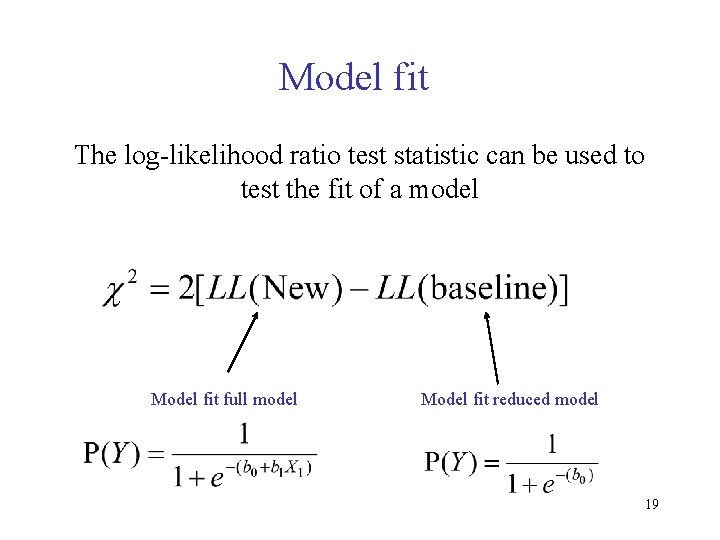

Model fit

- The log-likelihood ratio test statistic can be used to test the fit of a model
- It compares the model fit of the full model with the model fit of the reduced model

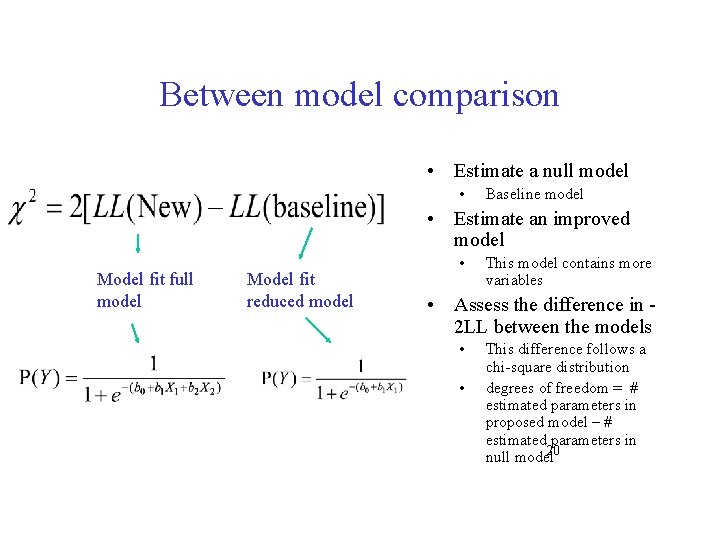

Between-model comparison

- Estimate a null model
  - Baseline model (model fit of the reduced model)
- Estimate an improved model (model fit of the full model)
  - This model contains more variables
- Assess the difference in -2LL between the models
- This difference follows a chi-square distribution
- Degrees of freedom = # estimated parameters in proposed model minus # estimated parameters in null model

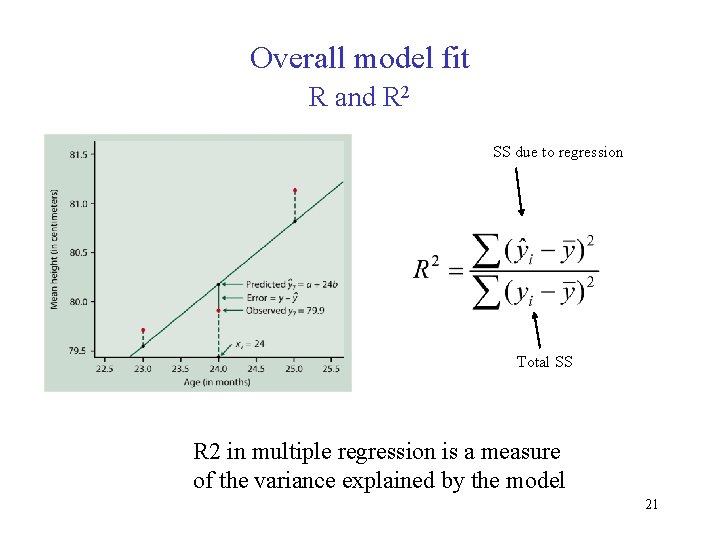
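As a worked sketch of this comparison, the code below applies the likelihood ratio test to the study-hours example. It assumes the null model contains only a constant (so every case gets the overall pass rate of 4 out of 8), and it treats the slide's second candidate (LL = -2.07) as the fitted improved model. The function name is an assumption, and the p-value shortcut holds only for 1 degree of freedom.

```python
import math


def likelihood_ratio_test_1df(ll_null, ll_improved):
    """Chi-square statistic 2 * (LL_improved - LL_null) and its p-value for 1 df."""
    chi_square = 2.0 * (ll_improved - ll_null)
    if chi_square < 0:
        raise ValueError("the improved model should not fit worse than the null model")
    # For 1 degree of freedom: P(chi2 > x) = erfc(sqrt(x / 2)).
    p_value = math.erfc(math.sqrt(chi_square / 2.0))
    return chi_square, p_value


# Null model: constant only, predicted probability .5 for all 8 cases.
ll_null = 8 * math.log(0.5)
# Improved model: LL taken from the slide (b0 = -6.44, b1 = 0.39).
ll_improved = -2.07

chi_square, p_value = likelihood_ratio_test_1df(ll_null, ll_improved)
print(round(chi_square, 2), round(p_value, 4))
```

The improved model adds one parameter (the slope for study hours), so the test has 2 - 1 = 1 degree of freedom. With more added parameters, a general chi-square distribution function would be needed instead of the `erfc` shortcut.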

Overall model fit: R and R²

$R^2 = \dfrac{\text{SS due to regression}}{\text{Total SS}}$

R² in multiple regression is a measure of the variance explained by the model

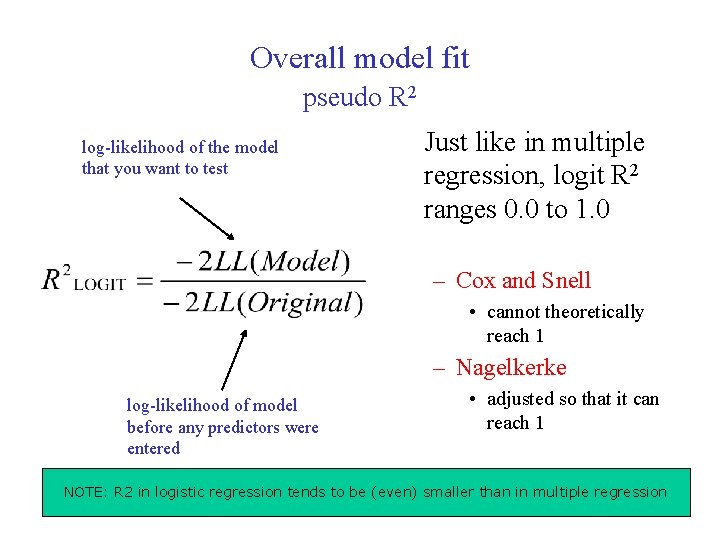

Overall model fit: pseudo R²

The pseudo R² combines the log-likelihood of the model that you want to test with the log-likelihood of the model before any predictors were entered.

Just like in multiple regression, logit R² ranges from 0.0 to 1.0

- Cox and Snell
  - cannot theoretically reach 1
- Nagelkerke
  - adjusted so that it can reach 1

NOTE: R² in logistic regression tends to be (even) smaller than in multiple regression

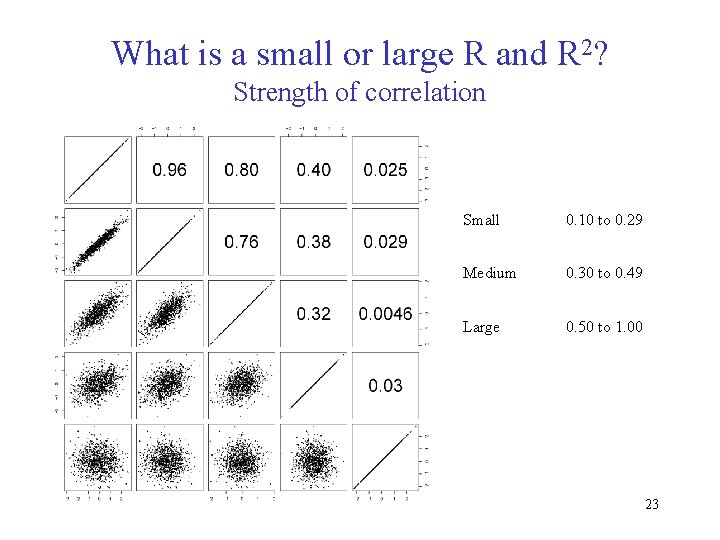
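The two pseudo R² measures can be sketched from the two log-likelihoods and the sample size. The formulas below are the commonly cited definitions and are an assumption about what the slide shows; the inputs reuse the study-hours example (constant-only LL of 8 · ln .5, and LL = -2.07 for the model with study hours).

```python
import math


def cox_snell_r2(ll_null, ll_model, n):
    """Cox and Snell pseudo R-square: 1 - exp(2 * (LL_null - LL_model) / n)."""
    return 1.0 - math.exp(2.0 * (ll_null - ll_model) / n)


def nagelkerke_r2(ll_null, ll_model, n):
    """Cox and Snell rescaled by its maximum attainable value, so it can reach 1."""
    max_cox_snell = 1.0 - math.exp(2.0 * ll_null / n)
    return cox_snell_r2(ll_null, ll_model, n) / max_cox_snell


n = 8
ll_null = n * math.log(0.5)   # constant-only model, 4 of 8 passed
ll_model = -2.07              # LL from the slide for b0 = -6.44, b1 = 0.39

cs = cox_snell_r2(ll_null, ll_model, n)
nk = nagelkerke_r2(ll_null, ll_model, n)
print(round(cs, 2), round(nk, 2))
```

Because the Cox and Snell maximum is below 1, the Nagelkerke value is always the larger of the two for the same model.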

What is a small or large R and R²?

| Strength of correlation | Range |
|---|---|
| Small | 0.10 to 0.29 |
| Medium | 0.30 to 0.49 |
| Large | 0.50 to 1.00 |

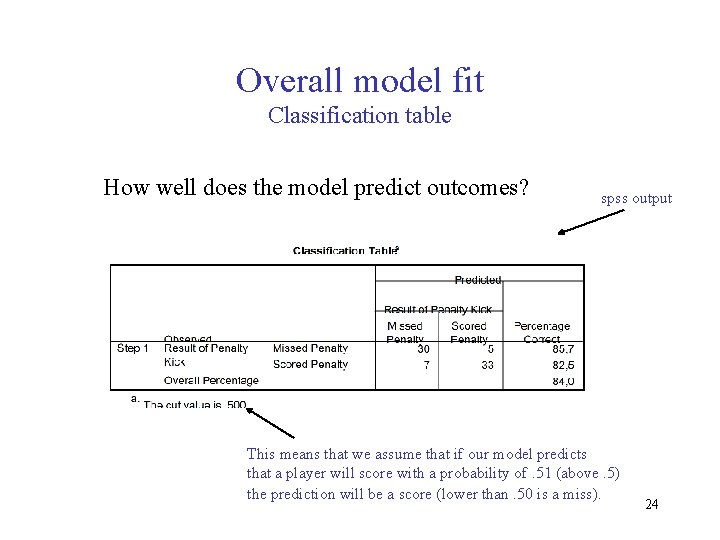

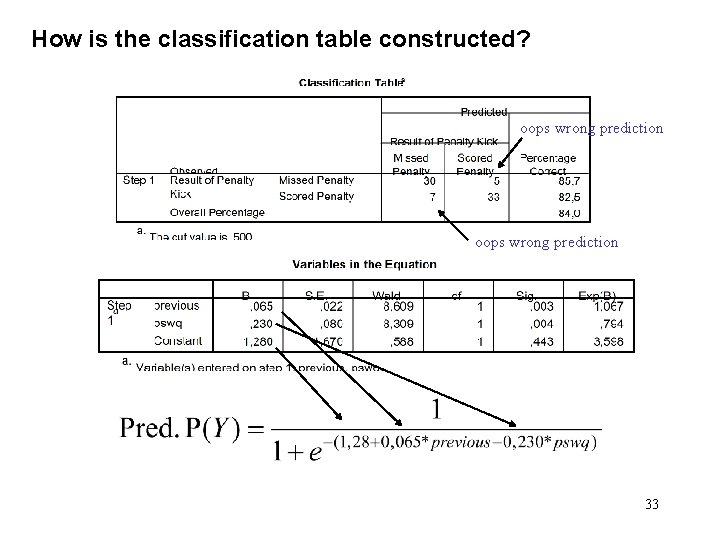

Overall model fit: classification table

How well does the model predict outcomes?

(SPSS output)

This means that we assume that if our model predicts that a player will score with a probability of .51 (above .50), the prediction will be a score (lower than .50 is a miss).

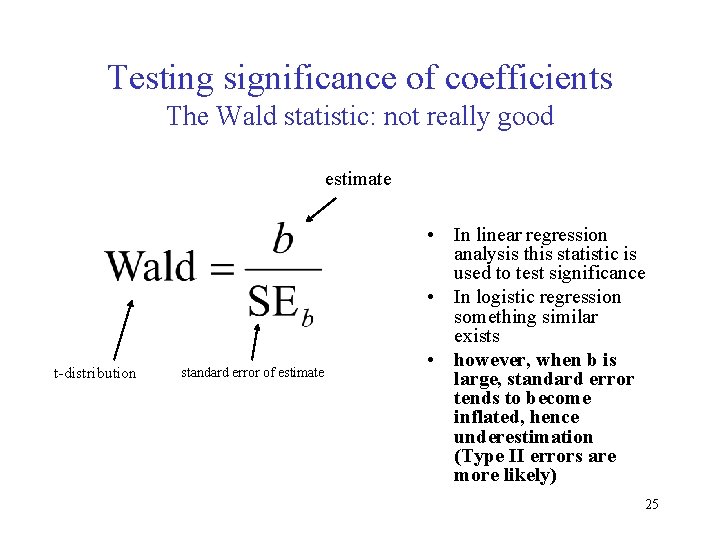

Testing significance of coefficients

The Wald statistic: not really good

The statistic divides the estimate by the standard error of the estimate; in linear regression it is compared with a t-distribution.

- In linear regression analysis this statistic is used to test significance
- In logistic regression something similar exists
- However, when b is large, the standard error tends to become inflated, hence the statistic is underestimated (Type II errors are more likely)

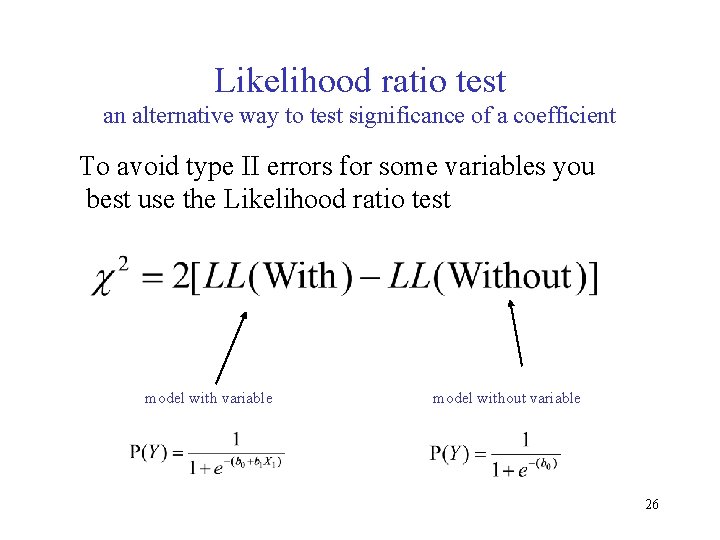
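In symbols, the statistic described above is commonly written as below. The notation is an assumption and may differ from the slide. SPSS reports the squared version, which is evaluated against a chi-square distribution with 1 degree of freedom.

```latex
z = \frac{b}{SE_b}, \qquad \text{Wald} = \left(\frac{b}{SE_b}\right)^{2} \sim \chi^2_{1}
```

An inflated $SE_b$ in the denominator shrinks the statistic, which is why a truly important predictor can fail to reach significance.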

Likelihood ratio test: an alternative way to test significance of a coefficient

To avoid Type II errors, for some variables it is best to use the likelihood ratio test, which compares the fit of the model with the variable against the fit of the model without the variable.

Before we go to the example: a recap

- Logistic regression
  - dichotomous outcome
  - logistic function
  - log-likelihood / maximum likelihood
- Model fit
  - likelihood ratio test (compare LL of models)
  - Pseudo R-square
  - Classification table
  - Wald test

Illustration with SPSS

- Penalty kicks data, variables:
  - Scored: outcome variable
    - 0 = penalty missed, and 1 = penalty scored
  - Pswq: degree to which a player worries
  - Previous: percentage of penalties scored by a particular player in their career

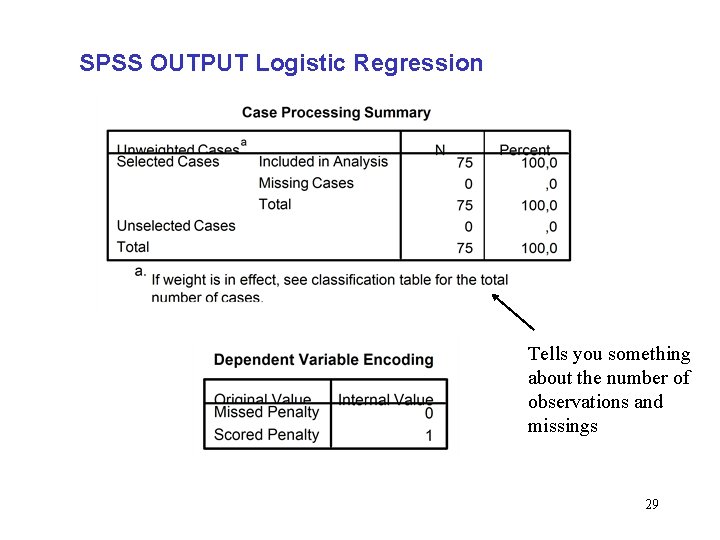

SPSS output: Logistic Regression

Tells you something about the number of observations and missing values

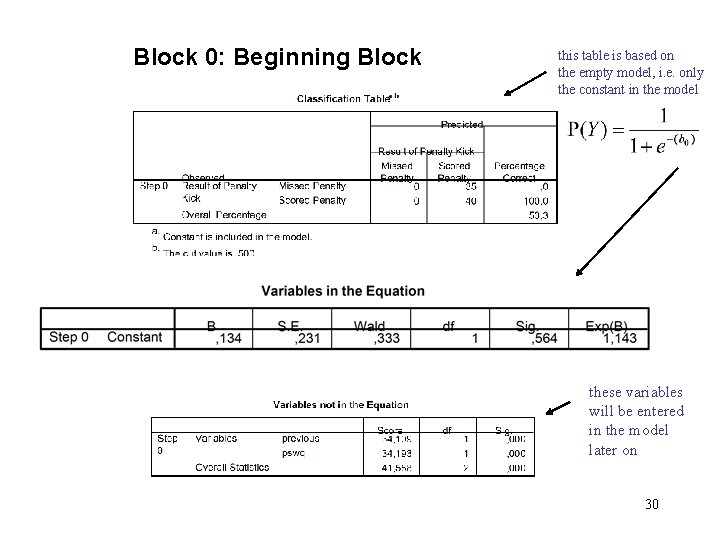

Block 0: Beginning Block

- This table is based on the empty model, i.e. only the constant is in the model
- These variables will be entered in the model later on

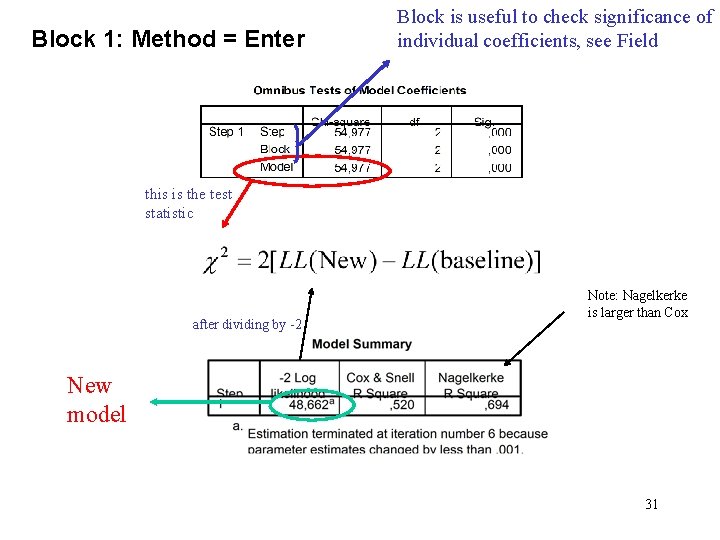

Block 1: Method = Enter

- New model
- Block is useful to check significance of individual coefficients, see Field
- This is the test statistic after dividing by -2
- Note: Nagelkerke is larger than Cox and Snell

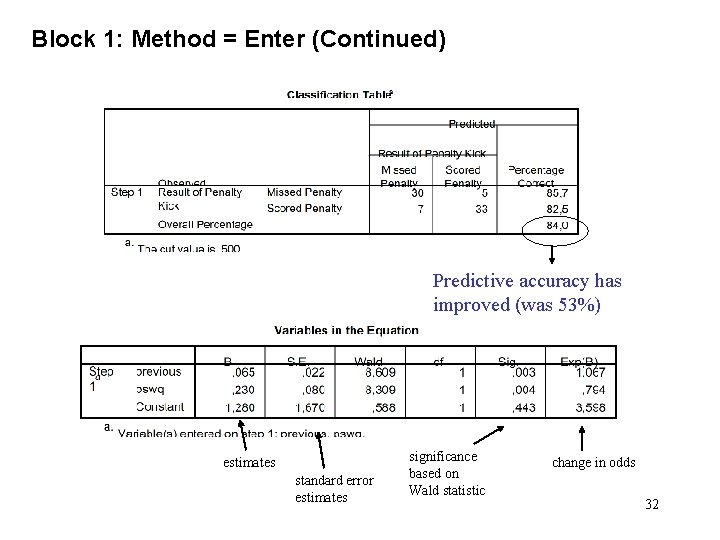

Block 1: Method = Enter (continued)

- Predictive accuracy has improved (was 53%)
- The coefficient table shows: estimates, standard errors of the estimates, significance based on the Wald statistic, and change in odds
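The "change in odds" column is the exponentiated coefficient, the odds ratio. Since the penalty-kick coefficients are only visible in the SPSS screenshot, this sketch borrows the study-hours coefficients (b0 = -6.44, b1 = 0.39) from the earlier example as stand-ins; the function names and the comparison points (10 and 11 hours) are assumptions.

```python
import math


def predicted_probability(x, b0, b1):
    """Logistic model with one predictor."""
    return 1.0 / (1.0 + math.exp(-(b0 + b1 * x)))


def odds(p):
    """Odds of the event: probability it happens divided by probability it does not."""
    return p / (1.0 - p)


# Stand-in coefficients from the study-hours example, not the penalty data.
b0, b1 = -6.44, 0.39

odds_ratio = math.exp(b1)

# Check: the odds at 11 hours divided by the odds at 10 hours equal exp(b1).
ratio_check = odds(predicted_probability(11, b0, b1)) / odds(predicted_probability(10, b0, b1))
print(round(odds_ratio, 3), round(ratio_check, 3))
```

An odds ratio above 1 means the odds of the outcome go up with each one-unit increase in the predictor; a value below 1 means they go down.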

How is the classification table constructed?

Oops: wrong prediction

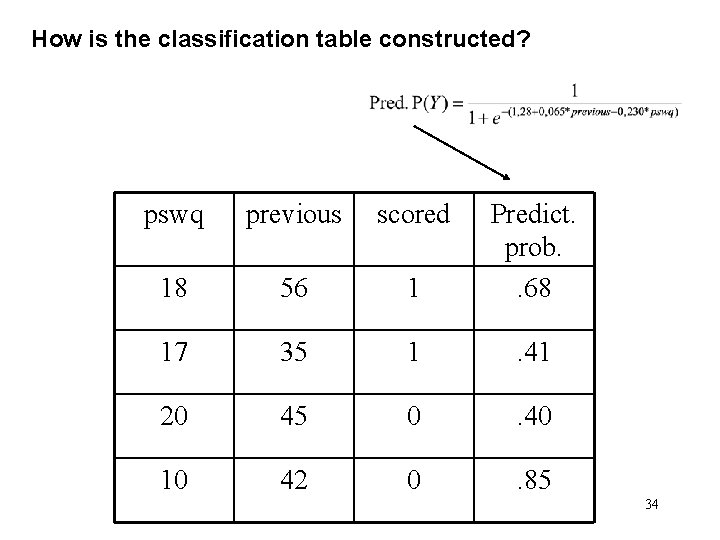

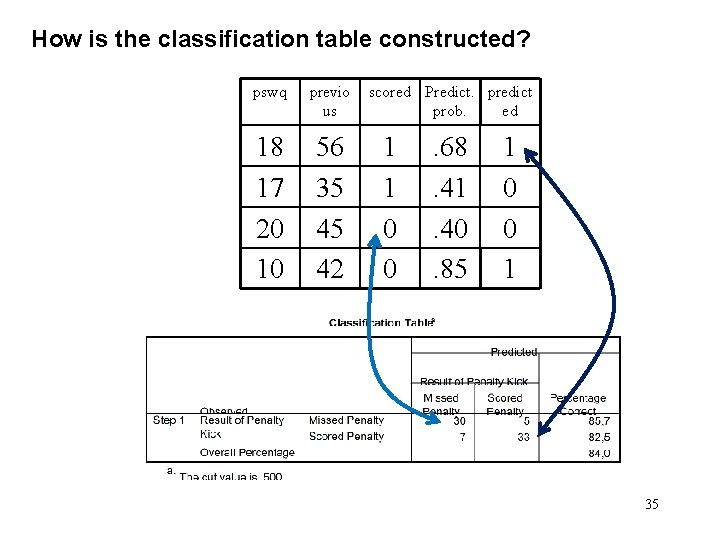

How is the classification table constructed?

| pswq | previous | scored | Predict. prob. |
|---|---|---|---|
| 18 | 56 | 1 | .68 |
| 17 | 35 | 1 | .41 |
| 20 | 45 | 0 | .40 |
| 10 | 42 | 0 | .85 |

How is the classification table constructed?

| pswq | previous | scored | Predict. prob. | predicted |
|---|---|---|---|---|
| 18 | 56 | 1 | .68 | 1 |
| 17 | 35 | 1 | .41 | 0 |
| 20 | 45 | 0 | .40 | 0 |
| 10 | 42 | 0 | .85 | 1 |
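The construction can be sketched in a few lines: apply the .50 cutoff to each predicted probability, then cross-tabulate predicted against observed outcomes. The four cases are the ones shown on the slide; the function and variable names are assumptions.

```python
# Observed outcomes and predicted probabilities for the four example players.
scored = [1, 1, 0, 0]
predicted_prob = [0.68, 0.41, 0.40, 0.85]


def classify(p, cutoff=0.5):
    """Predict a score (1) if the probability is above the cutoff, else a miss (0)."""
    return 1 if p > cutoff else 0


predicted = [classify(p) for p in predicted_prob]

# Classification table: counts keyed by (observed, predicted).
table = {(obs, pred): 0 for obs in (0, 1) for pred in (0, 1)}
for obs, pred in zip(scored, predicted):
    table[(obs, pred)] += 1

accuracy = sum(obs == pred for obs, pred in zip(scored, predicted)) / len(scored)

print(predicted)
print(table)
print("accuracy:", accuracy)
```

In these four cases the second and fourth players are misclassified, so only half of the predictions are correct. The full SPSS table applies the same rule to every case in the data set.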
