What to Do With Data? (Or, Closing the Alleged Loop)

- Slides: 9

What to Do With Data? (Or, Closing the Alleged Loop): Analysis, Interpretation, and Improvement. Mark Pursley

The “O” of SLOs

- The outcome should be a measurable behavior.
- How is behavior measured? By having students perform a task that is scored according to a rubric.
- Ideally, that task should connect with a skill that improves performance in the workplace or community.

If the “O” is ill-defined, the data is unhelpful

- One discipline at a local college defines its outcome as the percentage of students who pass its courses with a grade of C or better. That is not an adequate measure of student learning, since a student may earn a C without having learned a measurable skill.
- We need to assess our assessments to ensure that the data we collect is informative.
- The key question: what practical skill can students perform now that they could not perform before taking this course?

Assessing assessments

- If a student could walk in without taking the course and score 70% on our assessment, then our data does not measure student learning.
- If assessment scores are always above 90%, perhaps we have set the bar too low.
- There is no loop to close if our assessments do not effectively measure student learning.

Traditional vs. Authentic Assessment

- As defined by the Academic Senate for California Community Colleges, authentic assessment simulates a real-world experience by evaluating the student’s ability to apply critical thinking and knowledge, or to perform tasks that may approximate those found in the workplace or other venues outside the classroom.

“I’m feeling ‘C’ right now.”

Individuals and Aggregates

- We assess individual performance constantly. To assess the aggregate, it helps to disaggregate the data by rubric criterion.
- A good rubric makes this possible: each criterion is scored separately.
- We may find, for example, that students are strong on syntax but weak on organization.
- The benchmarks we set let us measure improvement over time.
- Departmental discussions of assessment data analyze trends and produce recommendations for improvement.
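The disaggregation described above can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed method: the criterion names echo the syntax/organization example, but the student scores, the 4-point rubric, the proficiency cutoff of 3, and the 70% benchmark are all hypothetical.

```python
# A minimal sketch: disaggregate rubric scores by criterion and compare each
# criterion against a benchmark. All scores, criteria, and thresholds here are
# hypothetical, chosen only for illustration.

# Each student's scores on a hypothetical 4-point rubric (4 = exemplary).
scores = [
    {"syntax": 4, "organization": 2},
    {"syntax": 3, "organization": 2},
    {"syntax": 4, "organization": 3},
    {"syntax": 3, "organization": 1},
]

PROFICIENT = 3    # hypothetical: a score of 3 or higher counts as proficient
BENCHMARK = 0.70  # hypothetical target: 70% of students proficient


def proficiency_by_criterion(scores, proficient=PROFICIENT):
    """Return the share of students scoring at or above `proficient` on each criterion."""
    criteria = scores[0].keys()
    return {
        c: sum(1 for s in scores if s[c] >= proficient) / len(scores)
        for c in criteria
    }


def report(scores, benchmark=BENCHMARK):
    """Print each criterion's proficiency rate and whether it meets the benchmark."""
    for criterion, rate in proficiency_by_criterion(scores).items():
        status = "meets" if rate >= benchmark else "below"
        print(f"{criterion}: {rate:.0%} proficient ({status} benchmark)")


report(scores)
```

Run as written, it prints `syntax: 100% proficient (meets benchmark)` and `organization: 25% proficient (below benchmark)`. A single average across both criteria would blur this split, which is why disaggregating by criterion points the department toward a specific area to improve.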

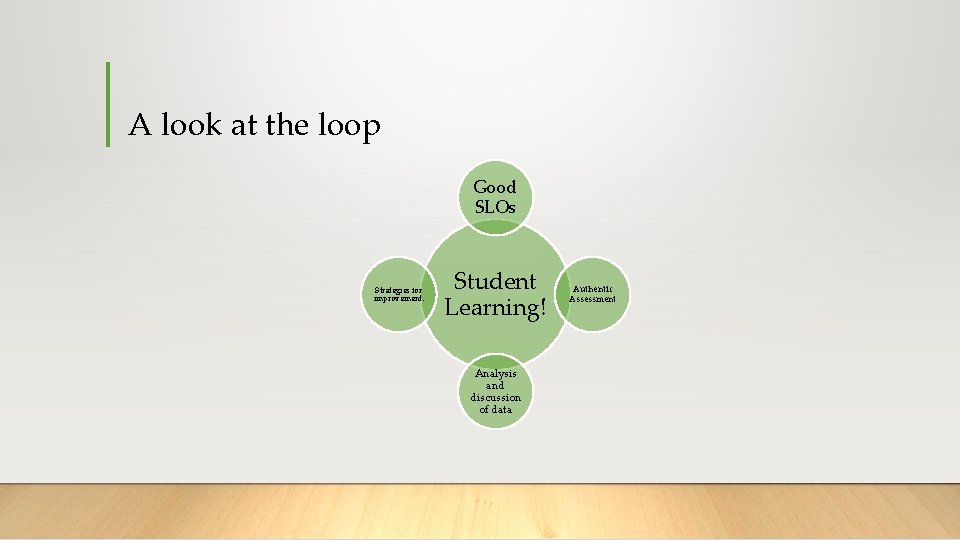

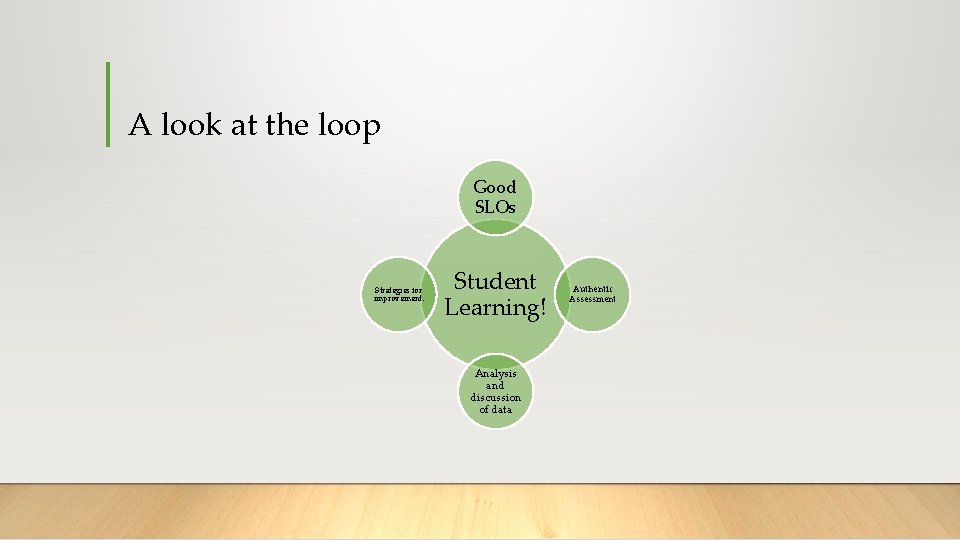

A look at the loop

- Good SLOs
- Authentic assessment
- Analysis and discussion of data
- Strategies for improvement
- Student learning!

Continuous Improvement

- Assessment should not be about the ability to give the right answer.
- Rather, we should expect our students to use the knowledge they have acquired to perform meaningful tasks effectively.
- When those tasks are clearly defined, a rubric is created to measure effective performance, and a benchmark is set as a target, we get data that leads to meaningful improvement.