What is SAM-Grid?

- Job Handling
- Data Handling
- Monitoring and Information

Problems To Solve

- How can a large, geographically distributed, dynamic physics collaboration work together?
- How can this collaboration make use of available distributed computing resources?
- How can it handle the huge amount of data (PBs) generated by the experiment?

Answers – The GRID & SAM-Grid

- GRID
  - A network of middleware services that tie together distributed resources (Fabric – processors, storage).
- SAM-Grid
  - Integrate the standard middleware to achieve a complete Job, Data, and Information management infrastructure, thereby enabling fully distributed computing.

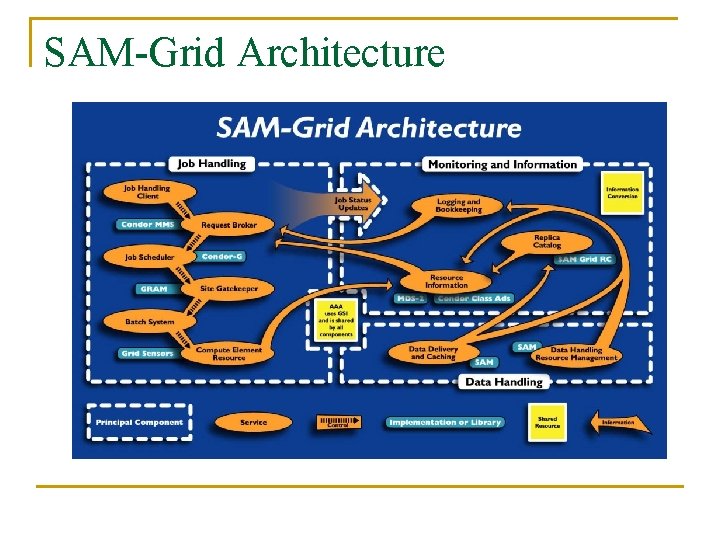

SAM-Grid Architecture

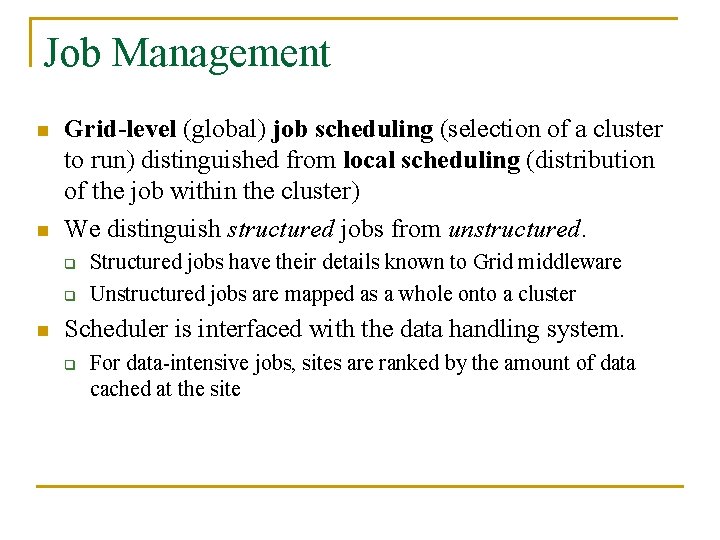

Job Management

- Grid-level (global) job scheduling (selection of a cluster to run) is distinguished from local scheduling (distribution of the job within the cluster).
- We distinguish structured jobs from unstructured.
  - Structured jobs have their details known to Grid middleware.
  - Unstructured jobs are mapped as a whole onto a cluster.
- Scheduler is interfaced with the data handling system.
  - For data-intensive jobs, sites are ranked by the amount of data cached at the site.
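The ranking of sites by cached data can be illustrated with a minimal sketch. The `Site` class, the `rank_sites` function, and the file names and sizes are hypothetical and are not part of SAM-Grid. The sketch assumes the scheduler can ask the data handling system which input files each site already caches.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Site:
    """Hypothetical view of an execution site as seen by the scheduler."""
    name: str
    cached_files: frozenset  # names of files currently in the site's disk cache


def rank_sites(sites, job_files, file_sizes):
    """Order sites by how many bytes of the job's input they already cache."""
    def cached_bytes(site):
        return sum(file_sizes[f] for f in job_files if f in site.cached_files)

    # Largest cached volume first; ties are broken by site name for determinism.
    return sorted(sites, key=lambda s: (-cached_bytes(s), s.name))


# Illustrative data only.
sites = [
    Site("site_a", frozenset({"f1"})),
    Site("site_b", frozenset({"f1", "f2"})),
    Site("site_c", frozenset()),
]
file_sizes = {"f1": 100, "f2": 250, "f3": 50}
ranking = rank_sites(sites, ["f1", "f2", "f3"], file_sizes)
print([s.name for s in ranking])
```

Here `site_b` ranks first because it already holds the largest share of the job's input.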

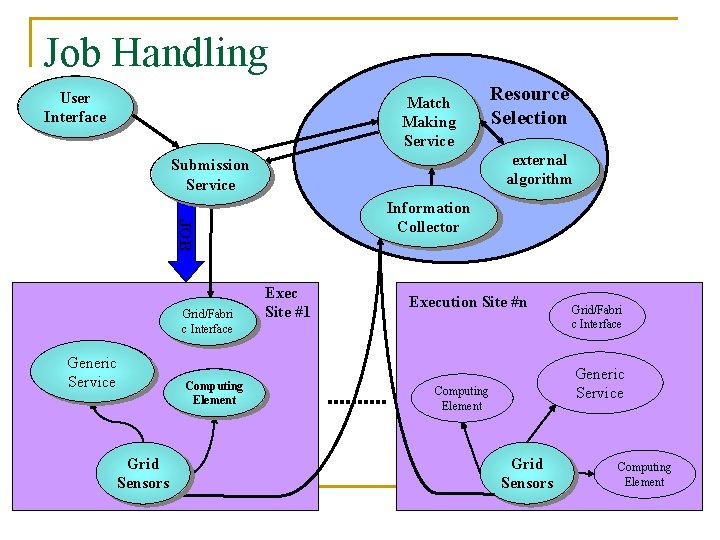

Job Handling

Diagram labels: User Interface, Submission Service, Match Making Service, external algorithm, Information Collector, Resource Selection, JOB. Execution Site #1 through Execution Site #n each show a Grid/Fabric Interface, Generic Service, Grid Sensors, and Computing Element.

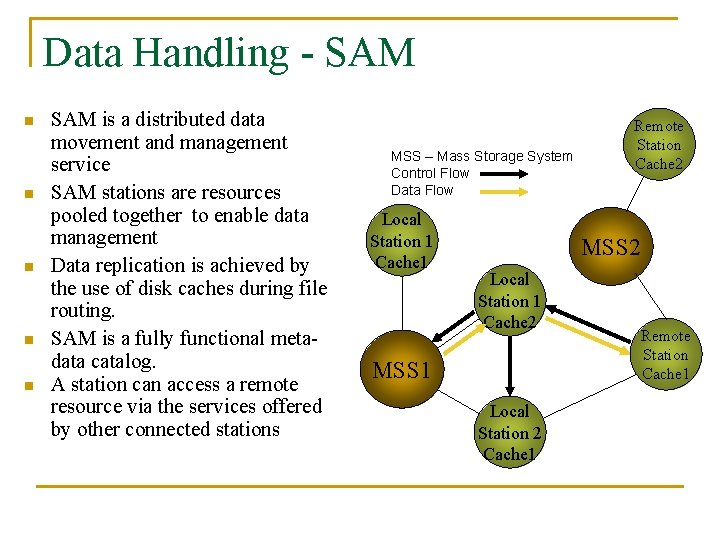

Data Handling - SAM

- SAM is a distributed data movement and management service.
- SAM stations are resources pooled together to enable data management.
- Data replication is achieved by the use of disk caches during file routing.
- SAM is a fully functional metadata catalog.
- A station can access a remote resource via the services offered by other connected stations.

Diagram legend: MSS – Mass Storage System; Control Flow; Data Flow.

Diagram labels: Local Station 1, Local Station 2, Remote Station; Cache 1, Cache 2; MSS 1, MSS 2.
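Replication through caches during file routing can be sketched as follows. This is a toy model, not SAM's implementation: the `Station` class, its `get` method, and the dictionary standing in for a mass storage system are all assumptions made for illustration.

```python
class Station:
    """Toy station: a disk cache, an optional local MSS, and connected peers."""

    def __init__(self, name, mss=None):
        self.name = name
        self.cache = {}        # file name -> content held in the disk cache
        self.mss = mss or {}   # hypothetical stand-in for a mass storage system
        self.peers = []        # other stations this one is connected to

    def get(self, filename, _visited=None):
        """Return the file content, caching it at every station on the route."""
        visited = _visited if _visited is not None else set()
        visited.add(self.name)
        if filename in self.cache:
            return self.cache[filename]
        data = self.mss.get(filename)
        if data is None:
            # Ask connected stations that have not been visited yet.
            for peer in self.peers:
                if peer.name in visited:
                    continue
                data = peer.get(filename, visited)
                if data is not None:
                    break
        if data is not None:
            self.cache[filename] = data  # replica left behind by routing
        return data


remote = Station("remote", mss={"run1.raw": b"events"})
local = Station("local")
local.peers.append(remote)
content = local.get("run1.raw")
print(content, sorted(local.cache), sorted(remote.cache))
```

After the request, both the remote and the local station hold a cached replica, so a later request at either station is served without going back to the MSS.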

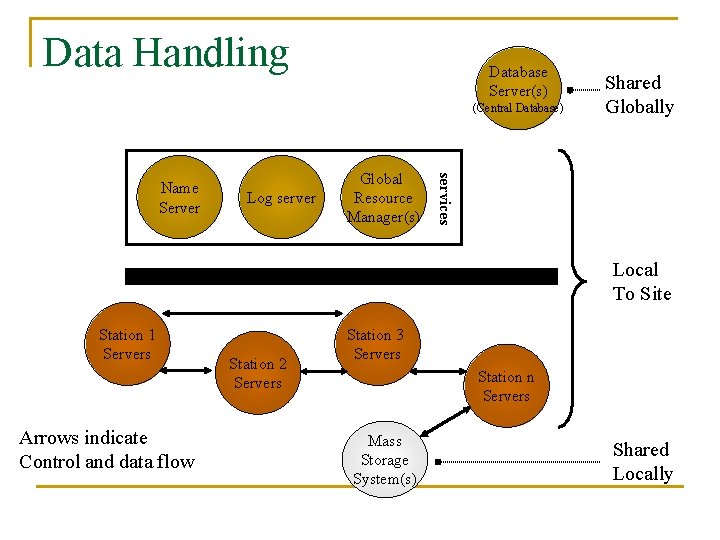

Data Handling

Diagram labels:

- Shared Globally: Database Server(s) (Central Database), Log server, Global Resource Manager(s), Name Server
- Local To Site: Station 1 Servers, Station 2 Servers, Station 3 Servers, Station n Servers
- Shared Locally: Mass Storage System(s)

Arrows indicate control and data flow.

Monitoring and Information

- This includes:
  - configuration framework
  - resource description for job brokering
  - infrastructure for monitoring
- Main features
  - Sites (resources), services and jobs monitoring
  - Distributed knowledge about jobs etc.
  - Incremental knowledge building
  - Grid Monitoring Architecture for current state inquiries, Logging for recent history studies
  - All Web based

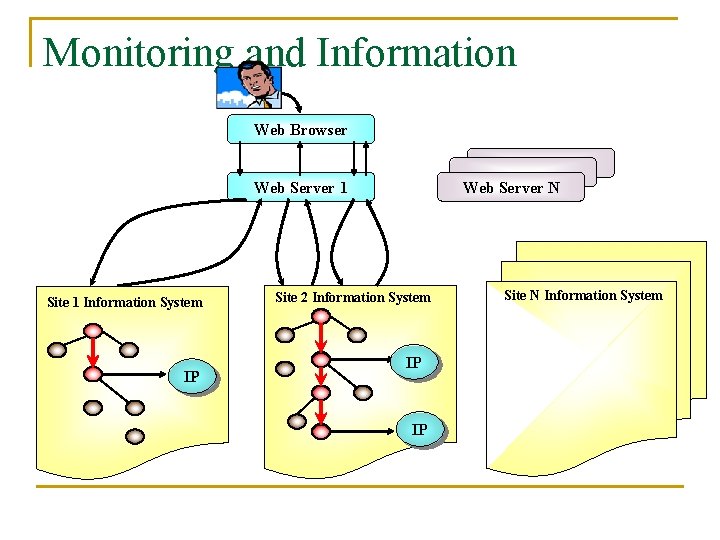

Monitoring and Information

Diagram labels: Web Browser; Web Server 1 through Web Server N; Site 1 Information System, Site 2 Information System, through Site N Information System; IP.

Challenges with Grid/Fabric Interface

- The Globus toolkit Grid/Fabric interfaces are not sufficiently…
  - …flexible: they expect a “standard” batch system configuration.
  - …scalable: a process per grid job is started up at the gateway machine. We want/need aggregation.
  - …comprehensive: they interface to the batch system only. How about data handling, local monitoring, databases, etc.?
  - …robust: if the batch system forgets about the jobs, they cannot react.

Flexibility

- Addressing the peculiarities of each batch system's configuration requires modifying the Globus toolkit job-manager.
- We address the problem by writing job-managers that use a level of abstraction on top of the batch systems.
- Each batch system adapter can be locally configured to conform to the local batch system interface.
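A locally configurable batch system adapter might look like the sketch below. The `BatchAdapter` class and the `LOCAL_CONFIG` table are hypothetical. The command templates use the familiar `qsub`/`qstat` and `condor_submit`/`condor_q` command names purely as examples, and a real site would supply its own templates.

```python
import shlex


class BatchAdapter:
    """Hypothetical adapter: maps generic operations to site-specific commands."""

    def __init__(self, submit_template, status_template):
        self.submit_template = submit_template
        self.status_template = status_template

    def submit_command(self, script, queue):
        """Build the argument list a job-manager would run to submit a job."""
        line = self.submit_template.format(
            script=shlex.quote(script), queue=shlex.quote(queue)
        )
        return shlex.split(line)

    def status_command(self, job_id):
        """Build the argument list used to query the status of a job."""
        return shlex.split(self.status_template.format(job_id=shlex.quote(job_id)))


# Illustrative local configuration; each site edits only this table.
LOCAL_CONFIG = {
    "pbs": BatchAdapter("qsub -q {queue} {script}", "qstat {job_id}"),
    "condor": BatchAdapter("condor_submit {script}", "condor_q {job_id}"),
}

print(LOCAL_CONFIG["pbs"].submit_command("run.sh", "short"))
print(LOCAL_CONFIG["condor"].submit_command("run.sub", "unused"))
```

The job-manager code calls only `submit_command` and `status_command`, so supporting a differently configured batch system means changing the templates rather than the job-manager.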

Scalability

- The Globus gatekeeper starts up a process at the gateway node for every job entering the site.
- This limits the number of grid jobs at a site to around 300, for the typical commodity computer.
- We split single grid jobs into multiple batch processes in the SAM-Grid job-managers. Not only does this increase scalability, but it also increases the manageability of the job.
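The idea of one grid job fanning out into several batch processes, tracked as a single unit, can be sketched as below. The slides do not say how the split is chosen. Partitioning by input files, the function names, and the status strings are all assumptions.

```python
def split_grid_job(grid_job_id, input_files, files_per_batch_job):
    """Partition one grid job's inputs into several batch job descriptions."""
    if files_per_batch_job < 1:
        raise ValueError("files_per_batch_job must be at least 1")
    batch_jobs = []
    for start in range(0, len(input_files), files_per_batch_job):
        chunk = input_files[start:start + files_per_batch_job]
        batch_jobs.append(
            {"name": f"{grid_job_id}.{len(batch_jobs)}", "inputs": chunk}
        )
    return batch_jobs


def aggregate_status(batch_statuses):
    """Report one status for the whole grid job from its batch jobs."""
    if any(s == "failed" for s in batch_statuses):
        return "failed"
    if all(s == "done" for s in batch_statuses):
        return "done"
    return "running"


jobs = split_grid_job("job42", ["f0", "f1", "f2", "f3", "f4"], 2)
print([j["name"] for j in jobs])
print(aggregate_status(["done", "running", "done"]))
```

Only the single grid job is visible at the gateway, while the batch system sees as many processes as the split produces.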

Comprehensiveness

- The standard job-managers interface only to the local batch system.
- We notify other fabric services when a job enters a site:
  - Data handling: for data pre-staging
  - Monitoring: to monitor a non-running job
  - Database: to aggregate queries
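Notifying fabric services on job entry can be pictured as a simple callback registry. Everything here is hypothetical: the `JobEntryNotifier` class, the handler names, and the job dictionary fields. The slides do not describe the actual notification mechanism.

```python
class JobEntryNotifier:
    """Hypothetical hook called by a job-manager when a job enters the site."""

    def __init__(self):
        self._handlers = []

    def register(self, handler):
        self._handlers.append(handler)

    def job_entered(self, job):
        # Call every registered fabric service, in registration order.
        return [handler(job) for handler in self._handlers]


def prestage_data(job):
    return f"pre-stage {len(job['inputs'])} file(s) for {job['id']}"


def start_monitoring(job):
    return f"monitor {job['id']} (state: {job['state']})"


def register_queries(job):
    return f"aggregate database queries for {job['id']}"


notifier = JobEntryNotifier()
for handler in (prestage_data, start_monitoring, register_queries):
    notifier.register(handler)

actions = notifier.job_entered(
    {"id": "job42", "inputs": ["f0", "f1"], "state": "queued"}
)
print(actions)
```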

Robustness

- The standard job-managers cannot react to temporary failures of the local batch systems.
- In our experience, PBS, Condor and BQS have failed to report the status of a job.
- We write wrappers around the batch systems. These wrappers implement extra robustness. We call them “idealizers”.
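One way a wrapper could add robustness to status queries is sketched below. The slides say only that idealizers "implement extra robustness". The retry loop, the last-known-status fallback, and all names here (`Idealizer`, `BatchQueryError`, `flaky_query`) are assumptions for illustration.

```python
import time


class BatchQueryError(Exception):
    """Raised by a (hypothetical) status query when the batch system errors."""


class Idealizer:
    """Wraps a status query with retries and a last-known-status fallback."""

    def __init__(self, query_status, retries=3, delay=0.0):
        self.query_status = query_status
        self.retries = retries
        self.delay = delay
        self._last_known = {}

    def status(self, job_id):
        for _ in range(self.retries):
            try:
                state = self.query_status(job_id)
            except BatchQueryError:
                state = None
            if state is not None:
                self._last_known[job_id] = state
                return state
            time.sleep(self.delay)  # wait before asking the batch system again
        # The batch system "forgot" the job: fall back to what was last seen.
        return self._last_known.get(job_id, "unknown")


# Simulated batch system that fails twice, answers once, then forgets the job.
answers = iter([None, None, "running"])


def flaky_query(job_id):
    return next(answers, None)


ideal = Idealizer(flaky_query, retries=3)
first = ideal.status("job-7")
second = ideal.status("job-7")
third = ideal.status("job-9")
print(first, second, third)
```

The caller sees a stable answer for `job-7` even while the underlying batch system temporarily fails to report it.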

- Slides: 15