What is Computation? Computation is about processing information

What is “Computation”?

Computation is about “processing information”:

- Transforming information from one form to another
- Deriving new information from old
- Finding information associated with a given input

“Computation” describes the motion of information through time. “Communication” describes the motion of information through space.

Comp 411 – Spring 2008, 1/15/2008, L02 - Information

What is “Information”?

information, n. Knowledge communicated or received concerning a particular fact or circumstance.

- “10 Problem sets, 2 quizzes, and a final!”
- “Carolina won again.”
- “Tell me something new…”

A Computer Scientist’s Definition: Information resolves uncertainty. Information is simply that which cannot be predicted. The less predictable a message is, the more information it conveys!

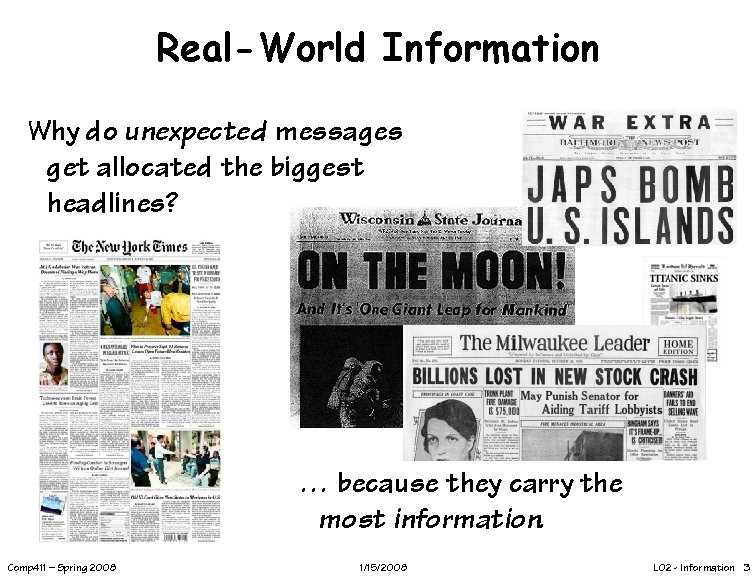

Real-World Information

Why do unexpected messages get allocated the biggest headlines? … because they carry the most information.

What Does A Computer Process?

- A Toaster processes bread and bagels
- A Blender processes smoothies and margaritas
- What does a computer process?
- 2 allowable answers:
  - Information
  - Bits
- What is the mapping from information to bits?

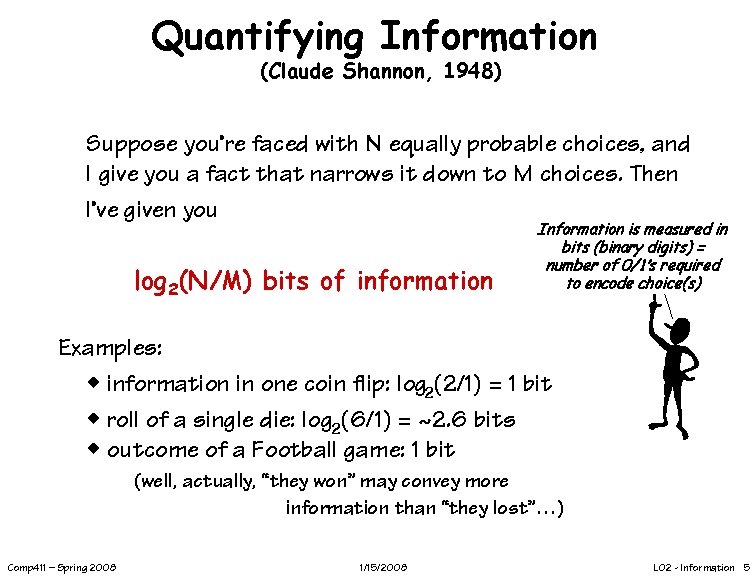

Quantifying Information (Claude Shannon, 1948)

Suppose you’re faced with N equally probable choices, and I give you a fact that narrows it down to M choices. Then I’ve given you $\log_2(N/M)$ bits of information.

Information is measured in bits (binary digits) = number of 0/1’s required to encode choice(s).

Examples:

- information in one coin flip: $\log_2(2/1) = 1$ bit
- roll of a single die: $\log_2(6/1) \approx 2.6$ bits
- outcome of a football game: 1 bit (well, actually, “they won” may convey more information than “they lost”…)
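The formula above can be checked numerically. This is a minimal sketch, not part of the original slides; the function name `information_bits` is made up for illustration.

```python
import math


def information_bits(n_choices: int, m_remaining: int = 1) -> float:
    """Bits conveyed by narrowing n equally likely choices down to m."""
    if not 0 < m_remaining <= n_choices:
        raise ValueError("need 0 < m_remaining <= n_choices")
    return math.log2(n_choices / m_remaining)


# The slide's examples: one coin flip and one roll of a die.
print(f"coin flip: {information_bits(2):.3f} bits")
print(f"die roll:  {information_bits(6):.3f} bits")
```

A fact that leaves all N choices open (M = N) conveys 0 bits, which matches the idea that a fully predictable message carries no information.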

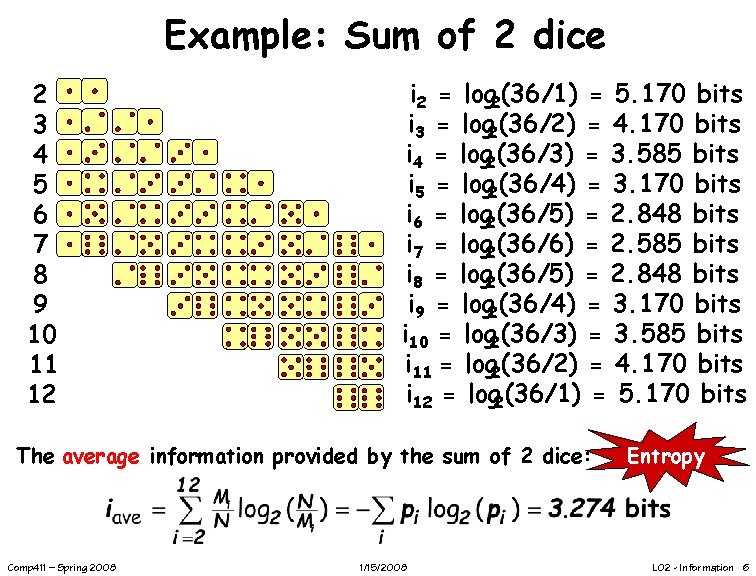

Example: Sum of 2 dice

The possible sums are 2 through 12. Out of 36 equally likely rolls, the information conveyed by each sum is:

- $i_2 = \log_2(36/1) = 5.170$ bits
- $i_3 = \log_2(36/2) = 4.170$ bits
- $i_4 = \log_2(36/3) = 3.585$ bits
- $i_5 = \log_2(36/4) = 3.170$ bits
- $i_6 = \log_2(36/5) = 2.848$ bits
- $i_7 = \log_2(36/6) = 2.585$ bits
- $i_8 = \log_2(36/5) = 2.848$ bits
- $i_9 = \log_2(36/4) = 3.170$ bits
- $i_{10} = \log_2(36/3) = 3.585$ bits
- $i_{11} = \log_2(36/2) = 4.170$ bits
- $i_{12} = \log_2(36/1) = 5.170$ bits

The average information provided by the sum of 2 dice (the entropy):

$$\sum_{i=2}^{12} p_i \log_2(1/p_i) = 3.274 \text{ bits}$$
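The per-sum values and their weighted average can be reproduced with a short script. This is a sketch under the slide's assumption of two fair six-sided dice; the helper names are hypothetical.

```python
import math
from collections import Counter
from itertools import product


def two_dice_sum_distribution() -> dict:
    """Probability of each sum (2..12) for two fair six-sided dice."""
    counts = Counter(a + b for a, b in product(range(1, 7), repeat=2))
    return {total: ways / 36 for total, ways in sorted(counts.items())}


def entropy_bits(probabilities) -> float:
    """Average information: sum of p * log2(1/p) over all outcomes."""
    return sum(p * math.log2(1 / p) for p in probabilities if p > 0)


dist = two_dice_sum_distribution()
for total, p in dist.items():
    print(f"sum {total:2d}: p = {p:.4f}, information = {math.log2(1 / p):.3f} bits")
print(f"entropy = {entropy_bits(dist.values()):.3f} bits")
```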

Show Me the Bits!

Can the sum of two dice REALLY be represented using 3.274 bits? If so, how?

The fact is, the average information content is a strict *lower bound* on how small a representation we can achieve. In practice, it is difficult to reach this bound. But we can come very close.

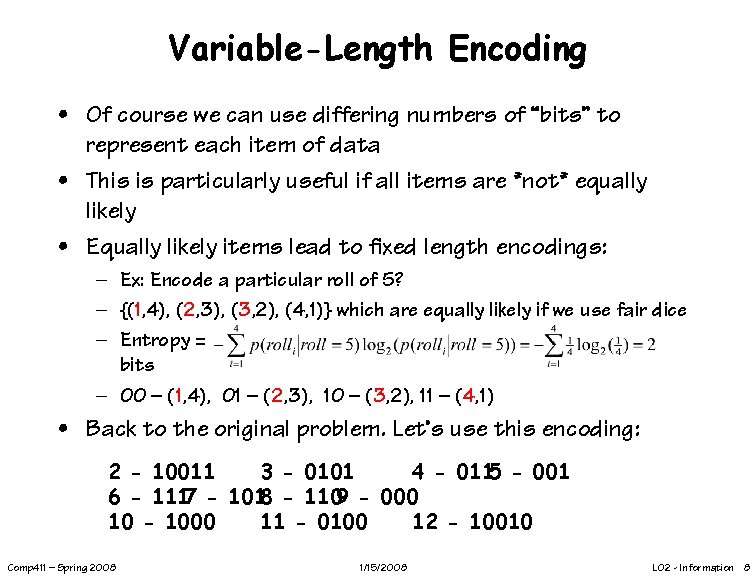

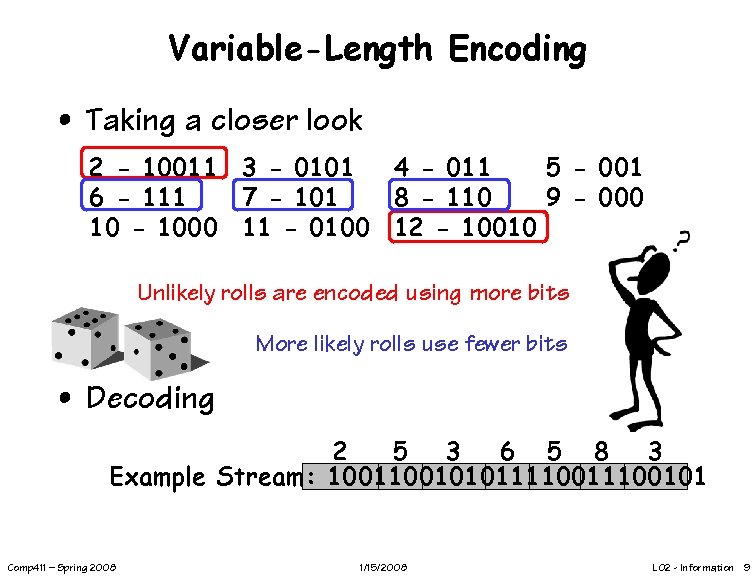

Variable-Length Encoding

- Of course we can use differing numbers of “bits” to represent each item of data
- This is particularly useful if all items are *not* equally likely
- Equally likely items lead to fixed-length encodings:
  - Ex: Encode a particular roll of 5?
  - {(1, 4), (2, 3), (3, 2), (4, 1)}, which are equally likely if we use fair dice
  - Entropy = $\log_2(4) = 2$ bits
  - 00 – (1, 4), 01 – (2, 3), 10 – (3, 2), 11 – (4, 1)
- Back to the original problem. Let’s use this encoding:

2 - 10011, 3 - 0101, 4 - 011, 5 - 001, 6 - 111, 7 - 101, 8 - 110, 9 - 000, 10 - 1000, 11 - 0100, 12 - 10010

Variable-Length Encoding

Taking a closer look:

2 - 10011, 3 - 0101, 4 - 011, 5 - 001, 6 - 111, 7 - 101, 8 - 110, 9 - 000, 10 - 1000, 11 - 0100, 12 - 10010

- Unlikely rolls are encoded using more bits
- More likely rolls use fewer bits

Decoding example: the rolls 2 5 3 6 5 8 3 are encoded as the stream 1001100101011110011100101 (10011 001 0101 111 001 110 0101). Because no code word is a prefix of another, the stream can be split back into rolls without separators.
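Decoding a variable-length stream can be sketched as follows. The code table is copied from the slide; the functions `encode` and `decode` are illustrative names, and the decoder relies on the table being prefix-free (no code word is the start of another).

```python
# Code table from the slide: sum of two dice -> bit string.
CODE = {
    2: "10011", 3: "0101", 4: "011", 5: "001", 6: "111", 7: "101",
    8: "110", 9: "000", 10: "1000", 11: "0100", 12: "10010",
}


def encode(rolls) -> str:
    """Concatenate the code words for a sequence of rolls."""
    return "".join(CODE[r] for r in rolls)


def decode(bits: str) -> list:
    """Read bits left to right, emitting a roll whenever a code word completes."""
    lookup = {word: roll for roll, word in CODE.items()}
    rolls, current = [], ""
    for bit in bits:
        current += bit
        if current in lookup:
            rolls.append(lookup[current])
            current = ""
    if current:
        raise ValueError(f"trailing bits do not form a code word: {current!r}")
    return rolls


stream = encode([2, 5, 3, 6, 5, 8, 3])
print(stream)
print(decode(stream))
```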

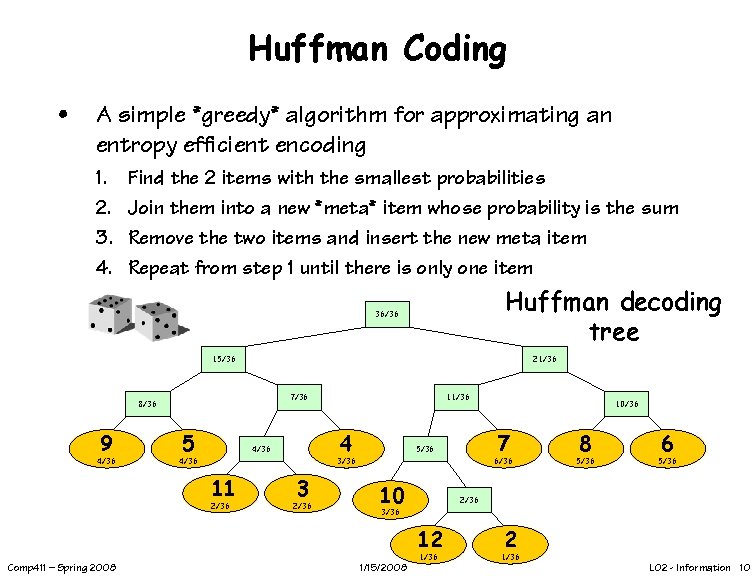

Huffman Coding

A simple *greedy* algorithm for approximating an entropy-efficient encoding:

1. Find the 2 items with the smallest probabilities
2. Join them into a new *meta* item whose probability is the sum
3. Remove the two items and insert the new meta item
4. Repeat from step 1 until there is only one item

[Figure: Huffman decoding tree for the sum of two dice. The root (36/36) splits into meta items of 15/36 and 21/36; the leaves are the sums 2 through 12 with probabilities 1/36 through 6/36.]
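The four steps above can be sketched with a priority queue. This is one possible implementation, not the course's own code: it tracks only the code length of each symbol (its depth in the tree), and `huffman_code_lengths` is a hypothetical name. Ties between equal probabilities may be broken differently than in the slide's tree, so individual lengths can differ while the weighted total stays the same.

```python
import heapq
from itertools import count


def huffman_code_lengths(weights: dict) -> dict:
    """Return {symbol: code length} using the greedy merge of the two smallest items."""
    tiebreak = count()  # unique counter so the heap never has to compare dicts
    heap = [(w, next(tiebreak), {sym: 0}) for sym, w in weights.items()]
    heapq.heapify(heap)
    while len(heap) > 1:
        w1, _, depths1 = heapq.heappop(heap)  # smallest item
        w2, _, depths2 = heapq.heappop(heap)  # second smallest item
        # Joining two items pushes every symbol beneath them one level deeper.
        merged = {s: d + 1 for s, d in {**depths1, **depths2}.items()}
        heapq.heappush(heap, (w1 + w2, next(tiebreak), merged))
    return heap[0][2]


# Number of ways (out of 36) to roll each sum with two fair dice.
ways = {total: 6 - abs(total - 7) for total in range(2, 13)}
lengths = huffman_code_lengths(ways)
total_bits = sum(ways[s] * lengths[s] for s in ways)
print(lengths)
print(f"average code length = {total_bits}/36 = {total_bits / 36:.3f} bits")
```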

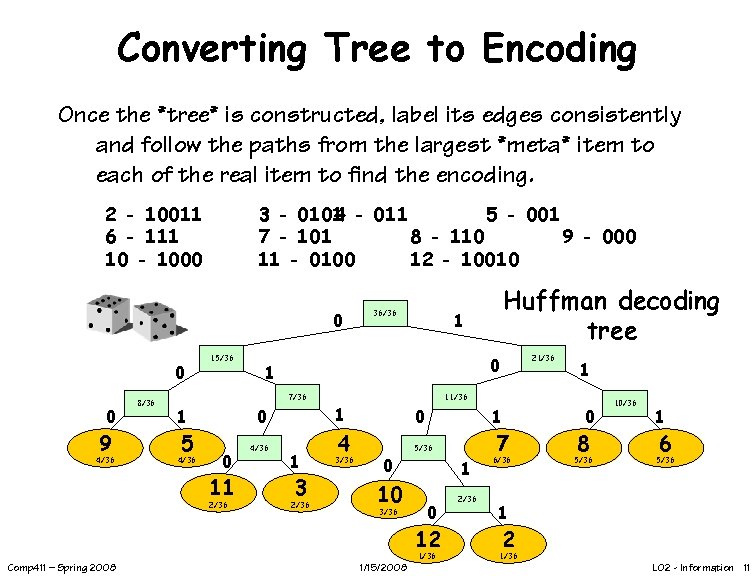

Converting Tree to Encoding

Once the *tree* is constructed, label its edges consistently and follow the paths from the largest *meta* item to each of the real items to find the encoding.

2 - 10011, 3 - 0101, 4 - 011, 5 - 001, 6 - 111, 7 - 101, 8 - 110, 9 - 000, 10 - 1000, 11 - 0100, 12 - 10010

[Figure: Huffman decoding tree with each edge labeled 0 or 1.]

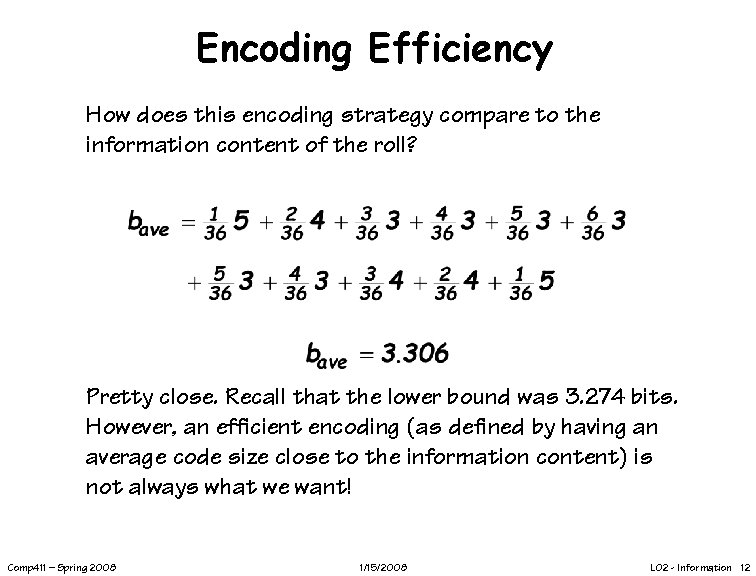

Encoding Efficiency

How does this encoding strategy compare to the information content of the roll? Weighting each code length by the probability of its roll gives an average of 119/36 ≈ 3.306 bits per roll.

Pretty close. Recall that the lower bound was 3.274 bits. However, an efficient encoding (as defined by having an average code size close to the information content) is not always what we want!
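The comparison can be worked out directly from the code table on the earlier slides. This is a small sketch assuming fair dice; the variable names are illustrative.

```python
import math
from fractions import Fraction

# Code table from the slides: sum of two dice -> bit string.
CODE = {
    2: "10011", 3: "0101", 4: "011", 5: "001", 6: "111", 7: "101",
    8: "110", 9: "000", 10: "1000", 11: "0100", 12: "10010",
}
# Number of ways (out of 36) to roll each sum.
WAYS = {total: 6 - abs(total - 7) for total in range(2, 13)}

# Average code length: each length weighted by the probability of its roll.
average_length = sum(Fraction(WAYS[t], 36) * len(CODE[t]) for t in CODE)
# Lower bound: the entropy of the distribution.
entropy = sum((w / 36) * math.log2(36 / w) for w in WAYS.values())

print(f"average code length = {average_length} = {float(average_length):.3f} bits")
print(f"entropy lower bound = {entropy:.3f} bits")
```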

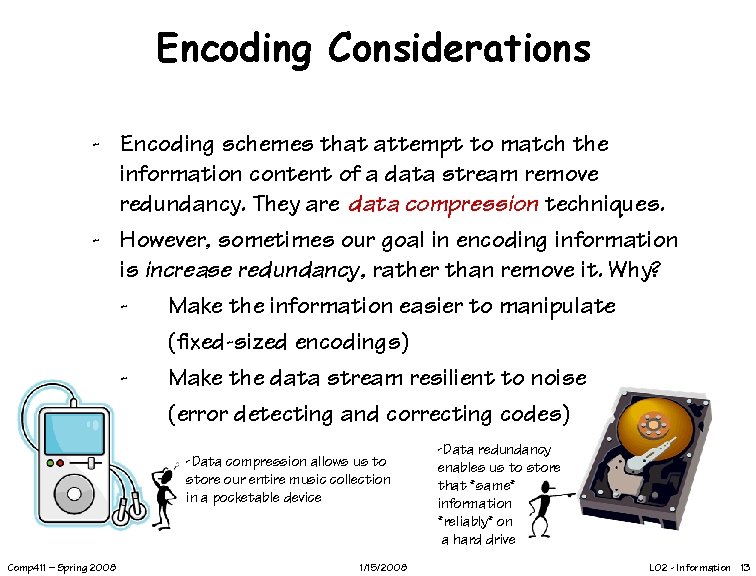

Encoding Considerations

- Encoding schemes that attempt to match the information content of a data stream remove redundancy. They are data compression techniques.
- However, sometimes our goal in encoding information is to increase redundancy, rather than remove it. Why?
  - Make the information easier to manipulate (fixed-sized encodings)
  - Make the data stream resilient to noise (error detecting and correcting codes)
- Data compression allows us to store our entire music collection in a pocketable device
- Data redundancy enables us to store that *same* information *reliably* on a hard drive

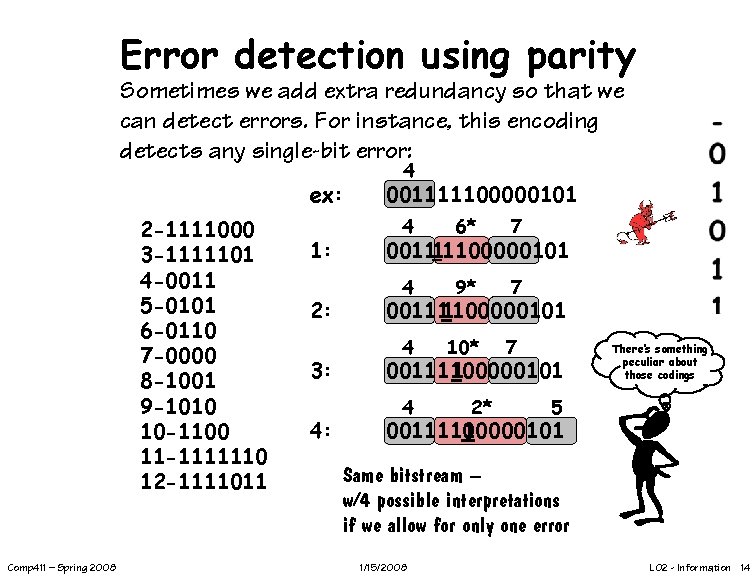

Error detection using parity

Sometimes we add extra redundancy so that we can detect errors. For instance, this encoding detects any single-bit error:

2 - 1111000, 3 - 1111101, 4 - 0011, 5 - 0101, 6 - 0110, 7 - 0000, 8 - 1001, 9 - 1010, 10 - 1100, 11 - 1111110, 12 - 1111011

There’s something peculiar about those codings.

The same bitstream, 001111100000101, has 4 possible interpretations if we allow for only one error (* marks the symbol read as containing the error):

1. 4 6* 7
2. 4 9* 7
3. 4 10* 7
4. 4 2* 5

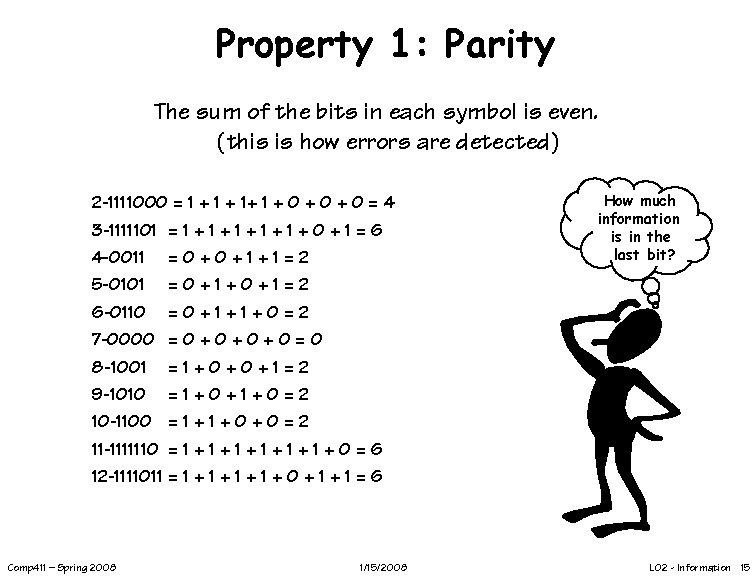

Property 1: Parity

The sum of the bits in each symbol is even. (This is how errors are detected.)

- 2 - 1111000 = 1 + 1 + 1 + 1 + 0 + 0 + 0 = 4
- 3 - 1111101 = 1 + 1 + 1 + 1 + 1 + 0 + 1 = 6
- 4 - 0011 = 0 + 0 + 1 + 1 = 2
- 5 - 0101 = 0 + 1 + 0 + 1 = 2
- 6 - 0110 = 0 + 1 + 1 + 0 = 2
- 7 - 0000 = 0 + 0 + 0 + 0 = 0
- 8 - 1001 = 1 + 0 + 0 + 1 = 2
- 9 - 1010 = 1 + 0 + 1 + 0 = 2
- 10 - 1100 = 1 + 1 + 0 + 0 = 2
- 11 - 1111110 = 1 + 1 + 1 + 1 + 1 + 1 + 0 = 6
- 12 - 1111011 = 1 + 1 + 1 + 1 + 0 + 1 + 1 = 6

How much information is in the last bit?
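The parity property can be checked mechanically. This sketch uses the code words listed on the slide; `has_even_parity` and `flip_bit` are hypothetical helper names. It confirms that every code word has an even number of 1s and that flipping any single bit always produces odd parity, which is what makes a single-bit error detectable.

```python
# Parity-protected code table from the slide: sum of two dice -> bit string.
PARITY_CODE = {
    2: "1111000", 3: "1111101", 4: "0011", 5: "0101", 6: "0110", 7: "0000",
    8: "1001", 9: "1010", 10: "1100", 11: "1111110", 12: "1111011",
}


def has_even_parity(word: str) -> bool:
    """True when the number of 1 bits in the word is even."""
    return word.count("1") % 2 == 0


def flip_bit(word: str, index: int) -> str:
    """Return a copy of the word with the bit at `index` inverted."""
    flipped = "1" if word[index] == "0" else "0"
    return word[:index] + flipped + word[index + 1:]


for roll, word in PARITY_CODE.items():
    corrupted = [flip_bit(word, i) for i in range(len(word))]
    detected = all(not has_even_parity(c) for c in corrupted)
    print(f"{roll:2d}: {word:7s} even={has_even_parity(word)} single-bit errors detected={detected}")
```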

Property 2: Separation

Each encoding differs from all others by at least two bits in their overlapping parts. This difference is called the “Hamming distance”.

“A Hamming distance of 1 is needed to uniquely identify an encoding”

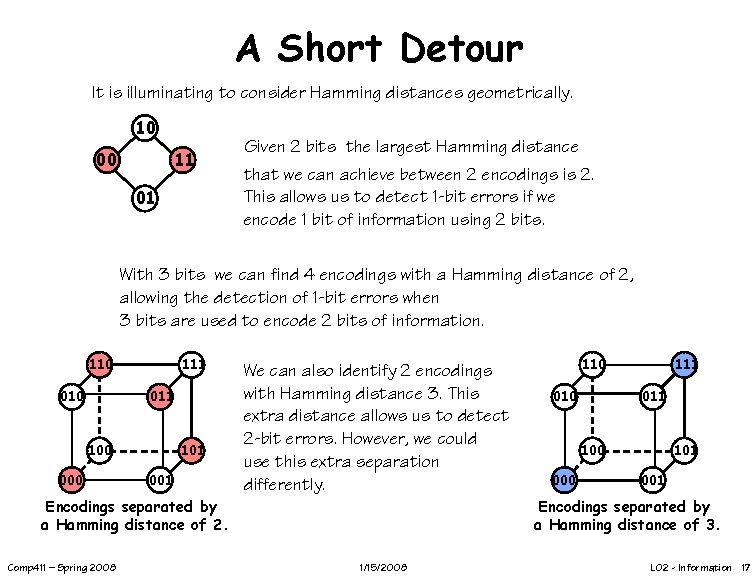

A Short Detour

It is illuminating to consider Hamming distances geometrically.

Given 2 bits, the largest Hamming distance that we can achieve between 2 encodings is 2. This allows us to detect 1-bit errors if we encode 1 bit of information using 2 bits.

[Figure: square with vertices 00, 01, 10, 11.]

With 3 bits we can find 4 encodings with a Hamming distance of 2, allowing the detection of 1-bit errors when 3 bits are used to encode 2 bits of information.

[Figure: cube with vertices 000 through 111, showing encodings separated by a Hamming distance of 2.]

We can also identify 2 encodings with Hamming distance 3. This extra distance allows us to detect 2-bit errors. However, we could use this extra separation differently.

[Figure: the same cube, showing encodings separated by a Hamming distance of 3.]
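The geometric picture can be explored in code. This is a minimal sketch with hypothetical helper names: it measures Hamming distances and picks the four 3-bit words with even parity, which is one set of 4 encodings that are pairwise a distance of 2 apart.

```python
from itertools import combinations, product


def hamming_distance(a: str, b: str) -> int:
    """Number of bit positions in which two equal-length words differ."""
    if len(a) != len(b):
        raise ValueError("words must have the same length")
    return sum(x != y for x, y in zip(a, b))


def min_distance(codewords) -> int:
    """Smallest Hamming distance between any pair of code words."""
    return min(hamming_distance(a, b) for a, b in combinations(codewords, 2))


# Four 3-bit encodings with pairwise distance 2: the even-parity corners of the cube.
even_parity_words = [
    "".join(bits) for bits in product("01", repeat=3) if bits.count("1") % 2 == 0
]
print(even_parity_words, "min distance:", min_distance(even_parity_words))

# Two 3-bit encodings at distance 3: opposite corners of the cube.
print(["000", "111"], "min distance:", min_distance(["000", "111"]))
```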

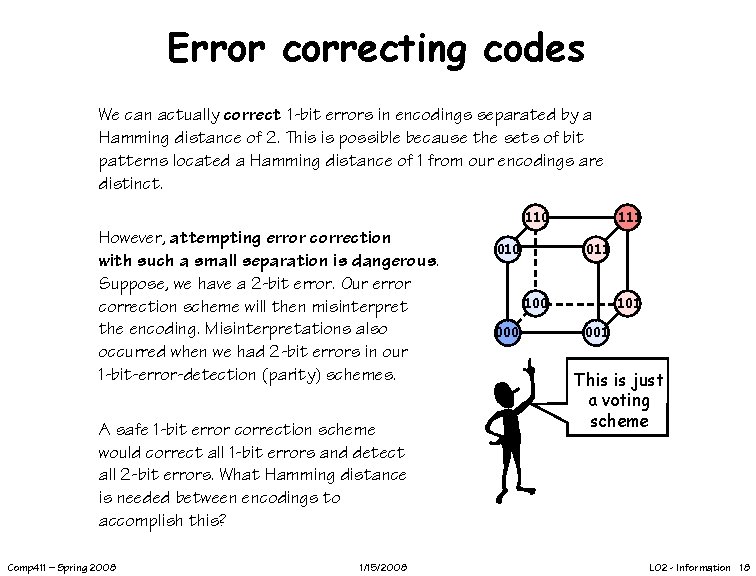

Error correcting codes

We can actually correct 1-bit errors in encodings separated by a Hamming distance of 3. This is possible because the sets of bit patterns located a Hamming distance of 1 from our encodings are distinct. This is just a voting scheme.

[Figure: cube with vertices 000 through 111.]

However, attempting error correction with such a small separation is dangerous. Suppose we have a 2-bit error. Our error correction scheme will then misinterpret the encoding. Misinterpretations also occurred when we had 2-bit errors in our 1-bit-error-detection (parity) schemes.

A safe 1-bit error correction scheme would correct all 1-bit errors and detect all 2-bit errors. What Hamming distance is needed between encodings to accomplish this?
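The voting scheme can be sketched for the two 3-bit encodings 000 and 111, which are a Hamming distance of 3 apart. The function name `correct_by_vote` is an assumption made for illustration. The example also shows the danger described above: a 2-bit error is silently "corrected" to the wrong encoding.

```python
def correct_by_vote(received: str) -> str:
    """Map a 3-bit word to the nearer of 000 and 111 by majority vote."""
    if len(received) != 3 or set(received) - {"0", "1"}:
        raise ValueError("expected exactly three bits")
    return "111" if received.count("1") >= 2 else "000"


sent = "000"
one_bit_error = "010"   # one bit flipped in transit
two_bit_error = "110"   # two bits flipped in transit

print(correct_by_vote(one_bit_error))  # recovers the encoding that was sent
print(correct_by_vote(two_bit_error))  # misinterprets: yields 111, not 000
```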

Information Summary

Information resolves uncertainty.

- Choices equally probable:
  - N choices down to M: $\log_2(N/M)$ bits of information
- Choices not equally probable:
  - choice $i$ with probability $p_i$: $\log_2(1/p_i)$ bits of information
  - average number of bits = $\sum_i p_i \log_2(1/p_i)$
  - use variable-length encodings

Computer Abstractions and Technology

1. Layer Cakes
2. Computers are translators
3. Switches and Wires

(Read Chapter 1)

Computers Everywhere

- The computers we are used to
  - Desktops
  - Laptops
- Embedded processors
  - Cars
  - Mobile phones
  - Toasters, irons, wristwatches, happy-meal toys

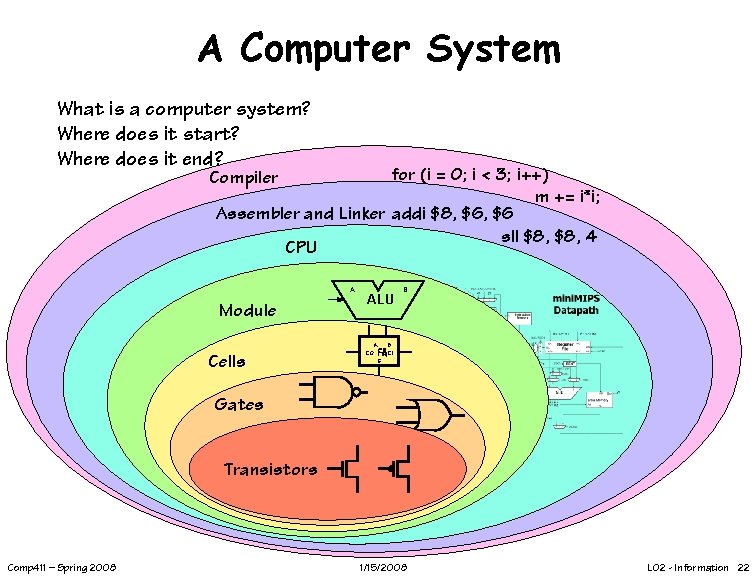

A Computer System

What is a computer system? Where does it start? Where does it end?

[Figure: the layers of a computer system, from source code (`for (i = 0; i < 3; i++) m += i*i;`) through the Compiler, the Assembler and Linker (`addi $8, $6`, `sll $8, 4`), the CPU, modules (ALU), cells (FA, with signals A, B, CI, CO, S), gates, and transistors.]

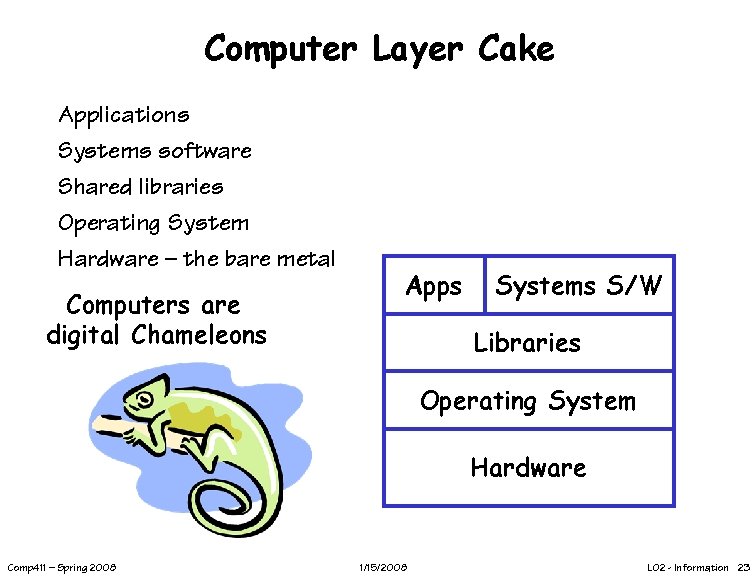

Computer Layer Cake

- Applications
- Systems software
- Shared libraries
- Operating System
- Hardware – the bare metal

Computers are digital Chameleons.

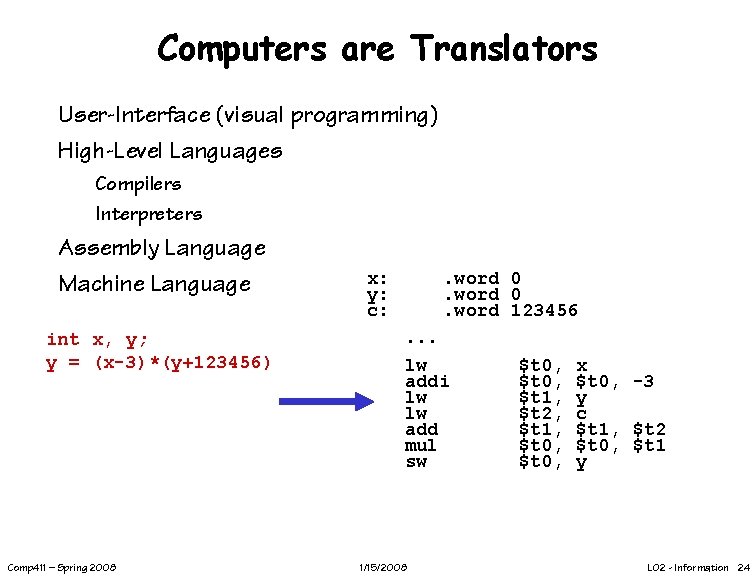

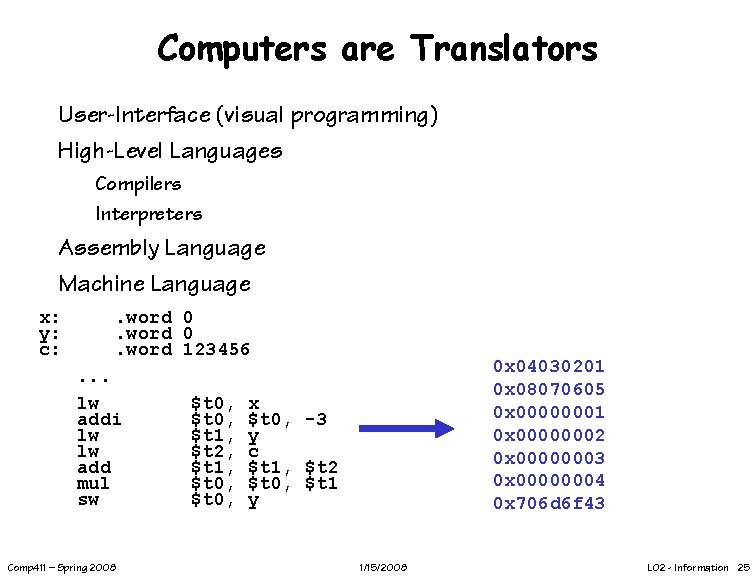

Computers are Translators

- User-Interface (visual programming)
- High-Level Languages (Compilers, Interpreters)
- Assembly Language
- Machine Language

High-level language:

    int x, y;
    y = (x-3)*(y+123456)

Assembly language:

    x:  .word 0
    y:  .word 0
    c:  .word 123456
    ...
        lw   $t0, x
        addi $t0, $t0, -3
        lw   $t1, y
        lw   $t2, c
        add  $t1, $t1, $t2
        mul  $t0, $t0, $t1
        sw   $t0, y

Computers are Translators

- User-Interface (visual programming)
- High-Level Languages (Compilers, Interpreters)
- Assembly Language
- Machine Language

Assembly language:

    x:  .word 0
    y:  .word 0
    c:  .word 123456
    ...
        lw   $t0, x
        addi $t0, $t0, -3
        lw   $t1, y
        lw   $t2, c
        add  $t1, $t1, $t2
        mul  $t0, $t0, $t1
        sw   $t0, y

Machine language (words as shown on the slide):

    0x04030201
    0x08070605
    0x00000001
    0x00000002
    0x00000003
    0x00000004
    0x706d6f43

Why So Many Languages?

- Application Specific
  - Historically: COBOL vs. Fortran
  - Today: C# vs. Java, Visual Basic vs. Matlab
  - Static vs. Dynamic
- Code Maintainability
  - High-level specifications are easier to understand and modify
- Code Reuse
- Code Portability
  - Virtual Machines

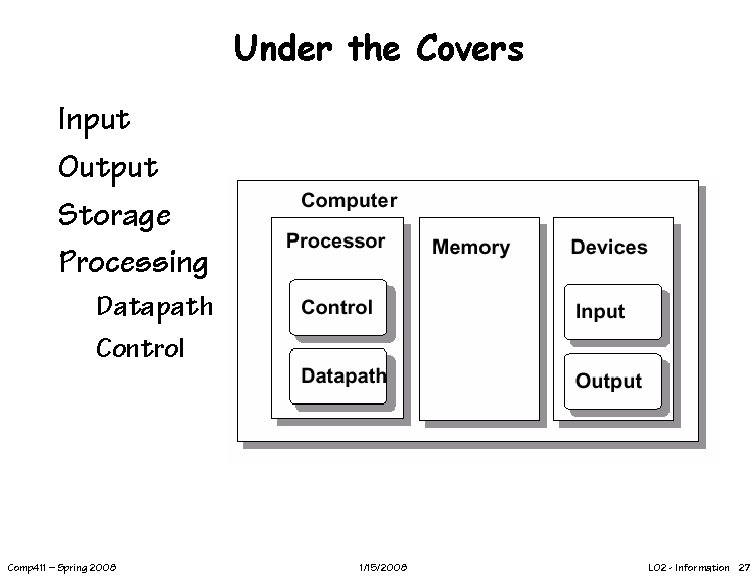

Under the Covers

- Input
- Output
- Storage
- Processing
  - Datapath
  - Control

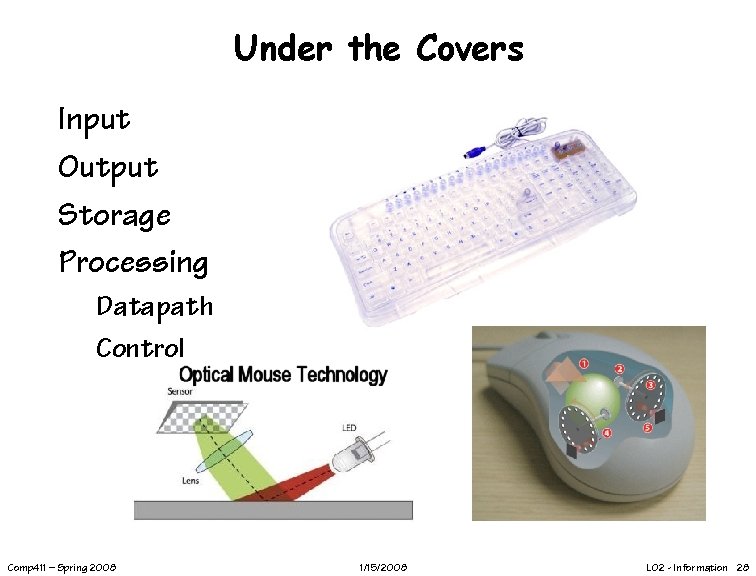

Under the Covers

- Input
- Output
- Storage
- Processing
  - Datapath
  - Control

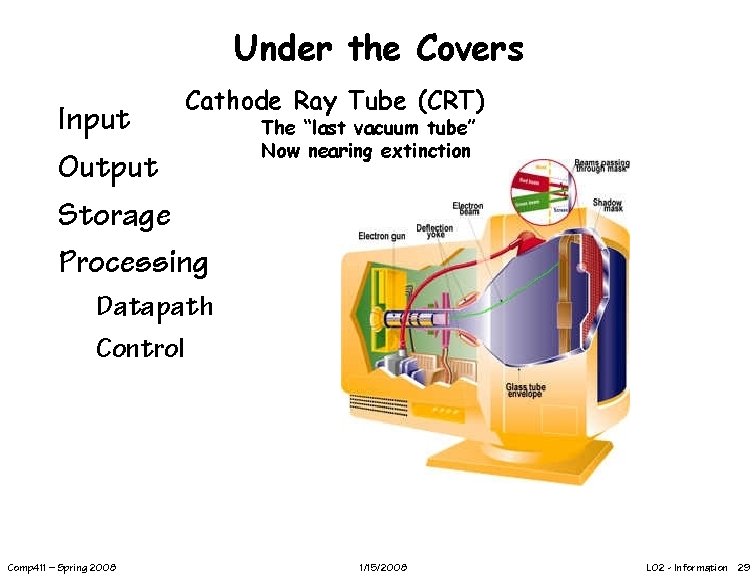

Under the Covers

- Input
- Output
  - Cathode Ray Tube (CRT): the “last vacuum tube”, now nearing extinction
- Storage
- Processing
  - Datapath
  - Control

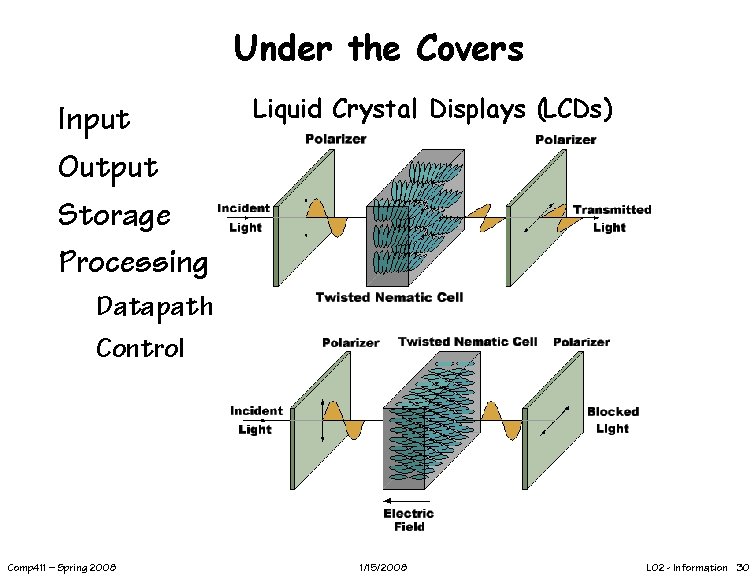

Under the Covers

- Input
- Output
  - Liquid Crystal Displays (LCDs)
- Storage
- Processing
  - Datapath
  - Control

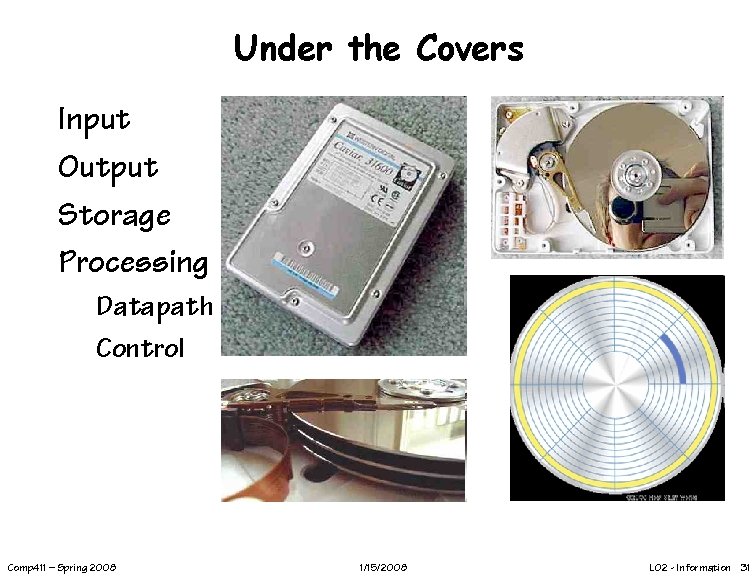

Under the Covers

- Input
- Output
- Storage
- Processing
  - Datapath
  - Control

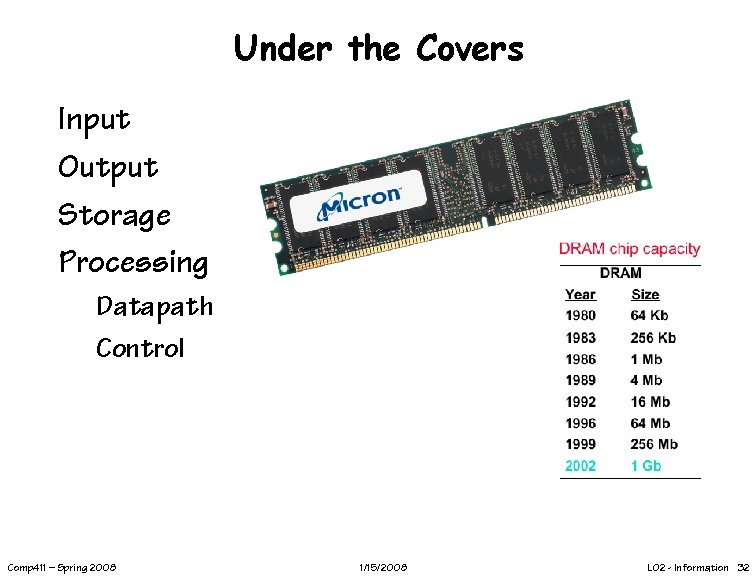

Under the Covers

- Input
- Output
- Storage
- Processing
  - Datapath
  - Control

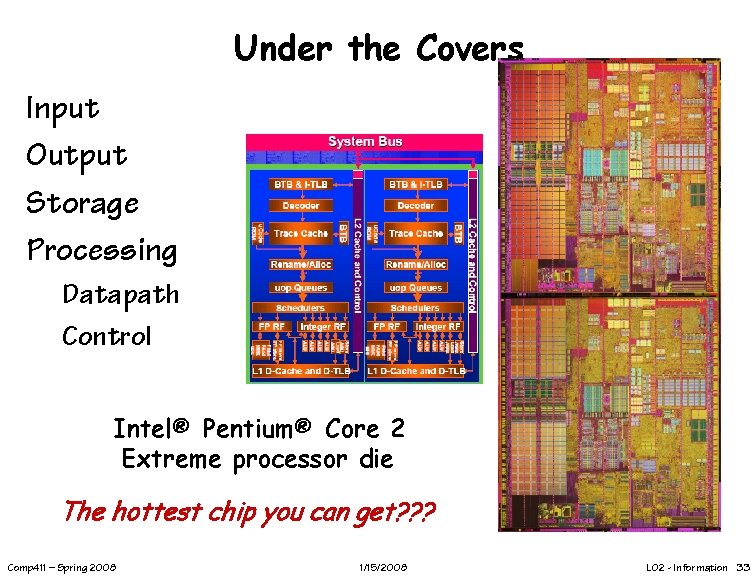

Under the Covers

- Input
- Output
- Storage
- Processing
  - Datapath
  - Control

Intel® Pentium® Core 2 Extreme processor die. The hottest chip you can get???

Issues for Modern Computers

- GHz Clock speeds
- Multiple Instructions per clock cycle
- Multi-core
- Memory Wall
- I/O bottlenecks
- Power Dissipation
- Technology Changes

Will I ever understand all this stuff? (Courtesy Troubador)

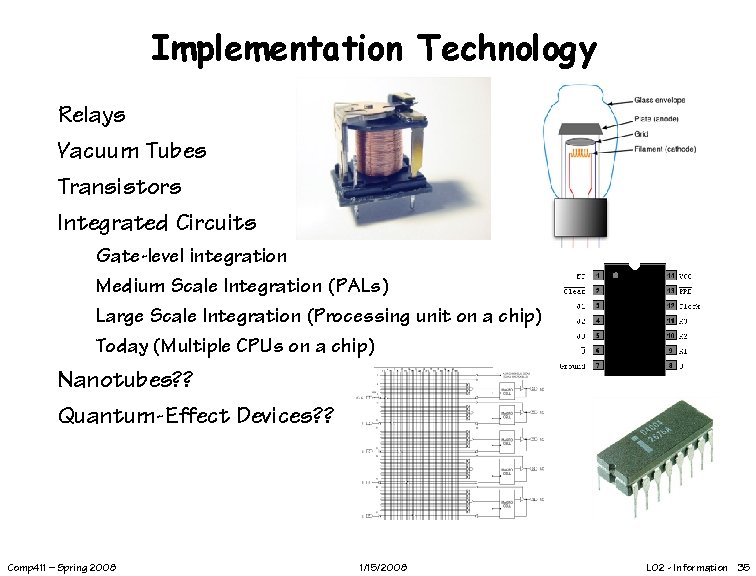

Implementation Technology

- Relays
- Vacuum Tubes
- Transistors
- Integrated Circuits
  - Gate-level integration
  - Medium Scale Integration (PALs)
  - Large Scale Integration (Processing unit on a chip)
  - Today (Multiple CPUs on a chip)
- Nanotubes??
- Quantum-Effect Devices??

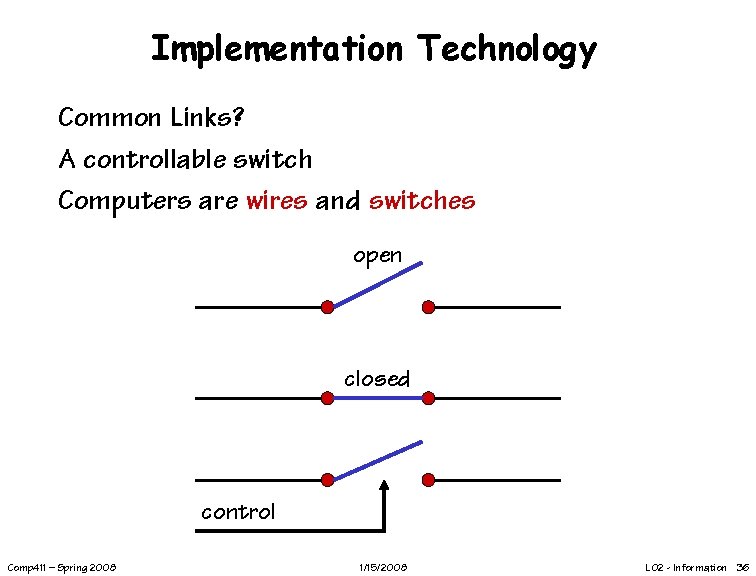

Implementation Technology

Common Links? A controllable switch. Computers are wires and switches.

[Figure: a switch shown open and closed, with a control input.]

Chips

Silicon Wafers

Chip manufacturers build many copies of the same circuit onto a single wafer. Only a certain percentage of the chips will work; those that work will run at different speeds. The yield decreases as the size of the chips increases and the feature size decreases.

Wafers are processed by automated fabrication lines. To minimize the chance of contaminants ruining a process step, great care is taken to maintain a meticulously clean environment.

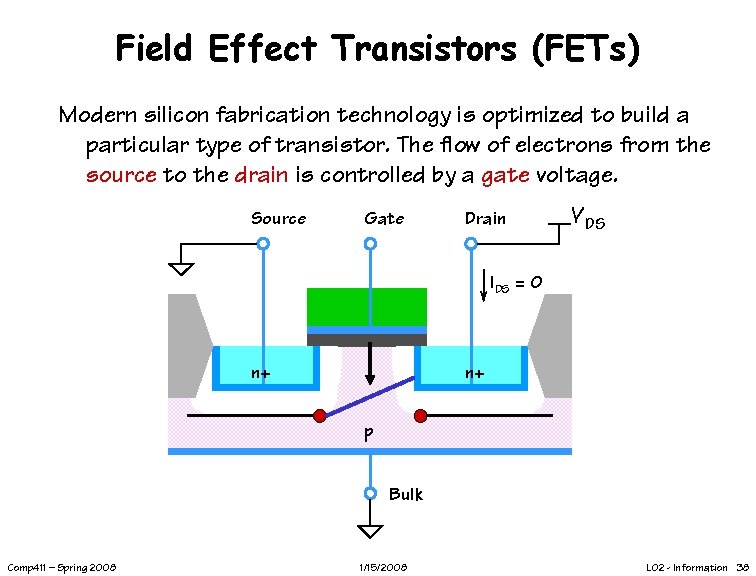

Field Effect Transistors (FETs)

Modern silicon fabrication technology is optimized to build a particular type of transistor. The flow of electrons from the source to the drain is controlled by a gate voltage.

[Figure: FET cross-section with Source, Gate, and Drain terminals, two n+ regions in a p-type bulk, and labels VDS and IDS = 0.]

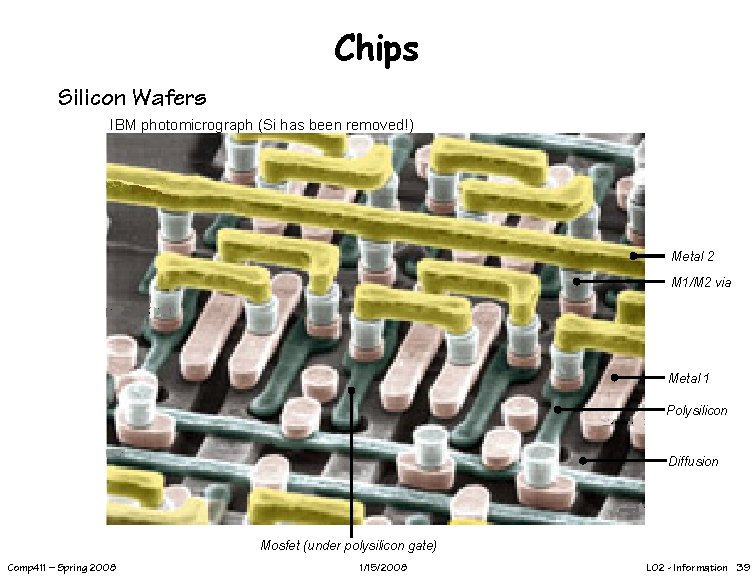

Chips

Silicon Wafers

IBM photomicrograph (Si has been removed!), with labels: Metal 2, M1/M2 via, Metal 1, Polysilicon, Diffusion, Mosfet (under polysilicon gate).

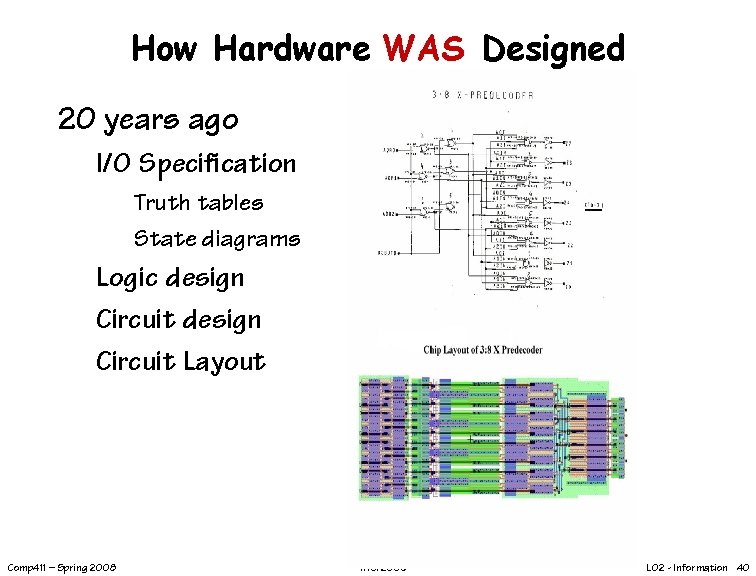

How Hardware WAS Designed

20 years ago:

- I/O Specification
- Truth tables
- State diagrams
- Logic design
- Circuit Layout

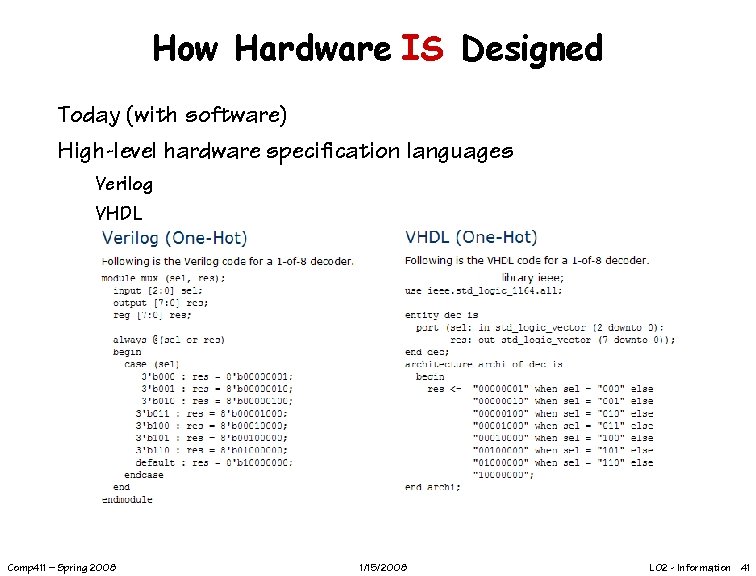

How Hardware IS Designed

Today (with software):

- High-level hardware specification languages
  - Verilog
  - VHDL

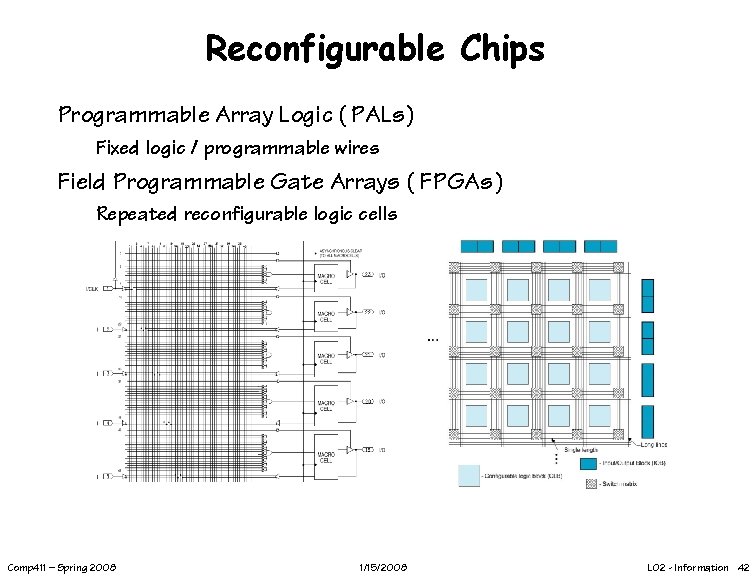

Reconfigurable Chips

- Programmable Array Logic (PALs)
  - Fixed logic / programmable wires
- Field Programmable Gate Arrays (FPGAs)
  - Repeated reconfigurable logic cells

Next Lecture

Computer Representations: Encoding Information

How is X represented in computers?

- X = text
- X = numbers
- X = anything else
