WELCOME to the webinar “Institutionalizing Impact Evaluation”. This live webinar will start at 9:30 AM, New York time. All microphones and webcams are disabled; we will enable microphones only during the Q&A portion. Therefore, you will not hear any sound until the webinar begins.

Institutionalizing Impact Evaluation: Live Webinar, 25th January 2011, DevInfo

This series of webinars is based on the book published by UNICEF in partnership with key international institutions.
• Authors: 40 global evaluation leaders
• Partnership: UNICEF, WB, UNDP, WFP, UNIFEM, IDEAS, IOCE, DevInfo

Available for free download at www.mymande.org

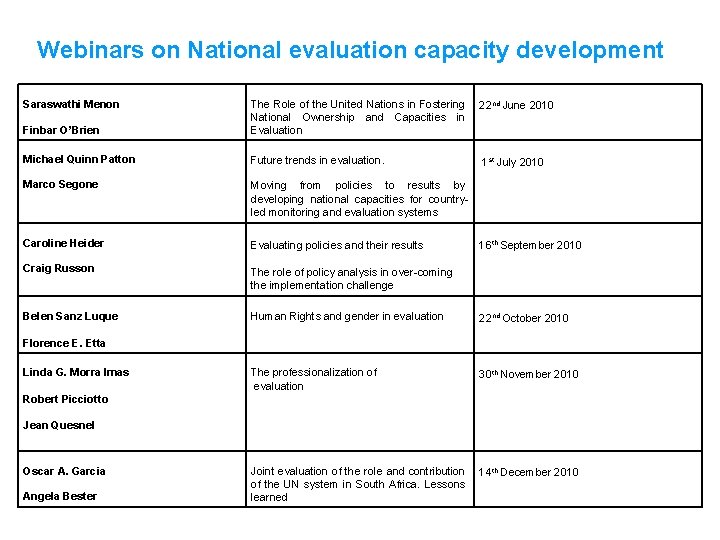

Webinars on National evaluation capacity development: Saraswathi Menon 22nd June 2010 Finbar O’Brien The Role of the United Nations in Fostering National Ownership and Capacities in Evaluation Michael Quinn Patton Future trends in evaluation. 1st July 2010 Marco Segone Moving from policies to results by developing national capacities for country-led monitoring and evaluation systems Caroline Heider Evaluating policies and their results Craig Russon The role of policy analysis in overcoming the implementation challenge Belen Sanz Luque Human Rights and gender in evaluation 22nd October 2010 The professionalization of evaluation 30th November 2010 Joint evaluation of the role and contribution of the UN system in South Africa. Lessons learned 14th December 2010 16th September 2010 Florence E. Etta Linda G. Morra Imas Robert Picciotto Jean Quesnel Oscar A. Garcia Angela Bester

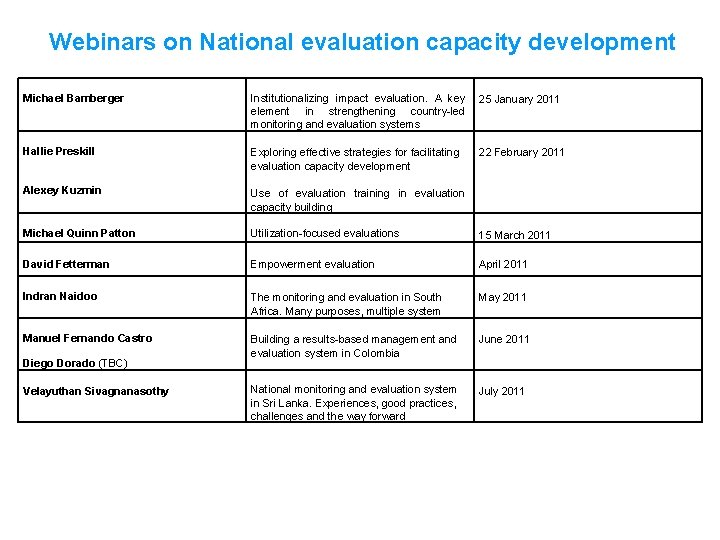

Webinars on National evaluation capacity development Michael Bamberger Institutionalizing impact evaluation. A key element in strengthening country-led monitoring and evaluation systems 25 January 2011 Hallie Preskill Exploring effective strategies for facilitating evaluation capacity development 22 February 2011 Alexey Kuzmin Use of evaluation training in evaluation capacity building Michael Quinn Patton Utilization-focused evaluations 15 March 2011 David Fetterman Empowerment evaluation April 2011 Indran Naidoo The monitoring and evaluation in South Africa. Many purposes, multiple system May 2011 Manuel Fernando Castro Building a results-based management and evaluation system in Colombia June 2011 National monitoring and evaluation system in Sri Lanka. Experiences, good practices, challenges and the way forward July 2011 Diego Dorado (TBC) Velayuthan Sivagnanasothy

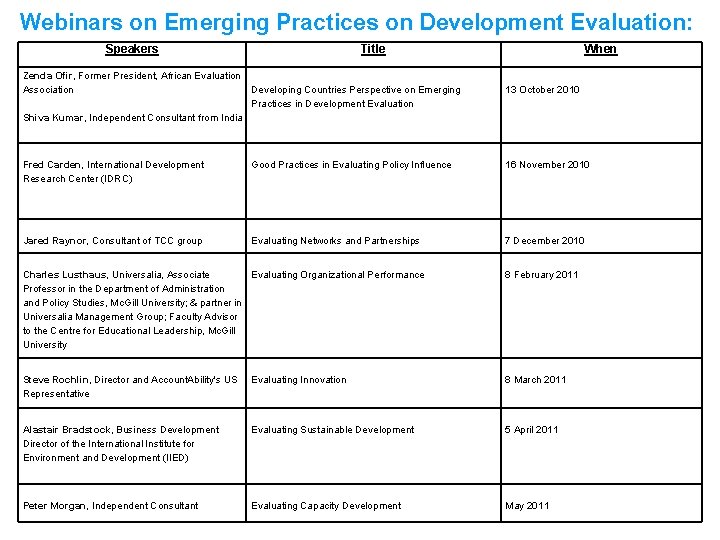

Webinars on Emerging Practices in Development Evaluation

| Speaker | Title | When |
|---|---|---|
| Zenda Ofir, Former President, African Evaluation Association | Developing Countries Perspective on Emerging Practices in Development Evaluation | 13 October 2010 |
| Fred Carden, International Development Research Center (IDRC) | Good Practices in Evaluating Policy Influence | 16 November 2010 |
| Jared Raynor, Consultant, TCC Group | Evaluating Networks and Partnerships | 7 December 2010 |
| Shiva Kumar, Independent Consultant from India | | |
| Charles Lusthaus, Universalia; Associate Professor in the Department of Administration and Policy Studies, McGill University; partner in Universalia Management Group; Faculty Advisor to the Centre for Educational Leadership, McGill University | Evaluating Organizational Performance | 8 February 2011 |
| Steve Rochlin, Director and AccountAbility's US Representative | Evaluating Innovation | 8 March 2011 |
| Alastair Bradstock, Business Development Director of the International Institute for Environment and Development (IIED) | Evaluating Sustainable Development | 5 April 2011 |
| Peter Morgan, Independent Consultant | Evaluating Capacity Development | May 2011 |

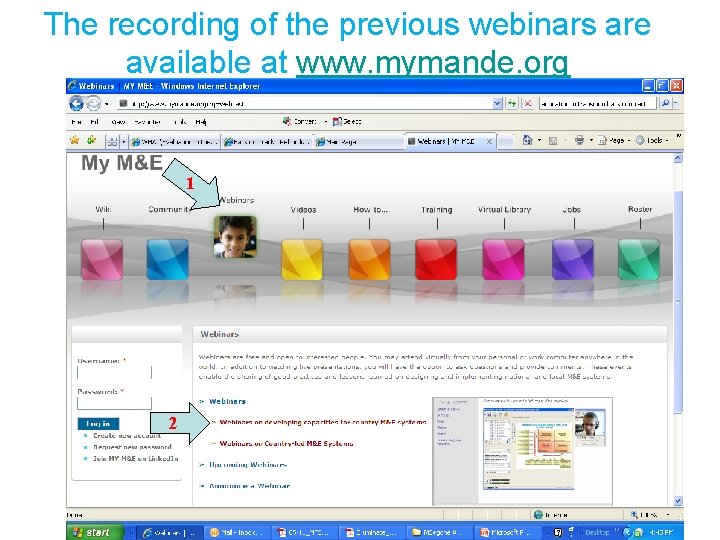

Recordings of the previous webinars are available at www.mymande.org

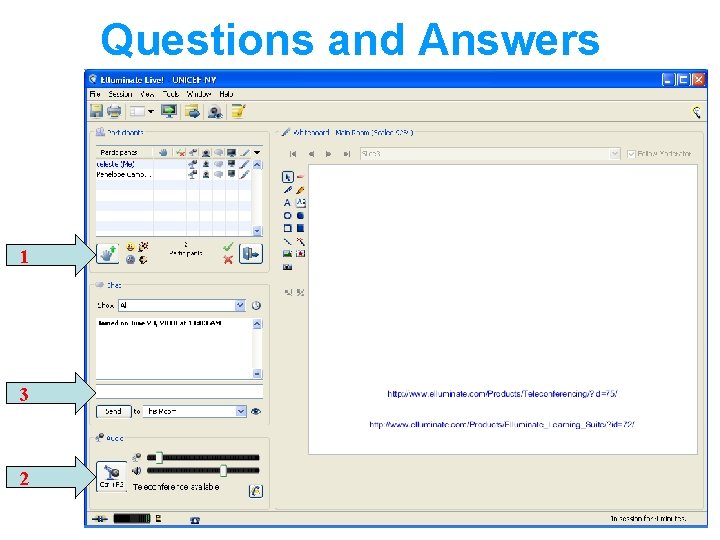

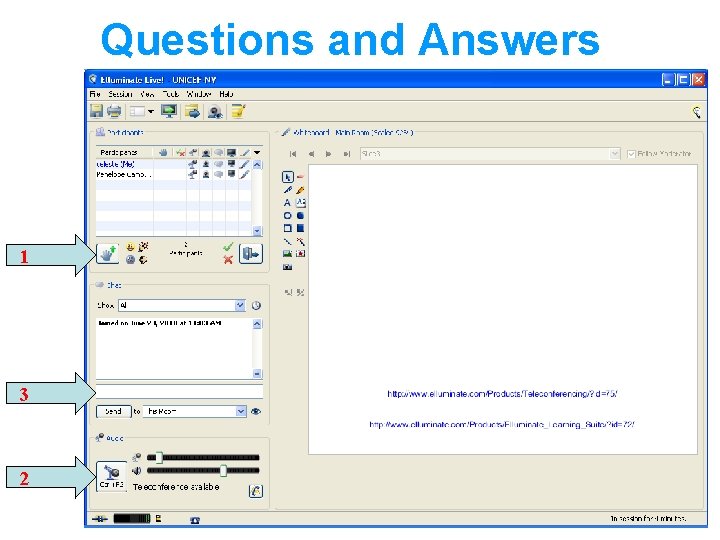

Questions and Answers

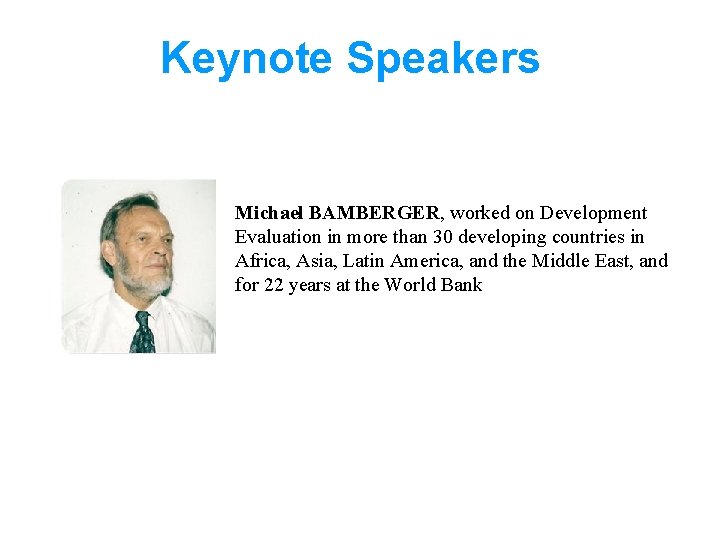

Keynote Speaker: Michael BAMBERGER has worked on development evaluation in more than 30 developing countries in Africa, Asia, Latin America and the Middle East, and worked for 22 years at the World Bank

Agenda
• 9:30 – 9:35 Welcome and introduction: Marco Segone, Systemic management, UNICEF Evaluation Office
• 9:35 – 10:05 Michael Bamberger, Independent Consultant
• 10:05 – 10:25 Questions and Answers: Abigail Taylor, Knowledge Management Specialist
• 10:25 – 10:30 Wrap-up: Marco Segone

Fourth Meeting of the Latin American Monitoring and Evaluation Network
UNICEF, IOCE and DevInfo, in partnership with UNDP, WFP, UNIFEM and ILO: Webinar Series on Developing Capacities for Country Monitoring and Evaluation Systems
Institutionalizing Impact Evaluation: A key element in strengthening country M&E systems
Michael Bamberger, Independent Consultant
January 25, 2011

Outline
1. Why worry about institutionalizing Impact Evaluation?
2. Defining impact evaluation [IE]
3. Defining institutionalization
4. Alternative pathways to institutionalization of IE
5. Skills required for managing IEs
6. Factors affecting the successful institutionalization of IE
7. Creating demand for IE
8. Issues for governments and donor agencies

1. Why worry about institutionalizing IE?
The potential benefits of IE studies to developing countries are often limited because:
• Many IE studies are one-off, with no direct follow-up
• Many IEs are largely funded and managed by donors, with little country ownership
• The quality of many IEs is limited by a lack of secondary data or national evaluation capacity
• Many IEs are method-driven rather than utilization-driven

Importance of utilization (continued)
• Full benefits are only achieved if there is a system for selecting, conducting and using the studies, and for generating the secondary data on which a rigorous IE will depend

2. Defining Impact Evaluation [IE]
• IE is only one of many types of program evaluation [see Table 1]
• IE has specific purposes and is not a general tool for addressing all management questions

Defining IE [continued]
The two most widely used approaches to defining IE:
• Option 1: Defining IE in terms of the methodology
• Option 2: Defining IE in terms of what is evaluated and the timeline

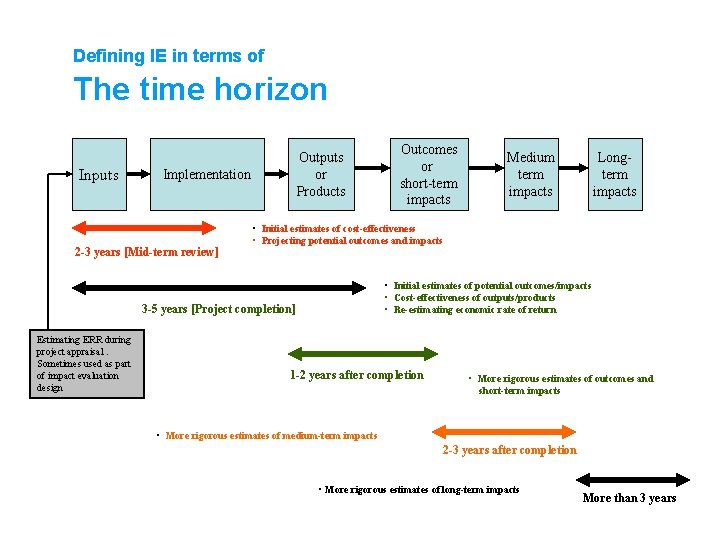

Defining IE in terms of the time horizon
• Inputs [project appraisal]: estimating the economic rate of return (ERR); sometimes used as part of the impact evaluation design
• Implementation [2–3 years; mid-term review]: initial estimates of cost-effectiveness; projecting potential outcomes and impacts
• Outputs or products [3–5 years; project completion]: initial estimates of potential outcomes/impacts; cost-effectiveness of outputs/products; re-estimating the economic rate of return
• Outcomes or short-term impacts [1–2 years after completion]: more rigorous estimates of outcomes and short-term impacts
• Medium-term impacts [2–3 years after completion]: more rigorous estimates of medium-term impacts
• Long-term impacts [more than 3 years]: more rigorous estimates of long-term impacts

Other dimensions of IE [see Table 2]
• Purpose
• What is being measured
• Level
• Scale
• Cost
• Timing
• Client
• Who conducts the evaluation
• Methodological rigor [see Table 3]

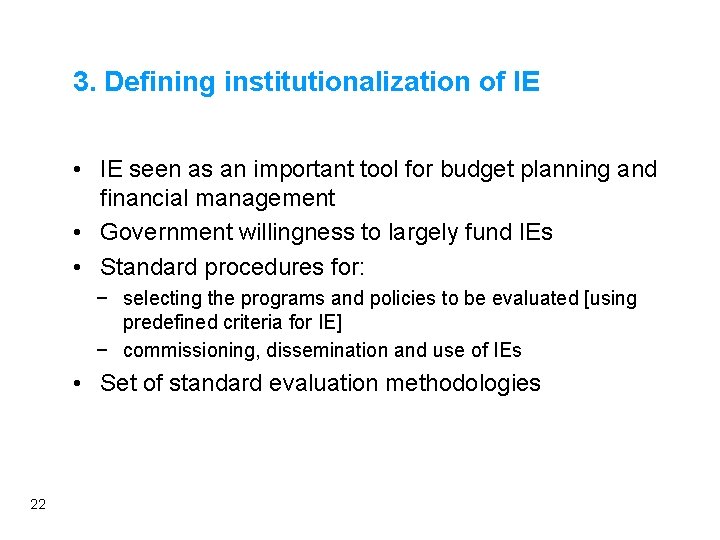

3. Defining institutionalization of IE
• IE is seen as an important tool for budget planning and financial management
• Government is willing to provide most of the funding for IEs
• Standard procedures for:
 − selecting the programs and policies to be evaluated [using predefined criteria for IE]
 − commissioning, disseminating and using IEs
• A set of standard evaluation methodologies

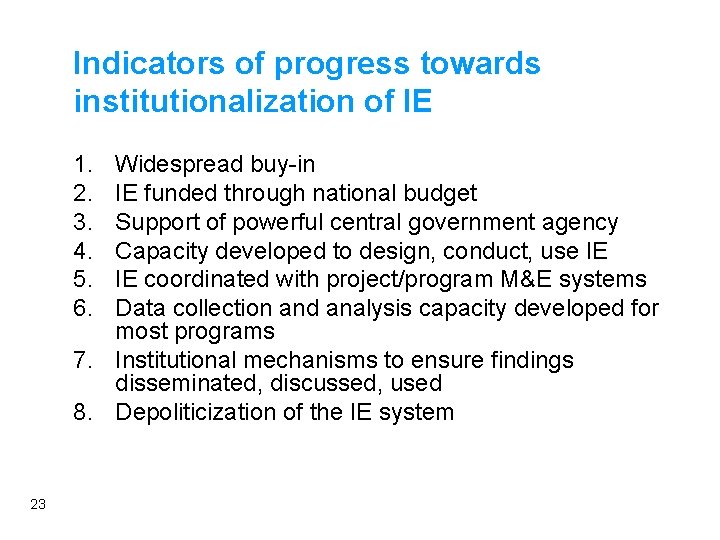

Indicators of progress towards institutionalization of IE
1. Widespread buy-in
2. IE funded through the national budget
3. Support of a powerful central government agency
4. Capacity developed to design, conduct and use IE
5. IE coordinated with project/program M&E systems
6. Data collection and analysis capacity developed for most programs
7. Institutional mechanisms to ensure findings are disseminated, discussed and used
8. Depoliticization of the IE system

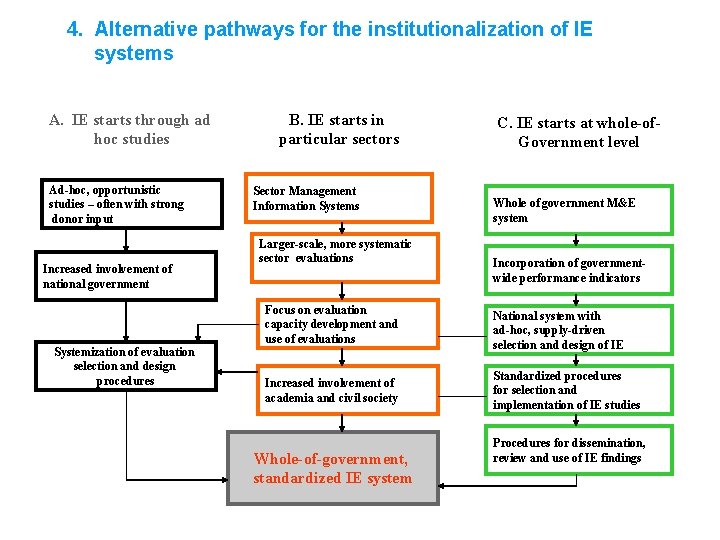

4. Alternative pathways for the institutionalization of IE systems
A. IE starts through ad hoc studies: ad hoc, opportunistic studies, often with strong donor input → increased involvement of national government → systemization of evaluation selection and design procedures
B. IE starts in particular sectors: sector management information systems → larger-scale, more systematic sector evaluations
C. IE starts at whole-of-government level: whole-of-government M&E system → incorporation of government-wide performance indicators → focus on evaluation capacity development and use of evaluations
Further stages: national system with ad hoc, supply-driven selection and design of IE → increased involvement of academia and civil society → standardized procedures for selection and implementation of IE studies → whole-of-government, standardized IE system → procedures for dissemination, review and use of IE findings

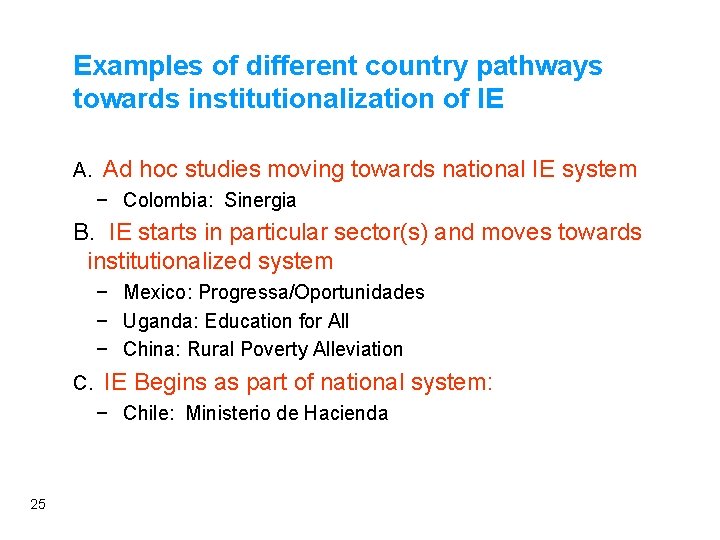

Examples of different country pathways towards institutionalization of IE
A. Ad hoc studies moving towards a national IE system
 − Colombia: Sinergia
B. IE starts in particular sector(s) and moves towards an institutionalized system
 − Mexico: Progresa/Oportunidades
 − Uganda: Education for All
 − China: Rural Poverty Alleviation
C. IE begins as part of a national system
 − Chile: Ministerio de Hacienda

Mexico: Moving from an evaluation program developed in one sector towards a national evaluation system
• Rigorous evaluations of the Progresa conditional cash transfer [CCT] program were conducted over a number of years
• The evaluations demonstrated the effectiveness of CCTs as a poverty reduction tool
• They helped persuade a new government to continue a major program started by the previous administration

Mexico (continued)
• Evaluations convinced policy-makers to pass a law mandating the evaluation of all social sector programs, with the evaluation system managed by CONEVAL
• Various M&E tools and methods have been developed, including rigorous IE

Colombia: From ad hoc evaluation studies towards a national IE system
• The Ministry of Planning is responsible for the national system for evaluating public sector performance
• The most widely used component is the system for monitoring progress against 320 presidential and country development goals
• SINERGIA commissioned and managed a wide range of IEs:
 − selected in a somewhat ad hoc way, initially with a focus on methods
• Moving towards a national system (World Bank M&E loan)

Chile: National IE system evolved out of a government-wide M&E and accountability system
• The national M&E system has evolved since the 1970s
• 1994: indicators introduced to evaluate national programs
• 2001: national program of rigorous IE:
 − standard retrospective studies completed in 6 months on a low budget
 − more in-depth studies lasting up to 19 months

Chile [continued]
• The system is managed and championed by the powerful Ministry of Finance
• Limitations:
 − Narrowly focused
 − Limited buy-in from implementing agencies
 − Methodology not very rigorous; it would benefit from being combined with more rigorous, in-depth studies in priority sectors

Uganda: Education sector evaluations generate interest in more rigorous research
• Evaluations of the cost-effectiveness of different components of universal primary education demonstrated how data from the MIS can be used, encouraging more rigorous data collection
• Parliament, impressed by the more rigorous evaluation and accountability, expressed interest in expanding it to other programs?

China: Using evaluation of field experiments to test approaches to rural poverty alleviation
• The 1978 Communist Party Congress adopted a more pragmatic approach in which public action was based on demonstrable success on the ground
• Field research teams were set up to evaluate the poverty impacts of decollectivizing farming through contracts with individual farmers

China [continued]
• Empirical data helped convince skeptical policymakers of the merits of scaling up local initiatives
• Rural reforms implemented nationally on the basis of this empirical evidence produced “the most dramatic reduction in the extent of poverty the world has yet seen” (Ravallion 2008)

5. Skills required for managing impact evaluations [see Table 4]

Examples of skills agencies need to manage individual impact evaluations
• Deciding when an IE is required
• Deciding which design to use
• Using program theory to define hypotheses and indicators
• Conducting an evaluability assessment
• Deciding how the evaluation will be managed
• Understanding the political dynamics

Required skills [continued]
• Managing multi-donor evaluations
• Managing consultants
• Creating an evidence trail

6. Factors affecting the successful institutionalization of impact evaluation
1. Substantive demand from government
2. Enhance understanding of what IE encompasses
3. Strengthening the supply side
4. Starting with a diagnostic study
5. Support of a powerful champion
6. A strong ministry acting as a steward for the program
7. Support of middle-level civil servants

Factors affecting success [continued]
8. Build on existing M&E and country experience
9. Utilizing university and civil society expertise
10. Integrate different ministry evaluation programs
11. Adopt an opportunistic approach
12. Strong capacity development component

7. Creating demand for IE [see Table 5]
• Carrots
 − Providing financial and other incentives to implementing agencies to develop “evaluation-ready” databases
• Sticks
 − Laws, decrees or regulations mandating IE
• Sermons
 − High-level statements or endorsements by the President, ministers, etc.

8. Issues for governments and donors
1. Moving from supporting individual IEs to helping governments develop their own IE systems
2. Linking institutionalization to moves towards multi-donor, government-led evaluations
3. Flexibility in terms of methods
 • Avoid imposing methods that are too sophisticated for client countries
4. Deciding the appropriate funding and support mechanisms

Issues for governments and donors [continued]
5. Design projects that can be evaluated
 • Seek more uniform implementation procedures permitting the use of rigorous designs such as regression discontinuity and randomization
 • Document implementation and decisions concerning changes
 • Use project records to create baseline data, ensuring records are kept in a form that can be used for evaluation
 • Make greater use of project monitoring data
6. Strengthening national databases

Issues for governments and donors [continued]
7. Involving civil society and academia
8. More focus on evaluation utilization
9. Supporting IE capacity development
10. Mapping all attempts to use different types of IE (including failures) to provide more complete information on what does and does not work, how evaluations are used and what influence they have

For more information
• Michael Bamberger’s chapter in “From Policies to Results”, UNICEF 2010
• Handouts for this presentation will be available at www.mymande.org
jmichaelbamberger@gmail.com

Questions and Answers

Evaluating Organizational Performance, 8 February 2011 Charles LUSTHAUS, Universalia

Exploring effective strategies for facilitating evaluation capacity development, 22 February 2011: Hallie PRESKILL, Executive Director, Strategic Learning & Evaluation Center, FSG Social Impact Advisors, and former President of the American Evaluation Association (AEA); Alexey KUZMIN, President, Process Consulting Company, and former Chair, IPEN

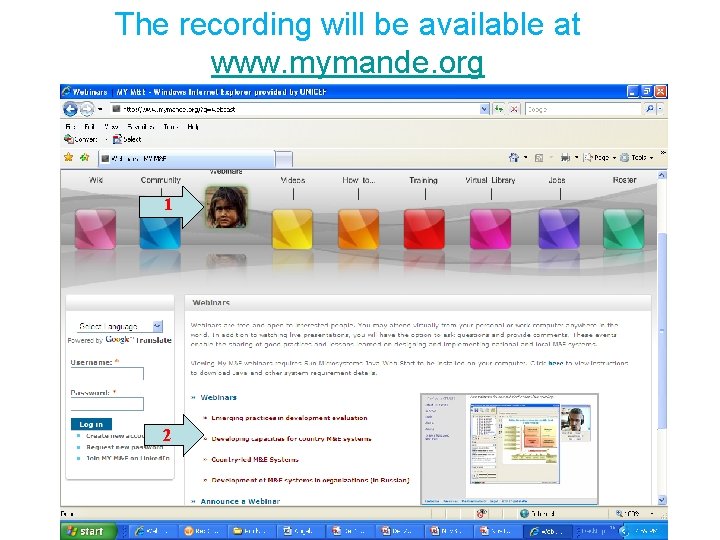

The recording will be available at www.mymande.org

Evaluation of Webinars Survey: Your opinion and feedback are important to us, so we ask that you complete this short evaluation of today’s webinar: http://www.zoomerang.com/Survey/WEB22BMJ86GX8T/
