Welcome to the Single IRB (sIRB) Adoption & Evaluation Webinar

Welcome to the Single IRB (sIRB) Adoption & Evaluation Webinar
- Once you’ve logged into WebEx, please select one of the following audio options:
  1. Call Using Computer
  2. I Will Call In
- DO NOT SELECT the “Call Me” option.
- This webinar is being recorded.
- All participants are muted upon entry.
- Questions will be taken following the presentation. Please indicate that you have a question by typing in the chat box “To Erin Baker (host).”

January 16, 2020
sIRB Adoption & Evaluation
- Cynthia Hahn, President, Integrated Research Strategy
- Stephen Rosenfeld, Chair, Secretary’s Advisory Committee on Human Research Protections (SACHRP)
- Heather Pierce, Senior Director for Science Policy and Regulatory Counsel, Association of American Medical Colleges (AAMC)

Public-Private Partnership
- Co-founded by Duke University & FDA
- Involves all stakeholders
  - Approx. 80+ members
  - Participation of 400+ more orgs
- MISSION: To develop and drive adoption of practices that will increase the quality and efficiency of clinical trials

Agenda
- CTTI sIRB Projects & Introduction to sIRB Evaluation Work (Cynthia Hahn)
- Methods & Results of sIRB Evaluation Project (Stephen Rosenfeld)
- Evaluation Framework Report (Heather Pierce)
- Questions & Discussion

Single IRB (sIRB) Evaluation Team CTTI Project Team Laura Cleveland Cynthia Hahn Eric Mah Rita O’Sullivan Helen Panageas CTTI Social Science Research Team Amy Corneli Emily Hanlen Kevin McKenna Adora Nsonwu Brian Perry Heather Pierce Stephen Rosenfeld CTTI Project Manager Sara Calvert

CTTI sIRB Projects & Introduction to sIRB Evaluation Work

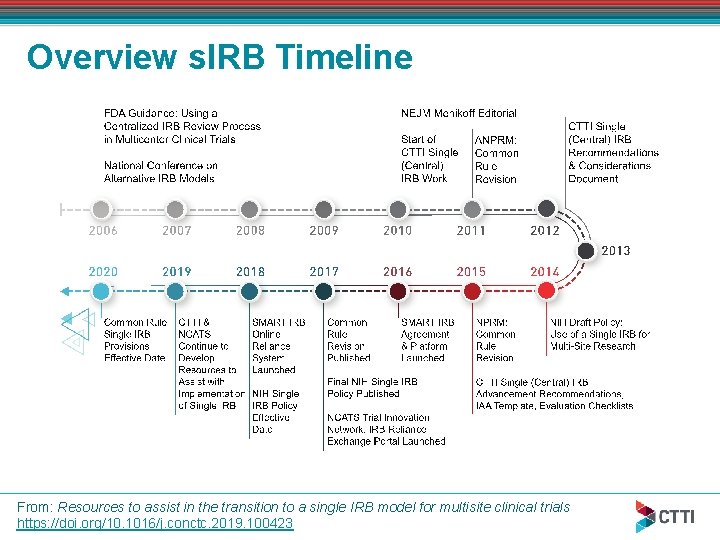

Overview: sIRB Timeline
From: “Resources to assist in the transition to a single IRB model for multisite clinical trials,” https://doi.org/10.1016/j.conctc.2019.100423

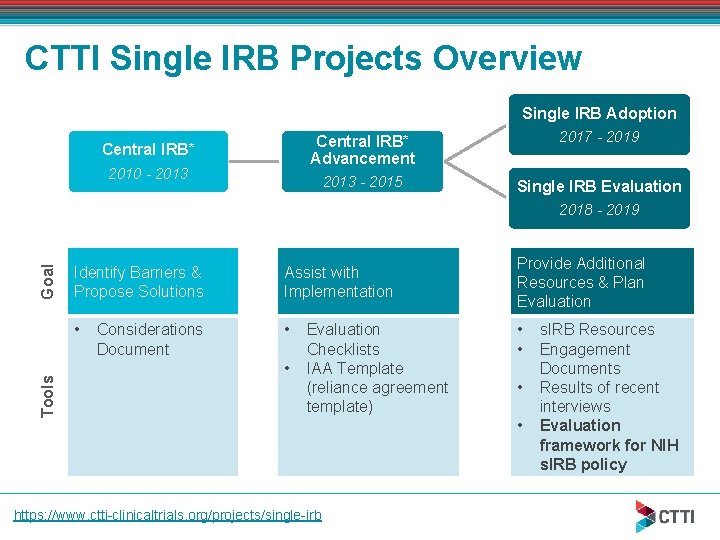

CTTI Single IRB Projects Overview
- Central IRB* (2010 - 2013). Goal: Identify Barriers & Propose Solutions. Tools: Considerations Document
- Central IRB* Advancement (2013 - 2015). Goal: Assist with Implementation. Tools: Evaluation Checklists; IAA Template (reliance agreement template)
- Single IRB Adoption (2017 - 2019) & Single IRB Evaluation (2018 - 2019). Goal: Provide Additional Resources & Plan Evaluation. Tools: sIRB Resources; Engagement Documents; Results of recent interviews; Evaluation framework for NIH sIRB policy

https://www.ctti-clinicaltrials.org/projects/single-irb

Main Findings of Previous CTTI sIRB Projects
- Need to define “central IRB”
  - CTTI definition of “central IRB”: a single IRB of record for all sites involved in a multi-center protocol.
  - A range of entities may serve as the sIRB (e.g., another institution’s IRB, a federal IRB, an independent IRB).
  - Now use term single IRB (sIRB).
- Concerns about the conflation of the responsibilities of the institution with the ethical review responsibilities of the IRB
- Discomfort due to lack of experience using centralized review
- Research community’s need for additional resources to assist in transition to sIRB model

CTTI sIRB Resources
- Considerations document
- Evaluation checklists
- IRB authorization agreement (IAA) template
- sIRB resource of resources (RoR)
- Engagement documents

Available at: https://www.ctti-clinicaltrials.org/projects/single-irb

sIRB Evaluation
- April 2018: CTTI received an award to help develop a comprehensive evaluation plan for the NIH sIRB policy
- CTTI’s selected proposal included:
  - Creating multi-stakeholder project team of experts in human research protection and clinical research
  - Gathering evidence, with CTTI Social Science Research Team, to inform the evaluation plan

Objectives: sIRB Policy Evaluation Project
- Data Gathering:
  - Describe key stakeholder experiences in implementing the NIH sIRB policy
  - Describe steps involved in operationalizing the sIRB process at IRBs & institutions
  - Identify potential metrics to evaluate the implementation of the NIH sIRB policy
- Evaluation Framework: propose methodology for evaluating sIRB policy, including:
  - Goals of the evaluation
  - Potential stakeholders to include
  - Key concepts to measure
  - Possible evaluation designs

Methods & Results of sIRB Evaluation Project

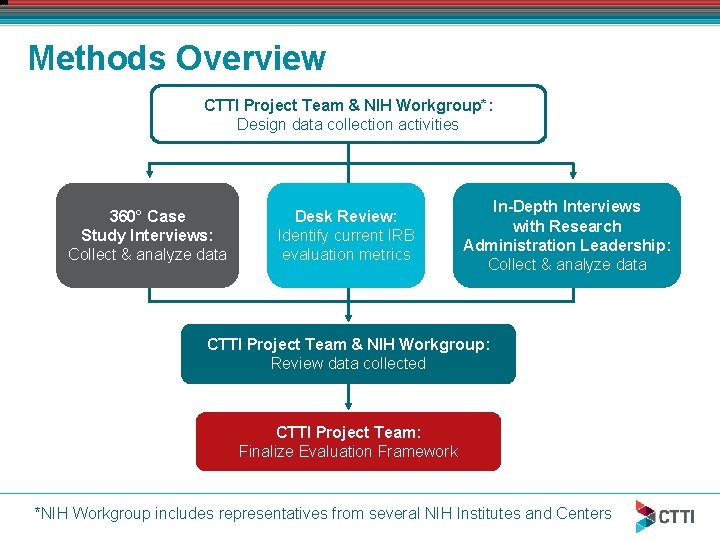

Methods Overview
- CTTI Project Team & NIH Workgroup*: Design data collection activities
- 360° Case Study Interviews: Collect & analyze data
- Desk Review: Identify current IRB evaluation metrics
- In-Depth Interviews with Research Administration Leadership: Collect & analyze data
- CTTI Project Team & NIH Workgroup: Review data collected
- CTTI Project Team: Finalize Evaluation Framework

*NIH Workgroup includes representatives from several NIH Institutes and Centers

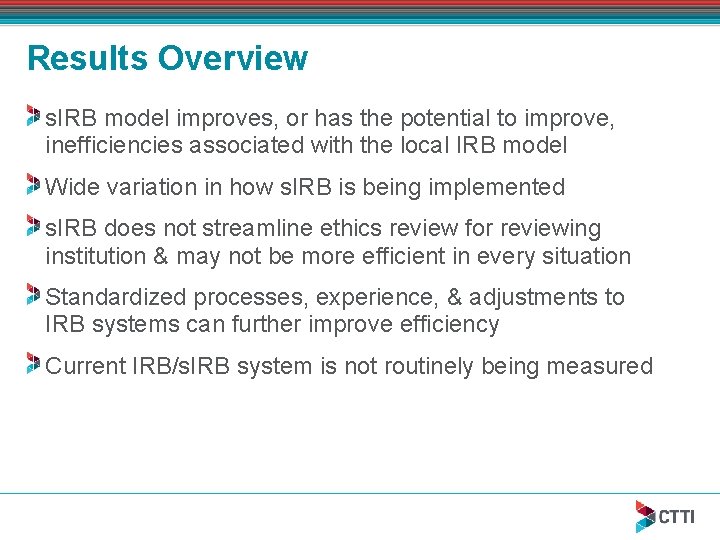

Results Overview
- sIRB model improves, or has the potential to improve, inefficiencies associated with the local IRB model
- Wide variation in how sIRB is being implemented
- sIRB does not streamline ethics review for reviewing institution & may not be more efficient in every situation
- Standardized processes, experience, & adjustments to IRB systems can further improve efficiency
- Current IRB/sIRB system is not routinely being measured

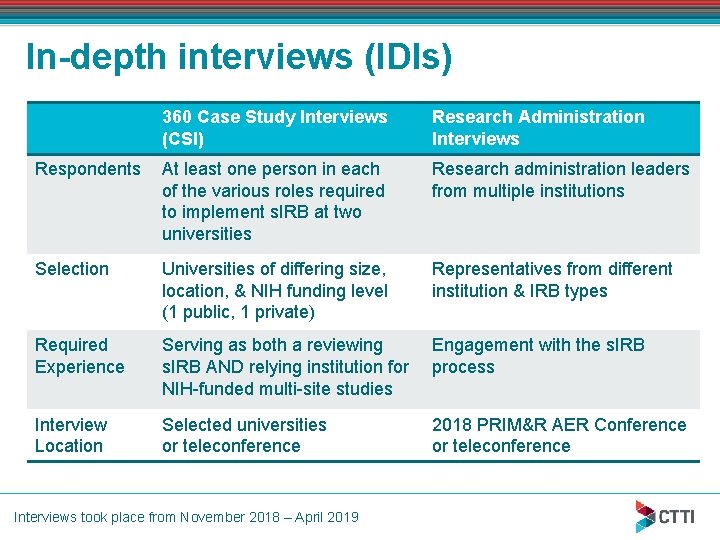

In-depth interviews (IDIs)

| | 360 Case Study Interviews (CSI) | Research Administration Interviews |
| --- | --- | --- |
| Respondents | At least one person in each of the various roles required to implement sIRB at two universities | Research administration leaders from multiple institutions |
| Selection | Universities of differing size, location, & NIH funding level (1 public, 1 private) | Representatives from different institution & IRB types |
| Required Experience | Serving as both a reviewing sIRB AND relying institution for NIH-funded multi-site studies | Engagement with the sIRB process |
| Interview Location | Selected universities or teleconference | 2018 PRIM&R AER Conference or teleconference |

Interviews took place from November 2018 – April 2019

IDI Respondent Demographics
Respondents (n=34):
- Research administration leadership representatives from several organizations (n=13)
  - IRB executives, IRB directors or associate directors, IRB chairs, institutional officials
- 360 case study interviews at two research institutions (n=21)
  - 10 research administration leadership representatives: IRB directors or associate directors, IRB chairs, directors of human protection programs, reliance agreement officers
  - 5 investigators
  - 6 study/regulatory coordinators

IDI Topics: NIH Policy Goals, Metrics, & Process
- Respondents were asked to describe their experiences with implementing the sIRB process
- Focus on gathering information about the six goals of the NIH sIRB policy that could inform an evaluation framework:
  1. Enhance & streamline IRB review for multi-site research
  2. Maintain a high standard for human subject protections
  3. Allow research to proceed effectively & expeditiously
  4. Eliminate unnecessary duplicative IRB review
  5. Reduce administrative burden
  6. Prevent systemic inefficiencies
- Process Map: Respondents compared example to their process
- Metrics: Currently collected, or could be collected in future to evaluate NIH sIRB policy

IDI Results: Improving Inefficiencies
Most respondents believed that the sIRB model improves, or has the potential to improve, inefficiencies associated with the local IRB model by:
- Creating consistency in the review process
- Standardizing documents produced for a study
- Reducing workload for staff at relying sites
- Reducing overall duplication in ethics reviews
- Streamlining the amount of involvement of their IRBs when they are a relying institution

IDI Results: Processes & Tools Help
- Most respondents believed that the sIRB process typically becomes more efficient, or has the potential to become more efficient, once:
  - Systems are created
  - Systematic processes are followed
  - Institutions gain experience & IRBs establish working relationships
- Resources & tools, such as SMART IRB*, are helpful & assist in standardizing the process
- Additional standard processes and systems are needed (e.g., a clear definition of local context and an institutional information repository)

*NCATS Streamlined, Multi-site, Accelerated Resources for Trials

IDI Results: Efficiency for Reviewing vs. Relying IRB
- NIH sIRB policy has not streamlined ethics review for institutions serving as the sIRB & may not be more efficient in every situation
- Institutional reviews are still required by the relying institution
  - Privacy reviews and determinations
  - Ancillary reviews
  - Activities related to compliance & oversight
- “Shadow reviews,” in which relying IRBs still provide an ethics review, are being conducted by some institutions

IDI Results: sIRB Inefficiencies
- Wide variation in how sIRB is being implemented
- Unclear roles & responsibilities for staff & institutions
- Lack of systems & processes for implementing the sIRB process
  - Retooling IRB workflows
  - Incompatibility of IRB software
  - Inability of relying sites to directly access the reviewing IRB’s electronic systems
- Added workload, particularly for investigators, who must now submit the same documents to both reviewing & relying IRBs

IDI Results: Maintaining High Standards
- Study participants’ experiences with research do not appear to have changed with the use of sIRBs
- Some respondents raised concerns about the need for extensive monitoring & reporting to ensure that the high standards for human subject protections are maintained when using an sIRB process
  - Comfort from previously relying on an institution successfully & experience serving as an sIRB can ease concerns

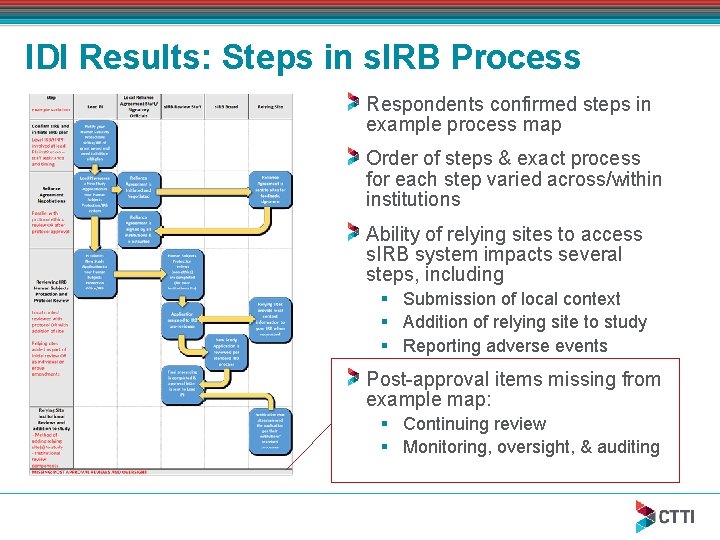

IDI Results: Steps in sIRB Process
- Respondents confirmed steps in example process map
- Order of steps & exact process for each step varied across/within institutions
- Ability of relying sites to access sIRB system impacts several steps, including:
  - Submission of local context
  - Addition of relying site to study
  - Reporting adverse events
- Post-approval items missing from example map:
  - Continuing review
  - Monitoring, oversight, & auditing

Desk Review: Metrics Findings
- IRB metrics grouped into five categories: volume, review time, staffing, costs, & quality
- Quantitative items most common (e.g., review time, number of studies using sIRB)
  - Most IRB metrics are not single IRB specific
  - Definitions & time points used vary across organizations
- Existing standardized IRB metrics available for comparison are often limited to member organizations (e.g., AAHRPP, CTSAs)
- Lack of standard effectiveness measures for assessment of IRB/sIRB review, or to compare quality across programs

IDI: Metrics Findings
- Common metrics measure time in each step of review process. Examples:
  - Time spent on pre-review before IRB submission
  - Time for PI sIRB process training
  - Staff time on sIRB activities, including site communication
- Struggle with measuring IRB quality vs. operational efficiency: quality metrics are important & should be developed
  - Example metrics: number of modifications requested, % of initial study applications approved by sIRB, & number of errors in approved documents found by relying sites
- Metrics collection could be improved by use of standardized processes, standard definitions, & ability of relying sites to access the sIRB software system or portal
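
The example quality metrics named by respondents could be computed along these lines. This is only an illustrative sketch: the `Submission` record, its field names, and the sample data are assumptions made for the example, not definitions from the CTTI report or any IRB system.

```python
from dataclasses import dataclass


@dataclass
class Submission:
    """Hypothetical record for one initial study application reviewed by an sIRB."""
    approved_on_initial_review: bool
    modifications_requested: int
    errors_found_by_relying_sites: int


def quality_metrics(submissions):
    """Summarize the three example quality metrics over a list of submissions."""
    n = len(submissions)
    if n == 0:
        # Nothing to summarize; avoid dividing by zero.
        return {"pct_initial_approved": None,
                "mean_modifications": None,
                "total_errors": 0}
    approved = sum(1 for s in submissions if s.approved_on_initial_review)
    return {
        "pct_initial_approved": 100.0 * approved / n,
        "mean_modifications": sum(s.modifications_requested for s in submissions) / n,
        "total_errors": sum(s.errors_found_by_relying_sites for s in submissions),
    }


# Made-up sample data, for illustration only.
sample = [
    Submission(True, 0, 0),
    Submission(False, 3, 1),
    Submission(True, 1, 0),
    Submission(False, 2, 2),
]
sample_metrics = quality_metrics(sample)
```

Comparing such figures across organizations would still depend on the standard definitions the slide calls for, such as what counts as a "modification" or an "error."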

Evaluation Framework Report

Development of Evaluation Framework
CTTI created a final report including:
- Challenges of attributing outcomes directly to sIRB policy
- Suggested next steps for research community to allow for evaluation & improvement of the sIRB model
- Proposed evaluation components

Report available at: https://grants.nih.gov/policy/humansubjects/single-irb-policy-multi-site-research.htm

Challenges of Evaluating NIH sIRB Policy
- Standardized, well-defined metrics to measure IRB/sIRB review have not been established
- Baseline metrics were not measured prior to the implementation of the NIH sIRB policy
- sIRB was already being implemented prior to NIH sIRB policy effective date for many reasons, including:
  - Preparation for NIH & Common Rule requirements
  - Sponsor preferences
  - sIRB requirements of some NIH networks & NCI CIRB*
- Effective date of Common Rule sIRB provisions will occur prior to implementation of the NIH sIRB policy evaluation

*Central Institutional Review Board for the National Cancer Institute

Possible Next Steps
Develop a learning system to measure & improve the sIRB process & realize the goals of sIRB requirements
- Engage stakeholders implementing the sIRB model
- Develop a foundational database to identify the population of organizations implementing sIRB
- Define critical time points & factors to be measured
- Test proposed metrics
- Deploy, routinely collect, & publicly share established metrics to promote best practices & develop a continuous learning environment

Engage Stakeholders
- Involve a diverse group of stakeholders
  - Mix of large & small NIH grantee organizations
    - Multi-site investigators & research staff
    - Institutional and academic IRBs
  - Independent IRBs
  - Policy organizations
  - Other relevant organizations
- Communicate their needs & suggested improvements
- Contribute to all steps of creating system for continuous sIRB improvement

Database of sIRB Organizations
- A list of organizations implementing sIRB model is needed to identify and define the population to be queried in an evaluation
  - Organizations serving as sIRB
  - Relying institutions
- Could be developed from existing or new data fields on forms collected by NIH or other IRB registrations
- Collect only the minimum necessary information to decrease burden of respondents and minimize effort to maintain database

Establish & Define Metrics
To evaluate IRB/sIRB effectiveness, need to establish:
- Critical time points to be measured
  - Include IRB review time, AND
  - Time and effort of other sIRB activities, such as:
    - Time to establish reliance agreements
    - Communication between sIRB, lead study teams, and sites
    - Time to enter information in sIRB system
- Functions and processes performed by sIRB and relying institutions
- Standard definitions and approaches to time-point and process measures collection
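
Once critical time points are defined, interval measures such as IRB review time and time to establish a reliance agreement reduce to date arithmetic. The sketch below assumes four hypothetical time-point names (`reliance_requested`, `reliance_executed`, `irb_submitted`, `irb_approved`) and made-up dates; no standard set of time points exists yet, which is the gap this slide describes.

```python
from datetime import date

# Hypothetical interval definitions: each measure is a (start, end) pair of
# time-point names. These names are assumptions, not an established standard.
REVIEW_INTERVALS = {
    "reliance_agreement_days": ("reliance_requested", "reliance_executed"),
    "irb_review_days": ("irb_submitted", "irb_approved"),
    "total_days": ("reliance_requested", "irb_approved"),
}


def interval_days(time_points, intervals=REVIEW_INTERVALS):
    """Return elapsed days per interval, or None when a time point is missing."""
    result = {}
    for name, (start, end) in intervals.items():
        if start in time_points and end in time_points:
            result[name] = (time_points[end] - time_points[start]).days
        else:
            result[name] = None
    return result


# Made-up dates for a single study, for illustration only.
example_study = {
    "reliance_requested": date(2019, 1, 7),
    "reliance_executed": date(2019, 2, 4),
    "irb_submitted": date(2019, 2, 11),
    "irb_approved": date(2019, 3, 11),
}
example_durations = interval_days(example_study)
```

Returning `None` for a missing time point, rather than guessing, keeps incomplete records visible when results are aggregated across sites.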

Create & Test Evaluation Instrument
- Develop a survey instrument* to evaluate IRB functions & collect established metrics
- Potential key questions & metrics were created by CTTI team to guide the creation of a survey instrument
- Survey should be pilot tested to assess practicality & make any necessary adjustments
- Target sample: representative sample of grantee institutions
  - Include varied institution size & level of NIH funding, different types of research, & varied levels of sIRB experience

*The Paperwork Reduction Act requirement to receive permission from the Office of Management and Budget (OMB) before surveying 10 or more people will need to be considered if the survey is implemented by a government agency

Routinely Collect & Share Metrics
- Routinely collect established metrics & sIRB functions via annual evaluation survey
- Aggregate & report results publicly
- Identify best practices & standards where appropriate
- Encourage areas for improvement & elimination of unnecessary duplicative practices
- Results should assist organizations in improving human subject protections & managing business practices
- Adjust annual surveys to add or remove elements as needed (e.g., implementation questions may lose relevance)

Conclusions
- Process for implementing sIRB varies widely & is not being routinely evaluated
- CTTI project team agreed that definitive evaluation of direct impact & effectiveness of NIH sIRB policy is infeasible
- NIH could lead the way, in partnership with other Common Rule agencies, to develop an ongoing evaluation of the implementation & continuing process improvement of the sIRB model

NIH Single IRB Policy Information
https://grants.nih.gov/policy/humansubjects/single-irb-policy-multi-site-research.htm
Page includes link to CTTI Evaluation Framework report

THANK YOU. For more information on CTTI sIRB work, contact sara.calvert@duke.edu
www.ctti-clinicaltrials.org
