Weighting Finite-State Transductions With Neural Context Pushpendre Rastogi

- Slides: 29

Weighting Finite-State Transductions With Neural Context Pushpendre Rastogi Ryan Cotterell Jason Eisner


The Setting: string-to-string transduction
- Morphology! break → broken
- Pronunciation! bathe → beð
- Transliteration! Washington → ﻭﺍﺷﻨﻄﻮﻥ
- Segmentation! 日文章魚怎麼說 → 日文 章魚 怎麼 說
- Tagging! Time flies like an arrow → N V P D N
- Supertagging!


The Setting: string-to-string transduction
- The Cowboys: Finite-state transducers
- The Aliens: seq2seq models (recurrent neural nets)

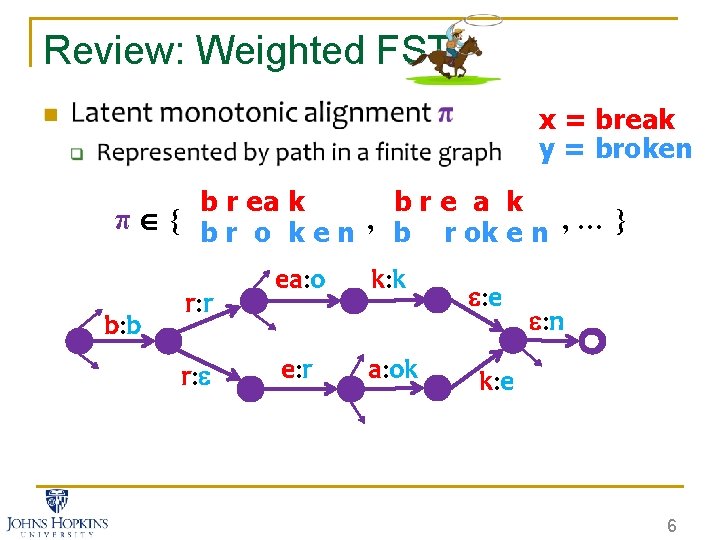

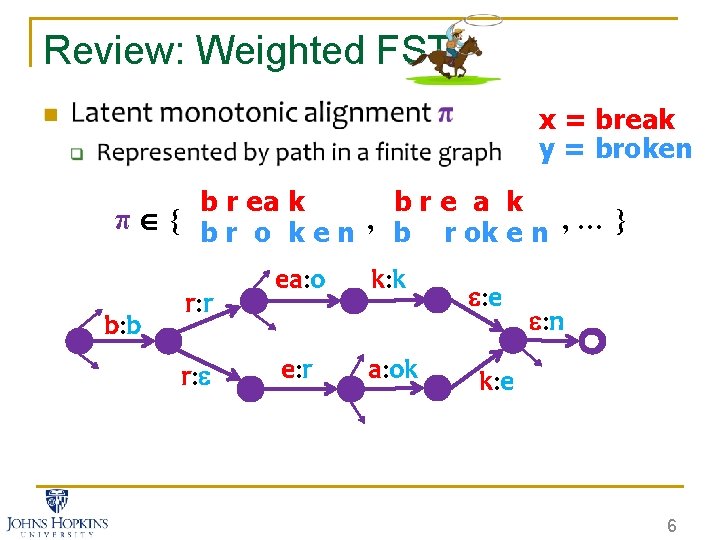

Review: Weighted FST
- x = break, y = broken
- [Figure: x segmented as b r ea k or bre a k; π { b r o k e n, b r ok e n, … }; an FST whose arcs are labeled input:output, e.g. b:b, r:r, ea:o, k:k, a:ok]

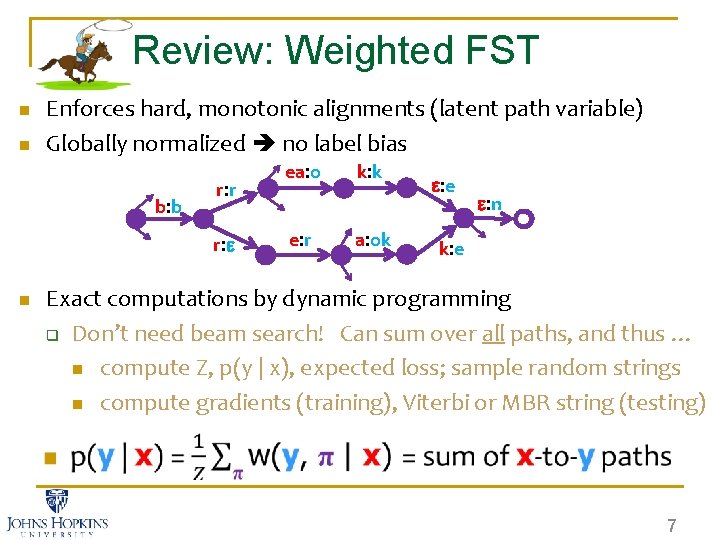

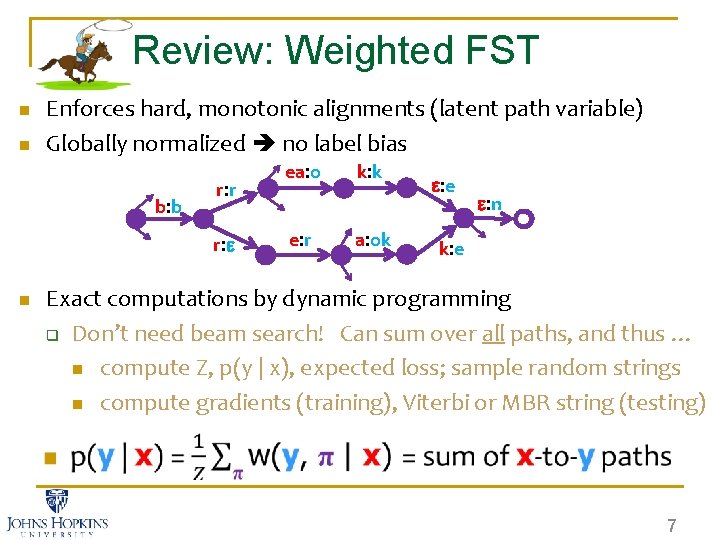

Review: Weighted FST
- Enforces hard, monotonic alignments (latent path variable)
- Globally normalized, so no label bias
- Exact computations by dynamic programming
  - Don’t need beam search! Can sum over all paths, and thus …
  - compute Z, p(y | x), expected loss; sample random strings
  - compute gradients (training), Viterbi or MBR string (testing)
- [Figure: example FST for break → broken]
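The "sum over all paths" claim can be made concrete on a toy acyclic lattice. The sketch below is illustrative only: the lattice `ARCS`, its weights, and the function names `total_weight` and `best_path` are assumptions made for this example, not taken from the talk. It assumes states are integers numbered in topological order.

```python
from collections import defaultdict

# Hypothetical toy lattice: (source, target, label, weight).
# States are integers in topological order; 0 is initial, 3 is final.
ARCS = [
    (0, 1, "b:b", 2.0),
    (0, 1, "b:p", 1.0),
    (1, 2, "r:r", 3.0),
    (1, 3, "r:ε", 0.5),
    (2, 3, "k:k", 1.0),
    (2, 3, "k:e", 4.0),
]

def total_weight(arcs, start, final):
    """Z: the sum over all start-to-final paths of the product of arc weights."""
    alpha = defaultdict(float)
    alpha[start] = 1.0
    # Visiting arcs by increasing source state guarantees alpha[src] is
    # complete before it is used (every arc goes from a lower to a higher state).
    for src, dst, _label, w in sorted(arcs, key=lambda a: a[0]):
        alpha[dst] += alpha[src] * w
    return alpha[final]

def best_path(arcs, start, final):
    """Viterbi: the single highest-weight path, as (weight, list of labels)."""
    best = {start: (1.0, [])}
    for src, dst, label, w in sorted(arcs, key=lambda a: a[0]):
        if src not in best:
            continue  # source state is unreachable
        score, labels = best[src]
        candidate = (score * w, labels + [label])
        if dst not in best or candidate[0] > best[dst][0]:
            best[dst] = candidate
    return best[final]

Z = total_weight(ARCS, 0, 3)
weight, labels = best_path(ARCS, 0, 3)
print(Z, weight, labels, weight / Z)
```

Both functions visit each arc once, so the cost is linear in the number of arcs even though the number of paths can grow exponentially with the length of the lattice.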

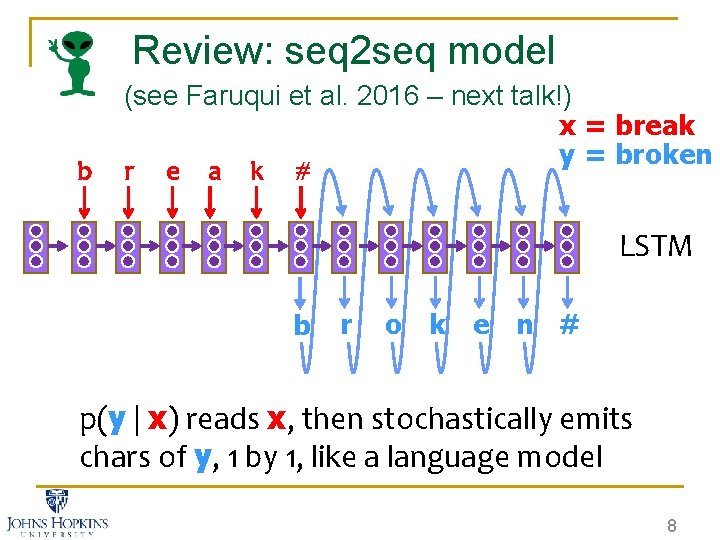

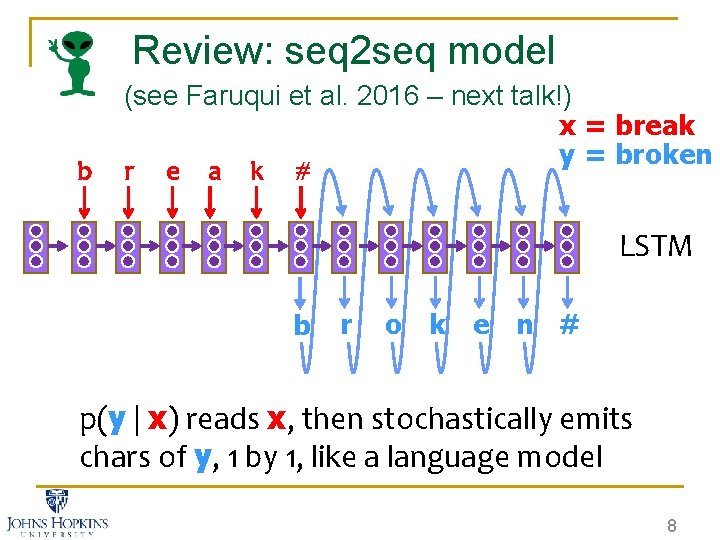

Review: seq2seq model (see Faruqui et al. 2016, the next talk!)
- x = break, y = broken
- An LSTM reads b r e a k #, then emits b r o k e n #
- p(y | x): reads x, then stochastically emits chars of y, 1 by 1, like a language model


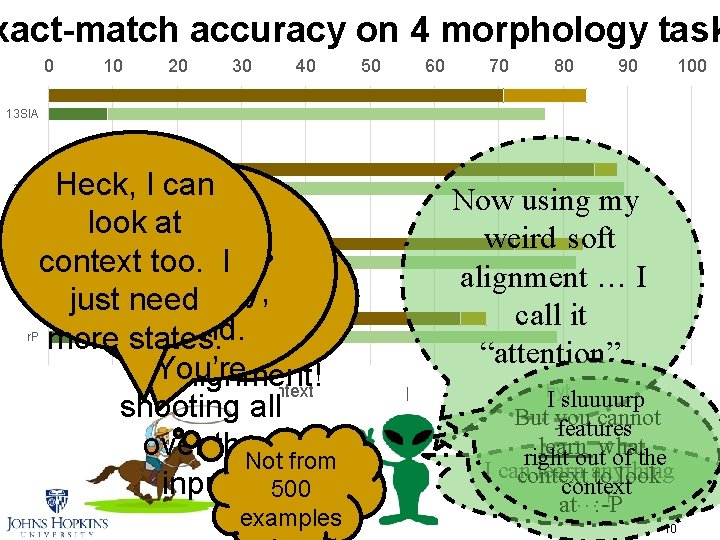

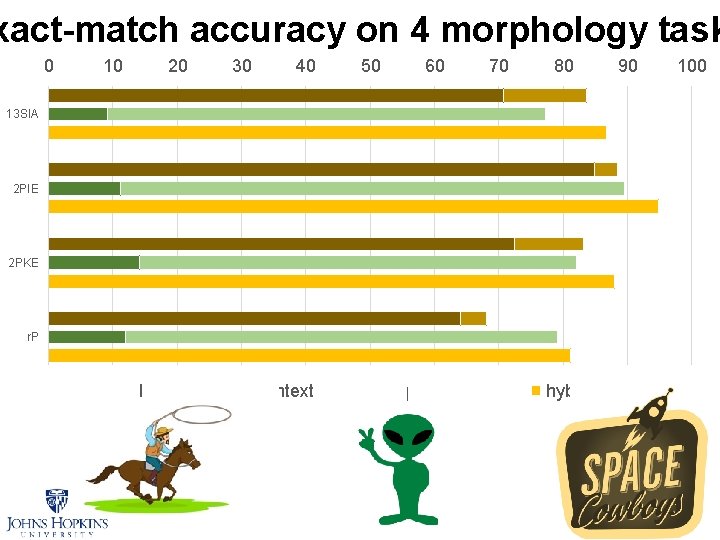

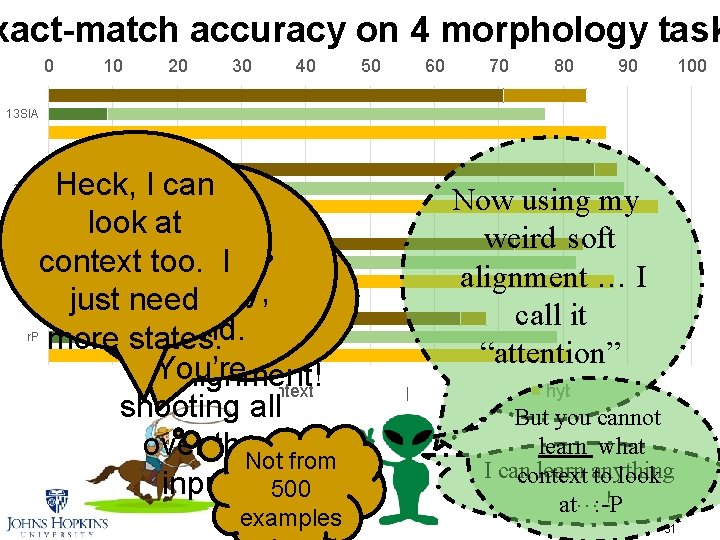

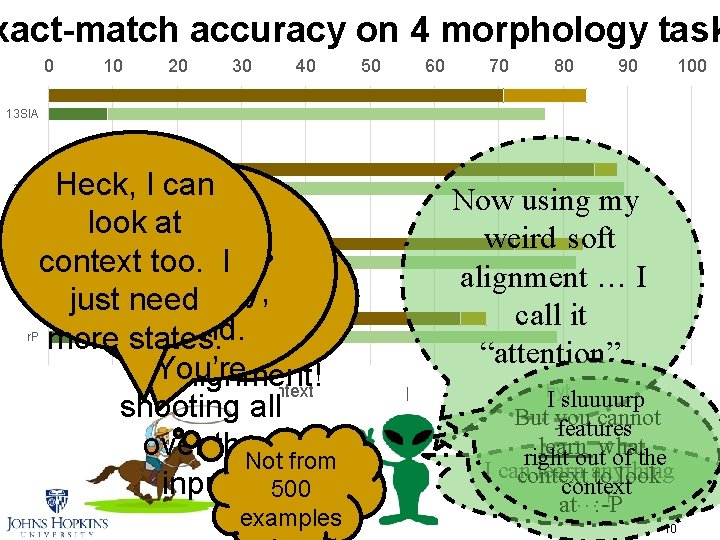

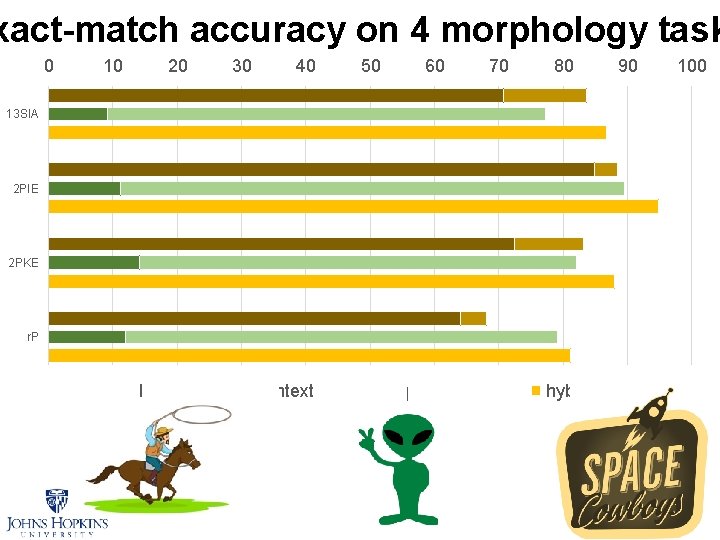

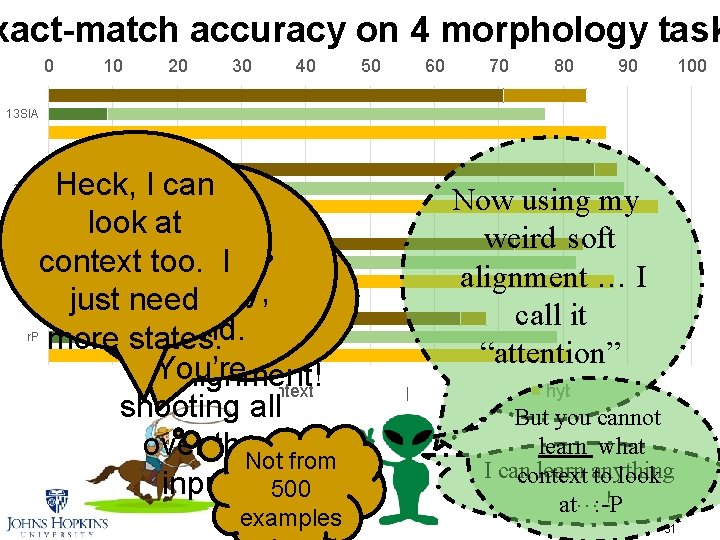

Exact-match accuracy on 4 morphology tasks
- [Figure: bar chart with an accuracy axis from 0 to 100; tasks 13SIA, 2PIE, 2PKE, rP; systems: FST + local context, seq2seq, + attention, hybrid]
- Speech bubbles on the chart:
  - “Ha! You ain’t got any alignment!”
  - “Now using my weird soft alignment … I call it ‘attention’”
  - “Your attention’s unsteady, friend. You’re shooting all over the input.”
  - “Heck, I can look at context too. I just need more states.”
  - “I sluuuurp features right out of the context!”
  - “But you cannot learn what context to look at… :-P”
  - “I can learn anything!”
  - “Not from 500 examples.”

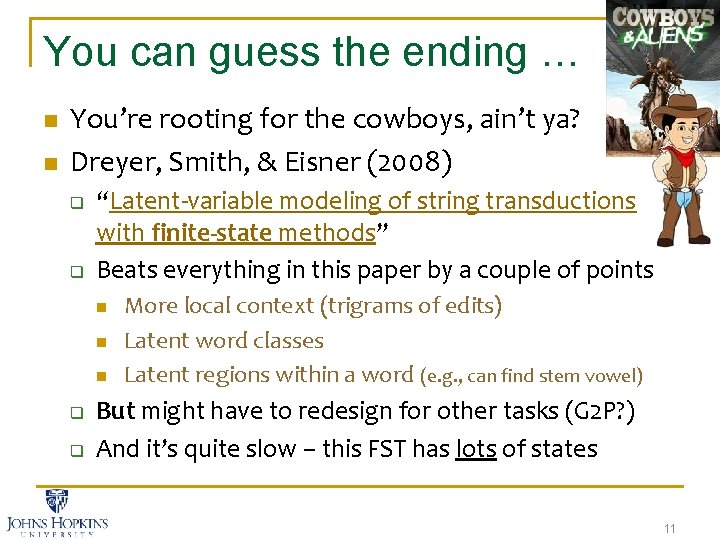

You can guess the ending …
- You’re rooting for the cowboys, ain’t ya?
- Dreyer, Smith, & Eisner (2008)
  - “Latent-variable modeling of string transductions with finite-state methods”
  - Beats everything in this paper by a couple of points
    - More local context (trigrams of edits)
    - Latent word classes
    - Latent regions within a word (e.g., can find stem vowel)
  - But might have to redesign for other tasks (G2P?)
  - And it’s quite slow: this FST has lots of states

The Alternate Ending …

How do we give a cowboy alien genes?
- First, we’ll need to upgrade our cowboy.
- The new weapon comes from CRFs.
  - Discriminative training? Already doing it.
  - Global normalization? Already doing it.
  - Conditioning on entire input (like seq2seq)? Aha!

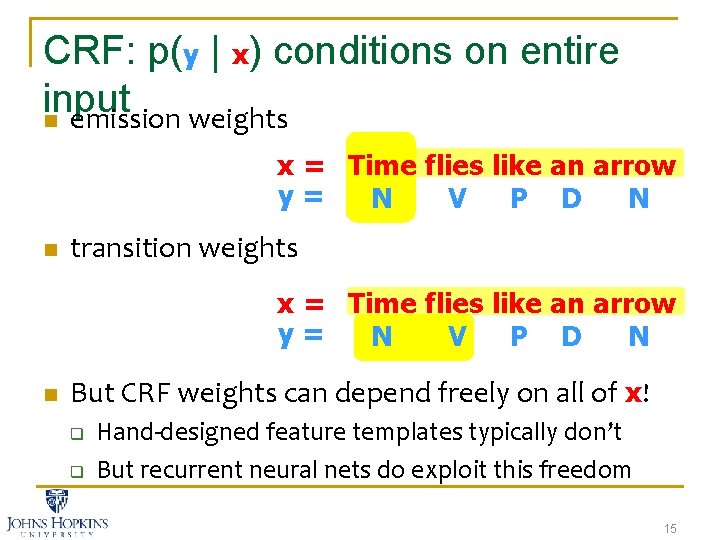

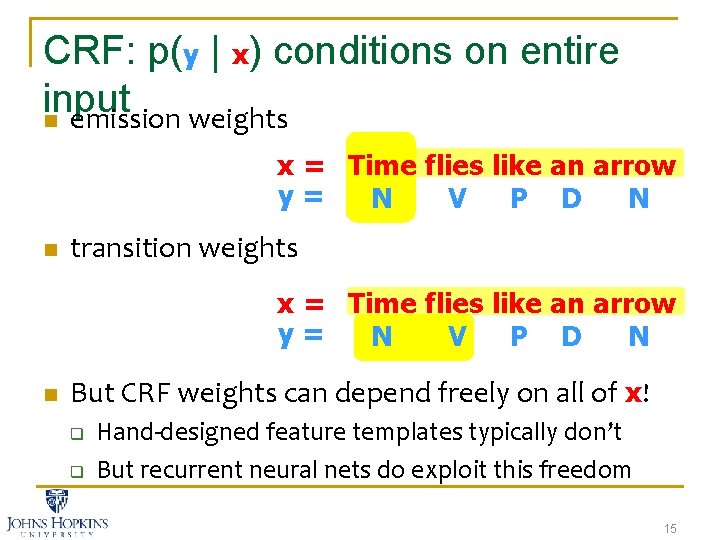

CRF: p(y | x) conditions on entire input
- emission weights: x = Time flies like an arrow, y = N V P D N
- transition weights: x = Time flies like an arrow, y = N V P D N
- But CRF weights can depend freely on all of x!
  - Hand-designed feature templates typically don’t
  - But recurrent neural nets do exploit this freedom
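In symbols, one standard way to write a linear-chain CRF of this kind is given below. The potential names $\psi_{\text{emit}}$ and $\psi_{\text{trans}}$, the length $n$, and the start symbol $y_0$ are notation assumed here for illustration; they do not appear on the slides. The point to notice is that both potentials take the whole input $x$ and the position $t$ as arguments.

$$
p(y \mid x) = \frac{1}{Z(x)} \prod_{t=1}^{n} \psi_{\text{emit}}(y_t, x, t)\, \psi_{\text{trans}}(y_{t-1}, y_t, x, t),
\qquad
Z(x) = \sum_{y'} \prod_{t=1}^{n} \psi_{\text{emit}}(y'_t, x, t)\, \psi_{\text{trans}}(y'_{t-1}, y'_t, x, t)
$$

Because each factor still touches only $y_{t-1}$ and $y_t$, the sum in $Z(x)$ can be computed by dynamic programming no matter how the potentials use $x$.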

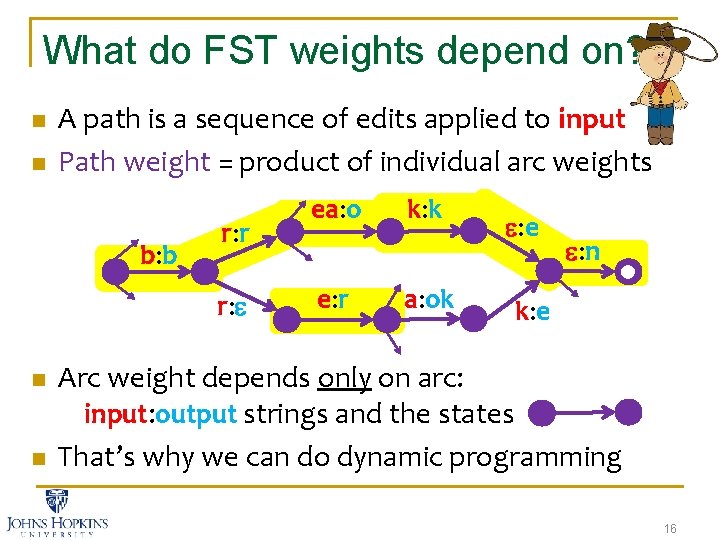

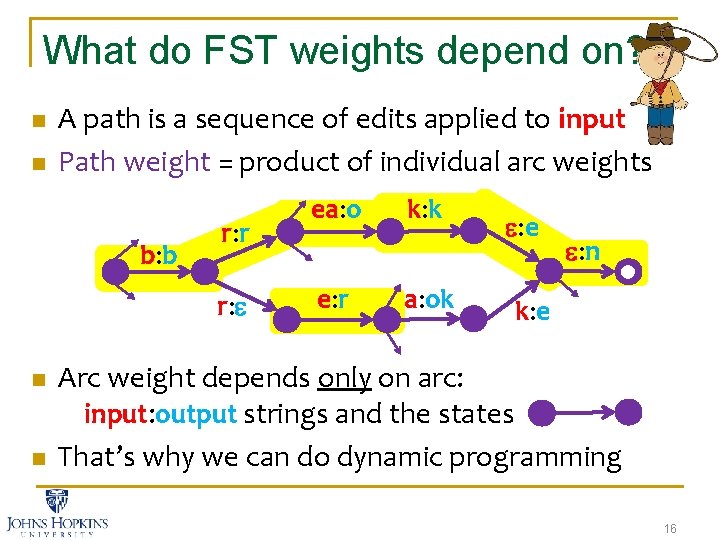

What do FST weights depend on?
- A path is a sequence of edits applied to input
- Path weight = product of individual arc weights
- Arc weight depends only on arc: input:output strings and the states
- That’s why we can do dynamic programming
- [Figure: example FST for break → broken]
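Written out, the quantities on this slide look roughly as follows. The symbols are assumptions introduced for this sketch: $\Pi(x)$ is the set of accepting paths that read $x$, $\Pi(x, y)$ is the subset that also writes $y$, $w(a)$ is the weight of arc $a$, and $q_0$ is the initial state.

$$
w(\pi) = \prod_{a \in \pi} w(a), \qquad
Z(x) = \sum_{\pi \in \Pi(x)} w(\pi), \qquad
p(y \mid x) = \frac{1}{Z(x)} \sum_{\pi \in \Pi(x, y)} w(\pi)
$$

Since each arc weight depends only on the arc itself, the sum over paths factors state by state. On an acyclic lattice this gives the forward recurrence

$$
\alpha(q_0) = 1, \qquad
\alpha(q') = \sum_{a \,:\, q \to q'} \alpha(q)\, w(a),
$$

and $Z(x)$ is the sum of $\alpha(q)$ over final states $q$.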

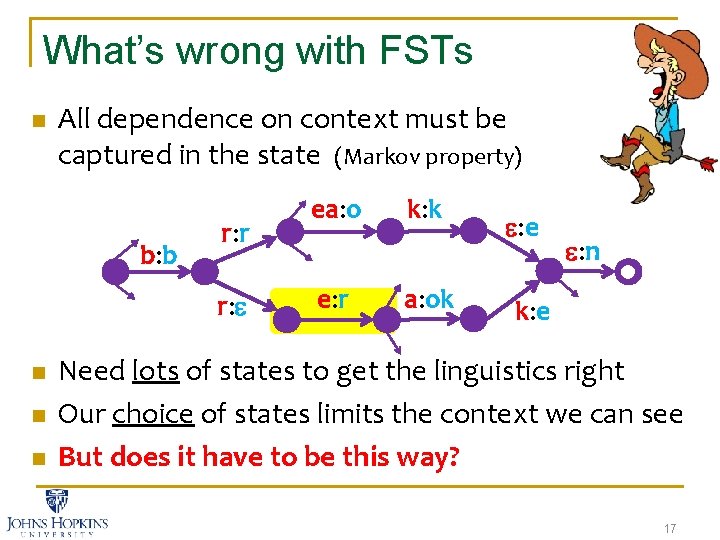

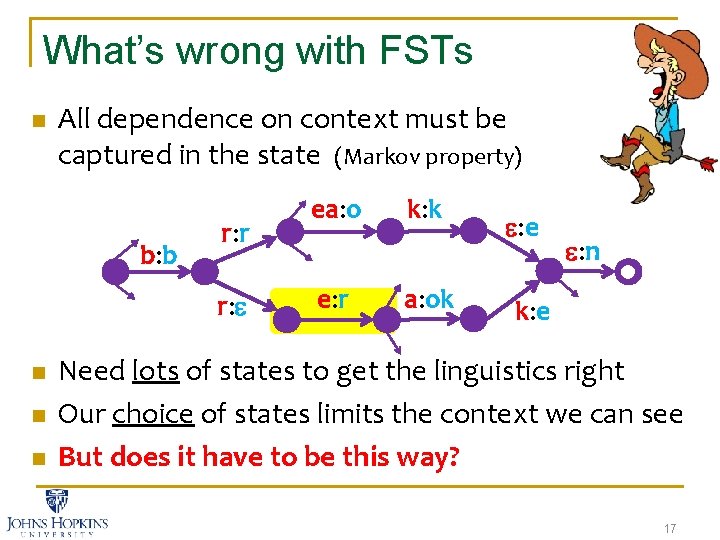

What’s wrong with FSTs?
- All dependence on context must be captured in the state (Markov property)
- Need lots of states to get the linguistics right
- Our choice of states limits the context we can see
- But does it have to be this way?
- [Figure: example FST for break → broken]

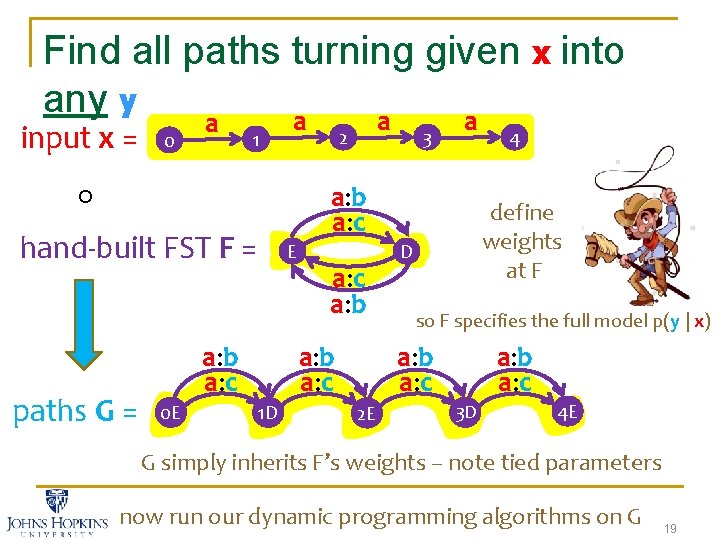

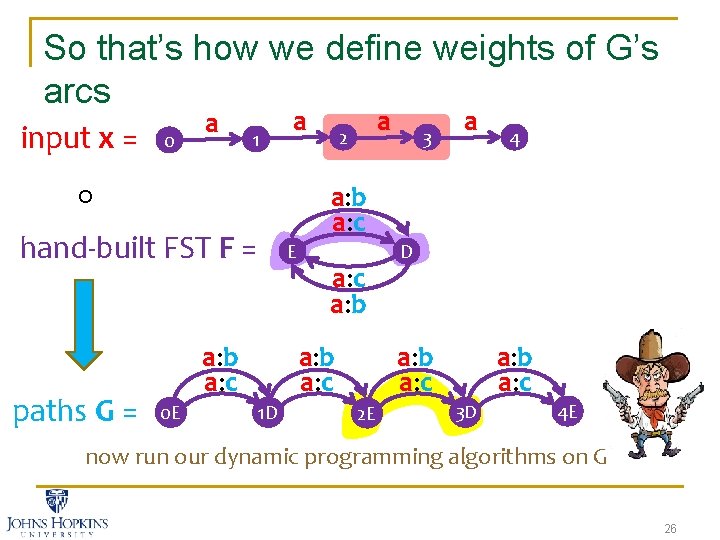

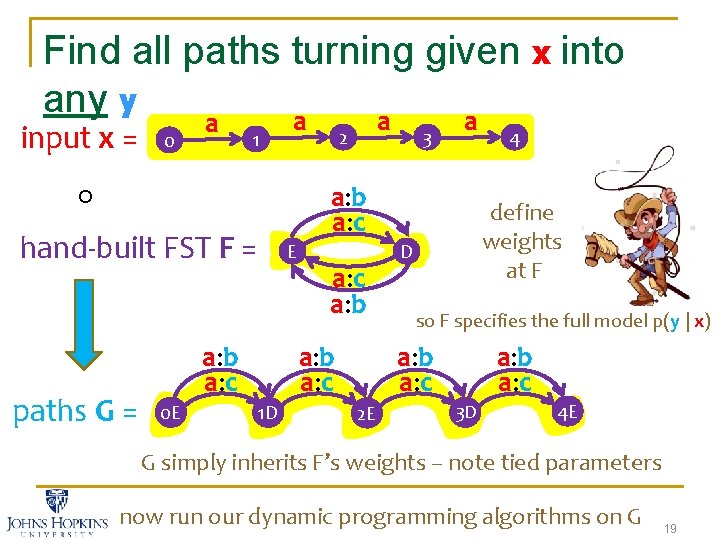

Find all paths turning given x into any y
- input x: a string of a’s, drawn as a chain of arcs labeled a between numbered positions
- hand-built FST F: states D and E, arcs a:b and a:c
- paths G: each state pairs a position in x with a state of F (0E, 1D, 2E, 3D, 4E); arcs a:b and a:c
- define weights at F, so F specifies the full model p(y | x)
- G simply inherits F’s weights (note tied parameters)
- now run our dynamic programming algorithms on G
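The construction of G can be sketched in a few lines. Everything named here is an assumption made for illustration: the arc weights in `F_ARCS`, the start state E, and the helpers `build_lattice` and `lattice_total`. The sketch also assumes every arc of F reads exactly one input symbol, which is simpler than a general FST with multi-character or empty inputs.

```python
# Hypothetical hand-built FST F over states "D" and "E":
# (source state, input symbol, output symbol, target state, weight).
F_ARCS = [
    ("E", "a", "b", "D", 2.0),
    ("E", "a", "c", "D", 1.0),
    ("D", "a", "b", "E", 3.0),
    ("D", "a", "c", "E", 1.0),
]

def build_lattice(x, f_arcs, f_start):
    """Arcs of G. A state of G is a pair (position in x, state of F)."""
    g_arcs = []
    frontier = {f_start}  # F states reachable at the current position
    for i, symbol in enumerate(x):
        next_frontier = set()
        for src, inp, out, dst, w in f_arcs:
            if src in frontier and inp == symbol:
                # The arc of G inherits the weight of the arc of F,
                # so the same parameter is reused at every position.
                g_arcs.append(((i, src), (i + 1, dst), out, w))
                next_frontier.add(dst)
        frontier = next_frontier
    return g_arcs

def lattice_total(g_arcs, start, finals):
    """Sum of path weights in G; arcs arrive ordered by input position."""
    alpha = {start: 1.0}
    for src, dst, _out, w in g_arcs:
        alpha[dst] = alpha.get(dst, 0.0) + alpha.get(src, 0.0) * w
    return sum(alpha.get(q, 0.0) for q in finals)

G = build_lattice("aaaa", F_ARCS, "E")
states = sorted({s for arc in G for s in arc[:2]})
print(states)
print(lattice_total(G, (0, "E"), [(4, "E")]))
```

With the input `"aaaa"` the reachable states of G are the position/state pairs 0E, 1D, 2E, 3D, 4E, matching the labels in the slide's figure.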

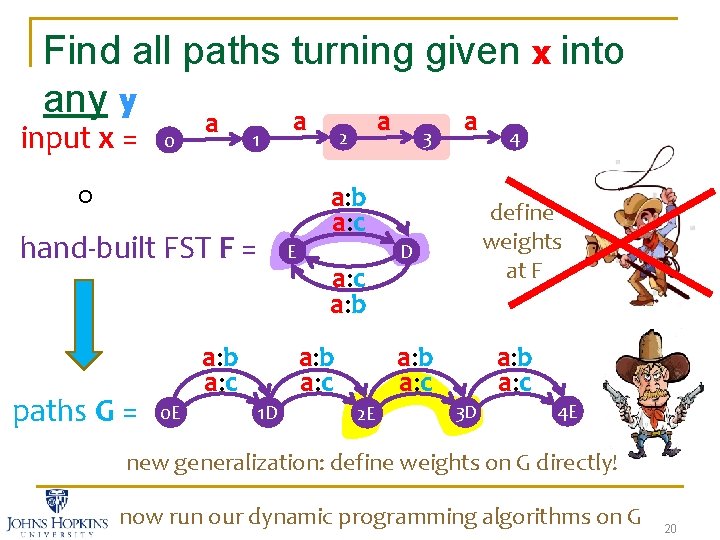

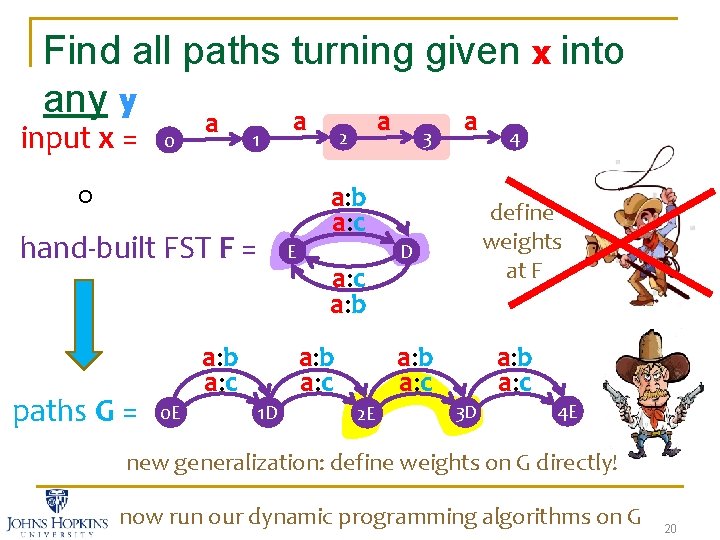

Find all paths turning given x into any y
- input x: a string of a’s, drawn as a chain of arcs labeled a between numbered positions
- hand-built FST F: states D and E, arcs a:b and a:c
- paths G: each state pairs a position in x with a state of F (0E, 1D, 2E, 3D, 4E); arcs a:b and a:c
- define weights at F
- new generalization: define weights on G directly!
- now run our dynamic programming algorithms on G

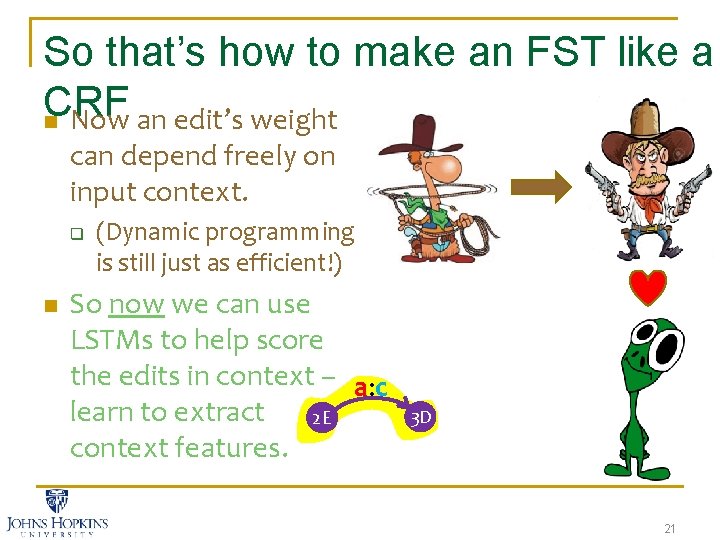

So that’s how to make an FST like a CRF
- Now an edit’s weight can depend freely on input context.
  - (Dynamic programming is still just as efficient!)
- So now we can use LSTMs to help score the edits in context: learn to extract context features.
- [Figure: the arc a:c from state 2E to state 3D of G]
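A minimal sketch of why the dynamic program is unaffected: the weight function below looks at the whole input x and the span the edit covers, yet the forward pass is the usual one. The feature rules inside `arc_weight`, the two-state alternating machine `NEXT_STATE`, and all function names are toy assumptions for this example, standing in for whatever learned scorer is actually used.

```python
import math

OUTPUTS = ["b", "c"]               # each edit rewrites one input symbol as b or c
NEXT_STATE = {"E": "D", "D": "E"}  # hypothetical two-state F that alternates

def arc_weight(x, i, j, out):
    """Toy context-dependent weight for the edit that rewrites x[i:j] as `out`."""
    left, right = x[:i], x[j:]     # the edit may inspect all of x
    score = 0.0
    if out == "b" and len(left) == 0:
        score += 1.0               # prefer b at the very start of the input
    if out == "c" and len(right) == 0:
        score += 1.0               # prefer c at the very end of the input
    return math.exp(score)

def build_lattice(x, f_start="E"):
    """Arcs of G with weights defined on G directly, plus G's final state."""
    g_arcs, state = [], f_start
    for i in range(len(x)):
        for out in OUTPUTS:
            w = arc_weight(x, i, i + 1, out)
            g_arcs.append(((i, state), (i + 1, NEXT_STATE[state]), out, w))
        state = NEXT_STATE[state]
    return g_arcs, (len(x), state)

def forward(g_arcs, start):
    """The same forward pass as for tied weights: one visit per arc."""
    alpha = {start: 1.0}
    for src, dst, _out, w in g_arcs:
        alpha[dst] = alpha.get(dst, 0.0) + alpha[src] * w
    return alpha

g_arcs, final = build_lattice("aa")
Z = forward(g_arcs, (0, "E"))[final]
print(Z)
```

The two arcs leaving position 0 now carry different weights from the two arcs leaving position 1, even though they correspond to the same edits in F.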

Cowboy + Alien = ?

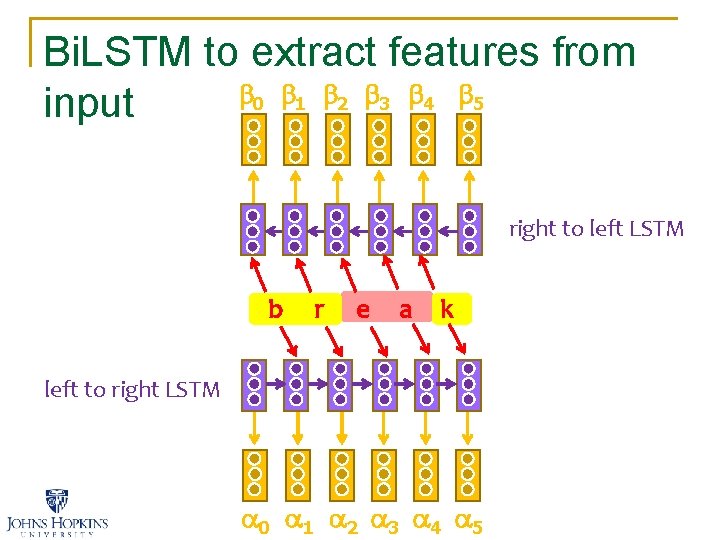

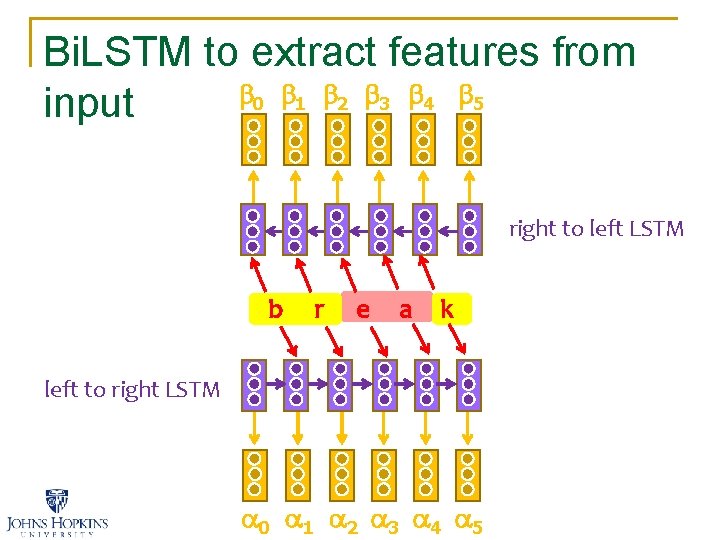

BiLSTM to extract features from input
- [Figure: a right-to-left LSTM and a left-to-right LSTM run over the input b r e a k, with positions 0 to 5 marked on each]

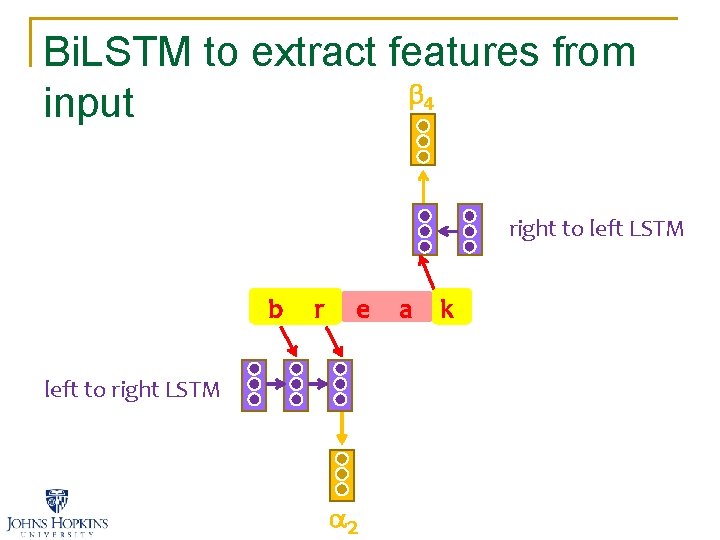

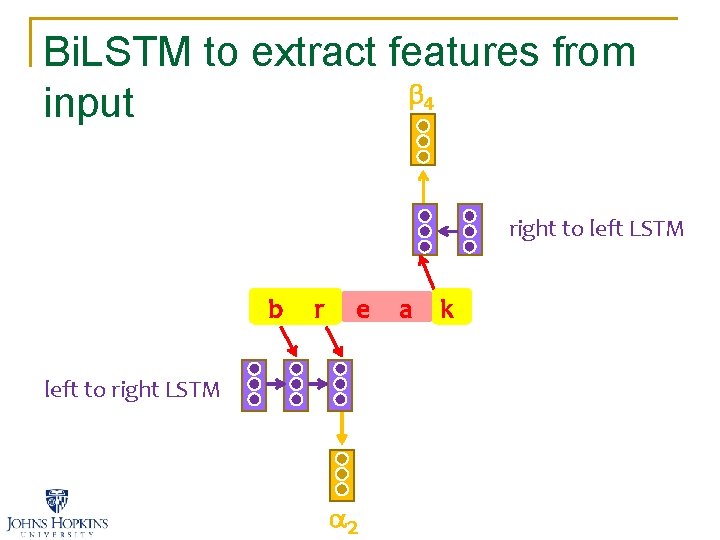

BiLSTM to extract features from input
- [Figure: the same two LSTMs over b r e a k, highlighting position 4 on the right-to-left LSTM and position 2 on the left-to-right LSTM]
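To show how one state per position arises from reading the input in both directions, here is a small pure-Python sketch. It deliberately uses a plain tanh recurrent cell as a stand-in, not an LSTM, and its parameters are random rather than learned; the names `make_params`, `step`, `run`, and `bidirectional` and the dimension `DIM` are all assumptions for this example.

```python
import math
import random

random.seed(0)
DIM = 2  # hypothetical state size

def rand_vec(n):
    return [random.uniform(-0.5, 0.5) for _ in range(n)]

def make_params(alphabet):
    """Random parameters for one direction (a trained model would learn these)."""
    return {
        "embed": {ch: rand_vec(DIM) for ch in alphabet},
        "w_in": [rand_vec(DIM) for _ in range(DIM)],
        "w_rec": [rand_vec(DIM) for _ in range(DIM)],
    }

def step(p, h, ch):
    """One step of a plain tanh recurrent cell (a stand-in for an LSTM cell)."""
    e = p["embed"][ch]
    return [
        math.tanh(sum(a * b for a, b in zip(p["w_in"][k], e))
                  + sum(a * b for a, b in zip(p["w_rec"][k], h)))
        for k in range(DIM)
    ]

def run(p, chars):
    """States before any character and after each one: len(chars) + 1 vectors."""
    states = [[0.0] * DIM]
    for ch in chars:
        states.append(step(p, states[-1], ch))
    return states

FWD_PARAMS = make_params("break")
BWD_PARAMS = make_params("break")

def bidirectional(x):
    fwd = run(FWD_PARAMS, x)              # fwd[i] summarizes x[:i]
    bwd = run(BWD_PARAMS, x[::-1])[::-1]  # bwd[i] summarizes x[i:], read right to left
    return fwd, bwd

fwd, bwd = bidirectional("break")
print(len(fwd), len(bwd))  # one state per position 0..5 in each direction
```

Under this indexing, `fwd[2]` has read "br" and `bwd[4]` has read "k", which are the two positions highlighted in the figure.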

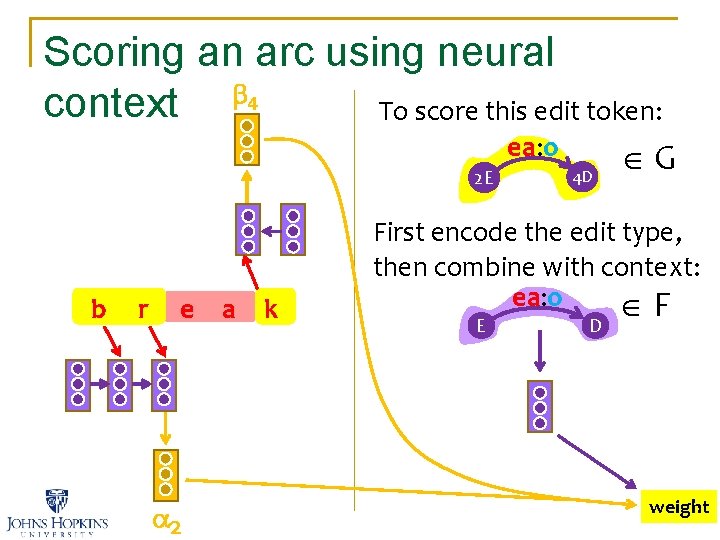

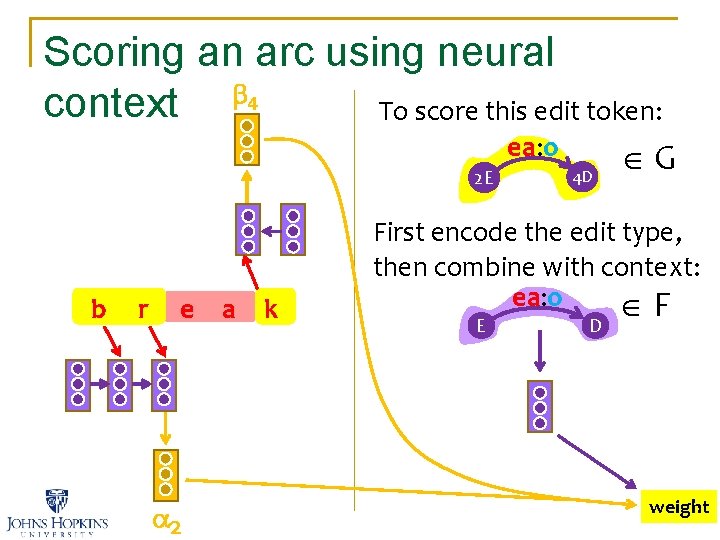

Scoring an arc using neural context
- To score this edit token: the arc ea:o in G, from state 2E to state 4D, over the input b r e a k
- First encode the edit type (the arc ea:o in F, from E to D), then combine with context to get the weight
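One way the two steps could be wired together is sketched below. The slides do not specify the network, so the architecture here (an edit embedding concatenated with two context vectors, one tanh layer, and an exponential to keep the weight positive) is an assumption, as are the names `EDIT_EMBEDDING`, `W`, `V`, and `score_arc`. The context vectors are random stand-ins for BiLSTM states.

```python
import math
import random

random.seed(0)
DIM = 3  # hypothetical size of every vector in this sketch

def rand_vec(n):
    return [random.uniform(-1.0, 1.0) for _ in range(n)]

# Hypothetical parameters; in a real system these would be learned.
EDIT_EMBEDDING = {"ea:o": rand_vec(DIM), "k:k": rand_vec(DIM)}
W = [rand_vec(3 * DIM) for _ in range(DIM)]  # hidden layer: 3*DIM inputs, DIM units
V = rand_vec(DIM)                            # output layer: DIM units to one score

def score_arc(edit, left_ctx, right_ctx):
    """Positive weight for one arc of G from its edit type and two context vectors."""
    # Step 1: encode the edit type. Step 2: combine it with the context.
    features = EDIT_EMBEDDING[edit] + left_ctx + right_ctx  # list concatenation
    hidden = [math.tanh(sum(w * f for w, f in zip(row, features))) for row in W]
    return math.exp(sum(v * h for v, h in zip(V, hidden)))

# Stand-ins for BiLSTM states over x = "break": one vector per position 0..5.
left_to_right = [rand_vec(DIM) for _ in range(6)]
right_to_left = [rand_vec(DIM) for _ in range(6)]

# The edit ea:o consumes x[2:4], so it is scored with the left-to-right
# state at position 2 and the right-to-left state at position 4.
weight = score_arc("ea:o", left_to_right[2], right_to_left[4])
print(weight)
```

The same edit type scored at a different span would reuse `EDIT_EMBEDDING["ea:o"]` but see different context vectors, so its weight can differ from position to position.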

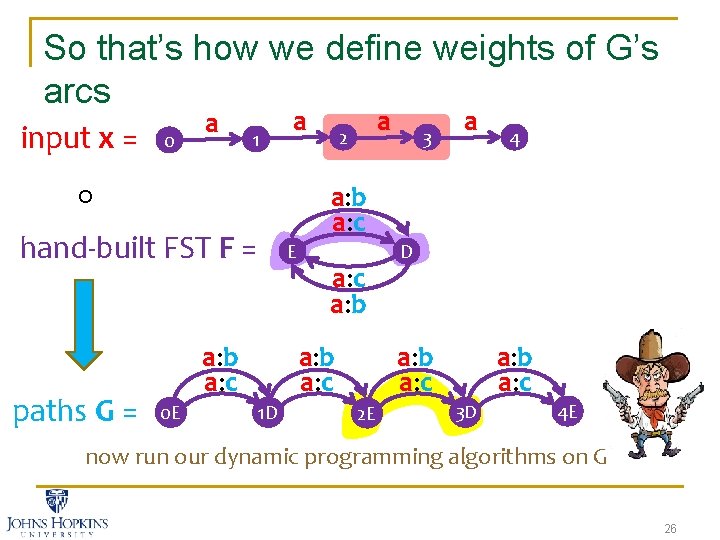

So that’s how we define weights of G’s arcs
- input x: a a a a (positions 0 to 4)
- hand-built FST F: states D and E, arcs a:b and a:c
- paths G: states 0E, 1D, 2E, 3D, 4E; arcs a:b and a:c
- now run our dynamic programming algorithms on G


Exact-match accuracy on 4 morphology tasks
- [Figure: bar chart with an accuracy axis from 0 to 100; tasks 13SIA, 2PIE, 2PKE, rP; systems: FST + local context, seq2seq, + attention, hybrid]

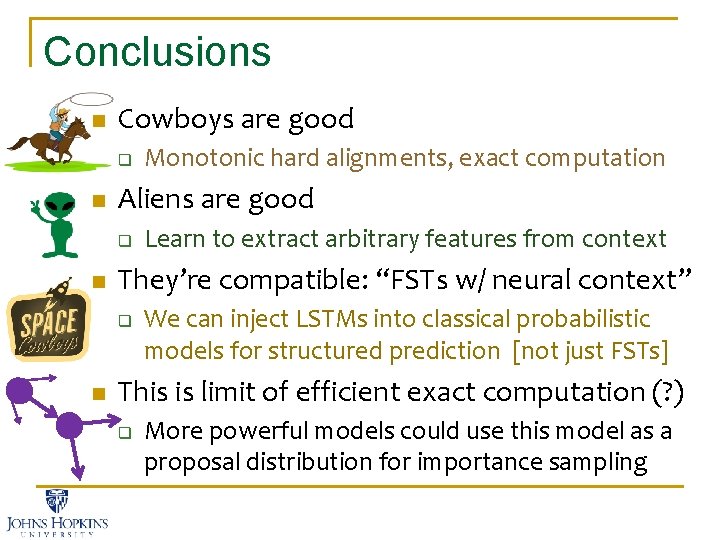

Conclusions
- Cowboys are good
  - Monotonic hard alignments, exact computation
- Aliens are good
  - Learn to extract arbitrary features from context
- They’re compatible: “FSTs w/ neural context”
  - We can inject LSTMs into classical probabilistic models for structured prediction [not just FSTs]
- This is the limit of efficient exact computation (?)
  - More powerful models could use this model as a proposal distribution for importance sampling

Questions?
