Weight Learning (Slides by Daniel Lowd)

Overview

- Generative
- Discriminative
  - Gradient descent
  - Diagonal Newton
  - Conjugate gradient
- Missing data
- Empirical comparison

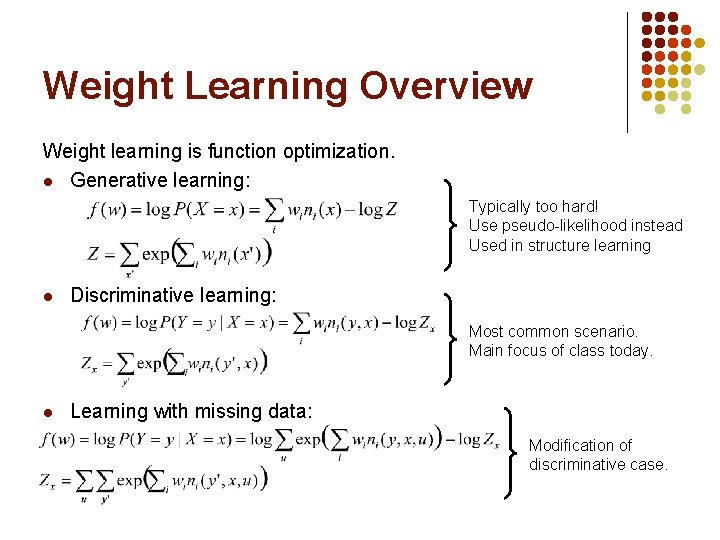

Weight Learning Overview

Weight learning is function optimization.

- Generative learning: typically too hard! Use pseudo-likelihood instead. Used in structure learning.
- Discriminative learning: most common scenario. Main focus of class today.
- Learning with missing data: modification of the discriminative case.

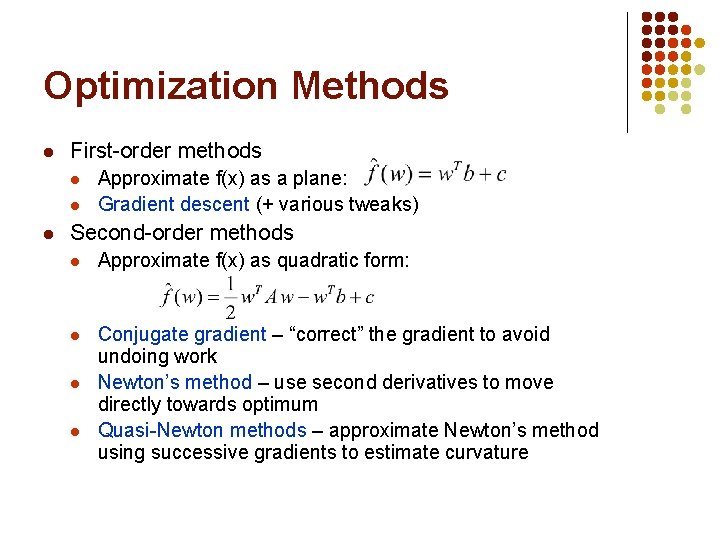

Optimization Methods

- First-order methods approximate f(x) as a plane:
  - Gradient descent (+ various tweaks)
- Second-order methods approximate f(x) as a quadratic form:
  - Conjugate gradient: "correct" the gradient to avoid undoing work
  - Newton's method: use second derivatives to move directly towards the optimum
  - Quasi-Newton methods: approximate Newton's method using successive gradients to estimate curvature

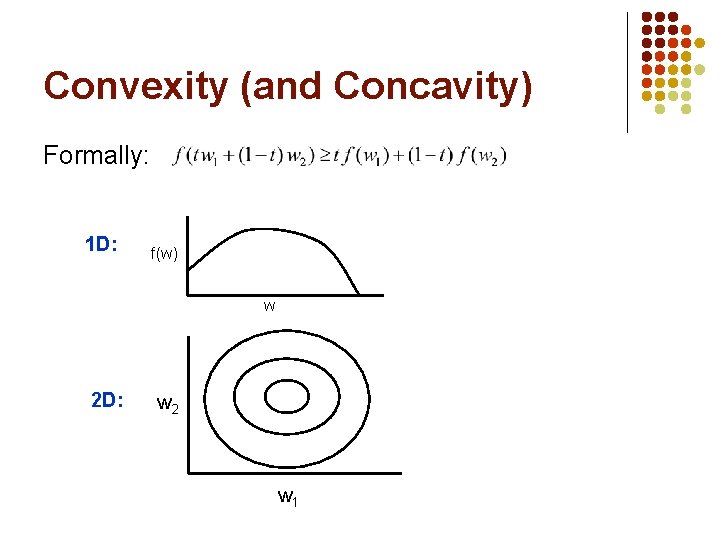

Convexity (and Concavity)

[Slide content: formal definition, a 1D plot of f(w) against w, and a 2D plot over w1 and w2]
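For reference, the standard textbook definition can be written as below. This is not a transcription of the slide; the symbols $w_1$, $w_2$ and $\theta$ are chosen here for illustration.

$$
f(\theta w_1 + (1 - \theta) w_2) \le \theta f(w_1) + (1 - \theta) f(w_2)
\quad \text{for all } w_1, w_2 \text{ and all } \theta \in [0, 1]
$$

A function $f$ is concave when $-f$ is convex, so maximizing a concave function and minimizing a convex one are the same problem up to a sign.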

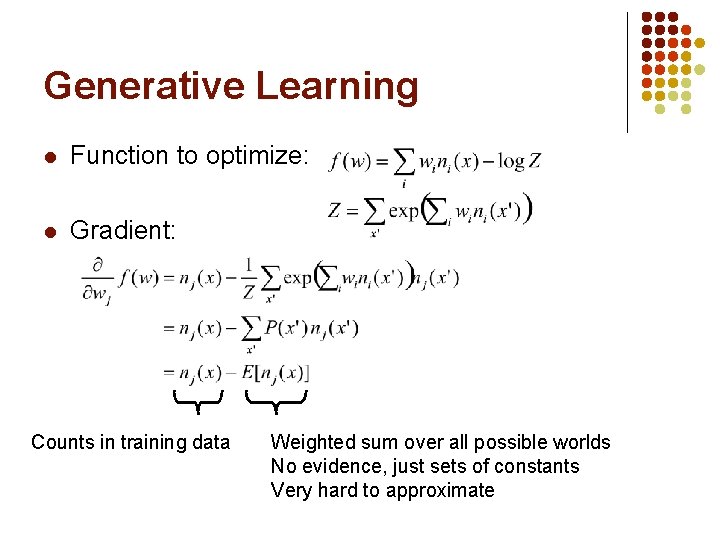

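Generative Learning

- Function to optimize: $\log P_w(X = x) = \sum_j w_j n_j(x) - \log Z$
- Gradient: $\frac{\partial}{\partial w_j} \log P_w(X = x) = n_j(x) - E_w[n_j(x)]$
  - $n_j(x)$: counts in training data
  - $E_w[n_j(x)]$: weighted sum over all possible worlds. No evidence, just sets of constants. Very hard to approximate.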
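A minimal sketch of why the second gradient term is the expensive one: on a toy model with three binary variables, the expected counts can be computed exactly by summing over all 8 worlds, but the number of worlds doubles with every added ground atom. The three features in `feature_counts` are hypothetical and exist only for this illustration.

```python
import itertools
import math

def feature_counts(world):
    """Hypothetical counts n_j(x) for a world of three binary variables."""
    a, b, c = world
    return [
        float(a and b),   # n_0: A and B both true
        float(b or c),    # n_1: B or C true
        float(a != c),    # n_2: A and C differ
    ]

def log_likelihood_gradient(weights, data_world):
    """Return n_j(x) - E_w[n_j] by enumerating every possible world."""
    worlds = list(itertools.product([0, 1], repeat=3))
    scores = [
        math.exp(sum(w * n for w, n in zip(weights, feature_counts(x))))
        for x in worlds
    ]
    z = sum(scores)  # partition function Z
    expected = [0.0] * len(weights)
    for x, s in zip(worlds, scores):
        for j, n in enumerate(feature_counts(x)):
            expected[j] += (s / z) * n
    observed = feature_counts(data_world)
    return [o - e for o, e in zip(observed, expected)]

# With all weights at zero, every world is equally likely.
grad = log_likelihood_gradient([0.0, 0.0, 0.0], (1, 1, 0))
```

At the optimum the gradient is zero, which means the expected counts under the model match the counts in the training data.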

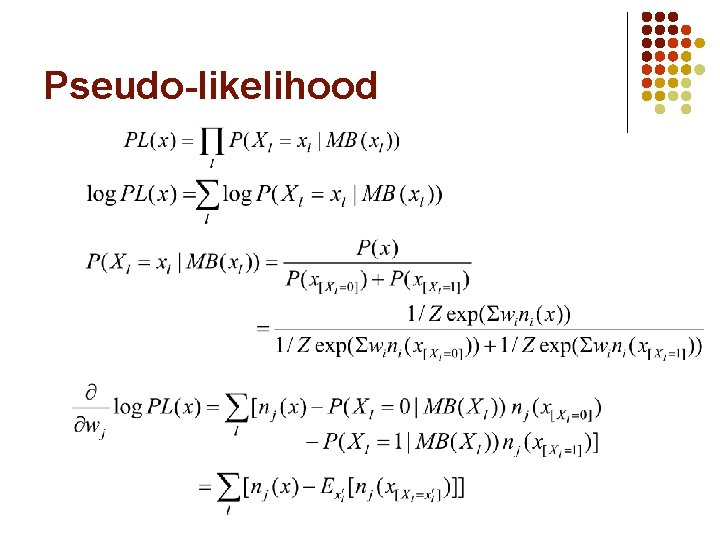

Pseudo-likelihood

Pseudo-likelihood

- Efficiency tricks:
  - Compute each $n_j(x)$ only once
  - Skip formulas in which $x_l$ does not appear
  - Skip groundings of clauses with > 1 true literal, e.g., (A ∨ ¬B ∨ C) when A=1, B=0
- Optimizing pseudo-likelihood:
  - Pseudo-log-likelihood is convex
  - Standard convex optimization algorithms work great (e.g., L-BFGS quasi-Newton method)

Pseudo-likelihood

- Pros
  - Efficient to compute
  - Consistent estimator
- Cons
  - Works poorly with long-range dependencies

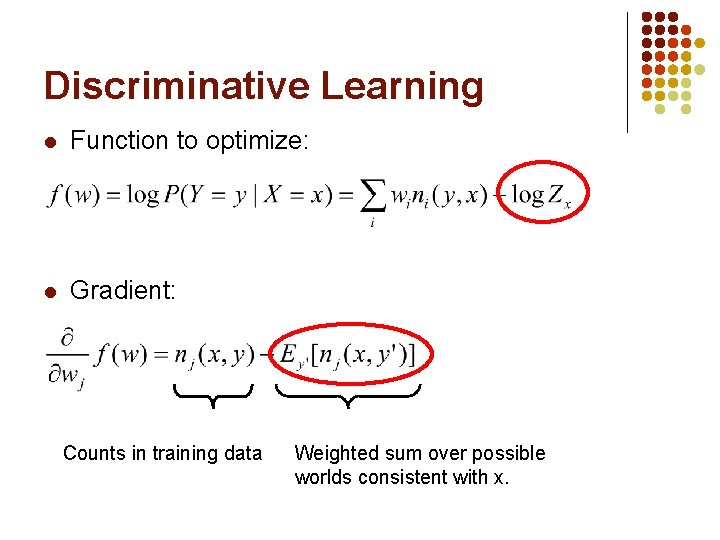

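Discriminative Learning

- Function to optimize: $\log P_w(Y = y \mid X = x) = \sum_j w_j n_j(x, y) - \log Z_x$
- Gradient: $\frac{\partial}{\partial w_j} \log P_w(Y = y \mid X = x) = n_j(x, y) - E_w[n_j(x, y)]$
  - $n_j(x, y)$: counts in training data
  - $E_w[n_j(x, y)]$: weighted sum over possible worlds consistent with x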

![Approximating E[n(x, y)]](http://slidetodoc.com/presentation_image_h2/bb76a3f902e9e4f107ebf148f8125c26/image-11.jpg)

Approximating E[n(x, y)]

- Use the counts of the most likely (MAP) state
  - Approximate with MaxWalkSAT: very efficient
  - Does not represent multi-modal distributions well
- Average over states sampled with MCMC
  - MC-SAT produces weakly correlated samples
  - Just a few samples (5) often suffice! (Contrastive divergence)
- Note that a single complete state may have millions of groundings of a clause! Tied weights allow us to get away with fewer samples.

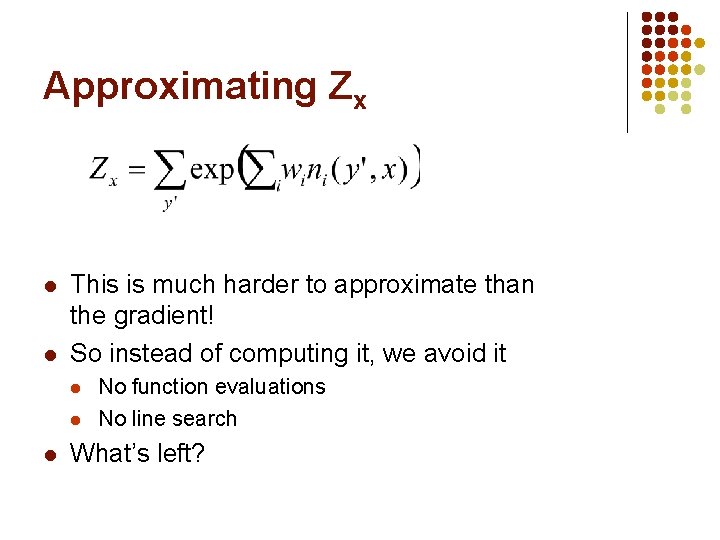

Approximating $Z_x$

- This is much harder to approximate than the gradient!
- So instead of computing it, we avoid it
  - No function evaluations
  - No line search
- What's left?

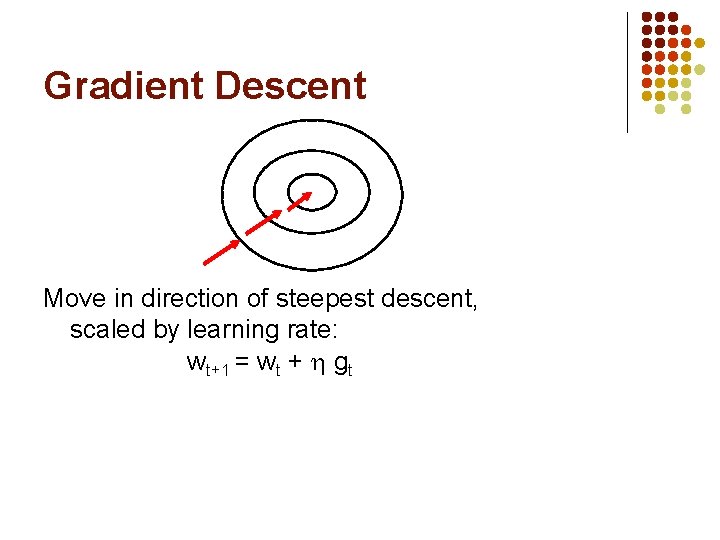

Gradient Descent

Move in direction of steepest descent, scaled by learning rate η: $w_{t+1} = w_t + \eta\, g_t$

![Gradient Descent in MLNs](http://slidetodoc.com/presentation_image_h2/bb76a3f902e9e4f107ebf148f8125c26/image-14.jpg)

Gradient Descent in MLNs

- Voted perceptron [Collins, 2002; Singla & Domingos, 2005]
  - Approximate counts using the MAP state
  - MAP state approximated using MaxWalkSAT
  - Average weights across all learning steps for additional smoothing
- Contrastive divergence [Hinton, 2002; Lowd & Domingos, 2007]
  - Approximate counts from a few MCMC samples
  - MC-SAT gives less correlated samples [Poon & Domingos, 2006]

Per-weight Learning Rates

- Some clauses have vastly more groundings than others
  - Smokes(x) ⇒ Cancer(x)
  - Friends(a, b) ∧ Friends(b, c) ⇒ Friends(a, c)
- Need different learning rate in each dimension
- Impractical to tune rate to each weight by hand
- Learning rate in each dimension is: η / (# true clause groundings)
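The rule in the last bullet can be sketched as follows. The function name, the example numbers, and the `max(n, 1)` guard against clauses with no true groundings are assumptions made for this illustration; the `+` sign follows the update written on the gradient descent slide.

```python
def per_weight_step(weights, gradient, true_groundings, eta=1.0):
    """One gradient step where weight j uses rate eta / (# true groundings of clause j)."""
    return [
        w + (eta / max(n, 1)) * g   # max(n, 1) avoids dividing by zero (assumption)
        for w, g, n in zip(weights, gradient, true_groundings)
    ]

# Two clauses whose gradients differ by a factor of 100 only because
# one clause has 100 times more groundings than the other.
new_w = per_weight_step([0.0, 0.0], [50.0, 5000.0], [100, 10000], eta=1.0)
```

After scaling, both weights move by the same amount, so a single global η no longer has to be small enough for the clause with the most groundings.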

Problem: Ill-Conditioning

- Skewed surface ⇒ slow convergence
- Condition number: (λmax/λmin) of Hessian
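A small sketch of the effect, assuming a diagonal quadratic f(w) = 0.5 Σ eᵢ wᵢ² whose Hessian eigenvalues are the values in `eigs`, minimized by plain gradient descent with step 1/λmax. The function name, tolerance, and starting point are illustrative choices.

```python
def gd_iterations(eigs, tol=1e-6, max_iters=100000):
    """Count gradient descent steps needed on f(w) = 0.5 * sum(e_i * w_i**2)."""
    eta = 1.0 / max(eigs)            # largest step that is safe in every dimension
    w = [1.0] * len(eigs)            # start at (1, ..., 1); the optimum is the origin
    for k in range(max_iters):
        if max(abs(x) for x in w) < tol:
            return k
        # The gradient in dimension i is e_i * w_i.
        w = [x - eta * e * x for x, e in zip(w, eigs)]
    return max_iters

well_conditioned = gd_iterations([1.0, 1.0])     # condition number 1
ill_conditioned = gd_iterations([1.0, 100.0])    # condition number 100
```

The step size is dictated by the steepest dimension, so the flattest dimension shrinks by only a factor of (1 - λmin/λmax) per step; the iteration count grows roughly in proportion to the condition number.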

The Hessian Matrix

- Hessian matrix: all second derivatives
- In an MLN, the Hessian is the negative covariance matrix of clause counts
  - Diagonal entries are clause variances
  - Off-diagonal entries show correlations
- Shows local curvature of the error function

Newton's Method

- Weight update: $w = w + H^{-1} g$
- We can converge in one step if the error surface is quadratic
- Requires inverting the Hessian matrix

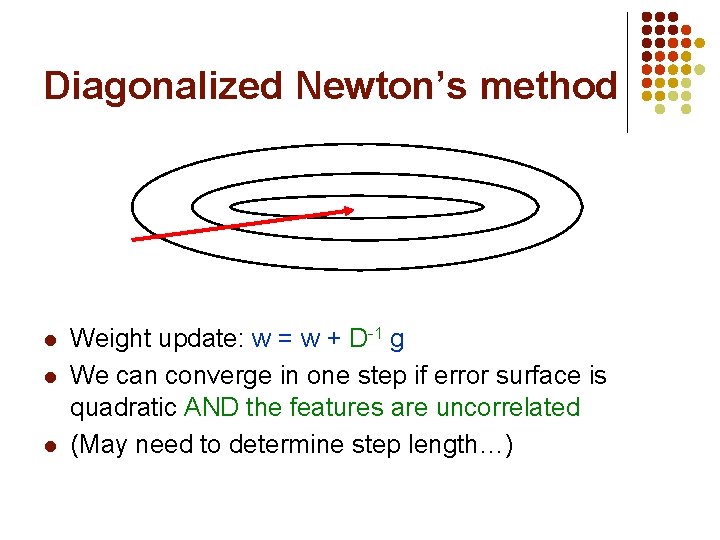

Diagonalized Newton's Method

- Weight update: $w = w + D^{-1} g$, where D is the diagonal of the Hessian
- We can converge in one step if the error surface is quadratic AND the features are uncorrelated
- (May need to determine step length…)
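The difference between the two updates can be checked on a 2D quadratic. This sketch assumes the minimization convention, f(w) = 0.5 wᵀAw - bᵀw with gradient g = Aw - b and Hessian A, so the step is subtracted; when maximizing a likelihood whose Hessian is negative definite the signs change accordingly. All names are illustrative.

```python
def grad(A, b, w):
    """Gradient A w - b of the quadratic 0.5 w^T A w - b^T w (2D only)."""
    return [A[0][0] * w[0] + A[0][1] * w[1] - b[0],
            A[1][0] * w[0] + A[1][1] * w[1] - b[1]]

def newton_step(A, b, w):
    """Full Newton step: w - A^{-1} g, using the closed-form 2x2 inverse."""
    g = grad(A, b, w)
    det = A[0][0] * A[1][1] - A[0][1] * A[1][0]
    step = [(A[1][1] * g[0] - A[0][1] * g[1]) / det,
            (-A[1][0] * g[0] + A[0][0] * g[1]) / det]
    return [w[0] - step[0], w[1] - step[1]]

def diagonal_newton_step(A, b, w):
    """Diagonal Newton step: divide each gradient entry by the Hessian diagonal."""
    g = grad(A, b, w)
    return [w[0] - g[0] / A[0][0], w[1] - g[1] / A[1][1]]

# Uncorrelated case: both methods reach the optimum (1, 1) in one step.
A_diag, b_diag = [[100.0, 0.0], [0.0, 1.0]], [100.0, 1.0]
# Correlated case: the optimum is still (1, 1), but only full Newton reaches it.
A_corr, b_corr = [[2.0, 1.0], [1.0, 2.0]], [3.0, 3.0]

start = [0.0, 0.0]
full_corr = newton_step(A_corr, b_corr, start)
diag_corr = diagonal_newton_step(A_corr, b_corr, start)
```

In the correlated case the diagonal step overshoots to (1.5, 1.5), which is one reason a step length still has to be chosen.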

Problem: Ill-Conditioning

- Skewed surface ⇒ slow convergence
- Condition number: (λmax/λmin) of Hessian

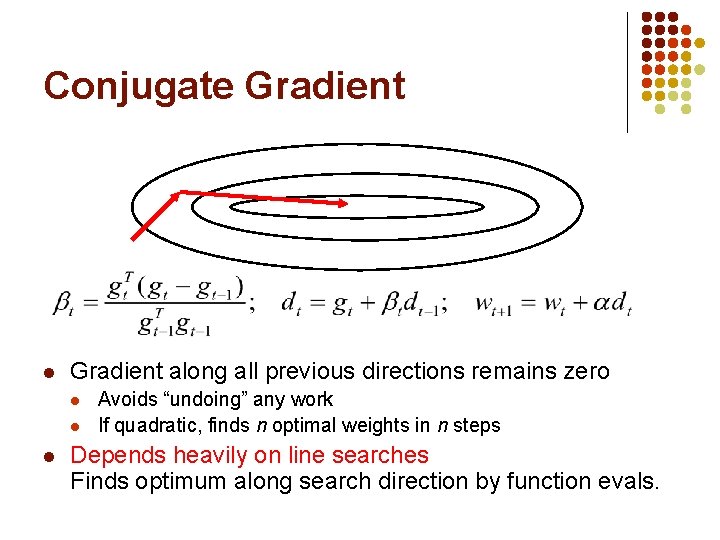

Conjugate Gradient

- Gradient along all previous directions remains zero
  - Avoids "undoing" any work
- If quadratic, finds n optimal weights in n steps
- Depends heavily on line searches
  - Finds optimum along search direction by function evals.
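The "n weights in n steps" property can be seen on an exactly quadratic problem. The sketch below is textbook linear conjugate gradient with the Fletcher-Reeves correction, minimizing 0.5 wᵀAw - bᵀw for a symmetric positive definite A. On a true quadratic the line minimum has a closed form, so no function evaluations appear here; that shortcut is not available for a general objective.

```python
def matvec(A, v):
    return [sum(a * x for a, x in zip(row, v)) for row in A]

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def conjugate_gradient(A, b, w, steps):
    """Minimize 0.5 w^T A w - b^T w for symmetric positive definite A."""
    r = [bi - ai for bi, ai in zip(b, matvec(A, w))]   # negative gradient
    d = list(r)                                        # first direction: steepest descent
    for _ in range(steps):
        rr = dot(r, r)
        if rr == 0.0:
            break                                      # already at the optimum
        Ad = matvec(A, d)
        alpha = rr / dot(d, Ad)                        # exact minimum along d
        w = [wi + alpha * di for wi, di in zip(w, d)]
        r = [ri - alpha * adi for ri, adi in zip(r, Ad)]
        beta = dot(r, r) / rr                          # Fletcher-Reeves correction
        d = [ri + beta * di for ri, di in zip(r, d)]   # new direction keeps earlier progress
    return w

# Two weights, so two steps suffice; the exact solution is (1/11, 7/11).
w_opt = conjugate_gradient([[4.0, 1.0], [1.0, 3.0]], [1.0, 2.0], [0.0, 0.0], steps=2)
```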

![Scaled Conjugate Gradient [Møller, 1993]](http://slidetodoc.com/presentation_image_h2/bb76a3f902e9e4f107ebf148f8125c26/image-22.jpg)

Scaled Conjugate Gradient [Møller, 1993]

- Gradient along all previous directions remains zero
  - Avoids "undoing" any work
- If quadratic, finds n optimal weights in n steps
- Uses Hessian matrix in place of line search
- Still cannot store full Hessian in memory

![Choosing a Step Size [Møller, 1993; Nocedal & Wright, 2007]](http://slidetodoc.com/presentation_image_h2/bb76a3f902e9e4f107ebf148f8125c26/image-23.jpg)

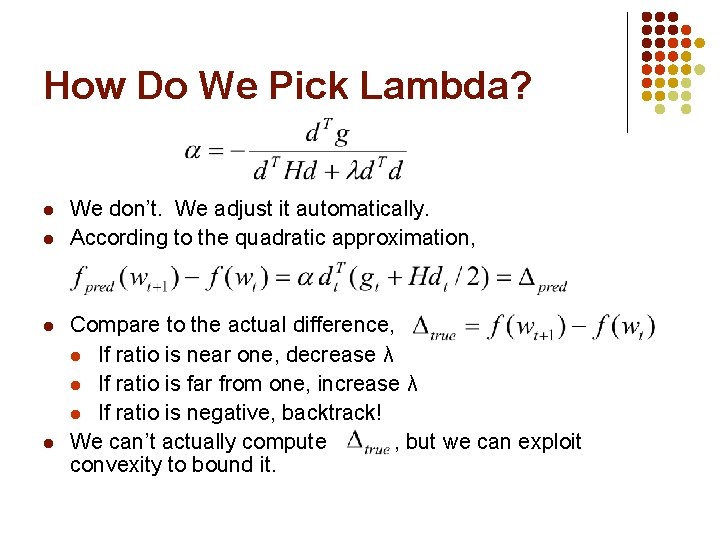

Choosing a Step Size [Møller, 1993; Nocedal & Wright, 2007]

- Given a direction d, how do we choose a good step size α?
  - Want to make the gradient zero along d.
  - Suppose f is quadratic: then the best α has a closed form in terms of the gradient and the Hessian.
- But f isn't quadratic!
  - In a small enough region it's approximately quadratic
  - One approach: set a maximum step size
  - Alternately, add a normalization term to the denominator
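A worked version of the quadratic case, under the assumption that f is being minimized and that g and H are its gradient and Hessian at the current weights w. The slide's own formulas are not preserved in this transcript, so the signs below follow the minimization convention and may differ from the original.

$$
f(w + \alpha d) \approx f(w) + \alpha\, d^\top g + \tfrac{1}{2} \alpha^2\, d^\top H d
$$

Setting the derivative with respect to $\alpha$ to zero gives

$$
d^\top g + \alpha\, d^\top H d = 0
\quad \Longrightarrow \quad
\alpha = -\frac{d^\top g}{d^\top H d}
$$

and adding a normalization term with parameter $\lambda \ge 0$ to the denominator gives

$$
\alpha = -\frac{d^\top g}{d^\top H d + \lambda\, d^\top d}
$$

A larger $\lambda$ shrinks the step, which acts like a smaller region in which the quadratic approximation is trusted.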

How Do We Pick Lambda?

- We don't. We adjust it automatically.
- According to the quadratic approximation, each step should change f by a predicted amount. Compare this to the actual difference:
  - If ratio is near one, decrease λ
  - If ratio is far from one, increase λ
  - If ratio is negative, backtrack!
- We can't actually compute the actual difference, but we can exploit convexity to bound it.
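The adjustment rule can be sketched as below. The thresholds (0.75 and 0.25) and the scaling factors (halve, quadruple) are illustrative defaults, not values taken from the slides, and the sketch simplifies "far from one" to "well below one". Increasing λ when backtracking is also an assumption.

```python
def adjust_lambda(lam, predicted_change, actual_change,
                  high=0.75, low=0.25, shrink=0.5, grow=4.0):
    """Return (new_lambda, accept_step) from the ratio actual / predicted.

    predicted_change must be nonzero; all thresholds are hypothetical defaults.
    """
    ratio = actual_change / predicted_change
    if ratio < 0.0:
        return lam * grow, False   # the step made things worse: backtrack
    if ratio > high:
        return lam * shrink, True  # quadratic model was accurate: allow larger steps
    if ratio < low:
        return lam * grow, True    # quadratic model was poor: be more conservative
    return lam, True               # in between: leave lambda alone

good = adjust_lambda(1.0, predicted_change=2.0, actual_change=1.9)
poor = adjust_lambda(1.0, predicted_change=2.0, actual_change=0.2)
bad = adjust_lambda(1.0, predicted_change=2.0, actual_change=-1.0)
```

In practice `actual_change` would be replaced by a bound on the actual difference, since the objective itself is never evaluated.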

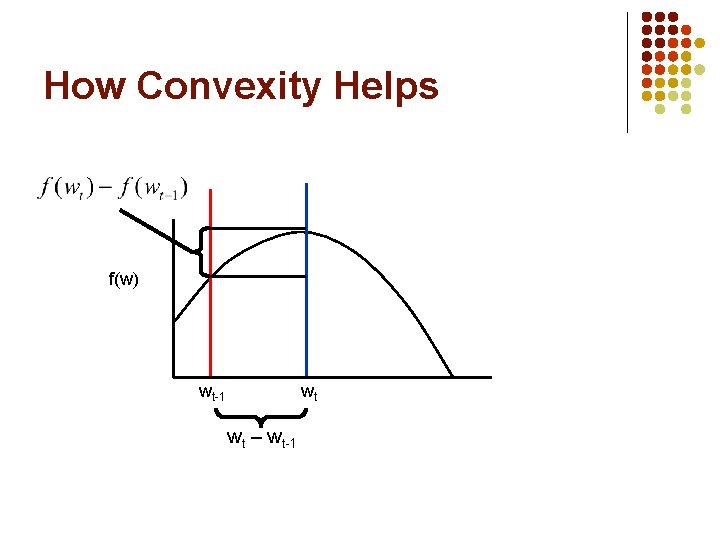

How Convexity Helps

[Figure: f(w) plotted against w, with w_{t-1}, w_t, and the difference w_t - w_{t-1} marked]

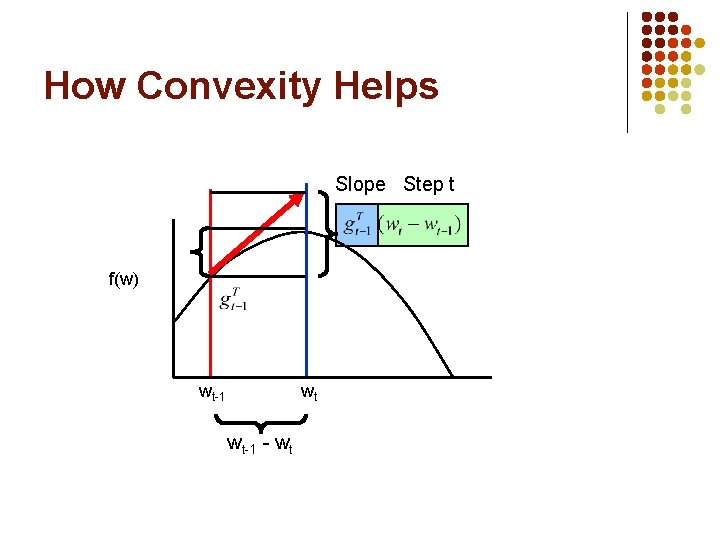

How Convexity Helps

[Figure: f(w) plotted against w, with w_{t-1}, w_t, and the difference w_{t-1} - w_t marked; labels: slope, step]
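One way to read the picture, assuming f is convex and differentiable with gradient $g_t$ at $w_t$. This is the standard first-order condition for convex functions, not an equation copied from the slide.

$$
f(w_{t-1}) \ge f(w_t) + g_t^\top (w_{t-1} - w_t)
\quad \Longrightarrow \quad
f(w_{t-1}) - f(w_t) \ge g_t^\top (w_{t-1} - w_t)
$$

Applying the same condition at $w_{t-1}$ gives the matching upper bound:

$$
f(w_{t-1}) - f(w_t) \le g_{t-1}^\top (w_{t-1} - w_t)
$$

So the change produced by a step is bracketed by "slope times step" at its two endpoints, using only gradients and no function evaluations. For a concave objective that is being maximized, both inequalities flip.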

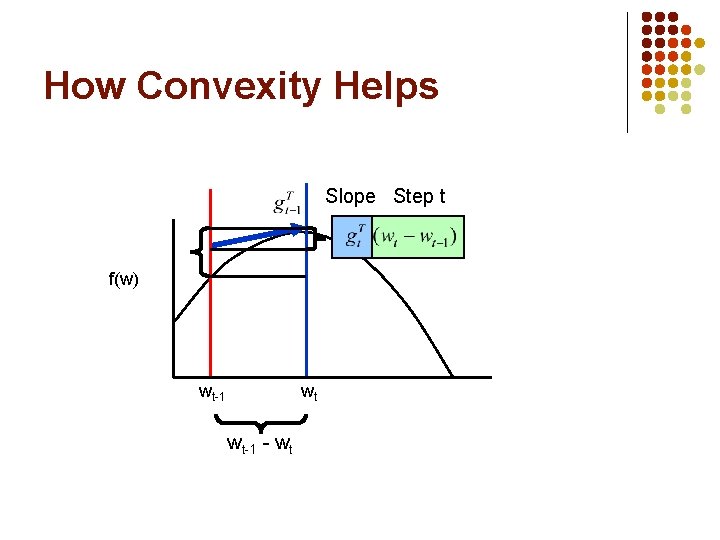

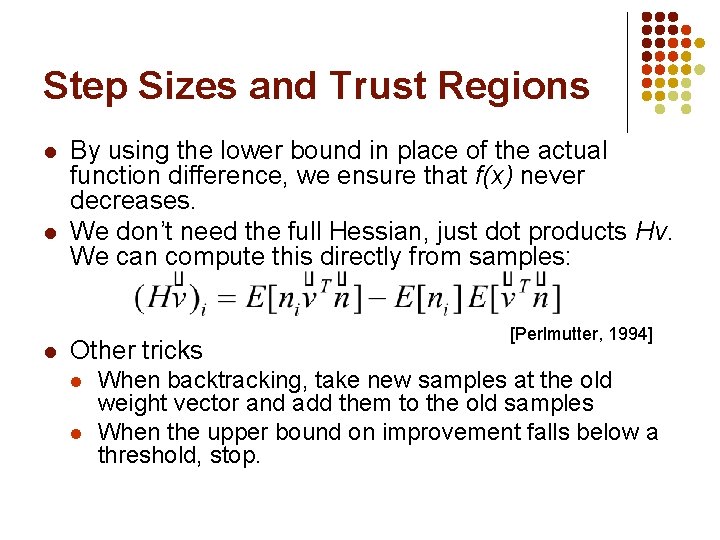

Step Sizes and Trust Regions

- By using the lower bound in place of the actual function difference, we ensure that f(x) never decreases.
- We don't need the full Hessian, just dot products Hv. We can compute these directly from samples [Pearlmutter, 1994].
- Other tricks:
  - When backtracking, take new samples at the old weight vector and add them to the old samples
  - When the upper bound on improvement falls below a threshold, stop.
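A sketch of how a Hessian-vector product can come from samples without ever forming the matrix, using the fact stated earlier that the Hessian is (up to sign) the covariance matrix of the clause counts. It relies on the identity Cov(n) v = E[n (n·v)] - E[n] E[n·v]. The function name and the toy samples are illustrative; in a real learner each sample would be the vector of clause counts in one sampled state.

```python
def covariance_vector_product(samples, v):
    """Estimate Cov(n) v = E[n (n.v)] - E[n] E[n.v] from sampled count vectors."""
    m = len(samples)
    dim = len(v)
    mean_n = [0.0] * dim       # running estimate of E[n]
    mean_nv = 0.0              # running estimate of E[n.v]
    mean_n_nv = [0.0] * dim    # running estimate of E[n (n.v)]
    for n in samples:
        nv = sum(ni * vi for ni, vi in zip(n, v))
        mean_nv += nv / m
        for j in range(dim):
            mean_n[j] += n[j] / m
            mean_n_nv[j] += n[j] * nv / m
    return [mean_n_nv[j] - mean_n[j] * mean_nv for j in range(dim)]

# Toy samples with unit variance per clause and zero covariance,
# so the covariance matrix is the identity and the product returns v.
toy_samples = [[0.0, 0.0], [2.0, 0.0], [0.0, 2.0], [2.0, 2.0]]
hv = covariance_vector_product(toy_samples, [3.0, -1.0])
```

Memory use is linear in the number of weights, which is what makes this workable when the full Hessian cannot be stored.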

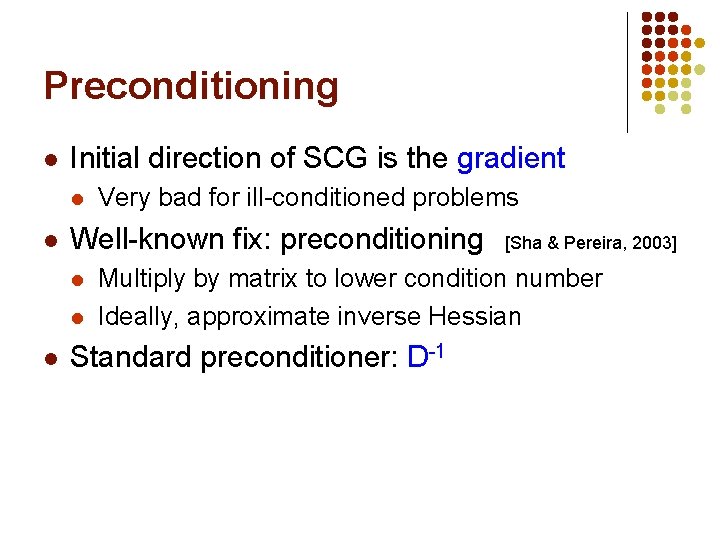

Preconditioning

- Initial direction of SCG is the gradient
  - Very bad for ill-conditioned problems
- Well-known fix: preconditioning [Sha & Pereira, 2003]
  - Multiply by matrix to lower condition number
  - Ideally, approximate inverse Hessian
- Standard preconditioner: $D^{-1}$

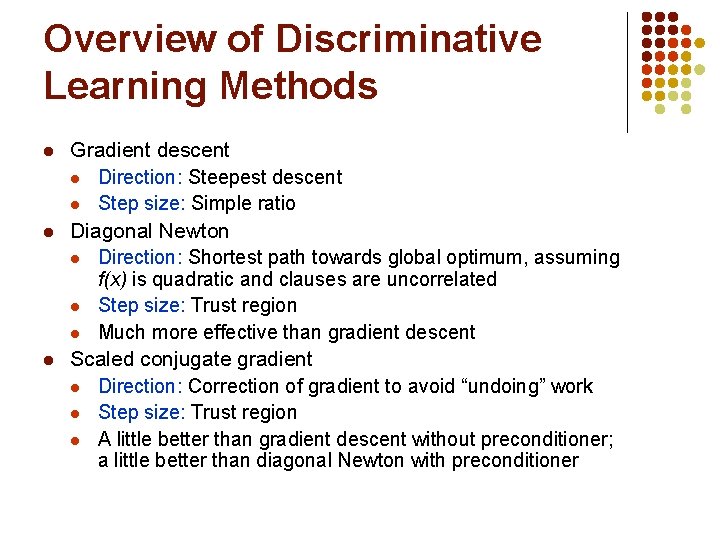

Overview of Discriminative Learning Methods

- Gradient descent
  - Direction: Steepest descent
  - Step size: Simple ratio
- Diagonal Newton
  - Direction: Shortest path towards global optimum, assuming f(x) is quadratic and clauses are uncorrelated
  - Step size: Trust region
  - Much more effective than gradient descent
- Scaled conjugate gradient
  - Direction: Correction of gradient to avoid "undoing" work
  - Step size: Trust region
  - A little better than gradient descent without preconditioner; a little better than diagonal Newton with preconditioner

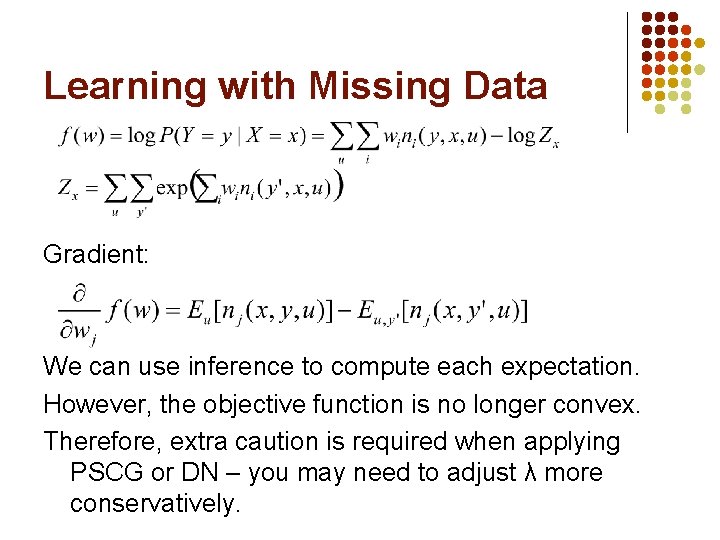

Learning with Missing Data

- Gradient: we can use inference to compute each expectation.
- However, the objective function is no longer convex.
- Therefore, extra caution is required when applying PSCG or DN: you may need to adjust λ more conservatively.

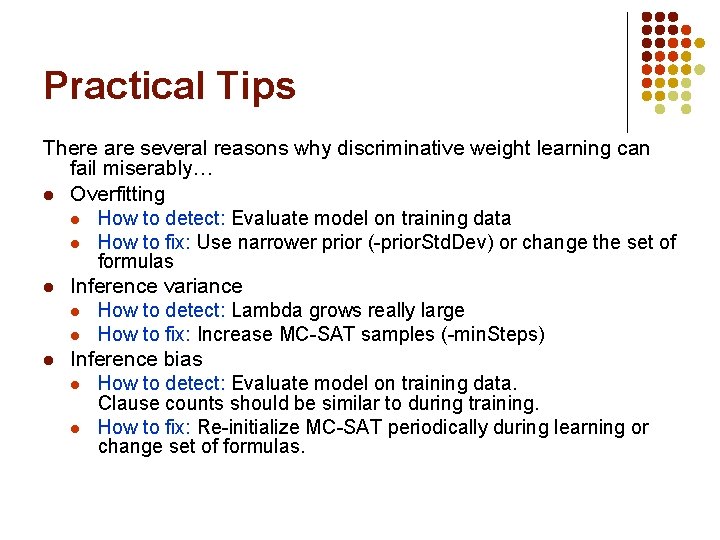

Practical Tips

There are several reasons why discriminative weight learning can fail miserably…

- Overfitting
  - How to detect: Evaluate model on training data
  - How to fix: Use narrower prior (-priorStdDev) or change the set of formulas
- Inference variance
  - How to detect: Lambda grows really large
  - How to fix: Increase MC-SAT samples (-minSteps)
- Inference bias
  - How to detect: Evaluate model on training data. Clause counts should be similar to those seen during training.
  - How to fix: Re-initialize MC-SAT periodically during learning or change the set of formulas.

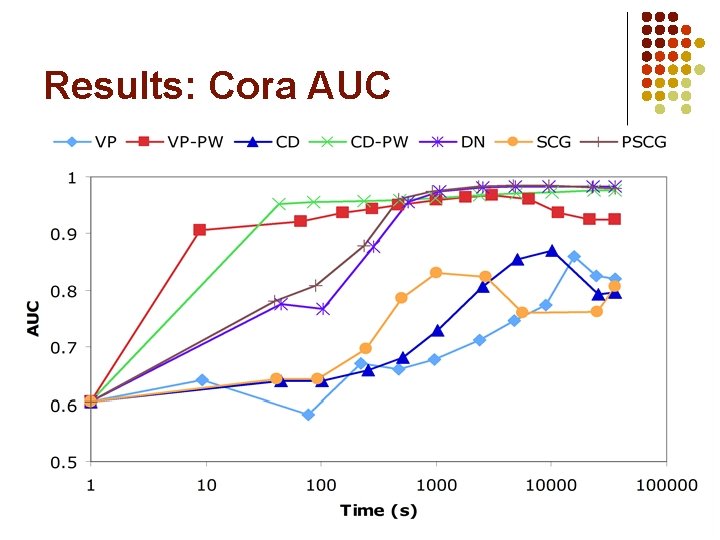

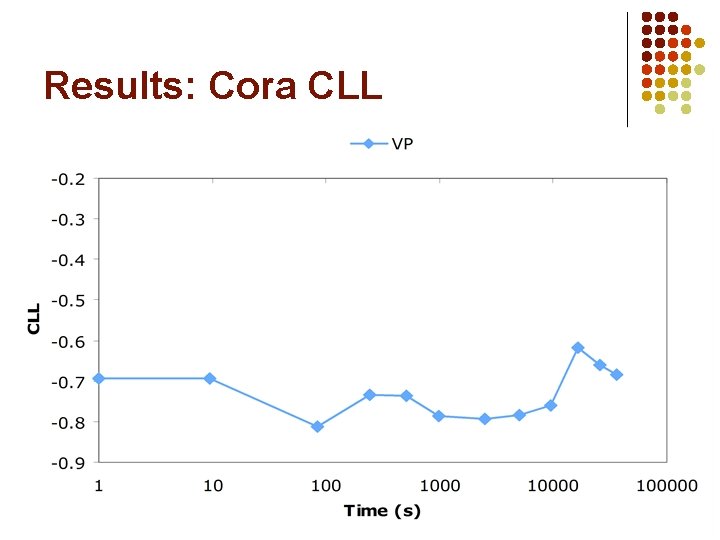

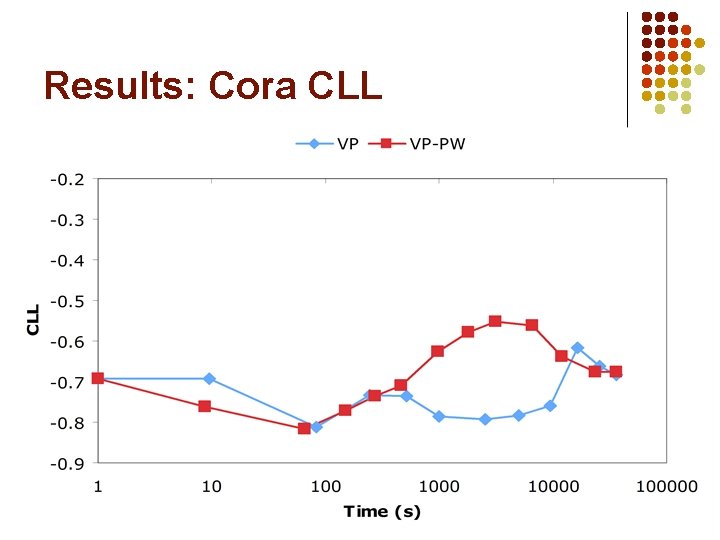

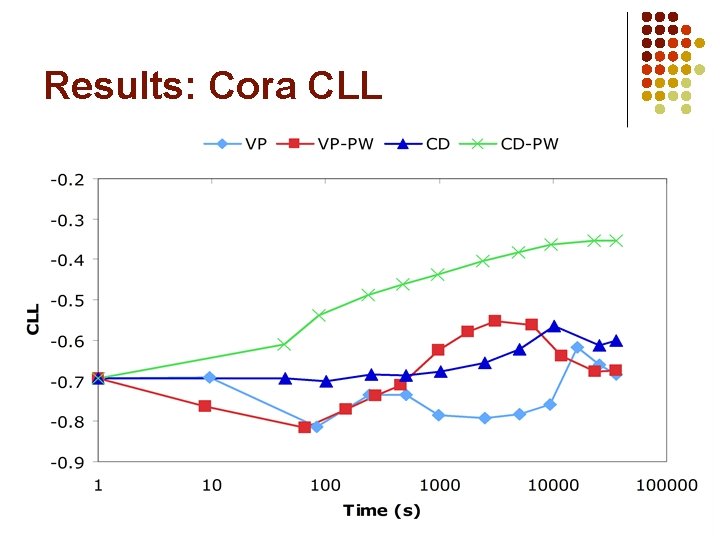

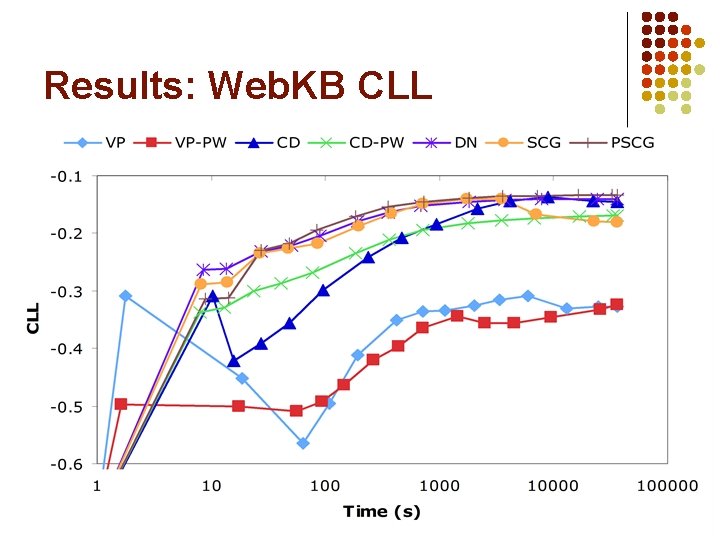

Experiments: Algorithms

- Voted perceptron (VP, VP-PW)
- Contrastive divergence (CD, CD-PW)
- Diagonal Newton (DN)
- Scaled conjugate gradient (SCG, PSCG)

![Experiments: Cora [Singla & Domingos, 2006]](http://slidetodoc.com/presentation_image_h2/bb76a3f902e9e4f107ebf148f8125c26/image-34.jpg)

Experiments: Cora [Singla & Domingos, 2006]

- Task: Deduplicate 1295 citations to 132 papers
- MLN (approximate):
  - `HasToken(+t, +f, r) ^ HasToken(+t, +f, r') => SameField(f, r, r')`
  - `SameField(+f, r, r') <=> SameRecord(r, r')`
  - `SameRecord(r, r') ^ SameRecord(r', r'') => SameRecord(r, r'')`
  - `SameField(f, r, r') ^ SameField(f, r', r'') => SameField(f, r, r'')`
- Weights: 6141
- Ground clauses: > 3 million
- Condition number: > 600,000

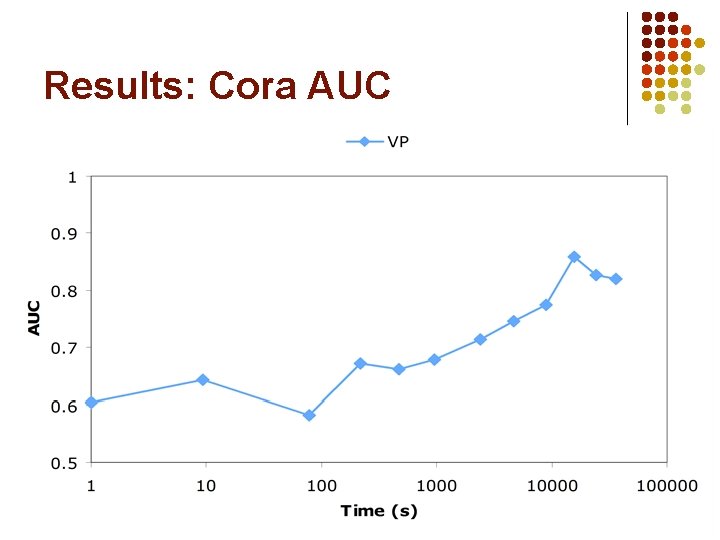

Results: Cora AUC

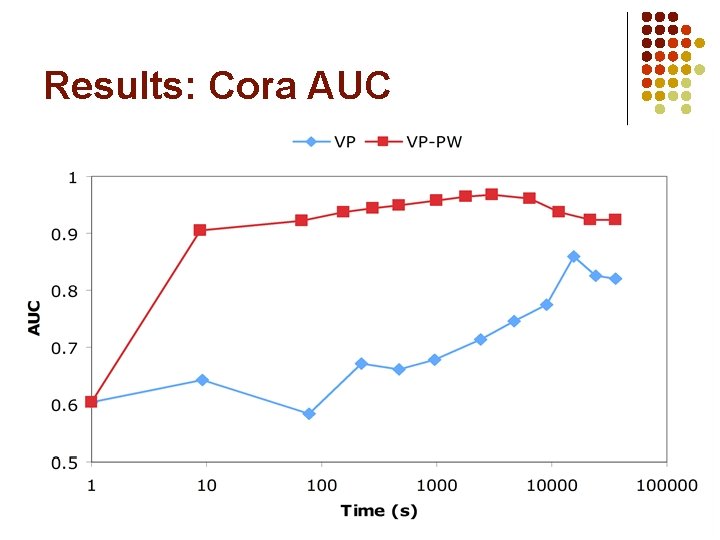

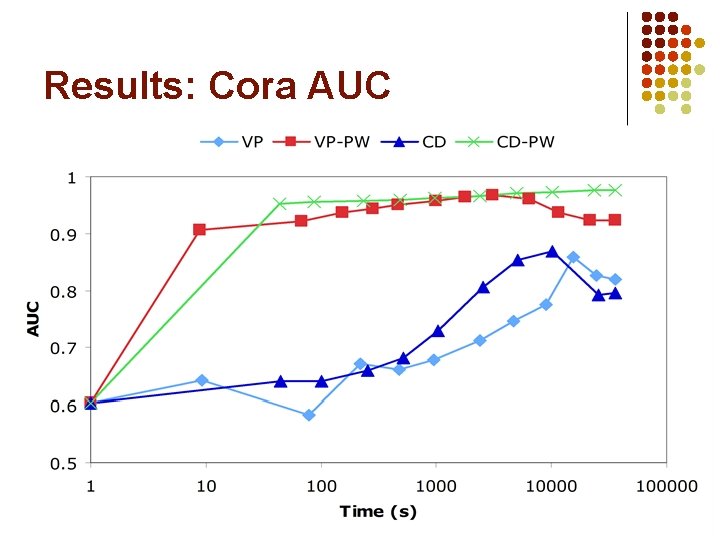

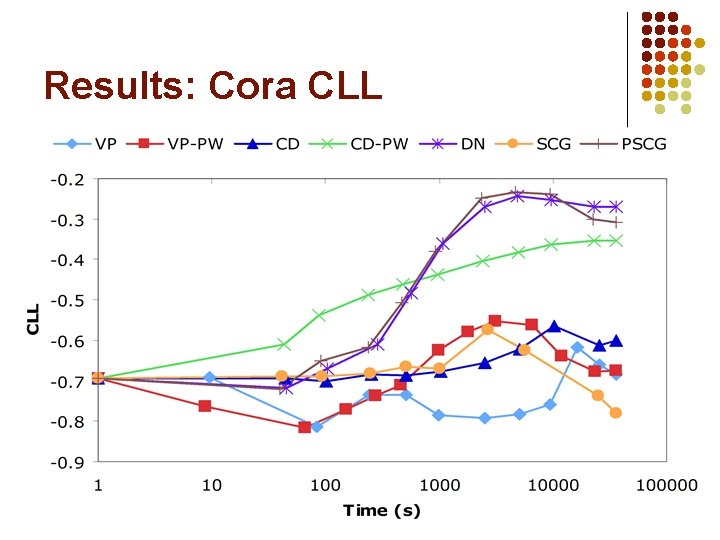

Results: Cora CLL

![Experiments: WebKB [Craven & Slattery, 2001]](http://slidetodoc.com/presentation_image_h2/bb76a3f902e9e4f107ebf148f8125c26/image-43.jpg)

Experiments: WebKB [Craven & Slattery, 2001]

- Task: Predict categories of 4165 web pages
- MLN:
  - Predicates: `PageClass(page, class)`, `HasWord(page, word)`, `Links(page, page)`
  - `HasWord(p, +w) => PageClass(p, +c)`
  - `!HasWord(p, +w) => PageClass(p, +c)`
  - `PageClass(p, +c) ^ Links(p, p') => PageClass(p', +c')`
- Weights: 10,891
- Ground clauses: > 300,000
- Condition number: ~7000

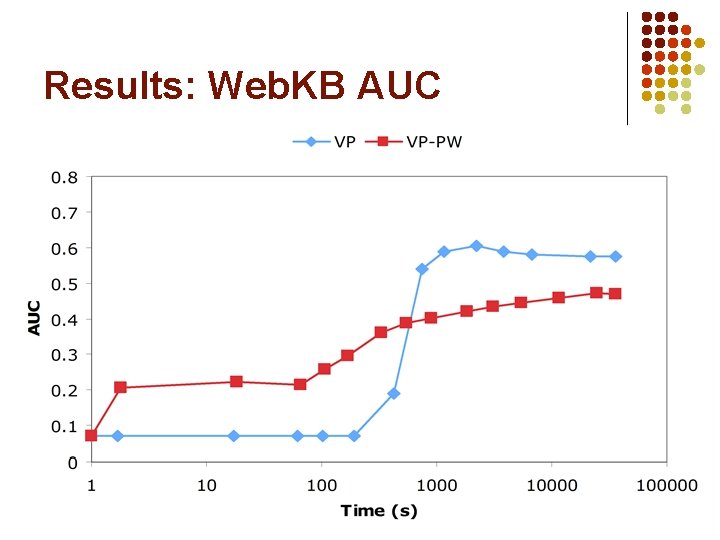

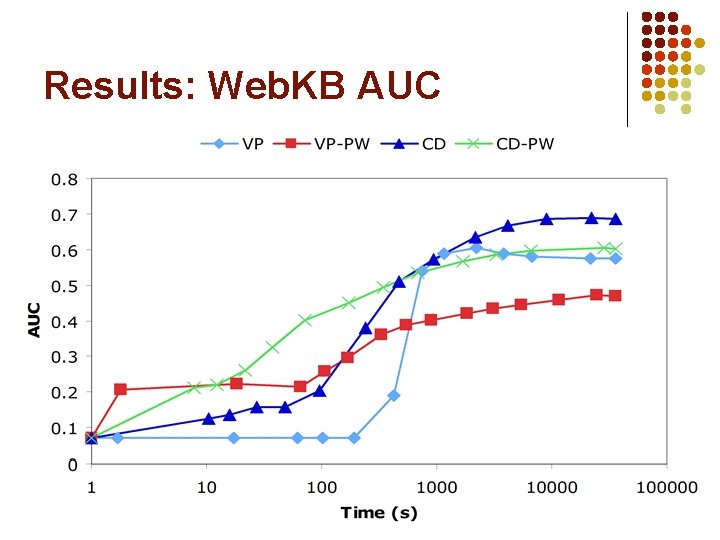

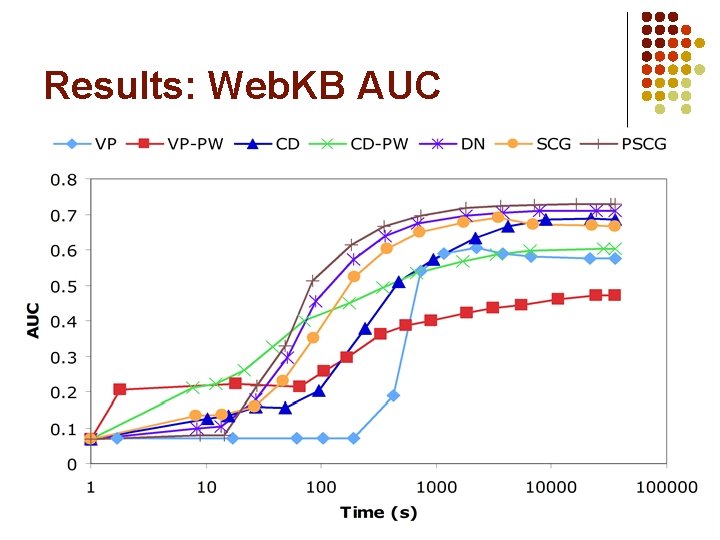

Results: WebKB AUC

Results: WebKB CLL
