
Web Search Slides based on those of C. Lee Giles, who credits R. Mooney, S. White, W. Arms, C. Manning, P. Raghavan, H. Schütze

Search Engine Strategies Subject hierarchies • Yahoo!, dmoz -- use of human indexing Web crawling + automatic indexing • General -- Google, Ask, Exalead, Bing Mixed models • Graphs - KartOO; clusters – Clusty (now Yippy) New ones evolving

Components of Web Search Service Components • Web crawler • Indexing system • Search system Considerations • Economics • Scalability • Legal issues

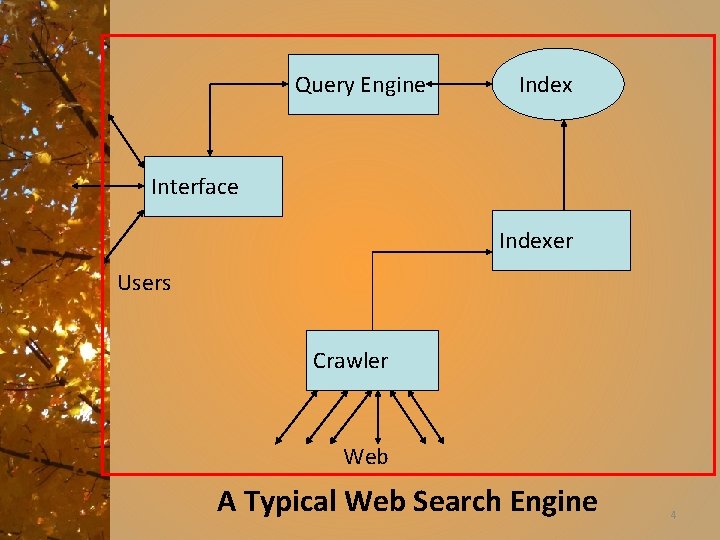

A Typical Web Search Engine (figure components: Users, Interface, Query Engine, Index, Indexer, Crawler, Web)

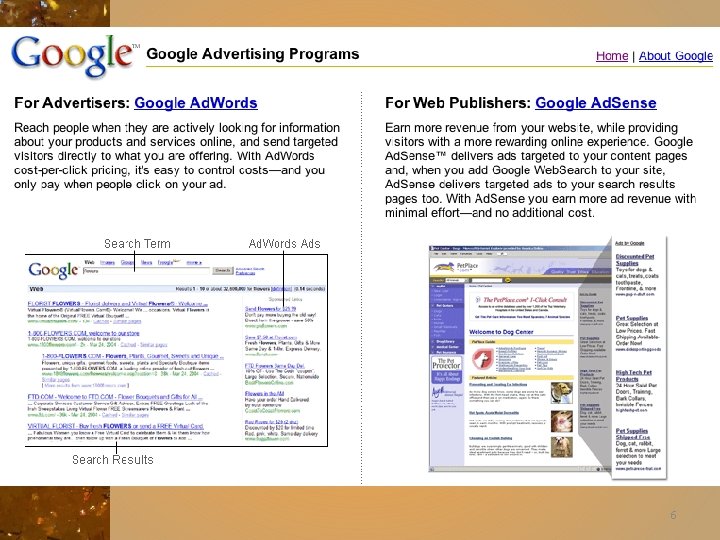

Business models for advertisers • When someone enters a query related to your business or product, your page – Comes up first in the SERP (Search Engine Results Page) – Comes up on the first SERP • Otherwise, you will not get the business • Completely dependent on the search engine's ranking algorithm for organic SEO (Search Engine Optimization) • Google changes ranking – Will other search engines follow?

Generations of search engines • 0th - Library catalog – Based on human-created metadata • 1st - AltaVista – First large comprehensive database – Word-based index and ranking • 2nd - Google – High relevance – Link (connectivity) based importance

Motivation for Link Analysis • First approach to query matching – use standard information retrieval methods: cosine similarity, TF-IDF, ... • The web is a different environment from the IR context of a fixed collection. A different approach is needed: – Huge and growing number of pages • Try the query “classification methods”: Google estimates about 1,330,000 pages. • How to choose only 30-40 pages and rank them suitably to present to the user? – Content similarity is easily spammed. • A page owner can repeat some words and add many related words to boost the rankings of his pages and/or to make the pages relevant to a large number of queries.

Early hyperlinks • Web pages are connected through hyperlinks, which carry important information. – Some hyperlinks: organize information at the same site. – Other hyperlinks: point to pages on other Web sites. Such out-going hyperlinks often indicate an implicit conveyance of authority to the pages being pointed to. • Those pages that are pointed to by many other pages are likely to contain authoritative information.

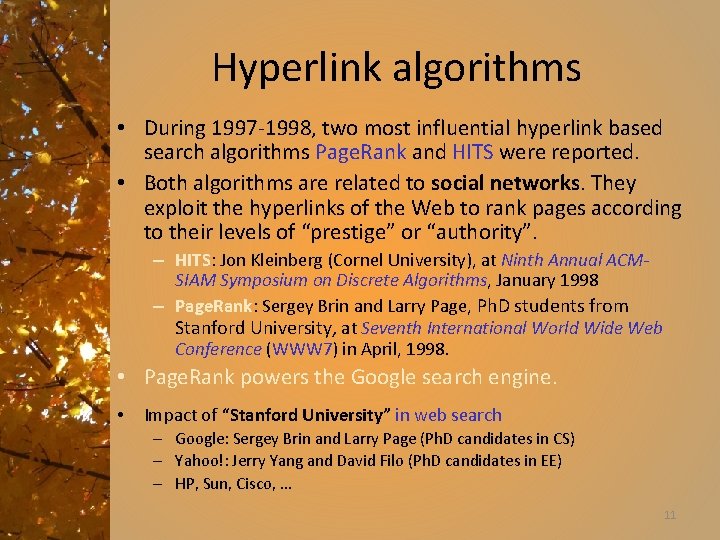

Hyperlink algorithms • During 1997-1998, the two most influential hyperlink-based search algorithms, PageRank and HITS, were reported. • Both algorithms are related to social networks. They exploit the hyperlinks of the Web to rank pages according to their levels of “prestige” or “authority”. – HITS: Jon Kleinberg (Cornell University), at the Ninth Annual ACM-SIAM Symposium on Discrete Algorithms, January 1998 – PageRank: Sergey Brin and Larry Page, Ph.D. students from Stanford University, at the Seventh International World Wide Web Conference (WWW7) in April 1998. • PageRank powers the Google search engine. • Impact of “Stanford University” in web search – Google: Sergey Brin and Larry Page (Ph.D. candidates in CS) – Yahoo!: Jerry Yang and David Filo (Ph.D. candidates in EE) – HP, Sun, Cisco, …

Other uses • Apart from search ranking, hyperlinks are also useful for finding Web communities. – A Web community is a cluster of densely linked pages representing a group of people with a special interest. • Beyond explicit hyperlinks on the Web, links in other contexts are useful too, e.g., – for discovering communities of named entities (e.g., people and organizations) in free text documents, and – for analyzing social phenomena in emails.

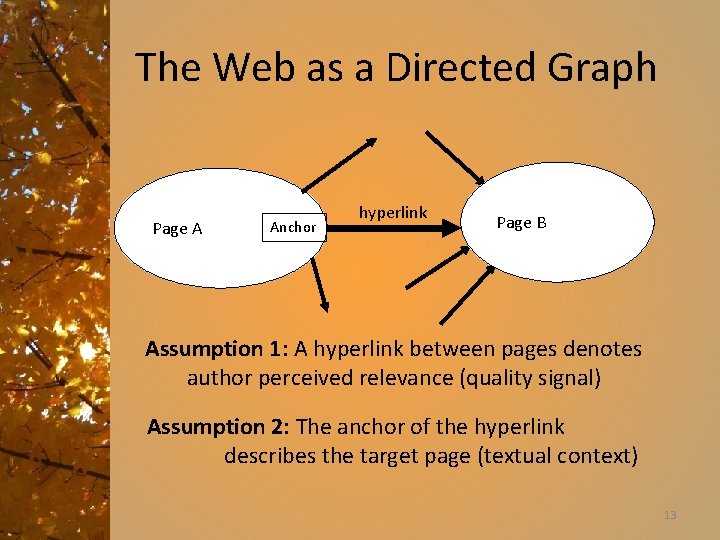

The Web as a Directed Graph (figure: Page A links to Page B through a hyperlink with an anchor) Assumption 1: A hyperlink between pages denotes author-perceived relevance (quality signal). Assumption 2: The anchor of the hyperlink describes the target page (textual context).

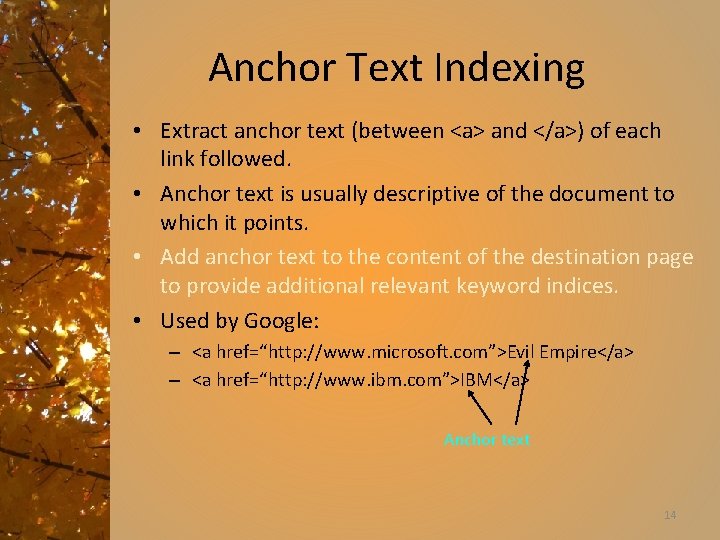

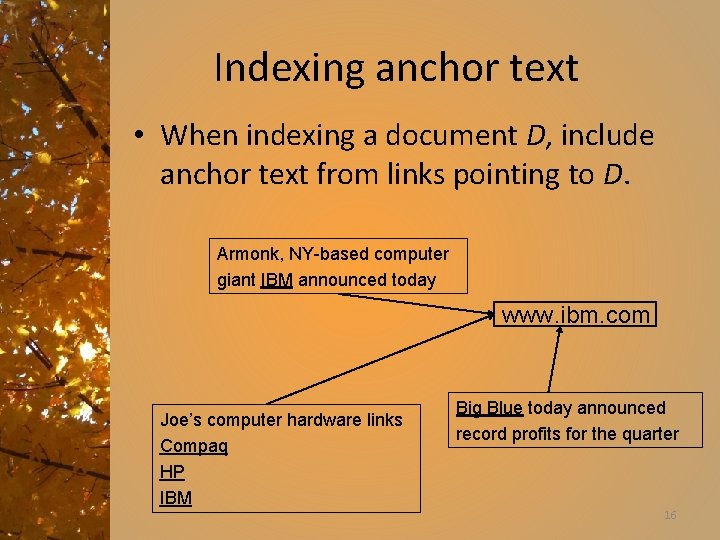

Anchor Text Indexing • Extract anchor text (between <a> and </a>) of each link followed. • Anchor text is usually descriptive of the document to which it points. • Add anchor text to the content of the destination page to provide additional relevant keyword indices. • Used by Google: – <a href="http://www.microsoft.com">Evil Empire</a> – <a href="http://www.ibm.com">IBM</a>

![Anchor Text: WWW Worm, McBryan [Mcbr94]](http://slidetodoc.com/presentation_image_h2/cfdaa7f5e7f3a161e85dadeb5eee4d3c/image-14.jpg)

Anchor Text WWW Worm - McBryan [Mcbr94] • For the query “ibm”, how to distinguish between: – IBM’s home page (mostly graphical) – IBM’s copyright page (high term freq. for ‘ibm’) – Rival’s spam page (arbitrarily high term freq.) • A million pieces of anchor text containing “ibm” (e.g. “ibm”, “ibm.com”, “IBM home page”) pointing to www.ibm.com send a strong signal.

Indexing anchor text • When indexing a document D, include anchor text from links pointing to D. • Example (figure): three pages link to www.ibm.com with the surrounding text “Armonk, NY-based computer giant IBM announced today”, “Joe’s computer hardware links: Compaq, HP, IBM”, and “Big Blue today announced record profits for the quarter”.

Indexing anchor text • Can sometimes have unexpected side effects - e.g., french military victories • Helps when descriptive text in destination page is embedded in image logos rather than in accessible text. • Many times anchor text is not useful: – “click here” • Increases content more for popular pages with many in-coming links, increasing recall of these pages. • May even give higher weights to tokens from anchor text.
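The anchor-text idea above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the class `AnchorExtractor`, the function `index_anchor_text`, and the two `example.org` pages are hypothetical, and a real indexer would also weight and filter terms such as “click here”.

```python
from collections import defaultdict
from html.parser import HTMLParser


class AnchorExtractor(HTMLParser):
    """Collects (href, anchor text) pairs from one HTML page."""

    def __init__(self):
        super().__init__()
        self.links = []      # finished (href, text) pairs
        self._href = None    # href of the <a> currently open, if any
        self._text = []      # text fragments seen inside that <a>

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            self._href = dict(attrs).get("href")
            self._text = []

    def handle_data(self, data):
        if self._href is not None:
            self._text.append(data)

    def handle_endtag(self, tag):
        if tag == "a" and self._href is not None:
            self.links.append((self._href, "".join(self._text).strip()))
            self._href = None


def index_anchor_text(pages):
    """Map each destination URL to the anchor terms of links pointing at it."""
    anchor_index = defaultdict(list)
    for html in pages.values():
        parser = AnchorExtractor()
        parser.feed(html)
        parser.close()
        for href, text in parser.links:
            # Credit the anchor words to the page being pointed to.
            anchor_index[href].extend(text.lower().split())
    return dict(anchor_index)


# Hypothetical source pages, both linking to the same destination.
pages = {
    "http://example.org/a": '<p>See <a href="http://www.ibm.com">IBM home page</a>.</p>',
    "http://example.org/b": '<a href="http://www.ibm.com">Big Blue</a> reported profits.',
}
anchor_index = index_anchor_text(pages)
```

Here the destination `http://www.ibm.com` picks up the terms “big” and “blue” even if those words never appear on the page itself.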

Query length statistics • See http://www.keyworddiscovery.com/keyword-stats.html • Statistics related to length of query on the top search engines and the market share of the search engines

Concept of Relevance Document measures • Relevance, as conventionally defined, is binary (relevant or not relevant). It is usually estimated by the similarity between the terms in the query and each document. • Importance measures documents by their likelihood of being useful to a variety of users. It is usually estimated by some measure of popularity. • Web search engines rank documents by a combination of relevance and importance. The goal is to present the user with the most important of the relevant documents.

Ranking Options 1. Paid advertisers 2. Manually created classification 3. Vector space ranking with corrections for document length 4. Extra weighting for specific fields, e.g., title, anchors, etc. 5. Popularity or importance, e.g., PageRank Not all these factors are made public.

History of link analysis • Bibliometrics – Citation analysis since the 1960s – Citation links to and from documents • Basis of the PageRank idea

Bibliometrics Techniques that use citation analysis to measure the similarity of journal articles or their importance • Bibliographic coupling: two papers that cite many of the same papers • Co-citation: two papers that were cited by many of the same papers • Impact factor (of a journal): frequency with which the average article in a journal has been cited in a particular year or period • Citation frequency
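As a small sketch of the two similarity measures just defined, assuming the citation graph is held as a dictionary from each paper to the set of papers it cites (the paper names and function names are made up for illustration):

```python
def bibliographic_coupling(cites, a, b):
    """Number of papers that both a and b cite."""
    return len(cites.get(a, set()) & cites.get(b, set()))


def co_citation(cites, a, b):
    """Number of papers that cite both a and b."""
    return sum(1 for refs in cites.values() if a in refs and b in refs)


# Hypothetical citation graph: paper -> set of papers it cites.
cites = {
    "P1": {"X", "Y", "Z"},
    "P2": {"Y", "Z"},
    "P3": {"P1", "P2"},
    "P4": {"P1", "P2", "X"},
}

coupling_p1_p2 = bibliographic_coupling(cites, "P1", "P2")  # shared references Y and Z
cocited_p1_p2 = co_citation(cites, "P1", "P2")              # cited together by P3 and P4
```

Coupling looks backward (shared references) while co-citation looks forward (shared citers), so the co-citation count of a pair can keep growing after both papers are published.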

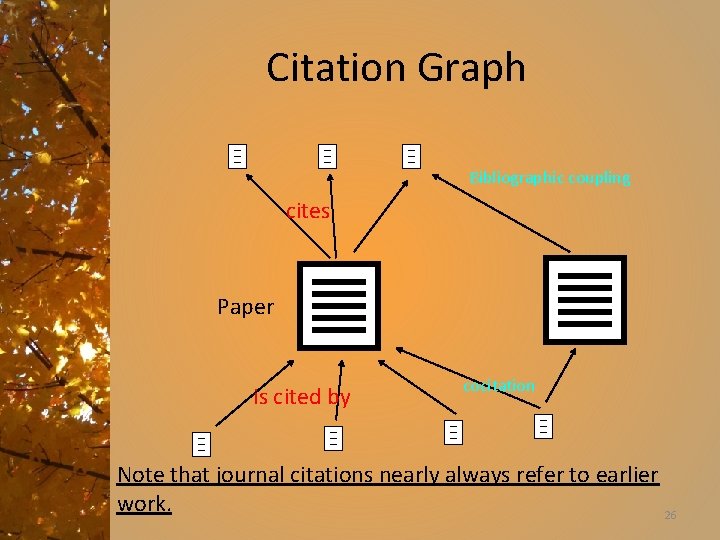

Citation Graph (figure labels: cites, is cited by, bibliographic coupling, co-citation) Note that journal citations nearly always refer to earlier work.

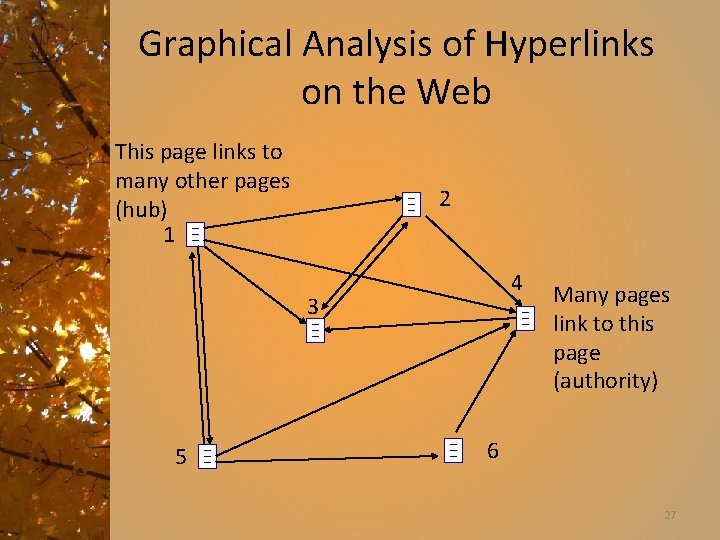

Graphical Analysis of Hyperlinks on the Web (figure: six numbered pages) • This page links to many other pages (hub) • Many pages link to this page (authority)

Bibliometrics: Citation Analysis • Many standard documents include bibliographies (or references), explicit citations to other previously published documents. • Using citations as links, standard corpora can be viewed as a graph. • The structure of this graph, independent of content, can provide interesting information about the similarity of documents and the structure of information. • Impact of a paper!

Impact Factor • Developed by Garfield in 1972 to measure the importance (quality, influence) of scientific journals. • Measure of how often papers in the journal are cited by other scientists. • Computed and published annually by the Institute for Scientific Information (ISI). • The impact factor of a journal J in year Y is the average number of citations (from indexed documents published in year Y) to a paper published in J in year Y-1 or Y-2. • Does not account for the quality of the citing article.
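The impact factor definition above is a simple ratio, which the following sketch computes. The data layout (dictionaries keyed by journal and year) and all the counts are assumptions chosen for illustration, not real figures.

```python
def impact_factor(citations, papers_published, journal, year):
    """Average citations made in `year` to papers the journal published in year-1 or year-2.

    citations[(journal, citing_year, publication_year)] -> number of citations
    papers_published[(journal, publication_year)]       -> number of papers
    """
    window = (year - 1, year - 2)
    cited = sum(citations.get((journal, year, y), 0) for y in window)
    published = sum(papers_published.get((journal, y), 0) for y in window)
    return cited / published if published else 0.0


# Hypothetical counts for a journal "J".
papers_published = {("J", 2006): 40, ("J", 2007): 60}
citations = {("J", 2008, 2006): 120, ("J", 2008, 2007): 80}

if_2008 = impact_factor(citations, papers_published, "J", 2008)  # (120 + 80) / (40 + 60)
```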

Citations vs. Links • Web links are a bit different from citations: – Many links are navigational. – Many pages with high in-degree are portals, not content providers. – Not all links are endorsements. – Company websites don’t point to their competitors. – Citations to relevant literature are enforced by peer review.

Ranking: query (in)dependence • Query independent ranking – Important pages; no need for queries – Trusted pages? – PageRank can do this • Query dependent ranking – Combine importance with query evaluation – HITS is query based.

Authorities • Authorities are pages that are recognized as providing significant, trustworthy, and useful information on a topic. • In-degree (number of pointers to a page) is one simple measure of authority. • However, in-degree treats all links as equal. • Should links from pages that are themselves authoritative count more?

Hubs • Hubs are index pages that provide lots of useful links to relevant content pages (topic authorities). • Ex: pages are included in the course home page

Hyperlink-Induced Topic Search (HITS) • In response to a query, instead of an ordered list of pages each meeting the query, find two sets of inter-related pages: – Hub pages are good lists of links on a subject. • e.g., “Bob’s list of cancer-related links.” – Authority pages occur recurrently on good hubs for the subject. • Best suited for “broad topic” queries rather than for page-finding queries. • Gets at a broader slice of common opinion.

HITS • Algorithm developed by Kleinberg in 1998. • IBM search engine project • Attempts to computationally determine hubs and authorities on a particular topic through analysis of a relevant subgraph of the web. • Based on mutually recursive facts: – Hubs point to lots of authorities. – Authorities are pointed to by lots of hubs.

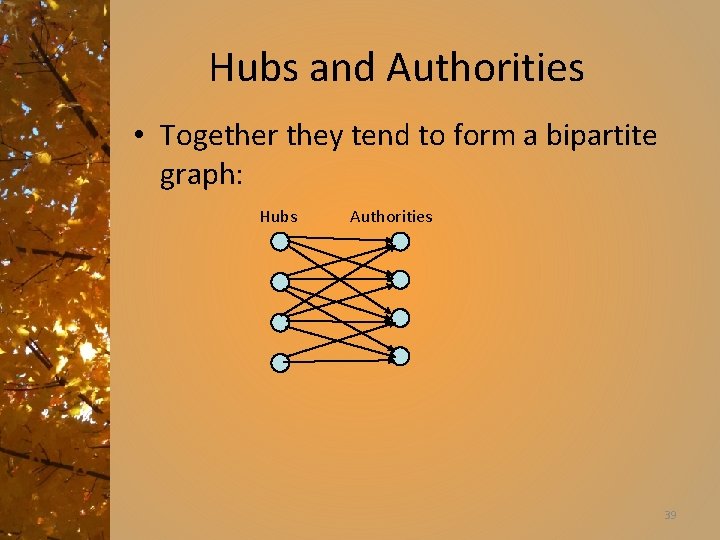

Hubs and Authorities • Together they tend to form a bipartite graph (figure: hubs on one side, authorities on the other).

HITS Algorithm • Computes hubs and authorities for a particular topic specified by a normal query. – Thus query dependent ranking • First determines a set of relevant pages for the query called the base set S. • Analyze the link structure of the web subgraph defined by S to find authority and hub pages in this set.

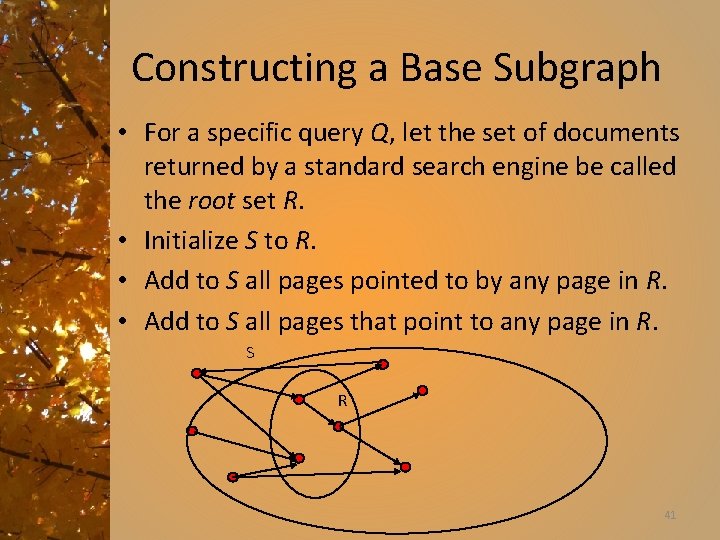

Constructing a Base Subgraph • For a specific query Q, let the set of documents returned by a standard search engine be called the root set R. • Initialize S to R. • Add to S all pages pointed to by any page in R. • Add to S all pages that point to any page in R. (figure: root set R nested inside base set S)

Base Limitations • To limit computational expense: – Limit number of root pages to the top 200 pages retrieved for the query. – Limit number of “back-pointer” pages to a random set of at most 50 pages returned by a “reverse link” query. • To eliminate purely navigational links: – Eliminate links between two pages on the same host. • To eliminate “non-authority-conveying” links: – Allow only m (m ≈ 4-8) pages from a given host as pointers to any individual page.
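The root-set expansion and the two size limits can be sketched as follows. This is a minimal illustration, assuming the link graph is already available as `out_links` and `in_links` dictionaries (a real system would issue “reverse link” queries instead) and reading the 50-page cap as applying per root page. The host-based filters are left out, and all names are hypothetical.

```python
import random


def build_base_set(root, out_links, in_links, max_root=200, max_back=50, seed=0):
    """Expand a ranked root set R into the base set S used by HITS."""
    rng = random.Random(seed)
    root = list(root)[:max_root]          # keep only the top-ranked root pages
    base = set(root)                      # initialize S to R
    for page in root:
        # Add every page that this root page points to.
        base.update(out_links.get(page, ()))
        # Add pages pointing to this root page, sampling if there are too many.
        back = list(in_links.get(page, ()))
        if len(back) > max_back:
            back = rng.sample(back, max_back)
        base.update(back)
    return base


# Hypothetical tiny graph around a two-page root set.
root = ["r1", "r2"]
out_links = {"r1": ["a", "b"], "r2": ["b"]}
in_links = {"r1": ["h1", "h2", "h3"], "r2": ["h1"]}

base_full = build_base_set(root, out_links, in_links)
base_capped = build_base_set(root, out_links, in_links, max_back=2)
```

With the default limits the whole neighbourhood is kept; with `max_back=2` only two of the three pages pointing at `r1` are sampled.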

Authorities and In-Degree • Even within the base set S for a given query, the nodes with highest in-degree are not necessarily authorities (may just be generally popular pages like Yahoo or Amazon). • True authority pages are pointed to by a number of hubs (i.e. pages that point to lots of authorities).

Iterative Algorithm • Use an iterative algorithm to slowly converge on a mutually reinforcing set of hubs and authorities. • Maintain for each page $p \in S$: – Authority score: $a_p$ (vector a) – Hub score: $h_p$ (vector h) • Initialize all $a_p = h_p = 1$ • Maintain normalized scores: $\sum_{p \in S} a_p^2 = 1$ and $\sum_{p \in S} h_p^2 = 1$

Convergence • Algorithm converges to a fixed point if iterated indefinitely. • Define A to be the adjacency matrix for the subgraph defined by S. – $A_{ij} = 1$ for $i \in S$, $j \in S$ iff $i \to j$ • Authority vector, a, converges to the principal eigenvector of $A^T A$ • Hub vector, h, converges to the principal eigenvector of $A A^T$ • In practice, 20 iterations produces fairly stable results.
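A minimal sketch of the hub/authority iteration, assuming the base subgraph is given as a dictionary from each page to the pages it links to. The update rules (authority = sum of hub scores of in-linking pages, hub = sum of authority scores of linked pages) and the unit-length normalization follow Kleinberg's formulation; the function names and the toy graph are illustrative.

```python
import math


def normalize(scores):
    """Scale scores so that the sum of their squares is 1."""
    norm = math.sqrt(sum(v * v for v in scores.values())) or 1.0
    return {p: v / norm for p, v in scores.items()}


def hits(links, iterations=20):
    """Return (authority, hub) score dictionaries for the graph `links`."""
    pages = set(links) | {q for targets in links.values() for q in targets}
    auth = {p: 1.0 for p in pages}
    hub = {p: 1.0 for p in pages}
    for _ in range(iterations):
        # Authority update: add up the hub scores of pages pointing to each page.
        new_auth = {p: 0.0 for p in pages}
        for p, targets in links.items():
            for q in targets:
                new_auth[q] += hub[p]
        # Hub update: add up the new authority scores of the pages each page points to.
        new_hub = {p: sum(new_auth[q] for q in links.get(p, ())) for p in pages}
        auth = normalize(new_auth)
        hub = normalize(new_hub)
    return auth, hub


# Hypothetical bipartite-looking graph: three hubs, two authorities.
links = {"h1": ["a1", "a2"], "h2": ["a1", "a2"], "h3": ["a1"]}
auth, hub = hits(links)
top_authority = max(auth, key=auth.get)
```

In this toy graph `a1` comes out as the top authority because all three hubs point to it, and `h1` and `h2` outrank `h3` as hubs because they point to both authorities.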

HITS Results • An ambiguous query can result in the principal eigenvector only covering one of the possible meanings. • Non-principal eigenvectors may contain hubs & authorities for other meanings. • Example: “jaguar”: – Atari video game (principal eigenvector) – NFL football team (2nd non-principal eigenvector) – Automobile (3rd non-principal eigenvector) • Reportedly used by Ask.com

Google Background “Our main goal is to improve the quality of web search engines” • “Google” comes from googol = 10^100 • Originally part of the Stanford digital library project known as WebBase, commercialized in 1999

Initial Design Goals • Deliver results that have very high precision even at the expense of recall • Make search engine technology transparent, i.e. advertising shouldn’t bias results • Bring search engine technology into academic realm in order to support novel research activities on large web data sets • Make system easy to use for most people, e.g. users shouldn’t have to specify more than a few words

Google Search Engine Features Two main features to increase result precision: • Uses link structure of web (PageRank) • Uses text surrounding hyperlinks to improve accurate document retrieval Other features include: • Takes into account word proximity in documents • Uses font size, word position, etc. to weight word • Storage of full raw HTML pages

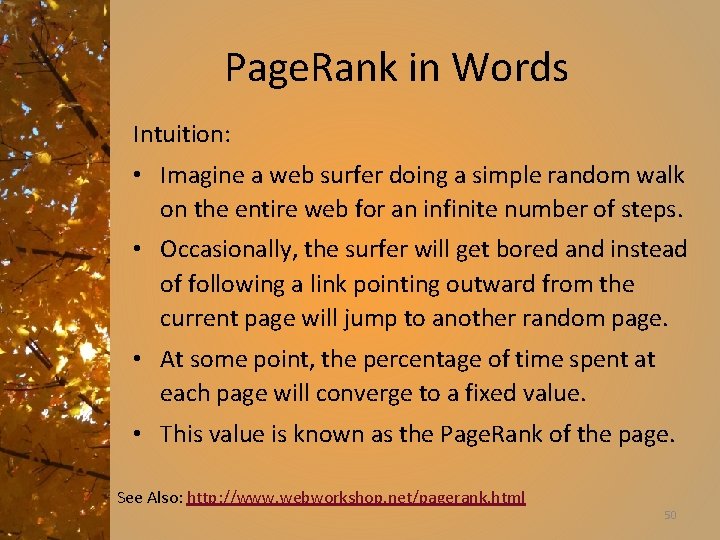

PageRank in Words Intuition: • Imagine a web surfer doing a simple random walk on the entire web for an infinite number of steps. • Occasionally, the surfer will get bored and instead of following a link pointing outward from the current page will jump to another random page. • At some point, the percentage of time spent at each page will converge to a fixed value. • This value is known as the PageRank of the page. See also: http://www.webworkshop.net/pagerank.html

PageRank • Link-analysis method used by Google (Brin & Page, 1998). • Does not attempt to capture the distinction between hubs and authorities. • Ranks pages just by authority. • Query independent • Applied to the entire web rather than a local neighborhood of pages surrounding the results of a query.

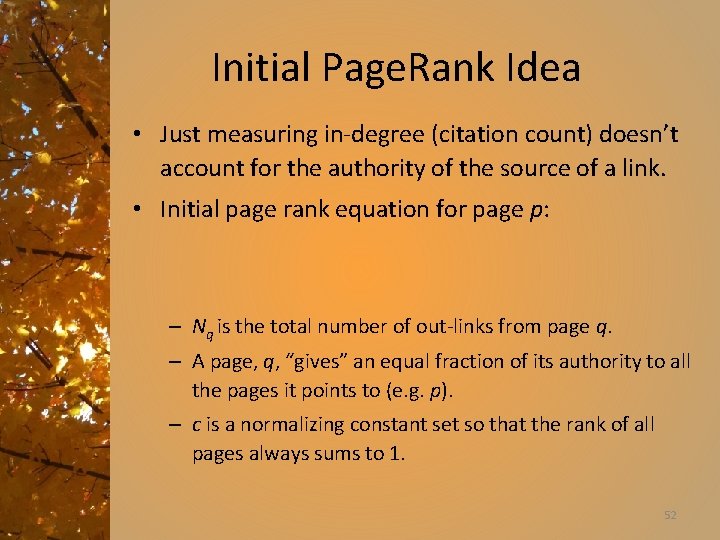

Initial PageRank Idea • Just measuring in-degree (citation count) doesn’t account for the authority of the source of a link. • Initial PageRank equation for page p: $R(p) = c \sum_{q:\, q \to p} \frac{R(q)}{N_q}$ – $N_q$ is the total number of out-links from page q. – A page, q, “gives” an equal fraction of its authority to all the pages it points to (e.g. p). – c is a normalizing constant set so that the rank of all pages always sums to 1.

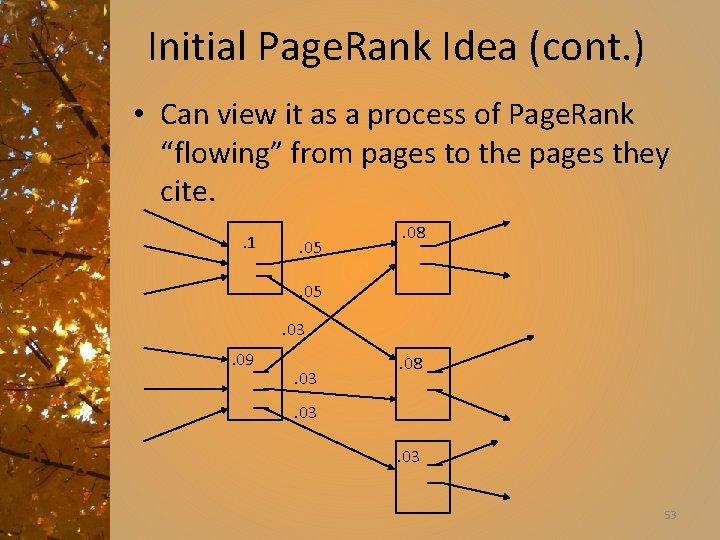

Initial PageRank Idea (cont.) • Can view it as a process of PageRank “flowing” from pages to the pages they cite. (figure: example graph with rank values between 0.03 and 0.1 flowing along links)

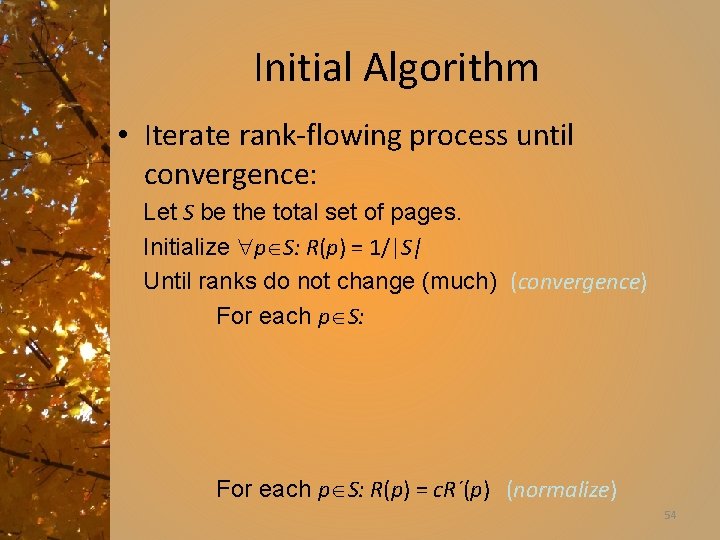

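Initial Algorithm • Iterate rank-flowing process until convergence:
– Let S be the total set of pages.
– Initialize $\forall p \in S$: $R(p) = 1/|S|$
– Until ranks do not change (much) (convergence):
– For each $p \in S$: $R'(p) = \sum_{q:\, q \to p} R(q)/N_q$
– $c = 1 / \sum_{p \in S} R'(p)$
– For each $p \in S$: $R(p) = c\,R'(p)$ (normalize)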
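The rank-flowing iteration can be written directly in Python. This is a sketch under assumptions: the graph is a dictionary from each page to its out-links, every page has at least one out-link, and the three-page graph below is a hypothetical example rather than the one drawn on the slides.

```python
def simple_pagerank(links, tol=1e-10, max_iter=1000):
    """Initial PageRank: rank flows along links, then is renormalized to sum to 1."""
    pages = sorted(set(links) | {q for targets in links.values() for q in targets})
    rank = {p: 1.0 / len(pages) for p in pages}
    for _ in range(max_iter):
        new = {p: 0.0 for p in pages}
        for q, targets in links.items():
            for p in targets:
                # q gives an equal share of its rank to each page it points to.
                new[p] += rank[q] / len(targets)
        total = sum(new.values())
        new = {p: r / total for p, r in new.items()}   # c = 1 / total
        if max(abs(new[p] - rank[p]) for p in pages) < tol:
            return new
        rank = new
    return rank


# Hypothetical graph: A -> B, A -> C, B -> C, C -> A.
ranks = simple_pagerank({"A": ["B", "C"], "B": ["C"], "C": ["A"]})
```

For this graph the ranks settle at about 0.4 for A, 0.2 for B and 0.4 for C: A passes half its rank to B, and C collects rank from both A and B before handing it all back to A.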

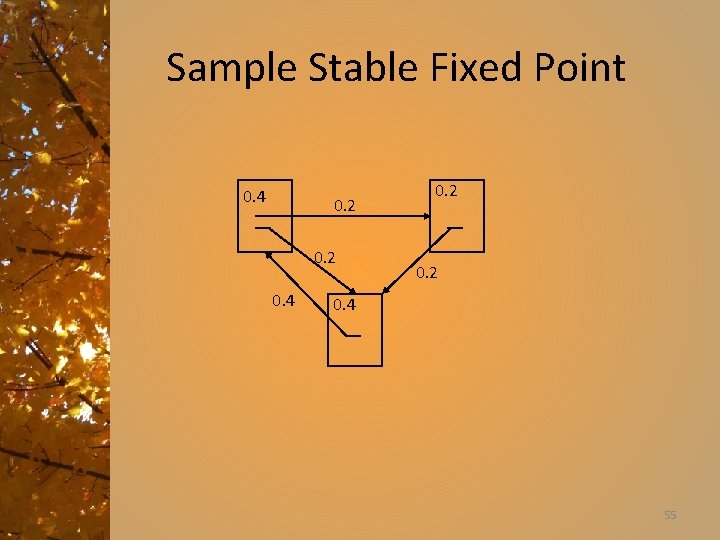

Sample Stable Fixed Point (figure: three pages with ranks 0.4, 0.2, 0.4)

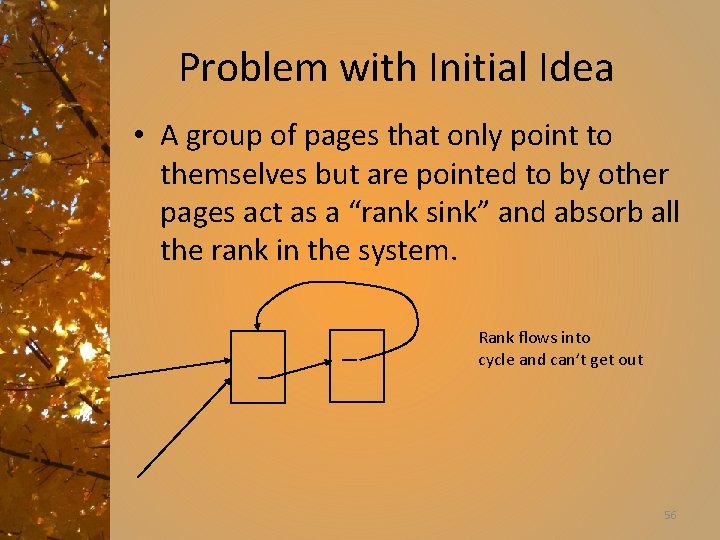

Problem with Initial Idea • A group of pages that only point to themselves but are pointed to by other pages act as a “rank sink” and absorb all the rank in the system. • Rank flows into the cycle and can’t get out.

Rank Source • Introduce a “rank source” E that continually replenishes the rank of each page, p, by a fixed amount E(p).

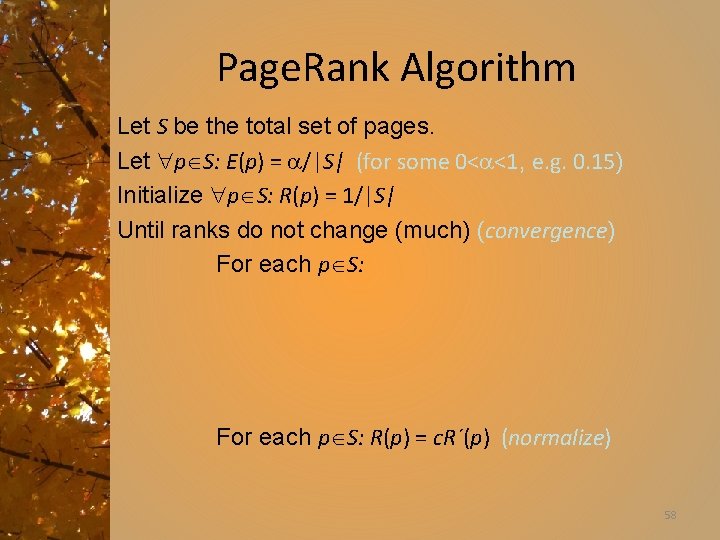

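PageRank Algorithm
– Let S be the total set of pages.
– Let $\forall p \in S$: $E(p) = \alpha/|S|$ (for some $0 < \alpha < 1$, e.g. 0.15)
– Initialize $\forall p \in S$: $R(p) = 1/|S|$
– Until ranks do not change (much) (convergence):
– For each $p \in S$: $R'(p) = (1 - \alpha) \sum_{q:\, q \to p} R(q)/N_q + E(p)$
– $c = 1 / \sum_{p \in S} R'(p)$
– For each $p \in S$: $R(p) = c\,R'(p)$ (normalize)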
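A sketch of the algorithm with a uniform rank source, assuming the update $R'(p) = (1-\alpha)\sum_{q \to p} R(q)/N_q + E(p)$ with $E(p) = \alpha/|S|$. The function name and the four-page graph, in which C and D form a rank sink, are hypothetical.

```python
def pagerank(links, alpha=0.15, tol=1e-10, max_iter=1000):
    """PageRank with a uniform rank source E(p) = alpha / |S|."""
    pages = sorted(set(links) | {q for targets in links.values() for q in targets})
    source = {p: alpha / len(pages) for p in pages}      # E(p)
    rank = {p: 1.0 / len(pages) for p in pages}
    for _ in range(max_iter):
        new = dict(source)                               # every page is replenished by E(p)
        for q, targets in links.items():
            for p in targets:
                new[p] += (1 - alpha) * rank[q] / len(targets)
        total = sum(new.values())
        new = {p: r / total for p, r in new.items()}     # normalize so ranks sum to 1
        delta = max(abs(new[p] - rank[p]) for p in pages)
        rank = new
        if delta < tol:
            break
    return rank


# Hypothetical graph: A -> B -> C, and C <-> D form a cycle nothing leaves.
sink_ranks = pagerank({"A": ["B"], "B": ["C"], "C": ["D"], "D": ["C"]})
```

Without the rank source all rank would drain into the C/D cycle. With it, A keeps the baseline α/|S| = 0.0375 and B slightly more, while C and D still hold most of the rank.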

Random Surfer Model • PageRank can be seen as modeling a “random surfer” that starts on a random page and then at each point: – With probability E(p) randomly jumps to page p. – Otherwise, randomly follows a link on the current page. • R(p) models the probability that this random surfer will be on page p at any given time. • “E jumps” are needed to prevent the random surfer from getting “trapped” in web sinks with no outgoing links.

Justifications for using PageRank • Attempts to model user behavior • Captures the notion that the more a page is pointed to by “important” pages, the more it is worth looking at • Takes into account global structure of web

Speed of Convergence • Early experiments on Google used 322 million links. • PageRank algorithm converged (within small tolerance) in about 52 iterations. • Number of iterations required for convergence is empirically O(log n) (where n is the number of links). • Therefore calculation is quite efficient.

Google Ranking • Complete Google ranking includes (based on university publications prior to commercialization): – Vector-space similarity component. – Keyword proximity component. – HTML-tag weight component (e.g. title preference). – PageRank component. • Details of current commercial ranking functions are trade secrets. – PageRank becomes Googlerank!

Personalized PageRank • PageRank can be biased (personalized) by changing E to a non-uniform distribution. • Restrict “random jumps” to a set of specified relevant pages. • For example, let E(p) = 0 except for one’s own home page, for which E(p) = α. • This results in a bias towards pages that are closer in the web graph to your own homepage.

Google PageRank-Biased Crawling • Use PageRank to direct (focus) a crawler on “important” pages. • Compute PageRank using the current set of crawled pages. • Order the crawler’s search queue based on current estimated PageRank.
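To make the effect of a non-uniform E concrete, here is a sketch in which the jump distribution is passed in as a dictionary and scaled by α. The names `personalized_pagerank` and `jump`, and the four-page graph, are assumptions for illustration.

```python
def personalized_pagerank(links, jump, alpha=0.15, tol=1e-12, max_iter=1000):
    """PageRank where random jumps land only on pages listed in `jump`.

    `jump` maps page -> probability of being the jump target (should sum to 1),
    so the rank source is E(p) = alpha * jump[p].
    """
    pages = sorted(set(links) | {q for targets in links.values() for q in targets})
    source = {p: alpha * jump.get(p, 0.0) for p in pages}
    rank = {p: 1.0 / len(pages) for p in pages}
    for _ in range(max_iter):
        new = dict(source)
        for q, targets in links.items():
            for p in targets:
                new[p] += (1 - alpha) * rank[q] / len(targets)
        total = sum(new.values())
        new = {p: r / total for p, r in new.items()}
        delta = max(abs(new[p] - rank[p]) for p in pages)
        rank = new
        if delta < tol:
            break
    return rank


# Hypothetical graph: "island" links into the graph but nothing links to it.
links = {"home": ["a"], "a": ["home", "b"], "b": ["a"], "island": ["b"]}

biased = personalized_pagerank(links, {"home": 1.0})
uniform = personalized_pagerank(links, {p: 0.25 for p in links})
```

With all jumps restricted to `home`, the page `island` cannot be reached by following links or by jumping, so its personalized rank is zero. Under the uniform source it keeps the baseline share.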

Link Analysis Conclusions • Link analysis uses information about the structure of the web graph to aid search. • It is one of the major innovations in web search. • It is the primary reason for Google’s success. • Still lots of research regarding improvements

Limits of Link Analysis • Stability – Adding even a small number of nodes/edges to the graph has a significant impact • Topic drift – A top authority may be a hub of pages on a different topic resulting in increased rank of the authority page • Content evolution – Adding/removing links/content can affect the intuitive authority rank of a page requiring recalculation of page ranks

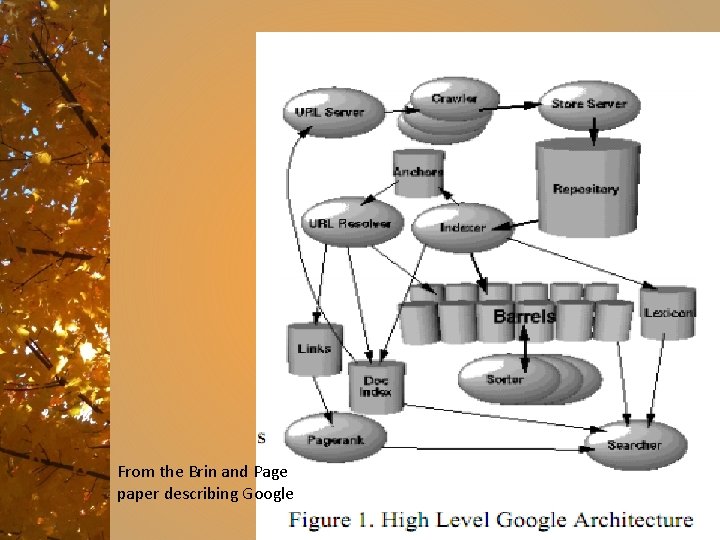

From the Brin and Page paper describing Google

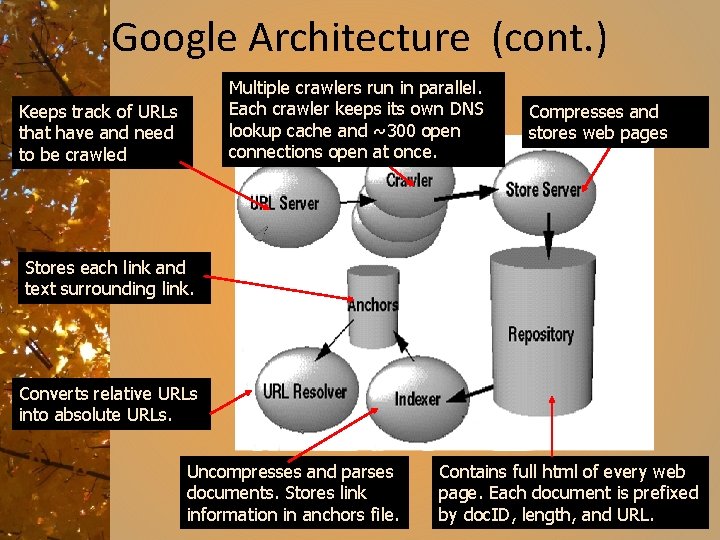

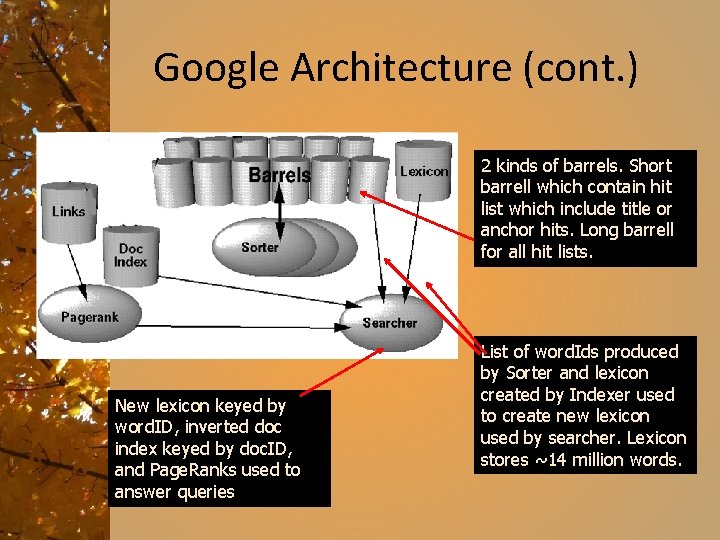

Google Architecture (cont.) • Multiple crawlers run in parallel. Each crawler keeps its own DNS lookup cache and ~300 connections open at once. • Keeps track of URLs that have been crawled and that need to be crawled. • Compresses and stores web pages. • Stores each link and the text surrounding the link. • Converts relative URLs into absolute URLs. • Uncompresses and parses documents. Stores link information in the anchors file. • Contains the full HTML of every web page. Each document is prefixed by docID, length, and URL.

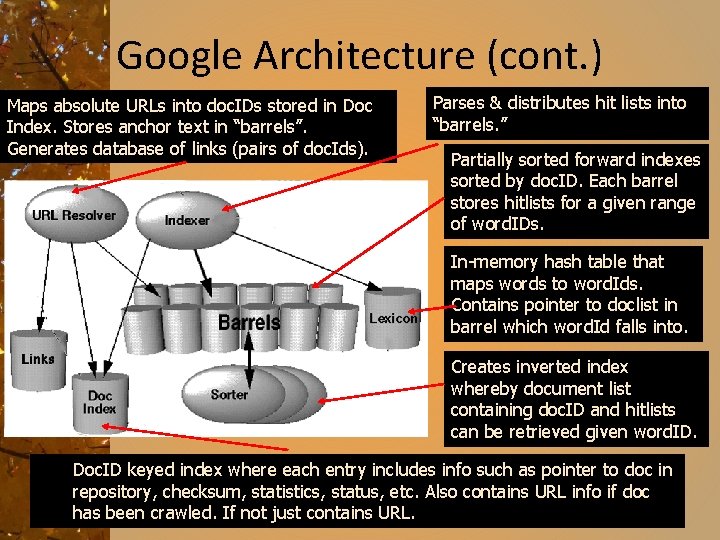

Google Architecture (cont.) • Maps absolute URLs into docIDs stored in the Doc Index. Stores anchor text in “barrels”. Generates a database of links (pairs of docIDs). • Parses & distributes hit lists into “barrels.” • Partially sorted forward indexes sorted by docID. Each barrel stores hit lists for a given range of wordIDs. • In-memory hash table that maps words to wordIDs. Contains a pointer to the doclist in the barrel that the wordID falls into. • Creates the inverted index, whereby the document list containing docIDs and hit lists can be retrieved given a wordID. • DocID-keyed index where each entry includes info such as a pointer to the doc in the repository, checksum, statistics, status, etc. Also contains URL info if the doc has been crawled. If not, just contains the URL.
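The relationship between the lexicon, the forward index and the inverted index can be sketched with plain dictionaries. This is a toy model under assumptions: hits are reduced to word positions, there is a single barrel, and the function names and two sample documents are hypothetical.

```python
from collections import defaultdict


def build_lexicon(docs):
    """Assign a wordID to every distinct word, in order of first appearance."""
    lexicon = {}
    for text in docs.values():
        for word in text.lower().split():
            lexicon.setdefault(word, len(lexicon))
    return lexicon


def forward_index(docs, lexicon):
    """docID -> {wordID: hit list}, where a hit is a word position."""
    forward = {}
    for doc_id, text in docs.items():
        hits = defaultdict(list)
        for position, word in enumerate(text.lower().split()):
            hits[lexicon[word]].append(position)
        forward[doc_id] = dict(hits)
    return forward


def invert(forward):
    """wordID -> list of (docID, hit list), ordered by docID."""
    inverted = defaultdict(list)
    for doc_id in sorted(forward):
        for word_id, hits in forward[doc_id].items():
            inverted[word_id].append((doc_id, hits))
    return dict(inverted)


# Hypothetical documents keyed by docID.
docs = {1: "big blue ibm", 2: "ibm home page ibm"}
lexicon = build_lexicon(docs)
inverted = invert(forward_index(docs, lexicon))
ibm_postings = inverted[lexicon["ibm"]]
```

Looking up the wordID for “ibm” in the inverted index returns both documents together with the positions at which the word occurs, which is what a searcher needs for proximity scoring.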

Google Architecture (cont.) • 2 kinds of barrels: short barrels, which contain hit lists that include title or anchor hits, and long barrels for all hit lists. • New lexicon keyed by wordID, inverted doc index keyed by docID, and PageRanks are used to answer queries. • List of wordIDs produced by the Sorter and lexicon created by the Indexer are used to create a new lexicon used by the searcher. Lexicon stores ~14 million words.

Growth of Web Searching In November 1997: • AltaVista was handling 20 million searches/day. • Google forecast for 2000 was 100s of millions of searches/day. In 2004, Google reports 250 million web searches/day, and estimates that the total number over all engines is 500 million searches/day. Moore's Law and web searching In 7 years, Moore's Law predicts computer power will increase by a factor of at least 2^4 = 16. It appears that computing power is growing at least as fast as web searching.

Growth of Google In 2000: 85 people, 50% technical, 14 with a Ph.D. in Computer Science In 2000: Equipment: 2,500 Linux machines, 80 terabytes of spinning disks, 30 new machines installed daily By fall 2002, Google had grown to over 400 people. In 2004, Google hired 1,000 new people. As of 2008, 16,800 employees, $15 billion in sales => roughly $1 million in sales per employee

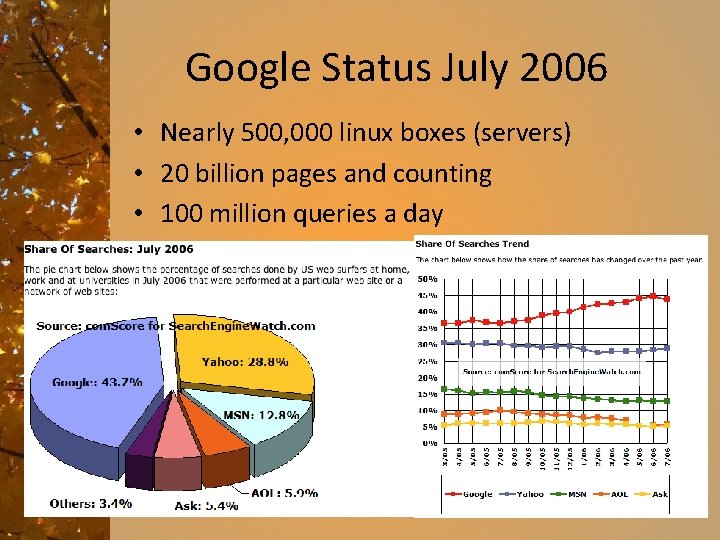

Google Status July 2006 • Nearly 500,000 Linux boxes (servers) • 20 billion pages and counting • 100 million queries a day

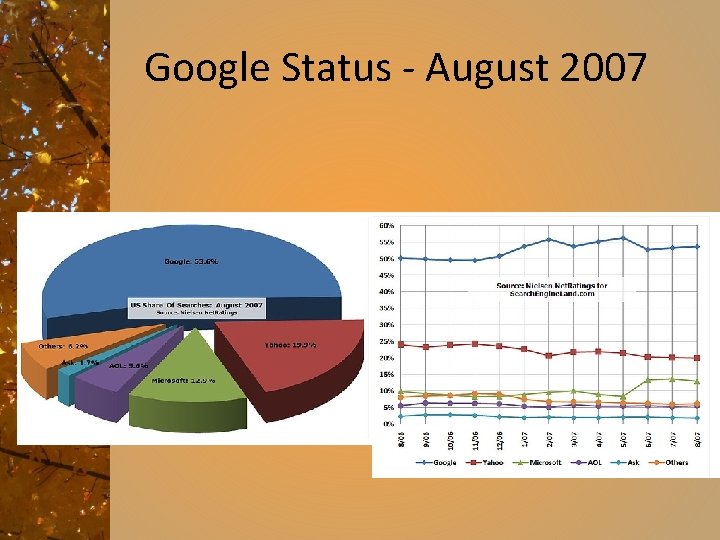

Google Status - August 2007

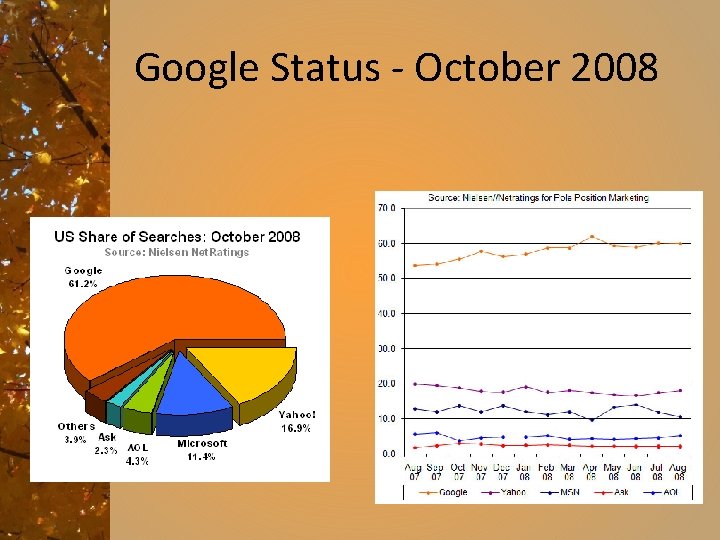

Google Status - October 2008

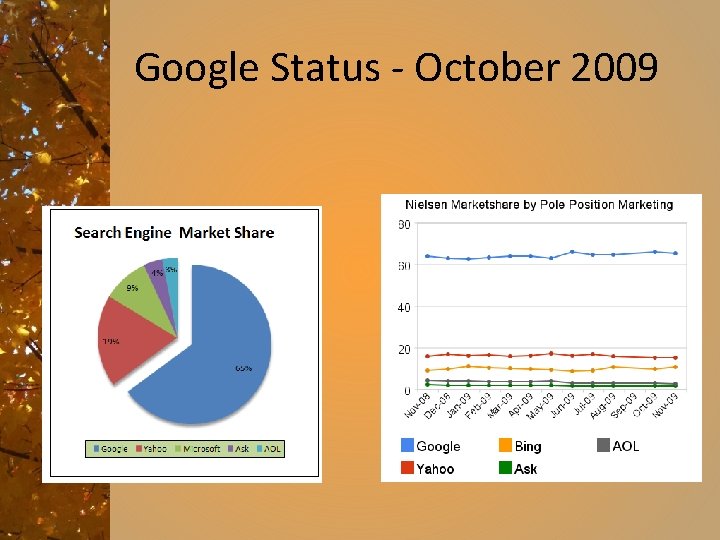

Google Status - October 2009

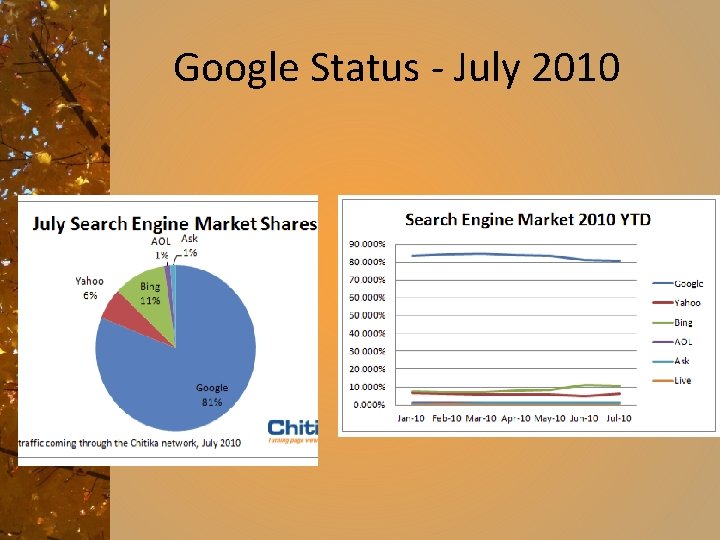

Google Status - July 2010

What’s coming? • More personal search • Social search • Mobile search • Specialty search • Freshness search 3rd generation search? Will anyone replace Google? “Search as a problem is only 5% solved” (Udi Manber: 1st Yahoo, 2nd Amazon, now Google)

Google of the future
