Web Search Engines Zachary G Ives University of

Web Search Engines Zachary G. Ives University of Pennsylvania CSE 455 / CIS 555 – Internet and Web Systems March 27, 2008

Administrivia § Homework 3 due 4/1 § Please decide on a group of 2 or 3 partners to work on the project (groups of 3 or 4) 2

Improved Page. Rank § Remove out-degree 0 nodes (or consider them to refer back to referrer) § Add decay factor to deal with sinks § Page. Rank(p) = d b B(p) (Page. Rank(b) / N(b)) + (1 – d) § Intuition in the idea of the “random surfer”: § Surfer occasionally stops following link sequence and jumps to new random page, with probability 1 - d 3

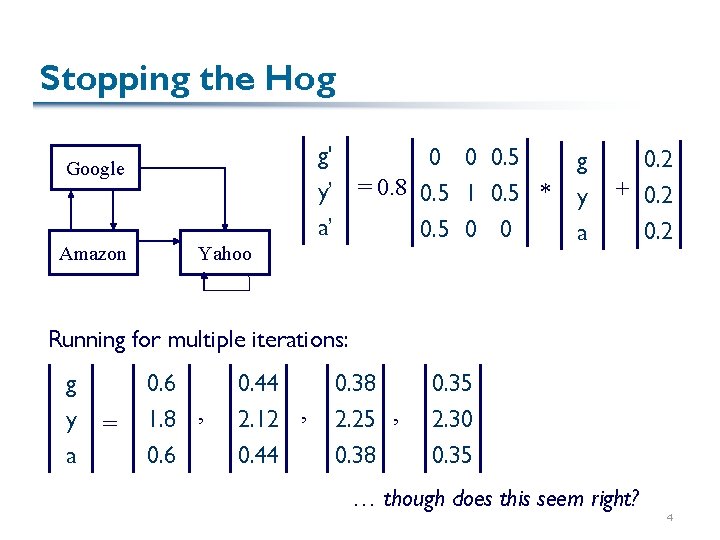

Stopping the Hog g' y’ a’ Google Amazon 0 0 0. 5 = 0. 8 0. 5 1 0. 5 * 0. 5 0 0 Yahoo g y a 0. 2 + 0. 2 Running for multiple iterations: g y a = 0. 6 1. 8 , 0. 6 0. 44 2. 12 , 0. 44 0. 38 2. 25 , 0. 38 0. 35 2. 30 0. 35 … though does this seem right? 4

Summary of Link Analysis § Use back-links as a means of adjusting the “worthiness” or “importance” of a page § Use iterative process over matrix/vector values to reach a convergence point § Page. Rank is query-independent and considered relatively stable § But vulnerable to SEO § Just one piece of the overall picture… 5

Overall Ranking Strategies in Web Search Engines § Everybody has their own “secret sauce” that uses: § § § § Vector model (TF/IDF) Proximity of terms Where terms appear (title vs. body vs. link) Link analysis Info from directories Page popularity gorank. com “search engine optimization site” compares these factors § Some alternative approaches: § Some new engines (Vivisimo, Teoma, Clusty) try to do clustering § A few engines (Dogpile, Mamma. com) try to do meta-search 6

![Google Architecture [Brin/Page 98] Focus was on scalability to the size of the Web Google Architecture [Brin/Page 98] Focus was on scalability to the size of the Web](http://slidetodoc.com/presentation_image_h2/578b8f877d999e30ccdbe838a70e5bee/image-7.jpg)

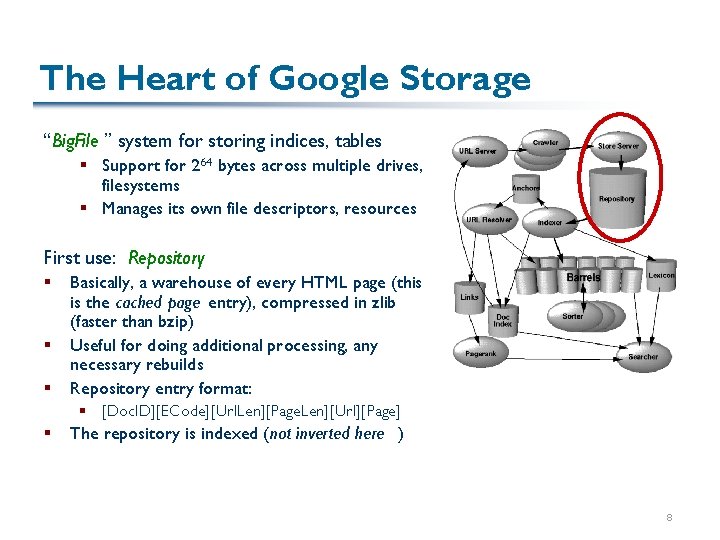

Google Architecture [Brin/Page 98] Focus was on scalability to the size of the Web First to really exploit Link Analysis Started as an academic project @ Stanford; became a startup Our discussion will be on early Google – today they keep things secret! 7

The Heart of Google Storage “Big. File ” system for storing indices, tables § Support for 264 bytes across multiple drives, filesystems § Manages its own file descriptors, resources First use: Repository § § § Basically, a warehouse of every HTML page (this is the cached page entry), compressed in zlib (faster than bzip) Useful for doing additional processing, any necessary rebuilds Repository entry format: § [Doc. ID][ECode][Url. Len][Page. Len][Url][Page] § The repository is indexed (not inverted here ) 8

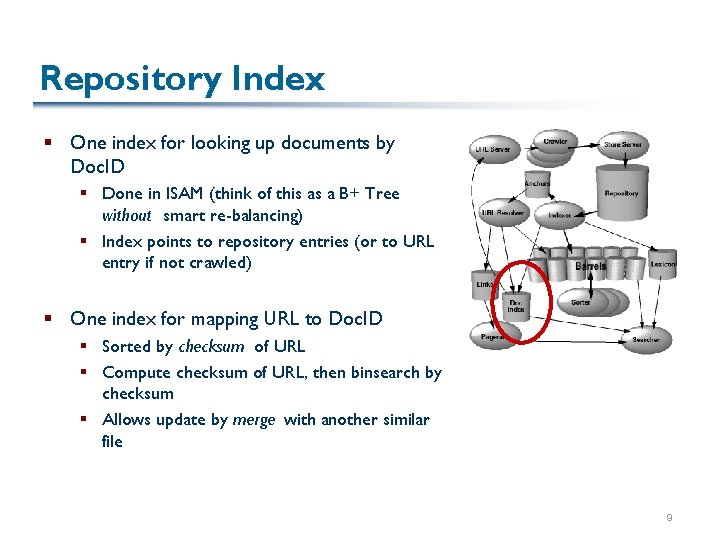

Repository Index § One index for looking up documents by Doc. ID § Done in ISAM (think of this as a B+ Tree without smart re-balancing) § Index points to repository entries (or to URL entry if not crawled) § One index for mapping URL to Doc. ID § Sorted by checksum of URL § Compute checksum of URL, then binsearch by checksum § Allows update by merge with another similar file 9

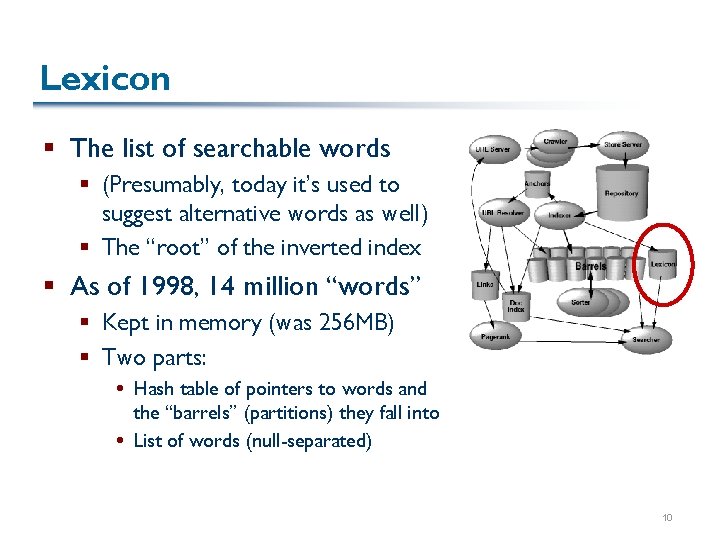

Lexicon § The list of searchable words § (Presumably, today it’s used to suggest alternative words as well) § The “root” of the inverted index § As of 1998, 14 million “words” § Kept in memory (was 256 MB) § Two parts: Hash table of pointers to words and the “barrels” (partitions) they fall into List of words (null-separated) 10

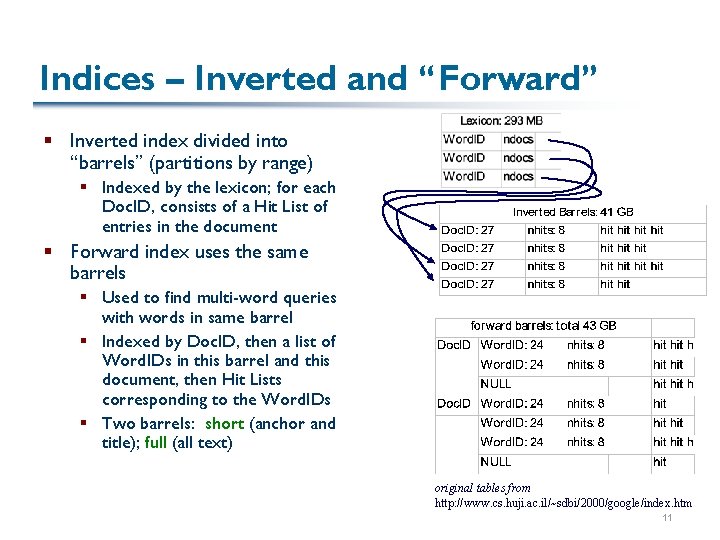

Indices – Inverted and “Forward” § Inverted index divided into “barrels” (partitions by range) § Indexed by the lexicon; for each Doc. ID, consists of a Hit List of entries in the document § Forward index uses the same barrels § Used to find multi-word queries with words in same barrel § Indexed by Doc. ID, then a list of Word. IDs in this barrel and this document, then Hit Lists corresponding to the Word. IDs § Two barrels: short (anchor and title); full (all text) original tables from http: //www. cs. huji. ac. il/~sdbi/2000/google/index. htm 11

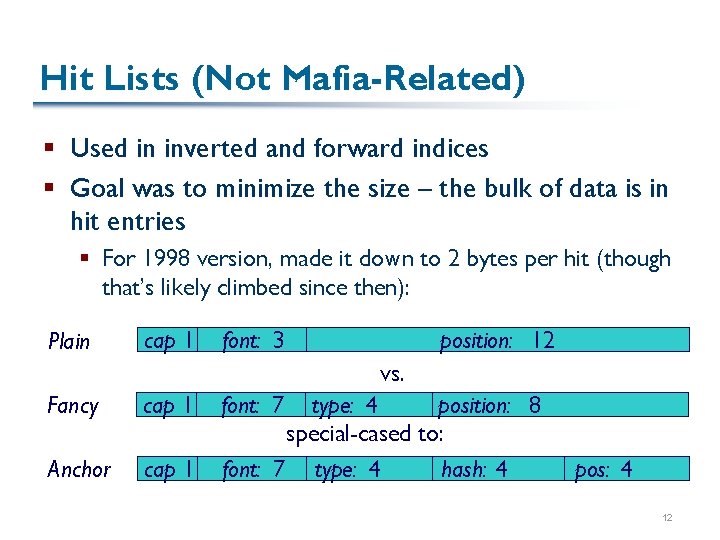

Hit Lists (Not Mafia-Related) § Used in inverted and forward indices § Goal was to minimize the size – the bulk of data is in hit entries § For 1998 version, made it down to 2 bytes per hit (though that’s likely climbed since then): Plain cap 1 font: 3 position: 12 vs. Fancy cap 1 Anchor cap 1 font: 7 type: 4 position: 8 special-cased to: font: 7 type: 4 hash: 4 pos: 4 12

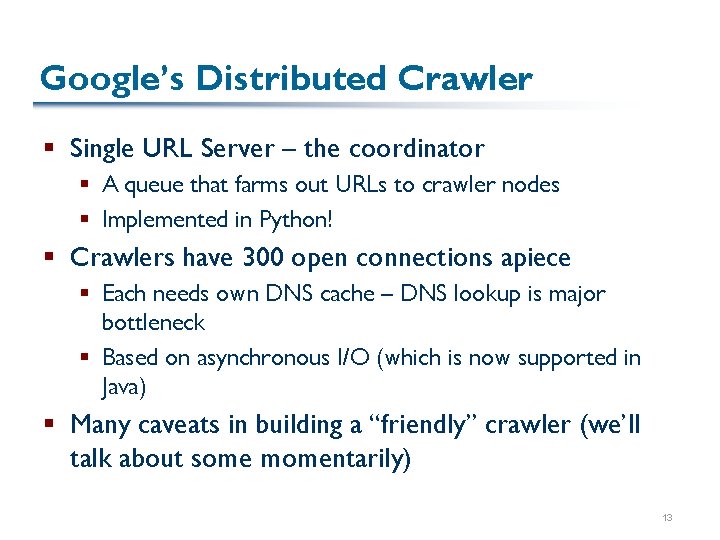

Google’s Distributed Crawler § Single URL Server – the coordinator § A queue that farms out URLs to crawler nodes § Implemented in Python! § Crawlers have 300 open connections apiece § Each needs own DNS cache – DNS lookup is major bottleneck § Based on asynchronous I/O (which is now supported in Java) § Many caveats in building a “friendly” crawler (we’ll talk about some momentarily) 13

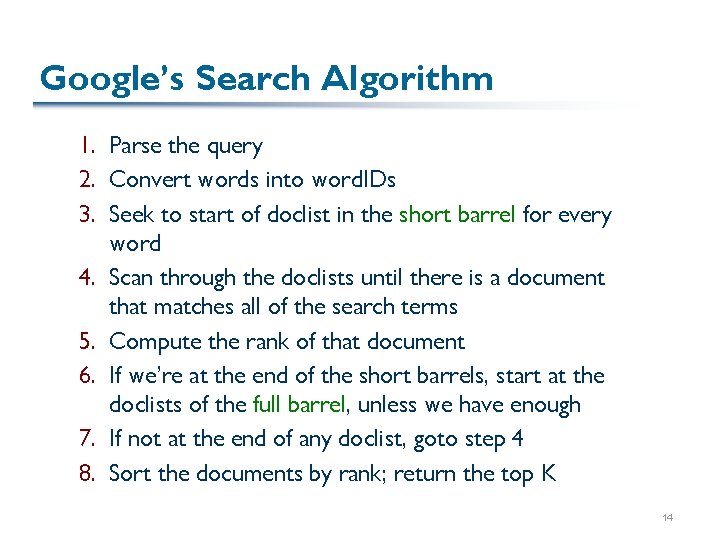

Google’s Search Algorithm 1. Parse the query 2. Convert words into word. IDs 3. Seek to start of doclist in the short barrel for every word 4. Scan through the doclists until there is a document that matches all of the search terms 5. Compute the rank of that document 6. If we’re at the end of the short barrels, start at the doclists of the full barrel, unless we have enough 7. If not at the end of any doclist, goto step 4 8. Sort the documents by rank; return the top K 14

Ranking in Google § Considers many types of information: Position, font size, capitalization Anchor text Page. Rank Count of occurrences (basically, TF) in a way that tapers off § (Not clear if they do IDF? ) § § § Multi-word queries consider proximity also 15

Google’s Resources § In 1998: § § 24 M web pages About 55 GB data w/o repository About 110 GB with repository Lexicon 293 MB § Worked quite well with low-end PC § Today, > 25 billion pages, 400 M queries/day: § Don’t attempt to include all barrels on every machine! e. g. , 5+TB repository on special servers separate from index servers § Many special-purpose indexing services (e. g. , images) § Much greater distribution of data (now ~20 K PCs? ), huge net BW § Advertising needs to be tied in (100, 000 advertisers claimed) 16

Web Crawling in More Detail § First, some politeness criteria § Then we’ll look at the Mercator distributed crawler as an example 17

The Basic Crawler (Spider/Robot) Process § Start with some initial page P 0 § Collect all URLs from P 0 and add to the crawler queue § Consider <base href> tag, anchor links, optionally image links, CSS, DTDs, scripts § Considerations: § § § What order to traverse (polite to do BFS – why? ) How deep to traverse What to ignore (coverage) How to escape “spider traps” and avoid cycles How often to crawl 18

Essential Crawler Etiquette § Robot exclusion protocols § First, ignore pages with: <META NAME="ROBOTS” CONTENT="NOINDEX"> § Second, look for robots. txt at root of web server § See http: //www. robotstxt. org/wc/robots. html § To exclude all robots from a server: User-agent: * Disallow: / § To exclude one robot from two directories: User-agent: Bobs. Crawler Disallow: /news/ Disallow: /tmp/ 19

Mercator § A scheme for building a distributed web crawler § Expands a “URL frontier” § Avoids re-crawling same URLs § Also considers whether a document has been seen before § Every document has signature/checksum info computed as it’s crawled 20

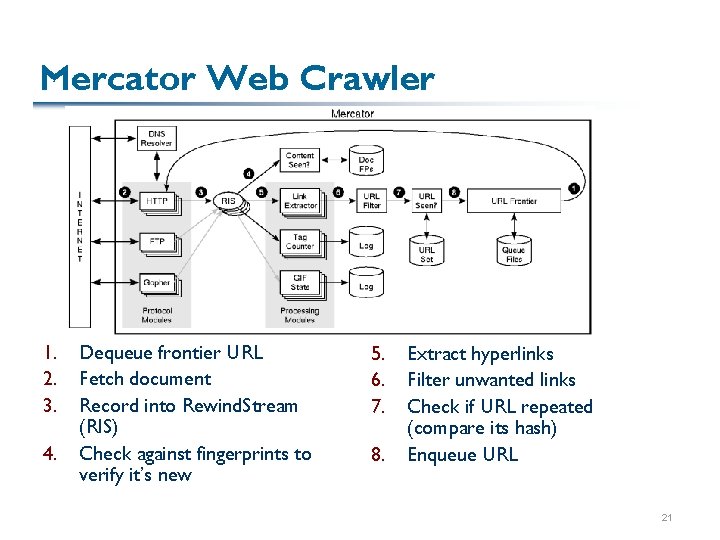

Mercator Web Crawler 1. 2. 3. 4. Dequeue frontier URL Fetch document Record into Rewind. Stream (RIS) Check against fingerprints to verify it’s new 5. 6. 7. 8. Extract hyperlinks Filter unwanted links Check if URL repeated (compare its hash) Enqueue URL 21

Mercator’s Polite Frontier Queues § Tries to go beyond breadth-first approach – want to have only one crawler thread per server § Distributed URL frontier queue: § One subqueue per worker thread § The worker thread is determined by hashing the hostname of the URL Thus, only one outstanding request per web server 22

Mercator’s HTTP Fetcher § First, needs to ensure robots. txt is followed § Caches the contents of robots. txt for various web sites as it crawls them § Designed to be extensible to other protocols § Had to write own HTTP requestor in Java – their Java version didn’t have timeouts § Today, can use set. So. Timeout() § Can also use Java non-blocking I/O if you wish: § http: //www. owlmountain. com/tutorials/Non. Blocking. Io. htm § But they use multiple threads and synchronous I/O 23

Other Caveats § Infinitely long URL names (good way to get a buffer overflow!) § Aliased host names § Alternative paths to the same host § Can catch most of these with signatures of document data (e. g. , MD 5) § Crawler traps (e. g. , CGI scripts that link to themselves using a different name) § May need to have a way for human to override certain URL paths – see Section 5 of paper 24

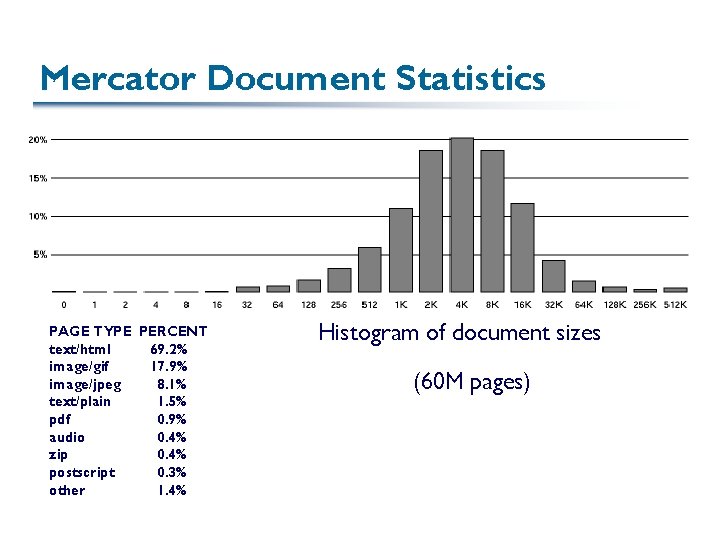

Mercator Document Statistics PAGE TYPE PERCENT text/html 69. 2% image/gif 17. 9% image/jpeg 8. 1% text/plain 1. 5% pdf 0. 9% audio 0. 4% zip 0. 4% postscript 0. 3% other 1. 4% Histogram of document sizes (60 M pages)

Further Considerations § May want to prioritize certain pages as being most worth crawling § Focused crawling tries to prioritize based on relevance § May need to refresh certain pages more often 26

Web Search Summarized § Two important factors: § Indexing and ranking scheme that allows most relevant documents to be prioritized highest § Crawler that manages to be (1) well-mannered, (2) avoid traps, (3) scale § We’ll be using Pastry to distribute the work of crawling and to distribute the data (what Google calls “barrels”) 27

- Slides: 27