Web Mining and Recommendation CENG 514 20 September 2021

Web Mining • Web mining is the use of data mining techniques to automatically discover and extract information from web documents and services

Examples of Discovered Patterns • Association rules – 75% of Facebook users also have Foursquare accounts • Classification – People with age less than 40 and salary > 40k trade on-line • Clustering – Users A and B access similar URLs • Outlier detection – User A spends more than twice the average amount of time surfing on the Web

Why is Web Mining Different? • The Web is a huge collection of documents, plus – Hyperlink information – Access and usage information • The Web is very dynamic – New pages are constantly being generated • Challenge: Develop new Web mining algorithms and adapt traditional data mining algorithms to – Exploit hyperlinks and access patterns – Be incremental

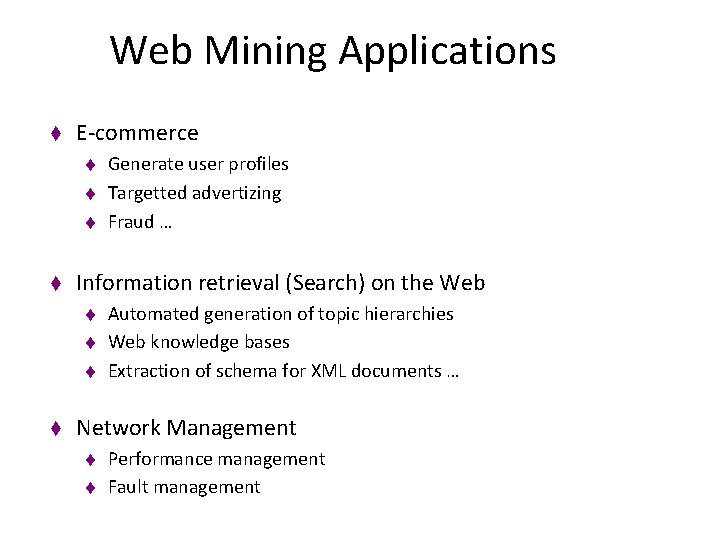

Web Mining Applications • E-commerce – Generate user profiles – Targeted advertising – Fraud – … • Information retrieval (search) on the Web – Automated generation of topic hierarchies – Web knowledge bases – Extraction of schema for XML documents – … • Network management – Performance management – Fault management

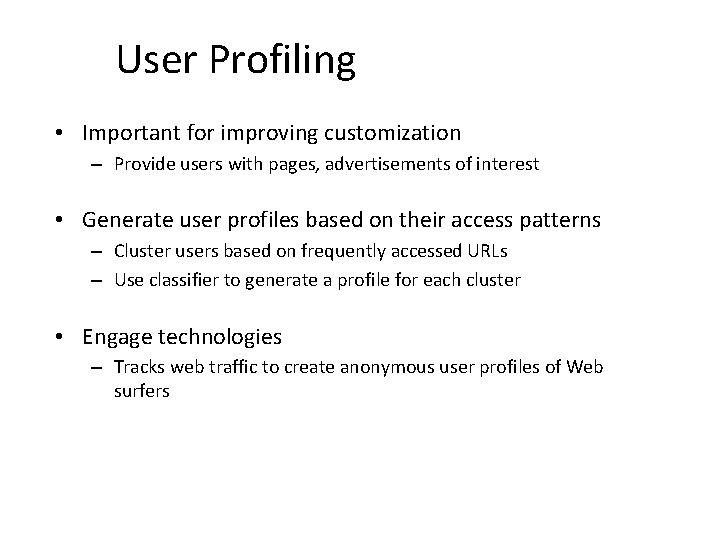

User Profiling • Important for improving customization – Provide users with pages and advertisements of interest • Generate user profiles based on their access patterns – Cluster users based on frequently accessed URLs – Use a classifier to generate a profile for each cluster • Engage Technologies – Tracks web traffic to create anonymous user profiles of Web surfers

Internet Advertising • Ads are a major source of revenue for Web portals and E-commerce sites • Plenty of startups doing internet advertising – DoubleClick, AdForce, AdKnowledge

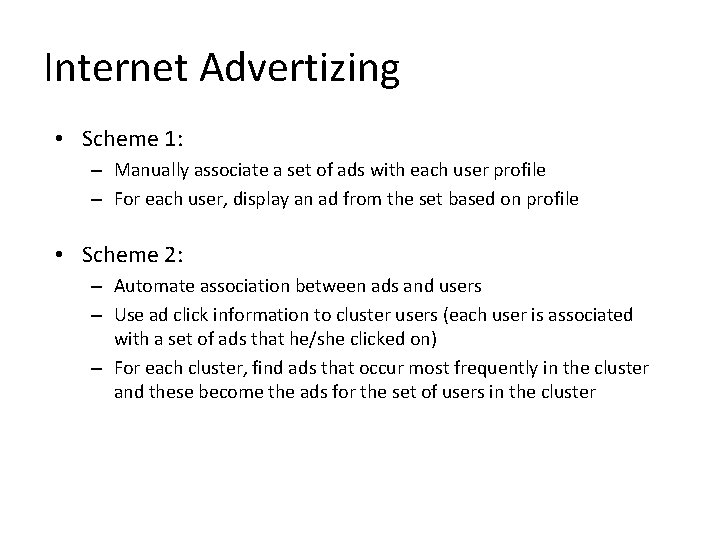

Internet Advertising • Scheme 1: – Manually associate a set of ads with each user profile – For each user, display an ad from the set based on profile • Scheme 2: – Automate association between ads and users – Use ad click information to cluster users (each user is associated with the set of ads that he/she clicked on) – For each cluster, find the ads that occur most frequently in the cluster; these become the ads for the set of users in the cluster

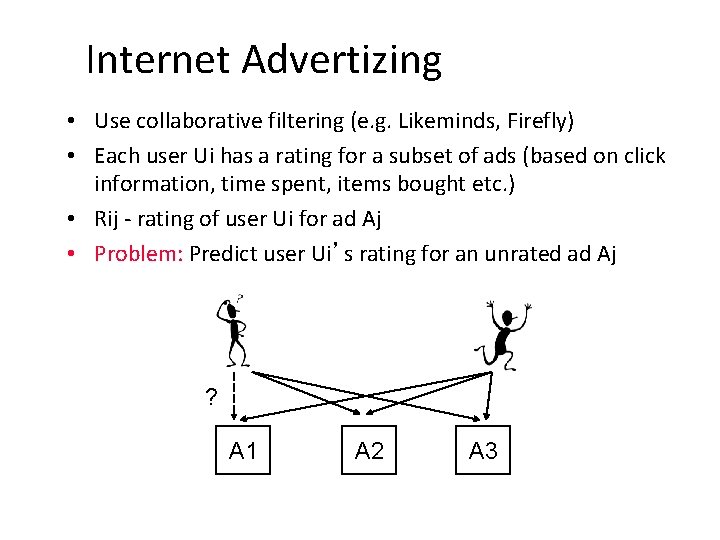

Internet Advertising • Use collaborative filtering (e.g. Likeminds, Firefly) • Each user Ui has a rating for a subset of ads (based on click information, time spent, items bought, etc.) • Rij: rating of user Ui for ad Aj • Problem: Predict user Ui’s rating for an unrated ad Aj (Figure: user × ad rating matrix over ads A1, A2, A3 with an unknown entry “?”)

Internet Advertising • Key idea: User Ui’s rating for ad Aj is set to Rkj, where Uk is the user whose ratings of ads are most similar to Ui’s • User Ui’s rating for an ad Aj that has not previously been displayed to Ui is computed as follows: – Consider each user Uk who has rated ad Aj – Compute Dik, the distance between Ui’s and Uk’s ratings on common ads – Ui’s rating for ad Aj = Rkj (Uk is the user with the smallest Dik) – Display to Ui the ad Aj with the highest computed rating
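The nearest-user scheme on this slide can be sketched roughly as follows. This is only an illustration: the `ratings` dictionary, the user and ad names, and the use of a mean absolute difference for the distance Dik are assumptions made for the example, since the slide does not fix a particular distance.

```python
def distance(ratings, ui, uk):
    """Mean absolute rating difference on ads both users rated (assumed form of D_ik)."""
    common = set(ratings[ui]) & set(ratings[uk])
    if not common:
        return float("inf")  # no overlap: treat as maximally distant
    return sum(abs(ratings[ui][a] - ratings[uk][a]) for a in common) / len(common)


def predict(ratings, ui, ad):
    """Return R_kj of the user U_k closest to U_i among the users who rated the ad."""
    candidates = [uk for uk in ratings if uk != ui and ad in ratings[uk]]
    if not candidates:
        return None
    nearest = min(candidates, key=lambda uk: distance(ratings, ui, uk))
    return ratings[nearest][ad]


def best_ad(ratings, ui, ads):
    """Pick the ad not yet rated by U_i that has the highest predicted rating."""
    scored = [(predict(ratings, ui, a), a) for a in ads if a not in ratings[ui]]
    scored = [(s, a) for s, a in scored if s is not None]
    return max(scored)[1] if scored else None


# Hypothetical ratings: user -> {ad: rating}
ratings = {
    "U1": {"A1": 5, "A2": 1},
    "U2": {"A1": 4, "A2": 2, "A3": 5},
    "U3": {"A1": 1, "A2": 5, "A3": 1},
}
```

Here `predict(ratings, "U1", "A3")` copies the rating of U2, the user whose ratings on A1 and A2 are closest to those of U1.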

Fraud • With the growing popularity of E-commerce, systems to detect and prevent fraud on the Web become important • Maintain a signature for each user based on buying patterns on the Web (e.g., amount spent, categories of items bought) • If the buying pattern changes significantly, then signal fraud • E.g., use of domain knowledge and neural networks for credit card fraud detection

Network Management • Performance management: Annual bandwidth demand is increasing ten-fold on average, while annual bandwidth supply is rising only by a factor of three. The result is frequent congestion. During a major event (World Cup), an overwhelming number of user requests can result in millions of redundant copies of data flowing back and forth across the world • Fault management: Analyze alarm and traffic data to carry out root cause analysis of faults

Web Mining • Web Content Mining – Web page content mining – Search result mining • Web Structure Mining – Search • Web Usage Mining – Access patterns – Customized Usage patterns

Web Content Mining • Crawler – A program that traverses the hypertext structure in the Web – Seed URL: page/set of pages that the crawler starts with – Links from visited page saved in a queue – Build an index • Focused crawlers
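The crawler loop described here (seed URLs, a queue of links from visited pages) can be sketched as a breadth-first traversal. To keep the example self-contained, the function `get_links` and the dictionary `toy_web` are hypothetical stand-ins for real page fetching and HTML parsing.

```python
from collections import deque


def crawl(seed_urls, get_links, max_pages=100):
    """Breadth-first crawl: visit pages starting from the seeds, queueing unseen links."""
    queue = deque(seed_urls)
    seen = set(seed_urls)
    visited = []  # pages in visiting order; an indexer would consume these
    while queue and len(visited) < max_pages:
        url = queue.popleft()
        visited.append(url)
        for link in get_links(url):
            if link not in seen:  # never queue the same page twice
                seen.add(link)
                queue.append(link)
    return visited


# Hypothetical in-memory "web": page -> outgoing links
toy_web = {
    "a.html": ["b.html", "c.html"],
    "b.html": ["c.html", "d.html"],
    "c.html": ["a.html"],
    "d.html": [],
}
order = crawl(["a.html"], lambda url: toy_web.get(url, []))
```

A focused crawler would differ mainly in how it orders or filters the queue, for example by only following links judged relevant to a topic.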

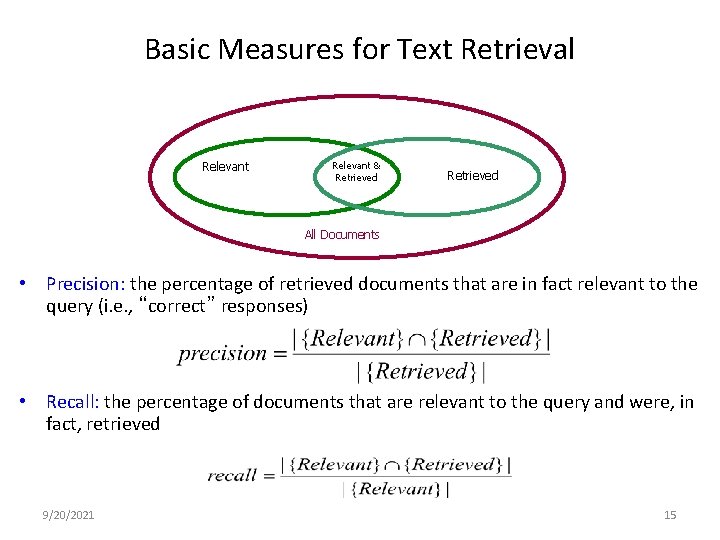

Basic Measures for Text Retrieval • Precision: the percentage of retrieved documents that are in fact relevant to the query (i.e., “correct” responses) • Recall: the percentage of documents relevant to the query that were, in fact, retrieved
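As a small illustration of the two definitions, the following sketch computes both measures from sets of document ids. The function name and the ids are made up for the example.

```python
def precision_recall(retrieved, relevant):
    """Precision = |relevant ∩ retrieved| / |retrieved|; recall = |relevant ∩ retrieved| / |relevant|."""
    retrieved, relevant = set(retrieved), set(relevant)
    hits = retrieved & relevant
    precision = len(hits) / len(retrieved) if retrieved else 0.0
    recall = len(hits) / len(relevant) if relevant else 0.0
    return precision, recall


# Hypothetical query result: 4 documents retrieved, 5 documents actually relevant
p, r = precision_recall(retrieved=[1, 2, 3, 4], relevant=[2, 4, 6, 8, 10])
```

Two of the four retrieved documents are relevant, and two of the five relevant documents were retrieved.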

Information Retrieval Techniques • Basic Concepts – A document can be described by a set of representative keywords called index terms. – Different index terms have varying relevance when used to describe document contents. – This effect is captured through the assignment of numerical weights to each index term of a document (e.g., frequency, tf-idf). • DBMS Analogy – Index terms correspond to attributes – Weights correspond to attribute values

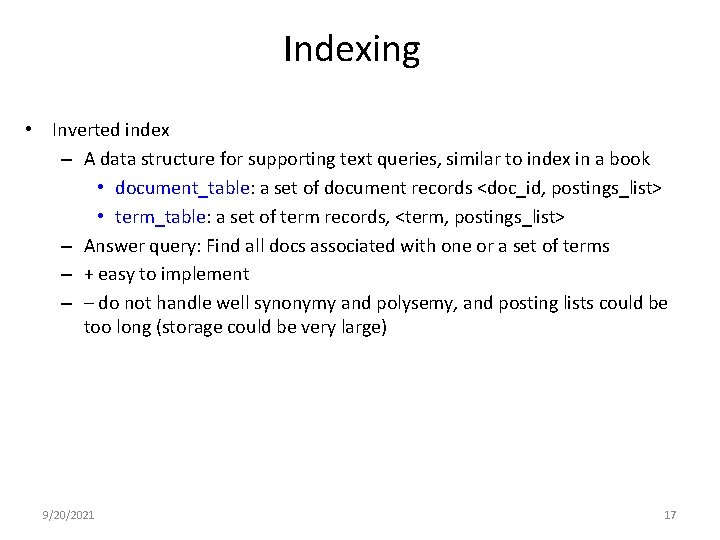

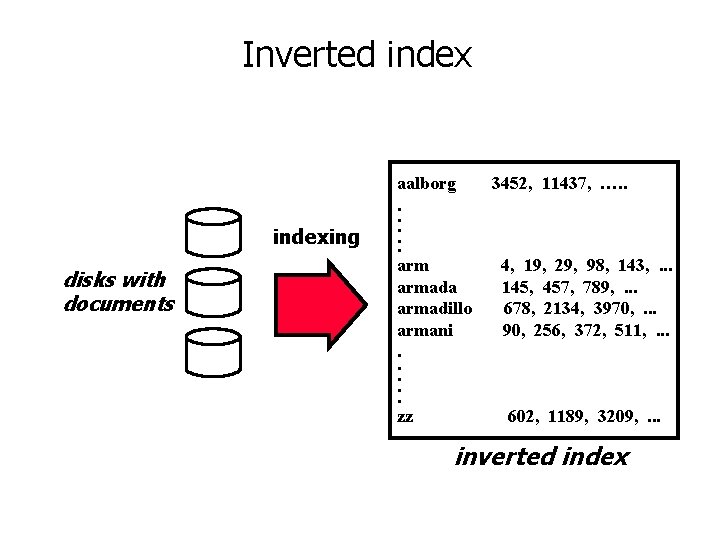

Indexing • Inverted index – A data structure for supporting text queries, similar to the index in a book • document_table: a set of document records <doc_id, postings_list> • term_table: a set of term records <term, postings_list> – Answer query: find all docs associated with one term or a set of terms – Advantage: easy to implement – Disadvantages: does not handle synonymy and polysemy well, and posting lists can be very long (storage could be very large)
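A term table of this kind can be sketched in a few lines. The whitespace tokenizer and the three sample documents (taken from the illustrative example later in the deck) are simplifying assumptions; a real indexer would also apply stopping and stemming.

```python
from collections import defaultdict


def build_inverted_index(docs):
    """Map each term to a sorted postings list of the doc ids that contain it."""
    index = defaultdict(set)
    for doc_id, text in docs.items():
        for term in text.lower().split():
            index[term].add(doc_id)
    return {term: sorted(ids) for term, ids in index.items()}


def query_all(index, terms):
    """Doc ids containing every query term (intersection of postings lists)."""
    postings = [set(index.get(t.lower(), [])) for t in terms]
    return sorted(set.intersection(*postings)) if postings else []


docs = {
    1: "text mining search engine text",
    2: "travel text map travel",
    3: "government president congress",
}
index = build_inverted_index(docs)
```

Answering a multi-term query then reduces to intersecting postings lists, e.g. `query_all(index, ["text", "travel"])`.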

Inverted indexing (Figure: an inverted index maps each term, e.g. aalborg, …, armada, armadillo, armani, …, zz, to a postings list of document ids such as 3452, 11437, …, which point to the documents stored on disk.)

Vector Space Model • Documents and user queries are represented as m-dimensional vectors, where m is the total number of index terms in the document collection. • The degree of similarity of the document d with regard to the query q is calculated as the correlation between the vectors that represent them, using measures such as the Euclidean distance or the cosine of the angle between these two vectors.

Vector Space Model • Represent a doc by a term vector – Term: basic concept, e.g., word or phrase – Each term defines one dimension – N terms define an N-dimensional space – Element of vector corresponds to term weight – E.g., d = (x1, …, xN), where xi is the “importance” of term i

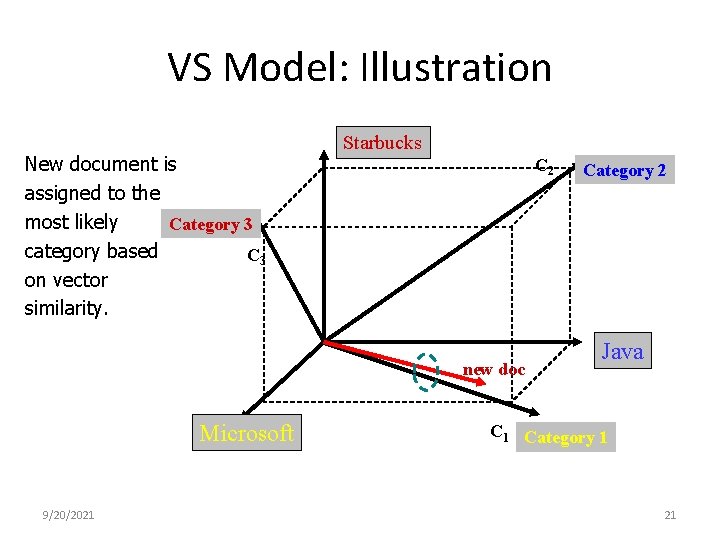

VS Model: Illustration • A new document is assigned to the most likely category based on vector similarity. (Figure: category vectors C1, C2, C3 for Category 1, Category 2, Category 3 and a new doc, plotted against the term axes Java, Microsoft, Starbucks.)

Issues to be handled • How to select terms to capture “basic concepts” – Word stopping • e.g. “a”, “the”, “always”, “along” – Word stemming • e.g. “computer”, “computing”, “computerize” => “compute” – Latent semantic indexing • How to assign weights – Not all words are equally important: some are more indicative than others • e.g. “algebra” vs. “science” • How to measure the similarity

Latent Semantic Indexing • Basic idea – Similar documents have similar word frequencies – Difficulty: the term frequency matrix is very large – Use singular value decomposition (SVD) to reduce the size of the frequency table – Retain the K most significant rows of the frequency table • Method – Create a term × document weighted frequency matrix A – SVD construction: $A = U S V'$ – Choose K and obtain $U_k$, $S_k$, and $V_k$ – Create the query vector $q'$ – Project $q'$ into the term-document space: $D_q = q' U_k S_k^{-1}$ – Calculate similarities: $\cos\alpha = \dfrac{D_q \cdot D}{\lVert D_q \rVert \, \lVert D \rVert}$

How to Assign Weights • Two-fold heuristics based on frequency – TF (term frequency) • More frequent within a document => more relevant to semantics – IDF (inverse document frequency) • Less frequent among documents => more discriminative

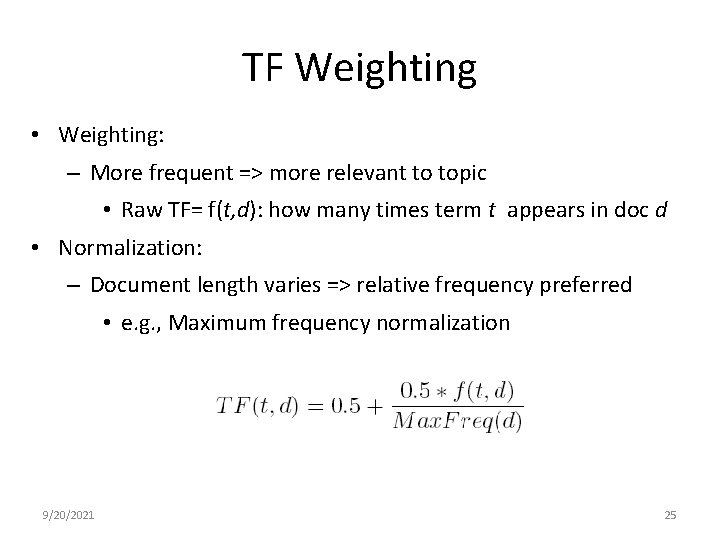

TF Weighting • Weighting: – More frequent => more relevant to topic • Raw TF= f(t, d): how many times term t appears in doc d • Normalization: – Document length varies => relative frequency preferred • e. g. , Maximum frequency normalization

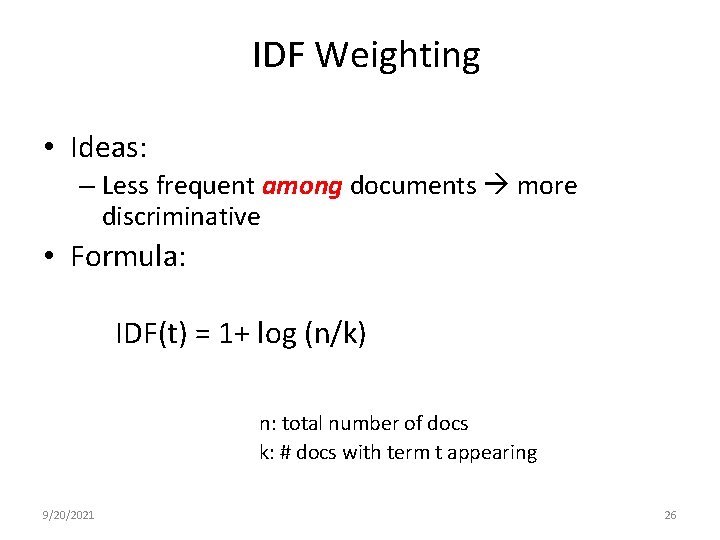

IDF Weighting • Idea: – Less frequent among documents => more discriminative • Formula: $\mathrm{IDF}(t) = 1 + \log(n/k)$, where n is the total number of docs and k is the number of docs in which term t appears

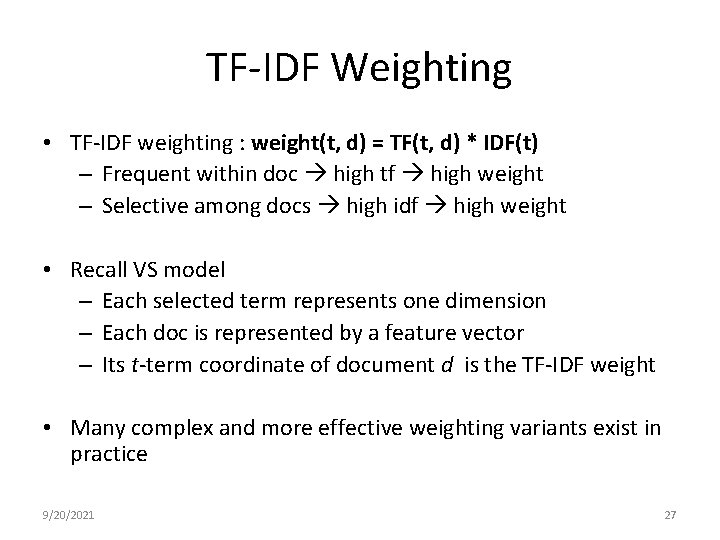

TF-IDF Weighting • TF-IDF weighting: weight(t, d) = TF(t, d) * IDF(t) – Frequent within doc => high TF => high weight – Selective among docs => high IDF => high weight • Recall the VS model – Each selected term represents one dimension – Each doc is represented by a feature vector – The coordinate of document d for term t is its TF-IDF weight • Many more complex and more effective weighting variants exist in practice
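The weighting weight(t, d) = TF(t, d) * IDF(t) with IDF(t) = 1 + log(n/k) can be sketched as below. Raw term counts are used for TF, and the natural logarithm and whitespace tokenization are assumptions, since the slides do not state the log base or the tokenizer.

```python
import math
from collections import Counter


def tf_idf(docs):
    """Return ({doc_id: {term: weight}}, {term: idf}) using raw TF and IDF = 1 + log(n / k)."""
    n = len(docs)
    tokenized = {d: text.lower().split() for d, text in docs.items()}
    # k = number of documents in which each term appears
    df = Counter(t for toks in tokenized.values() for t in set(toks))
    idf = {t: 1 + math.log(n / k) for t, k in df.items()}
    weights = {
        d: {t: f * idf[t] for t, f in Counter(toks).items()}
        for d, toks in tokenized.items()
    }
    return weights, idf


docs = {
    1: "text mining search engine text",
    2: "travel text map travel",
    3: "government president congress",
}
weights, idf = tf_idf(docs)
```

A term such as "text", which occurs in two of the three documents, gets a lower IDF than "mining", which occurs in only one.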

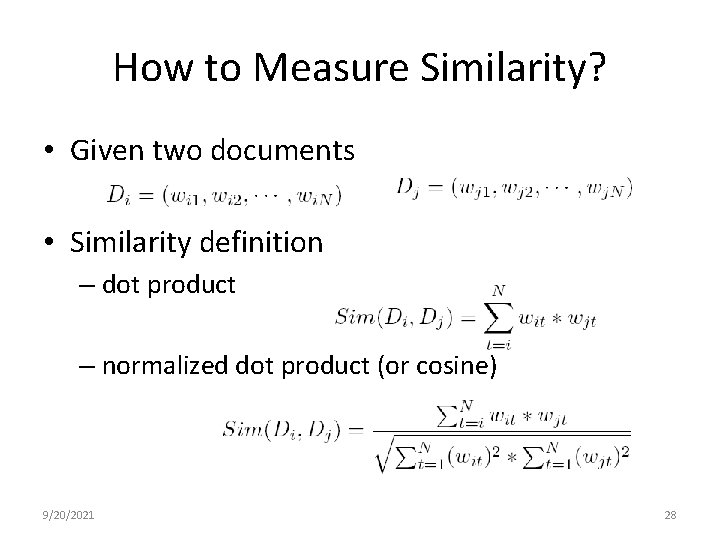

How to Measure Similarity? • Given two documents • Similarity definition – dot product – normalized dot product (or cosine)
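Both similarity definitions are easy to state in code. In this sketch documents are sparse vectors stored as `{term: weight}` dictionaries, which is a representation chosen for the example rather than anything prescribed by the slides.

```python
import math


def dot(u, v):
    """Dot product of two sparse term-weight vectors."""
    return sum(w * v.get(t, 0.0) for t, w in u.items())


def cosine(u, v):
    """Dot product normalized by the vector lengths; 0.0 if either vector is all zeros."""
    norm = math.sqrt(dot(u, u)) * math.sqrt(dot(v, v))
    return dot(u, v) / norm if norm else 0.0


# Hypothetical weighted vectors
d1 = {"text": 1.0, "mining": 1.0}
d2 = {"text": 2.0, "mining": 2.0}
d3 = {"travel": 3.0}
```

The cosine ignores vector length, so `d1` and `d2` (same direction, different magnitude) are maximally similar, while `d1` and `d3` share no terms.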

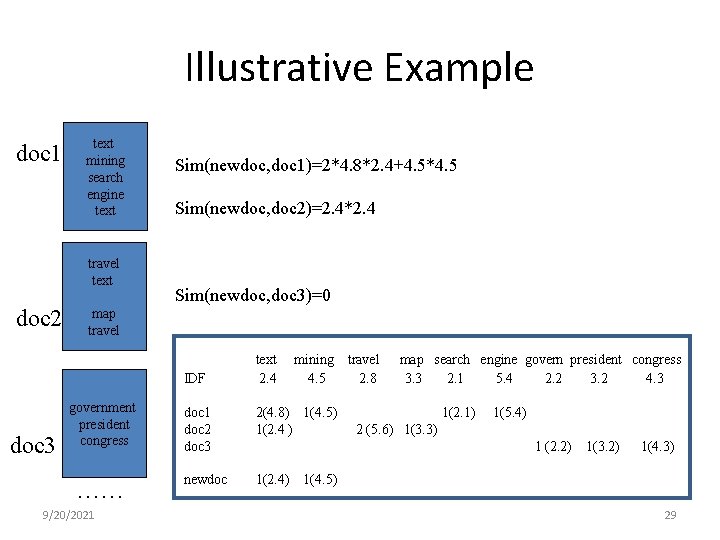

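Illustrative Example

• doc 1: text mining search engine text • doc 2: travel text map travel • doc 3: government president congress ……

| | text | mining | travel | map | search | engine | govern | president | congress |
|---|---|---|---|---|---|---|---|---|---|
| IDF | 2.4 | 4.5 | 2.8 | 3.3 | 2.1 | 5.4 | 2.2 | 3.2 | 4.3 |
| doc 1 | 2 (4.8) | 1 (4.5) | | | 1 (2.1) | 1 (5.4) | | | |
| doc 2 | 1 (2.4) | | 2 (5.6) | 1 (3.3) | | | | | |
| doc 3 | | | | | | | 1 (2.2) | 1 (3.2) | 1 (4.3) |
| newdoc | 1 (2.4) | 1 (4.5) | | | | | | | |

Each cell shows the term count with the TF-IDF weight in parentheses.

• Sim(newdoc, doc 1) = 4.8*2.4 + 4.5*4.5 • Sim(newdoc, doc 2) = 2.4*2.4 • Sim(newdoc, doc 3) = 0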

Web Structure Mining • PageRank (Google ’00) • Clever (IBM ’99)

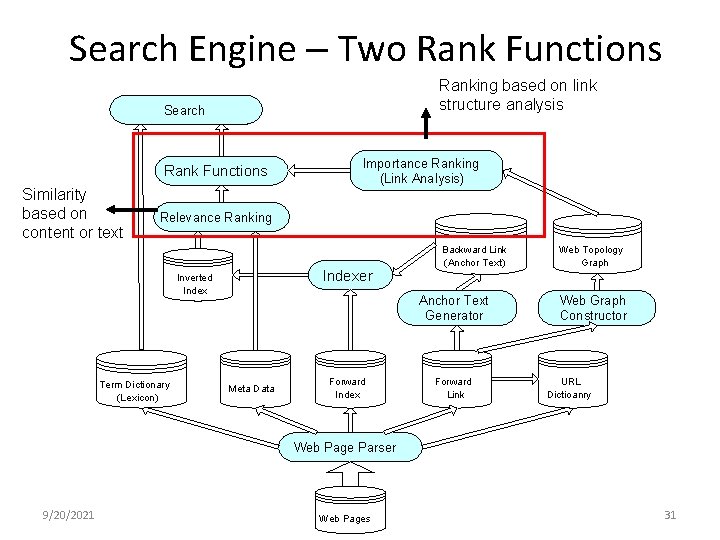

Search Engine – Two Rank Functions • Relevance ranking: similarity based on content or text • Importance ranking (link analysis): ranking based on link structure analysis (Figure: search engine architecture with the components Web Pages, Web Page Parser, URL Dictionary, Forward Index, Meta Data, Anchor Text Generator, Backward Link (Anchor Text), Forward Link, Web Graph Constructor, Web Topology Graph, Indexer, Inverted Index, Term Dictionary (Lexicon), and the two rank functions feeding Search.)

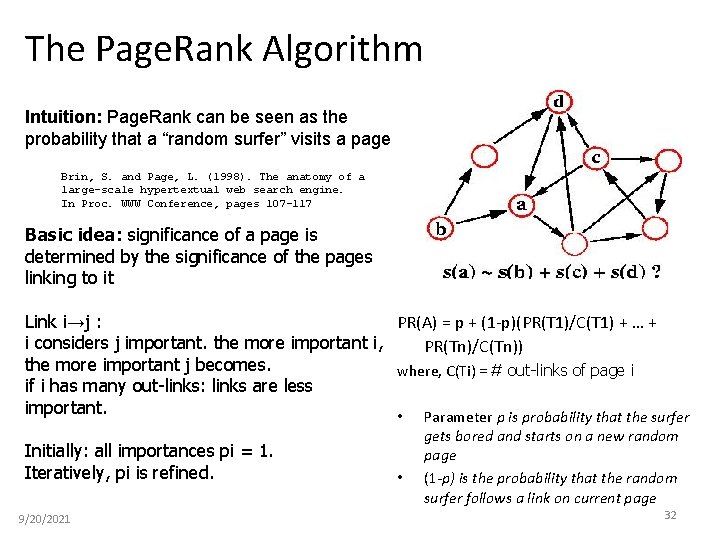

The PageRank Algorithm • Brin, S. and Page, L. (1998). The anatomy of a large-scale hypertextual web search engine. In Proc. WWW Conference, pages 107–117 • Basic idea: the significance of a page is determined by the significance of the pages linking to it • Intuition: PageRank can be seen as the probability that a “random surfer” visits a page • Link i→j: i considers j important; the more important i, the more important j becomes; if i has many out-links, each link is less important • $PR(A) = p + (1 - p)\left(\frac{PR(T_1)}{C(T_1)} + \dots + \frac{PR(T_n)}{C(T_n)}\right)$, where $T_1, \dots, T_n$ are the pages linking to A and $C(T_i)$ is the number of out-links of page $T_i$ • Parameter p is the probability that the surfer gets bored and starts on a new random page; (1 − p) is the probability that the random surfer follows a link on the current page • Initially, all importances pi = 1; iteratively, pi is refined
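The iterative refinement can be sketched directly from the formula PR(A) = p + (1 − p) Σ PR(Ti)/C(Ti). The value p = 0.15, the fixed iteration count, and the three-page graph are assumptions for illustration; the sketch also assumes every page has at least one out-link and that link lists contain no duplicates.

```python
def pagerank(links, p=0.15, iterations=50):
    """Iterate PR(A) = p + (1 - p) * sum(PR(T) / C(T)) over the pages T that link to A."""
    pages = set(links) | {t for targets in links.values() for t in targets}
    pr = {page: 1.0 for page in pages}  # initially all importances are 1
    for _ in range(iterations):
        new_pr = {}
        for page in pages:
            # Each page T passes PR(T) / C(T) along each of its out-links
            incoming = sum(
                pr[src] / len(targets)
                for src, targets in links.items()
                if page in targets
            )
            new_pr[page] = p + (1 - p) * incoming
        pr = new_pr
    return pr


# Hypothetical graph: page -> pages it links to
links = {"A": ["B", "C"], "B": ["C"], "C": ["A"]}
ranks = pagerank(links)
```

In this graph C is linked from both A and B and ends up with the highest rank, while B, which only receives half of A's vote, ends up lowest.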

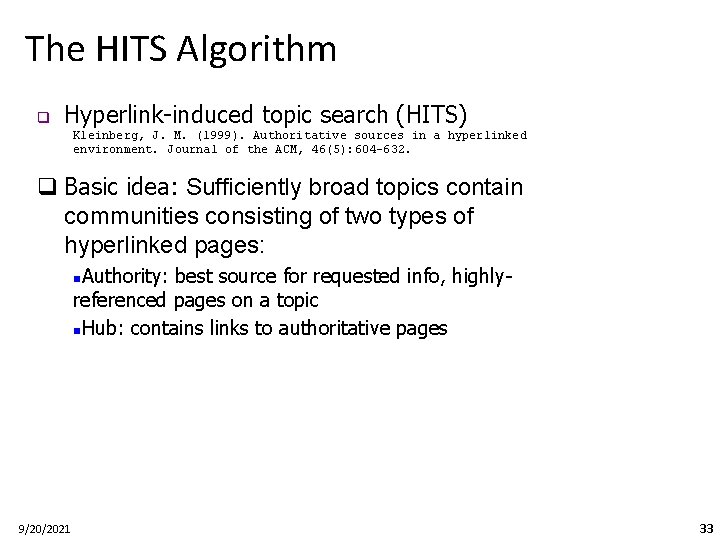

The HITS Algorithm • Hyperlink-induced topic search (HITS): Kleinberg, J. M. (1999). Authoritative sources in a hyperlinked environment. Journal of the ACM, 46(5): 604–632. • Basic idea: Sufficiently broad topics contain communities consisting of two types of hyperlinked pages: – Authority: best source for the requested info, highly referenced pages on a topic – Hub: contains links to authoritative pages

The HITS Algorithm • Collect seed set of pages S (returned by search engine) • Expand seed set to contain pages that point to or are pointed to by pages in seed set (removes links inside a site) • Iteratively update hub weight h(p) and authority weight a(p) for each page: $a(p) = \sum_{q \to p} h(q)$ (sum over all pages q that link to p) and $h(p) = \sum_{p \to q} a(q)$ (sum over all pages q that p links to) • After a fixed number of iterations, pages with highest hub/authority weights form the core of the community
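The hub/authority update can be sketched as follows. The graph is hypothetical, and the normalization after each round is an added assumption (it keeps the weights bounded and does not change the ranking); the slide itself only gives the two update rules.

```python
import math


def hits(links, iterations=20):
    """Return (hub, authority) weights after a fixed number of update rounds."""
    pages = set(links) | {q for targets in links.values() for q in targets}
    hub = {p: 1.0 for p in pages}
    auth = {p: 1.0 for p in pages}
    for _ in range(iterations):
        # a(p) = sum of h(q) over all pages q that link to p
        auth = {p: sum(hub[q] for q, targets in links.items() if p in targets) for p in pages}
        # h(p) = sum of a(q) over all pages q that p links to
        hub = {p: sum(auth[q] for q in links.get(p, [])) for p in pages}
        # Normalize to unit length so the weights do not grow without bound
        a_norm = math.sqrt(sum(v * v for v in auth.values())) or 1.0
        h_norm = math.sqrt(sum(v * v for v in hub.values())) or 1.0
        auth = {p: v / a_norm for p, v in auth.items()}
        hub = {p: v / h_norm for p, v in hub.items()}
    return hub, auth


# Hypothetical expanded seed set: page -> pages it links to
links = {"h1": ["a1", "a2"], "h2": ["a1"], "a1": [], "a2": []}
hub, auth = hits(links)
```

Page a1 is pointed to by both hubs and gets the top authority weight; h1 points to both authorities and gets the top hub weight.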

Problems with Web Search Today • Today’s search engines are plagued by problems: – the abundance problem (99% of info of no interest to 99% of people) – limited coverage of the Web (internet sources hidden behind search interfaces); the largest crawlers cover < 18% of all web pages – limited query interface based on keyword-oriented search – limited customization to individual users

Problems with Web Search Today • Today’s search engines are plagued by problems: – Web is highly dynamic • Many pages are added, removed, and updated every day – Very high dimensionality

Web Usage Mining • Pages contain information • Links are ‘roads’ • How do people navigate the Internet – Web Usage Mining (clickstream analysis) • Information on navigation paths available in log files • Logs can be mined from a client or a server perspective

Website Usage Analysis • Why analyze Website usage? • Knowledge about how visitors use a Website could – Provide guidelines for web site reorganization and help prevent disorientation – Help designers place important information where the visitors look for it – Support pre-fetching and caching of web pages – Provide an adaptive Website (personalization) • Questions which could be answered – What are the differences in usage and access patterns among users? – Which user behaviors change over time? – How do usage patterns change with quality of service (slow/fast)? – What is the distribution of network traffic over time?

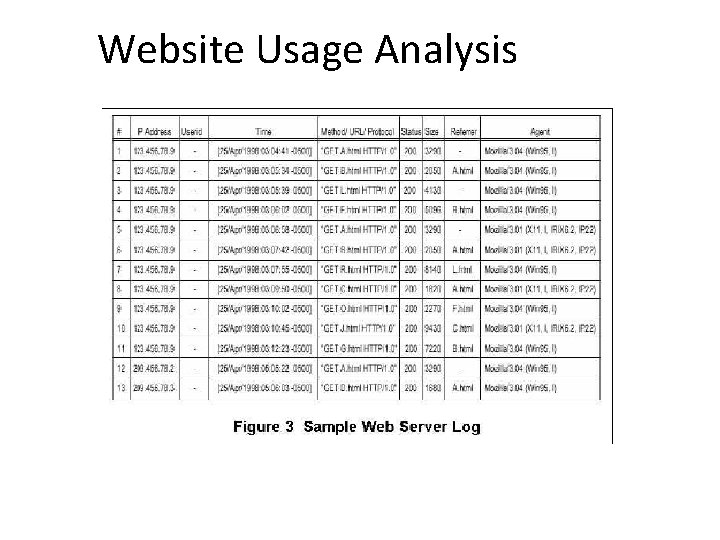

Website Usage Analysis

Website Usage Analysis • There are analysis services such as Analog (http://www.analog.cx/) and Google Analytics • They give basic statistics such as – number of hits – average hits per time period – what are the popular pages in your site – who is visiting your site – what keywords users are searching for to get to you – what is being downloaded

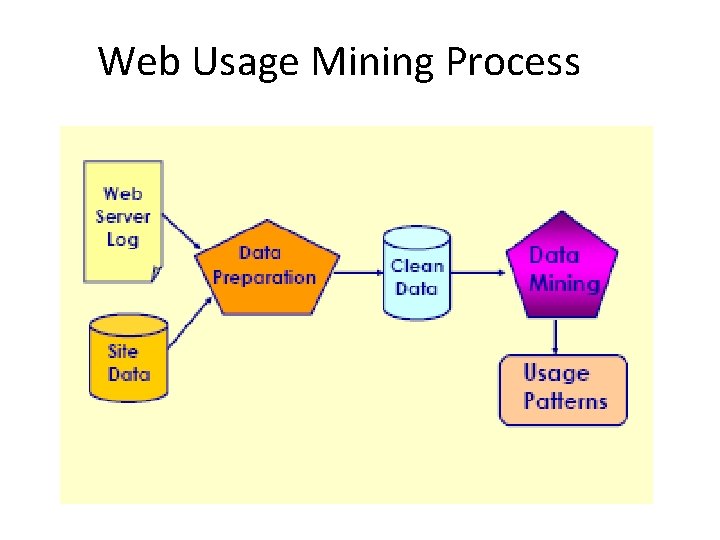

Web Usage Mining Process

Data Preparation • Data cleaning – Check the suffix of the URL name: for example, all log entries with filename suffixes such as gif, jpeg, etc. can be removed • User identification – If a page is requested that is not directly linked to the previous pages, multiple users are assumed to exist on the same machine – Other heuristics involve using a combination of IP address, machine name, browser agent, and temporal information to identify users • Transaction identification – A transaction is all of the page references made by a user during a single visit to a site – The size of a transaction can range from a single page reference to all of the page references

Sessionizing • Main questions: – how to identify unique users – how to identify/define a user transaction • Problems: – user ids are often suppressed due to security concerns – individual IP addresses are sometimes hidden behind proxy servers – client-side and proxy caching makes server log data less reliable • Standard solutions/practices: – user registration (practical?) – client-side cookies (not foolproof) – cache busting (increases network traffic)

Sessionizing • Time oriented • By total duration of session not more than 30 minutes • By page stay times (good for short sessions) not more than 10 minutes per page • Navigation oriented (good for short sessions and when timestamps unreliable) • Referrer is previous page in session, or • Referrer is undefined but request within 10 secs, or • Link from previous to current page in web site
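The time-oriented heuristics can be sketched as below. Applying both thresholds together (30 minutes total, 10 minutes per page) is an assumption of this example; the slide lists them as separate options. The request log is made up and is assumed to belong to a single, already identified user.

```python
def sessionize(requests, max_session=30 * 60, max_page_stay=10 * 60):
    """Split one user's (timestamp_seconds, url) requests into sessions.

    A new session starts when the gap since the previous request exceeds
    max_page_stay, or when the session would last longer than max_session.
    """
    sessions = []
    current = []
    for ts, url in sorted(requests):
        if current and (
            ts - current[-1][0] > max_page_stay  # stayed too long on the last page
            or ts - current[0][0] > max_session  # session would exceed total duration
        ):
            sessions.append(current)
            current = []
        current.append((ts, url))
    if current:
        sessions.append(current)
    return sessions


# Hypothetical log entries for one user: (seconds since first request, URL)
requests = [(0, "/home"), (120, "/products"), (500, "/cart"), (2000, "/home"), (2100, "/help")]
sessions = sessionize(requests)
```

The 1500-second gap between `/cart` and the second `/home` exceeds the page-stay limit, so the log is split into two sessions.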

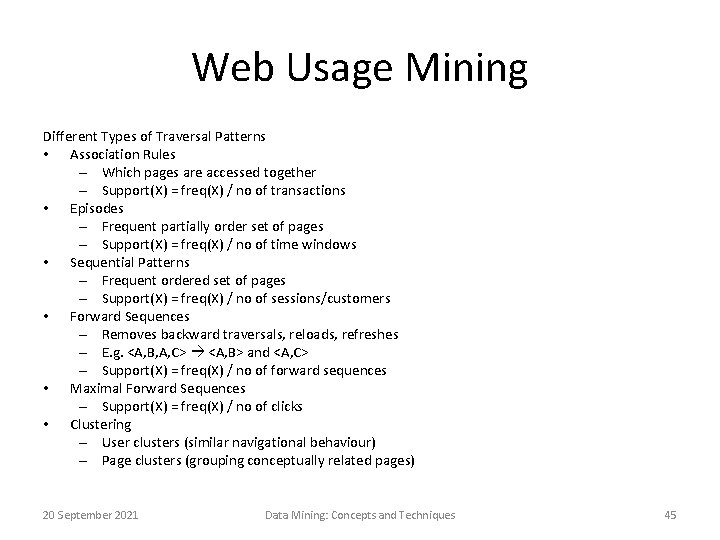

Web Usage Mining: Different Types of Traversal Patterns • Association rules – Which pages are accessed together – Support(X) = freq(X) / no. of transactions • Episodes – Frequent partially ordered set of pages – Support(X) = freq(X) / no. of time windows • Sequential patterns – Frequent ordered set of pages – Support(X) = freq(X) / no. of sessions/customers • Forward sequences – Removes backward traversals, reloads, refreshes – E.g. <A, B, A, C> => <A, B> and <A, C> – Support(X) = freq(X) / no. of forward sequences • Maximal forward sequences – Support(X) = freq(X) / no. of clicks • Clustering – User clusters (similar navigational behaviour) – Page clusters (grouping conceptually related pages)
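Two of these ideas are sketched below: support of a page set over transactions, and splitting a click path into forward sequences by cutting at backward traversals. The function names and sample data are hypothetical, and the splitting rule is one plausible reading of "removes backward traversals, reloads, refreshes".

```python
def support(pages, transactions):
    """Support(X) = number of transactions containing all pages in X / number of transactions."""
    pages = set(pages)
    return sum(pages <= set(t) for t in transactions) / len(transactions)


def forward_sequences(path):
    """Split a click path into forward sequences, cutting whenever the user goes back."""
    result = []
    current = []
    moving_forward = False  # True while the most recent click visited a new page
    for page in path:
        if page in current:
            # Backward traversal or reload: emit the forward path reached so far
            if moving_forward:
                result.append(list(current))
            current = current[: current.index(page) + 1]
            moving_forward = False
        else:
            current.append(page)
            moving_forward = True
    if moving_forward:
        result.append(list(current))
    return result


# Hypothetical transactions (sets of pages visited together)
transactions = [["A", "B"], ["A", "C"], ["A", "B", "C"], ["B"]]
```

For the path from the slide, `forward_sequences(["A", "B", "A", "C"])` yields the two sequences <A, B> and <A, C>.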

Recommender Systems

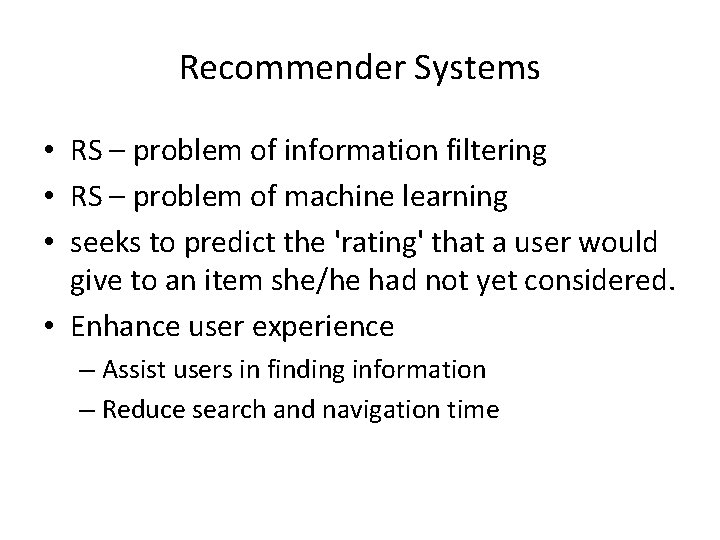

Recommender Systems • RS – problem of information filtering • RS – problem of machine learning • seeks to predict the 'rating' that a user would give to an item she/he had not yet considered. • Enhance user experience – Assist users in finding information – Reduce search and navigation time

Types of RS Three broad types: 1. Content based RS 2. Collaborative RS 3. Hybrid RS

Types of RS – Content based RS highlights – Recommend items similar to those users preferred in the past – User profiling is the key – Items/content usually denoted by keywords – Matching “user preferences” with “item characteristics” … works for textual information – Vector Space Model widely used

Types of RS – Content based RS - Limitations – Not all content is well represented by keywords, e.g. images – Items represented by the same set of features are indistinguishable – Users with thousands of purchases are a problem – New user: No history available

Types of RS – Collaborative RS highlights – Use other users’ recommendations (ratings) to judge an item’s utility – Key is to find users/user groups whose interests match those of the current user – Vector Space Model widely used (directions of vectors are user-specified ratings) – More users, more ratings: better results – Can account for items dissimilar to the ones seen in the past too – Example: Movielens.org

Types of Collaborative Filtering • User-based collaborative filtering • Item-based collaborative filtering

User-based Collaborative Filtering • Idea: People who agreed in the past are likely to agree again • To predict a user’s opinion for an item, use the opinion of similar users • Similarity between users is decided by looking at their overlap in opinions for other items

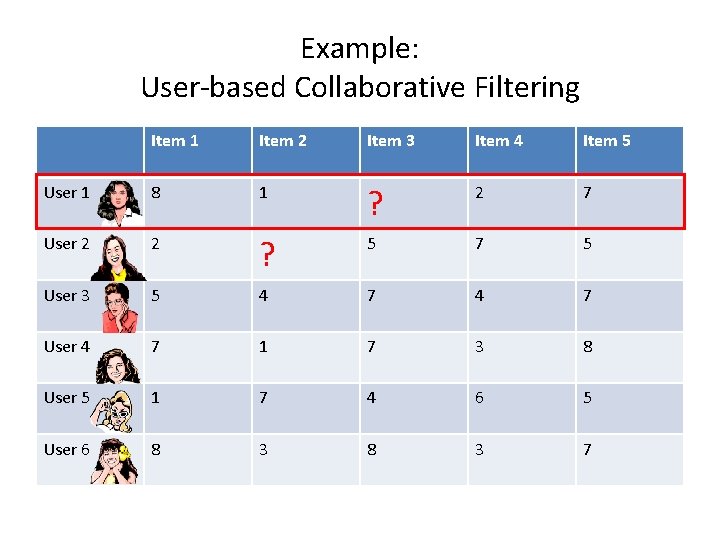

Example: User-based Collaborative Filtering Item 1 Item 2 Item 3 Item 4 Item 5 User 1 8 1 ? 2 7 User 2 2 ? 5 7 5 User 3 5 4 7 User 4 7 1 7 3 8 User 5 1 7 4 6 5 User 6 8 3 7

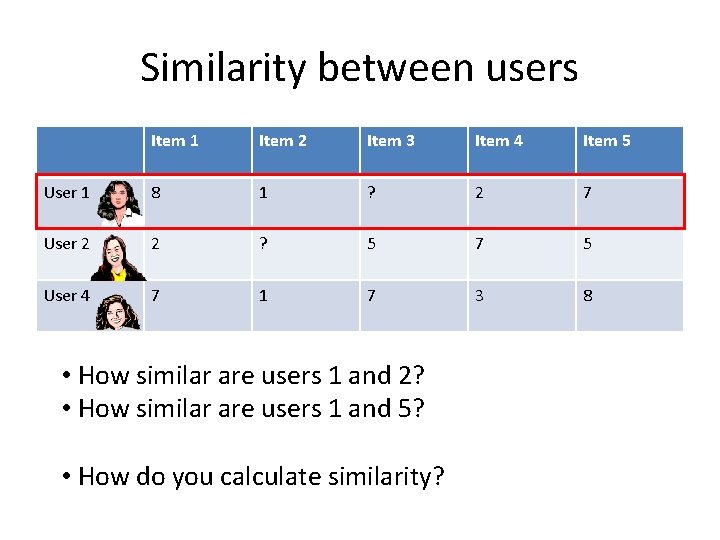

Similarity between users

| | Item 1 | Item 2 | Item 3 | Item 4 | Item 5 |
|---|---|---|---|---|---|
| User 1 | 8 | 1 | ? | 2 | 7 |
| User 2 | 2 | ? | 5 | 7 | 5 |
| User 4 | 7 | 1 | 7 | 3 | 8 |

• How similar are users 1 and 2? • How similar are users 1 and 5? • How do you calculate similarity?

Similarity between users: simple way

| | Item 1 | Item 2 | Item 3 | Item 4 | Item 5 |
|---|---|---|---|---|---|
| User 1 | 8 | 1 | ? | 2 | 7 |
| User 2 | 2 | ? | 5 | 7 | 5 |

• Only consider items both users have rated • For each item: calculate the difference in the users’ ratings • Take the average of this difference over the items: average, over items j rated by both User 1 and User 2, of | rating(User 1, Item j) – rating(User 2, Item j) |
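The "simple way" can be checked on the two rows shown on this slide. In the sketch below unrated items ("?") are simply left out of each user's dictionary; the function name is made up. Note that the result is a difference, so a smaller value means the users are more alike.

```python
def mean_abs_difference(ratings_a, ratings_b):
    """Average absolute rating difference over the items both users have rated."""
    common = [item for item in ratings_a if item in ratings_b]
    if not common:
        return None  # no overlap: similarity is undefined
    return sum(abs(ratings_a[i] - ratings_b[i]) for i in common) / len(common)


# Rows for User 1 and User 2 from the slide (unrated items omitted)
user1 = {"Item 1": 8, "Item 2": 1, "Item 4": 2, "Item 5": 7}
user2 = {"Item 1": 2, "Item 3": 5, "Item 4": 7, "Item 5": 5}
d12 = mean_abs_difference(user1, user2)
```

The common items are Items 1, 4 and 5, giving (|8 − 2| + |2 − 7| + |7 − 5|) / 3 = 13/3 ≈ 4.33.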

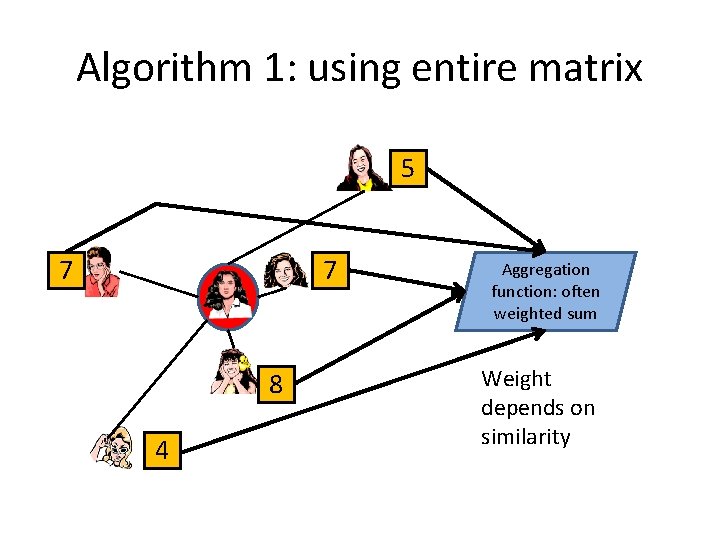

Algorithm 1: using entire matrix • Aggregation function: often weighted sum • Weight depends on similarity

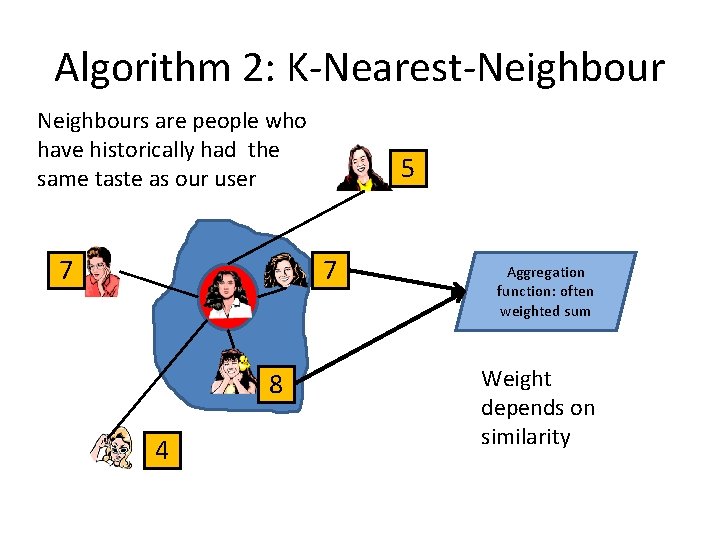

Algorithm 2: K-Nearest-Neighbours • Neighbours are people who have historically had the same taste as our user • Aggregation function: often weighted sum • Weight depends on similarity
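A weighted-sum prediction over the K nearest neighbours might look like the sketch below. The weight 1 / (1 + distance) is an assumption chosen for the example (the slides only say the weight depends on similarity), and only the users whose rows are complete in the example matrix (Users 1, 2, 4 and 5) are included.

```python
def distance(a, b):
    """Average absolute rating difference over items rated by both users."""
    common = [i for i in a if i in b]
    return sum(abs(a[i] - b[i]) for i in common) / len(common) if common else float("inf")


def predict_knn(ratings, user, item, k=2):
    """Weighted average of the item's ratings from the k users closest to `user`."""
    neighbours = [
        (distance(ratings[user], r), u)
        for u, r in ratings.items()
        if u != user and item in r
    ]
    nearest = sorted(neighbours)[:k]
    weights = [(1.0 / (1.0 + d), u) for d, u in nearest]  # closer users weigh more
    total = sum(w for w, _ in weights)
    if total == 0:
        return None
    return sum(w * ratings[u][item] for w, u in weights) / total


# Ratings from the example matrix: user -> {item number: rating}
ratings = {
    "User 1": {1: 8, 2: 1, 4: 2, 5: 7},
    "User 2": {1: 2, 3: 5, 4: 7, 5: 5},
    "User 4": {1: 7, 2: 1, 3: 7, 4: 3, 5: 8},
    "User 5": {1: 1, 2: 7, 3: 4, 4: 6, 5: 5},
}
prediction = predict_knn(ratings, "User 1", 3, k=2)
```

User 4 is by far the closest neighbour of User 1, so the prediction for Item 3 lands near User 4's rating of 7. Setting `k` to the number of other users gives the "entire matrix" variant of Algorithm 1.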

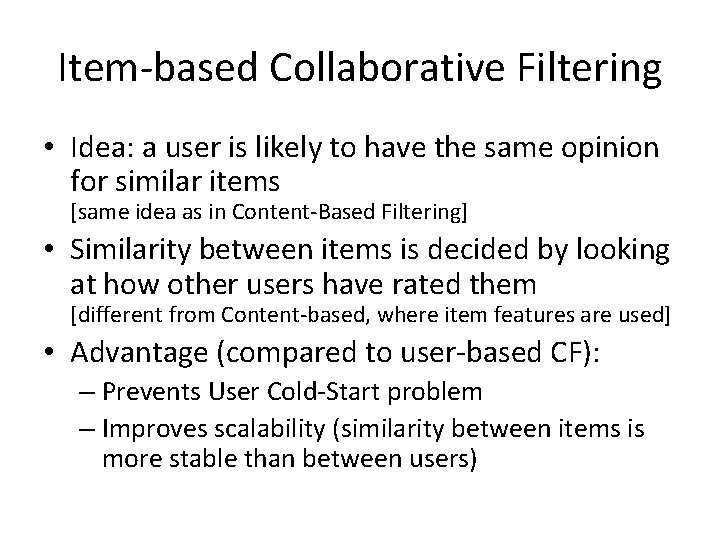

Item-based Collaborative Filtering • Idea: a user is likely to have the same opinion for similar items [same idea as in Content-Based Filtering] • Similarity between items is decided by looking at how other users have rated them [different from Content-based, where item features are used] • Advantage (compared to user-based CF): – Prevents User Cold-Start problem – Improves scalability (similarity between items is more stable than between users)

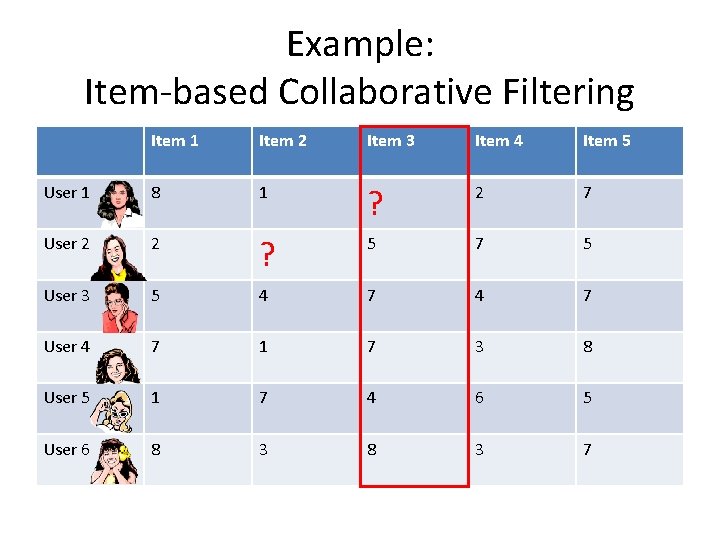

Example: Item-based Collaborative Filtering Item 1 Item 2 Item 3 Item 4 Item 5 User 1 8 1 ? 2 7 User 2 2 ? 5 7 5 User 3 5 4 7 User 4 7 1 7 3 8 User 5 1 7 4 6 5 User 6 8 3 7

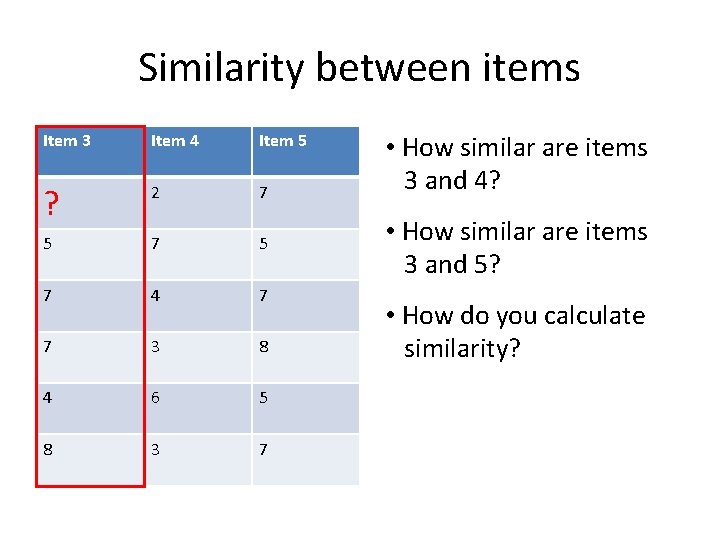

Similarity between items Item 3 Item 4 Item 5 ? 2 7 5 7 4 7 7 3 8 4 6 5 8 3 7 • How similar are items 3 and 4? • How similar are items 3 and 5? • How do you calculate similarity?

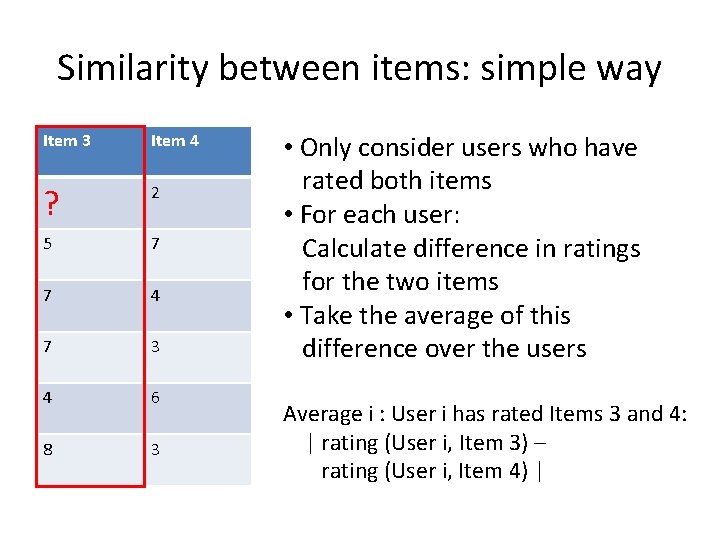

Similarity between items: simple way Item 3 Item 4 ? 2 5 7 7 4 7 3 4 6 8 3 • Only consider users who have rated both items • For each user: Calculate difference in ratings for the two items • Take the average of this difference over the users Average i : User i has rated Items 3 and 4: | rating (User i, Item 3) – rating (User i, Item 4) |

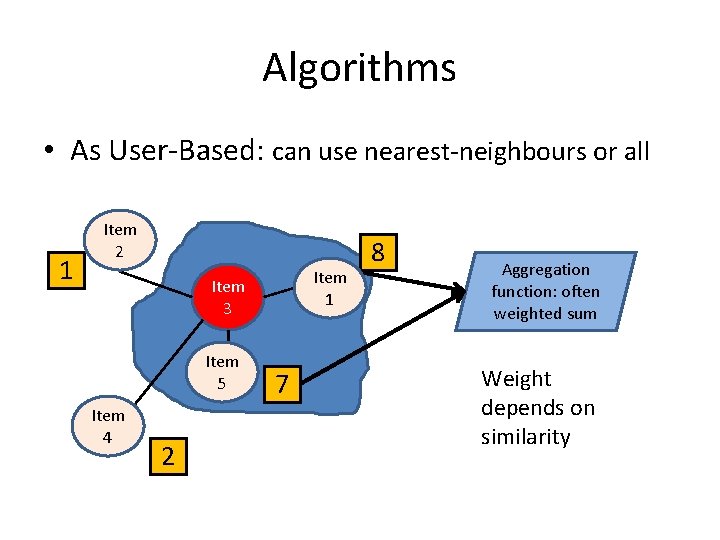

Algorithms • As in user-based CF: can use nearest neighbours or the entire matrix • Aggregation function: often weighted sum • Weight depends on similarity

Types of RS – Collaborative RS - Limitations – Different users might use different scales. Possible solution: weighted ratings, i.e. deviations from average rating – Finding similar users/user groups isn’t very easy – New user: No preferences available (user cold start problem) – New item: No ratings available (item cold start problem) – Demographic filtering is required
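The "deviations from average rating" idea can be illustrated with a short sketch. The two users and their ratings are invented for the example, and each user is assumed to have rated at least one item.

```python
def mean_center(ratings):
    """Replace each rating by its deviation from that user's own average rating."""
    centered = {}
    for user, items in ratings.items():
        mean = sum(items.values()) / len(items)
        centered[user] = {item: r - mean for item, r in items.items()}
    return centered


# Hypothetical users with the same preferences but different rating scales
ratings = {
    "generous": {"x": 9, "y": 10, "z": 8},
    "strict": {"x": 4, "y": 5, "z": 3},
}
centered = mean_center(ratings)
```

After centering, both users have identical deviation vectors, so a similarity measure computed on the deviations treats them as having the same taste.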

Some ways to make a Hybrid RS • Weighted. Ratings of several recommendation techniques are combined together to produce a single recommendation • Switching. The system switches between recommendation techniques depending on the current situation • Mixed. Recommendations from several different recommenders are presented simultaneously (e.g. Amazon) • Cascade. One recommender refines the recommendations given by another

Model-based collaborative filtering • Instead of using ratings directly, develop a model of user ratings • Use the model to predict ratings for new items • To build the model: – Bayesian network (probabilistic) – Clustering (classification) – Rule-based approaches (e.g., association rules between co-purchased items)

Model-based collaborative filtering: Cluster Models – Create clusters or groups – Put a customer into a category – Classification simplifies the task of user matching – Better scalability and performance – Lower accuracy than the standard collaborative filtering method

Possible Improvement in RS • Better understanding of users and items – Social network (social RS) 1. User level – Highlighting interests, hobbies, and keywords people have in common 2. Item level – Link the keywords to e-commerce
