Web Application Firewall Evaluation with DevOps SDLC

Web Application Firewall Evaluation with DevOps, SDLC and the New OWASP Core Rule Set

`su chaim`
Chaim Sanders
Current Roles
• Project Leader of the OWASP Core Rule Set
• ModSecurity Core Developer
• Security Researcher at Trustwave SpiderLabs
• Lecturer at Rochester Institute of Technology (RIT)
Previous Roles
• Commercial Consultant
• Governmental Consultant

Why the web? Why OWASP?
• We are going to focus on HTTP(S) today
• Special care needs to be taken here
• According to CAIDA, the web is ~85% of internet traffic

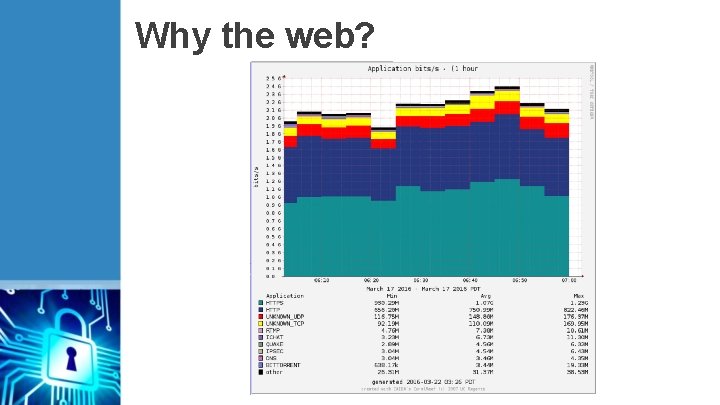

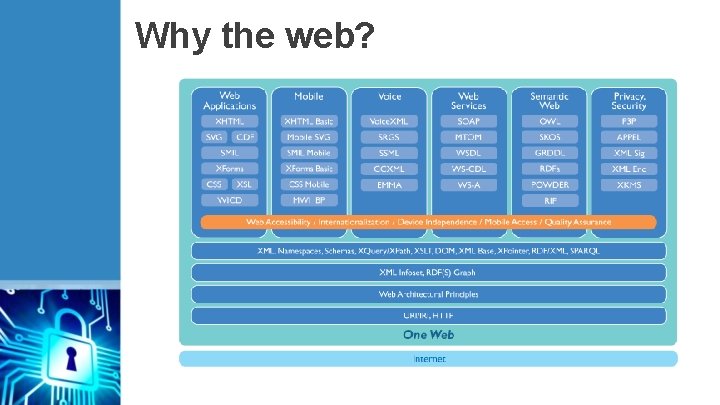


Why the web? Why OWASP?
• We are going to focus on HTTP(S) today
• Special care needs to be taken here
• According to CAIDA, the web is ~85% of internet traffic
• As a result, web architecture is complicated
• It also means complicated attacks are acceptable
  • Attacks that will only work on 0.01% of users are valuable
• Diversity of attacks is high as well
  • Attacker on server / Attacker on client
  • Attacker on client via server
  • Attacker on server via server
  • Attacker on intermediary

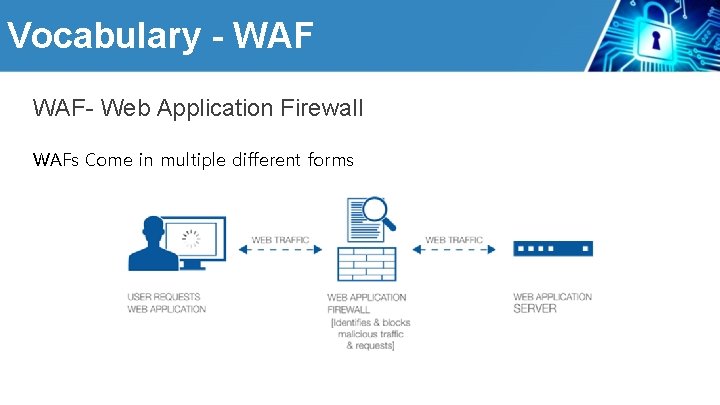


Vocabulary - WAF - Web Application Firewall
WAFs come in multiple different forms
• Can be placed in several places on the network
  • Inline
  • Out-of-line
  • On the web server
• Different technologies
  • Signatures
  • Heuristics
• Often driven by PCI requirements, as it’s an approved security control
What is the difference between an IDS and a WAF?

Vocabulary - ModSecurity
An Open Source Web Application Firewall
• Probably the most popular WAF
• Designed in 2002
• Currently on version 2.9.1 with version 3.0 in the works
• Designed to be open and supports the OWASP Core Rule Set
  • First developed in 2009
  • An OWASP project meant to provide free generic rules to ModSecurity users

Problem Statement
WAF - Web Application Firewall
How do you evaluate security software? More specifically, how does one evaluate a Web Application Firewall?
• Speed
• Effectiveness
• Support
• Documentation
• Market Share
We need to address this from a developer perspective, a client perspective, and a purchaser perspective.

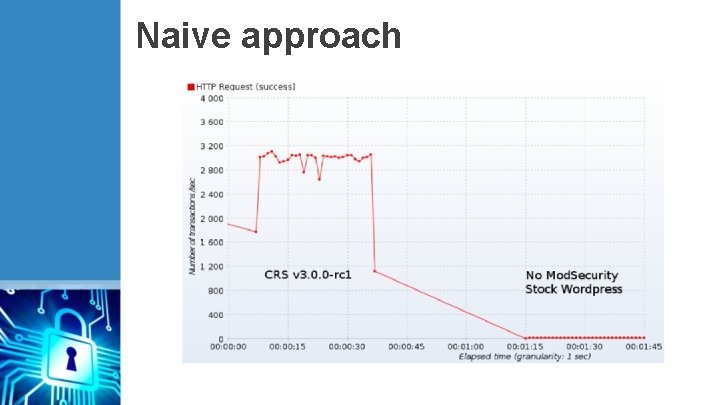

Naive approach
The first thing people will ask: What is your performance?
• Due to the difference in types of web applications there are different requirements
• Per-request speed differences
  • A request to upload a file vs. a GET
• Per-protocol speed differences
  • A request that is processing XML
• Number of concurrent requests
• Resource availability
• Number and effectiveness of defenses in place

Naive approach

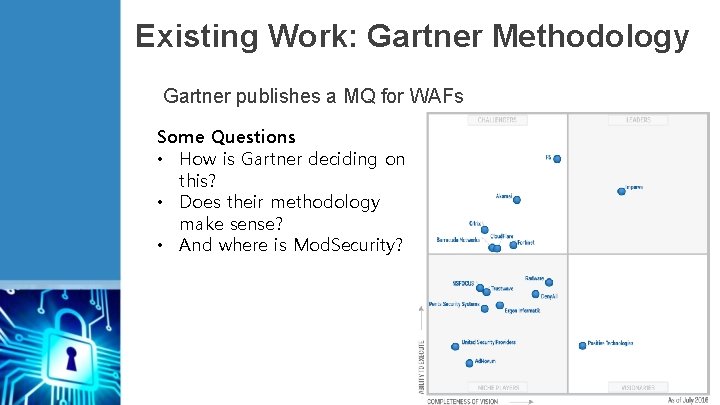

Existing Work: Gartner Methodology
Gartner publishes a Magic Quadrant (MQ) for WAFs
Some questions
• How is Gartner deciding on this?
• Does their methodology make sense?
• And where is ModSecurity?

Gartner Inclusion Requirements
1. Their offerings can protect applications running on different types of Web servers.
2. Their WAF technology is known to be approved by qualified security assessors as a solution for PCI DSS Requirement 6.6.
3. They provide physical, virtual or software appliances, or cloud instances.
4. Their WAFs were generally available as of 1 January 2015.
5. Their WAFs demonstrate features/scale relevant to enterprise-class organizations.
6. They have achieved $6 million in revenue from the sales of WAF technology.
7. Gartner has determined that they are significant players in the market due to market presence or technology innovation.
8. Gartner analysts assess that the vendor's WAF technology provides more than a repackaged ModSecurity engine and signatures.

Problem Statement
PCI DSS 6.6: Gartner’s Choice
Gartner’s basis for its ‘is this security thing working’ test is PCI
• PCI outlines a lot of “recommended capabilities”
• The important one for us is recommendation #2:
  “React appropriately (defined by active policy or rules) to threats against relevant vulnerabilities as identified, at a minimum, in the OWASP Top Ten and/or PCI DSS Requirement 6.5.”
• Really this boils down to: the QSA gets to decide
Perhaps there is a more authoritative methodology…

Existing Work: WAFEC
Web Application Firewall Evaluation Criteria 1.0
• Presented as a yes/no spreadsheet for evaluating WAFs
• Focuses on 9 feature areas:
  1. Deployment Architecture
  2. HTTP and HTML Support
  3. Detection Techniques
  4. Protection Techniques
  5. Logging
  6. Reporting
  7. Management
  8. Performance
  9. XML

WAFEC and “Security”
What does WAFEC say about evaluating security?
Let's say the criterion is ‘Protects from SQL injection attacks’
• How do I evaluate the effectiveness of this criterion?
  • Right now it’s just yes/no
• How do I generate the weight for the importance of this?
  • What about in non-SQL environments?
• As a result of the goal, and this problem, typically there isn’t any information beyond yes/no
• More importantly, none of these responses are published :(

A purchasing problem
Buyer’s remorse
Sure, knowing features is important, but do we have evidence of effectiveness?
• How do we test WAF effectiveness?
  • Perhaps there are individual features that are weak
• Effectiveness seems like a helpful metric
  • Not all WAFs can be equally good
  • This will help architects know the WAF they picked works in their environment
• If something is wrong can we track it?
  • We’ve all met product dev teams
  • Can we force them to get better?

Our Problems (Recap)
OWASP CRS is just like everyone else
• For years our team has struggled with effectiveness metrics
• People would ask us for performance, we’d explain…
• The last straw came when we were asked to benchmark against another WAF
  • Not only were we faster…
  • but the tests were useless
Maybe performance isn’t everything (if it is we’re screwed)
Maybe effectiveness is a better metric, but it’s harder
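One way to make "effectiveness" concrete is to score a WAF on two numbers: how many attacks it blocks and how many benign requests it blocks by mistake. The sketch below is only an illustration of that idea, not the CRS team's actual metric; the `Outcome` class, the `effectiveness` function, and the sample counts are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Outcome:
    """One request sent through the WAF during an evaluation run (hypothetical)."""
    malicious: bool  # ground truth: was the request an attack?
    blocked: bool    # observed: did the WAF block it?


def effectiveness(outcomes):
    """Return (detection_rate, false_positive_rate) for a list of outcomes."""
    attacks = [o for o in outcomes if o.malicious]
    benign = [o for o in outcomes if not o.malicious]
    detection_rate = sum(o.blocked for o in attacks) / len(attacks) if attacks else 0.0
    false_positive_rate = sum(o.blocked for o in benign) / len(benign) if benign else 0.0
    return detection_rate, false_positive_rate


# Made-up results: 3 of 4 attacks blocked, 1 of 5 benign requests blocked.
sample = (
    [Outcome(True, True)] * 3 + [Outcome(True, False)]
    + [Outcome(False, False)] * 4 + [Outcome(False, True)]
)
detection, false_positives = effectiveness(sample)
print(f"detection rate: {detection:.2f}, false positive rate: {false_positives:.2f}")
```

Unlike a yes/no feature checkbox, both numbers can be tracked over time and compared between rule set versions.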

A New Dawn
The OWASP CRS v3.0.0 project
Over the past few years we’ve been revamping the Core Rule Set. This includes:
• Utilization of new ModSecurity features, such as libinjection
• A new, revamped paranoia level that promises to reduce false positives
• Brand new defenses that make CRS more effective than ever
• Effectiveness improvements to many existing rule areas
• More documentation and a new, more intuitive, file layout
• Mitigations for many common false positives
• Additional configuration options, including a sampling mode
• Additional testing and support scripts
• Default of anomaly based protection
Each one of these bullets could easily be its own talk, but today let's talk about how we ‘test’ WAF effectiveness

CRS as a base
Pro v Con
OWASP CRS is just like everyone else
• ModSecurity is designed to be extremely configurable and neutral
  • It doesn’t ship with a ruleset at all
• CRS is in a unique position of being a separate project from ModSecurity
  • In fact other WAFs use it (both outwardly and not so much -_-)
• But we also have unique problems
  • Lots of developers, it’s open source
  • Our own language (many are compatible with it)
  • We run in a lot of environments
    • Windows, Linux, AIX, Mac OS, Apache, Nginx, IIS
So how do we properly ‘test’ in such an environment?

Our Approach
The OWASP CRS v3.0.0 project
We felt WAFEC provided a good base, but we needed two things
• Regression tests
• And well… regression tests
We laid out two stories
• We wanted to know if there was a regression for a rule
  • Imagine someone changing a rule
• Or if there was a regression for the system as a whole
  • Imagine making sure all known XSS is blocked
Both of these were going to require making HTTP requests

Designing your own wheel
I’m generally a huge proponent of reuse, if possible
Step 1) As a project decide on a common testing language
Step 2) Find existing projects that might fit your need (look at existing wheels)
Step 3) Evaluate if they meet your goals (see if the wheels fit)
Step 4) Inevitably recreate some wheels (rebuild wheels)
Step 5) Profit???
These are exactly the steps we went through

Deciding on a language
Python and why we love it
• OWASP CRS had a disadvantage: it has its own language
• ModSecurity is written in C/C++ and so are a lot of the tools
  • Turns out people generally don’t really love C projects
• The choice came down to Python, Ruby, and Go
• As a group we chose Python
  • It’s easy
  • Most people know it
• One problem: our existing tools were in various languages, particularly our unit testing, which people had stopped using

Find existing projects
Current Unit Testing
• People had stopped using it because:
  • It was hard to use
  • It was hard to write tests for
  • It was written in Perl
  • It wasn’t required
  • It didn’t integrate well
• It did however make HTTP requests, but it wasn’t very modular
• Some other libraries also had the same problem
  • Things like Python Requests and PyCurl weren’t sufficient

Evaluate if they meet your goal
Why not Curl or Requests?
• It turns out the things we need to test are frequently straight up against spec
  • “EHELO mymailserver.test.com”
• Tools like PyCurl and Requests don’t even like to let you do things like change the version
• We needed more flexibility
We were able to leverage many existing tools though
• PyYAML, Pytest, etc.
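To see why a well-behaved HTTP client gets in the way, here is a minimal sketch of sending a deliberately off-spec request with nothing but the standard library `socket` module. This is not FTW's code; `build_raw_request` and `send_raw` are hypothetical helper names, and the host and port in the commented-out call assume a test server you control.

```python
import socket


def build_raw_request(method="GET", uri="/", version="HTTP/1.1", headers=None, body=""):
    """Render an HTTP request exactly as given, with no validation at all."""
    headers = dict(headers or {})
    if body:
        headers.setdefault("Content-Length", str(len(body.encode("utf-8"))))
    lines = [f"{method} {uri} {version}"]
    lines += [f"{name}: {value}" for name, value in headers.items()]
    return ("\r\n".join(lines) + "\r\n\r\n" + body).encode("utf-8")


def send_raw(host, port, payload, timeout=5.0):
    """Send raw bytes over TCP and return whatever the server answers."""
    with socket.create_connection((host, port), timeout=timeout) as conn:
        conn.sendall(payload)
        chunks = []
        while True:
            try:
                data = conn.recv(4096)
            except socket.timeout:
                break  # server kept the connection open; stop waiting
            if not data:
                break  # server closed the connection
            chunks.append(data)
    return b"".join(chunks)


# A protocol version that a spec-abiding client would refuse to emit.
payload = build_raw_request(version="HTTP/9.9", headers={"Host": "localhost"})
print(payload)
# send_raw("localhost", 80, payload)  # assumption: a local test server is listening
```

Because the request line is assembled as plain text, the method, URI, and version can each be malformed on purpose, which is exactly the kind of input a WAF needs to be tested against.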

Inevitably recreate the wheel
Our Framework for Testing WAFs (FTW)
The Framework for Testing WAFs: a solution when your test cases involve things that SHOULD never happen.
• A modular HTTP request generator
• Designed to be user friendly and easy (YAML tests)
• Designed for multiple WAF support
• Available as a Python pip module (we got ftw, kinda neat)
• We want to integrate with existing best practices in development
• Open Source
https://github.com/fastly/ftw
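The idea of describing tests as data rather than code can be sketched roughly as follows. The dictionary keys here are illustrative and are not FTW's actual YAML schema; `run_case`, `parse_status`, and `fake_waf` are hypothetical names, and `fake_waf` only stands in for a real WAF so the example runs without a network.

```python
# Hypothetical test description; the keys are illustrative, not FTW's schema.
TEST_CASE = {
    "title": "invalid-http-version",
    "input": {"method": "GET", "uri": "/", "version": "HTTP/9.9",
              "headers": {"Host": "localhost"}},
    "expect": {"status": 403},
}


def parse_status(response: bytes) -> int:
    """Extract the status code from the first line of a raw HTTP response."""
    status_line = response.split(b"\r\n", 1)[0].decode("ascii", "replace")
    parts = status_line.split(" ")
    return int(parts[1]) if len(parts) > 1 and parts[1].isdigit() else 0


def run_case(case, send):
    """Run one case; `send` takes the input dict and returns raw response bytes."""
    response = send(case["input"])
    return parse_status(response) == case["expect"]["status"]


def fake_waf(request):
    """Stand-in for a WAF that rejects anything but HTTP/1.0 and HTTP/1.1."""
    if request["version"] not in ("HTTP/1.0", "HTTP/1.1"):
        return b"HTTP/1.1 403 Forbidden\r\n\r\n"
    return b"HTTP/1.1 200 OK\r\n\r\n"


print(TEST_CASE["title"], "passed" if run_case(TEST_CASE, fake_waf) else "failed")
```

Keeping the sender pluggable is what would let the same test description run against more than one WAF.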

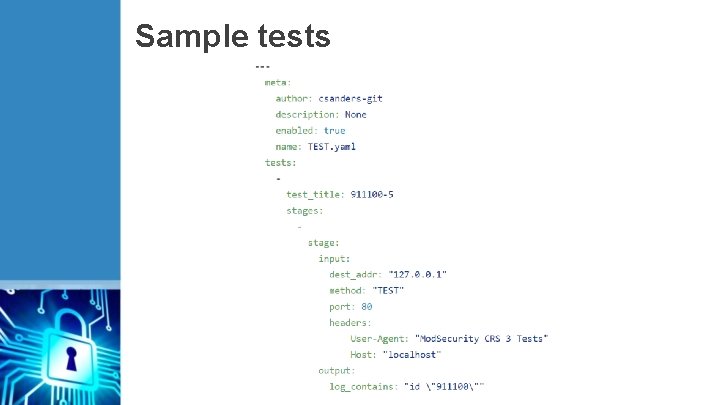

Sample tests

Integration with OWASP CRS
Keep it separated!
While we had FTW and it reached its 1.0 milestone, we still needed actual tests
• We created a new repo to contain those and used git submodules to bring it into CRS’s master repo
• Some tests are targeted, using the ModSecurity v2 plugin to trigger a rule (type 1)
• Other tests are just giant lists of exploits separated into categories (type 2)
https://github.com/SpiderLabs/OWASP-CRS-regressions
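The two test types answer different questions, which a small sketch can make concrete. This is not the code in the regressions repo: `rule_triggered` and `blocked_fraction` are hypothetical helpers, the log line is a made-up sample in the style of a ModSecurity v2 log entry, and `naive_filter` is a toy stand-in for a WAF.

```python
import re


def rule_triggered(log_text, rule_id):
    """Type 1: did a specific rule ID appear in the WAF's log output?"""
    return re.search(rf'\[id "{rule_id}"\]', log_text) is not None


def blocked_fraction(payloads, is_blocked):
    """Type 2: what share of a payload list does the WAF block?"""
    if not payloads:
        return 0.0
    return sum(1 for p in payloads if is_blocked(p)) / len(payloads)


def naive_filter(payload):
    """Toy stand-in for a WAF: only catches literal <script tags."""
    return "<script" in payload.lower()


# Made-up log line and payload list, for illustration only.
sample_log = 'ModSecurity: Warning. [file "rules.conf"] [id "942100"] [msg "SQL Injection"]'
xss_payloads = ["<script>alert(1)</script>", "<img src=x onerror=alert(1)>", "<svg onload=alert(1)>"]

print("rule 942100 triggered:", rule_triggered(sample_log, 942100))
print(f"share of XSS list blocked: {blocked_fraction(xss_payloads, naive_filter):.2f}")
```

A type 1 check fails the moment someone breaks a single rule, while a type 2 score shows whether the rule set as a whole still covers a category of attacks.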

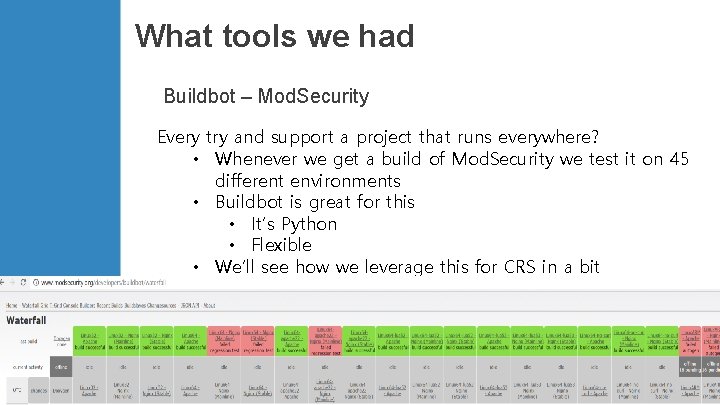

What tools we had
Buildbot - ModSecurity
Ever try to support a project that runs everywhere?
• Whenever we get a build of ModSecurity we test it on 45 different environments
• Buildbot is great for this
  • It’s Python
  • Flexible
• We’ll see how we leverage this for CRS in a bit

Solving only part of our problem
Integrating our methodology with our environment
• What we did so far
  • We develop this strict testing methodology
  • We make it easy to use
  • We write these tests…
• This doesn’t solve the part where users don’t use these tests
• Using Buildbot we can get email feedback, but it’s not enough
• Such tests are good for internal tasks like our type 2 tests
To this end we extended our existing Buildbot environment to OWASP CRS.

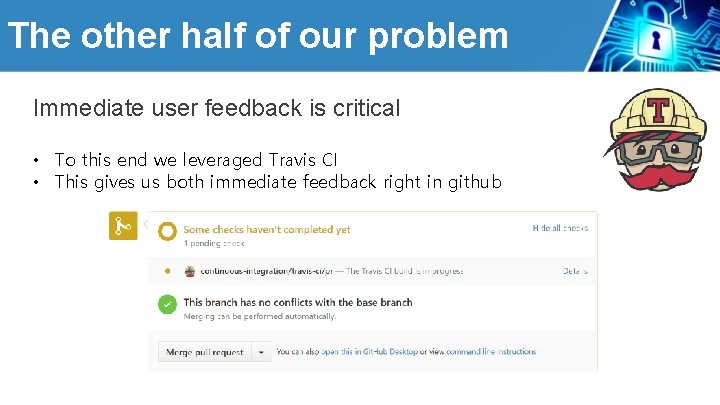

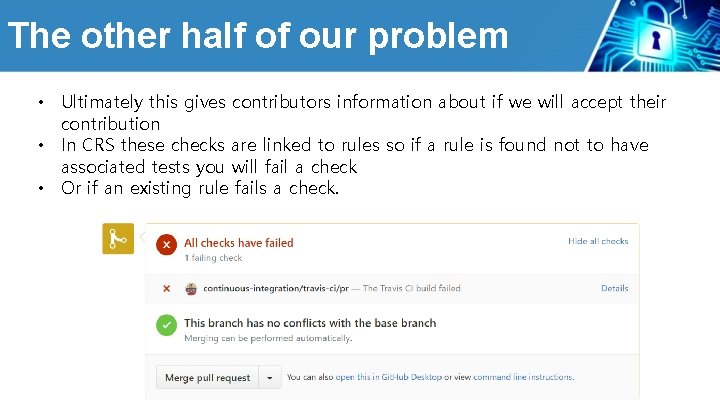

The other half of our problem
Immediate user feedback is critical
• To this end we leveraged Travis CI
• This gives us immediate feedback right in GitHub

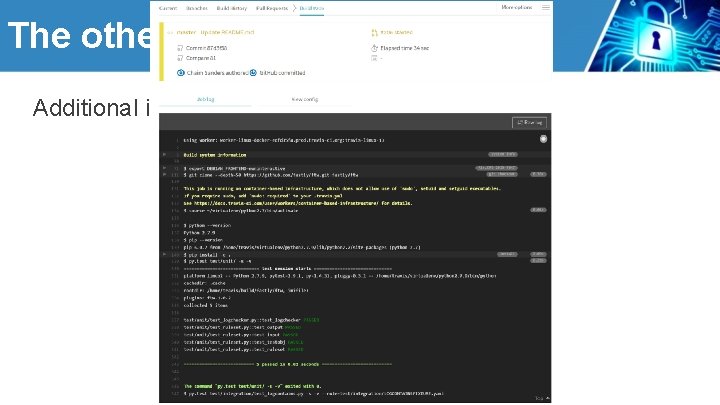

The other half of our problem
Additional information as needed

The other half of our problem
• Ultimately this tells contributors whether we will accept their contribution
• In CRS these checks are linked to rules, so if a rule is found not to have associated tests you will fail a check
• Or if an existing rule fails a check
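A check that fails when a rule has no associated test could look roughly like the sketch below. It is not the actual CRS check: `rule_ids` and `untested_rules` are hypothetical helpers, the two rules are simplified examples in ModSecurity `SecRule` syntax, and the regular expression assumes rule IDs are written as `id:NNN` inside the action string.

```python
import re

# Assumption: rule IDs appear as id:942100 or id:'942100' in the action string.
RULE_ID = re.compile(r"\bid:'?(\d+)")


def rule_ids(rules_text):
    """Collect every rule ID declared in a block of rule definitions."""
    return {int(match) for match in RULE_ID.findall(rules_text)}


def untested_rules(rules_text, tested_ids):
    """Return the sorted rule IDs that have no associated test."""
    return sorted(rule_ids(rules_text) - set(tested_ids))


# Simplified example rules, for illustration only.
sample_rules = '''
SecRule ARGS "@detectSQLi" "id:942100,phase:2,block,msg:'SQL Injection'"
SecRule ARGS "@rx <script" "id:941110,phase:2,block,msg:'XSS'"
'''

missing = untested_rules(sample_rules, tested_ids=[942100])
print("rules without tests:", missing)
```

In a CI job, a non-empty result would be turned into a failing exit status so the contributor sees the problem on the pull request.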

Travis CI problems
• Ultimately Travis is awesome but you need to tell it what to do
• To do full tests we need to install ModSecurity (or other WAFs)
  • This was a nice part of Buildbot that we can’t leverage
• To automate this we use Ansible
  • More Python goodness
  • Allows us to easily set up our environment
  • Other teams use Vagrant to this effect
• As Travis CI supports Docker this was our ideal deployment
  • Turns out Docker isn’t quite ready for a ModSecurity image
  • ModSecurity v3 complicates this even more
https://github.com/csanders-git/ansible-role-modsecurity
https://github.com/fastly/waf_testbed

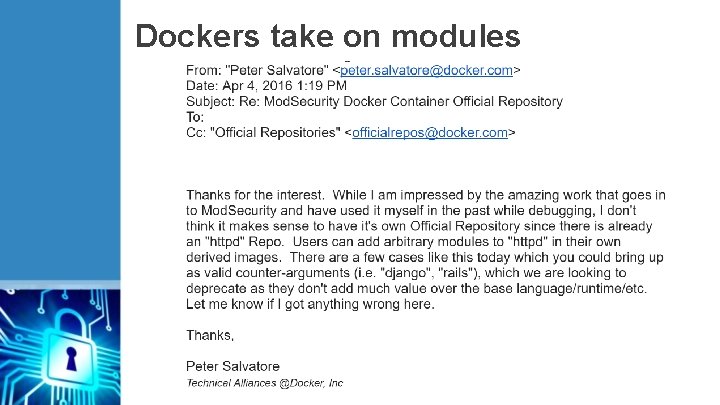

Docker’s take on modules

Future Work
What’s left to do… a lot
• AMIs
• Minimal Click Deployment (MCD)
• Parsers/linters
• And more!

Thank Yous
• Special thanks to Zach Allen for help developing FTW
• Thanks also to Christian Peron and the rest of the Fastly Team for great insight
• Thanks to Christian Folini and Walter Hop from the CRS team
• Thanks to Jared Strout for help reviewing the presentation
• And of course thanks to everyone in the community who uses CRS

Questions
