WAN Monitoring Issues Prepared by Les Cottrell SLAC

WAN Monitoring Issues
Prepared by Les Cottrell, SLAC, for the NASA/LSN Workshop on Optical Network Testbeds, NASA Ames, August 9-11, 2004
www.slac.stanford.edu/grp/scs/net/talk03/jet-aug04.ppt
Partially funded by DOE/MICS Field Work Proposal on Internet End-to-end Performance Monitoring (IEPM), also supported by IUPAP

The Problem
• Distributed systems are very hard
  – "A distributed system is one in which I can't get my work done because a computer I've never heard of has failed." (Butler Lampson)
• Network is deliberately transparent
• The bottlenecks can be in any of the following components:
  – the applications
  – the OS
  – the disks, NICs, bus, memory, etc. on sender or receiver
  – the network switches and routers, and so on
• Problems may not be logical
  – Most problems are operator errors, configurations, bugs
• When building distributed systems, we often observe unexpectedly low performance
  – the reasons for which are usually not obvious
• Just when you think you’ve cracked it, in steps security

E2E Monitoring Goals
• Solving the E2E performance problem is the critical problem for the user
  – Improve e2e throughput for data intensive apps in high-speed WANs
  – Provide ability to do performance analysis & fault detection in a Grid computing environment
  – Provide accurate, detailed, & adaptive monitoring of all distributed components including the network

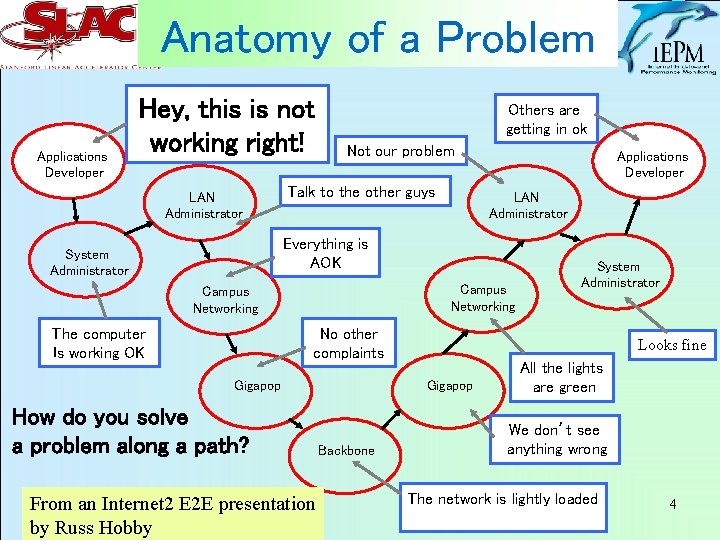

Anatomy of a Problem
How do you solve a problem along a path?
• Parties along the path: Applications Developer, System Administrator, LAN Administrator, Campus Networking, Gigapop, Backbone
• Applications Developer: "Hey, this is not working right!"
• Responses from the other parties:
  – "Others are getting in ok"
  – "Not our problem"
  – "Talk to the other guys"
  – "Everything is AOK"
  – "The computer is working OK"
  – "No other complaints"
  – "Looks fine"
  – "All the lights are green"
  – "We don’t see anything wrong"
  – "The network is lightly loaded"
From an Internet2 E2E presentation by Russ Hobby

Needs
• Measurement tools to quickly, accurately and automatically identify problems
  – Automatically take action to investigate and gather information, on-demand measurements
• Tools need to scale to 10 Gbps and beyond
• Standard ways to discover, request and report results of measurements
  – GGF/NMWG schemas
  – Share information with people and apps across a federation of measurement infrastructures

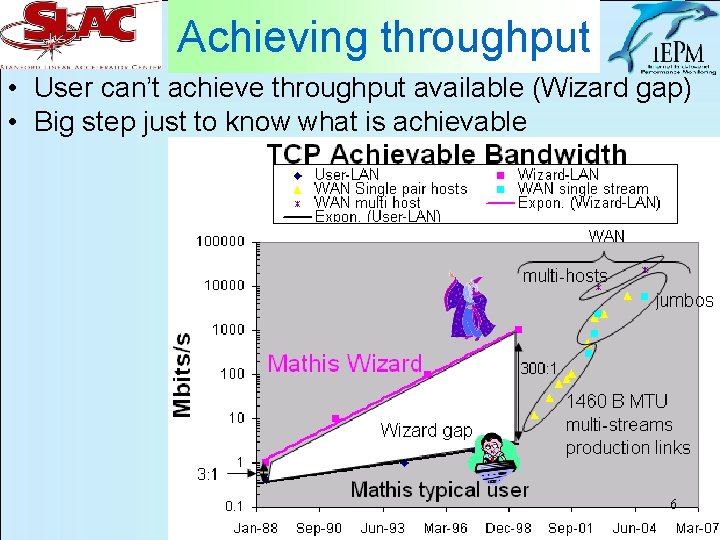

Achieving throughput
• User can’t achieve throughput available (Wizard gap)
• Big step just to know what is achievable

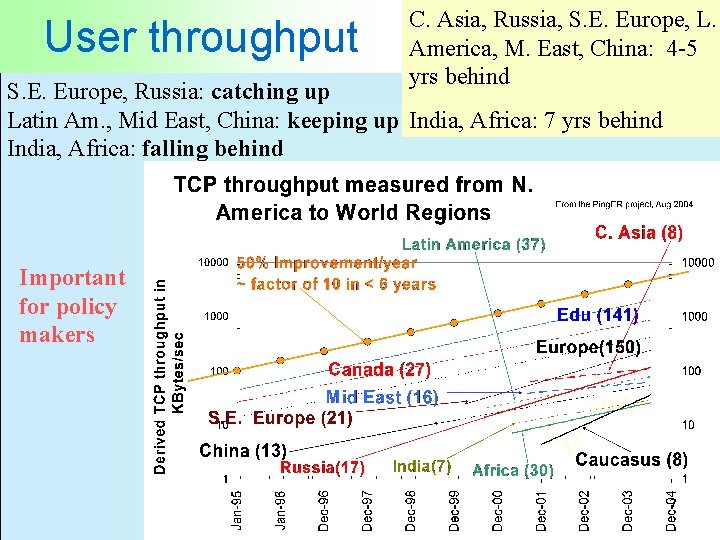

User throughput
• C. Asia, Russia, S.E. Europe, L. America, M. East, China: 4-5 yrs behind
• S.E. Europe, Russia: catching up
• Latin Am., Mid East, China: keeping up
• India, Africa: 7 yrs behind
• India, Africa: falling behind
• Important for policy makers

Hi-perf Challenges
• Packet loss hard to measure by ping
  – For 10% accuracy on BER 1/10^8 ~ 1 day at 1/sec
  – Ping loss ≠ TCP loss
• Iperf/GridFTP throughput at 10 Gbits/s
  – To measure stable (congestion avoidance) state for 90% of test takes ~ 60 secs ~ 75 GBytes
  – Requires scheduling, which implies authentication etc.
• Using packet pair dispersion can use only few tens or hundreds of packets, however:
  – Timing granularity in host is hard (sub μsec)
  – NICs may buffer (e.g. coalesce interrupts, or TCP offload) so need info from NIC or before
• Security: blocked ports, firewalls, keys vs. one time passwords, varying policies, Kerberos vs ssh etc.
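
Two of the figures on this slide can be checked with back-of-envelope arithmetic: the data volume of a 60 second test at 10 Gbits/s, and the spacing a packet pair acquires behind a 10 Gbits/s bottleneck. The sketch below is illustrative only. The function names are hypothetical, and the 1500-byte packet size and the 0.5 μs timestamp error are assumptions that do not appear in the slides.

```python
def transfer_volume_bytes(rate_bps: float, duration_s: float) -> float:
    """Bytes moved by a flow that sustains rate_bps for duration_s."""
    return rate_bps * duration_s / 8


def packet_pair_dispersion_s(packet_bytes: int, capacity_bps: float) -> float:
    """Time gap between two back-to-back packets after a bottleneck link."""
    return packet_bytes * 8 / capacity_bps


def capacity_from_dispersion_bps(packet_bytes: int, dispersion_s: float) -> float:
    """Bottleneck capacity estimate implied by a measured packet-pair gap."""
    return packet_bytes * 8 / dispersion_s


if __name__ == "__main__":
    # Volume of a 60 s throughput test at 10 Gbits/s.
    volume = transfer_volume_bytes(10e9, 60)
    print(f"60 s at 10 Gbits/s moves {volume / 1e9:.0f} GBytes")

    # Assumed packet size: 1500 bytes (not stated in the slides).
    gap = packet_pair_dispersion_s(1500, 10e9)
    print(f"packet-pair gap at 10 Gbits/s: {gap * 1e6:.1f} microseconds")

    # Assumed timestamp error of 0.5 microseconds, to show the sensitivity.
    skewed = capacity_from_dispersion_bps(1500, gap + 0.5e-6)
    print(f"estimate with 0.5 microsecond error: {skewed / 1e9:.2f} Gbits/s")
```

With these assumptions the gap to be measured is itself only about a microsecond, which is why sub-microsecond host timing and NIC buffering matter for packet pair techniques at these speeds.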

Passive measurements
• Security & privacy concerns
  – SNMP access to routers
  – Sniffers see all traffic
• Keeping up with capturing and analysis
  – Only headers, sampling
• Vast amounts of data, needs excellent datamining tools
• Gives utilization, retries
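
As an illustration of how utilization is typically derived from SNMP, the sketch below computes it from two readings of an interface octet counter (for example the IF-MIB objects ifInOctets, 32-bit, or ifHCInOctets, 64-bit). It assumes the counter values have already been polled by some other means; no SNMP library is used, and the function names and sample values are hypothetical.

```python
def utilization(octets_t0: int, octets_t1: int, interval_s: float,
                link_bps: float, counter_bits: int = 64) -> float:
    """Fraction of link capacity used between two counter readings.

    Tolerates at most one counter wrap between the two readings.
    """
    modulus = 1 << counter_bits
    delta_octets = (octets_t1 - octets_t0) % modulus
    return delta_octets * 8 / (interval_s * link_bps)


def wrap_time_s(link_bps: float, counter_bits: int = 32) -> float:
    """Seconds for an octet counter to wrap on a fully loaded link."""
    return (1 << counter_bits) * 8 / link_bps


if __name__ == "__main__":
    # Hypothetical readings taken 1 s apart on a 10 Gbits/s link.
    print(f"utilization: {utilization(0, 375_000_000, 1.0, 10e9):.0%}")
    # A 32-bit octet counter wraps within seconds at 10 Gbits/s.
    print(f"32-bit wrap time at 10 Gbits/s: {wrap_time_s(10e9):.1f} s")
```

The wrap time shows one reason passive polling has to keep up at high speeds: with a 32-bit counter on a saturated 10 Gbits/s link, polls more than a few seconds apart can no longer be interpreted unambiguously, so 64-bit counters are needed.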

Optical
• Could be whole new playing field, today’s tools no longer applicable:
  – No jitter (so packet pair dispersion no use)
  – Instrumented TCP stacks a la Web100 may not be relevant
  – Layer 1 & 2 switches make traceroute less useful
  – Losses so low, ping not viable to measure
  – High speeds make some current techniques fail or more difficult (timing, amounts of data etc.)

- Slides: 10