VLDB'99 TUTORIAL: Metasearch Engines: Solutions and Challenges

Clement Yu, Dept. of EECS, U. of Illinois at Chicago, Chicago, IL 60607 (yu@eecs.uic.edu)

Weiyi Meng, Dept. of Computer Science, SUNY at Binghamton, Binghamton, NY 13902 (meng@cs.binghamton.edu)

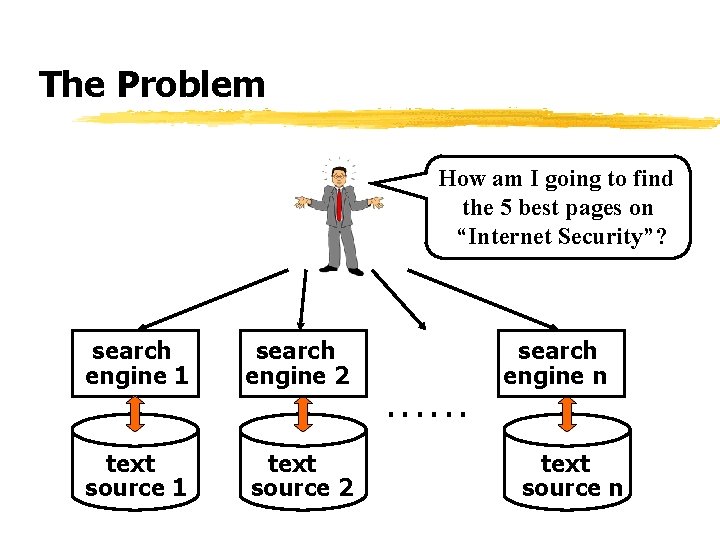

The Problem
How am I going to find the 5 best pages on "Internet Security"?

(Figure: search engines 1, 2, …, n, each searching its own text source 1, 2, …, n.)

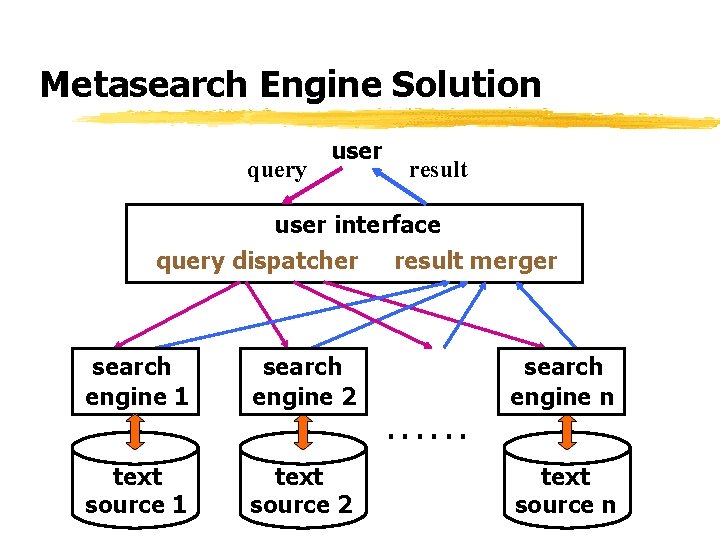

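Metasearch Engine Solution

(Figure: the user's query passes through the user interface to a query dispatcher, which sends it to search engines 1, …, n over text sources 1, …, n; a result merger combines the returned results and passes them back through the user interface to the user.)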

Some Observations
- most sources are not useful for a given query
- sending a query to a useless source would
  - incur unnecessary network traffic
  - waste local resources for evaluating the query
  - increase the cost of merging the results
- retrieving too many documents from a source is inefficient

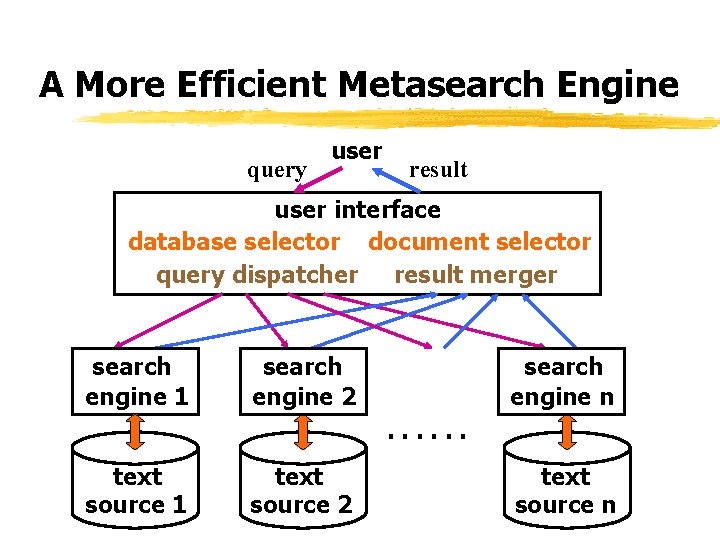

A More Efficient Metasearch Engine

(Figure: user interface, database selector, document selector, query dispatcher, search engines 1, …, n over text sources 1, …, n, and result merger.)

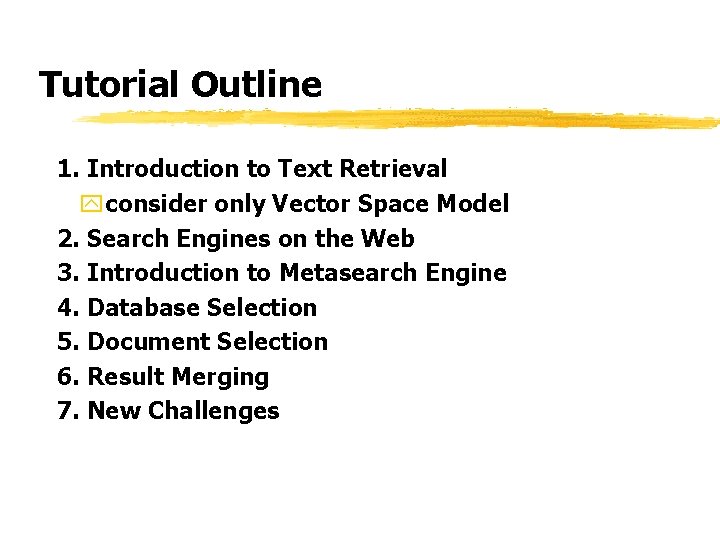

Tutorial Outline
1. Introduction to Text Retrieval
   - consider only the Vector Space Model
2. Search Engines on the Web
3. Introduction to Metasearch Engine
4. Database Selection
5. Document Selection
6. Result Merging
7. New Challenges

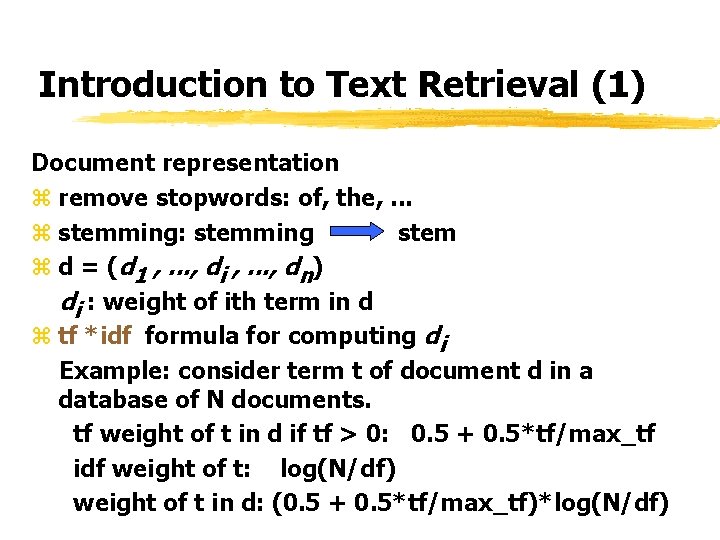

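Introduction to Text Retrieval (1)
Document representation
- remove stopwords: of, the, ...
- stemming: stemming → stem
- $d = (d_1, \ldots, d_i, \ldots, d_n)$, where $d_i$ is the weight of the $i$th term in $d$
- tf*idf formula for computing $d_i$

Example: consider term $t$ of document $d$ in a database of $N$ documents, where $tf$ is the frequency of $t$ in $d$, $max\_tf$ is the largest term frequency in $d$, and $df$ is the number of documents containing $t$.
- tf weight of $t$ in $d$ (if $tf > 0$): $0.5 + 0.5 \cdot tf / max\_tf$
- idf weight of $t$: $\log(N/df)$
- weight of $t$ in $d$: $(0.5 + 0.5 \cdot tf / max\_tf) \cdot \log(N/df)$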
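A minimal sketch of the tf*idf weighting above, assuming the natural logarithm (the slide does not fix a base) and a hypothetical document that has already been stopword-filtered and stemmed; `tfidf_weights` and all statistics are illustrative only:

```python
import math
from collections import Counter


def tfidf_weights(doc_terms, df, n_docs):
    """Weight of each term t of one document:
    (0.5 + 0.5 * tf / max_tf) * log(N / df)."""
    tf = Counter(doc_terms)
    max_tf = max(tf.values())
    return {
        t: (0.5 + 0.5 * f / max_tf) * math.log(n_docs / df[t])
        for t, f in tf.items()
    }


# Hypothetical document and document frequencies in a 1000-document database.
doc = ["internet", "secur", "secur", "firewal"]
df = {"internet": 100, "secur": 10, "firewal": 5}
weights = tfidf_weights(doc, df, n_docs=1000)
```

The most frequent term gets the full tf factor of 1.0; every other term present gets at least 0.5, which damps the influence of raw counts.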

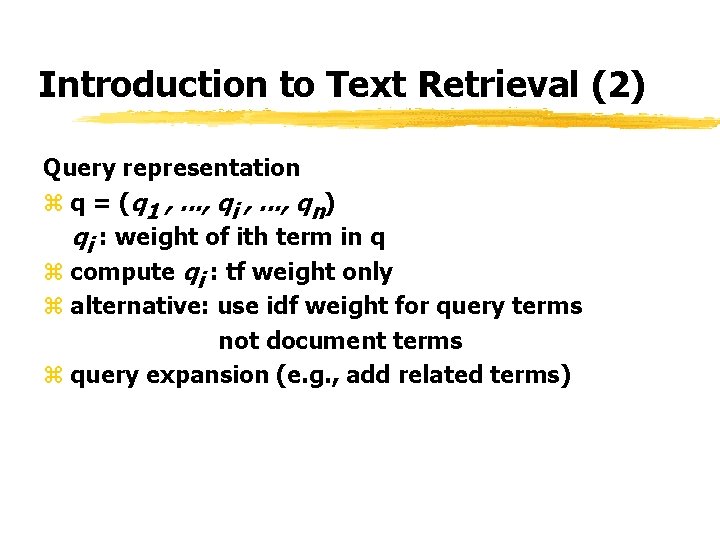

Introduction to Text Retrieval (2)
Query representation
- $q = (q_1, \ldots, q_i, \ldots, q_n)$, where $q_i$ is the weight of the $i$th term in $q$
- compute $q_i$ using the tf weight only
- alternative: apply the idf weight to query terms instead of document terms
- query expansion (e.g., add related terms)

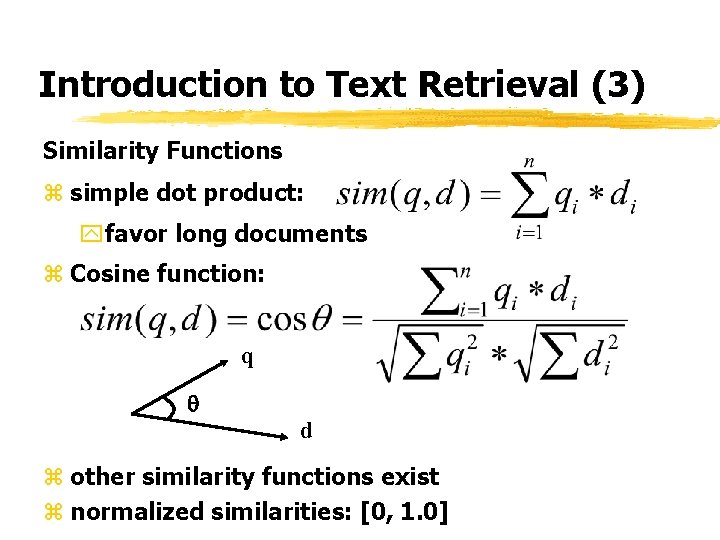

Introduction to Text Retrieval (3)
Similarity functions
- simple dot product: $q \cdot d = \sum_i q_i d_i$
  - favors long documents
- Cosine function: $\cos(q, d) = \dfrac{q \cdot d}{|q|\,|d|}$
- other similarity functions exist
- normalized similarities lie in [0, 1.0]
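A small sketch contrasting the two functions on hypothetical vectors: the longer document wins under the dot product only because of its extra weight, while the cosine divides that length out:

```python
import math


def dot(q, d):
    """Simple dot product of two term-weight vectors."""
    return sum(qi * di for qi, di in zip(q, d))


def cosine(q, d):
    """Dot product divided by the product of the vector lengths."""
    norm = math.sqrt(dot(q, q)) * math.sqrt(dot(d, d))
    return dot(q, d) / norm if norm else 0.0


q = [1, 1, 0]
short_doc = [1, 1, 0]
long_doc = [2, 2, 5]  # same query terms, plus a heavily weighted extra term
```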

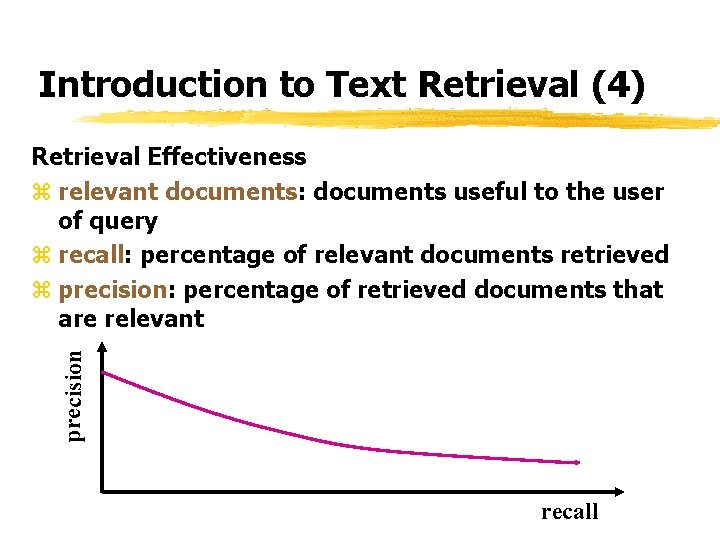

Introduction to Text Retrieval (4)
Retrieval effectiveness
- relevant documents: documents useful to the user of the query
- recall: percentage of relevant documents that are retrieved
- precision: percentage of retrieved documents that are relevant

(Figure: precision plotted against recall.)
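The two definitions can be sketched directly with sets; the document identifiers below are hypothetical:

```python
def precision_recall(retrieved, relevant):
    """Precision = |retrieved & relevant| / |retrieved|;
    recall = |retrieved & relevant| / |relevant|."""
    retrieved, relevant = set(retrieved), set(relevant)
    hits = len(retrieved & relevant)
    precision = hits / len(retrieved) if retrieved else 0.0
    recall = hits / len(relevant) if relevant else 0.0
    return precision, recall


# 4 documents retrieved, 2 of which are among the 5 relevant ones.
p, r = precision_recall(["d1", "d2", "d3", "d4"], ["d1", "d3", "d7", "d8", "d9"])
```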

Search Engines on the Web (1)
Search engine as a document retrieval system
- no control over the web pages that can be searched
- web pages have rich structures and semantics
- web pages are extensively linked
- additional information is available for each page (time last modified, organization publishing it, etc.)
- databases are dynamic and can be very large
- there are a few general-purpose search engines and numerous special-purpose search engines

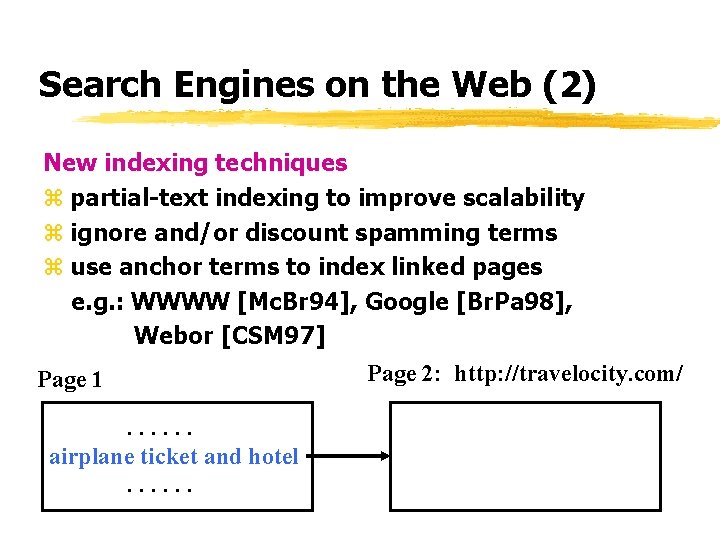

Search Engines on the Web (2)
New indexing techniques
- partial-text indexing to improve scalability
- ignore and/or discount spamming terms
- use anchor terms to index linked pages, e.g., WWWW [McBr 94], Google [BrPa 98], Webor [CSM 97]

Example: Page 1 contains a link whose anchor text is "... airplane ticket and hotel ..." and which points to Page 2 (http://travelocity.com/); these anchor terms are used to index Page 2.

Search Engines on the Web (3)
New term weighting schemes
- higher weights to terms enclosed by special tags
  - title (SIBRIS [WaWJ 89], AltaVista, HotBot, Yahoo)
  - special fonts (Google [BrPa 98])
  - special fonts & tags (LASER [BoFJ 96])
- Webor [CSM 97] approach
  - partition tags into disjoint classes (title, header, strong, anchor, list, plain text)
  - assign different importance factors to terms in different classes
  - determine optimal importance factors

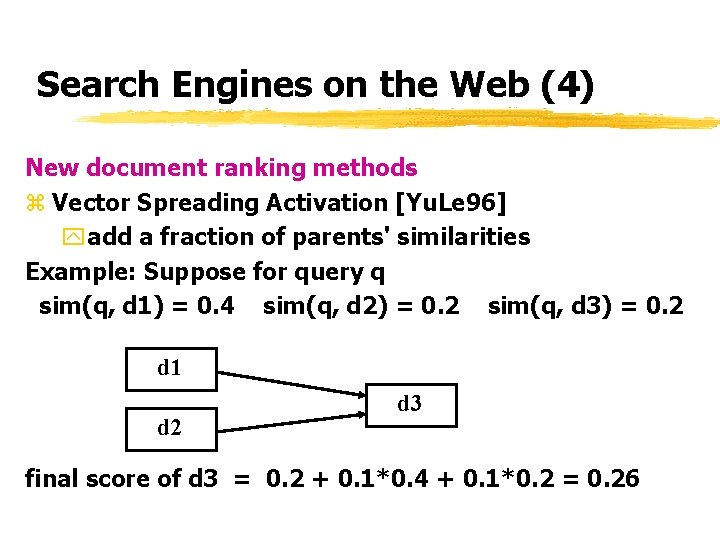

Search Engines on the Web (4)
New document ranking methods
- Vector Spreading Activation [YuLe 96]
  - add a fraction of the parents' similarities

Example: suppose that for query q, sim(q, d1) = 0.4, sim(q, d2) = 0.2 and sim(q, d3) = 0.2, and that d1 and d2 both link to d3. With a fraction of 0.1, the final score of d3 = 0.2 + 0.1*0.4 + 0.1*0.2 = 0.26.
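A minimal sketch of one propagation step, assuming (as the example's arithmetic implies) that d1 and d2 are the parents of d3 and that a fixed fraction of 0.1 is used; `spread_activation` is a hypothetical name:

```python
def spread_activation(sim, parents, fraction=0.1):
    """final(d) = sim(d) + fraction * sum of sim(p) over pages p that link to d."""
    return {
        d: s + fraction * sum(sim[p] for p in parents.get(d, ()))
        for d, s in sim.items()
    }


sim = {"d1": 0.4, "d2": 0.2, "d3": 0.2}
parents = {"d3": ["d1", "d2"]}  # d1 and d2 both link to d3
scores = spread_activation(sim, parents)
```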

Search Engines on the Web (5)
New document ranking methods
- combine similarity with rank
  - PageRank [PaBr 98]: an important page is linked to by many pages and/or by important pages
- combine similarity with authority score
  - authority [Klei 98]: an important content page is highly linked to among the initially retrieved pages and their neighbors

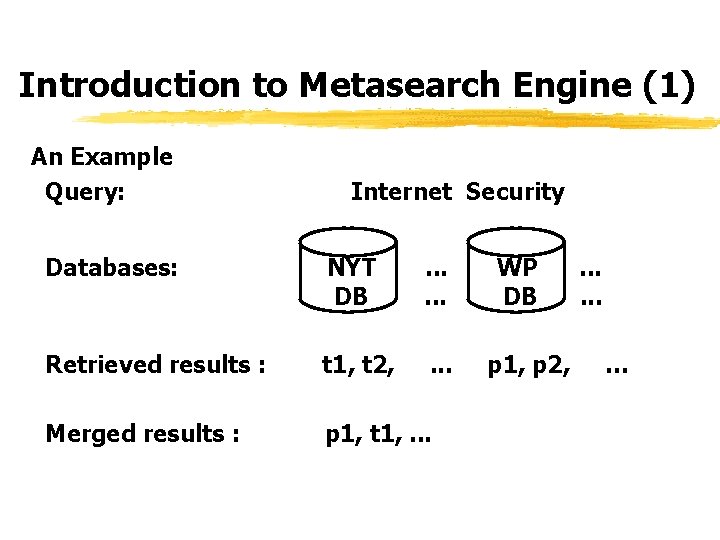

Introduction to Metasearch Engine (1)
An example
- Query: Internet Security
- Databases: NYT DB, WP DB, ...
- Retrieved results: t1, t2, ... (NYT DB); p1, p2, ... (WP DB); ...
- Merged results: p1, t1, ...

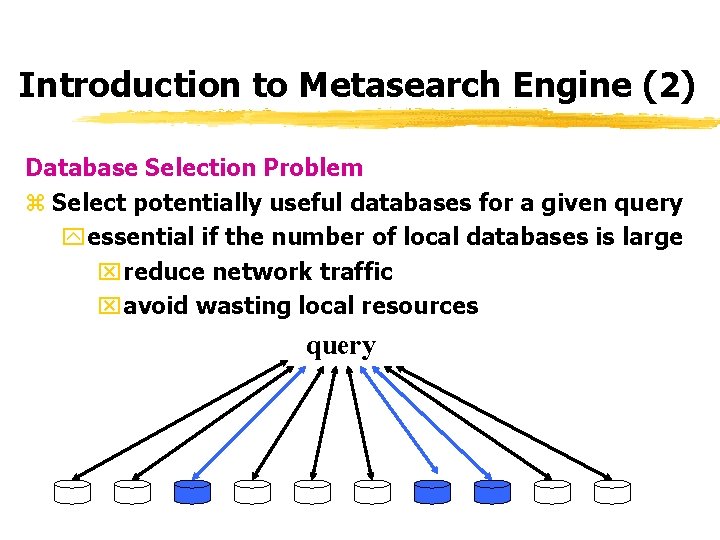

Introduction to Metasearch Engine (2)
Database Selection Problem
- select potentially useful databases for a given query
  - essential if the number of local databases is large
    - reduce network traffic
    - avoid wasting local resources

Introduction to Metasearch Engine (3)
- potentially useful database: contains potentially useful documents
  - potentially useful documents have
    - a global similarity above a threshold, or
    - a global similarity among the m highest
- some knowledge about each database is needed in advance in order to perform database selection
  - database representative

Introduction to Metasearch Engine (4)
Document Selection Problem
Select potentially useful documents from each selected local database efficiently.

Step 1: retrieve all potentially useful documents while minimizing the retrieval of useless documents
- from the global similarity threshold to the tightest local similarity threshold: we want all d with Gsim(q, d) > GT, so we retrieve from DBk every d with Lsim(q, d) > LTk, where LTk is the largest value such that Gsim(q, d) > GT ⇒ Lsim(q, d) > LTk

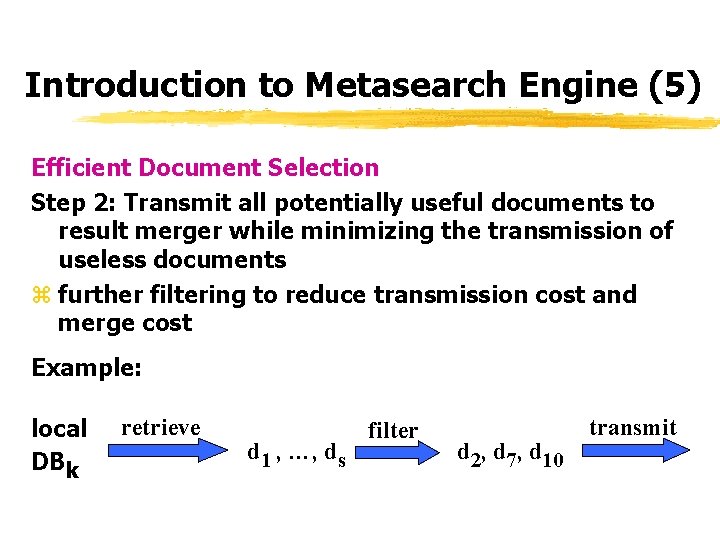

Introduction to Metasearch Engine (5)
Efficient Document Selection
Step 2: transmit all potentially useful documents to the result merger while minimizing the transmission of useless documents
- further filtering to reduce transmission cost and merge cost

Example: local DBk retrieves d1, …, ds; filtering keeps d2, d7 and d10, which are transmitted.

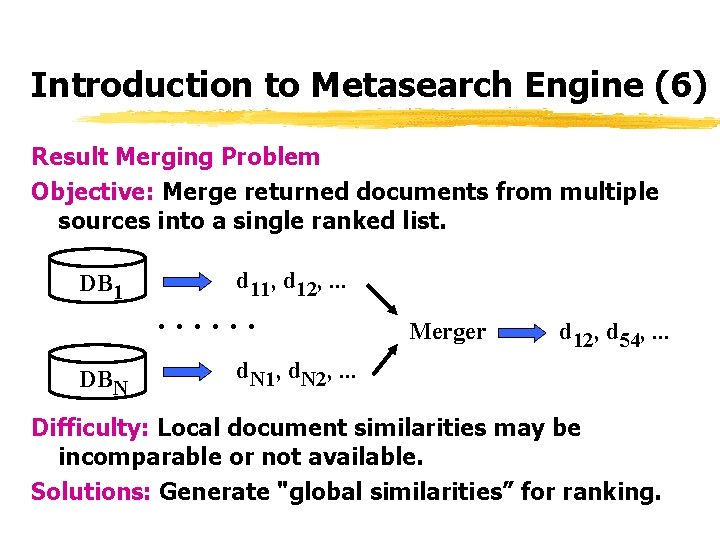

Introduction to Metasearch Engine (6)
Result Merging Problem
Objective: merge the documents returned from multiple sources into a single ranked list.

(Figure: DB1 returns d11, d12, ...; ...; DBN returns dN1, dN2, ...; the merger produces d12, d54, ...)

Difficulty: local document similarities may be incomparable or not available.
Solution: generate "global similarities" for ranking.

Introduction to Metasearch Engine (7)
An ideal metasearch engine:
- Retrieval effectiveness: the same as if all documents were in a single collection.
- Efficiency: optimize the retrieval process.

Implications: a metasearch engine should aim at
- selecting only useful search engines
- retrieving and transmitting only useful documents
- ranking documents according to their degrees of relevance

Introduction to Metasearch Engine (8)
Main sources of difficulties [MYL 99]:
- autonomy of local search engines
  - design autonomy
  - maintenance autonomy
- heterogeneities among local search engines
  - indexing method
  - document/query term weighting schemes
  - similarity/ranking function
  - document database
  - document version
  - result presentation

Introduction to Metasearch Engine (9)
Impact of autonomy and heterogeneities [MLY 99]:
- local search engines may be unwilling to provide database representatives, or may provide different types of representatives
- difficult to find potentially useful documents
- difficult to merge documents from multiple sources

Database Selection: Basic Idea
Goal: identify potentially useful databases for each user query.

General approach:
- use a representative to indicate approximately the content of each database
- use these representatives to select databases for each query

Diversity of solutions:
- different types of representatives
- different algorithms using the representatives

Solution Classification
- Naive approach: select all databases (e.g., MetaCrawler, NCSTRL)
- Qualitative approaches: estimate the quality of each local database
  - based on rough representatives
  - based on detailed representatives
- Quantitative approaches: estimate quantities that measure the quality of each local database more directly and explicitly
- Learning-based approaches: database representatives are obtained through training or learning

Qualitative Approaches Using Rough Representatives
- typical representative:
  - a few words or a few paragraphs in a certain format
  - manual construction often needed
- can work well for special-purpose local search engines
- the storage requirement is very scalable
- selection can be inaccurate because the description is too rough

Qualitative Approaches Using Rough Representatives
Example 1: ALIWEB [Kost 94]
- the representative has a fixed format; for a site containing files for the Perl language:
  - Template-Type: DOCUMENT
  - Title: Perl
  - Description: Information on the Perl Programming Language. Includes a local Hypertext Perl Manual, and the latest FAQ in Hypertext.
  - Keywords: perl, perl-faq, language
- the user query can match against one or more fields

Qualitative Approaches Using Rough Representatives
Example 2: NetSerf [ChHa 95]
- the representative has a WordNet-based structure; for a site of world facts listed by country:
  - topic: country
  - synset: [nation, nationality, land, country, a_people]
  - synset: [state, nation, country, land, commonwealth, res_publica, body_politic]
  - synset: [country, state, land, nation]
  - info-type: facts
- the user query is transformed into a similar structure before matching

Qualitative Approaches Using Detailed Representatives
Use detailed statistical information for each term:
- employ special measures to estimate the usefulness/quality of each search engine for each query
- the measures reflect usefulness less directly/explicitly than those used in quantitative approaches
- scalability starts to become an issue

Qualitative Approaches Using Detailed Representatives
Example 1: gGlOSS [GrGa 95]
- representative: for each term $t_i$
  - the document frequency $df_i$ of $t_i$
  - the sum $W_i$ of the weights of $t_i$ in all documents
- database usefulness: the sum of high similarities

$$\mathrm{usefulness}(q, D, T) = \sum_{d \in D,\ \mathrm{sim}(q, d) \ge T} \mathrm{sim}(q, d)$$

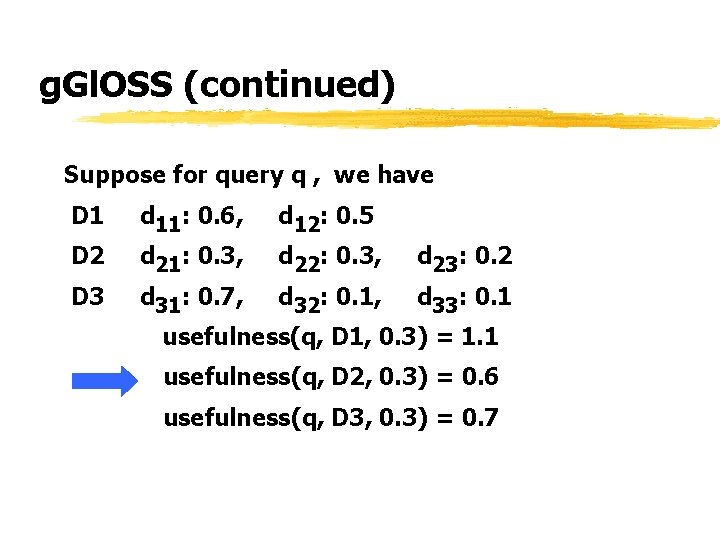

gGlOSS (continued)
Suppose that for query q the document similarities are:
- D1: d11: 0.6, d12: 0.5
- D2: d21: 0.3, d22: 0.3, d23: 0.2
- D3: d31: 0.7, d32: 0.1, d33: 0.1

Then usefulness(q, D1, 0.3) = 1.1, usefulness(q, D2, 0.3) = 0.6 and usefulness(q, D3, 0.3) = 0.7.
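The usefulness measure on exact similarities is a filtered sum; a minimal sketch reproducing the three values of the example (`usefulness` is an illustrative name, and similarities equal to T count, as the D2 value requires):

```python
def usefulness(sims, threshold):
    """gGlOSS-style usefulness: sum of the similarities that are at least T."""
    return sum(s for s in sims if s >= threshold)


dbs = {"D1": [0.6, 0.5], "D2": [0.3, 0.3, 0.2], "D3": [0.7, 0.1, 0.1]}
u = {name: usefulness(sims, 0.3) for name, sims in dbs.items()}
```

In practice gGlOSS does not see these similarities; the next two cases estimate the same sum from $df_i$ and $W_i$ alone.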

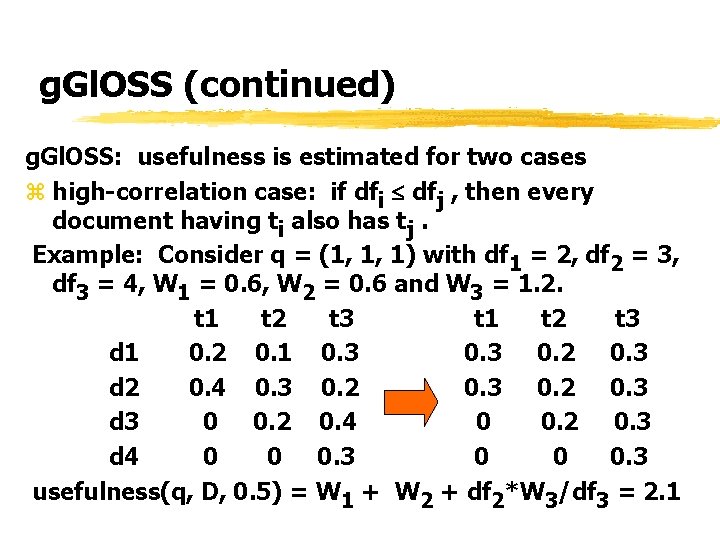

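gGlOSS (continued)
gGlOSS estimates usefulness for two cases.
- High-correlation case: if $df_i \le df_j$, then every document having $t_i$ also has $t_j$.

Example: consider q = (1, 1, 1) with df1 = 2, df2 = 3, df3 = 4, W1 = 0.6, W2 = 0.6 and W3 = 1.2. Under the assumption, each term is given its average weight $W_i/df_i$ (0.3, 0.2 and 0.3) in every document that contains it:

| document | actual t1 | actual t2 | actual t3 | estimated t1 | estimated t2 | estimated t3 |
|---|---|---|---|---|---|---|
| d1 | 0.2 | 0.1 | 0.3 | 0.3 | 0.2 | 0.3 |
| d2 | 0.4 | 0.3 | 0.2 | 0.3 | 0.2 | 0.3 |
| d3 | 0 | 0.2 | 0.4 | 0 | 0.2 | 0.3 |
| d4 | 0 | 0 | 0.3 | 0 | 0 | 0.3 |

The estimated similarities are 0.8, 0.8, 0.5 and 0.3, so
usefulness(q, D, 0.5) = W1 + W2 + df2*W3/df3 = 0.6 + 0.6 + 3*1.2/4 = 2.1.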
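A sketch of the high-correlation estimate, under the stated assumption that postings are nested (sorted by increasing df, each term's documents contain all later terms). The grouping by "rarest query term contained" is our own way of organizing the computation, and the small `eps` is an implementation detail that keeps a similarity of exactly T from being lost to floating-point rounding:

```python
def high_correlation_usefulness(q, df, W, threshold, eps=1e-9):
    """gGlOSS high-correlation estimate of usefulness(q, D, T).

    q, df, W: query weight, document frequency and weight sum of each term.
    Assumes nested postings (if df_i <= df_j, every document with t_i also has
    t_j) and that each term carries its average weight W_i / df_i.
    """
    order = sorted(range(len(q)), key=lambda i: df[i])  # ascending df
    total, covered = 0.0, 0
    for pos, i in enumerate(order):
        group = df[i] - covered  # documents whose rarest query term is t_i
        if group > 0:
            # such documents contain t_i and every query term with a larger df
            sim = sum(q[j] * W[j] / df[j] for j in order[pos:])
            if sim >= threshold - eps:  # eps absorbs floating-point rounding
                total += group * sim
        covered = df[i]
    return total


estimate = high_correlation_usefulness([1, 1, 1], [2, 3, 4], [0.6, 0.6, 1.2], 0.5)
```

The first group (d1, d2) contributes 2 * 0.8 and the second (d3) contributes 0.5, giving the slide's 2.1.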

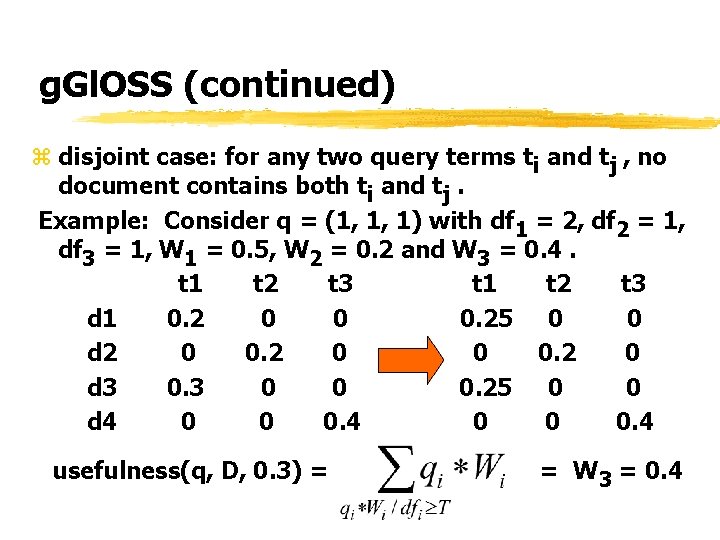

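gGlOSS (continued)
- Disjoint case: for any two query terms $t_i$ and $t_j$, no document contains both $t_i$ and $t_j$.

Example: consider q = (1, 1, 1) with df1 = 2, df2 = 1, df3 = 1, W1 = 0.5, W2 = 0.2 and W3 = 0.4. Each document containing $t_i$ is given the average weight $W_i/df_i$ (0.25, 0.2 and 0.4):

| document | actual t1 | actual t2 | actual t3 | estimated t1 | estimated t2 | estimated t3 |
|---|---|---|---|---|---|---|
| d1 | 0.2 | 0 | 0 | 0.25 | 0 | 0 |
| d2 | 0 | 0.2 | 0 | 0 | 0.2 | 0 |
| d3 | 0.3 | 0 | 0 | 0.25 | 0 | 0 |
| d4 | 0 | 0 | 0.4 | 0 | 0 | 0.4 |

Only the document containing t3 has an estimated similarity of at least 0.3, so

$$\mathrm{usefulness}(q, D, 0.3) = \sum_{i:\ q_i W_i / df_i \ge 0.3} q_i W_i = W_3 = 0.4$$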
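The disjoint estimate is even simpler, since each term's $df_i$ documents all share the same estimated similarity $q_i W_i / df_i$; a sketch with the same hypothetical naming style and rounding tolerance:

```python
def disjoint_usefulness(q, df, W, threshold, eps=1e-9):
    """gGlOSS disjoint-case estimate of usefulness(q, D, T).

    No document contains two query terms, so each of the df_i documents that
    contain t_i has estimated similarity q_i * W_i / df_i.
    """
    total = 0.0
    for qi, dfi, Wi in zip(q, df, W):
        if dfi > 0 and qi * Wi / dfi >= threshold - eps:
            total += qi * Wi  # df_i documents, each with similarity q_i * W_i / df_i
    return total


estimate = disjoint_usefulness([1, 1, 1], [2, 1, 1], [0.5, 0.2, 0.4], 0.3)
```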

gGlOSS (continued)
Some observations:
- usefulness depends on the threshold
- the representative has two quantities per term
- strong assumptions are used
- the high-correlation case tends to overestimate
- the disjoint case tends to underestimate
- the two estimates tend to bound the sum of the similarities that are at least T

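Qualitative Approaches Using Detailed Representatives
Example 2: CORI Net [CaLC 95]
- representative: $(df_i, cf_i)$ for term $t_i$
  - $df_i$: document frequency of $t_i$
  - $cf_i$: collection frequency of $t_i$ (the number of databases containing $t_i$)
  - $cf_i$ can be shared by all databases
- database usefulness(q, D) = sim(q, representative of D), computed by treating each database like a document:

| database ranking | document ranking |
|---|---|
| usefulness | similarity |
| $df_i$ | $tf_i$ |
| $cf_i$ | $df_i$ |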

CORI Net (continued)
Some observations:
- estimates are independent of the threshold
- the representative has fewer than two quantities per term (because $cf_i$ is shared)
- similarity is computed based on an inference network
- the same method is used for ranking documents and ranking databases

Qualitative Approaches Using Detailed Representatives
Example 3: D-WISE [YuLe 97]
- representative: $df_{i,j}$ for term $t_j$ in database $D_i$
- database usefulness: a measure of query term concentration in different databases

$$\mathrm{usefulness}(q, D_i) = \sum_{j=1}^{k} CVV_j \cdot df_{i,j}$$

- $k$: number of query terms
- $CVV_j$: cue validity variance of term $t_j$ across all databases; the larger $CVV_j$, the more useful $t_j$ is in distinguishing different databases

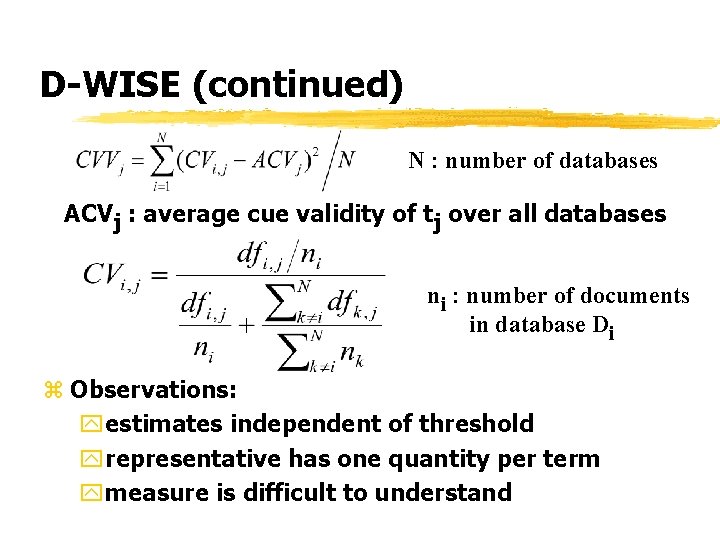

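D-WISE (continued)

$$CV_{i,j} = \frac{df_{i,j}/n_i}{df_{i,j}/n_i + \sum_{l \ne i} df_{l,j} \big/ \sum_{l \ne i} n_l}, \qquad ACV_j = \frac{1}{N}\sum_{i=1}^{N} CV_{i,j}, \qquad CVV_j = \frac{1}{N}\sum_{i=1}^{N} \left(CV_{i,j} - ACV_j\right)^2$$

- $N$: number of databases
- $ACV_j$: average cue validity of $t_j$ over all databases
- $n_i$: number of documents in database $D_i$

Observations:
- estimates are independent of the threshold
- the representative has one quantity per term
- the measure is difficult to understand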

Quantitative Approaches
Two types of quantities may be estimated with respect to query q:
- the number of documents in a database D with similarities higher than a threshold T:
  $\mathrm{NoDoc}(q, D, T) = |\{ d : d \in D \text{ and } \mathrm{sim}(q, d) > T \}|$
- the global similarity of the most similar document in D:
  $\mathrm{msim}(q, D) = \max_{d \in D} \mathrm{sim}(q, d)$
  - can be used to rank databases in descending order of similarity (or of any desirability measure)

Estimating NoDoc(q, D, T)
Basic approach [MLYW 98]
- representative: $(p_i, w_i)$ for term $t_i$
  - $p_i$: probability that $t_i$ appears in a document
  - $w_i$: average weight of $t_i$ among the documents having $t_i$

Example: the normalized weights of $t_i$ in 10 documents are (0, 0, 0.2, 0.4, 0.6, 0.6); $p_i = 0.6$ and $w_i = 0.4$.

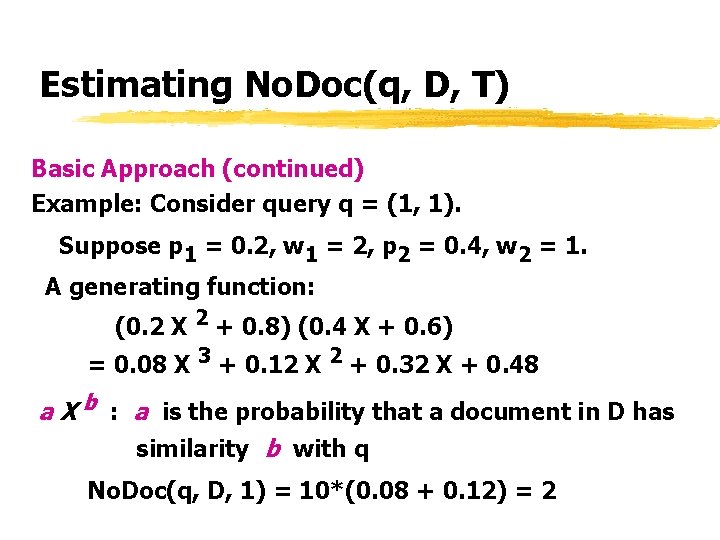

Estimating NoDoc(q, D, T)
Basic approach (continued)
Example: consider query q = (1, 1). Suppose p1 = 0.2, w1 = 2, p2 = 0.4, w2 = 1. A generating function:

$$(0.2X^2 + 0.8)(0.4X + 0.6) = 0.08X^3 + 0.12X^2 + 0.32X + 0.48$$

In a term $aX^b$, $a$ is the probability that a document in D has similarity $b$ with q. For a database D of 10 documents:
NoDoc(q, D, 1) = 10*(0.08 + 0.12) = 2

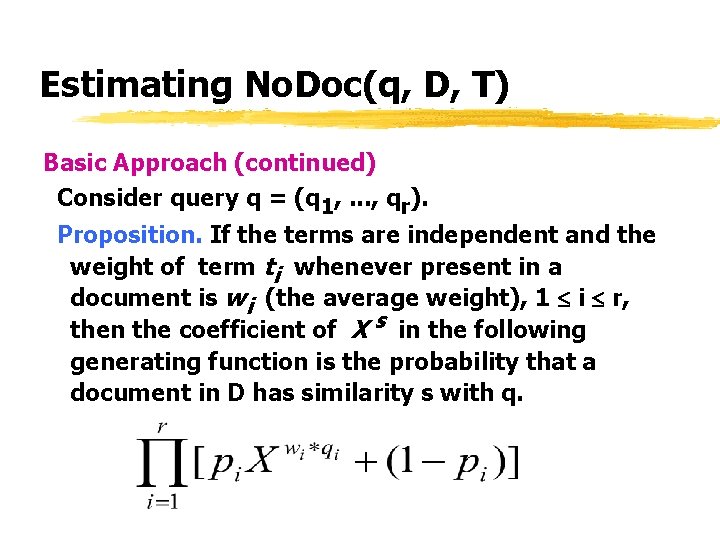

Estimating NoDoc(q, D, T)
Basic approach (continued)
Consider query $q = (q_1, \ldots, q_r)$.

Proposition. If the terms are independent and the weight of term $t_i$, whenever present in a document, is $w_i$ (the average weight), $1 \le i \le r$, then the coefficient of $X^s$ in the following generating function is the probability that a document in D has similarity $s$ with q:

$$\prod_{i=1}^{r} \left( p_i X^{q_i w_i} + (1 - p_i) \right)$$
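A minimal sketch of the generating-function estimate, storing a polynomial as a `{exponent: coefficient}` dict. To keep one routine for both the basic and the subrange-based approach, each term is described by a list of (probability, weight) pairs: one pair $(p_i, w_i)$ for the basic approach, several pairs for subranges. The function names are hypothetical:

```python
from collections import defaultdict


def multiply(p1, p2):
    """Product of two polynomials stored as {exponent: coefficient}."""
    out = defaultdict(float)
    for e1, c1 in p1.items():
        for e2, c2 in p2.items():
            out[e1 + e2] += c1 * c2
    return dict(out)


def similarity_distribution(query, term_stats):
    """Coefficient of X^s = estimated probability that a document has similarity s.

    query: weights q_i; term_stats[i]: list of (probability, weight) pairs for t_i.
    Terms are assumed to be independent.
    """
    poly = {0: 1.0}
    for qi, pairs in zip(query, term_stats):
        factor = defaultdict(float)
        factor[0] += 1.0 - sum(p for p, _ in pairs)  # t_i absent from the document
        for p, weight in pairs:
            factor[qi * weight] += p
        poly = multiply(poly, factor)
    return poly


def no_doc(query, term_stats, threshold, n_docs):
    """Estimated NoDoc(q, D, T) = n_docs * P(similarity > T)."""
    dist = similarity_distribution(query, term_stats)
    return n_docs * sum(c for s, c in dist.items() if s > threshold)


basic = [[(0.2, 2)], [(0.4, 1)]]  # p1 = 0.2, w1 = 2; p2 = 0.4, w2 = 1
dist = similarity_distribution([1, 1], basic)
```

Multiplying one factor per query term makes the work grow with the number of distinct exponents, which is the cost the later msim slides describe as exponential.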

Estimating NoDoc(q, D, T)
Subrange-based approach [MLYW 99]
- overcome the uniform term weight assumption
- additional information for term $t_i$:
  - $\sigma_i$: standard deviation of the weights of $t_i$ in all documents
  - $mnw_i$: maximum normalized weight of $t_i$

Estimating NoDoc(q, D, T)
Example: weights of term $t_i$ in 10 documents: 4, 4, 1, 1, 1, 1, 0, 0, 0, 0
- generating function factor using the average weight: $0.6X^2 + 0.4$
- a more accurate factor using subranges of weights: $0.2X^4 + 0.4X + 0.4$

In general, the weights are partitioned into k subranges:

$$p_{i1} X^{m_{i1}} + \cdots + p_{ik} X^{m_{ik}} + (1 - p_i)$$

The probability $p_{ij}$ and median $m_{ij}$ of each subrange can be estimated using the standard deviation $\sigma_i$ and the average of the weights of $t_i$.

A special implementation: use the maximum normalized weight as the first subrange by itself.

Estimating NoDoc(q, D, T)
Combined-term approach [LYMW 99]
- relax the term independence assumption

Example: consider the query "Chinese medicine". Suppose the generating functions are:
- Chinese: $0.1X^3 + 0.3X + 0.6$
- medicine: $0.2X^2 + 0.4X + 0.4$
- Chinese, medicine (assuming independence): $0.02X^5 + 0.04X^4 + 0.1X^3 + \cdots$
- "Chinese medicine" (as a combined term): $0.05X^w + \cdots$

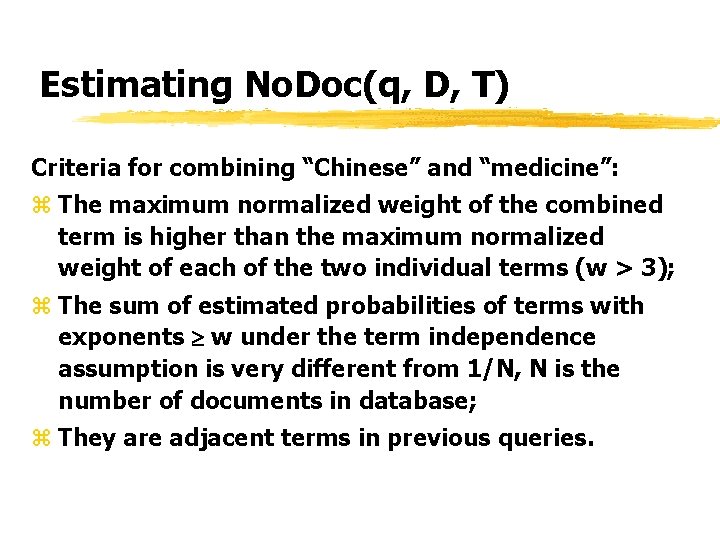

Estimating NoDoc(q, D, T)
Criteria for combining "Chinese" and "medicine":
- the maximum normalized weight of the combined term is higher than the maximum normalized weight of each of the two individual terms (w > 3);
- the sum of the estimated probabilities of the terms with exponents ≥ w under the term independence assumption is very different from 1/N, where N is the number of documents in the database;
- they are adjacent terms in previous queries.

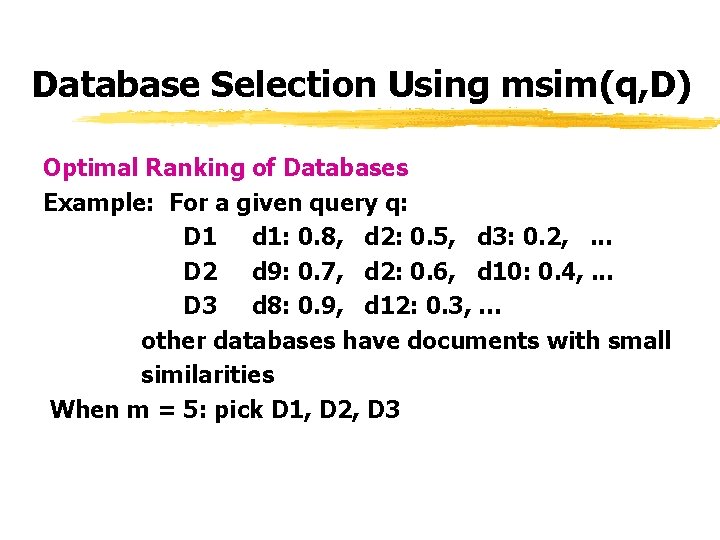

Database Selection Using msim(q, D)
Optimal Ranking of Databases [YLWM 99b]
User: for query q, find the m most similar documents, or the m documents with the largest degrees of relevance.

Definition: databases [D1, D2, …, Dp] are optimally ranked with respect to q if there exists a k such that each of the databases D1, …, Dk contains one of the m most similar documents, and all of these m documents are contained in these k databases.

Database Selection Using msim(q, D)
Optimal Ranking of Databases
Example: for a given query q:
- D1: d1: 0.8, d2: 0.5, d3: 0.2, ...
- D2: d9: 0.7, d2: 0.6, d10: 0.4, ...
- D3: d8: 0.9, d12: 0.3, ...
- other databases have documents with small similarities

When m = 5: pick D1, D2, D3.

Database Selection Using msim(q, D)
Proposition: databases [D1, D2, …, Dp] are optimally ranked with respect to a query q if and only if msim(q, Di) ≥ msim(q, Dj) for i < j.

Example:
- D1: d1: 0.8, ...
- D2: d9: 0.7, ...
- D3: d8: 0.9, ...

Optimal rank: [D3, D1, D2, …]
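By the proposition, producing an optimal ranking reduces to sorting databases by msim; a one-line sketch using the example's values (`optimal_ranking` is an illustrative name):

```python
def optimal_ranking(msim):
    """Order databases by descending msim(q, D)."""
    return sorted(msim, key=msim.get, reverse=True)


order = optimal_ranking({"D1": 0.8, "D2": 0.7, "D3": 0.9})  # ['D3', 'D1', 'D2']
```

The hard part is therefore estimating msim(q, D) without contacting each database.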

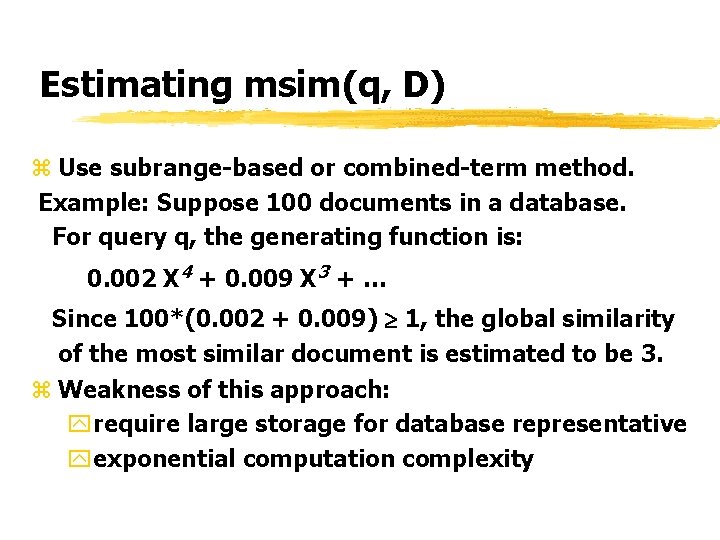

Estimating msim(q, D)
- use the subrange-based or combined-term method

Example: suppose there are 100 documents in a database. For query q, the generating function is $0.002X^4 + 0.009X^3 + \cdots$. Since 100*(0.002 + 0.009) ≈ 1, the global similarity of the most similar document is estimated to be 3.

- weaknesses of this approach:
  - requires large storage for the database representative
  - exponential computation complexity
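A sketch of reading msim off a similarity distribution: scan exponents from the highest down and stop at the first one where the expected number of documents at or above it reaches 1. Treating "≈ 1" as "≥ 1" is our assumption; `estimate_msim` is a hypothetical name, and only the example's two leading terms are used because the rest are elided:

```python
def estimate_msim(dist, n_docs):
    """Estimate msim(q, D) from {similarity: probability}.

    Returns the largest s such that n_docs * P(similarity >= s) >= 1.
    """
    expected = 0.0
    for s in sorted(dist, reverse=True):
        expected += n_docs * dist[s]
        if expected >= 1.0:
            return s
    return None  # no similarity value is expected to be reached by any document


print(estimate_msim({4: 0.002, 3: 0.009}, n_docs=100))  # 3
```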

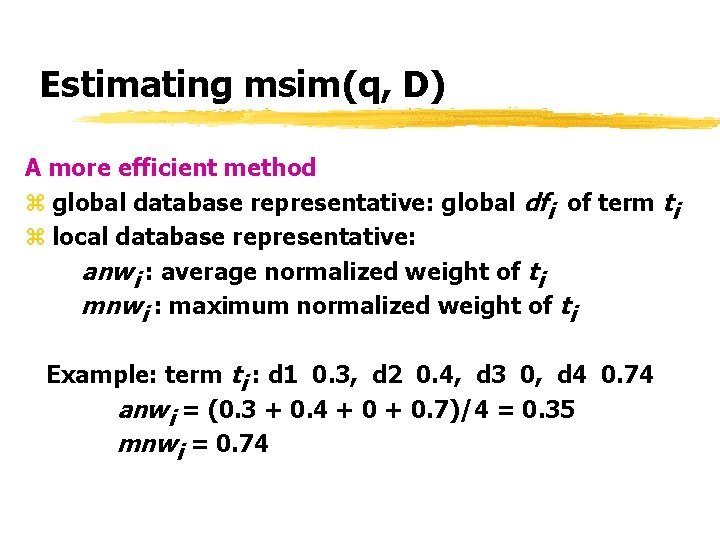

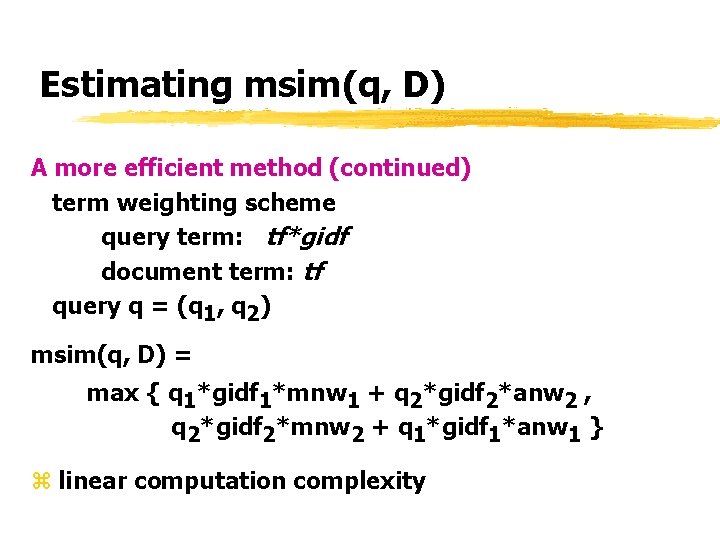

Estimating msim(q, D)
A more efficient method:
- global database representative: the global $df_i$ of term $t_i$
- local database representative:
  - $anw_i$: average normalized weight of $t_i$
  - $mnw_i$: maximum normalized weight of $t_i$

Example: term $t_i$ has weights d1: 0.3, d2: 0.4, d3: 0, d4: 0.7, so
$anw_i = (0.3 + 0.4 + 0 + 0.7)/4 = 0.35$ and $mnw_i = 0.7$.

Estimating msim(q, D)
A more efficient method (continued)
Term weighting scheme:
- query term: tf*gidf
- document term: tf

For query q = (q1, q2):

$$\mathrm{msim}(q, D) = \max\{\, q_1 \cdot gidf_1 \cdot mnw_1 + q_2 \cdot gidf_2 \cdot anw_2,\ \ q_2 \cdot gidf_2 \cdot mnw_2 + q_1 \cdot gidf_1 \cdot anw_1 \,\}$$

- linear computation complexity
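The two-term formula generalizes to r terms: one term at its maximum normalized weight, all others at their averages, maximized over the choice of that term. A sketch that stays linear by subtracting each term's average contribution from a precomputed total; the gidf values and the second term's statistics are hypothetical, while the first term reuses the example's weights:

```python
def anw_mnw(weights):
    """Average and maximum normalized weight of one term over all documents."""
    return sum(weights) / len(weights), max(weights)


def estimate_msim_linear(q, gidf, anw, mnw):
    """max over i of q_i*gidf_i*mnw_i + sum over j != i of q_j*gidf_j*anw_j."""
    avg = [qj * g * a for qj, g, a in zip(q, gidf, anw)]
    total = sum(avg)
    return max(total - avg[i] + q[i] * gidf[i] * mnw[i] for i in range(len(q)))


anw1, mnw1 = anw_mnw([0.3, 0.4, 0.0, 0.7])  # the example term: 0.35 and 0.7
r = estimate_msim_linear([1, 1], [2.0, 1.0], [anw1, 0.2], [mnw1, 0.5])
```

Here the two candidates are 2.0*0.7 + 0.2 = 1.6 and 0.5 + 2.0*0.35 = 1.2, so the estimate is 1.6.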

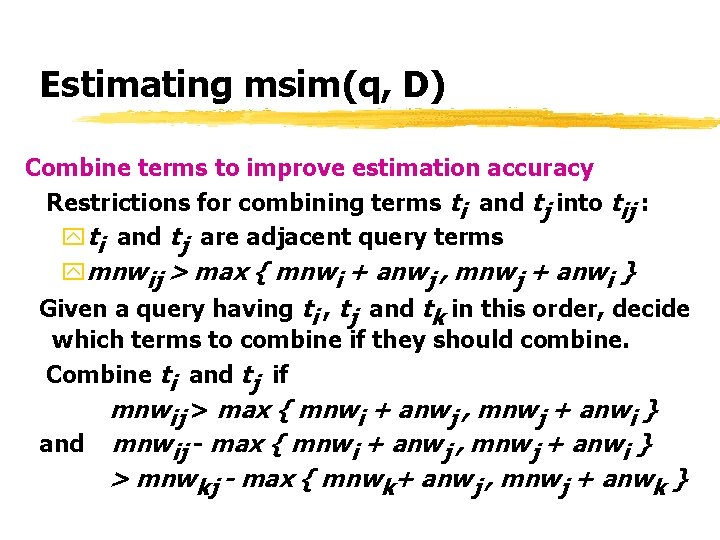

Estimating msim(q, D)
Combine terms to improve estimation accuracy.

Restrictions for combining terms $t_i$ and $t_j$ into $t_{ij}$:
- $t_i$ and $t_j$ are adjacent query terms
- $mnw_{ij} > \max\{ mnw_i + anw_j,\ mnw_j + anw_i \}$

Given a query having $t_i$, $t_j$ and $t_k$ in this order, decide which terms (if any) to combine. Combine $t_i$ and $t_j$ if
- $mnw_{ij} > \max\{ mnw_i + anw_j,\ mnw_j + anw_i \}$, and
- $mnw_{ij} - \max\{ mnw_i + anw_j,\ mnw_j + anw_i \} > mnw_{jk} - \max\{ mnw_k + anw_j,\ mnw_j + anw_k \}$

Learning-based Approaches
- use past retrieval experiences to determine usefulness
- assume no or little global or local database statistics
- static learning: learning based on static training queries
- dynamic learning: learning based on evaluated user queries
- combined learning: knowledge learned from training queries is adjusted based on user queries

Static Learning
Example: MRDD (Modeling Relevant Document Distribution) [VoGJ 95]
- record the result of each training query for each local database as a distribution vector $\langle r_1, \ldots, r_s \rangle$, where $r_i$ is the minimum number of top-ranked documents to retrieve in order to obtain $i$ relevant documents
- e.g., <2, 5, …>: 2 documents must be retrieved to obtain 1 relevant document, and 5 to obtain 2

MRDD (continued)
For a new query:
- identify the k most similar training queries
- obtain the average distribution vector from the k training queries for each database
- use these vectors to determine the databases to search and the documents to retrieve so as to maximize precision

Example: suppose that for query q, three average distribution vectors are obtained:
- D1: <1, 4, 6, 7, 10, 12, 17>
- D2: <1, 5, 7, 9, 15, 20>
- D3: <2, 3, 6, 9, 11, 16>

To retrieve two relevant documents: select D1 and D2.
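The slides do not say how the vectors are combined; one way to "maximize precision" is to choose, per database, how many relevant documents to take so that the requested number is reached with the fewest documents retrieved. The dynamic program below is our own sketch of that idea (`mrdd_allocate` is hypothetical), and it reproduces both this example and the four-document allocation in the later document-selection example:

```python
def mrdd_allocate(vectors, wanted):
    """vectors[db] = <r_1, ..., r_s>: r_i documents must be retrieved from db to
    obtain i relevant ones. Returns {db: documents to retrieve} that yields
    `wanted` relevant documents with the fewest retrieved, or None."""
    best = {0: (0, {})}  # relevant so far -> (documents retrieved, allocation)
    for db, vec in vectors.items():
        new_best = dict(best)  # option: take nothing from db
        for k, (cost, alloc) in best.items():
            for i, r in enumerate(vec, start=1):  # take i relevant docs from db
                if k + i > wanted:
                    break
                if k + i not in new_best or cost + r < new_best[k + i][0]:
                    new_best[k + i] = (cost + r, {**alloc, db: r})
        best = new_best
    return best[wanted][1] if wanted in best else None


vectors = {
    "D1": [1, 4, 6, 7, 10, 12, 17],
    "D2": [1, 5, 7, 9, 15, 20],
    "D3": [2, 3, 6, 9, 11, 16],
}
plan = mrdd_allocate(vectors, 2)  # {'D1': 1, 'D2': 1}
```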

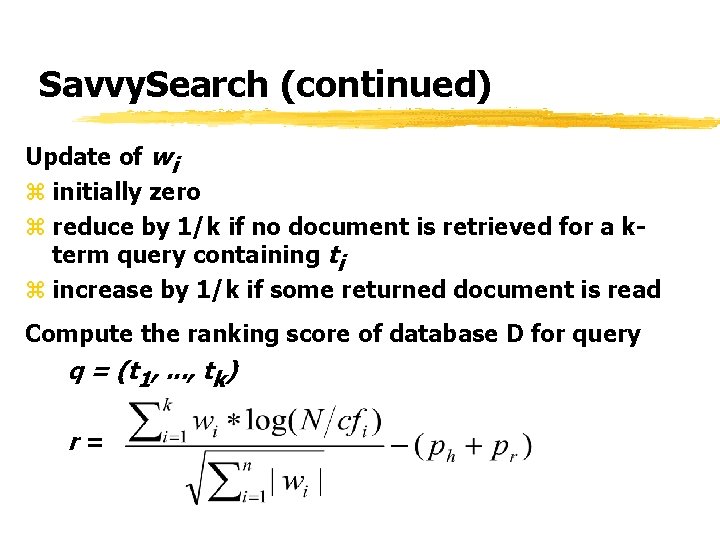

Dynamic Learning
Example: SavvySearch [DrHo 97]
- database representative: a weight $w_i$ and $cf_i$ for each term $t_i$, and two penalty values $p_h$ and $p_r$ for each database D
  - $w_i$: indicates how well D responds to query term $t_i$
  - $cf_i$: number of databases containing $t_i$
  - $p_h$: penalty if the average number of hits $h$ returned for the most recent five queries is below $T_h$: $p_h = (T_h - h)^2 / T_h^2$
  - $p_r$: penalty if the average response time $r$ for the most recent five queries exceeds $T_r$: $p_r = (r - T_r)^2 / (45 - T_r)^2$

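SavvySearch (continued)
Update of $w_i$:
- initially zero
- reduced by 1/k if no document is retrieved for a k-term query containing $t_i$
- increased by 1/k if some returned document is read

Compute the ranking score of database D for query q = (t1, ..., tk), where N is the number of databases and the denominator sums over all terms in the representative of D:

$$r(q, D) = \frac{\sum_{i=1}^{k} w_i \cdot \log(N / cf_i)}{\sqrt{\sum_{t} |w_t|}} - (p_h + p_r)$$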
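The penalty formulas and the weight-update rule translate directly into code; a sketch assuming the caller already averages hits and response times over the five most recent queries (function names are hypothetical, and the constant 45 is taken from the slide as-is):

```python
def hit_penalty(avg_hits, t_h):
    """p_h = (T_h - h)^2 / T_h^2, applied only when h < T_h."""
    return (t_h - avg_hits) ** 2 / t_h ** 2 if avg_hits < t_h else 0.0


def response_penalty(avg_time, t_r):
    """p_r = (r - T_r)^2 / (45 - T_r)^2, applied only when r > T_r."""
    return (avg_time - t_r) ** 2 / (45 - t_r) ** 2 if avg_time > t_r else 0.0


def update_weights(weights, query_terms, nothing_retrieved, some_result_read):
    """Adjust w_i for each term of a k-term query (unseen terms start at zero)."""
    k = len(query_terms)
    for t in query_terms:
        if nothing_retrieved:
            weights[t] = weights.get(t, 0.0) - 1 / k
        elif some_result_read:
            weights[t] = weights.get(t, 0.0) + 1 / k
    return weights


w = update_weights({}, ["internet", "security"], nothing_retrieved=True, some_result_read=False)
```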

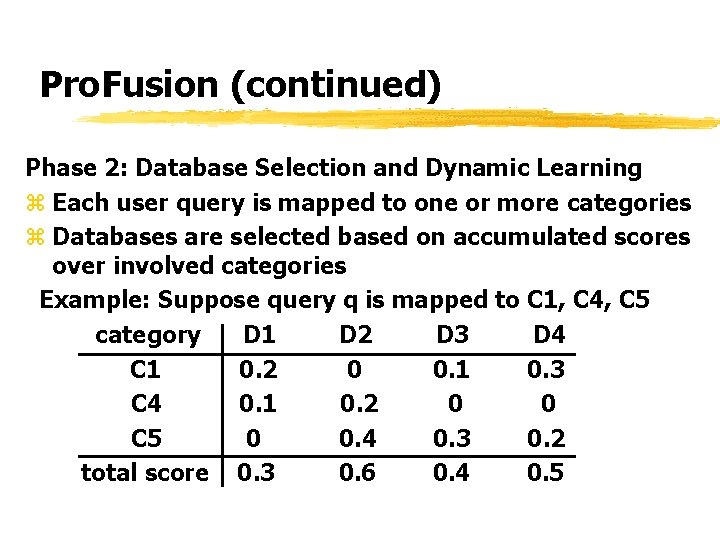

Combined Learning
Example: ProFusion [FaGa 99]
Phase 1: static learning
- 13 categories/concepts are utilized
- training queries in each category are selected
- the relevance assessment for each query is used to compute the average score of each local database with respect to each category:

| category | D1 | D2 | ... | Dn |
|---|---|---|---|---|
| C1 | 0.3 | 0.1 | ... | 0.2 |
| ... | ... | ... | ... | ... |
| C13 | 0 | 0.4 | ... | 0.1 |

ProFusion (continued)
Phase 2: database selection and dynamic learning
- each user query is mapped to one or more categories
- databases are selected based on the accumulated scores over the involved categories

Example: suppose query q is mapped to C1, C4 and C5:

| category | D1 | D2 | D3 | D4 |
|---|---|---|---|---|
| C1 | 0.2 | 0 | 0.1 | 0.3 |
| C4 | 0.1 | 0.2 | 0 | 0 |
| C5 | 0 | 0.4 | 0.3 | 0.2 |
| total score | 0.3 | 0.6 | 0.4 | 0.5 |

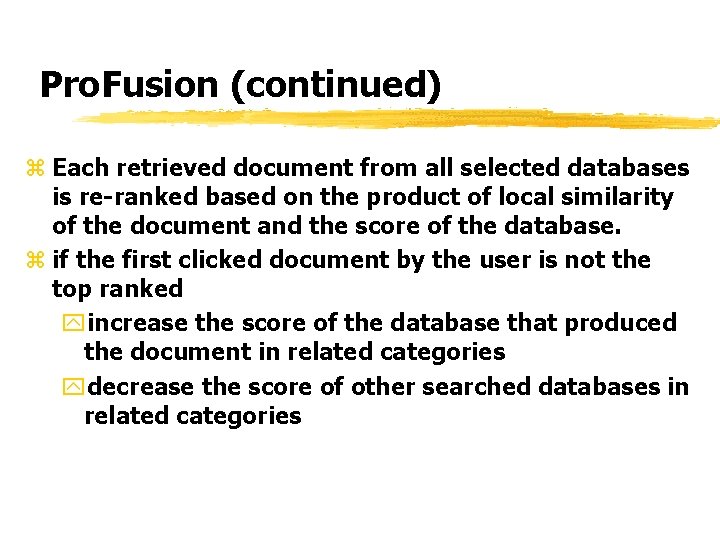

ProFusion (continued)
- each retrieved document from all selected databases is re-ranked based on the product of the document's local similarity and the score of its database
- if the first document clicked by the user is not the top-ranked one:
  - increase the score of the database that produced the document in the related categories
  - decrease the scores of the other searched databases in the related categories
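A sketch of ProFusion's selection and re-ranking steps: accumulate category scores for the query's categories (the table above), then order retrieved documents by local similarity times database score. The two retrieved documents are hypothetical, and the function names are ours:

```python
def category_totals(score_table, categories):
    """Accumulate each database's score over the categories the query maps to."""
    totals = {}
    for c in categories:
        for db, s in score_table[c].items():
            totals[db] = totals.get(db, 0.0) + s
    return totals


def rerank(results, totals):
    """results: (doc_id, database, local_similarity); sort by similarity * db score."""
    return sorted(results, key=lambda r: r[2] * totals[r[1]], reverse=True)


table = {
    "C1": {"D1": 0.2, "D2": 0.0, "D3": 0.1, "D4": 0.3},
    "C4": {"D1": 0.1, "D2": 0.2, "D3": 0.0, "D4": 0.0},
    "C5": {"D1": 0.0, "D2": 0.4, "D3": 0.3, "D4": 0.2},
}
totals = category_totals(table, ["C1", "C4", "C5"])
ranked_dbs = sorted(totals, key=totals.get, reverse=True)  # D2, D4, D3, D1
merged = rerank([("a", "D1", 0.9), ("b", "D2", 0.6)], totals)
```

Document "b" overtakes "a" because 0.6 * 0.6 = 0.36 exceeds 0.9 * 0.3 = 0.27.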

Other Database Selection Techniques
- incorporating ranks [YMLW 99a]
- query expansion [XuCa 98]
- use of lightweight queries [HaTh 99]
  - shorter
  - not evaluated like regular queries
- use of representative hierarchies [YMLW 99b]

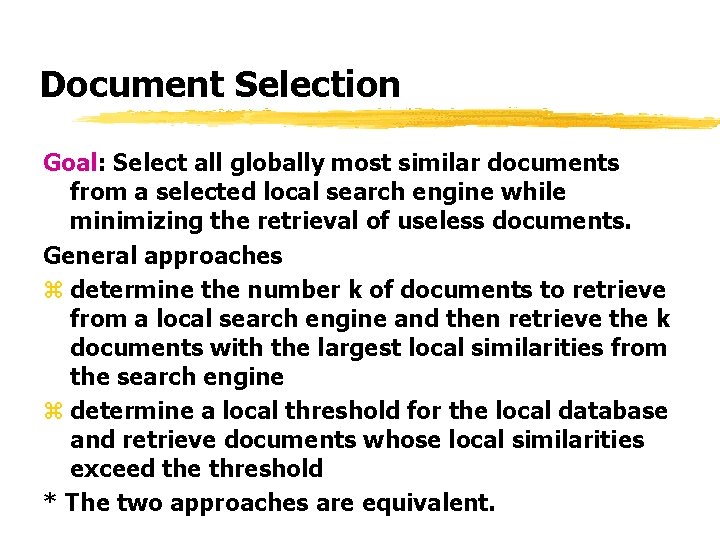

Document Selection
Goal: select all globally most similar documents from a selected local search engine while minimizing the retrieval of useless documents.

General approaches:
- determine the number k of documents to retrieve from a local search engine, then retrieve the k documents with the largest local similarities from that search engine
- determine a local threshold for the local database and retrieve the documents whose local similarities exceed the threshold

The two approaches are equivalent.

Solution Classification
- Local determination
  - all locally retrieved documents are returned
  - examples: NCSTRL, Search Broker [MaBi 97]
- User determination
  - the global user determines how many documents should be retrieved from each local database
  - neither effective nor practical when the number of databases is large
  - examples: MetaCrawler [SeEt 97], SavvySearch [DrHo 97]

Solution Classification (continued)
- Weighted allocation
  - retrieve proportionally more documents from local databases that are ranked higher
- Learning-based approaches
  - use past retrieval experience for selection
- Guaranteed retrieval
  - aims at guaranteeing the retrieval of the globally most similar documents

Weighted Allocation
Suppose m documents are to be retrieved from N local databases.

Example 1: CORI Net [CaLC 95]: retrieve $m \cdot \dfrac{2(1 + N - i)}{N(N + 1)}$ documents from the $i$th ranked local database.

Example 2: D-WISE [YuLe 97]: let $r_i$ be the ranking score of local database $D_i$; retrieve $m \cdot r_i / \sum_{j=1}^{N} r_j$ documents from $D_i$.

When retrieving k documents from a local database $D_i$, the k documents with the largest local similarities are retrieved.
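Both allocation rules are proportional splits of m; a sketch that returns fractional counts and leaves rounding to the caller (an assumption, since the slides do not say how fractions are handled):

```python
def cori_allocation(m, n):
    """Documents from the i-th ranked of n databases: m * 2(1 + n - i) / (n(n + 1))."""
    return [m * 2 * (1 + n - i) / (n * (n + 1)) for i in range(1, n + 1)]


def dwise_allocation(m, scores):
    """Documents from D_i: m * r_i / sum_j r_j."""
    total = sum(scores)
    return [m * r / total for r in scores]


cori = cori_allocation(10, 4)          # [4.0, 3.0, 2.0, 1.0]
dwise = dwise_allocation(10, [3, 1, 1])  # [6.0, 2.0, 2.0]
```

The CORI weights $2(1+N-i)/(N(N+1))$ sum to 1 over the N databases, so exactly m documents are allocated in total.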

Learning-based Approaches
- determine the number of documents to retrieve from a local database based on past retrieval experiences with that database

Example: MRDD [VoGJ 95]. For query q, three average distribution vectors are obtained:
- D1: <1, 4, 6, 7, 10, 12, 17>
- D2: <1, 5, 7, 9, 15, 20>
- D3: <2, 3, 6, 9, 11, 16>

To retrieve four relevant documents: retrieve 1 document from D1, 1 from D2 and 3 from D3 (5 documents in total).

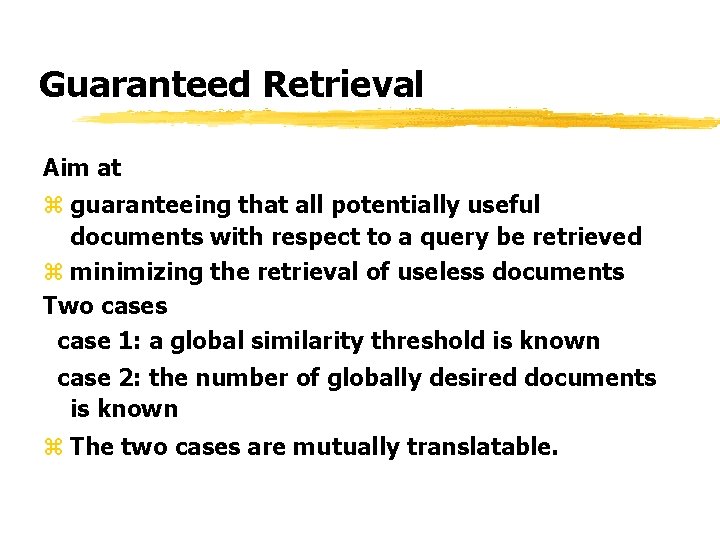

Guaranteed Retrieval
Aims at:
- guaranteeing that all potentially useful documents with respect to a query are retrieved
- minimizing the retrieval of useless documents

Two cases:
- case 1: a global similarity threshold is known
- case 2: the number of globally desired documents is known

The two cases are mutually translatable.

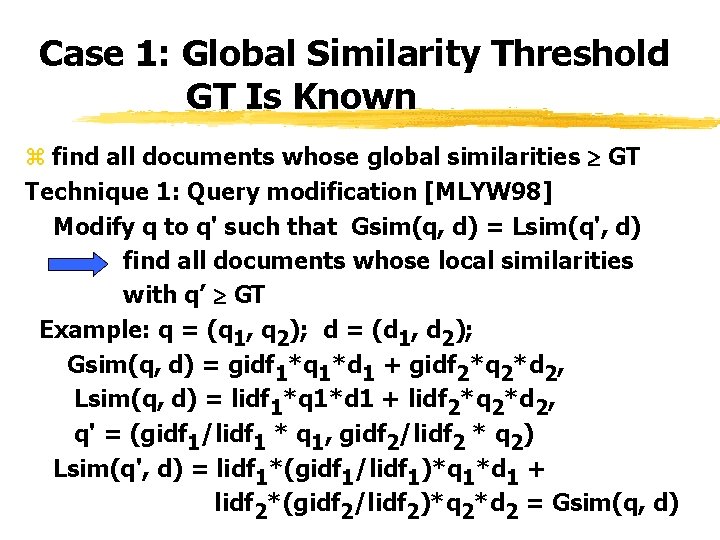

Case 1: Global Similarity Threshold GT Is Known
Find all documents whose global similarities are ≥ GT.

Technique 1: query modification [MLYW 98]
- modify q to q' such that Gsim(q, d) = Lsim(q', d)
- find all documents whose local similarities with q' are ≥ GT

Example: q = (q1, q2); d = (d1, d2);
- Gsim(q, d) = gidf1*q1*d1 + gidf2*q2*d2
- Lsim(q, d) = lidf1*q1*d1 + lidf2*q2*d2
- q' = (gidf1/lidf1 * q1, gidf2/lidf2 * q2)
- Lsim(q', d) = lidf1*(gidf1/lidf1)*q1*d1 + lidf2*(gidf2/lidf2)*q2*d2 = Gsim(q, d)
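A sketch checking the query-modification identity numerically; the idf values, query and document vectors are hypothetical, and every local idf is assumed to be nonzero:

```python
def gsim(q, d, gidf):
    """Global similarity: sum of gidf_i * q_i * d_i."""
    return sum(g * qi * di for g, qi, di in zip(gidf, q, d))


def lsim(q, d, lidf):
    """Local similarity: sum of lidf_i * q_i * d_i."""
    return sum(l * qi * di for l, qi, di in zip(lidf, q, d))


def modify_query(q, gidf, lidf):
    """q'_i = (gidf_i / lidf_i) * q_i, so that Lsim(q', d) equals Gsim(q, d)."""
    return [g / l * qi for g, l, qi in zip(gidf, lidf, q)]


q, d = [1, 2], [0.3, 0.5]
gidf, lidf = [2.0, 0.5], [1.5, 1.0]
q_mod = modify_query(q, gidf, lidf)
```

The metasearch engine can send q' to the local engine and keep its threshold GT unchanged.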

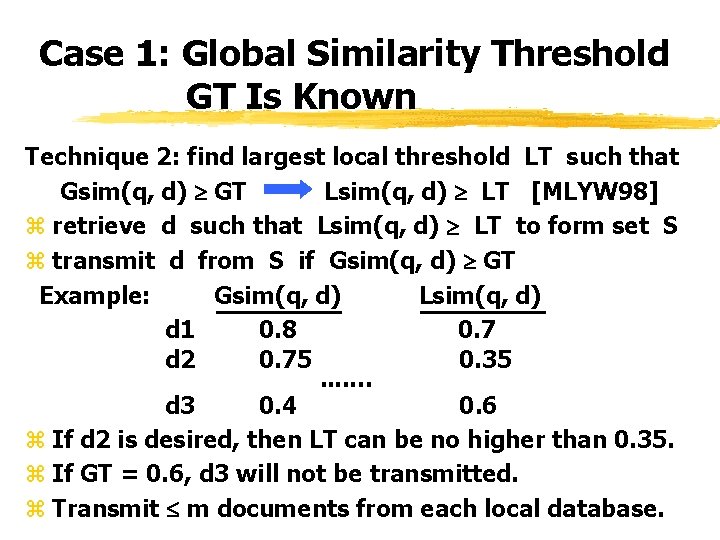

Case 1: Global Similarity Threshold GT Is Known
Technique 2 [MLYW 98]: find the largest local threshold LT such that Gsim(q, d) ≥ GT ⇒ Lsim(q, d) ≥ LT
- retrieve the documents d with Lsim(q, d) ≥ LT to form a set S
- transmit d from S if Gsim(q, d) ≥ GT

Example:

| document | Gsim(q, d) | Lsim(q, d) |
|---|---|---|
| d1 | 0.8 | 0.7 |
| d2 | 0.75 | 0.35 |
| ... | ... | ... |
| d3 | 0.4 | 0.6 |

- if d2 is desired, then LT can be no higher than 0.35
- if GT = 0.6, d3 will not be transmitted
- transmit m documents from each local database
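The retrieve-then-filter steps can be illustrated on the example's three documents. This sketch computes LT only over documents whose two similarities are already known; in practice Gsim is not known in advance, which is why LT is obtained analytically (linear programming or Lagrange multipliers). The function name is hypothetical:

```python
def tightest_local_threshold(pairs, gt):
    """Largest LT such that every (Gsim, Lsim) pair with Gsim >= GT has Lsim >= LT."""
    return min((l for g, l in pairs if g >= gt), default=None)


docs = {"d1": (0.8, 0.7), "d2": (0.75, 0.35), "d3": (0.4, 0.6)}  # (Gsim, Lsim)
gt = 0.6
lt = tightest_local_threshold(docs.values(), gt)          # 0.35
retrieved = [d for d, (g, l) in docs.items() if l >= lt]  # the set S
transmitted = [d for d in retrieved if docs[d][0] >= gt]  # d3 is filtered out
```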

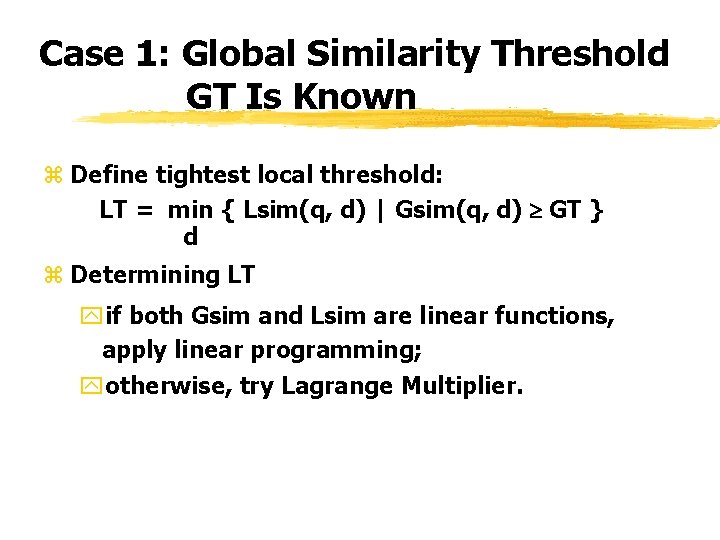

Case 1: Global Similarity Threshold GT Is Known
- define the tightest local threshold:
  $LT = \min_{d} \{ \mathrm{Lsim}(q, d) \mid \mathrm{Gsim}(q, d) \ge GT \}$
- determining LT:
  - if both Gsim and Lsim are linear functions, apply linear programming;
  - otherwise, try Lagrange multipliers.

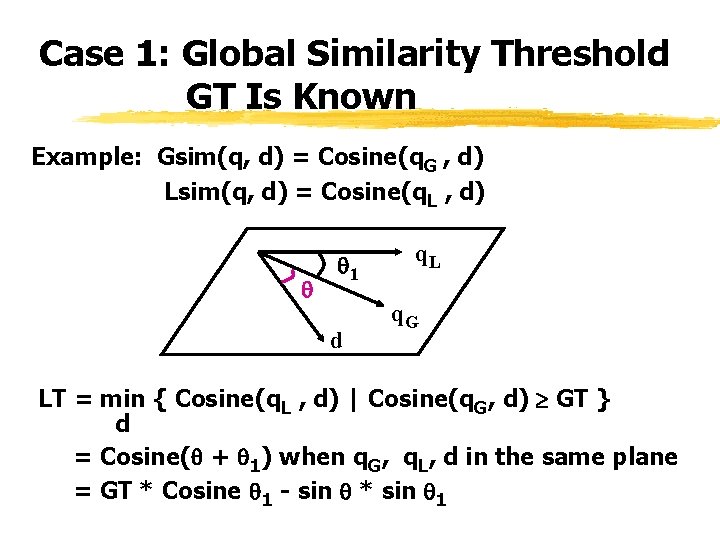

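Case 1: Global Similarity Threshold GT Is Known
Example: Gsim(q, d) = Cosine(qG, d) and Lsim(q, d) = Cosine(qL, d). Let θ be the angle between qL and qG, and let θ1 = arccos(GT) be the largest allowed angle between d and qG. When qG, qL and d lie in the same plane:

$$LT = \min_{d} \{ \cos(q_L, d) \mid \cos(q_G, d) \ge GT \} = \cos(\theta + \theta_1) = GT \cdot \cos\theta - \sin\theta \cdot \sin\theta_1$$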
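A numerical sanity check of the closed form in the coplanar case: `brute_force` scans unit vectors d in the plane, keeps those with cos(qG, d) ≥ GT, and takes the smallest cos(qL, d). It assumes θ + θ1 ≤ π; both function names are hypothetical:

```python
import math


def cosine_local_threshold(gt, theta):
    """LT = cos(theta + theta1) with theta1 = arccos(GT)."""
    theta1 = math.acos(gt)
    return gt * math.cos(theta) - math.sin(theta) * math.sin(theta1)


def brute_force(gt, theta, steps=100000):
    """Min of cos(q_L, d) over planar unit vectors d with cos(q_G, d) >= GT.
    Angles are measured from q_G; q_L sits at angle theta."""
    best = 1.0
    for k in range(steps + 1):
        phi = -math.pi + 2 * math.pi * k / steps  # angle of d from q_G
        if math.cos(phi) >= gt:
            best = min(best, math.cos(phi - theta))
    return best
```

The minimum is reached on the boundary of the allowed cone, at the side farthest from qL, which is where the angle θ + θ1 comes from.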

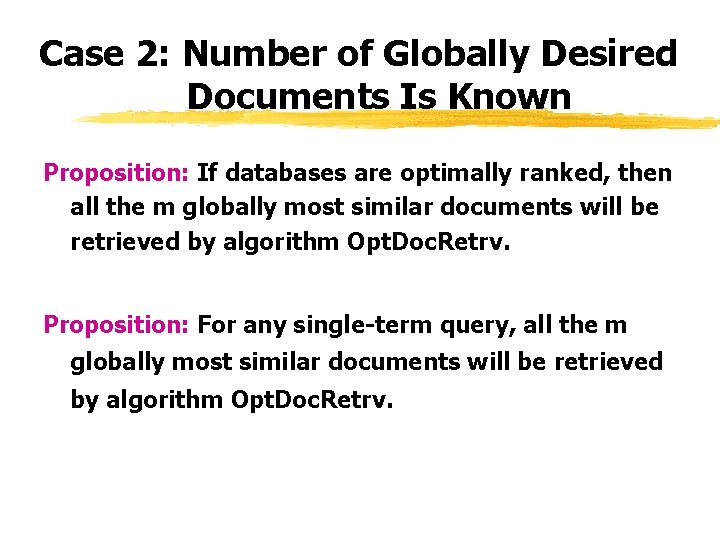

Case 2: Number of Globally Desired Documents Is Known
Solution:
- rank databases optimally for a given query q
- retrieve documents from the databases in the optimal order

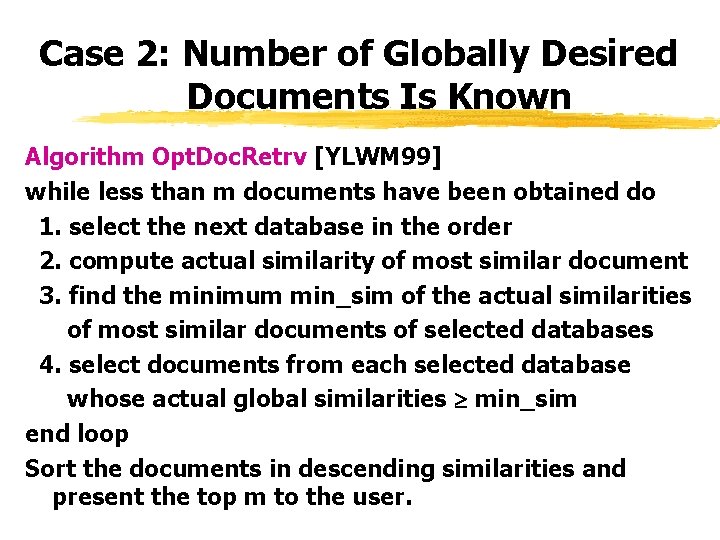

Case 2: Number of Globally Desired Documents Is Known
Algorithm OptDocRetrv [YLWM 99]:
- while fewer than m documents have been obtained, do:
  1. select the next database in the order
  2. compute the actual similarity of its most similar document
  3. find the minimum min_sim of the actual similarities of the most similar documents of the selected databases
  4. select the documents from each selected database whose actual global similarities are ≥ min_sim
- sort the documents in descending order of similarity and present the top m to the user

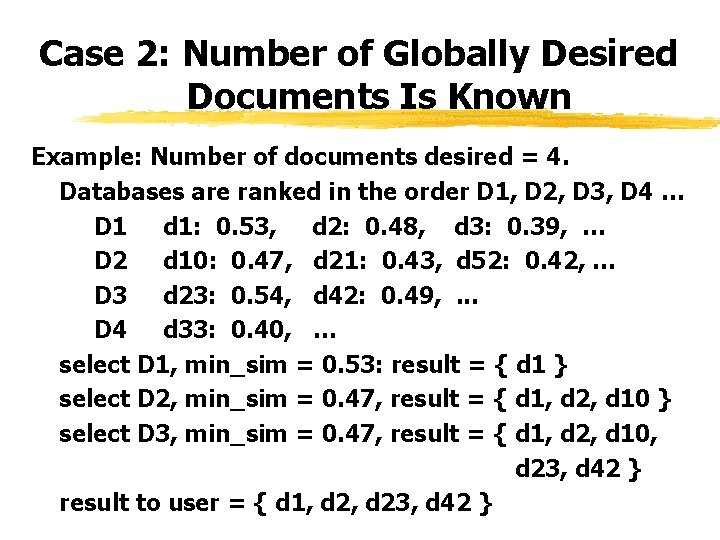

Case 2: Number of Globally Desired Documents Is Known
Example: number of documents desired = 4. Databases are ranked in the order D1, D2, D3, D4, …
- D1: d1: 0.53, d2: 0.48, d3: 0.39, …
- D2: d10: 0.47, d21: 0.43, d52: 0.42, …
- D3: d23: 0.54, d42: 0.49, …
- D4: d33: 0.40, …

Steps:
- select D1, min_sim = 0.53: result = { d1 }
- select D2, min_sim = 0.47: result = { d1, d2, d10 }
- select D3, min_sim = 0.47: result = { d1, d2, d10, d23, d42 }

Result to user (top 4 by similarity) = { d23, d1, d42, d2 }
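A sketch of OptDocRetrv run on the example, assuming each database can report its documents' actual global similarities in descending order. Only the documents listed on the slide are included, since the rest are elided:

```python
def opt_doc_retrv(ranked_dbs, m):
    """ranked_dbs: databases in the chosen order; each is a list of
    (doc_id, global_similarity) pairs sorted by descending similarity."""
    selected, result = [], []
    for db in ranked_dbs:
        if len(result) >= m:
            break
        if not db:
            continue  # an empty database contributes nothing
        selected.append(db)                       # step 1: next database
        min_sim = min(d[0][1] for d in selected)  # steps 2-3
        result = [doc for d in selected for doc in d if doc[1] >= min_sim]  # step 4
    result.sort(key=lambda doc: doc[1], reverse=True)
    return [doc_id for doc_id, _ in result[:m]]


dbs = [
    [("d1", 0.53), ("d2", 0.48), ("d3", 0.39)],
    [("d10", 0.47), ("d21", 0.43), ("d52", 0.42)],
    [("d23", 0.54), ("d42", 0.49)],
    [("d33", 0.40)],
]
print(opt_doc_retrv(dbs, 4))  # ['d23', 'd1', 'd42', 'd2']
```

The loop stops after D3 because five documents reach min_sim = 0.47, so D4 is never contacted.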

Case 2: Number of Globally Desired Documents Is Known
Proposition: if the databases are optimally ranked, then all of the m globally most similar documents will be retrieved by algorithm OptDocRetrv.

Proposition: for any single-term query, all of the m globally most similar documents will be retrieved by algorithm OptDocRetrv.

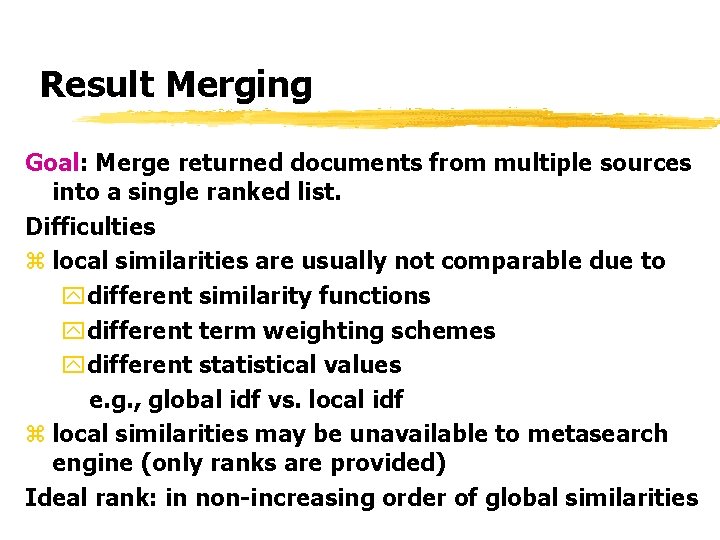

Result Merging
Goal: merge the documents returned from multiple sources into a single ranked list.
Difficulties:
- local similarities are usually not comparable, due to
  - different similarity functions
  - different term weighting schemes
  - different statistical values, e.g., global idf vs. local idf
- local similarities may be unavailable to the metasearch engine (only ranks are provided)

Ideal ranking: documents in non-increasing order of global similarity.

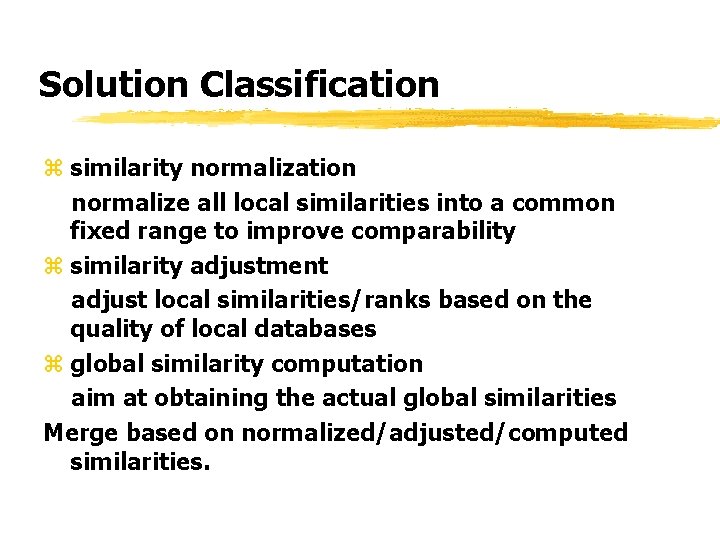

Solution Classification
- similarity normalization: normalize all local similarities into a common fixed range to improve comparability
- similarity adjustment: adjust local similarities or ranks based on the quality of the local databases
- global similarity computation: aim at obtaining the actual global similarities

Documents are then merged based on the normalized, adjusted or computed similarities.

Similarity Normalization Example 1: MetaCrawler [SeEt 97]
- map all local similarities into [0, 1000]
- map the largest local similarity from each source to 1000
- map the other local similarities proportionally
- add the normalized local similarities of a document retrieved from multiple sources

| Source | Document | Local similarity | Normalized |
|---|---|---|---|
| D1 | d1 | 100 | 250 |
| D1 | d2 | 200 | 500 |
| D1 | d3 | 400 | 1000 |
| D2 | d1 | 0.3 | 600 |
| D2 | d4 | 0.2 | 400 |
| D2 | d5 | 0.5 | 1000 |

Final similarity: d1 = 250 + 600 = 850, d2 = 500, d3 = 1000, d4 = 400, d5 = 1000.
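A minimal Python sketch of this normalize-and-add scheme follows. The input layout (a mapping from source name to (document id, local similarity) pairs) and the function name `metacrawler_merge` are assumptions made for illustration, not MetaCrawler's actual interface.

```python
from collections import defaultdict
from typing import Dict, List, Tuple


def metacrawler_merge(
    results: Dict[str, List[Tuple[str, float]]]
) -> List[Tuple[str, float]]:
    """Scale each source's local similarities so its best one becomes 1000,
    then add the scaled scores of documents returned by several sources."""
    final: Dict[str, float] = defaultdict(float)
    for docs in results.values():
        if not docs:
            continue
        top = max(sim for _, sim in docs)
        if top <= 0:  # nothing to scale against
            continue
        for doc, sim in docs:
            final[doc] += 1000 * sim / top  # proportional mapping into [0, 1000]
    return sorted(final.items(), key=lambda pair: pair[1], reverse=True)


merged = dict(metacrawler_merge({
    "D1": [("d1", 100), ("d2", 200), ("d3", 400)],
    "D2": [("d1", 0.3), ("d4", 0.2), ("d5", 0.5)],
}))
```

On the two sources in the table above, `merged` reproduces the final similarities (up to floating-point rounding): d1 850, d2 500, d3 1000, d4 400, d5 1000.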

Similarity Normalization Example 2: SavvySearch [DrHo 97]
- same as MetaCrawler, except that the range [0, 1] is used
- documents with no local similarities are assigned 0.5

Retrieval Based on Multiple Evidence
- a normalized similarity between 0 and 1 can be considered as a confidence that a document is useful
- let $s_i$ be the confidence of source i that document d is useful to query q
- estimate the overall confidence that d is useful from the k sources that returned it: $S(d, q) = 1 - (1 - s_1) \cdot \ldots \cdot (1 - s_k)$

Example: $s_1 = 0.7$, $s_2 = 0.8$ gives $S(d, q) = 1 - 0.3 \cdot 0.2 = 0.94$.
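Both ideas fit in a few lines of Python. The sketch below assumes each source reports a list of (document id, local similarity) pairs, with `None` standing for a document returned without a similarity; the function names are hypothetical.

```python
from typing import Dict, List, Optional, Tuple


def savvysearch_normalize(
    results: List[Tuple[str, Optional[float]]]
) -> Dict[str, float]:
    """Map one source's local similarities into [0, 1] (best one -> 1.0);
    documents reported without a similarity get 0.5."""
    scored = [sim for _, sim in results if sim is not None]
    top = max(scored) if scored else 0.0
    normalized: Dict[str, float] = {}
    for doc, sim in results:
        if sim is None:
            normalized[doc] = 0.5
        else:
            normalized[doc] = sim / top if top > 0 else 0.0
    return normalized


def combined_confidence(confidences: List[float]) -> float:
    """S(d, q) = 1 - (1 - s1) * ... * (1 - sk)."""
    miss = 1.0  # product of the (1 - s_i) terms
    for s in confidences:
        miss *= 1.0 - s
    return 1.0 - miss
```

`combined_confidence([0.7, 0.8])` reproduces the slide's 0.94 up to floating-point rounding. Note that every additional source that returns d can only raise S(d, q).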

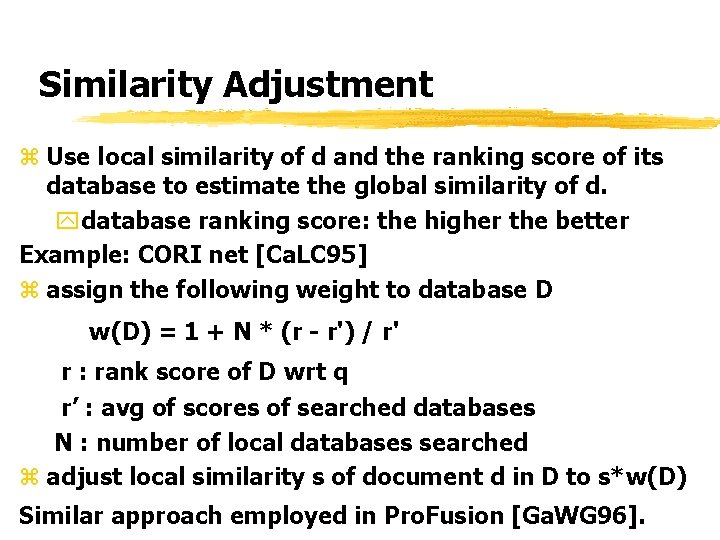

Similarity Adjustment
- Use the local similarity of d and the ranking score of its database to estimate the global similarity of d.
  - database ranking score: the higher, the better

Example: CORI Net [CaLC 95]
- assign the following weight to database D: $w(D) = 1 + N \cdot (r - r') / r'$, where
  - r: ranking score of D with respect to q
  - r': average ranking score of the searched databases
  - N: number of local databases searched
- adjust the local similarity s of document d in D to $s \cdot w(D)$

A similar approach is employed in ProFusion [GaWG 96].
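A minimal sketch of the CORI Net-style adjustment, assuming the ranking scores of all searched databases are available as a dictionary and each database returns (document id, local similarity) pairs. The function names are hypothetical.

```python
from typing import Dict, List, Tuple


def cori_weights(scores: Dict[str, float]) -> Dict[str, float]:
    """w(D) = 1 + N * (r - r') / r' for every searched database D."""
    n = len(scores)
    avg = sum(scores.values()) / n  # r': average ranking score
    return {db: 1 + n * (r - avg) / avg for db, r in scores.items()}


def cori_merge(
    scores: Dict[str, float], results: Dict[str, List[Tuple[str, float]]]
) -> List[Tuple[str, float]]:
    """Multiply each local similarity by its database's weight, then sort."""
    w = cori_weights(scores)
    adjusted = [(doc, s * w[db]) for db, docs in results.items() for doc, s in docs]
    return sorted(adjusted, key=lambda pair: pair[1], reverse=True)
```

With ranking scores 0.6 and 0.4 (so r' = 0.5 and N = 2), the weights are 1.4 and 0.6: a document with local similarity 0.5 from the better database (adjusted 0.7) outranks one with 0.8 from the weaker database (adjusted 0.48).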

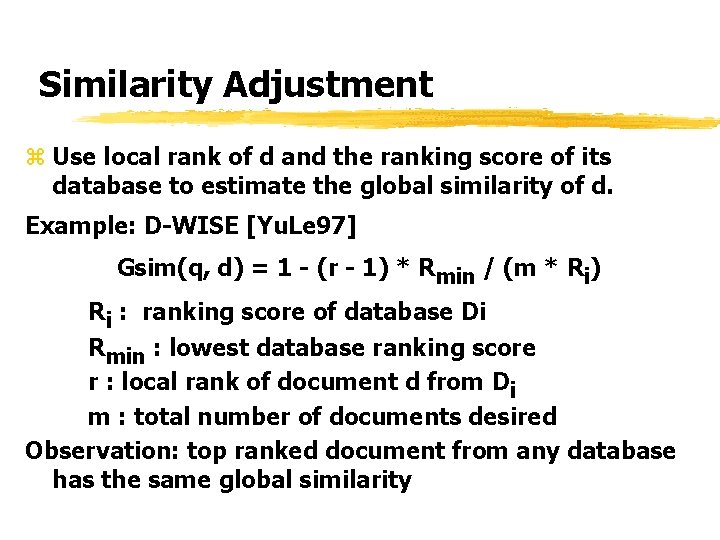

Similarity Adjustment
- Use the local rank of d and the ranking score of its database to estimate the global similarity of d.

Example: D-WISE [YuLe 97]
$$Gsim(q, d) = 1 - \frac{(r - 1) \cdot R_{min}}{m \cdot R_i}$$
- $R_i$: ranking score of database $D_i$
- $R_{min}$: lowest database ranking score
- r: local rank of document d from $D_i$
- m: total number of documents desired

Observation: the top-ranked document (r = 1) from any database has the same global similarity, 1.0.

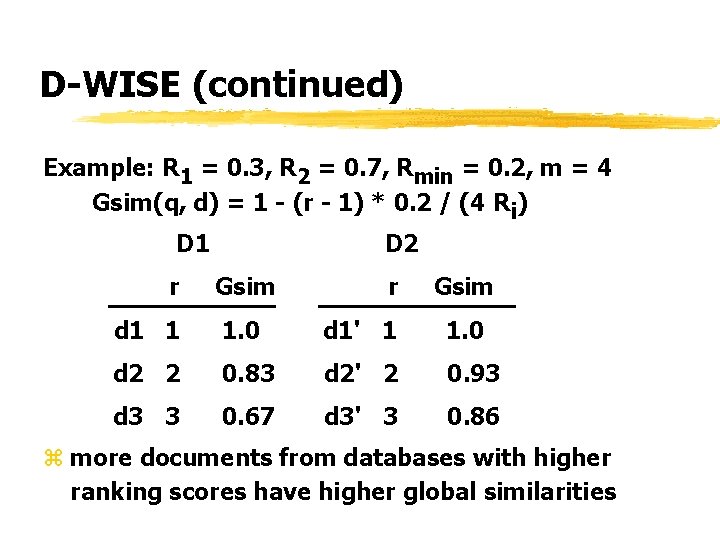

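D-WISE (continued)
Example: $R_1 = 0.3$, $R_2 = 0.7$, $R_{min} = 0.2$, $m = 4$, so $Gsim(q, d) = 1 - (r - 1) \cdot 0.2 / (4 R_i)$.

| D1 document | r | Gsim | D2 document | r | Gsim |
|---|---|---|---|---|---|
| d1 | 1 | 1.0 | d1' | 1 | 1.0 |
| d2 | 2 | 0.83 | d2' | 2 | 0.93 |
| d3 | 3 | 0.67 | d3' | 3 | 0.86 |

- documents from a database with a higher ranking score lose global similarity more slowly as their local rank grows, so more of them receive high global similarities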
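The D-WISE formula is easy to evaluate directly. In the sketch below, $R_{min}$ is passed in explicitly because, as in the example, it is the lowest score among all ranked databases and need not be the smaller of the two databases shown. The input layout and function name are assumptions for illustration.

```python
from typing import Dict, List


def dwise_global_sims(
    ranking_scores: Dict[str, float],
    local_ranks: Dict[str, List[str]],
    r_min: float,
    m: int,
) -> Dict[str, float]:
    """Gsim(q, d) = 1 - (r - 1) * Rmin / (m * Ri), where r is d's local rank.

    local_ranks lists each database's documents in local rank order.
    """
    gsims: Dict[str, float] = {}
    for db, docs in local_ranks.items():
        r_i = ranking_scores[db]
        for r, doc in enumerate(docs, start=1):  # local ranks start at 1
            gsims[doc] = 1 - (r - 1) * r_min / (m * r_i)
    return gsims


example = dwise_global_sims(
    {"D1": 0.3, "D2": 0.7},
    {"D1": ["d1", "d2", "d3"], "D2": ["d1'", "d2'", "d3'"]},
    r_min=0.2,
    m=4,
)
```

Rounded to two decimals, `example` matches the table above.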

Global Similarity Computation Technique 1: Document Fetching (e.g., E2RD2, ParaCrawler)
- fetch the documents to the metasearch engine
- collect the desired statistics (tf, idf, …)
- compute the global similarities
- problem: may not scale well, since every returned document must be downloaded
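A minimal sketch of document fetching, under several assumptions: the network download is omitted (documents arrive as already-fetched text), tokens are produced by whitespace splitting without stopword removal or stemming, and idf is computed over the fetched documents only. Term weights use the tf*idf formula from the introduction, $(0.5 + 0.5 \cdot tf/max\_tf) \cdot \log(N/df)$, and global similarity is the cosine. All function names are hypothetical.

```python
import math
from collections import Counter
from typing import Dict, List, Tuple


def term_weights(tokens: List[str], idf: Dict[str, float]) -> Dict[str, float]:
    """Weight each term as (0.5 + 0.5 * tf / max_tf) * idf."""
    tf = Counter(tokens)
    if not tf:
        return {}
    max_tf = max(tf.values())
    return {t: (0.5 + 0.5 * c / max_tf) * idf.get(t, 0.0) for t, c in tf.items()}


def cosine(a: Dict[str, float], b: Dict[str, float]) -> float:
    dot = sum(w * b.get(t, 0.0) for t, w in a.items())
    na = math.sqrt(sum(w * w for w in a.values()))
    nb = math.sqrt(sum(w * w for w in b.values()))
    return dot / (na * nb) if na and nb else 0.0


def merge_by_fetching(
    query: List[str], fetched: Dict[str, str]
) -> List[Tuple[str, float]]:
    """fetched maps a document id to its downloaded text."""
    docs = {d: text.lower().split() for d, text in fetched.items()}
    n = len(docs)
    df = Counter(t for toks in docs.values() for t in set(toks))
    idf = {t: math.log(n / c) for t, c in df.items()}  # idf over fetched docs
    q = term_weights([t.lower() for t in query], idf)
    scored = [(d, cosine(q, term_weights(toks, idf))) for d, toks in docs.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)
```

Because every score is computed by the metasearch engine itself with one formula, the merged ranking no longer depends on how each local engine scores documents; the price is fetching and re-indexing every returned document.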

Global Similarity Computation Technique 2: Knowledge Discovery
- discover the similarity functions and term weighting schemes used in different search engines
- use the discovered knowledge to determine
  - which local similarities are reasonably comparable
  - how to adjust local similarities to make them more comparable
  - how to compute or estimate global similarities

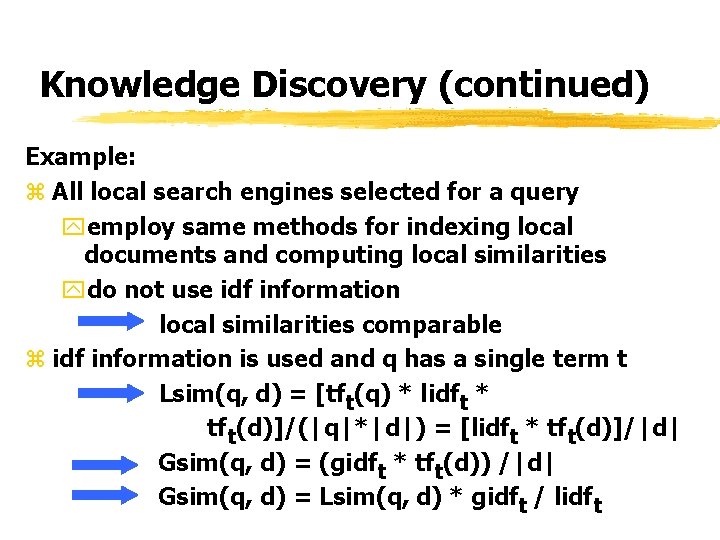

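Knowledge Discovery (continued)
Example:
- If all local search engines selected for a query
  - employ the same methods for indexing local documents and computing local similarities, and
  - do not use idf information,

  then their local similarities are comparable.
- If idf information is used and q has a single term t (with $lidf_t$ and $gidf_t$ the local and global idf of t), then, since $|q| = tf_t(q)$ for a single-term query,
  $$Lsim(q, d) = \frac{tf_t(q) \cdot lidf_t \cdot tf_t(d)}{|q| \cdot |d|} = \frac{lidf_t \cdot tf_t(d)}{|d|}, \qquad Gsim(q, d) = \frac{gidf_t \cdot tf_t(d)}{|d|}$$
  and therefore $Gsim(q, d) = Lsim(q, d) \cdot gidf_t / lidf_t$.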

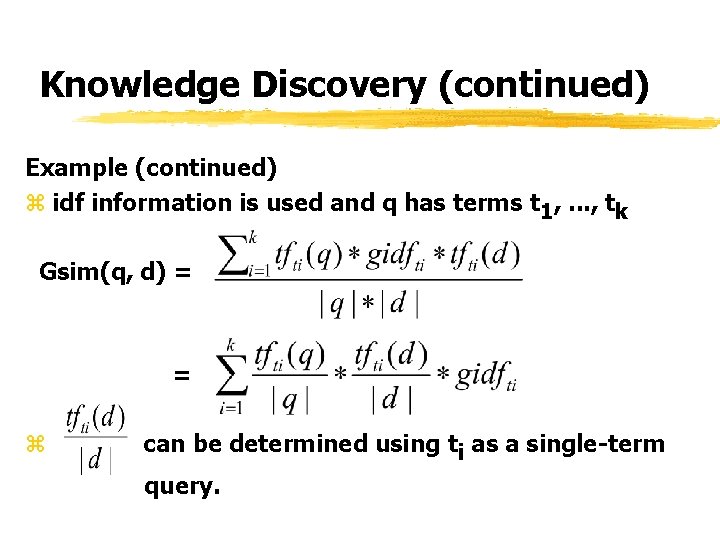

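Knowledge Discovery (continued)
Example (continued):
- If idf information is used and q has terms $t_1, \ldots, t_k$:
  $$Gsim(q, d) = \frac{\sum_{i=1}^{k} tf_{t_i}(q) \cdot gidf_{t_i} \cdot tf_{t_i}(d)}{|q| \cdot |d|} = \sum_{i=1}^{k} \frac{tf_{t_i}(q) \cdot gidf_{t_i}}{|q|} \cdot \frac{tf_{t_i}(d)}{|d|}$$
- $tf_{t_i}(d) / |d|$ can be determined using $t_i$ as a single-term query.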

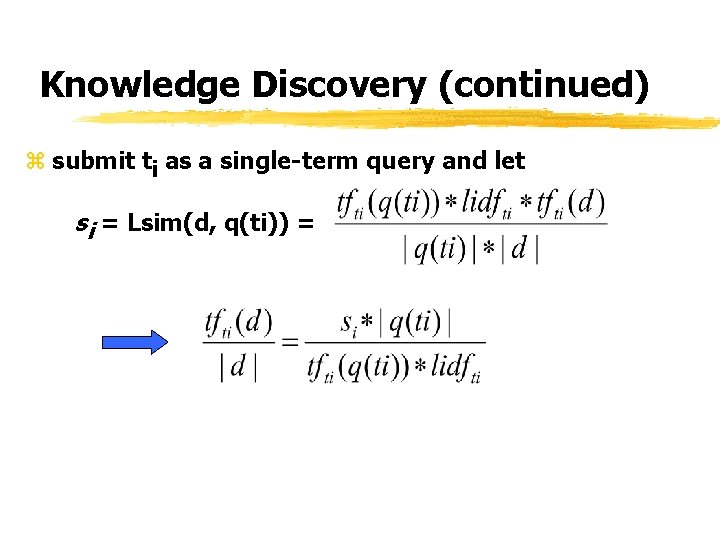

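Knowledge Discovery (continued)
- submit $t_i$ as a single-term query and let $s_i = Lsim(q(t_i), d) = lidf_{t_i} \cdot tf_{t_i}(d) / |d|$; then $tf_{t_i}(d) / |d| = s_i / lidf_{t_i}$, so
  $$Gsim(q, d) = \sum_{i=1}^{k} \frac{tf_{t_i}(q) \cdot gidf_{t_i}}{|q|} \cdot \frac{s_i}{lidf_{t_i}}$$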
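The probing idea can be sketched in Python. Assumptions, all hypothetical: the local and global idf values are known to the metasearch engine, $|q|$ is the norm of the query's raw term frequencies (consistent with the single-term case, where $|q| = tf_t(q)$), and a term probe that does not return d contributes a local similarity of 0.

```python
import math
from typing import Dict


def gsim_from_probes(
    query_tf: Dict[str, float],
    probe_sims: Dict[str, float],
    lidf: Dict[str, float],
    gidf: Dict[str, float],
) -> float:
    """Estimate Gsim(q, d) from single-term probes.

    probe_sims[t] = Lsim(q(t), d) = lidf_t * tf_t(d) / |d|, observed by
    submitting t alone as a query to the local search engine.
    """
    q_norm = math.sqrt(sum(tf * tf for tf in query_tf.values()))  # |q|
    total = 0.0
    for t, tf_q in query_tf.items():
        tf_over_norm = probe_sims.get(t, 0.0) / lidf[t]  # tf_t(d) / |d|
        total += tf_q * gidf[t] * tf_over_norm
    return total / q_norm
```

For a single-term query this reduces to $Lsim(q, d) \cdot gidf_t / lidf_t$, the formula for the single-term case. The cost is one extra query per term of q submitted to each local search engine.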

New Challenges
- Incorporate new search techniques into metasearch:
  - document ranks in Google
  - Kleinberg's hub and authority scores
  - tag information in HTML documents
  - implicit user feedback on previous retrievals
  - pseudo relevance feedback on previous retrievals
  - use of user profiles
- Integrate local systems that support different query types
  - there has been relatively little research on Boolean queries, proximity queries and hierarchical queries

New Challenges (continued)
- Develop techniques to discover knowledge (representatives, ranking algorithms) about local search engines more accurately and more efficiently:
  - some search engines may be unwilling to provide the desired representatives, or may provide inaccurate ones
  - indexing techniques, term weighting schemes and similarity functions are typically proprietary
- Develop standard guidelines on what information each search engine should provide to the metasearch engine (some efforts: STARTS, Dublin Core).

New Challenges (continued)
- Distributed implementation of the metasearch engine
  - what are alternative ways to store local database representatives?
  - how can database selection and document selection be performed at multiple sites in parallel?
- Scale to a million databases
  - storage of database representatives
  - fast algorithms for database selection, document selection and result merging
  - efficient network utilization

New Challenges (continued)
- Standard testbed for evaluation
  - need a large number of local databases
  - documents should have links, for computing ranks and hub and authority scores
  - a large number of typical Internet queries
  - relevance assessments of documents for each query
- Go beyond text databases
  - how to extend to databases containing text, images, video, audio and structured data?
