Vision-based Control of 3D Facial Animation
Jin-xiang Chai, Jing Xiao, Jessica Hodgins
Carnegie Mellon University

Our Goal: Interactive avatar control
• Designing a rich set of realistic facial actions for a virtual character
• Providing intuitive and interactive control over these actions

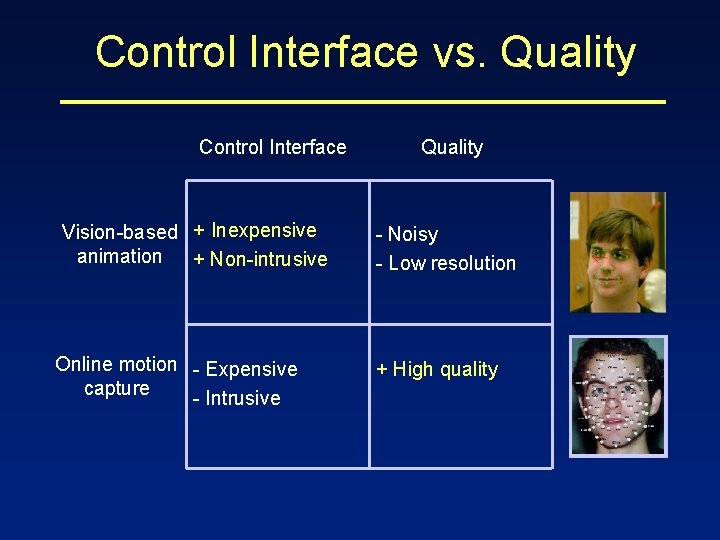

Control Interface vs. Quality

| | Control interface | Quality |
|---|---|---|
| Vision-based animation | + Inexpensive, + Non-intrusive | - Noisy, - Low resolution |
| Online motion capture | - Expensive, - Intrusive | + High quality |

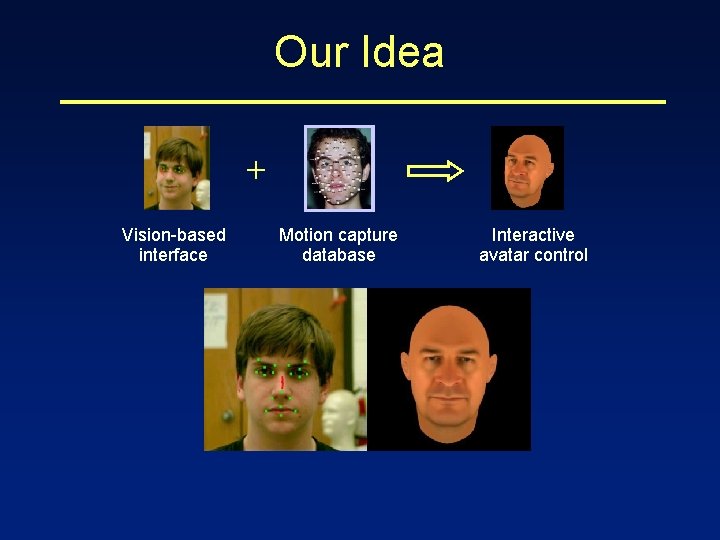

Our Idea: Vision-based interface + Motion capture database → Interactive avatar control

Related Work
Motion capture
• Making Faces [Guenter et al. 98]
• Expression Cloning [Noh and Neumann 01]
Vision-based tracking for direct animation
• Physical markers [Williams 90]
• Edges [Terzopoulos and Waters 93, Lanitis et al. 97]
• Dense optical flow with 3D models [Essa et al. 96, Pighin et al. 99, DeCarlo et al. 00]
• Data-driven feature tracking [Gokturk et al. 01]
Vision-based animation with blendshapes
• Hand-drawn expressions [Buck et al. 00]
• 3D avatar model [FaceStation]

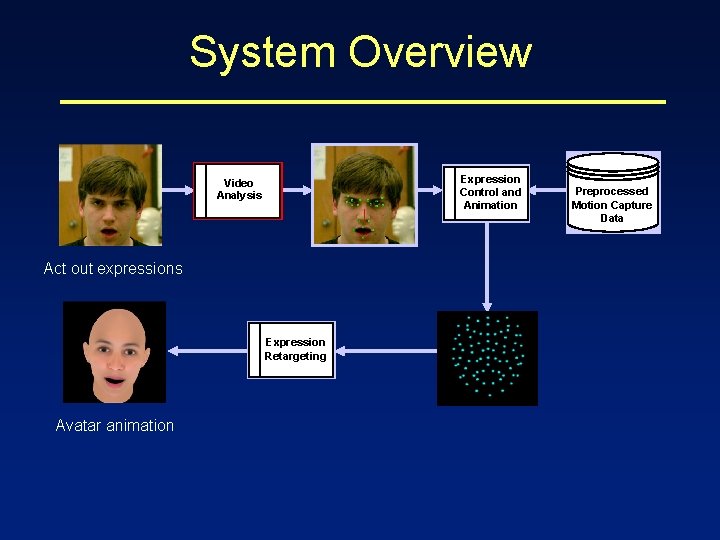

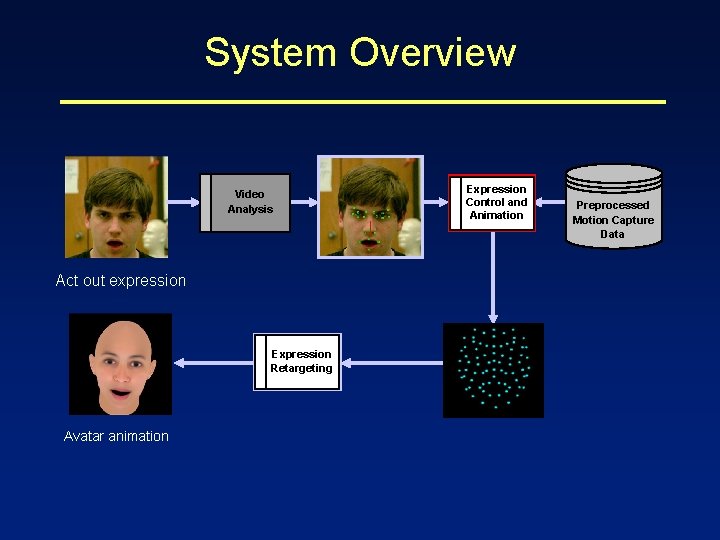

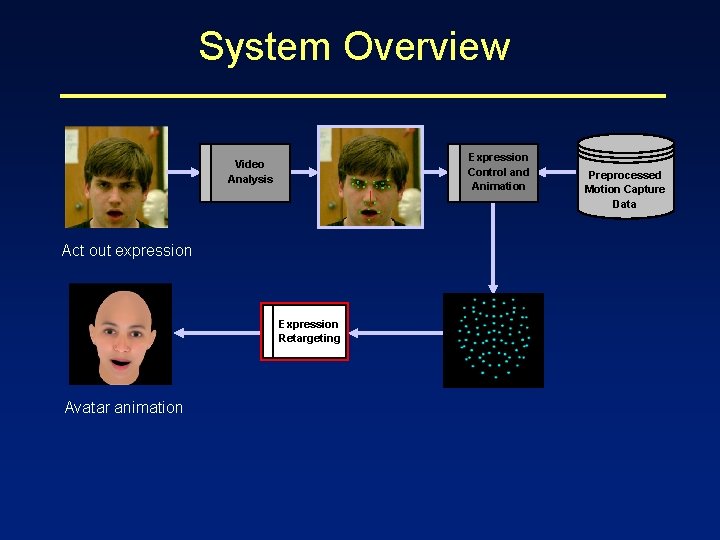

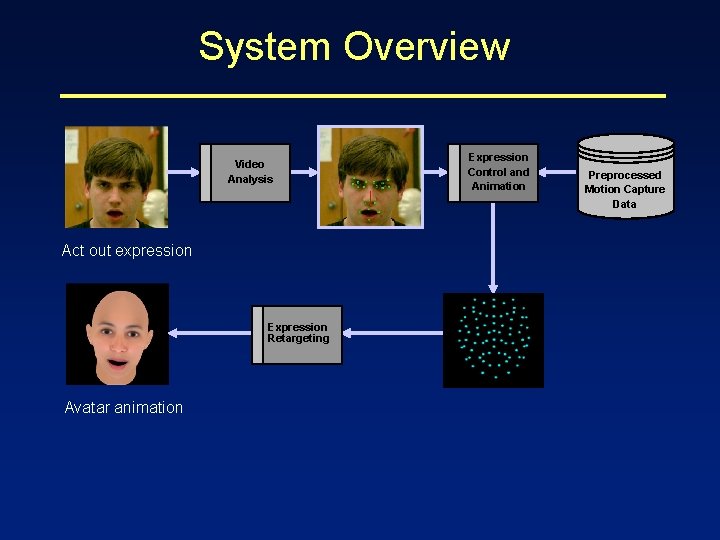

System Overview
Act out expressions → Video Analysis → Expression Control and Animation (driven by Preprocessed Motion Capture Data) → Expression Retargeting → Avatar animation

Video Analysis
Vision-based tracking
• 3D head poses [Xiao et al. 2002]
• 2D facial features

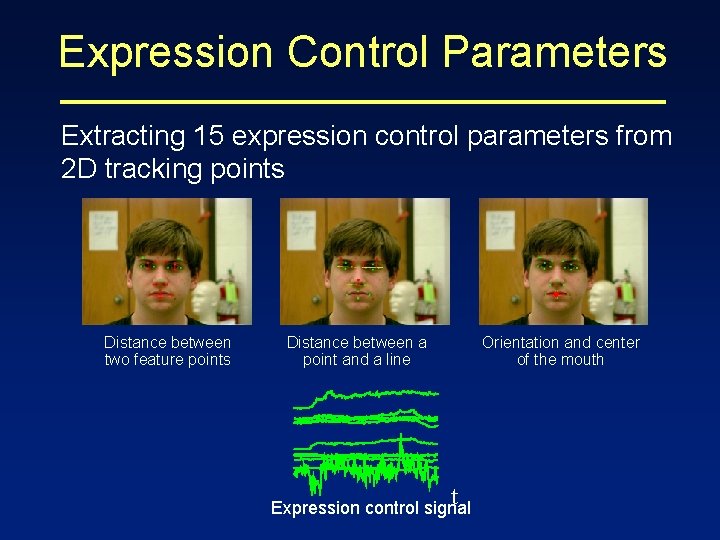

Expression Control Parameters
15 expression control parameters are extracted from the 2D tracking points, using:
• Distance between two feature points
• Distance between a point and a line
• Orientation and center of the mouth
[Figure: expression control signal plotted over time t]
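The three kinds of measurement listed above reduce to basic 2D geometry. The sketch below is a minimal illustration assuming NumPy; the function names are hypothetical, and which point pairs and lines make up the actual 15 parameters is not given in the slides.

```python
import numpy as np

def point_distance(p, q):
    """Euclidean distance between two 2D feature points."""
    return float(np.linalg.norm(np.asarray(p, float) - np.asarray(q, float)))

def point_line_distance(p, a, b):
    """Perpendicular distance from point p to the line through a and b."""
    p, a, b = (np.asarray(v, float) for v in (p, a, b))
    d = b - a
    # 2D cross product |d x (p - a)| divided by |d|
    cross = d[0] * (p[1] - a[1]) - d[1] * (p[0] - a[0])
    return float(abs(cross) / np.linalg.norm(d))

def mouth_orientation_and_center(left_corner, right_corner):
    """Mouth angle (radians) and midpoint from the two mouth corners.

    Assumption: orientation is taken from the line joining the corners.
    """
    l = np.asarray(left_corner, float)
    r = np.asarray(right_corner, float)
    angle = float(np.arctan2(r[1] - l[1], r[0] - l[0]))
    return angle, (l + r) / 2.0

# Example with made-up pixel coordinates
print(point_distance((0, 0), (3, 4)))                # 5.0
print(point_line_distance((0, 2), (-1, 0), (1, 0)))  # 2.0
```

Stacking such measurements per video frame gives the time-varying expression control signal shown in the figure.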

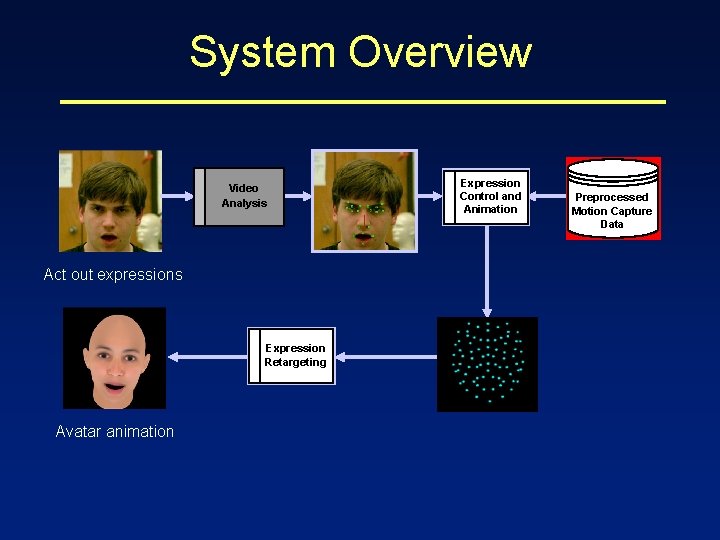

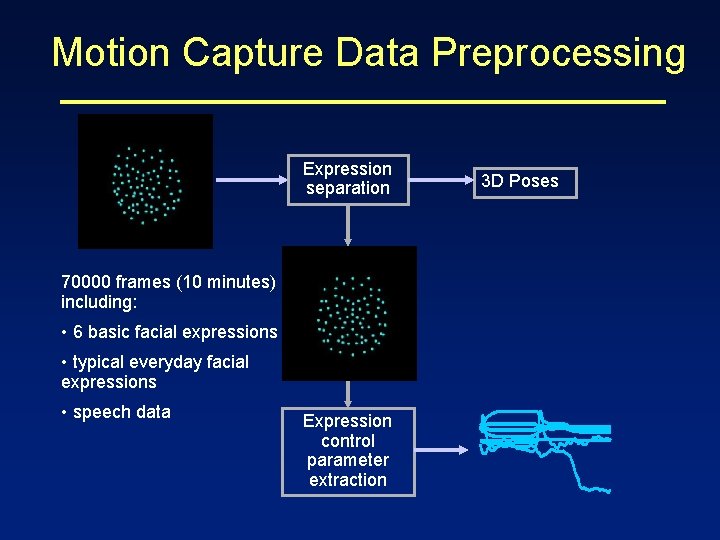

Motion Capture Data Preprocessing
Database: 70,000 frames (10 minutes), including:
• 6 basic facial expressions
• typical everyday facial expressions
• speech data
Preprocessing steps:
• Expression separation (from 3D head poses)
• Expression control parameter extraction
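One common way to separate rigid head pose from expression is to align each frame's markers to a neutral reference with the Kabsch (orthogonal Procrustes) algorithm and keep the residual as the expression. The slides do not state which method the system uses, so this is a swapped-in, hypothetical sketch assuming NumPy and markers stored as an (M, 3) array.

```python
import numpy as np

def remove_head_pose(frame, reference):
    """Rigidly align `frame` (M x 3 markers) to `reference` (M x 3).

    Returns the pose-free markers and the rotation R with R @ f ~ r.
    Assumption: all markers are used; in practice a rigid subset may be preferable.
    """
    mu_f, mu_r = frame.mean(axis=0), reference.mean(axis=0)
    F, G = frame - mu_f, reference - mu_r
    # Kabsch: SVD of the cross-covariance gives the optimal rotation
    U, _, Vt = np.linalg.svd(F.T @ G)
    d = np.sign(np.linalg.det(Vt.T @ U.T))  # guard against reflections
    R = Vt.T @ np.diag([1.0, 1.0, d]) @ U.T
    aligned = F @ R.T + mu_r
    return aligned, R

# The expression deformation is then aligned - reference.
```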

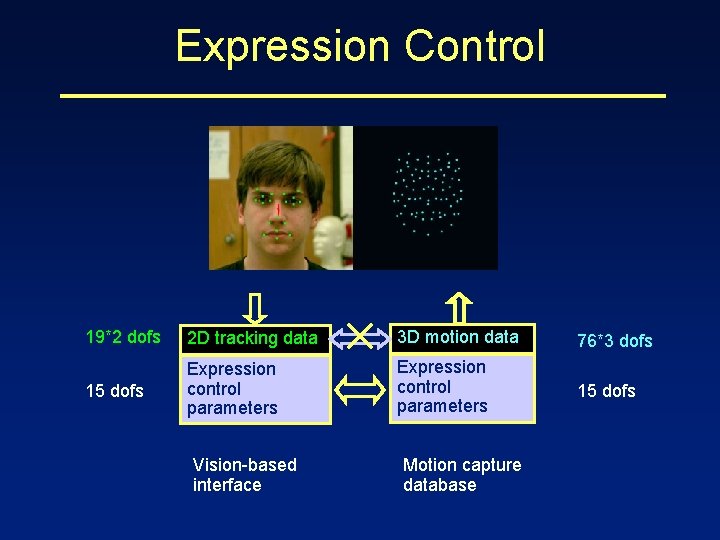

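Expression Control
Both data sources are reduced to the same 15-DOF space of expression control parameters:
• Vision-based interface: 2D tracking data (19 × 2 DOFs) → expression control parameters (15 DOFs)
• Motion capture database: 3D motion data (76 × 3 DOFs) → expression control parameters (15 DOFs)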

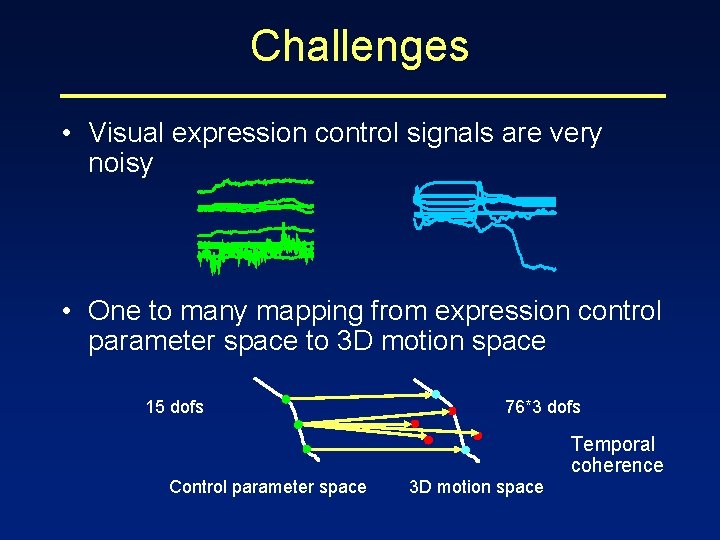

Challenges
• Visual expression control signals are very noisy
• The mapping from the expression control parameter space (15 DOFs) to the 3D motion space (76 × 3 DOFs) is one-to-many, and the synthesized motion must remain temporally coherent

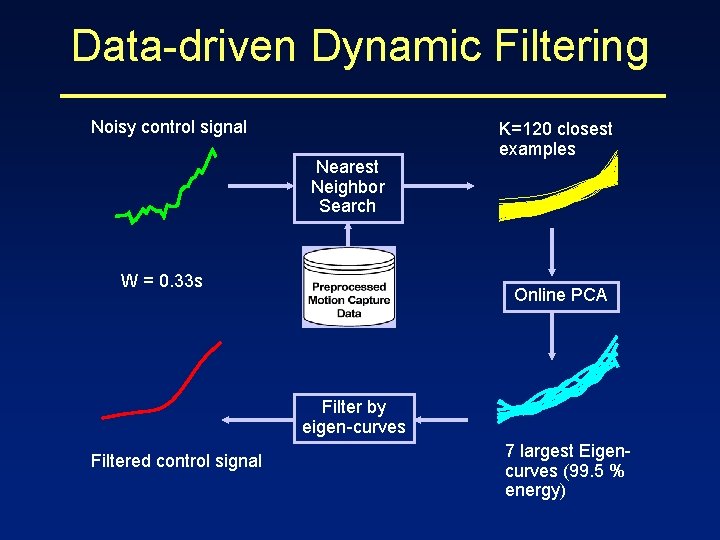

Data-driven Dynamic Filtering
Noisy control signal → Nearest-neighbor search (window W = 0.33 s, K = 120 closest examples) → Online PCA → Filter by eigen-curves (7 largest eigen-curves, 99.5% energy) → Filtered control signal
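The filtering pipeline can be sketched as follows: flatten the current window of the noisy 15-D control signal, find the K closest windows in the database, run PCA on those examples, and project the noisy window onto their mean plus the leading eigen-curves that retain the chosen energy. This is a minimal NumPy sketch; the Euclidean similarity measure, the flattened-window layout, and the function name are assumptions.

```python
import numpy as np

def filter_window(noisy, database, k=120, energy=0.995):
    """Denoise one flattened control-signal window.

    noisy:    (D,) window of W frames x 15 parameters, flattened
    database: (N, D) flattened windows from the motion capture data
    Returns the filtered window and the number of eigen-curves kept.
    """
    # 1. Nearest-neighbor search (assumed Euclidean distance)
    dist = np.linalg.norm(database - noisy, axis=1)
    examples = database[np.argsort(dist)[:k]]

    # 2. Online PCA on the K closest examples
    mean = examples.mean(axis=0)
    _, s, Vt = np.linalg.svd(examples - mean, full_matrices=False)
    cum = np.cumsum(s ** 2) / np.sum(s ** 2)
    n_keep = min(int(np.searchsorted(cum, energy)) + 1, len(s))
    curves = Vt[:n_keep]  # leading eigen-curves, one per row

    # 3. Project the noisy window onto mean + leading eigen-curves
    coeffs = curves @ (noisy - mean)
    return mean + curves.T @ coeffs, n_keep
```

Because the eigen-curves come from real motion near the current input, noise that does not resemble captured facial motion is projected away.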

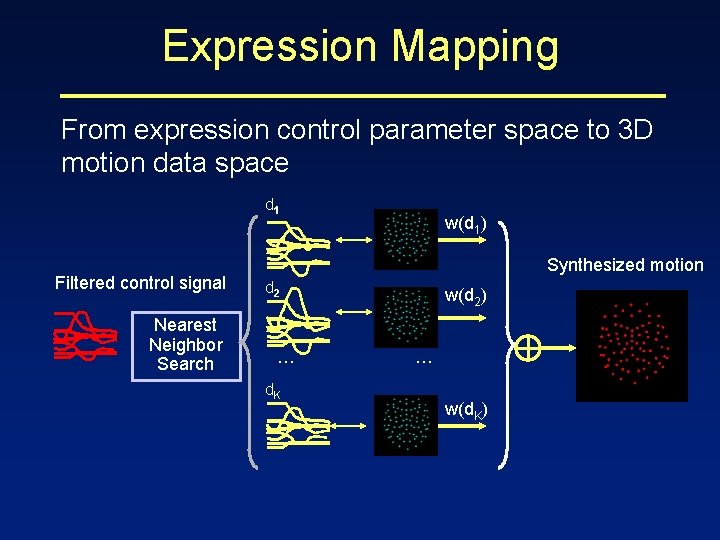

Expression Mapping
From the expression control parameter space to the 3D motion data space:
Filtered control signal → Nearest-neighbor search → K closest examples d_1, d_2, …, d_K with weights w(d_1), w(d_2), …, w(d_K) → Synthesized motion (weighted combination of the examples)
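The mapping can be sketched as weighted K-nearest-neighbor interpolation: find the K database frames whose control parameters are closest to the filtered signal and blend their 3D marker positions with weights w(d). The slides do not give the weight function; the Gaussian kernel, its bandwidth, and the names below are assumptions.

```python
import numpy as np

def map_to_motion(control, db_controls, db_motions, k=8, bandwidth=1.0):
    """Synthesize a 3D pose from a 15-D control vector.

    db_controls: (N, 15) control parameters of each database frame
    db_motions:  (N, 76*3) marker positions of the same frames
    """
    d = np.linalg.norm(db_controls - control, axis=1)
    idx = np.argsort(d)[:k]
    dk = d[idx]
    # Assumed Gaussian weights, shifted by the smallest distance to avoid underflow
    w = np.exp(-(dk ** 2 - dk[0] ** 2) / bandwidth ** 2)
    w /= w.sum()
    return w @ db_motions[idx]
```

Normalizing the weights keeps the result a convex combination of captured poses.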

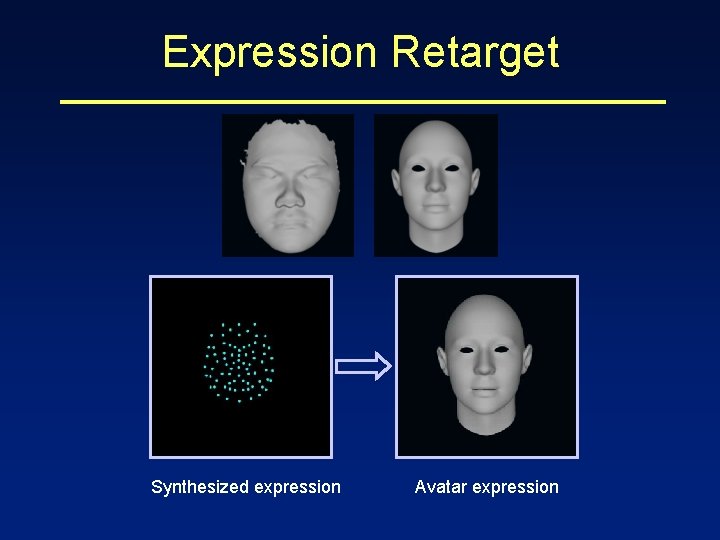

Expression Retargeting
Synthesized expression → Avatar expression

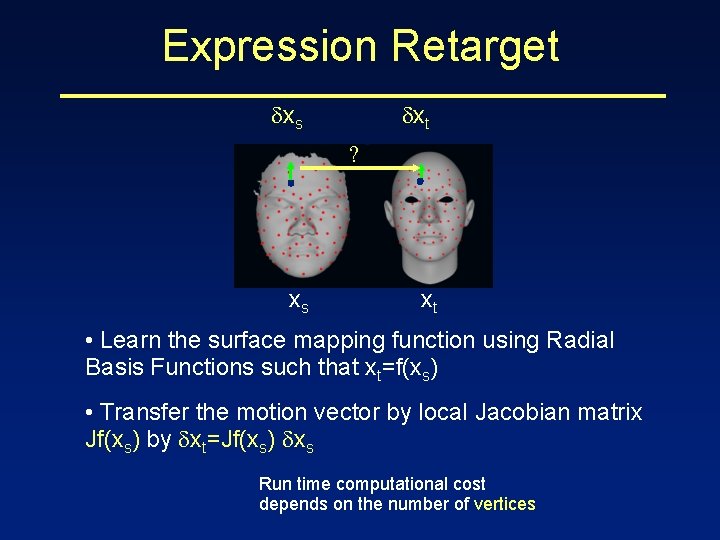

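Expression Retargeting
Given a source surface point $x_s$ and its corresponding target point $x_t$, how should a source motion vector map onto the target?
• Learn the surface mapping function $f$ using radial basis functions such that $x_t = f(x_s)$
• Transfer each motion vector through the local Jacobian matrix $J_f(x_s)$: $\Delta x_t = J_f(x_s)\,\Delta x_s$
The run-time computational cost depends on the number of vertices.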
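The two steps can be sketched in NumPy: fit an interpolating radial basis function f from source-surface points to corresponding avatar points, then push a source motion vector through the analytic Jacobian of f, giving $\Delta x_t = J_f(x_s)\,\Delta x_s$. The Gaussian kernel, its width `sigma`, the missing polynomial term, and all names are assumptions; the slides only say that RBFs are used.

```python
import numpy as np

def fit_rbf(src, dst, sigma):
    """Gaussian RBF weights (n x 3) so that f(src[i]) == dst[i]."""
    d2 = ((src[:, None, :] - src[None, :, :]) ** 2).sum(-1)
    phi = np.exp(-d2 / (2 * sigma ** 2))
    return np.linalg.solve(phi, dst)

def rbf_eval(x, centers, weights, sigma):
    """Evaluate f(x) for a single 3D point x."""
    phi = np.exp(-((x[None, :] - centers) ** 2).sum(-1) / (2 * sigma ** 2))
    return phi @ weights

def rbf_jacobian(x, centers, weights, sigma):
    """Analytic 3x3 Jacobian J_f(x)."""
    diff = x[None, :] - centers                          # (n, 3)
    phi = np.exp(-(diff ** 2).sum(-1) / (2 * sigma ** 2))  # (n,)
    grad_phi = -diff * phi[:, None] / sigma ** 2         # d phi_i / dx
    return weights.T @ grad_phi                          # J[a, b] = sum_i w[i, a] grad[i, b]

def transfer_motion(x_s, dx_s, centers, weights, sigma):
    """Map a source motion vector dx_s at x_s onto the target surface."""
    return rbf_jacobian(x_s, centers, weights, sigma) @ dx_s
```

Doing this per vertex every frame is what makes the run-time cost scale with the vertex count.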

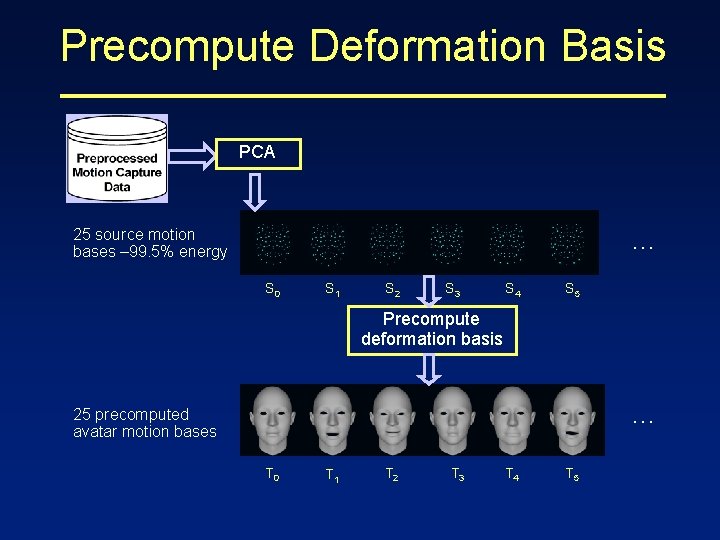

Precompute Deformation Basis
• PCA on the source motion: 25 source motion bases S_0, S_1, S_2, …, capturing 99.5% of the energy
• Precompute the deformation basis: each source basis is retargeted once, giving 25 precomputed avatar motion bases T_0, T_1, T_2, …
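A minimal sketch of the precomputation, assuming NumPy, source motion stored as an (F, 3V) matrix of per-frame vertex displacements, and one 3×3 Jacobian per source vertex from the retargeting step. The 99.5% energy threshold follows the slide; the data layout and function names are hypothetical.

```python
import numpy as np

def source_bases(motion, energy=0.995):
    """PCA of source motion (F frames x 3V) keeping `energy` of the variance."""
    mean = motion.mean(axis=0)
    _, s, Vt = np.linalg.svd(motion - mean, full_matrices=False)
    cum = np.cumsum(s ** 2) / np.sum(s ** 2)
    n = min(int(np.searchsorted(cum, energy)) + 1, len(s))
    return mean, Vt[:n]  # mean (3V,), bases (n, 3V)

def retarget_bases(mean, bases, jacobians):
    """Apply per-vertex Jacobians (V x 3 x 3) to the mean and each basis, offline."""
    def apply(vec):
        per_vertex = vec.reshape(-1, 3)  # (V, 3) displacement per vertex
        return np.einsum('vij,vj->vi', jacobians, per_vertex).reshape(-1)
    return apply(mean), np.stack([apply(b) for b in bases])
```

Since the Jacobian transfer is linear in the motion vector, retargeting each basis once is equivalent to retargeting any linear combination of them.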

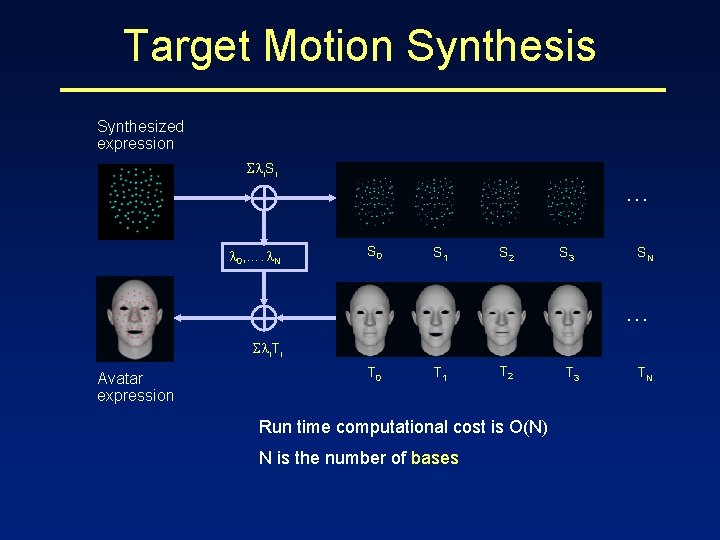

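Target Motion Synthesis
• Synthesized expression: $\sum_{i=0}^{N} \alpha_i S_i$, a combination of the source bases S_0, S_1, …, S_N
• Avatar expression: $\sum_{i=0}^{N} \alpha_i T_i$, the same coefficients applied to the avatar bases T_0, T_1, …, T_N
Run-time computational cost is O(N), where N is the number of bases.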
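At run time, the synthesized source expression is projected onto the source bases to get the coefficients α_i, and the same coefficients blend the precomputed avatar bases, so no per-vertex Jacobian is evaluated. The sketch assumes orthonormal source bases (as PCA produces) and treats the mean as the zeroth basis term; the names are hypothetical.

```python
import numpy as np

def synthesize_avatar(source_frame, src_mean, src_bases, tgt_mean, tgt_bases):
    """Map one source expression to the avatar using precomputed bases.

    src_bases: (N, 3V_src) with orthonormal rows; tgt_bases: (N, 3V_tgt).
    """
    alpha = src_bases @ (source_frame - src_mean)  # N coefficients
    return tgt_mean + alpha @ tgt_bases            # N-term blend of avatar bases
```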

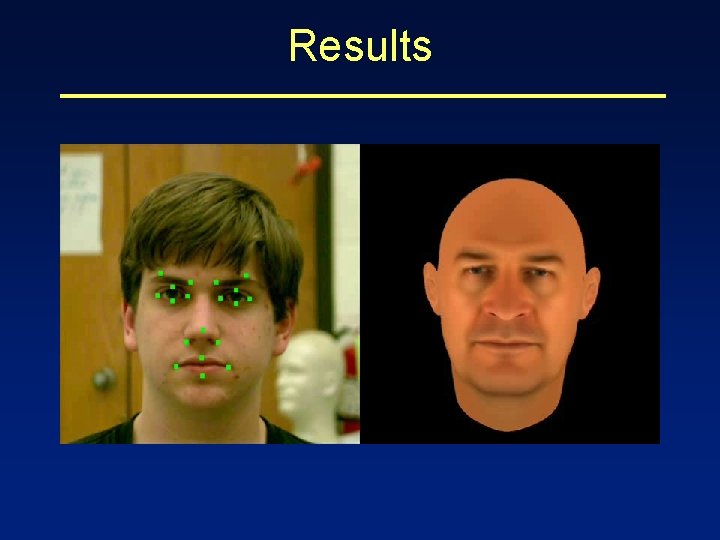

Results

Conclusions
Developed a performance-based facial animation system for interactive expression control:
• Tracking real-time facial movements in video
• Preprocessing the motion capture database
• Transforming low-quality 2D visual control signals into high-quality 3D facial expressions
• Efficient online expression retargeting

Future Work
• Formal user study on the quality of the synthesized motion
• Controlling and animating photorealistic 3D facial expressions
• Size of the database

- Slides: 24