Virtual Memory

Today
– Motivations for VM
– Address translation
– Accelerating translation with TLBs
– Dynamic memory allocation: mechanisms & policies
– Memory bugs

Fabián E. Bustamante, Spring 2007

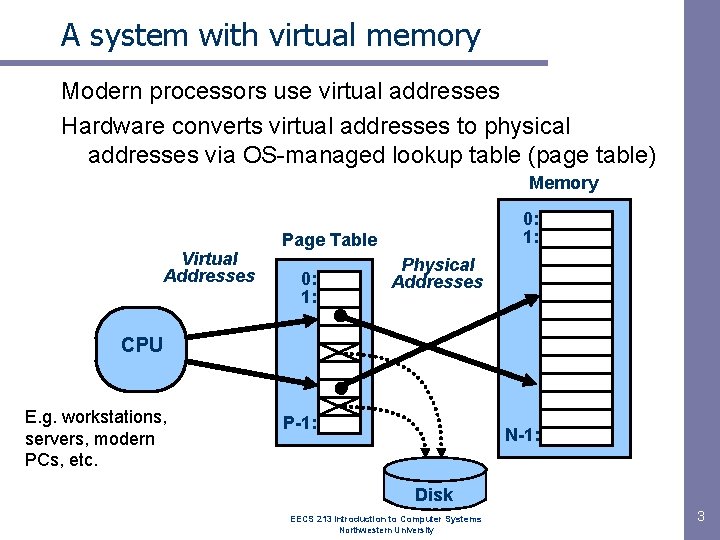

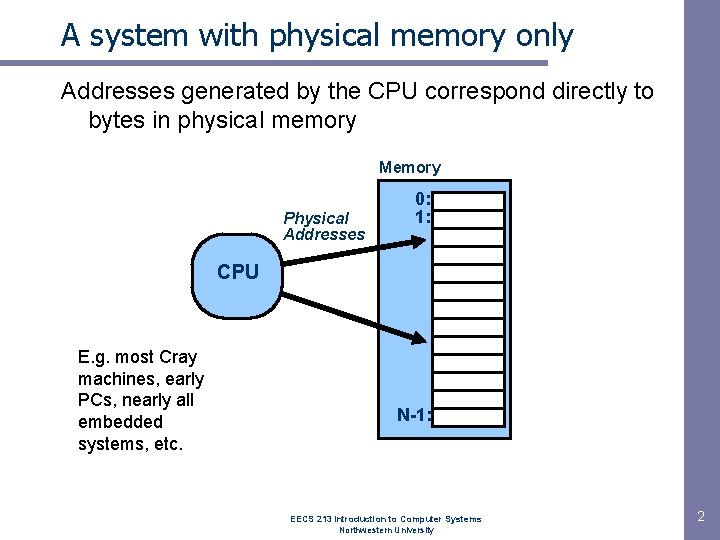

A system with physical memory only
– Addresses generated by the CPU correspond directly to bytes in physical memory
– E.g., most Cray machines, early PCs, nearly all embedded systems, etc.
[Figure: the CPU issues physical addresses 0 to N-1 directly to memory]

EECS 213 Introduction to Computer Systems, Northwestern University

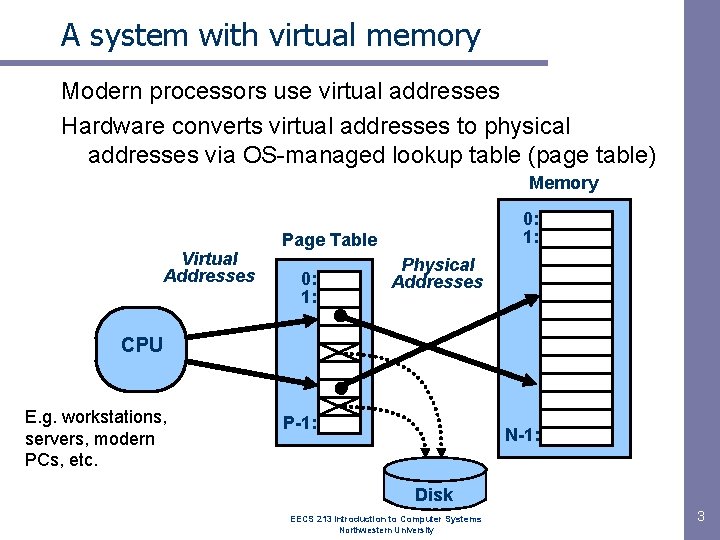

A system with virtual memory
– Modern processors use virtual addresses
– Hardware converts virtual addresses to physical addresses via an OS-managed lookup table (the page table)
– E.g., workstations, servers, modern PCs, etc.
[Figure: the CPU issues virtual addresses 0 to N-1; the page table maps each one to a physical address 0 to P-1 in memory or to a location on disk]

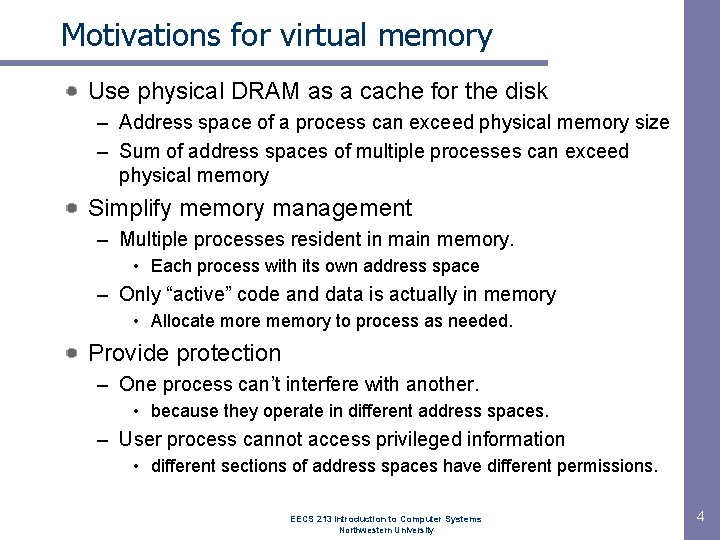

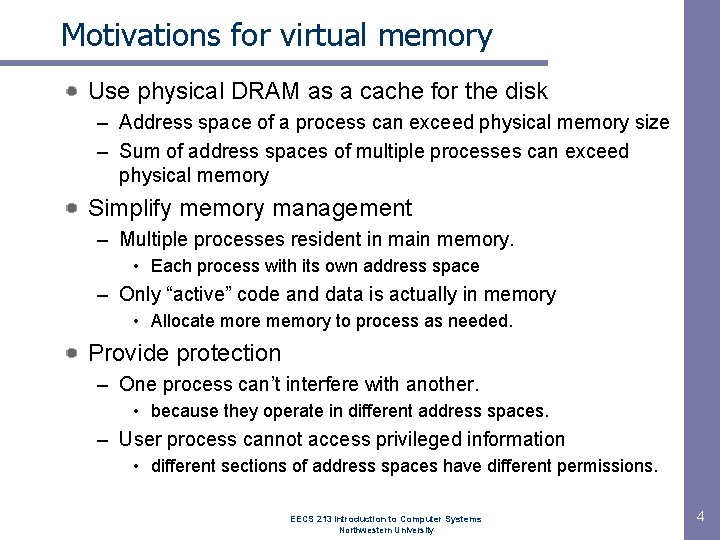

Motivations for virtual memory
Use physical DRAM as a cache for the disk
– Address space of a process can exceed physical memory size
– Sum of address spaces of multiple processes can exceed physical memory
Simplify memory management
– Multiple processes resident in main memory
  • Each process with its own address space
– Only "active" code and data is actually in memory
  • Allocate more memory to a process as needed
Provide protection
– One process can't interfere with another
  • because they operate in different address spaces
– User process cannot access privileged information
  • different sections of address spaces have different permissions

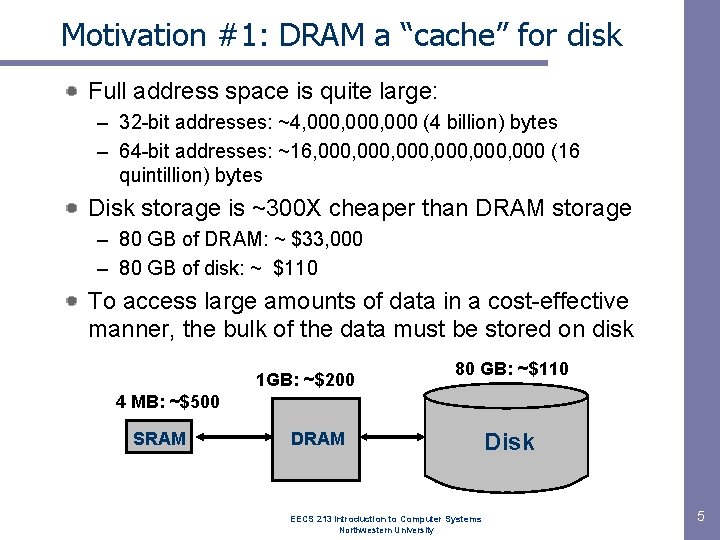

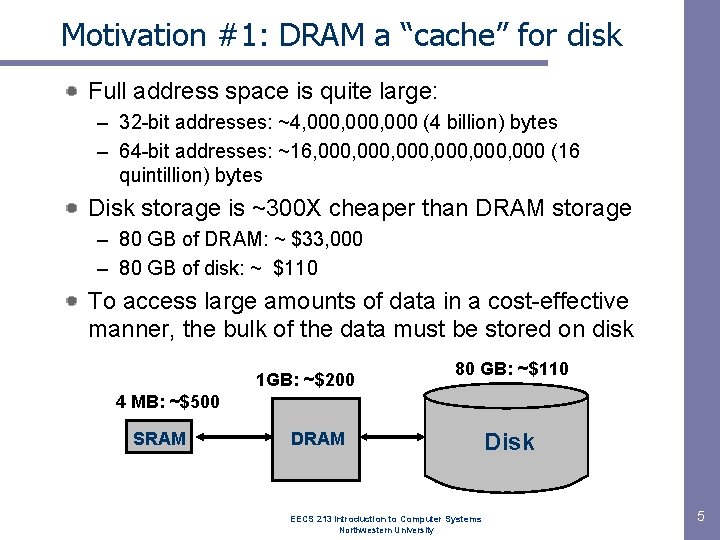

Motivation #1: DRAM as a "cache" for disk
Full address space is quite large:
– 32-bit addresses: 2^32 ≈ 4,000,000,000 (4 billion) bytes
– 64-bit addresses: 2^64 ≈ 18,000,000,000,000,000,000 (16 EiB, about 18 quintillion) bytes
Disk storage is ~300X cheaper than DRAM storage
– 80 GB of DRAM: ~$33,000
– 80 GB of disk: ~$110
To access large amounts of data in a cost-effective manner, the bulk of the data must be stored on disk
[Figure: price points: SRAM, 4 MB ~$500; DRAM, 1 GB ~$200; disk, 80 GB ~$110]

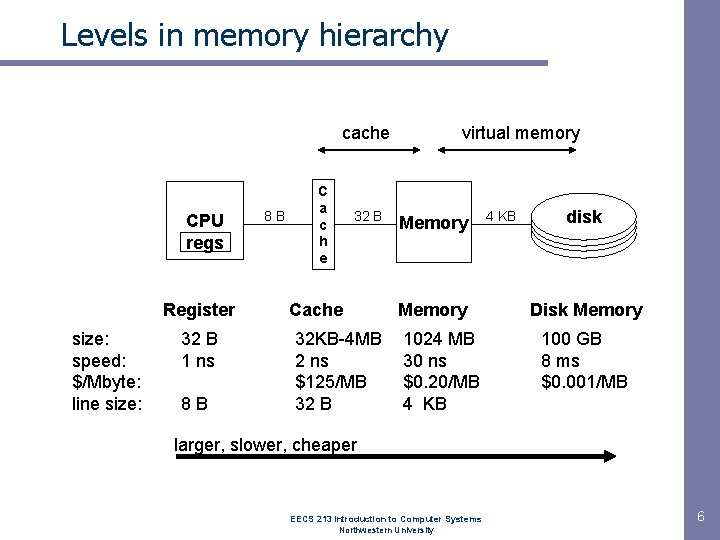

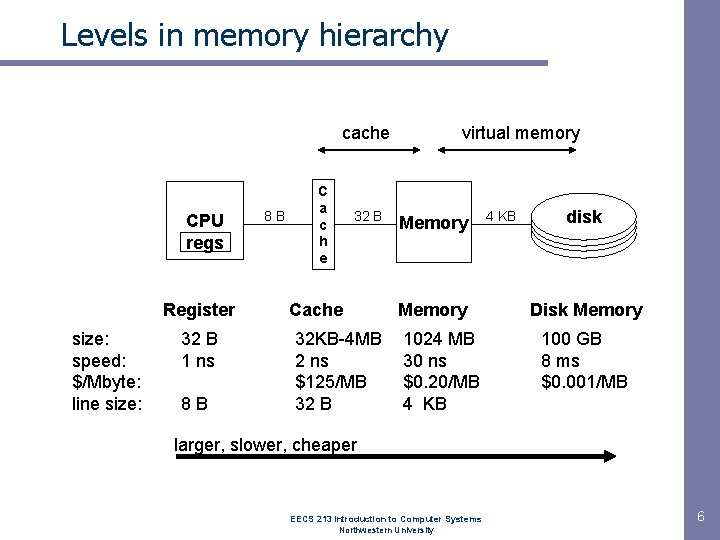

Levels in memory hierarchy

| | Register | Cache | Memory | Disk |
|---|---|---|---|---|
| size | 32 B | 32 KB-4 MB | 1024 MB | 100 GB |
| speed | 1 ns | 2 ns | 30 ns | 8 ms |
| $/Mbyte | | $125/MB | $0.20/MB | $0.001/MB |
| line size | 8 B | 32 B | 4 KB | |

The cache sits between the CPU registers and memory; virtual memory plays the same role between memory and disk. Moving down the hierarchy: larger, slower, cheaper.

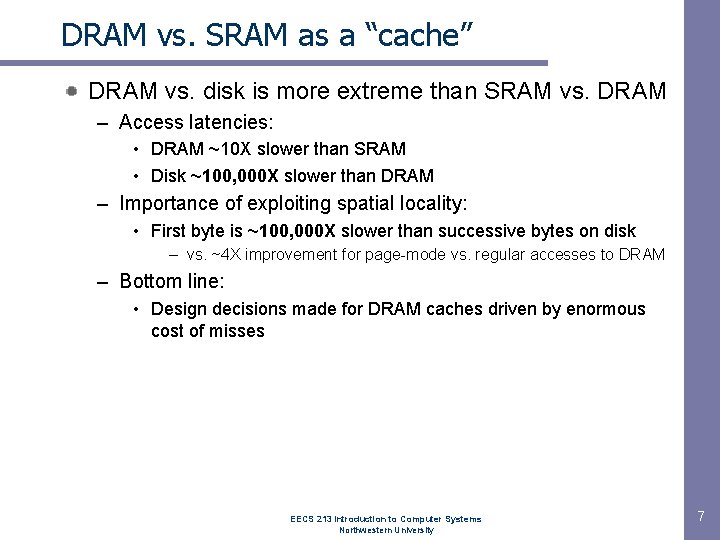

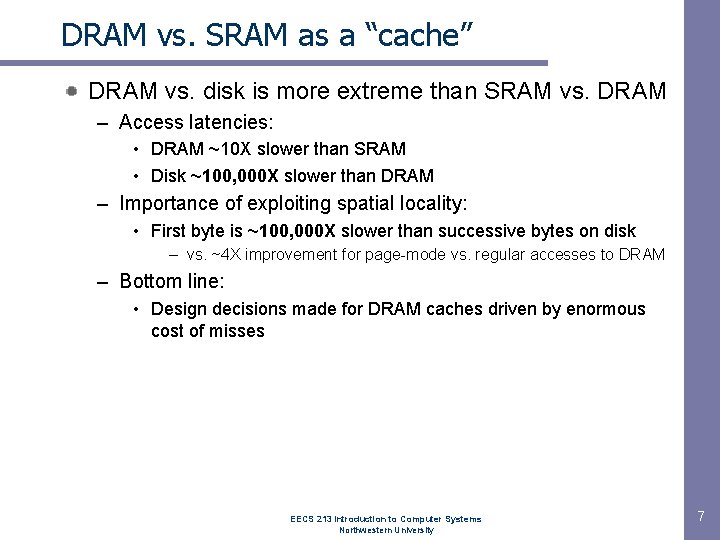

DRAM vs. SRAM as a "cache"
DRAM vs. disk is more extreme than SRAM vs. DRAM
– Access latencies:
  • DRAM ~10X slower than SRAM
  • Disk ~100,000X slower than DRAM
– Importance of exploiting spatial locality:
  • First byte is ~100,000X slower than successive bytes on disk
    – vs. ~4X improvement for page-mode vs. regular accesses to DRAM
– Bottom line:
  • Design decisions made for DRAM caches are driven by the enormous cost of misses

Impact of properties on design
If DRAM were organized like an SRAM cache, how would we set the following design parameters?
– Line size? Large, since disks are better at transferring large blocks
– Associativity? High, to minimize the miss rate
– Write-through or write-back?
  • Write-back, since we can't afford to perform small writes to disk
What would the impact of these choices be on:
– Miss rate: extremely low, << 1%
– Hit time: must match cache/DRAM performance
– Miss latency: very high, ~20 ms
– Tag storage overhead: low, relative to block size

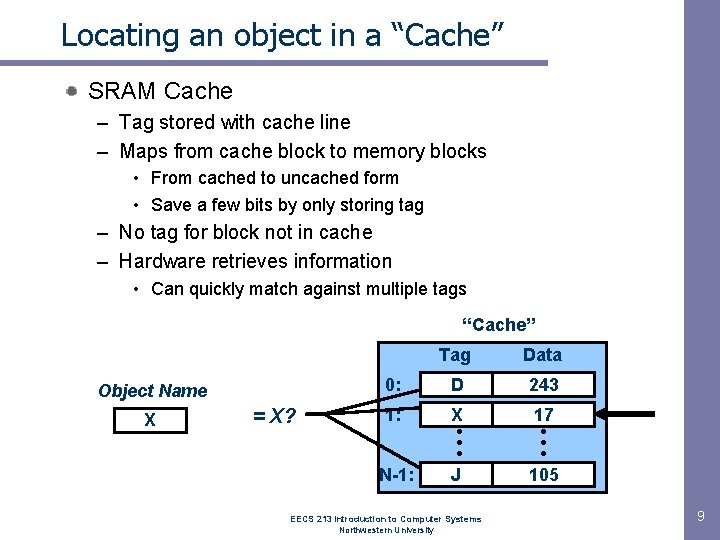

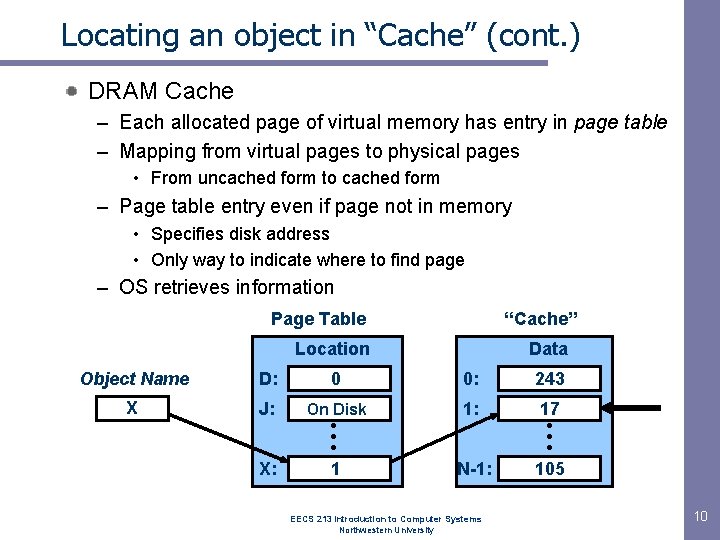

Locating an object in a "cache"
SRAM cache
– Tag stored with cache line
– Maps from cache block to memory blocks
  • From cached to uncached form
  • Save a few bits by only storing tag
– No tag for block not in cache
– Hardware retrieves information
  • Can quickly match against multiple tags
[Figure: object name X is compared against the tag of each cache line (line 0: tag D, data 243; line 1: tag X, data 17; line N-1: tag J, data 105)]

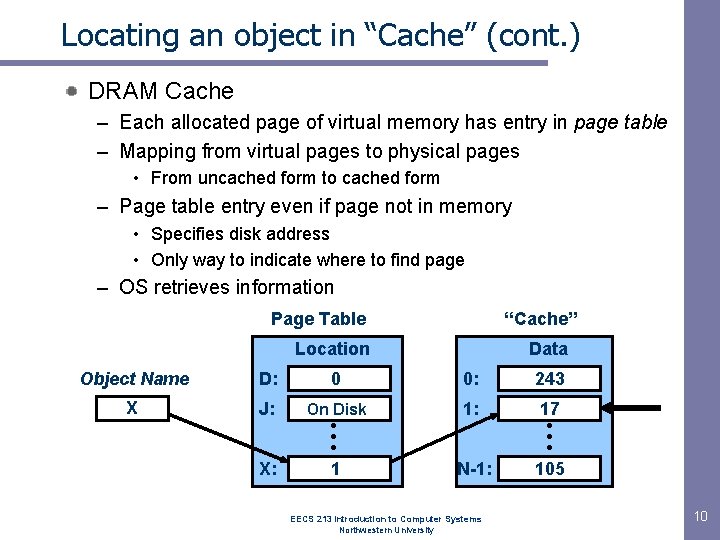

Locating an object in a "cache" (cont.)
DRAM cache
– Each allocated page of virtual memory has an entry in the page table
– Mapping from virtual pages to physical pages
  • From uncached form to cached form
– Page table entry exists even if the page is not in memory
  • Specifies the disk address
  • Only way to indicate where to find the page
– OS retrieves information
[Figure: the page table maps object D to location 0 and X to location 1, and records that J is on disk; the "cache" holds data 243 at location 0, 17 at location 1, and 105 at location N-1]

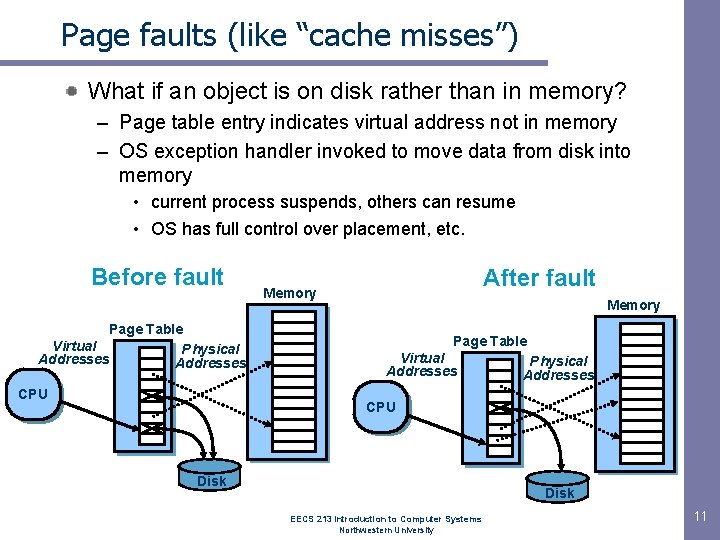

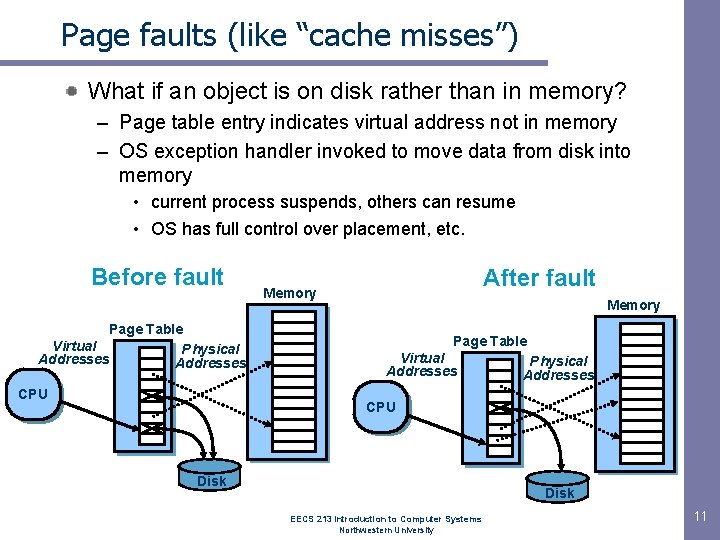

Page faults (like "cache misses")
What if an object is on disk rather than in memory?
– Page table entry indicates the virtual address is not in memory
– OS exception handler is invoked to move data from disk into memory
  • Current process suspends, others can resume
  • OS has full control over placement, etc.
[Figure: before the fault, the page table maps the virtual address to disk; after the fault, it maps the virtual address to a physical address in memory]

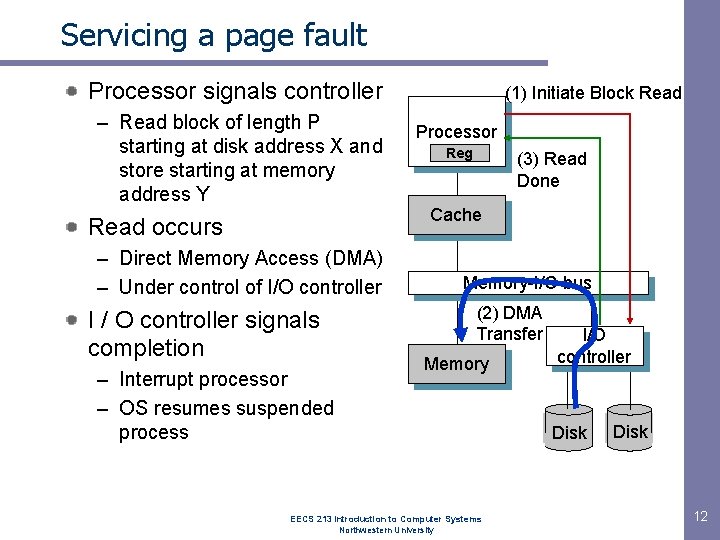

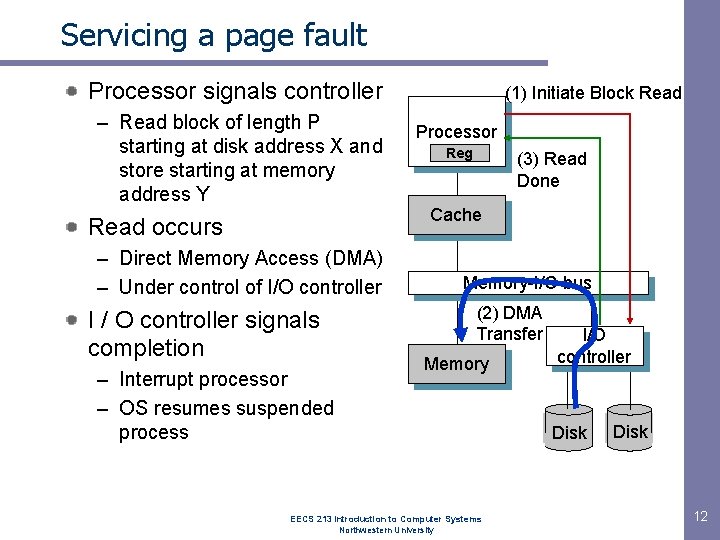

Servicing a page fault
(1) Processor signals controller
– Read block of length P starting at disk address X and store starting at memory address Y
(2) Read occurs
– Direct Memory Access (DMA)
– Under control of I/O controller
(3) I/O controller signals completion
– Interrupt processor
– OS resumes suspended process
[Figure: processor, cache, and memory connected to the disk's I/O controller over the memory-I/O bus: (1) initiate block read, (2) DMA transfer, (3) read done]

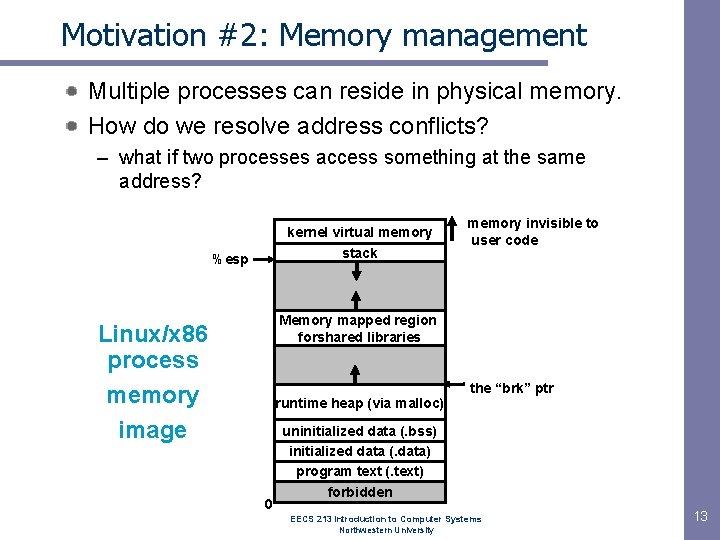

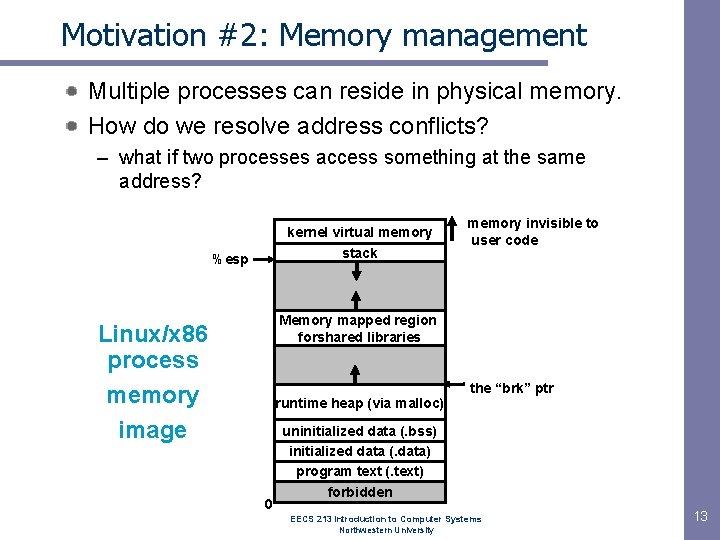

Motivation #2: Memory management
Multiple processes can reside in physical memory. How do we resolve address conflicts?
– What if two processes access something at the same address?
[Figure: Linux/x86 process memory image, top to bottom: kernel virtual memory (invisible to user code); stack (%esp); memory-mapped region for shared libraries; runtime heap (via malloc), whose top is the "brk" pointer; uninitialized data (.bss); initialized data (.data); program text (.text); forbidden region at address 0]

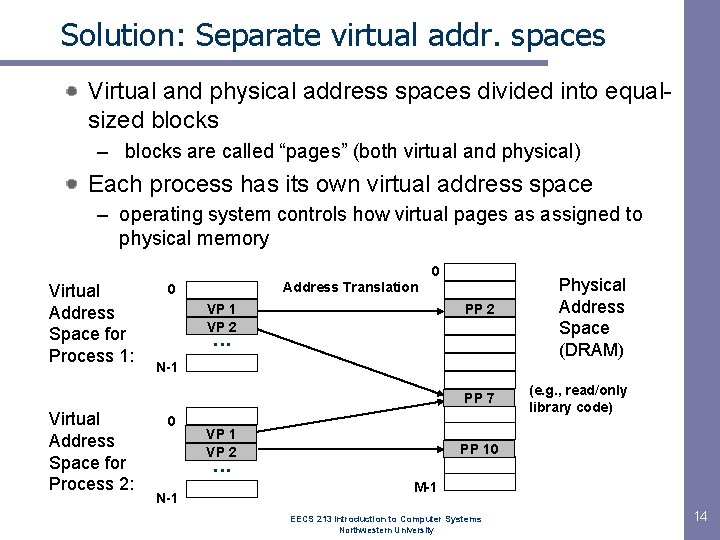

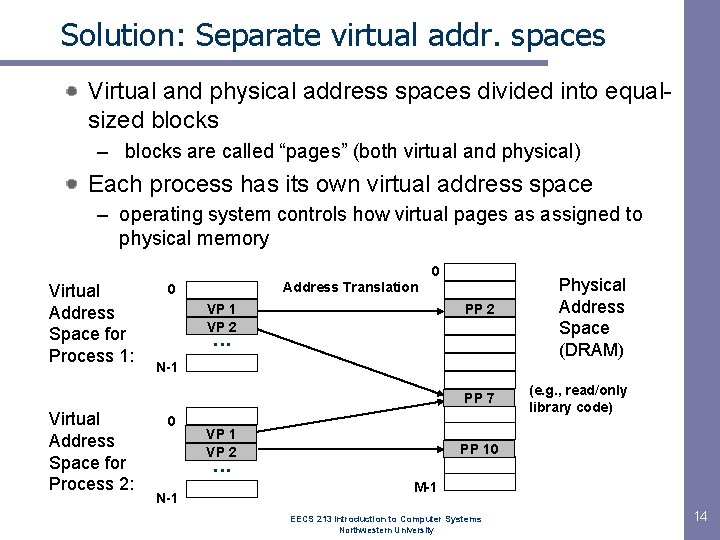

Solution: Separate virtual address spaces
Virtual and physical address spaces are divided into equal-sized blocks
– Blocks are called "pages" (both virtual and physical)
Each process has its own virtual address space
– The operating system controls how virtual pages are assigned to physical memory
[Figure: virtual pages VP 1 and VP 2 of process 1 and of process 2 (addresses 0 to N-1) are mapped by address translation onto physical pages such as PP 2, PP 7, and PP 10 in DRAM (addresses 0 to M-1); a physical page can be shared by both processes, e.g., read-only library code]

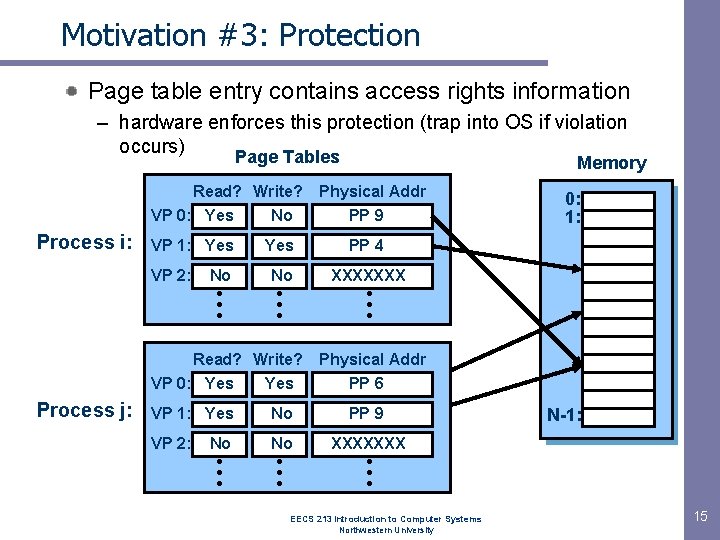

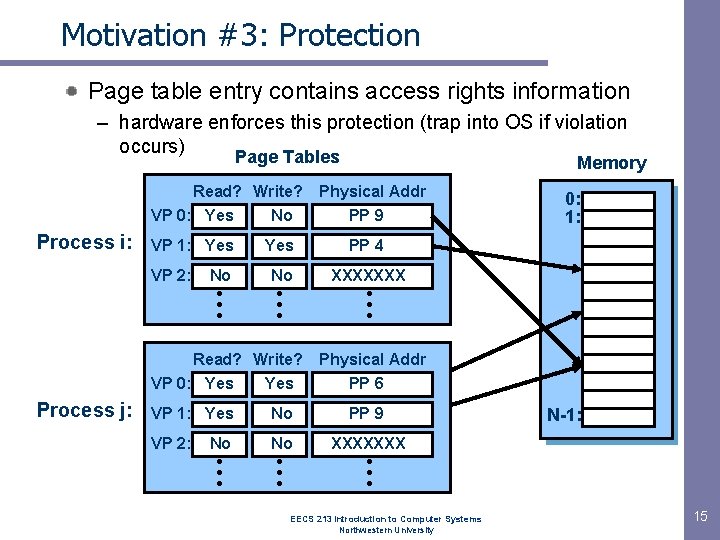

Motivation #3: Protection
Page table entry contains access rights information
– Hardware enforces this protection (trap into OS if violation occurs)

| Process | Virtual page | Read? | Write? | Physical addr |
|---|---|---|---|---|
| i | VP 0 | Yes | No | PP 9 |
| i | VP 1 | Yes | Yes | PP 4 |
| i | VP 2 | No | No | XXXXXXX |
| j | VP 0 | Yes | Yes | PP 6 |
| j | VP 1 | Yes | No | PP 9 |
| j | VP 2 | No | No | XXXXXXX |

[Figure: both page tables point into the same physical memory (addresses 0 to N-1); PP 9 is shared by processes i and j]

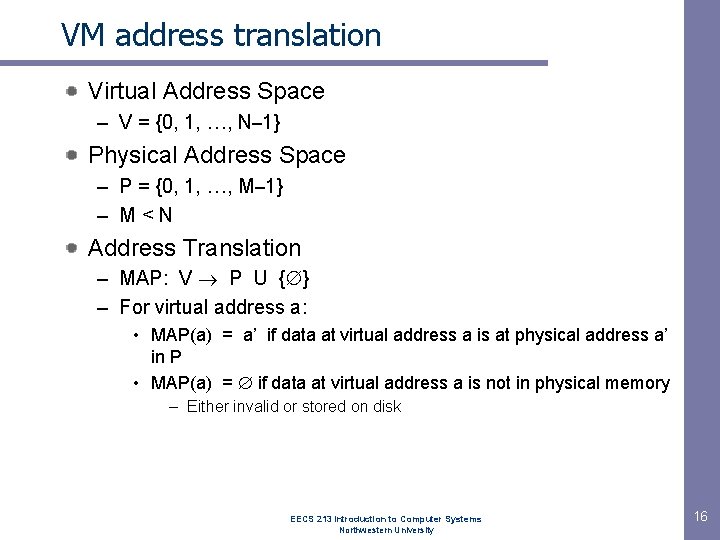

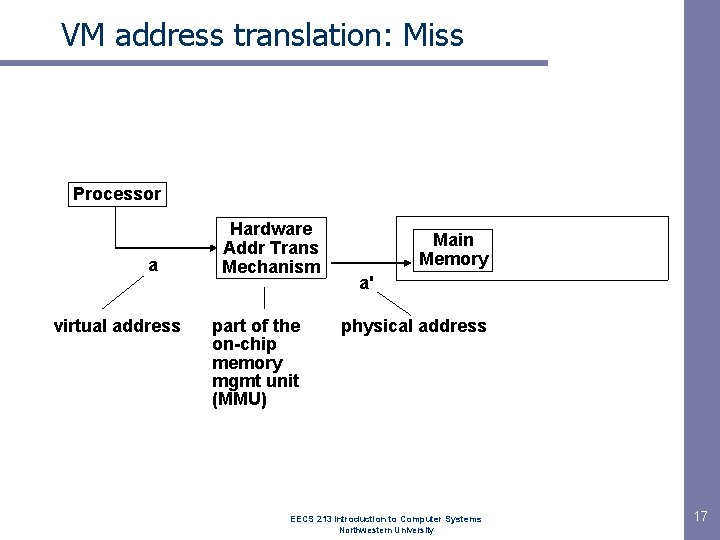

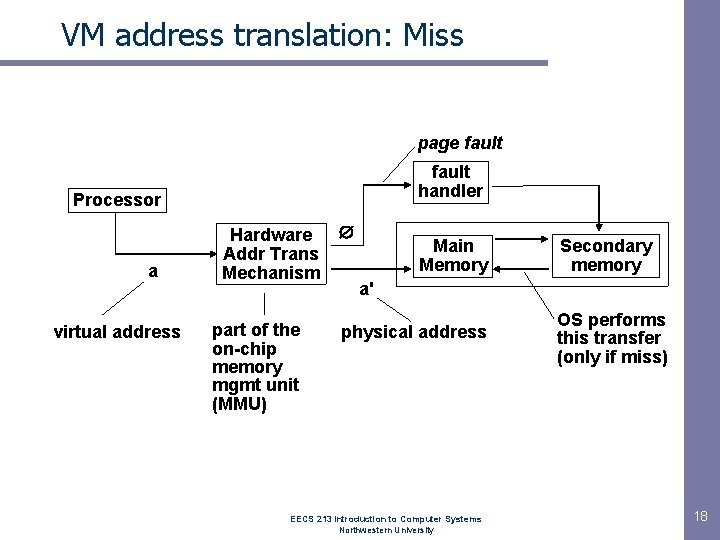

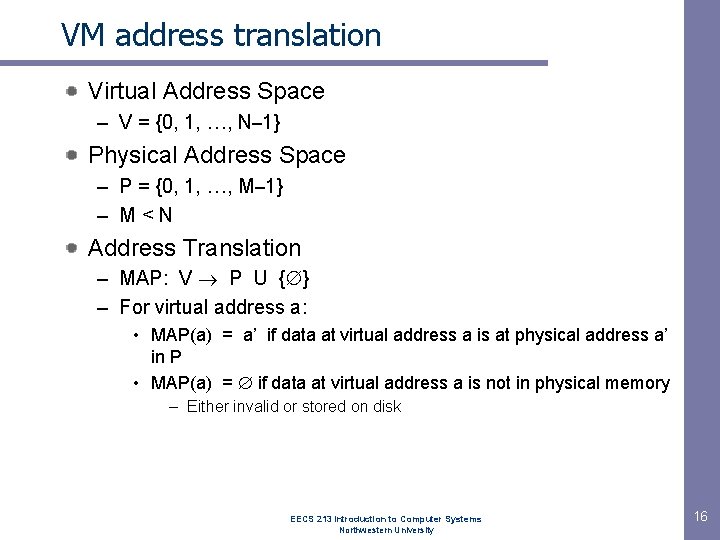

VM address translation
Virtual address space
– $V = \{0, 1, \ldots, N-1\}$
Physical address space
– $P = \{0, 1, \ldots, M-1\}$
– $M < N$
Address translation
– $\mathrm{MAP}: V \to P \cup \{\emptyset\}$
– For virtual address $a$:
  • $\mathrm{MAP}(a) = a'$ if the data at virtual address $a$ is at physical address $a'$ in $P$
  • $\mathrm{MAP}(a) = \emptyset$ if the data at virtual address $a$ is not in physical memory
    – Either invalid or stored on disk

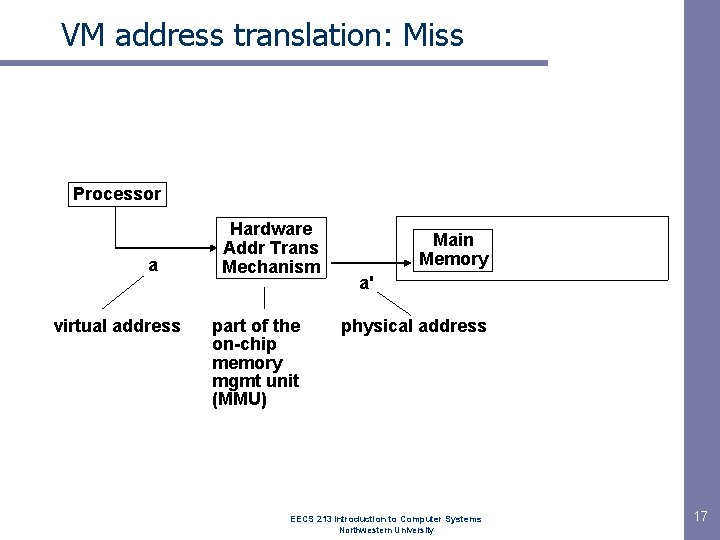

VM address translation: Hit
[Figure: the processor issues virtual address a to the hardware address translation mechanism (part of the on-chip memory management unit, MMU), which sends physical address a' to main memory]

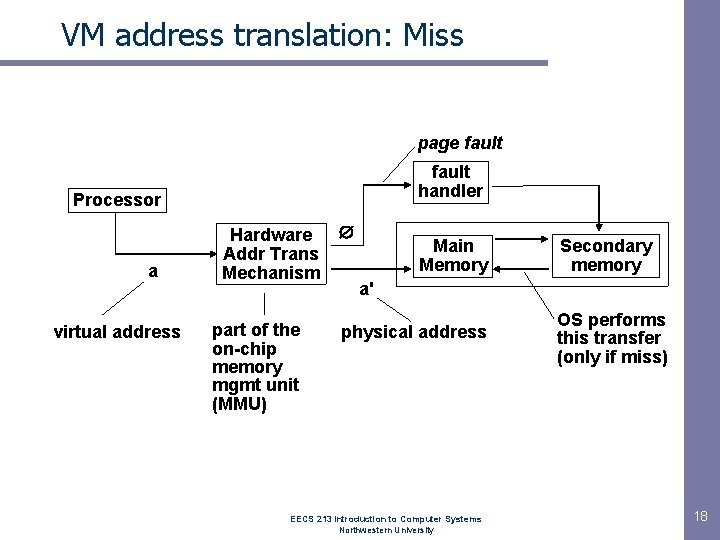

VM address translation: Miss
[Figure: as in the hit case, the processor issues virtual address a to the MMU's translation mechanism; if the page is not in memory, a page fault invokes the OS page fault handler, which transfers the page from secondary memory into main memory (only on a miss), after which physical address a' is used]

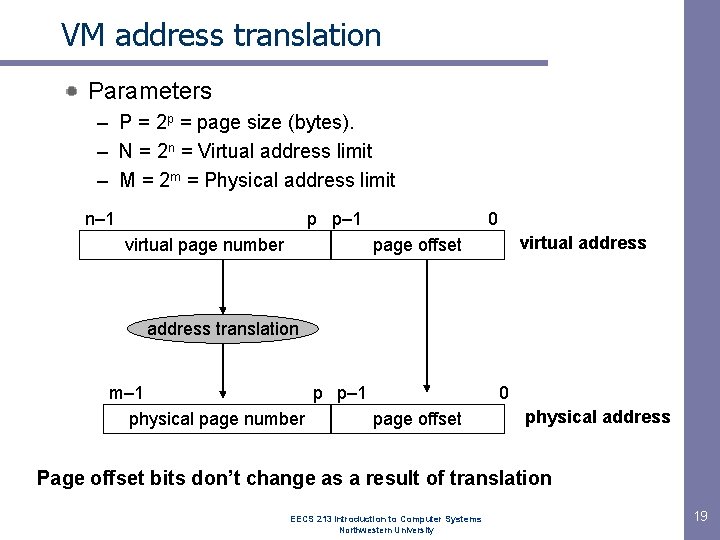

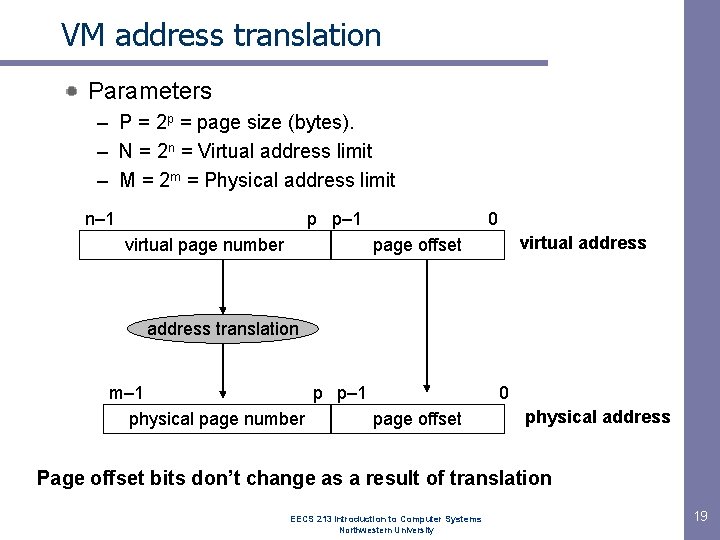

VM address translation
Parameters
– $P = 2^p$ = page size (bytes)
– $N = 2^n$ = virtual address limit
– $M = 2^m$ = physical address limit
Virtual address: bits n-1 to p hold the virtual page number; bits p-1 to 0 hold the page offset
Physical address: bits m-1 to p hold the physical page number; bits p-1 to 0 hold the page offset
Page offset bits don't change as a result of translation
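The bit split above can be sketched in C as a shift and a mask, assuming the page size is a power of two, $P = 2^p$, and that addresses fit in 64 bits. The function names are illustrative only; the point is that translation replaces the high-order bits and copies the offset bits unchanged.

```c
#include <assert.h>
#include <stdint.h>

/* Virtual page number: the high-order bits n-1..p of the address. */
static uint64_t vpn_of(uint64_t va, unsigned p) {
    assert(p < 64);
    return va >> p;
}

/* Page offset: the low-order p bits, identical in the VA and the PA. */
static uint64_t offset_of(uint64_t addr, unsigned p) {
    assert(p < 64);
    return addr & ((UINT64_C(1) << p) - 1);
}

/* Physical address: PPN in bits m-1..p, unchanged offset in bits p-1..0. */
static uint64_t make_pa(uint64_t ppn, uint64_t offset, unsigned p) {
    assert(p < 64);
    return (ppn << p) | offset;
}
```

With 4 KB pages (p = 12), the virtual address 0x12345678 has VPN 0x12345 and offset 0x678; if that page is mapped to PPN 0x54, `make_pa` returns 0x54678.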

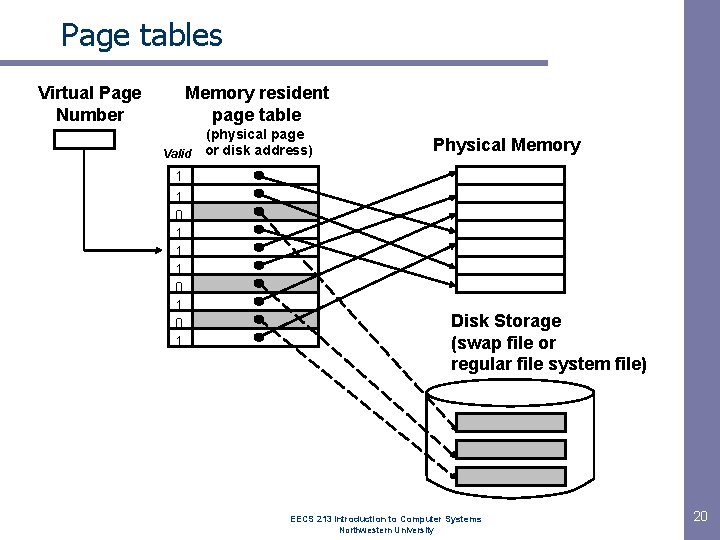

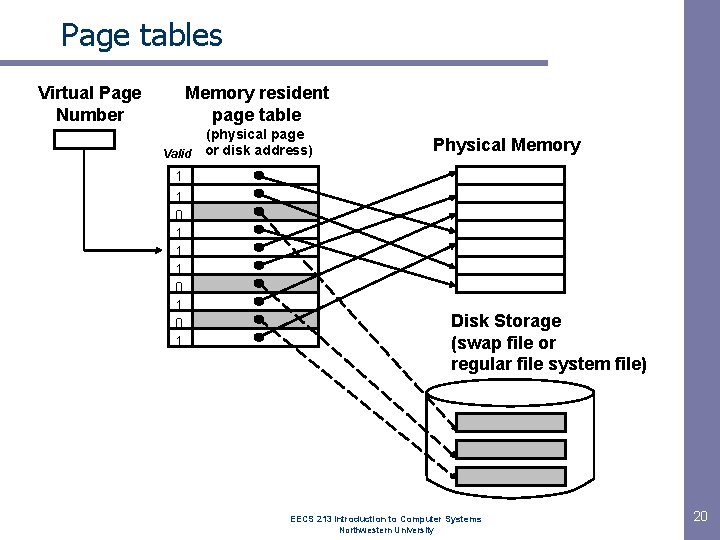

Page tables
[Figure: a memory-resident page table indexed by virtual page number; each entry holds a valid bit and either a physical page number or a disk address. Entries with valid = 1 point into physical memory; entries with valid = 0 point to disk storage (swap file or regular file system file)]

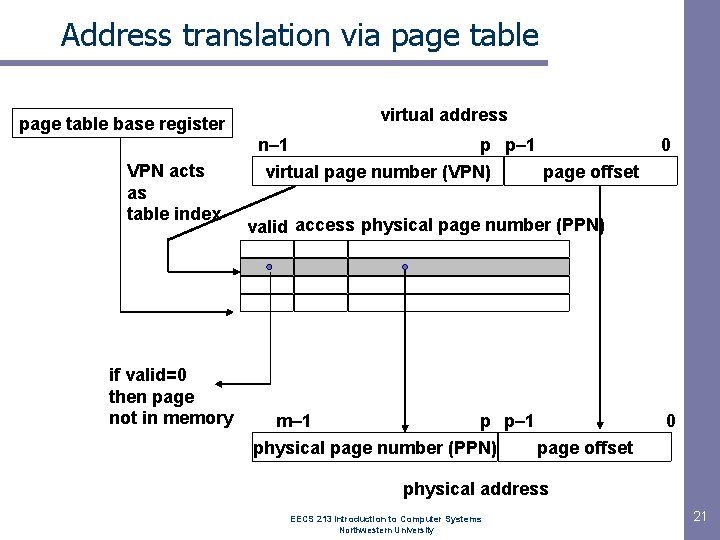

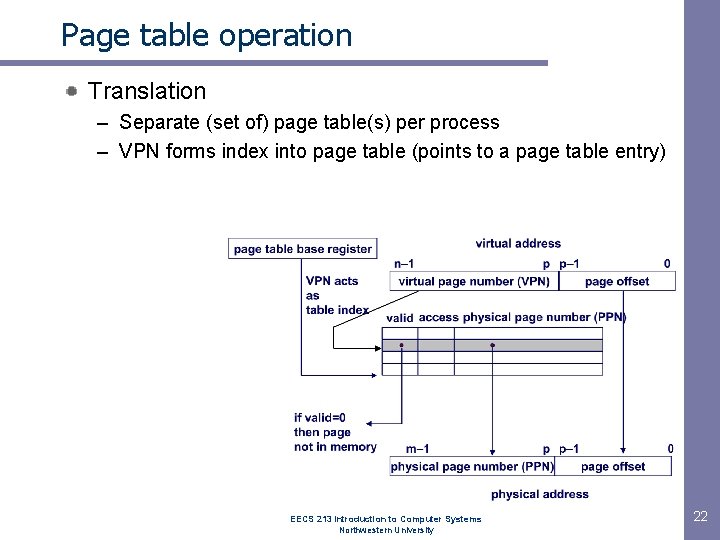

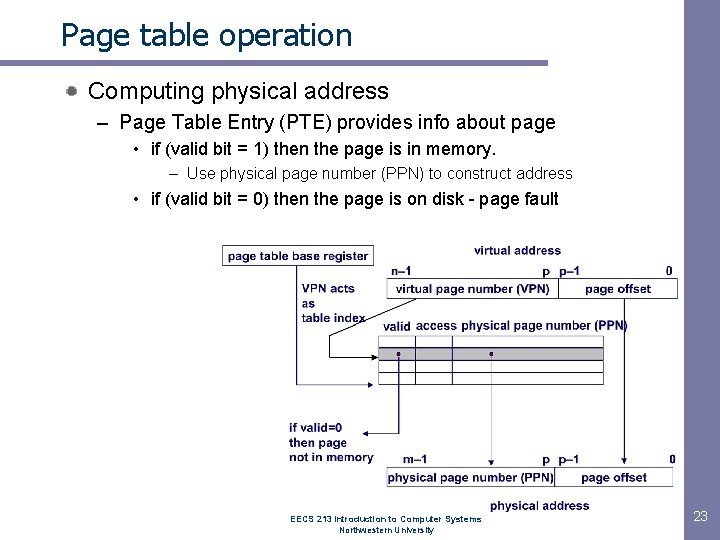

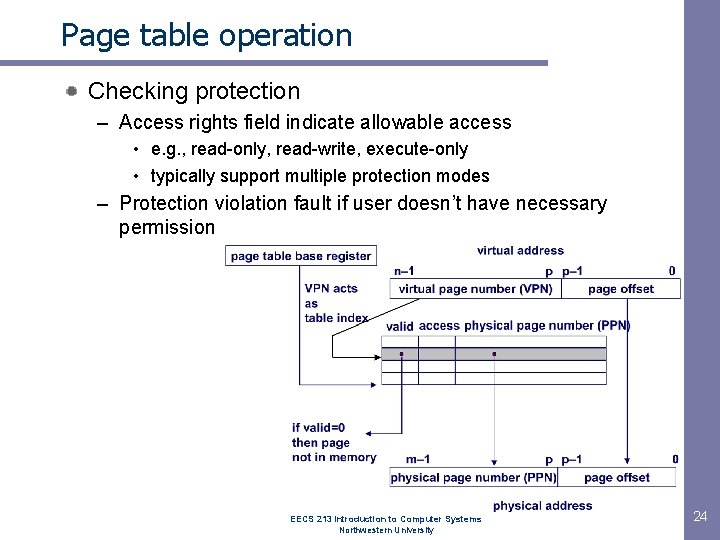

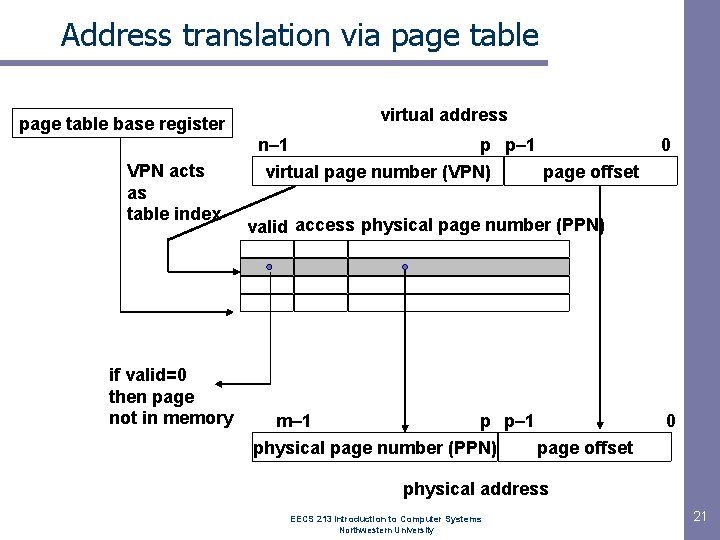

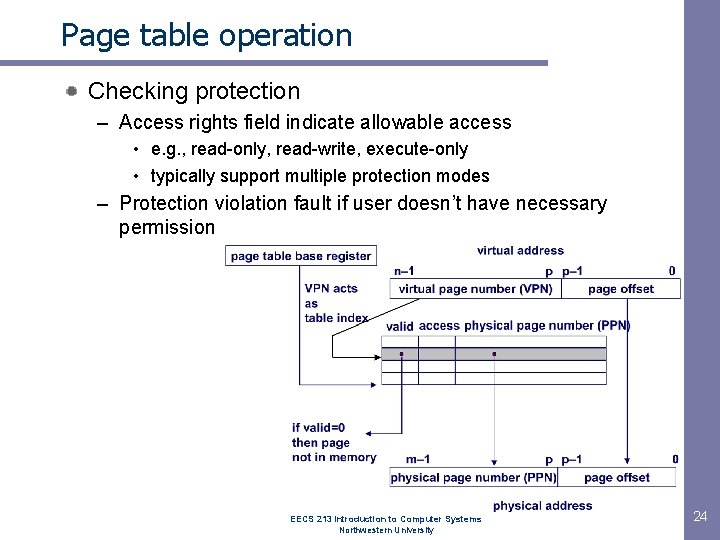

Address translation via page table
– The page table base register locates the page table
– The VPN acts as the table index
– If valid = 0, the page is not in memory
[Figure: the virtual address (bits n-1 to p: VPN; bits p-1 to 0: page offset) selects a page table entry with valid, access, and PPN fields; the physical address is the PPN (bits m-1 to p) followed by the unchanged page offset (bits p-1 to 0)]

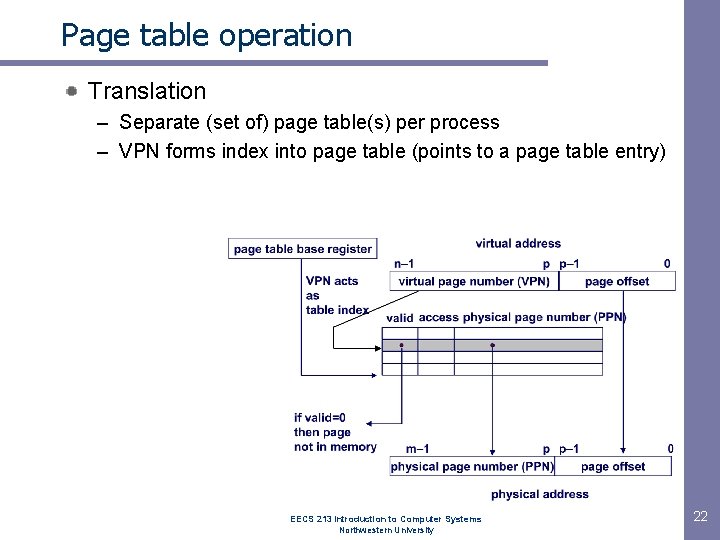

Page table operation
Translation
– Separate (set of) page table(s) per process
– VPN forms an index into the page table (points to a page table entry)

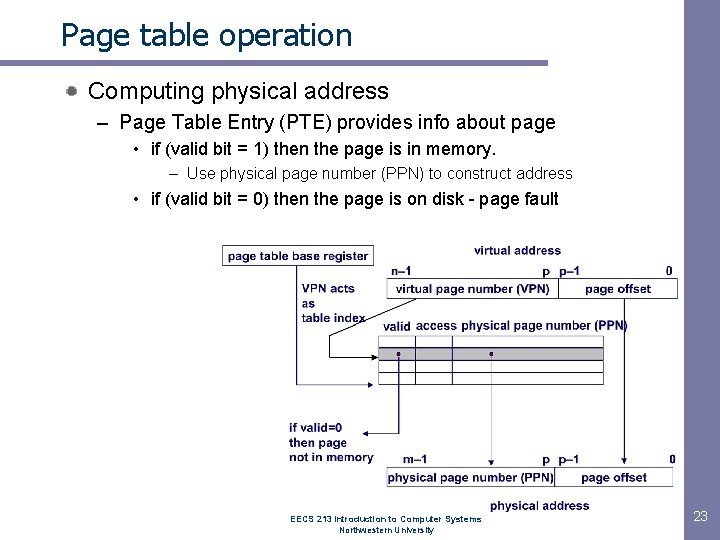

Page table operation
Computing physical address
– Page Table Entry (PTE) provides information about the page
  • If valid bit = 1, the page is in memory: use the physical page number (PPN) to construct the address
  • If valid bit = 0, the page is on disk: page fault

Page table operation
Checking protection
– Access rights field indicates allowable access
  • e.g., read-only, read-write, execute-only
  • Typically supports multiple protection modes
– Protection violation fault if the user doesn't have the necessary permission
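The three page-table steps above (index by VPN, test the valid bit, check access rights) can be combined in a minimal sketch. It assumes a single-level table and a hypothetical PTE struct with separate read and write flags; real PTEs pack these into bits of one word, and on a fault the OS, not this function, takes over.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>

/* Hypothetical PTE layout, for illustration only. */
struct pte {
    bool valid;      /* true: page is in memory; false: on disk or unallocated */
    bool readable;
    bool writable;
    uint32_t ppn;    /* physical page number, meaningful only if valid */
};

enum xlate_result { XLATE_OK, XLATE_PAGE_FAULT, XLATE_PROTECTION_FAULT };

/* Translate va with a single-level table of n_entries PTEs and page size 2^p.
   On success, stores the physical address in *pa. */
static enum xlate_result translate(const struct pte *table, size_t n_entries,
                                   uint32_t va, unsigned p, bool is_write,
                                   uint32_t *pa) {
    assert(p < 32);
    uint32_t vpn = va >> p;                        /* VPN indexes the table */
    uint32_t vpo = va & ((UINT32_C(1) << p) - 1);  /* offset is copied as-is */
    assert(vpn < n_entries);
    const struct pte *e = &table[vpn];
    if (!e->valid)
        return XLATE_PAGE_FAULT;                   /* OS must bring the page in */
    if (is_write ? !e->writable : !e->readable)
        return XLATE_PROTECTION_FAULT;             /* access rights violated */
    *pa = (e->ppn << p) | vpo;
    return XLATE_OK;
}
```

The checks are ordered so that a page that is not resident faults before its permissions are examined.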

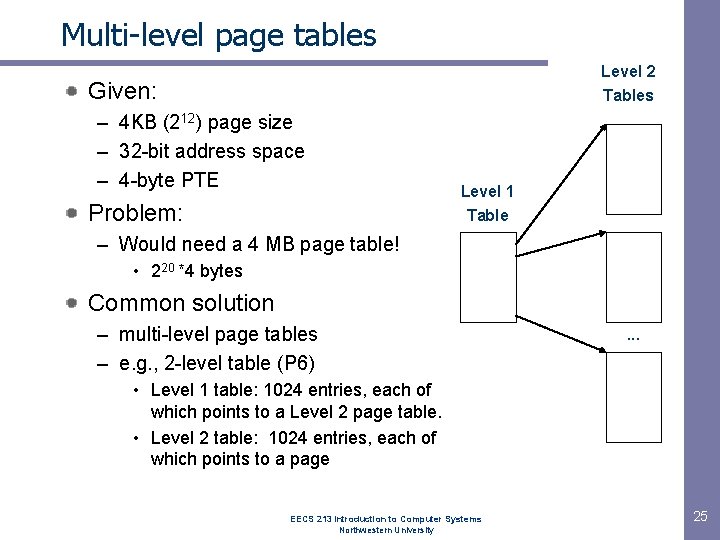

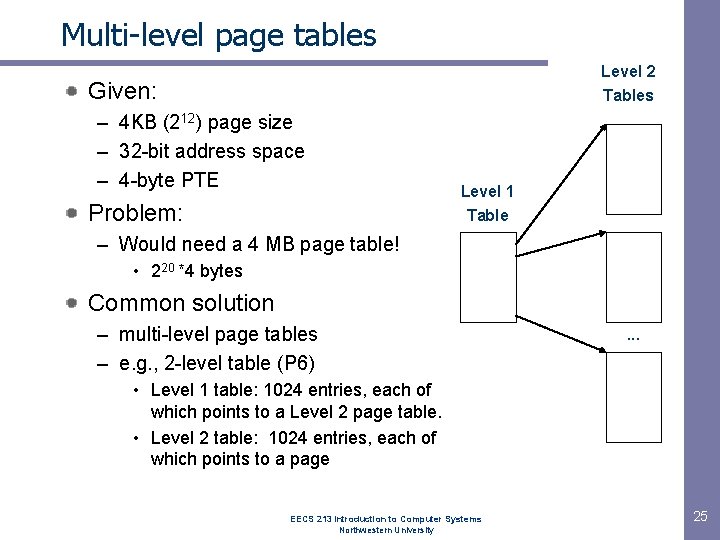

Multi-level page tables
Given:
– 4 KB ($2^{12}$ bytes) page size
– 32-bit address space
– 4-byte PTE
Problem:
– Would need a 4 MB page table!
  • $2^{32} / 2^{12} = 2^{20}$ PTEs $\times$ 4 bytes $= 2^{22}$ bytes
Common solution
– Multi-level page tables
– e.g., 2-level table (P6)
  • Level 1 table: 1024 entries, each of which points to a Level 2 page table
  • Level 2 table: 1024 entries, each of which points to a page
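The arithmetic behind the slide can be checked in C. This sketch assumes the 32-bit, 4 KB page, 4-byte PTE configuration above and a 10/10 split of the 20-bit VPN between the two levels, which matches the 1024-entry tables; the function and macro names are hypothetical.

```c
#include <assert.h>
#include <stdint.h>

#define PAGE_BITS 12   /* 4 KB pages */
#define L2_BITS   10   /* 1024 entries per level 2 table */

/* Bytes needed by a flat, single-level table covering the whole space. */
static uint64_t flat_table_bytes(unsigned addr_bits, unsigned page_bits,
                                 unsigned pte_bytes) {
    assert(page_bits <= addr_bits && addr_bits < 64);
    return (UINT64_C(1) << (addr_bits - page_bits)) * pte_bytes;
}

/* Index into the level 1 table: the top 10 bits of the address. */
static uint32_t l1_index(uint32_t va) {
    return va >> (PAGE_BITS + L2_BITS);
}

/* Index into the selected level 2 table: the next 10 bits. */
static uint32_t l2_index(uint32_t va) {
    return (va >> PAGE_BITS) & ((UINT32_C(1) << L2_BITS) - 1);
}
```

For example, `flat_table_bytes(32, 12, 4)` is 4,194,304 (4 MB), while the address 0x12345678 uses level 1 entry 0x48 and level 2 entry 0x345. Only the level 2 tables for regions actually in use need to exist.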

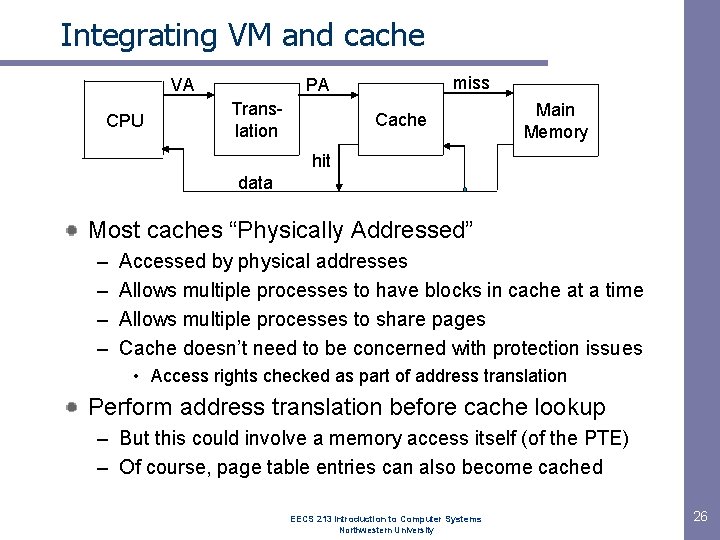

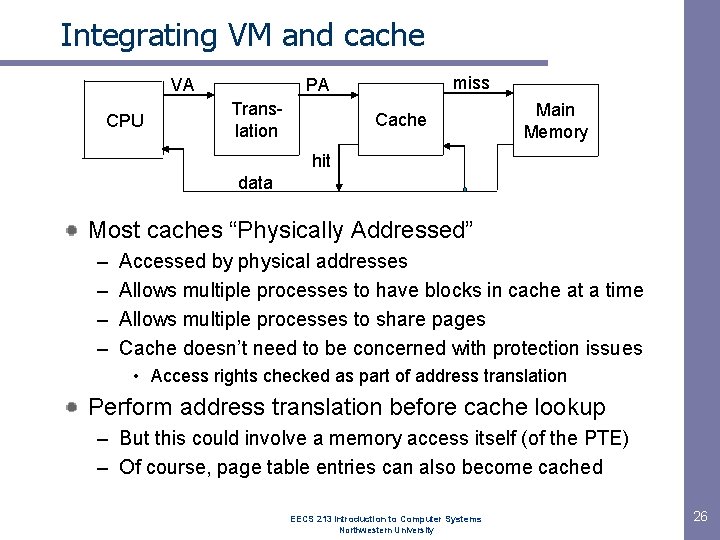

Integrating VM and cache
[Figure: the CPU sends a VA to translation; the resulting PA goes to the cache; on a hit the cache returns data, on a miss main memory is accessed]
Most caches are "physically addressed"
– Accessed by physical addresses
– Allows multiple processes to have blocks in cache at a time
– Allows multiple processes to share pages
– Cache doesn't need to be concerned with protection issues
  • Access rights checked as part of address translation
Perform address translation before cache lookup
– But this could involve a memory access itself (of the PTE)
– Of course, page table entries can also become cached

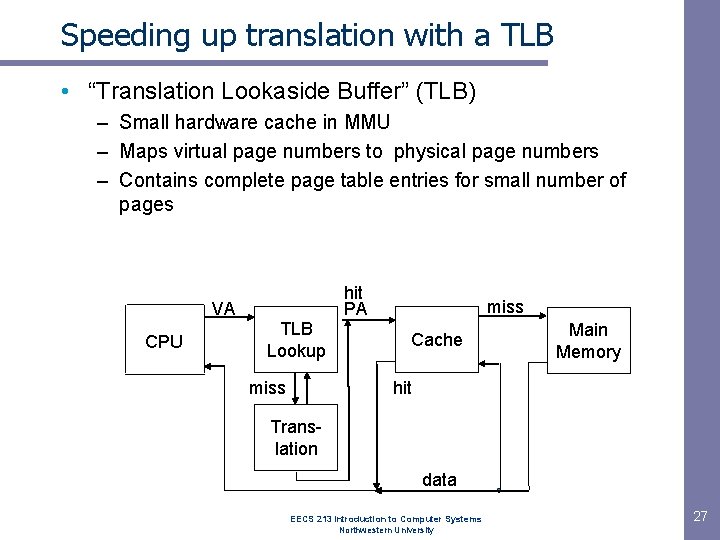

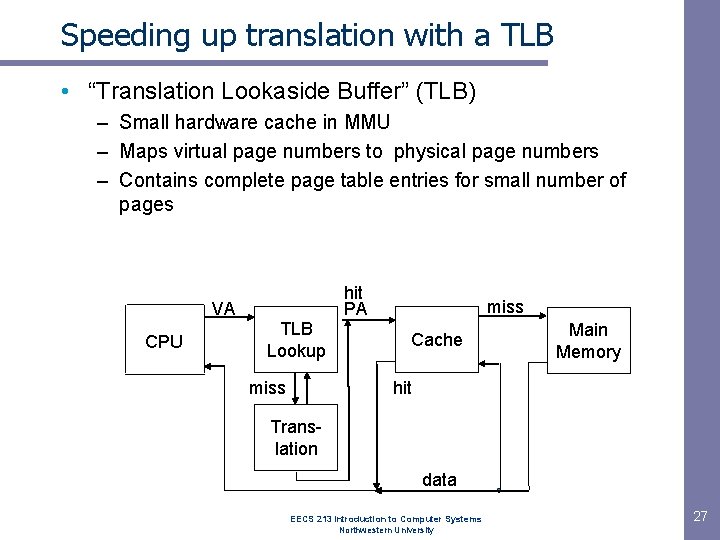

Speeding up translation with a TLB
"Translation Lookaside Buffer" (TLB)
– Small hardware cache in the MMU
– Maps virtual page numbers to physical page numbers
– Contains complete page table entries for a small number of pages
[Figure: the CPU sends a VA to the TLB; on a TLB hit the PA goes straight to the cache; on a TLB miss the full translation is performed first; cache misses go to main memory]

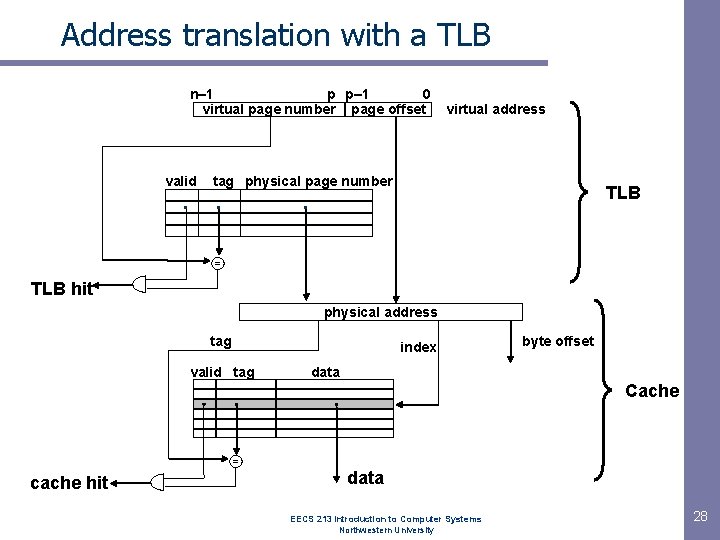

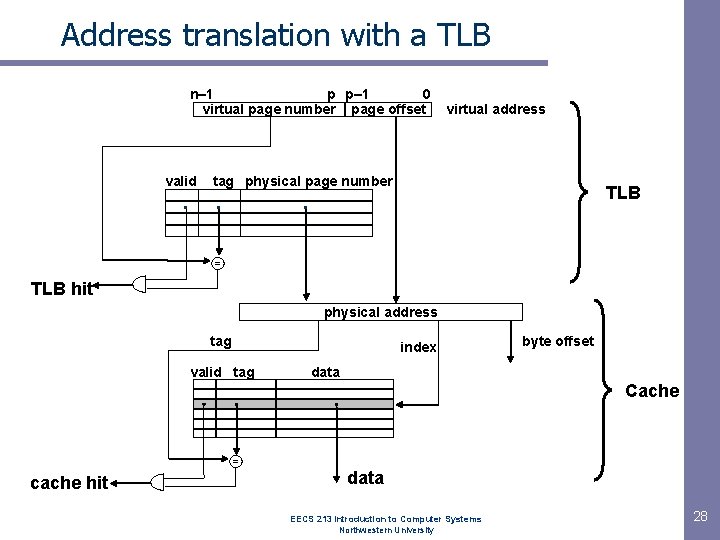

Address translation with a TLB
[Figure: the VPN of the virtual address (bits n-1 to p) is compared with the tags of valid TLB entries; on a TLB hit the entry's PPN is concatenated with the page offset (bits p-1 to 0) to form the physical address, which is then split into tag, index, and byte offset for the cache lookup; a matching valid cache tag is a cache hit and returns the data]

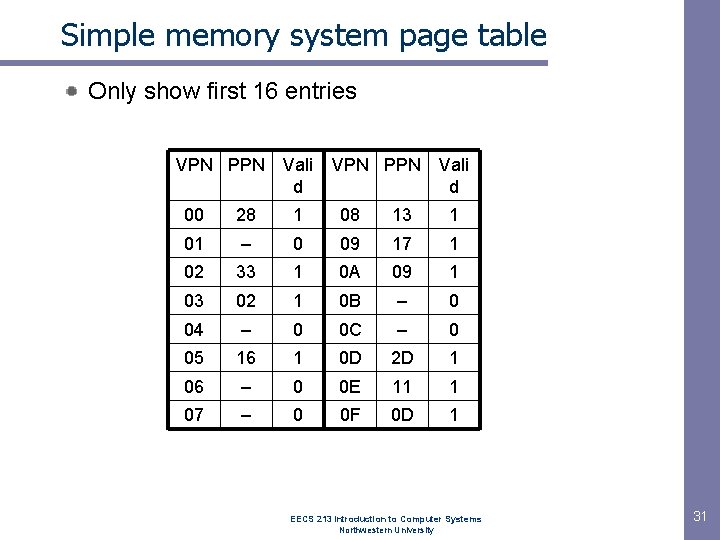

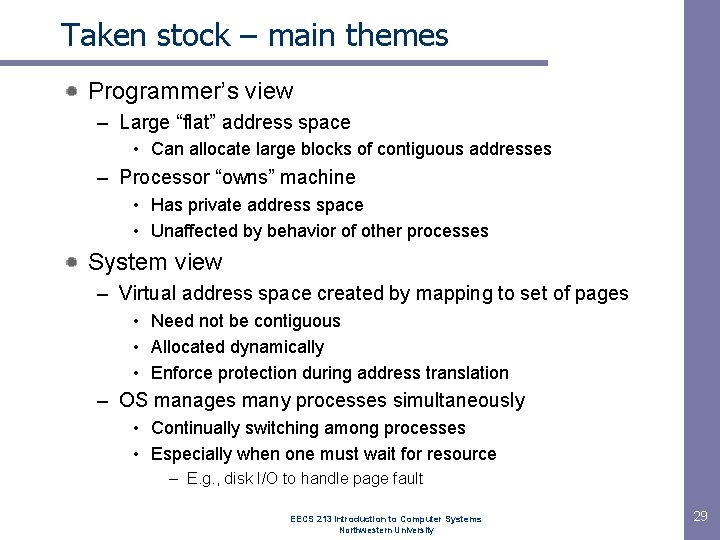

Taking stock: main themes
Programmer's view
– Large "flat" address space
  • Can allocate large blocks of contiguous addresses
– Processor "owns" the machine
  • Has private address space
  • Unaffected by behavior of other processes
System view
– Virtual address space created by mapping to a set of pages
  • Need not be contiguous
  • Allocated dynamically
  • Enforce protection during address translation
– OS manages many processes simultaneously
  • Continually switching among processes
  • Especially when one must wait for a resource
    – E.g., disk I/O to handle a page fault

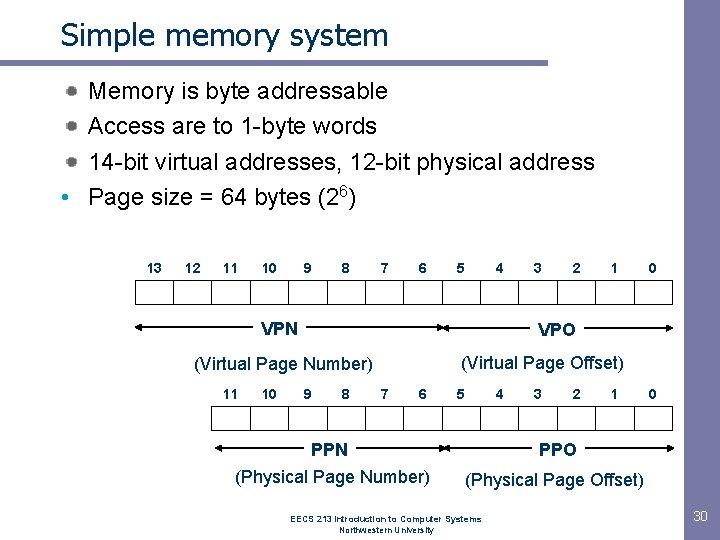

Simple memory system
– Memory is byte addressable
– Accesses are to 1-byte words
– 14-bit virtual addresses, 12-bit physical addresses
– Page size = 64 bytes ($2^6$)
Virtual address: bits 13-6 = VPN (virtual page number); bits 5-0 = VPO (virtual page offset)
Physical address: bits 11-6 = PPN (physical page number); bits 5-0 = PPO (physical page offset)

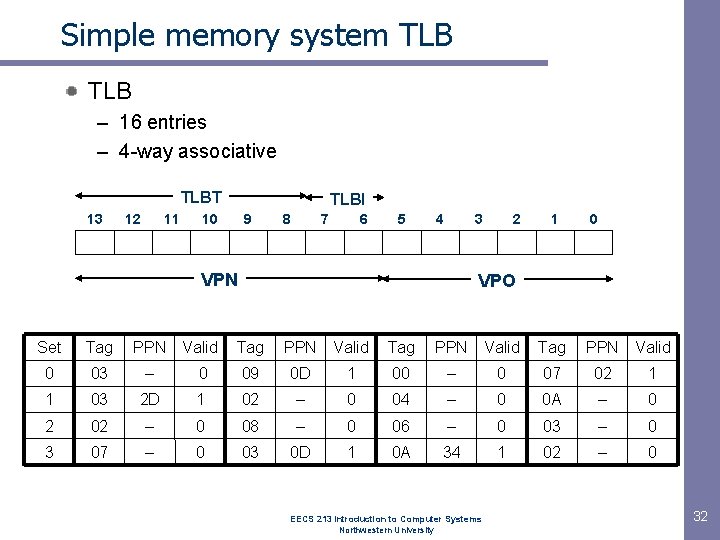

Simple memory system page table
Only the first 16 entries are shown (all values hexadecimal)

| VPN | PPN | Valid | VPN | PPN | Valid |
|---|---|---|---|---|---|
| 00 | 28 | 1 | 08 | 13 | 1 |
| 01 | – | 0 | 09 | 17 | 1 |
| 02 | 33 | 1 | 0A | 09 | 1 |
| 03 | 02 | 1 | 0B | – | 0 |
| 04 | – | 0 | 0C | – | 0 |
| 05 | 16 | 1 | 0D | 2D | 1 |
| 06 | – | 0 | 0E | 11 | 1 |
| 07 | – | 0 | 0F | 0D | 1 |

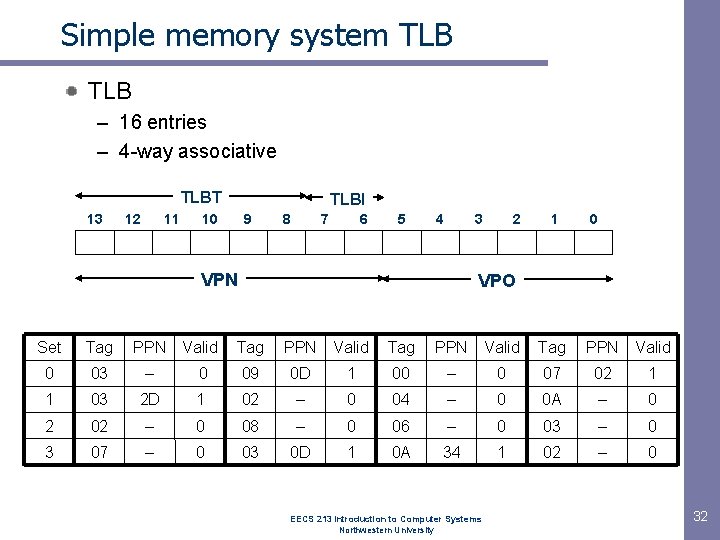

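Simple memory system TLB
– 16 entries
– 4-way associative
Virtual address: bits 13-8 = TLBT (TLB tag); bits 7-6 = TLBI (TLB index); bits 13-6 = VPN; bits 5-0 = VPO

| Set | Tag | PPN | Valid | Tag | PPN | Valid | Tag | PPN | Valid | Tag | PPN | Valid |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 0 | 03 | – | 0 | 09 | 0D | 1 | 00 | – | 0 | 07 | 02 | 1 |
| 1 | 03 | 2D | 1 | 02 | – | 0 | 04 | – | 0 | 0A | – | 0 |
| 2 | 02 | – | 0 | 08 | – | 0 | 06 | – | 0 | 03 | – | 0 |
| 3 | 07 | – | 0 | 03 | 0D | 1 | 0A | 34 | 1 | 02 | – | 0 |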

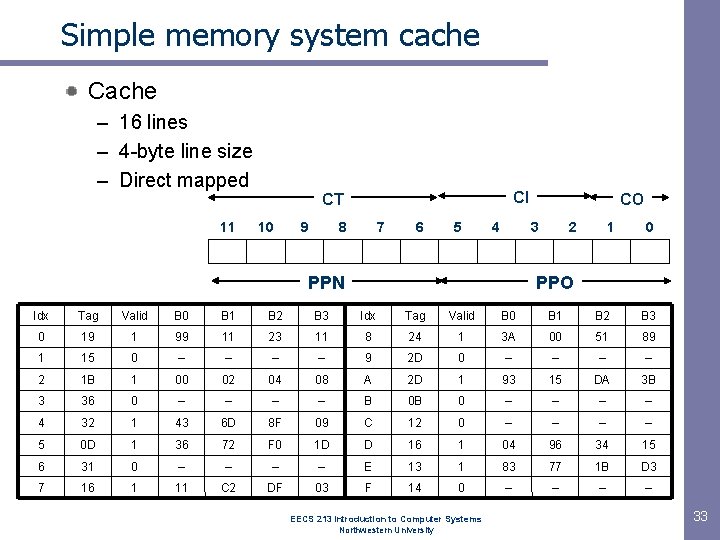

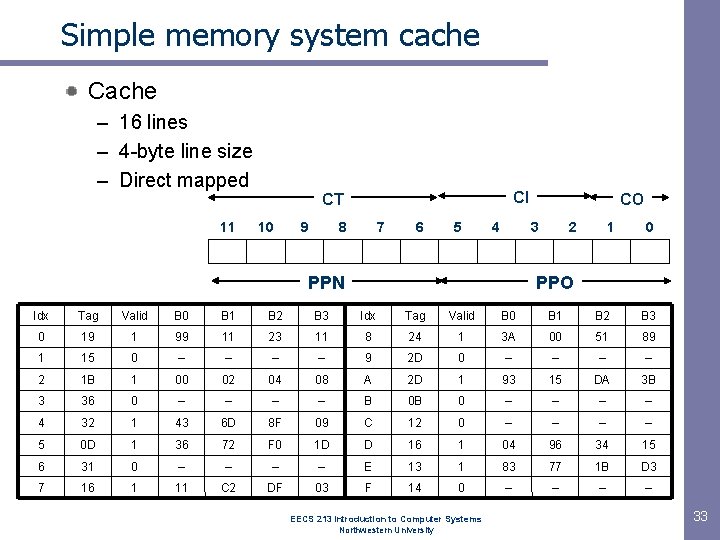

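Simple memory system cache
– 16 lines
– 4-byte line size
– Direct mapped
Physical address: bits 11-6 = CT (cache tag); bits 5-2 = CI (cache index); bits 1-0 = CO (cache offset); bits 11-6 = PPN; bits 5-0 = PPO

| Idx | Tag | Valid | B0 | B1 | B2 | B3 |
|---|---|---|---|---|---|---|
| 0 | 19 | 1 | 99 | 11 | 23 | 11 |
| 1 | 15 | 0 | – | – | – | – |
| 2 | 1B | 1 | 00 | 02 | 04 | 08 |
| 3 | 36 | 0 | – | – | – | – |
| 4 | 32 | 1 | 43 | 6D | 8F | 09 |
| 5 | 0D | 1 | 36 | 72 | F0 | 1D |
| 6 | 31 | 0 | – | – | – | – |
| 7 | 16 | 1 | 11 | C2 | DF | 03 |
| 8 | 24 | 1 | 3A | 00 | 51 | 89 |
| 9 | 2D | 0 | – | – | – | – |
| A | 2D | 1 | 93 | 15 | DA | 3B |
| B | 0B | 0 | – | – | – | – |
| C | 12 | 0 | – | – | – | – |
| D | 16 | 1 | 04 | 96 | 34 | 15 |
| E | 13 | 1 | 83 | 77 | 1B | D3 |
| F | 14 | 0 | – | – | – | – |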

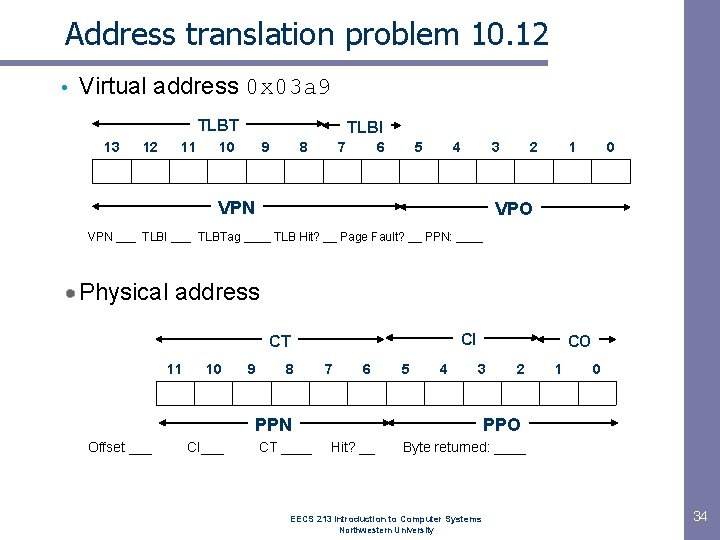

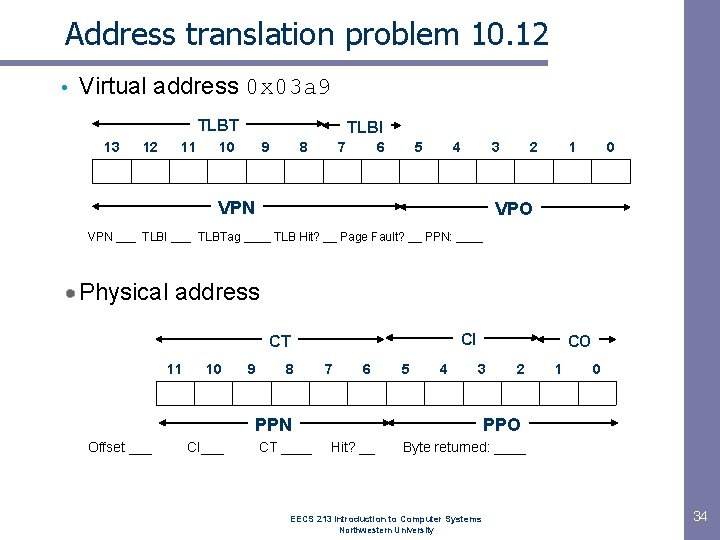

Address translation problem 10.12
Virtual address: 0x03a9
(Virtual address fields: TLBT = bits 13-8, TLBI = bits 7-6, VPN = bits 13-6, VPO = bits 5-0)
VPN ___   TLBI ___   TLBT ____   TLB hit? __   Page fault? __   PPN ____
Physical address
(Physical address fields: CT = bits 11-6, CI = bits 5-2, CO = bits 1-0, PPN = bits 11-6, PPO = bits 5-0)
Offset (CO) ___   CI ___   CT ____   Hit? __   Byte returned ____

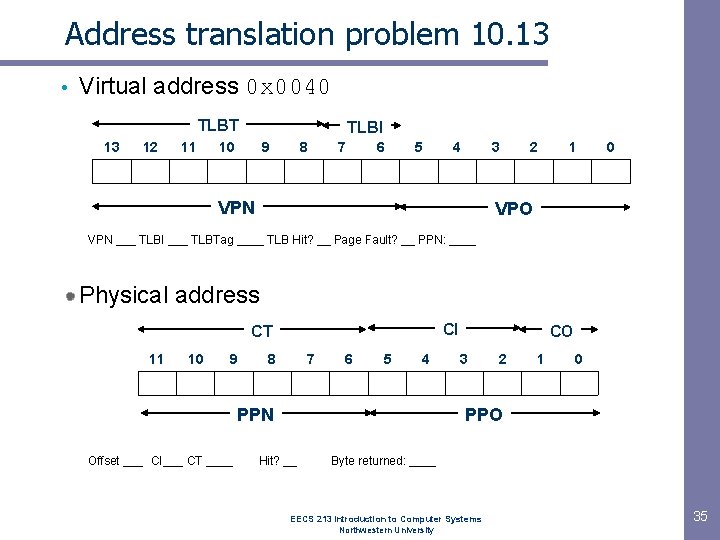

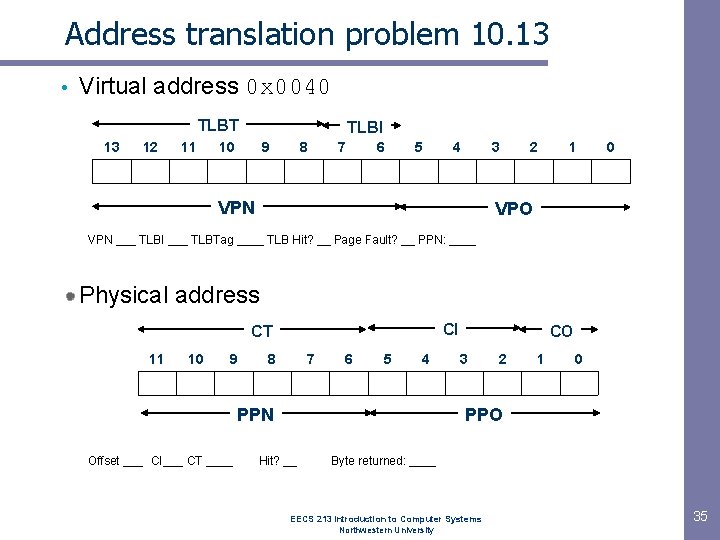

Address translation problem 10.13
Virtual address: 0x0040
(Virtual address fields: TLBT = bits 13-8, TLBI = bits 7-6, VPN = bits 13-6, VPO = bits 5-0)
VPN ___   TLBI ___   TLBT ____   TLB hit? __   Page fault? __   PPN ____
Physical address
(Physical address fields: CT = bits 11-6, CI = bits 5-2, CO = bits 1-0, PPN = bits 11-6, PPO = bits 5-0)
Offset (CO) ___   CI ___   CT ____   Hit? __   Byte returned ____
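To check answers to the two problems, the simple memory system can be simulated directly from the page table, TLB, and cache tables above. This is a sketch under the slides' parameters only (14-bit VA, 64-byte pages, 4-set 4-way TLB, 16-line direct-mapped cache with 4-byte lines); the struct and function names are hypothetical, only the 16 page table entries shown are encoded, and a TLB miss is resolved from the page table without updating the TLB.

```c
#include <assert.h>
#include <stdbool.h>
#include <stdint.h>

struct tlb_entry  { uint8_t tag, ppn; bool valid; };
struct pt_entry   { uint8_t ppn; bool valid; };
struct cache_line { uint8_t tag; bool valid; uint8_t bytes[4]; };

static const struct tlb_entry TLB[4][4] = {       /* [set][way] */
    {{0x03, 0x00, false}, {0x09, 0x0D, true},  {0x00, 0x00, false}, {0x07, 0x02, true}},
    {{0x03, 0x2D, true},  {0x02, 0x00, false}, {0x04, 0x00, false}, {0x0A, 0x00, false}},
    {{0x02, 0x00, false}, {0x08, 0x00, false}, {0x06, 0x00, false}, {0x03, 0x00, false}},
    {{0x07, 0x00, false}, {0x03, 0x0D, true},  {0x0A, 0x34, true},  {0x02, 0x00, false}},
};
static const struct pt_entry PT[16] = {           /* indexed by VPN */
    {0x28, true}, {0, false}, {0x33, true}, {0x02, true}, {0, false}, {0x16, true},
    {0, false}, {0, false}, {0x13, true}, {0x17, true}, {0x09, true}, {0, false},
    {0, false}, {0x2D, true}, {0x11, true}, {0x0D, true},
};
static const struct cache_line CACHE[16] = {      /* indexed by CI */
    {0x19, true, {0x99, 0x11, 0x23, 0x11}}, {0x15, false, {0}},
    {0x1B, true, {0x00, 0x02, 0x04, 0x08}}, {0x36, false, {0}},
    {0x32, true, {0x43, 0x6D, 0x8F, 0x09}}, {0x0D, true, {0x36, 0x72, 0xF0, 0x1D}},
    {0x31, false, {0}},                     {0x16, true, {0x11, 0xC2, 0xDF, 0x03}},
    {0x24, true, {0x3A, 0x00, 0x51, 0x89}}, {0x2D, false, {0}},
    {0x2D, true, {0x93, 0x15, 0xDA, 0x3B}}, {0x0B, false, {0}},
    {0x12, false, {0}},                     {0x16, true, {0x04, 0x96, 0x34, 0x15}},
    {0x13, true, {0x83, 0x77, 0x1B, 0xD3}}, {0x14, false, {0}},
};

struct result {
    unsigned vpn, tlbi, tlbt, ppn, pa, co, ci, ct;
    bool tlb_hit, page_fault, cache_hit;
    uint8_t byte;                        /* meaningful only if cache_hit */
};

static struct result simulate(unsigned va) {
    struct result r = {0};
    assert(va < (1u << 14));
    r.vpn  = va >> 6;                    /* 6 offset bits */
    r.tlbi = r.vpn & 0x3;                /* 4 sets: 2 index bits */
    r.tlbt = r.vpn >> 2;
    for (int w = 0; w < 4; w++) {
        const struct tlb_entry *e = &TLB[r.tlbi][w];
        if (e->valid && e->tag == r.tlbt) { r.tlb_hit = true; r.ppn = e->ppn; }
    }
    if (!r.tlb_hit) {
        assert(r.vpn < 16);              /* only the first 16 PTEs are known */
        if (!PT[r.vpn].valid) { r.page_fault = true; return r; }
        r.ppn = PT[r.vpn].ppn;
    }
    r.pa = (r.ppn << 6) | (va & 0x3F);
    r.co = r.pa & 0x3;                   /* 4-byte lines: 2 offset bits */
    r.ci = (r.pa >> 2) & 0xF;            /* 16 lines: 4 index bits */
    r.ct = r.pa >> 6;
    const struct cache_line *l = &CACHE[r.ci];
    r.cache_hit = l->valid && l->tag == r.ct;
    if (r.cache_hit) r.byte = l->bytes[r.co];
    return r;
}
```

`simulate(0x03a9)` and `simulate(0x0040)` fill in the blanks for problems 10.12 and 10.13; `simulate(0x03d4)` exercises the path where both the TLB and the cache hit, returning byte 0x36.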

Harsh reality
Memory matters
Memory is not unbounded
– It must be allocated and managed
– Many applications are memory dominated
  • Especially those based on complex graph algorithms
Memory referencing bugs are especially pernicious
– Effects are distant in both time and space
Memory performance is not uniform
– Cache and virtual memory effects can greatly affect program performance
– Adapting a program to the characteristics of the memory system can lead to major speed improvements

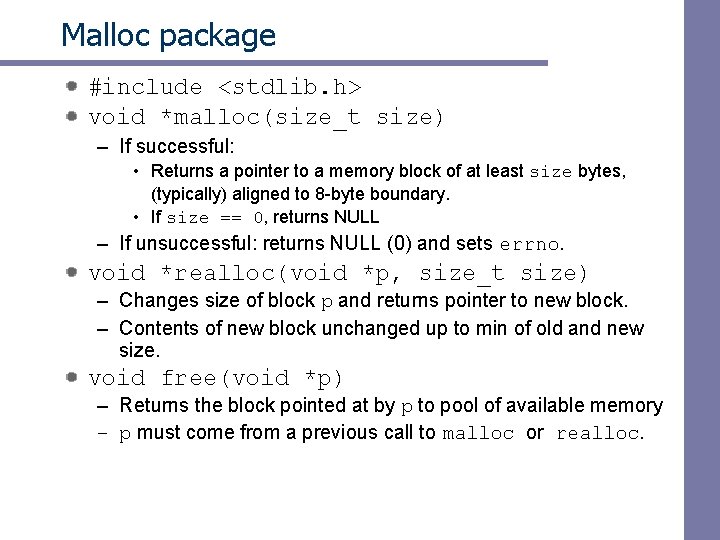

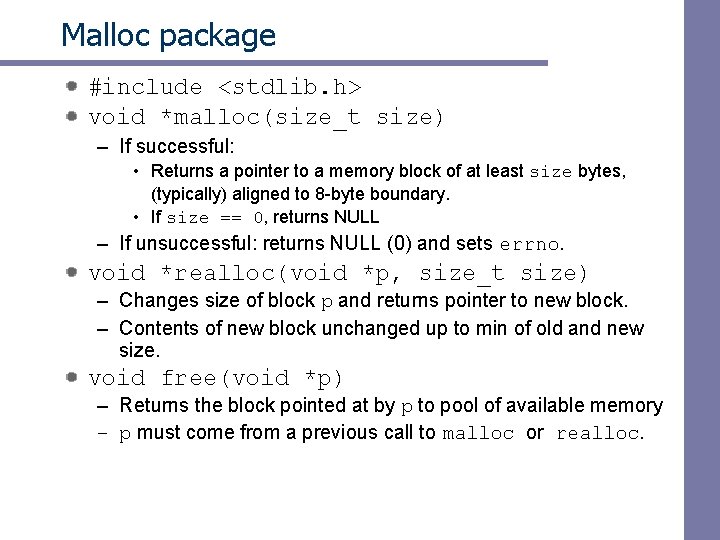

Dynamic memory allocation
[Figure: application → dynamic memory allocator → heap memory]
Explicit vs. implicit memory allocator
– Explicit: application allocates and frees space
  • E.g., malloc and free in C
– Implicit: application allocates, but does not free space
  • E.g., garbage collection in Java, ML, or Lisp
Allocation
– In both cases the memory allocator provides an abstraction of memory as a set of blocks
– Doles out free memory blocks to the application
Will discuss simple explicit memory allocation today

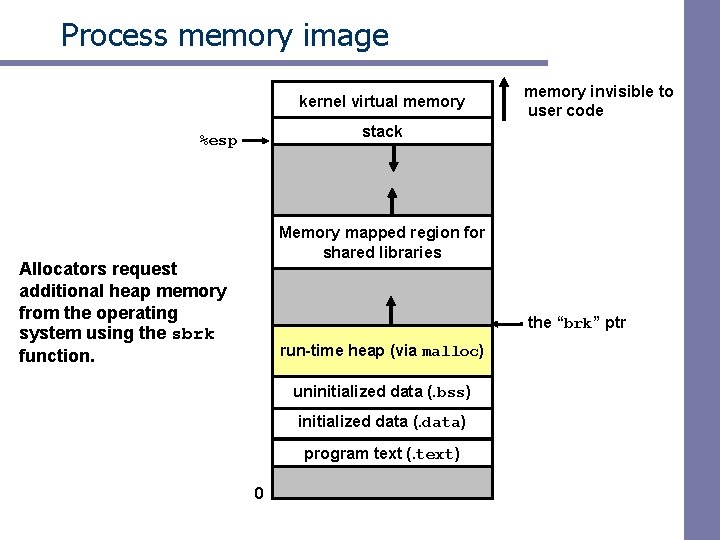

Process memory image
Allocators request additional heap memory from the operating system using the sbrk function.
[Figure: process memory image, top to bottom: kernel virtual memory (invisible to user code); stack (%esp); memory-mapped region for shared libraries; run-time heap (via malloc), whose top is the "brk" pointer; uninitialized data (.bss); initialized data (.data); program text (.text); address 0]

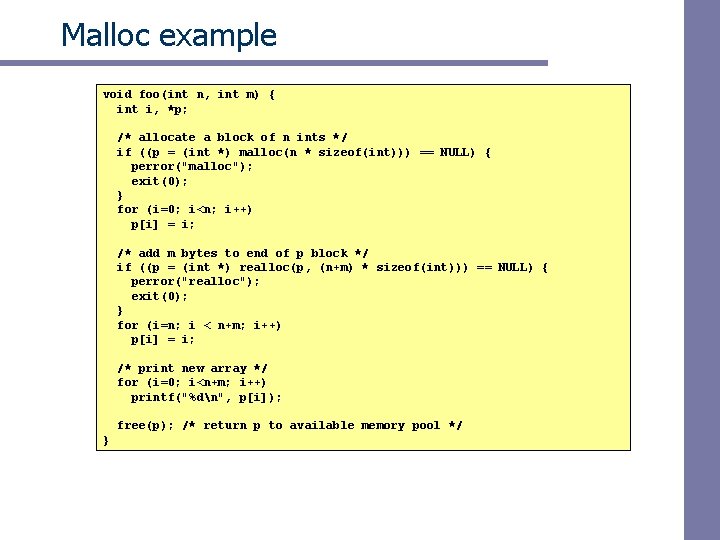

Malloc package

    #include <stdlib.h>

    void *malloc(size_t size)
– If successful:
  • Returns a pointer to a memory block of at least size bytes, (typically) aligned to an 8-byte boundary
  • If size == 0, the result is implementation-defined: either NULL or a unique pointer that must not be dereferenced but can be passed to free
– If unsuccessful: returns NULL (0) and sets errno

    void *realloc(void *p, size_t size)
– Changes the size of block p and returns a pointer to the new block
– Contents of the new block are unchanged up to the minimum of the old and new sizes

    void free(void *p)
– Returns the block pointed at by p to the pool of available memory
– p must come from a previous call to malloc or realloc

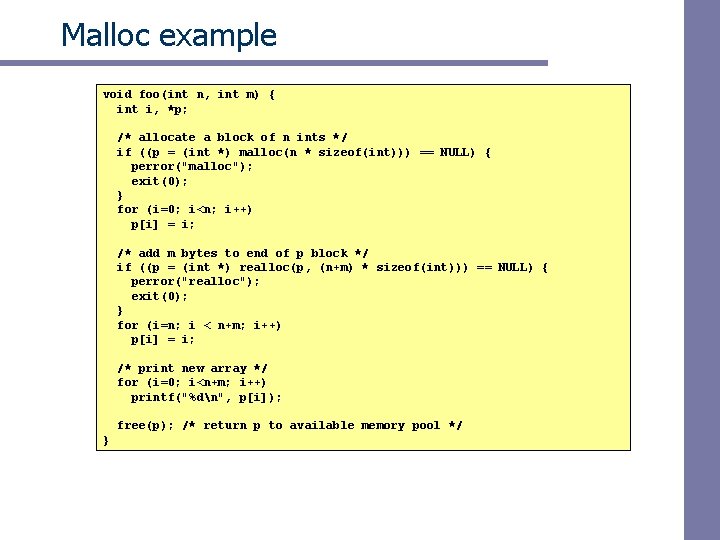

Malloc example

    #include <stdio.h>
    #include <stdlib.h>

    void foo(int n, int m) {
        int i, *p;

        /* allocate a block of n ints */
        if ((p = (int *) malloc(n * sizeof(int))) == NULL) {
            perror("malloc");
            exit(EXIT_FAILURE);
        }
        for (i = 0; i < n; i++)
            p[i] = i;

        /* grow the block by m ints */
        if ((p = (int *) realloc(p, (n + m) * sizeof(int))) == NULL) {
            perror("realloc");
            exit(EXIT_FAILURE);
        }
        for (i = n; i < n + m; i++)
            p[i] = i;

        /* print new array */
        for (i = 0; i < n + m; i++)
            printf("%d\n", p[i]);

        free(p); /* return p to available memory pool */
    }

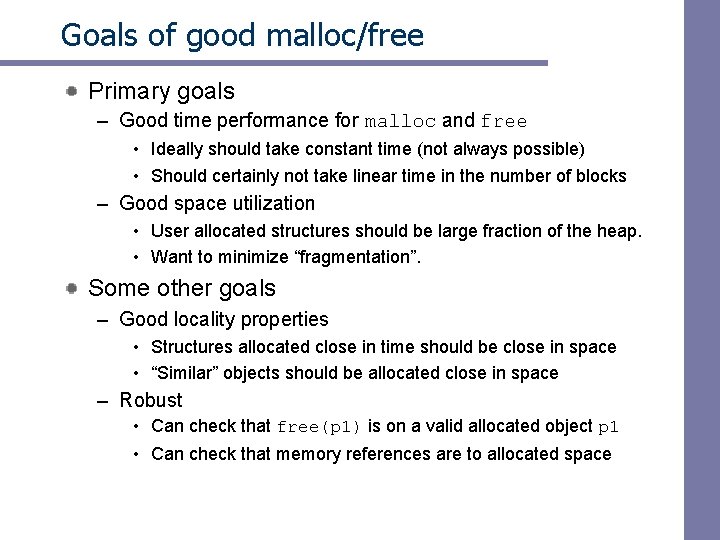

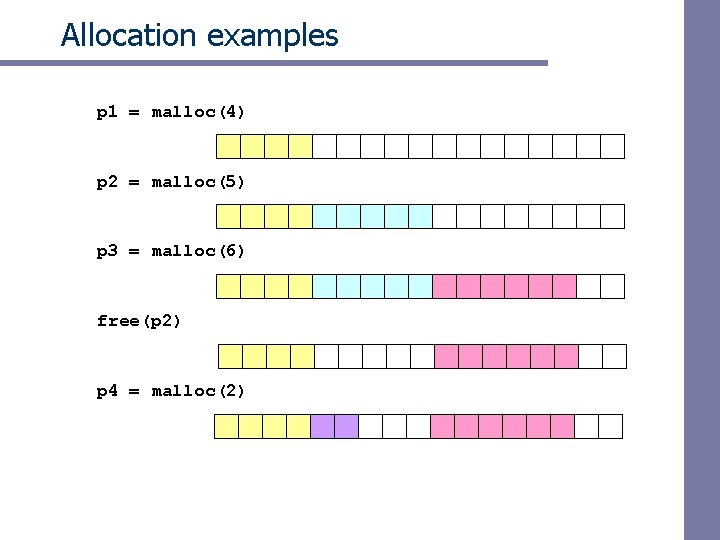

Allocation examples

    p1 = malloc(4)
    p2 = malloc(5)
    p3 = malloc(6)
    free(p2)
    p4 = malloc(2)

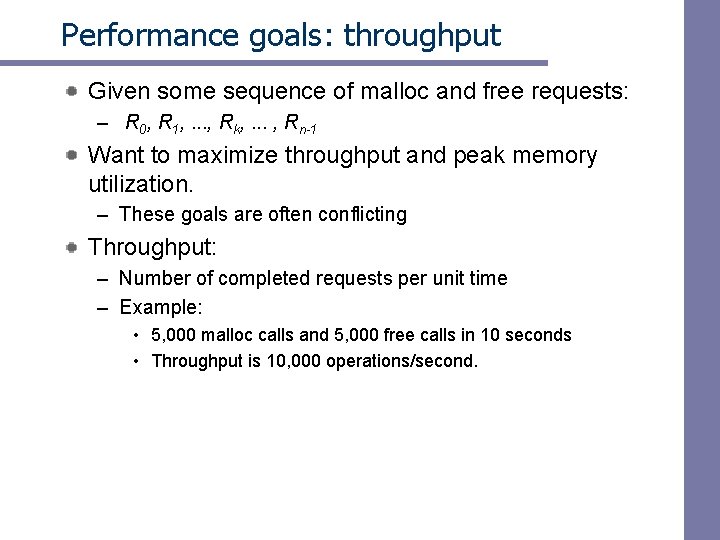

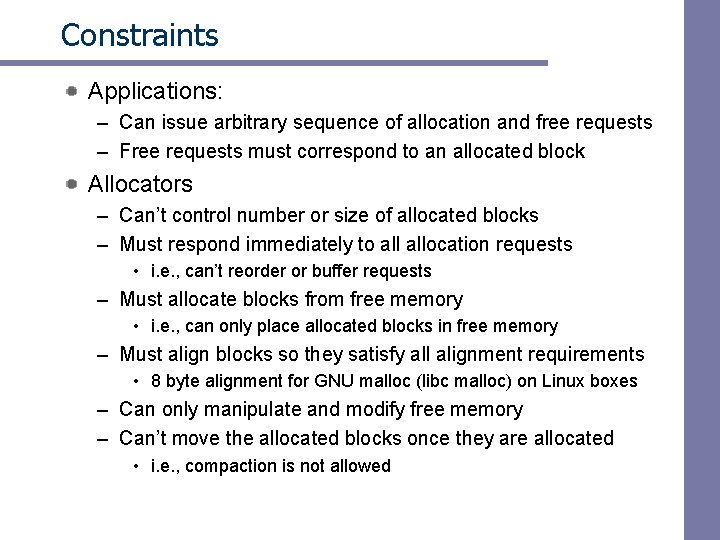

Constraints
Applications:
– Can issue an arbitrary sequence of allocation and free requests
– Free requests must correspond to an allocated block
Allocators:
– Can't control the number or size of allocated blocks
– Must respond immediately to allocation requests
  • i.e., can't reorder or buffer requests
– Must allocate blocks from free memory
  • i.e., can only place allocated blocks in free memory
– Must align blocks so they satisfy all alignment requirements
  • 8-byte alignment for GNU malloc (libc malloc) on Linux boxes
– Can only manipulate and modify free memory
– Can't move the allocated blocks once they are allocated
  • i.e., compaction is not allowed

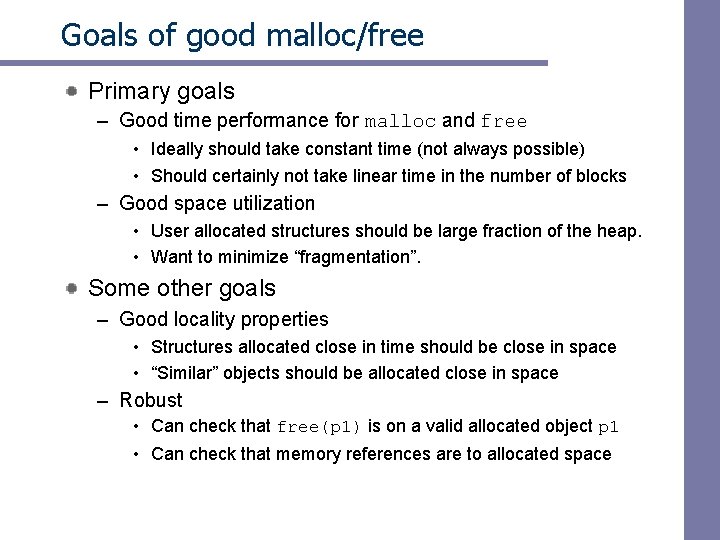

Goals of good malloc/free
Primary goals
– Good time performance for malloc and free
  • Ideally should take constant time (not always possible)
  • Should certainly not take linear time in the number of blocks
– Good space utilization
  • User-allocated structures should be a large fraction of the heap
  • Want to minimize "fragmentation"
Some other goals
– Good locality properties
  • Structures allocated close in time should be close in space
  • "Similar" objects should be allocated close in space
– Robust
  • Can check that free(p1) is on a valid allocated object p1
  • Can check that memory references are to allocated space

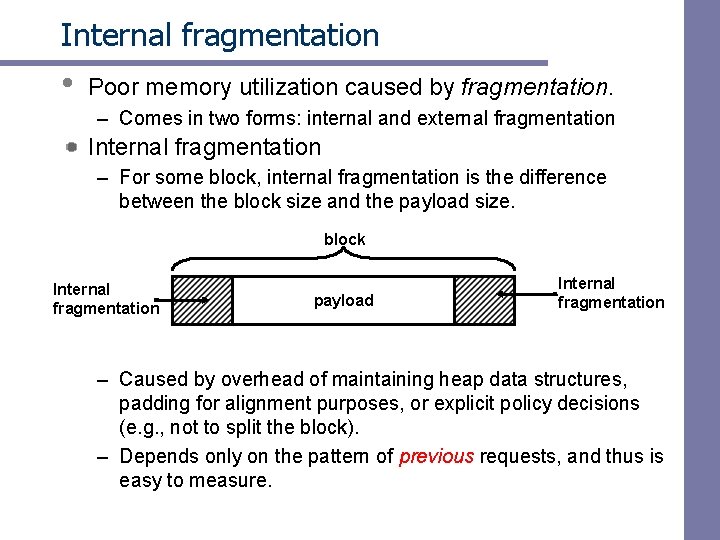

Performance goals: throughput
Given some sequence of malloc and free requests:
– $R_0, R_1, \ldots, R_k, \ldots, R_{n-1}$
Want to maximize throughput and peak memory utilization.
– These goals are often conflicting
Throughput:
– Number of completed requests per unit time
– Example:
  • 5,000 malloc calls and 5,000 free calls in 10 seconds
  • Throughput is 10,000 operations / 10 seconds = 1,000 operations/second

Performance goals: peak memory utilization
Given some sequence of malloc and free requests:
– $R_0, R_1, \ldots, R_k, \ldots, R_{n-1}$
Def: Aggregate payload $P_k$
– malloc(p) results in a block with a payload of p bytes
– After request $R_k$ has completed, the aggregate payload $P_k$ is the sum of currently allocated payloads
Def: Current heap size is denoted by $H_k$
– Assume that $H_k$ is monotonically nondecreasing
Def: Peak memory utilization
– After k requests, peak memory utilization is:
  • $U_k = \left( \max_{i<k} P_i \right) / H_k$
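The peak utilization definition translates directly into a few lines of C. This sketch assumes the caller has recorded the aggregate payload $P_i$ after each request and passes the current heap size $H_k$; the function name is hypothetical.

```c
#include <assert.h>
#include <stddef.h>

/* U_k = (max_{i<k} P_i) / H_k.
   payload[i] holds P_i; heap_size is H_k. Requires k >= 1 and H_k > 0. */
static double peak_utilization(const size_t *payload, size_t k, size_t heap_size) {
    assert(k >= 1 && heap_size > 0);
    size_t max_payload = 0;
    for (size_t i = 0; i < k; i++)
        if (payload[i] > max_payload)
            max_payload = payload[i];
    return (double)max_payload / (double)heap_size;
}
```

For aggregate payloads 16, 40, 24, 56, 32 bytes in a 64-byte heap, the peak utilization after all five requests is 56/64 = 0.875. Because only the maximum payload counts, freeing blocks never lowers $U_k$, while growing the heap does.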

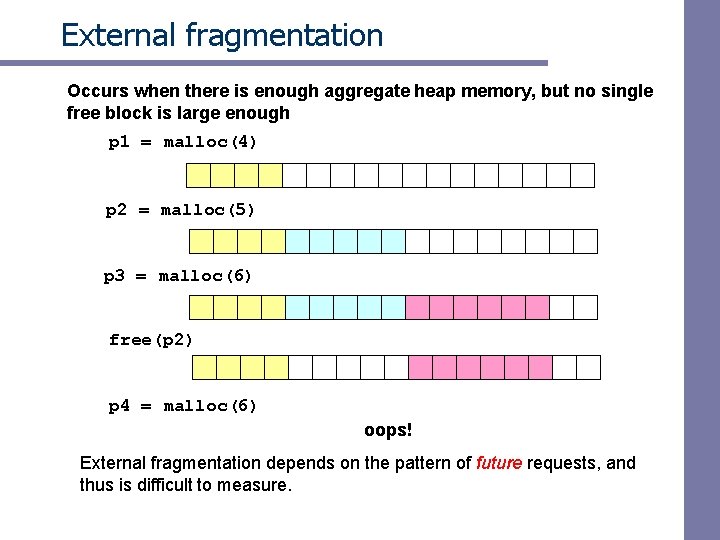

Internal fragmentation
Poor memory utilization is caused by fragmentation
– Comes in two forms: internal and external fragmentation
Internal fragmentation
– For some block, internal fragmentation is the difference between the block size and the payload size
[Figure: a block containing the payload, with internal fragmentation on either side of it]
– Caused by overhead of maintaining heap data structures, padding for alignment purposes, or explicit policy decisions (e.g., not to split the block)
– Depends only on the pattern of previous requests, and thus is easy to measure

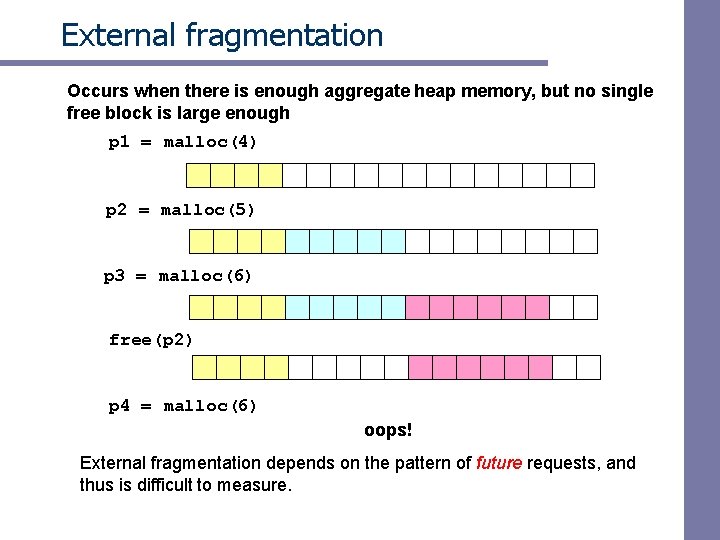

External fragmentation
Occurs when there is enough aggregate heap memory, but no single free block is large enough

    p1 = malloc(4)
    p2 = malloc(5)
    p3 = malloc(6)
    free(p2)
    p4 = malloc(6)    /* oops! */

External fragmentation depends on the pattern of future requests, and thus is difficult to measure.

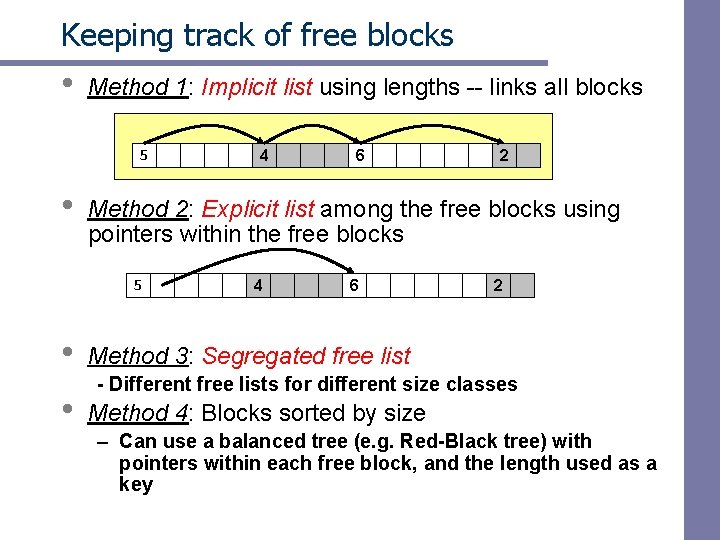

Implementation issues
– How do we know how much memory to free just given a pointer?
– How do we keep track of the free blocks?
– What do we do with the extra space when allocating a structure that is smaller than the free block it is placed in?
– How do we pick a block to use for allocation when many might fit?
– How do we reinsert a freed block?

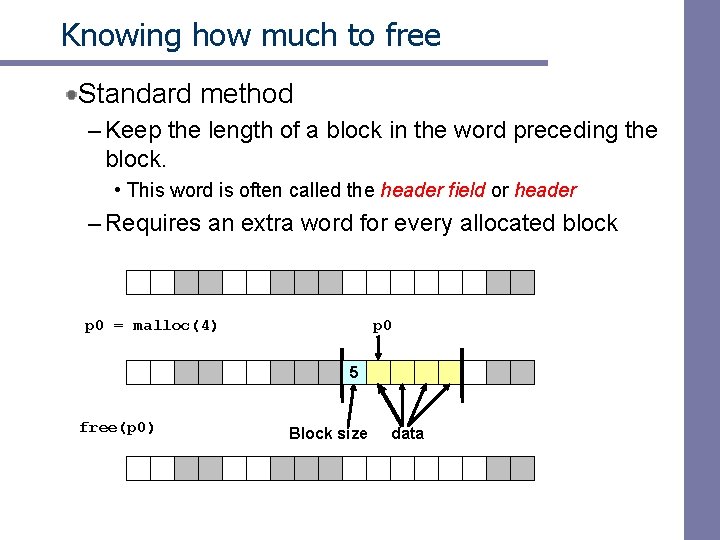

Knowing how much to free
Standard method
– Keep the length of a block in the word preceding the block
  • This word is often called the header field or header
– Requires an extra word for every allocated block
[Figure: p0 = malloc(4) returns p0 pointing to a 4-word data area preceded by a header word holding the block size, 5; free(p0) reads the size from that header]

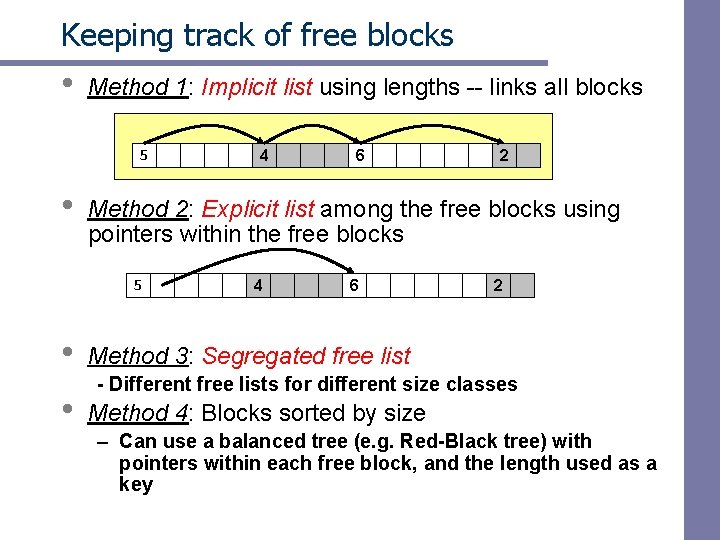

Keeping track of free blocks
Method 1: Implicit list using lengths; links all blocks
[Figure: blocks of sizes 5, 4, 6, 2, each reached from the previous one by its length]
Method 2: Explicit list among the free blocks using pointers within the free blocks
[Figure: the same blocks, with pointers linking only the free ones]
Method 3: Segregated free list
– Different free lists for different size classes
Method 4: Blocks sorted by size
– Can use a balanced tree (e.g., red-black tree) with pointers within each free block, and the length used as a key

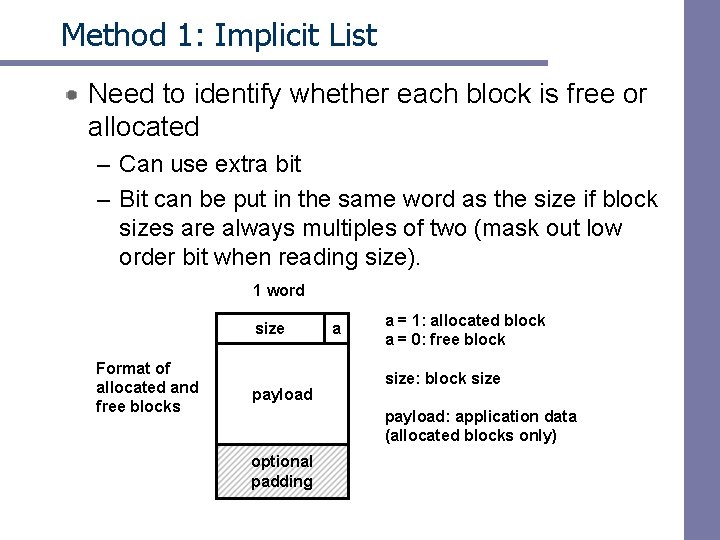

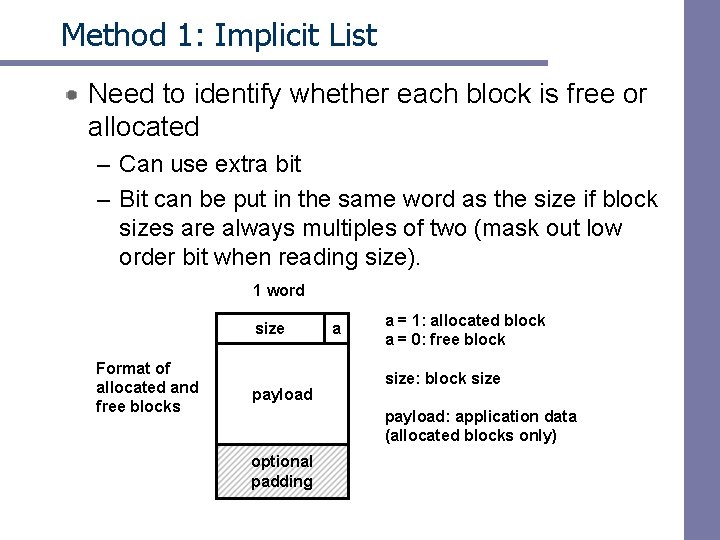

Method 1: Implicit list
Need to identify whether each block is free or allocated
– Can use an extra bit
– The bit can be put in the same word as the size if block sizes are always multiples of two (mask out the low-order bit when reading the size)
Format of allocated and free blocks: a 1-word header holding size and a, followed by the payload and optional padding
– a = 1: allocated block
– a = 0: free block
– size: block size
– payload: application data (allocated blocks only)
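The header encoding can be sketched as follows, assuming 32-bit header words and even block sizes so that bit 0 is free for the allocated flag. The helper names are hypothetical. The slides write the mask as `*p & -2`; for an unsigned word, `~(word_t)1` is the same mask.

```c
#include <assert.h>
#include <stdint.h>

typedef uint32_t word_t;

/* Build a header from an even size and an allocated flag. */
static word_t pack(word_t size, int allocated) {
    assert((size & 1) == 0);              /* low bit must be free for the flag */
    return size | (allocated ? 1u : 0u);
}

/* Read the size back by masking out the low-order bit. */
static word_t get_size(word_t header) { return header & ~(word_t)1; }

/* Read the allocated flag from the low-order bit. */
static int is_allocated(word_t header) { return (int)(header & 1); }
```

For example, `pack(6, 1)` is 7, and `get_size(7)` recovers 6.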

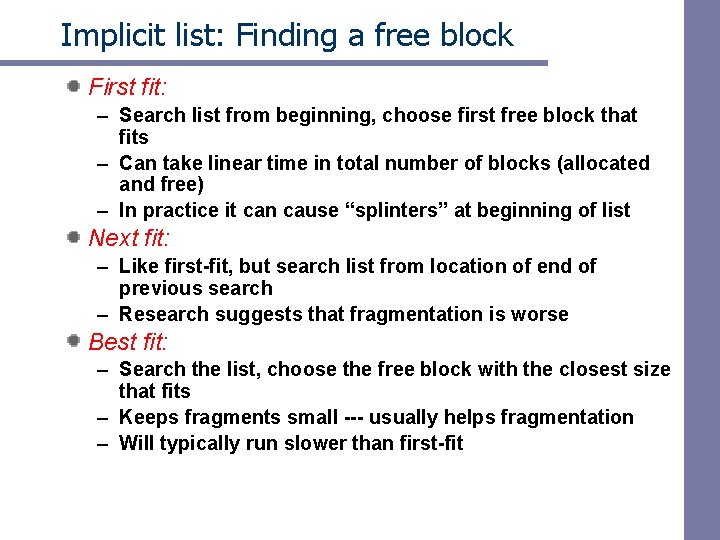

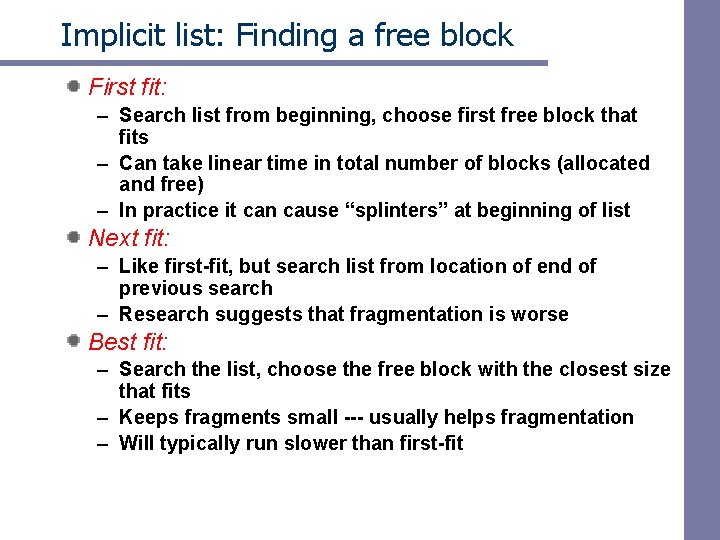

Implicit list: Finding a free block
First fit:
– Search the list from the beginning, choose the first free block that fits
– Can take linear time in the total number of blocks (allocated and free)
– In practice it can cause "splinters" at the beginning of the list
Next fit:
– Like first fit, but start searching where the previous search ended
– Research suggests that fragmentation is worse
Best fit:
– Search the list, choose the free block with the closest size that fits
– Keeps fragments small; usually helps fragmentation
– Will typically run slower than first fit
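First fit and best fit can be sketched over a toy heap held in an array of words, assuming the slides' layout: each block starts with a header whose value is the block size in words (header included) with the allocated flag in bit 0. The names and layout are illustrative; next fit would be first fit with the starting index remembered between calls.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

typedef uint32_t word_t;

/* len is the number of words needed, header included.
   Both searches return the index of the chosen header, or -1. */
static long first_fit(const word_t *heap, size_t nwords, word_t len) {
    for (size_t i = 0; i < nwords; i += heap[i] & ~(word_t)1) {
        assert((heap[i] & ~(word_t)1) != 0);   /* a zero size would loop forever */
        if (!(heap[i] & 1) && heap[i] >= len)  /* free and big enough */
            return (long)i;
    }
    return -1;
}

static long best_fit(const word_t *heap, size_t nwords, word_t len) {
    long best = -1;
    word_t best_size = 0;
    for (size_t i = 0; i < nwords; i += heap[i] & ~(word_t)1) {
        word_t size = heap[i] & ~(word_t)1;
        assert(size != 0);
        /* keep the smallest free block that still fits */
        if (!(heap[i] & 1) && size >= len && (best < 0 || size < best_size)) {
            best = (long)i;
            best_size = size;
        }
    }
    return best;
}
```

On a heap laid out as an allocated 4-word block, a free 6-word block, an allocated 2-word block, and a free 4-word block, a 3-word request goes to the 6-word block under first fit but to the 4-word block under best fit, which leaves the larger block intact for later requests.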

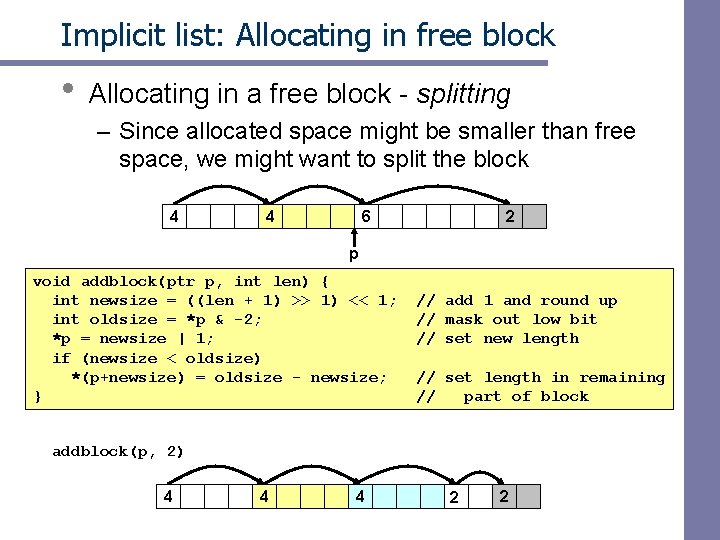

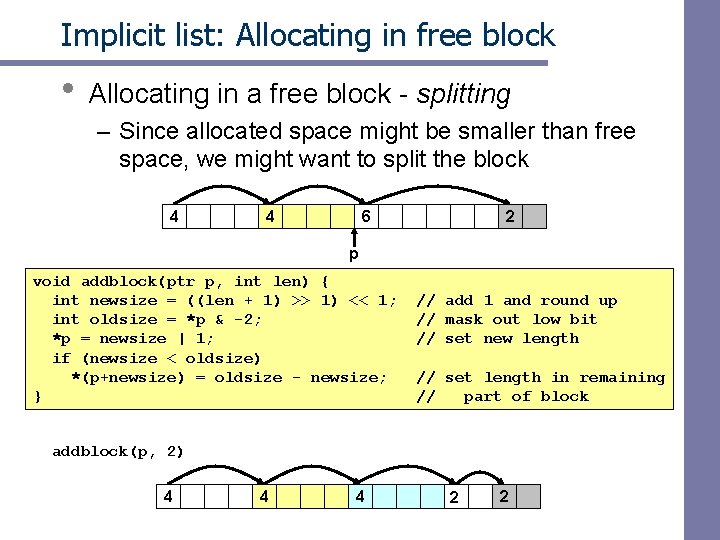

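Implicit list: Allocating in a free block
Allocating in a free block: splitting
– Since the allocated space might be smaller than the free space, we might want to split the block
[Figure: heap with blocks of sizes 4, 4, 6, 2, where p points to the free 6-word block; after addblock(p, 2) the heap holds blocks of sizes 4, 4, 4, 2, 2]

    typedef int *ptr;  /* points to a header word; sizes are counted in words */

    void addblock(ptr p, int len) {
        int newsize = ((len + 2) >> 1) << 1;  /* add 1 header word and round up to even */
        int oldsize = *p & -2;                /* mask out low bit */
        *p = newsize | 1;                     /* set new length, mark allocated */
        if (newsize < oldsize)
            *(p + newsize) = oldsize - newsize;  /* set length in remaining */
    }                                            /* part of block */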

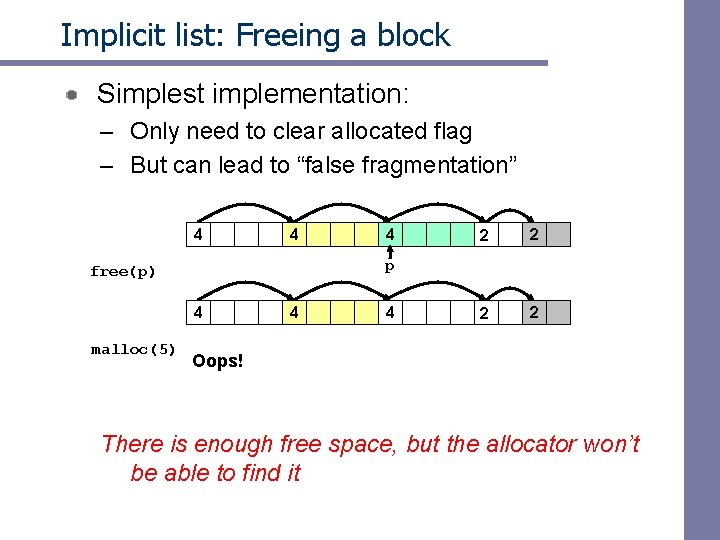

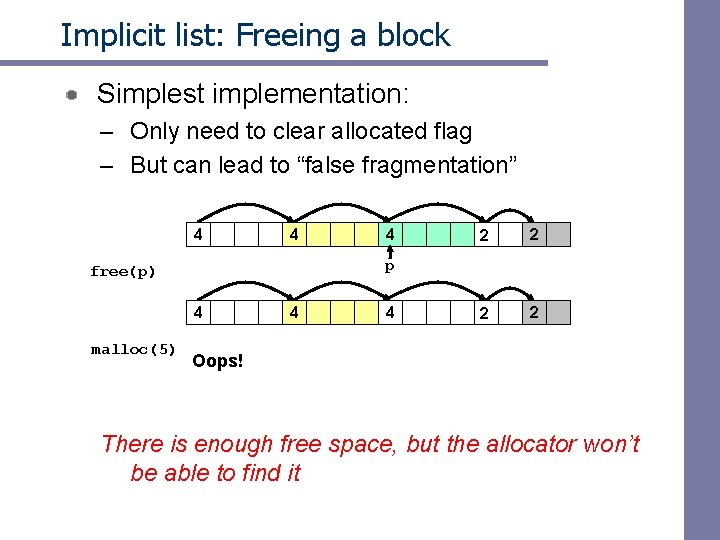

Implicit list: Freeing a block
Simplest implementation:
– Only need to clear the allocated flag
– But can lead to "false fragmentation"
[Figure: after free(p), the freed block and an adjacent free block together are large enough for malloc(5), but neither one alone is]
Oops! There is enough free space, but the allocator won't be able to find it

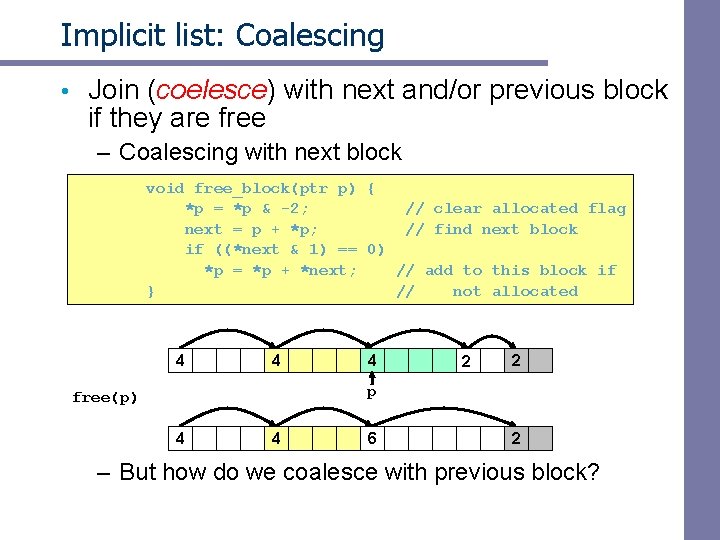

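Implicit list: Coalescing
Join (coalesce) with the next and/or previous block if they are free
– Coalescing with the next block:

    typedef int *ptr;  /* points to a header word; sizes are counted in words */

    void free_block(ptr p) {
        *p = *p & -2;             /* clear allocated flag */
        ptr next = p + *p;        /* find next block */
        if ((*next & 1) == 0)
            *p = *p + *next;      /* add to this block if */
    }                             /* not allocated */

[Figure: heap with blocks of sizes 4, 4, 4, 2, 2, where p points to the third block; after free(p) it merges with the following free 2-word block, leaving blocks of sizes 4, 4, 6, 2]
– But how do we coalesce with the previous block?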

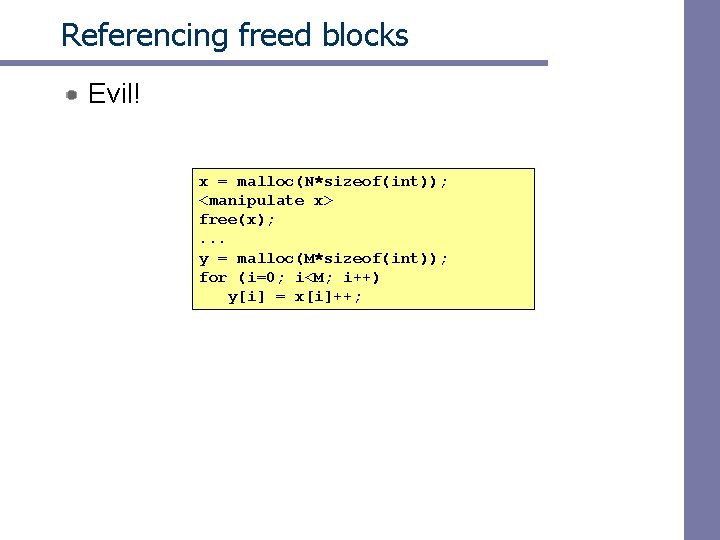

![Implicit list Bidirectional coalescing Boundary tags Knuth 73 Replicate sizeallocated word at Implicit list: Bidirectional coalescing • Boundary tags [Knuth 73] – Replicate size/allocated word at](https://slidetodoc.com/presentation_image_h/0c6337e05644041c9f997119f3f65636/image-56.jpg)

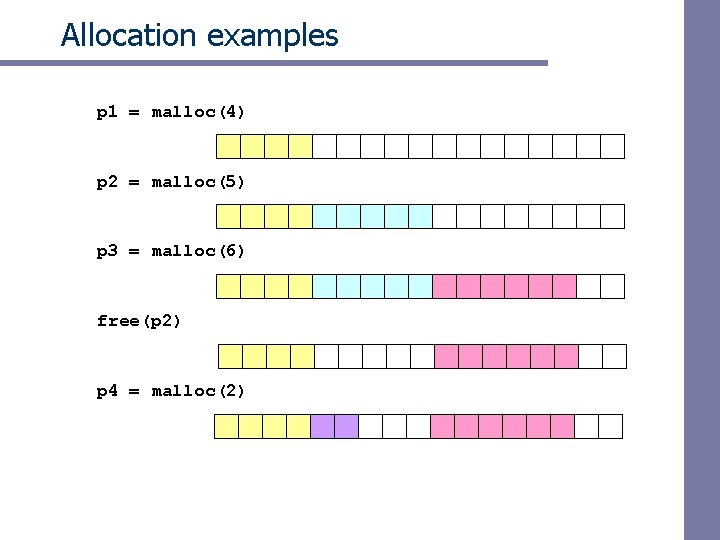

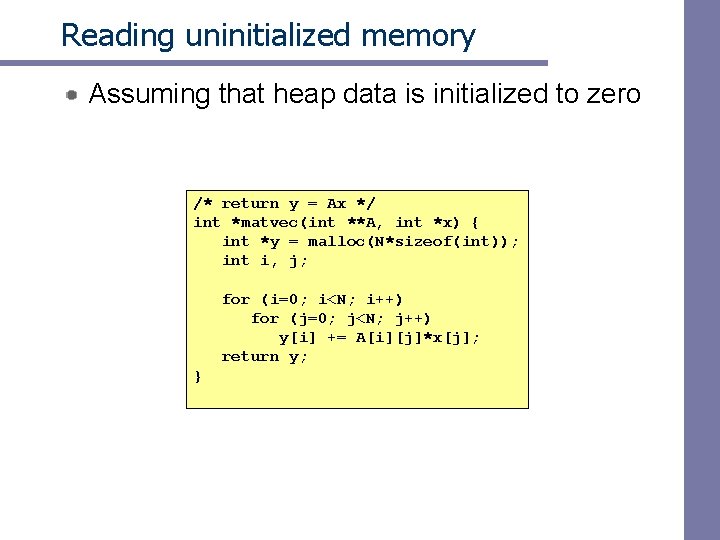

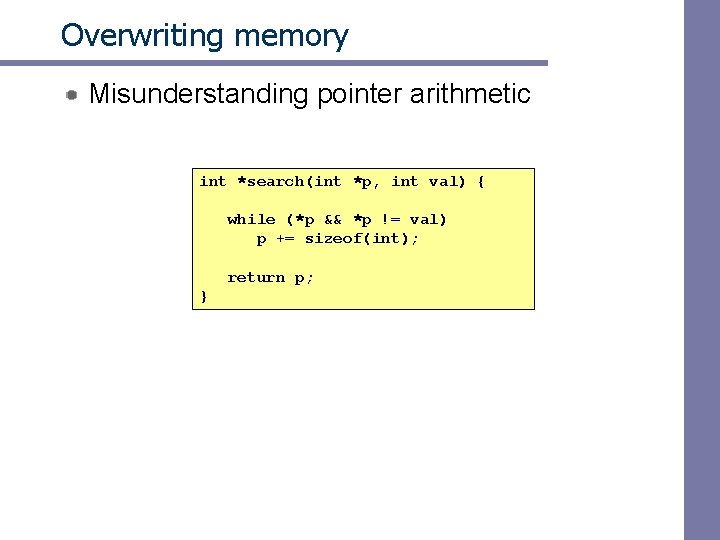

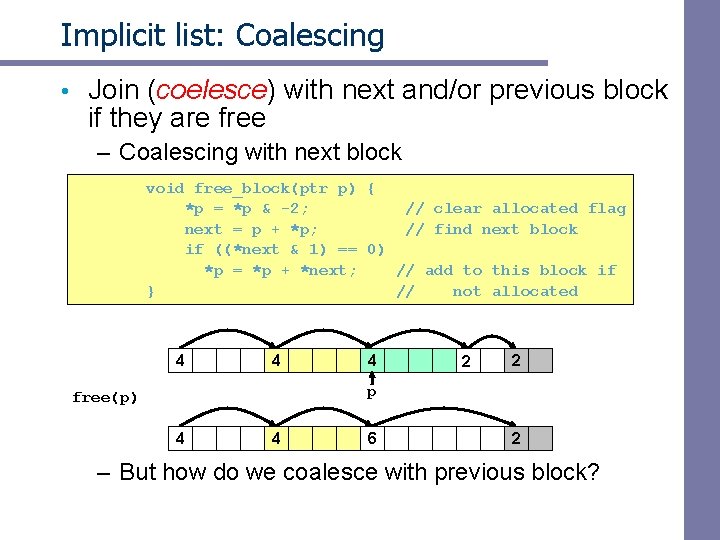

Implicit list: Bidirectional coalescing
Boundary tags [Knuth 73]
– Replicate the size/allocated word at the bottom of free blocks
– Allows us to traverse the "list" backwards, but requires extra space
– Important and general technique!
Format of allocated and free blocks: a 1-word header (size and a), then payload and padding, then a 1-word boundary tag (footer) repeating size and a
– a = 1: allocated block
– a = 0: free block
– size: total block size
– payload: application data (allocated blocks only)
[Figure: blocks of sizes 4, 6, and 4, each with its size recorded in both header and footer]

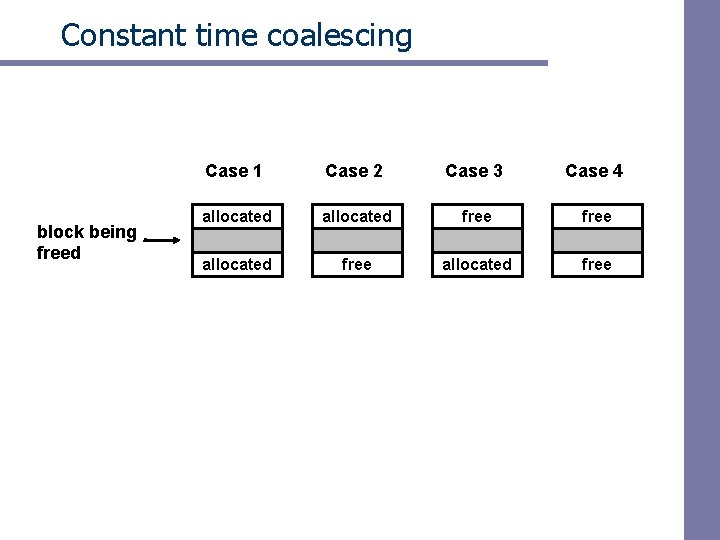

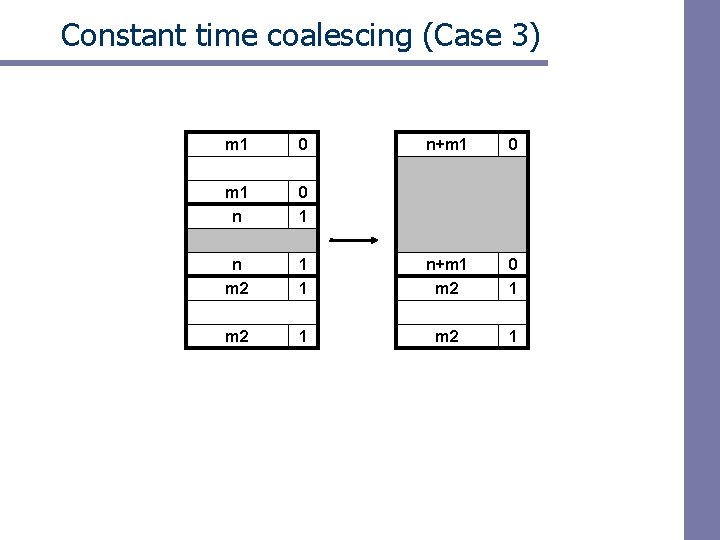

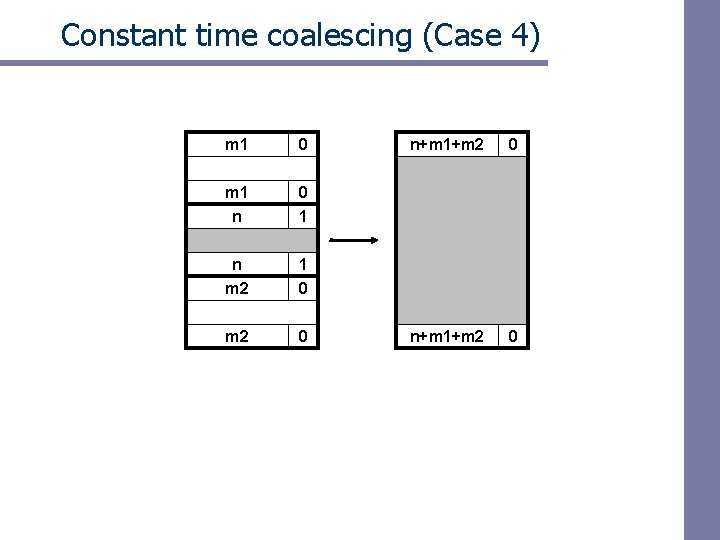

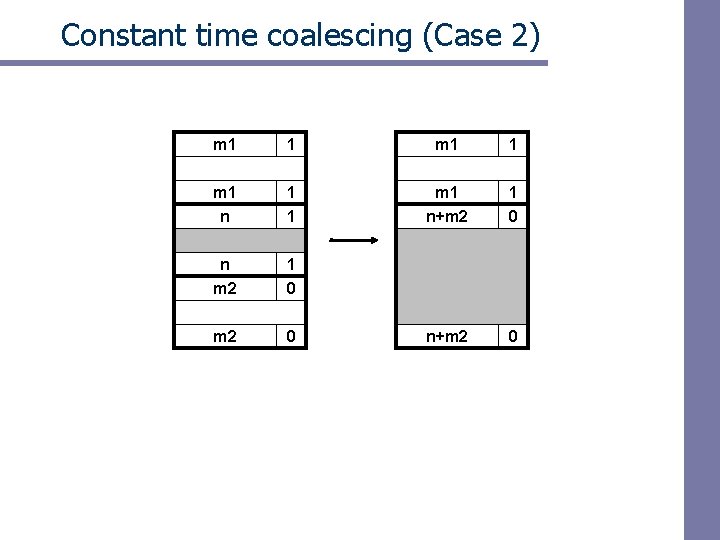

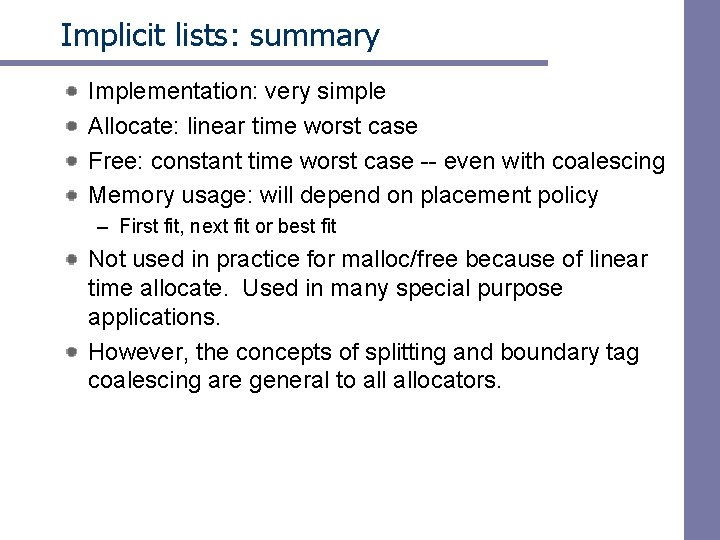

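Constant time coalescing
[Figure: the block being freed, shown in four cases according to whether its neighbors are allocated or free: Case 1, both allocated; Case 2, previous allocated and next free; Case 3, previous free and next allocated; Case 4, both free]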

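Constant time coalescing (Case 1)
Before: [m1 | 1] [n | 1] [m2 | 1]   (each block shows size | allocated bit, stored in its header and footer)
After:  [m1 | 1] [n | 0] [m2 | 1]
Only the freed block's header and footer change.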

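Constant time coalescing (Case 2)
Before: [m1 | 1] [n | 1] [m2 | 0]
After:  [m1 | 1] [n+m2 | 0]
The freed block absorbs the next block: its header and the next block's footer become n+m2 | 0.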

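Constant time coalescing (Case 3)
Before: [m1 | 0] [n | 1] [m2 | 1]
After:  [n+m1 | 0] [m2 | 1]
The previous block absorbs the freed block: its header and the freed block's footer become n+m1 | 0.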

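Constant time coalescing (Case 4)
Before: [m1 | 0] [n | 1] [m2 | 0]
After:  [n+m1+m2 | 0]
All three blocks merge: the previous block's header and the next block's footer become n+m1+m2 | 0.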
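The four cases above collapse into two independent checks once every block carries a footer. This sketch assumes a toy heap stored as an array of words, with blocks laid out back to back and a header and footer that each hold the size in words (both tags included) with the allocated flag in bit 0. It has no prologue or epilogue blocks, so it checks the heap bounds explicitly; all names are hypothetical.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

typedef uint32_t word_t;

static word_t blk_size(word_t w)  { return w & ~(word_t)1; }
static word_t blk_alloc(word_t w) { return w & 1; }

/* Write matching header and footer for the block whose header is at b. */
static void set_tags(word_t *heap, size_t b, word_t size, word_t alloc) {
    heap[b] = size | alloc;               /* header */
    heap[b + size - 1] = size | alloc;    /* footer (boundary tag) */
}

/* Free the block at b and merge with free neighbors in O(1).
   Returns the header index of the resulting free block. */
static size_t free_and_coalesce(word_t *heap, size_t nwords, size_t b) {
    word_t size = blk_size(heap[b]);
    assert(blk_alloc(heap[b]) && size >= 2);
    size_t next = b + size;
    int prev_free = b > 0 && !blk_alloc(heap[b - 1]);        /* previous footer */
    int next_free = next < nwords && !blk_alloc(heap[next]); /* next header */

    if (next_free)                  /* Cases 2 and 4: absorb the next block */
        size += blk_size(heap[next]);
    if (prev_free) {                /* Cases 3 and 4: absorb the previous block */
        word_t prev_size = blk_size(heap[b - 1]);
        b -= prev_size;
        size += prev_size;
    }
    set_tags(heap, b, size, 0);     /* Case 1 only clears the flag */
    return b;
}
```

The previous block is found through its footer at `heap[b - 1]`, which is exactly what the boundary tag buys: no backward list traversal is needed.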

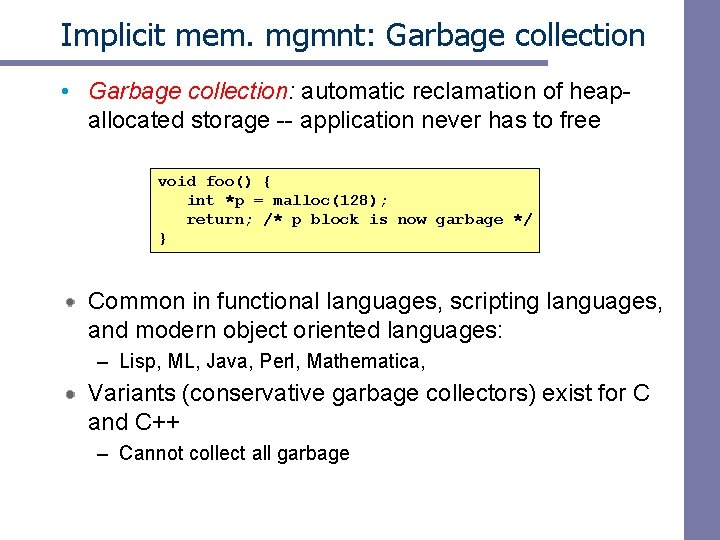

Summary of key allocator policies
Placement policy:
– First fit, next fit, best fit, etc.
– Trades off lower throughput for less fragmentation
Splitting policy:
– When do we go ahead and split free blocks?
– How much internal fragmentation are we willing to tolerate?
Coalescing policy:
– Immediate coalescing: coalesce adjacent blocks each time free is called
– Deferred coalescing: try to improve performance of free by deferring coalescing until needed, e.g.,
  • Coalesce as you scan the free list for malloc
  • Coalesce when the amount of external fragmentation reaches some threshold

Implicit lists: summary
– Implementation: very simple
– Allocate: linear time worst case
– Free: constant time worst case, even with coalescing
– Memory usage: will depend on placement policy
  • First fit, next fit, or best fit
Not used in practice for malloc/free because of linear-time allocation. Used in many special-purpose applications.
However, the concepts of splitting and boundary tag coalescing are general to allocators.

Implicit memory management: Garbage collection
Garbage collection: automatic reclamation of heap-allocated storage; the application never has to free

    void foo() {
        int *p = malloc(128);
        return; /* p block is now garbage */
    }

Common in functional languages, scripting languages, and modern object-oriented languages:
– Lisp, ML, Java, Perl, Mathematica
Variants (conservative garbage collectors) exist for C and C++
– Cannot collect all garbage

Garbage collection
How does the memory manager know when memory can be freed?
– In general we cannot know what is going to be used in the future, since it depends on conditionals
– But we can tell that certain blocks cannot be used if there are no pointers to them
Need to make certain assumptions about pointers
– Memory manager can distinguish pointers from non-pointers
– All pointers point to the start of a block

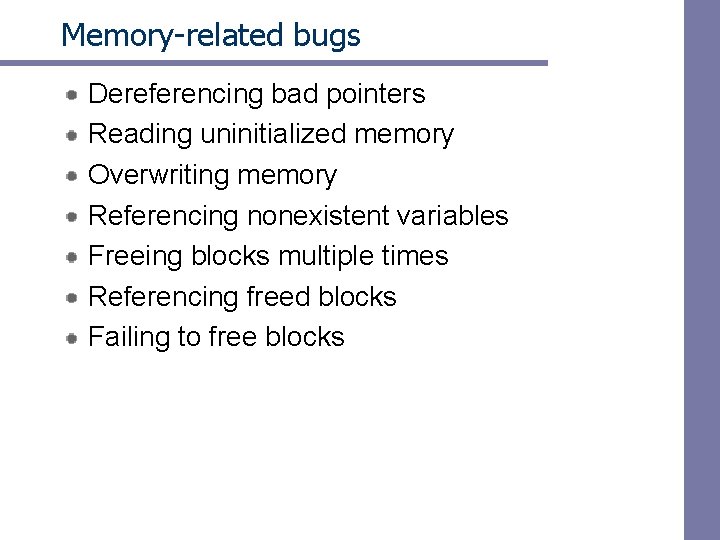

Memory as a graph
We view memory as a directed graph
– Each block is a node in the graph
– Each pointer is an edge in the graph
– Locations not in the heap that contain pointers into the heap are called root nodes (e.g., registers, locations on the stack, global variables)
[Figure: root nodes pointing into a graph of heap nodes, some reachable and some not reachable (garbage)]
A node (block) is reachable if there is a path from any root to that node.
Non-reachable nodes are garbage (never needed by the application).

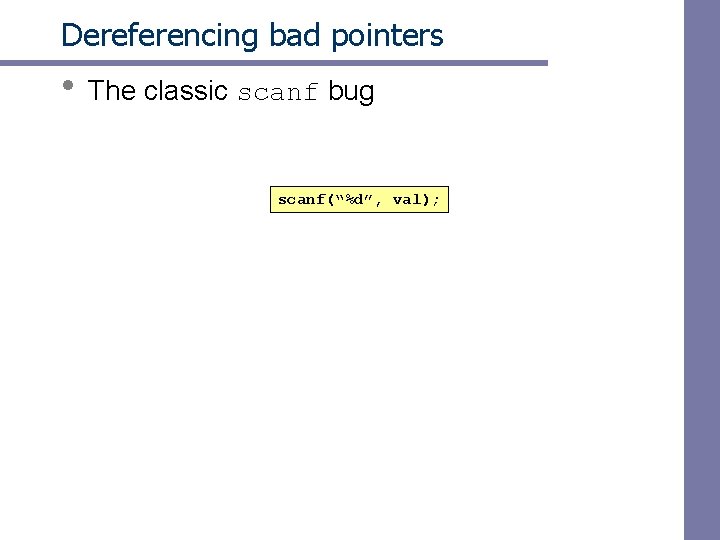

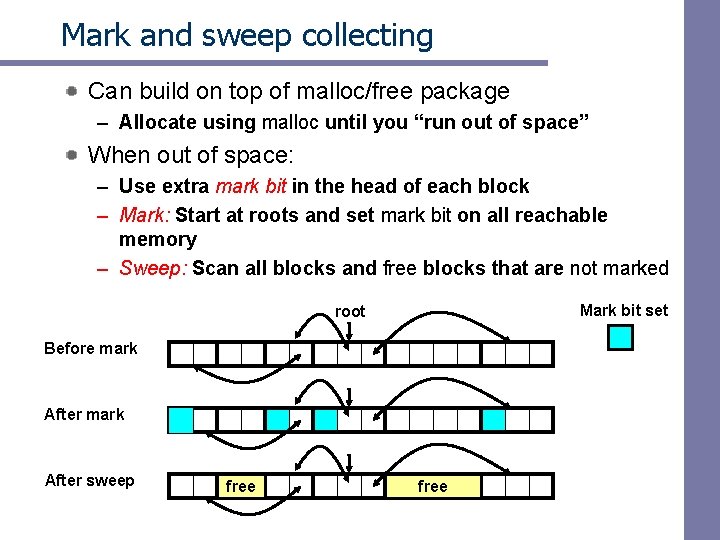

Mark and sweep collecting
Can build on top of a malloc/free package
– Allocate using malloc until you "run out of space"
When out of space:
– Use an extra mark bit in the header of each block
– Mark: start at the roots and set the mark bit on all reachable memory
– Sweep: scan all blocks and free blocks that are not marked
[Figure: a heap before mark, after mark (mark bits set on blocks reachable from the root), and after sweep (unmarked blocks freed)]
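A toy mark-and-sweep collector can make the two phases concrete. It assumes a hypothetical heap of fixed-size objects whose pointer fields are stored as indices (-1 for null), so the reachability graph is explicit; a collector built on malloc/free, as on the slide, would instead scan block payloads for values that look like pointers. The mark phase uses recursive depth-first search, which a production collector would avoid for deep graphs.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>

#define HEAP_OBJS 8

struct obj {
    bool allocated;
    bool marked;     /* the extra mark bit */
    int ptr[2];      /* outgoing edges: heap indices, -1 for null */
};

/* Mark phase: set the mark bit on everything reachable from object i. */
static void mark(struct obj *heap, int i) {
    if (i < 0)
        return;
    assert(i < HEAP_OBJS);
    if (!heap[i].allocated || heap[i].marked)
        return;                      /* not a block, or already visited */
    heap[i].marked = true;
    for (int k = 0; k < 2; k++)
        mark(heap, heap[i].ptr[k]);
}

/* Mark from every root, then sweep. Returns the number of blocks freed. */
static int mark_and_sweep(struct obj *heap, const int *roots, int nroots) {
    for (int r = 0; r < nroots; r++)
        mark(heap, roots[r]);
    int freed = 0;
    for (int i = 0; i < HEAP_OBJS; i++) {
        if (heap[i].allocated && !heap[i].marked) {
            heap[i].allocated = false;   /* unreachable: garbage */
            freed++;
        }
        heap[i].marked = false;          /* clear for the next collection */
    }
    return freed;
}
```

Because marking starts only from the roots, an unreachable cycle (two blocks pointing at each other) is still collected.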

Memory-related bugs
– Dereferencing bad pointers
– Reading uninitialized memory
– Overwriting memory
– Referencing nonexistent variables
– Freeing blocks multiple times
– Referencing freed blocks
– Failing to free blocks

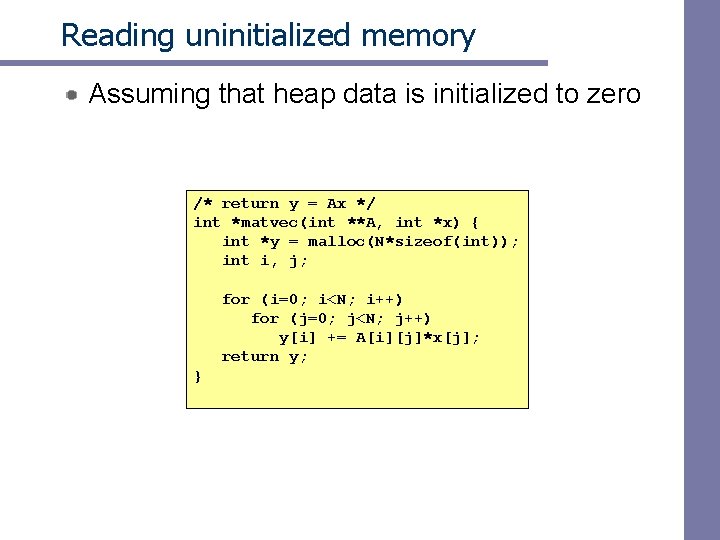

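Dereferencing bad pointers
The classic scanf bug:

    scanf("%d", val);

scanf needs the address of val; passing its value makes scanf write through whatever integer val happens to hold, treated as a pointer.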
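The fix is to pass the address of `val`. This sketch uses `sscanf` instead of `scanf` only so it can run without reading standard input; the conversion rules are the same. `parse_int` is a hypothetical helper name.

```c
#include <assert.h>
#include <stdio.h>

/* Parse one int from text into *out. Returns 0 on success, -1 otherwise. */
static int parse_int(const char *text, int *out) {
    int val;
    assert(text != NULL && out != NULL);
    if (sscanf(text, "%d", &val) != 1)   /* &val, not val */
        return -1;
    *out = val;
    return 0;
}
```

Checking the return value (the number of items converted) also catches input that is not a number.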

Reading uninitialized memory
Assuming that heap data is initialized to zero

    /* return y = Ax */
    int *matvec(int **A, int *x) {
        int *y = malloc(N*sizeof(int));
        int i, j;

        for (i=0; i<N; i++)
            for (j=0; j<N; j++)
                y[i] += A[i][j]*x[j];
        return y;
    }

malloc does not zero the block, so each y[i] accumulates onto whatever garbage was already there.
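One fix is to allocate `y` with `calloc`, which zero-fills the block (an explicit loop setting `y[i] = 0` would also work). In this sketch `N` becomes a parameter `n` so the code is self-contained; otherwise it mirrors the slide.

```c
#include <assert.h>
#include <stdlib.h>

/* return y = Ax for an n x n matrix A; caller frees y */
static int *matvec(int n, int **A, const int *x) {
    assert(n > 0);
    int *y = calloc((size_t)n, sizeof(int));   /* zero-filled */
    if (y == NULL)
        return NULL;
    for (int i = 0; i < n; i++)
        for (int j = 0; j < n; j++)
            y[i] += A[i][j] * x[j];
    return y;
}
```

For A = [[1, 2], [3, 4]] and x = [5, 6], the result is [17, 39].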

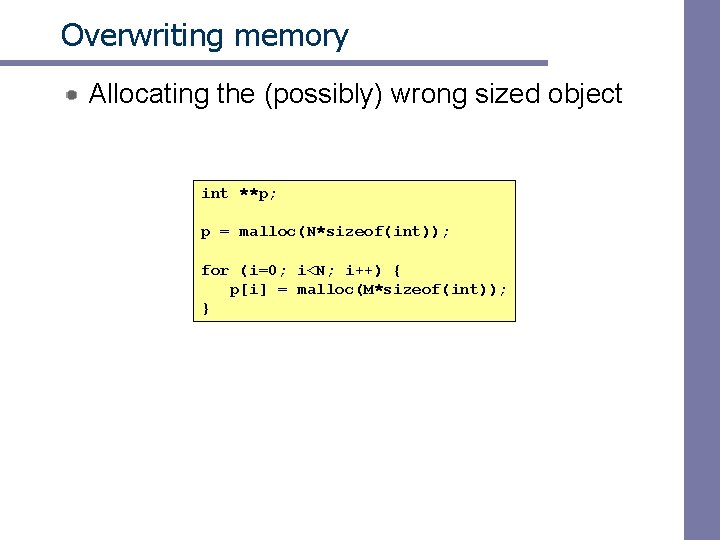

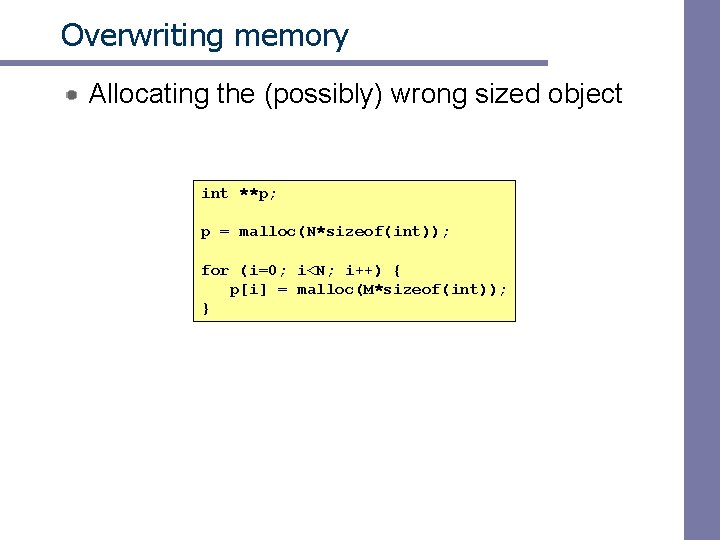

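Overwriting memory
Allocating the (possibly) wrong sized object

    int **p;

    p = malloc(N*sizeof(int));

    for (i=0; i<N; i++) {
        p[i] = malloc(M*sizeof(int));
    }

p holds N pointers, but the first malloc requests space for N ints; where pointers are larger than ints, the loop writes past the end of the block.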

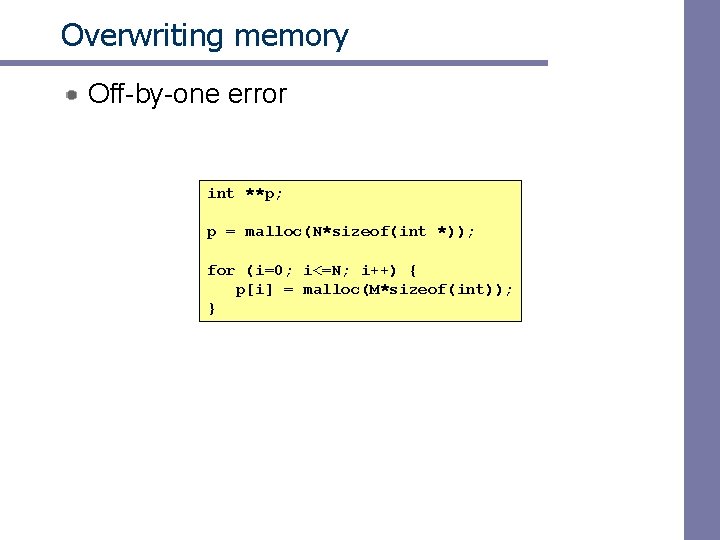

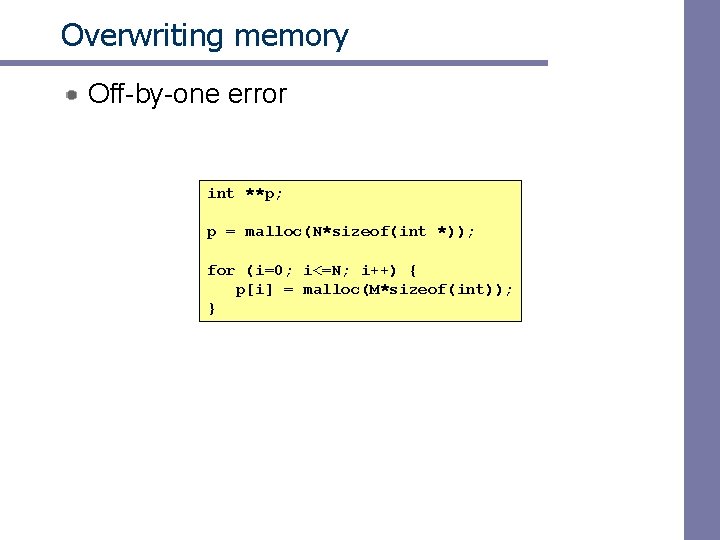

Overwriting memory
Off-by-one error

    int **p;

    p = malloc(N*sizeof(int *));

    for (i=0; i<=N; i++) {
        p[i] = malloc(M*sizeof(int));
    }

The loop runs N+1 times, so the last iteration writes p[N], one element past the end of the array.
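A corrected allocation that avoids both of the preceding bugs, sketched with hypothetical helper names: the pointer array is sized with `sizeof *p` (an `int *`), the loop stops at `i < n`, and partial allocations are released if `malloc` fails.

```c
#include <assert.h>
#include <stdlib.h>

/* Allocate an n x m array of ints as n row pointers. Returns NULL on failure. */
static int **alloc_matrix(size_t n, size_t m) {
    assert(n > 0 && m > 0);
    int **p = malloc(n * sizeof *p);          /* n pointers, not n ints */
    if (p == NULL)
        return NULL;
    for (size_t i = 0; i < n; i++) {          /* i < n, not i <= n */
        p[i] = malloc(m * sizeof **p);
        if (p[i] == NULL) {
            while (i-- > 0)                   /* release rows already allocated */
                free(p[i]);
            free(p);
            return NULL;
        }
    }
    return p;
}

static void free_matrix(int **p, size_t n) {
    for (size_t i = 0; i < n; i++)
        free(p[i]);
    free(p);
}
```

Writing `sizeof *p` and `sizeof **p` ties each size to the variable's type, so the allocation stays correct if the element type changes.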

![Overwriting memory Not checking the max string size char s8 int i getss Overwriting memory Not checking the max string size char s[8]; int i; gets(s); /*](https://slidetodoc.com/presentation_image_h/0c6337e05644041c9f997119f3f65636/image-73.jpg)

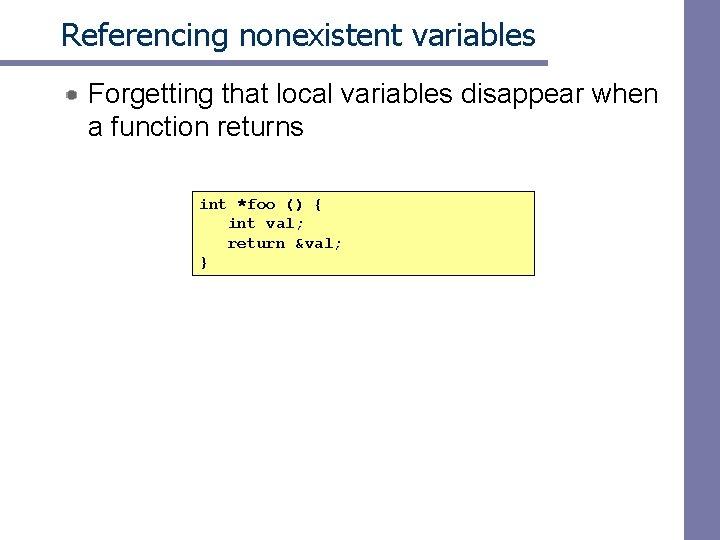

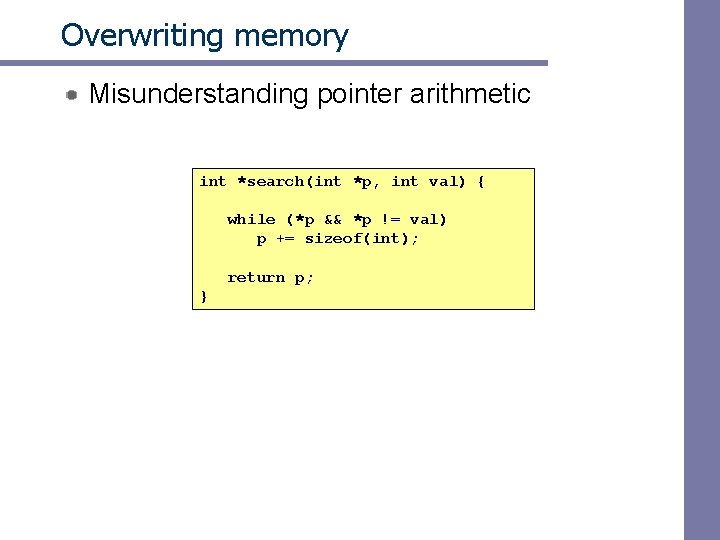

Overwriting memory
Not checking the max string size

    char s[8];
    int i;

    gets(s); /* reads "123456789" from stdin */

The nine characters plus the terminating '\0' need 10 bytes, but s holds only 8.
Basis for classic buffer overflow attacks
– 1988 Internet worm
– Modern attacks on Web servers
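`gets` cannot know the size of its buffer and was removed from the C standard in C11. A bounded alternative is `fgets`, which stores at most `size - 1` characters plus the terminating `'\0'`. The wrapper below is a sketch; its name and its newline-stripping behavior are choices made here, not a standard API.

```c
#include <assert.h>
#include <limits.h>
#include <stdio.h>
#include <string.h>

/* Read one line into s (at most size-1 chars), dropping a trailing newline.
   Returns s, or NULL on end-of-file or error. */
static char *read_line(char *s, size_t size, FILE *in) {
    assert(size > 0 && size <= INT_MAX);
    if (fgets(s, (int)size, in) == NULL)
        return NULL;
    s[strcspn(s, "\n")] = '\0';
    return s;
}
```

With `char s[8]` and the input "123456789", `read_line(s, sizeof s, stdin)` stores "1234567"; the remaining characters stay unread in the stream instead of overwriting memory.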

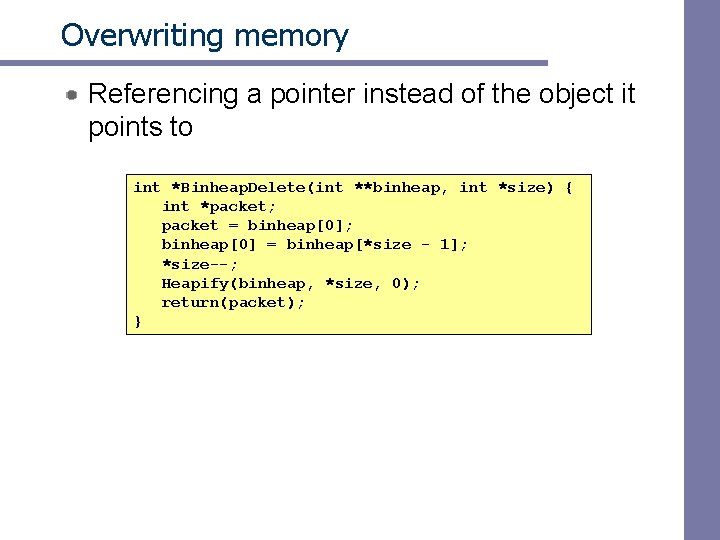

Overwriting memory
Referencing a pointer instead of the object it points to

    int *BinheapDelete(int **binheap, int *size) {
        int *packet;
        packet = binheap[0];
        binheap[0] = binheap[*size - 1];
        *size--;
        Heapify(binheap, *size, 0);
        return(packet);
    }

*size-- parses as *(size--): it decrements the pointer size rather than the integer it points to.
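The fix is to parenthesize: `(*size)--`. In this sketch `Heapify` is replaced by an empty stub so the code compiles on its own; a real binary heap would restore the heap order there.

```c
#include <assert.h>

/* Hypothetical stub standing in for the slide's Heapify. */
static void Heapify(int **binheap, int size, int i) {
    (void)binheap; (void)size; (void)i;
}

static int *BinheapDelete(int **binheap, int *size) {
    assert(*size > 0);
    int *packet = binheap[0];
    binheap[0] = binheap[*size - 1];
    (*size)--;                  /* decrement the count, not the pointer */
    Heapify(binheap, *size, 0);
    return packet;
}
```

Compilers can often flag the original with a warning such as "value computed is not used", since the dereference in `*size--` has no effect.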

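Overwriting memory
Misunderstanding pointer arithmetic

    int *search(int *p, int val) {
        while (*p && *p != val)
            p += sizeof(int);
        return p;
    }

Pointer arithmetic is already scaled by the element size, so p += sizeof(int) advances p by sizeof(int) ints (4 ints, or 16 bytes, when int is 4 bytes), skipping elements.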
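The fix is to advance by one element with `p++`. Like the slide's version, this sketch assumes the array ends with a 0 sentinel; without one, the loop runs off the end.

```c
#include <assert.h>
#include <stddef.h>

/* Return a pointer to the first element equal to val, or to the 0 sentinel. */
static int *search(int *p, int val) {
    assert(p != NULL);
    while (*p && *p != val)
        p++;                /* one element, whatever sizeof(int) is */
    return p;
}
```

For `int a[] = {3, 1, 4, 1, 5, 0}`, `search(a, 4)` returns `&a[2]`, and a value that is absent yields a pointer to the sentinel `&a[5]`.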

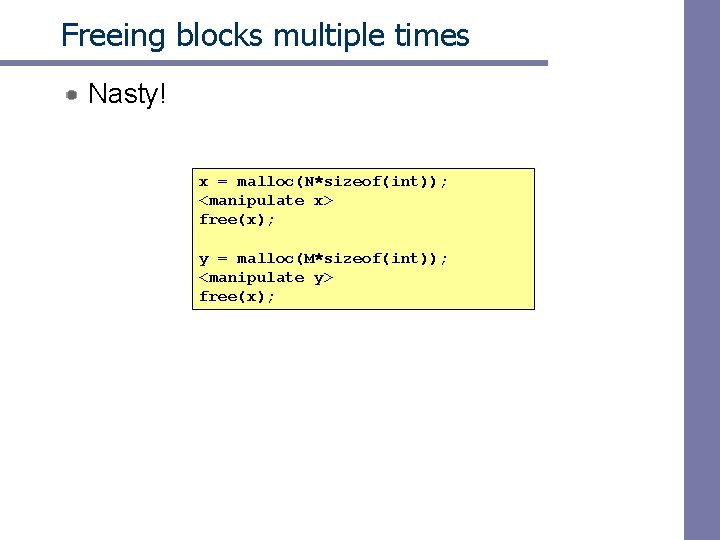

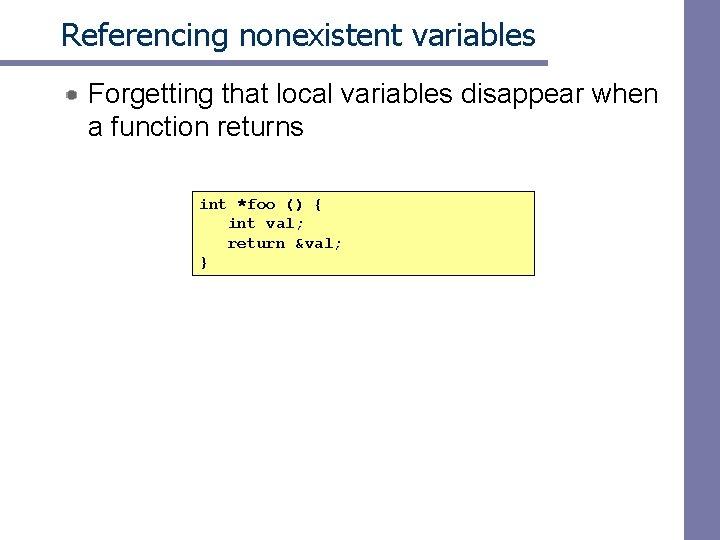

Referencing nonexistent variables
Forgetting that local variables disappear when a function returns

    int *foo () {
        int val;

        return &val;
    }

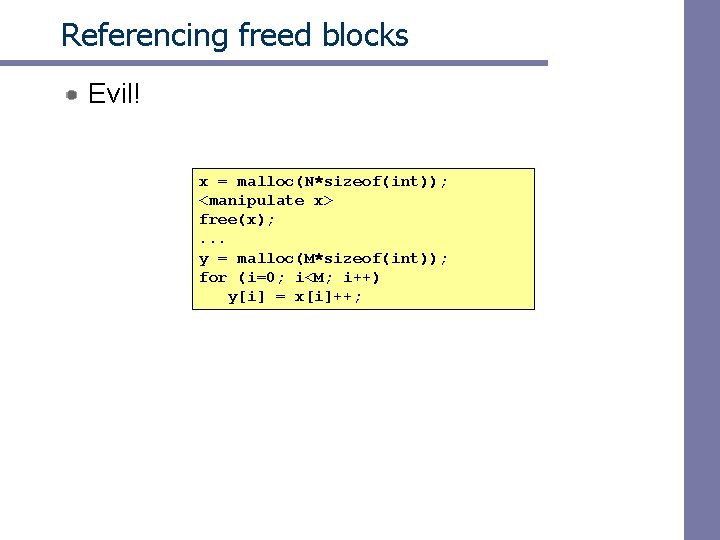

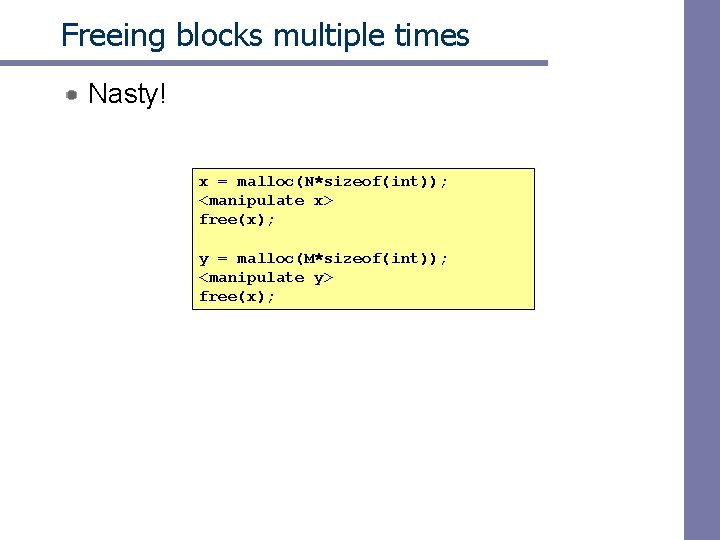

Freeing blocks multiple times
Nasty!

    x = malloc(N*sizeof(int));
    <manipulate x>
    free(x);

    y = malloc(M*sizeof(int));
    <manipulate y>
    free(x);

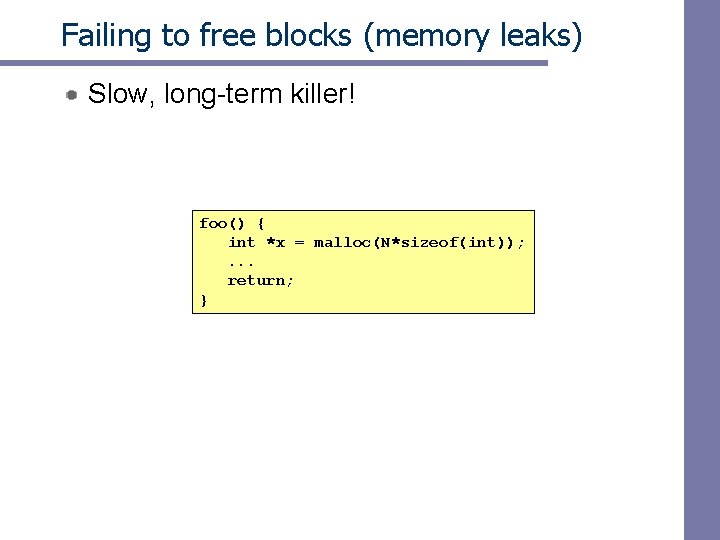

Referencing freed blocks
Evil!

    x = malloc(N*sizeof(int));
    <manipulate x>
    free(x);
    ...
    y = malloc(M*sizeof(int));
    for (i=0; i<M; i++)
        y[i] = x[i]++;

Failing to free blocks (memory leaks)
Slow, long-term killer!

    void foo() {
        int *x = malloc(N*sizeof(int));
        ...
        return;
    }
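The fix is to release the block on every path out of the function. In this sketch `N` is an arbitrary placeholder, and the slide's elided work is replaced by filling and summing the array so the function has something to return.

```c
#include <assert.h>
#include <stdlib.h>

#define N 16   /* placeholder size */

static int foo(void) {
    int *x = malloc(N * sizeof(int));
    if (x == NULL)
        return -1;
    for (int i = 0; i < N; i++)     /* stand-in for the elided work */
        x[i] = i;
    int sum = 0;
    for (int i = 0; i < N; i++)
        sum += x[i];
    free(x);                        /* release before returning */
    return sum;
}
```

Every early return added later needs the same `free`, which is why C code often funnels cleanup through a single exit point.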