Virtual Memory and Address Translation

- Slides: 59

Virtual Memory and Address Translation

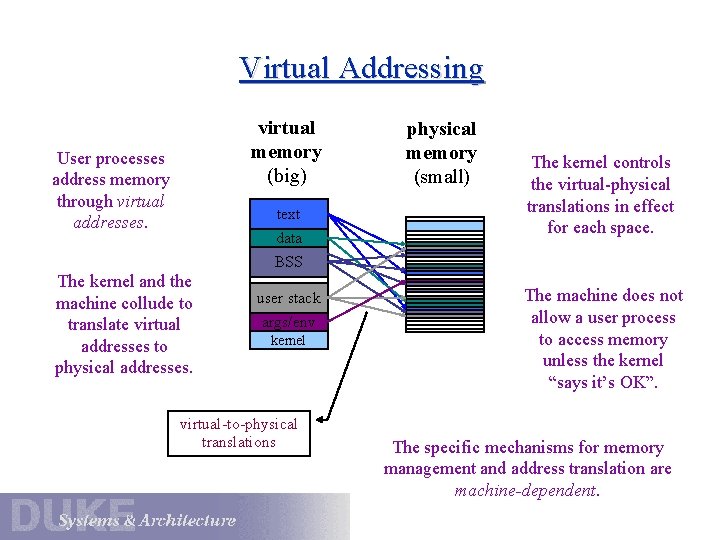

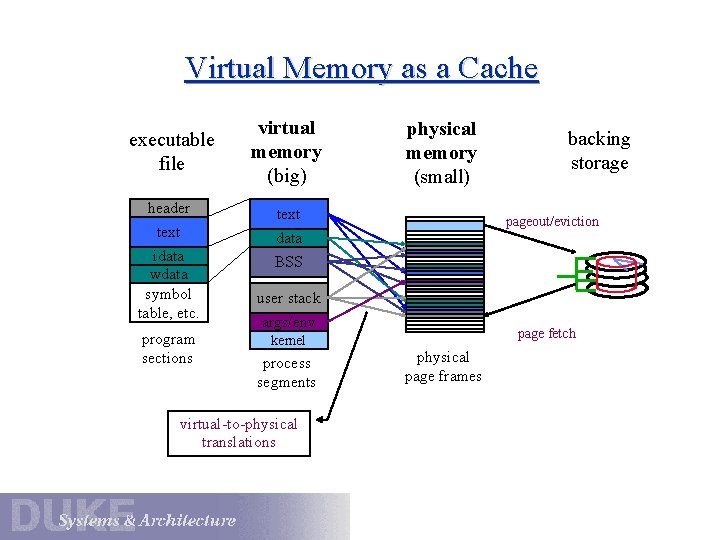

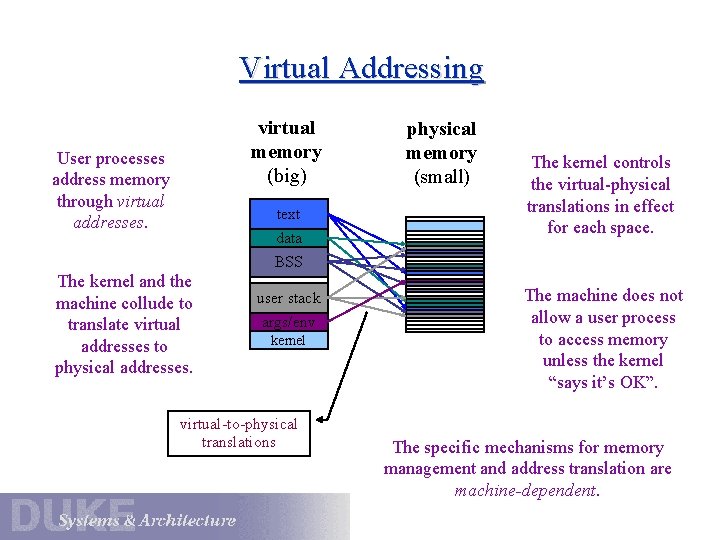

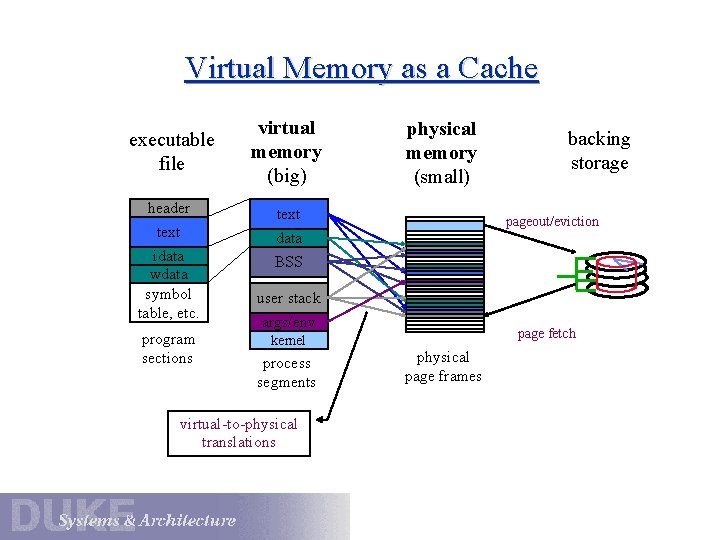

Virtual Addressing

• User processes address memory through virtual addresses.
• The kernel and the machine collude to translate virtual addresses to physical addresses.
• The kernel controls the virtual-physical translations in effect for each space.
• The machine does not allow a user process to access memory unless the kernel “says it’s OK”.
• The specific mechanisms for memory management and address translation are machine-dependent.

[Figure: a big virtual memory (text, data, BSS, user stack, args/env, kernel) mapped through virtual-to-physical translations onto a small physical memory.]

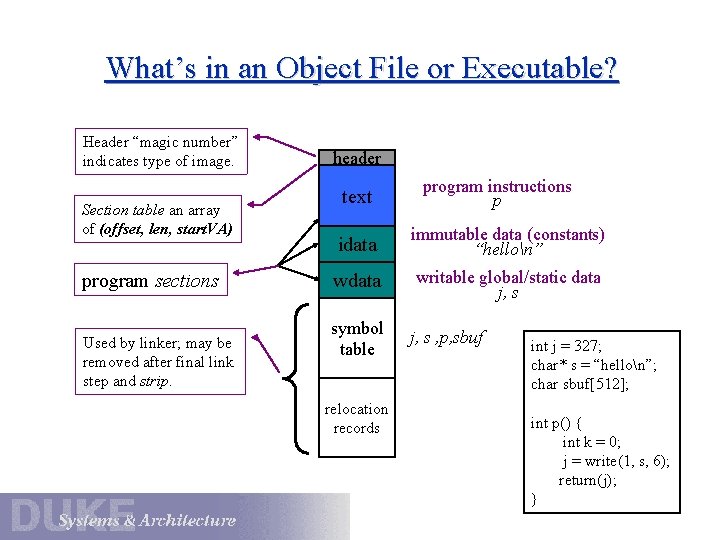

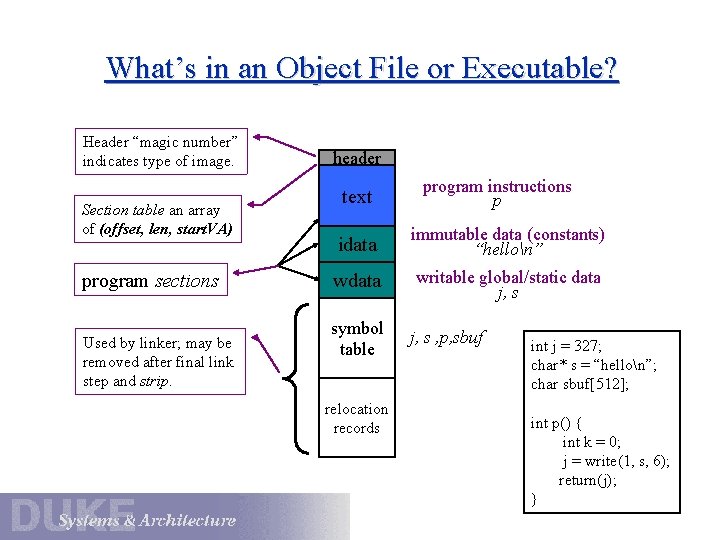

What’s in an Object File or Executable?

• header: a “magic number” indicates the type of image. The section table is an array of (offset, len, start VA) entries, one per program section.
• text: program instructions (p).
• idata: immutable data (constants), e.g., “hello\n”.
• wdata: writable global/static data (j, s).
• symbol table and relocation records (j, s, p, sbuf): used by the linker; may be removed after the final link step and strip.

Example program:

    int j = 327;
    char* s = "hello\n";
    char sbuf[512];

    int p() {
        int k = 0;
        j = write(1, s, 6);
        return(j);
    }

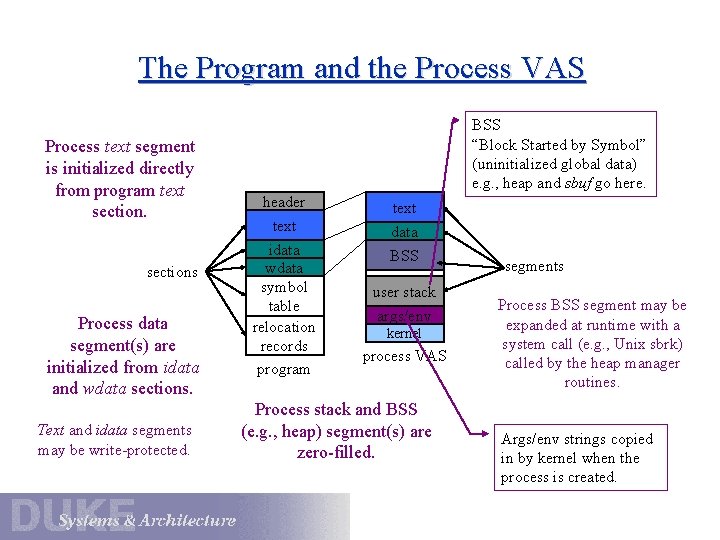

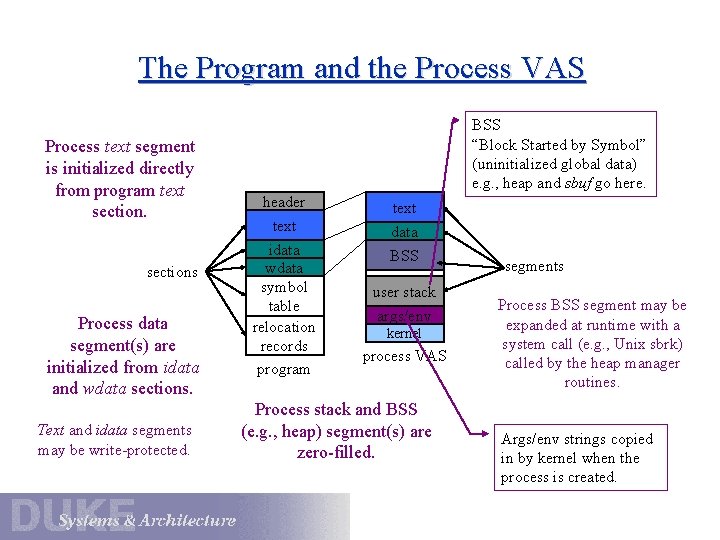

The Program and the Process VAS

• The process text segment is initialized directly from the program text section.
• Process data segment(s) are initialized from the idata and wdata sections.
• Text and idata segments may be write-protected.
• BSS (“Block Started by Symbol”) holds uninitialized global data; e.g., heap and sbuf go here.
• Process stack and BSS (e.g., heap) segment(s) are zero-filled.
• The process BSS segment may be expanded at runtime with a system call (e.g., Unix sbrk) called by the heap manager routines.
• Args/env strings are copied in by the kernel when the process is created.

[Figure: program sections (header, text, idata, wdata, symbol table, relocation records) mapped onto process VAS segments (text, data, BSS, user stack, args/env, kernel).]

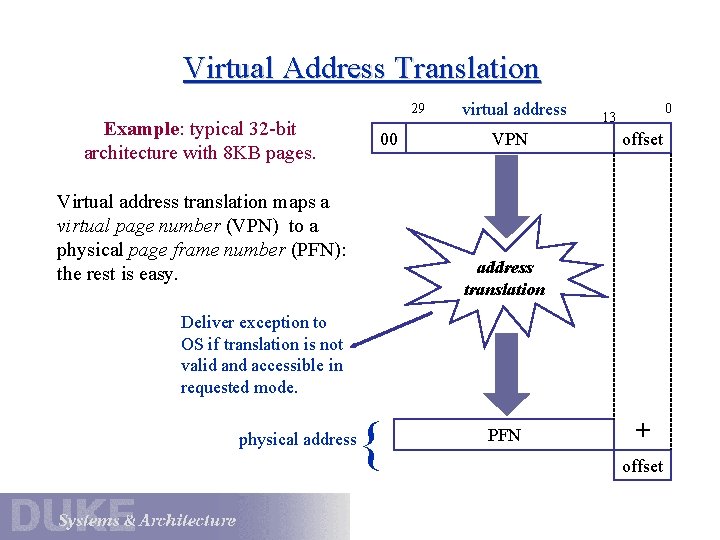

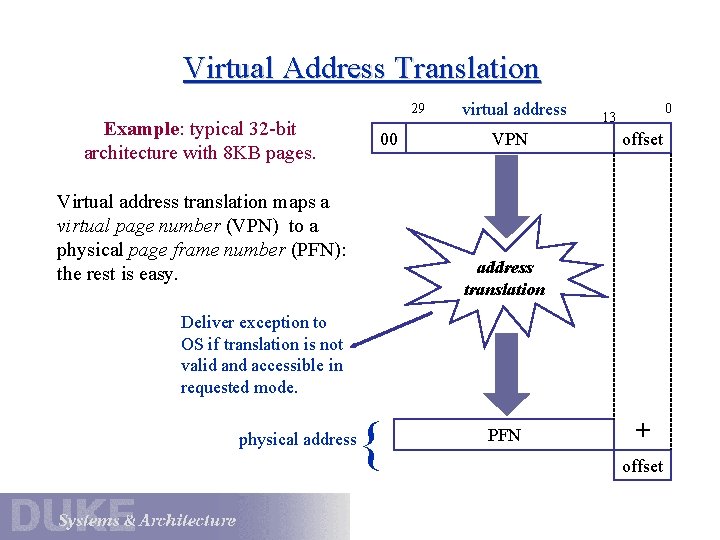

Virtual Address Translation

Example: a typical 32-bit architecture with 8 KB pages.

• A virtual address consists of a virtual page number (VPN) in the high-order bits and a 13-bit page offset in the low-order bits (8 KB = 2^13 bytes).
• Virtual address translation maps a VPN to a physical page frame number (PFN): the rest is easy.
• The physical address is the PFN followed by the unchanged offset.
• The machine delivers an exception to the OS if the translation is not valid and accessible in the requested mode.
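The VPN/offset split can be sketched in a few lines of Python. This is only an illustration of the arithmetic for the 8 KB page example on the slide; the names `split` and `join` are made up for this sketch.

```python
PAGE_SHIFT = 13                  # 8 KB pages -> 13 offset bits
PAGE_SIZE = 1 << PAGE_SHIFT
OFFSET_MASK = PAGE_SIZE - 1

def split(vaddr):
    """Return (VPN, offset) for a virtual address."""
    return vaddr >> PAGE_SHIFT, vaddr & OFFSET_MASK

def join(pfn, offset):
    """Form a physical address from a frame number and a page offset."""
    return (pfn << PAGE_SHIFT) | offset
```

Translation replaces only the VPN with a PFN; the offset passes through unchanged.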

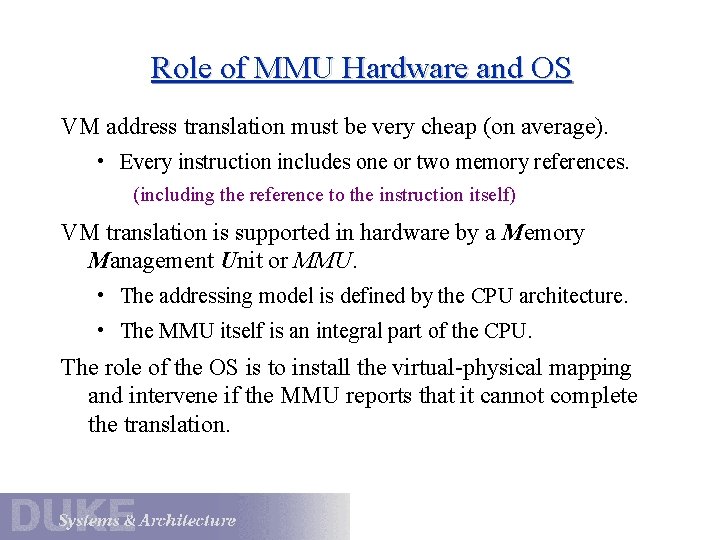

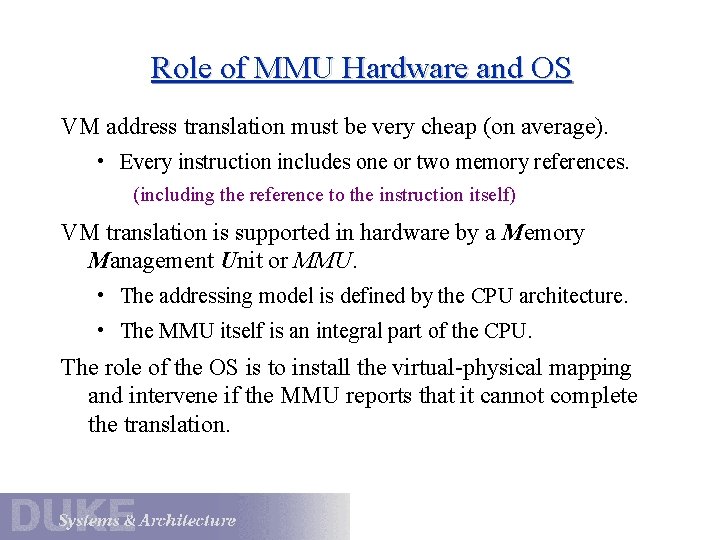

Role of MMU Hardware and OS

VM address translation must be very cheap (on average).
• Every instruction includes one or two memory references (including the reference to the instruction itself).

VM translation is supported in hardware by a Memory Management Unit or MMU.
• The addressing model is defined by the CPU architecture.
• The MMU itself is an integral part of the CPU.

The role of the OS is to install the virtual-physical mapping and intervene if the MMU reports that it cannot complete the translation.

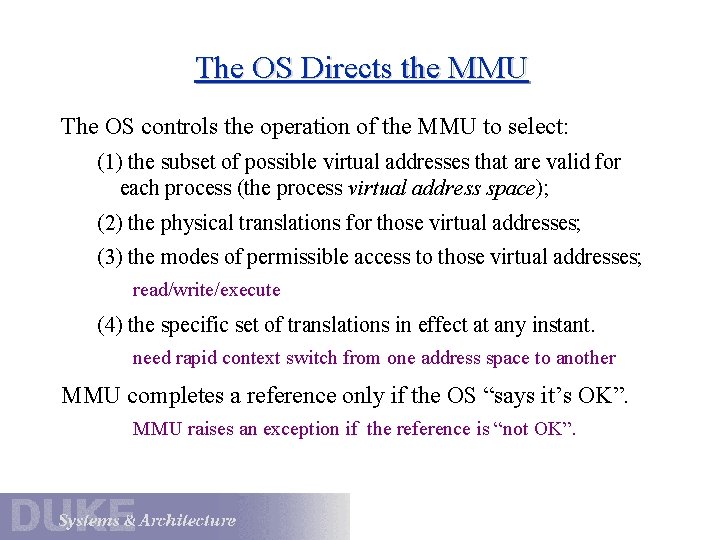

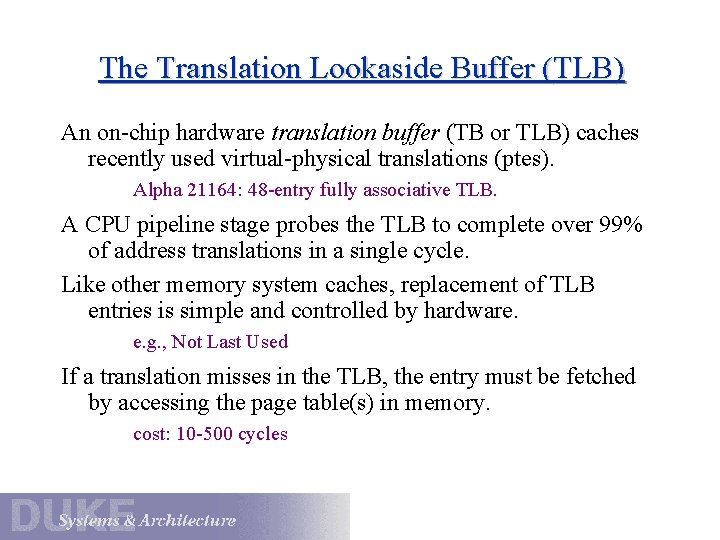

The OS Directs the MMU

The OS controls the operation of the MMU to select:
(1) the subset of possible virtual addresses that are valid for each process (the process virtual address space);
(2) the physical translations for those virtual addresses;
(3) the modes of permissible access to those virtual addresses (read/write/execute);
(4) the specific set of translations in effect at any instant (this needs a rapid context switch from one address space to another).

The MMU completes a reference only if the OS “says it’s OK”. The MMU raises an exception if the reference is “not OK”.

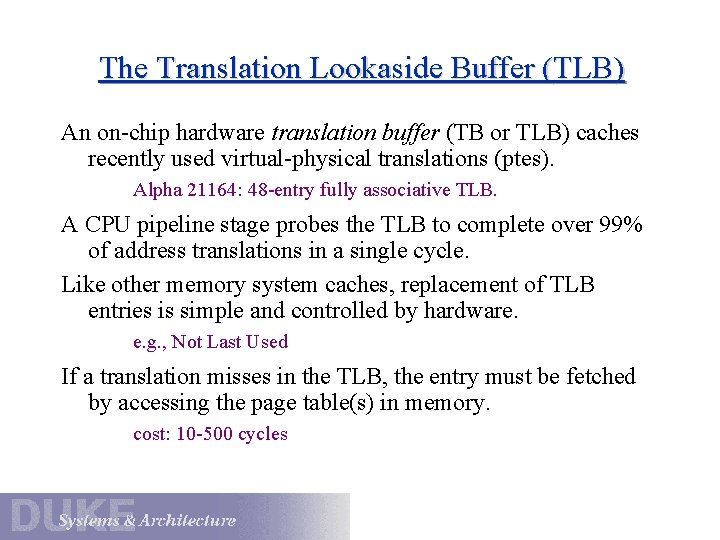

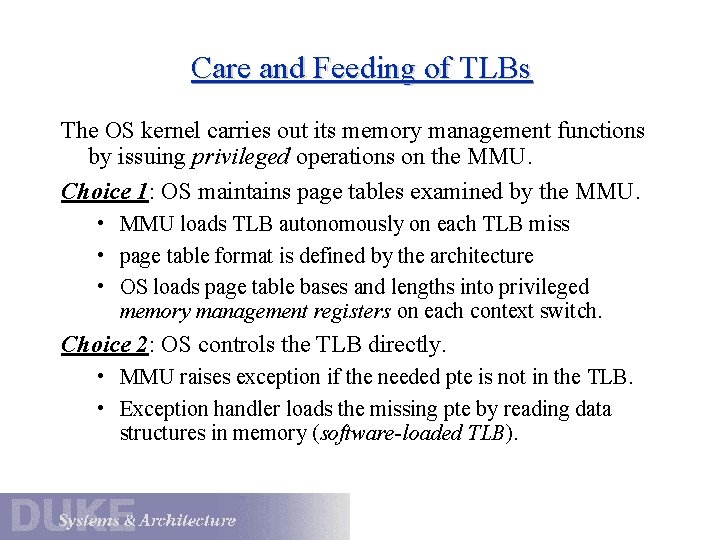

The Translation Lookaside Buffer (TLB)

An on-chip hardware translation buffer (TB or TLB) caches recently used virtual-physical translations (ptes).
• Alpha 21164: 48-entry fully associative TLB.

A CPU pipeline stage probes the TLB to complete over 99% of address translations in a single cycle.

Like other memory system caches, replacement of TLB entries is simple and controlled by hardware (e.g., Not Last Used).

If a translation misses in the TLB, the entry must be fetched by accessing the page table(s) in memory.
• Cost: 10-500 cycles.
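To see why a high hit rate matters, here is a rough back-of-the-envelope sketch of the average translation cost. The simple weighted-average model and the function name are assumptions made for illustration, not a description of any particular CPU.

```python
def avg_translation_cycles(hit_rate, hit_cycles=1, miss_cycles=100):
    """Average cycles per translation under a simple weighted-average model.

    miss_cycles is the total cost of a translation that misses in the TLB
    (an assumed figure inside the 10-500 cycle range quoted on the slide).
    """
    return hit_rate * hit_cycles + (1.0 - hit_rate) * miss_cycles
```

With these assumed numbers, even a 1% miss rate roughly doubles the average cost compared with a TLB that always hits.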

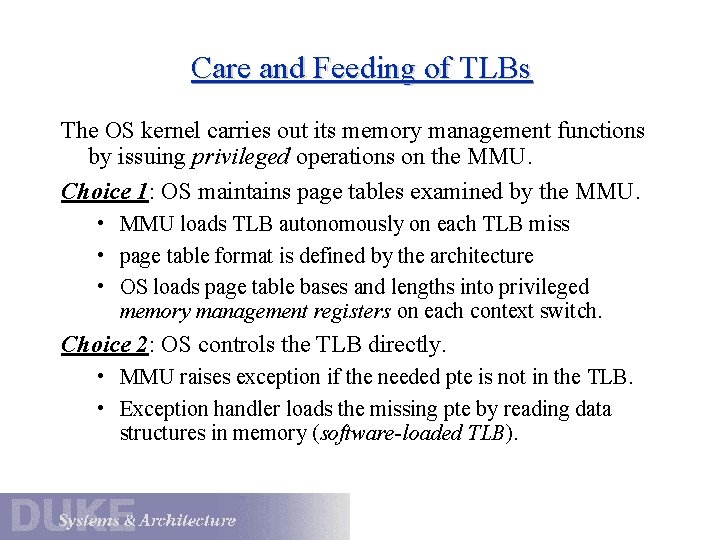

Care and Feeding of TLBs

The OS kernel carries out its memory management functions by issuing privileged operations on the MMU.

Choice 1: the OS maintains page tables examined by the MMU.
• The MMU loads the TLB autonomously on each TLB miss.
• The page table format is defined by the architecture.
• The OS loads page table bases and lengths into privileged memory management registers on each context switch.

Choice 2: the OS controls the TLB directly.
• The MMU raises an exception if the needed pte is not in the TLB.
• The exception handler loads the missing pte by reading data structures in memory (software-loaded TLB).

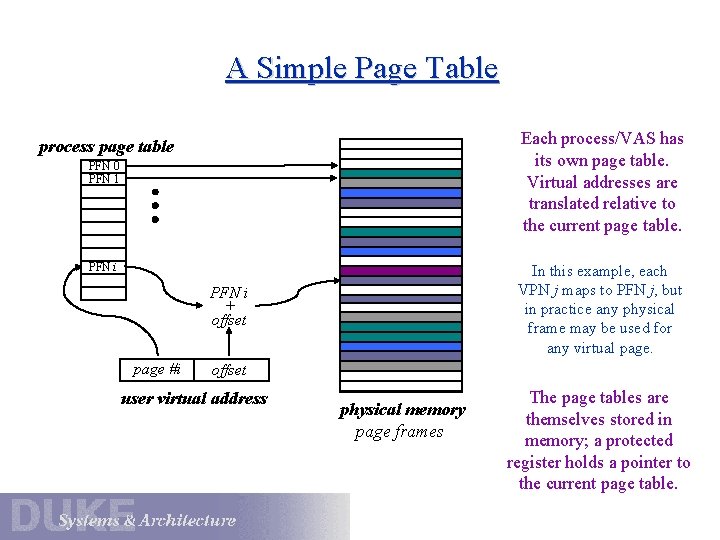

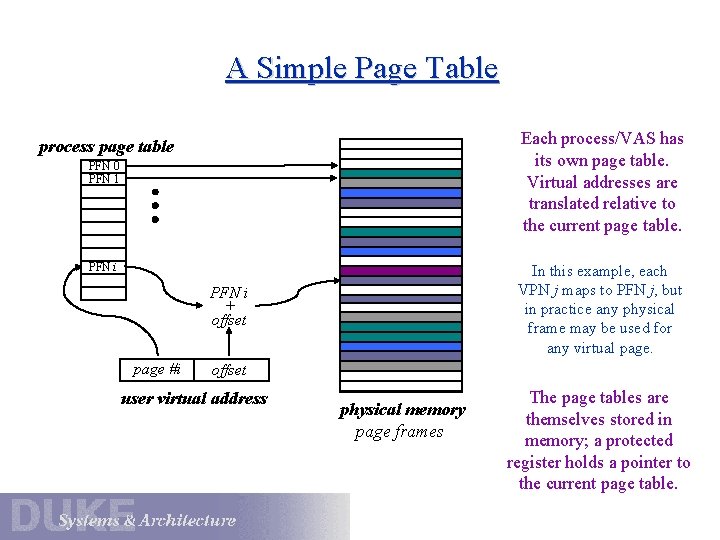

A Simple Page Table

• Each process/VAS has its own page table. Virtual addresses are translated relative to the current page table.
• Page number #i of a user virtual address indexes the process page table to find PFN i; the physical address is PFN i + offset.
• In this example, each VPN j maps to PFN j, but in practice any physical frame may be used for any virtual page.
• The page tables are themselves stored in memory; a protected register holds a pointer to the current page table.

[Figure: process page table (PFN 0, PFN 1, ..., PFN i) mapping a user virtual address onto physical memory page frames.]
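A single-level lookup can be sketched as follows. This is a toy model: the page table is a Python list indexed by VPN, `None` stands for an invalid entry, and the `PageFault` exception and 8 KB page size are assumptions carried over from the earlier example.

```python
PAGE_SHIFT = 13                          # assumed 8 KB pages
OFFSET_MASK = (1 << PAGE_SHIFT) - 1

class PageFault(Exception):
    """Raised when no valid translation exists (hypothetical name)."""

def translate(page_table, vaddr):
    """Translate vaddr using a linear page table: index VPN -> PFN or None."""
    vpn, offset = vaddr >> PAGE_SHIFT, vaddr & OFFSET_MASK
    pfn = page_table[vpn] if vpn < len(page_table) else None
    if pfn is None:
        raise PageFault(hex(vaddr))      # the OS would take over here
    return (pfn << PAGE_SHIFT) | offset
```

Any frame can back any page: the list `[5, None, 9]` maps page 0 to frame 5 and page 2 to frame 9, and leaves page 1 unmapped.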

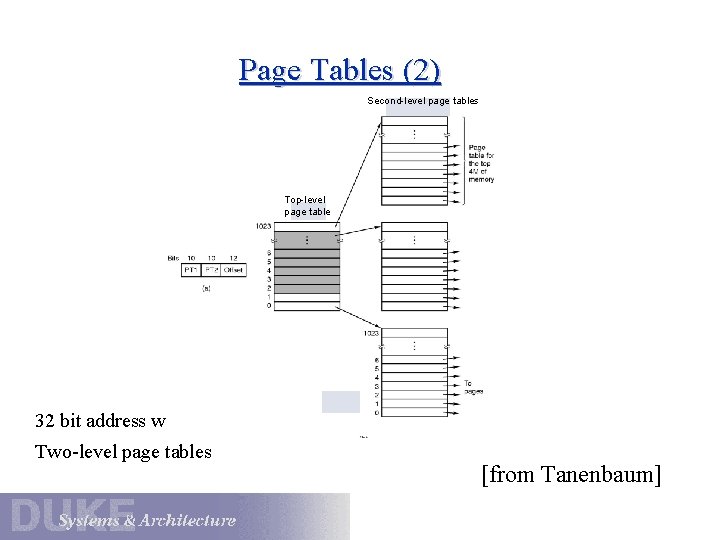

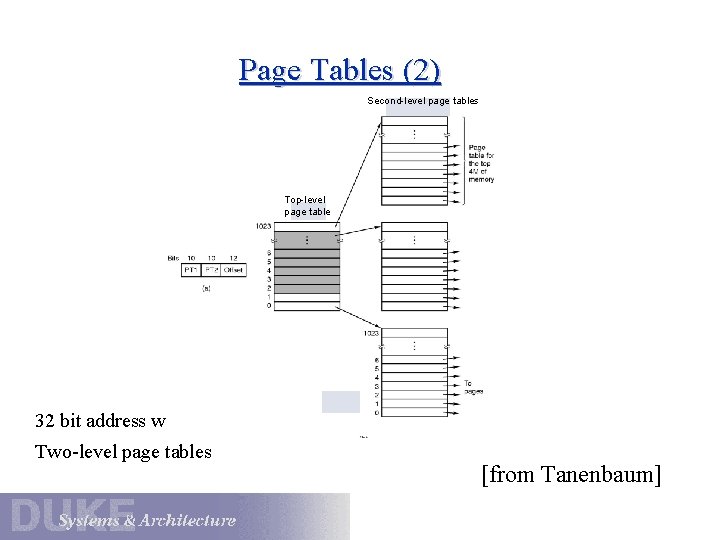

Page Tables (2)

Two-level page tables [from Tanenbaum]: a 32-bit address with two page table fields, one indexing the top-level page table and one indexing a second-level page table.
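A two-level walk can be sketched like this. The slide does not state the field widths; the 10/10/12 split used here is an assumption (it is the split commonly used in textbook examples), and the nested dictionaries are just a convenient stand-in for real page table pages.

```python
def split_two_level(vaddr):
    """Split a 32-bit address into (PT1 index, PT2 index, offset), assuming 10/10/12."""
    pt1 = (vaddr >> 22) & 0x3FF
    pt2 = (vaddr >> 12) & 0x3FF
    offset = vaddr & 0xFFF
    return pt1, pt2, offset

def walk(top_level, vaddr):
    """Return the physical address, or None if either level has no entry."""
    pt1, pt2, offset = split_two_level(vaddr)
    second_level = top_level.get(pt1)
    if second_level is None or pt2 not in second_level:
        return None
    return (second_level[pt2] << 12) | offset
```

Only the second-level tables that are actually in use need to exist, which is the point of the hierarchy for sparse address spaces.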

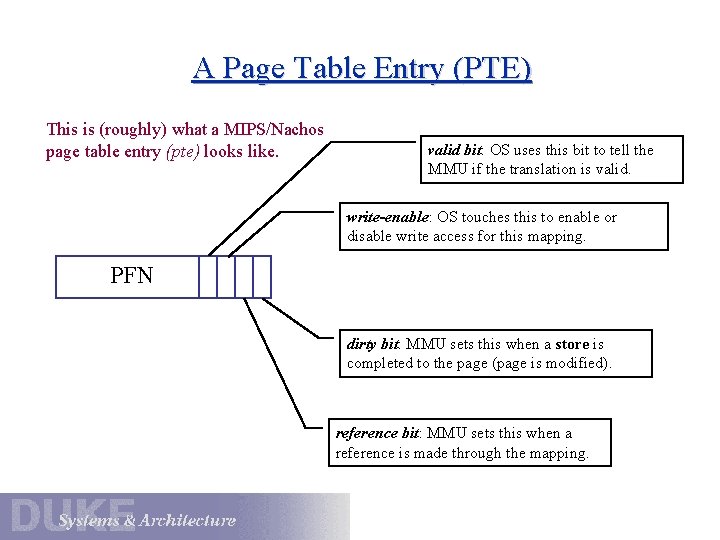

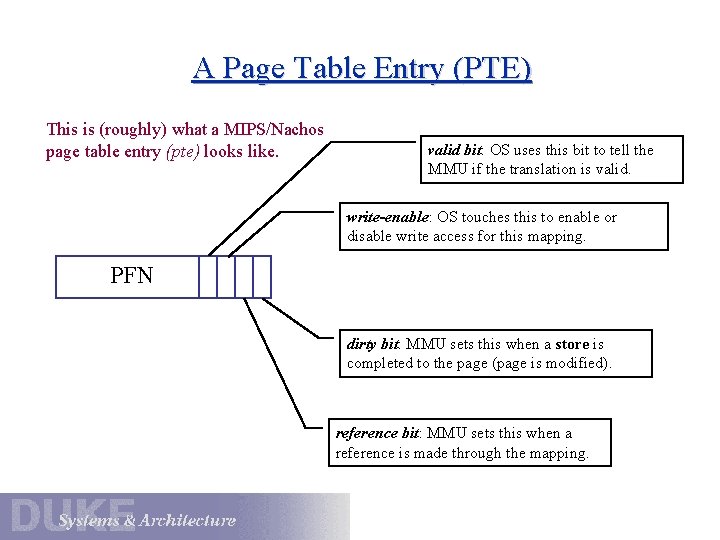

A Page Table Entry (PTE)

This is (roughly) what a MIPS/Nachos page table entry (pte) looks like.
• PFN: the physical page frame number for this mapping.
• valid bit: the OS uses this bit to tell the MMU if the translation is valid.
• write-enable: the OS touches this to enable or disable write access for this mapping.
• dirty bit: the MMU sets this when a store is completed to the page (page is modified).
• reference bit: the MMU sets this when a reference is made through the mapping.
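The division of labor between OS-controlled and MMU-set bits can be sketched as below. The bit positions and helper names are hypothetical and chosen only for illustration; they are not the actual MIPS or Nachos layout.

```python
VALID, WRITE, DIRTY, REF = 0x1, 0x2, 0x4, 0x8    # hypothetical bit positions
FLAG_BITS = 4

def make_pte(pfn, flags=0):
    """OS side: build a pte from a frame number and flag bits."""
    return (pfn << FLAG_BITS) | flags

def pfn_of(pte):
    return pte >> FLAG_BITS

def mmu_load(pte):
    """MMU side: a load through the mapping sets the reference bit."""
    if not pte & VALID:
        raise LookupError("page fault: invalid translation")
    return pte | REF

def mmu_store(pte):
    """MMU side: a store sets both the reference and dirty bits."""
    if not pte & VALID:
        raise LookupError("page fault: invalid translation")
    if not pte & WRITE:
        raise PermissionError("protection fault: write not enabled")
    return pte | REF | DIRTY
```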

![Page Tables 3 Typical page table entry from Tanenbaum Page Tables (3) Typical page table entry [from Tanenbaum]](https://slidetodoc.com/presentation_image_h2/11eace10f821c7a18cd9db4a7d62bc4d/image-13.jpg)

Page Tables (3) Typical page table entry [from Tanenbaum]

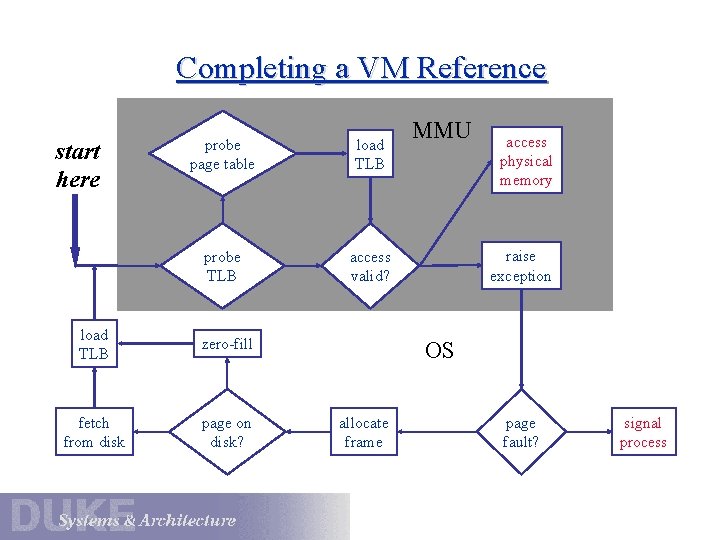

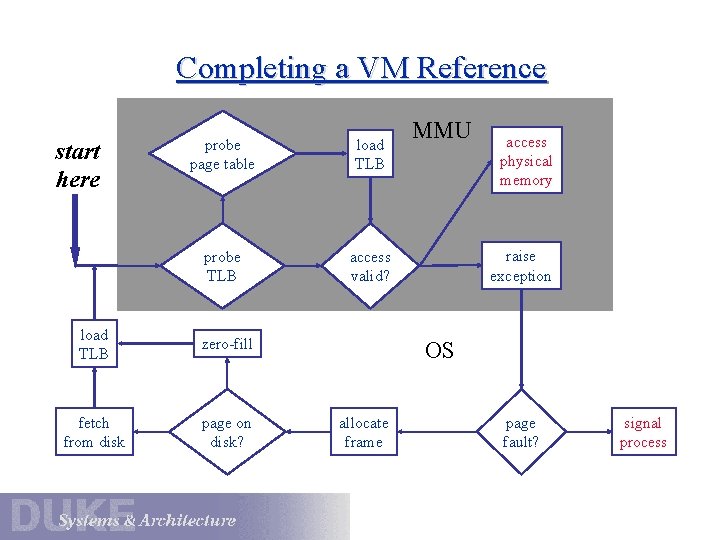

Completing a VM Reference

[Flowchart. MMU steps: start here, probe TLB, probe page table, load TLB, access valid?, access physical memory, raise exception. OS steps: page fault?, allocate frame, page on disk?, fetch from disk, zero-fill, load TLB, signal process.]
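One plausible reading of the flowchart is sketched below as a toy model. The class name, the path strings, and the exact ordering of the steps are assumptions; eviction is left out, so the sketch only works while free frames remain.

```python
class ToyVM:
    """Toy model of one address space (hypothetical; no eviction)."""

    def __init__(self, frames, valid_vpns, on_disk=()):
        self.tlb = {}                     # vpn -> pfn, cached translations
        self.page_table = {}              # vpn -> pfn, resident pages only
        self.valid = set(valid_vpns)      # pages the process may touch
        self.on_disk = set(on_disk)       # pages with a copy on backing store
        self.free = list(range(frames))   # free physical frames

    def reference(self, vpn):
        """Return (pfn, path), where path names the route taken."""
        if vpn in self.tlb:                        # probe TLB
            return self.tlb[vpn], "tlb hit"
        if vpn in self.page_table:                 # probe page table
            self.tlb[vpn] = self.page_table[vpn]   # load TLB
            return self.tlb[vpn], "tlb miss"
        # No valid translation: the MMU raises an exception to the OS.
        if vpn not in self.valid:
            raise MemoryError("signal process: invalid reference")
        pfn = self.free.pop(0)                     # allocate frame
        path = "fetch from disk" if vpn in self.on_disk else "zero-fill"
        self.page_table[vpn] = pfn
        self.tlb[vpn] = pfn                        # load TLB, then restart
        return pfn, path
```

The first touch of a page takes the slow OS path; later references are satisfied by the TLB or, after a TLB flush, by the page table.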

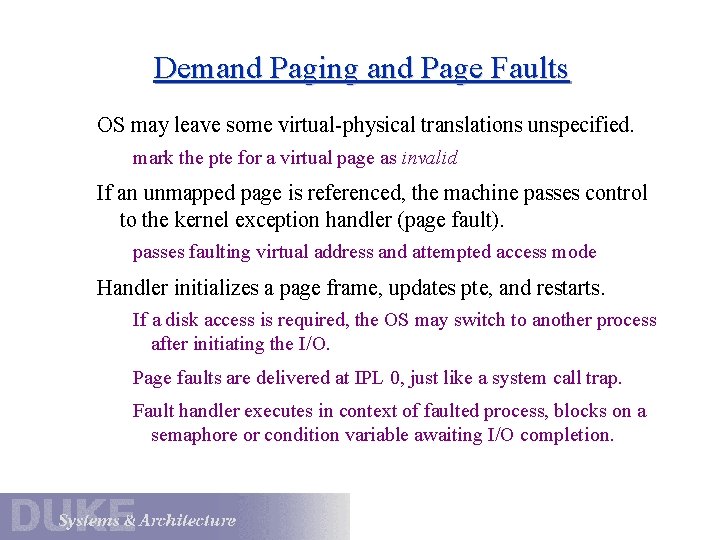

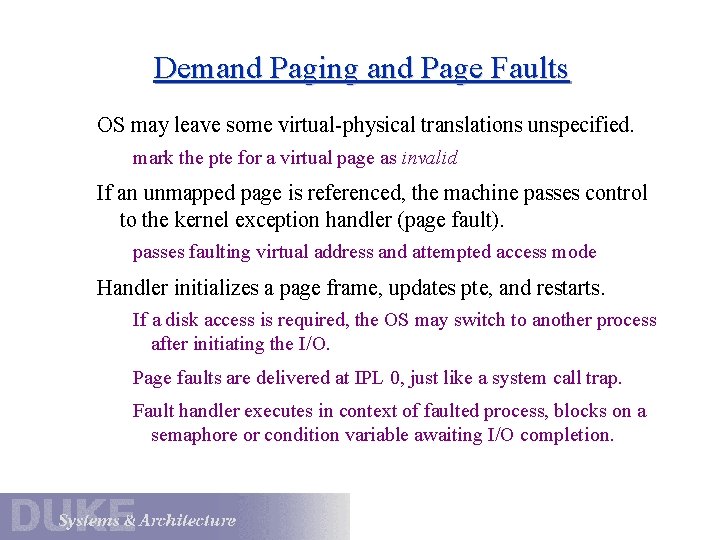

Demand Paging and Page Faults

The OS may leave some virtual-physical translations unspecified.
• It marks the pte for such a virtual page as invalid.

If an unmapped page is referenced, the machine passes control to the kernel exception handler (page fault).
• The machine passes the faulting virtual address and the attempted access mode.

The handler initializes a page frame, updates the pte, and restarts the faulting instruction.
• If a disk access is required, the OS may switch to another process after initiating the I/O.
• Page faults are delivered at IPL 0, just like a system call trap.
• The fault handler executes in the context of the faulted process, and blocks on a semaphore or condition variable awaiting I/O completion.

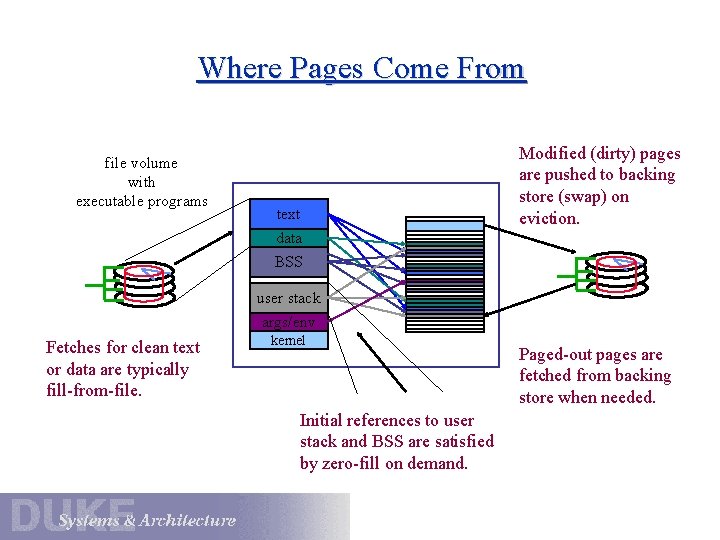

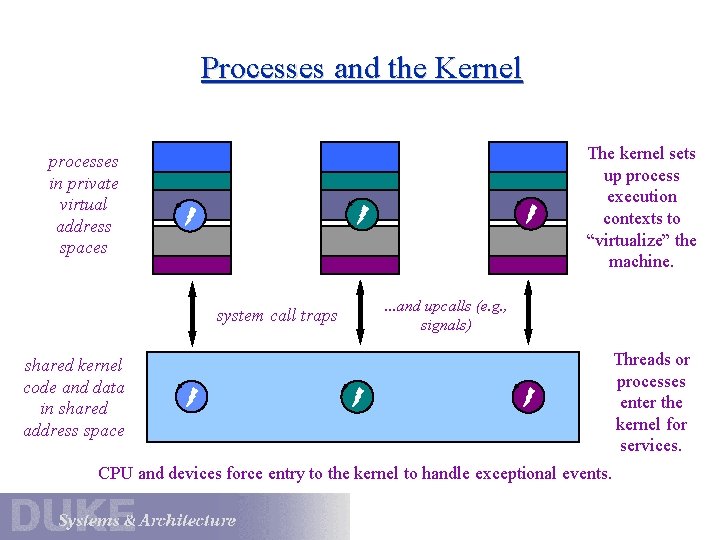

Where Pages Come From

• Fetches for clean text or data are typically fill-from-file (from the file volume with executable programs).
• Initial references to user stack and BSS are satisfied by zero-fill on demand.
• Modified (dirty) pages are pushed to backing store (swap) on eviction.
• Paged-out pages are fetched from backing store when needed.

[Figure: process segments (text, data, BSS, user stack, args/env, kernel) and their page sources.]

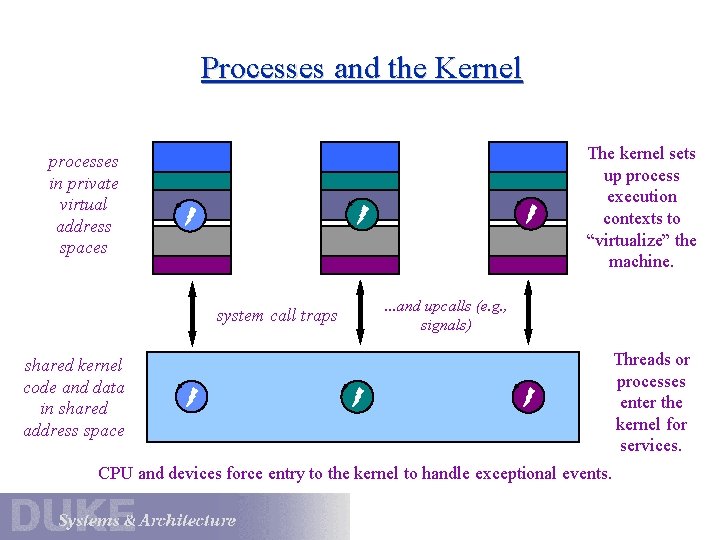

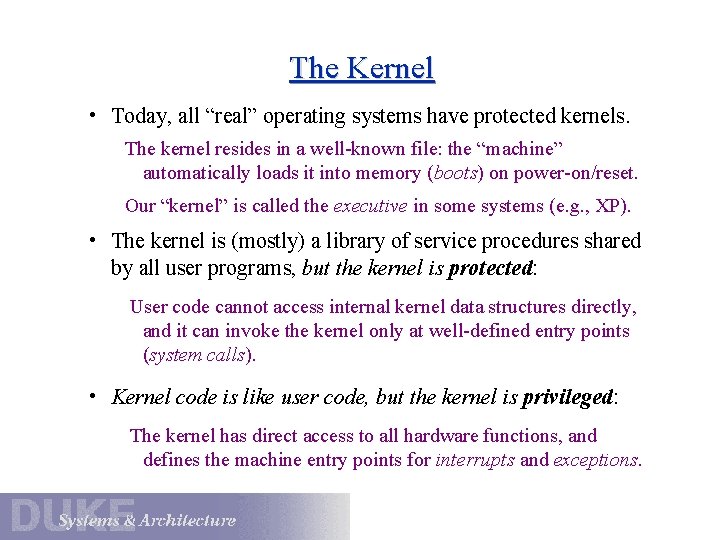

Processes and the Kernel

• Processes run in private virtual address spaces; kernel code and data are shared, in a shared address space.
• The kernel sets up process execution contexts to “virtualize” the machine.
• Threads or processes enter the kernel for services (system call traps), and the kernel may call back with upcalls (e.g., signals).
• The CPU and devices force entry to the kernel to handle exceptional events.

The Kernel

• Today, all “real” operating systems have protected kernels. The kernel resides in a well-known file: the “machine” automatically loads it into memory (boots) on power-on/reset. Our “kernel” is called the executive in some systems (e.g., XP).
• The kernel is (mostly) a library of service procedures shared by all user programs, but the kernel is protected: user code cannot access internal kernel data structures directly, and it can invoke the kernel only at well-defined entry points (system calls).
• Kernel code is like user code, but the kernel is privileged: the kernel has direct access to all hardware functions, and defines the machine entry points for interrupts and exceptions.

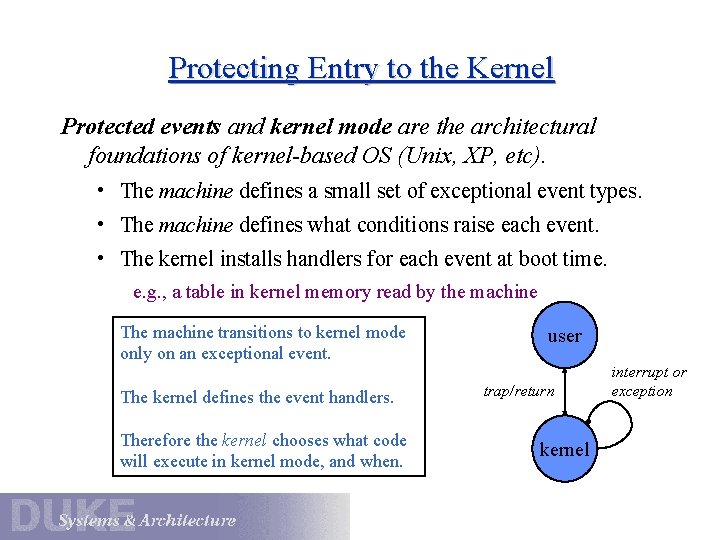

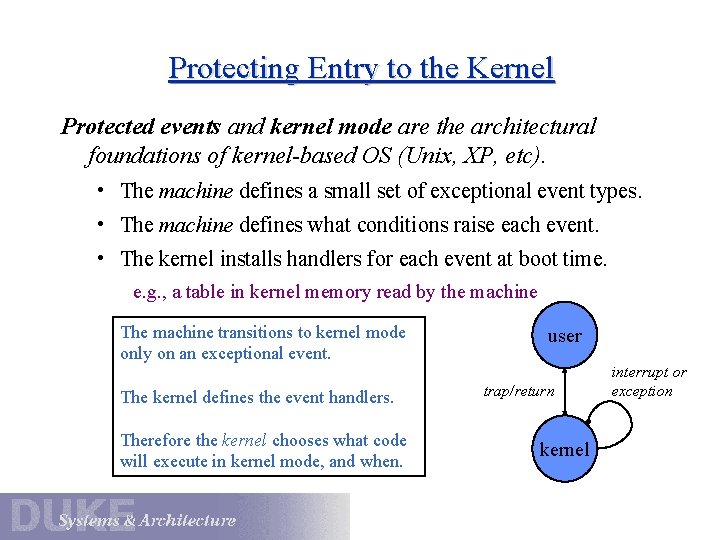

Protecting Entry to the Kernel

Protected events and kernel mode are the architectural foundations of kernel-based OS (Unix, XP, etc.).
• The machine defines a small set of exceptional event types.
• The machine defines what conditions raise each event.
• The kernel installs handlers for each event at boot time, e.g., in a table in kernel memory read by the machine.

The machine transitions to kernel mode only on an exceptional event. The kernel defines the event handlers. Therefore the kernel chooses what code will execute in kernel mode, and when.

[Figure: transitions between user and kernel mode: trap/return, interrupt or exception.]

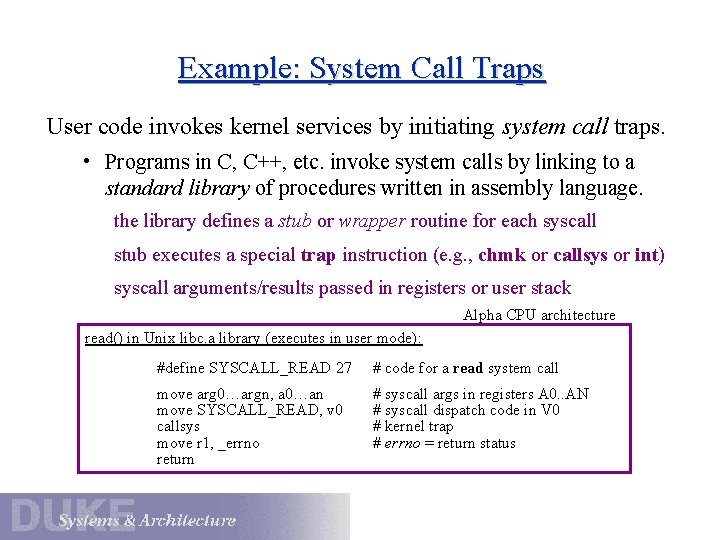

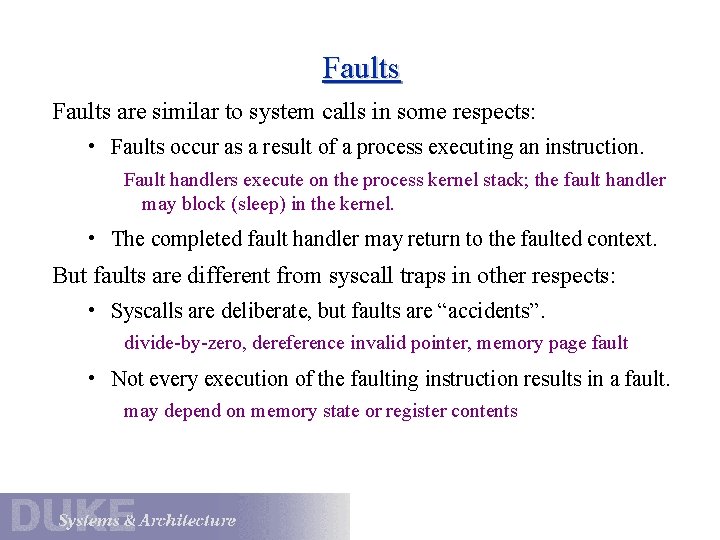

Example: System Call Traps

User code invokes kernel services by initiating system call traps.
• Programs in C, C++, etc. invoke system calls by linking to a standard library of procedures written in assembly language.
• The library defines a stub or wrapper routine for each syscall.
• The stub executes a special trap instruction (e.g., chmk or callsys or int).
• Syscall arguments/results are passed in registers or on the user stack.

Alpha CPU architecture: read() in the Unix libc.a library (executes in user mode):

    #define SYSCALL_READ 27      # code for a read system call

    move arg0…argn, a0…an        # syscall args in registers A0..AN
    move SYSCALL_READ, v0        # syscall dispatch code in V0
    callsys                      # kernel trap
    move r1, _errno              # errno = return status
    return

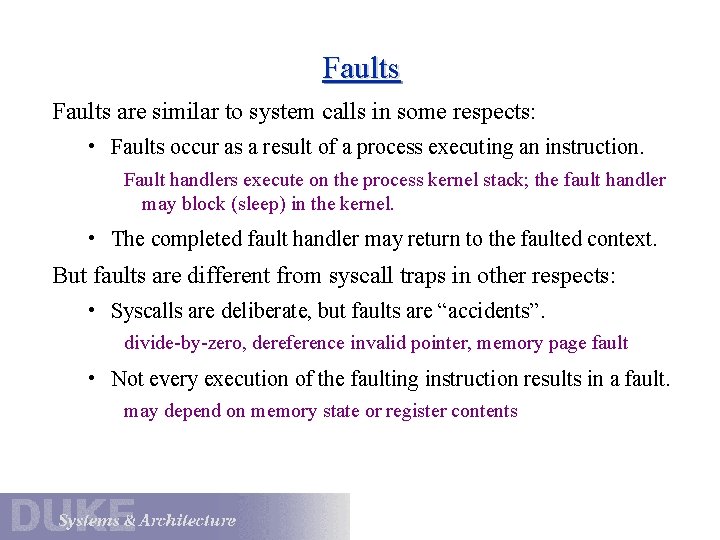

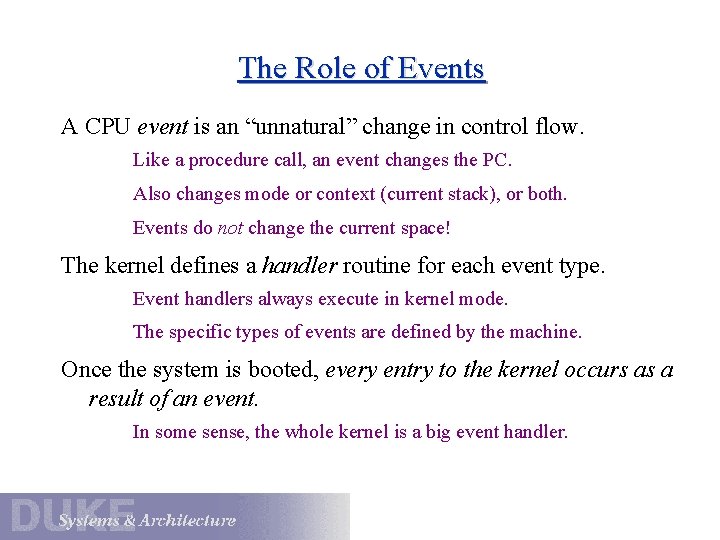

Faults are similar to system calls in some respects:
• Faults occur as a result of a process executing an instruction. Fault handlers execute on the process kernel stack; the fault handler may block (sleep) in the kernel.
• The completed fault handler may return to the faulted context.

But faults are different from syscall traps in other respects:
• Syscalls are deliberate, but faults are “accidents”: divide-by-zero, dereference of an invalid pointer, memory page fault.
• Not every execution of the faulting instruction results in a fault; it may depend on memory state or register contents.

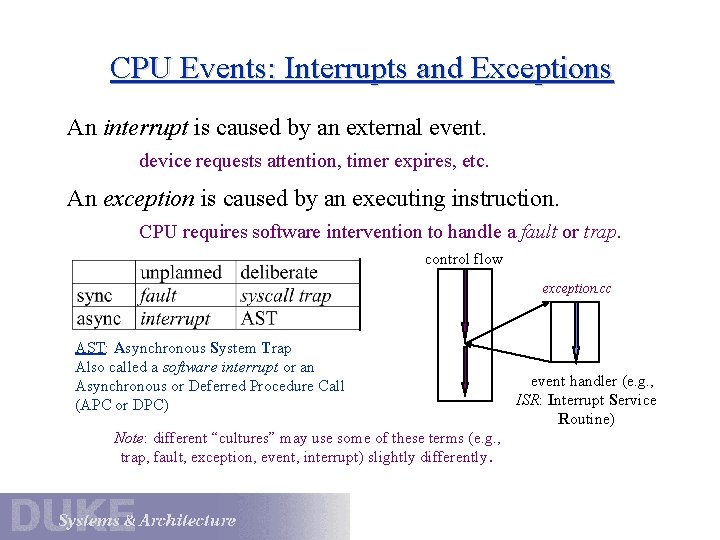

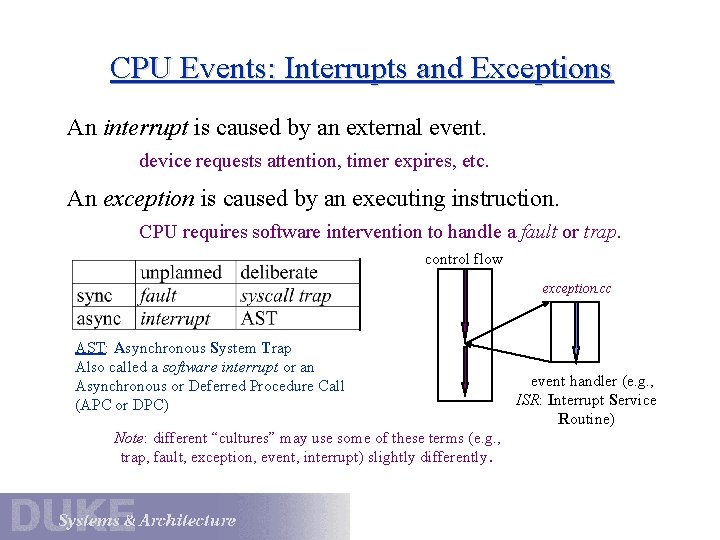

The Role of Events

A CPU event is an “unnatural” change in control flow.
• Like a procedure call, an event changes the PC.
• It also changes mode or context (current stack), or both.
• Events do not change the current space!

The kernel defines a handler routine for each event type.
• Event handlers always execute in kernel mode.
• The specific types of events are defined by the machine.

Once the system is booted, every entry to the kernel occurs as a result of an event. In some sense, the whole kernel is a big event handler.

CPU Events: Interrupts and Exceptions

• An interrupt is caused by an external event: a device requests attention, a timer expires, etc.
• An exception is caused by an executing instruction: the CPU requires software intervention to handle a fault or trap.
• AST (Asynchronous System Trap): also called a software interrupt or an Asynchronous or Deferred Procedure Call (APC or DPC).

Note: different “cultures” may use some of these terms (e.g., trap, fault, exception, event, interrupt) slightly differently.

[Figure: control flow diverted to an event handler (e.g., ISR: Interrupt Service Routine; exception.cc).]

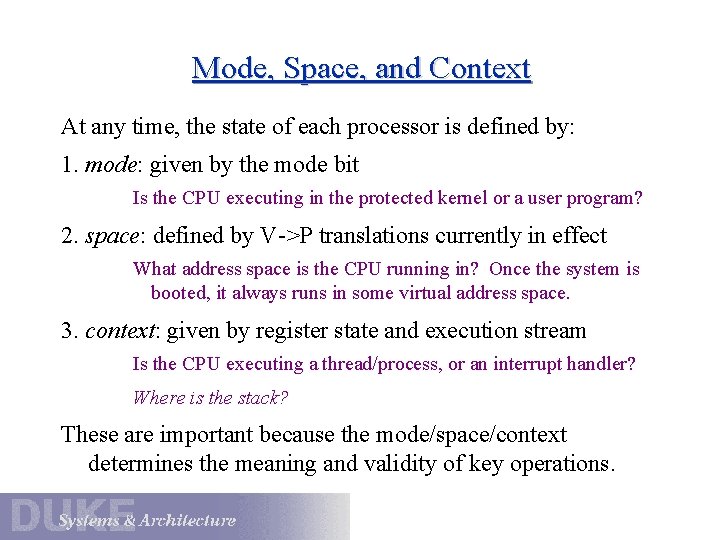

Mode, Space, and Context

At any time, the state of each processor is defined by:
1. mode: given by the mode bit. Is the CPU executing in the protected kernel or a user program?
2. space: defined by the V->P translations currently in effect. What address space is the CPU running in? Once the system is booted, it always runs in some virtual address space.
3. context: given by register state and execution stream. Is the CPU executing a thread/process, or an interrupt handler? Where is the stack?

These are important because the mode/space/context determines the meaning and validity of key operations.

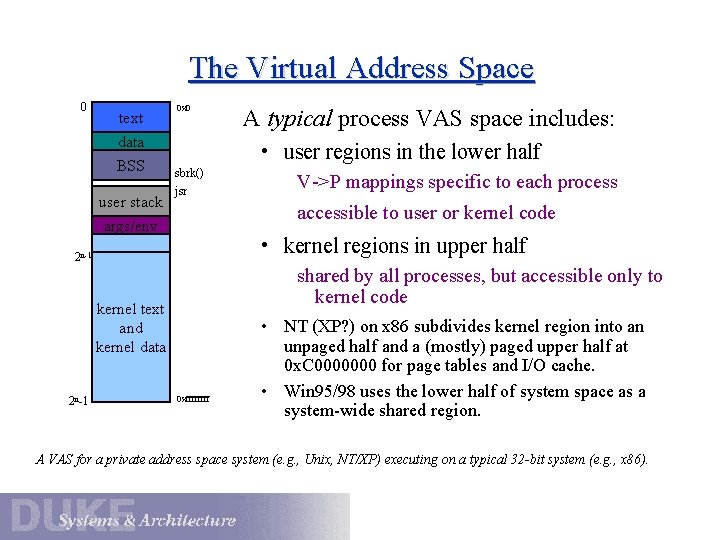

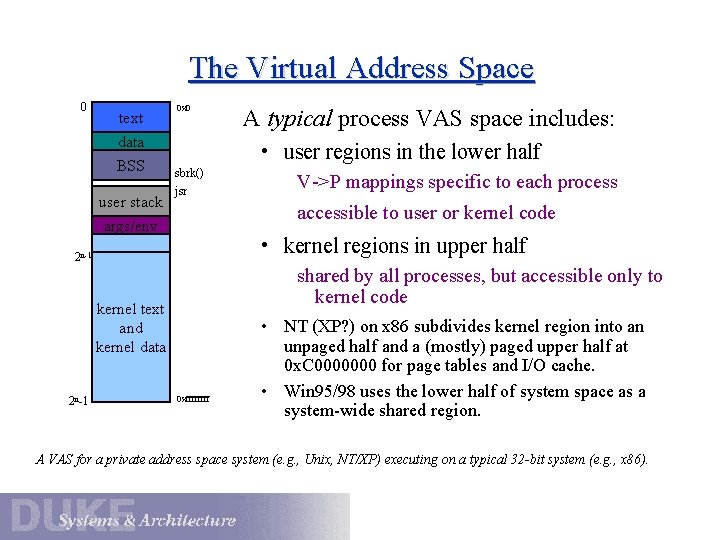

The Virtual Address Space

A typical process VAS includes:
• user regions in the lower half (from 0x0 up to 2^(n-1)): text, data, BSS, user stack, args/env. These V->P mappings are specific to each process and accessible to user or kernel code.
• kernel regions in the upper half (from 2^(n-1) up to 2^n - 1): kernel text and kernel data, shared by all processes but accessible only to kernel code.

Notes:
• NT (XP?) on x86 subdivides the kernel region into an unpaged half and a (mostly) paged upper half at 0xC0000000 for page tables and I/O cache.
• Win95/98 uses the lower half of system space as a system-wide shared region.

[Figure: a VAS for a private address space system (e.g., Unix, NT/XP) executing on a typical 32-bit system (e.g., x86); labels include sbrk() and jsr.]

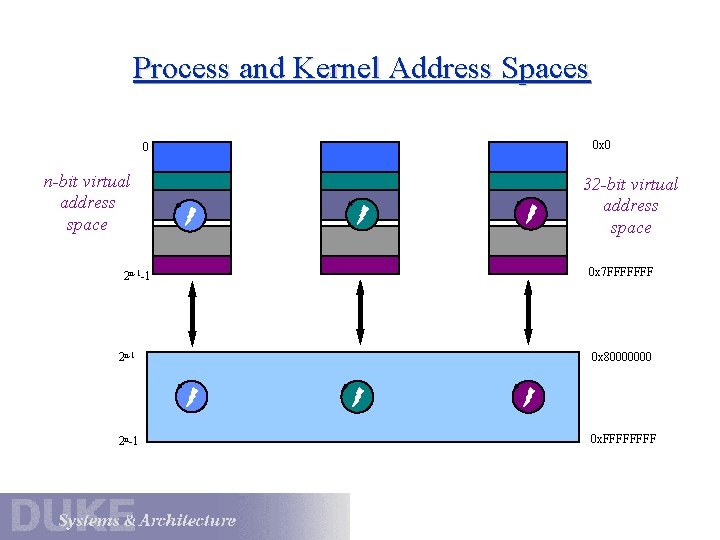

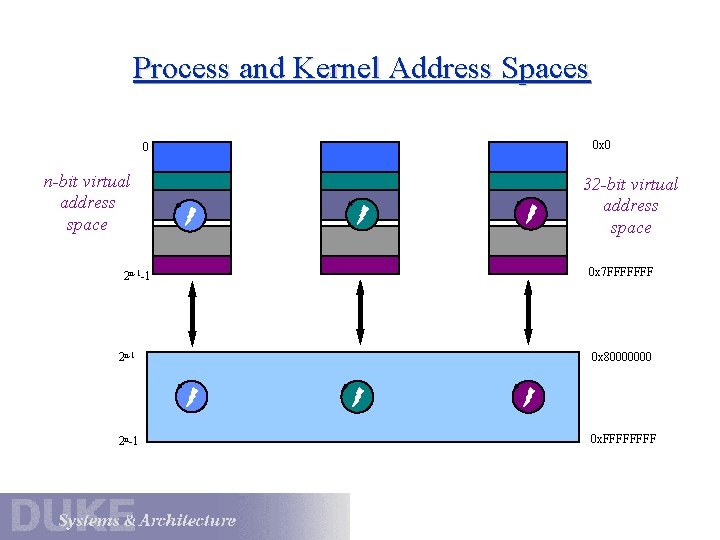

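Process and Kernel Address Spaces

[Figure: an n-bit virtual address space runs from 0 to 2^n - 1, with the lower half ending at 2^(n-1) - 1 and the upper half starting at 2^(n-1). In a 32-bit virtual address space the lower half is 0x0 to 0x7FFFFFFF and the upper half is 0x80000000 to 0xFFFFFFFF.]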

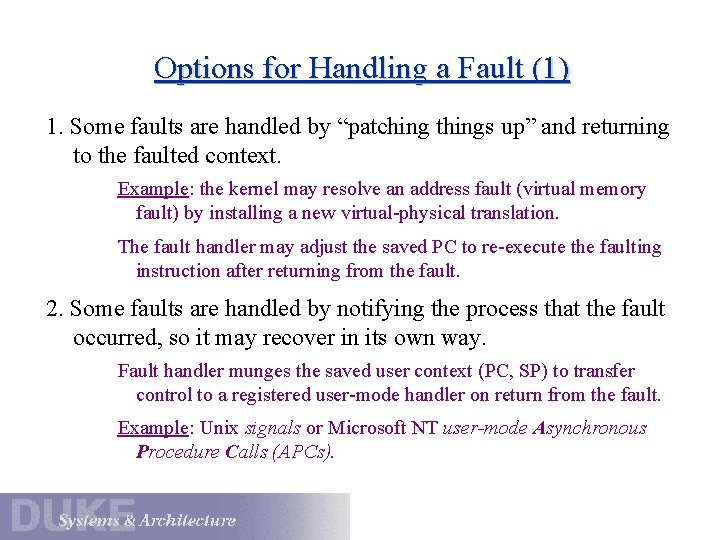

Options for Handling a Fault (1)

1. Some faults are handled by “patching things up” and returning to the faulted context.
• Example: the kernel may resolve an address fault (virtual memory fault) by installing a new virtual-physical translation.
• The fault handler may adjust the saved PC to re-execute the faulting instruction after returning from the fault.

2. Some faults are handled by notifying the process that the fault occurred, so it may recover in its own way.
• The fault handler munges the saved user context (PC, SP) to transfer control to a registered user-mode handler on return from the fault.
• Example: Unix signals or Microsoft NT user-mode Asynchronous Procedure Calls (APCs).

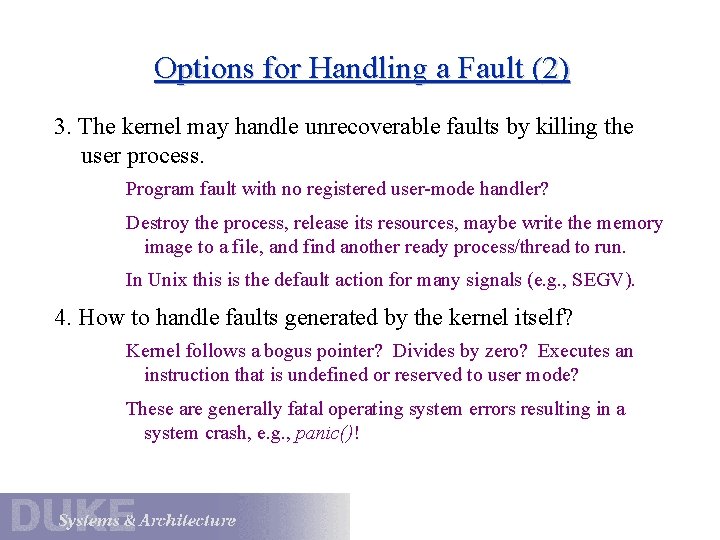

Options for Handling a Fault (2)

3. The kernel may handle unrecoverable faults by killing the user process.
• Program fault with no registered user-mode handler? Destroy the process, release its resources, maybe write the memory image to a file, and find another ready process/thread to run.
• In Unix this is the default action for many signals (e.g., SEGV).

4. How to handle faults generated by the kernel itself?
• Kernel follows a bogus pointer? Divides by zero? Executes an instruction that is undefined or reserved to user mode?
• These are generally fatal operating system errors resulting in a system crash, e.g., panic()!

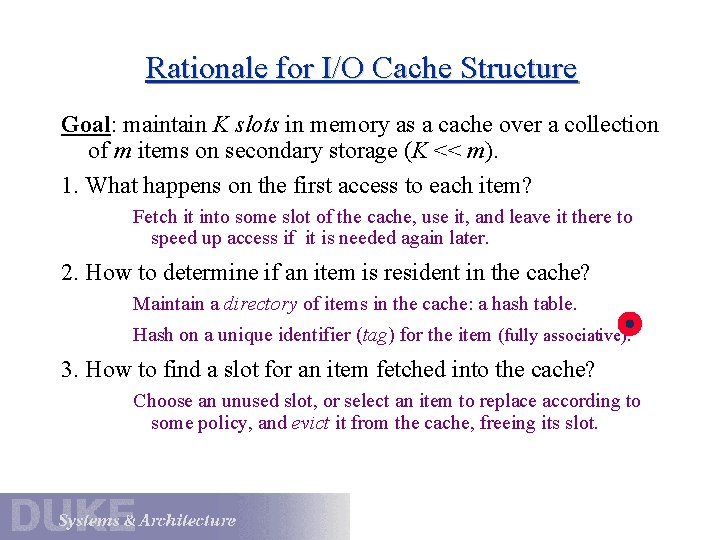

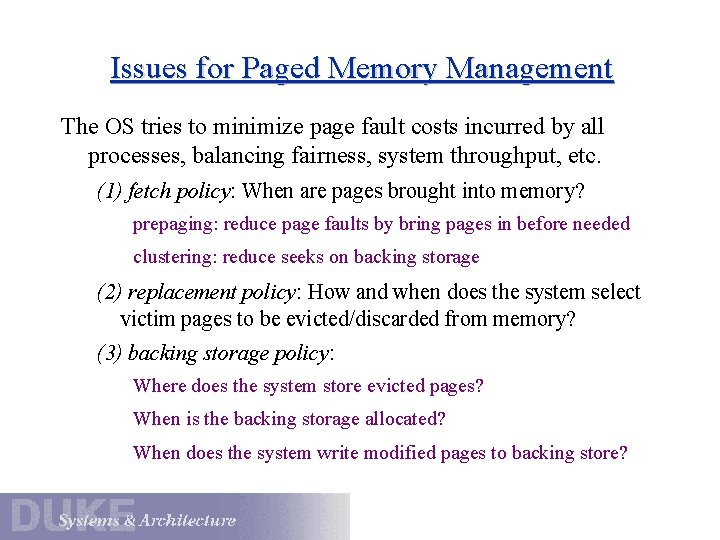

Issues for Paged Memory Management

The OS tries to minimize page fault costs incurred by all processes, balancing fairness, system throughput, etc.

(1) fetch policy: When are pages brought into memory?
• prepaging: reduce page faults by bringing pages in before they are needed.
• clustering: reduce seeks on backing storage.

(2) replacement policy: How and when does the system select victim pages to be evicted/discarded from memory?

(3) backing storage policy: Where does the system store evicted pages? When is the backing storage allocated? When does the system write modified pages to backing store?

Virtual Memory as a Cache

[Figure: executable file (header, text, idata, wdata, symbol table, etc.) supplies program sections to process segments in a big virtual memory (text, data, BSS, user stack, args/env, kernel); virtual-to-physical translations map these to physical page frames in a small physical memory; page fetch and pageout/eviction move pages between physical memory and backing storage.]

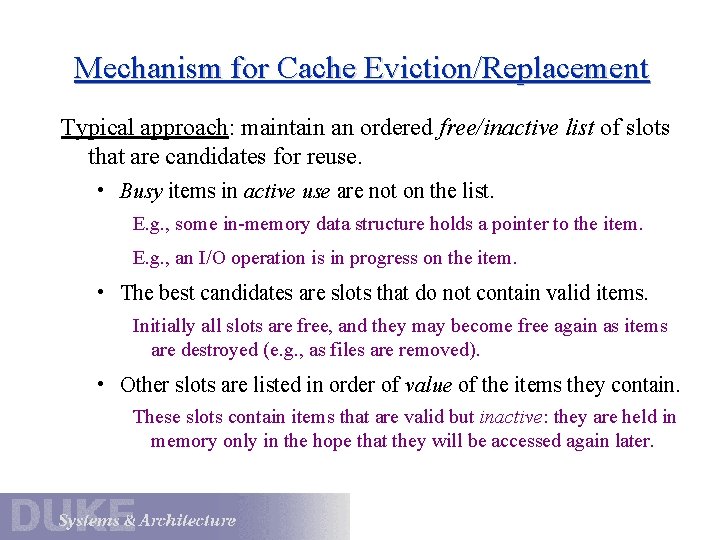

Rationale for I/O Cache Structure

Goal: maintain K slots in memory as a cache over a collection of m items on secondary storage (K << m).

1. What happens on the first access to each item? Fetch it into some slot of the cache, use it, and leave it there to speed up access if it is needed again later.
2. How to determine if an item is resident in the cache? Maintain a directory of items in the cache: a hash table. Hash on a unique identifier (tag) for the item (fully associative).
3. How to find a slot for an item fetched into the cache? Choose an unused slot, or select an item to replace according to some policy, and evict it from the cache, freeing its slot.

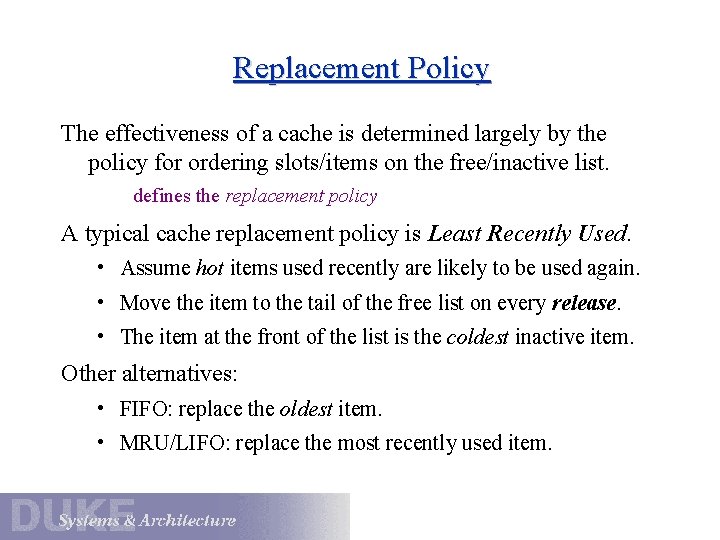

Mechanism for Cache Eviction/Replacement

Typical approach: maintain an ordered free/inactive list of slots that are candidates for reuse.
• Busy items in active use are not on the list. E.g., some in-memory data structure holds a pointer to the item, or an I/O operation is in progress on the item.
• The best candidates are slots that do not contain valid items. Initially all slots are free, and they may become free again as items are destroyed (e.g., as files are removed).
• Other slots are listed in order of value of the items they contain. These slots contain items that are valid but inactive: they are held in memory only in the hope that they will be accessed again later.

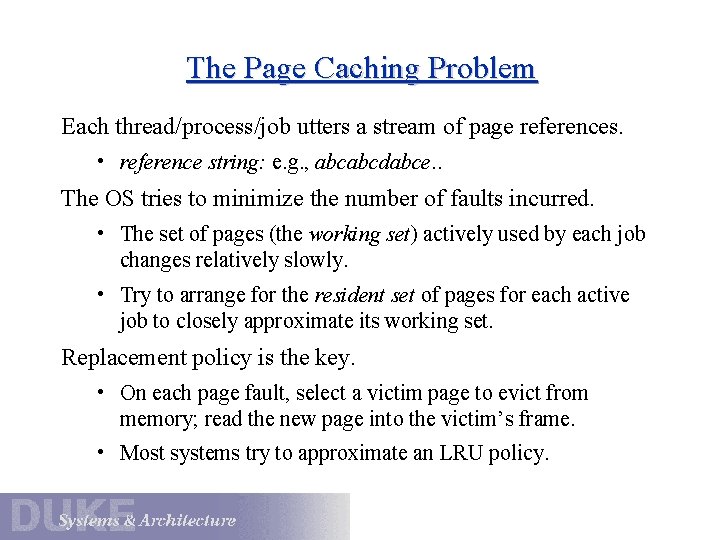

Replacement Policy

The effectiveness of a cache is determined largely by the policy for ordering slots/items on the free/inactive list; this ordering defines the replacement policy.

A typical cache replacement policy is Least Recently Used (LRU).
• Assume hot items used recently are likely to be used again.
• Move the item to the tail of the free list on every release.
• The item at the front of the list is the coldest inactive item.

Other alternatives:
• FIFO: replace the oldest item.
• MRU/LIFO: replace the most recently used item.

The Page Caching Problem

Each thread/process/job utters a stream of page references.
• reference string: e.g., abcabcdabce...

The OS tries to minimize the number of faults incurred.
• The set of pages (the working set) actively used by each job changes relatively slowly.
• Try to arrange for the resident set of pages for each active job to closely approximate its working set.

Replacement policy is the key.
• On each page fault, select a victim page to evict from memory; read the new page into the victim’s frame.
• Most systems try to approximate an LRU policy.
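Counting faults for a reference string under different policies is easy to sketch. The two functions below are a minimal illustration of exact LRU and FIFO over a fixed number of frames; the function names are made up, and real systems only approximate LRU.

```python
from collections import OrderedDict, deque

def lru_faults(refs, frames):
    """Count page faults under exact LRU replacement."""
    resident = OrderedDict()              # least recently used page first
    faults = 0
    for page in refs:
        if page in resident:
            resident.move_to_end(page)    # mark as most recently used
        else:
            faults += 1
            if len(resident) == frames:
                resident.popitem(last=False)   # evict the coldest page
            resident[page] = True
    return faults

def fifo_faults(refs, frames):
    """Count page faults under FIFO replacement."""
    resident, queue, faults = set(), deque(), 0
    for page in refs:
        if page not in resident:
            faults += 1
            if len(resident) == frames:
                resident.discard(queue.popleft())   # evict the oldest page
            resident.add(page)
            queue.append(page)
    return faults
```

For the slide's reference string, `lru_faults("abcabcdabce", 3)` and `lru_faults("abcabcdabce", 4)` can be compared to see how the fault count depends on whether the resident set can hold the working set.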

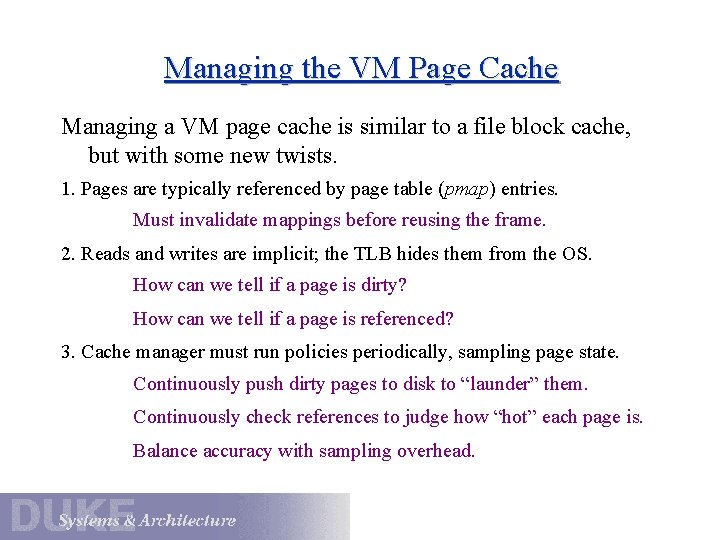

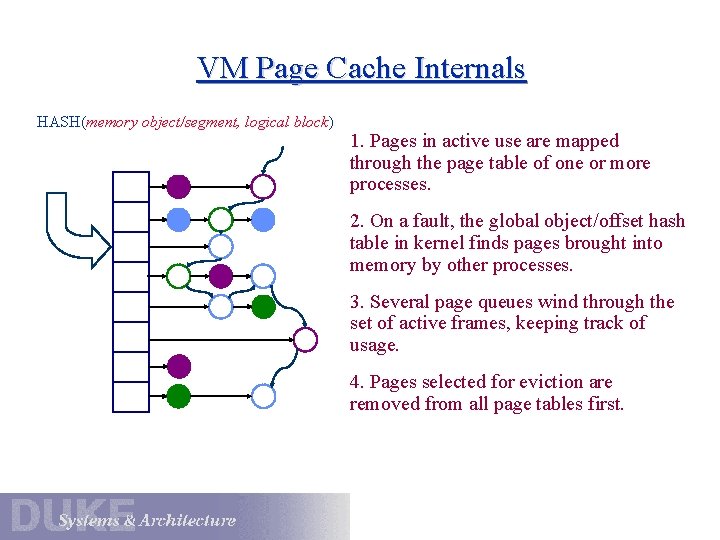

VM Page Cache Internals

HASH(memory object/segment, logical block)

1. Pages in active use are mapped through the page table of one or more processes.
2. On a fault, the global object/offset hash table in the kernel finds pages brought into memory by other processes.
3. Several page queues wind through the set of active frames, keeping track of usage.
4. Pages selected for eviction are removed from all page tables first.

Managing the VM Page Cache

Managing a VM page cache is similar to a file block cache, but with some new twists.

1. Pages are typically referenced by page table (pmap) entries. Must invalidate mappings before reusing the frame.
2. Reads and writes are implicit; the TLB hides them from the OS. How can we tell if a page is dirty? How can we tell if a page is referenced?
3. The cache manager must run policies periodically, sampling page state. Continuously push dirty pages to disk to “launder” them. Continuously check references to judge how “hot” each page is. Balance accuracy with sampling overhead.

The Paging Daemon

Most OS have one or more system processes responsible for implementing the VM page cache replacement policy.
• A daemon is an autonomous system process that periodically performs some housekeeping task.

The paging daemon prepares for page eviction before the need arises.
• Wake up when free memory becomes low.
• Clean dirty pages by pushing them to backing store (prewrite or pageout).
• Maintain ordered lists of eviction candidates.
• Decide how much memory to allocate to file cache, VM, etc.

LRU Approximations for Paging

Pure LRU and LFU are prohibitively expensive to implement.
• Most references are hidden by the TLB.
• The OS typically sees less than 10% of all references.
• You can’t tweak your ordered page list on every reference.

Most systems rely on an approximation to LRU for paging.
• Periodically sample the reference bit on each page: visit the page and set its reference bit to zero, run the process for a while (the reference window), then come back and check the bit again.
• Reorder the list of eviction candidates based on sampling.
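The sampling idea can be sketched with a simple aging counter. This is one of several possible schemes, not necessarily the one any particular system uses; the `sample` function and its data layout are assumptions for illustration.

```python
def sample(ref_bits, ages):
    """One sampling pass over all pages.

    ref_bits maps page -> reference bit (0 or 1) as set by the MMU since the
    last pass; ages maps page -> number of consecutive passes without a
    reference. Returns the pages ordered coldest first.
    """
    for page, referenced in ref_bits.items():
        ages[page] = 0 if referenced else ages.get(page, 0) + 1
        ref_bits[page] = 0                # clear the bit for the next window
    return sorted(ref_bits, key=lambda page: ages[page], reverse=True)
```

Each call stands for one visit by the paging daemon; between calls the "process" would run and the MMU would set bits for the pages it touches.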

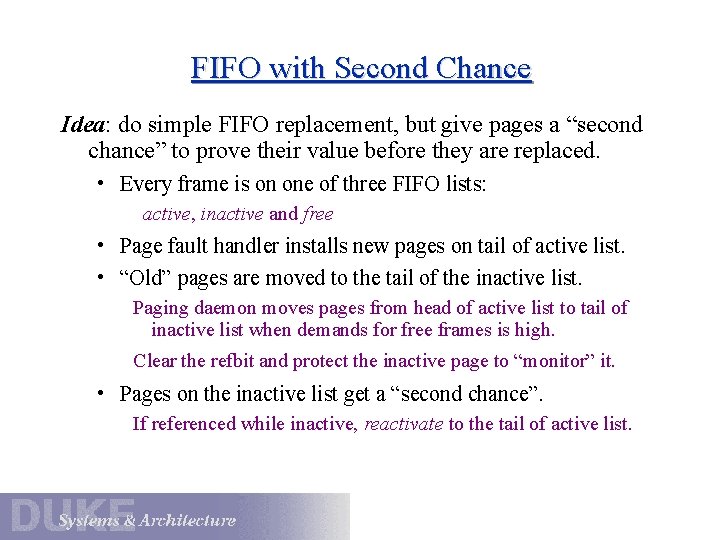

FIFO with Second Chance

Idea: do simple FIFO replacement, but give pages a “second chance” to prove their value before they are replaced.
• Every frame is on one of three FIFO lists: active, inactive, and free.
• The page fault handler installs new pages on the tail of the active list.
• “Old” pages are moved to the tail of the inactive list. The paging daemon moves pages from the head of the active list to the tail of the inactive list when demand for free frames is high. It clears the refbit and protects the inactive page to “monitor” it.
• Pages on the inactive list get a “second chance”. If referenced while inactive, they are reactivated to the tail of the active list.

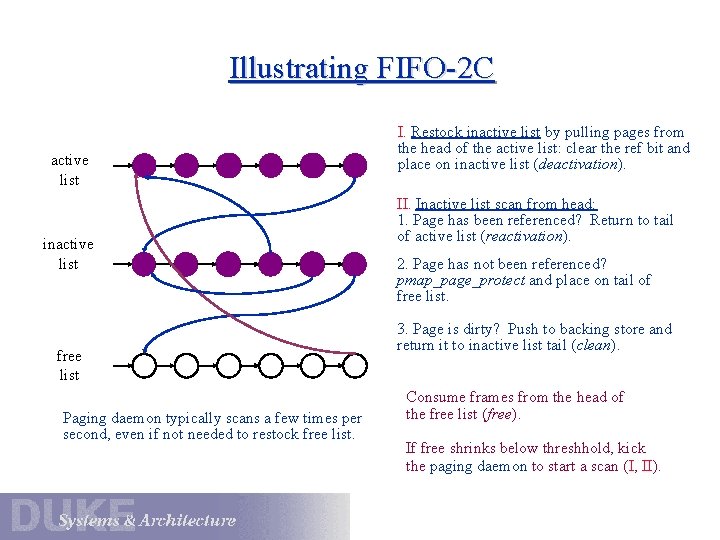

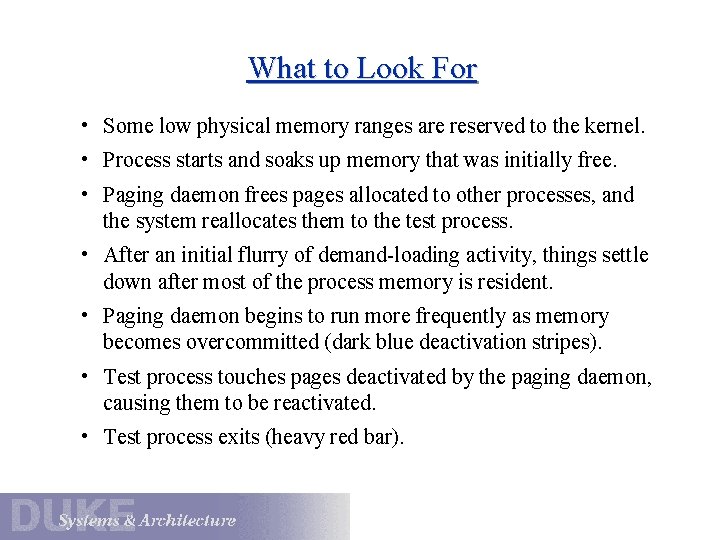

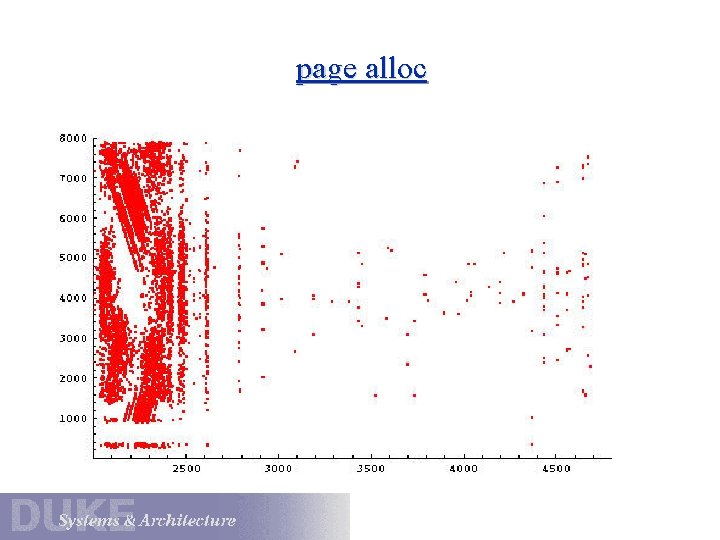

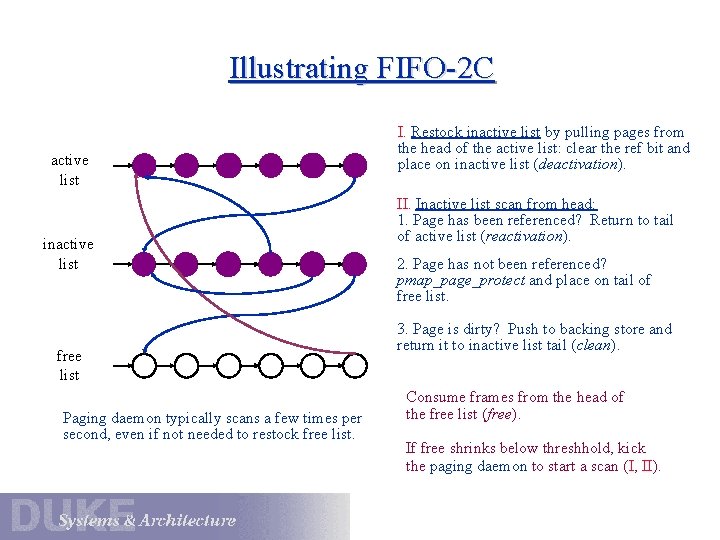

Illustrating FIFO-2C

The paging daemon typically scans a few times per second, even if not needed to restock the free list.

I. Restock the inactive list by pulling pages from the head of the active list: clear the ref bit and place on the inactive list (deactivation).

II. Scan the inactive list from the head:
1. Page has been referenced? Return it to the tail of the active list (reactivation).
2. Page has not been referenced? pmap_page_protect and place on the tail of the free list.
3. Page is dirty? Push to backing store and return it to the inactive list tail (clean).

Consume frames from the head of the free list (free). If the free list shrinks below threshold, kick the paging daemon to start a scan (I, II).

[Figure: active list, inactive list, free list.]
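The three-list bookkeeping can be sketched as a small simulation. This is a toy model under stated assumptions: the class and method names are invented, the dirty check is applied before freeing an unreferenced page, and "pushing to backing store" is reduced to clearing a flag.

```python
from collections import deque

class Fifo2C:
    """Toy FIFO-with-second-chance bookkeeping (hypothetical sketch)."""

    def __init__(self, frames):
        self.active, self.inactive = deque(), deque()
        self.free = deque(range(frames))
        self.ref, self.dirty = {}, {}

    def alloc(self):
        frame = self.free.popleft()           # consume from head of free list
        self.ref[frame] = self.dirty[frame] = False
        self.active.append(frame)             # install on tail of active list
        return frame

    def touch(self, frame, write=False):
        self.ref[frame] = True                # stands in for the MMU ref bit
        if write:
            self.dirty[frame] = True

    def deactivate(self, count):              # step I
        for _ in range(min(count, len(self.active))):
            frame = self.active.popleft()
            self.ref[frame] = False           # clear ref bit to monitor it
            self.inactive.append(frame)

    def scan_inactive(self):                  # step II, one pass
        for _ in range(len(self.inactive)):
            frame = self.inactive.popleft()
            if self.ref[frame]:
                self.active.append(frame)     # reactivation
            elif self.dirty[frame]:
                self.dirty[frame] = False     # pretend it was written out
                self.inactive.append(frame)   # clean
            else:
                self.free.append(frame)       # free
```

A page that is touched between deactivation and the next scan survives; a dirty page needs one extra pass because it must be cleaned before its frame can be reused.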

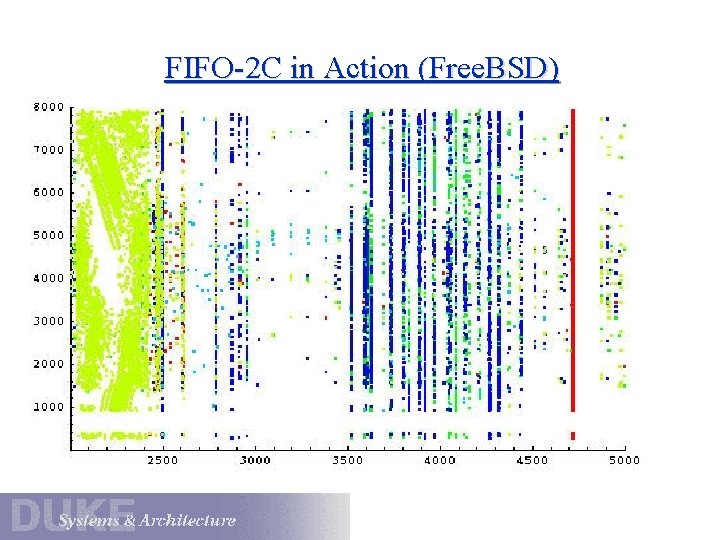

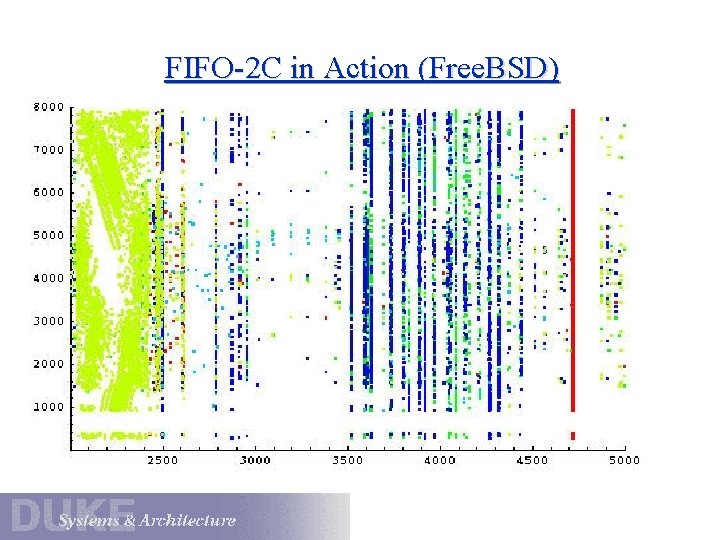

FIFO-2C in Action (FreeBSD)

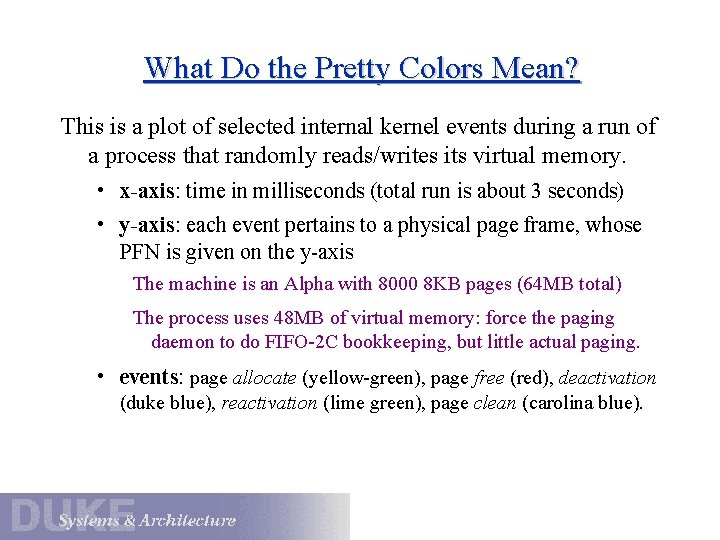

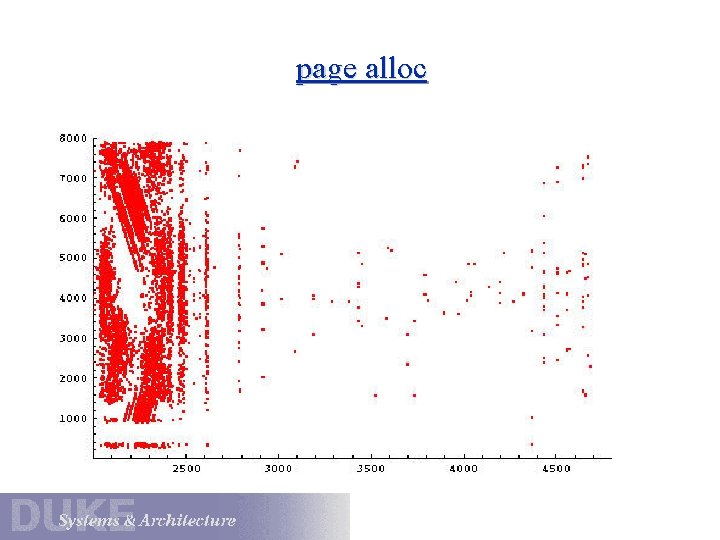

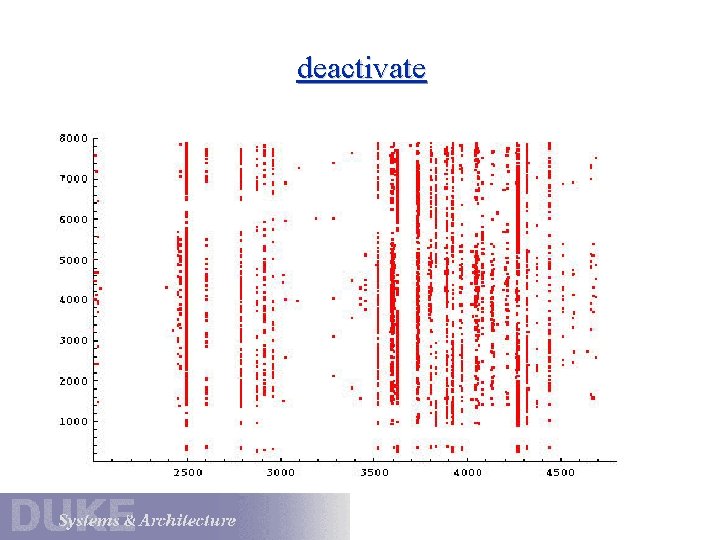

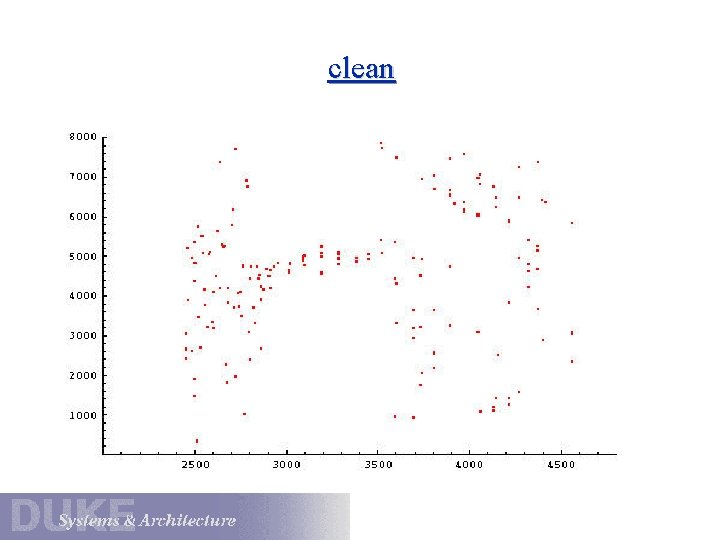

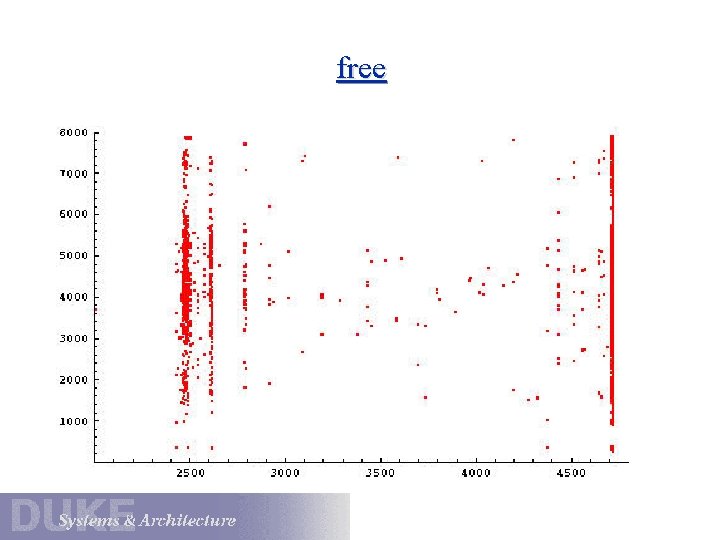

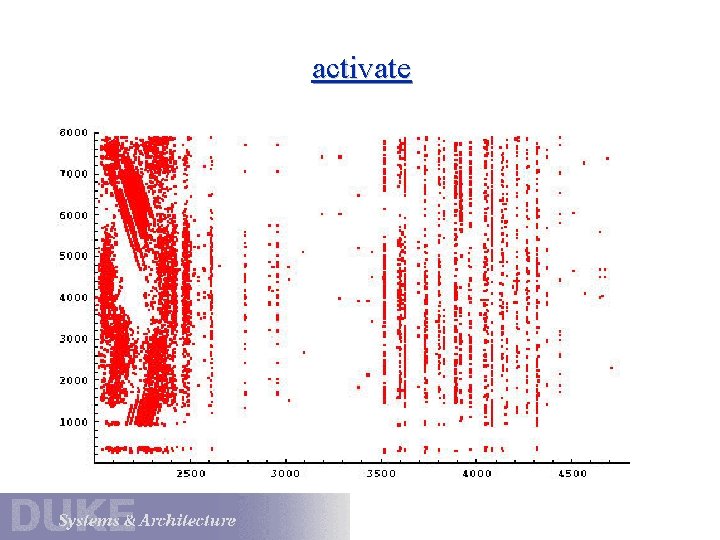

What Do the Pretty Colors Mean?

This is a plot of selected internal kernel events during a run of a process that randomly reads/writes its virtual memory.
• x-axis: time in milliseconds (total run is about 3 seconds).
• y-axis: each event pertains to a physical page frame, whose PFN is given on the y-axis.
• events: page allocate (yellow-green), page free (red), deactivation (duke blue), reactivation (lime green), page clean (carolina blue).

The machine is an Alpha with 8000 8 KB pages (64 MB total). The process uses 48 MB of virtual memory, which forces the paging daemon to do FIFO-2C bookkeeping, but little actual paging.

What to Look For

• Some low physical memory ranges are reserved to the kernel.
• The process starts and soaks up memory that was initially free.
• The paging daemon frees pages allocated to other processes, and the system reallocates them to the test process.
• After an initial flurry of demand-loading activity, things settle down after most of the process memory is resident.
• The paging daemon begins to run more frequently as memory becomes overcommitted (dark blue deactivation stripes).
• The test process touches pages deactivated by the paging daemon, causing them to be reactivated.
• The test process exits (heavy red bar).

page alloc

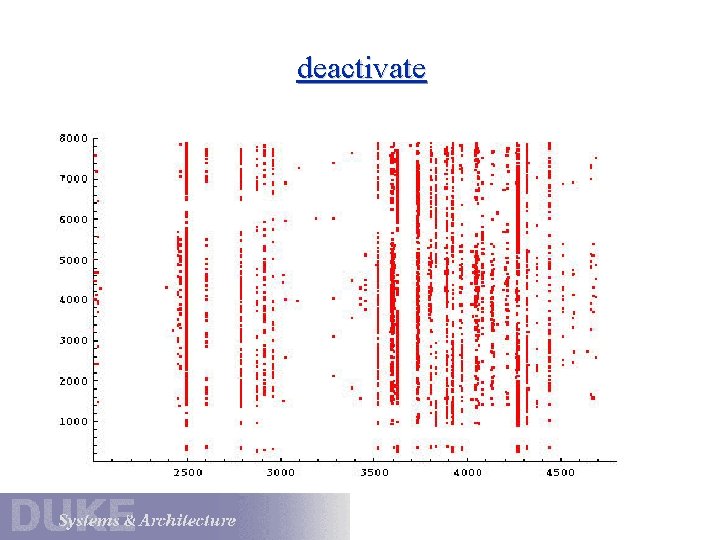

deactivate

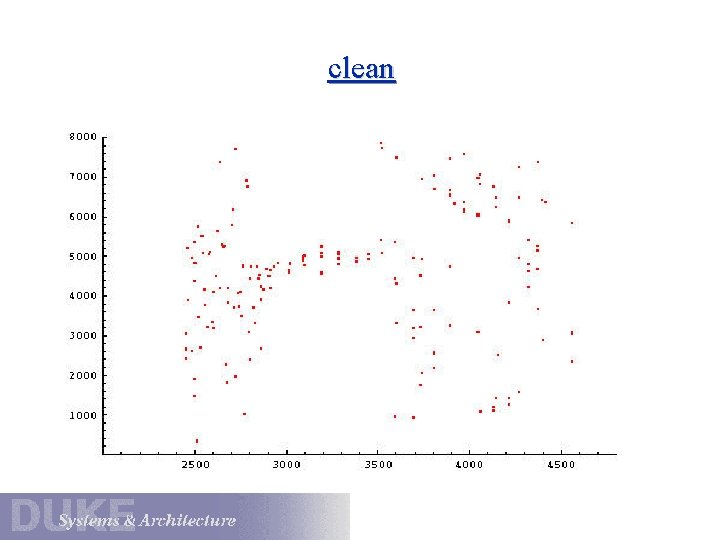

clean

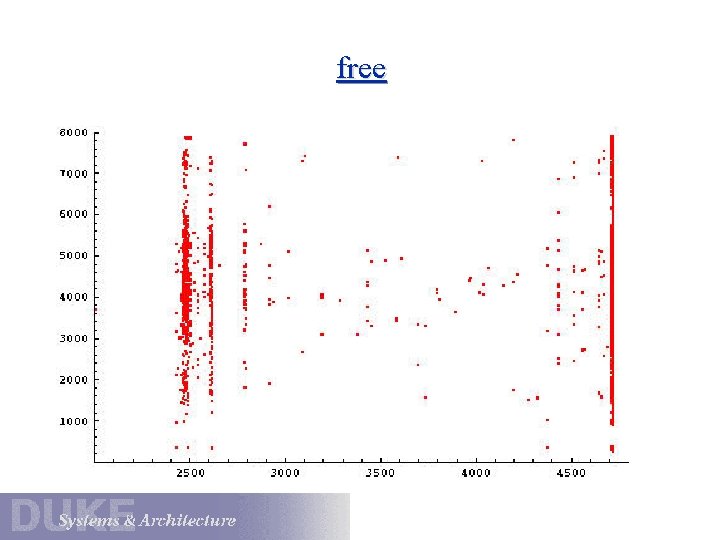

free

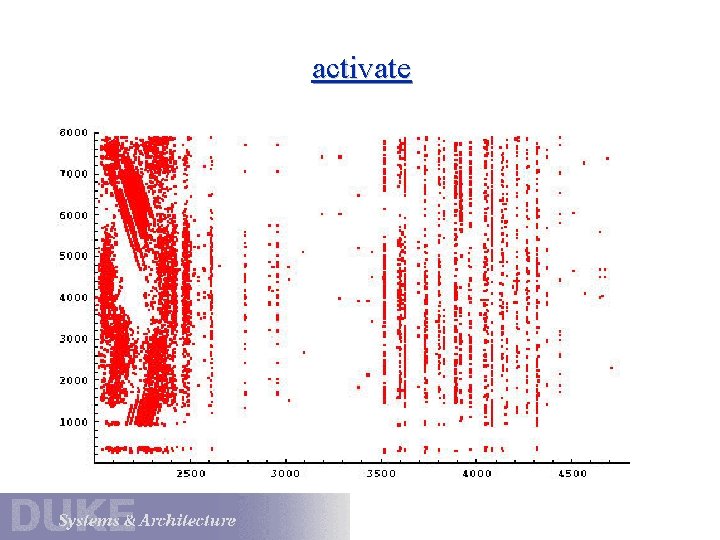

activate

Questions for Paged Virtual Memory

1. How do we prevent users from accessing protected data?
2. If a page is in memory, how do we find it? Address translation must be fast.
3. If a page is not in memory, how do we find it?
4. When is a page brought into memory?
5. If a page is brought into memory, where do we put it?
6. If a page is evicted from memory, where do we put it?
7. How do we decide which pages to evict from memory? Page replacement policy should minimize overall I/O.

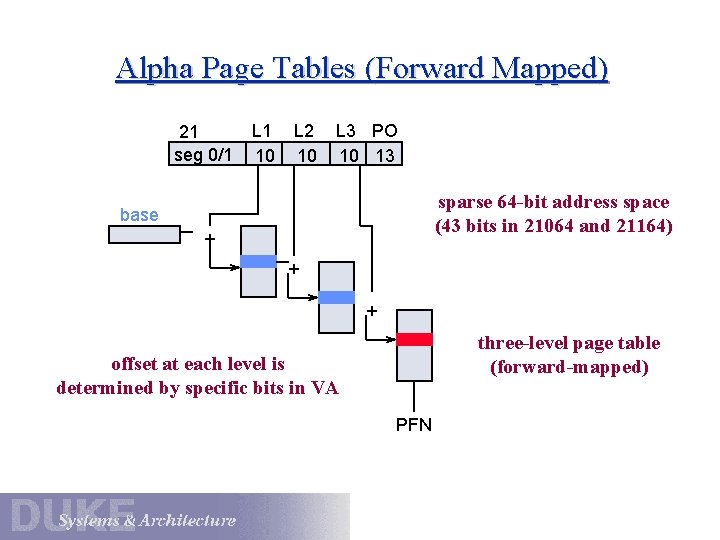

What You Should Know

• Basics of paged memory management
• Typical address space layout
• Basics of address translation
• Architectural mechanisms to support paged memory
• Importance for kernel protection and process isolation
• Why the simple page table is inadequate
• Motivation for and structure of hierarchical tables
• Optional: motivation for and structure of hashed (inverted) tables

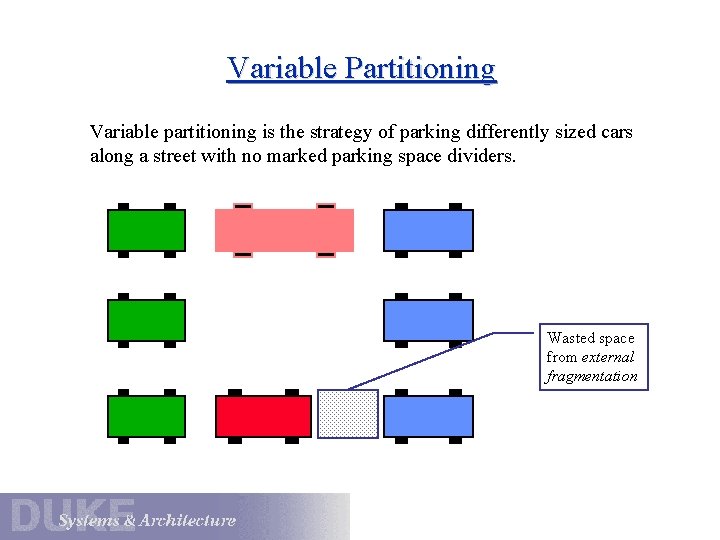

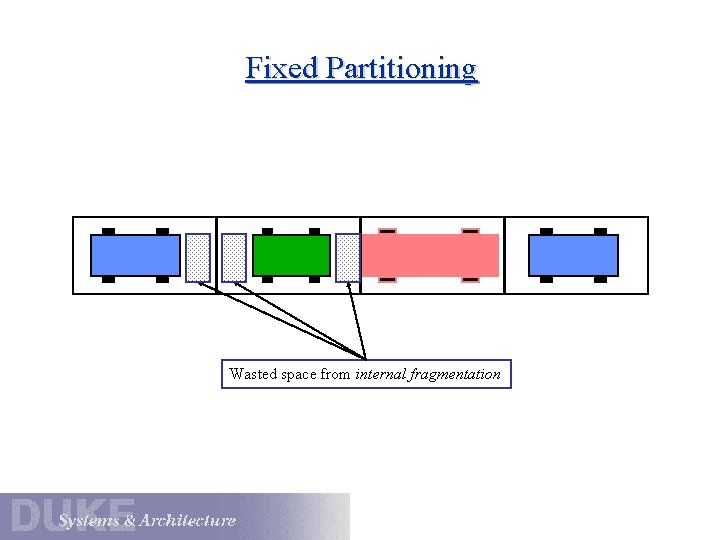

Background

Be sure you understand why page-based memory allocation is more memory-efficient than the old way: allocating contiguous physical memory for each address space (partitioning).
• Two partitioning strategies: fixed and variable
• How to make partitioning transparent to programs
• How to protect memory in a partitioned system
• Fragmentation: internal and external
• Fragmentation issues for each strategy
• Relevance to heap managers today
• Approaches to variable partitioning: first fit, best fit, etc., and the role of compaction

Memory Management 101

Once upon a time... memory was called “core”, and programs (“jobs”) were loaded and executed one by one.
• Load the image in contiguous physical memory: start execution at a known physical location, and allocate space in high memory for stack and data.
• Address text and data using physical addresses: prelink executables for a known start address.
• Run to completion.

Memory and Multiprogramming

One day, IBM decided to load multiple jobs in memory at once.
• Improve utilization of that expensive CPU.
• Improve system throughput.

Problem 1: how do programs address their memory space? Load-time relocation?

Problem 2: how does the OS protect memory from rogue programs?

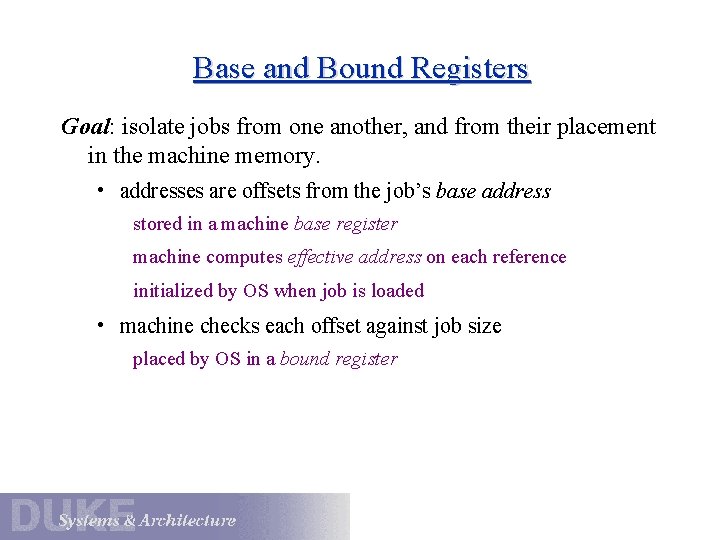

Base and Bound Registers

Goal: isolate jobs from one another, and from their placement in the machine memory.
• Addresses are offsets from the job’s base address, stored in a machine base register. The machine computes the effective address on each reference; the register is initialized by the OS when the job is loaded.
• The machine checks each offset against the job size, placed by the OS in a bound register.
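The check-then-add step performed on every reference can be sketched as follows. The function name and the choice of `MemoryError` to stand for the hardware protection fault are assumptions for this illustration.

```python
def effective_address(offset, base, bound):
    """Relocate a job-relative offset using base and bound registers.

    base:  physical address where the job was loaded (set by the OS)
    bound: size of the job in bytes (set by the OS)
    """
    if not 0 <= offset < bound:
        raise MemoryError("protection fault: offset outside job bounds")
    return base + offset
```

Because the job only ever issues offsets, the OS can move it by copying the memory and changing `base`; no addresses inside the program need to be relocated.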

Base and Bound: Pros and Cons

Pro:
• each job is physically contiguous
• simple hardware and software
• no need for load-time relocation of linked addresses
• OS may swap or move jobs as it sees fit

Con:
• memory allocation is a royal pain
• job size is limited by available memory

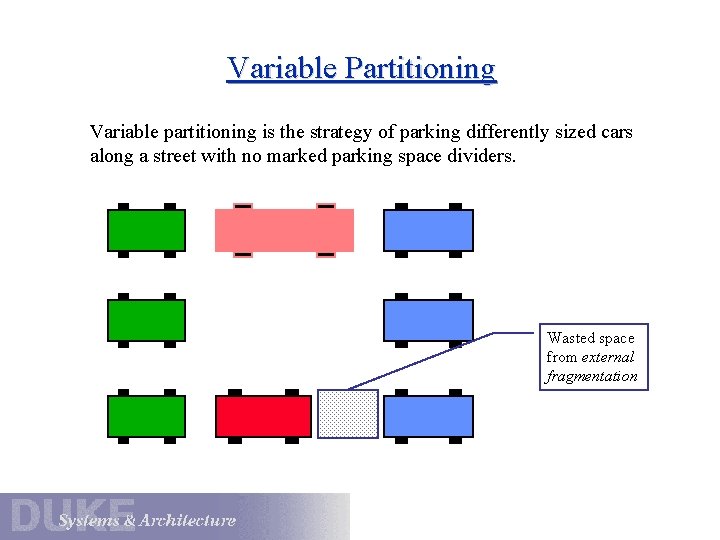

Variable Partitioning

Variable partitioning is the strategy of parking differently sized cars along a street with no marked parking space dividers.

[Figure: wasted space from external fragmentation.]

Fixed Partitioning

[Figure: wasted space from internal fragmentation.]

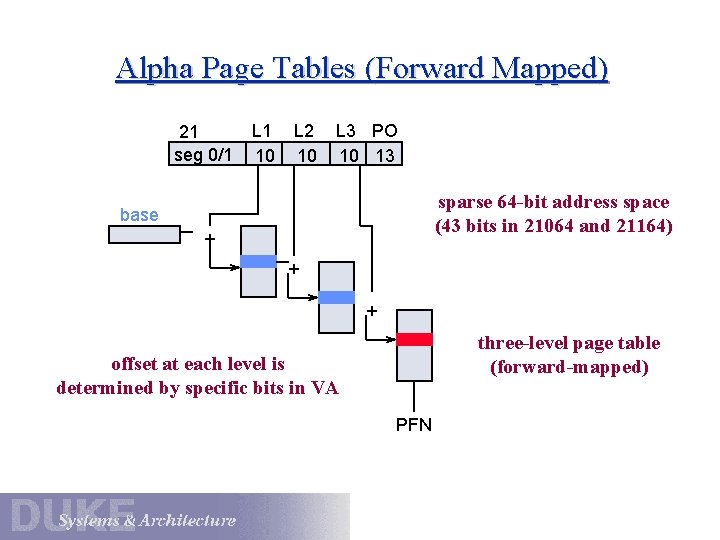

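Alpha Page Tables (Forward Mapped)

• Sparse 64-bit address space (43 bits in 21064 and 21164).
• Virtual address fields: seg 0/1 (21 bits), L1 (10 bits), L2 (10 bits), L3 (10 bits), PO (page offset, 13 bits).
• Three-level page table (forward-mapped): the offset at each level is determined by specific bits in the VA and is added to that level's base; the last level yields the PFN.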
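The field extraction for the 10/10/10/13 layout can be sketched as below. Nested dictionaries stand in for the three levels of page table pages; the function names are made up, and the 21 segment bits are ignored in this sketch.

```python
def alpha_fields(vaddr):
    """Split a virtual address into (L1, L2, L3, page offset) indices."""
    offset = vaddr & 0x1FFF              # 13-bit page offset
    l3 = (vaddr >> 13) & 0x3FF           # 10 bits
    l2 = (vaddr >> 23) & 0x3FF           # 10 bits
    l1 = (vaddr >> 33) & 0x3FF           # 10 bits
    return l1, l2, l3, offset

def walk(l1_table, vaddr):
    """Forward-mapped walk; raises KeyError if any level has no entry."""
    l1, l2, l3, offset = alpha_fields(vaddr)
    pfn = l1_table[l1][l2][l3]
    return (pfn << 13) | offset
```

Each level consumes 10 bits of the address, so a miss in the TLB costs up to three dependent memory references before the PFN is known.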