Virtual Memory 3/12/2021 A. Berrached@CMS: : UHD 1

Outline

Background
• Virtual memory: separation of user logical memory from physical memory.
  – Only part of the program needs to be in memory for execution. The rest is kept in secondary storage (disk).
  – When a program needs to access a page that is not in memory, the OS swaps out a page that is no longer needed and swaps the needed page into memory.
• The success of virtual memory is due to the locality of reference exhibited by most programs.

Principle of Locality of Reference
• Memory references within a process tend to be clustered.
  – Temporal locality of reference: recently accessed items are likely to be accessed again in the near future.
  – Spatial locality of reference: items accessed in the near future are likely to be adjacent to items accessed recently.

Locality of Virtual Memory
• Only a small part of the address space of a process (code and data) will be needed in a short period of time.
• It is possible to make intelligent guesses about which pieces will be needed in the near future.
• This suggests that virtual memory may work efficiently.
• Note that if a guess is wrong, the process pays a performance penalty, but the computation is still correct.

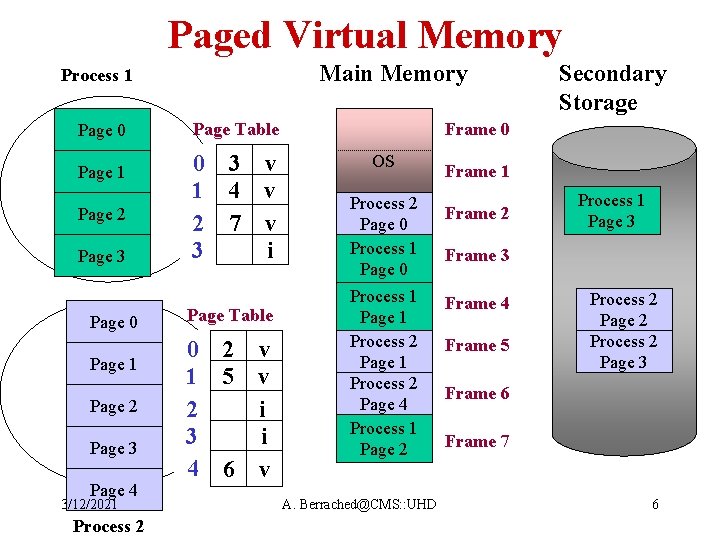

Paged Virtual Memory (figure: two processes sharing main memory)
• Process 1 page table (page ==> frame, valid bit): 0 ==> 3 v; 1 ==> 4 v; 2 ==> 7 v; 3 ==> i
• Process 2 page table (page ==> frame, valid bit): 0 ==> 2 v; 1 ==> 5 v; 2 ==> i; 3 ==> i; 4 ==> 6 v
• Main memory: frames 0 to 7, holding the OS and the valid pages of both processes
• Secondary storage: Process 1 Page 3, Process 2 Page 2, Process 2 Page 3

Paged Virtual Memory
• In the page table, use a valid-invalid bit:
  – valid bit = 1 ==> page is in memory
  – valid bit = 0 ==> page is not in memory
• Each time a reference is made:
  – look up the page table
  – if valid bit = 0 ==> page fault
• Page fault ==> trap to the OS to service the page fault.

Paged Virtual Memory: Page Fault
• The OS checks the reference. If the referenced page is not in memory:
  – find an empty frame
  – bring the referenced page from secondary storage into the frame
  – update the page table: new frame # and valid bit = 1
  – return to the interrupted process
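The lookup and fault-handling steps above can be sketched as follows. This is a minimal illustration, not an OS implementation: `PageTableEntry`, `translate`, the `stats` counter, and the free-frame list are hypothetical names, and the disk read is only indicated by a comment.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class PageTableEntry:
    frame: Optional[int] = None  # frame number, meaningful only when valid
    valid: bool = False          # valid-invalid bit


def translate(page_table: Dict[int, PageTableEntry], page: int,
              free_frames: List[int], stats: Dict[str, int]) -> int:
    """Return the frame holding `page`, servicing a page fault if needed."""
    entry = page_table[page]
    if not entry.valid:
        # Page fault: in a real system this is a trap to the OS.
        stats["faults"] += 1
        if not free_frames:
            # Assumption: replacement is out of scope for this sketch.
            raise MemoryError("no free frame; a replacement policy is needed")
        entry.frame = free_frames.pop(0)  # find an empty frame
        # (the page would be read from secondary storage into the frame here)
        entry.valid = True                # update the page table
    return entry.frame                    # resume the interrupted access


if __name__ == "__main__":
    # Hypothetical page table with one page still on disk and one free frame.
    table = {0: PageTableEntry(3, True), 1: PageTableEntry()}
    counters = {"faults": 0}
    print(translate(table, 1, [5], counters), counters)
```

The first access to an invalid page takes the fault path; later accesses to the same page find the valid bit set and return immediately.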

Page Size Issue
• A large page size is good because it means a smaller page table. Note that when the page table is very large, the system may have to store part of it in virtual memory.
• A large page size is good because it means fewer pages per process, which increases the TLB hit ratio.
• A large page size is good because disks are designed to transfer large blocks of data.
• A small page size is good because it reduces internal fragmentation.

Page Size Issue (cont.)
• With a small page size, each page more closely matches the code that is actually used by the process ==> better match to the process's locality of reference: lower fault rate.
• Increasing the page size causes each page to contain more code and data that are not referenced ==> the page fault rate rises.
• Page size is generally 1 K to 4 K.

What happens if there is no free frame?

Page Replacement

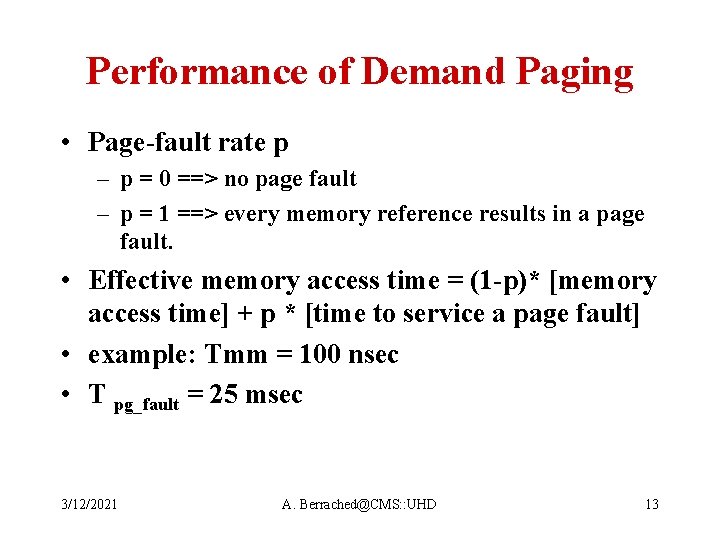

Performance of Demand Paging
• Page-fault rate p
  – p = 0 ==> no page faults
  – p = 1 ==> every memory reference results in a page fault
• Effective memory access time = (1 - p) * [memory access time] + p * [time to service a page fault]
• Example: T_mm = 100 nsec, T_pg_fault = 25 msec
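To see how strongly the fault rate dominates, the formula above can be evaluated with the slide's example timings. The function name and the sample fault rate p = 0.001 are illustrative assumptions, not values from the slides.

```python
def effective_access_time_ns(p: float,
                             t_mem_ns: float = 100.0,
                             t_fault_ns: float = 25_000_000.0) -> float:
    """Effective access time in ns: (1 - p) * T_mm + p * T_pg_fault.

    Defaults are the slide's example: T_mm = 100 nsec, T_pg_fault = 25 msec.
    """
    if not 0.0 <= p <= 1.0:
        raise ValueError("p must be a probability between 0 and 1")
    return (1.0 - p) * t_mem_ns + p * t_fault_ns


if __name__ == "__main__":
    # p = 0.001 (one fault per 1000 references) is a hypothetical rate.
    for p in (0.0, 0.001, 1.0):
        print(p, effective_access_time_ns(p))
```

With one fault per 1000 references, the result is about 25,100 nsec, roughly 250 times slower than a plain memory access, which is why the fault rate has to be kept very low.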

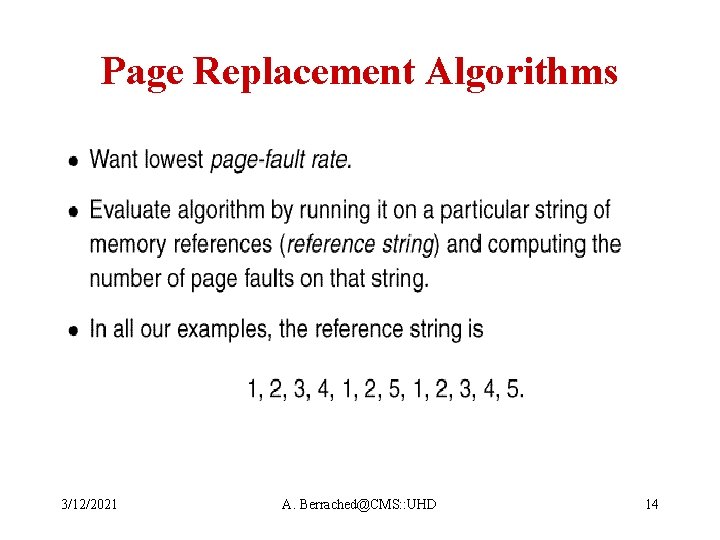

Page Replacement Algorithms

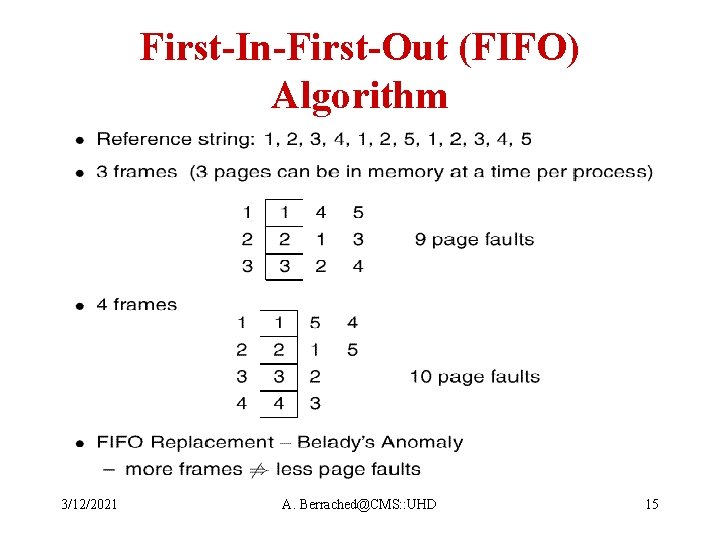

First-In-First-Out (FIFO) Algorithm
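The slide's figure is not reproduced in this text, so the following is only a minimal sketch of FIFO replacement as it is commonly defined: on a fault with no free frame, evict the page that has been resident longest. The function name and the reference string are illustrative assumptions.

```python
from collections import deque
from typing import Iterable


def fifo_faults(refs: Iterable[int], num_frames: int) -> int:
    """Count page faults under FIFO replacement with `num_frames` frames."""
    queue = deque()   # resident pages, oldest arrival at the left
    resident = set()  # same pages, for fast membership tests
    faults = 0
    for page in refs:
        if page in resident:
            continue  # hit: FIFO order is not affected by references
        faults += 1
        if len(queue) == num_frames:
            victim = queue.popleft()  # evict the oldest resident page
            resident.remove(victim)
        queue.append(page)
        resident.add(page)
    return faults


if __name__ == "__main__":
    # Hypothetical reference string, not taken from the slides.
    print(fifo_faults([1, 2, 3, 4, 1, 2, 5, 1, 2, 3, 4, 5], 3))
```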

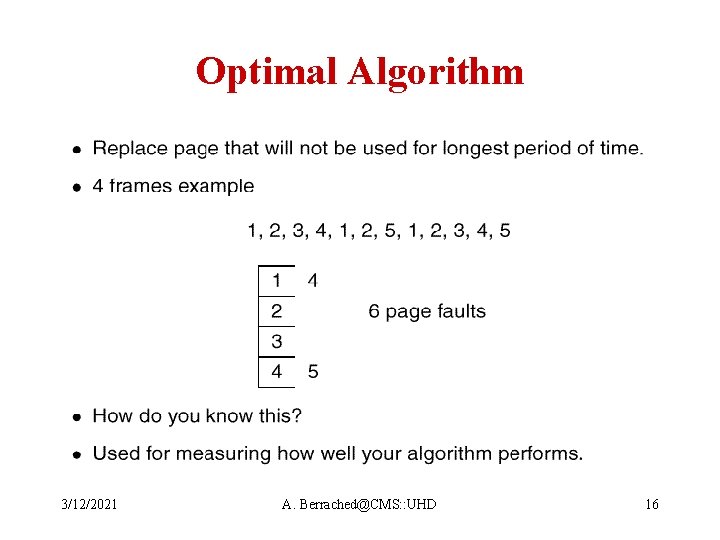

Optimal Algorithm
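The slide's figure is not reproduced in this text. As a hedged sketch of the optimal policy in its usual textbook form, the code below evicts the resident page whose next use lies farthest in the future. It needs the whole reference string in advance, so it serves as a yardstick rather than a practical policy. The function name and reference string are illustrative assumptions.

```python
from typing import List


def optimal_faults(refs: List[int], num_frames: int) -> int:
    """Count page faults when the page used farthest in the future is evicted."""
    frames: List[int] = []
    faults = 0
    for i, page in enumerate(refs):
        if page in frames:
            continue  # hit
        faults += 1
        if len(frames) < num_frames:
            frames.append(page)  # a free frame is still available
            continue
        future = refs[i + 1:]

        def next_use(p: int) -> float:
            # Distance to the next reference; infinity if never used again.
            return future.index(p) if p in future else float("inf")

        victim = max(frames, key=next_use)
        frames[frames.index(victim)] = page
    return faults


if __name__ == "__main__":
    # Hypothetical reference string, not taken from the slides.
    print(optimal_faults([1, 2, 3, 4, 1, 2, 5, 1, 2, 3, 4, 5], 3))
```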

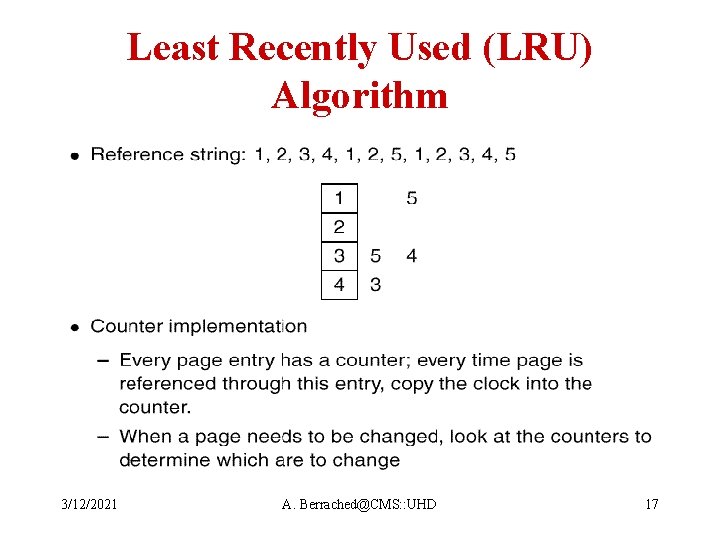

Least Recently Used (LRU) Algorithm
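The slide's figure is not reproduced in this text. A minimal sketch of exact LRU, assuming the usual definition (evict the page whose last reference is oldest), can keep the resident pages in recency order. The function name and reference string are illustrative assumptions.

```python
from collections import OrderedDict
from typing import Iterable


def lru_faults(refs: Iterable[int], num_frames: int) -> int:
    """Count page faults under exact LRU replacement."""
    frames = OrderedDict()  # keys ordered from least to most recently used
    faults = 0
    for page in refs:
        if page in frames:
            frames.move_to_end(page)  # hit: page becomes most recently used
            continue
        faults += 1
        if len(frames) == num_frames:
            frames.popitem(last=False)  # evict the least recently used page
        frames[page] = True
    return faults


if __name__ == "__main__":
    # Hypothetical reference string, not taken from the slides.
    print(lru_faults([1, 2, 3, 4, 1, 2, 5, 1, 2, 3, 4, 5], 3))
```

Exact LRU needs bookkeeping on every memory reference, which is what motivates cheaper approximations based on reference bits.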

LRU Approximation Algorithms
• Reference bit
  – With each page associate a bit, initially 0.
  – When a page is referenced, set its bit to 1.
  – Replace a page whose reference bit is 0. If more than one page qualifies, pick one at random.
  – At regular intervals, clear all reference bits and start over.

LRU Approximation Algorithms
• Enhanced reference bit
  – Instead of clearing the reference bits periodically, save them to gain more information.
  – Keep 1 byte with each page (the reference byte).
  – The most significant bit (MSB) of the byte is the reference bit.
  – At regular intervals, shift all bits in the reference byte 1 position to the right; 0 goes into the MSB.
  – If reference byte = 0000 0000 ==> page has not been referenced in the last 8 time periods.
  – If reference byte = 1111 1111 ==> page has been referenced in each of the past 8 periods.
  – Given two pages with bytes 0101 1111 and 0110 0011, the page with the smaller byte was accessed less recently.
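The reference-byte scheme above can be sketched with three small helpers. The names `touch`, `age`, and `pick_victim` are hypothetical, and the reference bytes are modeled as plain integers in a dictionary rather than hardware-maintained bits.

```python
from typing import Dict

MSB = 0b1000_0000  # the most significant bit acts as the reference bit


def touch(ref_byte: int) -> int:
    """A reference to the page sets the MSB of its reference byte."""
    return ref_byte | MSB


def age(ref_byte: int) -> int:
    """Periodic tick: shift right by one position; 0 enters the MSB."""
    return (ref_byte >> 1) & 0xFF


def pick_victim(ref_bytes: Dict[str, int]) -> str:
    """Choose the page with the smallest byte (referenced least recently)."""
    return min(ref_bytes, key=ref_bytes.get)


if __name__ == "__main__":
    # The two bytes compared on the slide; page names are hypothetical.
    pages = {"A": 0b0101_1111, "B": 0b0110_0011}
    print(pick_victim(pages))
```

Treating the byte as an unsigned integer works because a more recent reference occupies a higher-order bit, so recency dominates the comparison.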

LRU Approximation Algorithms
• Second-Chance:
  – Based on the reference bit and FIFO
  – Use the FIFO algorithm
  – Select the page at the head of the FIFO queue
  – If its reference bit is 1, give it a second chance:
    • clear its reference bit
    • send it to the back of the queue
  – Can be implemented using a circular queue
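Victim selection under second-chance can be sketched as below. This is a minimal illustration using a `deque` as the FIFO queue; the function name, the page names, and the dictionary of reference bits are assumptions made for the example.

```python
from collections import deque
from typing import Deque, Dict


def second_chance_victim(queue: Deque[str], ref_bit: Dict[str, int]) -> str:
    """Remove and return the victim page, giving referenced pages a second chance.

    `queue` holds resident pages in FIFO order (head at the left).
    The loop terminates because every skipped page has its bit cleared.
    """
    while True:
        page = queue.popleft()     # page at the head of the FIFO queue
        if ref_bit[page]:
            ref_bit[page] = 0      # clear its reference bit
            queue.append(page)     # send it to the back of the queue
        else:
            return page            # bit is 0: this page is replaced


if __name__ == "__main__":
    # Hypothetical queue state, not taken from the slides.
    q = deque(["A", "B", "C"])
    bits = {"A": 1, "B": 0, "C": 1}
    print(second_chance_victim(q, bits), list(q), bits)
```

If every page has its bit set, the scan clears all of them in one pass and then evicts the original head, so the policy degenerates to plain FIFO.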

Counting Algorithms

Local vs. Global Replacement
• Local replacement: each process selects a replacement frame from its own set of allocated frames.
• Global replacement: a process selects a replacement frame from the set of all frames; a process can take a frame from another process.

Dynamic Page Replacement
• The amount of physical memory (number of memory frames) needed by a process varies as the process executes.
• How much memory should be allocated?
  – Enough to keep the fault rate "tolerable"
  – The amount will change according to the phase of the process
• Ideally, the allocation should be set based on the locality of reference of the process.

Thrashing
• If a process does not have "enough" pages, the page fault rate is very high. This leads to:
  – low CPU utilization
  – the operating system thinks it needs to increase the degree of multiprogramming
  – another process is brought into the system
  – this leads to more page faults
• Thrashing = a process spends most of its time swapping pages in and out.

Dynamic Replacement
• Contemporary models use the working set replacement strategy.
• Dynamic and global
• Intuitively, the working set is the set of pages in the process locality.
  – somewhat imprecise
  – varies with time as the process migrates from one locality to another

Working Set Replacement
• Δ = working-set window: a fixed number of page references
• Working set: the set of pages in the most recent Δ page references.
• If a page is in active use, it will be in the working set. If not, it will drop out of the working set.
• ==> The working set is an approximation of the program's locality.

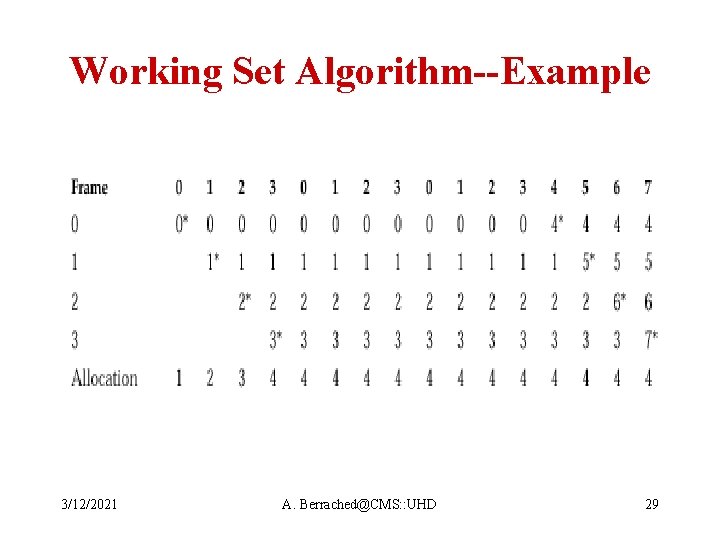

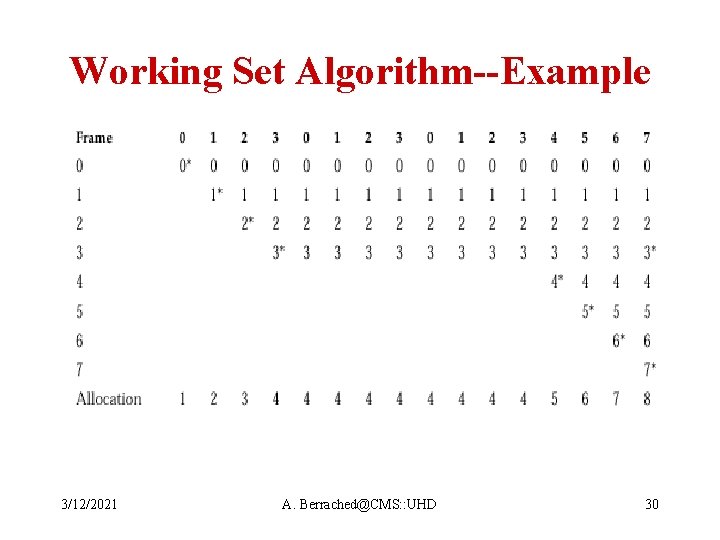

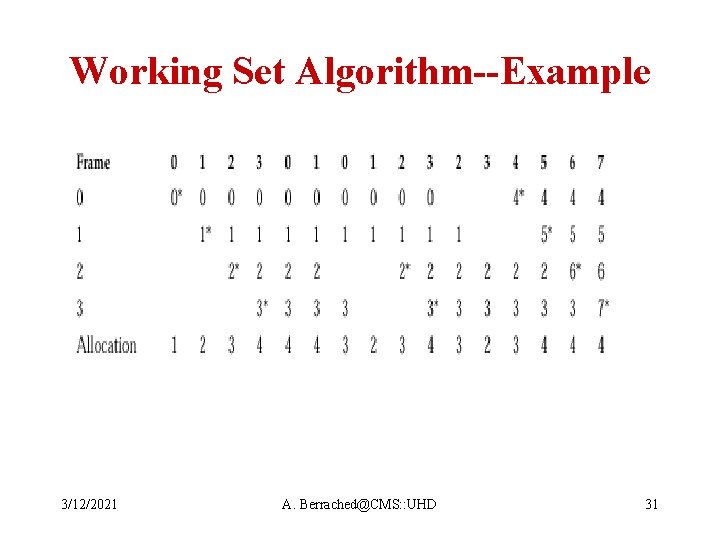

Working Set Replacement: Example
• Page references: 2, 6, 1, 5, 7, 7, 5, 1, 6, 2, 3, 4, 2, 2, 3, 4, 4, 4 (times t1 and t2 are marked on the reference string in the slide's figure)
• If Δ = 10:
  – at time t1: working set = {1, 2, 5, 6, 7}
  – at time t2: working set = {2, 3, 4, 6}
• Selection of Δ is important:
  – too small: will not encompass the entire locality
  – too large: will include multiple localities
• Performance depends on locality and the choice of Δ.
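The example can be checked with a short sketch. The positions of t1 and t2 are not recoverable from the text, so the code assumes t1 falls right after the 10th reference and t2 after the 18th (last) reference, which is the placement that reproduces the two sets above. The function name `working_set` is hypothetical.

```python
from typing import List, Set


def working_set(refs: List[int], t: int, delta: int) -> Set[int]:
    """Pages among the `delta` most recent references up to time t.

    Time is counted in references, 1-based: t = 10 means "just after
    the 10th reference".
    """
    start = max(0, t - delta)
    return set(refs[start:t])


if __name__ == "__main__":
    refs = [2, 6, 1, 5, 7, 7, 5, 1, 6, 2, 3, 4, 2, 2, 3, 4, 4, 4]
    # Assumed positions of t1 and t2 (see the note above).
    print(sorted(working_set(refs, 10, 10)))
    print(sorted(working_set(refs, 18, 10)))
```

Rerunning with a much smaller window at the same time points shows the effect of the window size: fewer pages of the current locality are captured.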

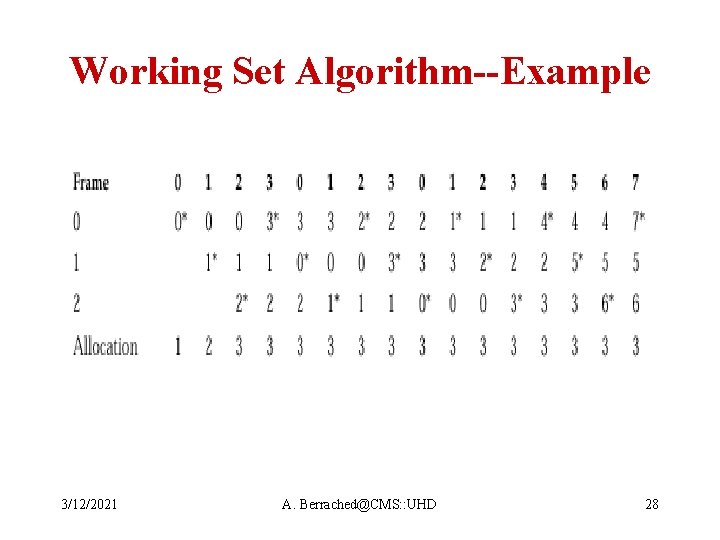

Working Set Algorithm--Example

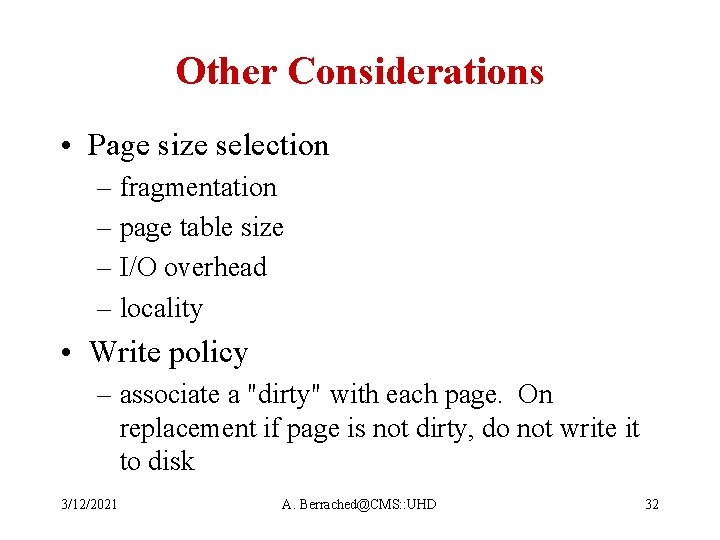

Other Considerations
• Page size selection
  – fragmentation
  – page table size
  – I/O overhead
  – locality
• Write policy
  – associate a "dirty" bit with each page; on replacement, if the page is not dirty, do not write it to disk

Other Considerations (cont.)
• Page buffering:
  – keep a pool of free frames
  – on a page fault, select a victim frame to replace
  – before writing the victim to disk, read the new page from disk into one of the frames in the free pool
  – later, write the replaced page to disk and add its frame to the free pool

Other Considerations (cont.)
• Pre-fetching strategies
  – attempt to predict pages that will be needed in the near future and bring them into memory before they are actually requested
• Gain:
  – can reduce the page fault rate; good when a process is swapped in and out
• Drawback:
  – if the prediction is wrong, may increase the page fault rate

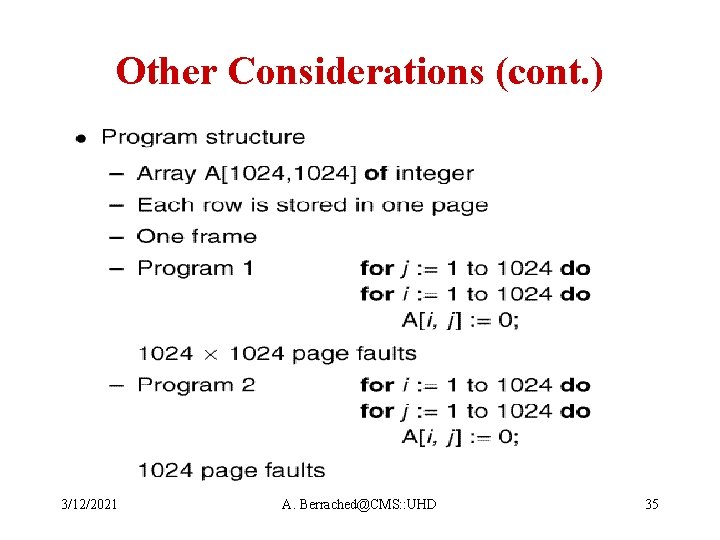

Other Considerations (cont.)
