- Slides: 24

Virtual I/O Caching: Dynamic Storage Cache Management for Concurrent Workloads

Outline • Introduction • Motivation • Design • Evaluation • Conclusion

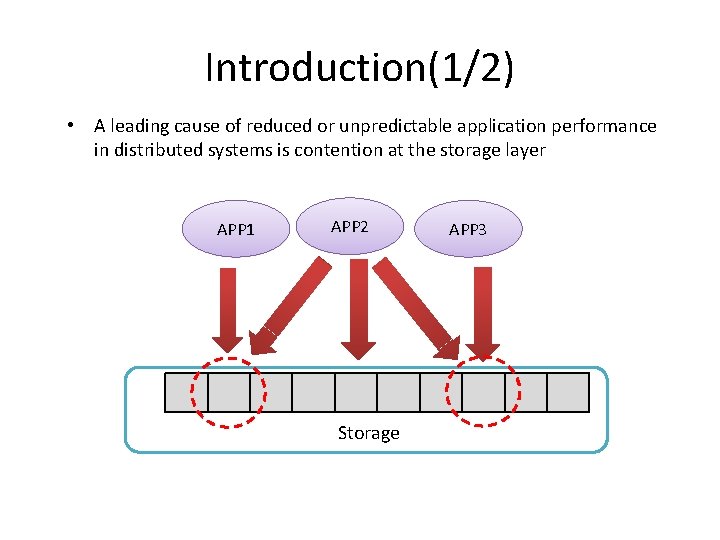

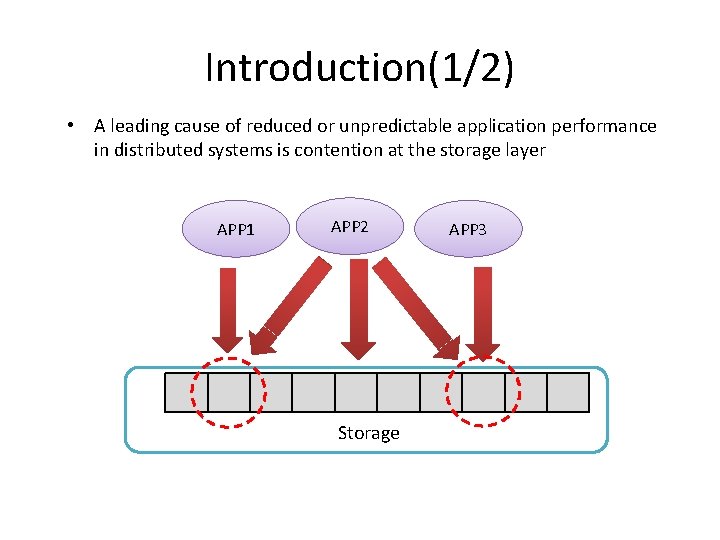

Introduction(1/2) • A leading cause of reduced or unpredictable application performance in distributed systems is contention at the storage layer. [Figure: APP 1, APP 2, and APP 3 all access the same Storage.]

Introduction(2/2) • Application behavior and the corresponding performance of a chosen replacement policy are observed at run time. • Based on these observations, a mechanism is designed to increase cache efficiency: the virtual I/O cache. • We further use the virtual I/O cache to isolate concurrent workloads and to globally manage physical resource allocation toward system-level performance objectives.

Motivation(1/2) • In a conventional design: • It is necessary to divide a unified logical cache into a fixed number of partitions. • It is necessary to decide statically where each partition is located. • Virtual I/O cache: • More flexible cache partitioning. • Every replacement policy makes prior workload assumptions, implied by its priority algorithm. • The virtual I/O cache is not specific to any one replacement strategy.

Motivation(2/2) • The virtual I/O cache has three components: 1. Virtual Resource Monitor (VRM) 2. Virtual-Physical Allocator (VPA) 3. Global Virtual-Physical Allocator (G-VPA)

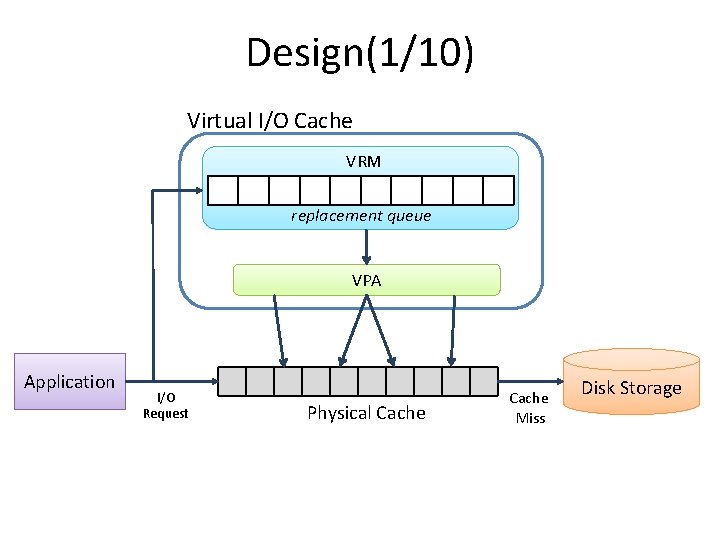

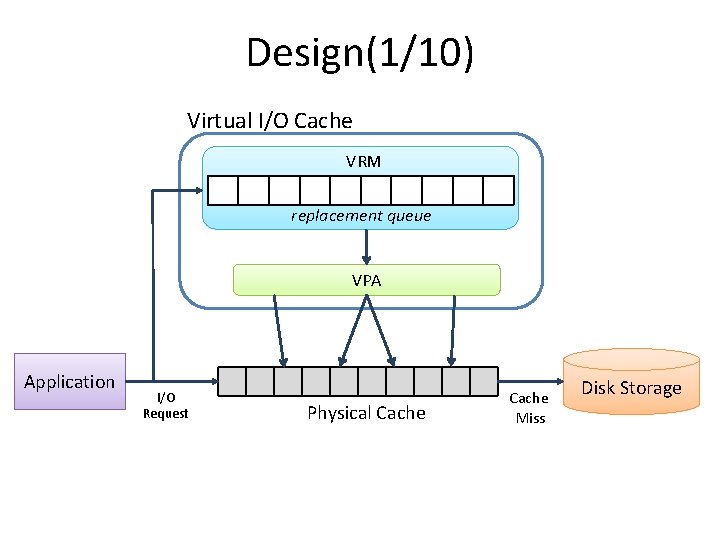

Design(1/10) [Figure: Virtual I/O Cache. Labels: Application I/O Request, replacement queue, VRM, VPA, Physical Cache, Miss, Disk Storage.]

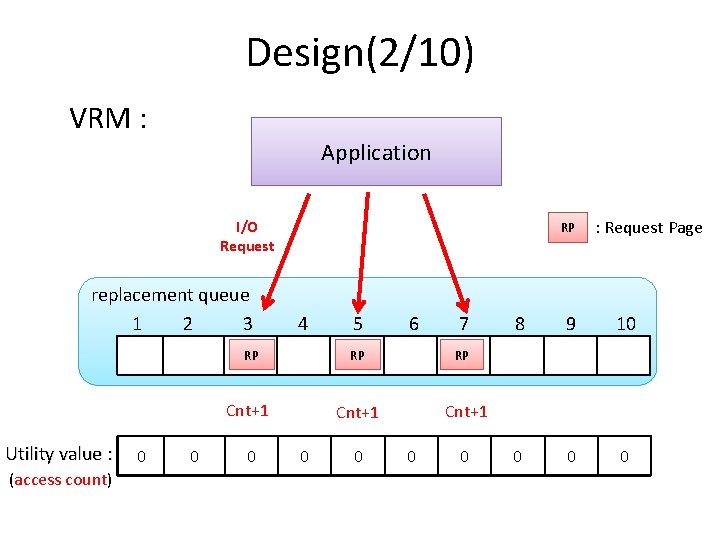

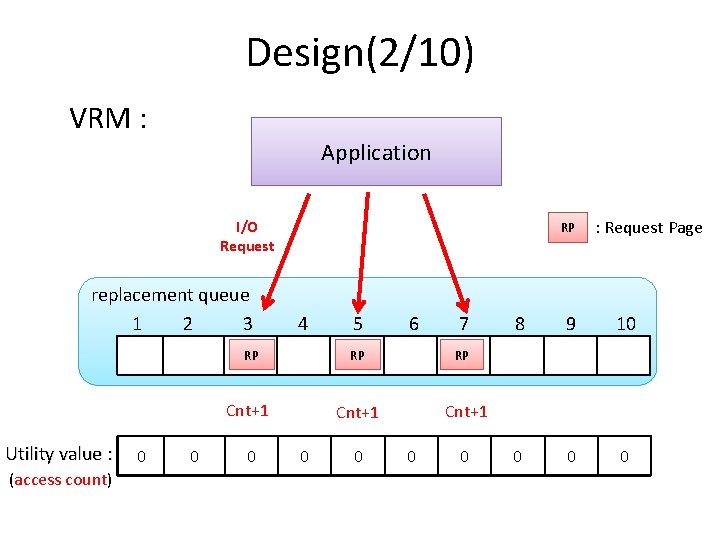

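Design(2/10) VRM: • Each position of the replacement queue has a utility value (its access count). • When an application I/O request hits a request page (RP) in the queue, the count of that position is incremented (Cnt+1). [Figure: replacement queue positions 1 to 10 with their utility values; RP marks a request page.]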
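The counting scheme on this slide can be sketched in a few lines of Python. This is a minimal illustration rather than the authors' implementation: the class name `VirtualResourceMonitor`, the choice of index 0 as the MRU end, and the plain LRU update rule are all assumptions made for the example.

```python
class VirtualResourceMonitor:
    """Hypothetical VRM sketch: counts hits per position of a virtual LRU queue."""

    def __init__(self, queue_length):
        self.queue_length = queue_length
        # Pages ordered from MRU (index 0) to LRU (last index); assumed layout.
        self.queue = []
        # Utility value (access count) for each queue position.
        self.counts = [0] * queue_length

    def access(self, page):
        """Record one request; return the position hit, or None on a virtual miss."""
        position = None
        if page in self.queue:
            position = self.queue.index(page)
            self.counts[position] += 1  # Cnt+1 for the position that was hit
            self.queue.pop(position)
        elif len(self.queue) == self.queue_length:
            self.queue.pop()  # queue is full: evict the LRU page
        self.queue.insert(0, page)  # the requested page becomes MRU
        return position
```

Note that the counts are attached to queue positions, not to pages, so they describe where in the replacement order hits tend to occur.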

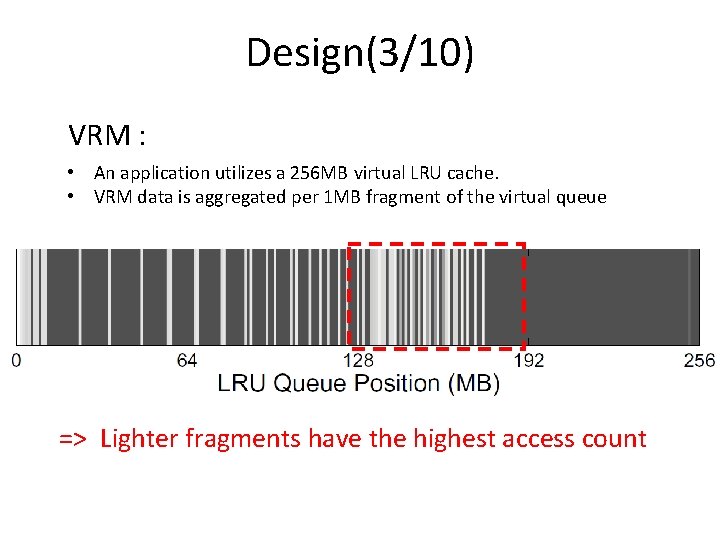

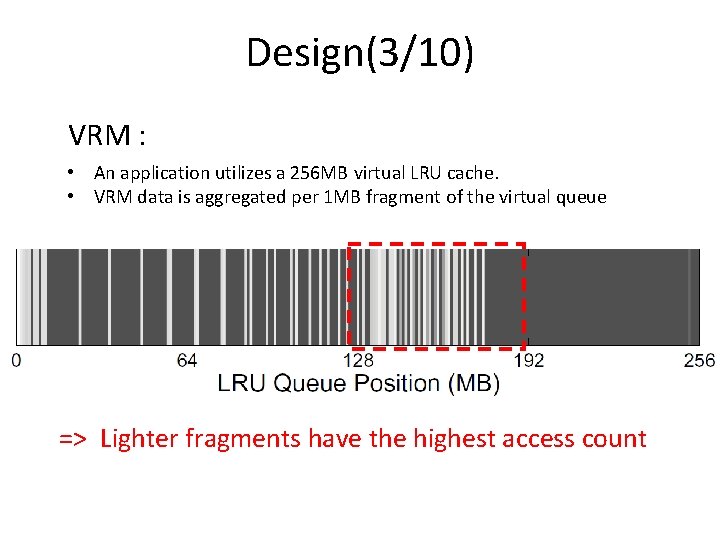

Design(3/10) VRM: • An application uses a 256 MB virtual LRU cache. • VRM data is aggregated per 1 MB fragment of the virtual queue. => In the figure, lighter fragments have the highest access counts.
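The per-fragment aggregation might look like the following sketch. The page size is not given on the slide; 4 KB is assumed here purely for illustration, which makes one 1 MB fragment cover 256 queue positions. The function name `aggregate_per_fragment` is hypothetical.

```python
def aggregate_per_fragment(position_counts, positions_per_fragment):
    """Sum per-position access counts into fixed-size fragments of the virtual queue."""
    if positions_per_fragment <= 0:
        raise ValueError("positions_per_fragment must be positive")
    return [
        sum(position_counts[start:start + positions_per_fragment])
        for start in range(0, len(position_counts), positions_per_fragment)
    ]


# Assumed page size of 4 KB (not stated on the slide).
PAGE_SIZE = 4 * 1024
POSITIONS_PER_FRAGMENT = (1024 * 1024) // PAGE_SIZE   # positions in a 1 MB fragment
QUEUE_POSITIONS = (256 * 1024 * 1024) // PAGE_SIZE    # positions in a 256 MB queue
```

Under this assumption the 256 MB virtual queue is summarized by 256 fragment totals instead of 65,536 individual counters.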

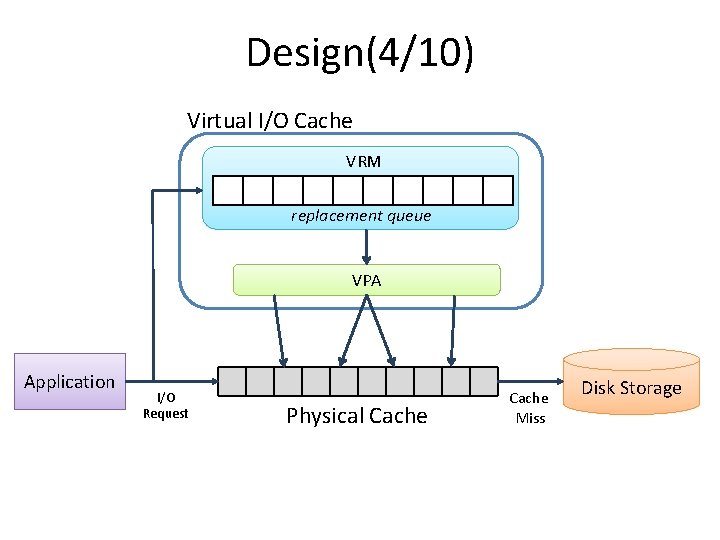

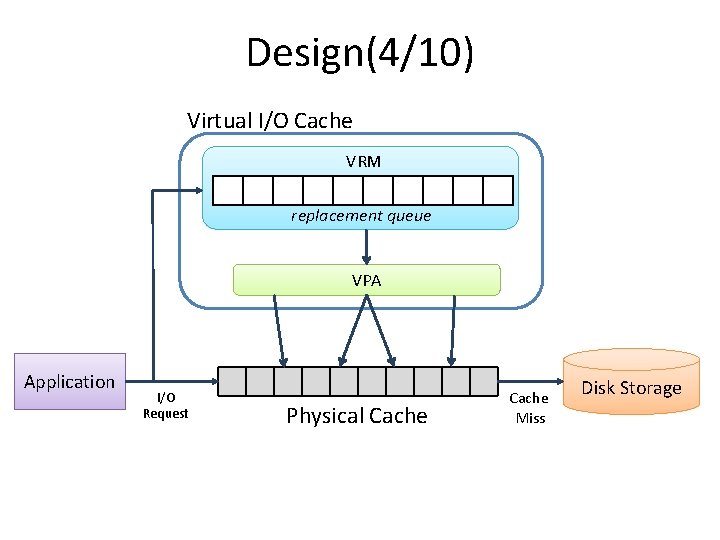

Design(4/10) [Figure: Virtual I/O Cache. Labels: Application I/O Request, replacement queue, VRM, VPA, Physical Cache, Miss, Disk Storage.]

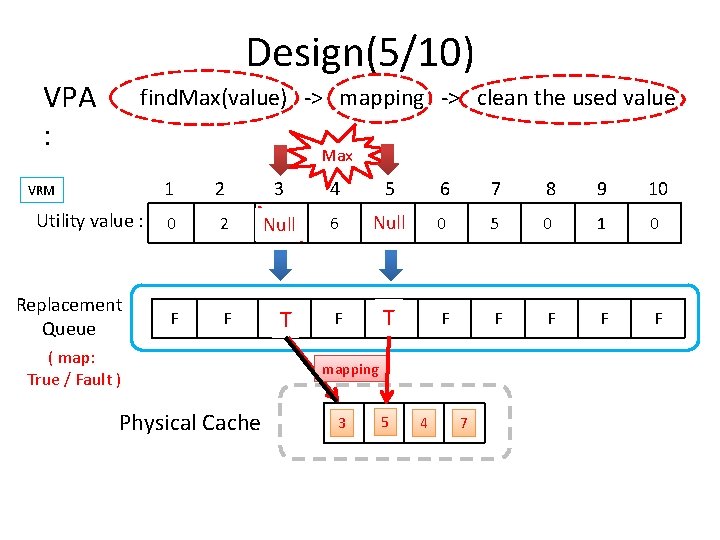

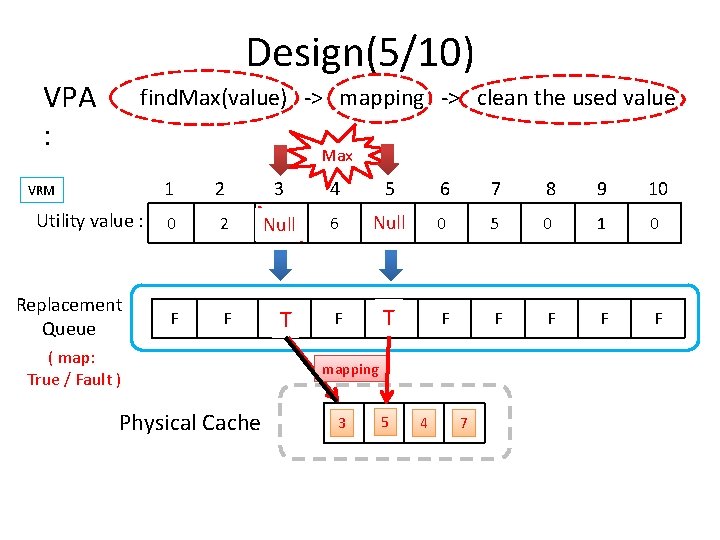

Design(5/10) VPA: find Max(value) -> map that position -> clear the used value. [Figure: VRM utility values for replacement queue positions 1 to 10 (0, 2, 10, 6, 8, 0, 5, 0, 1, 0, with used values cleared to Null); a map flag per position (True / False); physical cache mapping: 3, 5, 4, 7.]
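The find-max, map, clear loop on this slide can be sketched as follows. The function name `select_mapped_positions` and the tie-breaking rule (lowest position wins) are assumptions; the example values are a best-effort reading of the slide's figure and should be treated as illustrative.

```python
def select_mapped_positions(utility, capacity):
    """Repeat: find the max utility value, map that position, clear the used value."""
    remaining = list(utility)          # copy so the caller's VRM data is untouched
    mapped = [False] * len(remaining)  # map flag per queue position
    order = []                         # positions in the order they were mapped
    for _ in range(min(capacity, len(remaining))):
        candidates = [i for i, value in enumerate(remaining) if value is not None]
        best = max(candidates, key=lambda i: remaining[i])
        mapped[best] = True
        order.append(best)
        remaining[best] = None  # clear the used value (shown as Null on the slide)
    return order, mapped


# Utility values for positions 1..10 as read from the figure (illustrative).
utility = [0, 2, 10, 6, 8, 0, 5, 0, 1, 0]
order, mapped = select_mapped_positions(utility, capacity=4)
positions = [i + 1 for i in order]  # convert 0-based indexes to slide positions
```

With a physical cache of four blocks, this selects positions 3, 5, 4, and 7, in that order, which matches the mapping listed on the slide.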

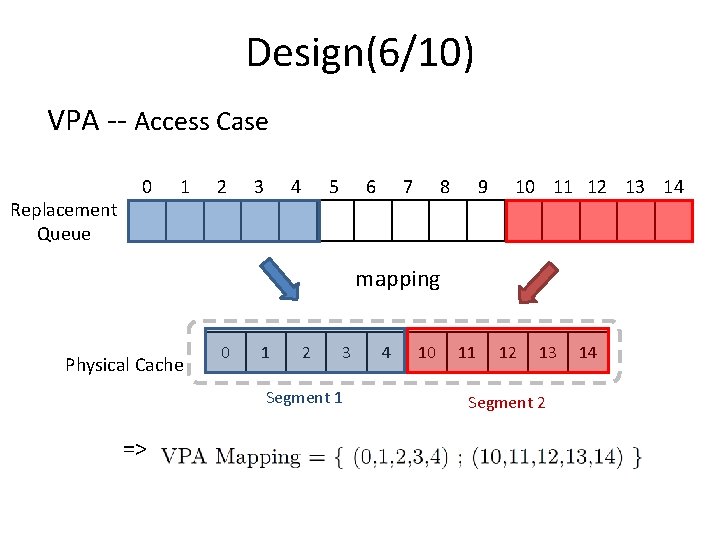

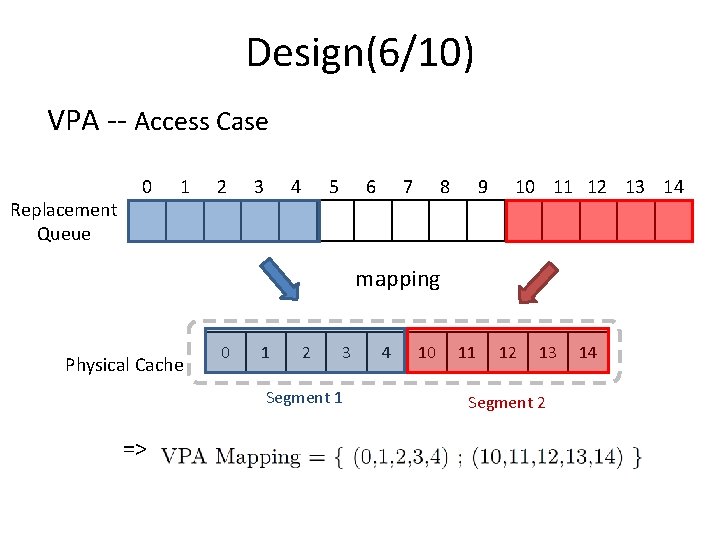

Design(6/10) VPA -- Access Case [Figure: replacement queue positions 0 to 14 and their mapping to the physical cache. Segment 1 => positions 0 to 4; Segment 2 => positions 10 to 14.]

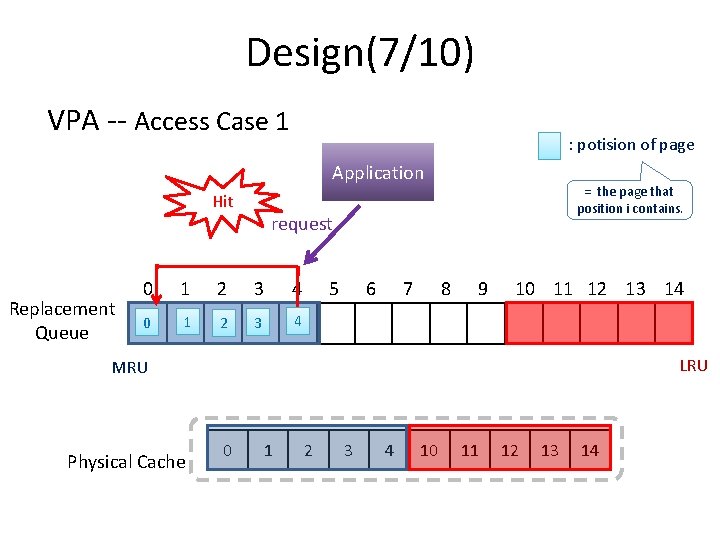

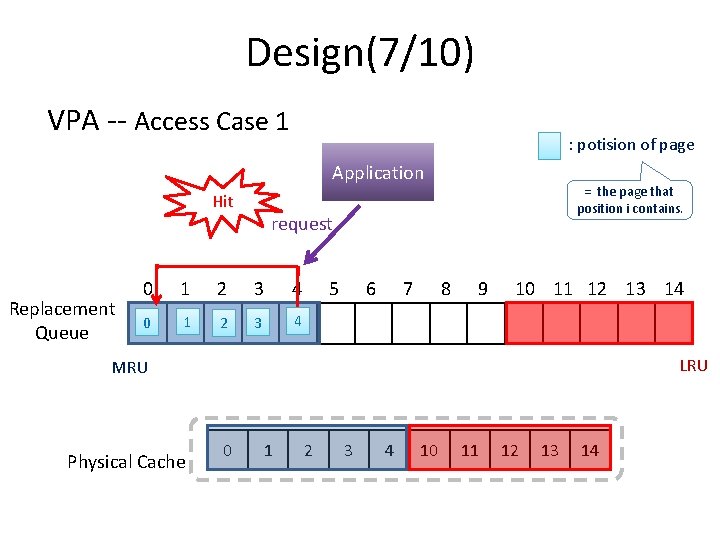

Design(7/10) VPA -- Access Case 1: the application requests the page that position i of the replacement queue contains. Result: Hit. [Figure: replacement queue positions 0 to 14 with LRU and MRU ends marked; the physical cache holds positions 0 to 4 and 10 to 14.]

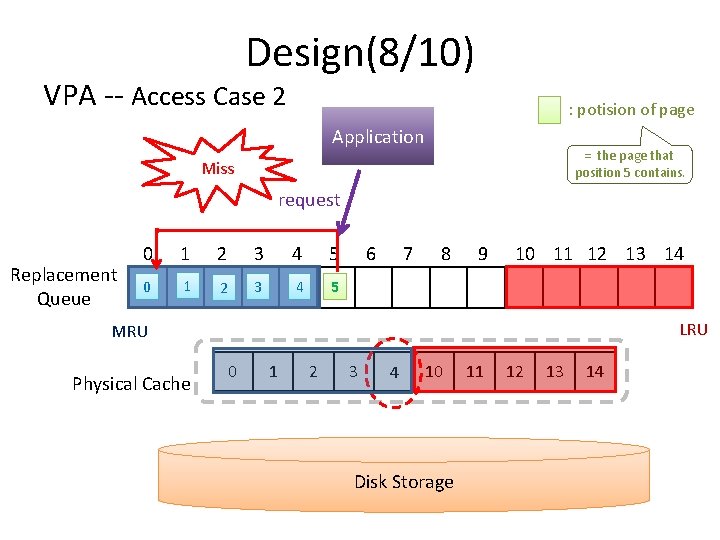

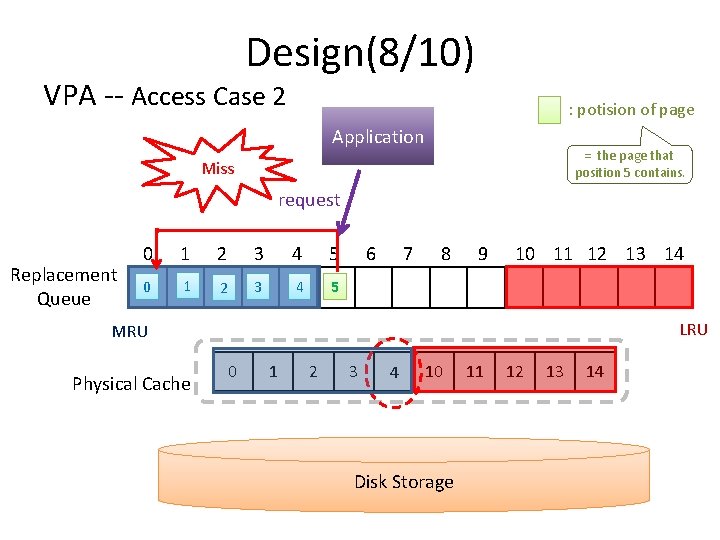

Design(8/10) VPA -- Access Case 2: the application requests the page that position 5 of the replacement queue contains. Result: Miss; the request goes to Disk Storage. [Figure: replacement queue positions 0 to 14 with LRU and MRU ends marked; the physical cache holds positions 0 to 4 and 10 to 14.]

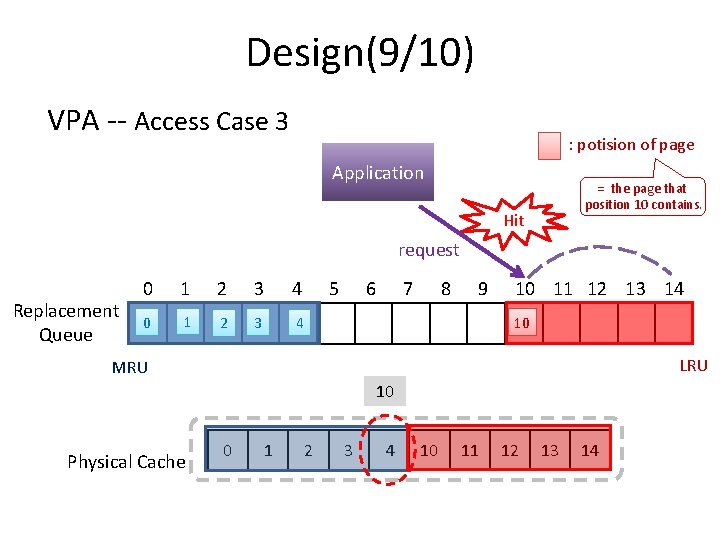

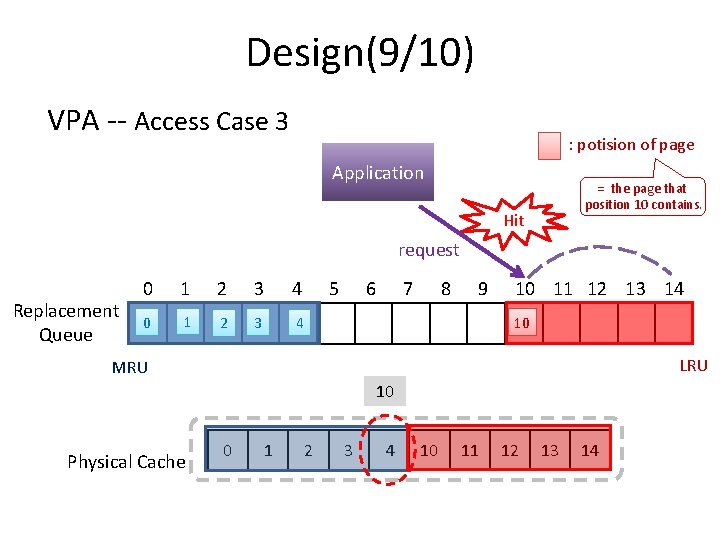

Design(9/10) VPA -- Access Case 3: the application requests the page that position 10 of the replacement queue contains. Result: Hit. [Figure: replacement queue positions 0 to 14 with LRU and MRU ends marked; the physical cache holds positions 0 to 4 and 10 to 14.]
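The access cases reduce to one check: is the queue position of the requested page currently mapped to the physical cache? A minimal sketch, assuming the mapping shown on the slides (positions 0 to 4 and 10 to 14); the names `classify_access` and `MAPPED` are hypothetical.

```python
def classify_access(position, mapped_positions):
    """Return 'hit' if the requested page's queue position is mapped, else 'miss'."""
    return "hit" if position in mapped_positions else "miss"


# Mapping from the slides: Segment 1 = positions 0-4, Segment 2 = positions 10-14.
MAPPED = set(range(0, 5)) | set(range(10, 15))

case_2 = classify_access(5, MAPPED)    # position 5 is not mapped
case_3 = classify_access(10, MAPPED)   # position 10 is mapped
```

A request to position 5 is served from disk even though positions further along the queue are cached, which is the difference from a conventional cache that holds one contiguous range of the queue.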

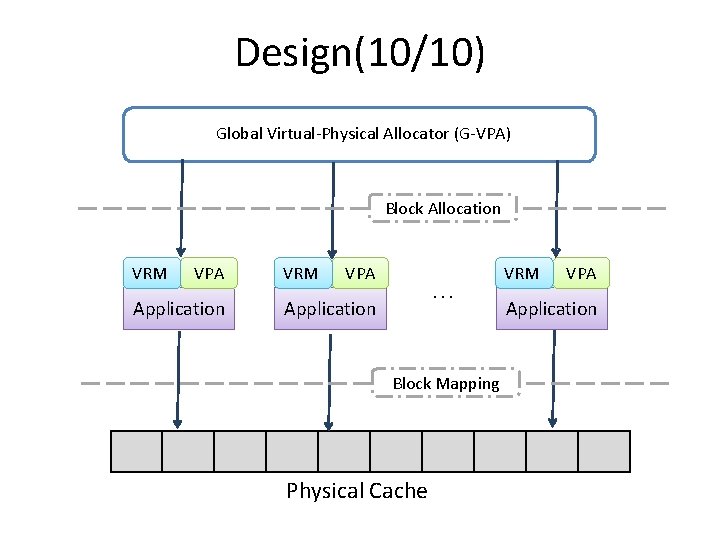

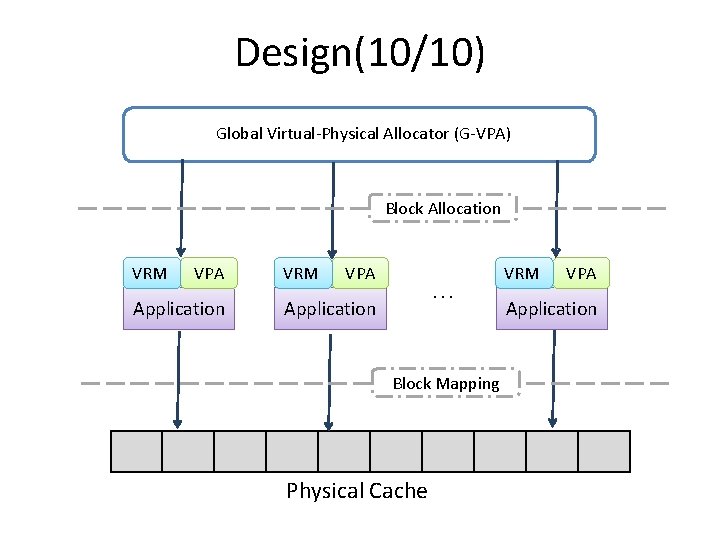

Design(10/10) Global Virtual-Physical Allocator (G-VPA) [Figure: each Application has its own VRM and VPA. Labels: Block Allocation, Block Mapping, Physical Cache.]
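The slide does not spell out the G-VPA policy. One plausible reading, sketched below as an assumption, is that the global allocator pools the utility values reported by every application's VRM and hands physical blocks to the highest values system-wide. The function name `allocate_globally` and the objective (maximize total expected hits) are hypothetical.

```python
import heapq


def allocate_globally(utilities_by_app, total_blocks):
    """Hypothetical G-VPA policy: give blocks to the highest utility values overall."""
    candidates = [
        (value, app, position)
        for app, utilities in utilities_by_app.items()
        for position, value in enumerate(utilities)
        if value > 0  # positions with no recorded hits never receive a block
    ]
    chosen = heapq.nlargest(total_blocks, candidates, key=lambda c: c[0])
    allocation = {app: [] for app in utilities_by_app}
    for _, app, position in chosen:
        allocation[app].append(position)
    return allocation


# Made-up utility values for two applications sharing three physical blocks.
allocation = allocate_globally({"app1": [9, 1, 7], "app2": [8, 0, 2]}, total_blocks=3)
```

Here `app1` receives two blocks (positions 0 and 2) and `app2` receives one (position 0). Other system-level objectives, such as per-application hit-rate targets, would need a different selection rule.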

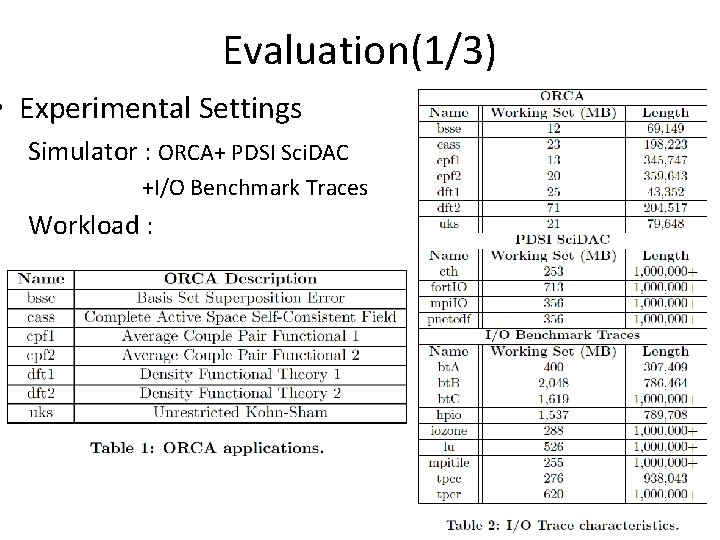

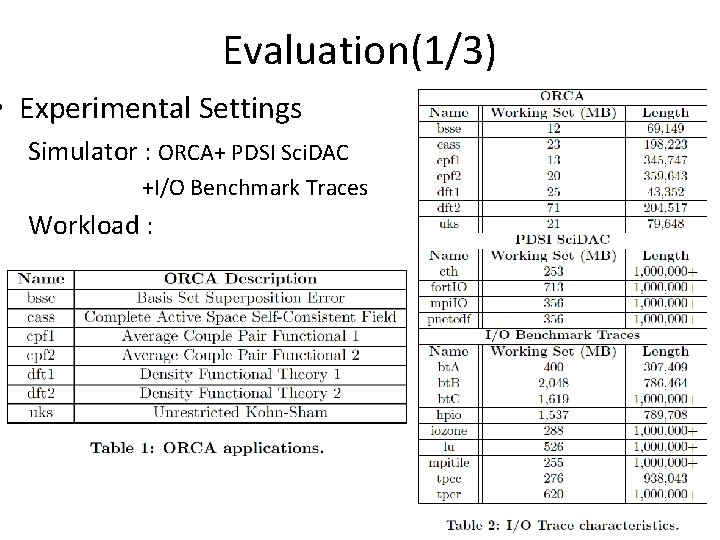

Evaluation(1/3) • Experimental settings: • Simulator: ORCA + PDSI SciDAC + I/O benchmark traces • Workload:

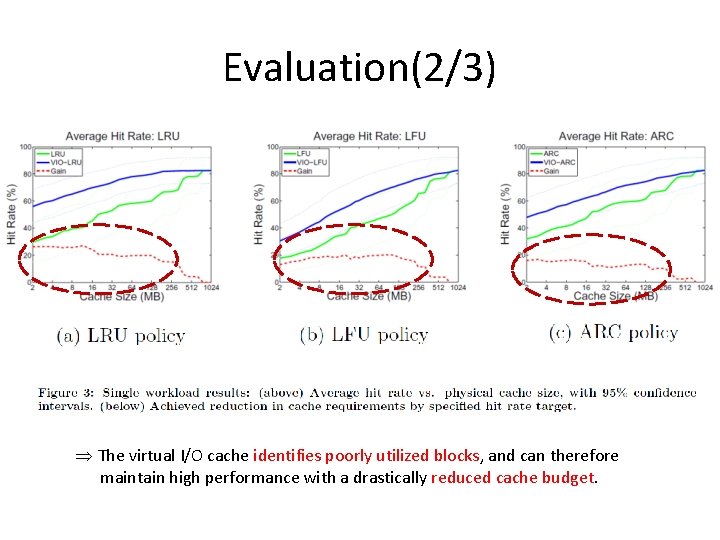

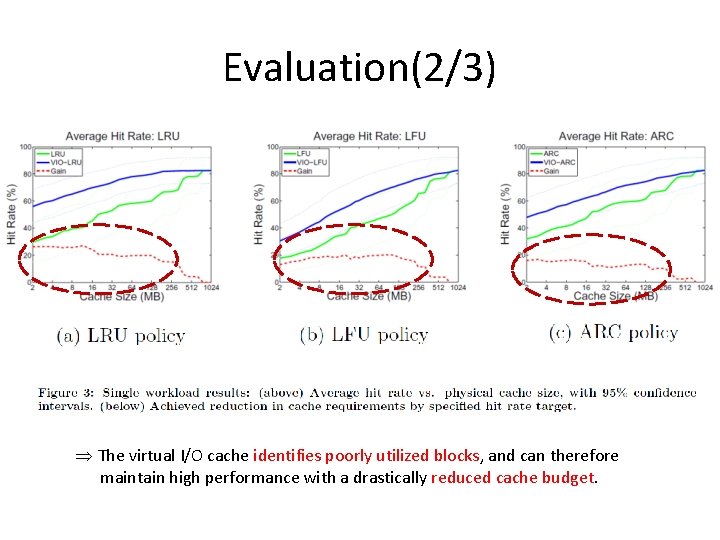

Evaluation(2/3) => The virtual I/O cache identifies poorly utilized blocks and can therefore maintain high performance with a drastically reduced cache budget.

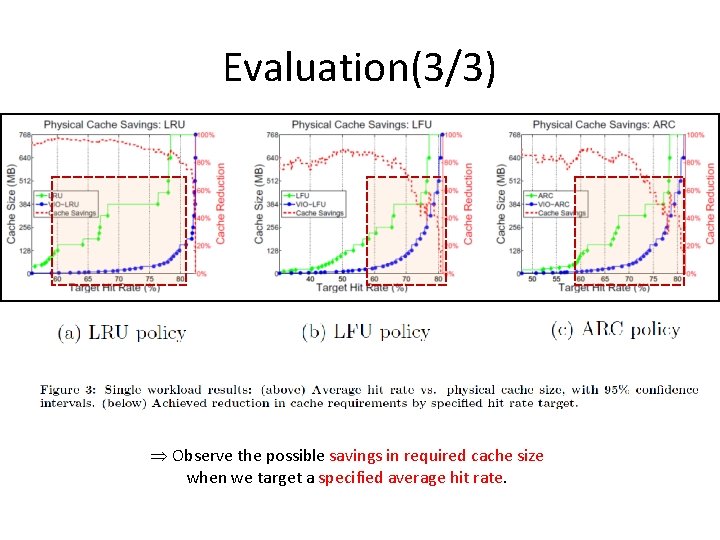

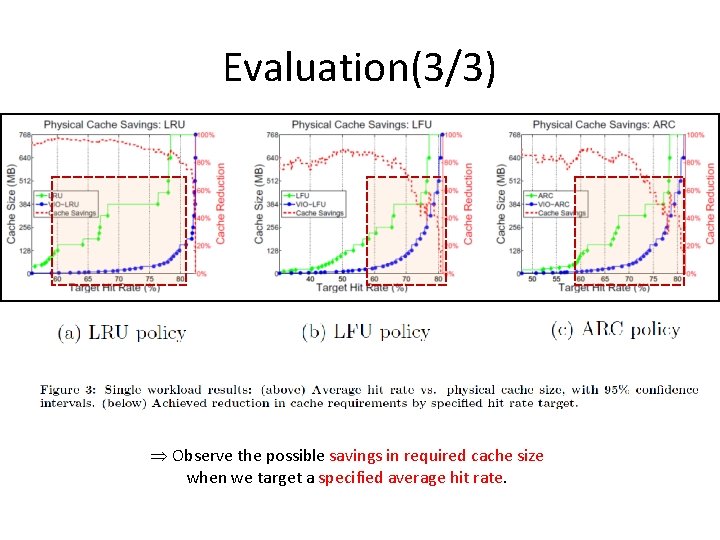

Evaluation(3/3) => Observe the possible savings in required cache size when we target a specified average hit rate.
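To make the comparison concrete, the sketch below computes how many blocks are needed to reach a target hit rate in two ways: a conventional LRU cache, which must hold a contiguous range of positions starting at the MRU end, and a virtual I/O cache, which can map only the most-hit positions. The function names and the access counts are made up for illustration and are not results from the paper; index 0 is assumed to be the MRU end.

```python
def blocks_needed_lru(counts, total_requests, target_hit_rate):
    """Smallest contiguous MRU-side prefix whose hits reach the target hit rate."""
    needed = target_hit_rate * total_requests
    if needed <= 0:
        return 0
    hits = 0
    for size, count in enumerate(counts, start=1):
        hits += count
        if hits >= needed:
            return size
    return None  # target not reachable within the virtual queue


def blocks_needed_virtual(counts, total_requests, target_hit_rate):
    """Smallest set of positions, picked by highest access count, reaching the target."""
    return blocks_needed_lru(sorted(counts, reverse=True), total_requests, target_hit_rate)


# Made-up per-position access counts; 100 requests in total, 40 of them misses.
counts = [30, 0, 0, 0, 5, 25, 0, 0]
lru_size = blocks_needed_lru(counts, 100, 0.5)
virtual_size = blocks_needed_virtual(counts, 100, 0.5)
```

For a 50% target hit rate, the contiguous LRU cache needs 6 blocks while the selective mapping needs 2, because the four zero-count positions in between never have to be cached.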

Conclusion • This paper addresses a leading cause of reduced performance in distributed systems: contention at the storage layer. • The goal of our work is to achieve large cache size reductions at equivalent performance levels.