Vectorization of the 2D Wavelet Lifting Transform

- Slides: 39

Vectorization of the 2D Wavelet Lifting Transform Using SIMD Extensions. D. Chaver, C. Tenllado, L. Piñuel, M. Prieto, F. Tirado (UCM)

2 Index

1. Motivation
2. Experimental environment
3. Lifting Transform
4. Memory hierarchy exploitation
5. SIMD optimization
6. Conclusions
7. Future work

3 Motivation

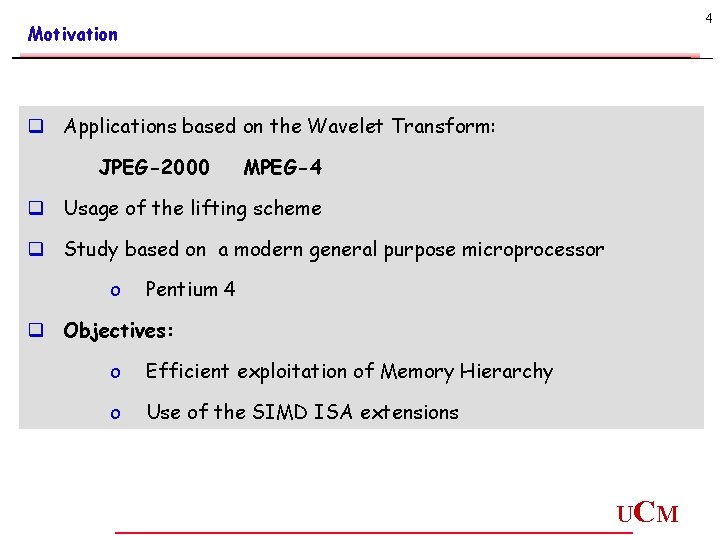

4 Motivation

- Applications based on the Wavelet Transform: JPEG-2000, MPEG-4
- Usage of the lifting scheme
- Study based on a modern general-purpose microprocessor: Pentium 4
- Objectives:
  - Efficient exploitation of the memory hierarchy
  - Use of the SIMD ISA extensions

5 Experimental Environment

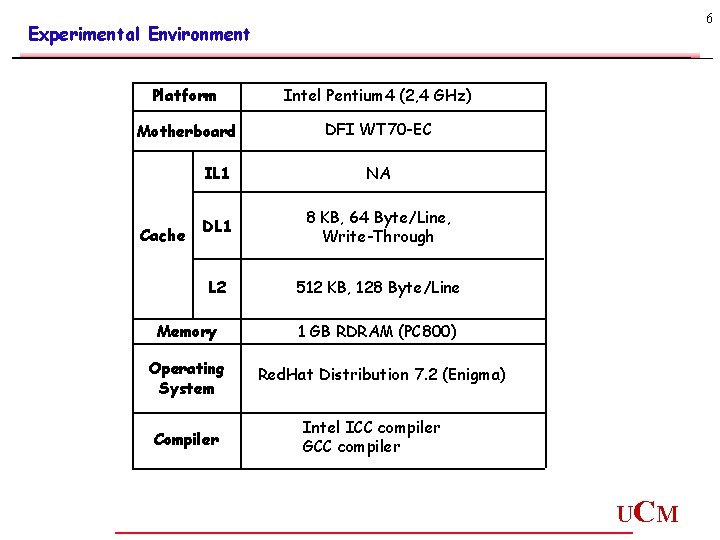

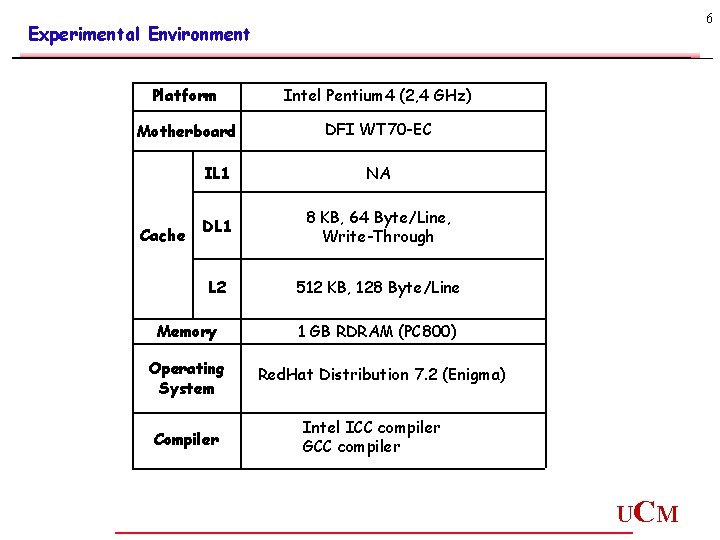

6 Experimental Environment

| Component | Description |
|---|---|
| Platform | Intel Pentium 4 (2.4 GHz) |
| Motherboard | DFI WT70-EC |
| Cache | IL1: NA; DL1: 8 KB, 64 bytes/line, write-through; L2: 512 KB, 128 bytes/line |
| Memory | 1 GB RDRAM (PC800) |
| Operating system | Red Hat Distribution 7.2 (Enigma) |
| Compilers | Intel ICC compiler, GCC compiler |

7 Lifting Transform

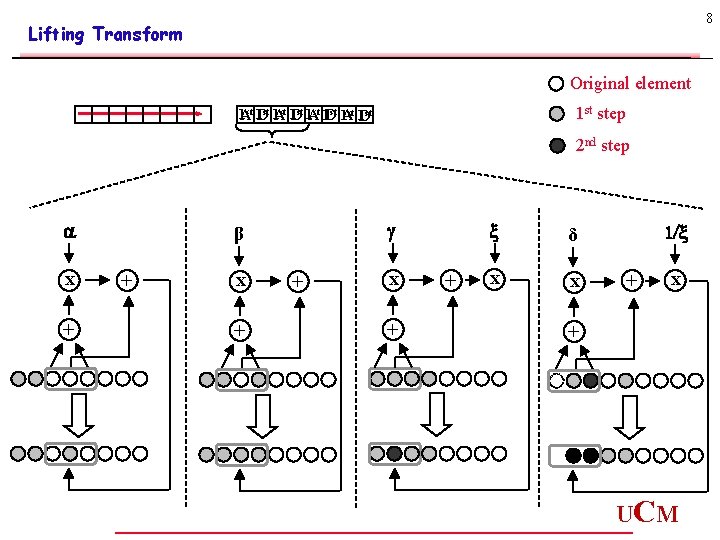

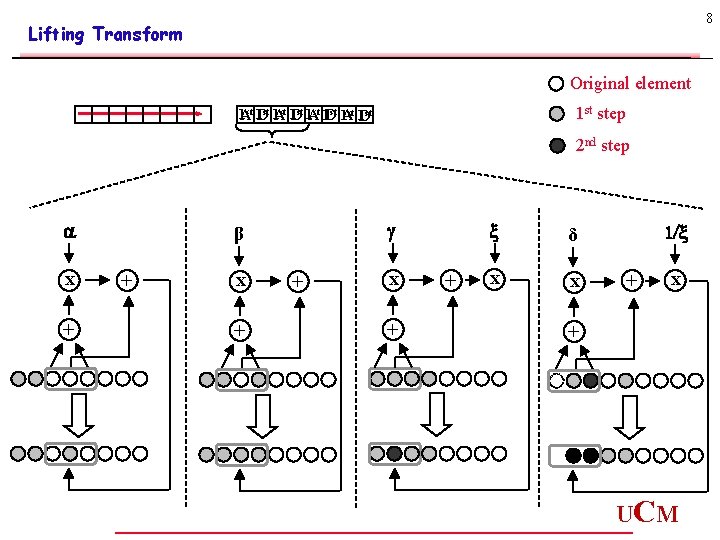

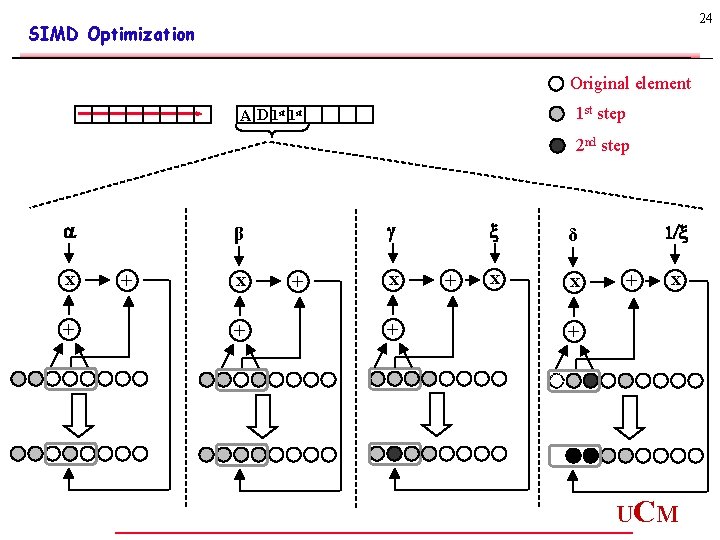

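8 Lifting Transform [Figure: lifting diagram. Labels: original element, 1st step, 2nd step, approximation (A) and detail (D) outputs; each step multiplies neighbouring elements by a coefficient (α, β, δ, ...) and adds, followed by a final scaling.]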
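The lifting steps in the diagram can be sketched in scalar C as follows. This is a minimal sketch, not the authors' code: it assumes four lifting steps plus a final scaling (matching the operations listed on slide 27), an even-length signal, and mirrored neighbours at the borders. The function names `lift_step` and `lifting_1d` are hypothetical, and the coefficient values are left as parameters because the slides do not state them.

```c
#include <assert.h>
#include <stddef.h>

/* One lifting step: every second sample, starting at `start`, is updated
 * from its two neighbours. At the borders the missing neighbour is replaced
 * by the existing one (mirroring); this boundary rule is an assumption. */
static void lift_step(float *x, size_t n, size_t start, float c)
{
    for (size_t i = start; i < n; i += 2) {
        float left  = (i > 0)     ? x[i - 1] : x[i + 1];
        float right = (i + 1 < n) ? x[i + 1] : x[i - 1];
        x[i] += c * (left + right);
    }
}

/* In-place 1D lifting transform: odd samples end up holding detail
 * coefficients and even samples hold approximation coefficients. */
void lifting_1d(float *x, size_t n,
                float alfa, float beta, float gama, float delt, float phi)
{
    assert(n >= 2 && n % 2 == 0);
    lift_step(x, n, 1, alfa);   /* 1st operation: odd samples  */
    lift_step(x, n, 0, beta);   /* 2nd operation: even samples */
    lift_step(x, n, 1, gama);   /* 3rd operation: odd samples  */
    lift_step(x, n, 0, delt);   /* 4th operation: even samples */
    for (size_t i = 0; i < n; i++)   /* last step: scaling */
        x[i] *= (i % 2 == 0) ? phi : 1.0f / phi;
}
```

Because each step only reads samples of the opposite parity, the transform needs no extra buffer, which is what makes the in-place storage scheme discussed later possible.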

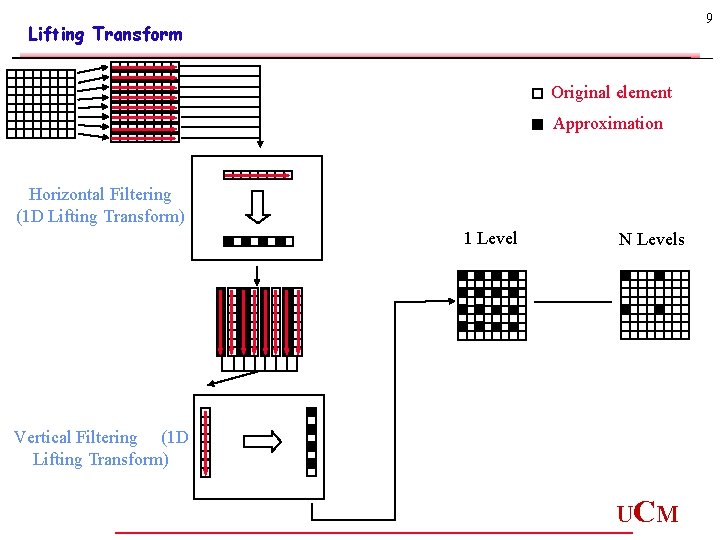

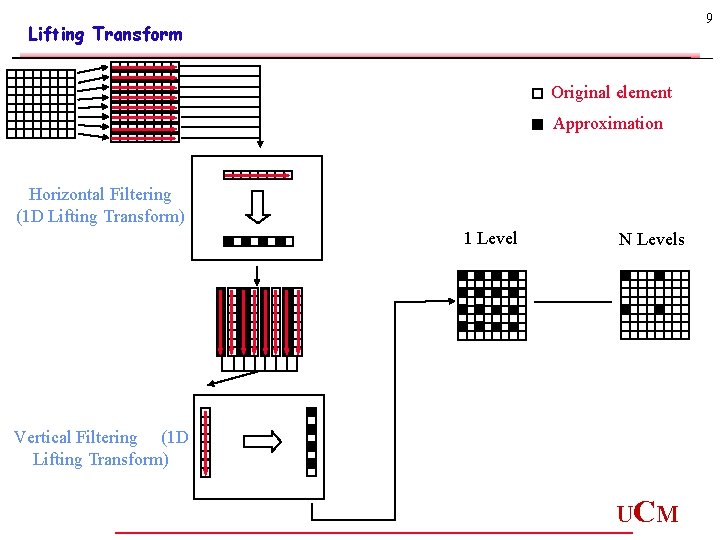

9 Lifting Transform [Figure: 2D transform. One level applies horizontal filtering (1D lifting transform) and then vertical filtering (1D lifting transform) to the original elements; for N levels the process continues on the approximation.]

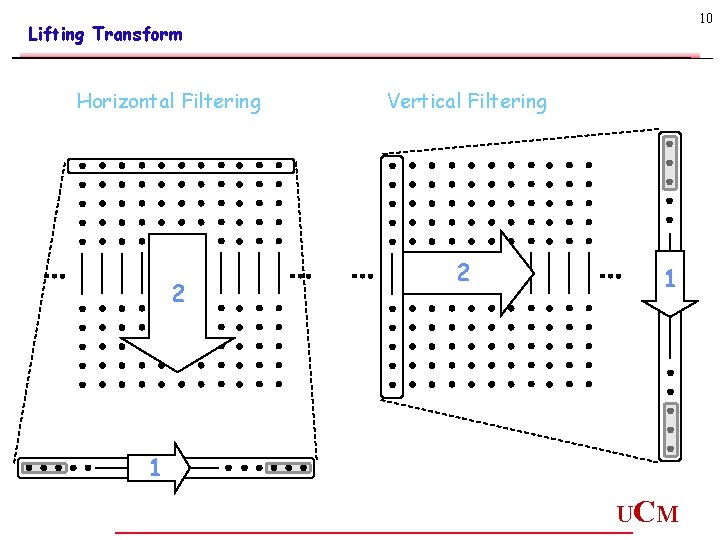

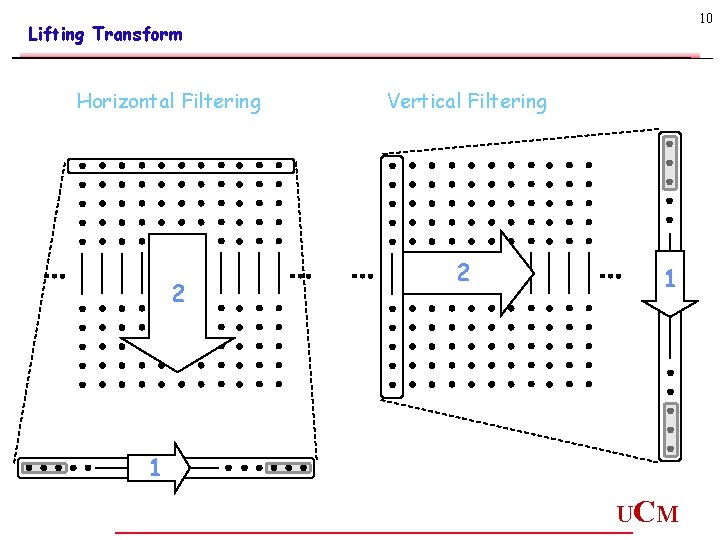

10 Lifting Transform [Figure: horizontal filtering and vertical filtering, with labels 1 and 2.]

11 Memory Hierarchy Exploitation

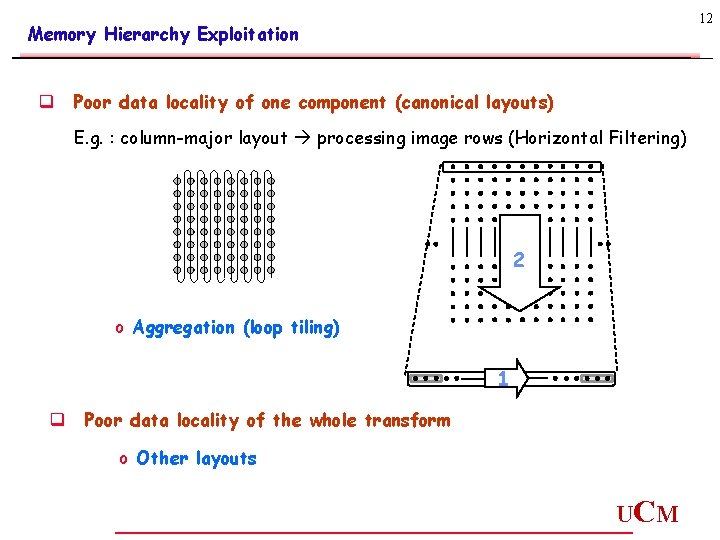

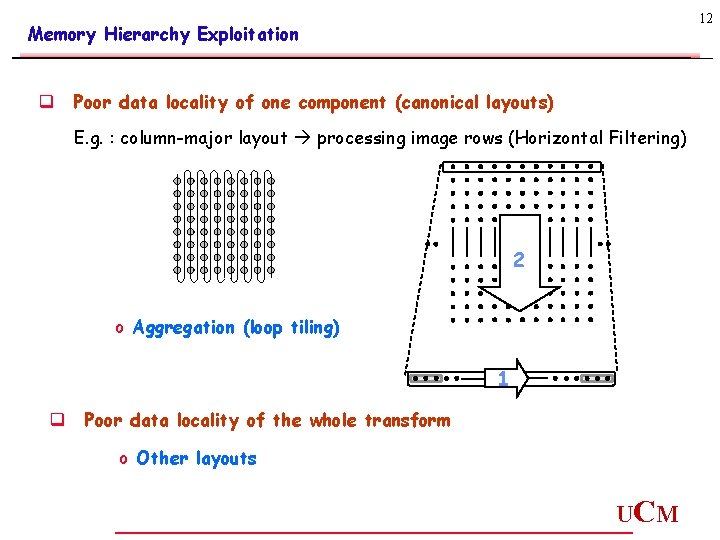

12 Memory Hierarchy Exploitation

- Poor data locality of one component (canonical layouts), e.g. a column-major layout when processing image rows (horizontal filtering)
  - Aggregation (loop tiling)
- Poor data locality of the whole transform
  - Other layouts
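The effect of aggregation on a column-major image can be sketched as follows. This is an illustrative sketch under stated assumptions, not the authors' implementation: only one lifting step of the horizontal filtering is shown, and the names `IDX`, `predict_rows_naive`, `predict_rows_aggregated` and the `block` parameter are hypothetical.

```c
#include <assert.h>
#include <stddef.h>

/* Column-major indexing: element (row i, column j) of an image with `rows` rows. */
#define IDX(i, j, rows) ((j) * (rows) + (i))

/* Row by row: consecutive accesses along a row are `rows` floats apart,
 * so each access tends to touch a different cache line. */
void predict_rows_naive(float *img, size_t rows, size_t cols, float c)
{
    for (size_t i = 0; i < rows; i++)
        for (size_t j = 1; j + 1 < cols; j += 2)
            img[IDX(i, j, rows)] +=
                c * (img[IDX(i, j - 1, rows)] + img[IDX(i, j + 1, rows)]);
}

/* Aggregated (tiled): `block` rows are filtered together, so the innermost
 * loop walks contiguous memory inside each column. */
void predict_rows_aggregated(float *img, size_t rows, size_t cols,
                             float c, size_t block)
{
    assert(block > 0);
    for (size_t i0 = 0; i0 < rows; i0 += block) {
        size_t i1 = (i0 + block < rows) ? i0 + block : rows;
        for (size_t j = 1; j + 1 < cols; j += 2)
            for (size_t i = i0; i < i1; i++)
                img[IDX(i, j, rows)] +=
                    c * (img[IDX(i, j - 1, rows)] + img[IDX(i, j + 1, rows)]);
    }
}
```

Both versions compute the same values, because each odd column is updated only from even columns that this step leaves untouched; only the order of the memory accesses changes.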

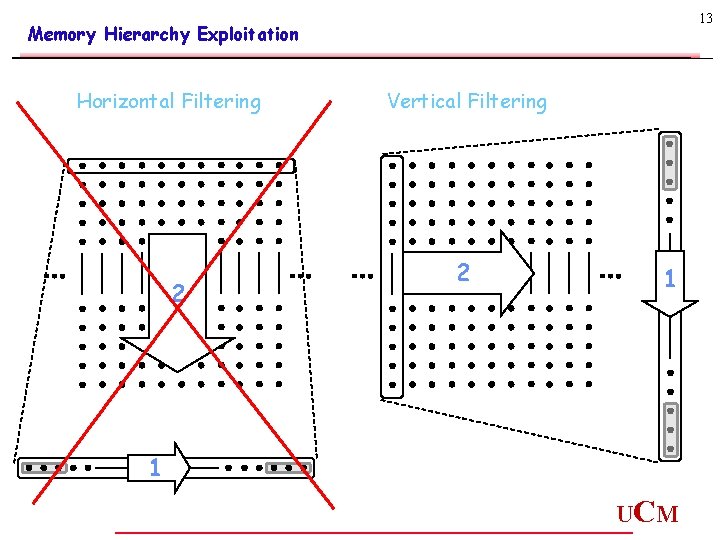

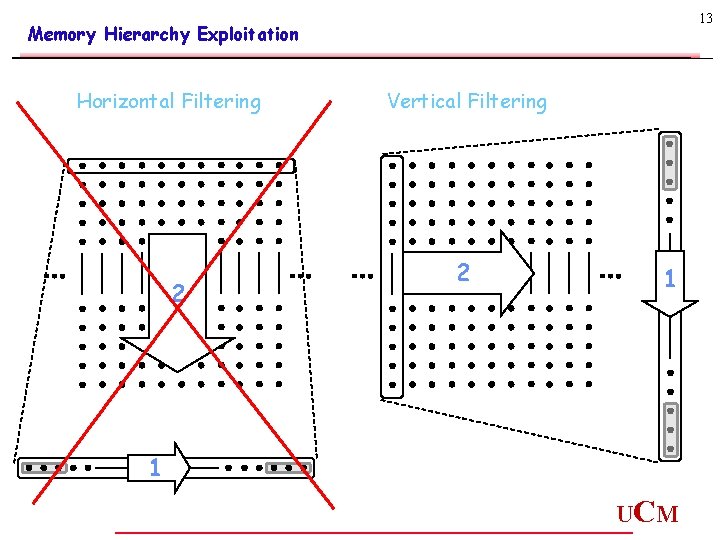

13 Memory Hierarchy Exploitation [Figure: horizontal filtering and vertical filtering, with labels 1 and 2.]

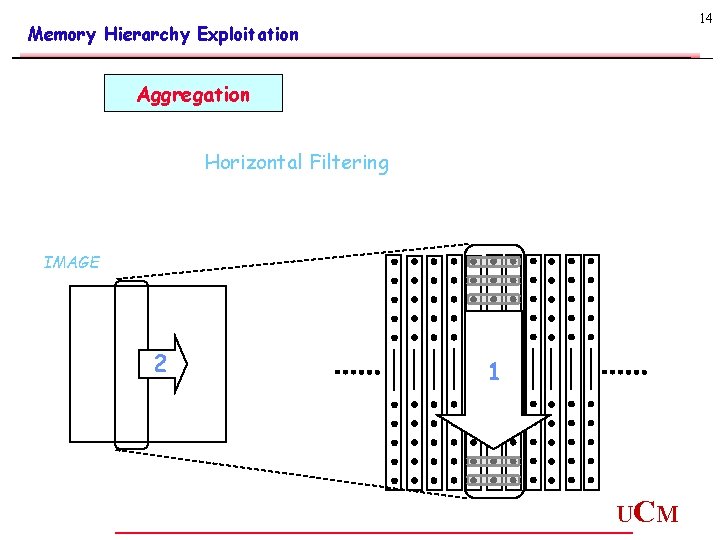

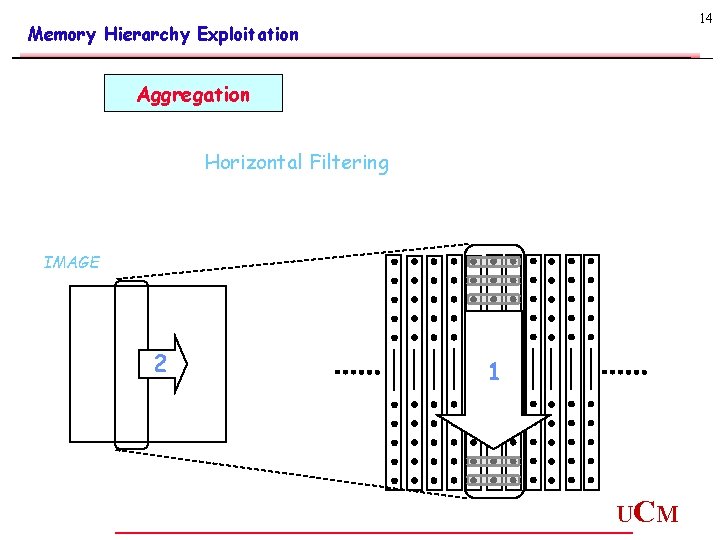

14 Memory Hierarchy Exploitation [Figure: aggregation applied to the horizontal filtering of the image, with labels 1 and 2.]

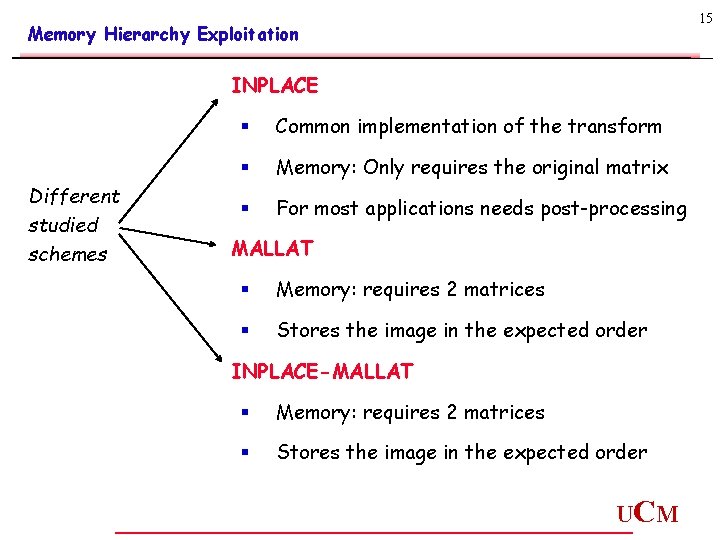

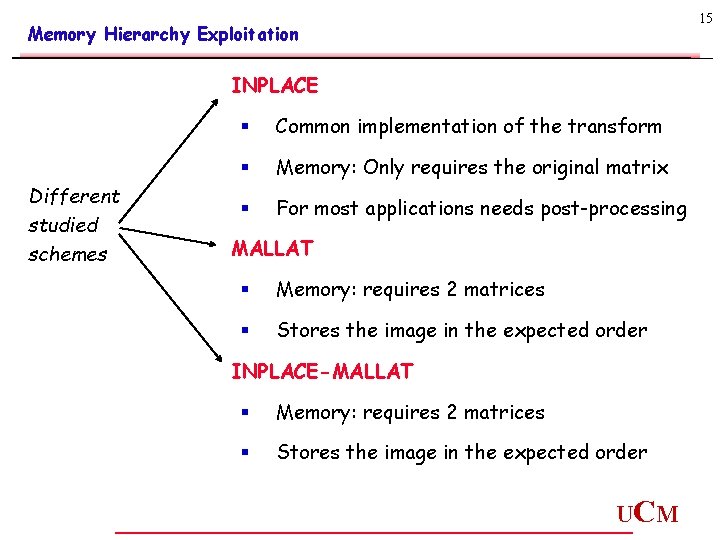

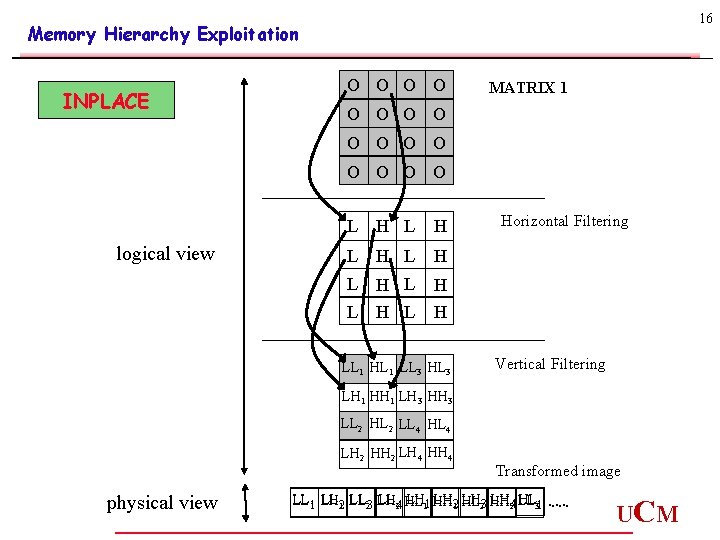

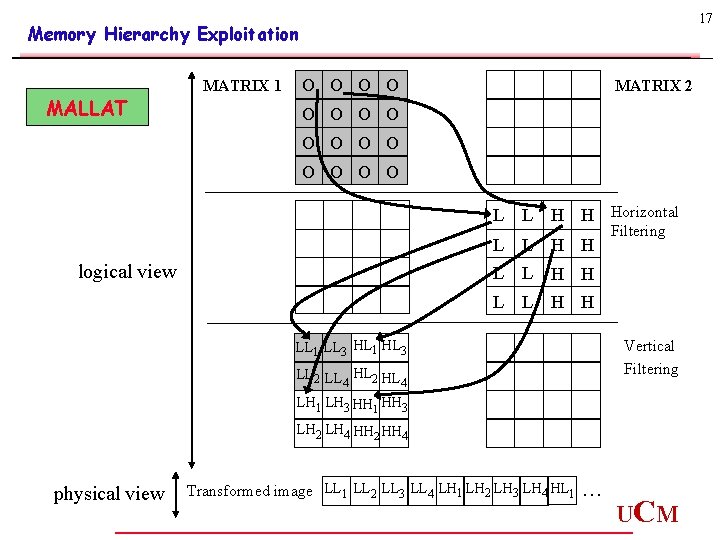

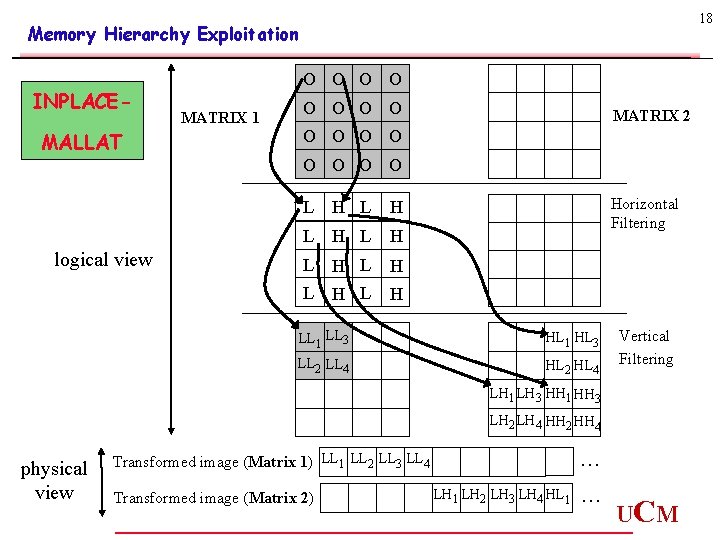

15 Memory Hierarchy Exploitation: different studied schemes

- INPLACE
  - Common implementation of the transform
  - Memory: only requires the original matrix
  - For most applications needs post-processing
- MALLAT
  - Memory: requires 2 matrices
  - Stores the image in the expected order
- INPLACE-MALLAT
  - Memory: requires 2 matrices
  - Stores the image in the expected order
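The kind of post-processing the in-place scheme may need can be illustrated in one dimension. The sketch below is an assumption about what such a reordering looks like, not the authors' routine: it takes interleaved coefficients (approximation at even positions, detail at odd positions) and writes them to a second buffer with all approximations first. The name `deinterleave` is hypothetical.

```c
#include <assert.h>
#include <stddef.h>

/* Reorder interleaved coefficients (A D A D ...) into subband order
 * (A A ... D D ...). `in` and `out` must be different buffers. */
void deinterleave(const float *in, float *out, size_t n)
{
    assert(in != out);
    size_t half = (n + 1) / 2;   /* number of even-indexed samples */
    for (size_t i = 0; i < n; i++) {
        size_t dst = (i % 2 == 0) ? i / 2 : half + i / 2;
        out[dst] = in[i];
    }
}
```

The Mallat and Inplace-Mallat schemes avoid this separate pass by writing coefficients to their final position during filtering, at the cost of a second matrix.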

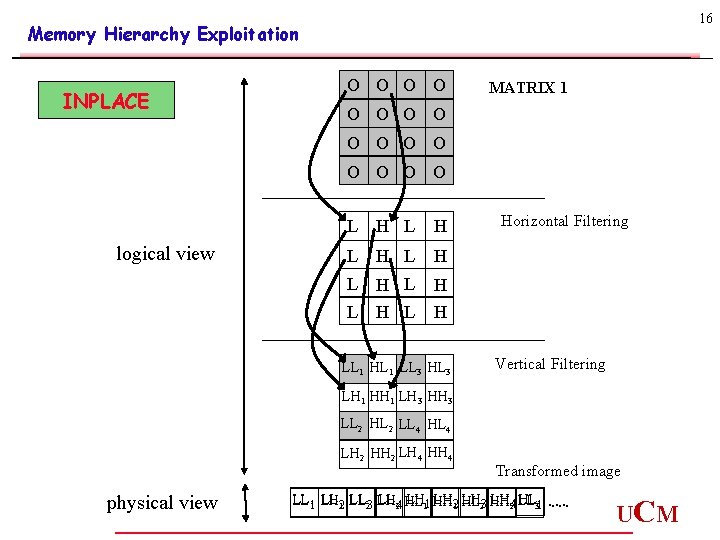

16 Memory Hierarchy Exploitation: INPLACE [Figure: logical and physical views of Matrix 1 after horizontal filtering (L, H) and vertical filtering (LL, HL, LH, HH). In the physical view of the transformed image the coefficients of the four subbands remain interleaved.]

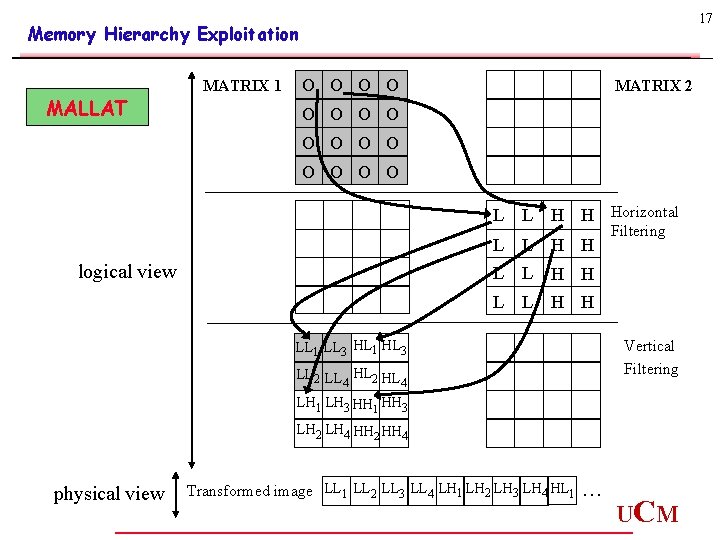

17 Memory Hierarchy Exploitation: MALLAT [Figure: logical and physical views of Matrix 1 and Matrix 2 after horizontal filtering (L, H) and vertical filtering (LL, HL, LH, HH). Physical view of the transformed image: LL1 LL2 LL3 LL4 LH1 LH2 LH3 LH4 HL1 ...]

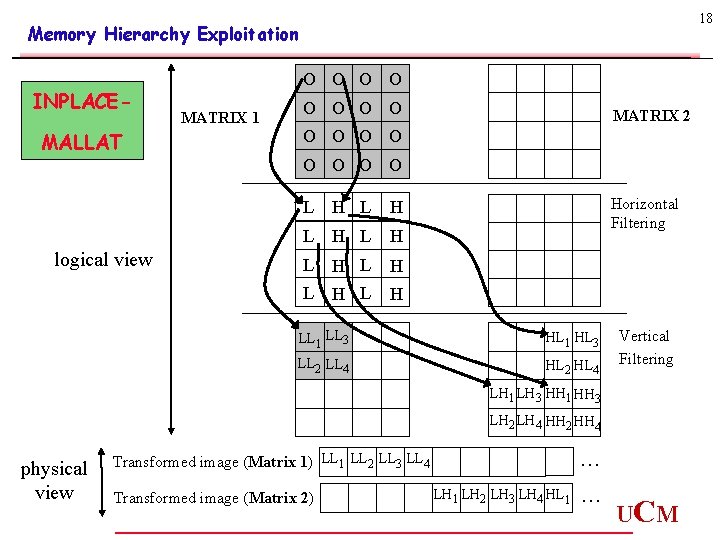

18 Memory Hierarchy Exploitation: INPLACE-MALLAT [Figure: logical and physical views of Matrix 1 and Matrix 2 after horizontal filtering (L, H) and vertical filtering (LL, HL, LH, HH). Physical view of the transformed image: Matrix 1 holds LL1 LL2 LL3 LL4; Matrix 2 holds LH1 LH2 LH3 LH4 HL1 ...]

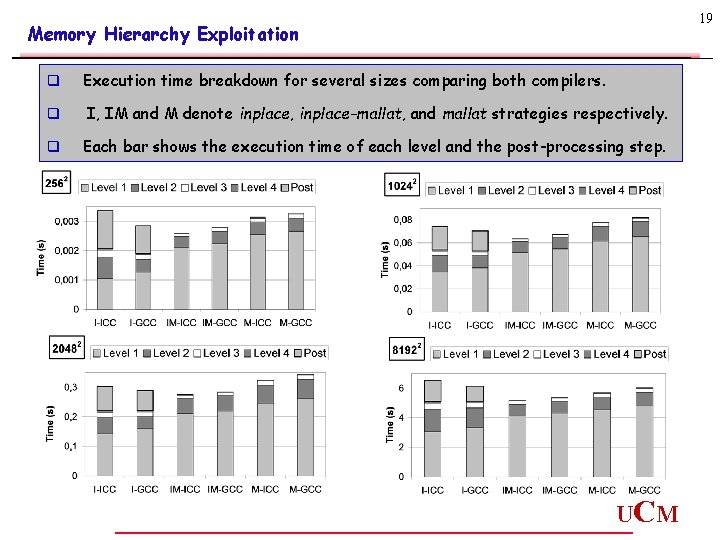

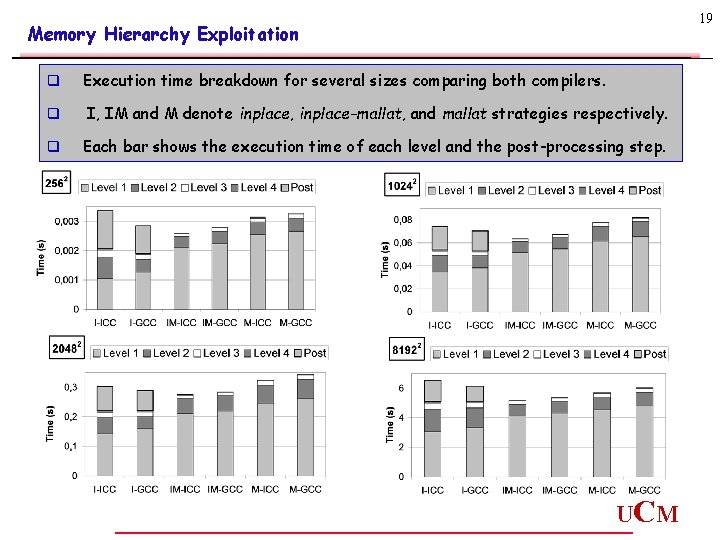

19 Memory Hierarchy Exploitation

- Execution time breakdown for several sizes, comparing both compilers.
- I, IM and M denote the inplace, inplace-mallat and mallat strategies, respectively.
- Each bar shows the execution time of each level and of the post-processing step.

20 Memory Hierarchy Exploitation: conclusions

- The Mallat and Inplace-Mallat approaches outperform the Inplace approach for levels 2 and above.
- These two approaches show a noticeable slowdown for the 1st level:
  - Larger working set
  - More complex access pattern
- The Inplace-Mallat version achieves the best execution time.
- The ICC compiler outperforms GCC for Mallat and Inplace-Mallat, but not for the Inplace approach.

21 SIMD Optimization

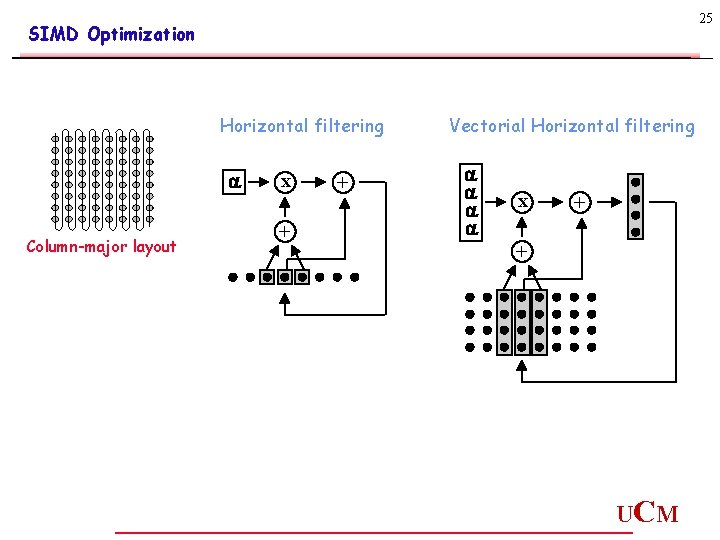

22 SIMD Optimization

- Objective: extract the parallelism available in the Lifting Transform
- Different strategies:
  - Semi-automatic vectorization
  - Hand-coded vectorization
- Only the horizontal filtering of the transform can be semi-automatically vectorized (when using a column-major layout)

23 SIMD Optimization: automatic vectorization (Intel C/C++ Compiler)

- Inner loops
- Simple array index manipulation
- Iterate over contiguous memory locations
- Global variables avoided
- Pointer disambiguation if pointers are employed
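A loop that meets the conditions above might look like the following sketch. It is an illustration only: the function name `predict_column` and its parameters are hypothetical, and C99 `restrict` is used here as one standard way to disambiguate pointers. Whether a given compiler actually vectorizes it depends on its version and flags.

```c
#include <assert.h>
#include <stddef.h>

/* One lifting step on a single column of a column-major image.
 * - innermost loop with a simple index (i)
 * - unit-stride accesses to contiguous memory
 * - no global variables
 * - `restrict` tells the compiler the three columns do not overlap */
void predict_column(float *restrict odd,
                    const float *restrict left,
                    const float *restrict right,
                    size_t rows, float alfa)
{
    assert(odd != NULL && left != NULL && right != NULL);
    for (size_t i = 0; i < rows; i++)
        odd[i] += alfa * (left[i] + right[i]);
}
```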

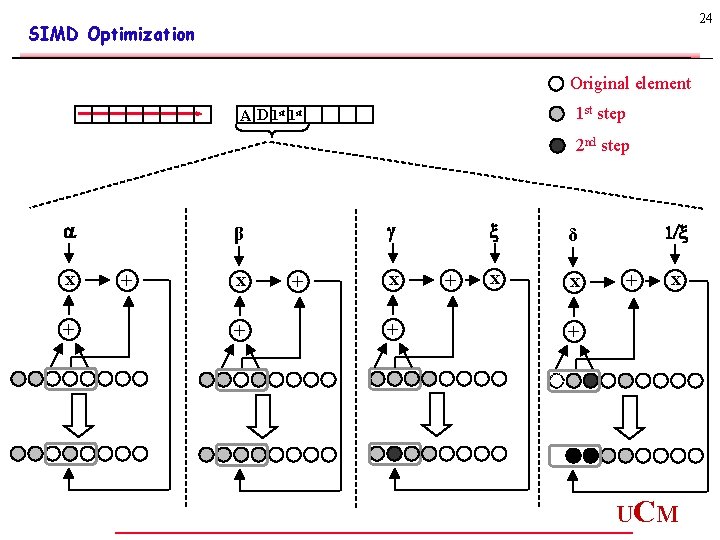

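24 SIMD Optimization [Figure: lifting diagram. Labels: original element, 1st step, 2nd step, approximation (A) and detail (D) outputs; each step multiplies neighbouring elements by a coefficient (α, β, δ, ...) and adds, followed by a final scaling.]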

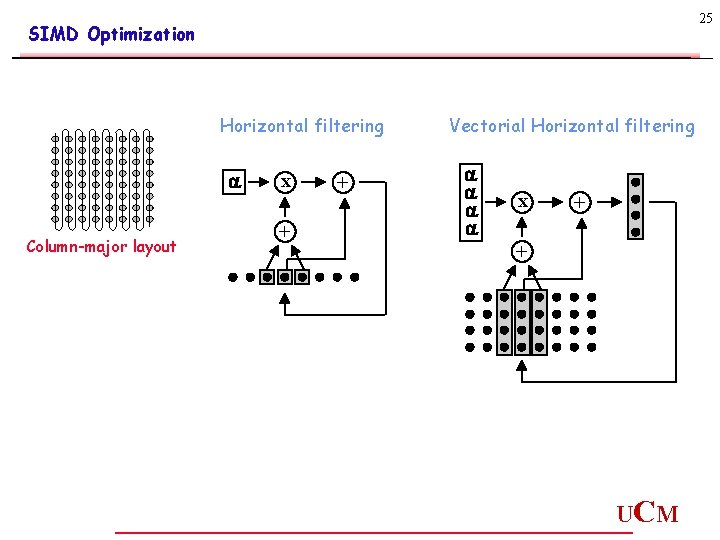

25 SIMD Optimization [Figure: horizontal filtering on a column-major layout, scalar versus vectorial; each step multiplies by a coefficient (α) and adds.]

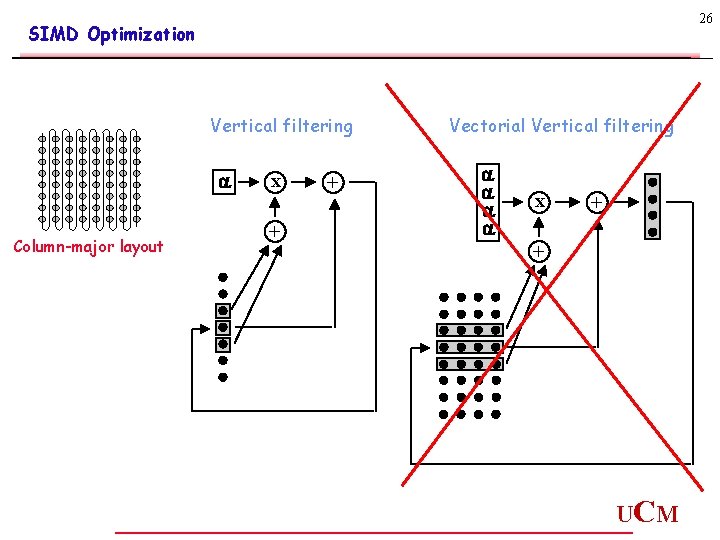

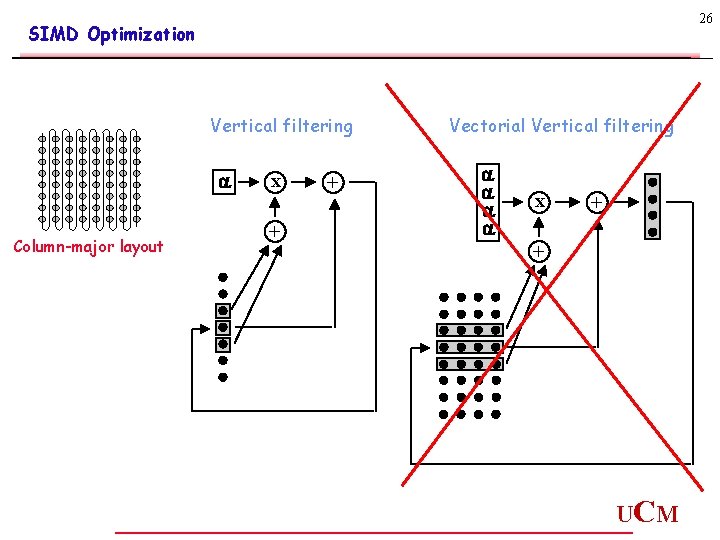

26 SIMD Optimization [Figure: vertical filtering on a column-major layout, scalar versus vectorial; each step multiplies by a coefficient (α) and adds.]

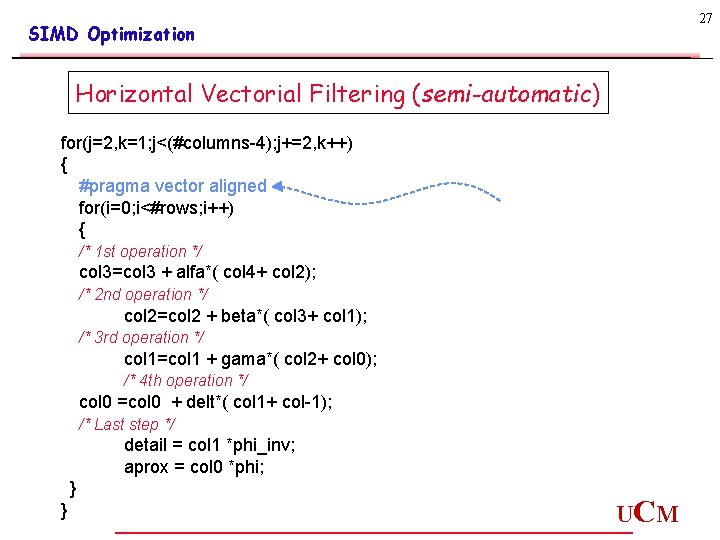

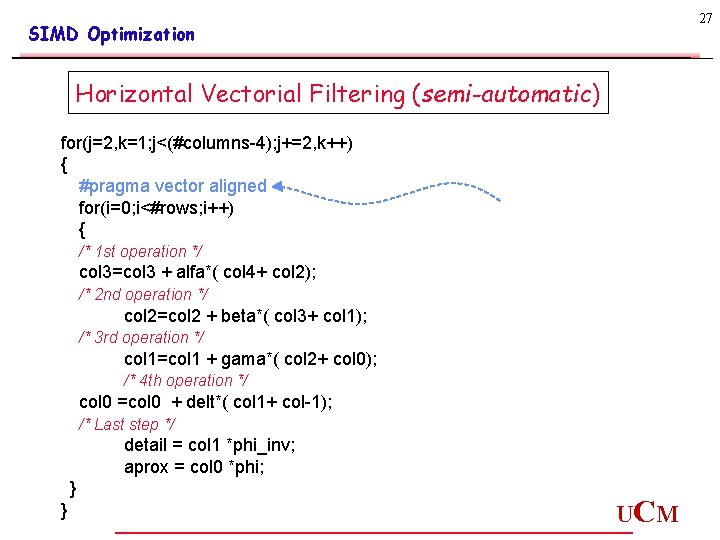

27 SIMD Optimization: horizontal vectorial filtering (semi-automatic)

    for (j = 2, k = 1; j < (#columns - 4); j += 2, k++) {
        #pragma vector aligned
        for (i = 0; i < #rows; i++) {
            /* 1st operation */
            col3 = col3 + alfa * (col4 + col2);
            /* 2nd operation */
            col2 = col2 + beta * (col3 + col1);
            /* 3rd operation */
            col1 = col1 + gama * (col2 + col0);
            /* 4th operation */
            col0 = col0 + delt * (col1 + col-1);
            /* Last step */
            detail = col1 * phi_inv;
            aprox = col0 * phi;
        }
    }
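The inner loop of the slide's pseudocode can be written as compilable C along the following lines. This is a sketch under assumptions: each `col` name is taken to be a pointer to one column of a column-major image, the function name `lift_stage` and its parameter list are hypothetical, and the ICC-specific `#pragma vector aligned` is omitted because it asserts an alignment that this sketch does not guarantee.

```c
#include <assert.h>
#include <stddef.h>

/* One pipelined stage over six adjacent, non-overlapping columns.
 * colm1 stands for the slide's "col-1". The caller is assumed to advance
 * the column pointers by two columns between successive stages. */
void lift_stage(size_t rows,
                const float *restrict colm1, float *restrict col0,
                float *restrict col1, float *restrict col2,
                float *restrict col3, const float *restrict col4,
                float *restrict detail, float *restrict aprox,
                float alfa, float beta, float gama, float delt, float phi)
{
    assert(phi != 0.0f);
    const float phi_inv = 1.0f / phi;
    for (size_t i = 0; i < rows; i++) {
        col3[i] += alfa * (col4[i] + col2[i]);   /* 1st operation */
        col2[i] += beta * (col3[i] + col1[i]);   /* 2nd operation */
        col1[i] += gama * (col2[i] + col0[i]);   /* 3rd operation */
        col0[i] += delt * (col1[i] + colm1[i]);  /* 4th operation */
        detail[i] = col1[i] * phi_inv;           /* last step */
        aprox[i]  = col0[i] * phi;
    }
}
```

Every access in the loop body is unit-stride in `i`, which is the property that lets a vectorizing compiler process several rows per instruction.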

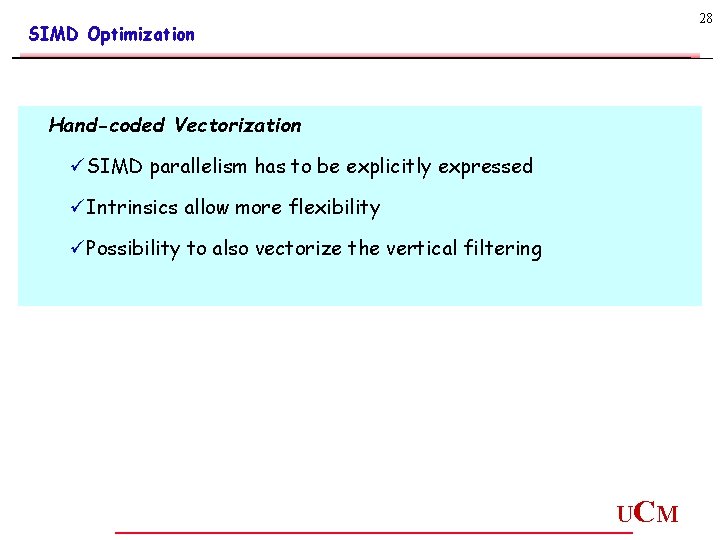

28 SIMD Optimization: hand-coded vectorization

- SIMD parallelism has to be explicitly expressed
- Intrinsics allow more flexibility
- Possibility to also vectorize the vertical filtering

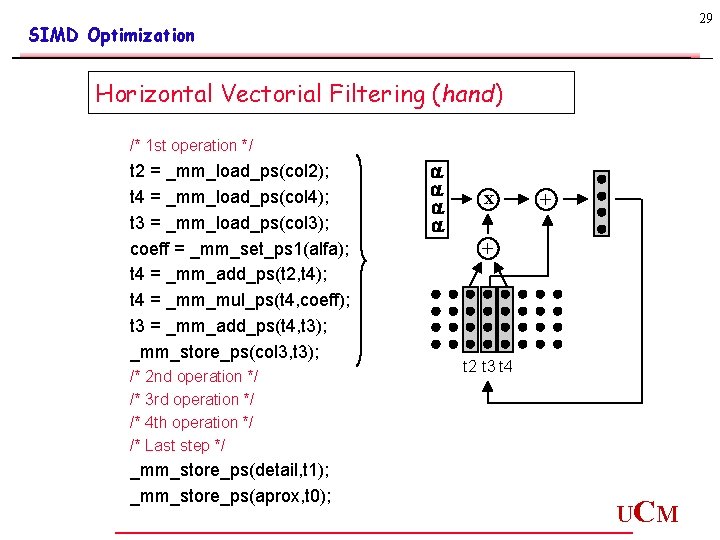

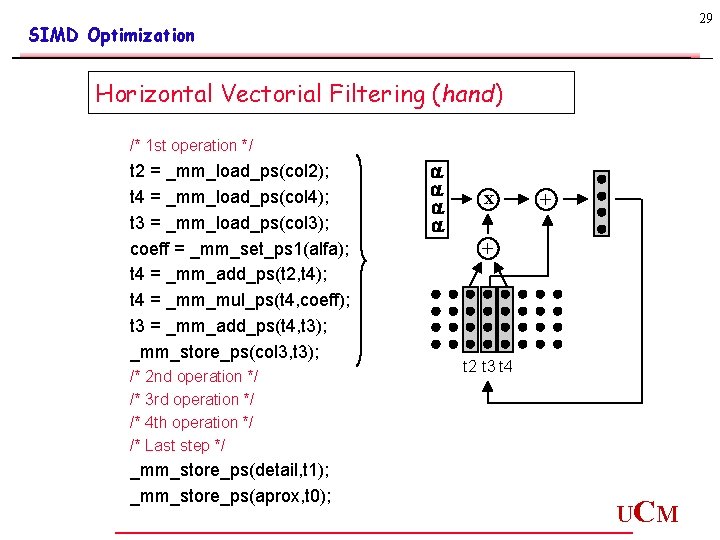

29 SIMD Optimization: horizontal vectorial filtering (hand-coded)

    /* 1st operation */
    t2 = _mm_load_ps(col2);
    t4 = _mm_load_ps(col4);
    t3 = _mm_load_ps(col3);
    coeff = _mm_set_ps1(alfa);
    t4 = _mm_add_ps(t2, t4);
    t4 = _mm_mul_ps(t4, coeff);
    t3 = _mm_add_ps(t4, t3);
    _mm_store_ps(col3, t3);
    /* 2nd operation */
    /* 3rd operation */
    /* 4th operation */
    /* Last step */
    _mm_store_ps(detail, t1);
    _mm_store_ps(aprox, t0);

[Figure: vector registers t2, t3 and t4 in the first operation.]
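The first operation of the slide can be wrapped in a loop to give a compilable sketch. It assumes an x86 target with SSE, column pointers aligned to 16 bytes, and a row count that is a multiple of 4; the function name `first_operation_sse` is hypothetical. Only the first operation is shown, since the slide elides the bodies of the others.

```c
#include <assert.h>
#include <stddef.h>
#include <xmmintrin.h>

/* col3[i] += alfa * (col2[i] + col4[i]), four floats per iteration.
 * All three pointers must be 16-byte aligned because _mm_load_ps and
 * _mm_store_ps are the aligned variants. */
void first_operation_sse(float *col3, const float *col2, const float *col4,
                         size_t rows, float alfa)
{
    assert(rows % 4 == 0);
    const __m128 coeff = _mm_set_ps1(alfa);   /* broadcast alfa to 4 lanes */
    for (size_t i = 0; i < rows; i += 4) {
        __m128 t2 = _mm_load_ps(col2 + i);
        __m128 t4 = _mm_load_ps(col4 + i);
        __m128 t3 = _mm_load_ps(col3 + i);
        t4 = _mm_add_ps(t2, t4);              /* col2 + col4          */
        t4 = _mm_mul_ps(t4, coeff);           /* alfa * (col2 + col4) */
        t3 = _mm_add_ps(t4, t3);              /* add to col3          */
        _mm_store_ps(col3 + i, t3);
    }
}
```

In a full implementation the intermediate registers would be kept live across the remaining operations rather than stored after each one, which is one plausible source of the reduced memory traffic the slides report.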

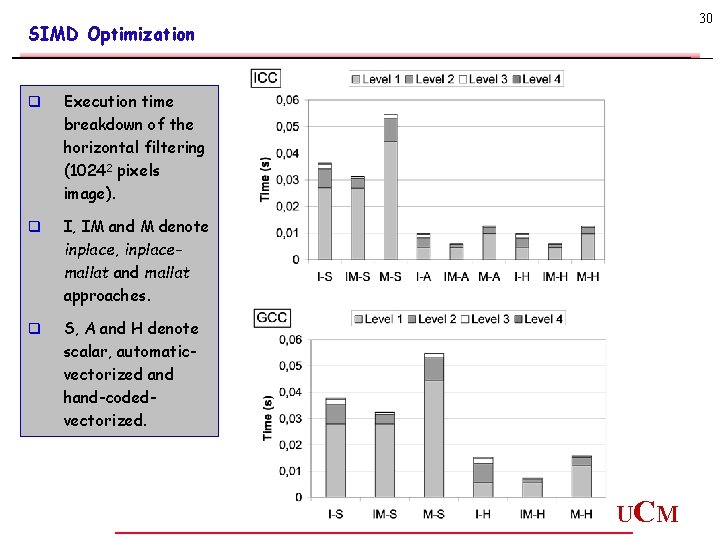

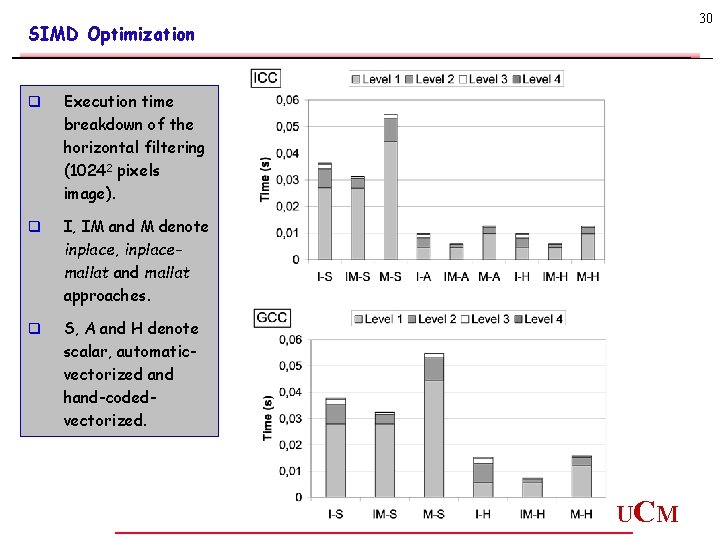

30 SIMD Optimization

- Execution time breakdown of the horizontal filtering (1024² pixel image).
- I, IM and M denote the inplace, inplace-mallat and mallat approaches.
- S, A and H denote scalar, automatic-vectorized and hand-coded-vectorized.

31 SIMD Optimization: conclusions

- Speedup between 4 and 6, depending on the strategy. Such a high improvement is due not only to the vectorial computations but also to a considerable reduction in memory accesses.
- The speedups achieved by the strategies with recursive layouts (i.e. inplace-mallat and mallat) are higher than those of the inplace version, since the computation in the latter can only be vectorized in the first level.
- For ICC, both vectorization approaches (i.e. automatic and hand-tuned) produce similar speedups, which highlights the quality of the ICC vectorizer.

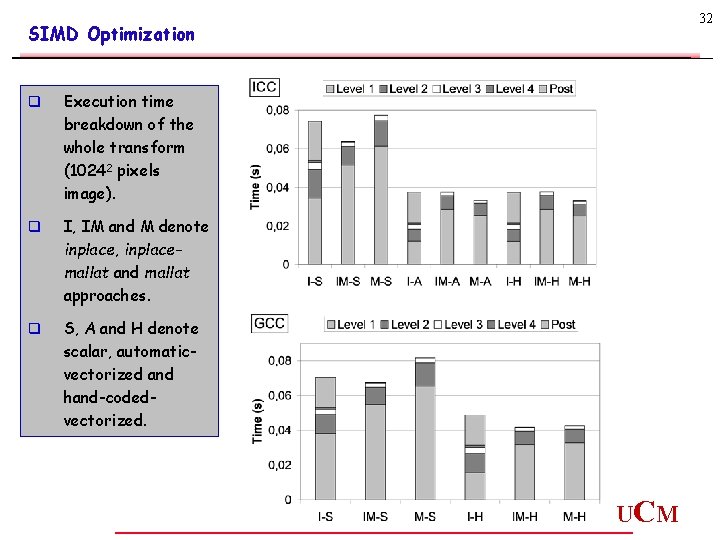

32 SIMD Optimization

- Execution time breakdown of the whole transform (1024² pixel image).
- I, IM and M denote the inplace, inplace-mallat and mallat approaches.
- S, A and H denote scalar, automatic-vectorized and hand-coded-vectorized.

33 SIMD Optimization: conclusions

- Speedup between 1.5 and 2, depending on the strategy.
- For ICC, the shortest execution time is reached by the mallat version.
- When using GCC, both recursive-layout strategies obtain similar results.

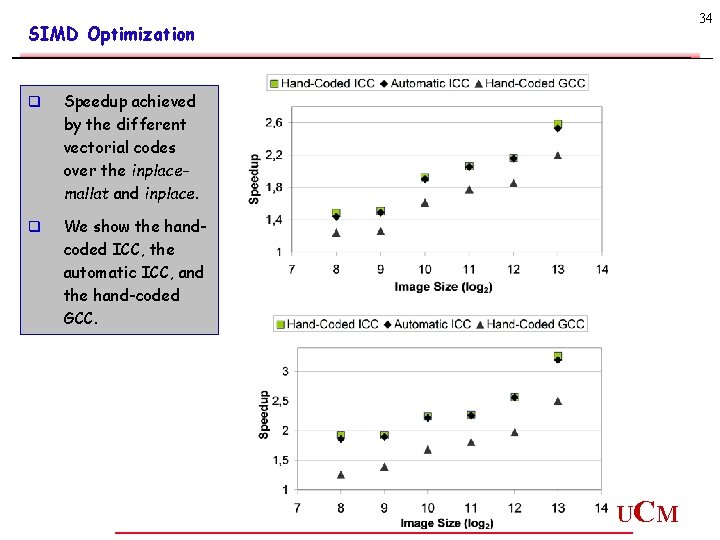

34 SIMD Optimization

- Speedup achieved by the different vectorial codes over the inplace-mallat and inplace versions.
- We show the hand-coded ICC, the automatic ICC and the hand-coded GCC versions.

35 SIMD Optimization: conclusions

- The speedup grows with the image size.
- On average, the speedup is about 1.8 over the inplace-mallat scheme, growing to about 2 when measured over the inplace strategy.
- Focusing on the compilers, ICC clearly outperforms GCC by a significant 20-25% for all the image sizes.

36 Conclusions

37 Conclusions

- Scalar version: we have introduced a new scheme called Inplace-Mallat that outperforms both the Inplace implementation and the Mallat scheme.
- SIMD exploitation: code modifications for the vectorial processing of the lifting algorithm. Two different methodologies with the ICC compiler: semi-automatic and intrinsic-based vectorization. Both provide similar results.
- Speedup: about 4-6 for the horizontal filtering (vectorization also reduces the pressure on the memory system); around 2 for the whole transform.
- The vectorial Mallat approach outperforms the other schemes and exhibits better scalability.
- Most of our insights are compiler independent.

38 Future work

39 Future work

- 4D layout for a lifting-based scheme
- Measurements using other platforms:
  - Intel Itanium
  - Intel Pentium 4 with hyperthreading
- Parallelization using OpenMP (SMT)

For additional information: http://www.dacya.ucm.es/dchaver