Vector Representations of Entity Sets in Web Tables

Ezra Winston, Igor Gitman, CMU 10-805/10-605

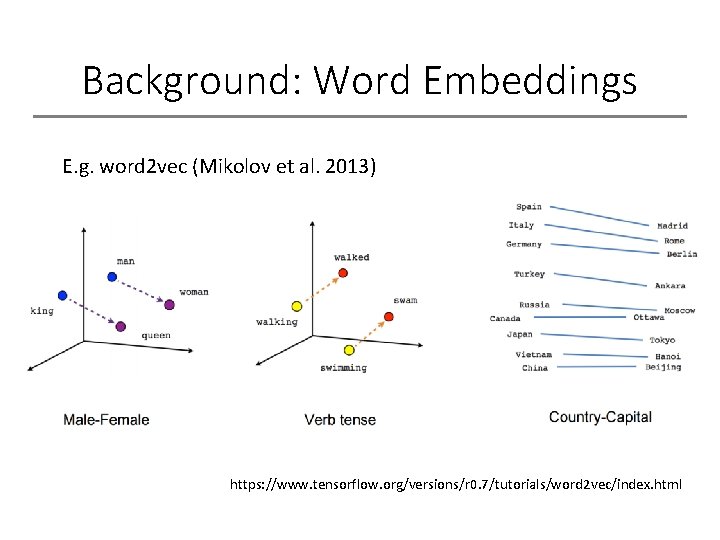

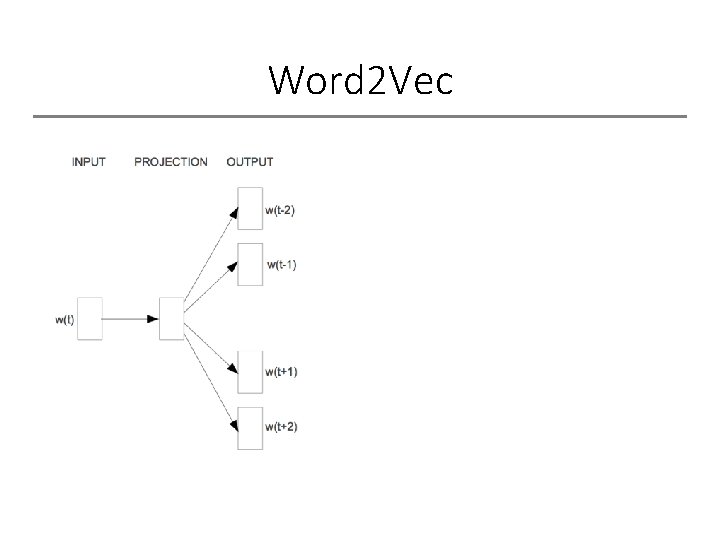

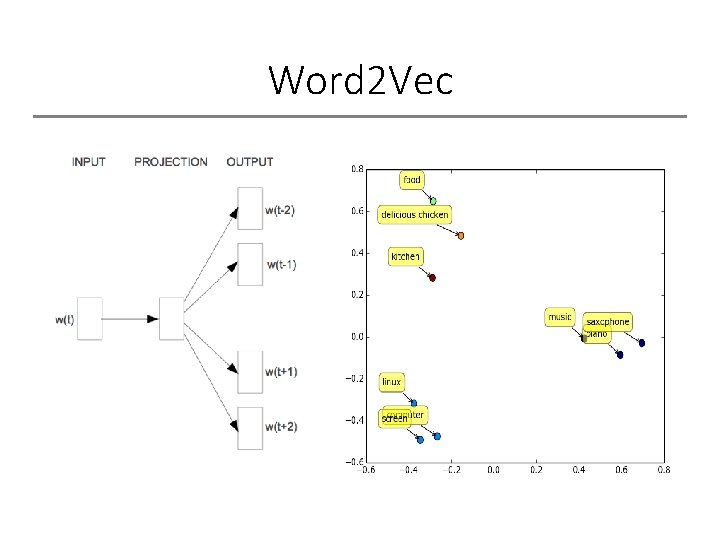

Background: Word Embeddings, e.g. word2vec (Mikolov et al. 2013) https://www.tensorflow.org/versions/r0.7/tutorials/word2vec/index.html

![Entity-Set Embeddings. Objective: generalize word embeddings to sets of related entities, e.g. [Orange, Banana, Apple]](http://slidetodoc.com/presentation_image_h2/a7dc2c2618c61ba765661c83feb4df3d/image-3.jpg)

Entity-Set Embeddings. Objective: generalize word embeddings to sets of related entities, e.g. [Orange, Banana, Apple] or [George H. W. Bush, Ronald Reagan, Jimmy Carter].

Applications. Many applications for Information Extraction:
• Schema integration / table expansion
• Set expansion
• Knowledge base construction / expansion

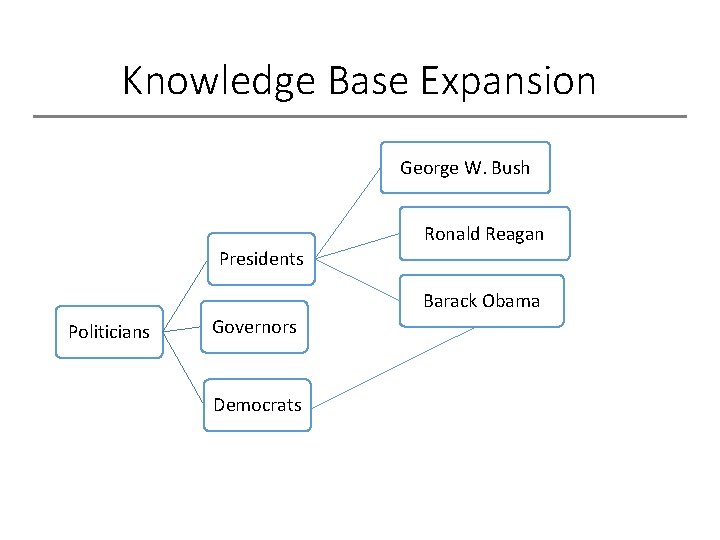

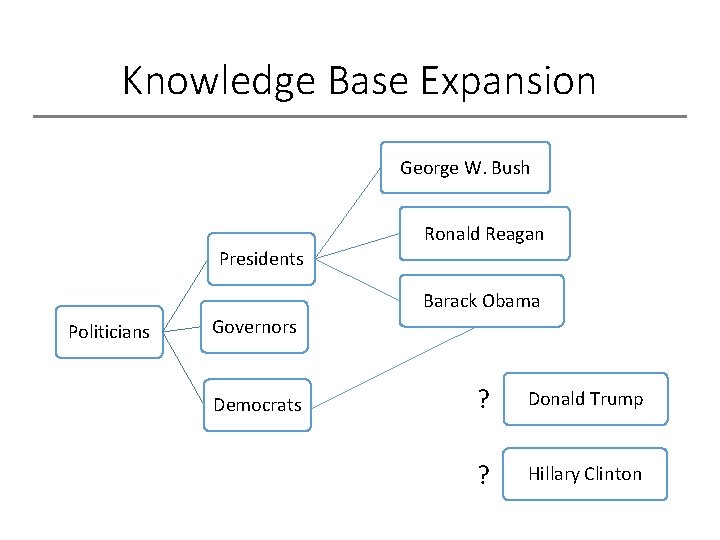

Knowledge Base Expansion. Existing entities: George W. Bush, Ronald Reagan, Barack Obama. Classes: Presidents, Politicians, Governors, Democrats. New entities to place: Donald Trump? Hillary Clinton?

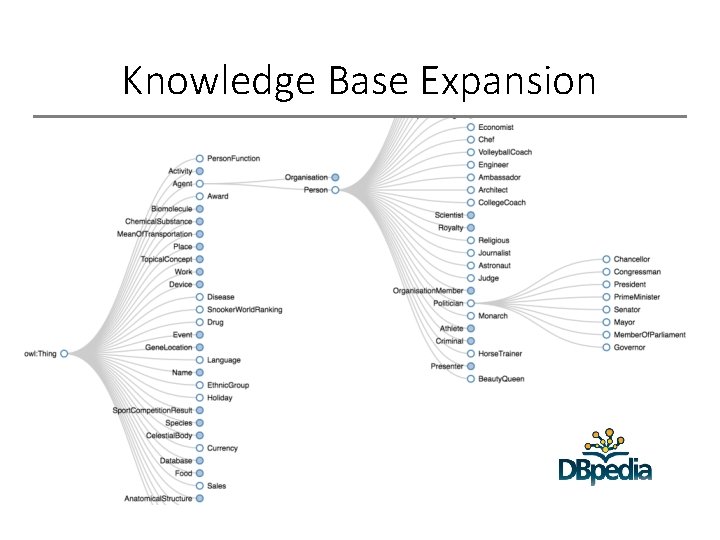

Knowledge Base Expansion

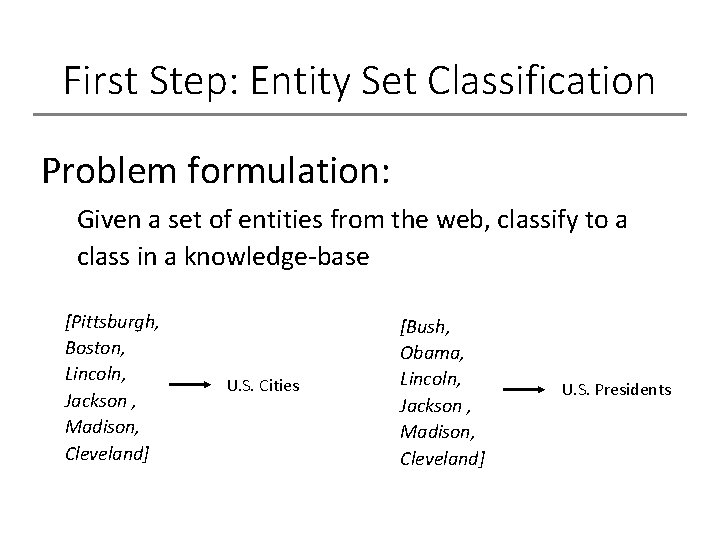

First Step: Entity Set Classification. Problem formulation: given a set of entities from the web, classify it into a class in a knowledge base.
• [Pittsburgh, Boston, Lincoln, Jackson, Madison, Cleveland]: U.S. Cities
• [Bush, Obama, Lincoln, Jackson, Madison, Cleveland]: U.S. Presidents

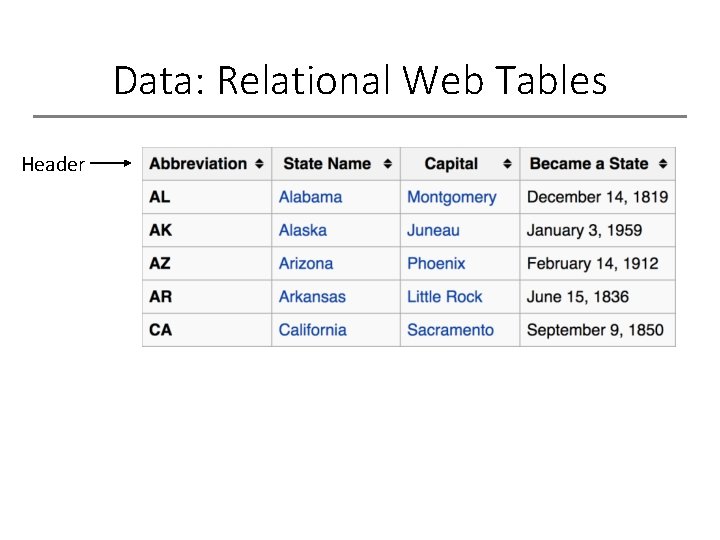

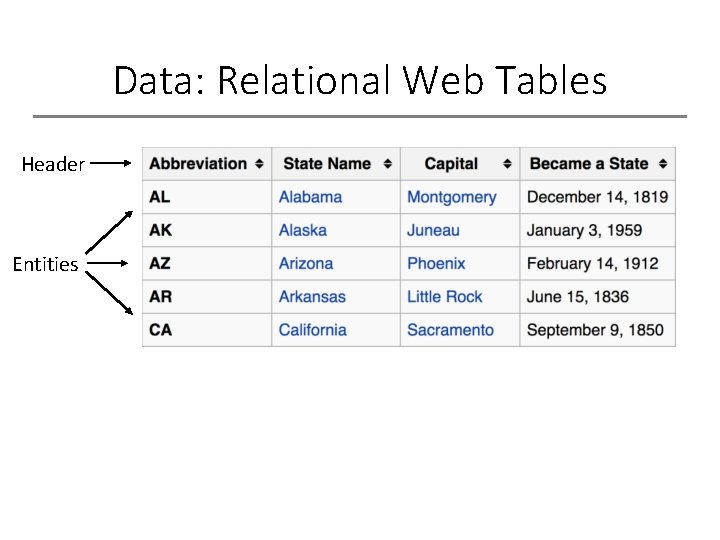

Data: Relational Web Tables. Labeled parts: header, entities.

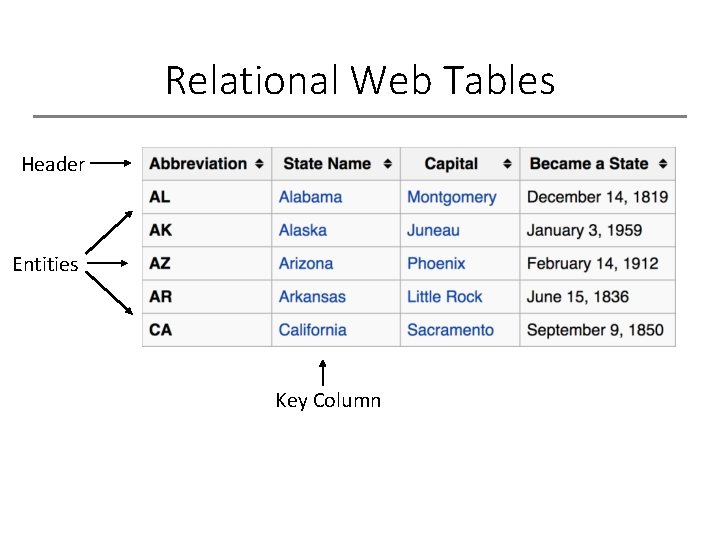

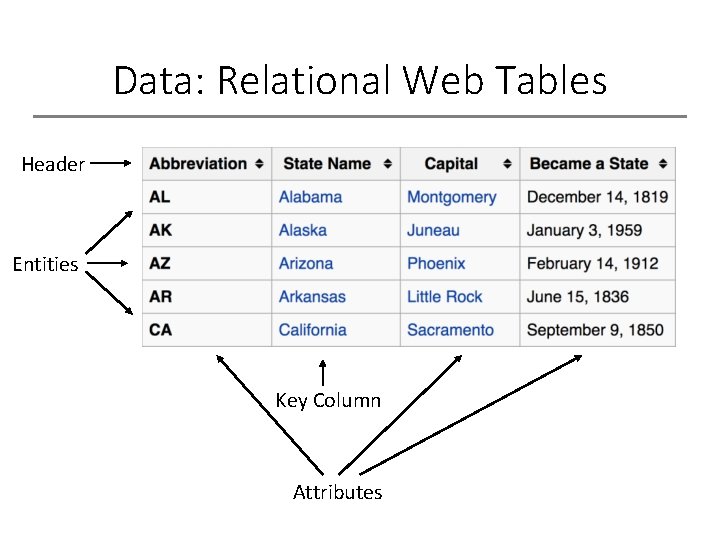

Data: Relational Web Tables. Labeled parts: header, entities, key column, attributes.

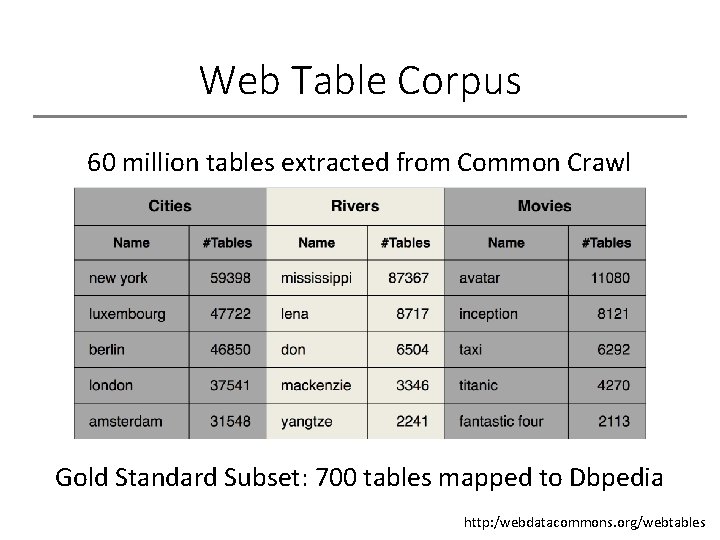

Web Table Corpus: 60 million tables extracted from Common Crawl. Gold Standard subset: 700 tables mapped to DBpedia. http://webdatacommons.org/webtables

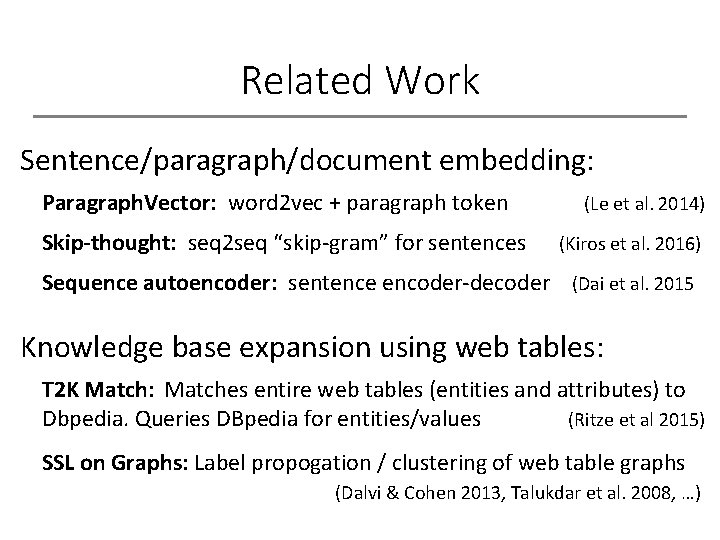

Related Work

Sentence/paragraph/document embedding:
• Paragraph Vector: word2vec + paragraph token (Le et al. 2014)
• Skip-thought: seq2seq “skip-gram” for sentences (Kiros et al. 2016)
• Sequence autoencoder: sentence encoder-decoder (Dai et al. 2015)

Knowledge base expansion using web tables:
• T2K Match: matches entire web tables (entities and attributes) to DBpedia; queries DBpedia for entities/values (Ritze et al. 2015)
• SSL on graphs: label propagation / clustering of web table graphs (Dalvi & Cohen 2013, Talukdar et al. 2008, …)

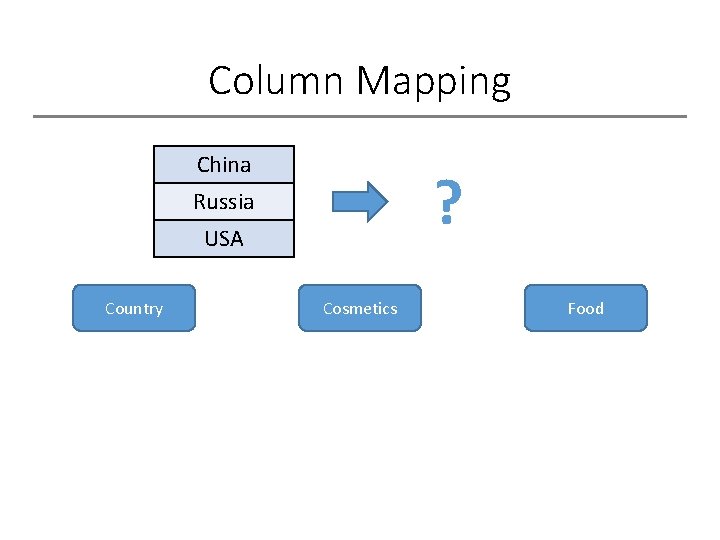

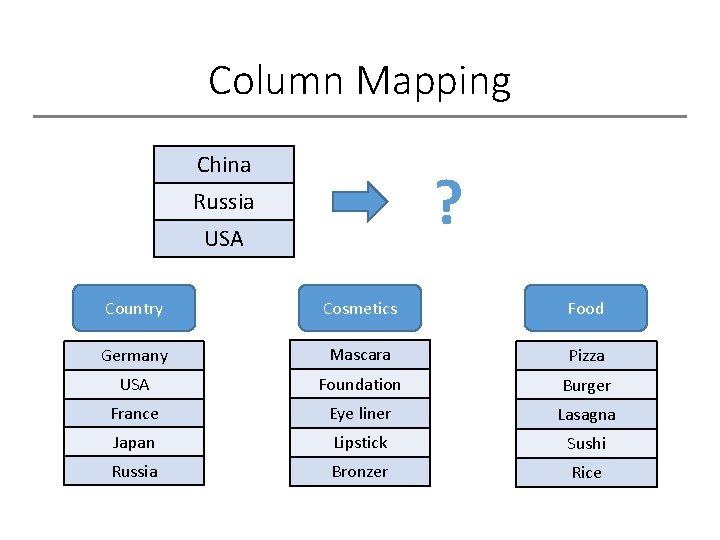

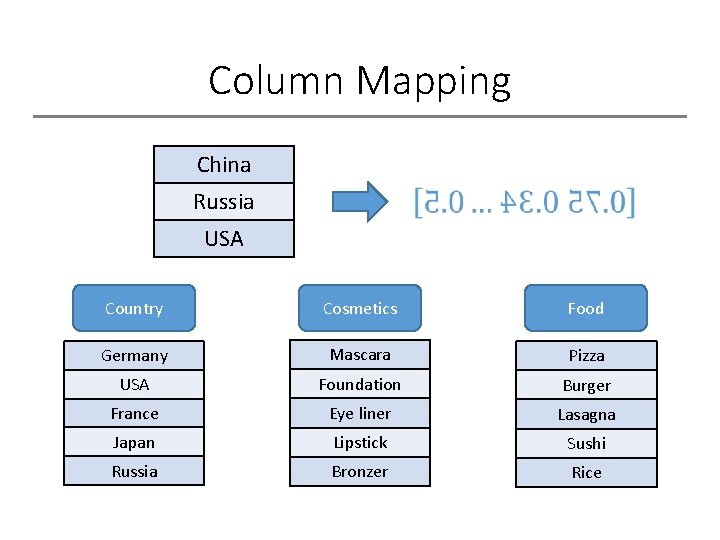

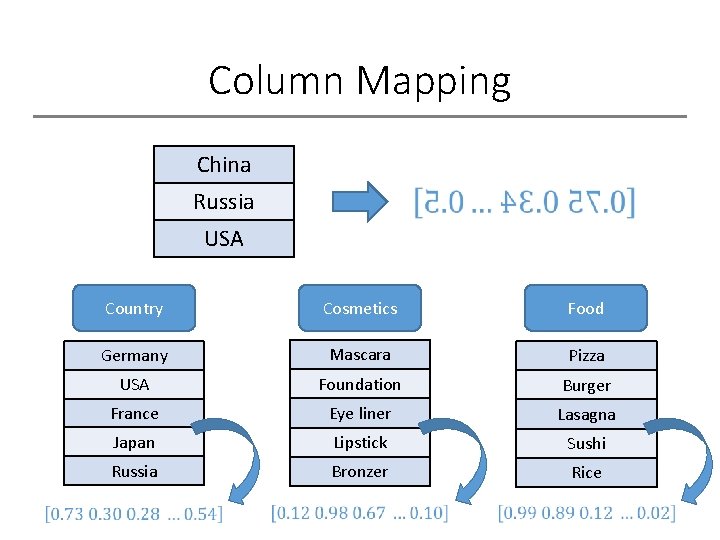

Column Mapping

Query column: [China, Russia, USA]. Which reference column does it map to?

Reference columns:

| Country | Cosmetics | Food |
| --- | --- | --- |
| Germany | Mascara | Pizza |
| USA | Foundation | Burger |
| France | Eye liner | Lasagna |
| Japan | Lipstick | Sushi |
| Russia | Bronzer | Rice |

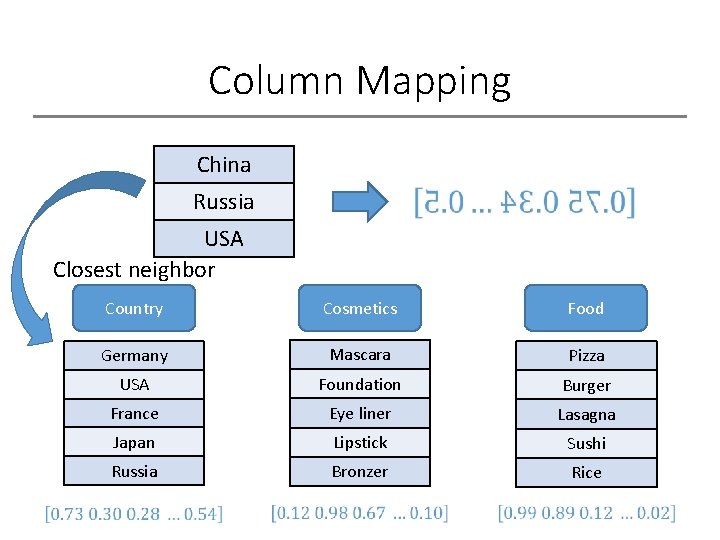

Column Mapping: the query column [China, Russia, USA] is assigned to its closest neighbor among the reference columns Country, Cosmetics, and Food.

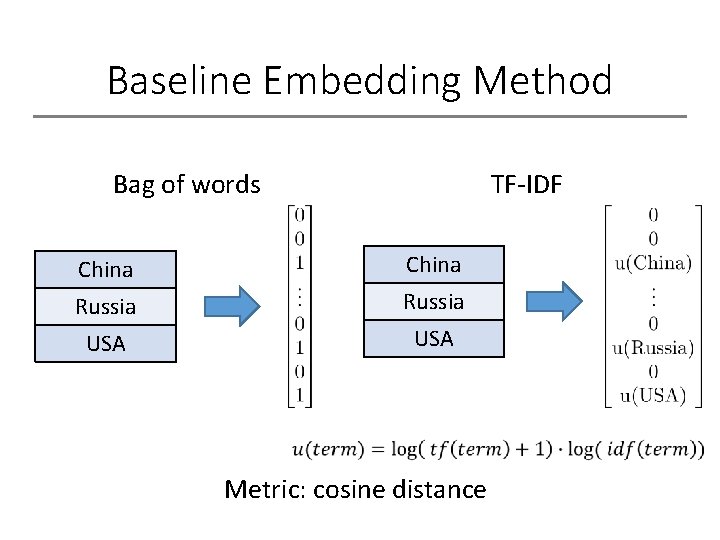

Baseline Embedding Method: bag of words, TF-IDF. Example column: [China, Russia, USA]. Metric: cosine distance.
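A minimal sketch of the baseline, assuming each table cell is treated as one token and each reference column as one "document". The function names, the smoothed IDF formula, and the handling of unseen tokens are illustrative choices, not details given on the slides; the reference columns reuse the Column Mapping example.

```python
import math
from collections import Counter

def idf_weights(columns):
    """Smoothed IDF per token, computed over the reference columns."""
    n = len(columns)
    df = Counter()
    for cells in columns.values():
        df.update(set(cells))
    idf = {t: math.log((1 + n) / (1 + c)) + 1.0 for t, c in df.items()}
    default = math.log(1 + n) + 1.0  # assumed weight for tokens unseen in any column
    return idf, default

def tfidf(cells, idf, default):
    """Sparse TF-IDF vector (token -> weight) for one column."""
    tf = Counter(cells)
    return {t: c * idf.get(t, default) for t, c in tf.items()}

def cosine_distance(u, v):
    dot = sum(w * v.get(t, 0.0) for t, w in u.items())
    norm_u = math.sqrt(sum(w * w for w in u.values()))
    norm_v = math.sqrt(sum(w * w for w in v.values()))
    if norm_u == 0.0 or norm_v == 0.0:
        return 1.0
    return 1.0 - dot / (norm_u * norm_v)

reference = {
    "Country": ["Germany", "USA", "France", "Japan", "Russia"],
    "Cosmetics": ["Mascara", "Foundation", "Eye liner", "Lipstick", "Bronzer"],
    "Food": ["Pizza", "Burger", "Lasagna", "Sushi", "Rice"],
}

idf, default = idf_weights(reference)
ref_vectors = {name: tfidf(cells, idf, default) for name, cells in reference.items()}
query = tfidf(["China", "Russia", "USA"], idf, default)

distances = {name: cosine_distance(query, vec) for name, vec in ref_vectors.items()}
closest = min(distances, key=distances.get)
print(closest, distances)
```

With exact token matching, a query column only gets close to a reference column that shares cells with it, which is one reason to move to dense embeddings.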

Word2Vec

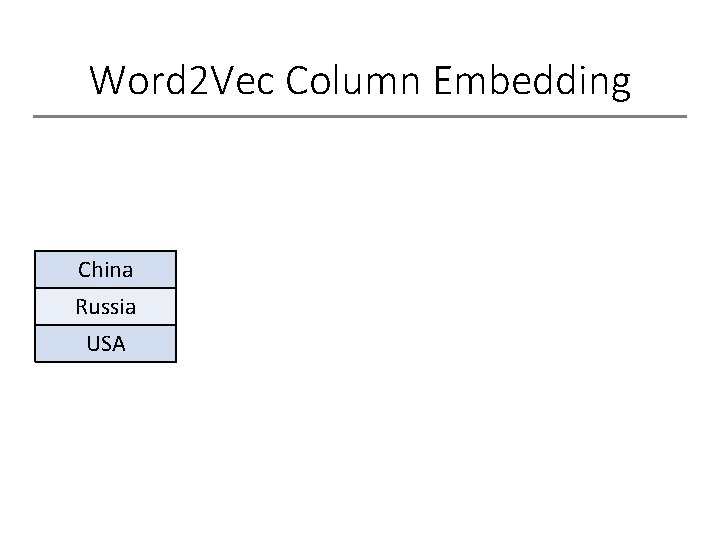

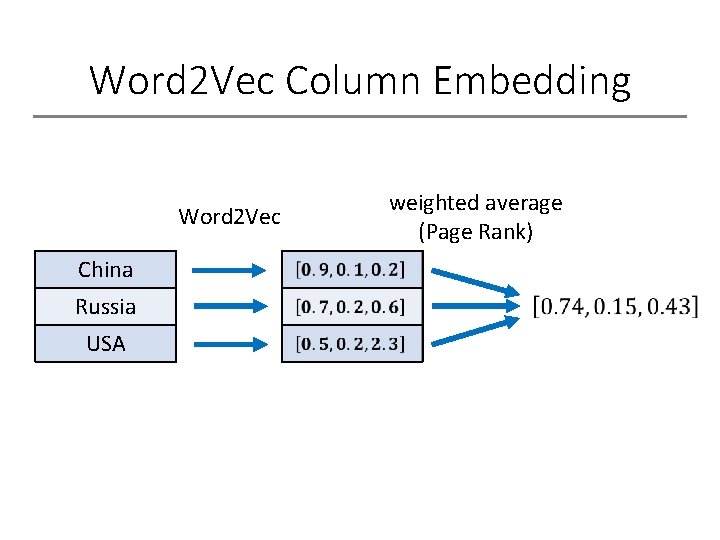

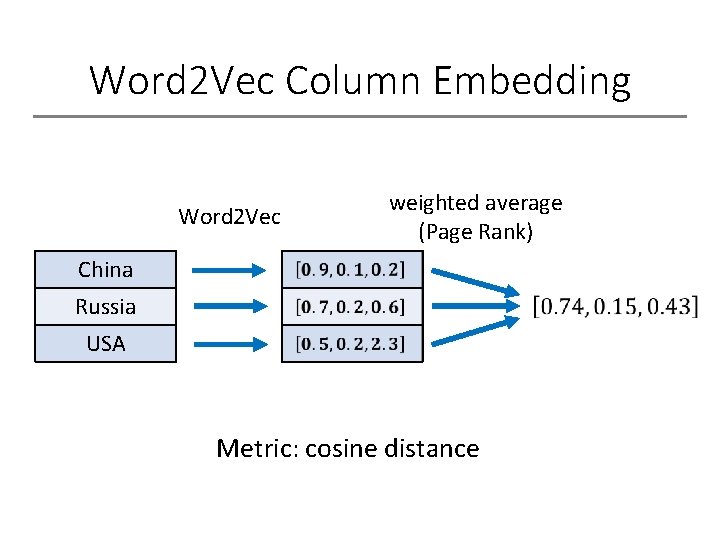

Word2Vec Column Embedding. Example column: [China, Russia, USA].

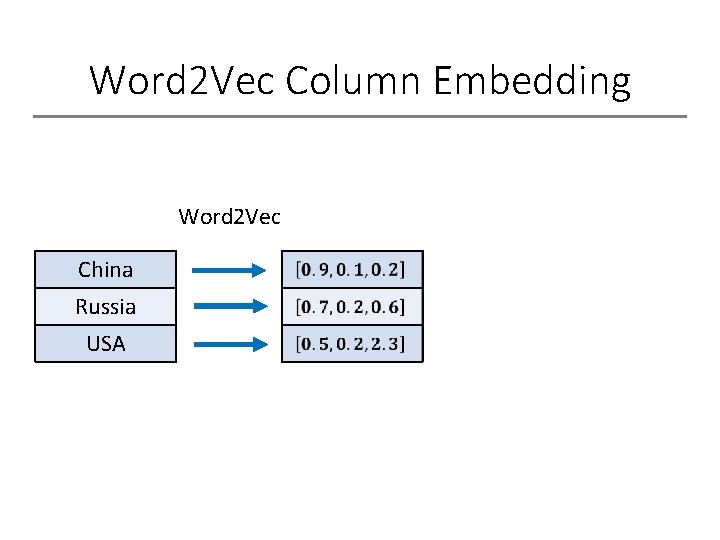

Word2Vec Column Embedding: each entity in the column [China, Russia, USA] is embedded with Word2Vec, and the column vector is a weighted average of the entity vectors (weights: PageRank). Metric: cosine distance.
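The weighted-average column embedding might look like the sketch below. The 3-dimensional vectors stand in for real Word2Vec output and the weights stand in for PageRank scores; both are made-up values for illustration, and `column_vector` is a hypothetical helper name.

```python
import math

def cosine_distance(u, v):
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0.0 or norm_v == 0.0:
        return 1.0
    return 1.0 - dot / (norm_u * norm_v)

def column_vector(cells, embeddings, weights):
    """Weighted average of the embeddings of the cells that have one."""
    dim = len(next(iter(embeddings.values())))
    known = [c for c in cells if c in embeddings]
    if not known:
        return [0.0] * dim
    total = sum(weights.get(c, 1.0) for c in known)
    return [
        sum(weights.get(c, 1.0) * embeddings[c][i] for c in known) / total
        for i in range(dim)
    ]

# Toy embeddings (assumption: real ones would come from a trained Word2Vec model).
embeddings = {
    "China": [0.9, 0.1, 0.0],
    "Russia": [0.8, 0.2, 0.1],
    "USA": [1.0, 0.0, 0.1],
    "Germany": [0.9, 0.0, 0.2],
    "France": [0.8, 0.1, 0.1],
    "Pizza": [0.1, 0.9, 0.0],
    "Sushi": [0.0, 1.0, 0.2],
}
# Hypothetical importance weights playing the role of PageRank scores.
weights = {"USA": 3.0, "China": 2.0, "Russia": 2.0, "Germany": 1.5, "France": 1.5}

reference = {
    "Country": ["Germany", "USA", "France", "Japan", "Russia"],
    "Food": ["Pizza", "Burger", "Lasagna", "Sushi", "Rice"],
}

query_vec = column_vector(["China", "Russia", "USA"], embeddings, weights)
distances = {
    name: cosine_distance(query_vec, column_vector(cells, embeddings, weights))
    for name, cells in reference.items()
}
closest = min(distances, key=distances.get)
print(closest, distances)
```

Unlike the bag-of-words baseline, the query column can land near Country even for an entity such as China that never appears in the reference column.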

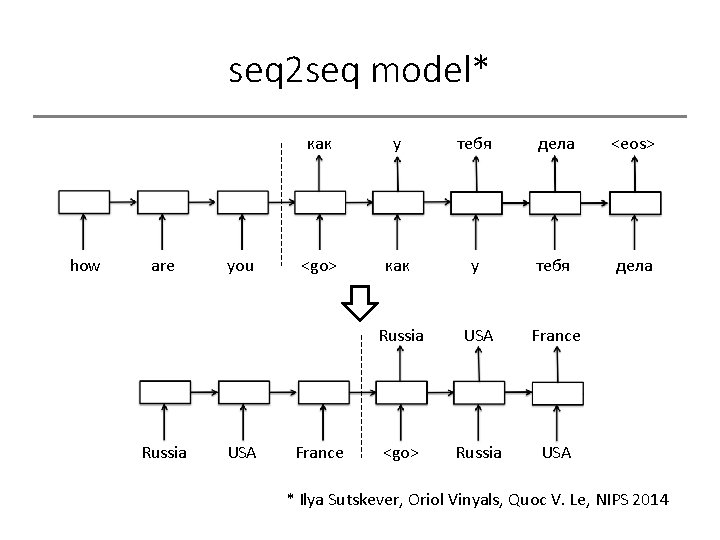

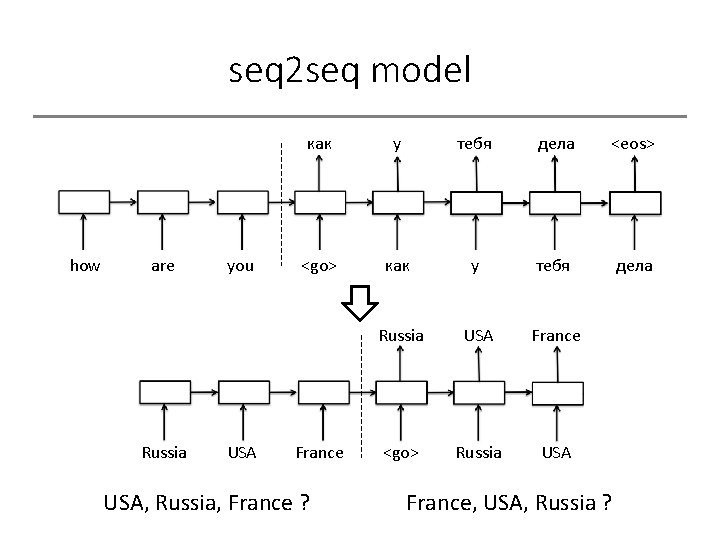

seq2seq model*

Translation example: encoder input "how are you <eos>"; decoder input "<go> как у тебя дела" (Russian for "how are you"); decoder output "как у тебя дела".

Column example: encoder input "Russia USA France"; decoder input "<go> Russia USA France"; decoder output "Russia USA France".

A column is a set, so its input order is arbitrary: USA, Russia, France? France, USA, Russia?

* Ilya Sutskever, Oriol Vinyals, Quoc V. Le, NIPS 2014
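The ordering issue can be illustrated with a toy comparison. The recurrence below is a deliberately simple stand-in for a sequence encoder, not the seq2seq LSTM, and the entity vectors are made-up values; the point is only that a recurrent encoder gives different codes for different orderings of the same set, while a pooled representation does not.

```python
import math

def recurrent_encode(vectors, decay=0.5):
    """Toy order-sensitive encoder: h <- tanh(decay * h + x), elementwise."""
    h = [0.0] * len(vectors[0])
    for x in vectors:
        h = [math.tanh(decay * hi + xi) for hi, xi in zip(h, x)]
    return h

def mean_pool(vectors):
    """Order-invariant baseline: elementwise mean of the vectors."""
    n = len(vectors)
    return [sum(v[i] for v in vectors) / n for i in range(len(vectors[0]))]

# Hypothetical 3-d entity vectors.
emb = {
    "Russia": [0.3, 0.5, -0.2],
    "USA": [-0.4, 1.7, 0.5],
    "France": [0.9, -1.5, 0.3],
}

order_a = [emb[name] for name in ["USA", "Russia", "France"]]
order_b = [emb[name] for name in ["France", "USA", "Russia"]]

enc_a, enc_b = recurrent_encode(order_a), recurrent_encode(order_b)
pool_a, pool_b = mean_pool(order_a), mean_pool(order_b)

print("recurrent:", enc_a, enc_b)  # differ between the two orderings
print("pooled:   ", pool_a, pool_b)  # agree up to floating-point rounding
```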

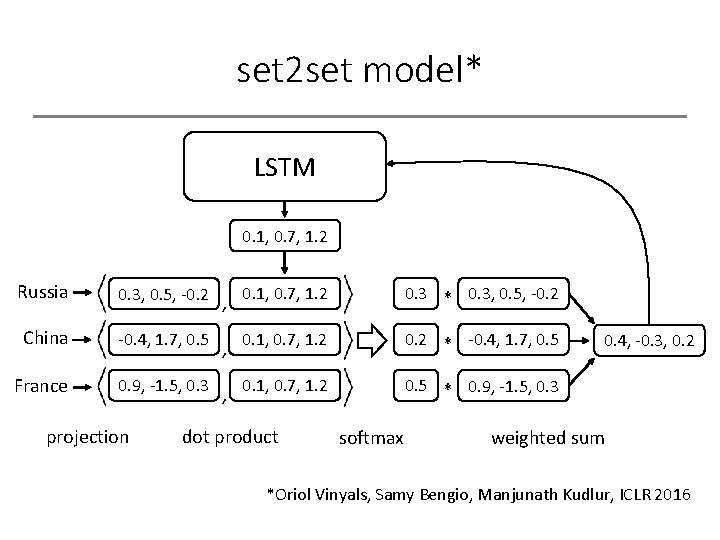

set2set model*
LSTM state: (0.1, 0.7, 1.2)
• Russia: memory (0.3, 0.5, -0.2), query (0.1, 0.7, 1.2), attention weight 0.3
• China: memory (-0.4, 1.7, 0.5), query (0.1, 0.7, 1.2), attention weight 0.2
• France: memory (0.9, -1.5, 0.3), query (0.1, 0.7, 1.2), attention weight 0.5
Steps: projection, dot product, softmax, weighted sum
Weighted sum: (0.4, -0.3, 0.2)
* Oriol Vinyals, Samy Bengio, Manjunath Kudlur, ICLR 2016

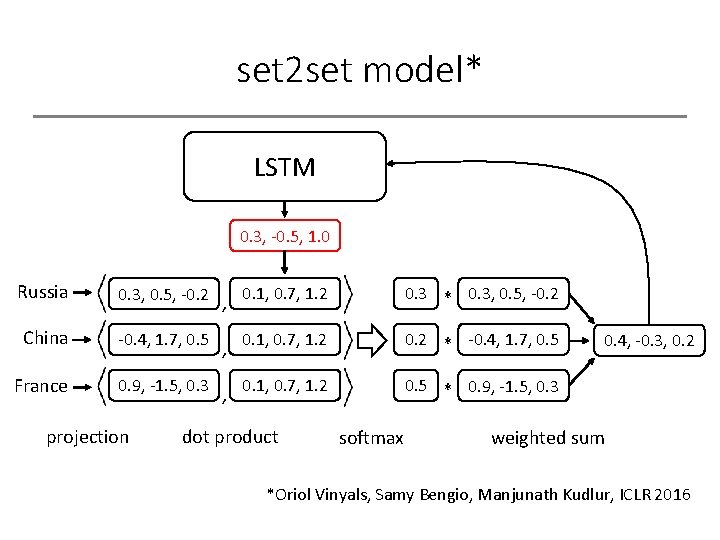

set2set model*
LSTM state: (0.3, -0.5, 1.0)
• Russia: memory (0.3, 0.5, -0.2), query (0.1, 0.7, 1.2), attention weight 0.3
• China: memory (-0.4, 1.7, 0.5), query (0.1, 0.7, 1.2), attention weight 0.2
• France: memory (0.9, -1.5, 0.3), query (0.1, 0.7, 1.2), attention weight 0.5
Steps: projection, dot product, softmax, weighted sum
Weighted sum: (0.4, -0.3, 0.2)
* Oriol Vinyals, Samy Bengio, Manjunath Kudlur, ICLR 2016

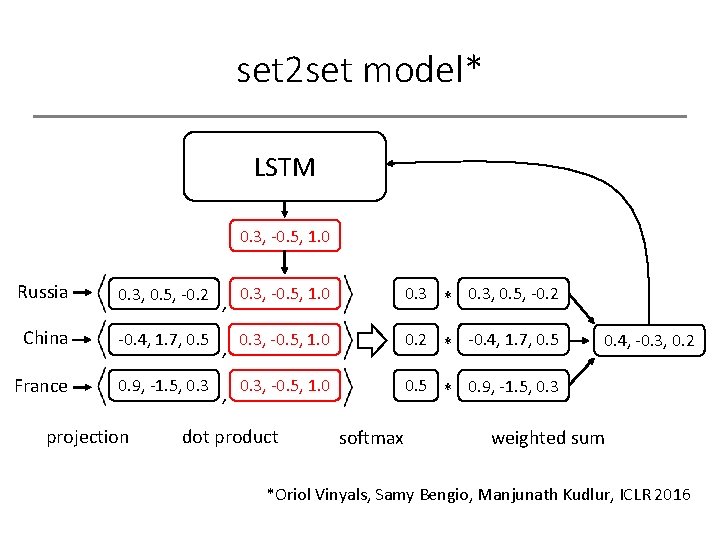

set2set model*
LSTM state: (0.3, -0.5, 1.0)
• Russia: memory (0.3, 0.5, -0.2), query (0.3, -0.5, 1.0), attention weight 0.3
• China: memory (-0.4, 1.7, 0.5), query (0.3, -0.5, 1.0), attention weight 0.2
• France: memory (0.9, -1.5, 0.3), query (0.3, -0.5, 1.0), attention weight 0.5
Steps: projection, dot product, softmax, weighted sum
Weighted sum: (0.4, -0.3, 0.2)
* Oriol Vinyals, Samy Bengio, Manjunath Kudlur, ICLR 2016

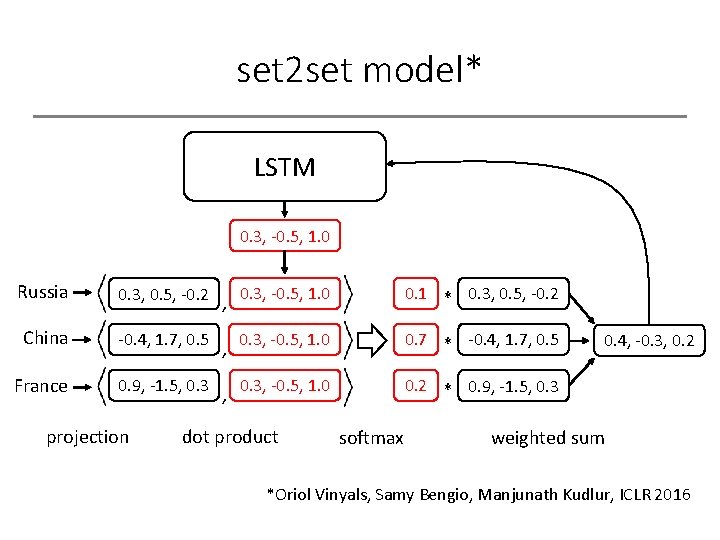

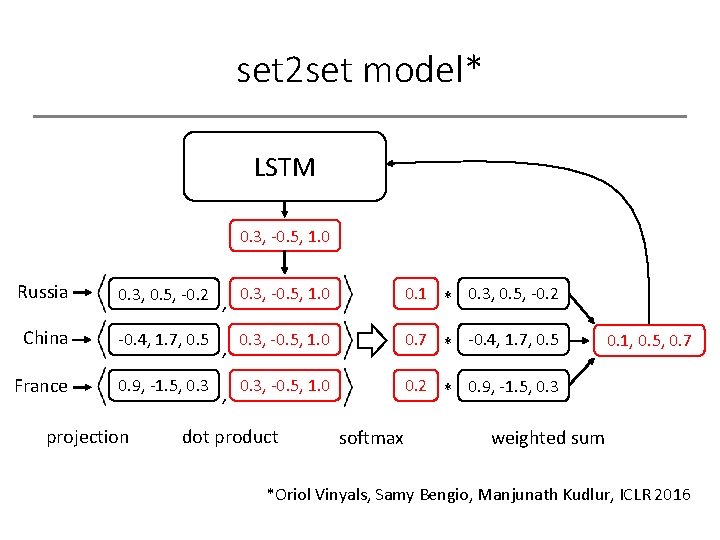

set2set model*
LSTM state: (0.3, -0.5, 1.0)
• Russia: memory (0.3, 0.5, -0.2), query (0.3, -0.5, 1.0), attention weight 0.1
• China: memory (-0.4, 1.7, 0.5), query (0.3, -0.5, 1.0), attention weight 0.7
• France: memory (0.9, -1.5, 0.3), query (0.3, -0.5, 1.0), attention weight 0.2
Steps: projection, dot product, softmax, weighted sum
Weighted sum: (0.4, -0.3, 0.2)
* Oriol Vinyals, Samy Bengio, Manjunath Kudlur, ICLR 2016

set2set model*
LSTM state: (0.3, -0.5, 1.0)
• Russia: memory (0.3, 0.5, -0.2), query (0.3, -0.5, 1.0), attention weight 0.1
• China: memory (-0.4, 1.7, 0.5), query (0.3, -0.5, 1.0), attention weight 0.7
• France: memory (0.9, -1.5, 0.3), query (0.3, -0.5, 1.0), attention weight 0.2
Steps: projection, dot product, softmax, weighted sum
Weighted sum: (0.1, 0.5, 0.7)
* Oriol Vinyals, Samy Bengio, Manjunath Kudlur, ICLR 2016
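One attention read of the process block can be sketched as below: dot product of the query with each memory vector, softmax over the scores, then a weighted sum of the memories. This sketch omits the projection step and the LSTM update that produces the next query, and it reuses the memory and query vectors shown on the slides. The attention weights printed on the slides appear to be illustrative, so the computed values need not match them.

```python
import math

def softmax(scores):
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    z = sum(exps)
    return [e / z for e in exps]

def attention_read(query, memories):
    """Return (attention weights, weighted sum of memory vectors)."""
    scores = [sum(q * m for q, m in zip(query, mem)) for mem in memories]
    weights = softmax(scores)
    readout = [
        sum(w * mem[i] for w, mem in zip(weights, memories))
        for i in range(len(query))
    ]
    return weights, readout

names = ["Russia", "China", "France"]
memories = [[0.3, 0.5, -0.2], [-0.4, 1.7, 0.5], [0.9, -1.5, 0.3]]
query = [0.1, 0.7, 1.2]

weights, readout = attention_read(query, memories)
print(dict(zip(names, weights)), readout)

# Reordering the set reorders the weights but leaves the read-out unchanged
# (up to floating-point rounding).
_, readout_reversed = attention_read(query, memories[::-1])
```

Because the read-out is a sum over set elements, it does not depend on the order in which the entities are presented, which is the property the seq2seq encoder lacks.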

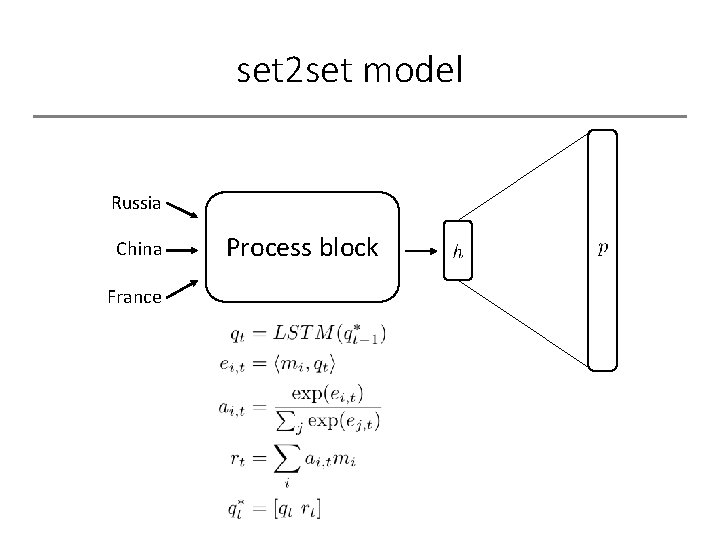

set2set model*: the entities Russia, China, France are fed to the process block.

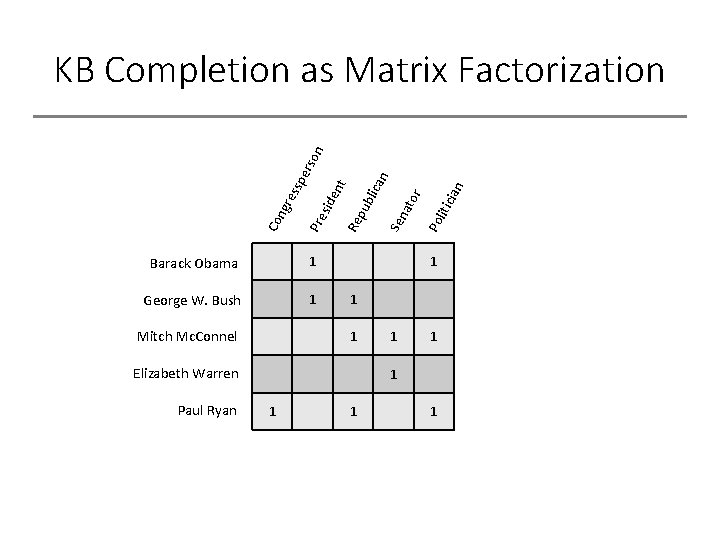

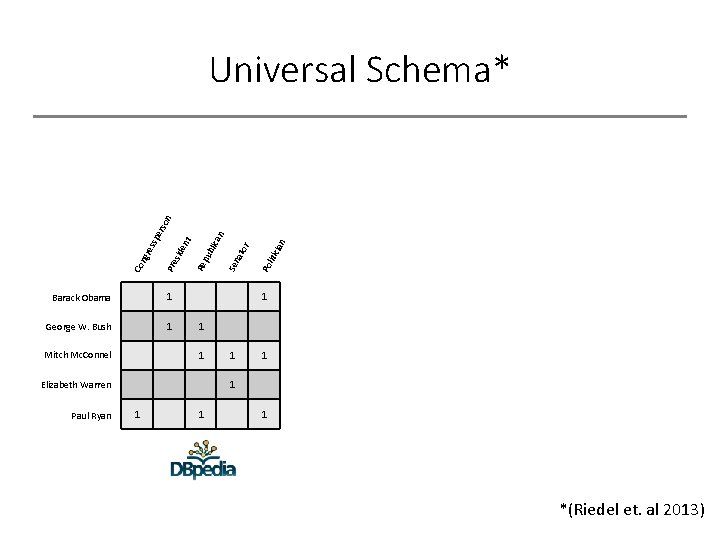

KB Completion as Matrix Factorization. Figure: binary entity × type matrix. Rows (entities): Barack Obama, George W. Bush, Mitch McConnell, Elizabeth Warren, Paul Ryan. Columns (types): Politician, Senator, Republican, President, Congressperson. Observed cells are marked 1.

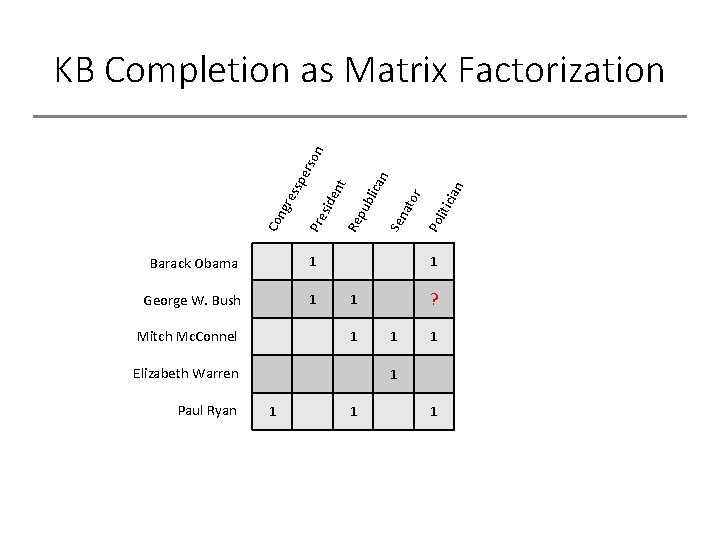

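KB Completion as Matrix Factorization. Figure: binary entity × type matrix. Rows (entities): Barack Obama, George W. Bush, Mitch McConnell, Elizabeth Warren, Paul Ryan. Columns (types): Politician, Senator, Republican, President, Congressperson. Observed cells are marked 1; one unknown cell is marked "?".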

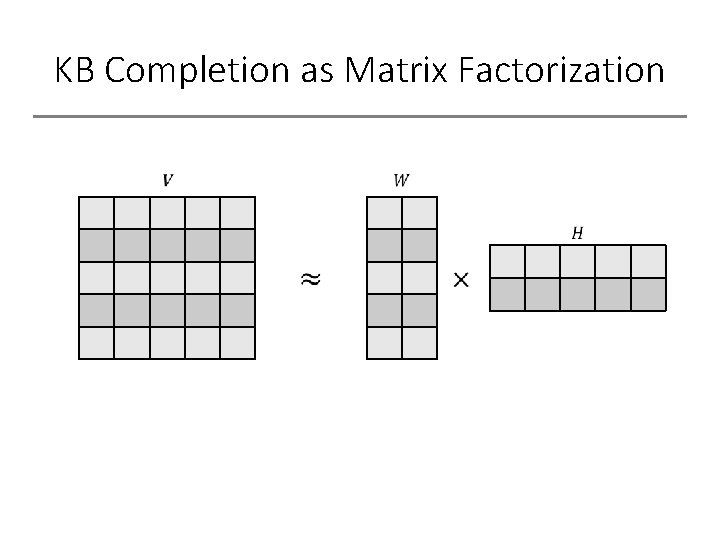

KB Completion as Matrix Factorization

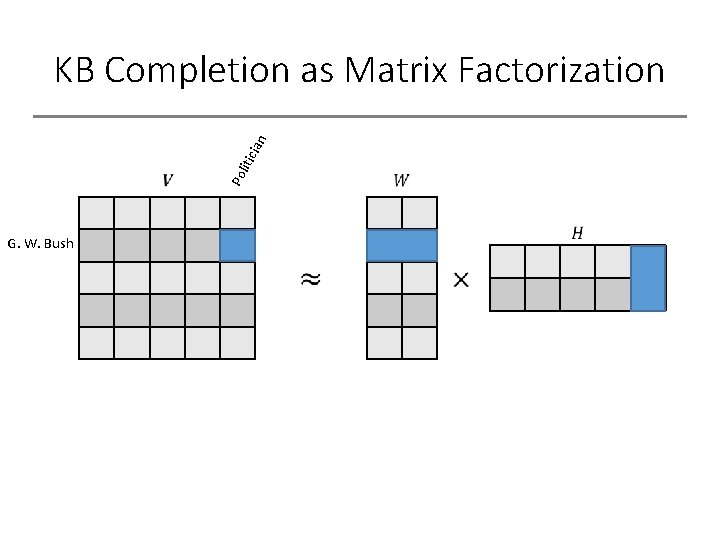

KB Completion as Matrix Factorization. Figure labels: row G. W. Bush, column Politician.
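A minimal sketch of KB completion by logistic matrix factorization, under several assumptions: the cell layout of the slide's matrix is not recoverable, so the positive cells below are a toy fill; every unobserved cell except the held-out one is treated as a negative, which is a simplification; and `factorize` and its hyperparameters are hypothetical. The held-out cell is scored from the learned entity and type vectors.

```python
import math
import random

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def factorize(entities, types, positives, held_out, dim=4, epochs=300, lr=0.2, seed=0):
    """Learn entity and type vectors with SGD on a logistic loss."""
    rng = random.Random(seed)
    E = {e: [rng.uniform(-0.1, 0.1) for _ in range(dim)] for e in entities}
    T = {t: [rng.uniform(-0.1, 0.1) for _ in range(dim)] for t in types}
    cells = [(e, t) for e in entities for t in types if (e, t) not in held_out]
    for _ in range(epochs):
        rng.shuffle(cells)
        for e, t in cells:
            y = 1.0 if (e, t) in positives else 0.0
            grad = sigmoid(dot(E[e], T[t])) - y
            # Tuple assignment: both updates use the pre-update vectors.
            E[e], T[t] = (
                [a - lr * grad * b for a, b in zip(E[e], T[t])],
                [b - lr * grad * a for a, b in zip(E[e], T[t])],
            )
    return E, T

entities = ["Barack Obama", "George W. Bush", "Mitch McConnell", "Elizabeth Warren", "Paul Ryan"]
types = ["Politician", "Senator", "Republican", "President", "Congressperson"]
# Toy observed cells (assumption: not the slide's exact layout).
positives = {
    ("Barack Obama", "Politician"), ("Barack Obama", "President"),
    ("George W. Bush", "Republican"), ("George W. Bush", "President"),
    ("Mitch McConnell", "Politician"), ("Mitch McConnell", "Senator"),
    ("Mitch McConnell", "Republican"),
    ("Elizabeth Warren", "Politician"), ("Elizabeth Warren", "Senator"),
    ("Paul Ryan", "Politician"), ("Paul Ryan", "Republican"),
    ("Paul Ryan", "Congressperson"),
}
held_out = {("George W. Bush", "Politician")}  # the "?" cell to predict

E, T = factorize(entities, types, positives, held_out)
score = sigmoid(dot(E["George W. Bush"], T["Politician"]))
print("P(George W. Bush is a Politician) ~", round(score, 3))
```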

Universal Schema*. Figure: binary entity × type matrix. Rows (entities): Barack Obama, George W. Bush, Elizabeth Warren, Paul Ryan, Mitch McConnell. Columns (types): Politician, Senator, Republican, President, Congressperson. Observed cells are marked 1.
*(Riedel et al. 2013)

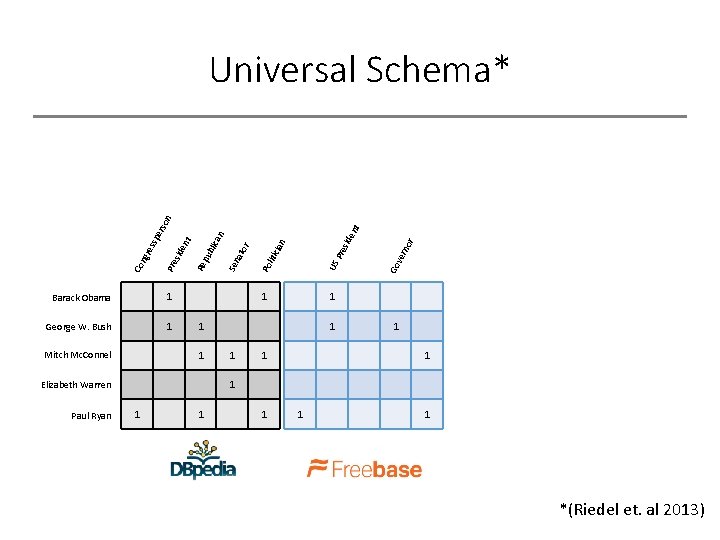

Universal Schema*. Figure: the entity × type matrix with additional columns. Rows (entities): Barack Obama, George W. Bush, Elizabeth Warren, Paul Ryan, Mitch McConnell. Columns (types): Politician, Senator, Republican, President, Congressperson, US President, Governor. Observed cells are marked 1.
*(Riedel et al. 2013)

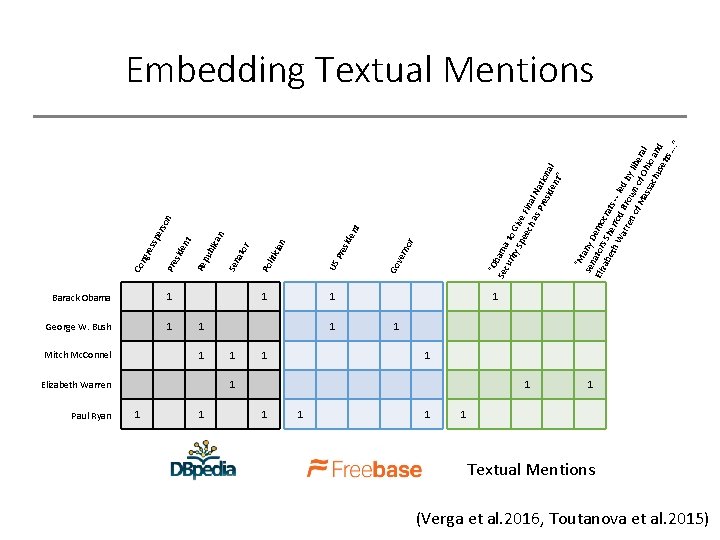

Embedding Textual Mentions. Figure: the entity × type matrix (rows: Mitch McConnell, Paul Ryan, George W. Bush, Elizabeth Warren, Barack Obama; columns: Congressperson, President, Republican, Senator, Politician, US President, Governor) with textual mentions alongside it:
• “Many Democrats -- led by liberal senators Sherrod Brown of Ohio and Elizabeth Warren of Massachusetts ….”
• “Obama to Give Final National Security Speech as President”
Textual Mentions (Verga et al. 2016, Toutanova et al. 2015)

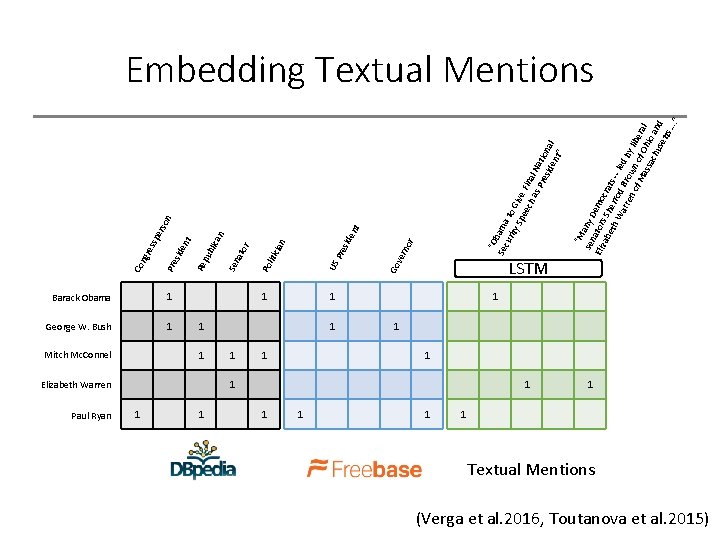

Embedding Textual Mentions. Figure: the entity × type matrix (rows: Mitch McConnell, Paul Ryan, George W. Bush, Elizabeth Warren, Barack Obama; columns: Congressperson, President, Republican, Senator, Politician, US President, Governor) with textual mentions alongside it, each passed through an LSTM:
• “Many Democrats -- led by liberal senators Sherrod Brown of Ohio and Elizabeth Warren of Massachusetts ….”
• “Obama to Give Final National Security Speech as President”
Textual Mentions (Verga et al. 2016, Toutanova et al. 2015)

![Extending To Entity Sets: [Elizabeth Warren, Mitch McConnell, Patrick Toomey, Ted Cruz]](http://slidetodoc.com/presentation_image_h2/a7dc2c2618c61ba765661c83feb4df3d/image-45.jpg)

Extending To Entity Sets. Figure: the entity sets [Elizabeth Warren, Mitch McConnell, Patrick Toomey, Ted Cruz] and [George W. Bush, Ronald Reagan, Jimmy Carter, Barack Obama] pass through an LSTM; the matrix rows are Barack Obama, George W. Bush, Mitch McConnell, Elizabeth Warren, Paul Ryan; observed cells are marked 1.

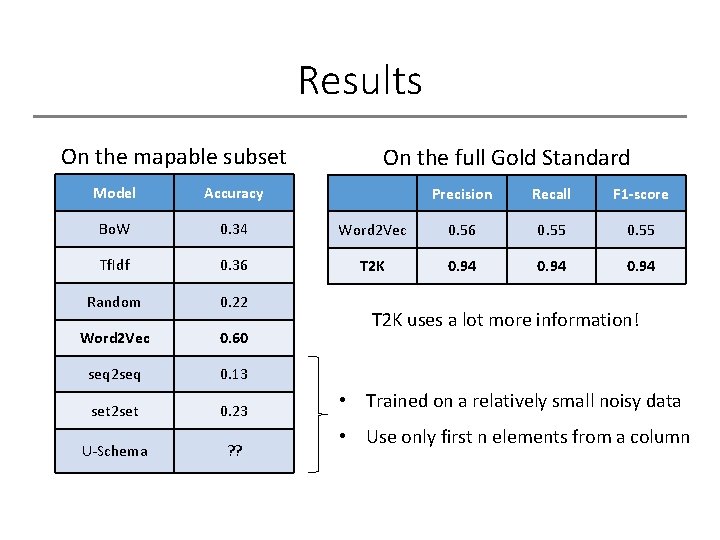

Results

On the mappable subset:

| Model | Accuracy |
| --- | --- |
| BoW | 0.34 |
| TfIdf | 0.36 |
| Random | 0.22 |
| Word2Vec | 0.60 |
| seq2seq | 0.13 |
| set2set | 0.23 |
| U-Schema | ?? |

On the full Gold Standard (columns: Precision, Recall, F1-score):
• Word2Vec: 0.56, 0.55
• T2K: 0.94

T2K uses a lot more information!
• Trained on relatively small, noisy data
• Uses only the first n elements of a column

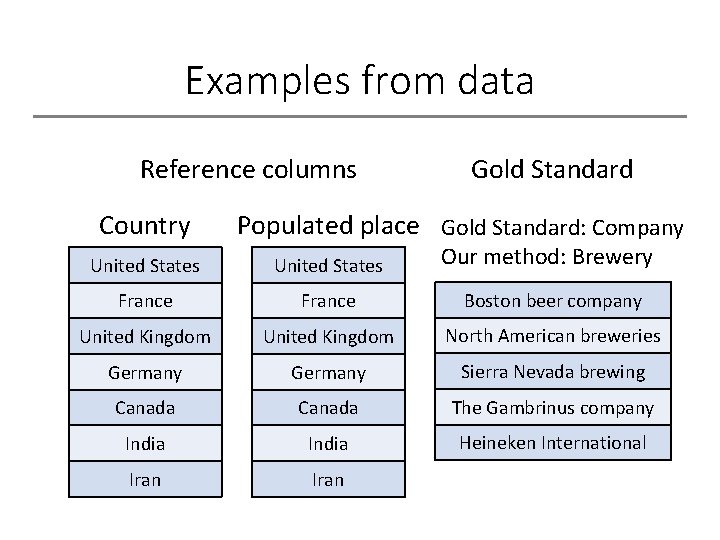

Examples from data (reference columns)
• Column [United States, France, United Kingdom, Germany, Canada, India, Iran]: labeled Country; Gold Standard: Populated place
• Column [Boston beer company, North American breweries, Sierra Nevada brewing, The Gambrinus company, Heineken International]: Gold Standard: Company; our method: Brewery

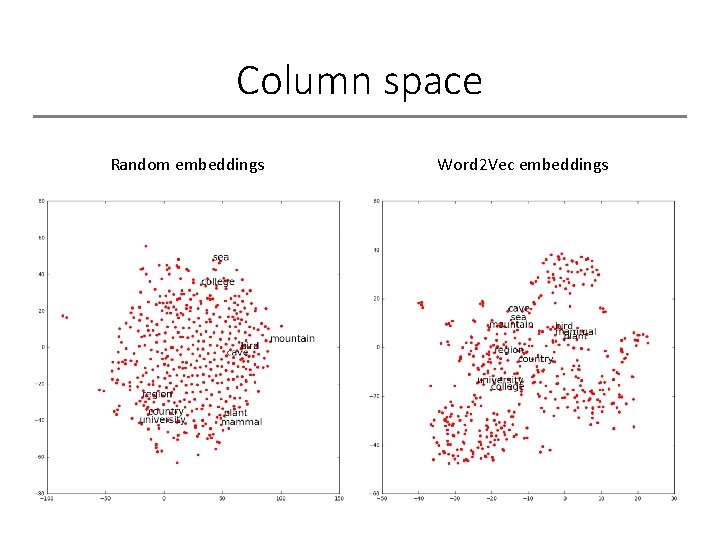

Column space: random embeddings vs. Word2Vec embeddings

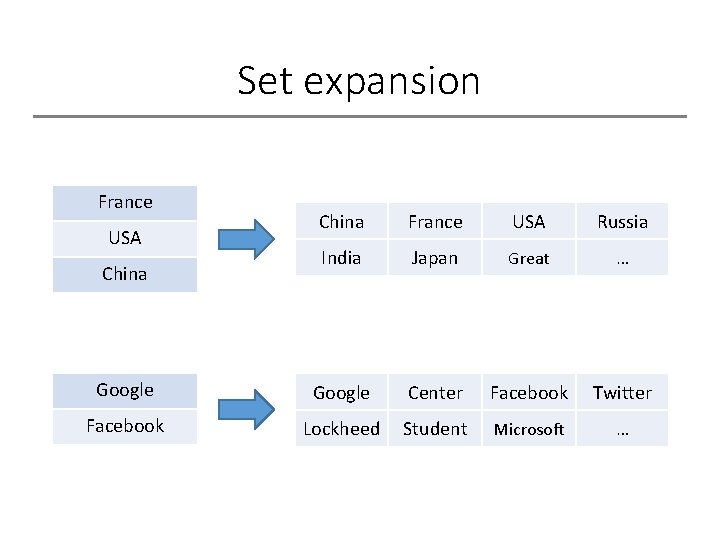

Set expansion (figure): one example involves countries (France, China, USA, Russia, India, Japan, Great …); another involves companies and other terms (Google, Center, Facebook, Twitter, Lockheed, Student, Microsoft, …).

Conclusions/Future work
1. Column embeddings seem to be good, but accuracy is low:
• evaluation on a different problem (!)
• data preprocessing
2. Improve models:
• tune the parameters of the models
• learn the aggregation function
• replace LSTM with set2set for U-Schema
