
Validity (cont.)/Control RMS – October 7

Validity • Experimental validity – the soundness of the experimental design – Not the same as measurement validity (the goodness of the operational definition) • Internal validity • Construct validity • External validity

Internal Validity • Concerns the logic of the relationship between the independent and dependent variables • It is the extent to which a study provides evidence of a cause-effect relationship between the IV and the DV

Confounding • Error that occurs when the effects of two variables in an experiment cannot be separated – results in a confused interpretation of the results • Example – Group A (IV = 0) – Group B (IV = 1) – Behaviour observed
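The two-group example above can be turned into a small simulation. This is only a sketch under invented assumptions: the IV has no true effect at all, but every Group B participant also happens to be tested in a quiet room (a hypothetical confound) that adds 5 points to the observed behaviour. The scores, the room variable, and the function name are all made up for illustration.

```python
import random
from statistics import mean

def simulate_confounded_study(n_per_group=2000, seed=0):
    """Return the mean DV for Group A (IV = 0) and Group B (IV = 1).

    Hypothetical setup: the IV itself has NO effect on the DV, but all
    Group B participants are tested in a quiet room, which adds 5 points.
    """
    rng = random.Random(seed)
    # Group A: IV = 0, noisy room (no bonus).
    group_a = [rng.gauss(50, 10) for _ in range(n_per_group)]
    # Group B: IV = 1, quiet room (+5 from the confound, nothing from the IV).
    group_b = [rng.gauss(50, 10) + 5 for _ in range(n_per_group)]
    return mean(group_a), mean(group_b)

mean_a, mean_b = simulate_confounded_study()
difference = mean_b - mean_a
print(f"Group A: {mean_a:.1f}  Group B: {mean_b:.1f}  difference: {difference:.1f}")
```

The groups differ by roughly 5 points, yet the data alone cannot say whether the IV or the room produced the difference, because the two always changed together.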

How do you know what could be confounding? • Need to make judgments as you design the experiment • Be particularly careful with subject variables

Construct Validity • Extent to which the results support the theory behind the research – Would another theory predict the same experimental results? • Hypotheses cannot be tested in a vacuum – The conditions of a study constitute auxiliary hypotheses that must also be true so that you can test the main hypothesis

Example • H1: Anxiety is conducive to learning • Participants selected on the basis of whether they bit their fingernails (a sign of anxiety) • Observe how fast they can learn to write by holding a pencil in their toes (a learning task) • Did not just test the impact of anxiety on learning – Tested that fingernail biting is a measure of anxiety (H2) – Tested that writing with toes is a good learning task (H3) • If either is false, you could have negative results even if the main hypothesis is true

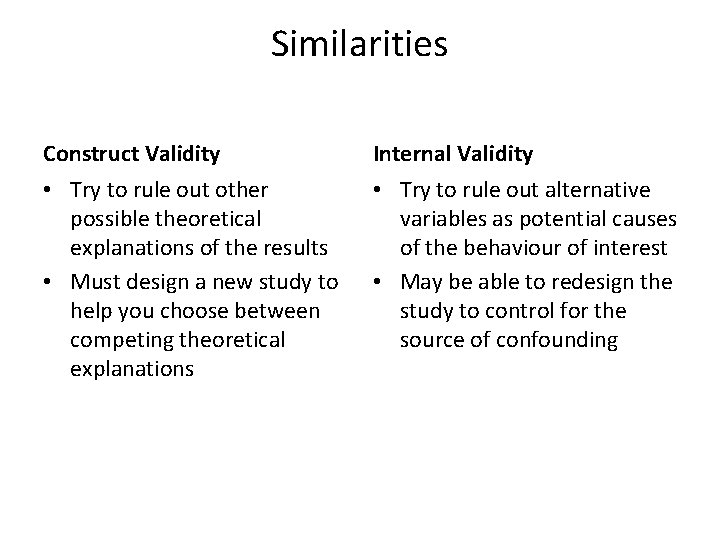

Similarities • Construct validity – Try to rule out other possible theoretical explanations of the results – Must design a new study to help you choose between competing theoretical explanations • Internal validity – Try to rule out alternative variables as potential causes of the behaviour of interest – May be able to redesign the study to control for the source of confounding

External Validity • How well the findings of an experiment generalize to other situations or populations – Strictly speaking, results are only valid for other identical situations – Can be hard to know which situational variables are important • Ecological validity is related – Extent to which an experimental situation mimics a real-world situation

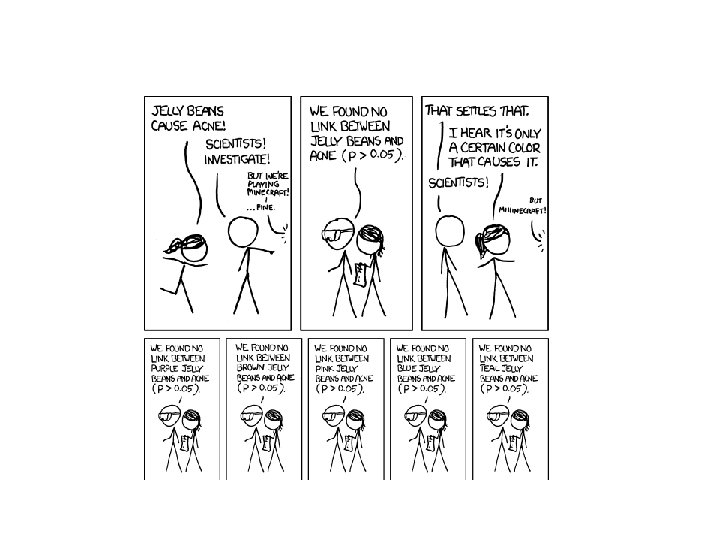

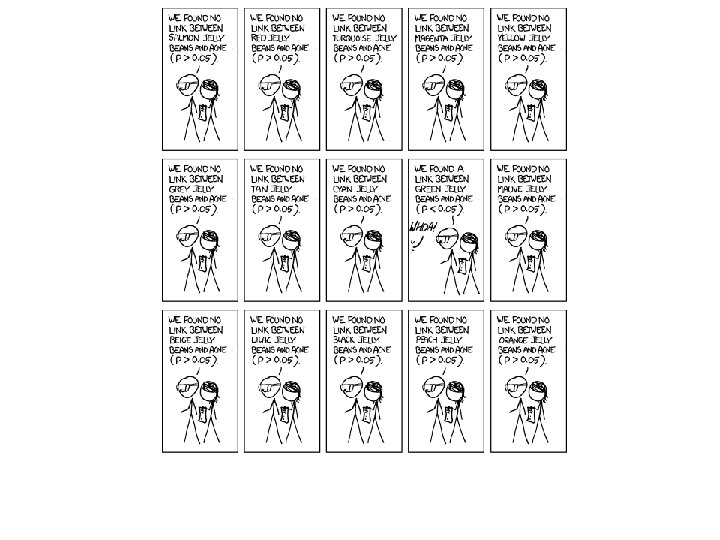

Statistical Validity • Extent to which data are shown to be the result of a cause-effect relationship rather than an accident – Does the relationship exist, or was it caused by pure chance? • Similar to internal validity • Notion of power (is n big enough?) – Is the measure accurate? – Is the statistical test appropriate? – 3 kinds of lies: lies, damn lies, and statistics • Statistical test establishes that an outcome has a certain low probability of happening by chance alone – No guarantee that it’s not a random error in sampling or measurement

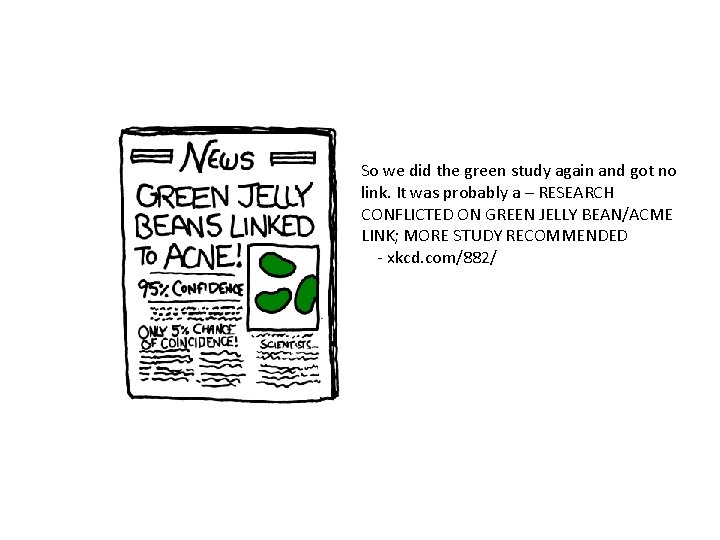

"So we did the green study again and got no link. It was probably a –" "RESEARCH CONFLICTED ON GREEN JELLY BEAN/ACNE LINK; MORE STUDY RECOMMENDED" – xkcd.com/882/
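The jelly bean comic illustrates why a single "significant" result offers no guarantee against chance. As a rough sketch, assume 20 independent tests, each run at a significance level of 0.05, with no real effect anywhere (the function name and these numbers are illustrative assumptions). The probability that at least one test comes out significant by chance alone is 1 − (1 − α)^k:

```python
def familywise_error_rate(alpha, n_tests):
    """Probability of at least one false positive across n_tests
    independent tests, each run at significance level alpha."""
    return 1 - (1 - alpha) ** n_tests

rate = familywise_error_rate(0.05, 20)
print(f"Chance of at least one spurious 'link' in 20 tests: {rate:.2f}")
```

Under these assumptions, a spurious link somewhere among the 20 tests is more likely than not, which is why the repeated study is the informative one.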

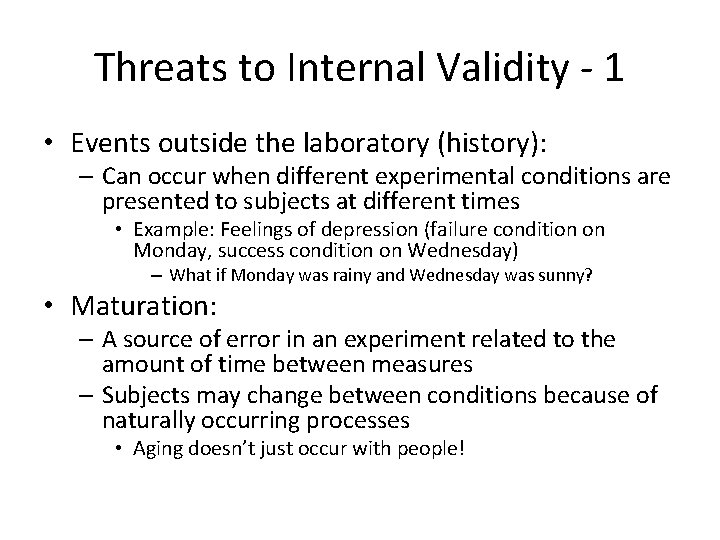

Threats to Internal Validity - 1 • Events outside the laboratory (history): – Can occur when different experimental conditions are presented to subjects at different times • Example: Feelings of depression (failure condition on Monday, success condition on Wednesday) – What if Monday was rainy and Wednesday was sunny? • Maturation: – A source of error in an experiment related to the amount of time between measures – Subjects may change between conditions because of naturally occurring processes • Aging doesn’t just occur with people!

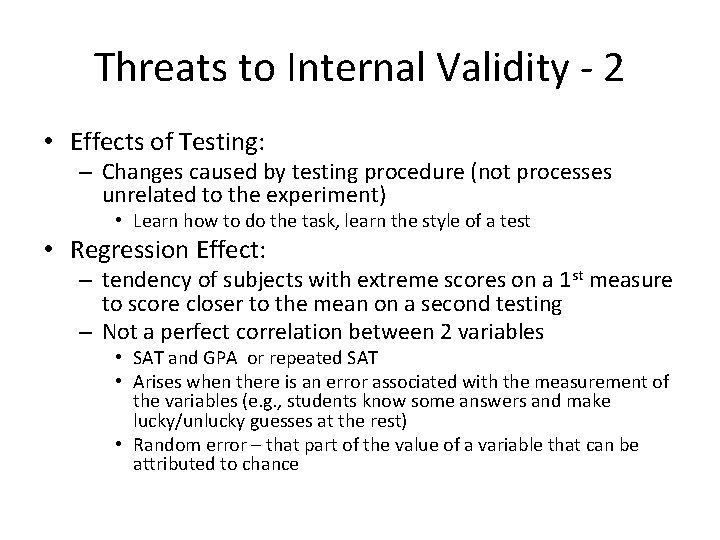

Threats to Internal Validity - 2 • Effects of Testing: – Changes caused by the testing procedure itself (not by processes unrelated to the experiment) • Learn how to do the task, learn the style of a test • Regression Effect: – Tendency of subjects with extreme scores on a first measure to score closer to the mean on a second testing – Occurs whenever the correlation between two variables is not perfect • SAT and GPA, or repeated SAT • Arises when there is error associated with the measurement of the variables (e.g., students know some answers and make lucky/unlucky guesses at the rest) • Random error – that part of the value of a variable that can be attributed to chance
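The regression effect can be reproduced with a short simulation. This is a sketch with invented numbers: each simulated student has a stable "true" ability, and each test score is that ability plus independent random error (the lucky and unlucky guesses). Nothing happens to the students between the two tests.

```python
import random
from statistics import mean

def regression_to_mean_demo(n=10000, seed=1):
    """Return (top group's mean on test 1, top group's mean on test 2,
    overall mean on test 2) for the top 10% of scorers on test 1."""
    rng = random.Random(seed)
    ability = [rng.gauss(500, 80) for _ in range(n)]   # hypothetical true ability
    test1 = [a + rng.gauss(0, 60) for a in ability]    # ability + random error
    test2 = [a + rng.gauss(0, 60) for a in ability]    # same ability, fresh error
    cutoff = sorted(test1)[int(0.9 * n)]               # threshold for the top 10%
    top = [i for i in range(n) if test1[i] >= cutoff]
    return (mean(test1[i] for i in top),
            mean(test2[i] for i in top),
            mean(test2))

top_first, top_second, overall = regression_to_mean_demo()
print(f"Top group, test 1: {top_first:.0f}")
print(f"Top group, test 2: {top_second:.0f}")
print(f"Everyone,  test 2: {overall:.0f}")
```

The top group still scores above average on the second test, but noticeably closer to the mean than on the first, even though no treatment was applied. A study that selects extreme scorers and then retests them can mistake this drift for a treatment effect.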

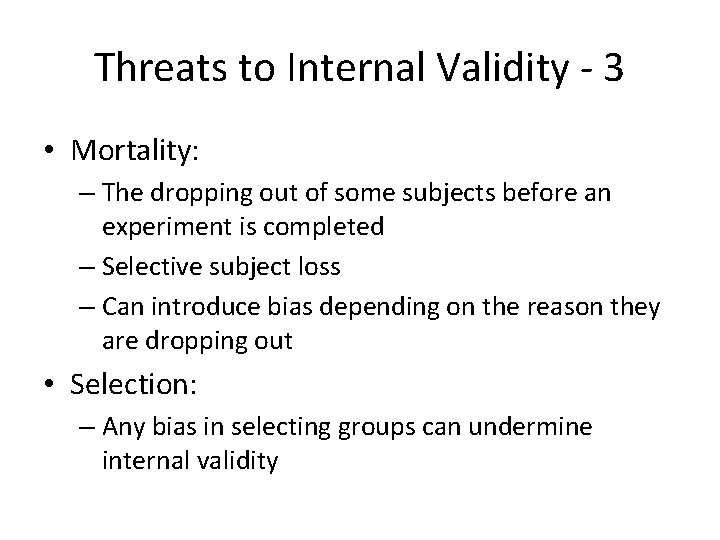

Threats to Internal Validity - 3 • Mortality: – The dropping out of some subjects before an experiment is completed – Selective subject loss – Can introduce bias depending on the reason they are dropping out • Selection: – Any bias in selecting groups can undermine internal validity

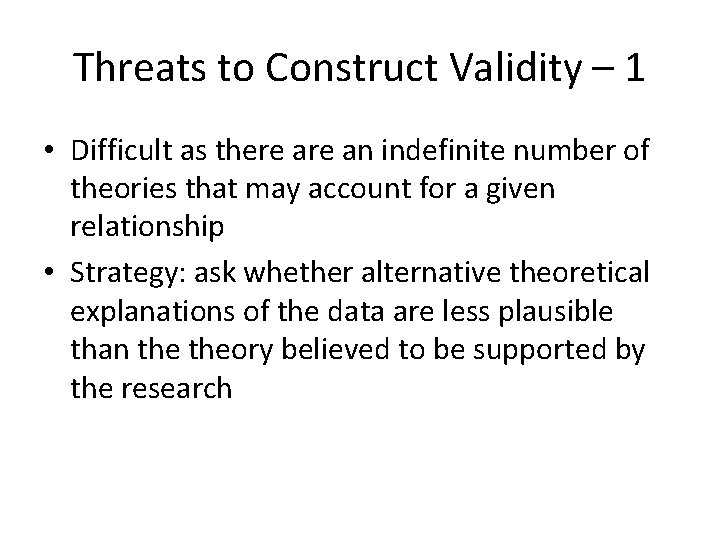

Threats to Construct Validity – 1 • Difficult, as there are an indefinite number of theories that may account for a given relationship • Strategy: ask whether alternative theoretical explanations of the data are less plausible than the theory believed to be supported by the research

Threats to Construct Validity - 2 • Loose connection between theory and method – The anxiety/learning example – Often research suffers from poor operational definition of theoretical constructs • Ambiguous effect of IV – Experimenter can control all reasonable confounding variables – Participant may still compromise the result by seeing the situation differently than the experimenter

Human-subject research • Good subject tendency: – Participants want to help the researchers achieve their goals (and may not understand the goals) • Evaluation apprehension: – Tendency of participants to alter their behaviour to appear as socially desirable as possible

Threats To External Validity • Other subjects – Are the participants truly representative of the population to which you are trying to generalize? • Other times – Would the same experiment conducted at another time produce the same results? – Caution: the web is rapidly changing • Other settings – Lab vs field – Structured vs unstructured environments

Reading: DRAM errors in the wild • What’s the research problem? • What was their approach? • Hypotheses? – IV, DV – Measurement validity • Internal validity • Construct validity • External validity

Control • Basic idea: isolate the effect of the treatment on the dependent variable – Ensure there are no reasonable alternative causes • Basic design: control vs. experimental group • Setting: – Field vs. lab – Experiment vs. non-experiment

Allocation (sampling) • Random, stratified random, matching – Video http://www.youtube.com/watch?v=rASK8PpqakM&feature=related
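The three allocation strategies named above can be sketched as follows. This is one plausible reading of each term, not the procedure from the video: the participant records, the "level" stratum, and the "pretest" matching score are all hypothetical.

```python
import random
from collections import defaultdict

def random_allocation(participants, rng):
    """Shuffle participants and split them into (control, experimental)."""
    shuffled = list(participants)
    rng.shuffle(shuffled)
    half = len(shuffled) // 2
    return shuffled[:half], shuffled[half:]

def stratified_allocation(participants, stratum_of, rng):
    """Randomly allocate within each stratum so both groups get a similar mix."""
    strata = defaultdict(list)
    for person in participants:
        strata[stratum_of(person)].append(person)
    control, experimental = [], []
    for members in strata.values():
        c, e = random_allocation(members, rng)
        control.extend(c)
        experimental.extend(e)
    return control, experimental

def matched_allocation(participants, score_of, rng):
    """Pair participants with adjacent scores, then split each pair at random.

    With an odd number of participants, the highest scorer is left unassigned.
    """
    ranked = sorted(participants, key=score_of)
    control, experimental = [], []
    for i in range(0, len(ranked) - 1, 2):
        pair = [ranked[i], ranked[i + 1]]
        rng.shuffle(pair)
        control.append(pair[0])
        experimental.append(pair[1])
    return control, experimental

# Hypothetical participants: 4 novices and 4 experts with made-up pretest scores.
participants = [
    {"id": i, "level": "novice" if i < 4 else "expert", "pretest": 10 * i}
    for i in range(8)
]
rng = random.Random(42)
random_groups = random_allocation(participants, rng)
stratified_groups = stratified_allocation(participants, lambda p: p["level"], rng)
matched_groups = matched_allocation(participants, lambda p: p["pretest"], rng)
```

Simple random allocation can, by chance, put most experts in one group. Stratifying guarantees the same novice/expert mix in both groups, and matching goes further by balancing the groups pair by pair on the pretest score.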

Experimental Design • Within subjects – All subjects receive all conditions • Between subjects – Each subject receives only one of the conditions • Mixed design – Some factors between, some within
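A mixed design can be pictured as a schedule that lists the conditions each subject receives. In this sketch the factor names ("old"/"new" interface, "easy"/"hard" task) and subject labels are hypothetical, and subjects are assigned to the between-subjects factor by simple alternation to keep the example deterministic; a real study would allocate them randomly.

```python
def build_schedule(subjects, between_levels, within_levels):
    """Map each subject to the list of (between, within) conditions they receive."""
    schedule = {}
    for index, subject in enumerate(subjects):
        # Between-subjects factor: each subject gets exactly one level.
        between = between_levels[index % len(between_levels)]
        # Within-subjects factor: each subject gets every level.
        schedule[subject] = [(between, within) for within in within_levels]
    return schedule

schedule = build_schedule(
    subjects=["s1", "s2", "s3", "s4"],
    between_levels=["old", "new"],   # hypothetical between-subjects factor
    within_levels=["easy", "hard"],  # hypothetical within-subjects factor
)
for subject, conditions in schedule.items():
    print(subject, conditions)
```

Passing a single between-subjects level gives a pure within-subjects design, and passing a single within-subjects level gives a pure between-subjects design.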
